
Data Mining Classification: Basic Concepts, Decision Trees, and Model Evaluation

Lecture Notes for Chapter 4, Introduction to Data Mining, by Tan, Steinbach, Kumar

© Tan, Steinbach, Kumar, Introduction to Data Mining, 4/18/2004

Classification: Definition

- Given a collection of records (the training set):
  - Each record contains a set of attributes; one of the attributes is the class.
- Find a model for the class attribute as a function of the values of the other attributes.
- Goal: previously unseen records should be assigned a class as accurately as possible.
  - A test set is used to determine the accuracy of the model. Usually, the given data set is divided into training and test sets, with the training set used to build the model and the test set used to validate it.
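The train/test workflow described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the records, the 70/30 split, and the trivial majority-class "model" are all hypothetical and do not come from the slides.

```python
import random
from collections import Counter

def train_test_split(records, test_fraction=0.3, seed=0):
    """Shuffle a copy of the records and split it into (training, test) sets."""
    shuffled = records[:]
    random.Random(seed).shuffle(shuffled)
    n_test = round(len(shuffled) * test_fraction)
    return shuffled[n_test:], shuffled[:n_test]

def fit_majority_class(training_set):
    """Hypothetical baseline model: ignore the attributes, predict the most common class."""
    majority = Counter(label for _, label in training_set).most_common(1)[0][0]
    return lambda attributes: majority

def accuracy(model, test_set):
    """Fraction of test records whose predicted class equals the actual class."""
    correct = sum(1 for attrs, label in test_set if model(attrs) == label)
    return correct / len(test_set)

# Hypothetical records: (attributes, class label)
records = [({"refund": "Yes"}, "No")] * 7 + [({"refund": "No"}, "Yes")] * 3

train, test = train_test_split(records)
model = fit_majority_class(train)        # built from the training set only
print(len(train), len(test), accuracy(model, test))
```

The point of the sketch is the separation of roles: the model only ever sees `train`, and the accuracy is measured on records it has not seen.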

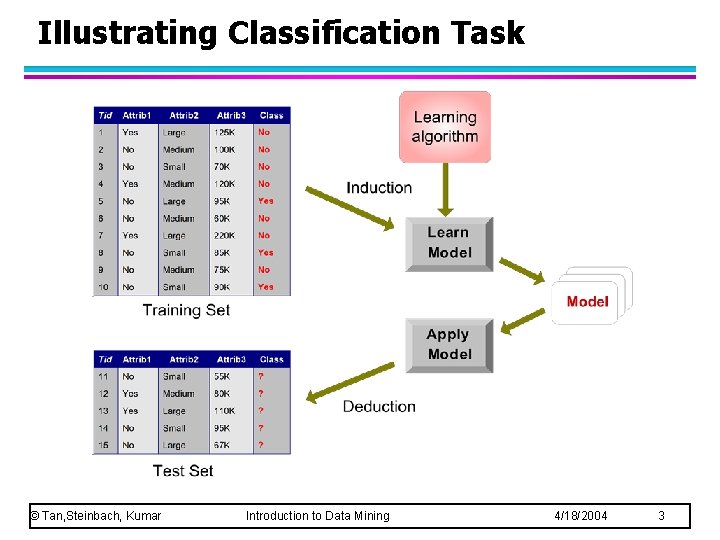

Illustrating Classification Task

Examples of Classification Task

- Predicting tumor cells as benign or malignant
- Classifying credit card transactions as legitimate or fraudulent
- Classifying secondary structures of protein as alpha-helix, beta-sheet, or random coil
- Categorizing news stories as finance, weather, entertainment, sports, etc.

Classification Techniques

- Decision Tree based Methods
- Rule-based Methods
- Memory based reasoning
- Neural Networks
- Naïve Bayes and Bayesian Belief Networks
- Support Vector Machines

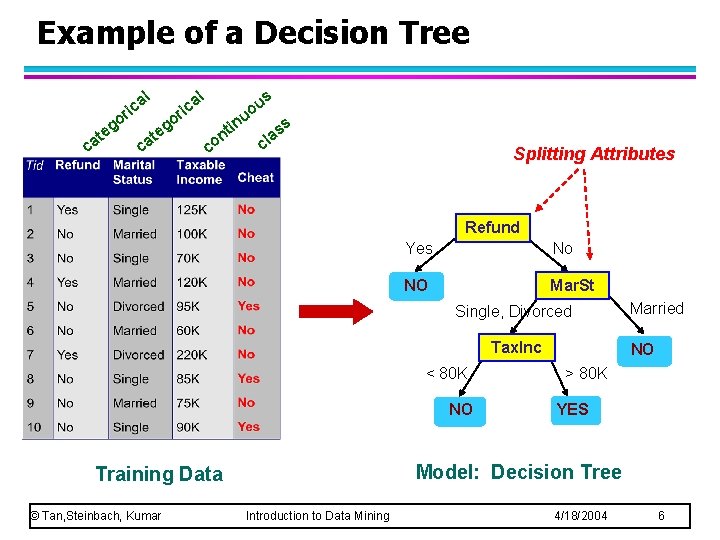

Example of a Decision Tree

Training Data: the attributes are Refund (categorical), Marital Status (categorical), Taxable Income (continuous), and Cheat (class).

Model: Decision Tree, with splitting attributes Refund, MarSt, and TaxInc:

- Refund
  - Yes: NO
  - No: MarSt
    - Single, Divorced: TaxInc
      - < 80K: NO
      - > 80K: YES
    - Married: NO

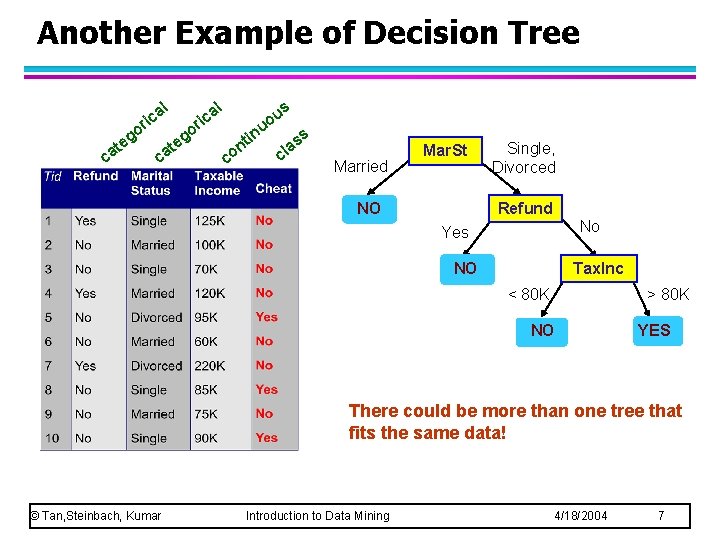

Another Example of Decision Tree

The attribute types are the same: categorical, categorical, continuous, class.

- MarSt
  - Married: NO
  - Single, Divorced: Refund
    - Yes: NO
    - No: TaxInc
      - < 80K: NO
      - > 80K: YES

There could be more than one tree that fits the same data!

Decision Tree Classification Task

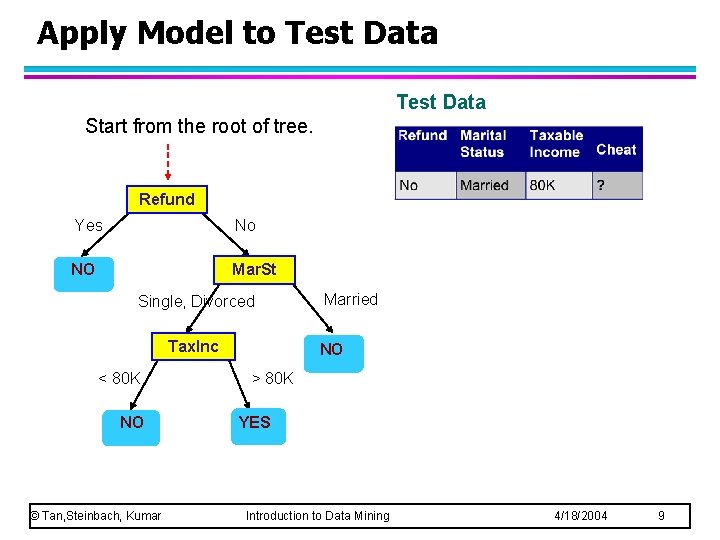

Apply Model to Test Data

Start from the root of the tree.

- Refund
  - Yes: NO
  - No: MarSt
    - Single, Divorced: TaxInc
      - < 80K: NO
      - > 80K: YES
    - Married: NO

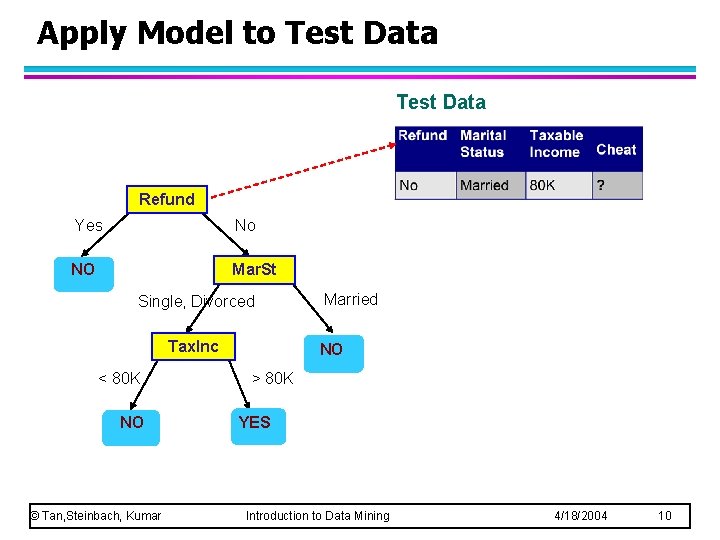

Apply Model to Test Data

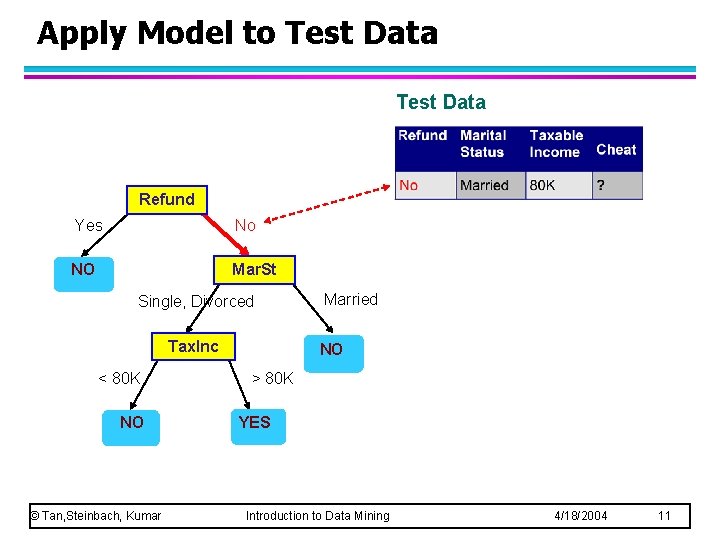

Apply Model to Test Data

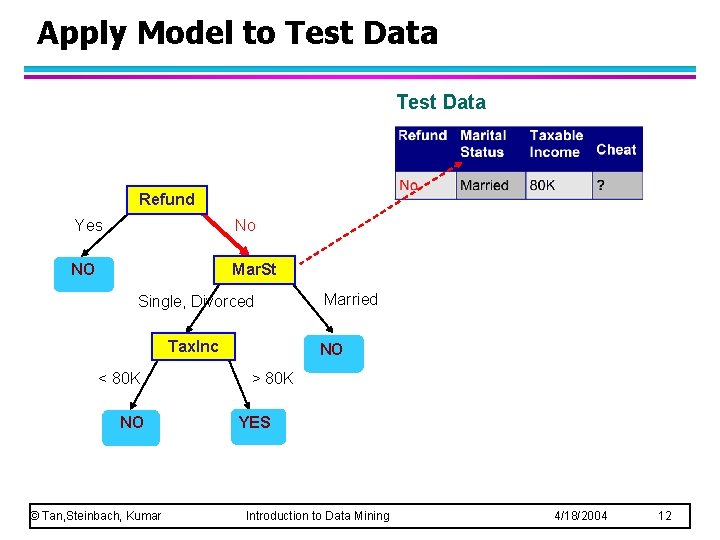

Apply Model to Test Data

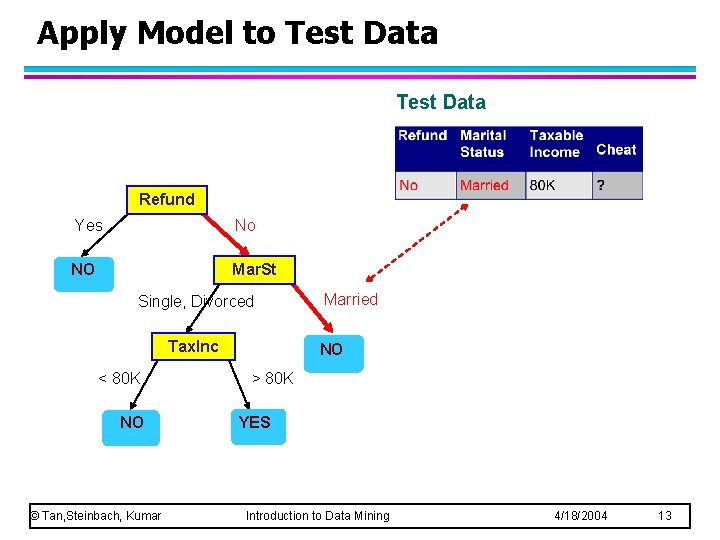

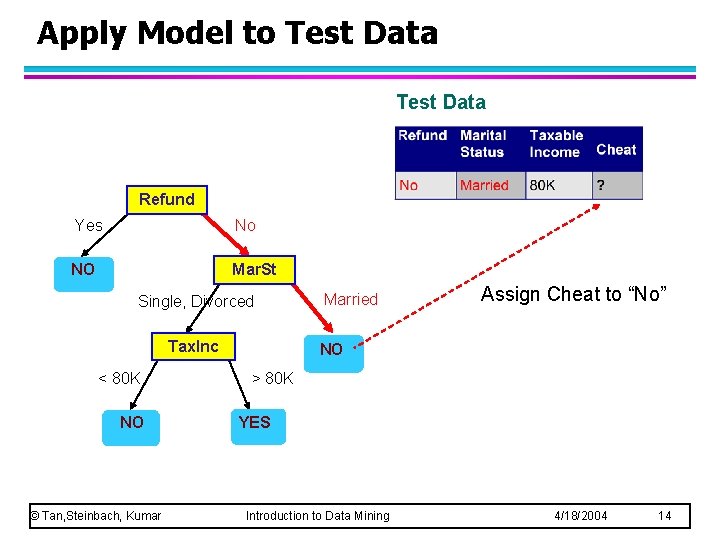

Apply Model to Test Data

Apply Model to Test Data

The test record reaches the Married branch of MarSt, a leaf labeled NO: assign Cheat to “No”.
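Applying the model amounts to walking the tree from the root to a leaf. A minimal Python sketch of that walk for the Refund / MarSt / TaxInc tree follows. The dictionary keys, the test record, and the handling of an income of exactly 80K (treated here as the ">= 80K" branch) are assumptions made for illustration.

```python
def classify(record):
    """Walk the example tree from the root to a leaf and return the Cheat label."""
    # Root test: Refund
    if record["refund"] == "Yes":
        return "No"
    # Refund = No: test marital status
    if record["marital_status"] == "Married":
        return "No"
    # Single or Divorced: test taxable income (in thousands).
    # Assumption: exactly 80K falls on the "Yes" side of the split.
    return "No" if record["taxable_income"] < 80 else "Yes"

# Hypothetical test record whose class is unknown
test_record = {"refund": "No", "marital_status": "Married", "taxable_income": 80}
print(classify(test_record))  # No
```

Each `if` corresponds to one internal node, so classifying a record costs at most one comparison per tree level.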

Decision Tree Classification Task

Decision Tree Induction

- Hunt’s Algorithm (one of the earliest)

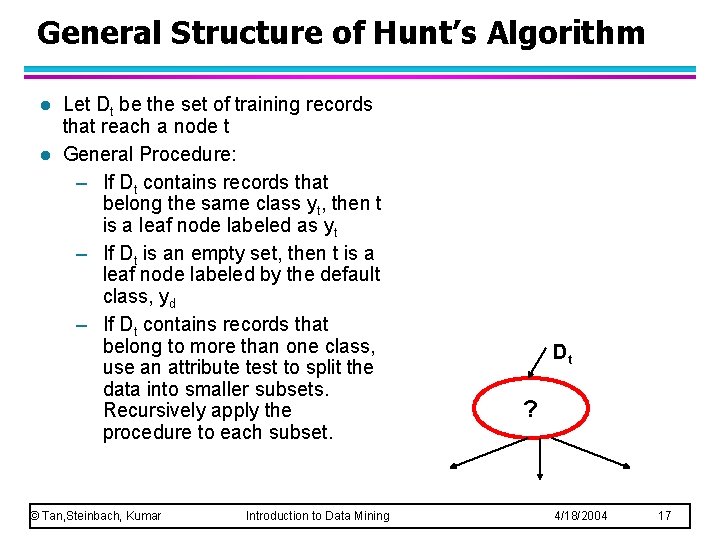

General Structure of Hunt’s Algorithm

- Let $D_t$ be the set of training records that reach a node $t$.
- General procedure:
  - If $D_t$ contains records that all belong to the same class $y_t$, then $t$ is a leaf node labeled as $y_t$.
  - If $D_t$ is an empty set, then $t$ is a leaf node labeled by the default class, $y_d$.
  - If $D_t$ contains records that belong to more than one class, use an attribute test to split the data into smaller subsets. Recursively apply the procedure to each subset.
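The three cases of the general procedure map directly onto a recursive function. The Python sketch below is one possible reading, not the authors' implementation. The slides leave the attribute test open, so this sketch assumes categorical attributes and picks the multi-way split with the lowest weighted Gini index. The records and attribute names are hypothetical.

```python
from collections import Counter

def gini(labels):
    """Gini index of a list of class labels."""
    n = len(labels)
    return 1.0 - sum((c / n) ** 2 for c in Counter(labels).values())

def partition(records, attr):
    """Group records by their value of attr (a multi-way split)."""
    groups = {}
    for rec in records:
        groups.setdefault(rec[0][attr], []).append(rec)
    return groups

def hunt(records, attributes, default_class):
    """records: list of (attribute_dict, class_label) pairs that reach this node."""
    if not records:                                # D_t is empty: default-class leaf
        return default_class
    labels = [label for _, label in records]
    majority = Counter(labels).most_common(1)[0][0]
    if len(set(labels)) == 1 or not attributes:    # one class, or no test left: leaf
        return majority

    # Assumed attribute test: the split with the lowest weighted Gini index.
    def weighted_gini(attr):
        groups = partition(records, attr).values()
        return sum(len(g) / len(records) * gini([l for _, l in g]) for g in groups)

    best = min(attributes, key=weighted_gini)
    remaining = [a for a in attributes if a != best]
    return {
        "attribute": best,
        "default": majority,   # used at prediction time for unseen attribute values
        "children": {value: hunt(subset, remaining, majority)
                     for value, subset in partition(records, best).items()},
    }

def predict(tree, attrs):
    """Follow the tree until a leaf (a plain class label) is reached."""
    while isinstance(tree, dict):
        tree = tree["children"].get(attrs[tree["attribute"]], tree["default"])
    return tree

# Hypothetical training records
records = [
    ({"refund": "Yes", "marital": "Single"}, "No"),
    ({"refund": "No", "marital": "Married"}, "No"),
    ({"refund": "No", "marital": "Single"}, "Yes"),
    ({"refund": "No", "marital": "Divorced"}, "Yes"),
    ({"refund": "Yes", "marital": "Married"}, "No"),
]
tree = hunt(records, ["refund", "marital"], default_class="No")
print(tree["attribute"], [predict(tree, a) for a, _ in records])
```

Because this sketch only creates children for attribute values that actually occur in $D_t$, the empty-set case is never hit during induction. It is kept for fidelity to the procedure, and unseen values are handled at prediction time through the stored `default`.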

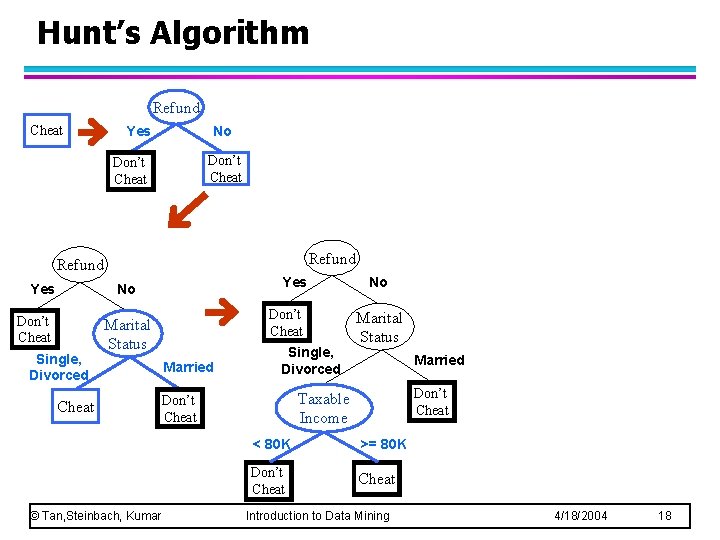

Hunt’s Algorithm

(Figure: the tree grown in stages on the training data. It starts as a single Don’t Cheat leaf, is split on Refund (Yes / No), then on Marital Status (Single, Divorced / Married) under Refund = No, and finally on Taxable Income (< 80K / >= 80K) under Single, Divorced.)

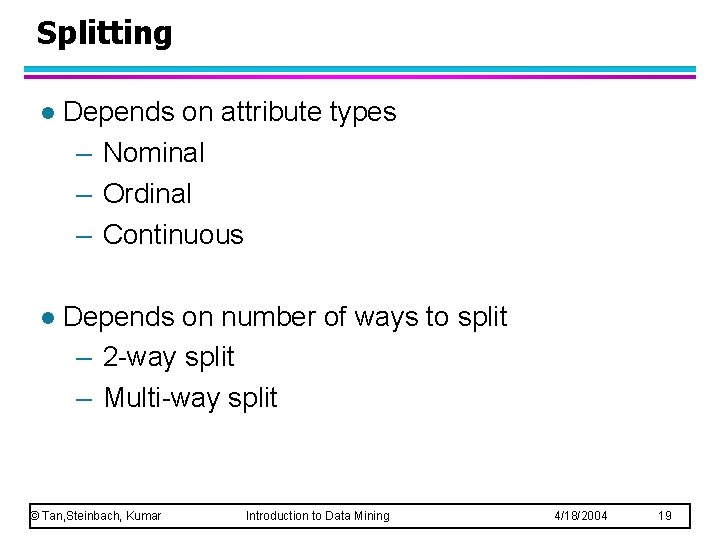

Splitting

- Depends on attribute types
  - Nominal
  - Ordinal
  - Continuous
- Depends on number of ways to split
  - 2-way split
  - Multi-way split

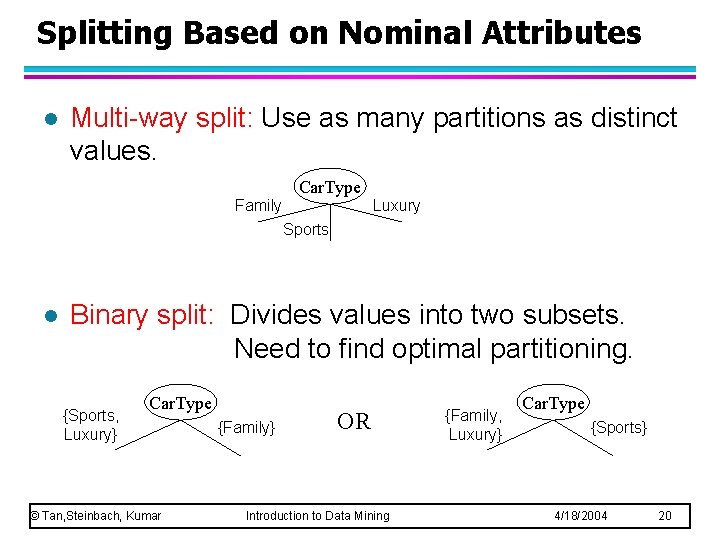

Splitting Based on Nominal Attributes

- Multi-way split: Use as many partitions as distinct values.
  - CarType: Family, Luxury, Sports
- Binary split: Divides values into two subsets. Need to find optimal partitioning.
  - CarType: {Sports, Luxury} vs {Family}, OR
  - CarType: {Family, Luxury} vs {Sports}
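Finding the optimal binary partitioning means examining every way to divide the values into two non-empty subsets. For k distinct values there are 2^(k-1) - 1 such partitions. The Python sketch below enumerates them. The function name is hypothetical and the values are assumed to be distinct.

```python
from itertools import combinations

def binary_partitions(values):
    """Yield each way to divide distinct values into two non-empty subsets, once."""
    values = list(values)
    first, rest = values[0], values[1:]
    # Pin the first value to the left subset so mirrored splits are not repeated.
    for size in range(len(rest)):          # stop before the right subset would be empty
        for extra in combinations(rest, size):
            left = {first, *extra}
            right = set(values) - left
            yield left, right

for left, right in binary_partitions(["Family", "Luxury", "Sports"]):
    print(sorted(left), "vs", sorted(right))
```

For the three CarType values this yields three candidate splits. The count doubles (roughly) with each additional value, which is why multi-valued nominal attributes make the search more expensive.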

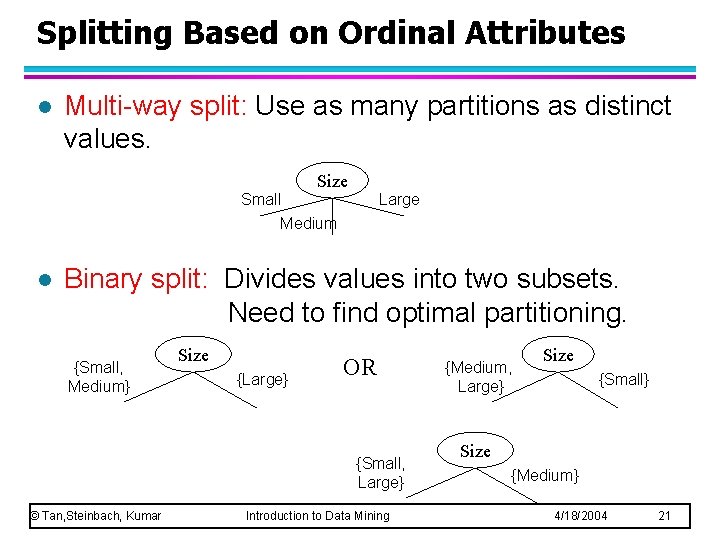

Splitting Based on Ordinal Attributes

- Multi-way split: Use as many partitions as distinct values.
  - Size: Small, Medium, Large
- Binary split: Divides values into two subsets. Need to find optimal partitioning.
  - Size: {Small, Medium} vs {Large}, OR
  - Size: {Medium, Large} vs {Small}
- The split Size: {Small, Large} vs {Medium} is also shown; it groups the two extreme values together, so it does not preserve the order of the values.

Splitting Based on Continuous Attributes

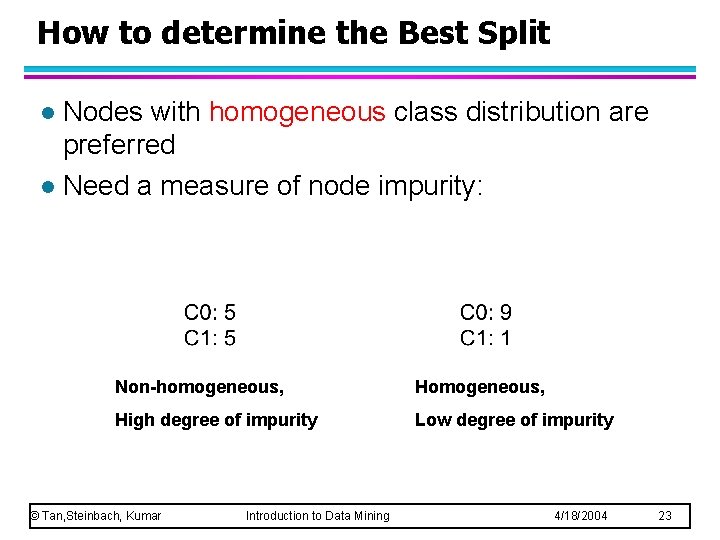

How to determine the Best Split

- Nodes with homogeneous class distribution are preferred
- Need a measure of node impurity:
  - Non-homogeneous: high degree of impurity
  - Homogeneous: low degree of impurity

Measures of Node Impurity

- Gini Index
- Entropy

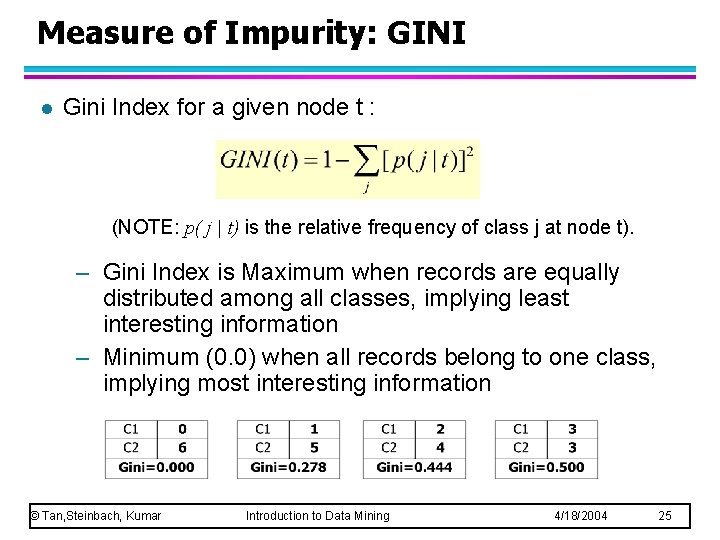

Measure of Impurity: GINI

- Gini Index for a given node $t$:

$$\mathrm{GINI}(t) = 1 - \sum_{j} [p(j \mid t)]^2$$

  (NOTE: $p(j \mid t)$ is the relative frequency of class $j$ at node $t$.)

  - Maximum ($1 - 1/n_c$ for $n_c$ classes) when records are equally distributed among all classes, implying least interesting information
  - Minimum (0.0) when all records belong to one class, implying most interesting information

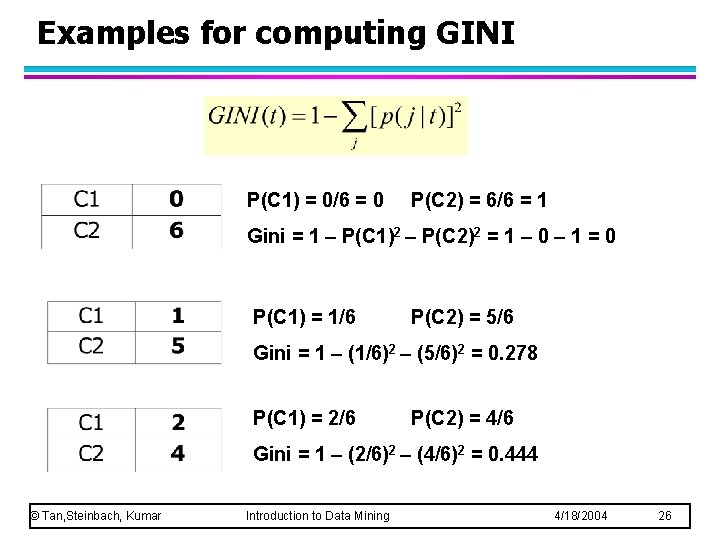

Examples for computing GINI

- P(C1) = 0/6 = 0, P(C2) = 6/6 = 1: Gini = 1 - P(C1)^2 - P(C2)^2 = 1 - 0 - 1 = 0
- P(C1) = 1/6, P(C2) = 5/6: Gini = 1 - (1/6)^2 - (5/6)^2 = 0.278
- P(C1) = 2/6, P(C2) = 4/6: Gini = 1 - (2/6)^2 - (4/6)^2 = 0.444
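These node-level calculations are easy to check in code. The Python sketch below computes the Gini index from per-class record counts. The function name is an assumption, and the (3, 3) case is added here only to show the two-class maximum.

```python
def gini(counts):
    """Gini index of a node, given the number of records in each class."""
    n = sum(counts)
    return 1.0 - sum((c / n) ** 2 for c in counts)

# (C1 count, C2 count) for six-record nodes
for counts in [(0, 6), (1, 5), (2, 4), (3, 3)]:
    print(counts, round(gini(counts), 3))
# (0, 6) 0.0
# (1, 5) 0.278
# (2, 4) 0.444
# (3, 3) 0.5
```

The values rise from 0 for a pure node to 0.5 for an evenly mixed two-class node.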

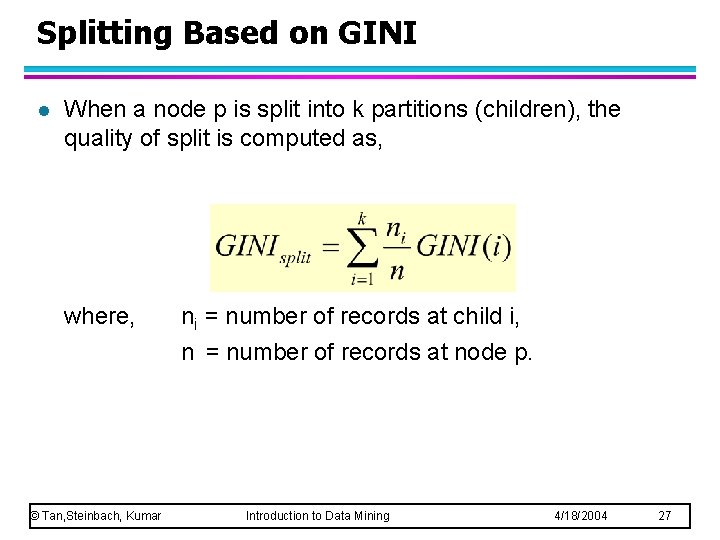

Splitting Based on GINI

- When a node $p$ is split into $k$ partitions (children), the quality of the split is computed as

$$\mathrm{GINI}_{split} = \sum_{i=1}^{k} \frac{n_i}{n}\,\mathrm{GINI}(i)$$

  where $n_i$ = number of records at child $i$, and $n$ = number of records at node $p$.

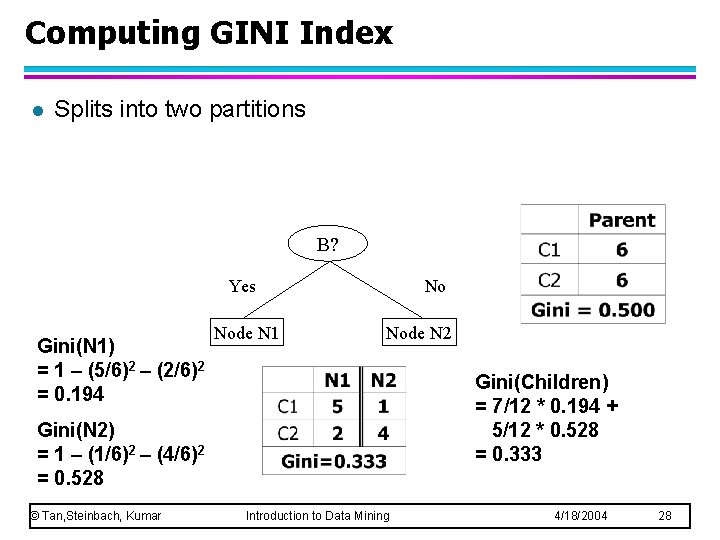

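Computing GINI Index

- Splits into two partitions: the test B? sends records to Node N1 (Yes) and Node N2 (No).
- The 7/12 and 5/12 weights mean N1 holds 7 records (5 of one class and 2 of the other) and N2 holds 5 records (1 and 4), so each child's class fractions are taken over its own size:
  - Gini(N1) = 1 - (5/7)^2 - (2/7)^2 = 0.408
  - Gini(N2) = 1 - (1/5)^2 - (4/5)^2 = 0.320
  - Gini(Children) = 7/12 * 0.408 + 5/12 * 0.320 = 0.371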
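A short Python sketch of the weighted split quality follows. It assumes the child class counts implied by the 7/12 and 5/12 weights: N1 with 5 and 2 records, N2 with 1 and 4. Each child's class fractions are computed over that child's own record count. Function names are hypothetical.

```python
def gini(counts):
    """Gini index of a node, given the number of records in each class."""
    n = sum(counts)
    return 1.0 - sum((c / n) ** 2 for c in counts)

def gini_split(children):
    """Weighted Gini of a split; children is a list of per-class count tuples."""
    n = sum(sum(counts) for counts in children)
    return sum(sum(counts) / n * gini(counts) for counts in children)

n1, n2 = (5, 2), (1, 4)          # assumed (C1, C2) counts at nodes N1 and N2
print(round(gini(n1), 3))        # 0.408
print(round(gini(n2), 3))        # 0.32
print(round(gini_split([n1, n2]), 3))   # 0.371
```

The weighting by child size is what makes larger, purer partitions preferable to small pure ones.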

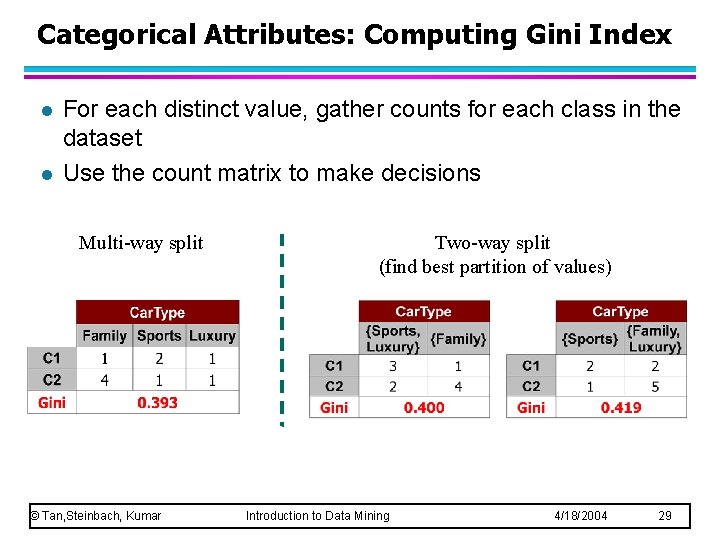

Categorical Attributes: Computing Gini Index

- For each distinct value, gather counts for each class in the dataset
- Use the count matrix to make decisions:
  - Multi-way split
  - Two-way split (find best partition of values)
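As a rough illustration of the count-matrix approach, the Python sketch below tallies class counts per attribute value and then scores a multi-way split and two candidate two-way splits. The records, the `car_type` key, and the class names C1 and C2 are hypothetical data chosen for the example.

```python
from collections import Counter, defaultdict

def count_matrix(records, attr):
    """Map each distinct value of attr to a Counter of class labels."""
    matrix = defaultdict(Counter)
    for attrs, label in records:
        matrix[attrs[attr]][label] += 1
    return matrix

def gini(counts):
    """Gini index from per-class counts; an empty node is treated as pure."""
    counts = list(counts)
    n = sum(counts)
    if n == 0:
        return 0.0
    return 1.0 - sum((c / n) ** 2 for c in counts)

def weighted_gini(groups):
    """Weighted Gini over a list of Counters, one per partition."""
    n = sum(sum(g.values()) for g in groups)
    return sum(sum(g.values()) / n * gini(g.values()) for g in groups)

def gini_multiway(matrix):
    """One partition per distinct attribute value."""
    return weighted_gini(list(matrix.values()))

def gini_two_way(matrix, left_values):
    """Merge the columns in left_values into one partition, the rest into another."""
    left, right = Counter(), Counter()
    for value, column in matrix.items():
        (left if value in left_values else right).update(column)
    return weighted_gini([left, right])

# Hypothetical records: (attributes, class label)
records = (
    [({"car_type": "Family"}, "C1")] * 1 + [({"car_type": "Family"}, "C2")] * 4
    + [({"car_type": "Sports"}, "C1")] * 2 + [({"car_type": "Sports"}, "C2")] * 1
    + [({"car_type": "Luxury"}, "C1")] * 1 + [({"car_type": "Luxury"}, "C2")] * 1
)
m = count_matrix(records, "car_type")
print(round(gini_multiway(m), 3))                       # 0.393
print(round(gini_two_way(m, {"Sports", "Luxury"}), 3))  # 0.4
print(round(gini_two_way(m, {"Sports"}), 3))            # 0.419
```

Building the count matrix once and merging its columns avoids rescanning the records for every candidate partition.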

Decision Tree Based Classification

- Advantages:
  - Inexpensive to construct
  - Extremely fast at classifying unknown records
  - Easy to interpret for small-sized trees
  - Accuracy is comparable to other classification techniques for many simple data sets

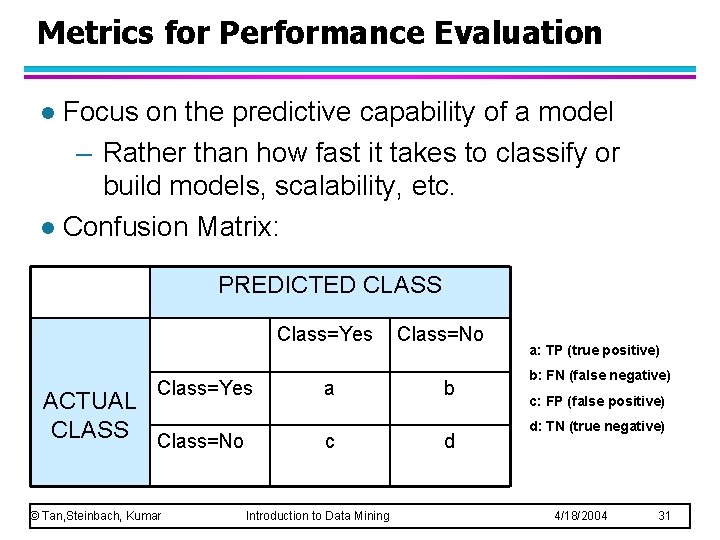

Metrics for Performance Evaluation

- Focus on the predictive capability of a model
  - Rather than how long it takes to classify or build models, scalability, etc.
- Confusion Matrix:

| | PREDICTED Class=Yes | PREDICTED Class=No |
|---|---|---|
| ACTUAL Class=Yes | a | b |
| ACTUAL Class=No | c | d |

a: TP (true positive), b: FN (false negative), c: FP (false positive), d: TN (true negative)

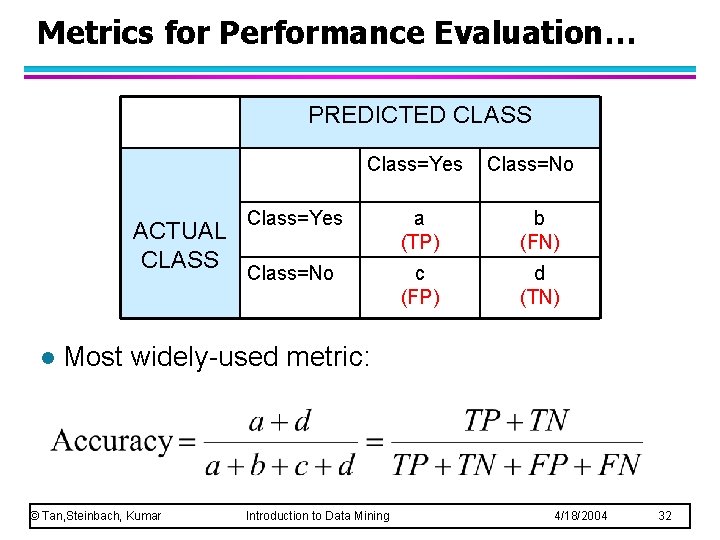

Metrics for Performance Evaluation…

| | PREDICTED Class=Yes | PREDICTED Class=No |
|---|---|---|
| ACTUAL Class=Yes | a (TP) | b (FN) |
| ACTUAL Class=No | c (FP) | d (TN) |

- Most widely-used metric: accuracy

$$\text{Accuracy} = \frac{a + d}{a + b + c + d} = \frac{TP + TN}{TP + TN + FP + FN}$$
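The four confusion-matrix cells can be tallied directly from paired actual and predicted labels. The Python sketch below does so and then computes accuracy as (TP + TN) / (TP + FN + FP + TN), assuming accuracy is the metric meant here. The label lists and function names are hypothetical.

```python
def confusion_counts(actual, predicted, positive="Yes"):
    """Return (TP, FN, FP, TN), i.e. cells a, b, c, d of the confusion matrix."""
    tp = fn = fp = tn = 0
    for a, p in zip(actual, predicted):
        if a == positive and p == positive:
            tp += 1          # a: actual Yes, predicted Yes
        elif a == positive:
            fn += 1          # b: actual Yes, predicted No
        elif p == positive:
            fp += 1          # c: actual No, predicted Yes
        else:
            tn += 1          # d: actual No, predicted No
    return tp, fn, fp, tn

def accuracy(tp, fn, fp, tn):
    """Fraction of records on the matrix diagonal."""
    return (tp + tn) / (tp + fn + fp + tn)

# Hypothetical test-set labels
actual    = ["Yes", "Yes", "No", "No", "No", "Yes", "No", "No"]
predicted = ["Yes", "No", "No", "Yes", "No", "Yes", "No", "No"]

cells = confusion_counts(actual, predicted)
print(cells, accuracy(*cells))  # (2, 1, 1, 4) 0.75
```

Keeping the four counts separate, rather than only the accuracy, makes it possible to see which kind of error the model makes.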
