
Data Mining Classification: Basic Concepts, Decision Trees, and Model Evaluation

Lecture Notes for Chapter 4, Introduction to Data Mining, by Tan, Steinbach, Kumar

© Tan, Steinbach, Kumar, Introduction to Data Mining, 4/18/2004

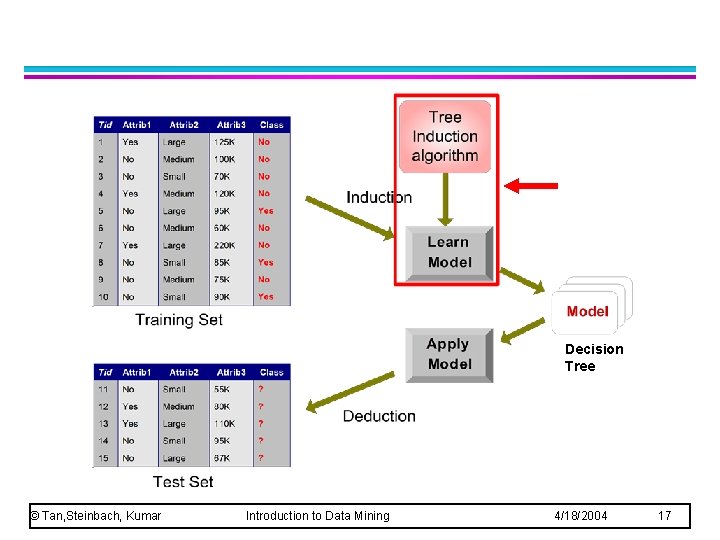

- Given a collection of records (training set):
  - Each record contains a set of attributes; one of the attributes is the class.
- Find a model for the class attribute as a function of the values of the other attributes.
- Goal: previously unseen records should be assigned a class as accurately as possible.
  - A test set is used to determine the accuracy of the model.

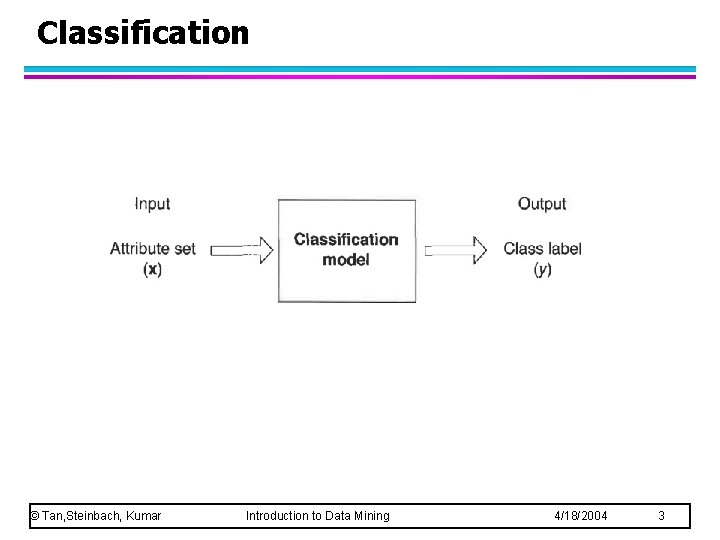

Classification

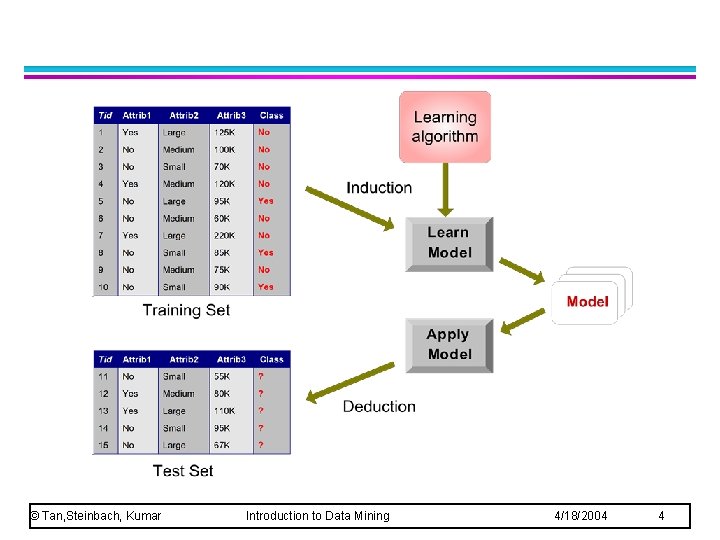


- Predicting tumor …

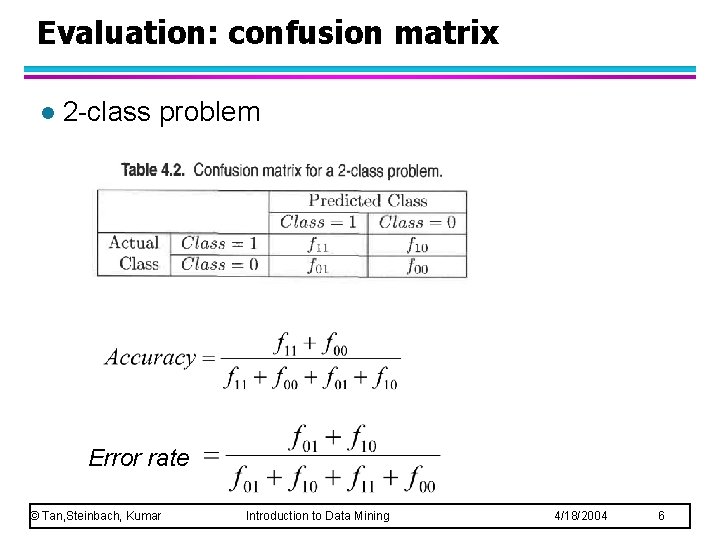

Evaluation: confusion matrix

- 2-class problem
- Error rate
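A minimal sketch of the error rate for a 2-class problem, assuming a confusion matrix laid out as `counts[actual][predicted]` with classes encoded as 1 (positive) and 0 (negative). The helper names and the eight test labels are hypothetical, chosen only to illustrate the arithmetic: the error rate is the number of misclassified records (the off-diagonal cells) divided by the total number of records.

```python
def confusion_matrix(actual, predicted):
    """counts[a][p] = number of records of actual class a that were predicted as class p."""
    counts = [[0, 0], [0, 0]]
    for a, p in zip(actual, predicted):
        counts[a][p] += 1
    return counts

def error_rate(counts):
    """Misclassified records (off-diagonal cells) divided by all records."""
    total = sum(sum(row) for row in counts)
    return (counts[0][1] + counts[1][0]) / total

# Hypothetical labels for 8 test records (1 = positive class, 0 = negative class).
actual    = [1, 1, 1, 0, 0, 0, 0, 0]
predicted = [1, 0, 1, 0, 0, 1, 0, 0]
cm = confusion_matrix(actual, predicted)
print(cm)              # [[4, 1], [1, 2]]
print(error_rate(cm))  # 0.25
```

Accuracy is then simply `1 - error_rate(cm)`.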

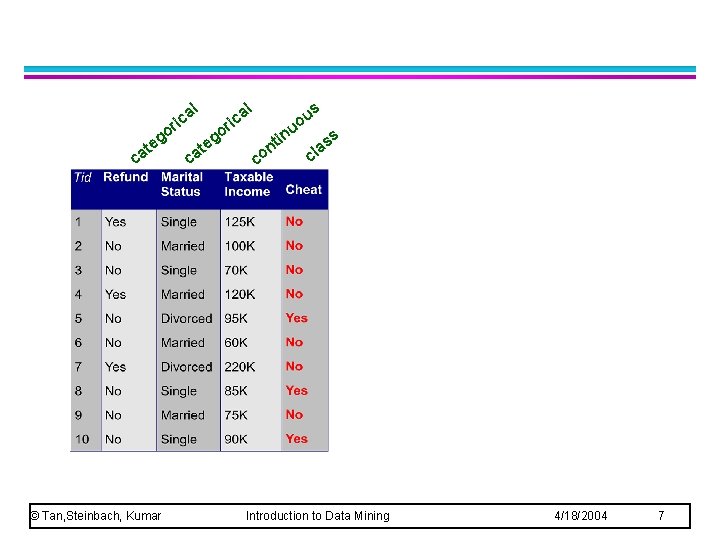

Attribute types in the training data: categorical, categorical, continuous, class.

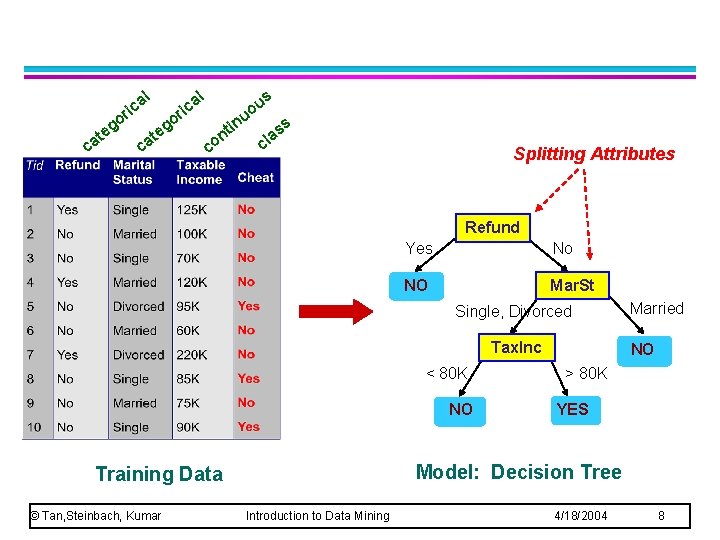

Model: Decision Tree, built from the training data. Splitting attributes: Refund, MarSt (marital status), TaxInc (taxable income).

- Refund = Yes → NO
- Refund = No → test MarSt
  - MarSt = Single, Divorced → test TaxInc
    - TaxInc < 80K → NO
    - TaxInc > 80K → YES
  - MarSt = Married → NO

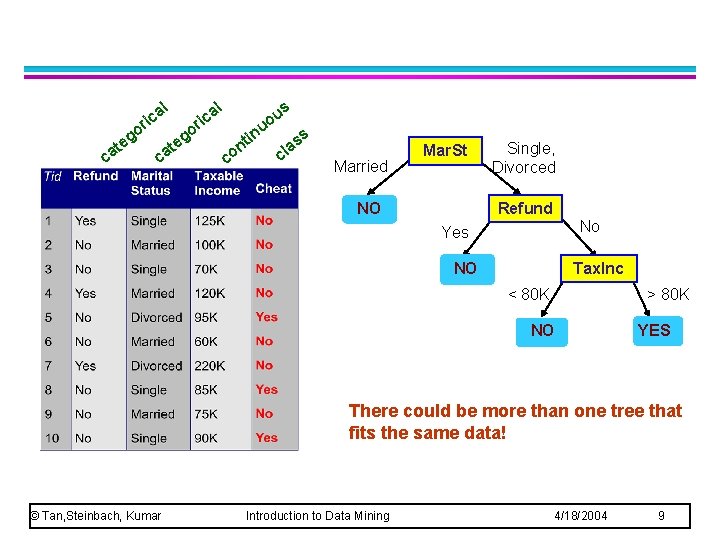

Another tree built from the same training data:

- MarSt = Married → NO
- MarSt = Single, Divorced → test Refund
  - Refund = Yes → NO
  - Refund = No → test TaxInc
    - TaxInc < 80K → NO
    - TaxInc > 80K → YES

There could be more than one tree that fits the same data!

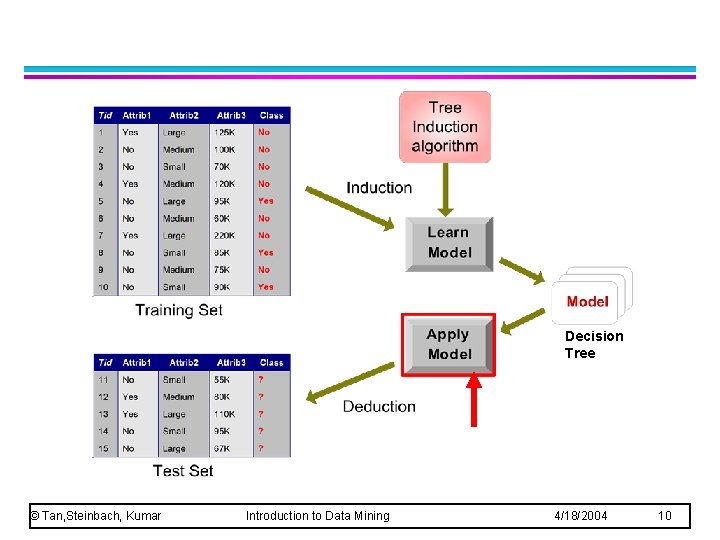

Decision Tree

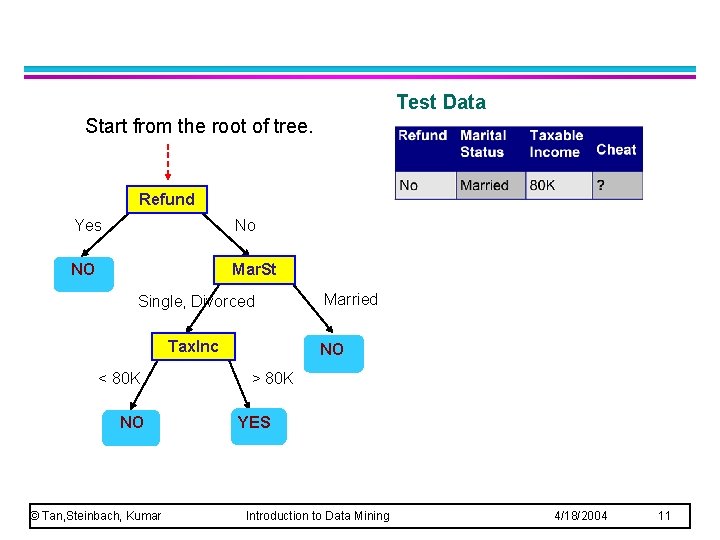

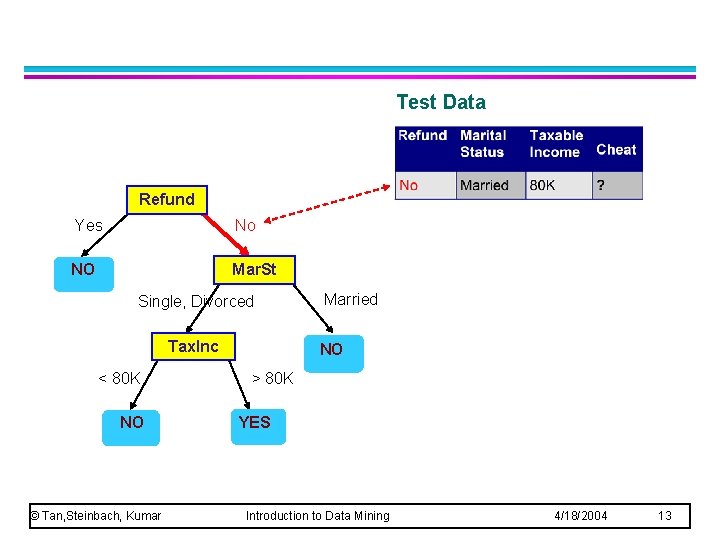

Apply the model to test data: start from the root of the tree (Refund) and, at each node, follow the branch that matches the test record's attribute value.

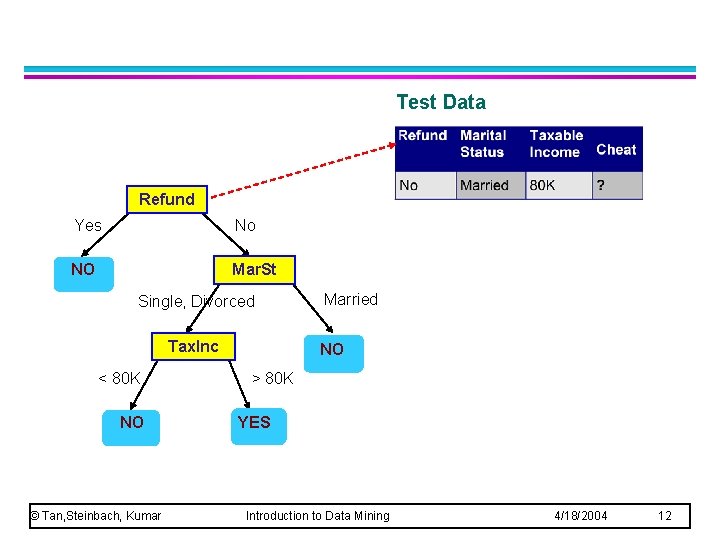


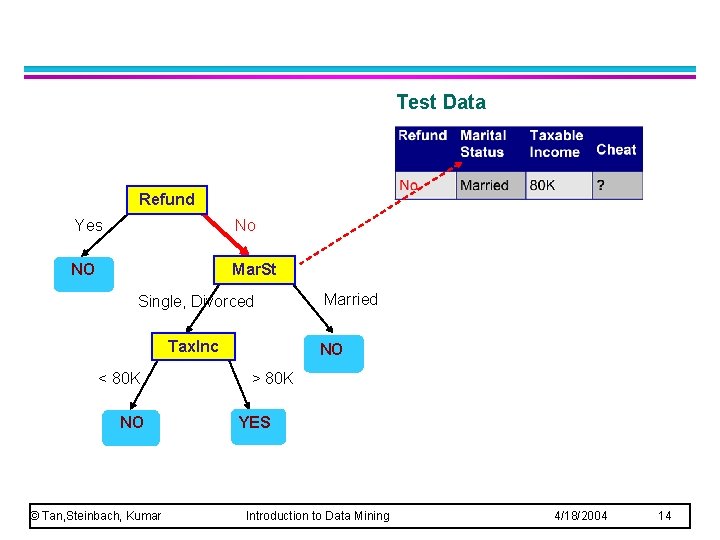


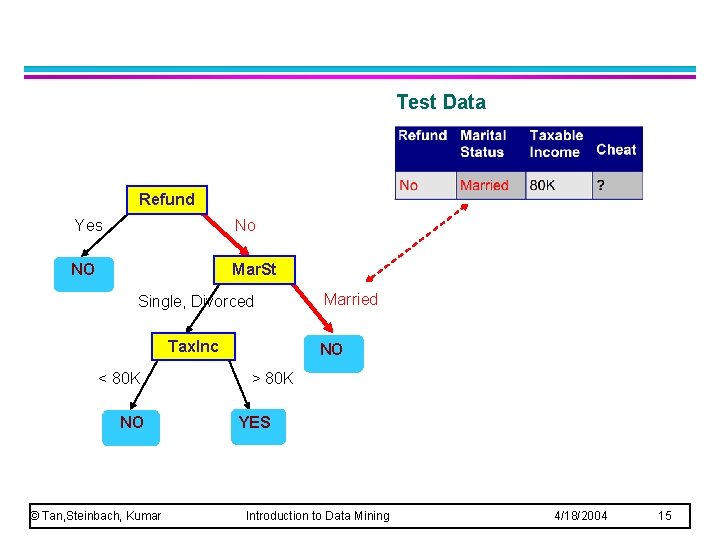


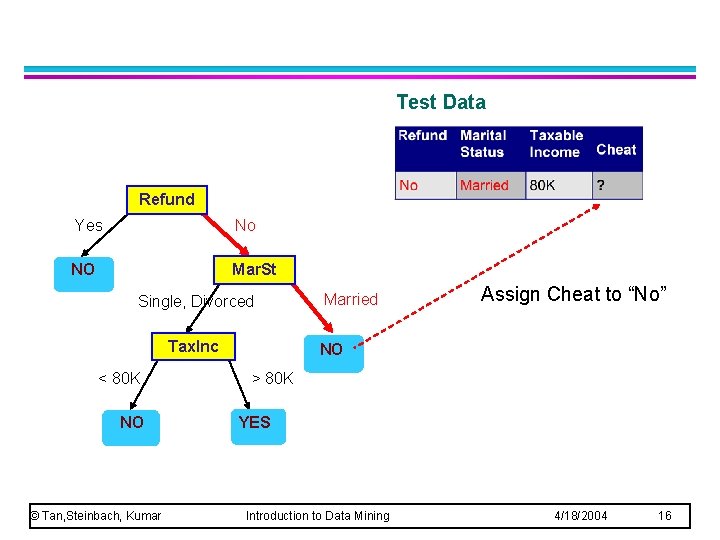


Test Data: the record ends at a leaf labeled NO, so assign Cheat to "No".
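The traversal above can be sketched in a few lines. This is an illustrative encoding, not the authors' code: the `Node` class, the branch labels, and the test records are hypothetical, and a record with TaxInc exactly 80K is sent to the ">= 80K" branch (an assumption, since the tree only shows < 80K and > 80K).

```python
from dataclasses import dataclass
from typing import Callable, Union

@dataclass
class Node:
    """Internal node: `test` maps a record to a branch label; `children` maps labels to subtrees."""
    test: Callable[[dict], str]
    children: dict

Tree = Union[Node, str]  # a leaf is just a class label

def classify(tree: Tree, record: dict) -> str:
    """Start at the root and follow the matching branch until a leaf is reached."""
    while isinstance(tree, Node):
        tree = tree.children[tree.test(record)]
    return tree

# Hypothetical encoding of the example tree (Refund -> MarSt -> TaxInc).
tax_inc = Node(lambda r: "<80K" if r["TaxInc"] < 80_000 else ">=80K",
               {"<80K": "No", ">=80K": "Yes"})
mar_st = Node(lambda r: "Married" if r["MarSt"] == "Married" else "Single/Divorced",
              {"Married": "No", "Single/Divorced": tax_inc})
tree = Node(lambda r: r["Refund"], {"Yes": "No", "No": mar_st})

test_record = {"Refund": "No", "MarSt": "Married", "TaxInc": 80_000}  # hypothetical record
print(classify(tree, test_record))  # No
```

Representing a leaf as a bare label keeps the loop trivial: classification stops as soon as the current subtree is no longer a `Node`.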

Decision Tree

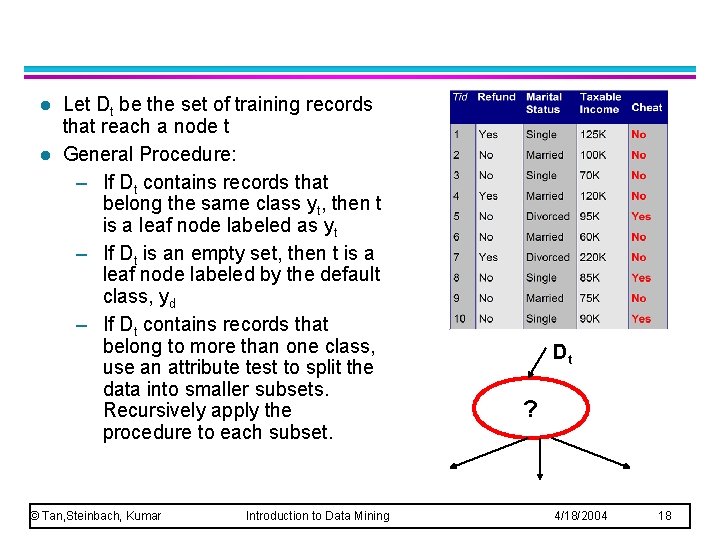

Let $D_t$ be the set of training records that reach a node $t$.

General procedure:
- If $D_t$ contains records that all belong to the same class $y_t$, then $t$ is a leaf node labeled as $y_t$.
- If $D_t$ is an empty set, then $t$ is a leaf node labeled by the default class, $y_d$.
- If $D_t$ contains records that belong to more than one class, use an attribute test to split the data into smaller subsets. Recursively apply the procedure to each subset.
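A minimal recursive sketch of this procedure, under stated assumptions: attributes are tested in a fixed order rather than by choosing the best split (how to pick the split is covered later), every value in a hypothetical `domains` table gets a branch so that $D_t$ can be empty, the default class $y_d$ is taken to be the parent's majority class, and a node with no attributes left becomes a majority-class leaf. The toy records, including the unseen value "Widowed", are hypothetical.

```python
from collections import Counter

def grow(records, attributes, domains, default):
    """Recursively grow a tree from (features, label) pairs.

    A leaf is a class label; an internal node is (attribute, {value: subtree}).
    """
    if not records:                            # D_t is empty: leaf labeled with the default class
        return default
    labels = [y for _, y in records]
    if len(set(labels)) == 1:                  # all records share class y_t: leaf labeled y_t
        return labels[0]
    majority = Counter(labels).most_common(1)[0][0]
    if not attributes:                         # no attribute test left (assumption): majority leaf
        return majority
    attr, rest = attributes[0], attributes[1:]  # simplification: fixed attribute order
    return (attr, {
        value: grow([(x, y) for x, y in records if x[attr] == value], rest, domains, majority)
        for value in domains[attr]             # every possible value gets a branch
    })

# Hypothetical toy data; "Widowed" has no training records, so its branch gets the default class.
records = [
    ({"Refund": "Yes", "MarSt": "Single"}, "No"),
    ({"Refund": "Yes", "MarSt": "Married"}, "No"),
    ({"Refund": "No", "MarSt": "Married"}, "No"),
    ({"Refund": "No", "MarSt": "Single"}, "Yes"),
    ({"Refund": "No", "MarSt": "Divorced"}, "Yes"),
]
domains = {"Refund": ["Yes", "No"], "MarSt": ["Single", "Married", "Divorced", "Widowed"]}
tree = grow(records, ["Refund", "MarSt"], domains, default="No")
print(tree)
```

The three `if` branches mirror the three cases of the procedure; only the choice of `attr` is a placeholder.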

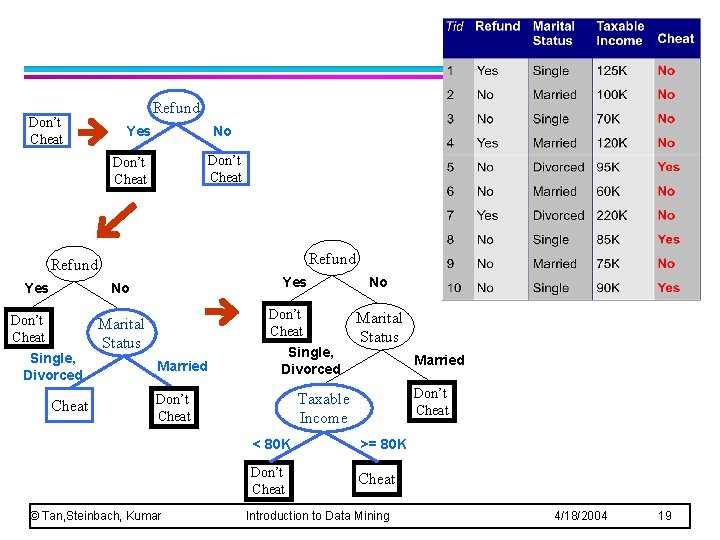

Growing the tree step by step:

1. A single leaf: Don't Cheat.
2. Split on Refund: Yes → Don't Cheat; No → Don't Cheat.
3. Split the Refund = No node on Marital Status: Single, Divorced → Cheat; Married → Don't Cheat.
4. Split the Single, Divorced node on Taxable Income: < 80K → Don't Cheat; >= 80K → Cheat.

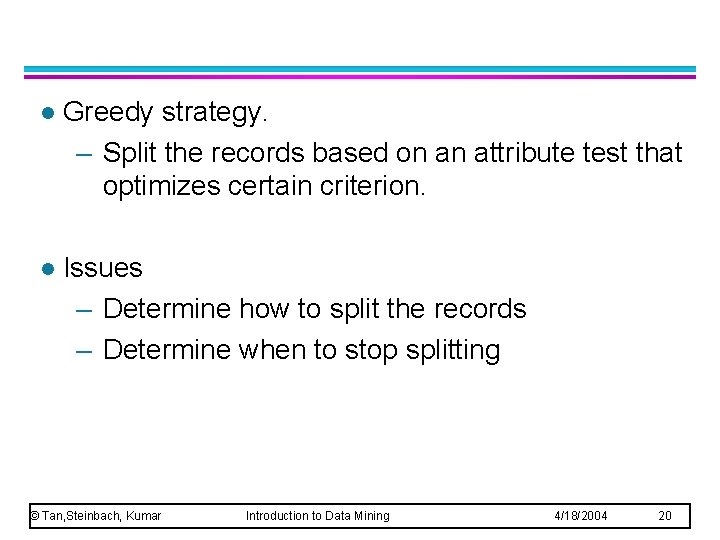

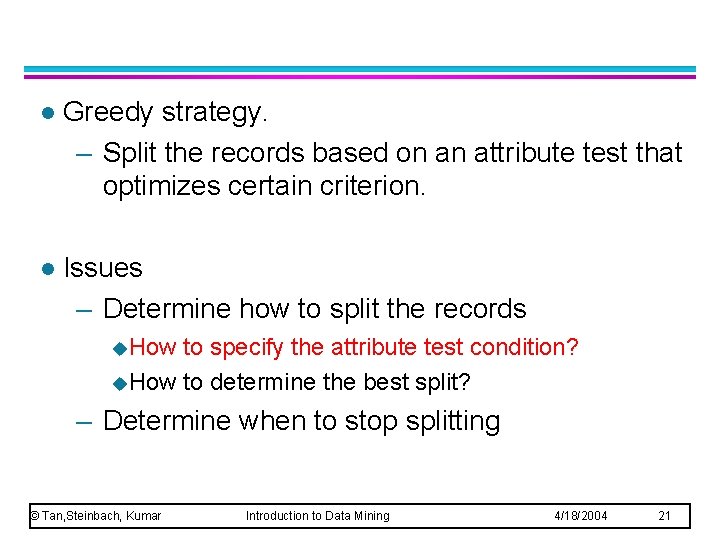


- Greedy strategy:
  - Split the records based on an attribute test that optimizes a certain criterion.
- Issues:
  - Determine how to split the records
    - How to specify the attribute test condition?
    - How to determine the best split?
  - Determine when to stop splitting

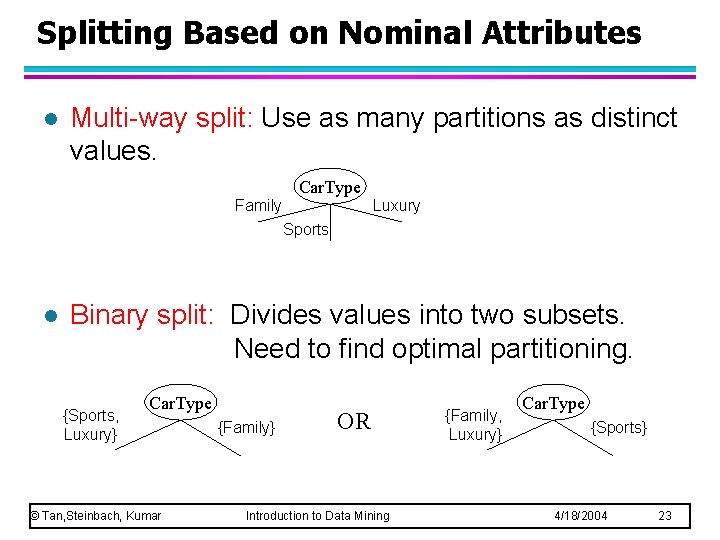

Splitting Based on Nominal Attributes

- Multi-way split: use as many partitions as there are distinct values, e.g. CarType → Family | Sports | Luxury.
- Binary split: divides the values into two subsets; the optimal partitioning must be found, e.g. CarType → {Sports, Luxury} | {Family} OR {Family, Luxury} | {Sports}.
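Finding the optimal binary partitioning means considering every way of dividing the values into two non-empty subsets; for $k$ distinct values there are $2^{k-1} - 1$ of them. A small sketch of that enumeration (the function name is hypothetical):

```python
from itertools import combinations

def binary_partitions(values):
    """Yield every split of `values` into two non-empty subsets, each split exactly once."""
    values = list(values)
    first, rest = values[0], values[1:]
    # Keeping `first` on the left avoids emitting both A|B and B|A.
    # r < len(rest) guarantees the right-hand subset is never empty.
    for r in range(len(rest)):
        for extra in combinations(rest, r):
            left = {first, *extra}
            yield left, set(values) - left

for left, right in binary_partitions(["Family", "Sports", "Luxury"]):
    print(sorted(left), sorted(right))
# ['Family'] ['Luxury', 'Sports']
# ['Family', 'Sports'] ['Luxury']
# ['Family', 'Luxury'] ['Sports']
```

The count grows exponentially in $k$, which is why nominal attributes with many values are expensive to split optimally.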

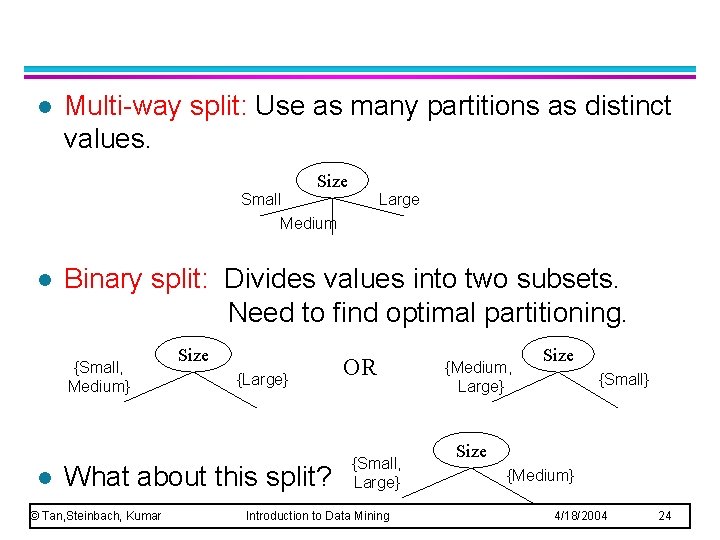

Splitting Based on Ordinal Attributes

- Multi-way split: use as many partitions as there are distinct values, e.g. Size → Small | Medium | Large.
- Binary split: divides the values into two subsets; the optimal partitioning must be found, e.g. Size → {Small, Medium} | {Large} OR {Medium, Large} | {Small}.
- What about the split Size → {Small, Large} | {Medium}? It groups Small with Large while separating Medium, so it does not preserve the order of the values.
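For an ordinal attribute, a binary split that respects the ordering can only cut the sorted value list between two neighbours, giving $k - 1$ candidates instead of $2^{k-1} - 1$. A sketch (hypothetical function name):

```python
def ordinal_binary_splits(ordered_values):
    """Order-preserving binary splits: cut the sorted value list between two neighbours."""
    return [(ordered_values[:i], ordered_values[i:]) for i in range(1, len(ordered_values))]

for left, right in ordinal_binary_splits(["Small", "Medium", "Large"]):
    print(left, right)
# ['Small'] ['Medium', 'Large']
# ['Small', 'Medium'] ['Large']
```

The split {Small, Large} | {Medium} is never generated.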

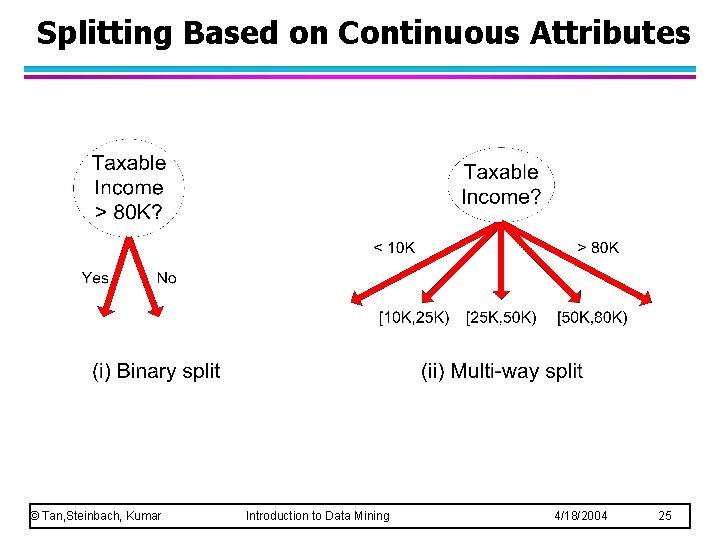

Splitting Based on Continuous Attributes


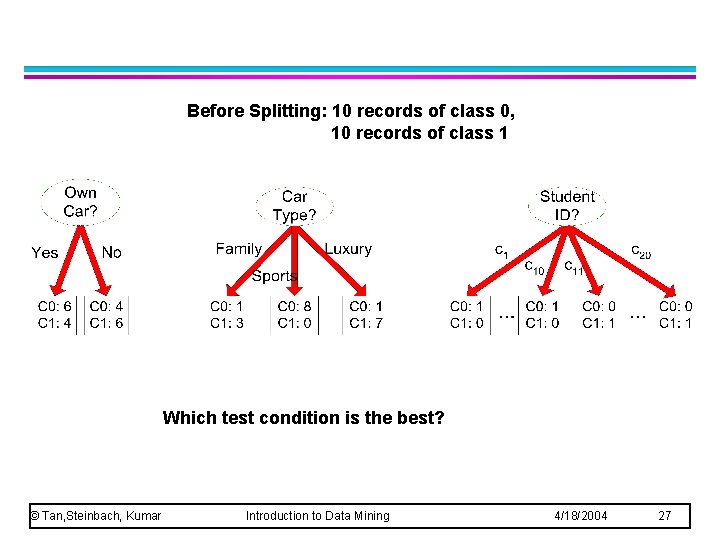

Before splitting: 10 records of class 0 and 10 records of class 1. Which test condition is the best?

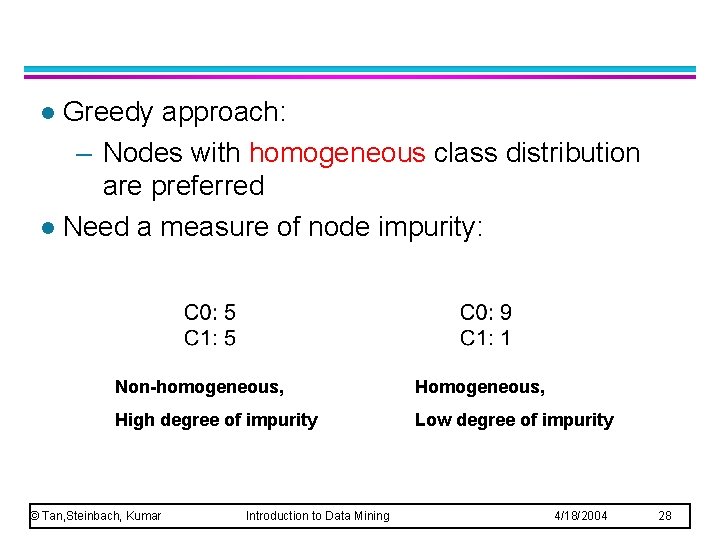

- Greedy approach: nodes with a homogeneous class distribution are preferred.
- Need a measure of node impurity: a non-homogeneous node has a high degree of impurity; a homogeneous node has a low degree of impurity.

Measures of node impurity:
- Gini index
- Entropy
- Misclassification error

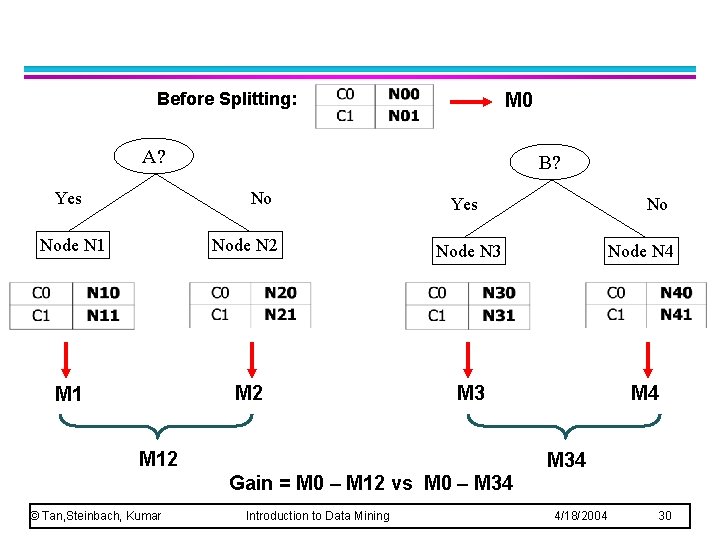

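Before splitting, the parent node has impurity $M_0$. Splitting on A? (Yes → node N1, No → node N2) gives child impurities $M_1$ and $M_2$, whose weighted combination is $M_{12}$; splitting on B? (Yes → node N3, No → node N4) gives $M_3$ and $M_4$, combined into $M_{34}$. Compare $\text{Gain} = M_0 - M_{12}$ vs. $M_0 - M_{34}$ and choose the split with the larger gain.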

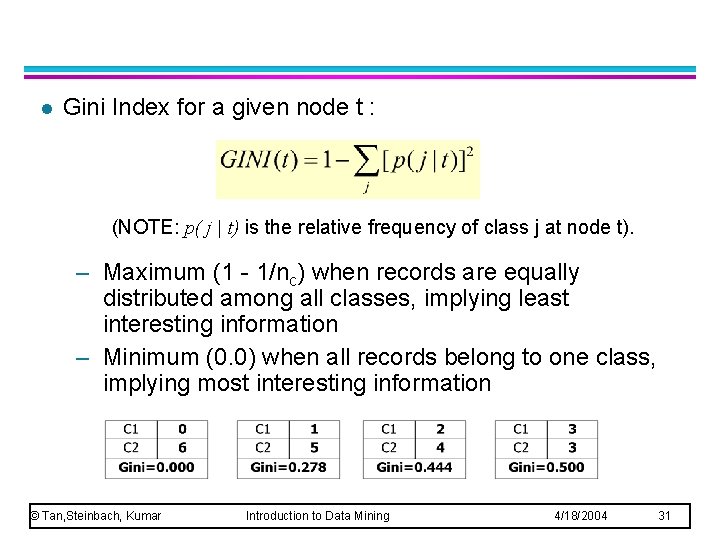

Gini index for a given node $t$:

$$\mathrm{GINI}(t) = 1 - \sum_j \left[p(j \mid t)\right]^2$$

(NOTE: $p(j \mid t)$ is the relative frequency of class $j$ at node $t$.)
- Maximum $(1 - 1/n_c)$, where $n_c$ is the number of classes, when records are equally distributed among all classes, implying least interesting information.
- Minimum (0.0) when all records belong to one class, implying most interesting information.

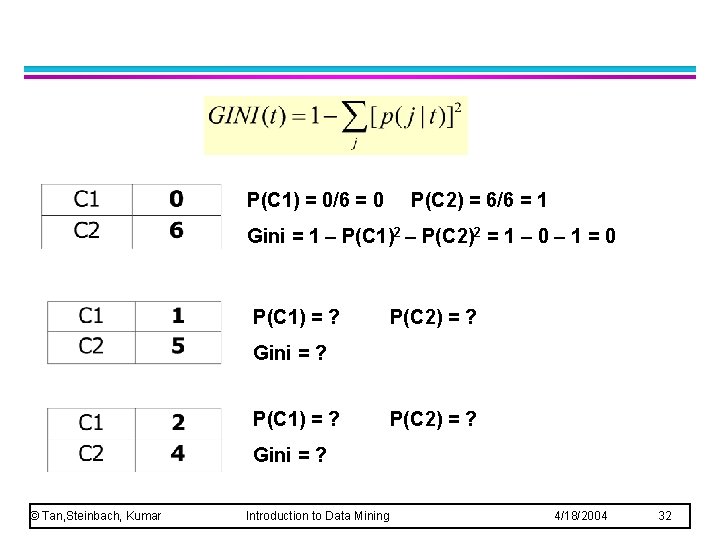

- $P(C_1) = 0/6 = 0$, $P(C_2) = 6/6 = 1$: $\text{Gini} = 1 - P(C_1)^2 - P(C_2)^2 = 1 - 0 - 1 = 0$
- $P(C_1) = ?$, $P(C_2) = ?$: $\text{Gini} = ?$

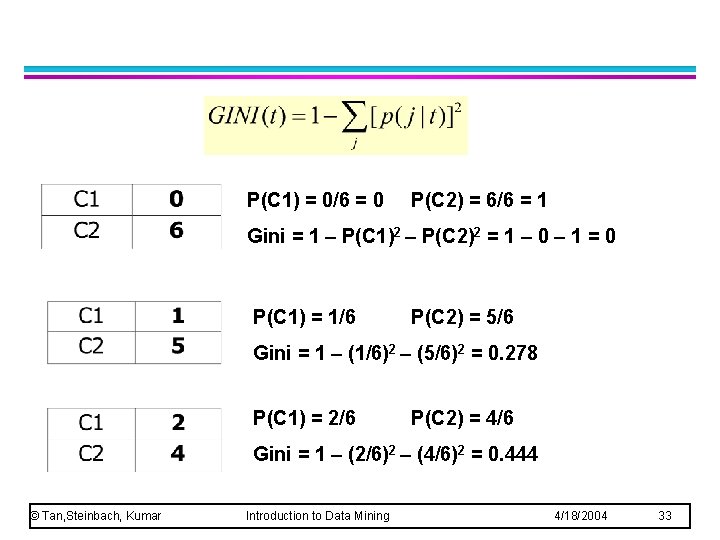

- $P(C_1) = 0/6 = 0$, $P(C_2) = 6/6 = 1$: $\text{Gini} = 1 - P(C_1)^2 - P(C_2)^2 = 1 - 0 - 1 = 0$
- $P(C_1) = 1/6$, $P(C_2) = 5/6$: $\text{Gini} = 1 - (1/6)^2 - (5/6)^2 = 0.278$
- $P(C_1) = 2/6$, $P(C_2) = 4/6$: $\text{Gini} = 1 - (2/6)^2 - (4/6)^2 = 0.444$
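The same three computations as a short sketch, taking a node's class counts (e.g. `[1, 5]` for one record of $C_1$ and five of $C_2$) and applying $1 - \sum_j p(j \mid t)^2$; the function name is illustrative:

```python
def gini(counts):
    """Gini index of a node from its class counts: 1 - sum_j p(j|t)^2."""
    n = sum(counts)
    return 1.0 - sum((c / n) ** 2 for c in counts)

for counts in ([0, 6], [1, 5], [2, 4]):
    print(counts, round(gini(counts), 3))
# [0, 6] 0.0
# [1, 5] 0.278
# [2, 4] 0.444
```

With two classes split evenly, e.g. `[3, 3]`, the function returns 0.5, the maximum $1 - 1/n_c$ for $n_c = 2$.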

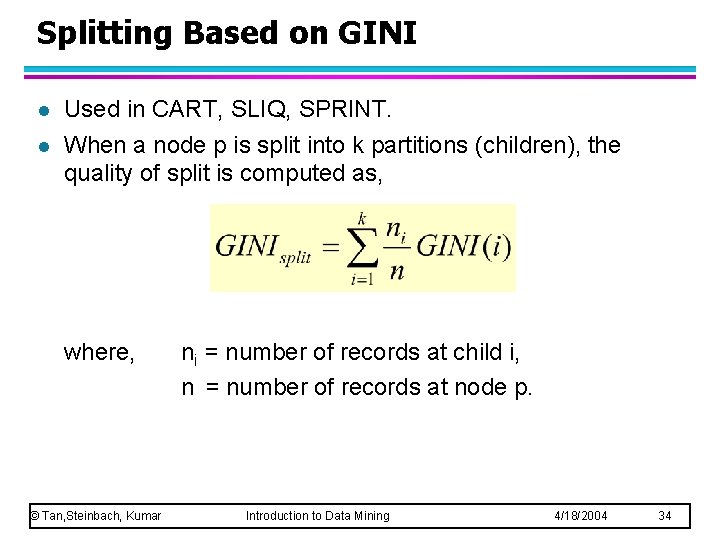

Splitting Based on GINI
- Used in CART, SLIQ, SPRINT.
- When a node $p$ is split into $k$ partitions (children), the quality of the split is computed as

$$\mathrm{GINI}_{split} = \sum_{i=1}^{k} \frac{n_i}{n}\,\mathrm{GINI}(i)$$

where $n_i$ = number of records at child $i$ and $n$ = number of records at node $p$.

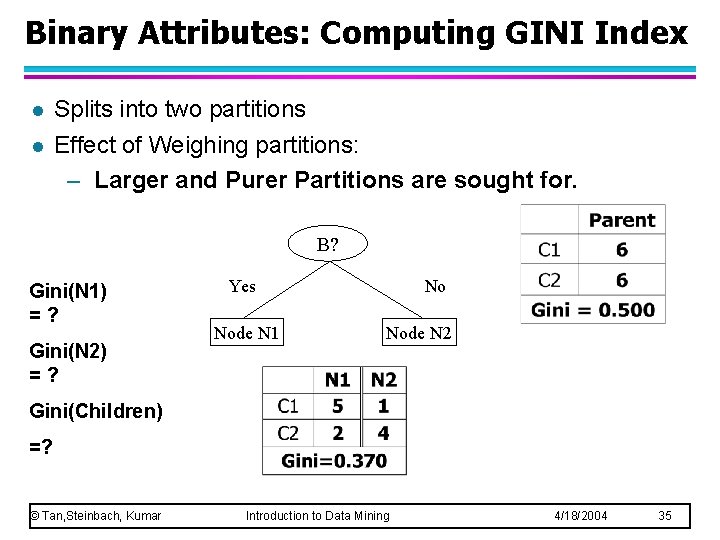

Binary Attributes: Computing GINI Index
- Splits into two partitions.
- Effect of weighting partitions: larger and purer partitions are sought.

Split on B? (Yes → node N1, No → node N2): Gini(N1) = ?, Gini(N2) = ?, Gini(Children) = ?

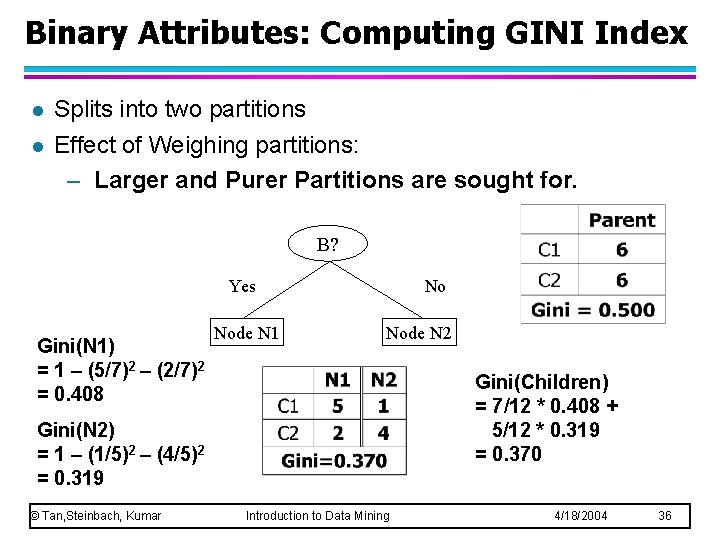

Binary Attributes: Computing GINI Index

Split on B? (Yes → node N1, No → node N2):
- $\text{Gini}(N_1) = 1 - (5/7)^2 - (2/7)^2 = 0.408$
- $\text{Gini}(N_2) = 1 - (1/5)^2 - (4/5)^2 = 0.320$
- $\text{Gini}(\text{Children}) = 7/12 \times 0.408 + 5/12 \times 0.320 = 0.371$
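A sketch of $\mathrm{GINI}_{split}$ for this example. The class counts N1 = (5, 2) and N2 = (1, 4) are read off the fractions 5/7, 2/7 and 1/5, 4/5; the function names are illustrative.

```python
def gini(counts):
    n = sum(counts)
    return 1.0 - sum((c / n) ** 2 for c in counts)

def gini_split(children):
    """Weighted Gini of a split: sum over children of (n_i / n) * GINI(i)."""
    n = sum(sum(child) for child in children)
    return sum(sum(child) / n * gini(child) for child in children)

n1, n2 = [5, 2], [1, 4]   # class counts (C1, C2) at N1 and N2
print(round(gini(n1), 3), round(gini(n2), 3), round(gini_split([n1, n2]), 3))
# 0.408 0.32 0.371
```

Weighting by $n_i / n$ is what makes larger, purer children count for more.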

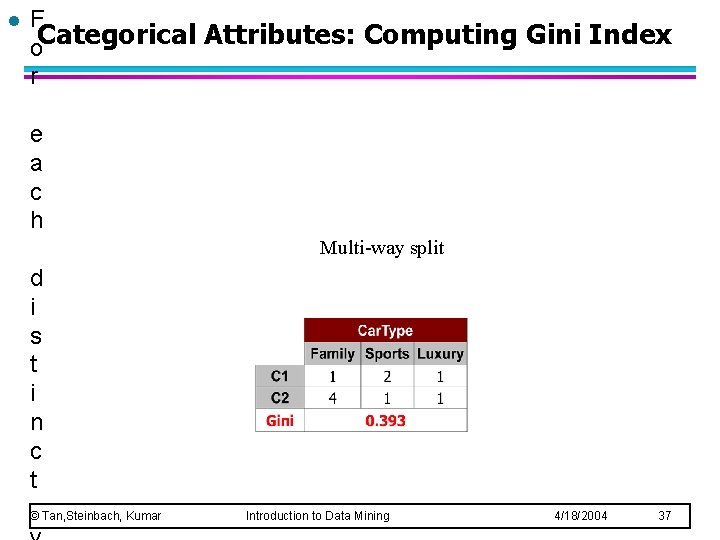

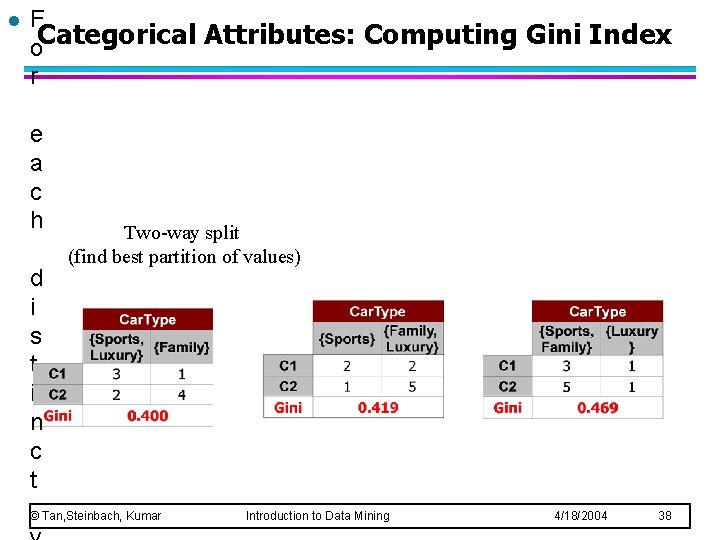

Categorical Attributes: Computing Gini Index
- For each distinct value, gather the counts for each class (a count matrix).
- Multi-way split

- Two-way split (find the best partition of values)
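A sketch comparing both options from a count matrix. The matrix below (value → counts of $C_1$, $C_2$) is hypothetical, chosen only for illustration: the multi-way split uses one partition per value, while the two-way split merges the counts of the grouped values and keeps the partition with the lowest weighted Gini.

```python
from itertools import combinations

def gini(counts):
    n = sum(counts)
    return 1.0 - sum((c / n) ** 2 for c in counts)

def weighted_gini(groups):
    """GINI_split: each group's Gini weighted by its share of the records."""
    n = sum(sum(g) for g in groups)
    return sum(sum(g) / n * gini(g) for g in groups)

# Hypothetical count matrix: attribute value -> [count of C1, count of C2].
counts = {"Family": [1, 4], "Sports": [2, 1], "Luxury": [1, 1]}

def merged(values):
    """Class counts of the partition that groups several attribute values together."""
    return [sum(counts[v][j] for v in values) for j in range(2)]

def two_way_splits(values):
    """Each partition into two non-empty groups, generated once (first value stays left)."""
    first, rest = values[0], values[1:]
    for r in range(len(rest)):
        for extra in combinations(rest, r):
            left = [first, *extra]
            yield left, [v for v in values if v not in left]

# Multi-way split: one partition per distinct value.
multiway = weighted_gini(list(counts.values()))

# Two-way split: score every partition of the values and keep the lowest Gini.
scored = [(weighted_gini([merged(left), merged(right)]), left, right)
          for left, right in two_way_splits(list(counts))]
best_score, best_left, best_right = min(scored)
print(round(multiway, 3), round(best_score, 3), best_left, best_right)
# 0.393 0.4 ['Family'] ['Sports', 'Luxury']
```

With these particular counts the multi-way split scores slightly lower than the best two-way split; other count matrices can reverse that.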

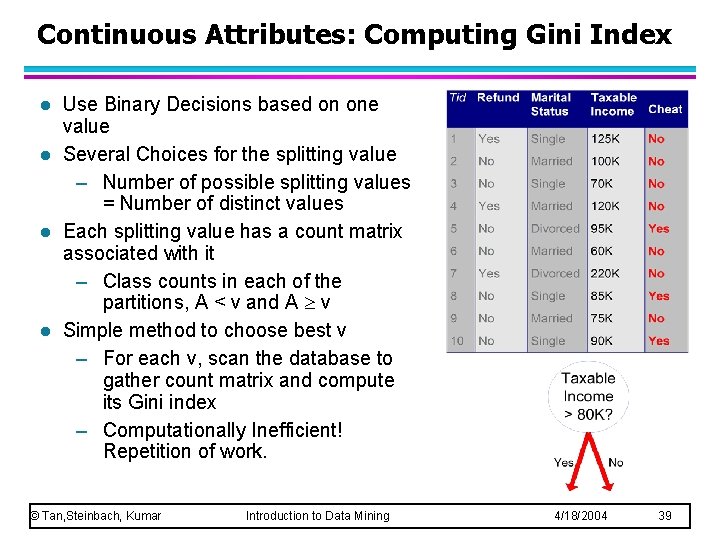

Continuous Attributes: Computing Gini Index
- Use binary decisions based on one value.
- Several choices for the splitting value:
  - Number of possible splitting values = number of distinct values.
- Each splitting value has a count matrix associated with it:
  - Class counts in each of the partitions, $A < v$ and $A \ge v$.
- Simple method to choose the best $v$:
  - For each $v$, scan the database to gather the count matrix and compute its Gini index.
  - Computationally inefficient! Repetition of work.

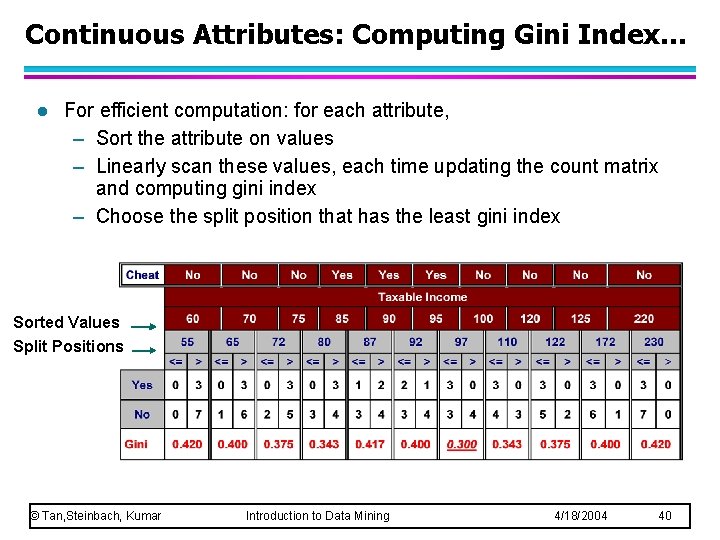

Continuous Attributes: Computing Gini Index...
- For efficient computation, for each attribute:
  - Sort the attribute on values.
  - Linearly scan these values, each time updating the count matrix and computing the Gini index.
  - Choose the split position that has the least Gini index.
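A sketch of the sort-then-scan method: one $O(n \log n)$ sort, then a single pass that moves one record at a time from the $A \ge v$ partition to the $A < v$ partition and updates the count matrix incrementally instead of rescanning the data. Using the midpoint between neighbouring values as $v$ is an assumption, and the sample below is hypothetical.

```python
def gini(counts):
    n = sum(counts)
    return 1.0 - sum((c / n) ** 2 for c in counts)

def best_threshold(values, labels, classes):
    """Return (v, weighted Gini) of the best binary split A < v vs. A >= v."""
    pairs = sorted(zip(values, labels))
    n = len(pairs)
    left = {c: 0 for c in classes}
    right = {c: 0 for c in classes}
    for _, y in pairs:                     # initially every record is in the A >= v side
        right[y] += 1
    best_v, best_g = None, float("inf")
    for i in range(n - 1):
        v, y = pairs[i]
        left[y] += 1                       # move one record across and update the counts
        right[y] -= 1
        next_v = pairs[i + 1][0]
        if v == next_v:                    # no split position between equal values
            continue
        n_left = i + 1
        g = (n_left / n * gini(list(left.values()))
             + (n - n_left) / n * gini(list(right.values())))
        if g < best_g:
            best_v, best_g = (v + next_v) / 2, g   # midpoint between neighbours (assumption)
    return best_v, best_g

# Hypothetical sample: a continuous attribute (in thousands) and a two-class label.
income = [125, 100, 70, 120, 95, 60, 220, 85, 75, 90]
label = ["No", "No", "No", "No", "Yes", "No", "No", "Yes", "No", "Yes"]
print(best_threshold(income, label, ["No", "Yes"]))
# (97.5, 0.3)
```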

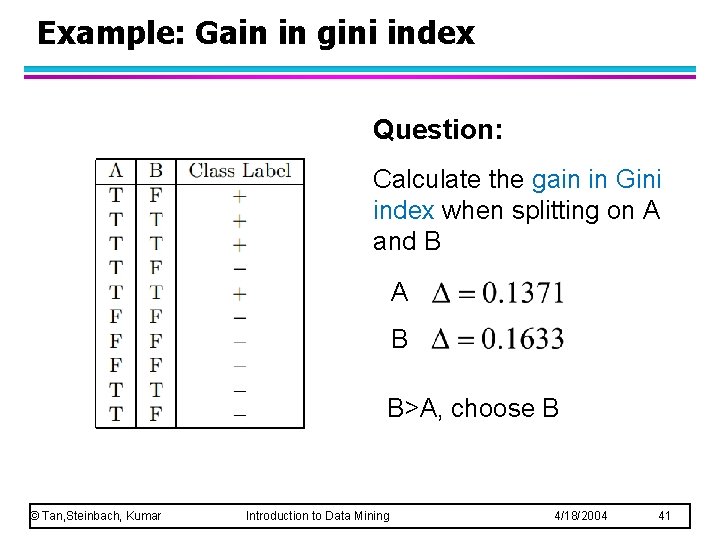

Example: Gain in Gini index

Question: calculate the gain in Gini index when splitting on A and on B.

Answer: the gain for B is larger than the gain for A, so choose B.

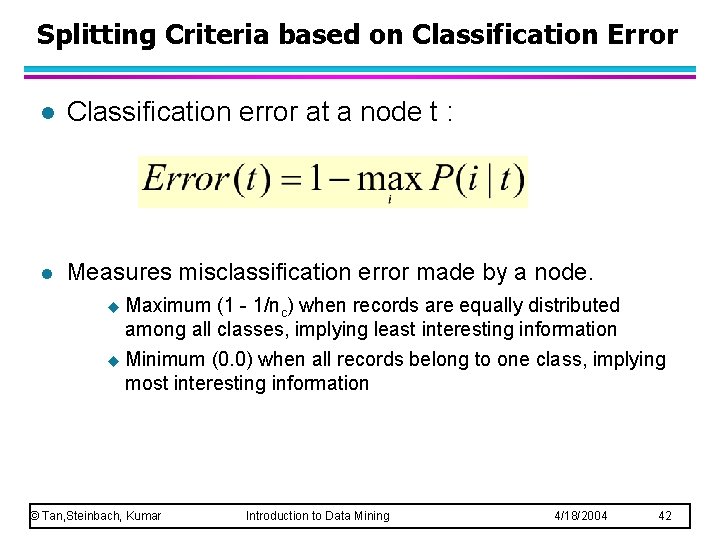

Splitting Criteria based on Classification Error
- Classification error at a node $t$:

$$\mathrm{Error}(t) = 1 - \max_i P(i \mid t)$$

- Measures the misclassification error made by a node.
  - Maximum $(1 - 1/n_c)$ when records are equally distributed among all classes, implying least interesting information.
  - Minimum (0.0) when all records belong to one class, implying most interesting information.

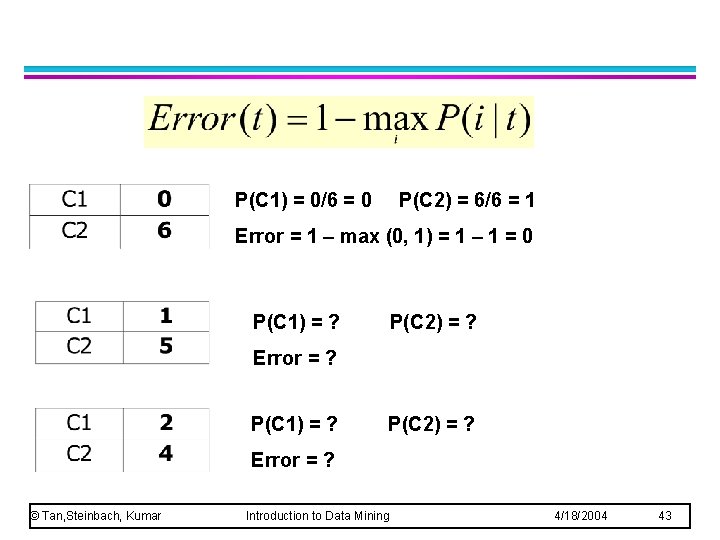

- $P(C_1) = 0/6 = 0$, $P(C_2) = 6/6 = 1$: $\text{Error} = 1 - \max(0, 1) = 1 - 1 = 0$
- $P(C_1) = ?$, $P(C_2) = ?$: $\text{Error} = ?$

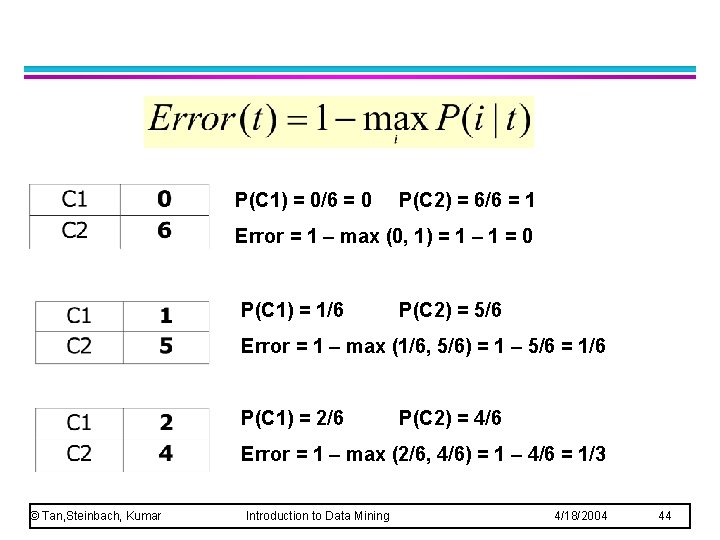

- $P(C_1) = 0/6 = 0$, $P(C_2) = 6/6 = 1$: $\text{Error} = 1 - \max(0, 1) = 1 - 1 = 0$
- $P(C_1) = 1/6$, $P(C_2) = 5/6$: $\text{Error} = 1 - \max(1/6, 5/6) = 1 - 5/6 = 1/6$
- $P(C_1) = 2/6$, $P(C_2) = 4/6$: $\text{Error} = 1 - \max(2/6, 4/6) = 1 - 4/6 = 1/3$
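The same examples computed exactly with Python's `fractions.Fraction`, so the results print as the fractions above rather than rounded decimals; the function name is illustrative:

```python
from fractions import Fraction

def classification_error(counts):
    """Error(t) = 1 - max_i P(i|t), computed exactly with fractions."""
    n = sum(counts)
    return 1 - Fraction(max(counts), n)

for counts in ([0, 6], [1, 5], [2, 4]):
    print(counts, classification_error(counts))
# [0, 6] 0
# [1, 5] 1/6
# [2, 4] 1/3
```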

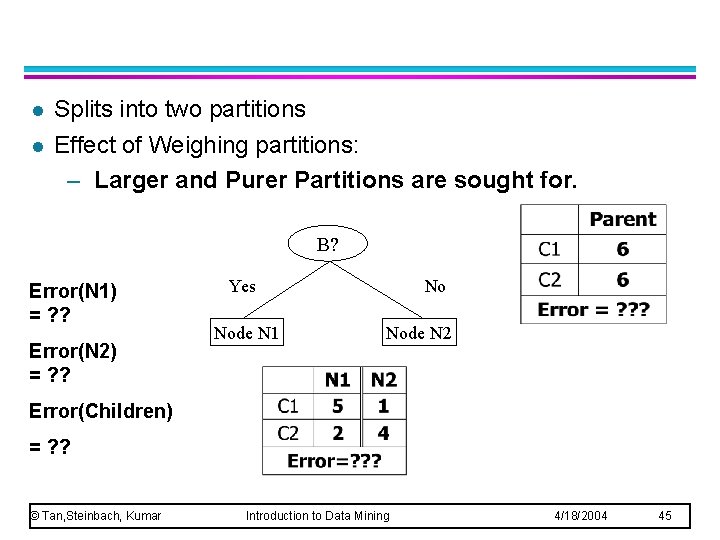

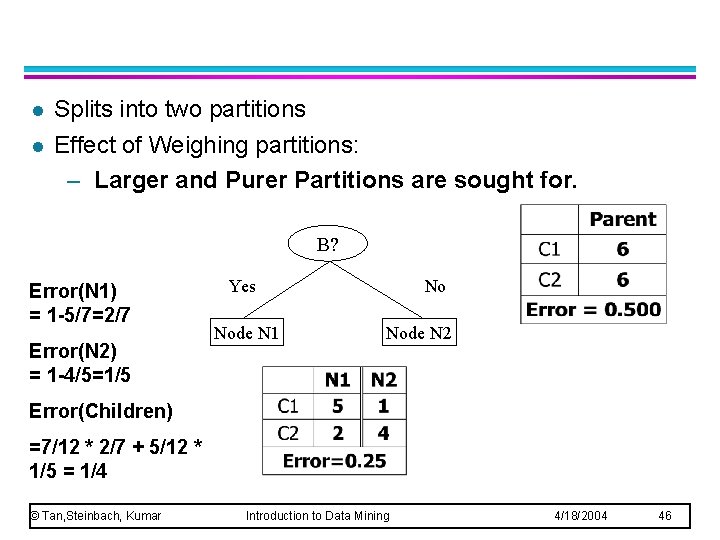

- Splits into two partitions.
- Effect of weighting partitions: larger and purer partitions are sought.

Split on B? (Yes → node N1, No → node N2): Error(N1) = ?, Error(N2) = ?, Error(Children) = ?

Split on B? (Yes → node N1, No → node N2):
- $\text{Error}(N_1) = 1 - 5/7 = 2/7$
- $\text{Error}(N_2) = 1 - 4/5 = 1/5$
- $\text{Error}(\text{Children}) = 7/12 \times 2/7 + 5/12 \times 1/5 = 1/4$
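The children's error is weighted exactly as in $\mathrm{GINI}_{split}$, with only the impurity measure swapped. A sketch using the class counts N1 = (5, 2) and N2 = (1, 4) implied by the fractions above (function names illustrative):

```python
from fractions import Fraction

def classification_error(counts):
    return 1 - Fraction(max(counts), sum(counts))

def weighted_error(children):
    """Error of a split: each child's error weighted by its share n_i / n of the records."""
    n = sum(sum(c) for c in children)
    return sum(Fraction(sum(c), n) * classification_error(c) for c in children)

n1, n2 = [5, 2], [1, 4]   # class counts (C1, C2) at N1 and N2
print(classification_error(n1), classification_error(n2), weighted_error([n1, n2]))
# 2/7 1/5 1/4
```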

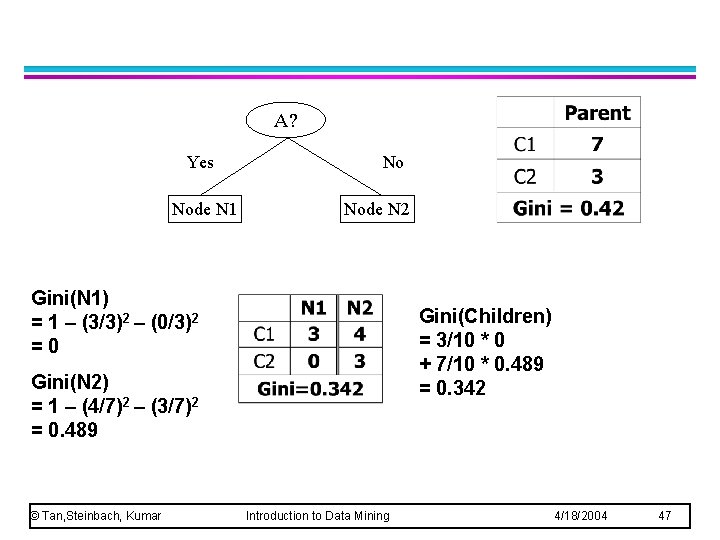

Split on A? (Yes → node N1, No → node N2):
- $\text{Gini}(N_1) = 1 - (3/3)^2 - (0/3)^2 = 0$
- $\text{Gini}(N_2) = 1 - (4/7)^2 - (3/7)^2 = 0.490$
- $\text{Gini}(\text{Children}) = 3/10 \times 0 + 7/10 \times 0.490 = 0.343$
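Putting Gini and classification error side by side on this split shows how the two measures can disagree. The parent's class counts are not listed here, so the sketch derives them by adding the children's counts, N1 = (3, 0) and N2 = (4, 3), giving (7, 3). Exact fractions are used throughout; function names are illustrative.

```python
from fractions import Fraction

def gini(counts):
    n = sum(counts)
    return 1 - sum(Fraction(c, n) ** 2 for c in counts)

def error(counts):
    return 1 - Fraction(max(counts), sum(counts))

def weighted(measure, children):
    n = sum(sum(c) for c in children)
    return sum(Fraction(sum(c), n) * measure(c) for c in children)

n1, n2 = [3, 0], [4, 3]                    # class counts (C1, C2) at N1 and N2
parent = [a + b for a, b in zip(n1, n2)]   # [7, 3]: parent counts obtained by summing the children

for name, measure in (("Gini", gini), ("Error", error)):
    print(name, float(measure(parent)), round(float(weighted(measure, [n1, n2])), 3))
# Gini 0.42 0.343
# Error 0.3 0.3
```

In this example Gini drops after the split while the classification error stays at 3/10, so a criterion based only on classification error would see no gain.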

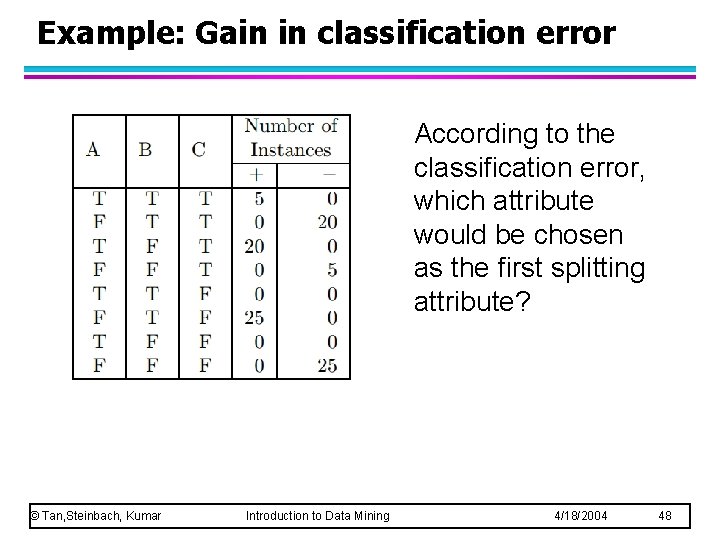

Example: Gain in classification error

According to the classification error, which attribute would be chosen as the first splitting attribute?

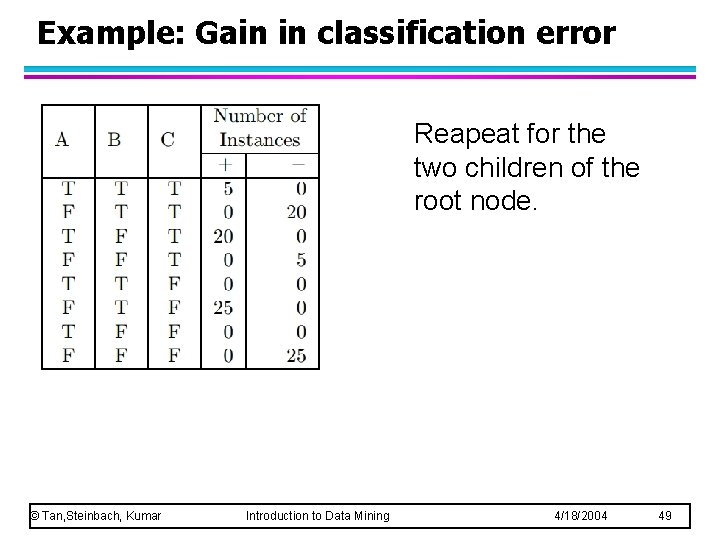

Example: Gain in classification error

Repeat for the two children of the root node.

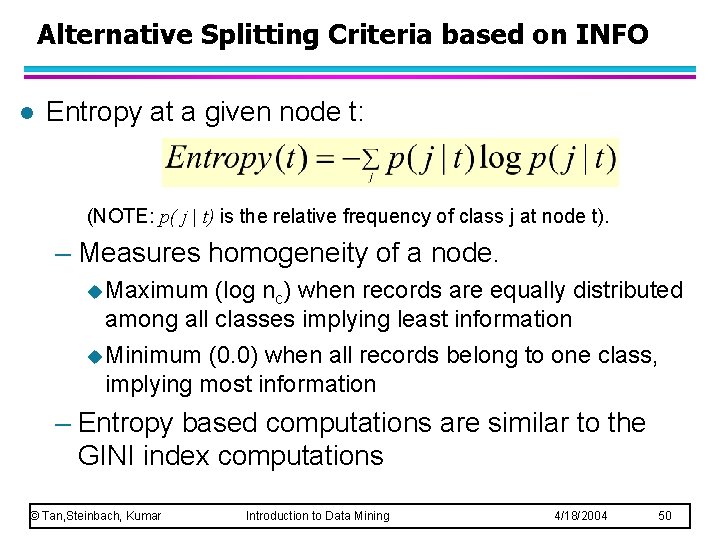

Alternative Splitting Criteria based on INFO
- Entropy at a given node $t$:

$$\mathrm{Entropy}(t) = -\sum_j p(j \mid t) \log_2 p(j \mid t)$$

(NOTE: $p(j \mid t)$ is the relative frequency of class $j$ at node $t$.)
- Measures homogeneity of a node.
  - Maximum $(\log_2 n_c)$ when records are equally distributed among all classes, implying least information.
  - Minimum (0.0) when all records belong to one class, implying most information.
- Entropy-based computations are similar to the GINI index computations.

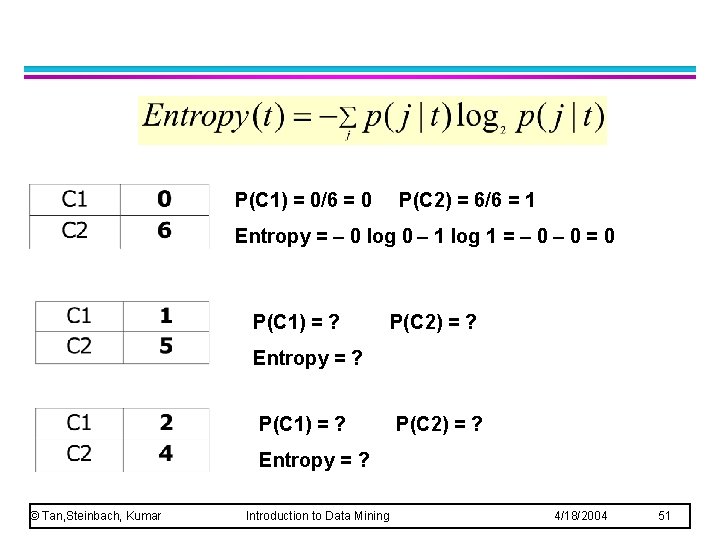

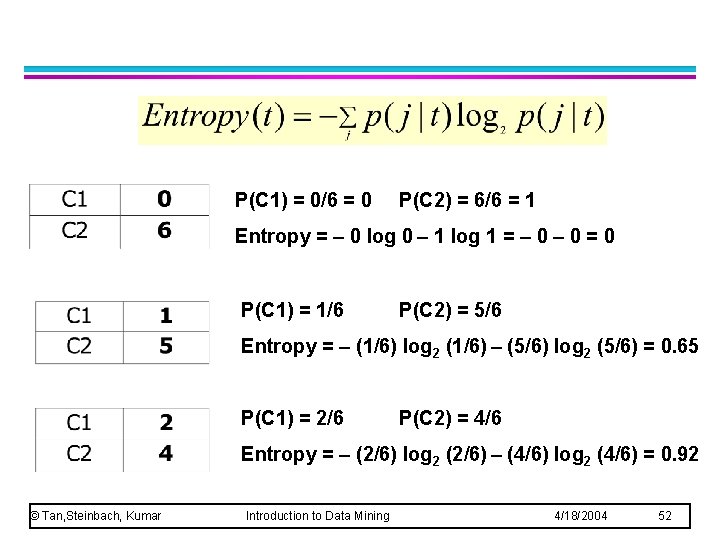

- $P(C_1) = 0/6 = 0$, $P(C_2) = 6/6 = 1$: $\text{Entropy} = -0 \log_2 0 - 1 \log_2 1 = 0$
- $P(C_1) = ?$, $P(C_2) = ?$: $\text{Entropy} = ?$

- $P(C_1) = 0/6 = 0$, $P(C_2) = 6/6 = 1$: $\text{Entropy} = -0 \log_2 0 - 1 \log_2 1 = 0$ (using the convention $0 \log 0 = 0$)
- $P(C_1) = 1/6$, $P(C_2) = 5/6$: $\text{Entropy} = -(1/6) \log_2 (1/6) - (5/6) \log_2 (5/6) = 0.65$
- $P(C_1) = 2/6$, $P(C_2) = 4/6$: $\text{Entropy} = -(2/6) \log_2 (2/6) - (4/6) \log_2 (4/6) = 0.92$
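A sketch of the entropy computation from class counts; classes with zero records are skipped, which implements the $0 \log 0 = 0$ convention. The function name is illustrative.

```python
import math

def entropy(counts):
    """Entropy(t) = -sum_j p(j|t) log2 p(j|t); empty classes are skipped (0 log 0 = 0)."""
    n = sum(counts)
    return sum(-(c / n) * math.log2(c / n) for c in counts if c > 0)

for counts in ([0, 6], [1, 5], [2, 4], [3, 3]):
    print(counts, round(entropy(counts), 2))
# [0, 6] 0.0
# [1, 5] 0.65
# [2, 4] 0.92
# [3, 3] 1.0
```

The last line shows the maximum $\log_2 n_c = 1$ for two equally represented classes.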

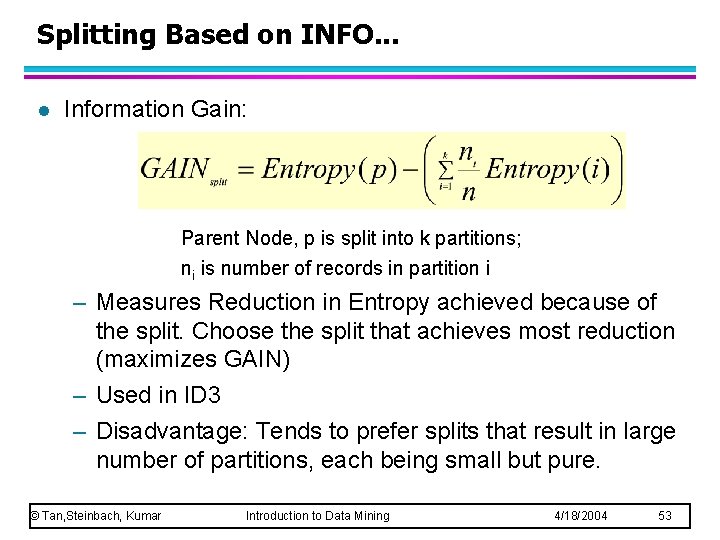

Splitting Based on INFO...
- Information gain:

$$\mathrm{GAIN}_{split} = \mathrm{Entropy}(p) - \sum_{i=1}^{k} \frac{n_i}{n}\,\mathrm{Entropy}(i)$$

The parent node $p$ is split into $k$ partitions; $n_i$ is the number of records in partition $i$ and $n$ the number of records at $p$.
  - Measures the reduction in entropy achieved because of the split. Choose the split that achieves the most reduction (maximizes GAIN).
  - Used in ID3.
  - Disadvantage: tends to prefer splits that result in a large number of partitions, each being small but pure.
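A sketch of $\mathrm{GAIN}_{split}$ for a parent with 10 records of each class, as in the "Before splitting" example. The three candidate splits are hypothetical: a perfectly pure split, a split that leaves the class mix unchanged, and one in between.

```python
import math

def entropy(counts):
    n = sum(counts)
    return sum(-(c / n) * math.log2(c / n) for c in counts if c > 0)

def info_gain(parent, children):
    """GAIN_split = Entropy(parent) - sum_i (n_i / n) * Entropy(child i)."""
    n = sum(parent)
    return entropy(parent) - sum(sum(c) / n * entropy(c) for c in children)

parent = [10, 10]
print(info_gain(parent, [[10, 0], [0, 10]]))           # 1.0  (pure children)
print(info_gain(parent, [[5, 5], [5, 5]]))             # 0.0  (class mix unchanged)
print(round(info_gain(parent, [[8, 2], [2, 8]]), 3))   # 0.278
```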

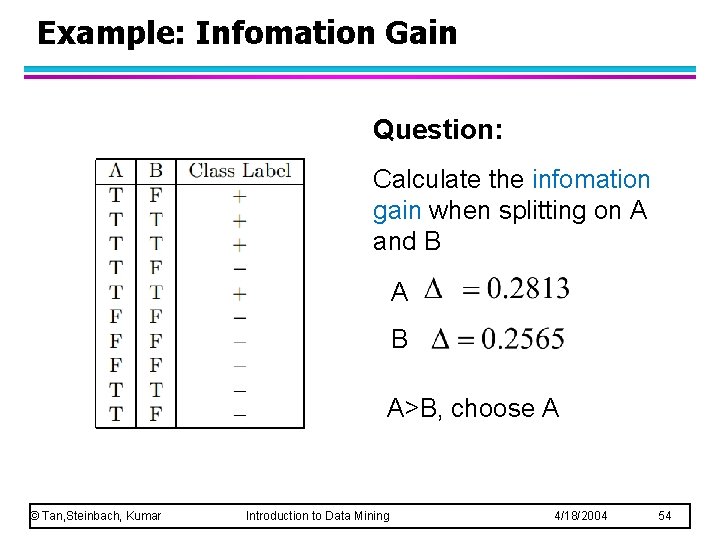

Example: Information Gain

Question: calculate the information gain when splitting on A and on B.

Answer: the gain for A is larger than the gain for B, so choose A.

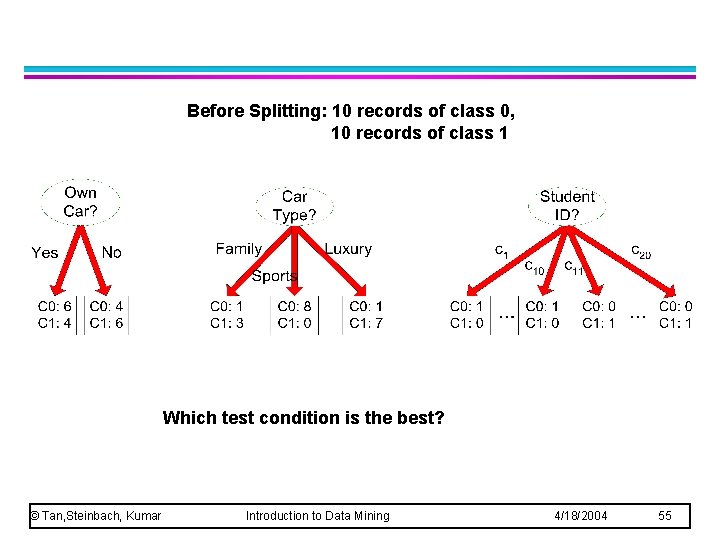


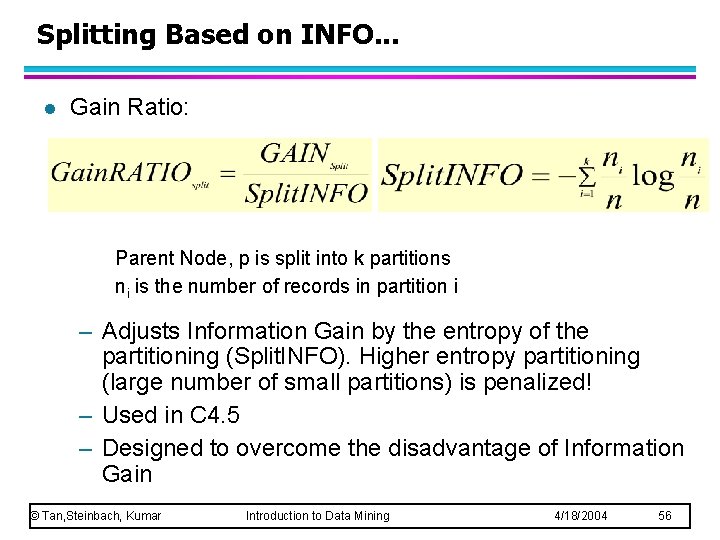

Splitting Based on INFO...
- Gain ratio:

$$\mathrm{GainRATIO}_{split} = \frac{\mathrm{GAIN}_{split}}{\mathrm{SplitINFO}}, \qquad \mathrm{SplitINFO} = -\sum_{i=1}^{k} \frac{n_i}{n} \log_2 \frac{n_i}{n}$$

The parent node $p$ is split into $k$ partitions; $n_i$ is the number of records in partition $i$.
  - Adjusts information gain by the entropy of the partitioning (SplitINFO). Higher-entropy partitioning (a large number of small partitions) is penalized!
  - Used in C4.5.
  - Designed to overcome the disadvantage of information gain.
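A sketch of how SplitINFO penalizes many small partitions, again for a parent with 10 records of each class. Both splits below are perfectly pure, so both have information gain 1.0; the second is a hypothetical split into 20 one-record partitions, as splitting on a unique record ID would produce. Note that SplitINFO is simply the entropy of the partition sizes, and that a "split" with a single partition would make it zero.

```python
import math

def entropy(counts):
    n = sum(counts)
    return sum(-(c / n) * math.log2(c / n) for c in counts if c > 0)

def gain_ratio(parent, children):
    n = sum(parent)
    gain = entropy(parent) - sum(sum(c) / n * entropy(c) for c in children)
    split_info = entropy([sum(c) for c in children])   # SplitINFO = entropy of the partition sizes
    return gain / split_info

parent = [10, 10]
binary = [[10, 0], [0, 10]]
singletons = [[1, 0]] * 10 + [[0, 1]] * 10    # hypothetical: one partition per record
print(gain_ratio(parent, binary))                 # 1.0
print(round(gain_ratio(parent, singletons), 3))   # 0.231
```

Information gain alone cannot distinguish the two splits; dividing by SplitINFO ($\log_2 20 \approx 4.32$ for the singletons) ranks the binary split higher.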

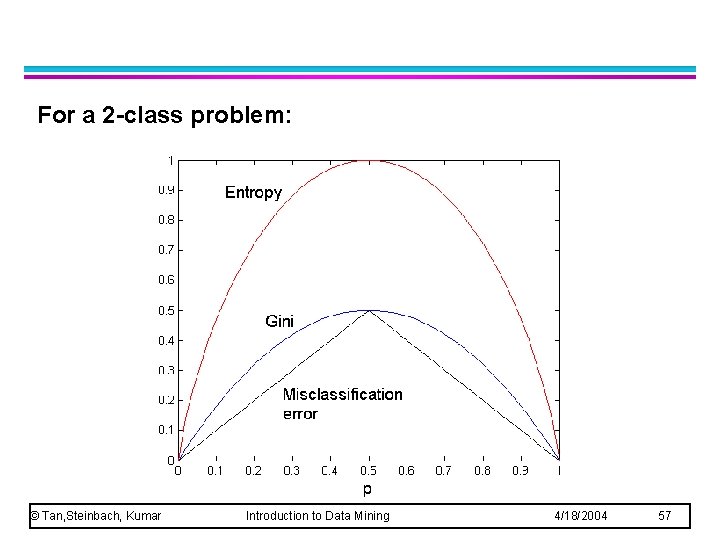

For a 2-class problem:
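For a 2-class problem, each impurity measure depends only on the fraction $p$ of records in one class (the other class has $1 - p$). A sketch that evaluates all three measures for a given $p$; the function name is illustrative:

```python
import math

def two_class_measures(p):
    """Gini, entropy and classification error for a node with class fractions (p, 1 - p)."""
    q = 1 - p
    gini = 1 - p * p - q * q
    entropy = sum(-x * math.log2(x) for x in (p, q) if x > 0)
    error = 1 - max(p, q)
    return gini, entropy, error

for p in (0.0, 0.1, 0.3, 0.5):
    print(p, [round(m, 3) for m in two_class_measures(p)])
```

All three measures are 0 for a pure node ($p = 0$ or $p = 1$), are symmetric in $p$ and $1 - p$, and reach their maximum at $p = 0.5$.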


- Stop expanding a node when all the records belong to the same class.
- Stop expanding a node when all the records have similar attribute values.
- Early termination (to be discussed later).
