Data Mining Classification Basic Concepts Decision Trees and

Data Mining Classification: Basic Concepts, Decision Trees, and Model Evaluation Lecture Notes for Chapter 4 Introduction to Data Mining by Tan, Steinbach, Kumar © Tan, Steinbach, Kumar Introduction to Data Mining 4/18/2004 1

Classification: Definition
• Given a collection of records (training set)
  – Each record contains a set of attributes; one of the attributes is the class.
• Find a model for the class attribute as a function of the values of the other attributes.
• Goal: previously unseen records should be assigned a class as accurately as possible.
  – A test set is used to determine the accuracy of the model. Usually, the given data set is divided into training and test sets, with the training set used to build the model and the test set used to validate it.

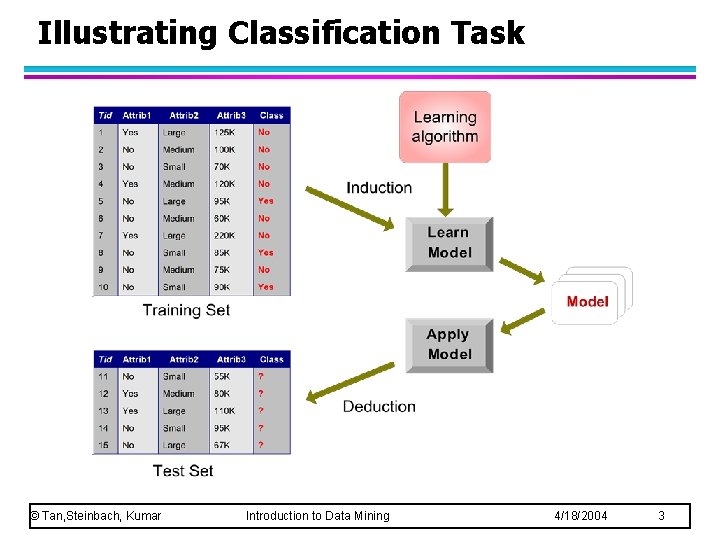

Illustrating Classification Task

Examples of Classification Task
• Predicting tumor cells as benign or malignant
• Classifying credit card transactions as legitimate or fraudulent
• Classifying secondary structures of protein as alpha-helix, beta-sheet, or random coil
• Categorizing news stories as finance, weather, entertainment, sports, etc.

Classification Techniques
• Decision Tree based Methods
• Rule-based Methods
• Memory based reasoning
• Neural Networks
• Naïve Bayes and Bayesian Belief Networks
• Support Vector Machines

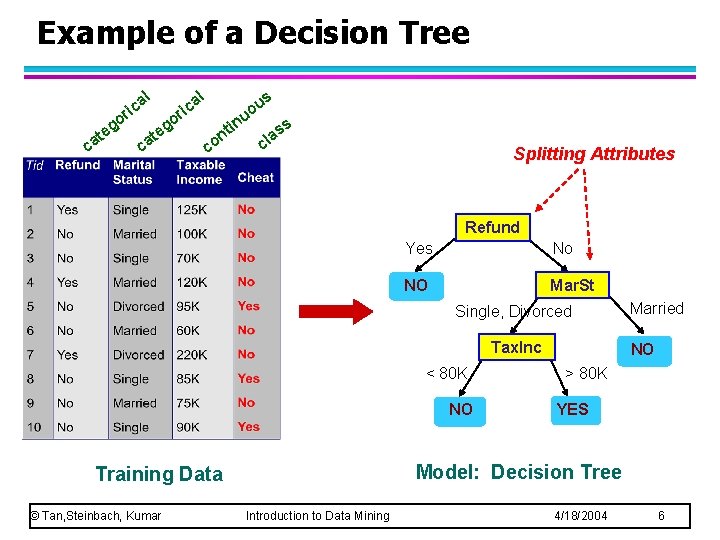

Example of a Decision Tree
Training data attribute types: categorical, categorical, continuous, class
Model: Decision Tree (splitting attributes: Refund, MarSt, TaxInc)
Refund
  Yes → NO
  No → MarSt
    Single, Divorced → TaxInc
      < 80K → NO
      > 80K → YES
    Married → NO

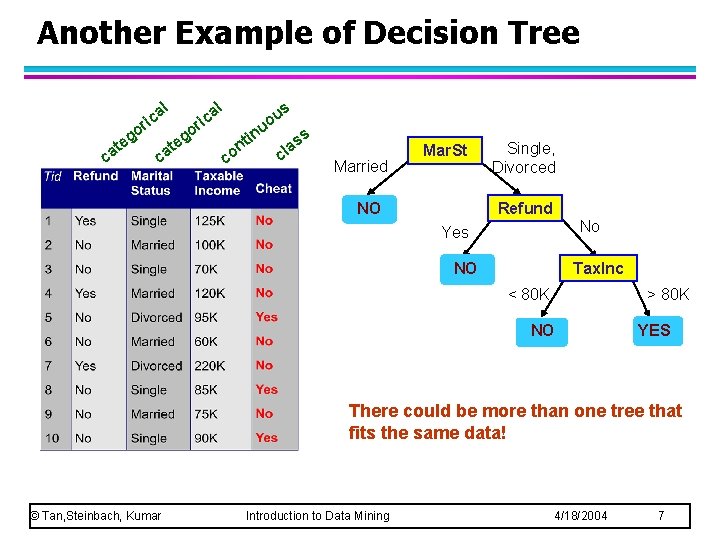

Another Example of Decision Tree
Training data attribute types: categorical, categorical, continuous, class
MarSt
  Married → NO
  Single, Divorced → Refund
    Yes → NO
    No → TaxInc
      < 80K → NO
      > 80K → YES
There could be more than one tree that fits the same data!

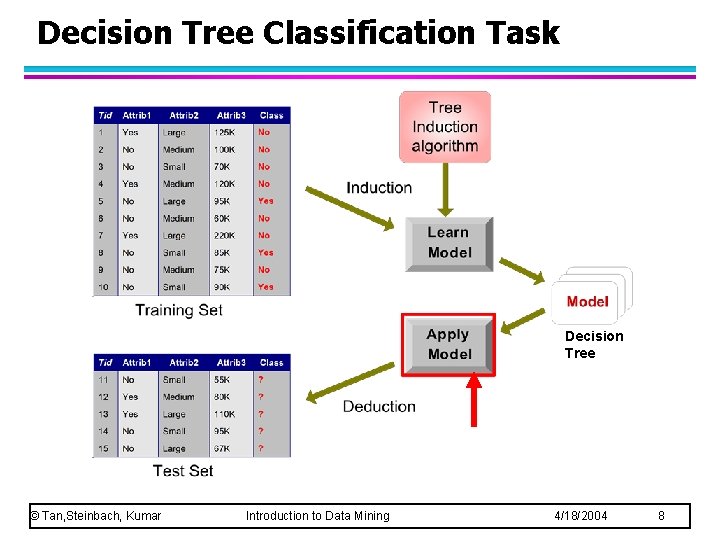

Decision Tree Classification Task

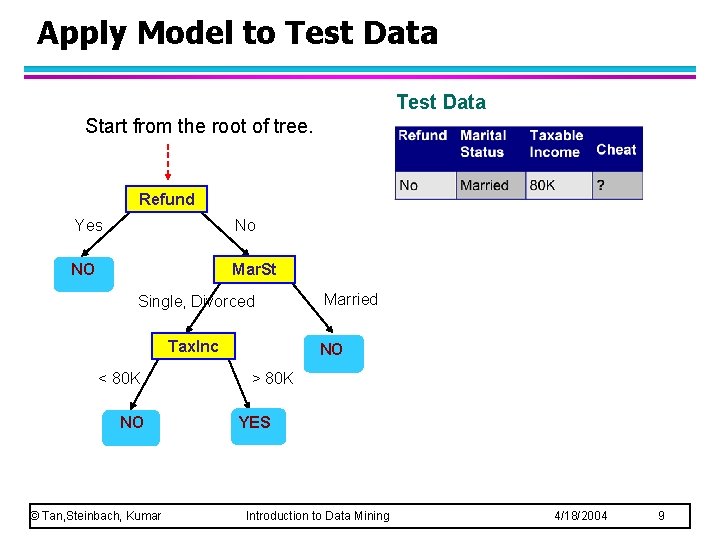

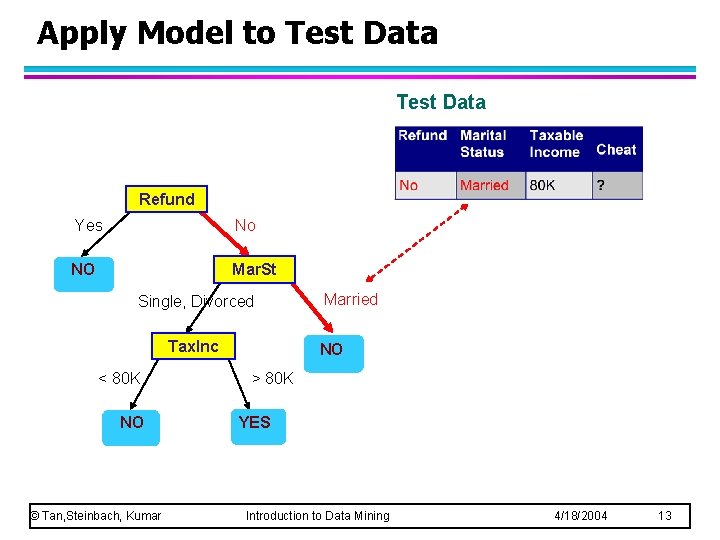

Apply Model to Test Data
Start from the root of the tree.
Refund
  Yes → NO
  No → MarSt
    Single, Divorced → TaxInc
      < 80K → NO
      > 80K → YES
    Married → NO

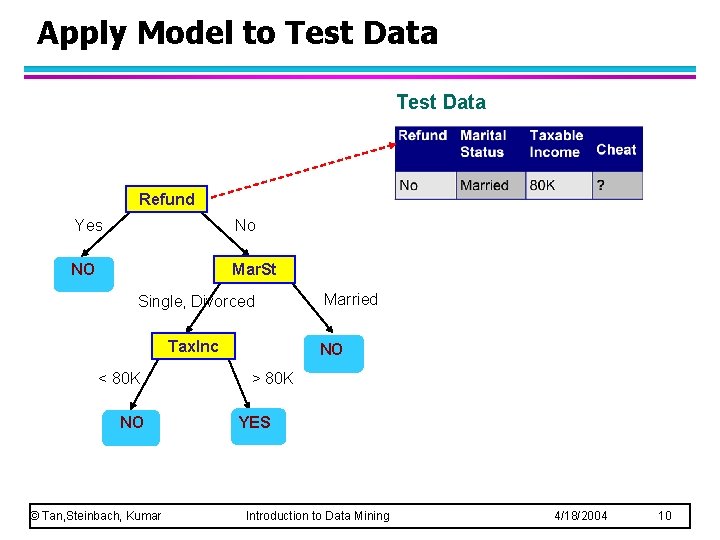


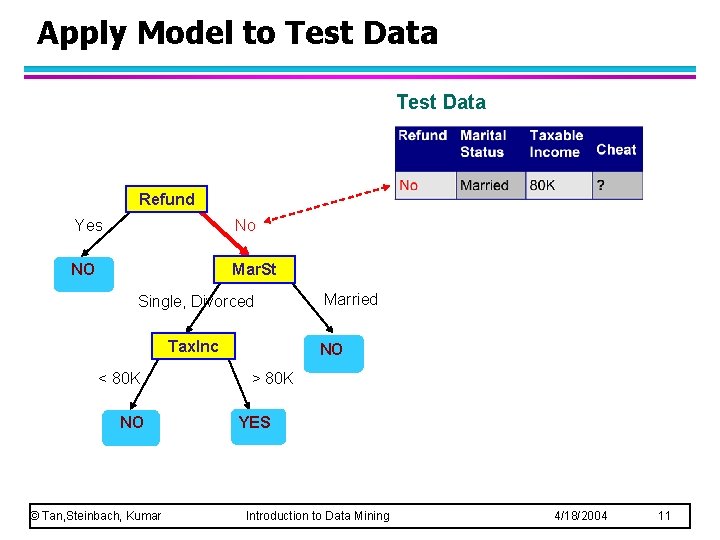


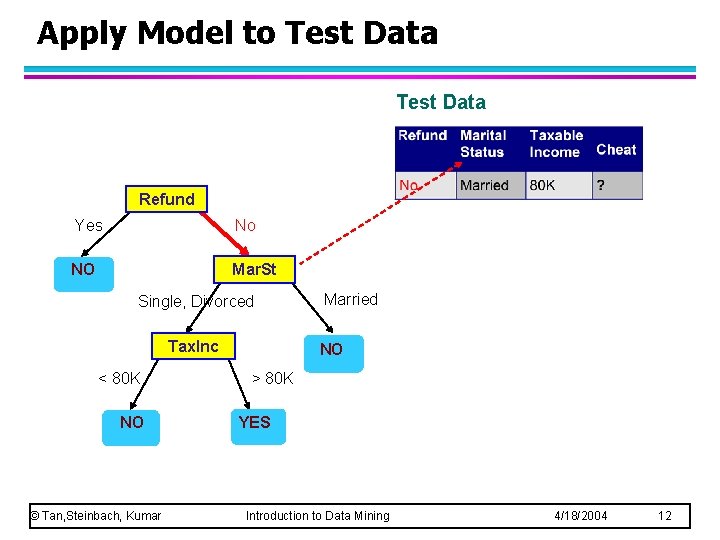

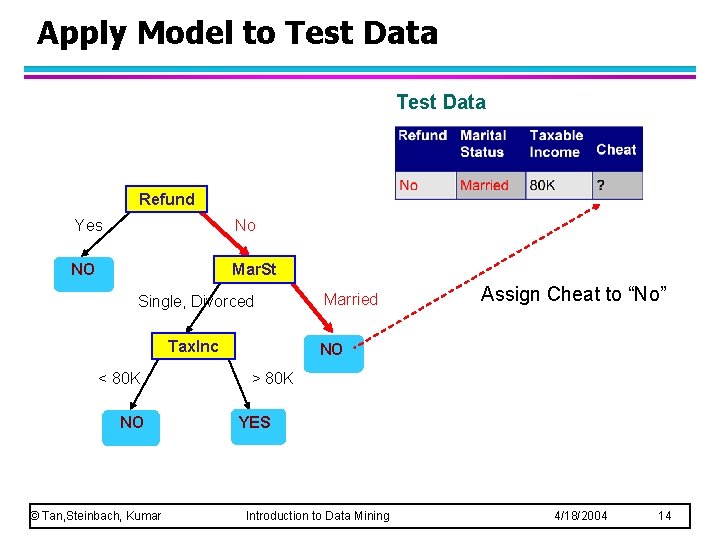

Apply Model to Test Data
Traversal ends at a leaf: assign Cheat to “No”.

Decision Tree Classification Task

Decision Tree Induction
• Many algorithms:
  – Hunt’s Algorithm (one of the earliest)
  – CART
  – ID3, C4.5
  – SLIQ, SPRINT

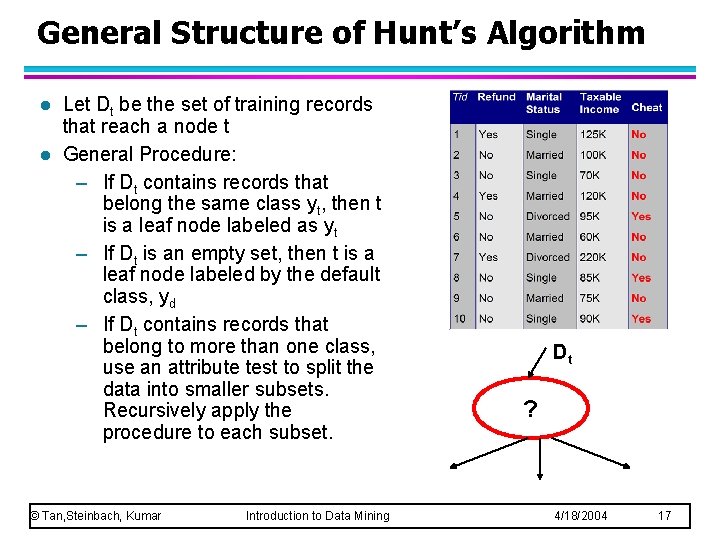

General Structure of Hunt’s Algorithm
• Let D_t be the set of training records that reach a node t
• General procedure:
  – If D_t contains records that all belong to the same class y_t, then t is a leaf node labeled as y_t
  – If D_t is an empty set, then t is a leaf node labeled by the default class, y_d
  – If D_t contains records that belong to more than one class, use an attribute test to split the data into smaller subsets. Recursively apply the procedure to each subset.
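The recursive procedure can be sketched in a few lines of Python. This is a minimal illustration, not the authors' implementation: the record layout (a list of `(attribute_dict, label)` pairs), the function name `hunt`, and the rule of testing attributes in the order given are all assumptions made for the example. A real inducer would pick the attribute test with an impurity measure.

```python
from collections import Counter

def hunt(records, attributes, default_class):
    """Grow a tree from (attribute_dict, label) pairs; returns a label or a nested dict."""
    if not records:
        # D_t is empty: leaf labeled with the default class
        return default_class
    labels = [label for _, label in records]
    majority = Counter(labels).most_common(1)[0][0]
    if len(set(labels)) == 1 or not attributes:
        # Pure node (or no attribute left to test): leaf labeled with the majority class
        return majority
    attr = attributes[0]          # hypothetical choice: simply take the next attribute
    remaining = attributes[1:]
    children = {}
    for value in sorted({rec[attr] for rec, _ in records}):
        subset = [(rec, label) for rec, label in records if rec[attr] == value]
        # Recursively apply the procedure to each subset
        children[value] = hunt(subset, remaining, majority)
    return {attr: children}

# Illustrative toy records (not the training data from the slides)
records = [
    ({"Refund": "Yes", "MarSt": "Single"}, "No"),
    ({"Refund": "No", "MarSt": "Married"}, "No"),
    ({"Refund": "No", "MarSt": "Single"}, "Yes"),
]
tree = hunt(records, ["Refund", "MarSt"], "No")
```

The majority class of the parent is passed down as the default class, which is one common way to label an empty child.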

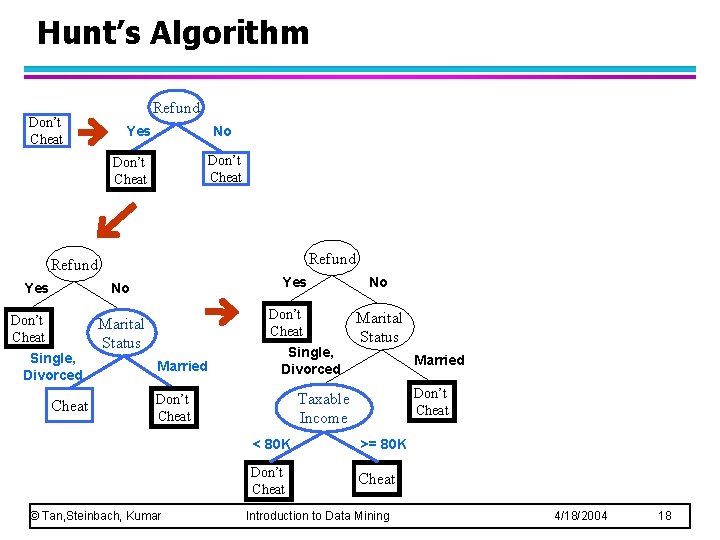

Hunt’s Algorithm
[Figure: the tree grown step by step, starting from a single Don’t Cheat leaf, then splitting on Refund (Yes / No), then on Marital Status (Single, Divorced / Married), then on Taxable Income (< 80K / >= 80K), with leaves labeled Cheat or Don’t Cheat.]

Tree Induction
• Greedy strategy.
  – Split the records based on an attribute test that optimizes a certain criterion.
• Issues
  – Determine how to split the records
    • How to specify the attribute test condition?
    • How to determine the best split?
  – Determine when to stop splitting


How to Specify Test Condition?
• Depends on attribute types
  – Binary
  – Nominal
  – Ordinal
  – Continuous
• Depends on number of ways to split
  – 2-way split
  – Multi-way split

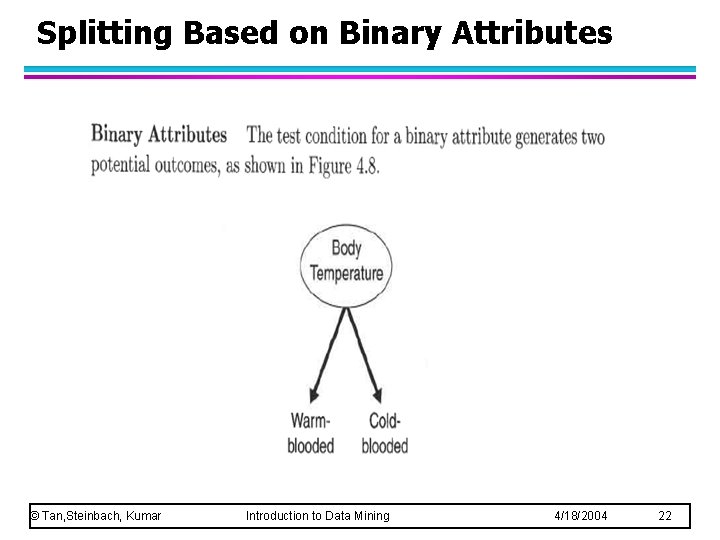

Splitting Based on Binary Attributes

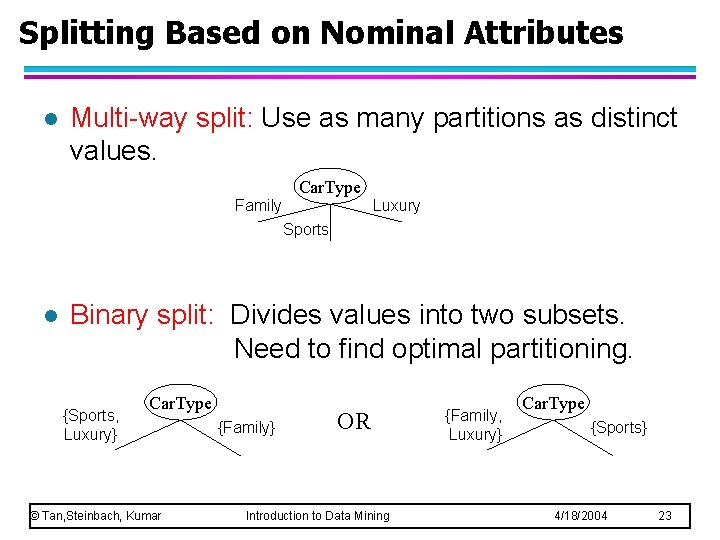

Splitting Based on Nominal Attributes
• Multi-way split: Use as many partitions as distinct values.
  CarType: Family | Sports | Luxury
• Binary split: Divides values into two subsets. Need to find optimal partitioning.
  CarType: {Sports, Luxury} vs. {Family}, OR CarType: {Family, Luxury} vs. {Sports}

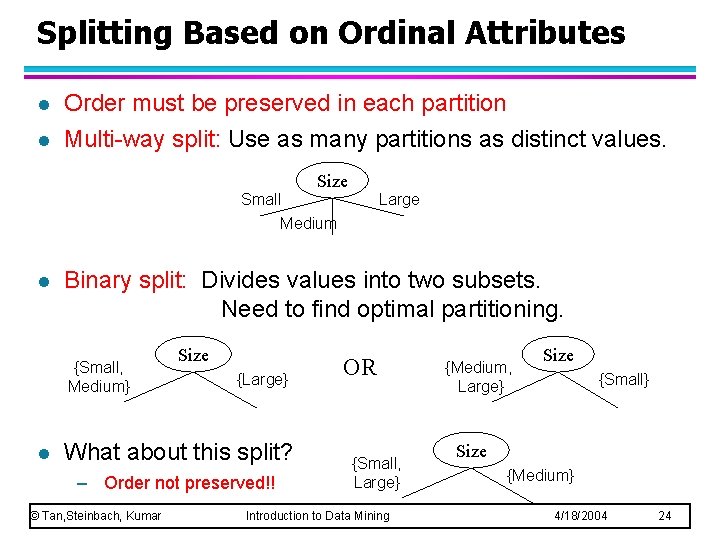

Splitting Based on Ordinal Attributes
• Order must be preserved in each partition
• Multi-way split: Use as many partitions as distinct values.
  Size: Small | Medium | Large
• Binary split: Divides values into two subsets. Need to find optimal partitioning.
  Size: {Small, Medium} vs. {Large}, OR Size: {Medium, Large} vs. {Small}
• What about this split? Size: {Small, Large} vs. {Medium}
  – Order not preserved!!

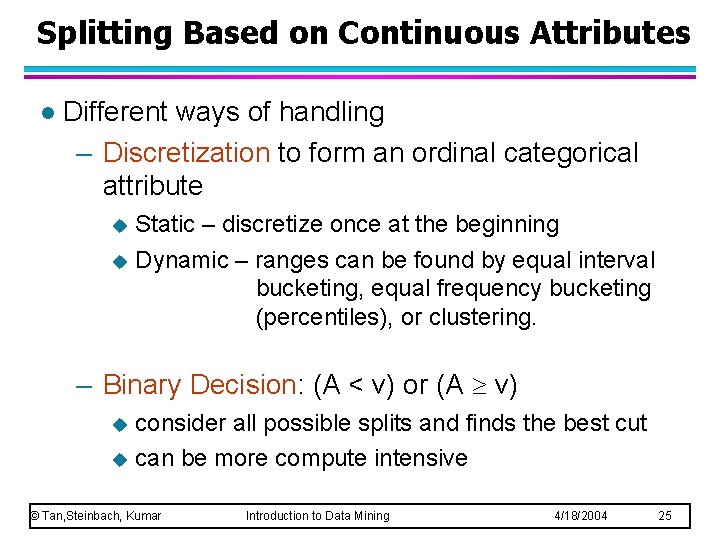

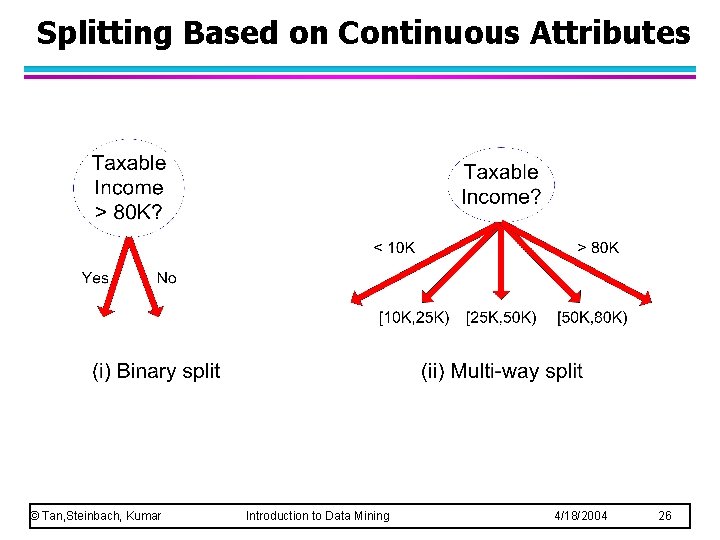

Splitting Based on Continuous Attributes
• Different ways of handling
  – Discretization to form an ordinal categorical attribute
    • Static: discretize once at the beginning
    • Dynamic: ranges can be found by equal interval bucketing, equal frequency bucketing (percentiles), or clustering.
  – Binary decision: (A < v) or (A ≥ v)
    • Consider all possible splits and find the best cut
    • Can be more compute intensive

Splitting Based on Continuous Attributes

Tree Induction
• Greedy strategy.
  – Split the records based on an attribute test that optimizes a certain criterion.
• Issues
  – Determine how to split the records
    • How to specify the attribute test condition?
    • How to determine the best split?
  – Determine when to stop splitting

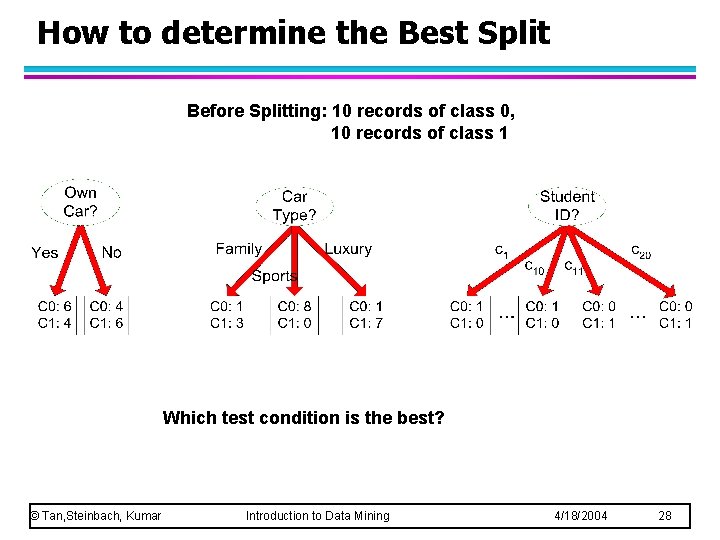

How to Determine the Best Split
Before splitting: 10 records of class 0, 10 records of class 1
Which test condition is the best?

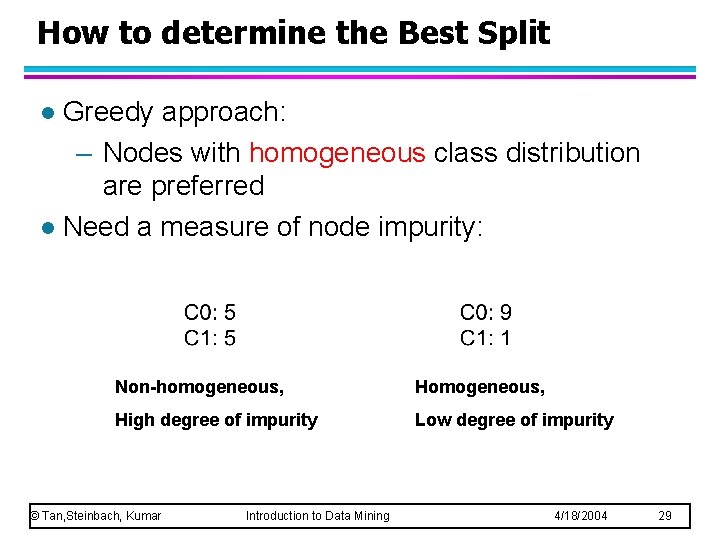

How to Determine the Best Split
• Greedy approach:
  – Nodes with homogeneous class distribution are preferred
• Need a measure of node impurity:
  – Non-homogeneous: high degree of impurity
  – Homogeneous: low degree of impurity

Measures of Node Impurity
• Gini Index
• Entropy
• Misclassification error

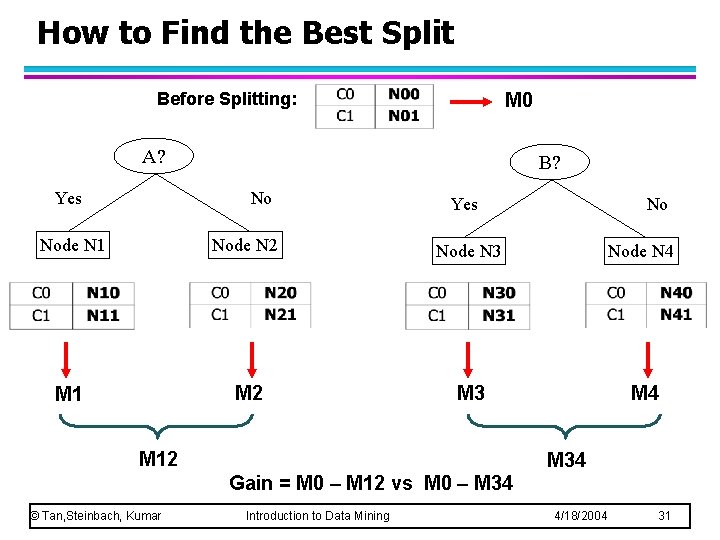

How to Find the Best Split
Before splitting: impurity M0
Split on A? (Yes / No): Node N1 (M1) and Node N2 (M2), combined impurity M12
Split on B? (Yes / No): Node N3 (M3) and Node N4 (M4), combined impurity M34
Gain = M0 – M12 vs. M0 – M34

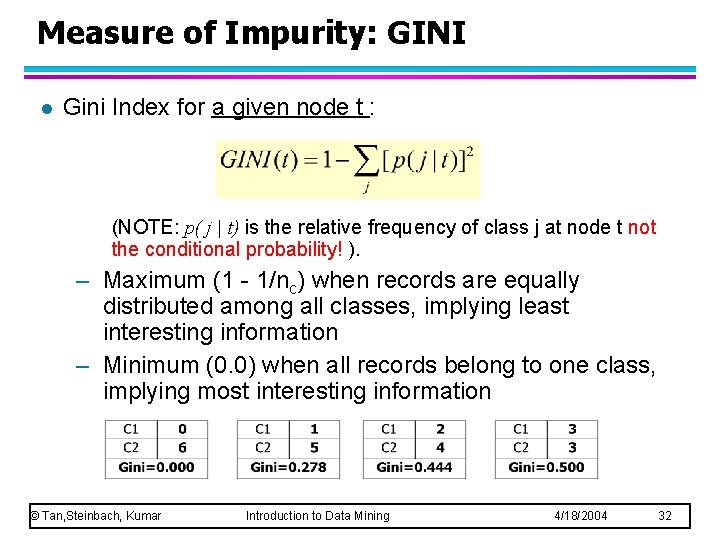

Measure of Impurity: GINI
• Gini index for a given node t:
  $GINI(t) = 1 - \sum_j [p(j \mid t)]^2$
  (NOTE: p(j | t) is the relative frequency of class j at node t, not the conditional probability!)
  – Maximum ($1 - 1/n_c$, where $n_c$ is the number of classes) when records are equally distributed among all classes, implying least interesting information
  – Minimum (0.0) when all records belong to one class, implying most interesting information

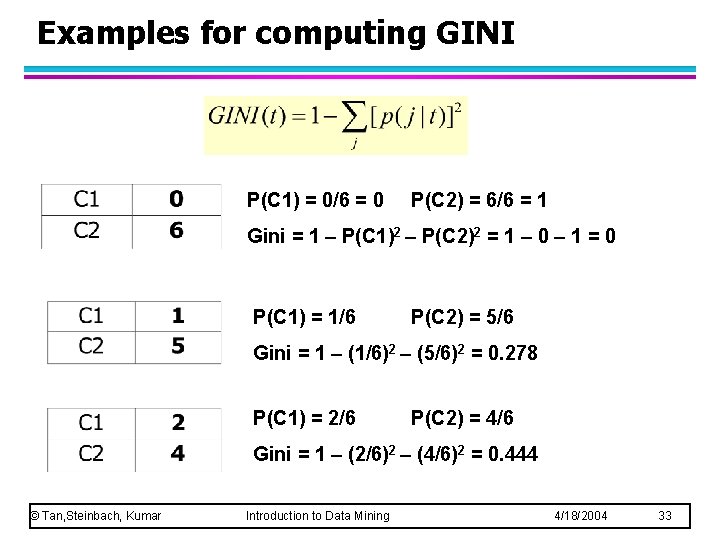

Examples for computing GINI
• P(C1) = 0/6 = 0, P(C2) = 6/6 = 1: $Gini = 1 - P(C1)^2 - P(C2)^2 = 1 - 0 - 1 = 0$
• P(C1) = 1/6, P(C2) = 5/6: $Gini = 1 - (1/6)^2 - (5/6)^2 = 0.278$
• P(C1) = 2/6, P(C2) = 4/6: $Gini = 1 - (2/6)^2 - (4/6)^2 = 0.444$
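The three examples can be checked with a short Python helper. The function name `gini` and the convention of passing raw class counts are assumptions made for this sketch.

```python
def gini(counts):
    """Gini index of a node, given the number of records in each class."""
    n = sum(counts)
    if n == 0:
        return 0.0
    # 1 minus the sum of squared relative class frequencies
    return 1.0 - sum((c / n) ** 2 for c in counts)

examples = {
    "C1=0, C2=6": gini([0, 6]),
    "C1=1, C2=5": gini([1, 5]),
    "C1=2, C2=4": gini([2, 4]),
}
for name, value in examples.items():
    print(f"{name}: Gini = {value:.3f}")
```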

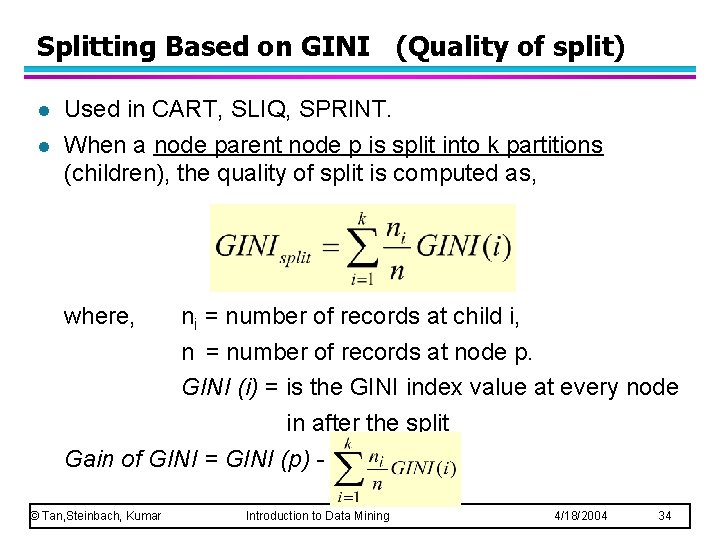

Splitting Based on GINI (Quality of split)
• Used in CART, SLIQ, SPRINT.
• When a parent node p is split into k partitions (children), the quality of the split is computed as
  $GINI_{split} = \sum_{i=1}^{k} \frac{n_i}{n} GINI(i)$
  where $n_i$ = number of records at child i, n = number of records at node p, and GINI(i) is the Gini index value at child i after the split.
• Gain of GINI = $GINI(p) - GINI_{split}$

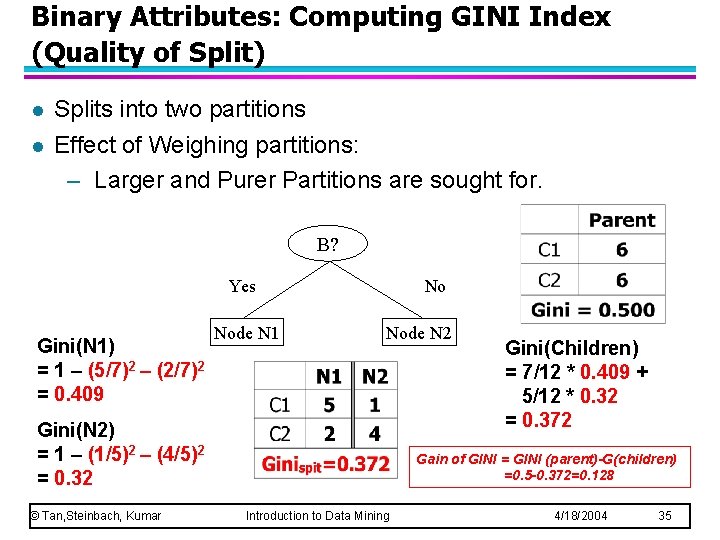

Binary Attributes: Computing GINI Index (Quality of Split)
• Splits into two partitions
• Effect of weighing partitions:
  – Larger and purer partitions are sought.
Split on B?: Yes → Node N1, No → Node N2
$Gini(N1) = 1 - (5/7)^2 - (2/7)^2 = 0.408$
$Gini(N2) = 1 - (1/5)^2 - (4/5)^2 = 0.32$
$Gini(Children) = 7/12 \times 0.408 + 5/12 \times 0.32 = 0.371$
Gain of GINI = GINI(parent) – GINI(children) = 0.5 – 0.371 = 0.129
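A small sketch of the weighted computation, using the class counts implied by the fractions above (N1 holds 5 and 2 records, N2 holds 1 and 4, so the parent holds 6 and 6). The helper names are assumptions. Unrounded arithmetic gives about 0.3714 for the children and 0.1286 for the gain; values quoted to three decimals can differ in the last digit depending on when rounding is applied.

```python
def gini(counts):
    """Gini index of a node from its per-class record counts."""
    n = sum(counts)
    return 1.0 - sum((c / n) ** 2 for c in counts) if n else 0.0

def gini_split(children):
    """Weighted Gini of a split; each child is a list of per-class counts."""
    n = sum(sum(child) for child in children)
    return sum(sum(child) / n * gini(child) for child in children)

parent = [6, 6]
children = [[5, 2], [1, 4]]   # N1, N2
gain = gini(parent) - gini_split(children)
print(f"children: {gini_split(children):.4f}, gain: {gain:.4f}")
```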

Binary Attributes: Computing GINI Index (Quality of Split)

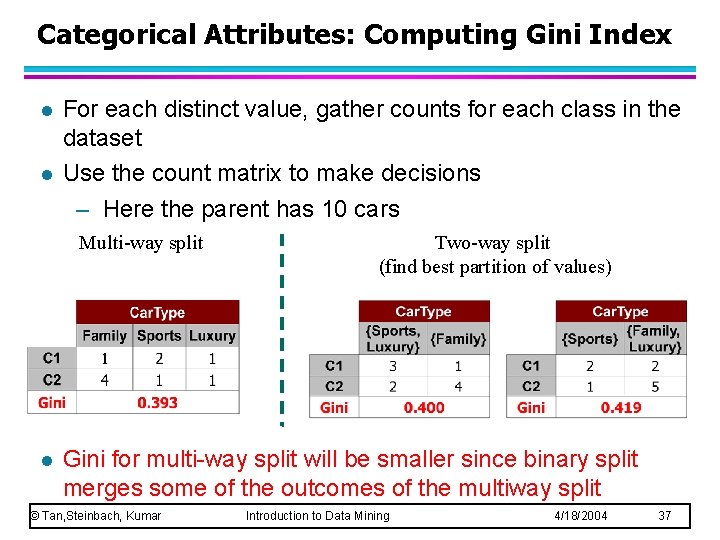

Categorical Attributes: Computing Gini Index
• For each distinct value, gather counts for each class in the dataset
• Use the count matrix to make decisions
  – Here the parent has 10 cars
• Multi-way split
• Two-way split (find best partition of values)
• Gini for the multi-way split will be smaller, since the binary split merges some of the outcomes of the multi-way split

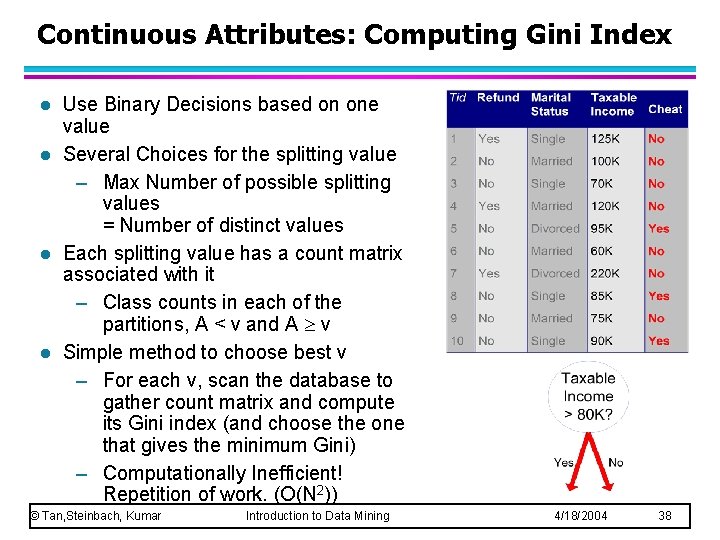

Continuous Attributes: Computing Gini Index
• Use binary decisions based on one value
• Several choices for the splitting value
  – Max number of possible splitting values = number of distinct values
• Each splitting value has a count matrix associated with it
  – Class counts in each of the partitions, A < v and A ≥ v
• Simple method to choose best v
  – For each v, scan the database to gather the count matrix and compute its Gini index (and choose the one that gives the minimum Gini)
  – Computationally inefficient! Repetition of work. ($O(N^2)$)

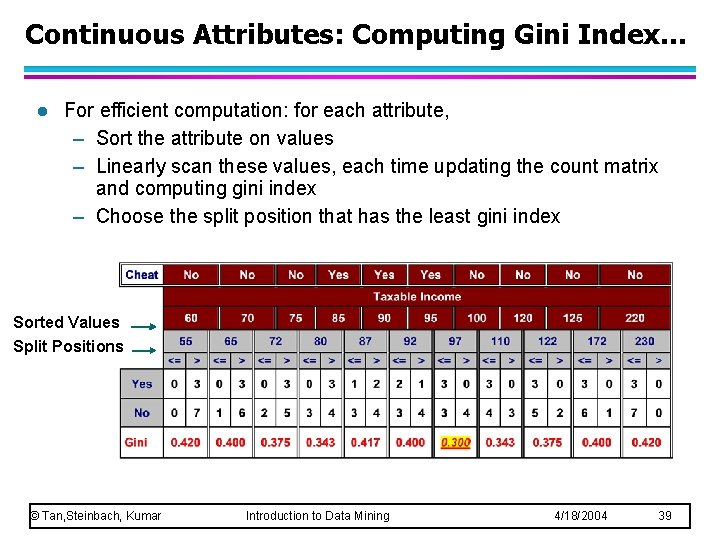

Continuous Attributes: Computing Gini Index...
• For efficient computation: for each attribute,
  – Sort the attribute on values
  – Linearly scan these values, each time updating the count matrix and computing the Gini index
  – Choose the split position that has the least Gini index
[Figure: sorted values and candidate split positions]
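The sort-then-scan idea might look like the sketch below. The function name `best_split`, the use of midpoints between adjacent distinct values as candidate thresholds, and the toy income data are assumptions for illustration; they are not taken from the figure.

```python
def gini(counts):
    n = sum(counts)
    return 1.0 - sum((c / n) ** 2 for c in counts) if n else 0.0

def best_split(values, labels):
    """Return (threshold, weighted_gini) of the best binary split A < v vs. A >= v."""
    classes = sorted(set(labels))
    pairs = sorted(zip(values, labels))            # sort once on the attribute
    n = len(pairs)
    left = {c: 0 for c in classes}                 # count matrix for A < v
    right = {c: labels.count(c) for c in classes}  # count matrix for A >= v
    best = (None, float("inf"))
    for i in range(1, n):
        prev_value, prev_label = pairs[i - 1]
        left[prev_label] += 1                      # move one record across the cut
        right[prev_label] -= 1
        value = pairs[i][0]
        if value == prev_value:
            continue                               # no cut between equal values
        threshold = (prev_value + value) / 2
        score = (i / n) * gini(list(left.values())) \
            + ((n - i) / n) * gini(list(right.values()))
        if score < best[1]:
            best = (threshold, score)
    return best

# Hypothetical data: taxable income in thousands and a Cheat label
income = [60, 70, 75, 85, 90, 95, 100, 120, 125, 220]
cheat = ["No", "No", "No", "Yes", "Yes", "Yes", "No", "No", "No", "No"]
threshold, score = best_split(income, cheat)
```

After the sort, each candidate cut only updates two counters, so the scan is linear instead of rescanning the data for every candidate value.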

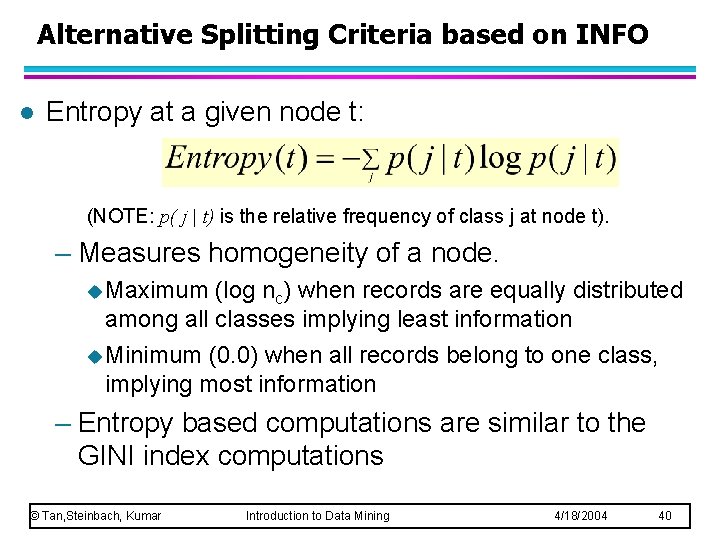

Alternative Splitting Criteria based on INFO
• Entropy at a given node t:
  $Entropy(t) = -\sum_j p(j \mid t) \log_2 p(j \mid t)$
  (NOTE: p(j | t) is the relative frequency of class j at node t.)
  – Measures homogeneity of a node.
    • Maximum ($\log_2 n_c$) when records are equally distributed among all classes, implying least information
    • Minimum (0.0) when all records belong to one class, implying most information
  – Entropy-based computations are similar to the GINI index computations

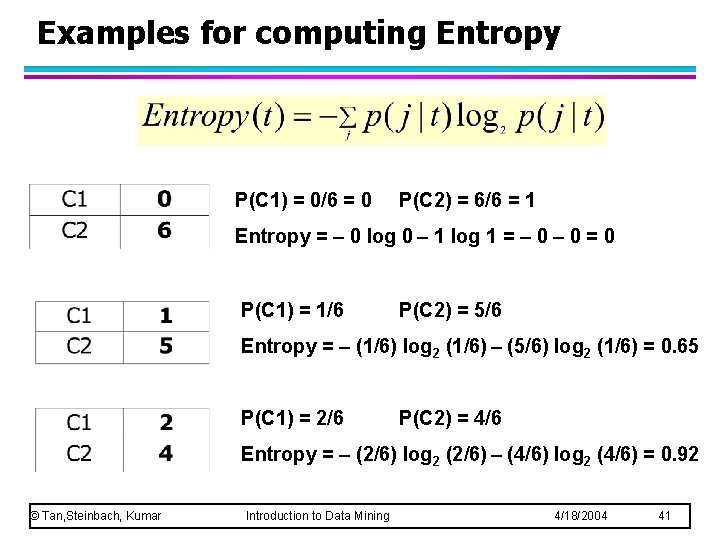

Examples for computing Entropy
• P(C1) = 0/6 = 0, P(C2) = 6/6 = 1: $Entropy = -0 \log_2 0 - 1 \log_2 1 = -0 - 0 = 0$
• P(C1) = 1/6, P(C2) = 5/6: $Entropy = -(1/6) \log_2 (1/6) - (5/6) \log_2 (5/6) = 0.65$
• P(C1) = 2/6, P(C2) = 4/6: $Entropy = -(2/6) \log_2 (2/6) - (4/6) \log_2 (4/6) = 0.92$
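A minimal Python check of the entropy examples. The function name `entropy` is an assumption; classes with zero records are skipped, which follows the usual convention that 0 log 0 = 0.

```python
from math import log2

def entropy(counts):
    """Entropy (in bits) of a node, given the number of records in each class."""
    n = sum(counts)
    # Skip empty classes: 0 * log2(0) is taken to be 0
    return sum(-(c / n) * log2(c / n) for c in counts if c > 0)

for counts in ([0, 6], [1, 5], [2, 4]):
    print(counts, f"{entropy(counts):.2f}")
```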

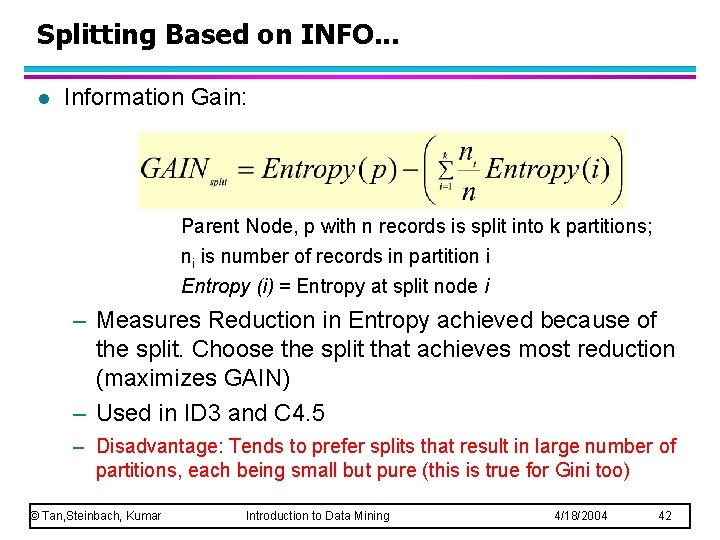

Splitting Based on INFO...
• Information Gain:
  $GAIN_{split} = Entropy(p) - \sum_{i=1}^{k} \frac{n_i}{n} Entropy(i)$
  Parent node p with n records is split into k partitions; $n_i$ is the number of records in partition i, and Entropy(i) is the entropy at child node i.
  – Measures the reduction in entropy achieved because of the split. Choose the split that achieves the most reduction (maximizes GAIN)
  – Used in ID3 and C4.5
  – Disadvantage: tends to prefer splits that result in a large number of partitions, each being small but pure (this is true for Gini too)

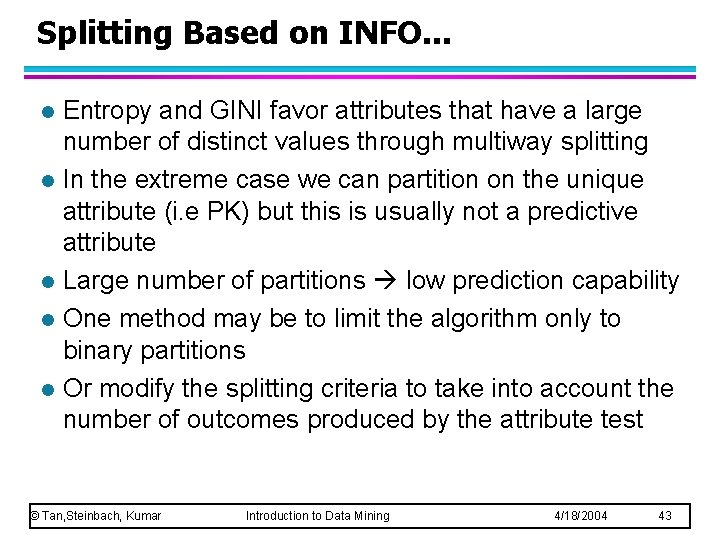

Splitting Based on INFO...
• Entropy and GINI favor attributes that have a large number of distinct values through multi-way splitting
• In the extreme case we can partition on a unique attribute (i.e., the primary key), but this is usually not a predictive attribute
• Large number of partitions → low prediction capability
• One method may be to limit the algorithm to binary partitions only
• Or modify the splitting criteria to take into account the number of outcomes produced by the attribute test

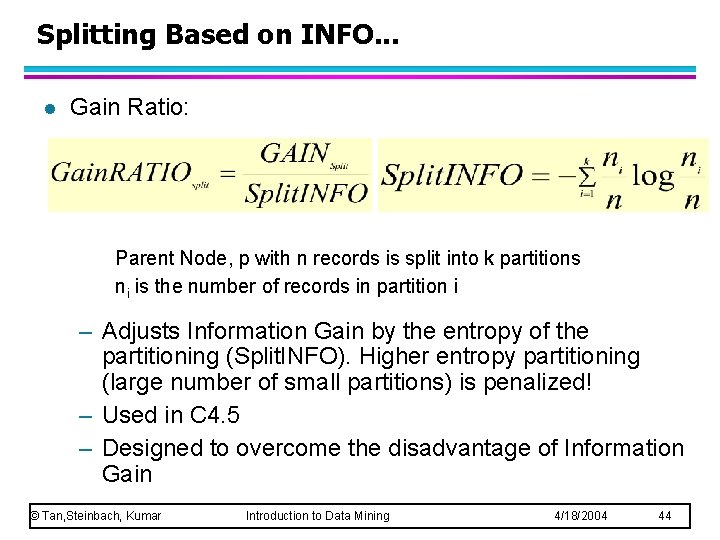

Splitting Based on INFO...
• Gain Ratio:
  $GainRATIO_{split} = \frac{GAIN_{split}}{SplitINFO}$, where $SplitINFO = -\sum_{i=1}^{k} \frac{n_i}{n} \log_2 \frac{n_i}{n}$
  Parent node p with n records is split into k partitions; $n_i$ is the number of records in partition i.
  – Adjusts Information Gain by the entropy of the partitioning (SplitINFO). Higher-entropy partitioning (large number of small partitions) is penalized!
  – Used in C4.5
  – Designed to overcome the disadvantage of Information Gain
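To see the penalty at work, the sketch below compares a two-way split with a six-way split of the same six records. Both splits are perfectly pure, so they have the same information gain, but the six-way split has a much lower gain ratio. The data and function names are assumptions made for the example.

```python
from math import log2

def entropy(counts):
    n = sum(counts)
    return sum(-(c / n) * log2(c / n) for c in counts if c > 0)

def information_gain(parent, children):
    """Entropy of the parent minus the size-weighted entropy of the children."""
    n = sum(parent)
    return entropy(parent) - sum(sum(ch) / n * entropy(ch) for ch in children)

def split_info(children):
    """Entropy of the partition sizes themselves."""
    return entropy([sum(ch) for ch in children])

def gain_ratio(parent, children):
    # Undefined when everything lands in one partition (split_info is 0)
    return information_gain(parent, children) / split_info(children)

parent = [3, 3]
binary = [[3, 0], [0, 3]]
multiway = [[1, 0], [1, 0], [1, 0], [0, 1], [0, 1], [0, 1]]  # e.g. split on a unique ID

for name, split in (("binary", binary), ("multiway", multiway)):
    print(name, information_gain(parent, split), round(gain_ratio(parent, split), 3))
```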

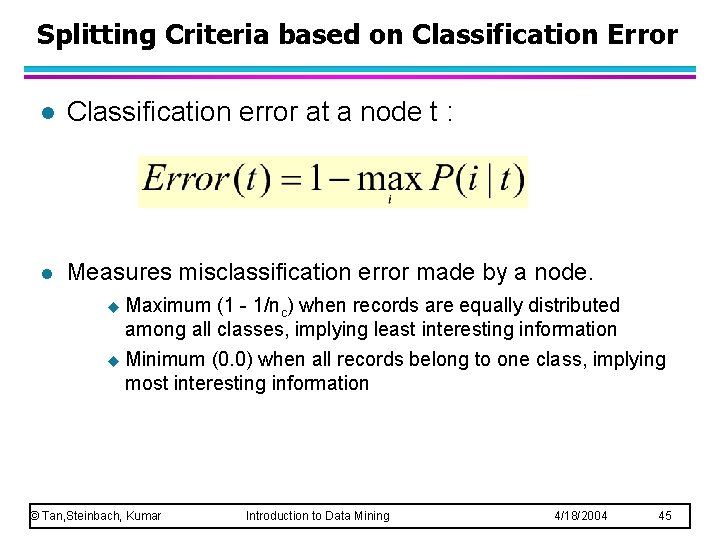

Splitting Criteria based on Classification Error
• Classification error at a node t:
  $Error(t) = 1 - \max_i P(i \mid t)$
• Measures the misclassification error made by a node.
  – Maximum ($1 - 1/n_c$) when records are equally distributed among all classes, implying least interesting information
  – Minimum (0.0) when all records belong to one class, implying most interesting information

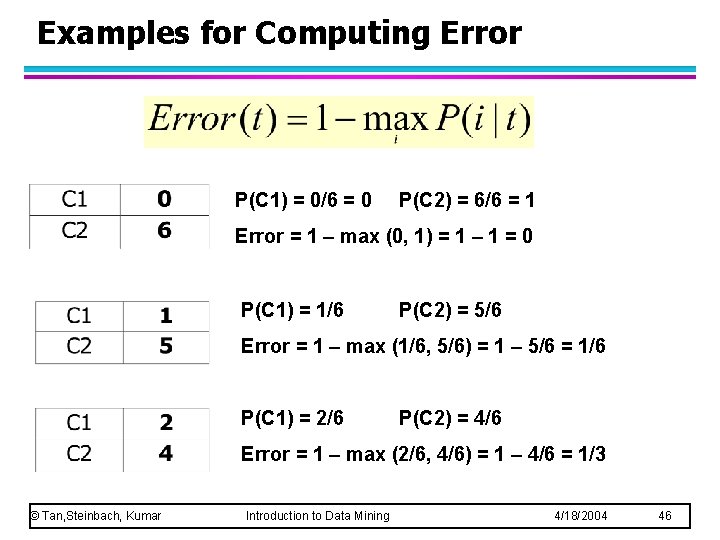

Examples for Computing Error
• P(C1) = 0/6 = 0, P(C2) = 6/6 = 1: Error = 1 – max(0, 1) = 1 – 1 = 0
• P(C1) = 1/6, P(C2) = 5/6: Error = 1 – max(1/6, 5/6) = 1 – 5/6 = 1/6
• P(C1) = 2/6, P(C2) = 4/6: Error = 1 – max(2/6, 4/6) = 1 – 4/6 = 1/3

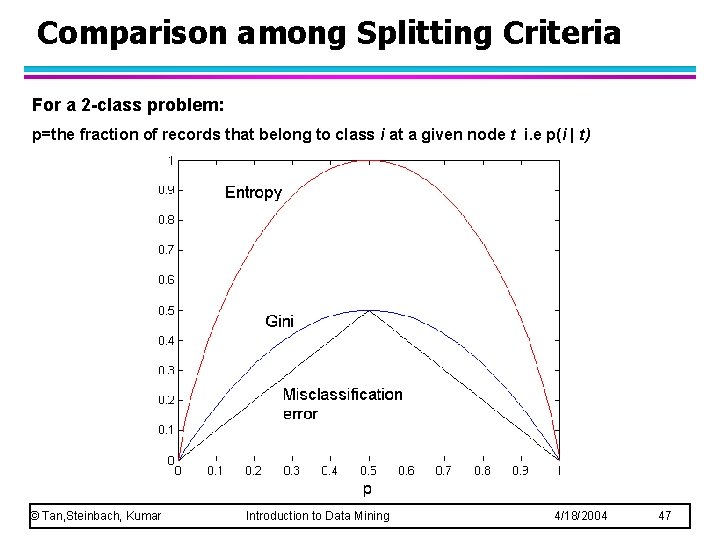

Comparison among Splitting Criteria
For a 2-class problem: p = the fraction of records that belong to class i at a given node t, i.e., p(i | t)

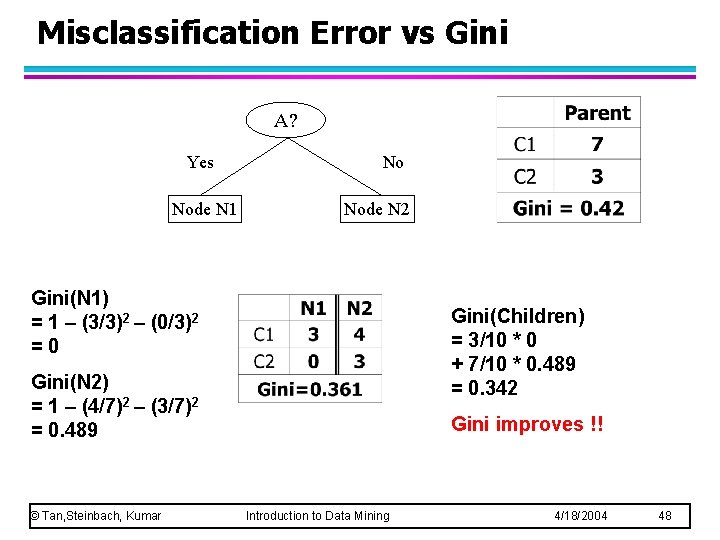

Misclassification Error vs Gini
Split on A?: Yes → Node N1, No → Node N2
$Gini(N1) = 1 - (3/3)^2 - (0/3)^2 = 0$
$Gini(N2) = 1 - (4/7)^2 - (3/7)^2 = 0.490$
$Gini(Children) = 3/10 \times 0 + 7/10 \times 0.490 = 0.343$
Gini improves!!
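The contrast can be reproduced numerically. The child counts come from the fractions above (N1: 3 and 0, N2: 4 and 3), so the parent is taken to hold 7 and 3 records. The helper names are assumptions. On this split the weighted misclassification error stays at the parent's value while the weighted Gini drops.

```python
def gini(counts):
    n = sum(counts)
    return 1.0 - sum((c / n) ** 2 for c in counts)

def error(counts):
    """Misclassification error: 1 minus the largest relative class frequency."""
    return 1.0 - max(counts) / sum(counts)

def weighted(measure, children):
    """Size-weighted average of an impurity measure over the child nodes."""
    n = sum(sum(child) for child in children)
    return sum(sum(child) / n * measure(child) for child in children)

parent = [7, 3]
children = [[3, 0], [4, 3]]   # N1, N2

print("error:", error(parent), "->", weighted(error, children))
print("gini: ", gini(parent), "->", weighted(gini, children))
```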

Stopping Criteria for Tree Induction
• Stop expanding a node when all the records belong to the same class
• Stop expanding a node when all the records have similar attribute values
• Early termination (to be discussed later)

Decision Tree Based Classification
• Advantages:
  – Inexpensive to construct
  – Extremely fast at classifying unknown records
  – Easy to interpret for small-sized trees
  – Accuracy is comparable to other classification techniques for many simple data sets

Example: C4.5
• Simple depth-first construction.
• Uses Information Gain
• Sorts continuous attributes at each node.
• Needs entire data to fit in memory.
• Unsuitable for large datasets.
  – Needs out-of-core sorting.
• You can download the software from: http://www.cse.unsw.edu.au/~quinlan/c4.5r8.tar.gz

Practical Issues of Classification
• Underfitting and Overfitting
• Missing Values
• Costs of Classification

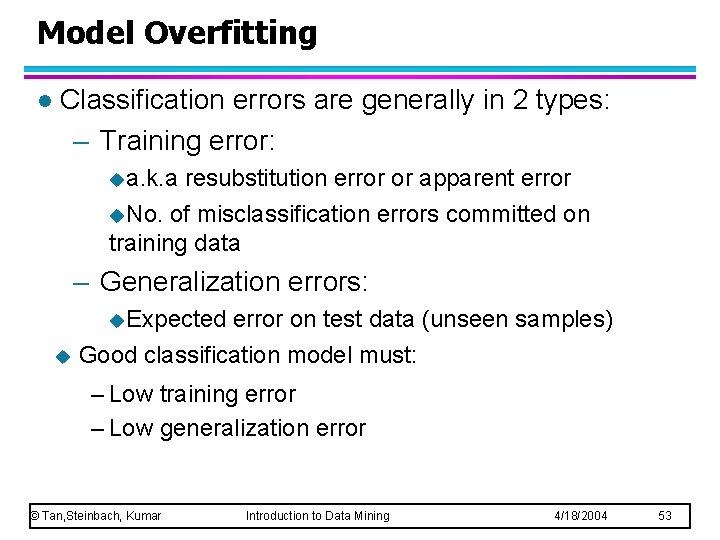

Model Overfitting
• Classification errors are generally of 2 types:
  – Training error:
    • a.k.a. resubstitution error or apparent error
    • Number of misclassification errors committed on training data
  – Generalization error:
    • Expected error on test data (unseen samples)
• A good classification model must have:
  – Low training error
  – Low generalization error

Model Overfitting
• A model which fits the train data too well may have a higher generalization error than a model with high training error
• This is known as model overfitting
• When the model is too simple, both training and test errors are large
• This is known as underfitting

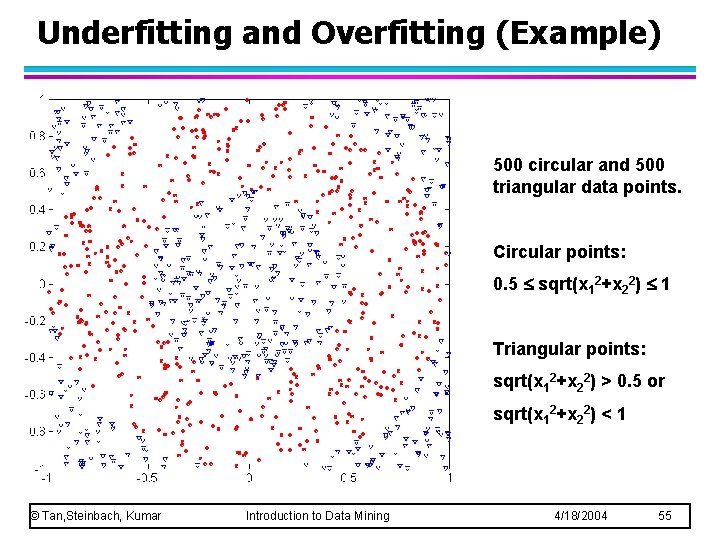

Underfitting and Overfitting (Example)
500 circular and 500 triangular data points.
Circular points: $0.5 \le \sqrt{x_1^2 + x_2^2} \le 1$
Triangular points: $\sqrt{x_1^2 + x_2^2} < 0.5$ or $\sqrt{x_1^2 + x_2^2} > 1$

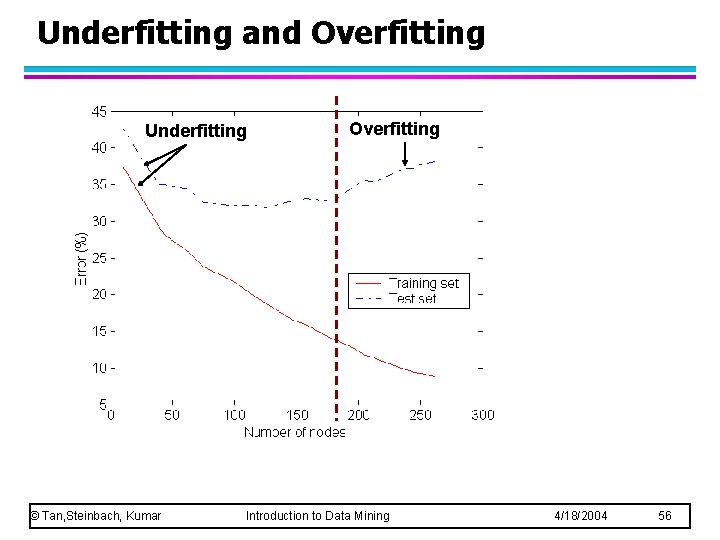

Underfitting and Overfitting
[Figure: regions labeled Underfitting and Overfitting]

Underfitting and Overfitting
• Both overfitting and underfitting are related to model complexity
• Underfitting usually occurs when the model (such as the tree) is too simple and hence has yet to learn the true structure of the data
• Overfitting may occur:
  – When the model is too complex, where the model (such as the tree) may have nodes that accidentally fit the noise points in the train data
  – When the train data is too small but the model keeps refining itself even when only a few training records, which may not be very representative, are available

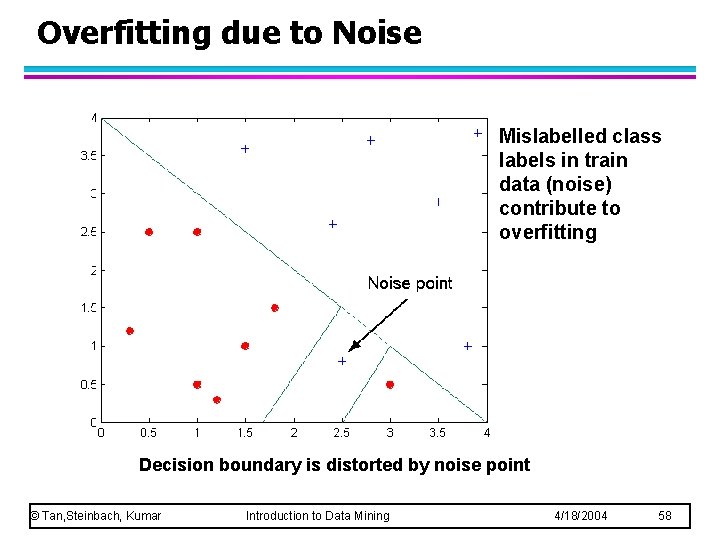

Overfitting due to Noise
• Mislabelled class labels in train data (noise) contribute to overfitting
• Decision boundary is distorted by the noise point

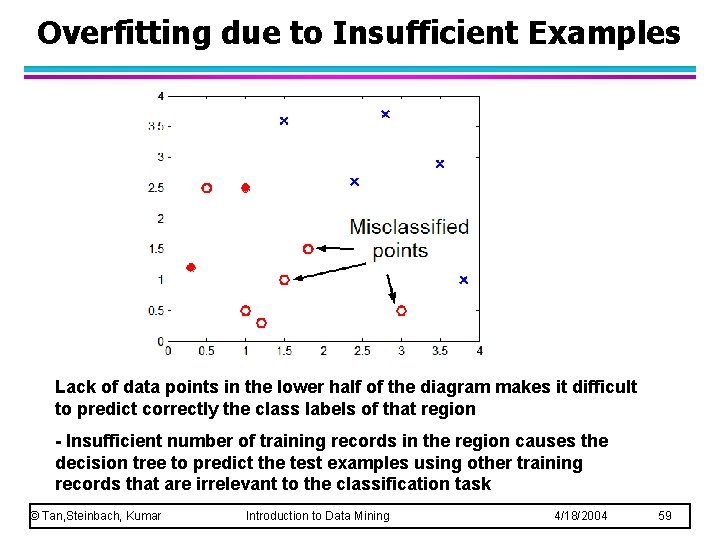

Overfitting due to Insufficient Examples
• Lack of data points in the lower half of the diagram makes it difficult to correctly predict the class labels of that region
  – An insufficient number of training records in the region causes the decision tree to predict the test examples using other training records that are irrelevant to the classification task

Notes on Overfitting
• Overfitting results in decision trees that are more complex than necessary
• Training error no longer provides a good estimate of how well the tree will perform on previously unseen records
• How do we determine the right model complexity (with minimum generalization error)?
  – The algorithm only has access to train data during learning and has no knowledge of the test data to guess how well it will perform on previously unseen data
  – The best it can do is to estimate generalization errors

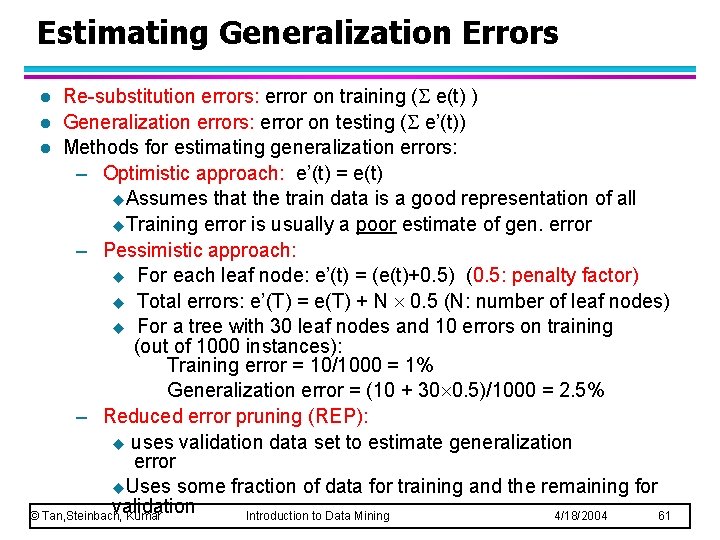

Estimating Generalization Errors
• Re-substitution errors: error on training (e(t))
• Generalization errors: error on testing (e’(t))
• Methods for estimating generalization errors:
  – Optimistic approach: e’(t) = e(t)
    • Assumes that the train data is a good representation of all data
    • Training error is usually a poor estimate of generalization error
  – Pessimistic approach:
    • For each leaf node: e’(t) = e(t) + 0.5 (0.5: penalty factor)
    • Total errors: e’(T) = e(T) + N × 0.5 (N: number of leaf nodes)
    • For a tree with 30 leaf nodes and 10 errors on training (out of 1000 instances):
      Training error = 10/1000 = 1%
      Generalization error = (10 + 30 × 0.5)/1000 = 2.5%
  – Reduced error pruning (REP):
    • Uses a validation data set to estimate generalization error
    • Uses some fraction of the data for training and the remainder for validation
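The pessimistic estimate is a one-line calculation. The function name and parameter names below are assumptions; the 0.5 penalty per leaf follows the text above.

```python
def pessimistic_error_rate(training_errors, n_leaves, n_instances, penalty=0.5):
    """Pessimistic generalization error: training errors plus a penalty per leaf node."""
    return (training_errors + n_leaves * penalty) / n_instances

training_rate = 10 / 1000
estimate = pessimistic_error_rate(training_errors=10, n_leaves=30, n_instances=1000)
print(f"training error = {training_rate:.1%}, pessimistic estimate = {estimate:.1%}")
```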

Occam’s Razor
• Given two models of similar generalization errors, one should prefer the simpler model over the more complex model
• For complex models, there is a greater chance that it was fitted accidentally by errors in data
• Therefore, one should include model complexity when evaluating a model

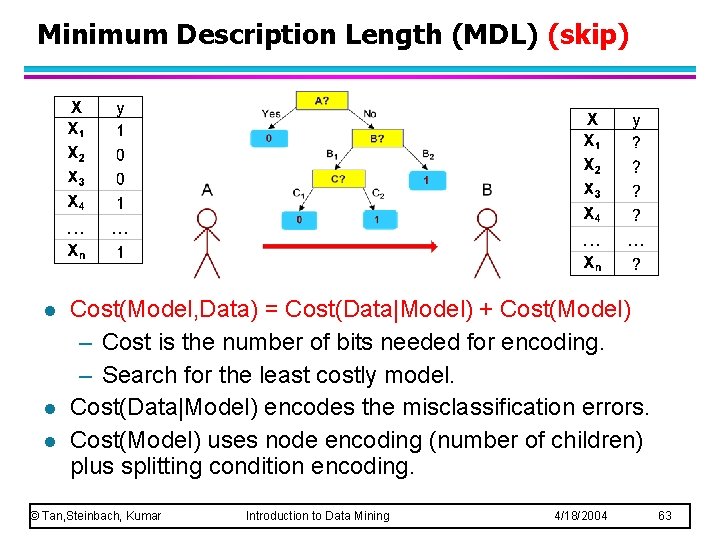

Minimum Description Length (MDL) (skip)
• Cost(Model, Data) = Cost(Data|Model) + Cost(Model)
  – Cost is the number of bits needed for encoding.
  – Search for the least costly model.
• Cost(Data|Model) encodes the misclassification errors.
• Cost(Model) uses node encoding (number of children) plus splitting condition encoding.

How to Address Overfitting
• Pre-Pruning (Early Stopping Rule)
  – Stop the algorithm before it becomes a fully-grown tree
  – Typical stopping conditions for a node:
    • Stop if all instances belong to the same class
    • Stop if all the attribute values are the same
  – More restrictive conditions:
    • Stop if the number of instances is less than some user-specified threshold
    • Stop if the class distribution of instances is independent of the available features (e.g., using the $\chi^2$ test)
    • Stop if expanding the current node does not improve impurity measures (e.g., Gini or information gain).
  – Stopping too early may result in underfitting
  – Even if the first split does not improve, subsequent splits may improve

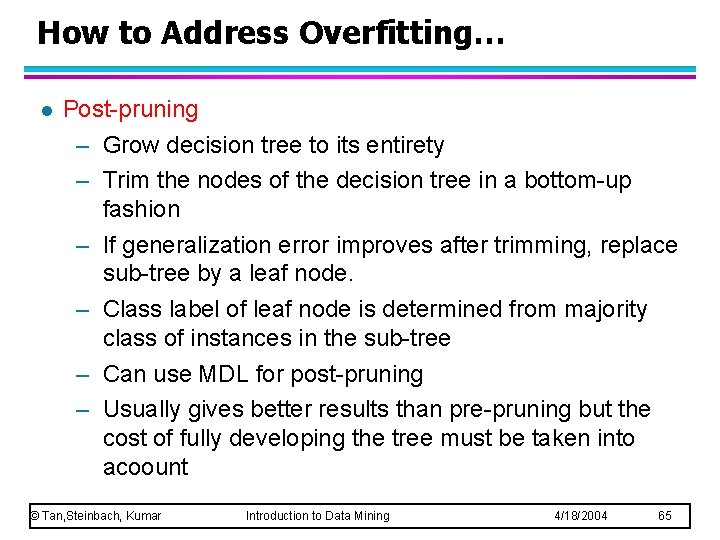

How to Address Overfitting…
• Post-pruning
  – Grow the decision tree to its entirety
  – Trim the nodes of the decision tree in a bottom-up fashion
  – If generalization error improves after trimming, replace the sub-tree by a leaf node.
  – Class label of the leaf node is determined from the majority class of instances in the sub-tree
  – Can use MDL for post-pruning
  – Usually gives better results than pre-pruning, but the cost of fully developing the tree must be taken into account

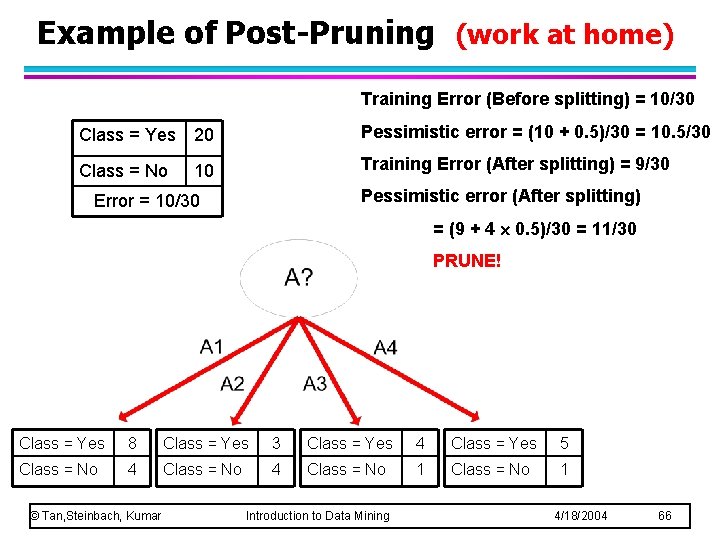

Example of Post-Pruning (work at home)
Root node: Class = Yes: 20, Class = No: 10, Error = 10/30
Training error (before splitting) = 10/30
Pessimistic error (before splitting) = (10 + 0.5)/30 = 10.5/30
Training error (after splitting) = 9/30
Pessimistic error (after splitting) = (9 + 4 × 0.5)/30 = 11/30
PRUNE!
Child node counts from the figure: Class = Yes 8, Class = Yes 3, Class = Yes 4, Class = Yes 5, Class = No 4, Class = No 1
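The pruning decision compares the two pessimistic error counts. A minimal sketch, assuming a 0.5 penalty per leaf and the rule that the subtree is pruned when splitting does not lower the pessimistic error; the function name is hypothetical.

```python
def pessimistic_errors(training_errors, n_leaves, penalty=0.5):
    """Training errors plus a fixed penalty for every leaf node."""
    return training_errors + n_leaves * penalty

before = pessimistic_errors(training_errors=10, n_leaves=1)  # node kept as a single leaf
after = pessimistic_errors(training_errors=9, n_leaves=4)    # node expanded into 4 leaves
prune = after >= before   # the split does not pay for its extra leaves

print(f"before: {before}/30, after: {after}/30, prune: {prune}")
```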

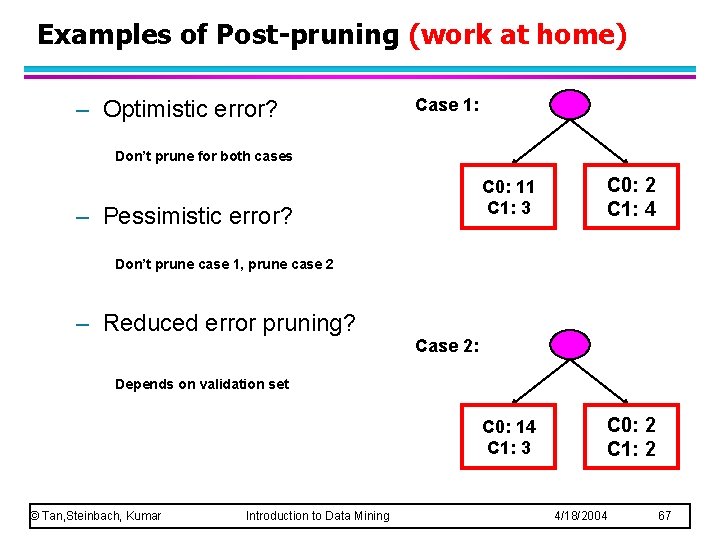

Examples of Post-pruning (work at home)
Case 1: leaves with C0: 11, C1: 3 and C0: 2, C1: 4
Case 2: leaves with C0: 14, C1: 3 and C0: 2, C1: 2
– Optimistic error? Don’t prune for both cases
– Pessimistic error? Don’t prune case 1, prune case 2
– Reduced error pruning? Depends on validation set

Handling Missing Attribute Values
• Missing values affect decision tree construction in three different ways:
  – Affects how impurity measures are computed
  – Affects how to distribute an instance with a missing value to child nodes
  – Affects how a test instance with a missing value is classified

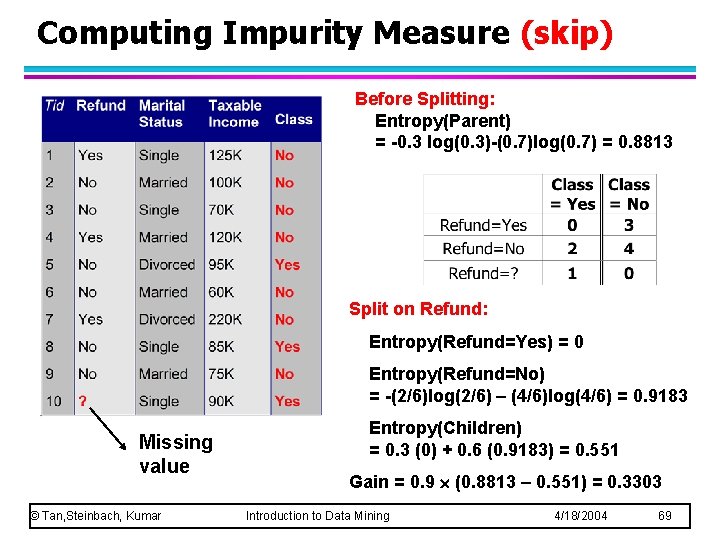

Computing Impurity Measure (skip)
Before splitting: Entropy(Parent) = –0.3 log(0.3) – 0.7 log(0.7) = 0.8813
Split on Refund (one record has a missing Refund value):
Entropy(Refund=Yes) = 0
Entropy(Refund=No) = –(2/6) log(2/6) – (4/6) log(4/6) = 0.9183
Entropy(Children) = 0.3 (0) + 0.6 (0.9183) = 0.551
Gain = 0.9 × (0.8813 – 0.551) = 0.9 × 0.3303 = 0.2973
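The arithmetic can be replayed in Python. The class counts are read off the fractions in the text (3 vs. 7 in the parent, 0 vs. 3 for Refund=Yes, 2 vs. 4 for Refund=No), and the 0.9 factor is the fraction of records whose Refund value is known. Carrying the multiplication through gives a gain of about 0.297; 0.3303 is the bracketed difference before scaling by 0.9. The variable names are assumptions.

```python
from math import log2

def entropy(counts):
    n = sum(counts)
    return sum(-(c / n) * log2(c / n) for c in counts if c > 0)

parent = entropy([3, 7])        # all 10 records
refund_yes = entropy([0, 3])    # 3 records with Refund=Yes
refund_no = entropy([2, 4])     # 6 records with Refund=No

# Children are weighted by their share of all 10 records; one record has no Refund value
children = 0.3 * refund_yes + 0.6 * refund_no
gain = 0.9 * (parent - children)

print(f"parent={parent:.4f} children={children:.3f} gain={gain:.4f}")
```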

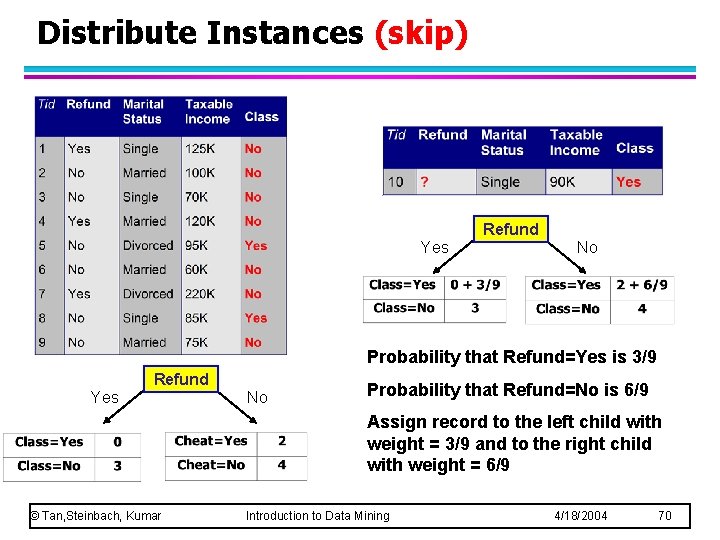

Distribute Instances (skip)
• Probability that Refund=Yes is 3/9
• Probability that Refund=No is 6/9
• Assign the record to the left child (Refund=Yes) with weight = 3/9 and to the right child (Refund=No) with weight = 6/9

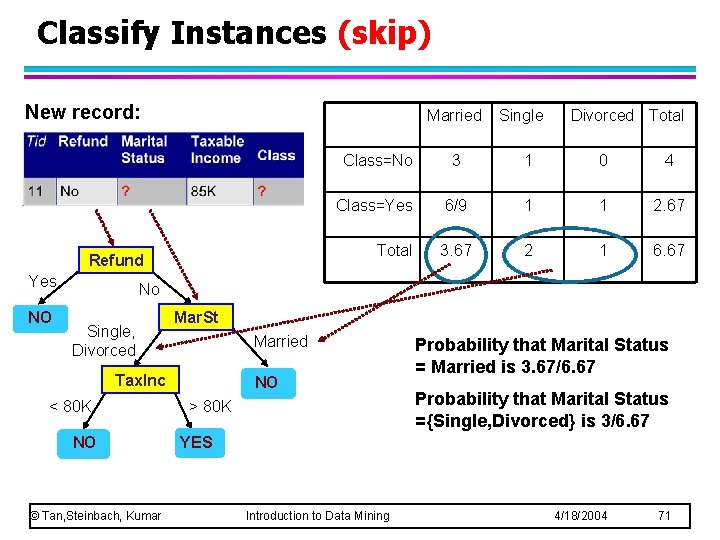

Classify Instances (skip)
New record:

| | Married | Single | Divorced | Total |
|---|---|---|---|---|
| Class=No | 3 | 1 | 0 | 4 |
| Class=Yes | 6/9 | 1 | 1 | 2.67 |
| Total | 3.67 | 2 | 1 | 6.67 |

Refund
  Yes → NO
  No → MarSt
    Single, Divorced → TaxInc
      < 80K → NO
      > 80K → YES
    Married → NO
Probability that Marital Status = Married is 3.67/6.67
Probability that Marital Status = {Single, Divorced} is 3/6.67

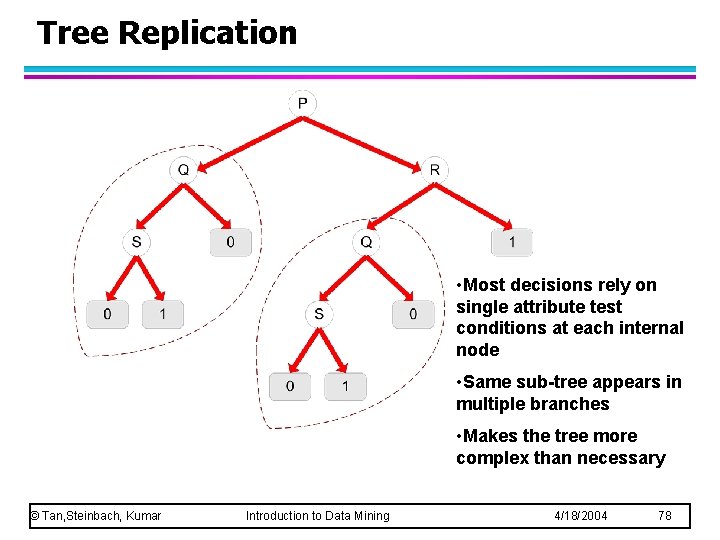

Other Issues (skip)
• Data Fragmentation
• Search Strategy
• Expressiveness
• Tree Replication

Data Fragmentation (skip)
• Number of instances gets smaller as you traverse down the tree
• Number of instances at the leaf nodes could be too small to make any statistically significant decision
• One possible solution may be to disallow further splitting when the number of records falls below a certain threshold

Search Strategy (skip)
• Finding an optimal decision tree is NP-hard
• The algorithm presented so far uses a greedy, top-down, recursive partitioning strategy to induce a reasonable solution
• Other strategies?
  – Heuristic based
  – Bottom-up
  – Bi-directional

Expressiveness of a decision tree
• Decision trees provide an expressive representation for learning discrete-valued functions
  – But they do not generalize well to certain types of Boolean functions
    • Example: parity function:
      – Class = 1 if there is an even number of Boolean attributes with truth value = True
      – Class = 0 if there is an odd number of Boolean attributes with truth value = True
    • For accurate modeling, must have a complete tree with $2^d$ nodes, where d is the number of Boolean attributes
• Not expressive enough for modeling continuous variables
  – Particularly when the test condition involves only a single attribute at a time

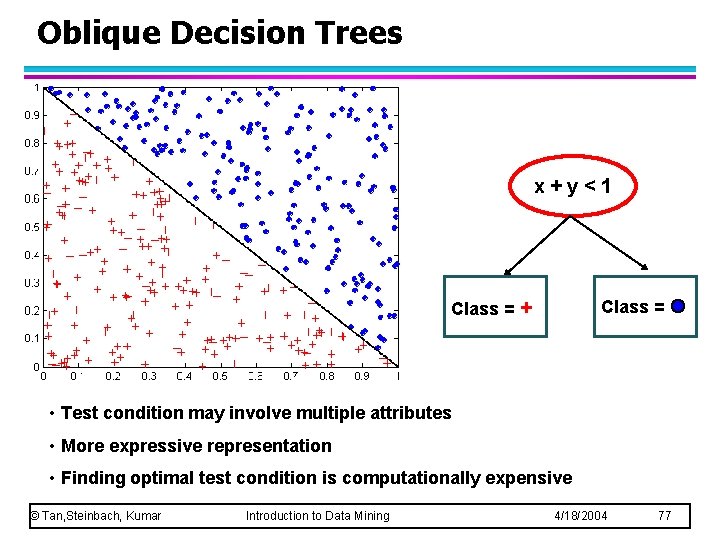

Decision Boundary (single attribute at a time)
• Border line between two neighboring regions of different classes is known as the decision boundary
• Decision boundary is parallel to the axes (rectilinear) because the test condition involves a single attribute at a time
• Complex relationships among continuous attributes may not be expressed correctly

Oblique Decision Trees
Test x + y < 1 separating Class = + from Class = −
• Test condition may involve multiple attributes
• More expressive representation
• Finding the optimal test condition is computationally expensive

Tree Replication
• Most decisions rely on single-attribute test conditions at each internal node
• Same sub-tree appears in multiple branches
• Makes the tree more complex than necessary

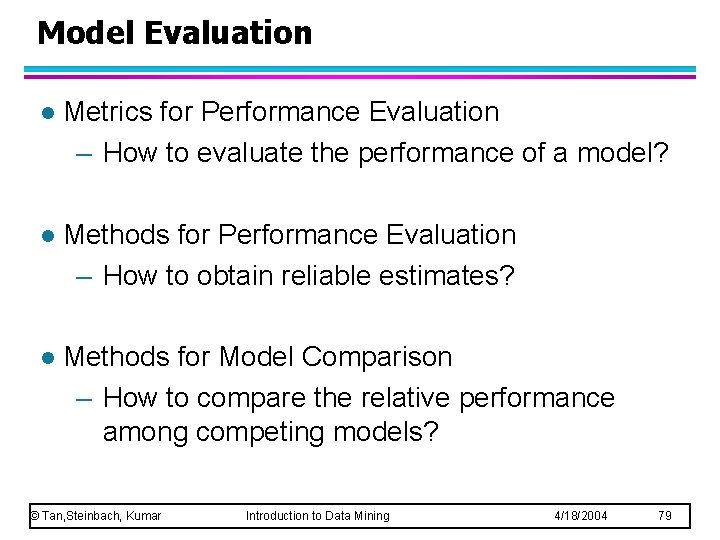

Model Evaluation
• Metrics for Performance Evaluation
  – How to evaluate the performance of a model?
• Methods for Performance Evaluation
  – How to obtain reliable estimates?
• Methods for Model Comparison
  – How to compare the relative performance among competing models?


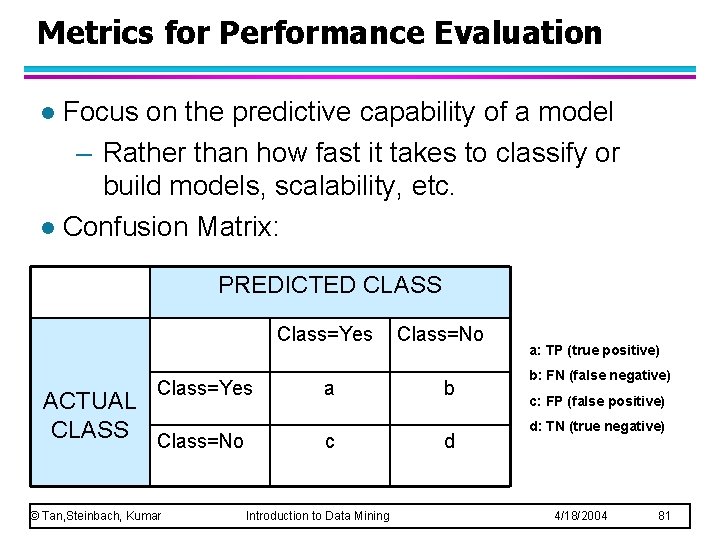

Metrics for Performance Evaluation
• Focus on the predictive capability of a model
  – Rather than how fast it takes to classify or build models, scalability, etc.
• Confusion Matrix:

| | PREDICTED Class=Yes | PREDICTED Class=No |
|---|---|---|
| ACTUAL Class=Yes | a | b |
| ACTUAL Class=No | c | d |

a: TP (true positive), b: FN (false negative), c: FP (false positive), d: TN (true negative)

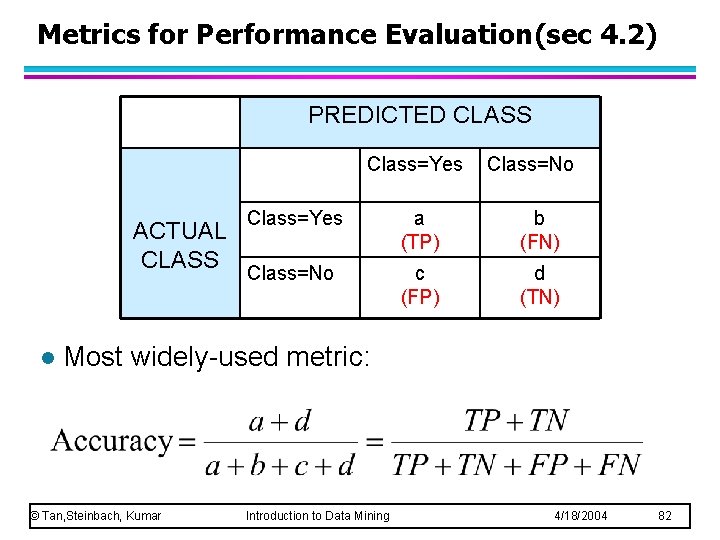

Metrics for Performance Evaluation (Sec. 4.2)

| | PREDICTED Class=Yes | PREDICTED Class=No |
|---|---|---|
| ACTUAL Class=Yes | a (TP) | b (FN) |
| ACTUAL Class=No | c (FP) | d (TN) |

• Most widely-used metric:
  $Accuracy = \frac{a + d}{a + b + c + d} = \frac{TP + TN}{TP + TN + FP + FN}$

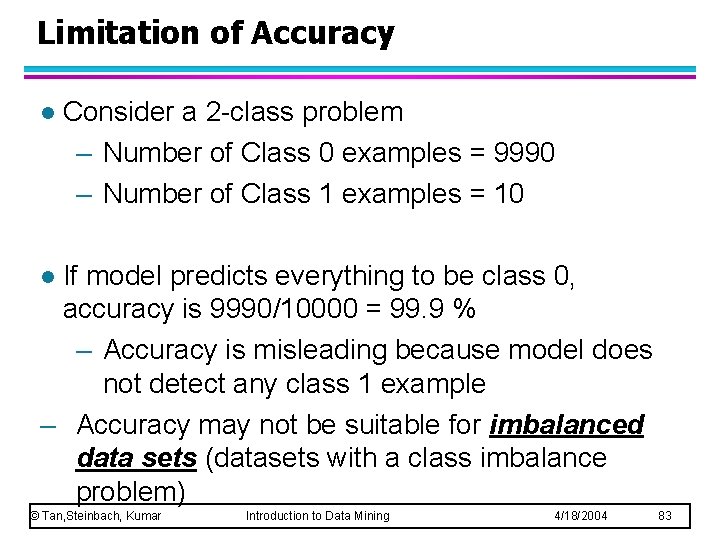

Limitation of Accuracy
• Consider a 2-class problem
  – Number of Class 0 examples = 9990
  – Number of Class 1 examples = 10
• If the model predicts everything to be class 0, accuracy is 9990/10000 = 99.9%
  – Accuracy is misleading because the model does not detect any class 1 example
  – Accuracy may not be suitable for imbalanced data sets (data sets with a class imbalance problem)

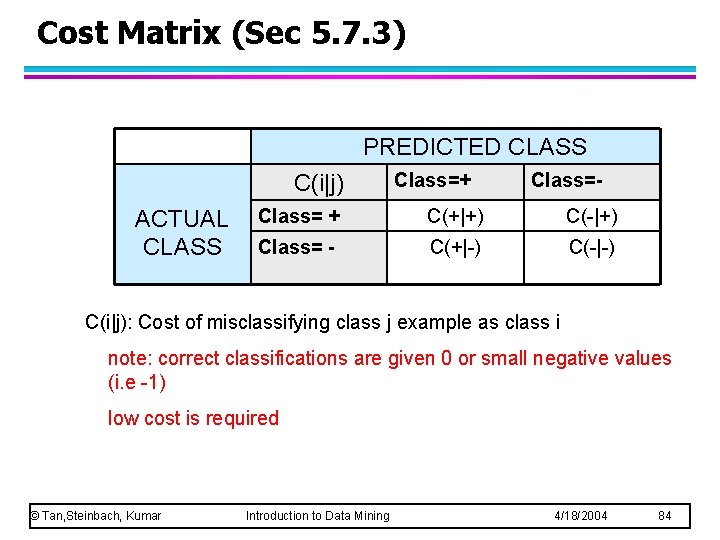

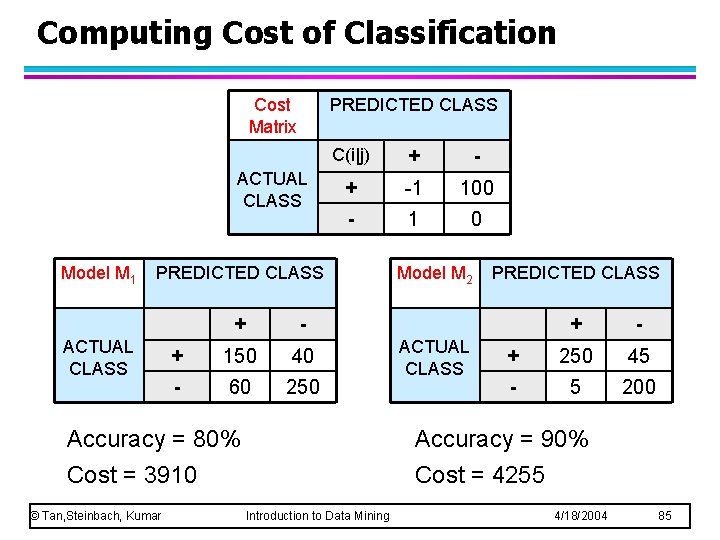

Cost Matrix (Sec. 5.7.3)

| C(i\|j) | PREDICTED Class=+ | PREDICTED Class=- |
|---|---|---|
| ACTUAL Class=+ | C(+\|+) | C(-\|+) |
| ACTUAL Class=- | C(+\|-) | C(-\|-) |

C(i|j): cost of misclassifying a class j example as class i
Note: correct classifications are given 0 or small negative values (e.g., -1); a low total cost is desired

Computing Cost of Classification

Cost matrix:

| C(i\|j) | PREDICTED + | PREDICTED - |
|---|---|---|
| ACTUAL + | -1 | 100 |
| ACTUAL - | 1 | 0 |

Model M1:

| | PREDICTED + | PREDICTED - |
|---|---|---|
| ACTUAL + | 150 | 40 |
| ACTUAL - | 60 | 250 |

Accuracy = 80%, Cost = 3910

Model M2:

| | PREDICTED + | PREDICTED - |
|---|---|---|
| ACTUAL + | 250 | 45 |
| ACTUAL - | 5 | 200 |

Accuracy = 90%, Cost = 4255
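Both figures can be recomputed by multiplying each confusion-matrix cell by its cost. In this sketch the dictionaries are keyed by `(actual, predicted)`, which is a layout chosen for the example, and the function names are assumptions.

```python
# Keys are (actual class, predicted class)
cost_matrix = {("+", "+"): -1, ("+", "-"): 100, ("-", "+"): 1, ("-", "-"): 0}
m1 = {("+", "+"): 150, ("+", "-"): 40, ("-", "+"): 60, ("-", "-"): 250}
m2 = {("+", "+"): 250, ("+", "-"): 45, ("-", "+"): 5, ("-", "-"): 200}

def accuracy(confusion):
    """Fraction of records on the diagonal of the confusion matrix."""
    correct = confusion[("+", "+")] + confusion[("-", "-")]
    return correct / sum(confusion.values())

def total_cost(confusion, costs):
    """Sum of count times cost over all four cells."""
    return sum(confusion[cell] * costs[cell] for cell in confusion)

for name, model in (("M1", m1), ("M2", m2)):
    print(name, f"accuracy={accuracy(model):.0%}", f"cost={total_cost(model, cost_matrix)}")
```

M2 is more accurate but costlier, because its extra errors fall in the expensive cell (actual +, predicted -).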

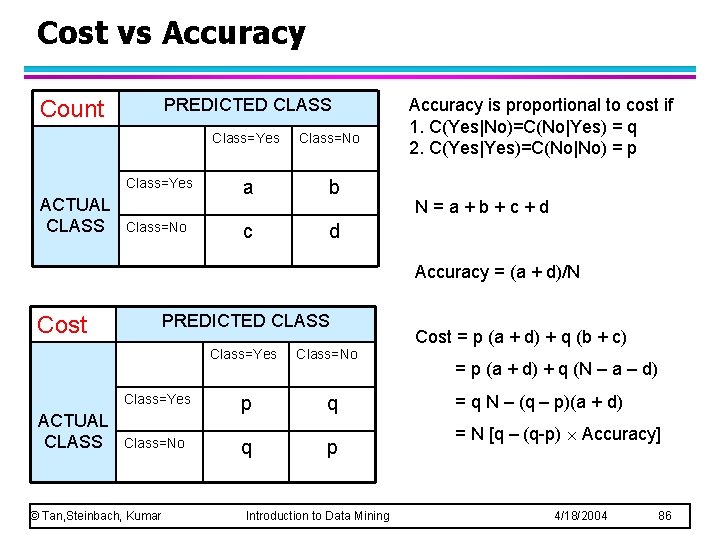

Cost vs Accuracy

| Count | PREDICTED Class=Yes | PREDICTED Class=No |
|---|---|---|
| ACTUAL Class=Yes | a | b |
| ACTUAL Class=No | c | d |

| Cost | PREDICTED Class=Yes | PREDICTED Class=No |
|---|---|---|
| ACTUAL Class=Yes | p | q |
| ACTUAL Class=No | q | p |

Accuracy is proportional to cost if
1. C(Yes|No) = C(No|Yes) = q
2. C(Yes|Yes) = C(No|No) = p

N = a + b + c + d
Accuracy = (a + d)/N
Cost = p (a + d) + q (b + c)
     = p (a + d) + q (N – a – d)
     = q N – (q – p)(a + d)
     = N [q – (q – p) × Accuracy]

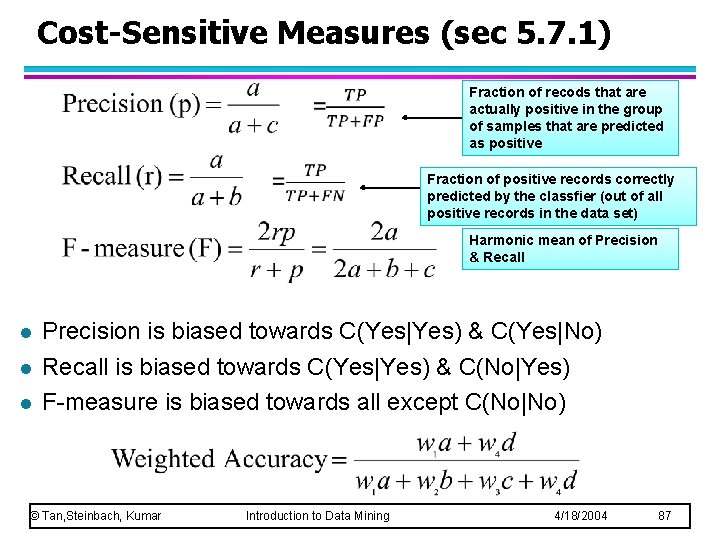

Cost-Sensitive Measures (Sec. 5.7.1)
• Precision: $p = \frac{a}{a + c}$, the fraction of records that are actually positive in the group of samples that are predicted as positive
• Recall: $r = \frac{a}{a + b}$, the fraction of positive records correctly predicted by the classifier (out of all positive records in the data set)
• F-measure: $F = \frac{2rp}{r + p} = \frac{2a}{2a + b + c}$, the harmonic mean of precision and recall
• Precision is biased towards C(Yes|Yes) & C(Yes|No)
• Recall is biased towards C(Yes|Yes) & C(No|Yes)
• F-measure is biased towards all except C(No|No)
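A small sketch of the three measures in terms of the confusion-matrix cells a (TP), b (FN) and c (FP). The function names are assumptions, and returning 0.0 when a denominator is zero is a convention chosen for this example. The second case is a model that predicts the majority class for every record of a data set with 10 positives and 9990 negatives: its accuracy is 99.9% but its recall is 0.

```python
def precision(a, c):
    """TP / (TP + FP); 0.0 by convention when nothing is predicted positive."""
    return a / (a + c) if a + c else 0.0

def recall(a, b):
    """TP / (TP + FN); 0.0 by convention when there are no positive records."""
    return a / (a + b) if a + b else 0.0

def f_measure(a, b, c):
    """Harmonic mean of precision and recall, written as 2a / (2a + b + c)."""
    return 2 * a / (2 * a + b + c) if a + b + c else 0.0

# Illustrative counts: a=150 (TP), b=40 (FN), c=60 (FP)
print(precision(150, 60), recall(150, 40), f_measure(150, 40, 60))

# Majority-class predictor on 10 positives and 9990 negatives
a, b, c, d = 0, 10, 0, 9990
print("accuracy:", (a + d) / (a + b + c + d), "recall:", recall(a, b))
```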

Model Evaluation
• Metrics for Performance Evaluation
  – How to evaluate the performance of a model?
• Methods for Performance Evaluation
  – How to obtain reliable estimates?
• Methods for Model Comparison
  – How to compare the relative performance among competing models?

Methods for Performance Evaluation
• How to obtain a reliable estimate of performance?
• Performance of a model may depend on other factors besides the learning algorithm:
  – Class distribution
  – Cost of misclassification
  – Size of training and test sets

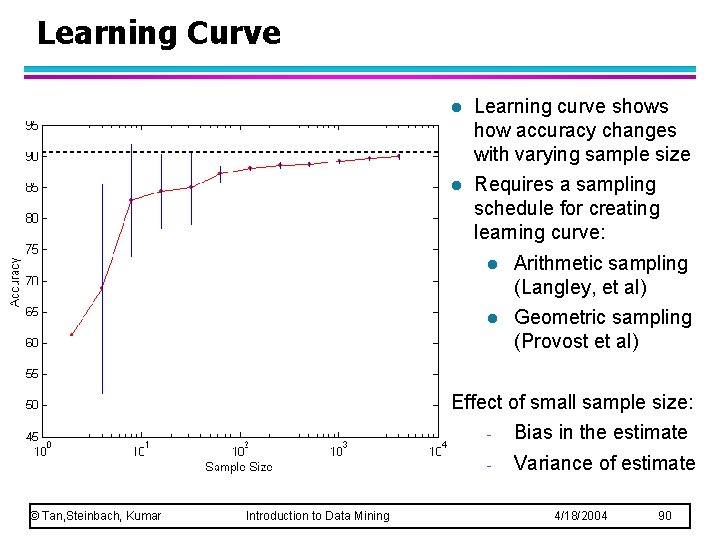

Learning Curve
- A learning curve shows how accuracy changes with varying sample size
- Requires a sampling schedule for creating the learning curve:
  - Arithmetic sampling (Langley et al.)
  - Geometric sampling (Provost et al.)
- Effect of small sample size:
  - Bias in the estimate
  - Variance of the estimate
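
The two sampling schedules differ only in how the next sample size is derived from the current one: arithmetic sampling adds a fixed step, geometric sampling multiplies by a fixed ratio. The sketch below generates both; the function names and parameters (`n0`, `step`, `ratio`, `n_max`) are assumptions made for illustration.

```python
def arithmetic_schedule(n0, step, n_max):
    """Sample sizes n0, n0 + step, n0 + 2*step, ... up to n_max."""
    if n0 <= 0 or step <= 0:
        raise ValueError("n0 and step must be positive")
    sizes = []
    n = n0
    while n <= n_max:
        sizes.append(n)
        n += step
    return sizes


def geometric_schedule(n0, ratio, n_max):
    """Sample sizes n0, n0*ratio, n0*ratio**2, ... up to n_max."""
    if n0 <= 0 or ratio <= 1:
        raise ValueError("n0 must be positive and ratio greater than 1")
    sizes = []
    n = n0
    while n <= n_max:
        sizes.append(n)
        n *= ratio
    return sizes


print(arithmetic_schedule(100, 100, 500))
print(geometric_schedule(100, 2, 1000))
```

A model would be trained and evaluated once per sample size, and the resulting accuracies plotted against the sizes to draw the learning curve.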

Methods of Estimation (Classifier Evaluation)
- Holdout
  - Reserve 2/3 for training and 1/3 for testing (or 50% each); the split is typically determined by the analyst
  - Limitations:
    - Less data for training (may degrade classifier performance)
    - The smaller the training data, the larger the variance of the model
    - On the other hand, if the training data is too large, the accuracy estimated from the small test set is less reliable
    - Training and test sets are not independent: a class that is overrepresented in the training data may be underrepresented in the test set
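
A holdout split can be sketched in a few lines: shuffle the records, then cut at the chosen fraction. The function name, the default 2/3 fraction, and the fixed seed are illustrative assumptions.

```python
import random


def holdout_split(records, train_fraction=2 / 3, seed=0):
    """Shuffle a copy of the records and cut it into train and test parts."""
    rng = random.Random(seed)          # seeded so the split is repeatable
    shuffled = list(records)
    rng.shuffle(shuffled)
    cut = round(len(shuffled) * train_fraction)
    return shuffled[:cut], shuffled[cut:]


train, test = holdout_split(range(30))
print(len(train), len(test))
```

This plain shuffle does not preserve class proportions, so it is exposed to the over- and under-representation problem listed above.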

Methods of Estimation (Classifier Evaluation)
- Random subsampling
  - Repeated holdout
  - The overall accuracy is the average over all k holdouts
  - Again, training does not use as much data as possible
  - No control over the number of times each record is used for training and testing
- Cross-validation
  - Partition the data into k disjoint subsets
  - k-fold: train on k-1 partitions, test on the remaining one
  - Leave-one-out: k = n (n = number of records)
    - Has the advantage of using as much data as possible for training
    - Test sets are mutually exclusive and cover the whole data set
- Bootstrap
  - Sampling with replacement (computationally expensive)
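
The k-fold partitioning described above can be sketched as an index generator. The function name and the round-robin assignment of records to folds are illustrative assumptions; no shuffling is done, so the caller would shuffle first if record order matters.

```python
def k_fold_indices(n, k):
    """Yield (train_indices, test_indices) for each of the k folds."""
    if not 2 <= k <= n:
        raise ValueError("k must be between 2 and n")
    # Record i goes to fold i % k, so the folds are disjoint and cover 0..n-1.
    folds = [list(range(i, n, k)) for i in range(k)]
    for i, test in enumerate(folds):
        train = [idx for j, fold in enumerate(folds) if j != i for idx in fold]
        yield train, test


for train, test in k_fold_indices(6, 3):
    print("train:", train, "test:", test)
```

Calling `k_fold_indices(n, n)` gives leave-one-out: every test set holds exactly one record.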

Methods of Estimation (Classifier Evaluation)
- So far, all methods assume that training records are sampled without replacement
- Bootstrap
  - Sampling with replacement
  - A record already chosen for the training set is put back into the original data, so it may be chosen again
  - If the data set contains N records, a bootstrap sample of size N can be shown to include, on average, about 63.2% of the original records, since the probability that a record is chosen at least once is $1 - (1 - 1/N)^N \approx 1 - e^{-1} = 0.632$
  - Records that are not included in the bootstrap sample become part of the test set
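
The 63.2% figure follows from the chance that a given record is picked at least once in N draws with replacement. The sketch below evaluates that probability and draws one bootstrap sample; the function names and the fixed seed are illustrative assumptions.

```python
import math
import random


def expected_fraction_included(n):
    """Probability that a given record appears at least once in n draws."""
    return 1 - (1 - 1 / n) ** n


def bootstrap_split(records, seed=0):
    """Draw len(records) records with replacement; the rest form the test set."""
    rng = random.Random(seed)
    n = len(records)
    chosen = [rng.randrange(n) for _ in range(n)]
    chosen_set = set(chosen)
    train = [records[i] for i in chosen]
    test = [records[i] for i in range(n) if i not in chosen_set]
    return train, test


print(expected_fraction_included(1000), 1 - math.exp(-1))
train, test = bootstrap_split(list(range(1000)))
print(len(set(train)) / 1000)   # observed fraction of distinct records
```

The training sample has N entries but contains duplicates, so the number of distinct records in it is what approaches 63.2% of N.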

Model Evaluation
- Metrics for Performance Evaluation
  - How to evaluate the performance of a model?
- Methods for Performance Evaluation
  - How to obtain reliable estimates?
- Methods for Model Comparison
  - How to compare the relative performance among competing models?
  - Go to slide 101 (Test of Significance)

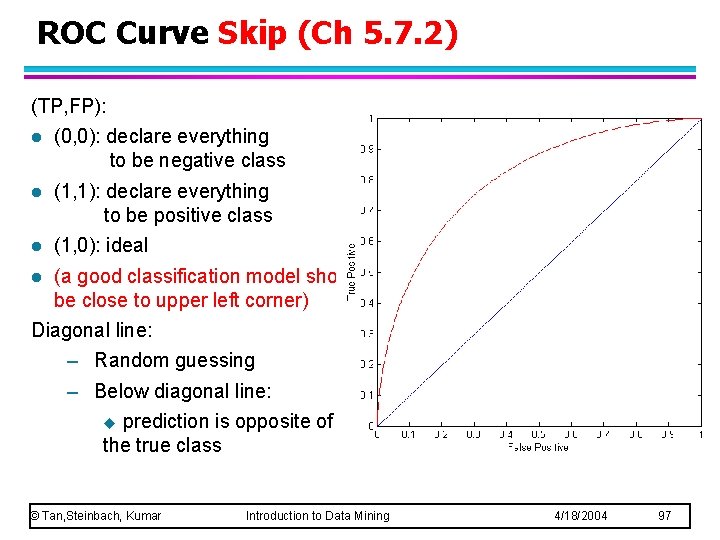

ROC (Receiver Operating Characteristic) Skip (Ch 5.7.2)
- Developed in the 1950s for signal detection theory to analyze noisy signals
  - Characterizes the trade-off between positive hits and false alarms (false positives)
- The ROC curve plots the TP rate (on the y-axis) against the FP rate (on the x-axis)
- The performance of each classifier is represented as a point on the ROC curve
  - Changing the threshold of the algorithm, the sample distribution, or the cost matrix changes the location of the point

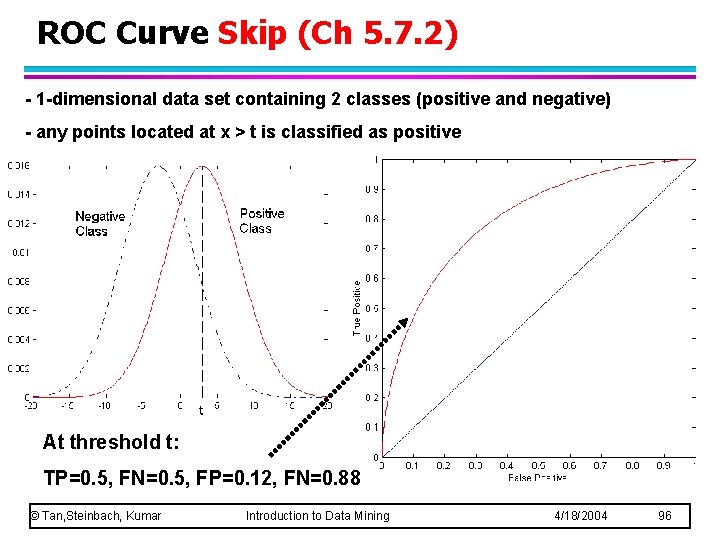

ROC Curve Skip (Ch 5.7.2)
- 1-dimensional data set containing 2 classes (positive and negative)
- Any point located at x > t is classified as positive
- At threshold t: TP = 0.5, FN = 0.5, FP = 0.12, TN = 0.88

ROC Curve Skip (Ch 5.7.2)

(TP, FP):
- (0, 0): declare everything to be the negative class
- (1, 1): declare everything to be the positive class
- (1, 0): ideal (a good classification model should be close to the upper left corner)
- Diagonal line: random guessing
- Below the diagonal line: prediction is opposite of the true class

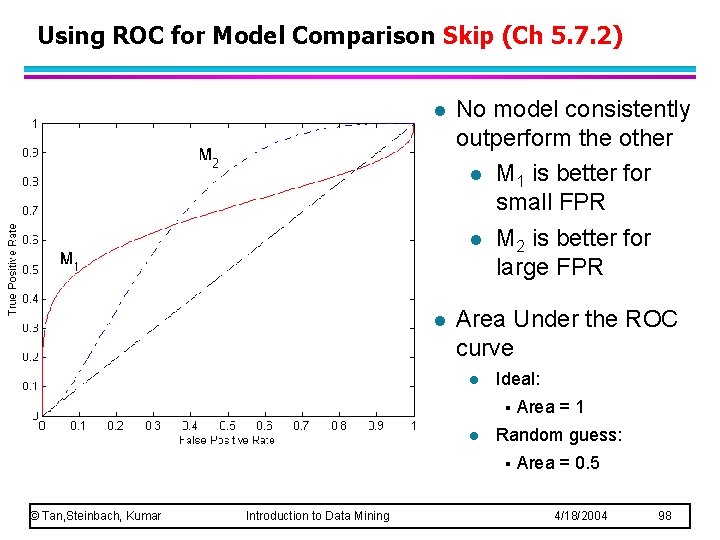

Using ROC for Model Comparison Skip (Ch 5.7.2)
- No model consistently outperforms the other
  - M1 is better for small FPR
  - M2 is better for large FPR
- Area under the ROC curve:
  - Ideal: Area = 1
  - Random guess: Area = 0.5
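
The two reference areas can be confirmed with the trapezoidal rule over the ROC points. In this sketch each point is written as `(FPR, TPR)`, that is x first, which is the reverse of the (TP, FP) ordering used on the earlier slide; the function name is an illustrative choice.

```python
def area_under_curve(points):
    """Trapezoidal area under a curve given as (FPR, TPR) points."""
    pts = sorted(points)
    area = 0.0
    for (x0, y0), (x1, y1) in zip(pts, pts[1:]):
        area += (x1 - x0) * (y0 + y1) / 2
    return area


ideal = [(0, 0), (0, 1), (1, 1)]    # passes through the upper left corner
random_guess = [(0, 0), (1, 1)]     # the diagonal
print(area_under_curve(ideal), area_under_curve(random_guess))
```

A single area summarizes a whole curve, so two models whose curves cross, like M1 and M2, can have similar areas while being better in different FPR ranges.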

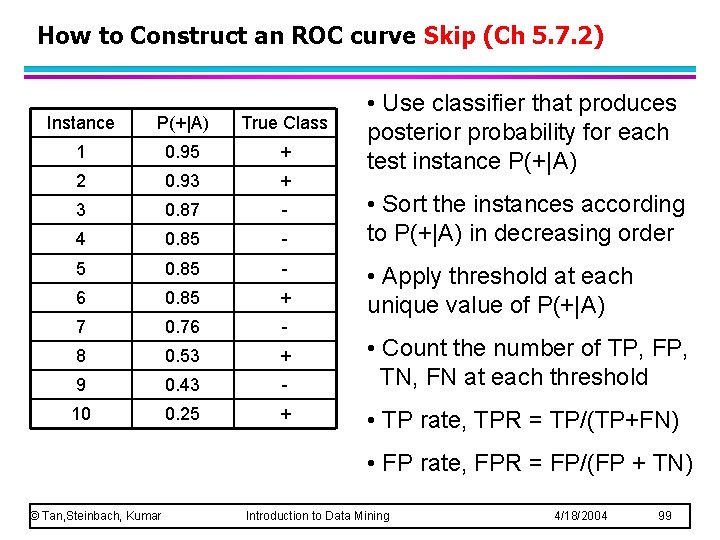

How to Construct an ROC curve Skip (Ch 5.7.2)

| Instance | P(+\|A) | True Class |
|---|---|---|
| 1 | 0.95 | + |
| 2 | 0.93 | + |
| 3 | 0.87 | - |
| 4 | 0.85 | - |
| 5 | 0.85 | - |
| 6 | 0.85 | + |
| 7 | 0.76 | - |
| 8 | 0.53 | + |
| 9 | 0.43 | - |
| 10 | 0.25 | + |

- Use a classifier that produces a posterior probability P(+|A) for each test instance
- Sort the instances according to P(+|A) in decreasing order
- Apply a threshold at each unique value of P(+|A)
- Count the number of TP, FP, TN, FN at each threshold
- TP rate, TPR = TP/(TP + FN)
- FP rate, FPR = FP/(FP + TN)
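
The steps above can be sketched on the ten instances from the table. An instance is predicted positive when its score is greater than or equal to the threshold; the function and variable names are illustrative assumptions.

```python
# P(+|A) and true class for instances 1..10, copied from the table.
SCORES = [0.95, 0.93, 0.87, 0.85, 0.85, 0.85, 0.76, 0.53, 0.43, 0.25]
LABELS = ["+", "+", "-", "-", "-", "+", "-", "+", "-", "+"]


def roc_points(scores, labels, positive="+"):
    """Return (threshold, TPR, FPR) for each unique score, highest first."""
    points = []
    for t in sorted(set(scores), reverse=True):
        tp = fp = tn = fn = 0
        for score, label in zip(scores, labels):
            predicted_positive = score >= t
            if predicted_positive and label == positive:
                tp += 1
            elif predicted_positive:
                fp += 1
            elif label == positive:
                fn += 1
            else:
                tn += 1
        points.append((t, tp / (tp + fn), fp / (fp + tn)))
    return points


for t, tpr, fpr in roc_points(SCORES, LABELS):
    print(f"threshold >= {t:.2f}  TPR = {tpr:.1f}  FPR = {fpr:.1f}")
```

The three instances tied at 0.85 enter together, so the curve moves diagonally at that threshold instead of taking separate horizontal and vertical steps.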

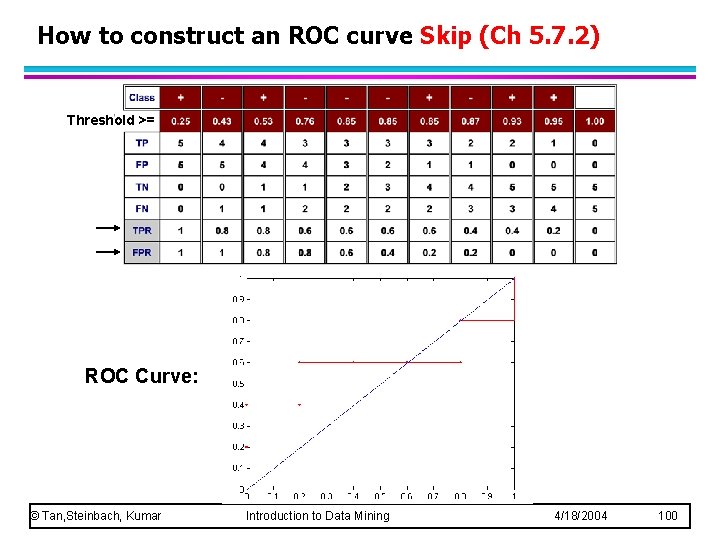

How to construct an ROC curve Skip (Ch 5.7.2)

[Figure: counts at each threshold (Threshold >=) and the resulting ROC curve]

Test of Significance (Comparing Classifiers)
- Need to compare the performance of classifiers on a given data set
- The difference in accuracy may or may not be significant, depending on the size of the data
- Given two models:
  - Model M1: accuracy = 85%, tested on 30 instances
  - Model M2: accuracy = 75%, tested on 5000 instances
- Can we say M1 is better than M2?
  - How much confidence can we place on the accuracy of M1 and M2? (the issue of a confidence interval for accuracy)
  - Can the difference in performance be explained as a result of random fluctuations (variations) in the composition of the test sets? (the issue of testing the statistical significance of the observed variation)

Confidence Interval for Accuracy
- Need to establish the probability distribution that governs the accuracy measure
- The classification task is modeled as a binomial experiment
- A prediction can be regarded as a Bernoulli trial
  - A Bernoulli trial has 2 possible outcomes
  - Possible outcomes for a prediction: correct or wrong
  - The probability of success, p, is constant
- A collection of Bernoulli trials has a binomial distribution:
  - x ~ Bin(N, p), where x is the number of correct predictions and N is the number of trials
  - e.g., toss a fair coin (p = 0.5) 50 times; how many heads would turn up? Expected (average) number of heads = N × p = 50 × 0.5 = 25

Confidence Interval for Accuracy
- The task of predicting the class labels of test records can be seen as a binomial experiment
- Let
  - N = number of records in the test set
  - X = number of records correctly predicted
  - p = true accuracy of the model
- X has a binomial distribution with mean = Np and variance = Np(1 - p)
- Empirical accuracy: acc = X / N
- Can we predict p (the true accuracy of the model)?

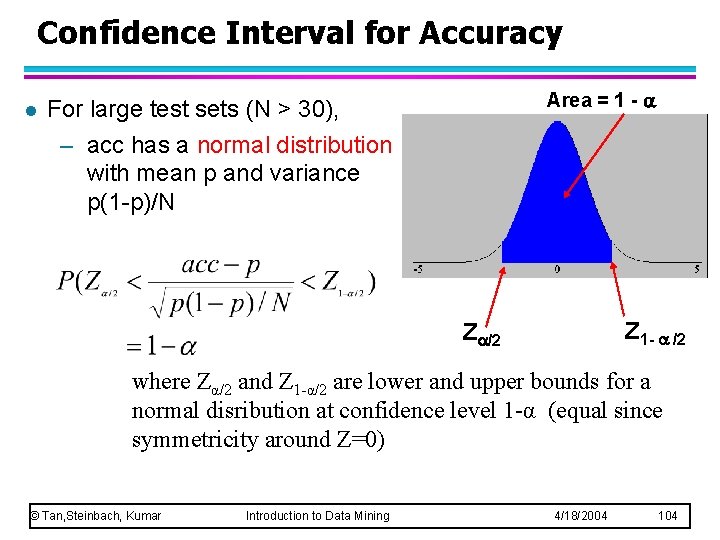

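Confidence Interval for Accuracy
- For large test sets (N > 30), acc has a normal distribution with mean p and variance p(1 - p)/N:

$$P\left(Z_{\alpha/2} < \frac{acc - p}{\sqrt{p(1-p)/N}} < Z_{1-\alpha/2}\right) = 1 - \alpha$$

- $Z_{\alpha/2}$ and $Z_{1-\alpha/2}$ are the lower and upper bounds of the standard normal distribution at confidence level $1 - \alpha$ (equal in magnitude, because the distribution is symmetric around Z = 0)
- In the figure, the area under the normal curve between $Z_{\alpha/2}$ and $Z_{1-\alpha/2}$ is $1 - \alpha$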

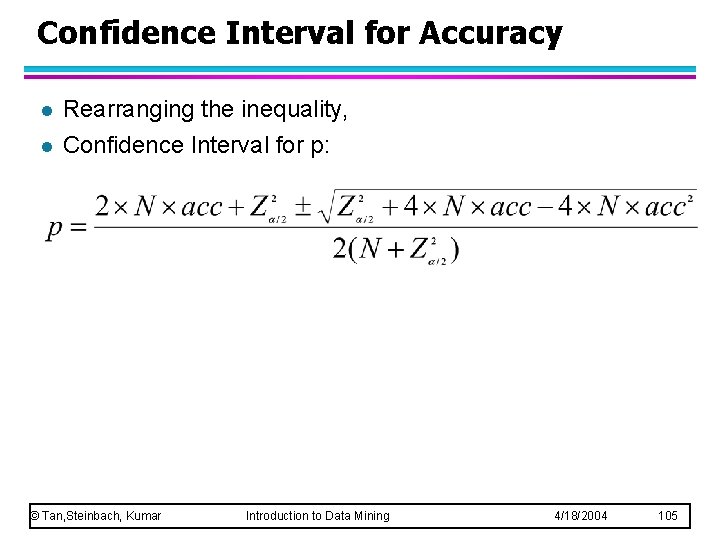

Confidence Interval for Accuracy
- Rearranging the inequality gives the confidence interval for p:

$$p = \frac{2\,N\,acc + Z_{\alpha/2}^2 \pm Z_{\alpha/2}\sqrt{Z_{\alpha/2}^2 + 4\,N\,acc - 4\,N\,acc^2}}{2\,(N + Z_{\alpha/2}^2)}$$

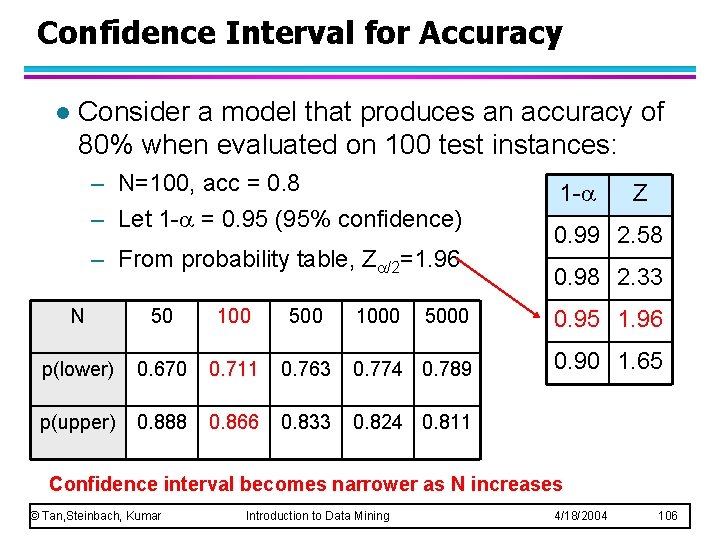

Confidence Interval for Accuracy
- Consider a model that produces an accuracy of 80% when evaluated on 100 test instances:
  - N = 100, acc = 0.8
  - Let $1 - \alpha = 0.95$ (95% confidence)
  - From the probability table, $Z_{\alpha/2} = 1.96$

| $1 - \alpha$ | Z |
|---|---|
| 0.99 | 2.58 |
| 0.98 | 2.33 |
| 0.95 | 1.96 |
| 0.90 | 1.65 |

| N | 50 | 100 | 500 | 1000 | 5000 |
|---|---|---|---|---|---|
| p(lower) | 0.670 | 0.711 | 0.763 | 0.774 | 0.789 |
| p(upper) | 0.888 | 0.866 | 0.833 | 0.824 | 0.811 |

- The confidence interval becomes narrower as N increases
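
The bounds in the table can be recomputed from acc, N, and Z. The sketch below assumes the interval obtained by solving the normal-approximation inequality for p (the Wilson score form); the function name is an illustrative choice.

```python
import math


def accuracy_interval(acc, n, z):
    """Confidence interval for the true accuracy p, given acc on n records."""
    z2 = z * z
    centre = 2 * n * acc + z2
    spread = z * math.sqrt(z2 + 4 * n * acc - 4 * n * acc * acc)
    denom = 2 * (n + z2)
    return (centre - spread) / denom, (centre + spread) / denom


# acc = 0.8 at 95% confidence (Z = 1.96), for the test-set sizes in the table.
for n in (50, 100, 500, 1000, 5000):
    lower, upper = accuracy_interval(0.8, n, 1.96)
    print(n, lower, upper)
```

The computed bounds agree with the table to within about 0.001, and the interval narrows as N grows.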

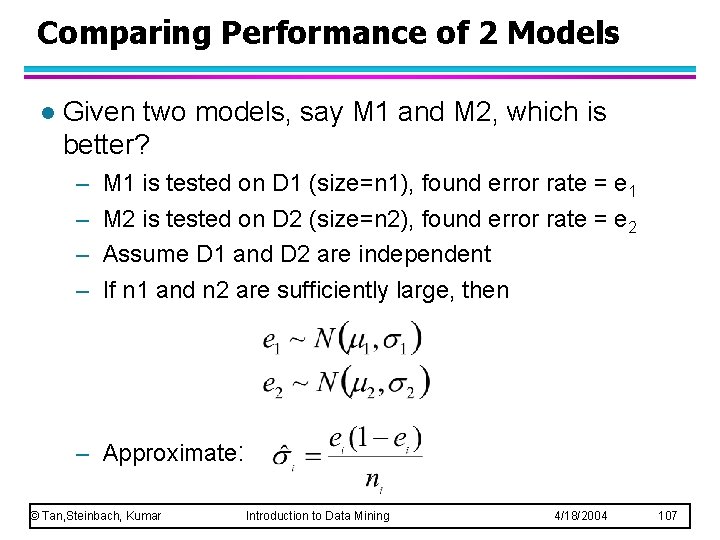

Comparing Performance of 2 Models
- Given two models, say M1 and M2, which is better?
  - M1 is tested on D1 (size = n1), found error rate = e1
  - M2 is tested on D2 (size = n2), found error rate = e2
  - Assume D1 and D2 are independent
  - If n1 and n2 are sufficiently large, then $e_1 \sim N(\mu_1, \sigma_1^2)$ and $e_2 \sim N(\mu_2, \sigma_2^2)$
  - Approximate: $\hat{\sigma}_i^2 = \frac{e_i (1 - e_i)}{n_i}$

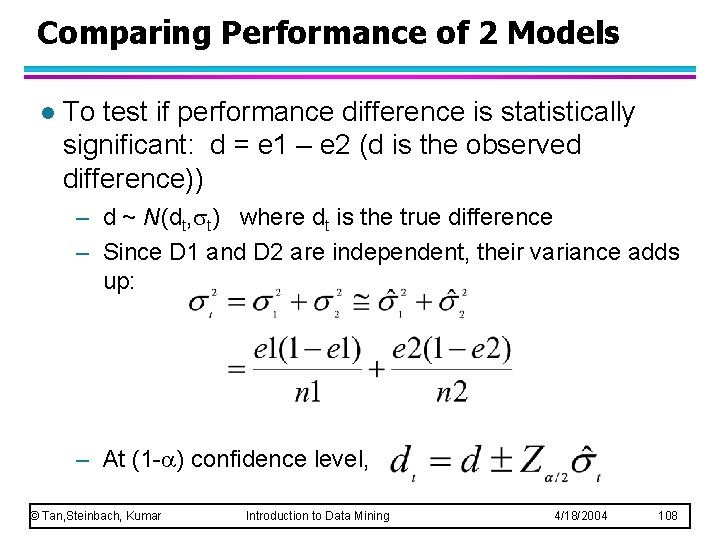

Comparing Performance of 2 Models
- To test if the performance difference is statistically significant: d = e1 - e2 (d is the observed difference)
  - $d \sim N(d_t, \sigma_t^2)$, where $d_t$ is the true difference
  - Since D1 and D2 are independent, their variances add up:

$$\sigma_t^2 = \sigma_1^2 + \sigma_2^2 \approx \hat{\sigma}_1^2 + \hat{\sigma}_2^2 = \frac{e_1 (1 - e_1)}{n_1} + \frac{e_2 (1 - e_2)}{n_2}$$

  - At the $(1 - \alpha)$ confidence level, $d_t = d \pm Z_{\alpha/2}\,\hat{\sigma}_t$

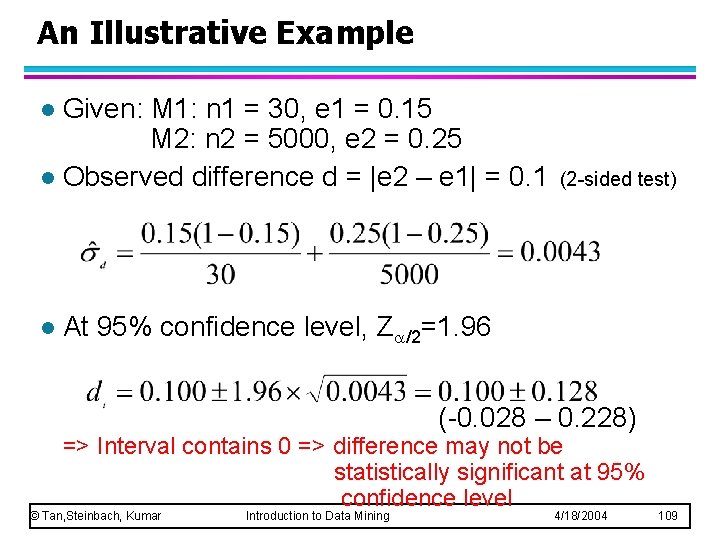

An Illustrative Example
- Given: M1: n1 = 30, e1 = 0.15; M2: n2 = 5000, e2 = 0.25
- Observed difference d = |e2 - e1| = 0.1 (2-sided test)
- Estimated variance: $\hat{\sigma}_t^2 = \frac{0.15\,(1 - 0.15)}{30} + \frac{0.25\,(1 - 0.25)}{5000} = 0.0043$
- At the 95% confidence level, $Z_{\alpha/2} = 1.96$: $d_t = 0.100 \pm 1.96 \times \sqrt{0.0043} = 0.100 \pm 0.128$
- The interval (-0.028, 0.228) contains 0, so the difference may not be statistically significant at the 95% confidence level
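
The interval in this example can be recomputed from the two error rates and test-set sizes. This is a minimal sketch of the normal-approximation interval for the difference; the function name is an illustrative choice.

```python
import math


def difference_interval(e1, n1, e2, n2, z):
    """Confidence interval for the true difference between two error rates."""
    d = abs(e2 - e1)
    # Independent test sets: the variance of the difference is the sum.
    variance = e1 * (1 - e1) / n1 + e2 * (1 - e2) / n2
    half_width = z * math.sqrt(variance)
    return d - half_width, d + half_width


lower, upper = difference_interval(0.15, 30, 0.25, 5000, 1.96)
print(lower, upper)
print("interval contains 0:", lower <= 0 <= upper)
```

Almost all of the variance comes from M1's 30-instance test set, which is why a 10-point gap in error rate is still not significant here.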

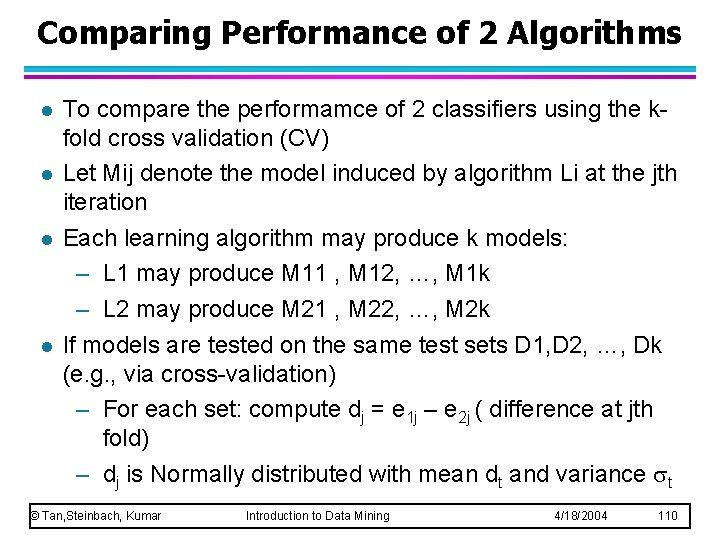

Comparing Performance of 2 Algorithms
- To compare the performance of 2 classifiers using k-fold cross-validation (CV):
  - Let Mij denote the model induced by algorithm Li at the jth iteration
- Each learning algorithm may produce k models:
  - L1 may produce M11, M12, …, M1k
  - L2 may produce M21, M22, …, M2k
- If the models are tested on the same test sets D1, D2, …, Dk (e.g., via cross-validation):
  - For each set: compute dj = e1j - e2j (difference at the jth fold)
  - dj is normally distributed with mean $d_t$ and variance $\sigma_t^2$

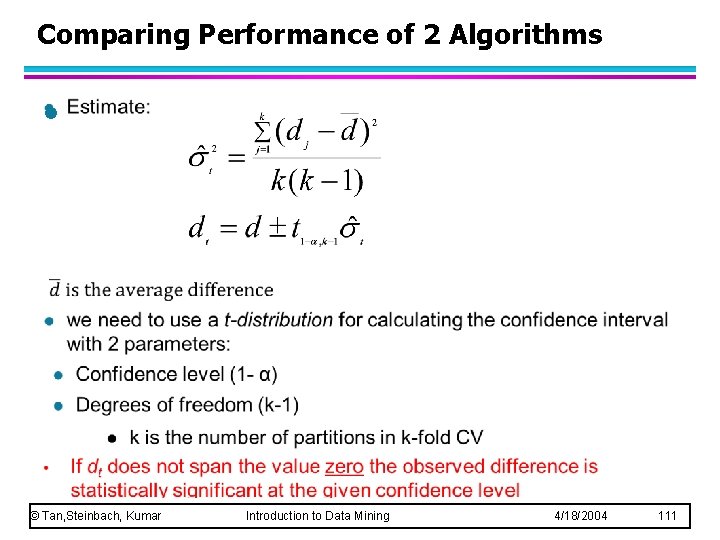

Comparing Performance of 2 Algorithms
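
One common way to finish this paired comparison is sketched below, under stated assumptions: the variance of the mean difference is estimated as the sum of squared deviations of the per-fold differences divided by k(k - 1), and the interval uses a Student t critical value with k - 1 degrees of freedom. The per-fold differences are hypothetical, and `t_crit` is passed in by the caller from a t-table (2.776 is the two-sided 95% value for 4 degrees of freedom).

```python
import math


def paired_cv_interval(diffs, t_crit):
    """Confidence interval for the true mean difference across k folds."""
    k = len(diffs)
    d_bar = sum(diffs) / k
    # Estimated variance of the mean difference over the k folds.
    var_hat = sum((d - d_bar) ** 2 for d in diffs) / (k * (k - 1))
    half_width = t_crit * math.sqrt(var_hat)
    return d_bar - half_width, d_bar + half_width


# Hypothetical differences e1j - e2j from a 5-fold cross-validation.
diffs = [0.02, 0.04, 0.03, 0.01, 0.05]
lower, upper = paired_cv_interval(diffs, t_crit=2.776)
print(lower, upper)
```

As in the two-model case, the difference is treated as significant at the chosen level only when the interval excludes 0.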
