Data Mining Assignments
Erik Zeitler
Uppsala Database Laboratory


Oral exam
§ Two parts:
  1. Validation
    § Your solution is validated using a script
      • If your implementation does not work, the examination ends immediately (a “fail” grade is given); you may redo the examination later
  2. Discussion
    § Bring one form per student
    § The instructor will ask questions
      • about your implementation
      • about the method
    § All group members must be able to answer
§ Group members can get different grades on the same assignment
2020-10-06, Erik Zeitler

Grades

What you need to do
§ Sign up for labs and examination
  • Groups of 2–4 students
  • Forms are on the board outside 1321
§ Implement a solution
  • Deadline: submit by e-mail no later than 24 h before your examination
    • Assignment 1: erik.zeitler@it.uu.se
    • Assignments 2, 3, 4: gyozo.gidofalvi@it.uu.se
§ Answer the questions on the form
  • Bring one printed form per student to the examination
§ Prepare for the discussion
  • Understand the theory

The k-Nearest Neighbor Algorithm (kNN)

kNN use cases
§ GIS queries:
  • “Tell me where the 5 nearest restaurants are”
§ Classifier queries:
  • “To classify a data point, look at the 5 nearest data points of known class belonging”

kNN classifier intuition
§ If you don’t know what you are,
§ look at the nearest ones around you:
§ you are probably of the same kind

Classifying you using kNN
§ Each one of you belongs to a group:
  • [F|STS|Int Masters|Exchange Students|Other]
§ Classify yourself using 1-NN and 3-NN
  • Look at your k nearest neighbors!
§ How do we select our distance measure?
§ How do we decide which of 1-NN and 3-NN is best?

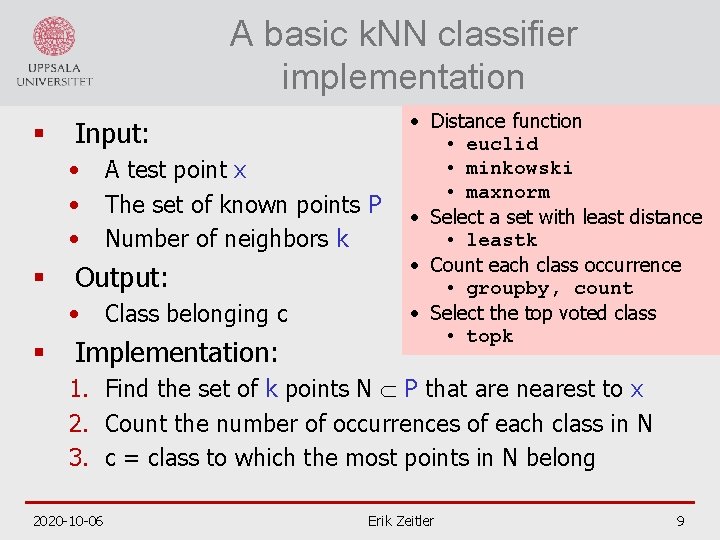

A basic kNN classifier implementation
§ Input:
  • A test point x
  • The set of known points P
  • Number of neighbors k
§ Output:
  • Class belonging c
§ Implementation:
  1. Find the set of k points N ⊆ P that are nearest to x
  2. Count the number of occurrences of each class in N
  3. c = class to which the most points in N belong
§ Useful functions:
  • Distance function: euclid, minkowski, maxnorm
  • Select a set with least distance: leastk
  • Count each class occurrence: groupby, count
  • Select the top voted class: topk
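For reference, the three distance functions listed above can be sketched in plain Python. These are the standard textbook definitions; the Python names only mirror euclid, minkowski and maxnorm, and their signatures (including the explicit exponent p for minkowski) are assumptions, not the Amos II API.

```python
import math
from typing import Sequence

Vector = Sequence[float]


def euclid(a: Vector, b: Vector) -> float:
    """Euclidean (L2) distance: square root of the summed squared differences."""
    return math.sqrt(sum((ai - bi) ** 2 for ai, bi in zip(a, b)))


def minkowski(a: Vector, b: Vector, p: float) -> float:
    """Minkowski (Lp) distance; p = 1 gives Manhattan, p = 2 gives Euclidean."""
    if p < 1:
        raise ValueError("p must be >= 1 for the result to be a metric")
    return sum(abs(ai - bi) ** p for ai, bi in zip(a, b)) ** (1.0 / p)


def maxnorm(a: Vector, b: Vector) -> float:
    """Maximum-norm (Chebyshev, L-infinity) distance: largest per-dimension difference."""
    return max(abs(ai - bi) for ai, bi in zip(a, b))


if __name__ == "__main__":
    x, y = (0.0, 0.0), (3.0, 4.0)
    print(euclid(x, y), minkowski(x, y, 1), maxnorm(x, y))  # prints: 5.0 7.0 4.0
```

As p grows, minkowski approaches maxnorm, so the three measures differ mainly in how strongly a single large per-dimension difference dominates the distance.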

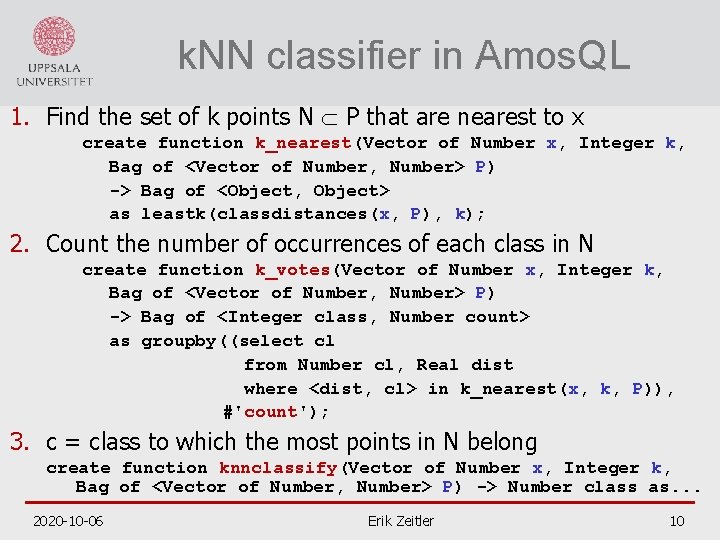

kNN classifier in AmosQL
1. Find the set of k points N ⊆ P that are nearest to x

    create function k_nearest(Vector of Number x, Integer k,
                              Bag of <Vector of Number, Number> P)
                           -> Bag of <Object, Object>
      as leastk(classdistances(x, P), k);

2. Count the number of occurrences of each class in N

    create function k_votes(Vector of Number x, Integer k,
                            Bag of <Vector of Number, Number> P)
                         -> Bag of <Integer class, Number count>
      as groupby((select cl
                    from Number cl, Real dist
                   where <dist, cl> in k_nearest(x, k, P)),
                 #'count');

3. c = class to which the most points in N belong

    create function knnclassify(Vector of Number x, Integer k,
                                Bag of <Vector of Number, Number> P)
                             -> Number class
      as ...
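A Python sketch that mirrors the three AmosQL functions step by step may help when reasoning about the skeleton. It is an illustration, not the intended AmosQL solution. Assumptions: P is a list of (coordinates, class) pairs, Euclidean distance stands in for classdistances, and the tie rule in knnclassify is a choice made for the sketch.

```python
import heapq
import math
from collections import Counter
from typing import Hashable, List, Sequence, Tuple

# Hypothetical representation of P: a list of (coordinates, class) pairs,
# mirroring the AmosQL type Bag of <Vector of Number, Number>.
Known = List[Tuple[Sequence[float], Hashable]]


def k_nearest(x: Sequence[float], k: int, P: Known) -> List[Tuple[float, Hashable]]:
    """Step 1: the k (distance, class) pairs closest to x, nearest first (cf. leastk)."""
    pairs = ((math.dist(x, coords), cls) for coords, cls in P)
    return heapq.nsmallest(k, pairs, key=lambda pair: pair[0])


def k_votes(x: Sequence[float], k: int, P: Known) -> Counter:
    """Step 2: occurrences of each class among the k nearest (cf. groupby with count)."""
    return Counter(cls for _dist, cls in k_nearest(x, k, P))


def knnclassify(x: Sequence[float], k: int, P: Known) -> Hashable:
    """Step 3: the class with the most votes.

    Counter.most_common keeps first-encountered order for equal counts, and
    k_votes sees classes nearest first, so a tie goes to the tied class whose
    closest member is nearest to x (a choice made for this sketch)."""
    return k_votes(x, k, P).most_common(1)[0][0]
```

With an even k, ties between classes are possible, so whatever the final AmosQL version does at step 3 should handle them deliberately.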

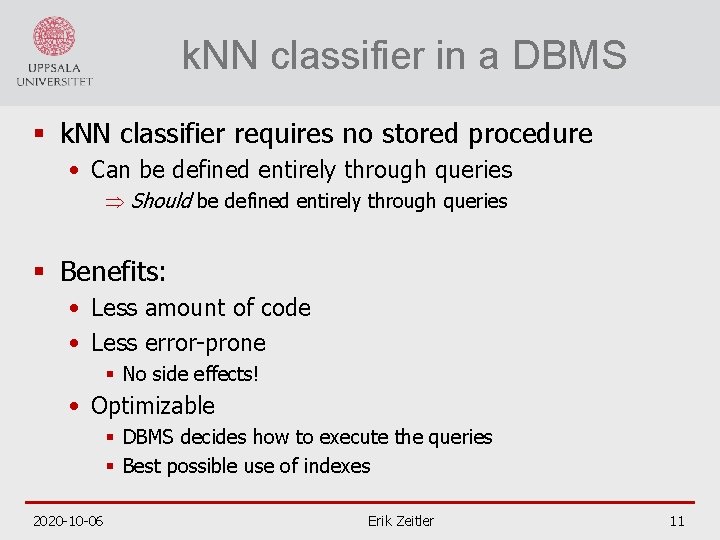

kNN classifier in a DBMS
§ A kNN classifier requires no stored procedure
  • It can be defined entirely through queries ⇒ it should be defined entirely through queries
§ Benefits:
  • Less code
  • Less error-prone
    § No side effects!
  • Optimizable
    § The DBMS decides how to execute the queries
    § Best possible use of indexes
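To illustrate the claim that kNN needs no stored procedure, here are steps 1 to 3 written as one declarative query and run through Python's built-in sqlite3 module. This swaps in SQL for AmosQL purely for illustration; the table name points, its columns, the six data points, and the alphabetical tie-break are all hypothetical.

```python
import sqlite3
from typing import Optional

# Hypothetical schema: one row per known 2-D point and its class.
SCHEMA = "CREATE TABLE points (px REAL, py REAL, cls TEXT)"

# Steps 1-3 as a single query. Squared Euclidean distance gives the same
# ordering as Euclidean distance, so no square root is needed.
KNN_QUERY = """
    SELECT cls
    FROM (SELECT cls
          FROM points
          ORDER BY (px - :x) * (px - :x) + (py - :y) * (py - :y)
          LIMIT :k)
    GROUP BY cls
    ORDER BY COUNT(*) DESC, cls   -- ties broken alphabetically (a sketch choice)
    LIMIT 1
"""


def knn_classify_sql(conn: sqlite3.Connection, x: float, y: float, k: int) -> Optional[str]:
    """Return the majority class among the k nearest stored points, or None if empty."""
    row = conn.execute(KNN_QUERY, {"x": x, "y": y, "k": k}).fetchone()
    return row[0] if row else None


def demo_connection() -> sqlite3.Connection:
    """In-memory database with six made-up points in two classes."""
    conn = sqlite3.connect(":memory:")
    conn.execute(SCHEMA)
    conn.executemany(
        "INSERT INTO points VALUES (?, ?, ?)",
        [(1, 1, "A"), (1, 2, "A"), (2, 1, "A"),
         (8, 8, "B"), (8, 9, "B"), (9, 8, "B")],
    )
    return conn
```

Because the whole classifier is one query, the engine is free to choose how to evaluate it; how much an index can help with the distance ordering depends on what kinds of indexes the DBMS offers.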

More kNN classifier intuition
§ If it walks and sounds like a duck, then it must be a duck
§ If it walks and sounds like a cow, then it must be a cow

Walking and talking
§ Assume that a duck
  • has a step length of 5–15 cm
  • quacks at 600–700 Hz
§ Assume that a cow
  • has a step length of 30–60 cm
  • moos at 100–200 Hz

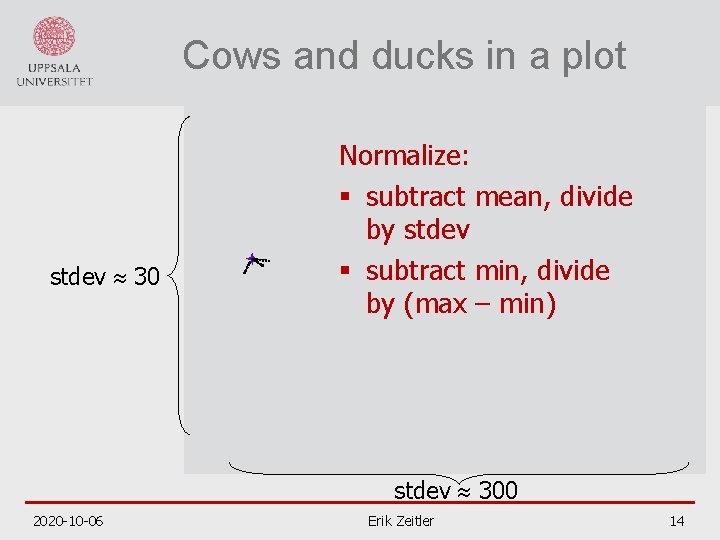

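Cows and ducks in a plot
(Plot annotations: stdev 30, stdev 300)
With spreads this different, the dimension with stdev 300 dominates the Euclidean distance unless the data are normalized.
Normalize:
§ subtract mean, divide by stdev
§ subtract min, divide by (max - min)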
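The effect of scale can be made concrete: in raw units, almost all of the squared Euclidean distance between a duck and a cow comes from the frequency axis. The sketch below applies min-max normalization (the second option above) and measures each axis's share with a hypothetical helper, axis_shares. The four data points are made up, chosen inside the duck and cow ranges given earlier.

```python
from typing import List, Sequence, Tuple


def minmax(points: Sequence[Sequence[float]]) -> List[Tuple[float, ...]]:
    """Min-max normalization: subtract the per-dimension min, divide by (max - min).

    A constant dimension (max == min) would divide by zero; not handled here."""
    cols = list(zip(*points))
    lo = [min(c) for c in cols]
    hi = [max(c) for c in cols]
    return [tuple((v - l) / (h - l) for v, l, h in zip(p, lo, hi)) for p in points]


def axis_shares(a: Sequence[float], b: Sequence[float]) -> List[float]:
    """Fraction of the squared Euclidean distance between a and b contributed by each axis."""
    sq = [(ai - bi) ** 2 for ai, bi in zip(a, b)]
    total = sum(sq)
    return [s / total for s in sq]


# Made-up (step length in cm, frequency in Hz) points: two ducks, then two cows.
animals = [(10, 650), (8, 620), (45, 150), (35, 120)]

raw = axis_shares(animals[0], animals[2])        # frequency share is about 0.995
scaled = minmax(animals)
normalized = axis_shares(scaled[0], scaled[2])   # both shares are close to 0.5
```

Z-score normalization (the first option) would balance the axes in a similar way here; which one suits the assignment data is worth checking against its actual ranges.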

Enter the chicken

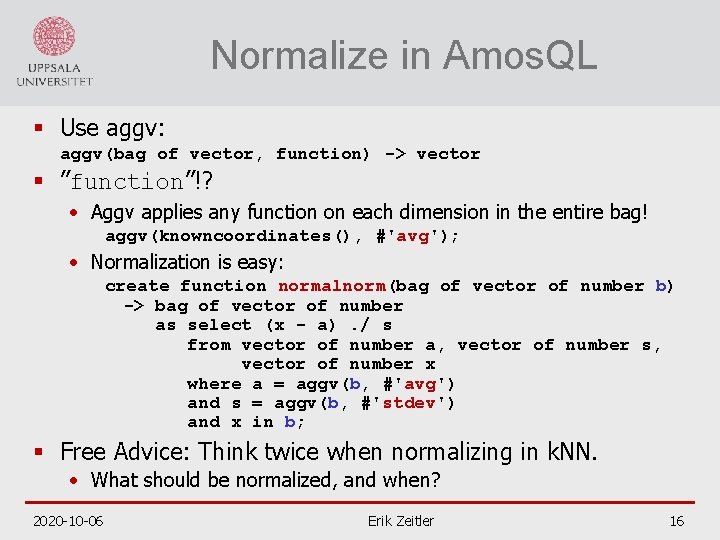

Normalize in AmosQL
§ Use aggv: aggv(bag of vector, function) -> vector
§ “function”!?
  • aggv applies the given function to each dimension across the entire bag!

    aggv(knowncoordinates(), #'avg');

  • Normalization is easy:

    create function normalnorm(bag of vector of number b)
                            -> bag of vector of number
      as select (x - a) ./ s
           from vector of number a, vector of number s, vector of number x
          where a = aggv(b, #'avg')
            and s = aggv(b, #'stdev')
            and x in b;

§ Free advice: think twice when normalizing in kNN.
  • What should be normalized, and when?
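aggv is a higher-order aggregate: it takes a function as an argument and applies it column by column. A Python analogue with names mirroring the slide makes that behavior explicit. Assumptions: a bag is a list of equal-length vectors, and stdev is the sample standard deviation (statistics.stdev), which may not match how Amos II's #'stdev' is defined.

```python
from statistics import mean, stdev
from typing import Callable, List, Sequence


def aggv(bag: Sequence[Sequence[float]], fn: Callable[[Sequence[float]], float]) -> List[float]:
    """Apply the aggregate function fn to each dimension (column) across the whole bag."""
    return [fn(column) for column in zip(*bag)]


def normalnorm(bag: Sequence[Sequence[float]]) -> List[List[float]]:
    """Compute (x - a) ./ s for each x, with a = per-dimension mean, s = per-dimension stdev."""
    a = aggv(bag, mean)
    s = aggv(bag, stdev)  # sample stdev; Amos II's #'stdev' may divide by n instead
    return [[(xi - ai) / si for xi, ai, si in zip(x, a, s)] for x in bag]
```

Any one-argument aggregate works with aggv, for example the built-in max or min, which is what makes the same pattern reusable for min-max normalization.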

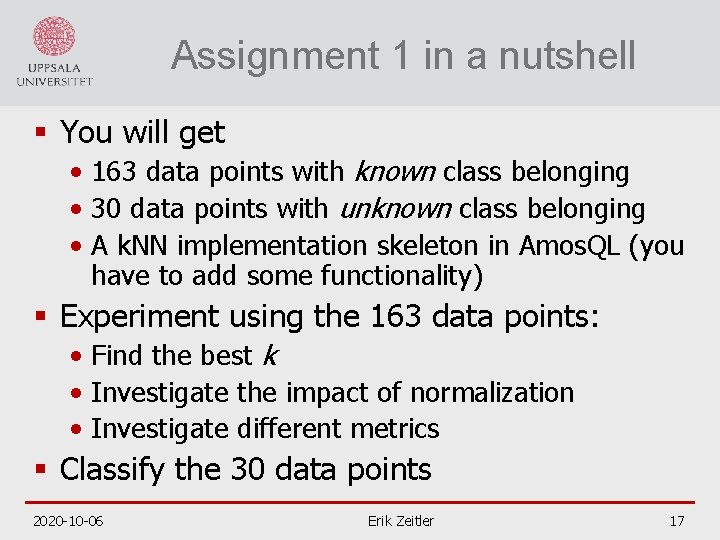

Assignment 1 in a nutshell
§ You will get
  • 163 data points with known class belonging
  • 30 data points with unknown class belonging
  • A kNN implementation skeleton in AmosQL (you have to add some functionality)
§ Experiment using the 163 data points:
  • Find the best k
  • Investigate the impact of normalization
  • Investigate different metrics
§ Classify the 30 data points

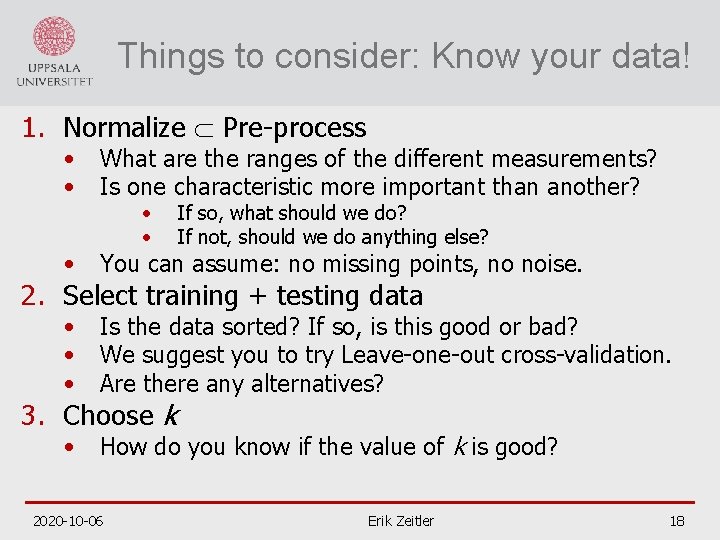

Things to consider: Know your data!
1. Pre-process
  • Normalize
    • What are the ranges of the different measurements?
    • Is one characteristic more important than another?
      • If so, what should we do?
      • If not, should we do anything else?
  • You can assume: no missing points, no noise.
2. Select training + testing data
  • Is the data sorted? If so, is this good or bad?
  • We suggest that you try leave-one-out cross-validation. Are there any alternatives?
3. Choose k
  • How do you know if the value of k is good?
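A minimal leave-one-out sketch for comparing values of k, with hypothetical helper names, Euclidean distance, and no normalization (where and how to normalize is one of the questions above, so the sketch leaves it out). Each point is classified using only the remaining points, and the fraction of correct answers is reported.

```python
import heapq
import math
from collections import Counter
from typing import Hashable, Iterable, List, Sequence, Tuple

Known = List[Tuple[Sequence[float], Hashable]]  # (coordinates, class) pairs


def knn_classify(x: Sequence[float], k: int, points: Known) -> Hashable:
    """Majority vote among the k nearest points (Euclidean distance)."""
    nearest = heapq.nsmallest(k, points, key=lambda p: math.dist(x, p[0]))
    return Counter(cls for _coords, cls in nearest).most_common(1)[0][0]


def loocv_accuracy(points: Known, k: int) -> float:
    """Leave-one-out: classify each point using all the *other* points."""
    hits = 0
    for i, (coords, cls) in enumerate(points):
        rest = points[:i] + points[i + 1:]
        if knn_classify(coords, k, rest) == cls:
            hits += 1
    return hits / len(points)


def best_k(points: Known, candidates: Iterable[int]) -> int:
    """The candidate k with the highest LOOCV accuracy (earliest candidate wins ties)."""
    return max(candidates, key=lambda k: loocv_accuracy(points, k))
```

For n known points this costs about n·(n − 1) distance computations per candidate k, which is small for 163 points.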

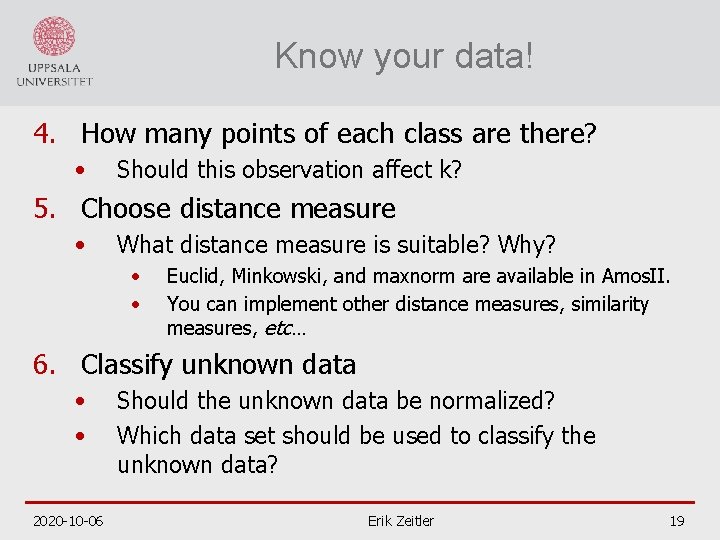

Know your data!
4. How many points of each class are there?
  • Should this observation affect k?
5. Choose distance measure
  • What distance measure is suitable? Why?
  • Euclid, Minkowski, and maxnorm are available in Amos II.
  • You can implement other distance measures, similarity measures, etc.
6. Classify unknown data
  • Should the unknown data be normalized?
  • Which data set should be used to classify the unknown data?
