CS 347: Distributed Databases and Transaction Processing
Data Replication
Hector Garcia-Molina
CS 347 Notes 08

Replication Space
• Updates
  – at any copy
  – at fixed (primary) copy
  – at one copy but control can migrate
  – no updates

Replication Space
• Correctness
  – no consistency
  – local consistency
  – order preserving
  – serializable schedule
  – 1-copy serializability

Replication Space
• Expected Failures
  – processors: fail-stop, byzantine?
  – network: reliable, partitions, in-order msgs?
  – storage: stable disk?

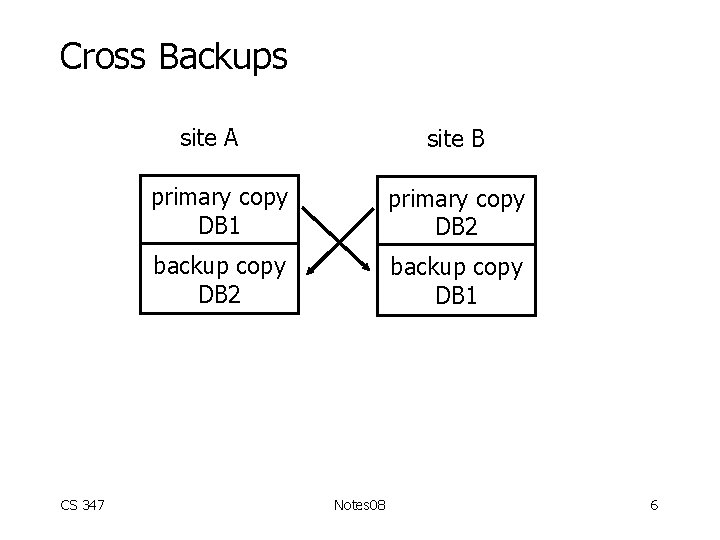

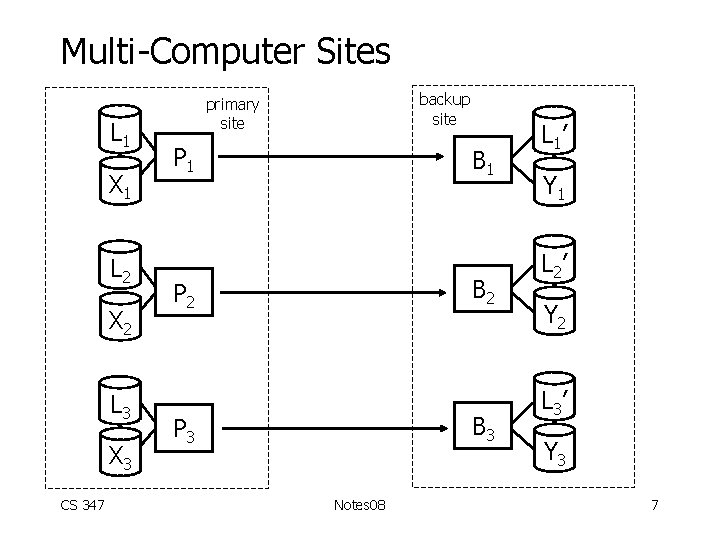

Replication Space
• Implementation Details
  – update propagation
    • physical log records
    • logical log records
    • SQL updates
    • transactions
  – reads at backup?
  – architecture
    • cross backups
    • multi-computer copy
  – initialization of backup copy

Cross Backups
[Figure: site A and site B. Labels: primary copy DB 1, primary copy DB 2, backup copy DB 1]

Multi-Computer Sites
[Figure: primary site and backup site. Labels: L1 X1, L2 X2, L3 X3; P1, P2, P3; B2, B3; L1' Y1, L2' Y2, L3' Y3]

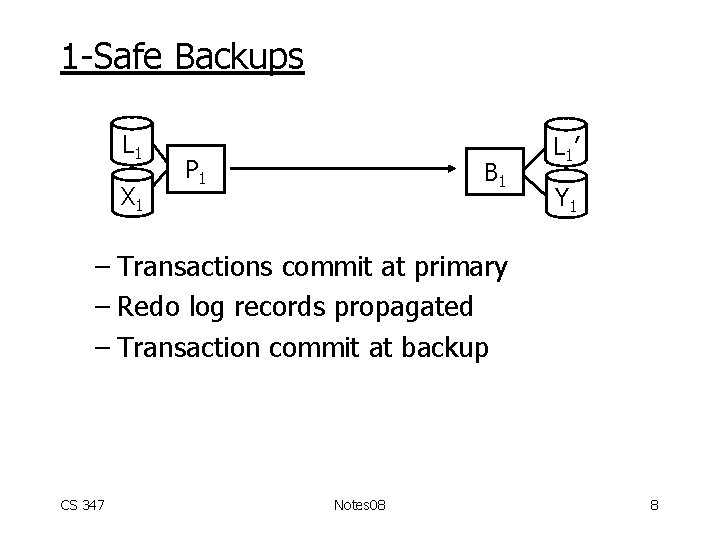

1-Safe Backups
– Transactions commit at primary
– Redo log records propagated
– Transaction commit at backup
[Figure: primary P1 (L1, X1) and backup B1 (L1', Y1)]

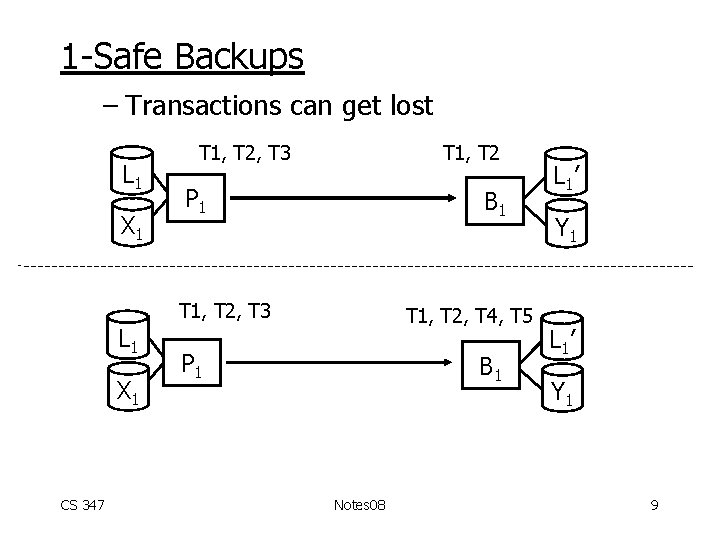

1-Safe Backups
– Transactions can get lost
[Figure, two panels: primary P1 (L1, X1) and backup B1 (L1', Y1). First panel labels: T1, T2, T3 and T1, T2. Second panel labels: T1, T2, T3 and T1, T2, T4, T5]
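
The loss can be illustrated with a toy model. This is a minimal sketch, not the protocol from the notes: the class `OneSafePrimary` and the helper `lost_on_failover` are hypothetical names, and log shipping is reduced to copying list entries.

```python
class OneSafePrimary:
    """Toy 1-safe primary: commits locally, ships redo log records later."""

    def __init__(self):
        self.log = []      # redo records of locally committed transactions
        self.shipped = 0   # how many log records the backup has received

    def commit(self, txn):
        # 1-safe: the commit completes without waiting for the backup.
        self.log.append(txn)

    def ship(self, backup_log, count=None):
        # Propagate up to `count` unshipped records (all of them if None).
        if count is None:
            end = len(self.log)
        else:
            end = min(len(self.log), self.shipped + count)
        backup_log.extend(self.log[self.shipped:end])
        self.shipped = end


def lost_on_failover(primary, backup_log):
    """Transactions committed at the primary but missing at the backup."""
    return [t for t in primary.log if t not in backup_log]
```

If the primary commits T1, T2, T3 but fails after shipping only two records, the backup takes over knowing T1 and T2, and T3 is lost.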

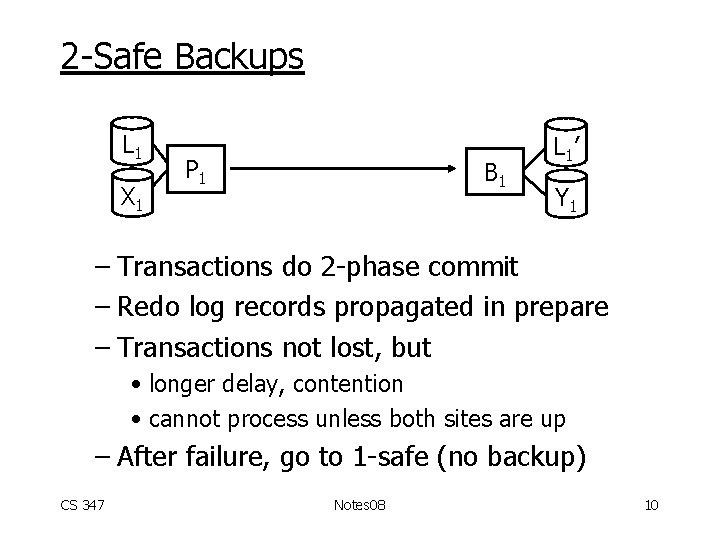

2-Safe Backups
– Transactions do 2-phase commit
– Redo log records propagated in prepare
– Transactions not lost, but
  • longer delay, contention
  • cannot process unless both sites are up
– After failure, go to 1-safe (no backup)
[Figure: primary P1 (L1, X1) and backup B1 (L1', Y1)]

What is Correctness?
• In 2-safe
• In 1-safe

What is in Paper You Read?
• Specific Scenario
  – updates at fixed primary site
  – each site has multiple computers
  – primary-backup sites are matched
  – clean site failures; stable storage; reliable network
  – log shipping
  – no reads at backup
  – no initialization

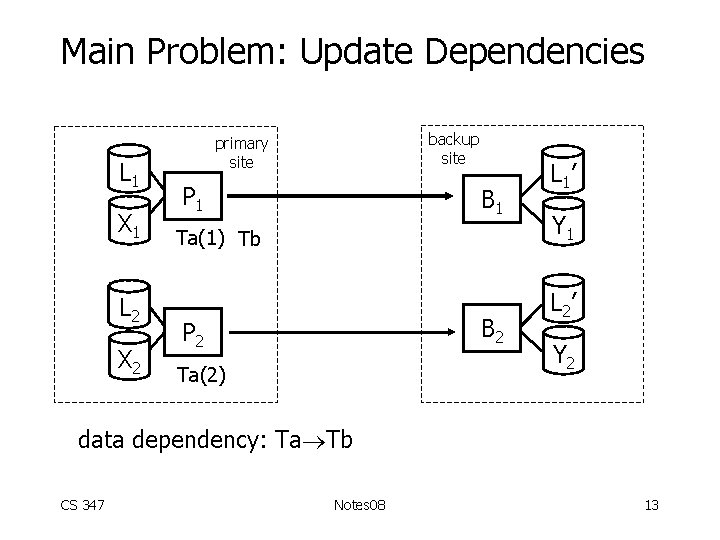

Main Problem: Update Dependencies
[Figure: primary site with P1 (L1, X1) and P2 (L2, X2); backup site with B1 (L1', Y1) and B2 (L2', Y2). Labels: Ta(1) and Tb on the P1/B1 link, Ta(2) on the P2/B2 link]
data dependency: Ta → Tb

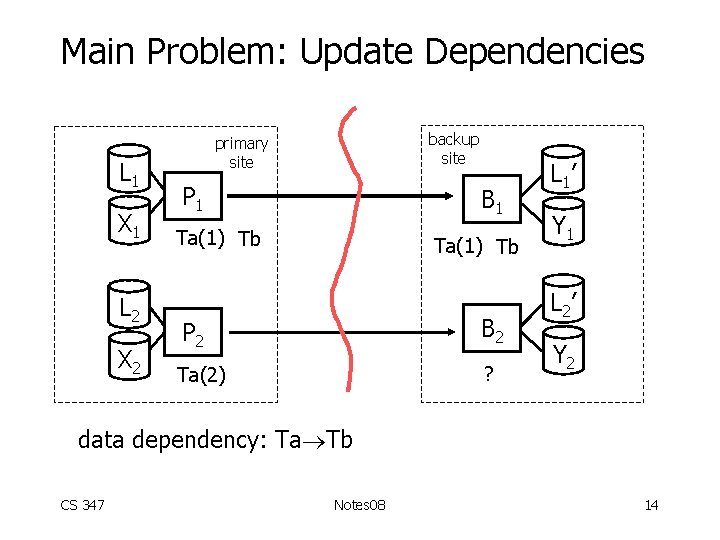

Main Problem: Update Dependencies
[Figure: primary site with P1 (L1, X1) and P2 (L2, X2); backup site with B1 (L1', Y1) and B2 (L2', Y2). Labels: Ta(1) and Tb on the P1/B1 link, Ta(2) with a "?" on the P2/B2 link]
data dependency: Ta → Tb

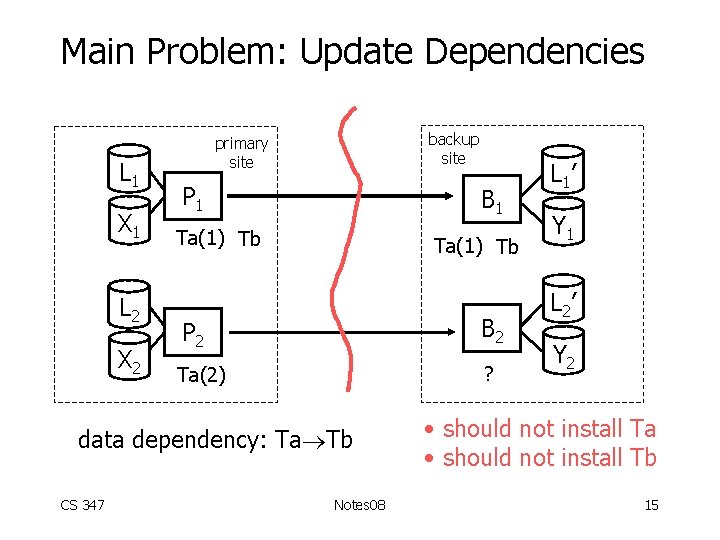

Main Problem: Update Dependencies
[Figure: primary site with P1 (L1, X1) and P2 (L2, X2); backup site with B1 (L1', Y1) and B2 (L2', Y2). Labels: Ta(1) and Tb on the P1/B1 link, Ta(2) with a "?" on the P2/B2 link]
data dependency: Ta → Tb
• should not install Ta
• should not install Tb

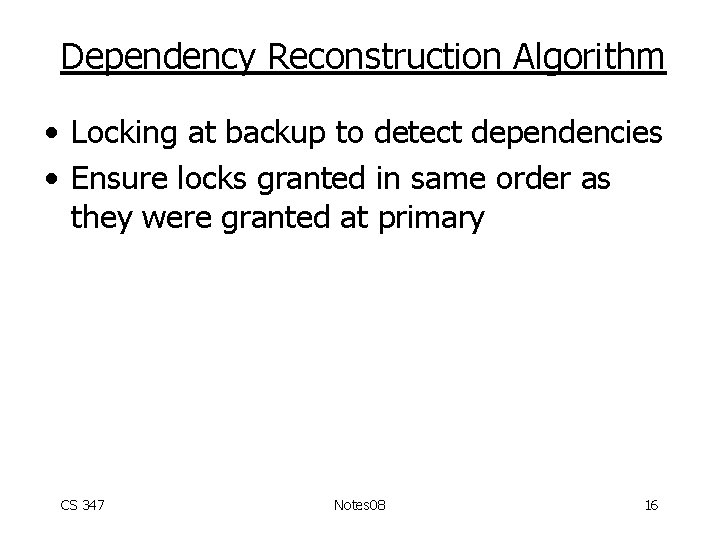

Dependency Reconstruction Algorithm
• Locking at backup to detect dependencies
• Ensure locks granted in same order as they were granted at primary

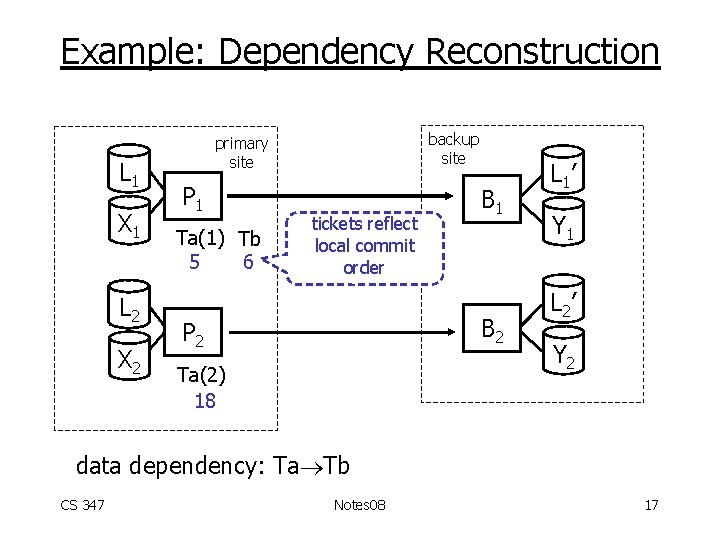

Example: Dependency Reconstruction
[Figure: primary site with P1 (L1, X1) and P2 (L2, X2); backup site with B1 (L1', Y1) and B2 (L2', Y2). Labels: Ta(1) with ticket 5 and Tb with ticket 6 at P1; Ta(2) with ticket 18 at P2]
tickets reflect local commit order
data dependency: Ta → Tb

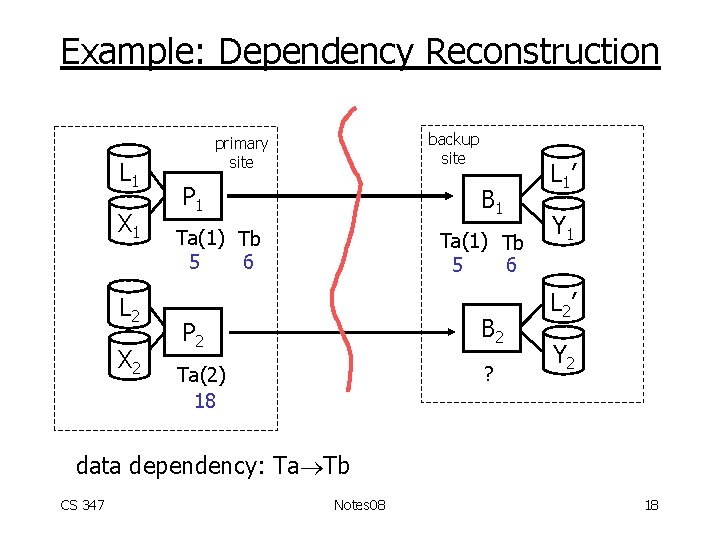

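Example: Dependency Reconstruction
[Figure: primary site with P1 (L1, X1) and P2 (L2, X2); backup site with B1 (L1', Y1) and B2 (L2', Y2). Labels: Ta(1) with ticket 5 and Tb with ticket 6 on the P1/B1 link; Ta(2) with ticket 18 and a "?" on the P2/B2 link]
data dependency: Ta → Tb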

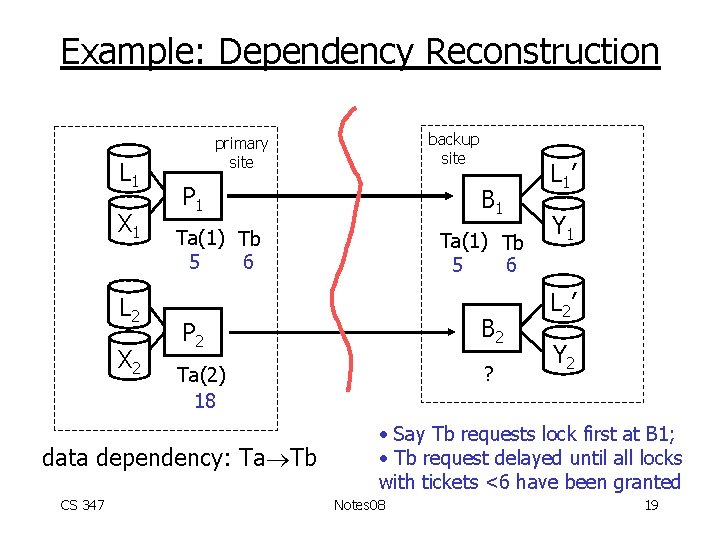

Example: Dependency Reconstruction
[Figure: primary site with P1 (L1, X1) and P2 (L2, X2); backup site with B1 (L1', Y1) and B2 (L2', Y2). Labels: Ta(1) with ticket 5 and Tb with ticket 6 on the P1/B1 link; Ta(2) with ticket 18 and a "?" on the P2/B2 link]
data dependency: Ta → Tb
• Say Tb requests lock first at B1;
• Tb request delayed until all locks with tickets < 6 have been granted
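
The ticket rule can be sketched as a small gate at one backup node. This is a simplified illustration, not the algorithm from the paper: it assumes tickets at a node are consecutive integers, treats each transaction as a single lock request, and uses the hypothetical class name `TicketOrderedGranter`.

```python
import heapq


class TicketOrderedGranter:
    """Grants lock requests at one backup node strictly in ticket order."""

    def __init__(self, first_ticket):
        self.next_ticket = first_ticket  # smallest ticket not yet granted
        self.waiting = []                # min-heap of (ticket, txn)
        self.granted = []                # transactions in grant order

    def request(self, ticket, txn):
        # Queue the request, then grant everything that is now in order.
        heapq.heappush(self.waiting, (ticket, txn))
        self._drain()

    def _drain(self):
        # A request is granted only once all smaller tickets were granted.
        while self.waiting and self.waiting[0][0] == self.next_ticket:
            _, txn = heapq.heappop(self.waiting)
            self.granted.append(txn)
            self.next_ticket += 1
```

With `first_ticket=5`, a request from Tb (ticket 6) that arrives first stays queued until Ta (ticket 5) has been granted, so B1 grants locks in the order used at P1.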

Epoch Algorithm
• Backup updates are installed in batches
• Epoch delimiters written on log

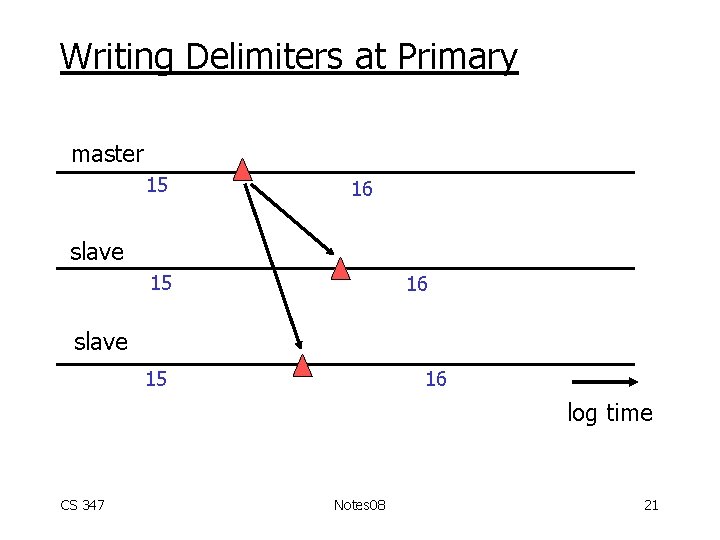

Writing Delimiters at Primary
[Figure: log timelines for master and slave, each with epoch delimiters 15 and 16; horizontal axis: log time]

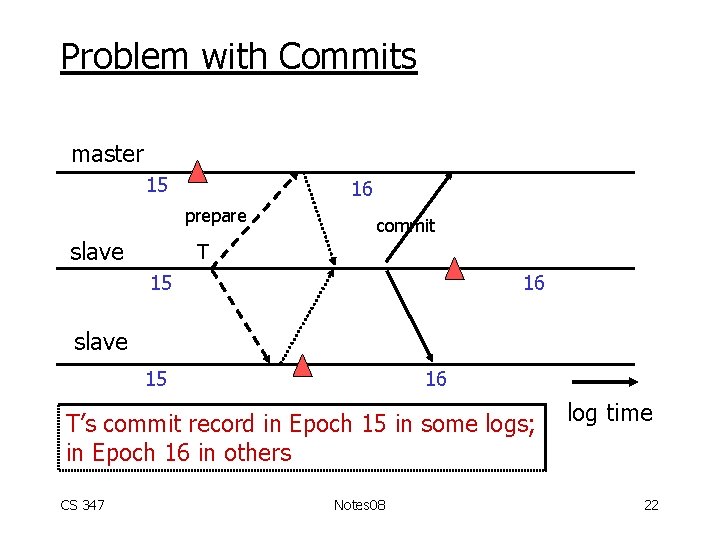

Problem with Commits
[Figure: log timelines for master and two slaves, each with epoch delimiters 15 and 16; messages: prepare, commit T; horizontal axis: log time]
T's commit record in Epoch 15 in some logs; in Epoch 16 in others

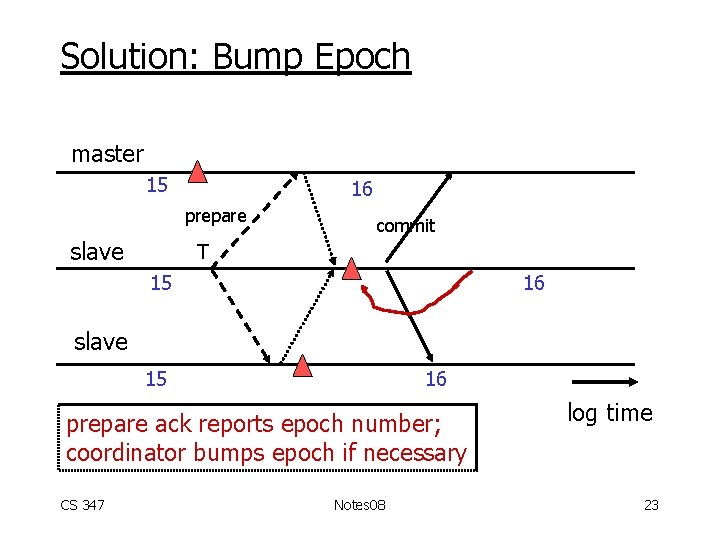

Solution: Bump Epoch
[Figure: log timelines for master and two slaves, each with epoch delimiters 15 and 16; messages: prepare, commit T; horizontal axis: log time]
prepare ack reports epoch number; coordinator bumps epoch if necessary
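
A minimal sketch of the bump, under stated assumptions: the `Node` class and `commit_with_epoch_bump` are hypothetical names, messages are modeled as direct method calls, and the step where participants also move forward to the coordinator's commit epoch is an assumption of this sketch (the slide only states that the prepare ack reports the epoch and the coordinator bumps).

```python
class Node:
    """A primary-site computer with a current epoch and a local log."""

    def __init__(self, name, epoch):
        self.name = name
        self.epoch = epoch
        self.log = []  # list of (epoch, record)

    def write(self, record):
        self.log.append((self.epoch, record))

    def bump_to(self, epoch):
        # Epochs only move forward.
        if epoch > self.epoch:
            self.epoch = epoch

    def prepare(self, txn):
        self.write(("prepare", txn))
        return self.epoch  # the prepare ack carries the epoch number


def commit_with_epoch_bump(coordinator, participants, txn):
    acks = [p.prepare(txn) for p in participants]
    # Coordinator bumps its epoch if any participant is already ahead.
    coordinator.bump_to(max(acks))
    coordinator.write(("commit", txn))
    commit_epoch = coordinator.epoch
    for p in participants:
        # Assumption: the commit message carries the coordinator's epoch.
        p.bump_to(commit_epoch)
        p.write(("commit", txn))
    return commit_epoch
```

In this sketch the coordinator's commit record never lands in an epoch earlier than any participant's prepare record.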

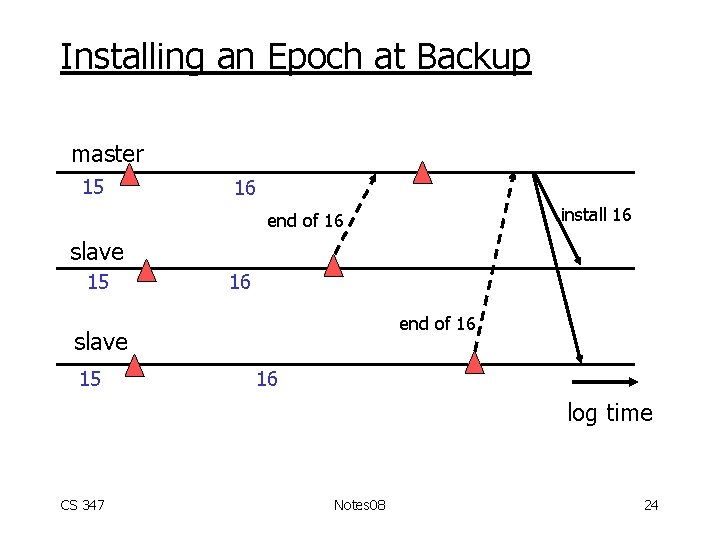

Installing an Epoch at Backup
[Figure: log timelines for master and slave, each with epoch delimiters 15 and 16; messages: install 16, end of 16; horizontal axis: log time]

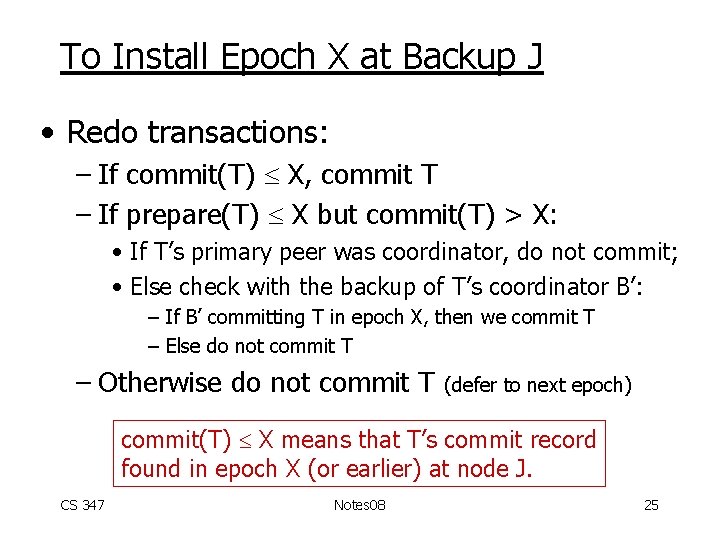

To Install Epoch X at Backup J
• Redo transactions:
  – If commit(T) ≤ X, commit T
  – If prepare(T) ≤ X but commit(T) > X:
    • If T's primary peer was coordinator, do not commit;
    • Else check with the backup of T's coordinator B':
      – If B' committing T in epoch X, then we commit T
      – Else do not commit T
  – Otherwise do not commit T (defer to next epoch)
commit(T) ≤ X means that T's commit record found in epoch X (or earlier) at node J.
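
The decision rule for a single transaction can be written as one function. This is a sketch under assumptions: `install_decision` and its parameters are hypothetical names, a commit record that J has not seen at all is treated like commit(T) > X, and the check with B' is modeled as a callback.

```python
def install_decision(x, prepare_epoch, commit_epoch,
                     peer_was_coordinator, coordinator_backup_commits):
    """Return True if backup J commits T while installing epoch x.

    prepare_epoch / commit_epoch: epoch of T's prepare / commit record in
    J's log, or None if J has not seen that record.
    coordinator_backup_commits: callable returning True if B', the backup
    of T's coordinator, is committing T in epoch x.
    """
    # Commit record found in epoch x or earlier: commit.
    if commit_epoch is not None and commit_epoch <= x:
        return True
    # Prepared by epoch x, but the commit record is later (or unseen).
    if prepare_epoch is not None and prepare_epoch <= x:
        if peer_was_coordinator:
            return False
        return coordinator_backup_commits()
    # Otherwise defer T to a later epoch.
    return False
```

For example, with X = 15, a participant backup that sees P(T) in epoch 15 and C(T) in epoch 16 commits T only if B' reports that it is committing T in epoch 15.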

Why Do We Need Coordinator Check?
• Assignment: Construct 2 scenarios that look the same to backup J:
  – In Scenario 1, T should be installed
  – In Scenario 2, T should not be installed

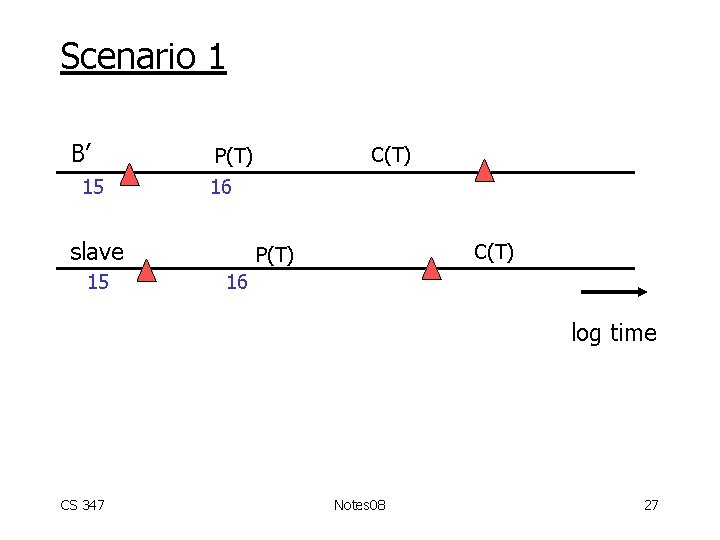

Scenario 1
[Figure: log timelines for B' and slave with epoch delimiters 15 and 16 and records C(T), P(T); horizontal axis: log time]

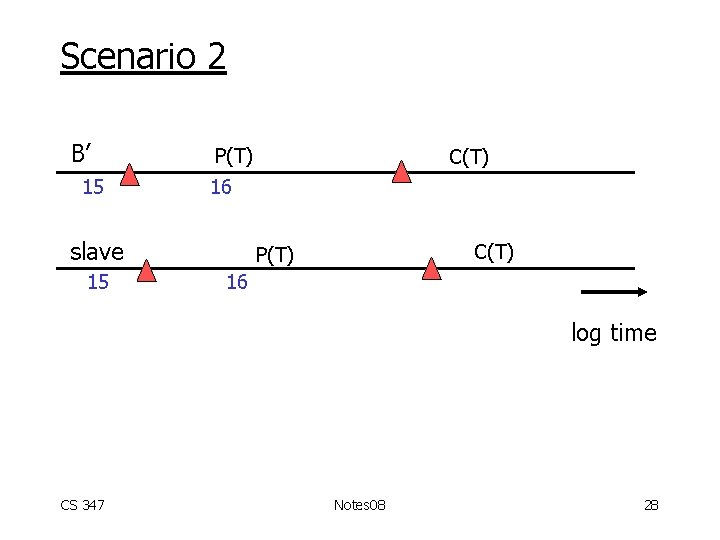

Scenario 2
[Figure: log timelines for B' and slave with epoch delimiters 15 and 16 and records P(T), C(T); horizontal axis: log time]

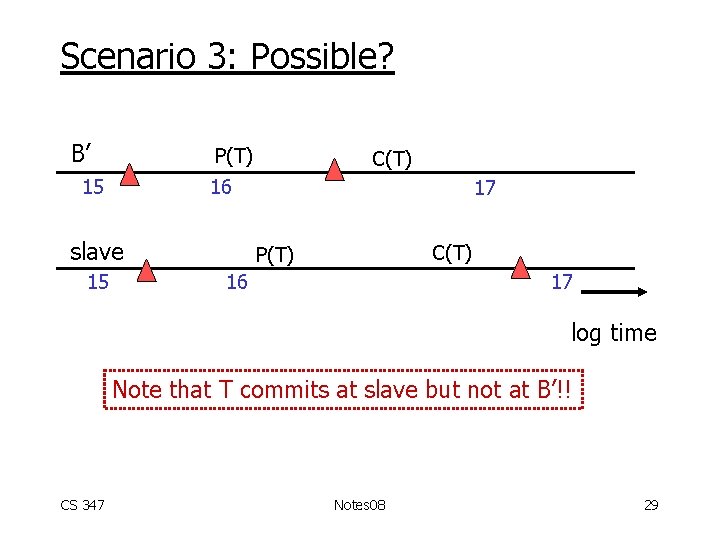

Scenario 3: Possible?
[Figure: log timelines for B' and slave with epoch delimiters 15, 16, 17 and records P(T), C(T); horizontal axis: log time]
Note that T commits at slave but not at B'!!

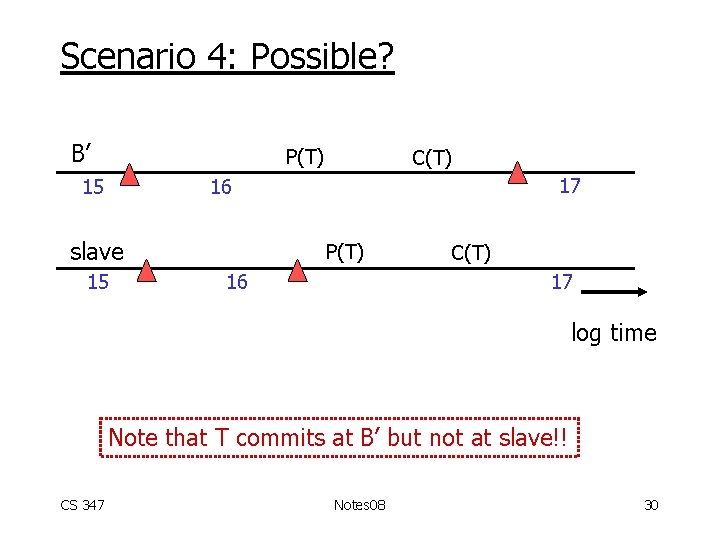

Scenario 4: Possible?
[Figure: log timelines for B' and slave with epoch delimiters 15, 16, 17 and records P(T), C(T); horizontal axis: log time]
Note that T commits at B' but not at slave!!

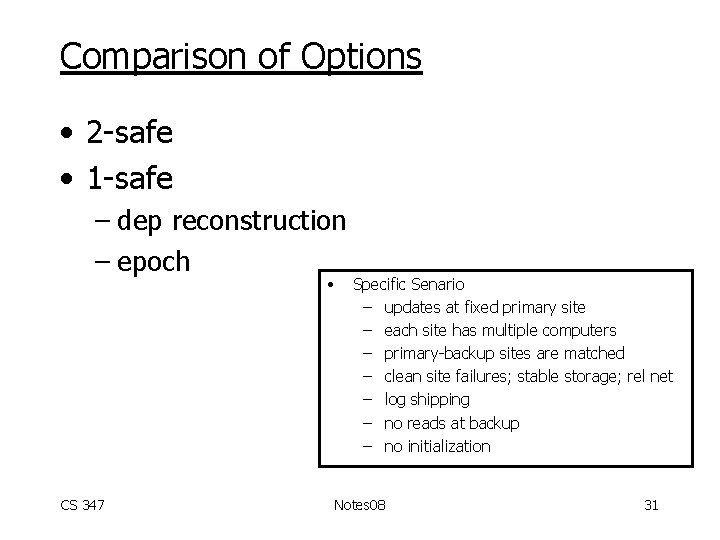

Comparison of Options
• 2-safe
• 1-safe
  – dependency reconstruction
  – epoch
• Specific Scenario
  – updates at fixed primary site
  – each site has multiple computers
  – primary-backup sites are matched
  – clean site failures; stable storage; reliable network
  – log shipping
  – no reads at backup
  – no initialization

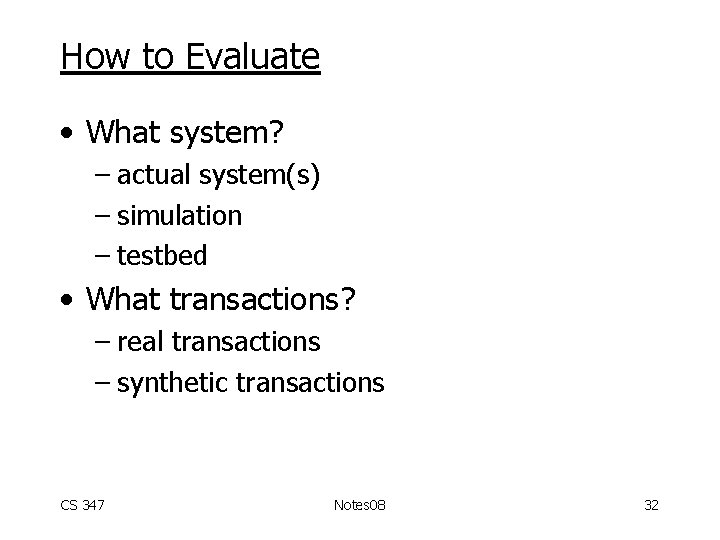

How to Evaluate
• What system?
  – actual system(s)
  – simulation
  – testbed
• What transactions?
  – real transactions
  – synthetic transactions

Metrics
• IO utilization
• CPU utilization
• Throughput (given max delay?)
• Transaction commit delay
• Backup copy lag
• Network overhead
• Probability of inconsistency

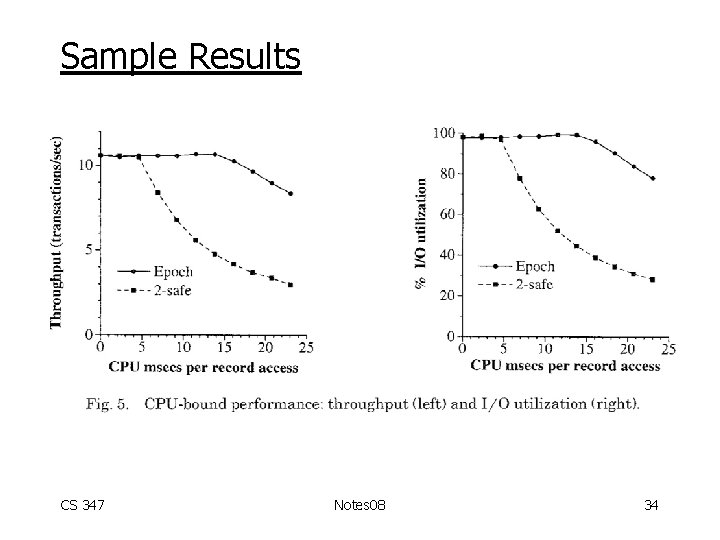

Sample Results

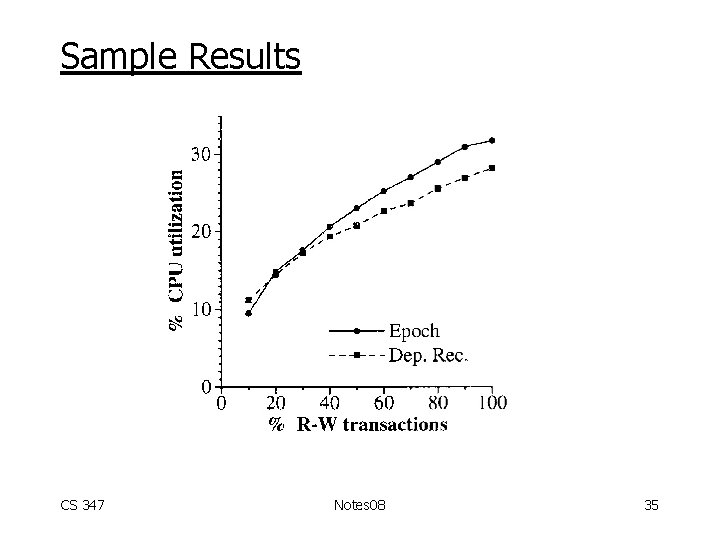

Sample Results

And Now For Something Completely Different:
• Updates
  – at any copy (next: available copies)
  – at fixed (primary) copy (have seen)
  – at one copy but control can migrate
  – no updates

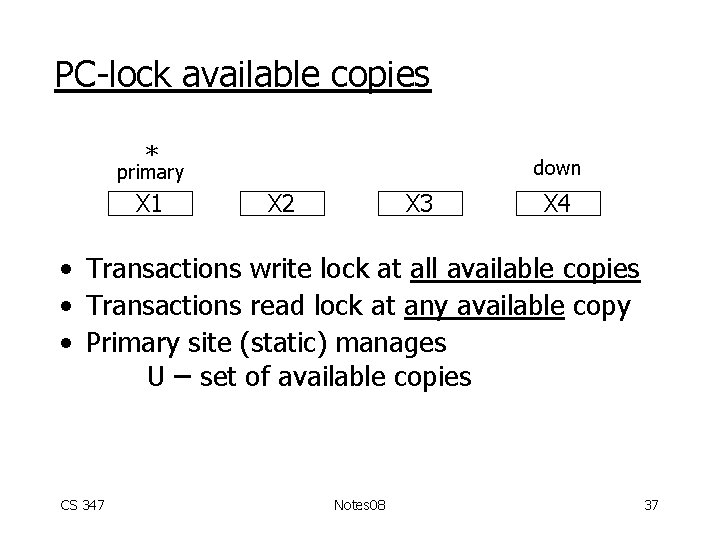

PC-lock available copies
[Figure: copies X1, X2, X3, X4; one marked "primary", one marked "* down"]
• Transactions write lock at all available copies
• Transactions read lock at any available copy
• Primary site (static) manages U, the set of available copies

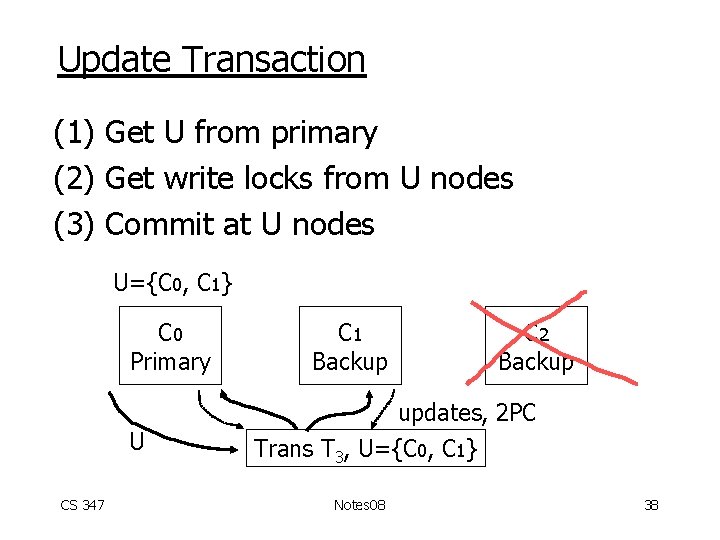

Update Transaction
(1) Get U from primary
(2) Get write locks from U nodes
(3) Commit at U nodes
[Figure: C0 (primary), C1 (backup), C2 (backup); U={C0, C1}; transaction T3 with U={C0, C1}. Labels: "U", "updates, 2PC"]

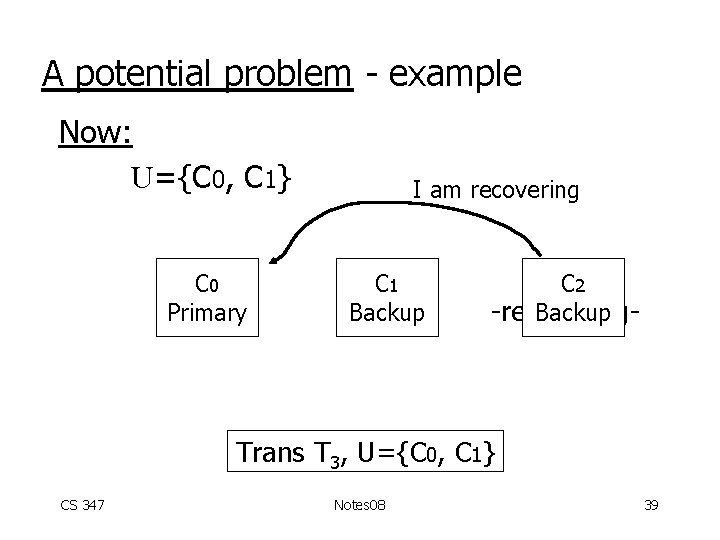

A potential problem - example
Now: U={C0, C1}
[Figure: C0 (primary), C1 (backup), C2 (backup, recovering); message "I am recovering"; transaction T3 with U={C0, C1}]

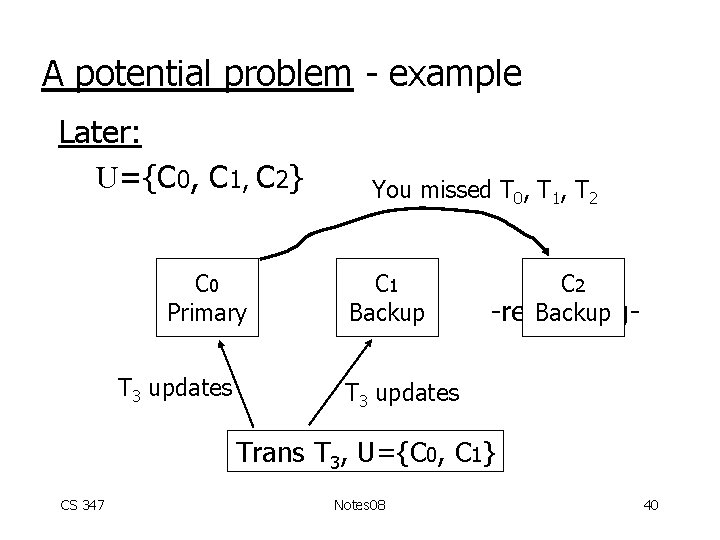

A potential problem - example
Later: U={C0, C1, C2}
[Figure: C0 (primary), C1 (backup), C2 (backup, recovering); message "You missed T0, T1, T2"; transaction T3, still with U={C0, C1}, sends "T3 updates" to two copies]

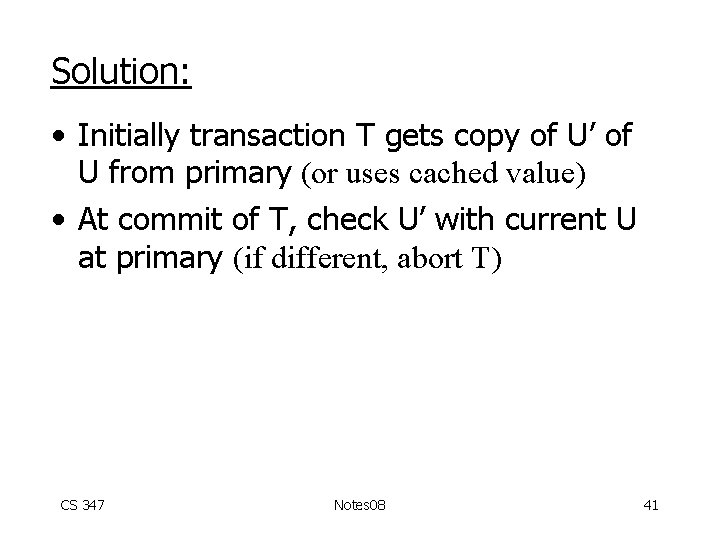

Solution:
• Initially transaction T gets a copy U' of U from primary (or uses cached value)
• At commit of T, check U' against current U at primary (if different, abort T)
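
The check can be sketched as follows. This is an illustration only: `PrimarySite` and `UpdateTransaction` are hypothetical names, locking is reduced to recording which copies were locked, and the commit protocol itself is omitted.

```python
class PrimarySite:
    """Static primary that manages U, the set of available copies."""

    def __init__(self, available):
        self._u = frozenset(available)

    def current_u(self):
        return self._u

    def copy_recovered(self, copy):
        self._u = self._u | {copy}

    def copy_failed(self, copy):
        self._u = self._u - {copy}


class UpdateTransaction:
    def __init__(self, name, primary):
        self.name = name
        self.primary = primary
        self.u_prime = primary.current_u()  # U': snapshot taken at start
        self.write_locked = set()

    def lock_copies(self):
        # Write lock at every copy in the snapshot U'.
        self.write_locked = set(self.u_prime)

    def try_commit(self):
        # Abort if the set of available copies changed since the snapshot.
        if self.u_prime != self.primary.current_u():
            return "abort"
        return "commit"
```

In the example from the slides, T3 starts with U'={C0, C1}; if C2 rejoins before T3 commits, the comparison fails and T3 aborts instead of leaving C2 without its updates.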

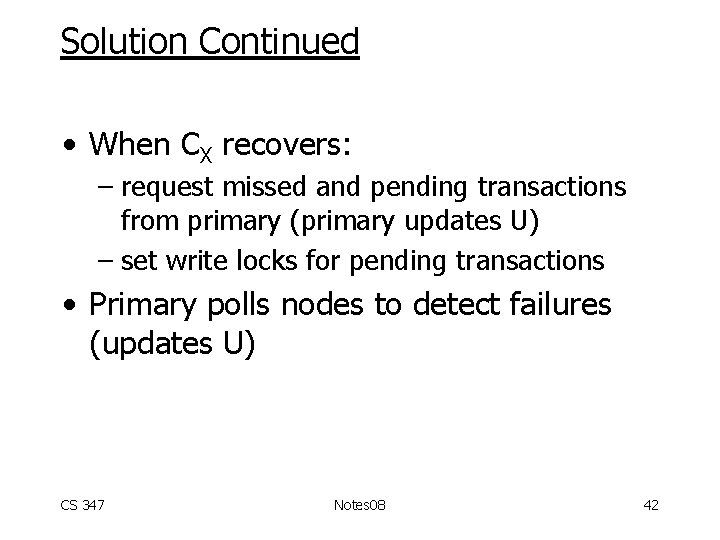

Solution Continued
• When Cx recovers:
  – request missed and pending transactions from primary (primary updates U)
  – set write locks for pending transactions
• Primary polls nodes to detect failures (updates U)

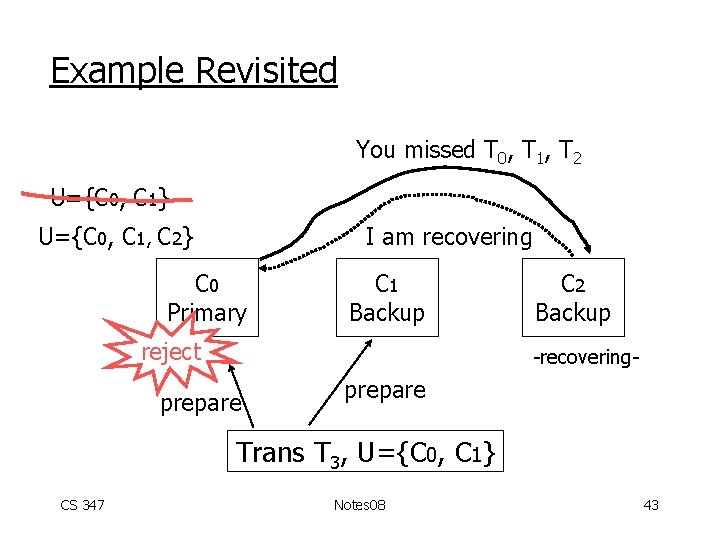

Example Revisited
[Figure: C0 (primary), C1 (backup), C2 (backup, recovering); messages "I am recovering" and "You missed T0, T1, T2"; U changes from {C0, C1} to {C0, C1, C2}; transaction T3 with U={C0, C1} sends prepare and receives reject]

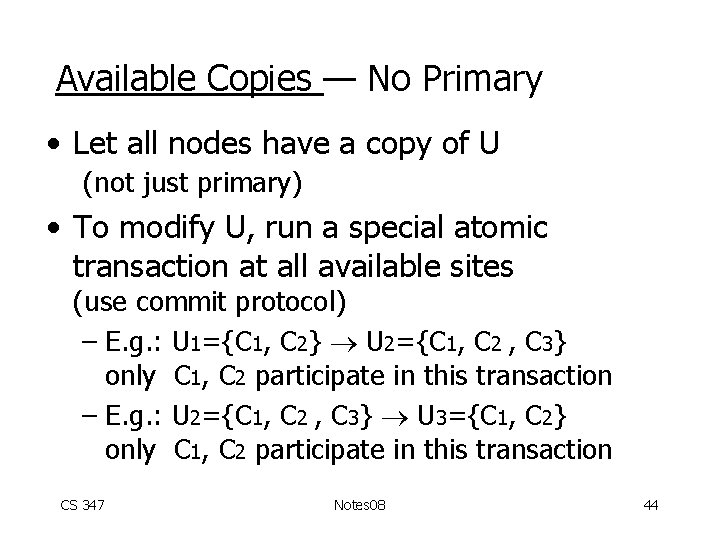

Available Copies (No Primary)
• Let all nodes have a copy of U (not just primary)
• To modify U, run a special atomic transaction at all available sites (use commit protocol)
  – E.g.: U1={C1, C2} → U2={C1, C2, C3}: only C1, C2 participate in this transaction
  – E.g.: U2={C1, C2, C3} → U3={C1, C2}: only C1, C2 participate in this transaction

• Details are tricky...
• What if commit of U-change blocks?

Node Recovery (no primary)
• Get missed updates from any active node
• No unique sequence of transactions
• If all nodes fail, wait for
  – all to recover
  – majority to recover

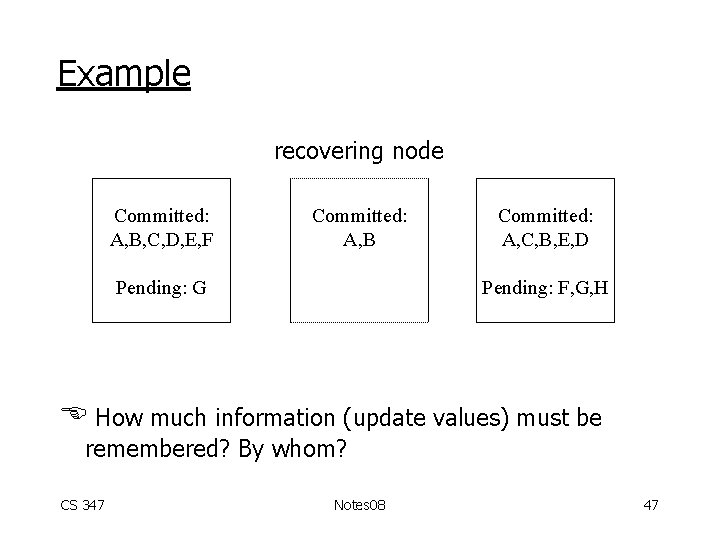

Example
[Figure: three nodes, one marked "recovering node". Node states: (Committed: A, B, C, D, E, F), (Committed: A, B; Pending: G), (Committed: A, C, B, E, D; Pending: F, G, H)]
How much information (update values) must be remembered? By whom?

![Correctness with replicated data](http://slidetodoc.com/presentation_image_h2/c1b2d99f6ef59d5d49fe1169ab9edd75/image-48.jpg)

Correctness with replicated data
[Figure: copies X1 and X2]
S1: r1[X1] r2[X2] w1[X1] w2[X2]
Is this schedule serializable?

One copy serializable (1SR)
A schedule S on replicated data is 1SR if it is equivalent to a serial history of the same transactions on a one-copy database

![To check 1SR](http://slidetodoc.com/presentation_image_h2/c1b2d99f6ef59d5d49fe1169ab9edd75/image-50.jpg)

To check 1SR
• Take schedule
• Treat ri[Xj] as ri[X] and wi[Xj] as wi[X] (Xj is a copy of X)
• Compute P(S)
• If P(S) acyclic, S is 1SR
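
The test as stated on the slide can be sketched in a few lines. The function names (`parse`, `precedence_edges`, `is_acyclic`, `is_1sr`) and the string format for schedules are assumptions of this sketch; it takes P(S) to be the usual precedence graph over conflicting operations of the one-copy schedule.

```python
import re
from itertools import combinations

# Matches operations such as r1[X1] or w2[X]; the copy index is optional.
OP = re.compile(r"([rw])(\d+)\[([A-Za-z]+)\d*\]")


def parse(schedule):
    """Turn 'r1[X1] w2[X2]' into [('r', 1, 'X'), ('w', 2, 'X')]."""
    return [(kind, int(txn), item) for kind, txn, item in OP.findall(schedule)]


def precedence_edges(ops):
    """Edge Ti -> Tj for each conflicting pair where Ti's operation is first."""
    edges = set()
    for (k1, t1, x1), (k2, t2, x2) in combinations(ops, 2):
        if x1 == x2 and t1 != t2 and "w" in (k1, k2):
            edges.add((t1, t2))
    return edges


def is_acyclic(edges):
    """Repeatedly remove nodes with no incoming edge; a cycle leaves none."""
    nodes = {n for edge in edges for n in edge}
    remaining = set(edges)
    while nodes:
        sources = {n for n in nodes if all(dst != n for _, dst in remaining)}
        if not sources:
            return False
        nodes -= sources
        remaining = {(s, d) for s, d in remaining if s not in sources}
    return True


def is_1sr(schedule):
    return is_acyclic(precedence_edges(parse(schedule)))
```

Applied to the two schedules used in these notes, `is_1sr("r1[X1] r2[X2] w1[X1] w2[X2]")` finds edges in both directions between T1 and T2, while `is_1sr("r1[X1] w1[X2] r2[X1] w2[X2]")` finds only T1 → T2.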

![Example](http://slidetodoc.com/presentation_image_h2/c1b2d99f6ef59d5d49fe1169ab9edd75/image-51.jpg)

Example
S1: r1[X1] r2[X2] w1[X1] w2[X2]
S1': r1[X] r2[X] w1[X] w2[X]
P(S1): T2 → T1 → T2 (a cycle)
S1 is not 1SR!

![Second example](http://slidetodoc.com/presentation_image_h2/c1b2d99f6ef59d5d49fe1169ab9edd75/image-52.jpg)

Second example
S2: r1[X1] w1[X2] r2[X1] w2[X2]
S2': r1[X] w1[X] r2[X] w2[X]
P(S2): T1 → T2
S2 is 1SR

![Second example](http://slidetodoc.com/presentation_image_h2/c1b2d99f6ef59d5d49fe1169ab9edd75/image-53.jpg)

Second example
S2: r1[X1] w1[X2] r2[X1] w2[X2]
S2': r1[X] w1[X] r2[X] w2[X]
• Equivalent serial schedule SS: r1[X] w1[X] r2[X] w2[X]

Summary
• Updates
  – at any copy (available copies)
  – at fixed (primary) copy (have seen)
  – at one copy but control can migrate
  – no updates
