Class Summary

ECE-5424 G / CS-5824, Jia-Bin Huang, Virginia Tech, Spring 2019

• Thank you all for participating in this class!
• Please complete the SPOT survey!
• Please give us feedback on lectures, topics, homework, exams, office hours, and Piazza.

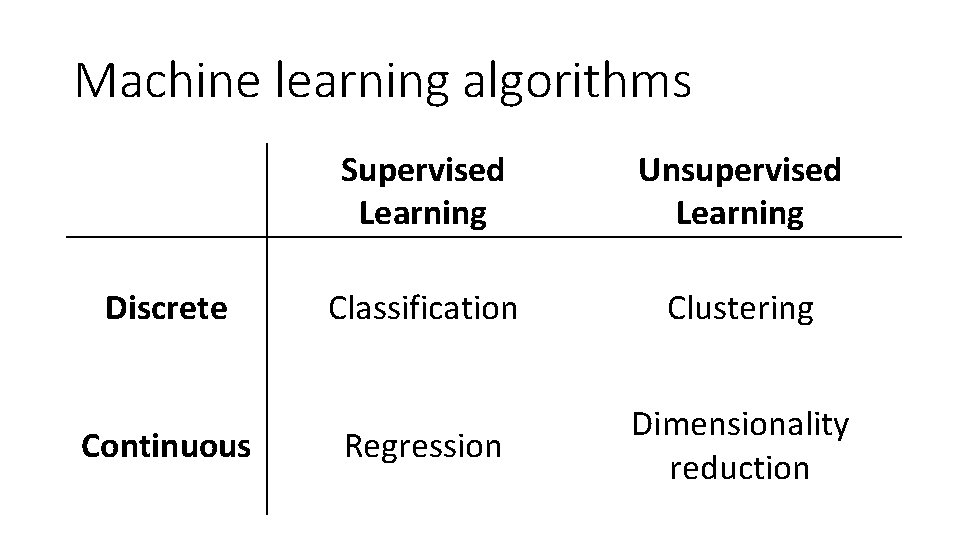

Machine learning algorithms

| | Supervised learning | Unsupervised learning |
|---|---|---|
| Discrete | Classification | Clustering |
| Continuous | Regression | Dimensionality reduction |

k-NN (Classification/Regression)
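
The k-NN slide's formulas did not survive extraction. As a minimal NumPy sketch, assuming Euclidean distance, a plain majority vote for classification (ties go to whichever label `Counter` saw first), and averaging for regression, the method can look like this; `knn_predict` and the toy data are hypothetical.

```python
import numpy as np
from collections import Counter


def knn_predict(X_train, y_train, x_query, k=3, task="classification"):
    """Predict a label (or value) for x_query from its k nearest neighbors."""
    # Euclidean distance from the query point to every training example
    dists = np.linalg.norm(X_train - x_query, axis=1)
    # Indices of the k closest training examples
    nearest = np.argsort(dists)[:k]
    if task == "classification":
        # Majority vote among the neighbors' labels
        return Counter(y_train[nearest].tolist()).most_common(1)[0][0]
    # Regression: average of the neighbors' target values
    return float(np.mean(y_train[nearest]))


# Hypothetical toy data: two well-separated groups of points
X = np.array([[0.0, 0.0], [0.0, 1.0], [1.0, 0.0],
              [5.0, 5.0], [5.0, 6.0], [6.0, 5.0]])
y_cls = np.array([0, 0, 0, 1, 1, 1])
y_reg = np.array([1.0, 2.0, 3.0, 10.0, 11.0, 12.0])

print(knn_predict(X, y_cls, np.array([0.5, 0.5])))                     # 0
print(knn_predict(X, y_reg, np.array([5.2, 5.2]), task="regression"))  # 11.0
```

There is no training step: all the work happens at query time, which is why k-NN prediction cost grows with the size of the training set.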

Linear regression (Regression)
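
Linear regression can be fit with matrix algebra or iteratively. The sketch below shows both on the same data, assuming a squared-error cost J(θ) = (1/2m)‖Xθ − y‖² and an intercept column of ones; the helper names, learning rate, and data are hypothetical. `np.linalg.lstsq` gives the same answer as the normal equation θ = (XᵀX)⁻¹Xᵀy when XᵀX is invertible, without forming the inverse explicitly.

```python
import numpy as np


def add_intercept(X):
    # Prepend a column of ones so theta[0] acts as the intercept term
    return np.hstack([np.ones((X.shape[0], 1)), X])


def fit_least_squares(X, y):
    # Matrix-algebra route: least-squares solve (normal-equation solution)
    theta, *_ = np.linalg.lstsq(add_intercept(X), y, rcond=None)
    return theta


def fit_gradient_descent(X, y, lr=0.1, n_iters=5000):
    Xb = add_intercept(X)
    m = len(y)
    theta = np.zeros(Xb.shape[1])
    for _ in range(n_iters):
        # Gradient of J(theta) = (1 / 2m) * ||Xb @ theta - y||^2
        theta -= lr * Xb.T @ (Xb @ theta - y) / m
    return theta


# Hypothetical noise-free data on the line y = 1 + 2x
X = np.array([[0.0], [1.0], [2.0], [3.0]])
y = 1.0 + 2.0 * X[:, 0]
print(fit_least_squares(X, y))     # close to [1. 2.]
print(fit_gradient_descent(X, y))  # close to [1. 2.]
```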

Naïve Bayes (Classification)
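
A minimal sketch of Naïve Bayes for binary features (a Bernoulli event model), assuming add-one (Laplace) smoothing; the slide's exact variant was lost, so the model choice, function names, and data are hypothetical. The "naïve" conditional-independence assumption is what lets the log-likelihood split into a sum of per-feature terms.

```python
import numpy as np


def fit_bernoulli_nb(X, y, alpha=1.0):
    """X: (n, d) array of 0/1 features; y: (n,) class labels."""
    classes = np.unique(y)
    # Class priors P(y = c)
    priors = np.array([np.mean(y == c) for c in classes])
    # P(x_j = 1 | y = c), with add-alpha (Laplace) smoothing
    cond = np.array([(X[y == c].sum(axis=0) + alpha) / ((y == c).sum() + 2 * alpha)
                     for c in classes])
    return classes, priors, cond


def predict_bernoulli_nb(model, x):
    classes, priors, cond = model
    # Unnormalized log posterior: log P(y = c) + sum_j log P(x_j | y = c)
    log_post = np.log(priors) + np.sum(x * np.log(cond) + (1 - x) * np.log(1 - cond), axis=1)
    return classes[np.argmax(log_post)]


# Hypothetical binary data: 3 features, 2 classes
X = np.array([[1, 1, 0], [1, 0, 0], [1, 1, 0],
              [0, 0, 1], [0, 1, 1], [0, 0, 1]])
y = np.array([0, 0, 0, 1, 1, 1])
model = fit_bernoulli_nb(X, y)
print(predict_bernoulli_nb(model, np.array([1, 1, 0])))  # 0
print(predict_bernoulli_nb(model, np.array([0, 0, 1])))  # 1
```

Smoothing keeps every conditional probability strictly between 0 and 1, so a feature value never seen with a class does not force that class's posterior to zero.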

Logistic regression (Classification)
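
A minimal sketch of logistic regression trained by batch gradient descent on the average cross-entropy loss, assuming a sigmoid hypothesis h = g(θᵀx) and an intercept column; the learning rate, iteration count, and data are hypothetical. The update has the same form as for linear regression, only h changes.

```python
import numpy as np


def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))


def add_intercept(X):
    return np.hstack([np.ones((X.shape[0], 1)), X])


def fit_logistic_regression(X, y, lr=0.4, n_iters=5000):
    Xb = add_intercept(X)
    m = len(y)
    theta = np.zeros(Xb.shape[1])
    for _ in range(n_iters):
        h = sigmoid(Xb @ theta)  # predicted P(y = 1 | x)
        # Gradient of the average cross-entropy loss
        theta -= lr * Xb.T @ (h - y) / m
    return theta


def predict_proba(theta, X):
    return sigmoid(add_intercept(X) @ theta)


# Hypothetical 1-D data: labels switch from 0 to 1 between x = 2 and x = 3
X = np.array([[0.0], [1.0], [2.0], [3.0], [4.0], [5.0]])
y = np.array([0.0, 0.0, 0.0, 1.0, 1.0, 1.0])
theta = fit_logistic_regression(X, y)
print((predict_proba(theta, X) >= 0.5).astype(int))  # [0 0 0 1 1 1]
```

Unlike linear regression, there is no closed-form solution here, so an iterative method such as gradient descent is needed.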

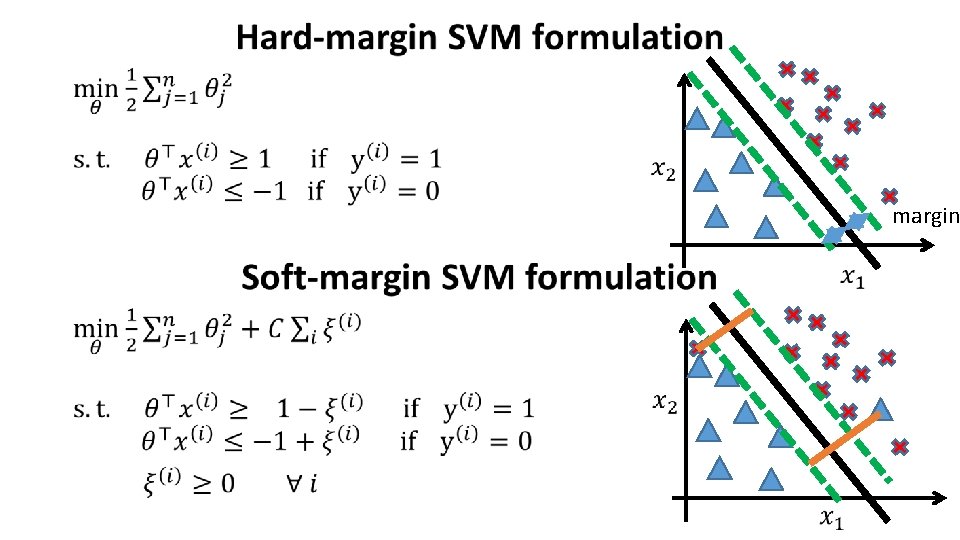

Support vector machine (SVM): margin

SVM with kernels

SVM parameters (slide credit: Andrew Ng)
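
With a Gaussian kernel, each SVM feature is a similarity f = exp(−‖x − l‖² / (2σ²)) between the input x and a landmark l. The sketch below only shows how σ changes that similarity; the function name and points are hypothetical, and the other SVM parameter, C (which acts like 1/λ), is not shown. In Andrew Ng's lectures, a larger σ² gives smoother features, i.e. higher bias and lower variance.

```python
import numpy as np


def gaussian_kernel(x, landmark, sigma=1.0):
    """Similarity f = exp(-||x - l||^2 / (2 * sigma^2)) between x and landmark l."""
    diff = np.asarray(x, dtype=float) - np.asarray(landmark, dtype=float)
    return float(np.exp(-np.dot(diff, diff) / (2.0 * sigma ** 2)))


x = np.array([1.0, 2.0])
landmark = np.array([2.0, 2.0])  # squared distance ||x - l||^2 = 1
for sigma in (0.5, 1.0, 2.0):
    # Larger sigma: similarity decays more slowly with distance
    print(sigma, round(gaussian_kernel(x, landmark, sigma), 3))
# 0.5 0.135
# 1.0 0.607
# 2.0 0.882
```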

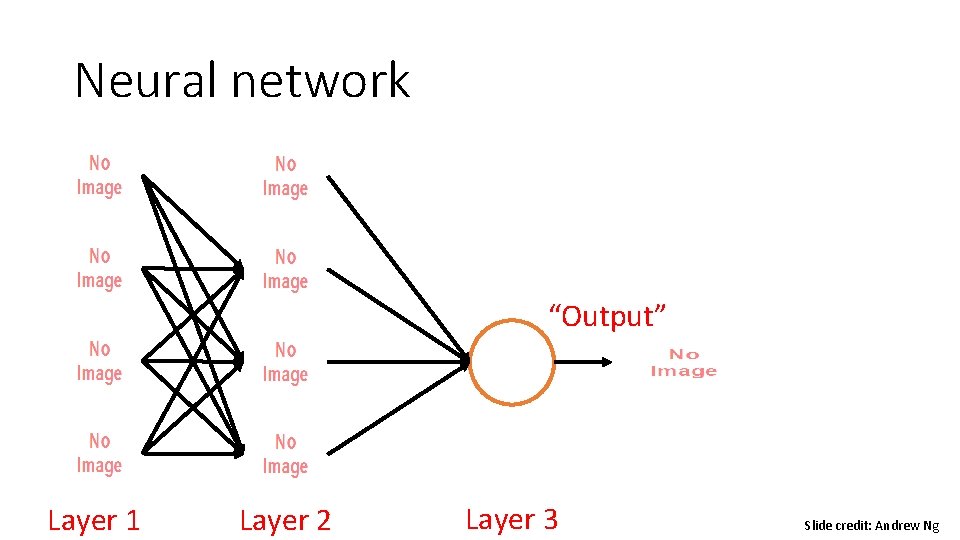

Neural network: Layer 1, Layer 2, and the “output” Layer 3 (slide credit: Andrew Ng)

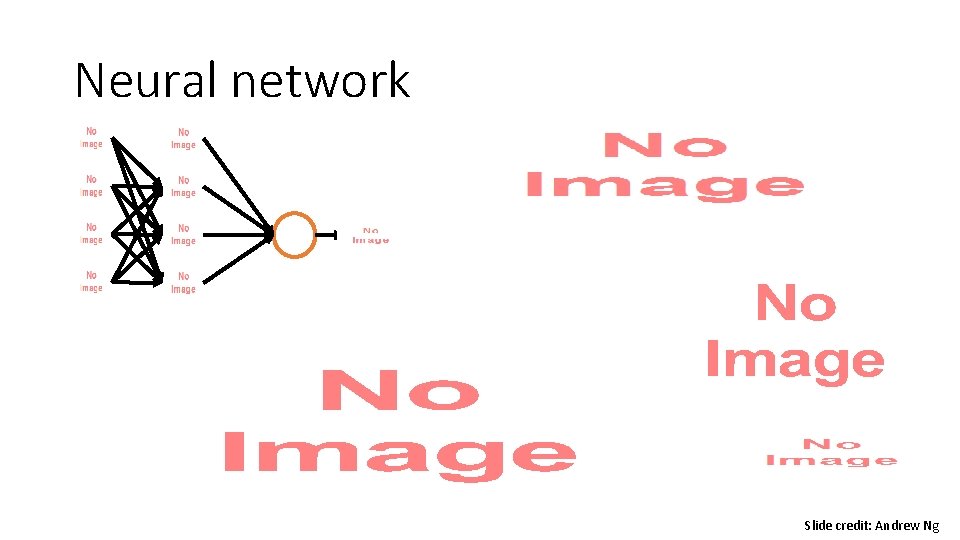

Neural network (slide credit: Andrew Ng)

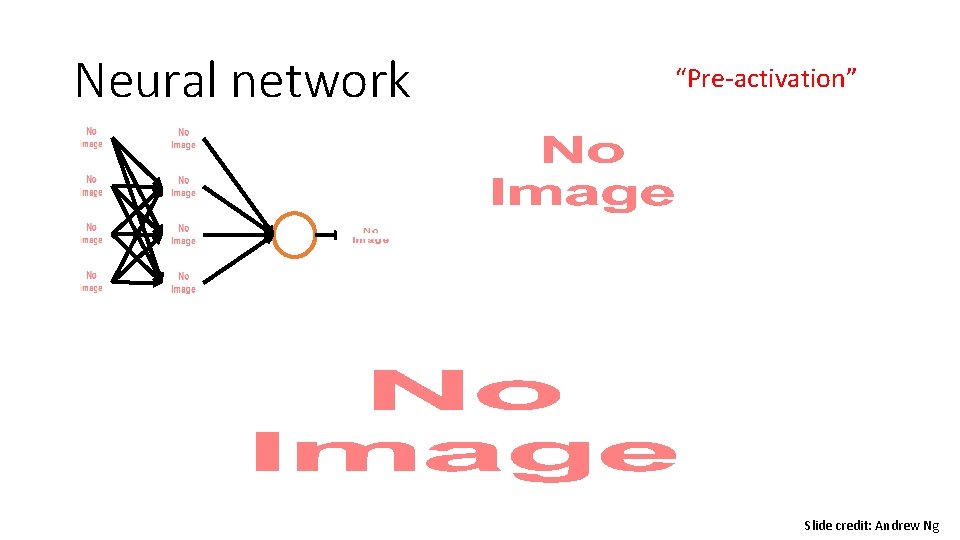

Neural network: “Pre-activation” (slide credit: Andrew Ng)

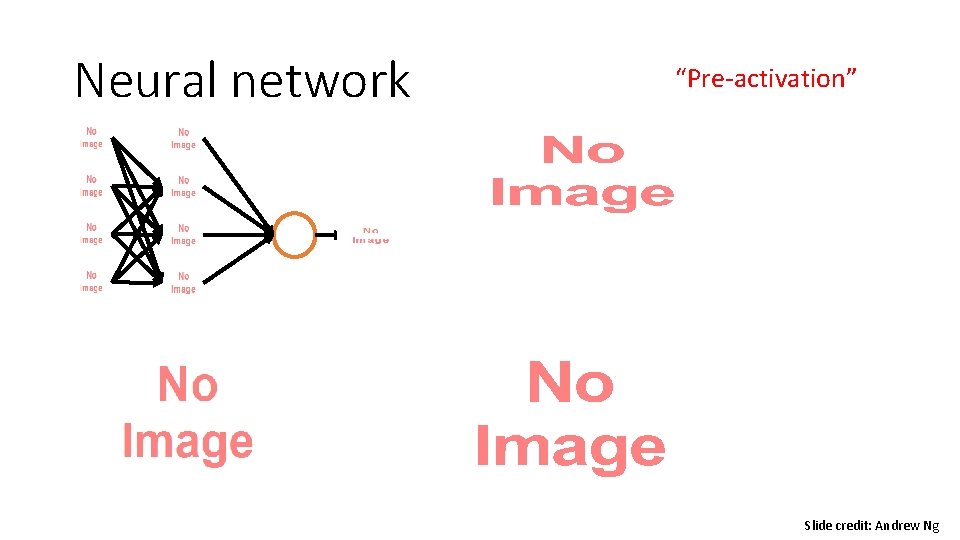

Neural network: “Pre-activation” (slide credit: Andrew Ng)
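
A minimal sketch of forward propagation through a 3-layer network, assuming sigmoid activations and a bias unit a₀ = 1 prepended to each layer: each layer computes a pre-activation z = Θa and then applies g(z). The weights below are hand-picked for illustration (hypothetical, not learned): the hidden units compute x₁ AND x₂ and (NOT x₁) AND (NOT x₂), and the output ORs them, so the network computes x₁ XNOR x₂. This is a small case of the hidden layer acting as features for the output layer.

```python
import numpy as np


def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))


def forward(x, Theta1, Theta2):
    """Forward propagation: layer 1 (input) -> layer 2 (hidden) -> layer 3 (output)."""
    a1 = np.concatenate(([1.0], x))            # input activations plus bias unit
    z2 = Theta1 @ a1                           # pre-activation of layer 2
    a2 = np.concatenate(([1.0], sigmoid(z2)))  # hidden activations plus bias unit
    z3 = Theta2 @ a2                           # pre-activation of layer 3
    return sigmoid(z3)                         # output h_Theta(x)


# Hand-picked (hypothetical) weights implementing x1 XNOR x2
Theta1 = np.array([[-30.0, 20.0, 20.0],
                   [10.0, -20.0, -20.0]])
Theta2 = np.array([[-10.0, 20.0, 20.0]])
for x in ([0, 0], [0, 1], [1, 0], [1, 1]):
    print(x, np.round(forward(np.array(x, dtype=float), Theta1, Theta2), 3))
# outputs are approximately 1, 0, 0, 1
```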

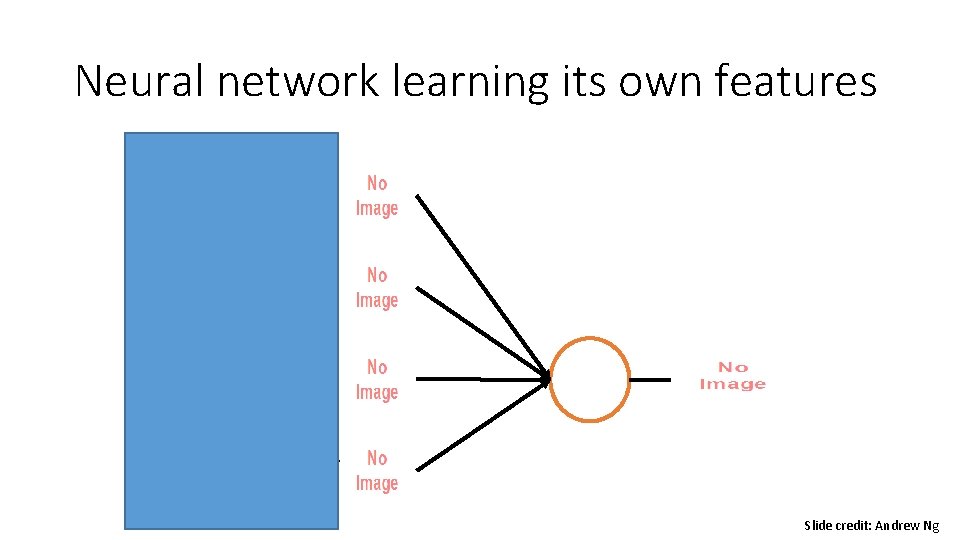

Neural network learning its own features (slide credit: Andrew Ng)

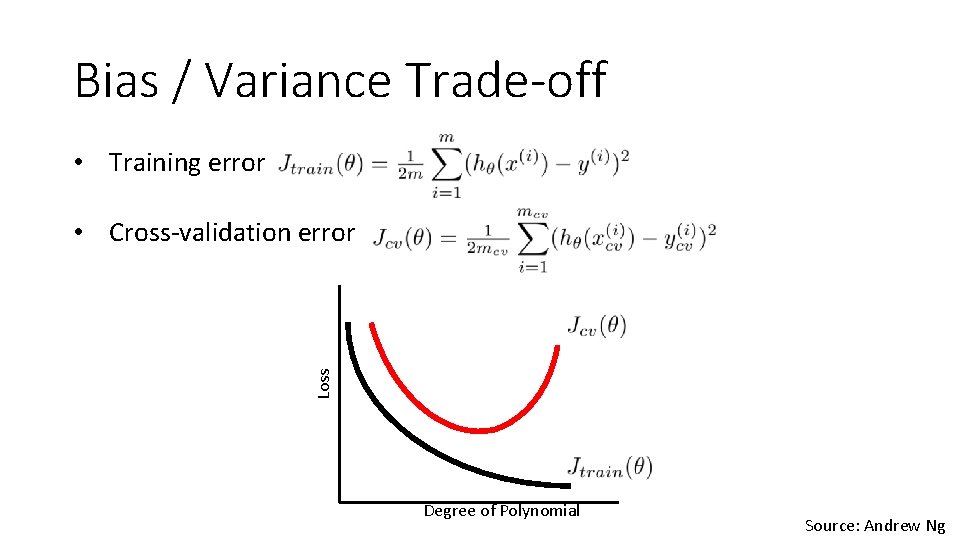

Bias/variance trade-off: training error and cross-validation error (loss) versus degree of polynomial (source: Andrew Ng)

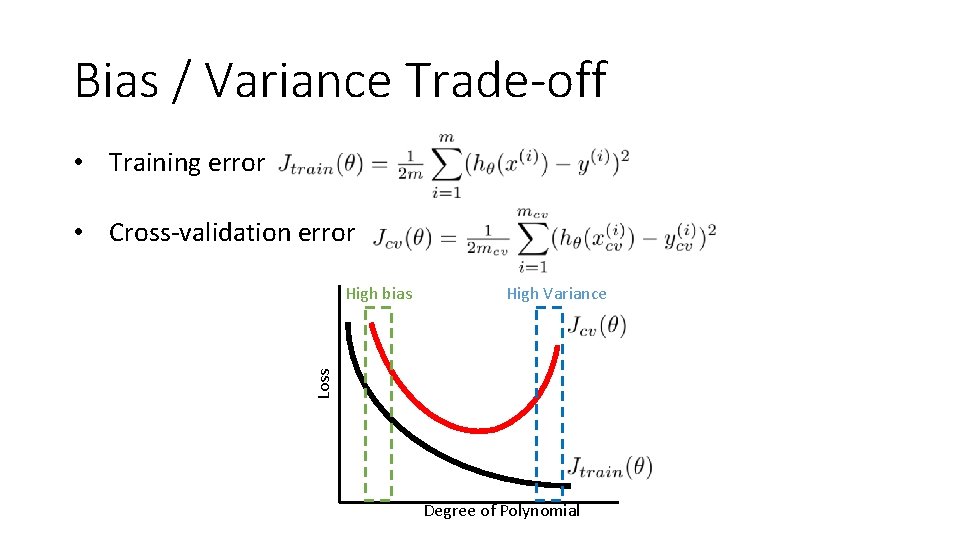

Bias/variance trade-off: training error and cross-validation error (loss) versus degree of polynomial. A low degree falls in the high-bias regime; a high degree falls in the high-variance regime.

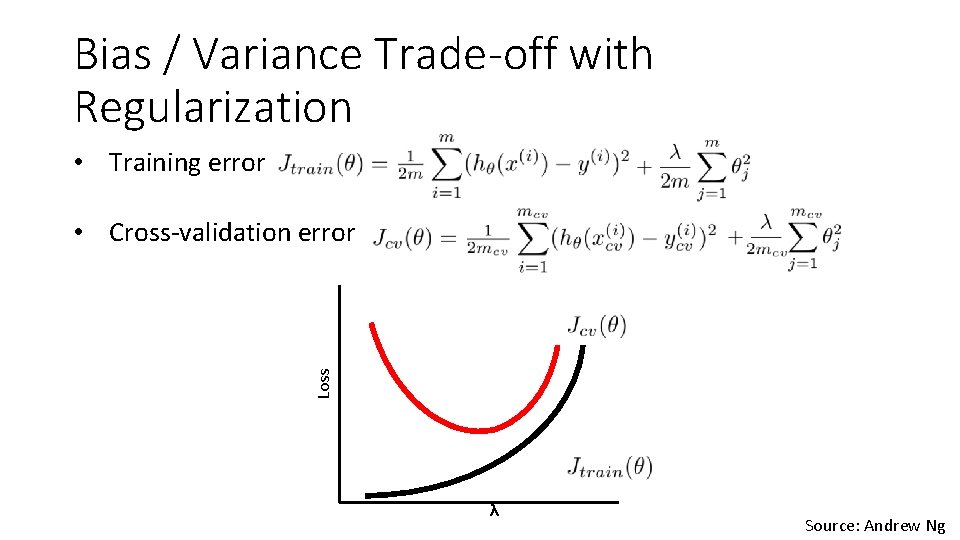

Bias/variance trade-off with regularization: training error and cross-validation error (loss) versus the regularization parameter λ (source: Andrew Ng)

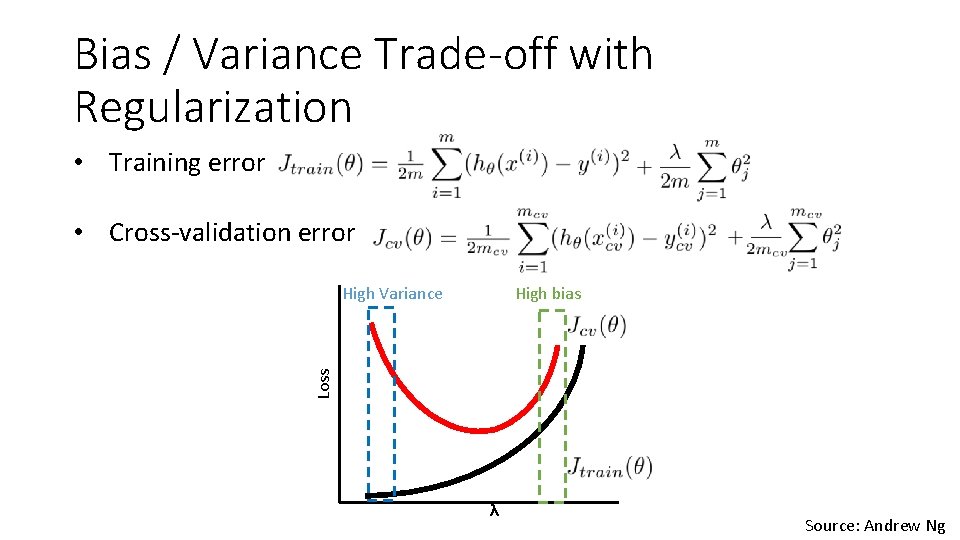

Bias/variance trade-off with regularization: training error and cross-validation error (loss) versus λ. A large λ gives high bias; a small λ gives high variance (source: Andrew Ng).
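
The λ axis in these plots comes from regularized fitting. As a minimal sketch, assuming regularized linear regression on polynomial features with the convention of not penalizing the intercept θ₀, the closed form and the effect of λ look like this; the data (samples of sin 2πx), degree, and λ values are hypothetical.

```python
import numpy as np


def polynomial_features(x, degree):
    # Columns x, x^2, ..., x^degree (the intercept column is added in fit_ridge)
    return np.column_stack([x ** p for p in range(1, degree + 1)])


def fit_ridge(X, y, lam):
    """Regularized least squares; the intercept theta[0] is not penalized."""
    Xb = np.hstack([np.ones((X.shape[0], 1)), X])
    penalty = lam * np.eye(Xb.shape[1])
    penalty[0, 0] = 0.0  # leave the intercept unregularized
    # Closed form: theta = (Xb^T Xb + penalty)^(-1) Xb^T y
    return np.linalg.solve(Xb.T @ Xb + penalty, Xb.T @ y)


def mse(theta, X, y):
    Xb = np.hstack([np.ones((X.shape[0], 1)), X])
    return float(np.mean((Xb @ theta - y) ** 2))


# Hypothetical data: 10 evenly spaced samples of sin(2*pi*x), degree-6 features
x = np.linspace(0.0, 1.0, 10)
y = np.sin(2 * np.pi * x)
X = polynomial_features(x, 6)
for lam in (1e-4, 1e-2, 1.0):
    theta = fit_ridge(X, y, lam)
    # Training error rises and the weight norm shrinks as lambda grows
    print(lam, mse(theta, X, y), np.linalg.norm(theta[1:]))
```

Only the training-error side of the plot is computed here; the cross-validation curve would require evaluating each θ on held-out data.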

K-means algorithm (slide credit: Andrew Ng)
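
K-means alternates two steps: assign each point to its closest centroid, then move each centroid to the mean of its assigned points. A minimal NumPy sketch, assuming random initialization from K distinct training examples and keeping a centroid in place if its cluster becomes empty; `kmeans` and the data are hypothetical.

```python
import numpy as np


def kmeans(X, K, n_iters=100, seed=0):
    rng = np.random.default_rng(seed)
    # Initialize centroids with K distinct training examples chosen at random
    mu = X[rng.choice(len(X), size=K, replace=False)].astype(float)
    for _ in range(n_iters):
        # Cluster-assignment step: index of the closest centroid for each point
        dists = np.linalg.norm(X[:, None, :] - mu[None, :, :], axis=2)
        c = np.argmin(dists, axis=1)
        # Move-centroid step: mean of the points assigned to each cluster
        new_mu = np.array([X[c == k].mean(axis=0) if np.any(c == k) else mu[k]
                           for k in range(K)])
        if np.allclose(new_mu, mu):
            break  # converged: centroids stopped moving
        mu = new_mu
    return c, mu


# Hypothetical data: two tight groups of points
X = np.array([[0.0, 0.0], [0.0, 1.0], [1.0, 0.0],
              [10.0, 10.0], [10.0, 11.0], [11.0, 10.0]])
labels, centroids = kmeans(X, K=2)
print(labels, centroids)
```

The result depends on initialization in general, which is why running K-means several times with different seeds and keeping the lowest-cost solution is common practice.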

Expectation Maximization (EM) Algorithm

EM algorithm
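
The EM slides' equations were lost in extraction. As a minimal sketch, assuming a 1-D mixture of Gaussians (the slides' exact model is not recoverable), the E-step computes soft assignments (responsibilities) and the M-step re-estimates the weights, means, and variances from them; the function names, initial values, and synthetic data are hypothetical.

```python
import numpy as np


def normal_pdf(x, mean, var):
    return np.exp(-((x - mean) ** 2) / (2.0 * var)) / np.sqrt(2.0 * np.pi * var)


def em_gmm_1d(x, means, variances, weights, n_iters=100):
    """EM for a 1-D Gaussian mixture; x has shape (n,), parameters have shape (K,)."""
    means = np.array(means, dtype=float)
    variances = np.array(variances, dtype=float)
    weights = np.array(weights, dtype=float)
    for _ in range(n_iters):
        # E-step: responsibilities r[i, k] = P(z_i = k | x_i) under current parameters
        joint = weights * normal_pdf(x[:, None], means, variances)
        r = joint / joint.sum(axis=1, keepdims=True)
        # M-step: maximum-likelihood updates using the soft assignments
        n_k = r.sum(axis=0)
        weights = n_k / len(x)
        means = (r * x[:, None]).sum(axis=0) / n_k
        variances = (r * (x[:, None] - means) ** 2).sum(axis=0) / n_k
    return means, variances, weights


# Hypothetical data: 300 samples each from N(-5, 1) and N(5, 1)
rng = np.random.default_rng(0)
x = np.concatenate([rng.normal(-5.0, 1.0, 300), rng.normal(5.0, 1.0, 300)])
means, variances, weights = em_gmm_1d(x, means=[-1.0, 1.0], variances=[1.0, 1.0], weights=[0.5, 0.5])
print(means, variances, weights)
```

Compared with K-means, EM replaces the hard cluster assignment with a probability of membership in each component.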

Anomaly detection algorithm
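
A minimal sketch of Gaussian density-based anomaly detection: fit a mean and variance per feature on normal examples, model p(x) as the product of per-feature Gaussians, and flag x as anomalous when p(x) < ε. The training data and the threshold ε = 10⁻³ are hypothetical; in practice ε is usually chosen on a labeled cross-validation set.

```python
import numpy as np


def fit_gaussian(X):
    # Per-feature mean and variance (maximum-likelihood estimates, divide by m)
    return X.mean(axis=0), X.var(axis=0)


def density(X, mu, var):
    # p(x) = prod_j N(x_j; mu_j, var_j): features modeled as independent Gaussians
    coef = 1.0 / np.sqrt(2.0 * np.pi * var)
    return np.prod(coef * np.exp(-((X - mu) ** 2) / (2.0 * var)), axis=1)


def is_anomaly(X, mu, var, epsilon):
    # Flag examples whose density falls below the threshold epsilon
    return density(X, mu, var) < epsilon


# Hypothetical "normal" training examples with two features
X_train = np.array([[1.0, 2.0], [1.1, 2.1], [0.9, 1.9], [1.0, 2.1], [1.05, 1.95]])
mu, var = fit_gaussian(X_train)
X_new = np.array([[1.0, 2.0],    # close to the training data
                  [5.0, -3.0]])  # far from it
print(is_anomaly(X_new, mu, var, epsilon=1e-3))  # [False  True]
```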

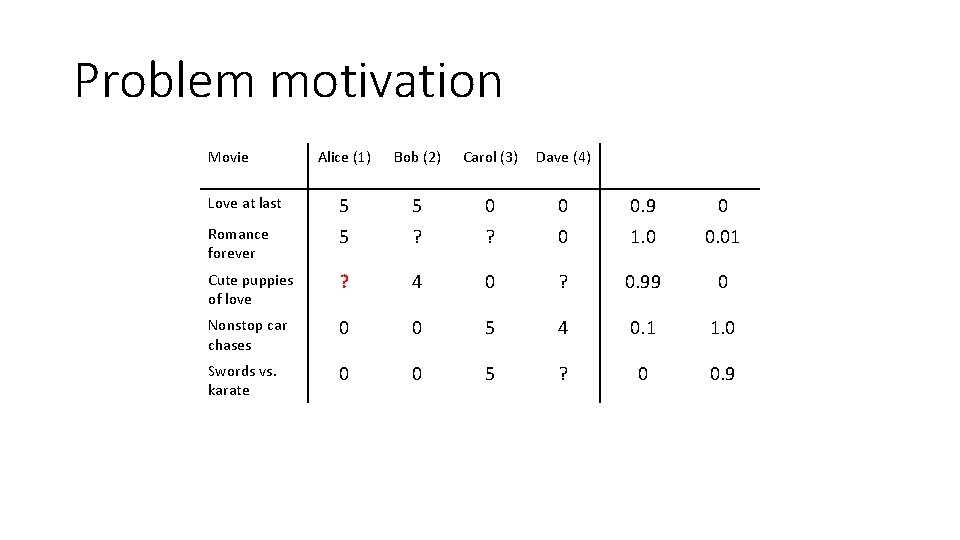

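Problem motivation

| Movie | Alice (1) | Bob (2) | Carol (3) | Dave (4) | x₁ (romance) | x₂ (action) |
|---|---|---|---|---|---|---|
| Love at last | 5 | 5 | 0 | 0 | 0.9 | 0 |
| Romance forever | 5 | ? | ? | 0 | 1.0 | 0.01 |
| Cute puppies of love | ? | 4 | 0 | ? | 0.99 | 0 |
| Nonstop car chases | 0 | 0 | 5 | 4 | 0.1 | 1.0 |
| Swords vs. karate | 0 | 0 | 5 | ? | 0 | 0.9 |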

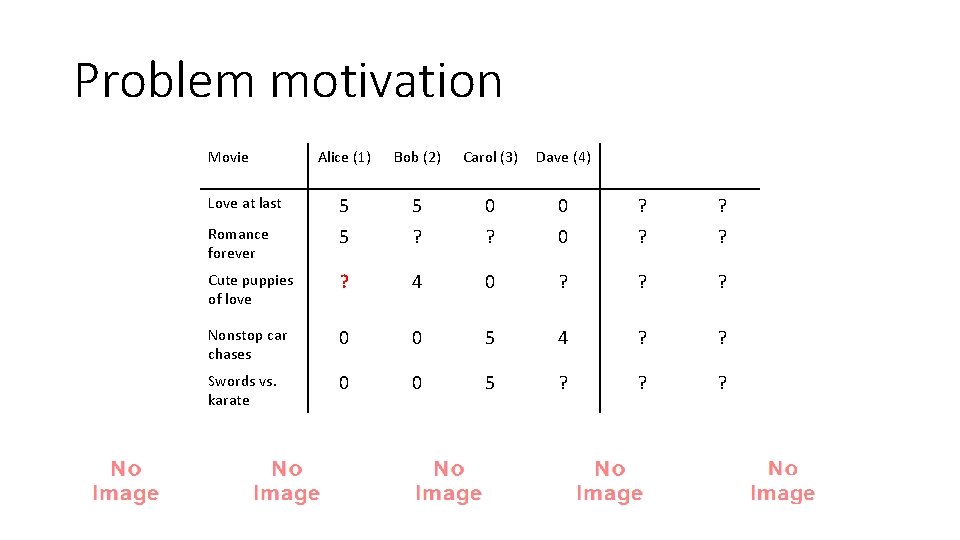

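Problem motivation

| Movie | Alice (1) | Bob (2) | Carol (3) | Dave (4) | x₁ (romance) | x₂ (action) |
|---|---|---|---|---|---|---|
| Love at last | 5 | 5 | 0 | 0 | ? | ? |
| Romance forever | 5 | ? | ? | 0 | ? | ? |
| Cute puppies of love | ? | 4 | 0 | ? | ? | ? |
| Nonstop car chases | 0 | 0 | 5 | 4 | ? | ? |
| Swords vs. karate | 0 | 0 | 5 | ? | ? | ? |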

Collaborative filtering optimization objective

Collaborative filtering algorithm
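
A minimal sketch of collaborative filtering that learns movie features X and user parameters Θ together by gradient descent. It assumes the squared-error objective summed over rated entries only, plus L2 regularization on both X and Θ. The ratings are the ones in the "Problem motivation" table; the feature count, λ, learning rate, and iteration count are hypothetical choices.

```python
import numpy as np


def cofi_cost_and_grads(X, Theta, Y, R, lam):
    """X: movie features (n_m, n); Theta: user parameters (n_u, n); R[i, j] = 1 if rated."""
    # Prediction errors, counted only where a rating exists
    E = (X @ Theta.T - Y) * R
    J = 0.5 * np.sum(E ** 2) + 0.5 * lam * (np.sum(Theta ** 2) + np.sum(X ** 2))
    X_grad = E @ Theta + lam * X
    Theta_grad = E.T @ X + lam * Theta
    return J, X_grad, Theta_grad


def collaborative_filtering(Y, R, n_features=2, lam=0.1, lr=0.01, n_iters=5000, seed=0):
    rng = np.random.default_rng(seed)
    n_movies, n_users = Y.shape
    # Small random initialization breaks the symmetry between features
    X = 0.1 * rng.standard_normal((n_movies, n_features))
    Theta = 0.1 * rng.standard_normal((n_users, n_features))
    for _ in range(n_iters):
        # Update movie features and user parameters simultaneously
        _, X_grad, Theta_grad = cofi_cost_and_grads(X, Theta, Y, R, lam)
        X, Theta = X - lr * X_grad, Theta - lr * Theta_grad
    return X, Theta


# Ratings from the table (rows: movies; columns: Alice, Bob, Carol, Dave); nan = "?"
Y_raw = np.array([[5, 5, 0, 0],
                  [5, np.nan, np.nan, 0],
                  [np.nan, 4, 0, np.nan],
                  [0, 0, 5, 4],
                  [0, 0, 5, np.nan]])
R = (~np.isnan(Y_raw)).astype(float)
Y = np.nan_to_num(Y_raw)  # missing entries set to 0 but masked out by R
X, Theta = collaborative_filtering(Y, R)
print(np.round(X @ Theta.T, 1))  # predicted ratings, including the "?" entries
```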

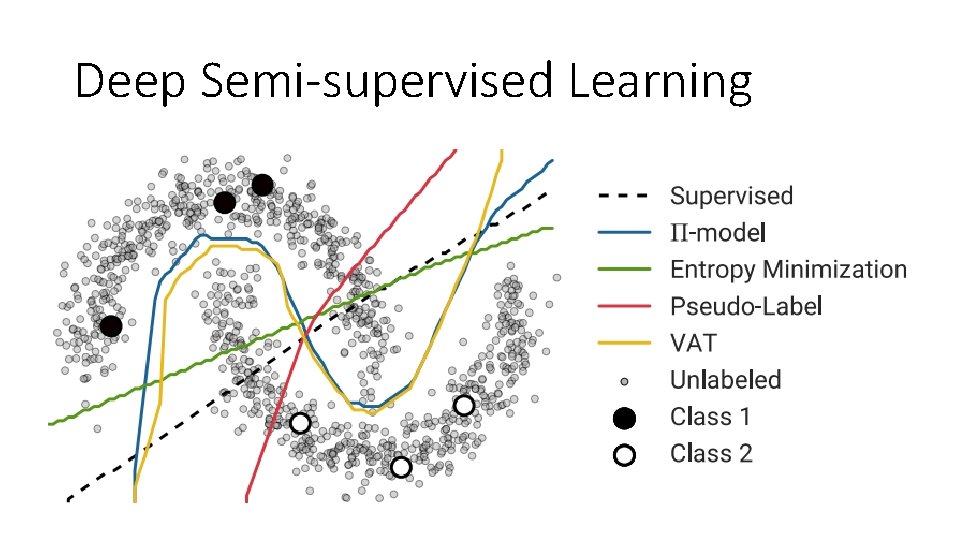

Semi-supervised learning: problem formulation

Deep Semi-supervised Learning

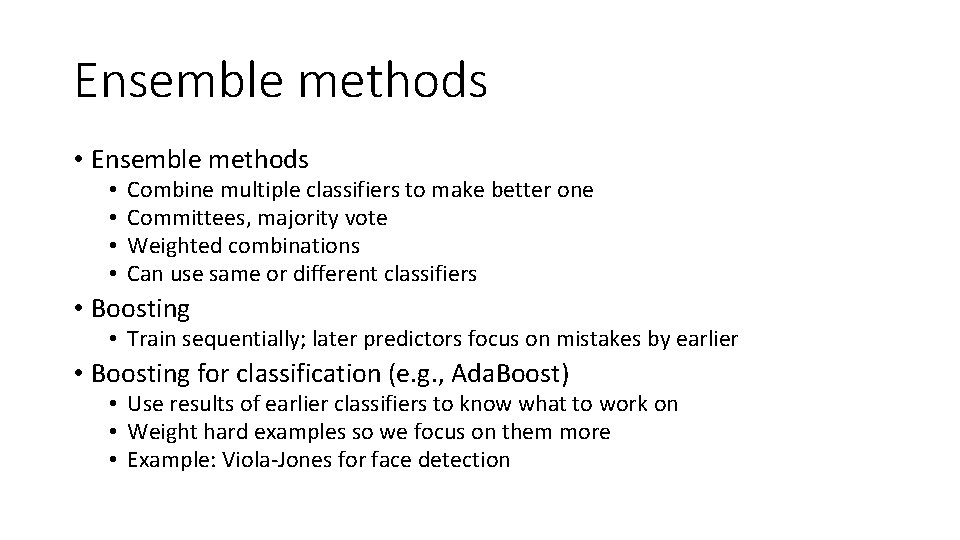

Ensemble methods
• Combine multiple classifiers to make a better one
  • Committees, majority vote
  • Weighted combinations
  • Can use the same or different classifiers
• Boosting
  • Train sequentially; later predictors focus on the mistakes of earlier ones
• Boosting for classification (e.g., AdaBoost)
  • Use the results of earlier classifiers to know what to work on
  • Weight hard examples so we focus on them more
  • Example: Viola-Jones for face detection
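
A minimal sketch of AdaBoost for labels y ∈ {−1, +1}, assuming decision stumps (single-feature thresholds) as the weak learners. Each round fits the stump with the lowest weighted error, gives it a vote α = ½ ln((1 − ε)/ε), and re-weights the examples so misclassified (hard) ones count more in the next round. The function names and the 1-D data, which no single stump can separate, are hypothetical.

```python
import numpy as np


def stump_predict(X, j, thr, polarity):
    # +1 where polarity * (x_j - thr) >= 0, else -1
    return np.where(polarity * (X[:, j] - thr) >= 0, 1, -1)


def fit_stump(X, y, w):
    """Exhaustive search for the stump with the lowest weighted error."""
    best = None
    for j in range(X.shape[1]):
        for thr in np.unique(X[:, j]):
            for polarity in (1, -1):
                err = np.sum(w[stump_predict(X, j, thr, polarity) != y])
                if best is None or err < best[0]:
                    best = (err, j, thr, polarity)
    return best


def adaboost(X, y, n_rounds=10):
    w = np.full(len(y), 1.0 / len(y))  # start with uniform example weights
    ensemble = []
    for _ in range(n_rounds):
        err, j, thr, polarity = fit_stump(X, y, w)
        err = max(err, 1e-10)  # avoid division by zero for a perfect stump
        alpha = 0.5 * np.log((1 - err) / err)  # vote weight of this stump
        pred = stump_predict(X, j, thr, polarity)
        # Up-weight misclassified examples, down-weight correct ones
        w = w * np.exp(-alpha * y * pred)
        w /= w.sum()
        ensemble.append((alpha, j, thr, polarity))
    return ensemble


def adaboost_predict(ensemble, X):
    score = sum(alpha * stump_predict(X, j, thr, p) for alpha, j, thr, p in ensemble)
    return np.where(score >= 0, 1, -1)


# Hypothetical 1-D data: + + - - + +
X = np.arange(1, 7, dtype=float).reshape(-1, 1)
y = np.array([1, 1, -1, -1, 1, 1])
ensemble = adaboost(X, y)
print(adaboost_predict(ensemble, X))  # [ 1  1 -1 -1  1  1]
```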

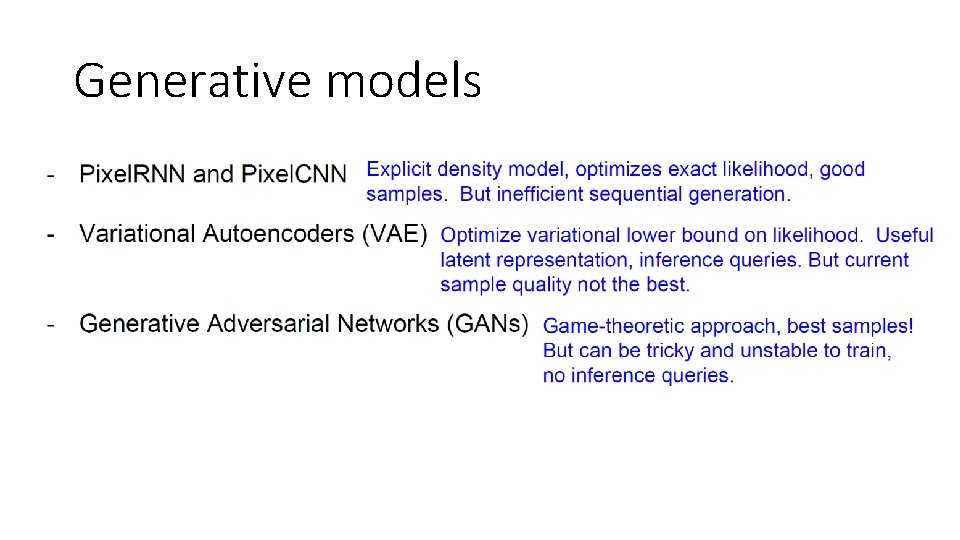

Generative models

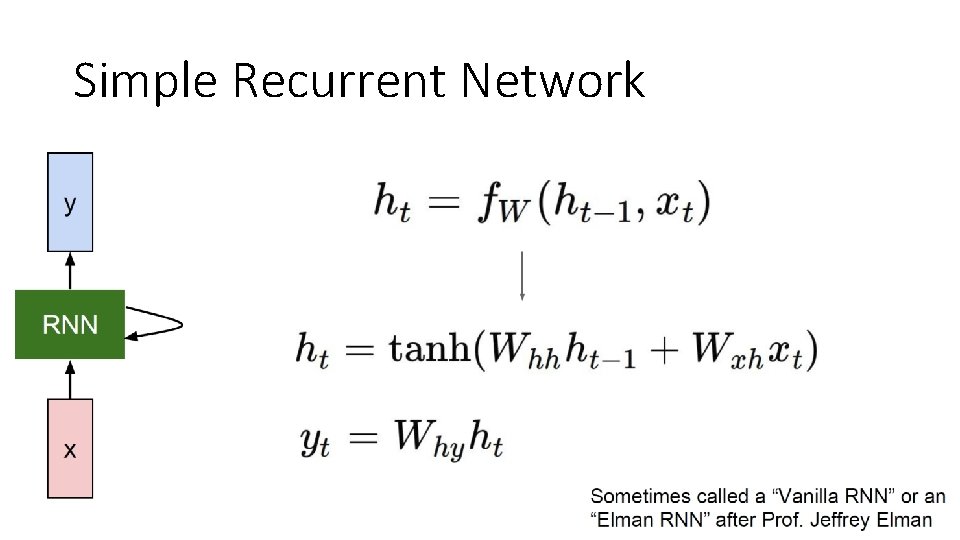

Simple Recurrent Network
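
A minimal sketch of a forward pass through a simple (Elman-style) recurrent network, assuming a tanh hidden layer and a linear output: at each time step the hidden state combines the current input with the previous hidden state, hₜ = tanh(W_xh xₜ + W_hh hₜ₋₁ + b_h). The sizes and random weights are hypothetical.

```python
import numpy as np


def rnn_forward(xs, W_xh, W_hh, b_h, W_hy, b_y):
    """Run a simple recurrent network over a sequence xs of shape (T, d_in)."""
    h = np.zeros(W_hh.shape[0])  # initial hidden state h_0 = 0
    hs, ys = [], []
    for x in xs:
        # New hidden state depends on the current input and the previous state
        h = np.tanh(W_xh @ x + W_hh @ h + b_h)
        hs.append(h)
        ys.append(W_hy @ h + b_y)  # output at this time step
    return np.array(hs), np.array(ys)


# Hypothetical sizes and random weights: 3-d inputs, 4 hidden units, 2 outputs, 5 steps
rng = np.random.default_rng(0)
d_in, d_h, d_out, T = 3, 4, 2, 5
W_xh = rng.standard_normal((d_h, d_in))
W_hh = rng.standard_normal((d_h, d_h))
b_h = np.zeros(d_h)
W_hy = rng.standard_normal((d_out, d_h))
b_y = np.zeros(d_out)
xs = rng.standard_normal((T, d_in))
hs, ys = rnn_forward(xs, W_xh, W_hh, b_h, W_hy, b_y)
print(hs.shape, ys.shape)  # (5, 4) (5, 2)
```

Because the same weights are reused at every step, the final hidden state summarizes the whole sequence, and reordering the inputs generally changes it.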

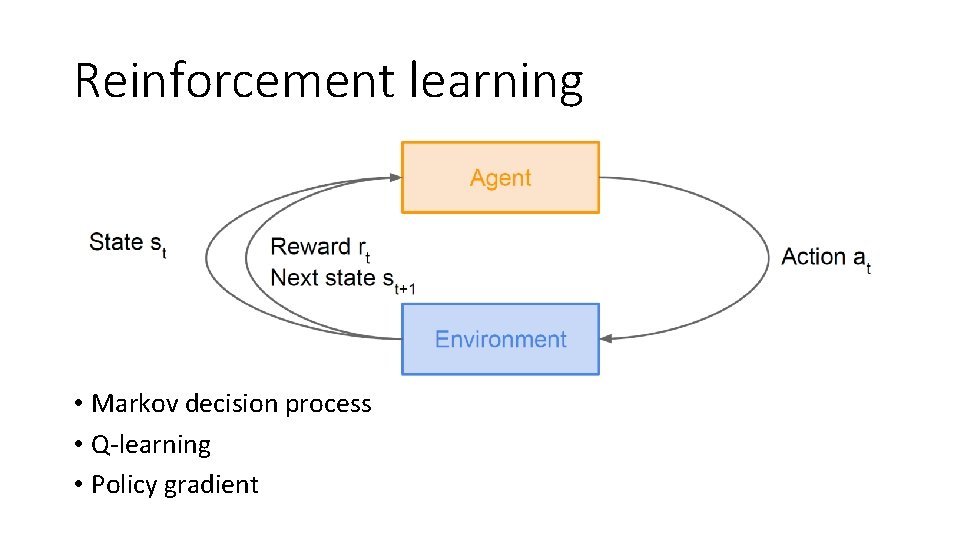

Reinforcement learning
• Markov decision process
• Q-learning
• Policy gradient
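
A minimal sketch of tabular Q-learning on a tiny Markov decision process. The environment is hypothetical: a deterministic chain of 5 states where action 0 moves left, action 1 moves right, and reaching the right-most state pays reward 1 and ends the episode. The agent follows an ε-greedy policy and updates Q(s, a) toward r + γ maxₐ′ Q(s′, a′). All hyperparameters are illustrative.

```python
import numpy as np


def step(state, action, n_states=5):
    """Hypothetical chain MDP: 0 = left, 1 = right; the last state is terminal."""
    if action == 0:
        next_state = max(0, state - 1)
    else:
        next_state = min(n_states - 1, state + 1)
    done = next_state == n_states - 1
    reward = 1.0 if done else 0.0
    return next_state, reward, done


def q_learning(n_states=5, n_actions=2, episodes=500, alpha=0.1, gamma=0.9,
               epsilon=0.1, seed=0):
    rng = np.random.default_rng(seed)
    Q = np.zeros((n_states, n_actions))
    for _ in range(episodes):
        s, done = 0, False
        while not done:
            # epsilon-greedy behavior policy; ties among greedy actions broken at random
            if rng.random() < epsilon:
                a = int(rng.integers(n_actions))
            else:
                a = int(rng.choice(np.flatnonzero(Q[s] == Q[s].max())))
            s_next, r, done = step(s, a, n_states)
            # TD target r + gamma * max_a' Q(s', a'); no bootstrap past the terminal state
            target = r if done else r + gamma * Q[s_next].max()
            Q[s, a] += alpha * (target - Q[s, a])
            s = s_next
    return Q


Q = q_learning()
print(np.argmax(Q[:-1], axis=1))  # greedy action per non-terminal state (1 = right)
```

With γ = 0.9, the learned values of moving right should approach 0.9³, 0.9², 0.9, and 1 for states 0 to 3, since the reward is that many steps away.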

Final exam sample questions

Conceptual questions
• [True/False] Increasing the value of k in a k-nearest neighbor classifier will decrease its bias.
• [True/False] Backpropagation helps neural network training get unstuck from a local minimum.
• [True/False] Linear regression can be solved by either matrix algebra or gradient descent.
• [True/False] Logistic regression can be solved by either matrix algebra or gradient descent.
• [True/False] K-means clustering has a unique solution.
• [True/False] PCA has a unique solution.

Classification/Regression
• Given a simple dataset:
  1) Estimate the parameters
  2) Compute the training error
  3) Compute the leave-one-out cross-validation error
  4) Compute the testing error
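
For the leave-one-out step, each example is held out in turn: the model is refit on the remaining examples and its error on the held-out point is recorded. The results are then averaged. A minimal sketch for a least-squares line fit, with a hypothetical dataset (the exam's dataset is not in these notes):

```python
import numpy as np


def fit_line(x, y):
    # Least-squares fit of y ~ theta0 + theta1 * x
    A = np.column_stack([np.ones_like(x), x])
    theta, *_ = np.linalg.lstsq(A, y, rcond=None)
    return theta


def mse(theta, x, y):
    return float(np.mean((theta[0] + theta[1] * x - y) ** 2))


def loocv_mse(x, y):
    errors = []
    for i in range(len(x)):
        keep = np.arange(len(x)) != i       # hold out example i
        theta = fit_line(x[keep], y[keep])  # refit without it
        errors.append(mse(theta, x[i:i + 1], y[i:i + 1]))  # error on the held-out point
    return float(np.mean(errors))


# Hypothetical small dataset
x = np.array([0.0, 1.0, 2.0, 3.0, 4.0])
y = np.array([1.0, 3.0, 2.0, 5.0, 4.0])
theta = fit_line(x, y)
print("training MSE:", mse(theta, x, y))
print("LOOCV MSE:", loocv_mse(x, y))
```

For least squares, the leave-one-out error is never smaller than the training error, because each held-out point had no influence on the fit used to predict it.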

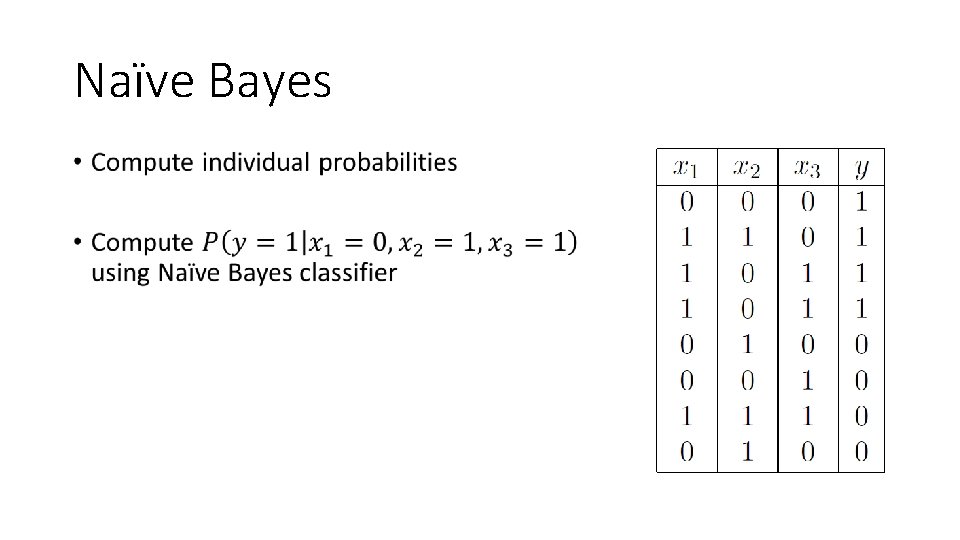

Naïve Bayes
