BERKELEY PAR LAB Lithe Composing Parallel Software Efficiently

Heidi Pan (xoxo@mit.edu), Massachusetts Institute of Technology

Ph.D. Thesis Defense, April 9, 2010

Thesis Committee: Saman Amarasinghe, Arvind, Krste Asanović

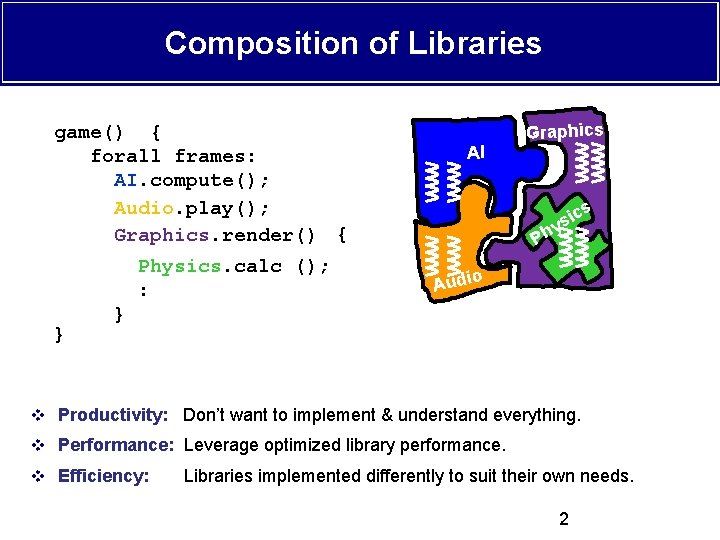

Composition of Libraries

(figure: a game composed of AI, Audio, Graphics, and Physics libraries: `game() { forall frames: AI.compute(); Audio.play(); Graphics.render(); Physics.calc(); ... }`)

- Productivity: don't want to implement and understand everything.
- Performance: leverage optimized library performance.
- Efficiency: libraries are implemented differently to suit their own needs.

Talk Roadmap

- Problem: Efficient parallel composition is hard!
- Solution
- Implementation
- Evaluation
- Synchronization
- Future Work

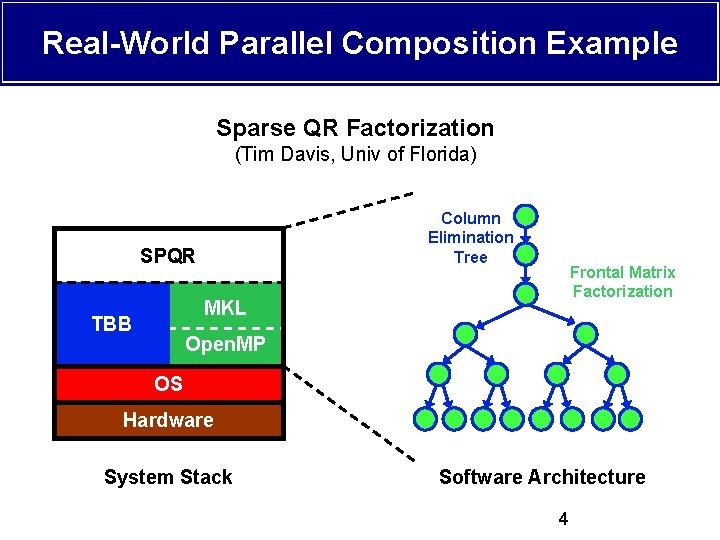

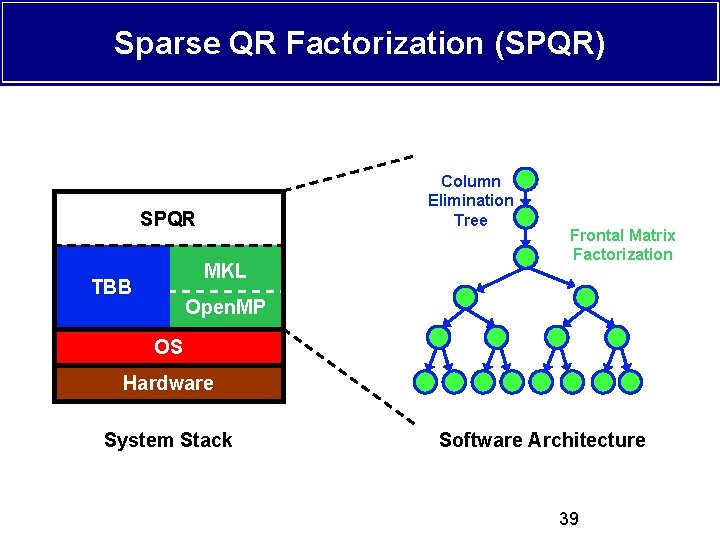

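Real-World Parallel Composition Example: Sparse QR Factorization (Tim Davis, University of Florida)

(figure: software architecture: the column elimination tree is processed with TBB tasks and each frontal matrix factorization calls MKL; system stack: SPQR uses TBB and MKL, MKL uses OpenMP, and everything runs on the OS and hardware)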

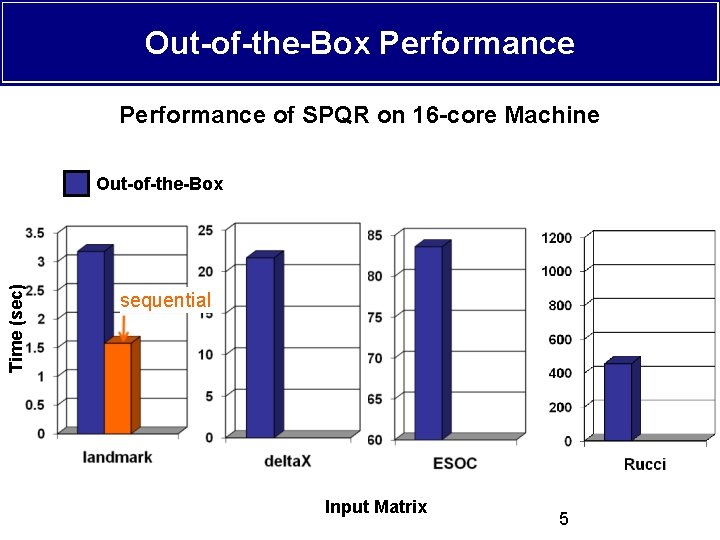

Out-of-the-Box Performance of SPQR on a 16-core Machine (figure: time in seconds for each input matrix; series: Out-of-the-Box, sequential)

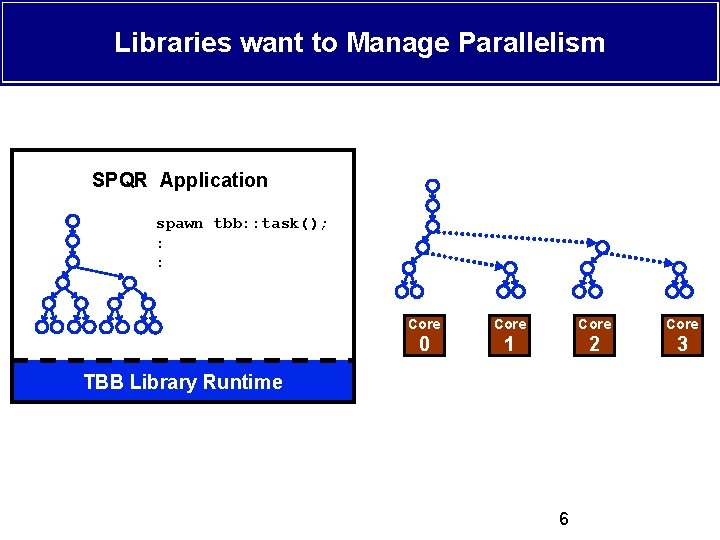

Libraries Want to Manage Parallelism

(figure: the SPQR application calls `spawn tbb::task();`, and the TBB library runtime schedules those tasks onto cores 0 to 3)

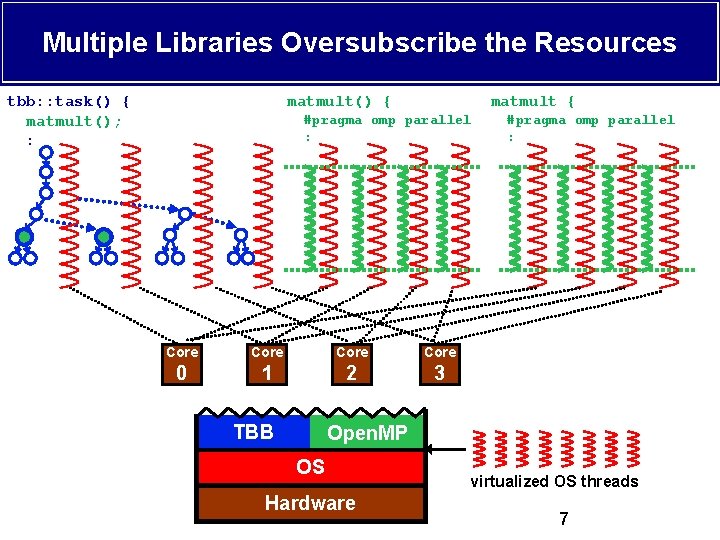

Multiple Libraries Oversubscribe the Resources

(figure: each `tbb::task() { matmult(); ... }` calls `matmult() { #pragma omp parallel ... }`, so TBB and OpenMP each create their own threads; the OS multiplexes all of these virtualized OS threads onto cores 0 to 3)
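To make the oversubscription concrete, here is a back-of-the-envelope calculation in C++ (the language of the Lithe runtime). It assumes both libraries default to one thread per core and that every TBB worker enters its own OpenMP parallel region; `runnable_threads` and `oversubscription` are hypothetical helpers, not part of TBB, OpenMP, or Lithe.

```cpp
#include <cassert>

// Hypothetical helper (not part of TBB, OpenMP, or Lithe): counts the OS threads
// that become runnable when an outer library runs `outer` worker threads and
// each of them enters an inner parallel region of `inner` threads. An OpenMP
// team counts the thread that encounters the region as one of its members.
long runnable_threads(long outer, long inner) {
    assert(outer >= 1 && inner >= 1);
    return outer * inner;
}

// Runnable threads per core; anything above 1.0 means the OS must time-multiplex.
double oversubscription(long outer, long inner, long cores) {
    assert(cores >= 1);
    return static_cast<double>(runnable_threads(outer, inner)) /
           static_cast<double>(cores);
}
```

On the 4 cores pictured, 4 TBB workers times 4 OpenMP threads gives 16 runnable threads, a 4x oversubscription; a manual setting such as TBB_NUM_THREADS = 2 and OMP_NUM_THREADS = 2 brings the ratio back to 1.0, but fixes that split for the whole run.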

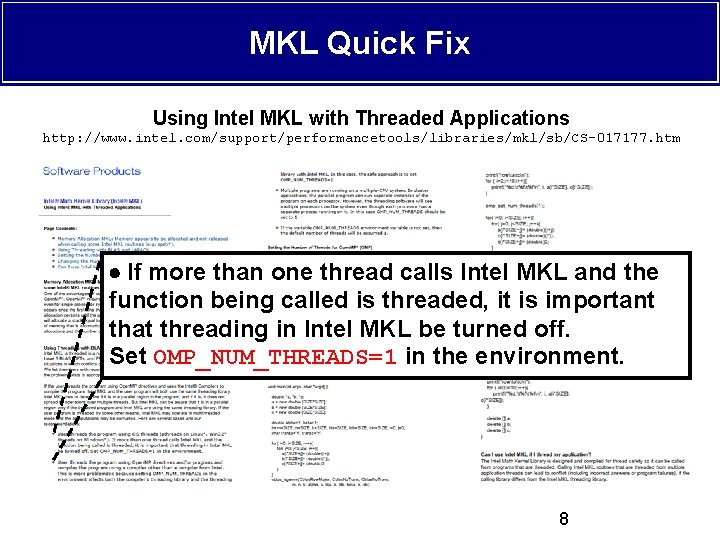

MKL Quick Fix

From "Using Intel MKL with Threaded Applications" (http://www.intel.com/support/performancetools/libraries/mkl/sb/CS-017177.htm): "If more than one thread calls Intel MKL and the function being called is threaded, it is important that threading in Intel MKL be turned off. Set OMP_NUM_THREADS=1 in the environment."

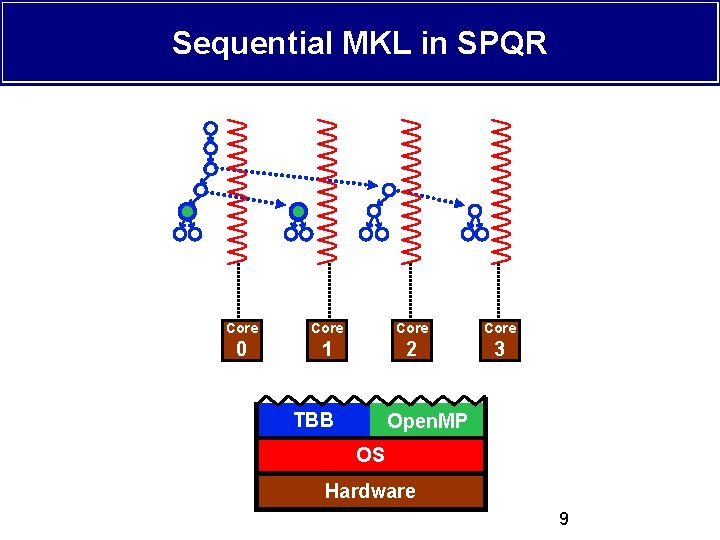

Sequential MKL in SPQR

(figure: the TBB, OpenMP, OS, and hardware stack on cores 0 to 3, with MKL's OpenMP threading turned off)

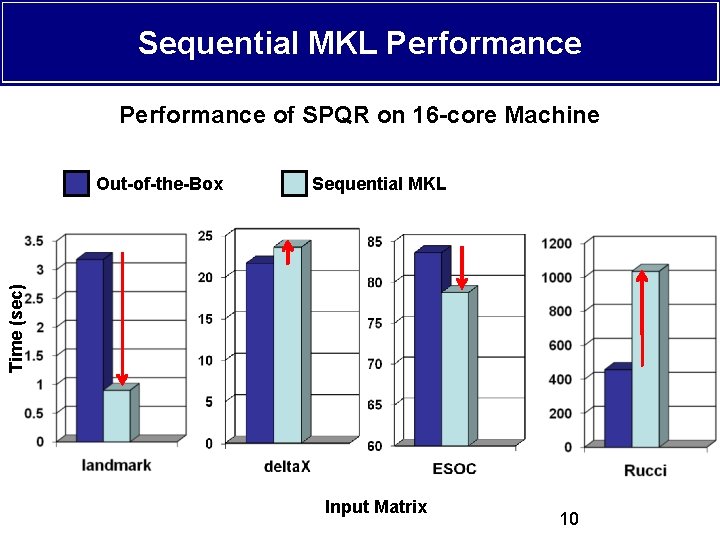

Sequential MKL Performance of SPQR on a 16-core Machine (figure: time in seconds for each input matrix; series: Out-of-the-Box, Sequential MKL)

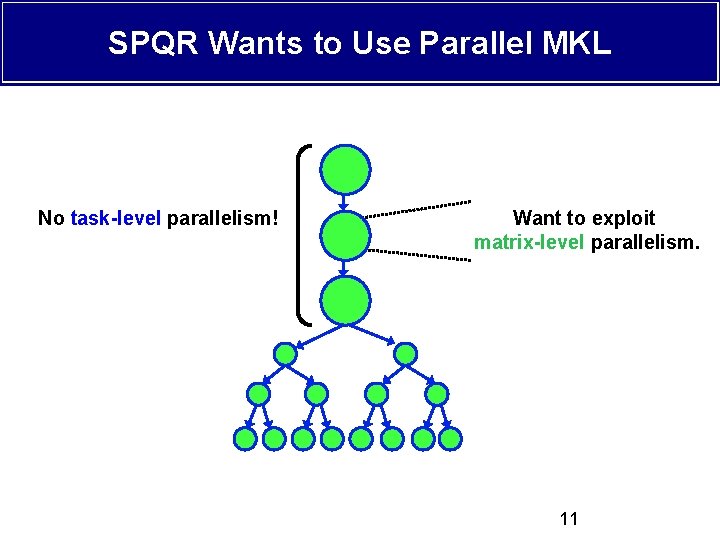

SPQR Wants to Use Parallel MKL

No task-level parallelism! Want to exploit matrix-level parallelism.

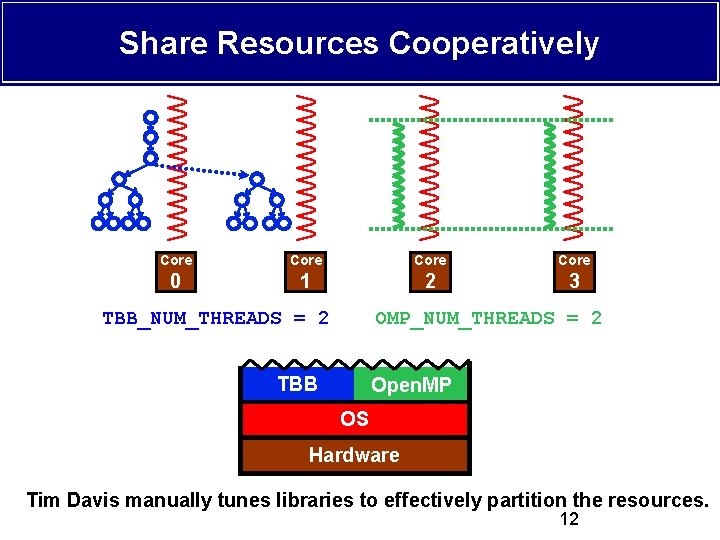

Share Resources Cooperatively

(figure: TBB_NUM_THREADS = 2 and OMP_NUM_THREADS = 2, so TBB and OpenMP together fill cores 0 to 3)

Tim Davis manually tunes the libraries to partition the resources effectively.

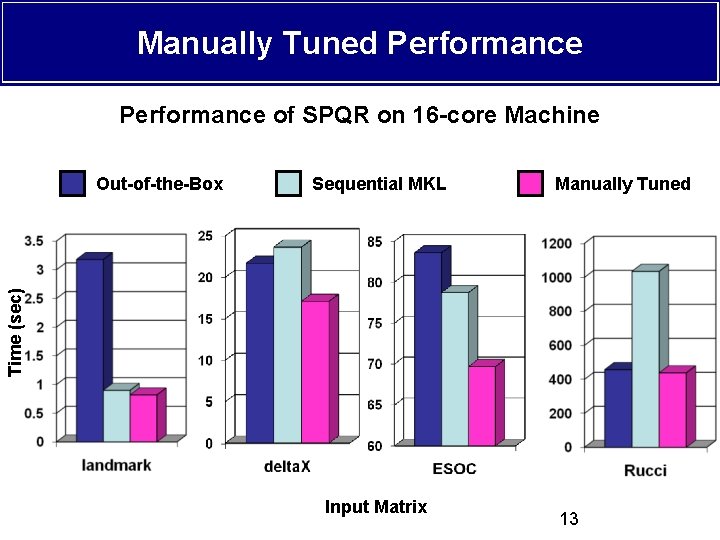

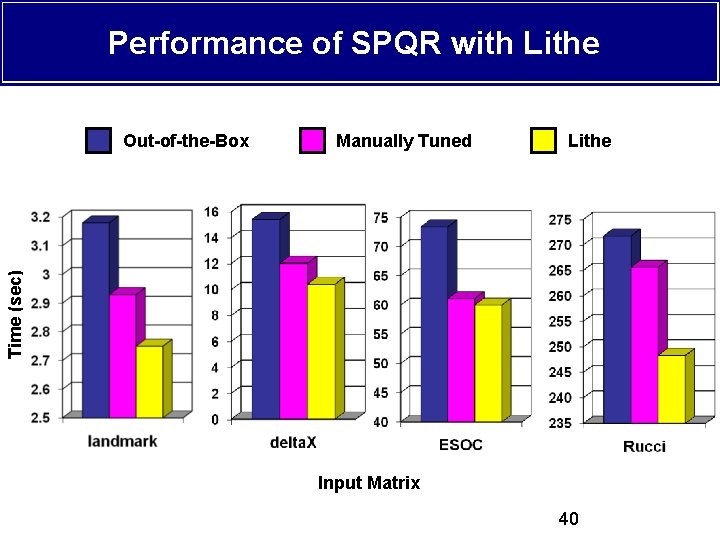

Manually Tuned Performance of SPQR on a 16-core Machine (figure: time in seconds for each input matrix; series: Out-of-the-Box, Sequential MKL, Manually Tuned)

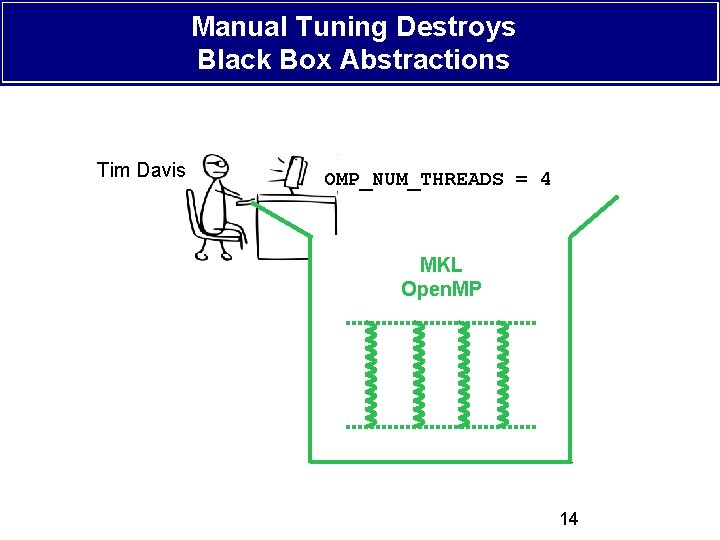

Manual Tuning Destroys Black Box Abstractions

(figure: to solve Ax=b, Tim Davis must reach past MKL and LAPACK to set OMP_NUM_THREADS = 4 for the OpenMP layer underneath)

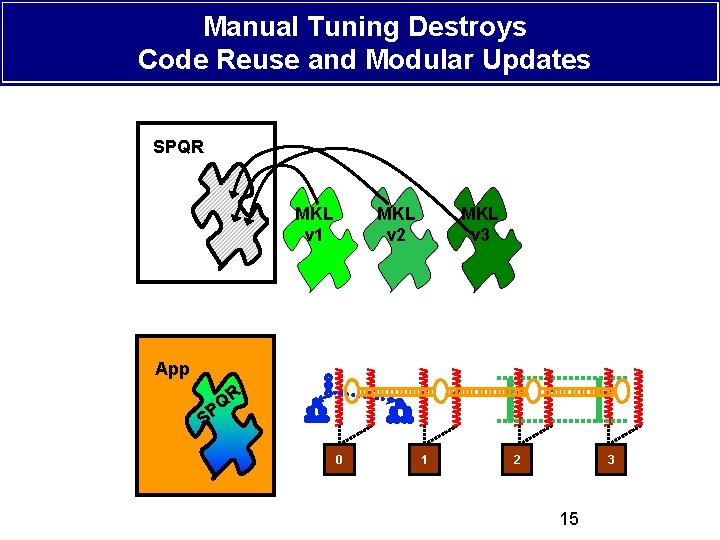

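Manual Tuning Destroys Code Reuse and Modular Updates

(figure: an app built on SPQR, paired over time with MKL v1, v2, and v3; the hand-tuned assignment of cores 0 to 3 has to be redone for each MKL version)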

Talk Roadmap

- Problem: Efficient parallel composability is hard!
- Solution: Lithe
  - Primitives
  - Resource Sharing Model
  - Standard Interface
  - Runtime
- Implementation
- Evaluation
- Synchronization
- Future Work

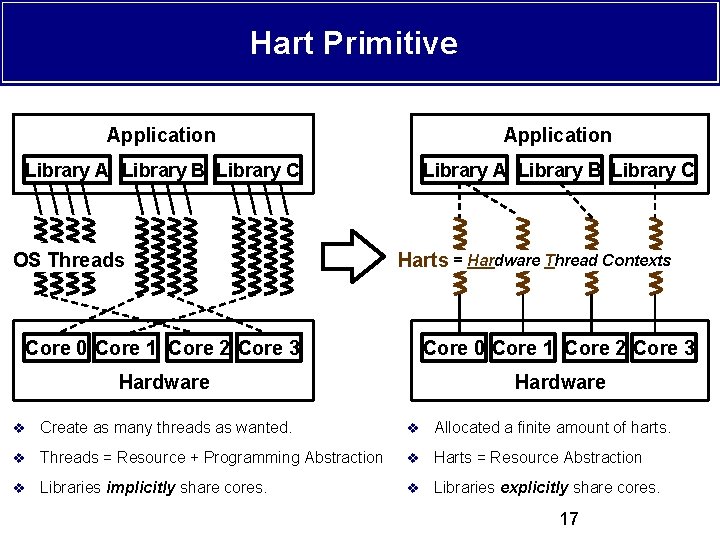

Hart Primitive

(figure: Libraries A, B, and C of an application run either on OS threads or on harts, i.e., hardware thread contexts, one per core 0 to 3)

| OS Threads | Harts |
|---|---|
| Create as many threads as wanted. | Allocated a finite number of harts. |
| Threads = resource + programming abstraction | Harts = resource abstraction |
| Libraries implicitly share cores. | Libraries explicitly share cores. |
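A minimal sketch of the resource side of the hart abstraction, assuming a machine with a fixed number of hardware thread contexts. `HartPool`, `acquire`, and `release` are hypothetical names chosen for illustration; they model only the fact that harts, unlike OS threads, are finite and must be granted.

```cpp
#include <algorithm>
#include <cassert>

// Hypothetical model (not the Lithe API): the machine exposes a fixed number of
// harts. A library cannot create more of them; it can only be granted some.
class HartPool {
public:
    explicit HartPool(int total) : total_(total), free_(total) { assert(total > 0); }

    // Grant up to `wanted` harts; returns how many were actually granted.
    int acquire(int wanted) {
        assert(wanted >= 0);
        int granted = std::min(wanted, free_);
        free_ -= granted;
        return granted;
    }

    // Return `n` previously granted harts to the pool.
    void release(int n) {
        assert(n >= 0 && free_ + n <= total_);
        free_ += n;
    }

    int free_harts() const { return free_; }
    int total_harts() const { return total_; }

private:
    int total_;
    int free_;
};
```

For example, on a 4-hart machine, if library A asks for 3 harts and library B then asks for 3, A receives 3 and B only 1: the contention is visible to the libraries instead of being hidden by OS time-multiplexing.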

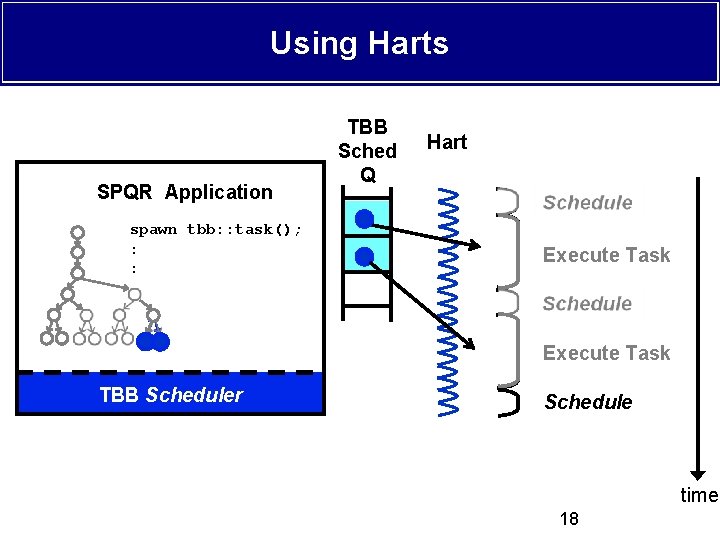

Using Harts

(figure: SPQR calls `spawn tbb::task();`, which places the task on the TBB scheduler queue; over time, a hart alternates between running the TBB scheduler and executing the task it picked)

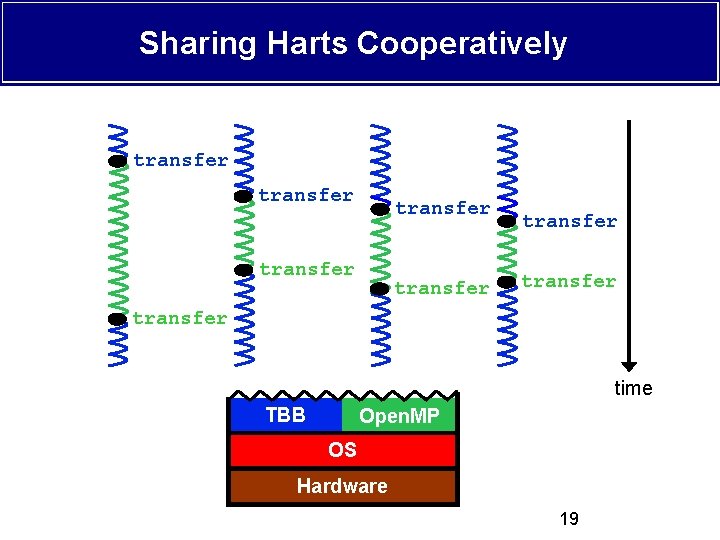

Sharing Harts Cooperatively

(figure: over time, harts are transferred back and forth between TBB and OpenMP, which run on the OS and hardware)

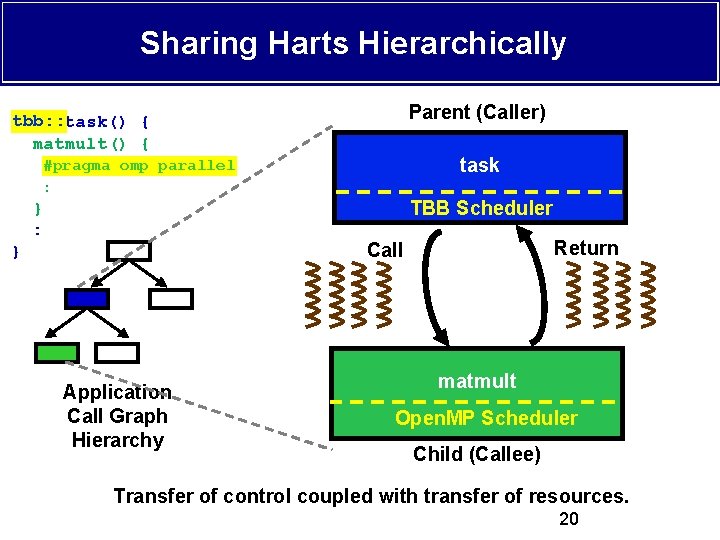

Sharing Harts Hierarchically

(figure: the application call graph, in which `tbb::task() { matmult(); }` calls `matmult() { #pragma omp parallel ... }`, induces a scheduler hierarchy: the TBB runtime scheduler is the parent (caller) and the OpenMP runtime scheduler is the child (callee); the call passes control down and the return passes it back)

Transfer of control is coupled with transfer of resources.

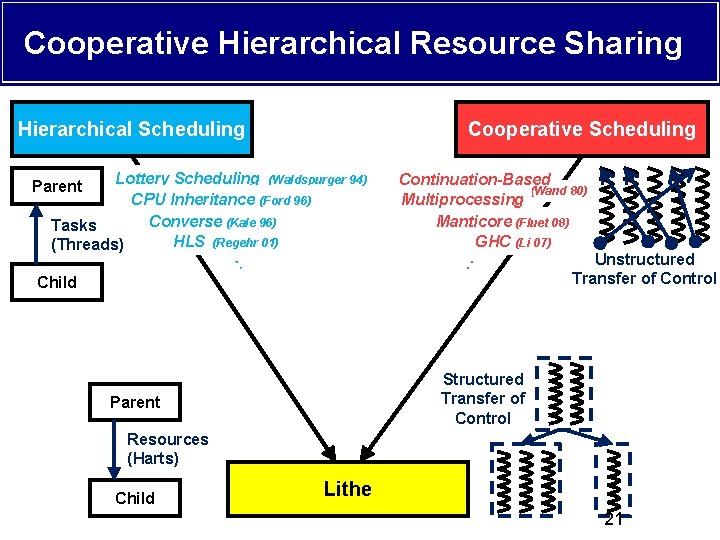

Cooperative Hierarchical Resource Sharing

(figure: related work. Hierarchical scheduling, where the parent shares tasks (threads) with the child: Lottery Scheduling (Waldspurger 94), CPU Inheritance (Ford 96), Converse (Kale 96), HLS (Regehr 01). Cooperative scheduling with unstructured transfer of control: Continuation-Based Multiprocessing (Wand 80), Manticore (Fluet 08), GHC (Li 07). Lithe: structured transfer of control, with the parent sharing resources (harts) with the child.)

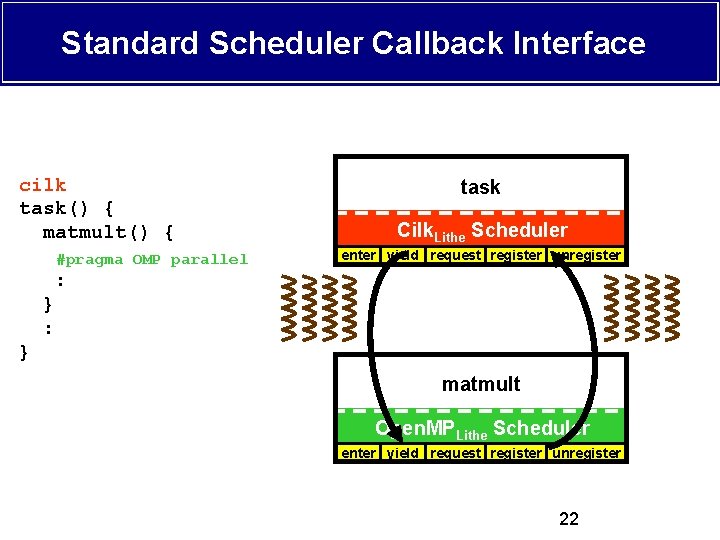

Standard Scheduler Callback Interface

(figure: a parent scheduler, such as TBB running `tbb::task()` or Cilk running a `cilk` task, calls `matmult() { #pragma omp parallel ... }`; the child OpenMP scheduler exposes the same Lithe scheduler interface as the parent: enter, yield, request, register, unregister)
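The callback interface can be pictured as an abstract C++ class. This is a hypothetical rendering, not the Lithe runtime's actual declarations: the method names follow the slide, except that `register` and `unregister` become `on_register` and `on_unregister` because `register` is a reserved keyword in C++. The argument shapes (a child scheduler, a hart count) are assumptions modeled on the runtime functions listed in the backup slides.

```cpp
#include <cassert>
#include <string>
#include <vector>

// Hypothetical interface: every Lithe-compliant scheduler implements these
// callbacks, so a parent can manage any child without knowing its library.
class LitheSchedulerIface {
public:
    virtual ~LitheSchedulerIface() = default;
    virtual void enter() = 0;                                          // this scheduler receives a hart
    virtual void yield(LitheSchedulerIface *child) = 0;                // a child hands a hart back
    virtual void request(LitheSchedulerIface *child, int nharts) = 0;  // a child asks for harts
    virtual void on_register(LitheSchedulerIface *child) = 0;          // a child joins the hierarchy
    virtual void on_unregister(LitheSchedulerIface *child) = 0;        // a child leaves it
};

// A trivial scheduler that only records which callbacks it received.
class LoggingScheduler : public LitheSchedulerIface {
public:
    std::vector<std::string> log;
    void enter() override { log.push_back("enter"); }
    void yield(LitheSchedulerIface *) override { log.push_back("yield"); }
    void request(LitheSchedulerIface *, int nharts) override {
        assert(nharts > 0);
        log.push_back("request " + std::to_string(nharts));
    }
    void on_register(LitheSchedulerIface *) override { log.push_back("register"); }
    void on_unregister(LitheSchedulerIface *) override { log.push_back("unregister"); }
};
```

Because TBBLithe, OpenMPLithe, or a Cilk scheduler would all derive from the same base class, a parent never needs to know which library its child belongs to.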

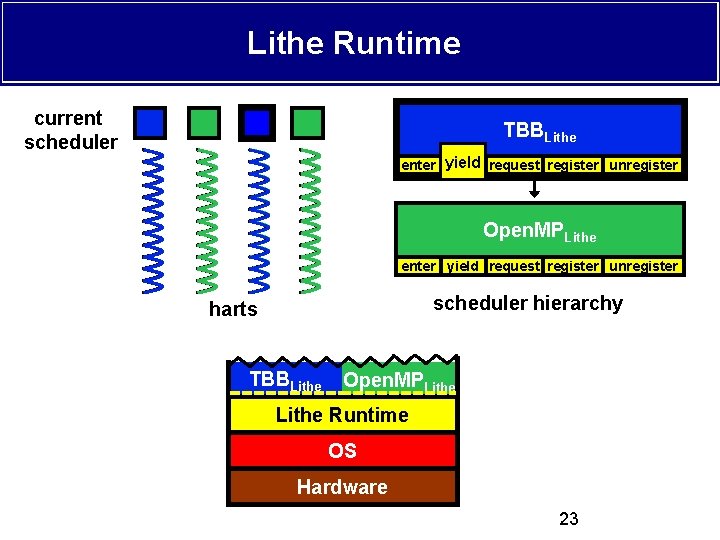

Lithe Runtime

(figure: the Lithe runtime sits between the OS and the TBBLithe and OpenMPLithe schedulers, each of which implements enter, yield, request, register, and unregister; the runtime tracks the scheduler hierarchy, with TBBLithe as the parent of OpenMPLithe, and the current scheduler of each hart)

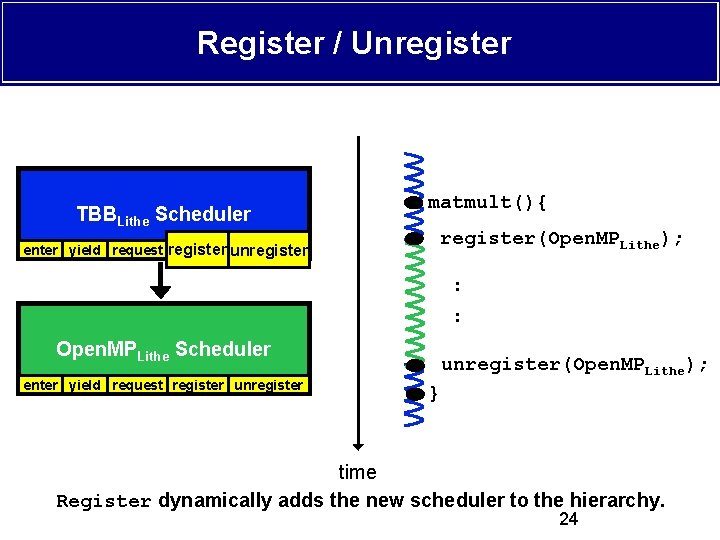

Register / Unregister

(figure: under the TBBLithe scheduler, `matmult() { register(OpenMPLithe); ... unregister(OpenMPLithe); }` adds an OpenMPLithe scheduler below TBBLithe and later removes it)

Register dynamically adds the new scheduler to the hierarchy; unregister removes it.
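A minimal model of how register and unregister could maintain the scheduler hierarchy seen by one hart. `Sched`, `Hierarchy`, `register_sched`, and `unregister_sched` are hypothetical names, not the real `lithe_sched_register`/`lithe_sched_unregister` signatures.

```cpp
#include <cassert>
#include <string>
#include <vector>

// Hypothetical scheduler node: a name plus a link to its parent.
struct Sched {
    std::string name;
    Sched *parent = nullptr;
};

// Hypothetical per-hart view of the hierarchy: register_sched() attaches the new
// scheduler below the current one and makes it current; unregister_sched()
// detaches it and makes its parent current again.
class Hierarchy {
public:
    explicit Hierarchy(Sched *root) : current_(root) { assert(root != nullptr); }

    void register_sched(Sched *child) {
        child->parent = current_;  // attach below the caller's scheduler
        current_ = child;          // the callee's code now runs under the child
    }

    void unregister_sched() {
        assert(current_->parent != nullptr && "cannot unregister the root");
        Sched *done = current_;
        current_ = done->parent;   // control returns to the parent
        done->parent = nullptr;
    }

    Sched *current() const { return current_; }

    // Names from the current scheduler up to the root.
    std::vector<std::string> path() const {
        std::vector<std::string> names;
        for (Sched *s = current_; s != nullptr; s = s->parent) names.push_back(s->name);
        return names;
    }

private:
    Sched *current_;
};
```

Because registration happens at call time, the hierarchy mirrors the dynamic call graph rather than a configuration fixed before the program starts.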

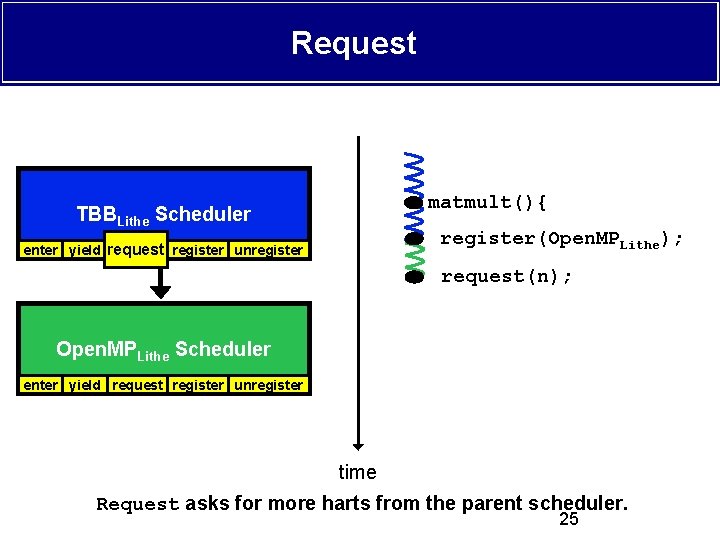

Request

(figure: after `matmult() { register(OpenMPLithe); request(n); ... }`, the OpenMPLithe scheduler's request goes to its parent, the TBBLithe scheduler)

Request asks the parent scheduler for more harts.
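One way a parent scheduler might record requests, sketched under the assumption that a request is a non-blocking hint and that the parent serves children first-come, first-served. `ParentScheduler`, `request`, and `grant_one` are hypothetical; a real parent such as TBBLithe is free to apply its own policy.

```cpp
#include <cassert>
#include <deque>
#include <string>

// Hypothetical record of one child's outstanding demand.
struct Request {
    std::string child;
    int outstanding;  // harts still wanted by this child
};

class ParentScheduler {
public:
    // A registered child asks for `nharts` more harts; this returns immediately.
    void request(const std::string &child, int nharts) {
        assert(nharts > 0);
        for (Request &r : pending_) {
            if (r.child == child) { r.outstanding += nharts; return; }
        }
        pending_.push_back({child, nharts});
    }

    // The parent has one spare hart: pick a child (first-come, first-served
    // here) or return "" if nobody is waiting.
    std::string grant_one() {
        if (pending_.empty()) return "";
        Request &r = pending_.front();
        std::string chosen = r.child;
        if (--r.outstanding == 0) pending_.pop_front();
        return chosen;
    }

    int outstanding_total() const {
        int n = 0;
        for (const Request &r : pending_) n += r.outstanding;
        return n;
    }

private:
    std::deque<Request> pending_;
};
```

Keeping requests as hints means a child never stalls waiting for harts; it proceeds with the harts it already has and uses extra ones only if the parent enters it later.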

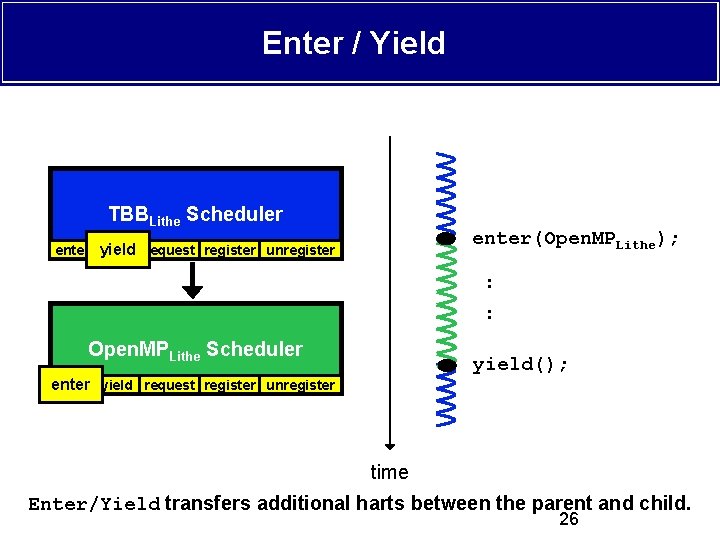

Enter / Yield

(figure: the TBBLithe scheduler calls `enter(OpenMPLithe)` to hand an additional hart to the OpenMPLithe scheduler, whose enter callback then runs on it; when OpenMPLithe is done with that hart, it calls `yield()` to return it to its parent)

Enter/yield transfers additional harts between the parent and the child.
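The key invariant of enter and yield is that harts are moved, never created: the total held by the parent and its children stays constant. `HartLedger` is a hypothetical bookkeeping sketch of that invariant; it models only the additional harts transferred after a child registers, not the hart the caller was already running on.

```cpp
#include <cassert>
#include <map>
#include <string>

// Hypothetical ledger: enter() moves one hart down the hierarchy, yield() moves
// it back up. No operation changes the total number of harts.
class HartLedger {
public:
    HartLedger(const std::string &root, int harts) {
        assert(harts >= 0);
        held_[root] = harts;
    }

    // The parent grants one of its harts to the child (parent calls enter(child)).
    void enter(const std::string &parent, const std::string &child) {
        assert(held(parent) > 0 && "parent has no hart to give");
        --held_[parent];
        ++held_[child];
    }

    // The child gives a hart back to its parent (child calls yield()).
    void yield(const std::string &child, const std::string &parent) {
        assert(held(child) > 0 && "child holds no hart to yield");
        --held_[child];
        ++held_[parent];
    }

    int held(const std::string &sched) const {
        auto it = held_.find(sched);
        return it == held_.end() ? 0 : it->second;
    }

private:
    std::map<std::string, int> held_;
};
```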

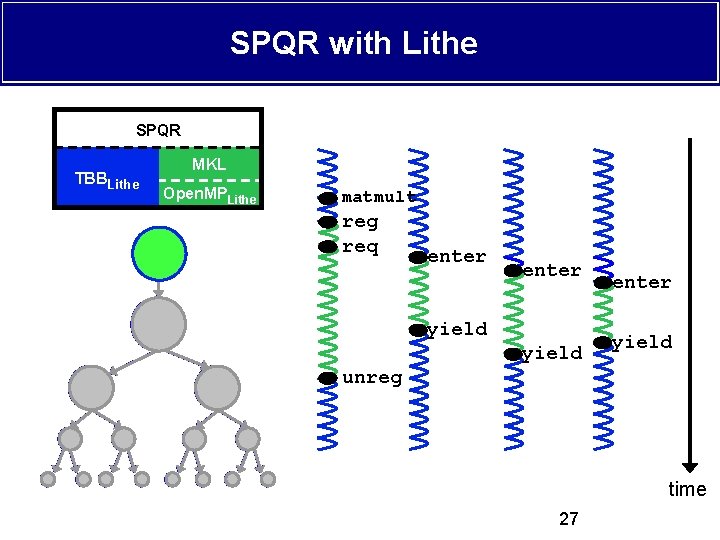

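SPQR with Lithe

(figure: timeline in which SPQR's TBBLithe task calls MKL's matmult, whose OpenMPLithe scheduler registers (reg), requests harts (req), receives harts through enter and returns them through yield, and finally unregisters (unreg))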

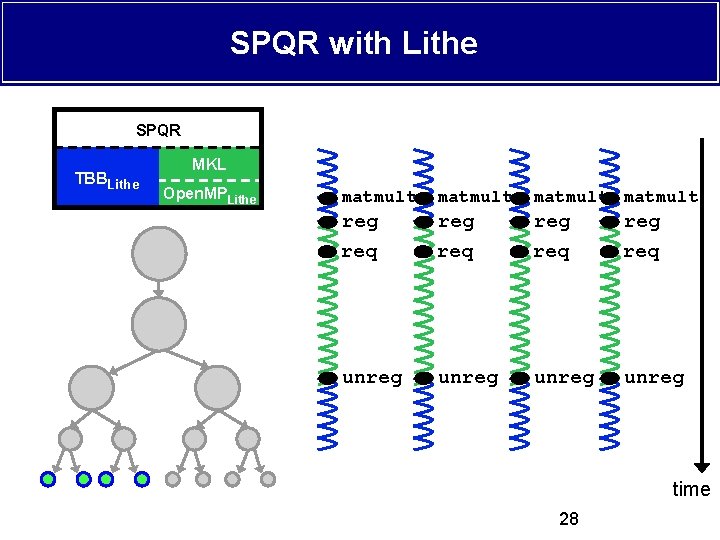

SPQR with Lithe

(figure: the same timeline, showing the reg, req, and unreg calls made by MKL's matmult and its OpenMPLithe scheduler under SPQR's TBBLithe)

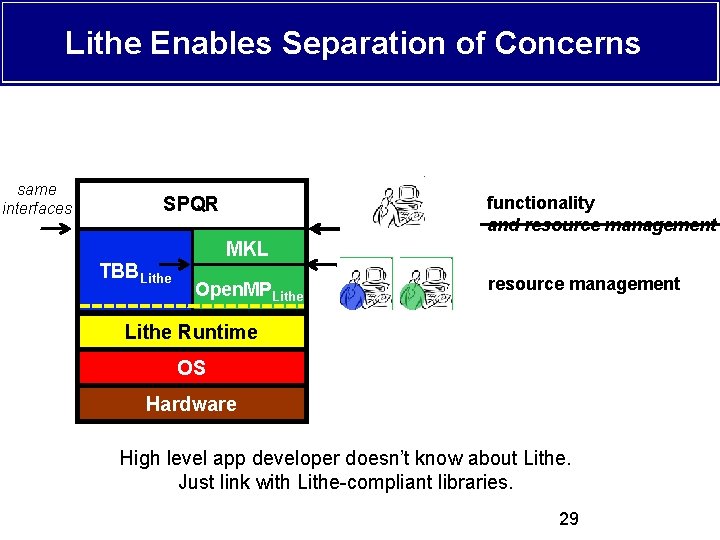

Lithe Enables Separation of Concerns

(figure: with the same interfaces as before, SPQR and MKL provide functionality, while TBBLithe and OpenMPLithe handle resource management through the Lithe runtime on top of the OS and hardware)

The high-level application developer doesn't need to know about Lithe: just link with Lithe-compliant libraries.

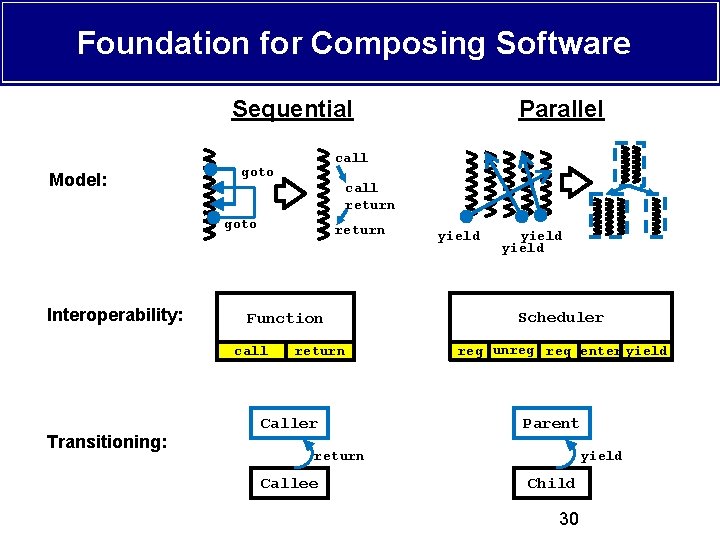

Foundation for Composing Software

(figure: in the sequential model, code is composed through the function call, with call and return between caller and callee, in contrast to goto; in the parallel model, schedulers are composed through reg, unreg, req, enter, and yield between parent and child)

Talk Roadmap

- Problem: Efficient parallel composability is hard!
- Solution: Lithe
- Implementation
  - Lithe Runtime
  - Porting Intel Threading Building Blocks (TBB)
  - Porting GNU OpenMP (libgomp)
- Evaluation
- Synchronization
- Future Work

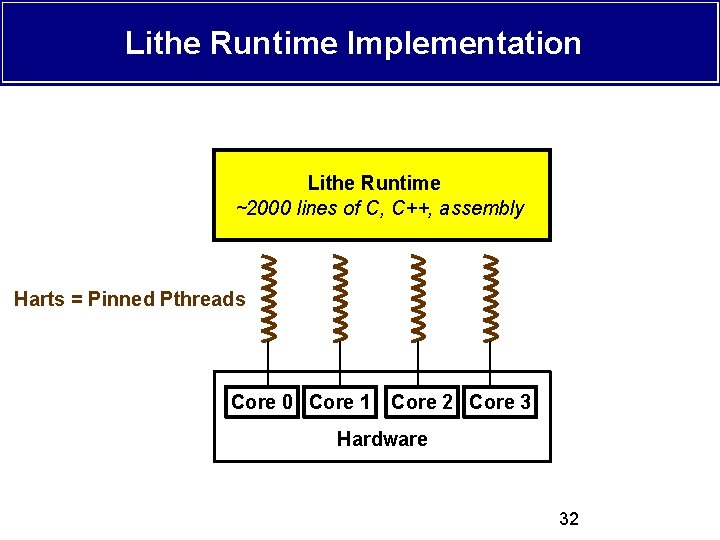

Lithe Runtime Implementation

- Lithe runtime: ~2,000 lines of C, C++, and assembly.
- Harts = pinned pthreads (one per core, cores 0 to 3 in the figure).

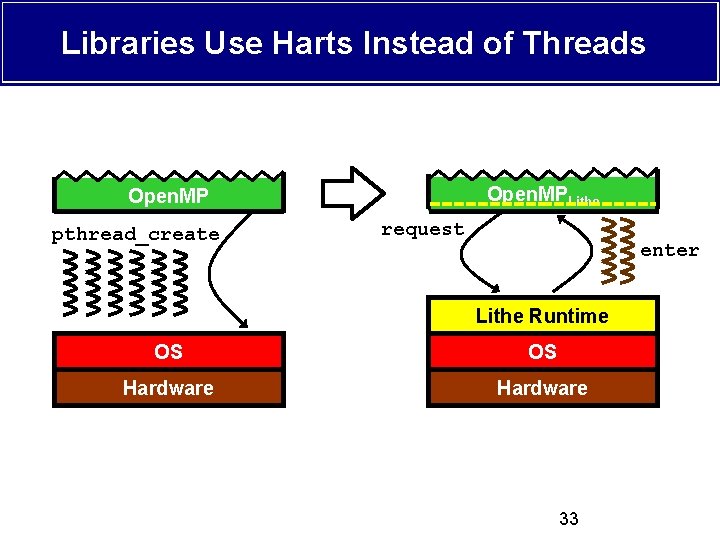

Libraries Use Harts Instead of Threads

(figure: where OpenMP creates its workers with pthread_create through the OS, OpenMPLithe instead calls request and receives harts through enter from the Lithe runtime)

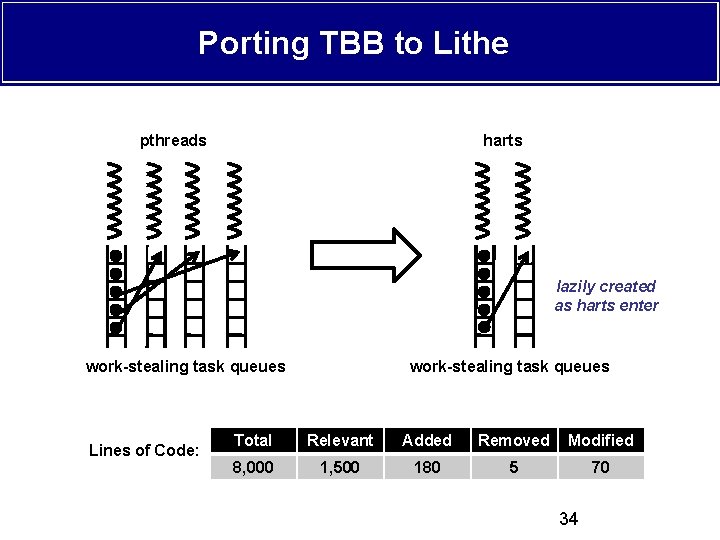

Porting TBB to Lithe

(figure: TBB's worker pthreads are replaced by harts, with workers lazily created as harts enter; the work-stealing task queues are kept)

Lines of code:

| Total | Relevant | Added | Removed | Modified |
|---|---|---|---|---|
| 8,000 | 1,500 | 180 | 5 | 70 |
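TBB keeps its work-stealing task queues in the port. The sketch shows the basic discipline of such a queue under simplifying assumptions: `WorkStealingQueue` is a hypothetical mutex-based class, not TBB's lock-free implementation. The owner pushes and pops at one end, and idle workers on other harts steal from the other.

```cpp
#include <cassert>
#include <deque>
#include <functional>
#include <mutex>
#include <optional>
#include <utility>

// Hypothetical per-worker task queue. The owner works LIFO at the back (good
// cache locality); thieves take FIFO from the front (oldest, often largest tasks).
class WorkStealingQueue {
public:
    using Task = std::function<void()>;

    void push(Task t) {
        std::lock_guard<std::mutex> lock(m_);
        q_.push_back(std::move(t));
    }

    // Called by the owning worker.
    std::optional<Task> pop() {
        std::lock_guard<std::mutex> lock(m_);
        if (q_.empty()) return std::nullopt;
        Task t = std::move(q_.back());
        q_.pop_back();
        return t;
    }

    // Called by an idle worker running on another hart.
    std::optional<Task> steal() {
        std::lock_guard<std::mutex> lock(m_);
        if (q_.empty()) return std::nullopt;
        Task t = std::move(q_.front());
        q_.pop_front();
        return t;
    }

    bool empty() const {
        std::lock_guard<std::mutex> lock(m_);
        return q_.empty();
    }

private:
    mutable std::mutex m_;
    std::deque<Task> q_;
};
```

Under Lithe, the number of workers consuming these queues simply tracks the number of harts the TBB scheduler currently holds.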

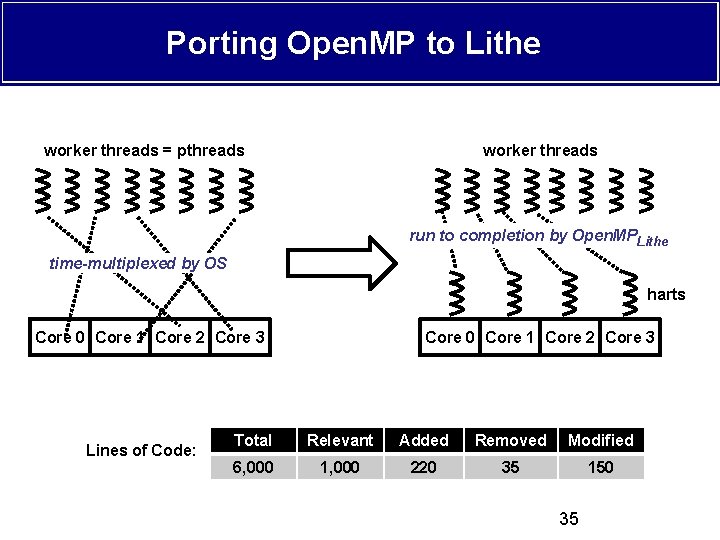

Porting OpenMP to Lithe

(figure: originally, worker threads are pthreads time-multiplexed by the OS across cores 0 to 3; in OpenMPLithe, worker threads are run to completion on harts)

Lines of code:

| Total | Relevant | Added | Removed | Modified |
|---|---|---|---|---|
| 6,000 | 1,000 | 220 | 35 | 150 |
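A sketch of the run-to-completion idea, assuming an OpenMP-style region that wants `nworkers` logical workers but was granted only `nharts` harts. `run_region_on_harts` is a hypothetical function, and `std::thread` merely stands in for a granted hart: each hart claims the next unstarted worker and runs it to completion, instead of the OS time-multiplexing one pthread per worker.

```cpp
#include <atomic>
#include <cassert>
#include <functional>
#include <thread>
#include <vector>

// Hypothetical: execute `nworkers` logical workers on `nharts` harts.
// Workers are claimed through a shared counter and never preempted.
void run_region_on_harts(int nworkers, int nharts,
                         const std::function<void(int worker_id)> &body) {
    assert(nworkers >= 0 && nharts >= 1);
    std::atomic<int> next{0};
    auto hart_loop = [&] {
        for (int id = next.fetch_add(1); id < nworkers; id = next.fetch_add(1)) {
            body(id);  // run this worker to completion before claiming another
        }
    };
    std::vector<std::thread> extra;  // stand-ins for the additional harts
    for (int h = 1; h < nharts; ++h) extra.emplace_back(hart_loop);
    hart_loop();                     // the calling hart participates too
    for (std::thread &t : extra) t.join();
}
```

This only works if workers never wait on one another: an OpenMP barrier inside the region would leave claimed workers waiting for unstarted ones, which is the synchronization problem taken up later in the talk.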

Talk Roadmap

- Problem: Efficient parallel composability is hard!
- Solution: Lithe
- Implementation
- Evaluation
  - Ported Libraries Baseline Performance
  - Sparse QR Factorization
  - Real-Time Audio Processing
- Synchronization
- Future Work

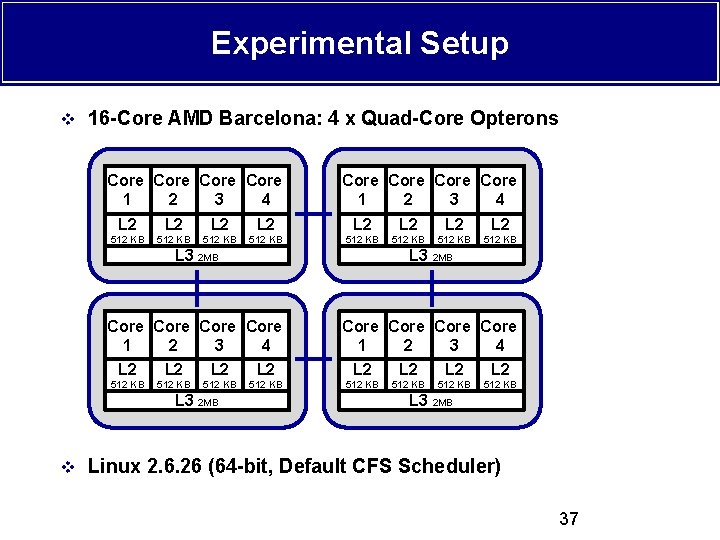

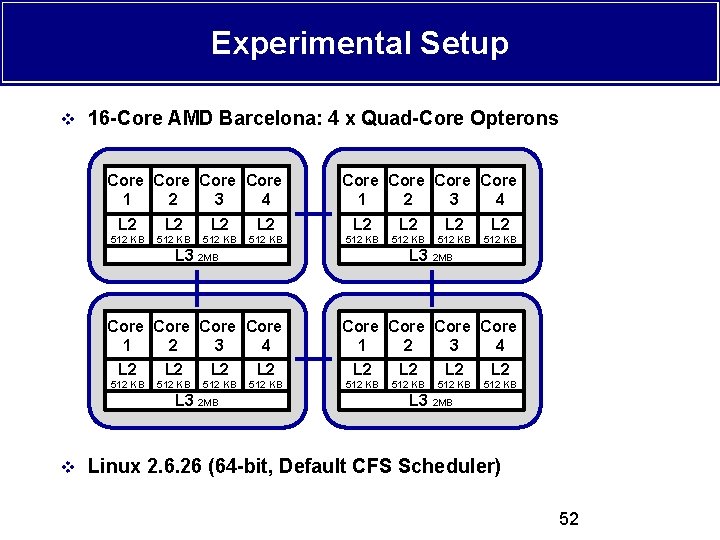

Experimental Setup

- 16-core AMD Barcelona: 4 x quad-core Opterons, with a 512 KB L2 cache per core and a 2 MB L3 cache per chip.
- Linux 2.6.26 (64-bit, default CFS scheduler).

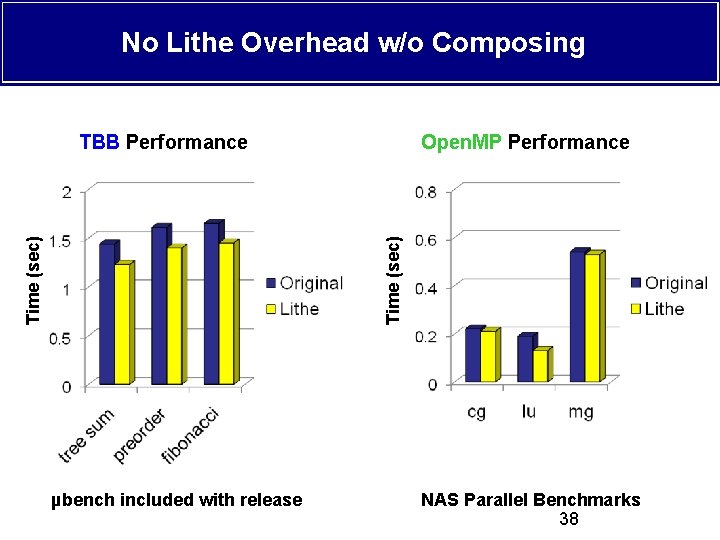

No Lithe Overhead Without Composing

(figures: time in seconds for TBB on the microbenchmarks included with its release, and for OpenMP on the NAS Parallel Benchmarks)

Sparse QR Factorization (SPQR)

(figure: software architecture: column elimination tree (TBB) and frontal matrix factorization (MKL); system stack: SPQR uses TBB and MKL, MKL uses OpenMP, and everything runs on the OS and hardware)

Performance of SPQR with Lithe (figure: time in seconds for each input matrix; series: Out-of-the-Box, Manually Tuned, Lithe)

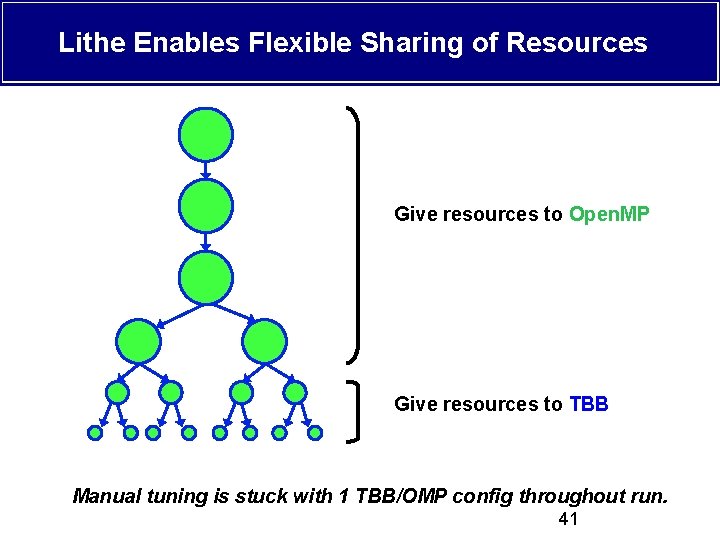

Lithe Enables Flexible Sharing of Resources

(figure: during the run, Lithe gives resources to OpenMP in some phases and to TBB in others)

Manual tuning is stuck with one TBB/OpenMP configuration throughout the run.

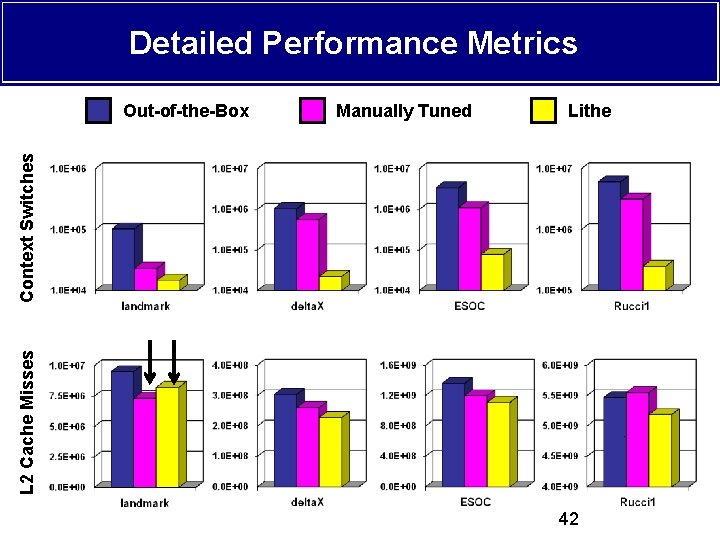

Detailed Performance Metrics (figure: L2 cache misses and context switches for Out-of-the-Box, Manually Tuned, and Lithe)

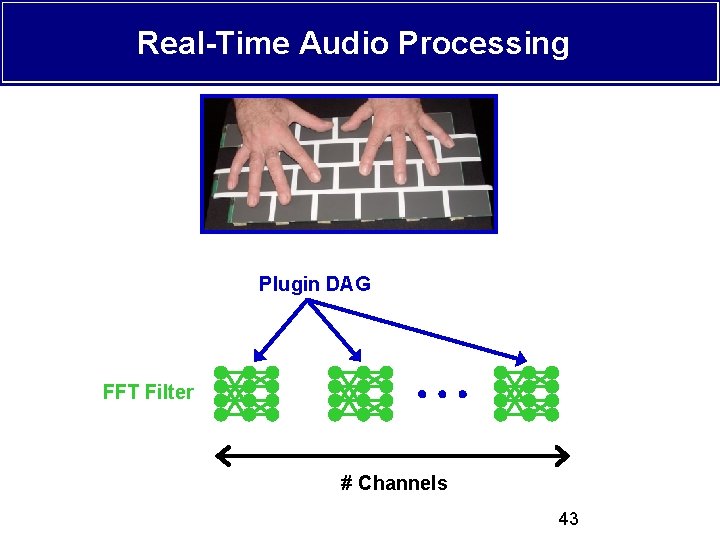

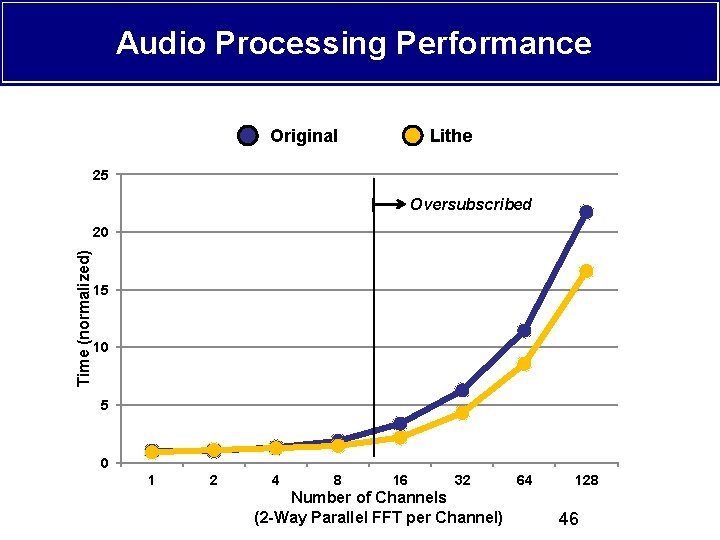

Real-Time Audio Processing (figure: a plugin DAG of FFT filters, replicated across a number of channels)

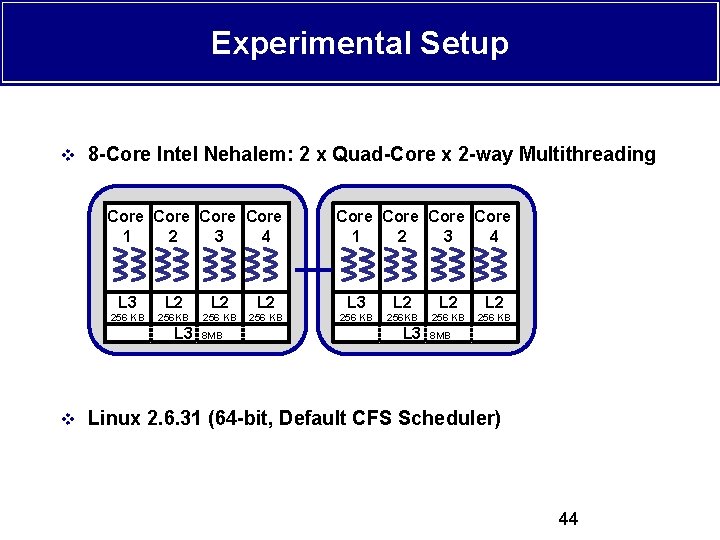

Experimental Setup

- 8-core Intel Nehalem: 2 x quad-core with 2-way multithreading, a 256 KB L2 cache per core, and an 8 MB L3 cache per chip.
- Linux 2.6.31 (64-bit, default CFS scheduler).

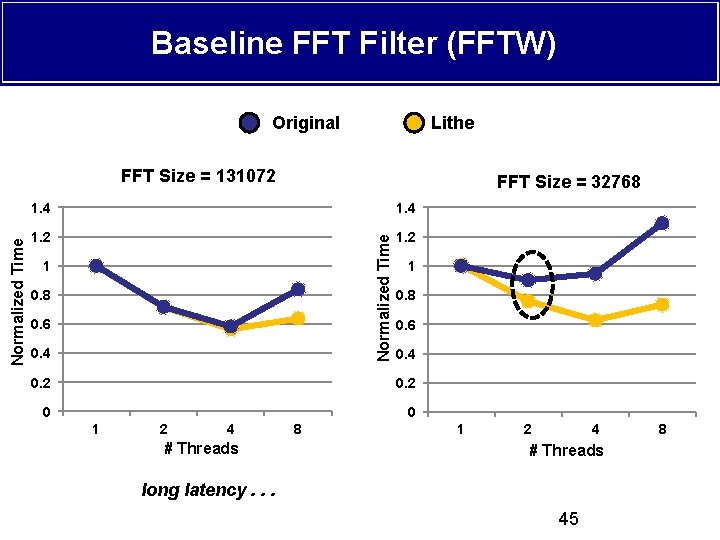

Baseline FFT Filter (FFTW) (figure: normalized time versus number of threads (1, 2, 4, 8) for Original and Lithe; panels: FFT size = 32768 and FFT size = 131072; annotation: "long latency...")

Audio Processing Performance (figure: normalized time versus number of channels, 1 to 128, with a 2-way parallel FFT per channel; series: Original and Lithe; annotation: Oversubscribed)

Talk Roadmap

- Problem: Efficient parallel composability is hard!
- Solution: Lithe
- Implementation
- Evaluation
- Synchronization
- Future Work

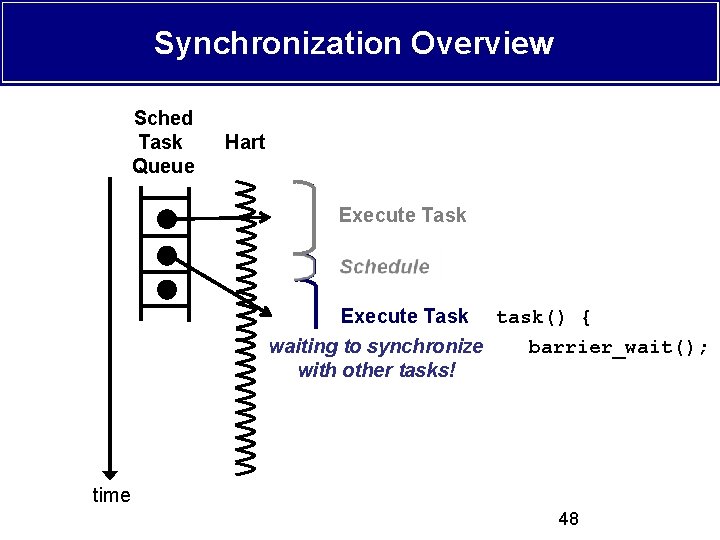

Synchronization Overview

(figure: over time, a hart alternates between the scheduler, which takes tasks from the task queue, and executing tasks; one task, `task() { barrier_wait(); }`, is waiting to synchronize with other tasks!)

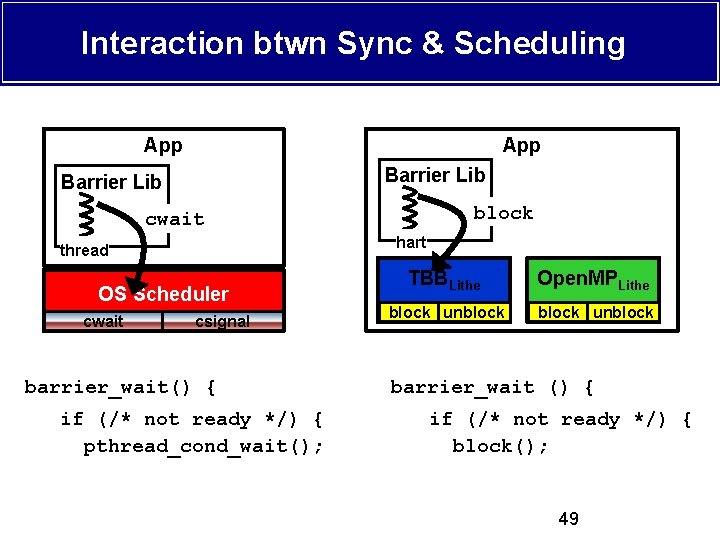

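Interaction Between Synchronization and Scheduling

(figure: with OS threads, the app's barrier library implements `barrier_wait() { if (/* not ready */) { pthread_cond_wait(); } }`, and the OS scheduler handles cwait and csignal for the blocked thread; with harts, the barrier library implements `barrier_wait() { if (/* not ready */) { block(); } }`, and block and unblock are handled by the hart's current scheduler, such as TBBLithe or OpenMPLithe)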

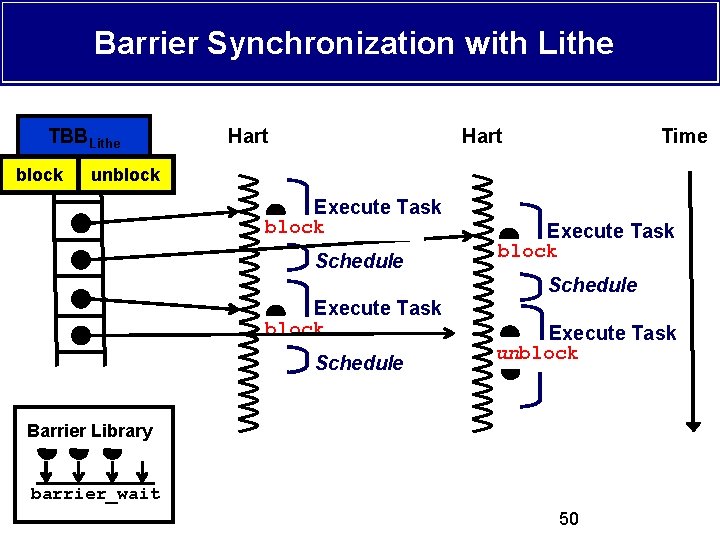

Barrier Synchronization with Lithe

(figure: over time, a task on a TBBLithe hart calls barrier_wait and blocks, so the hart returns to the scheduler and executes another task; when that task's barrier_wait completes the barrier, the barrier library unblocks the first task)
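A single-threaded model of the barrier pattern on this slide, assuming contexts can be represented by integer IDs and that the scheduler keeps a FIFO ready queue. `BlockingBarrier`, `Scheduler`, and `unblock` are hypothetical names; the point is that an early arrival gives its hart back instead of holding it, and the last arrival makes the waiters runnable again.

```cpp
#include <cassert>
#include <deque>
#include <vector>

using Ctx = int;  // stands in for a paused task context

// Hypothetical scheduler state: only the ready queue matters here.
struct Scheduler {
    std::deque<Ctx> ready;                       // contexts the scheduler may resume
    void unblock(Ctx c) { ready.push_back(c); }  // called by the barrier library
};

class BlockingBarrier {
public:
    BlockingBarrier(int parties, Scheduler &s) : parties_(parties), sched_(s) {
        assert(parties > 0);
    }

    // Returns true if the caller may continue, false if it blocked.
    bool wait(Ctx self) {
        if (static_cast<int>(waiting_.size()) + 1 < parties_) {
            waiting_.push_back(self);  // block(): the hart is free for other tasks
            return false;
        }
        for (Ctx c : waiting_) sched_.unblock(c);  // release the earlier arrivals
        waiting_.clear();                          // barrier is reusable
        return true;                               // the last arrival continues
    }

private:
    int parties_;
    Scheduler &sched_;
    std::vector<Ctx> waiting_;
};
```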

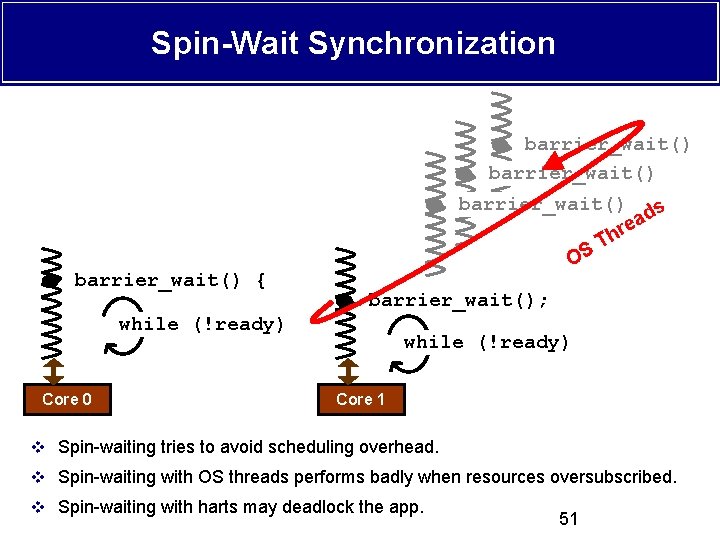

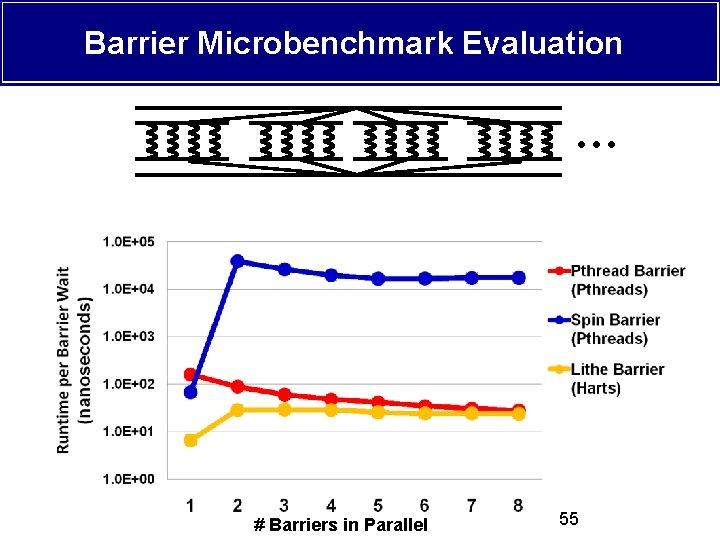

Spin-Wait Synchronization

(figure: tasks on OS threads, and on cores 0 and 1, each call `barrier_wait() { while (!ready); }`)

- Spin-waiting tries to avoid scheduling overhead.
- Spin-waiting with OS threads performs badly when resources are oversubscribed.
- Spin-waiting with harts may deadlock the app: a spinning task never gives up its hart, so the tasks it is waiting for may never get one.
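For contrast, here is a standard centralized sense-reversing spin barrier, shown as an illustration of spin-waiting rather than as code from the talk (`SpinBarrier` is a hypothetical name). It never goes through a scheduler, which makes it fast when every participant has its own core and is also why it misbehaves in the two cases listed above.

```cpp
#include <atomic>
#include <cassert>
#include <thread>

// Centralized sense-reversing spin barrier: arrivals decrement a counter; the
// last one resets it and flips a shared sense flag that everyone else spins on.
class SpinBarrier {
public:
    explicit SpinBarrier(int parties) : parties_(parties), remaining_(parties) {
        assert(parties > 0);
    }

    void wait() {
        bool my_sense = !sense_.load(std::memory_order_relaxed);
        if (remaining_.fetch_sub(1, std::memory_order_acq_rel) == 1) {
            remaining_.store(parties_, std::memory_order_relaxed);  // reset for next round
            sense_.store(my_sense, std::memory_order_release);      // release the spinners
        } else {
            while (sense_.load(std::memory_order_acquire) != my_sense) {
                // Busy-wait. yield() gives up only the OS thread's time slice;
                // a task spinning on a hart still never returns the hart itself.
                std::this_thread::yield();
            }
        }
    }

private:
    const int parties_;
    std::atomic<int> remaining_;
    std::atomic<bool> sense_{false};
};
```

With OS threads, spinners burn the time slices that late arrivals need once threads outnumber cores; with harts, if every granted hart is spinning here, the tasks that have not yet arrived never get to run.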

Experimental Setup

The synchronization experiments use the same platform as the earlier SPQR evaluation: a 16-core AMD Barcelona (4 x quad-core Opterons) running Linux 2.6.26 (64-bit, default CFS scheduler).

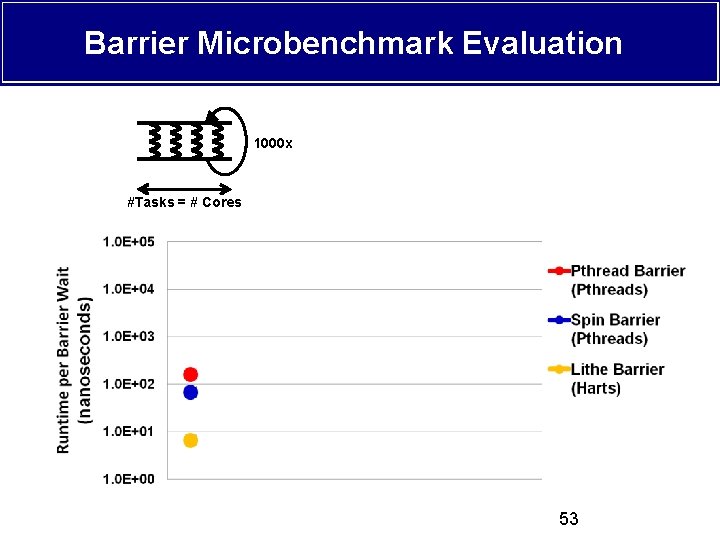

Barrier Microbenchmark Evaluation (figure annotations: 1000 x; #tasks = #cores; x-axis: number of barriers in parallel)

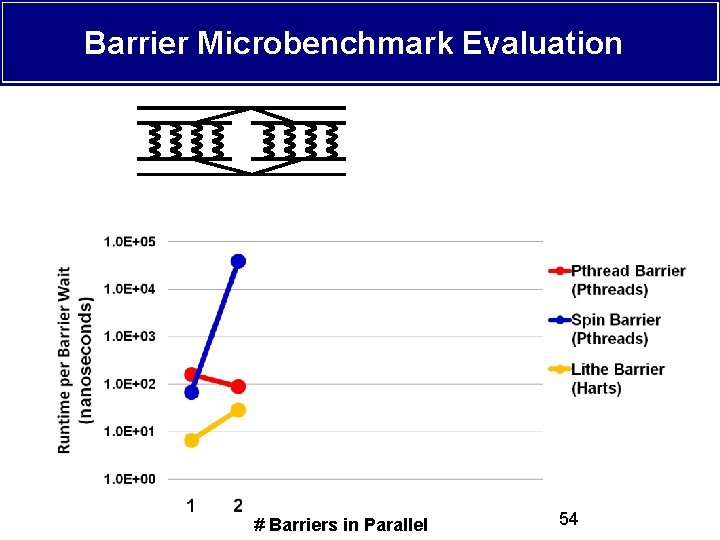

Barrier Microbenchmark Evaluation (figure: x-axis: number of barriers in parallel)

Barrier Microbenchmark Evaluation (figure: x-axis: number of barriers in parallel)

Talk Roadmap

- Problem: Efficient parallel composability is hard!
- Solution: Lithe
- Implementation
- Evaluation
- Synchronization
- Future Work

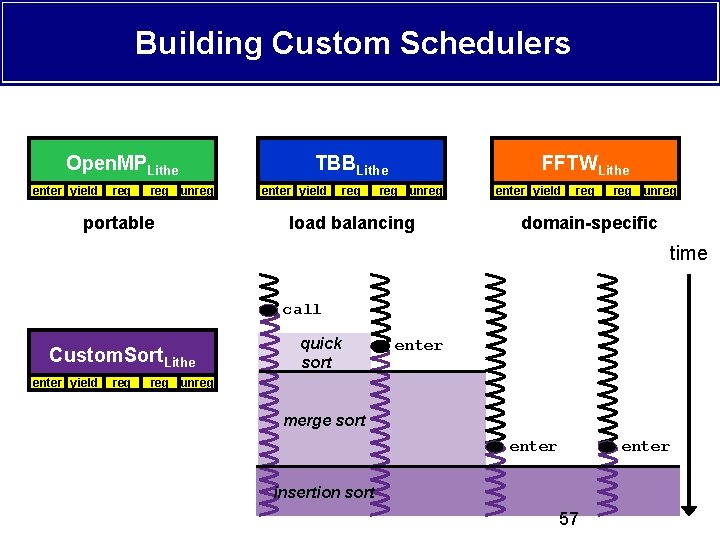

Building Custom Schedulers

(figure: OpenMPLithe (portable), TBBLithe (load balancing), and FFTWLithe (domain-specific) all implement enter, yield, req, reg, and unreg; a custom CustomSortLithe scheduler, over time, calls and enters quick sort, merge sort, and insertion sort)
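A sequential sketch of the kind of policy a domain-specific scheduler such as CustomSortLithe might apply: choose quick sort, merge sort, or insertion sort according to subproblem size. The thresholds `kInsertionMax` and `kMergeMax` are illustrative assumptions, and a real scheduler would run the recursive pieces on harts it requests rather than recursing in one thread.

```cpp
#include <algorithm>
#include <cassert>
#include <cstddef>
#include <vector>

// Illustrative thresholds (assumptions, not values from the talk).
constexpr std::size_t kInsertionMax = 16;   // tiny ranges: insertion sort
constexpr std::size_t kMergeMax = 4096;     // medium ranges: merge sort

void custom_sort(std::vector<int> &a, std::size_t lo, std::size_t hi);

void insertion_sort(std::vector<int> &a, std::size_t lo, std::size_t hi) {
    for (std::size_t i = lo + 1; i < hi; ++i) {
        int x = a[i];
        std::size_t j = i;
        while (j > lo && a[j - 1] > x) { a[j] = a[j - 1]; --j; }
        a[j] = x;
    }
}

void merge_sort(std::vector<int> &a, std::size_t lo, std::size_t hi) {
    std::size_t mid = lo + (hi - lo) / 2;
    custom_sort(a, lo, mid);  // halves may be dispatched differently
    custom_sort(a, mid, hi);
    std::inplace_merge(a.begin() + lo, a.begin() + mid, a.begin() + hi);
}

void quick_sort(std::vector<int> &a, std::size_t lo, std::size_t hi) {
    int pivot = a[lo + (hi - lo) / 2];
    // Three-way partition: [< pivot][== pivot][> pivot]; the middle is never empty.
    auto first = a.begin() + lo, last = a.begin() + hi;
    auto m1 = std::partition(first, last, [pivot](int v) { return v < pivot; });
    auto m2 = std::partition(m1, last, [pivot](int v) { return v == pivot; });
    custom_sort(a, lo, static_cast<std::size_t>(m1 - a.begin()));
    custom_sort(a, static_cast<std::size_t>(m2 - a.begin()), hi);
}

// The "scheduling decision": pick an algorithm for the range [lo, hi).
void custom_sort(std::vector<int> &a, std::size_t lo, std::size_t hi) {
    assert(lo <= hi && hi <= a.size());
    std::size_t n = hi - lo;
    if (n <= kInsertionMax) insertion_sort(a, lo, hi);
    else if (n <= kMergeMax) merge_sort(a, lo, hi);
    else quick_sort(a, lo, hi);
}
```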

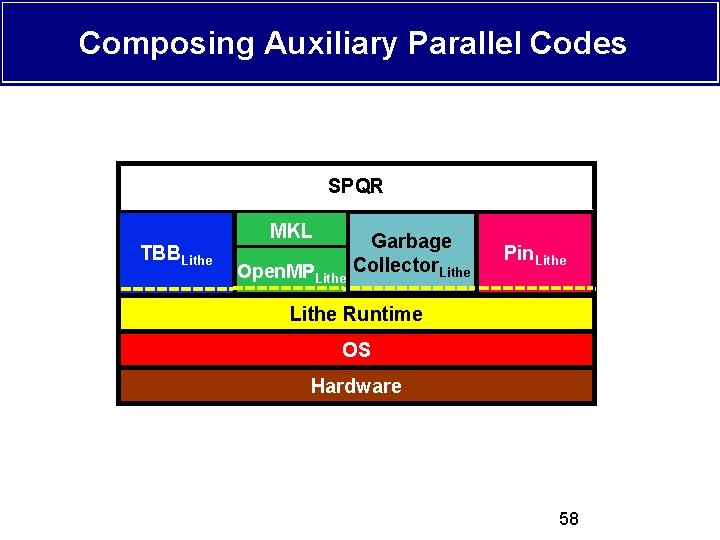

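Composing Auxiliary Parallel Codes

(figure: auxiliary parallel codes, such as a garbage collector and PinLithe, run with their own Lithe schedulers alongside SPQR's TBBLithe and MKL's OpenMPLithe, all sharing harts through the Lithe runtime on the OS and hardware)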

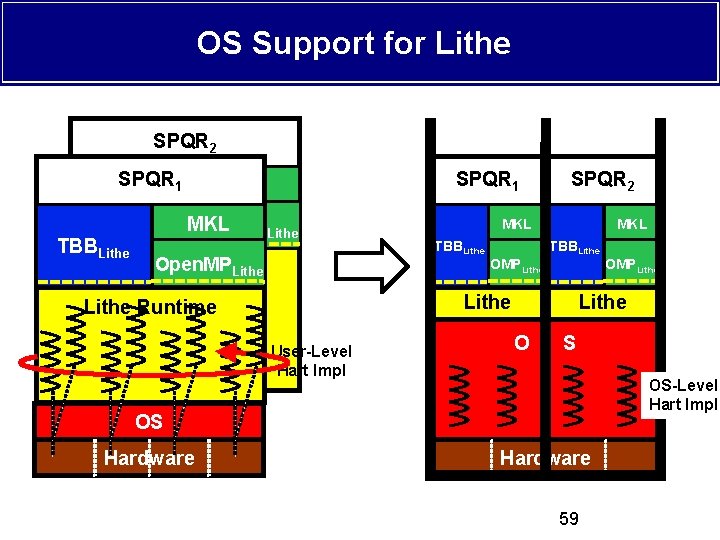

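OS Support for Lithe

(figure: a user-level hart implementation in the Lithe runtime, serving a single application such as SPQR 1 with TBBLithe and MKL's OpenMPLithe, contrasted with an OS-level hart implementation that lets multiple applications, SPQR 1 and SPQR 2, each with TBBLithe and OMPLithe, share the hardware)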

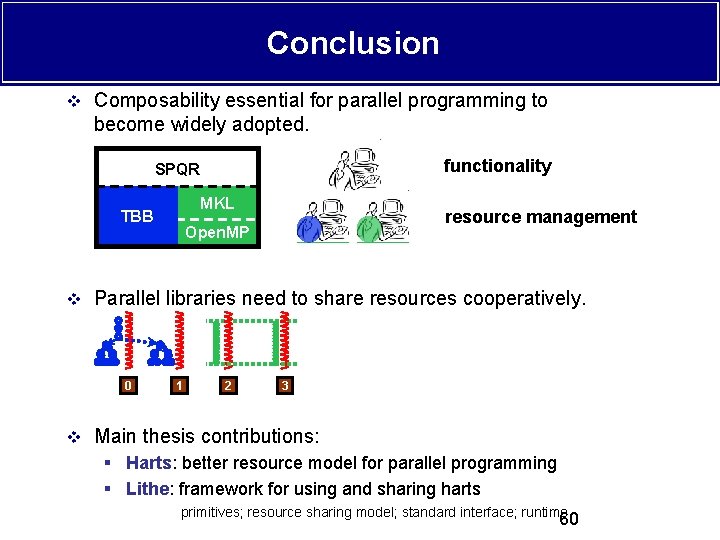

Conclusion

- Composability is essential for parallel programming to become widely adopted.
- Parallel libraries need to share resources cooperatively.
- Main thesis contributions:
  - Harts: a better resource model for parallel programming.
  - Lithe: a framework for using and sharing harts (primitives, resource sharing model, standard interface, runtime).

(figure: SPQR, MKL, TBB, and OpenMP, with functionality separated from resource management, on cores 0 to 3)

Lithe: Composing Parallel Software Efficiently

Code release at http://parlab.eecs.berkeley.edu/lithe

A big thanks to:

- Benjamin Hindman (Lithe, TBB port, OpenMP port)
- Rimas Avizienis (audio processing app port)
- Tim Davis (SPQR), Arch Robison (TBB), Greg Henry (MKL)

Research supported by Microsoft (Award #024263), Intel (Award #024894), matching funding by U.C. Discovery (Award #DIG07-10227), and the Gigascale Systems Research Focus Center, one of five research centers funded under the Focus Center Research Program, a Semiconductor Research Corporation program. Additional support comes from Par Lab affiliates National Instruments, NEC, Nokia, NVIDIA, Samsung, and Sun Microsystems.

Backup Slides

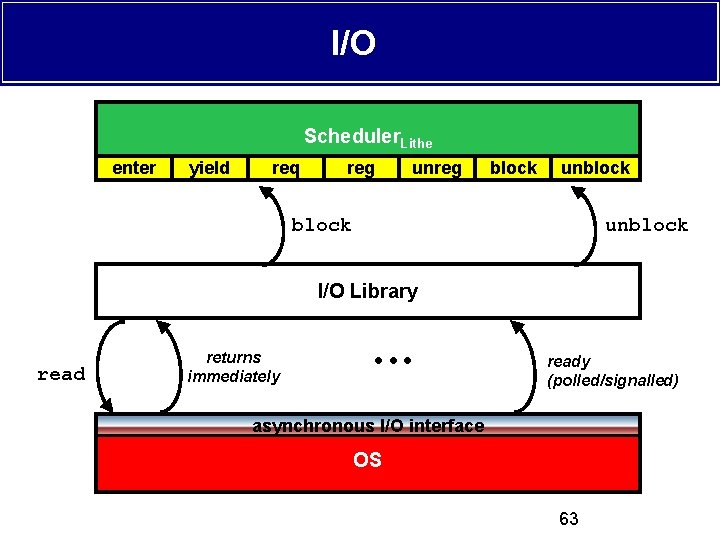

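I/O

(figure: a SchedulerLithe implementing enter, yield, req, reg, unreg, block, and unblock sits above an I/O library; the library's read uses the OS's asynchronous I/O interface and returns immediately, and the calling context stays blocked until the data is ready (polled or signalled))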
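One concrete way to get a read that returns immediately on POSIX systems is a non-blocking descriptor plus `poll`. This is a swapped-in technique for illustration, not necessarily the asynchronous interface used in the talk; `make_nonblocking`, `try_read`, and `readable_now` are hypothetical helpers built on the real `fcntl`, `read`, and `poll` calls.

```cpp
#include <cassert>
#include <cerrno>
#include <fcntl.h>
#include <poll.h>
#include <unistd.h>

enum class ReadStatus { Done, WouldBlock, Error };

// Make read() on `fd` return immediately instead of sleeping in the kernel.
bool make_nonblocking(int fd) {
    int flags = fcntl(fd, F_GETFL, 0);
    return flags != -1 && fcntl(fd, F_SETFL, flags | O_NONBLOCK) != -1;
}

// Attempt a read. WouldBlock means the library should block() the calling
// context and hand the hart back to its scheduler instead of waiting.
ReadStatus try_read(int fd, void *buf, size_t len, ssize_t *got) {
    assert(got != nullptr);
    ssize_t n = read(fd, buf, len);
    if (n >= 0) { *got = n; return ReadStatus::Done; }
    if (errno == EAGAIN || errno == EWOULDBLOCK) return ReadStatus::WouldBlock;
    return ReadStatus::Error;
}

// The scheduler's polling step: is `fd` readable right now (zero timeout)?
bool readable_now(int fd) {
    struct pollfd p;
    p.fd = fd;
    p.events = POLLIN;
    p.revents = 0;
    return poll(&p, 1, 0) == 1 && (p.revents & POLLIN);
}
```

When `try_read` reports `WouldBlock`, the library would block the calling context; once `readable_now` reports the descriptor ready, the scheduler unblocks the context and the retried read succeeds.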

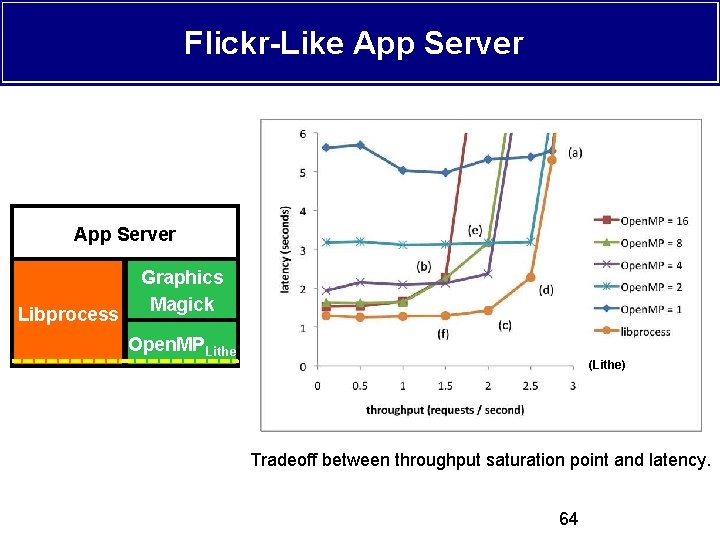

Flickr-Like App Server

(figure: an app server built from Libprocess (Lithe) and GraphicsMagick (OpenMPLithe))

There is a tradeoff between the throughput saturation point and latency.

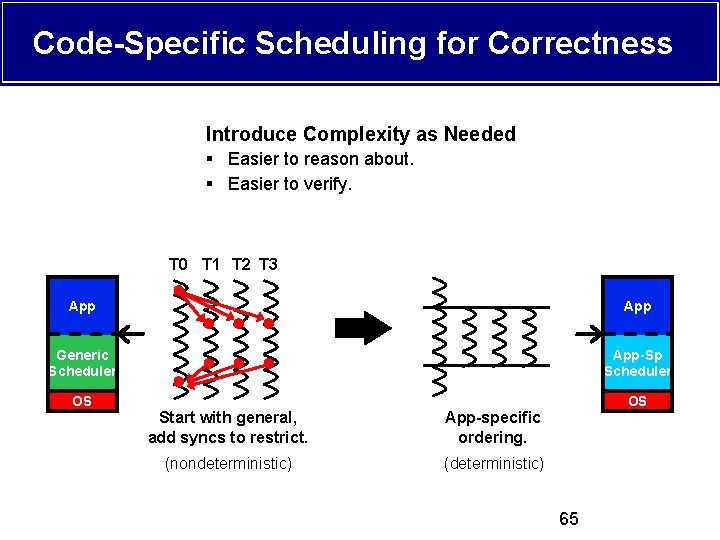

Code-Specific Scheduling for Correctness

Introduce complexity as needed:

- Easier to reason about.
- Easier to verify.

(figure: tasks T0 to T3 of an app run either under a generic scheduler, which is nondeterministic, so one starts with the general case and adds synchronization to restrict orderings; or under an app-specific scheduler, which enforces an app-specific, deterministic ordering)

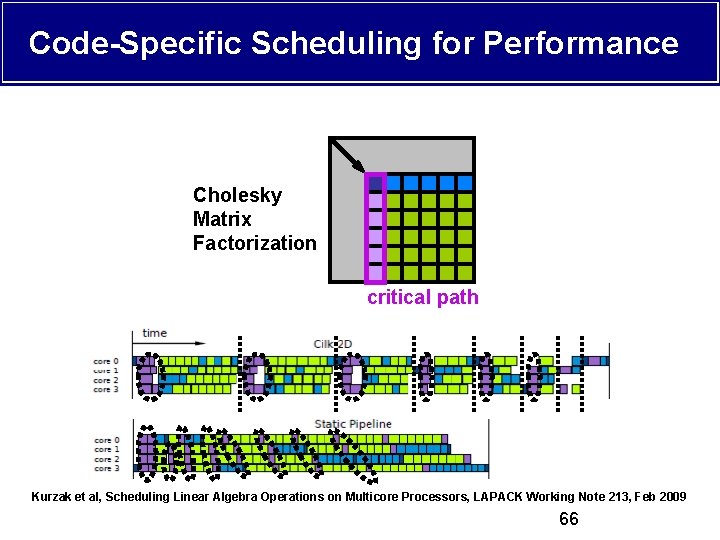

Code-Specific Scheduling for Performance

(figure: the task DAG of a Cholesky matrix factorization with its critical path highlighted; from Kurzak et al., "Scheduling Linear Algebra Operations on Multicore Processors," LAPACK Working Note 213, Feb. 2009)
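A code-specific scheduler for a DAG like the Cholesky factorization's can prioritize the critical path. The sketch computes each task's bottom level (the longest cost-weighted path from the task to the end of the DAG) and picks the ready task with the largest one. This is a standard list-scheduling heuristic shown as an assumption, not the policy of Kurzak et al.; `Dag`, `bottom_levels`, and `pick_next` are hypothetical names, and task costs are made-up estimates.

```cpp
#include <algorithm>
#include <cassert>
#include <vector>

// Hypothetical task graph: estimated costs plus successor lists.
struct Dag {
    std::vector<int> cost;               // cost[i] = estimated run time of task i
    std::vector<std::vector<int>> succ;  // succ[i] = tasks that depend on task i
};

// Assumes tasks are numbered in topological order (every edge goes i -> j, i < j).
std::vector<int> bottom_levels(const Dag &g) {
    const int n = static_cast<int>(g.cost.size());
    std::vector<int> level(n, 0);
    for (int i = n - 1; i >= 0; --i) {
        int longest_after = 0;
        for (int j : g.succ[i]) {
            assert(j > i && "tasks must be in topological order");
            longest_after = std::max(longest_after, level[j]);
        }
        level[i] = g.cost[i] + longest_after;
    }
    return level;
}

// Among the ready tasks, pick the one on the longest remaining path.
int pick_next(const std::vector<int> &ready, const std::vector<int> &level) {
    assert(!ready.empty());
    return *std::max_element(ready.begin(), ready.end(),
                             [&](int a, int b) { return level[a] < level[b]; });
}
```

For a diamond-shaped DAG 0 → {1, 2} → 3 with costs 1, 5, 2, 1, the bottom levels are 7, 6, 3, 1, so when tasks 1 and 2 are both ready, task 1 (on the critical path) runs first.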

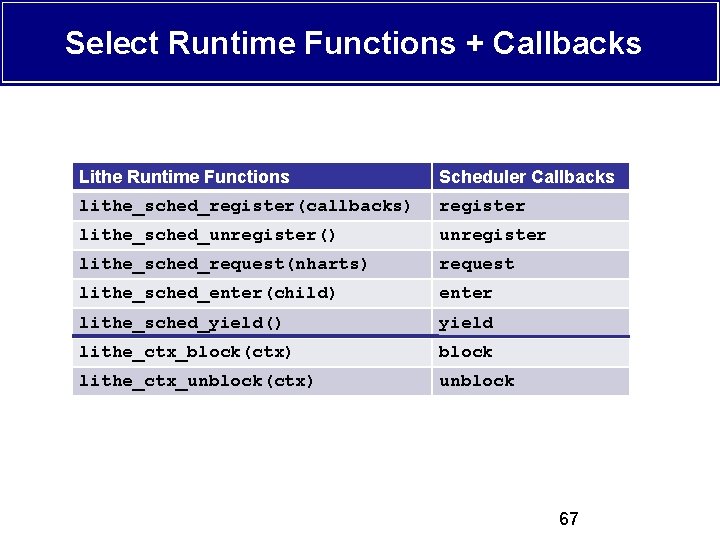

Select Runtime Functions + Callbacks

| Lithe Runtime Function | Scheduler Callback |
|---|---|
| `lithe_sched_register(callbacks)` | register |
| `lithe_sched_unregister()` | unregister |
| `lithe_sched_request(nharts)` | request |
| `lithe_sched_enter(child)` | enter |
| `lithe_sched_yield()` | yield |
| `lithe_ctx_block(ctx)` | block |
| `lithe_ctx_unblock(ctx)` | unblock |

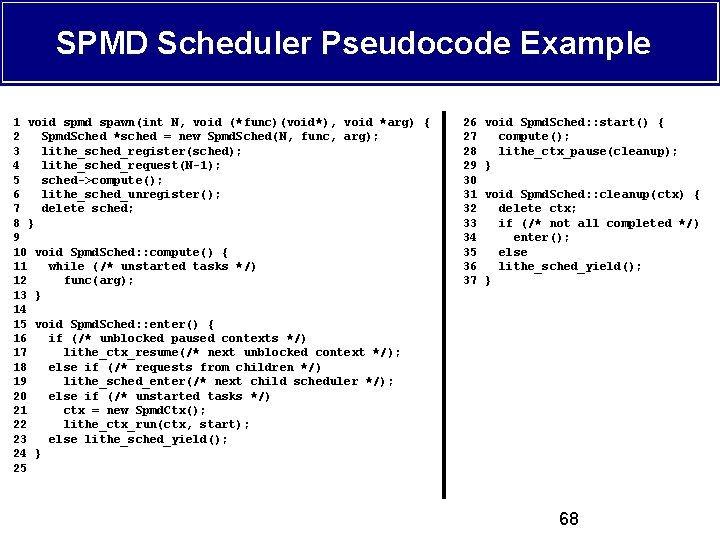

SPMD Scheduler Pseudocode Example

    // Run N copies of func(arg) under a new child SPMD scheduler.
    void spmd_spawn(int N, void (*func)(void*), void *arg) {
      SpmdSched *sched = new SpmdSched(N, func, arg);
      lithe_sched_register(sched);   // add sched below the current scheduler
      lithe_sched_request(N-1);      // ask the parent for N-1 more harts
      sched->compute();              // the calling hart runs tasks too
      lithe_sched_unregister();      // remove sched from the hierarchy
      delete sched;
    }

    void SpmdSched::compute() {
      while (/* unstarted tasks */)
        func(arg);
    }

    // Callback: the parent has entered this scheduler on an additional hart.
    void SpmdSched::enter() {
      if (/* unblocked paused contexts */)
        lithe_ctx_resume(/* next unblocked context */);
      else if (/* requests from children */)
        lithe_sched_enter(/* next child scheduler */);
      else if (/* unstarted tasks */) {
        SpmdCtx *ctx = new SpmdCtx();
        lithe_ctx_run(ctx, start);
      }
      else
        lithe_sched_yield();         // nothing to do: give the hart back
    }

    void SpmdSched::start() {
      compute();
      lithe_ctx_pause(cleanup);
    }

    void SpmdSched::cleanup(SpmdCtx *ctx) {
      delete ctx;
      if (/* not all completed */)
        enter();
      else
        lithe_sched_yield();
    }

- Slides: 68