
Bayesian Theory and Bayesian Modeling

鮑興國, Ph.D., National Taiwan University of Science and Technology

Outline
- Bayes rule
- Maximum Likelihood Estimation, Maximum A Posteriori Estimation, Frequentist vs. Bayesian
- Between Bayesian theory and machine learning
- Bayesian Decision Theory
- Bayesian Classifiers: Bayes optimal classifier
- Naïve Bayes classifier

General features
- Prior knowledge + observed data
  - combined to give the final probability of a hypothesis h
- Probabilistic prediction
  - calculates explicit probabilities for hypotheses, e.g., "this pneumonia patient has a 93% chance of complete recovery"
  - predicts with multiple hypotheses, weighted by their (posterior) probabilities
- Resistance to noisy data: in Bayesian modeling, each example can increase or decrease the probability that a hypothesis h is correct, instead of ruling out every inconsistent hypothesis

General difficulties
- Requires initial knowledge of many probabilities
  - often estimated in practice
- Large computational cost
  - linear in the number of hypotheses
  - can be reduced in certain situations
- Even when intractable, it gives a standard of optimal decision making against which other methods can be measured

Bayes Rule

Tossing a coin?
- Consider the probability that a coin toss lands heads (or tails) up.
- Problem 1: After 100 tosses, the coin has landed heads up 51 times. What is your estimate of $\mu = P(\text{head})$? Answer: 51/100?
- Problem 2: After 2 tosses, the coin has landed heads up both times. What is your estimate of $\mu = P(\text{head})$? Answer: 2/2?
- Suppose that you know more information…

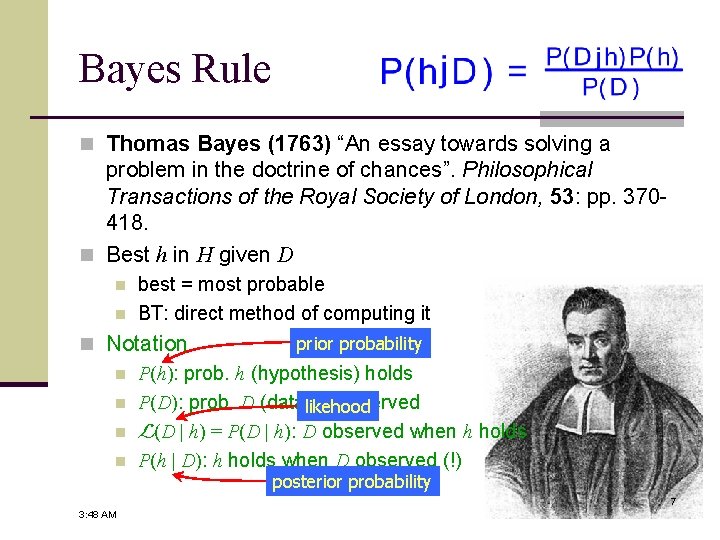

Bayes Rule
- Thomas Bayes (1763), "An essay towards solving a problem in the doctrine of chances", Philosophical Transactions of the Royal Society of London, 53: pp. 370–418.
- We want the best h in H given D, where best = most probable. Bayes theorem (BT) gives a direct method of computing it:
  $$P(h \mid D) = \frac{P(D \mid h)\,P(h)}{P(D)}$$
- Notation
  - P(h): prior probability that h (the hypothesis) holds
  - P(D): probability that D (the data) is observed
  - L(D | h) = P(D | h): likelihood, the probability that D is observed when h holds
  - P(h | D): posterior probability, the probability that h holds when D is observed (!)

Bayes Rule (cont.)
- Bayes rule: the realm of density estimation
- Generally, the best h maximizes P(h | D): MAP (Maximum A Posteriori)
  $$h_{MAP} = \arg\max_{h \in H} P(h \mid D) = \arg\max_{h \in H} P(D \mid h)\,P(h)$$
  (P(D) does not depend on h, so it can be dropped.)
- In particular, if every h is equally likely: ML (Maximum Likelihood)
  $$h_{ML} = \arg\max_{h \in H} P(D \mid h)$$
- Note: applicable to general H and D

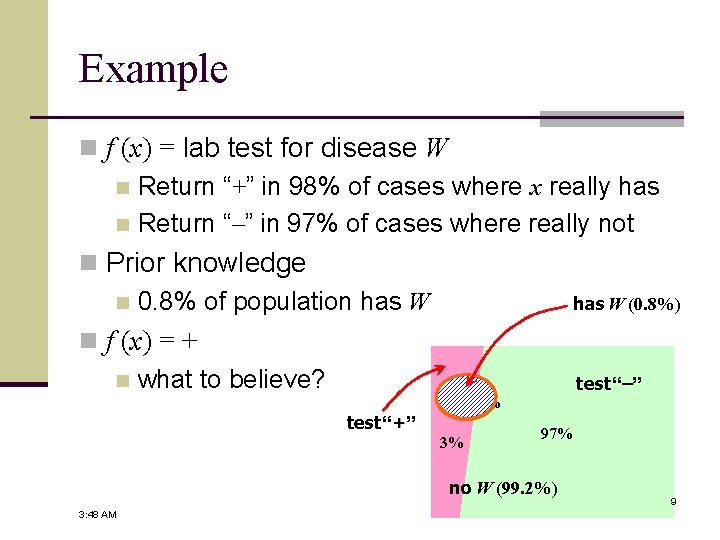

Example
- f(x) = lab test for disease W
  - returns "+" in 98% of cases where x really has W
  - returns "–" in 97% of cases where x really does not have W
- Prior knowledge
  - 0.8% of the population has W
- f(x) = +: what should we believe?

| | test "+" | test "–" |
|---|---|---|
| has W (0.8%) | 98% | 2% |
| no W (99.2%) | 3% | 97% |

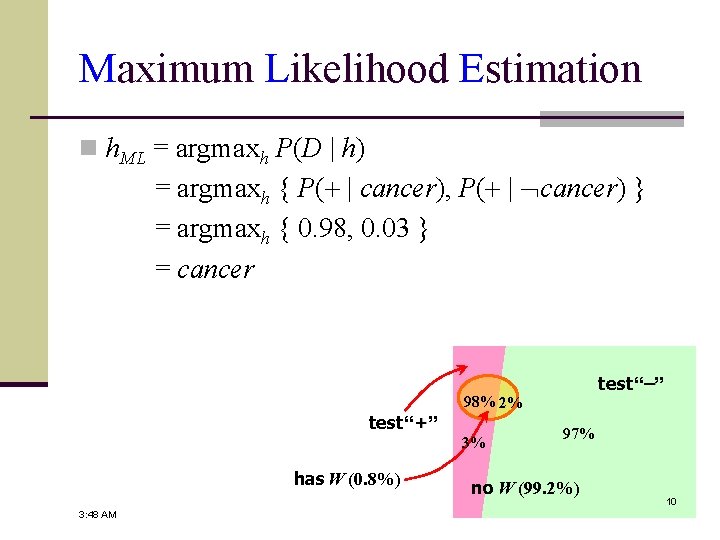

Maximum Likelihood Estimation

$$h_{ML} = \arg\max_h P(D \mid h) = \arg\max \{P(+ \mid \text{cancer}),\ P(+ \mid \neg\text{cancer})\} = \arg\max \{0.98,\ 0.03\} = \text{cancer}$$

where "cancer" means the patient has W.

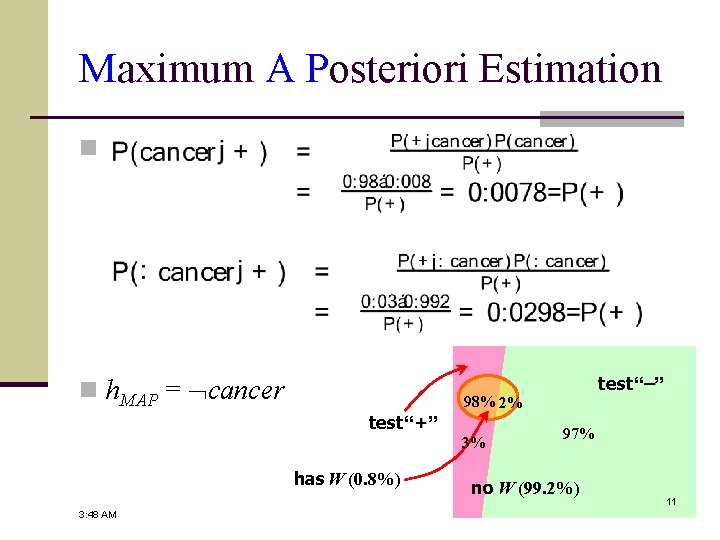

Maximum A Posteriori Estimation

$$h_{MAP} = \arg\max_h P(+ \mid h)\,P(h) = \arg\max \{0.98 \times 0.008,\ 0.03 \times 0.992\} = \arg\max \{0.00784,\ 0.02976\} = \neg\text{cancer}$$

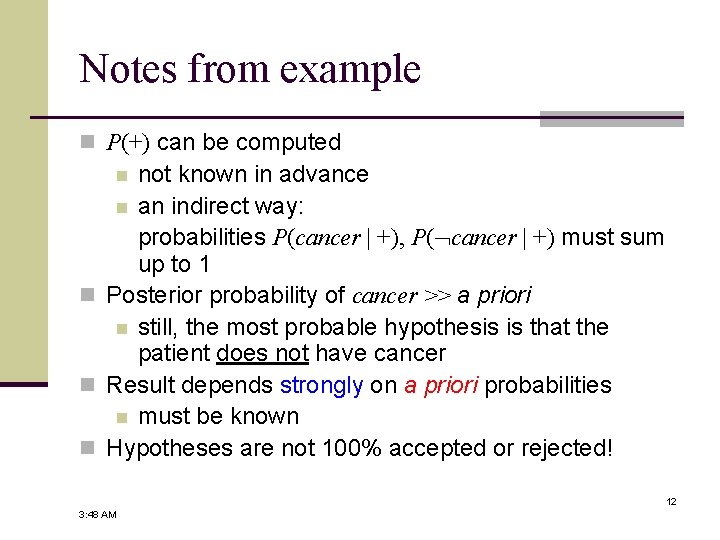

Notes from example
- P(+) need not be known in advance. It can be computed indirectly, because P(cancer | +) and P(¬cancer | +) must sum to 1.
- The posterior probability of cancer is much larger than the prior (about 0.21 vs. 0.008).
  - Still, the most probable hypothesis is that the patient does not have cancer.
- The result depends strongly on the prior probabilities, which must be known.
- Hypotheses are not 100% accepted or rejected!
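The enumeration behind these notes can be sketched as follows. It uses only the numbers on the slides: prior 0.8%, P(+ | W) = 0.98 and P(+ | no W) = 0.03. The function name and the hypothesis labels `"cancer"` / `"no_cancer"` are illustrative choices. The sketch never uses P(+) directly. It recovers P(+) as the normalizer of the two joint terms, which is why the posteriors sum to 1.

```python
def posterior_given_positive(prior, p_pos_given_w, p_pos_given_not_w):
    """Return (h_ML, h_MAP, posteriors) for a single positive test result."""
    likelihood = {"cancer": p_pos_given_w, "no_cancer": p_pos_given_not_w}
    prior_h = {"cancer": prior, "no_cancer": 1.0 - prior}
    # Unnormalized posteriors P(+ | h) P(h)
    joint = {h: likelihood[h] * prior_h[h] for h in likelihood}
    # P(+) is not given directly: it is the normalizer that makes the posteriors sum to 1
    p_pos = sum(joint.values())
    posteriors = {h: joint[h] / p_pos for h in joint}
    h_ml = max(likelihood, key=likelihood.get)  # ignores the prior
    h_map = max(joint, key=joint.get)           # weighs the likelihood by the prior
    return h_ml, h_map, posteriors


h_ml, h_map, post = posterior_given_positive(0.008, 0.98, 0.03)
print(h_ml, h_map, round(post["cancer"], 3))  # cancer no_cancer 0.209
```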

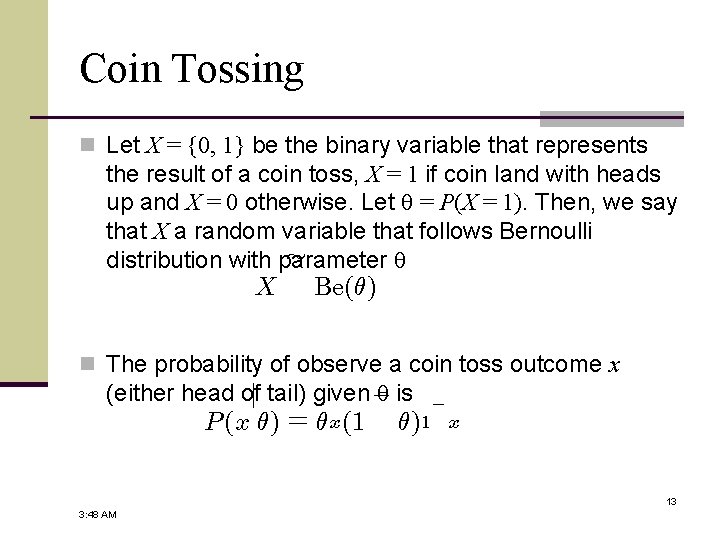

Coin Tossing
- Let $X \in \{0, 1\}$ be the binary variable representing the result of a coin toss: X = 1 if the coin lands heads up and X = 0 otherwise. Let $\mu = P(X = 1)$. Then X is a random variable that follows a Bernoulli distribution with parameter $\mu$: $X \sim \mathrm{Be}(\mu)$.
- The probability of observing a coin toss outcome x (either head or tail) given $\mu$ is
  $$P(x \mid \mu) = \mu^{x}(1 - \mu)^{1 - x}$$

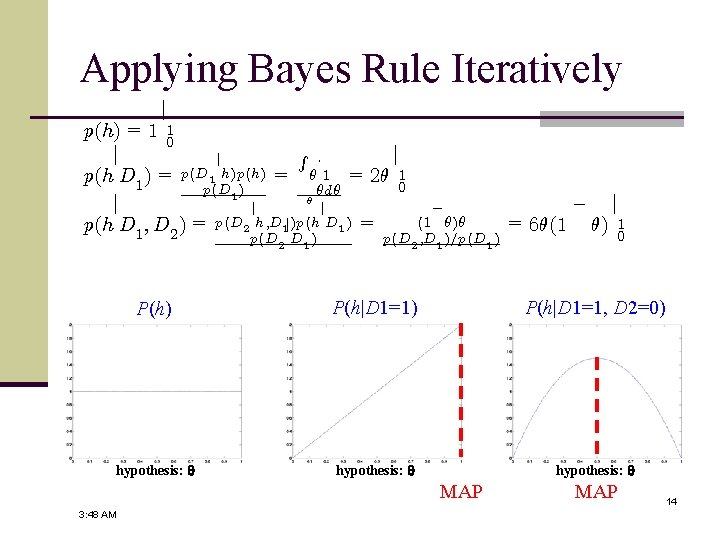

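Applying Bayes Rule Iteratively
- Start from a uniform prior over the hypothesis $h = \mu \in [0, 1]$: $p(h) = 1$.
- After the first toss $D_1 = 1$ (head):
  $$p(h \mid D_1) = \frac{p(D_1 \mid h)\,p(h)}{p(D_1)} = \frac{\mu}{\int_0^1 \mu\,d\mu} = 2\mu$$
- After the second toss $D_2 = 0$ (tail):
  $$p(h \mid D_1, D_2) = \frac{p(D_2 \mid h, D_1)\,p(h \mid D_1)}{p(D_2 \mid D_1)} = \frac{(1 - \mu)\,2\mu}{p(D_2, D_1)/p(D_1)} = 6\mu(1 - \mu)$$
  since $p(D_1) = 1/2$ and $p(D_2, D_1) = \int_0^1 \mu(1 - \mu)\,d\mu = 1/6$.
- (Figure: the prior P(h) and the posterior P(h | D_1 = 1, D_2 = 0) plotted over the hypothesis $\mu \in [0, 1]$, with the MAP hypothesis marked; here $h_{MAP} = 1/2$.)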
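The iterative update can also be checked numerically. This is a minimal sketch that represents the density over $\mu$ on an evenly spaced grid (an assumption, used only for illustration). Each toss multiplies the current density by the Bernoulli likelihood. The product is then renormalized with the trapezoidal rule, which plays the role of the evidence term. The function name is hypothetical.

```python
def sequential_bernoulli_posterior(tosses, n_grid=10001):
    """Apply Bayes rule one toss at a time to a grid over mu in [0, 1].

    Starts from the uniform prior p(mu) = 1; each toss x in {0, 1} multiplies the
    current density by mu**x * (1 - mu)**(1 - x), and the result is renormalized
    with the trapezoidal rule (a numerical stand-in for p(D_t | D_1, ..., D_{t-1})).
    """
    step = 1.0 / (n_grid - 1)
    grid = [i / (n_grid - 1) for i in range(n_grid)]
    density = [1.0] * n_grid  # uniform prior p(h) = 1
    for x in tosses:
        unnormalized = [d * mu**x * (1 - mu) ** (1 - x) for d, mu in zip(density, grid)]
        evidence = step * (sum(unnormalized) - 0.5 * (unnormalized[0] + unnormalized[-1]))
        density = [u / evidence for u in unnormalized]
    return grid, density


grid, post = sequential_bernoulli_posterior([1, 0])  # D_1 = 1 (head), D_2 = 0 (tail)
# Largest deviation from the closed form 6 mu (1 - mu) on the slide
print(max(abs(p - 6 * mu * (1 - mu)) for mu, p in zip(grid, post)))
map_mu = grid[post.index(max(post))]
print(map_mu)  # 0.5
```

With an empty toss list the function simply returns the uniform prior. The grid approach works for any prior shape, but its cost grows with the grid size.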

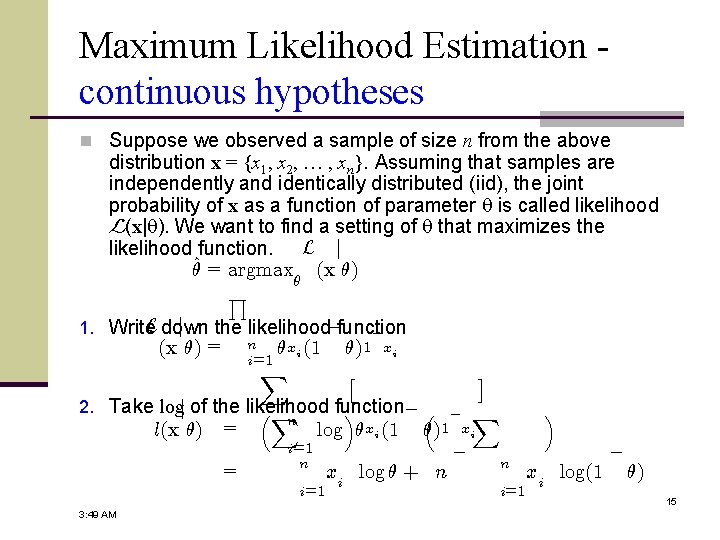

Maximum Likelihood Estimation: continuous hypotheses
- Suppose we observe a sample of size n from the above distribution, $\mathbf{x} = \{x_1, x_2, \ldots, x_n\}$. Assume the samples are independently and identically distributed (iid). The joint probability of $\mathbf{x}$, viewed as a function of the parameter $\mu$, is called the likelihood $L(\mathbf{x} \mid \mu)$. We want the setting of $\mu$ that maximizes the likelihood function:
  $$\hat{\mu} = \arg\max_{\mu} L(\mathbf{x} \mid \mu)$$

1. Write down the likelihood function:
   $$L(\mathbf{x} \mid \mu) = \prod_{i=1}^{n} \mu^{x_i}(1 - \mu)^{1 - x_i}$$
2. Take the log of the likelihood function:
   $$l(\mathbf{x} \mid \mu) = \log \prod_{i=1}^{n} \mu^{x_i}(1 - \mu)^{1 - x_i} = \sum_{i=1}^{n} x_i \log \mu + \Big(n - \sum_{i=1}^{n} x_i\Big) \log(1 - \mu)$$

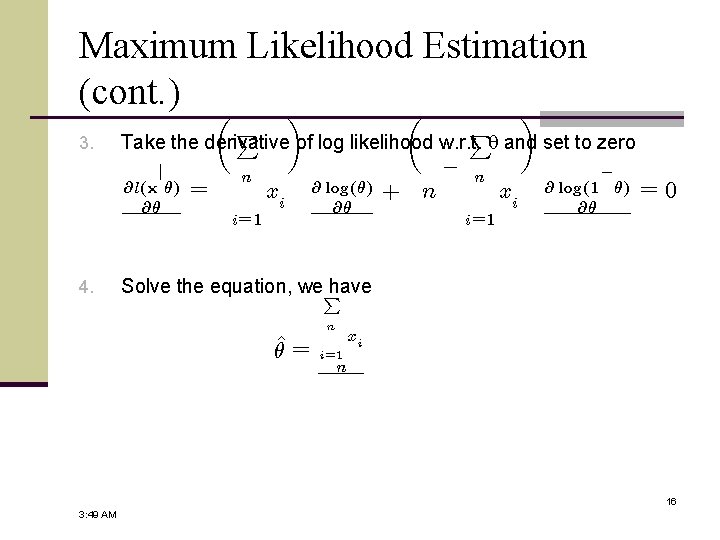

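Maximum Likelihood Estimation (cont.)

3. Take the derivative of the log likelihood w.r.t. $\mu$ and set it to zero:
   $$\frac{\partial l(\mathbf{x} \mid \mu)}{\partial \mu} = \sum_{i=1}^{n} x_i \frac{\partial \log \mu}{\partial \mu} + \Big(n - \sum_{i=1}^{n} x_i\Big) \frac{\partial \log(1 - \mu)}{\partial \mu} = \frac{\sum_{i=1}^{n} x_i}{\mu} - \frac{n - \sum_{i=1}^{n} x_i}{1 - \mu} = 0$$
4. Solving the equation, we have
   $$\hat{\mu} = \frac{\sum_{i=1}^{n} x_i}{n}$$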
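Here is a minimal sketch of the result. It computes the closed-form estimate $\hat{\mu} = \sum_i x_i / n$ and, as a sanity check, confirms by a coarse grid search that no other grid value of $\mu$ has a higher log likelihood. The function names and the 0.001 grid spacing are illustrative choices. The two samples encode Problems 1 and 2 from the coin-tossing slide.

```python
import math


def bernoulli_log_likelihood(tosses, mu):
    """l(x | mu) = sum_i x_i log mu + (n - sum_i x_i) log(1 - mu)."""
    heads = sum(tosses)
    tails = len(tosses) - heads
    ll = 0.0
    # Skip zero-count terms so mu = 1 (or 0) is handled when no tails (or heads) occur
    if heads:
        ll += heads * math.log(mu)
    if tails:
        ll += tails * math.log(1.0 - mu)
    return ll


def bernoulli_mle(tosses):
    """Closed-form maximizer of the log likelihood: mu_hat = sum_i x_i / n."""
    return sum(tosses) / len(tosses)


problem1 = [1] * 51 + [0] * 49  # 51 heads in 100 tosses
problem2 = [1, 1]               # 2 heads in 2 tosses
print(bernoulli_mle(problem1), bernoulli_mle(problem2))  # 0.51 1.0

# Grid check: the best candidate on a 0.001 grid coincides with the closed form
candidates = [i / 1000 for i in range(1, 1000)]
best = max(candidates, key=lambda mu: bernoulli_log_likelihood(problem1, mu))
print(best)  # 0.51
```

For Problem 2 the MLE is 1.0, so the fitted model claims the coin can never land tails. This is the small-sample weakness of the purely likelihood-based answer.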

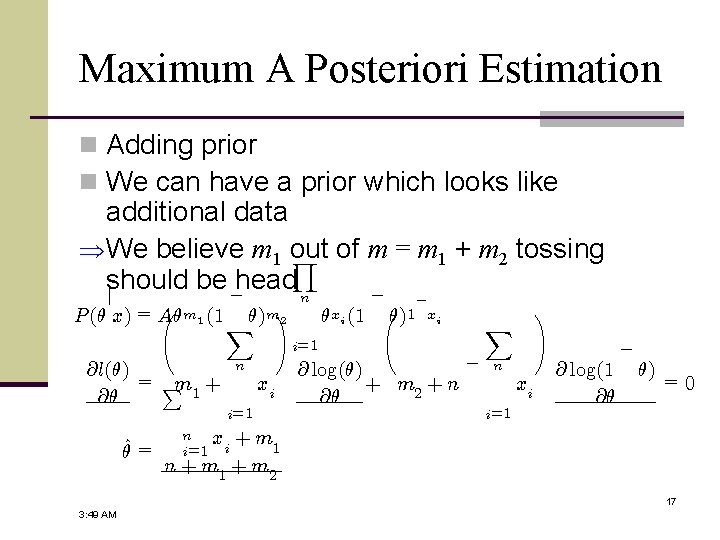

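Maximum A Posteriori Estimation
- Adding a prior
  - We can use a prior that looks like additional data: we believe that $m_1$ out of $m = m_1 + m_2$ tosses should be heads, so
    $$P(\mu \mid \mathbf{x}) = A\,\mu^{m_1}(1 - \mu)^{m_2} \prod_{i=1}^{n} \mu^{x_i}(1 - \mu)^{1 - x_i}$$
    where A is a normalizing constant that does not depend on $\mu$.
  - Setting the derivative of the log posterior $l(\mu) = \log P(\mu \mid \mathbf{x})$ to zero:
    $$\frac{\partial l(\mu)}{\partial \mu} = \Big(m_1 + \sum_{i=1}^{n} x_i\Big) \frac{\partial \log \mu}{\partial \mu} + \Big(m_2 + n - \sum_{i=1}^{n} x_i\Big) \frac{\partial \log(1 - \mu)}{\partial \mu} = 0$$
    $$\hat{\mu} = \frac{\sum_{i=1}^{n} x_i + m_1}{n + m_1 + m_2}$$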
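The MAP formula treats the prior as $m_1$ virtual heads and $m_2$ virtual tails, so it is a one-line computation. Below is a minimal sketch. The function name is hypothetical, and the choice $m_1 = m_2 = 5$ (a belief in a fair coin worth 10 tosses) is an assumed illustration, not a value from the slides.

```python
def bernoulli_map(tosses, m1, m2):
    """MAP estimate with a prior proportional to mu**m1 * (1 - mu)**m2.

    The prior acts like m1 extra heads and m2 extra tails:
    mu_hat = (sum_i x_i + m1) / (n + m1 + m2).
    """
    return (sum(tosses) + m1) / (len(tosses) + m1 + m2)


problem1 = [1] * 51 + [0] * 49  # 51 heads in 100 tosses
problem2 = [1, 1]               # 2 heads in 2 tosses
# Hypothetical prior: a fair coin, worth m1 = m2 = 5 virtual tosses
print(bernoulli_map(problem1, 5, 5), bernoulli_map(problem2, 5, 5))
# With m1 = m2 = 0 the prior vanishes and the estimate reduces to the MLE
print(bernoulli_map(problem2, 0, 0))  # 1.0
```

Problem 2 moves from 1.0 to about 0.583, while Problem 1 barely changes (about 0.509). The prior dominates only when there are few real samples.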

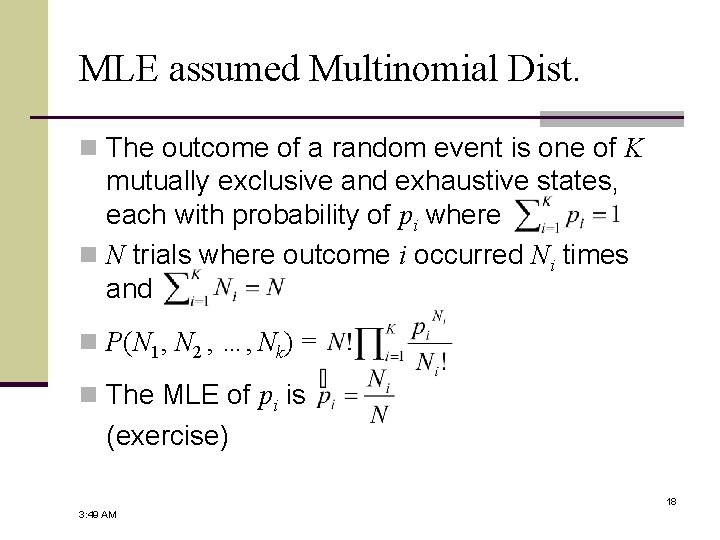

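MLE, Assuming a Multinomial Distribution
- The outcome of a random event is one of K mutually exclusive and exhaustive states, each with probability $p_i$, where $\sum_{i=1}^{K} p_i = 1$.
- In N trials, outcome i occurred $N_i$ times (so $\sum_{i=1}^{K} N_i = N$), and
  $$P(N_1, N_2, \ldots, N_K) = \frac{N!}{N_1!\,N_2!\cdots N_K!} \prod_{i=1}^{K} p_i^{N_i}$$
- The MLE of $p_i$ is $\hat{p}_i = N_i / N$ (derivation left as an exercise).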
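A minimal sketch of the multinomial MLE, $\hat{p}_i = N_i / N$. It also checks that these estimates give a higher log likelihood than a uniform guess. Only $\sum_i N_i \log p_i$ is compared, because the multinomial coefficient does not depend on the $p_i$. The outcome list and function names are hypothetical.

```python
import math
from collections import Counter


def multinomial_mle(outcomes):
    """MLE of the state probabilities: p_i = N_i / N."""
    counts = Counter(outcomes)
    total = len(outcomes)
    return {state: n_i / total for state, n_i in counts.items()}


def multinomial_log_likelihood(counts, probs):
    """sum_i N_i log p_i (the multinomial coefficient is constant in p and omitted)."""
    return sum(n_i * math.log(probs[state]) for state, n_i in counts.items())


outcomes = ["a", "b", "a", "c", "a", "b"]  # hypothetical results of a 3-state event
counts = Counter(outcomes)
p_hat = multinomial_mle(outcomes)
uniform = {"a": 1 / 3, "b": 1 / 3, "c": 1 / 3}
print(p_hat)
print(multinomial_log_likelihood(counts, p_hat) > multinomial_log_likelihood(counts, uniform))  # True
```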

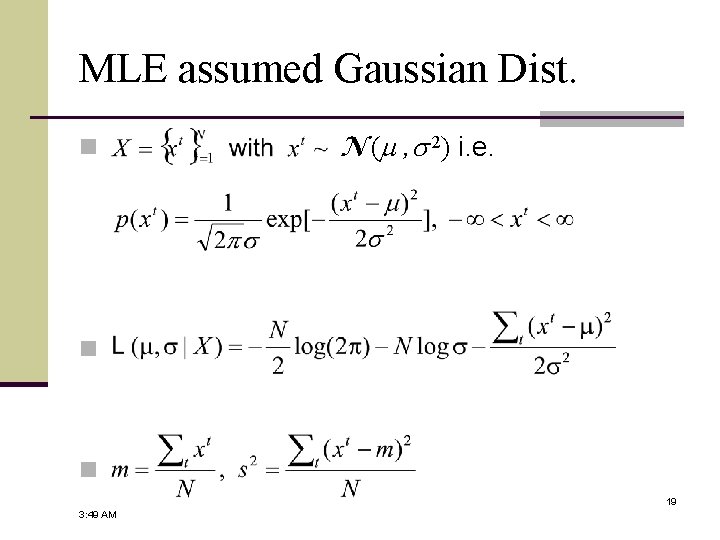

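MLE, Assuming a Gaussian Distribution
- $x \sim \mathcal{N}(\mu, \sigma^2)$, i.e.,
  $$p(x) = \frac{1}{\sqrt{2\pi}\,\sigma} \exp\left[-\frac{(x - \mu)^2}{2\sigma^2}\right]$$
- Given an iid sample $\{x_1, \ldots, x_N\}$, the ML estimates are
  $$\hat{\mu} = \frac{1}{N}\sum_{i=1}^{N} x_i, \qquad \hat{\sigma}^2 = \frac{1}{N}\sum_{i=1}^{N} (x_i - \hat{\mu})^2$$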
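A minimal sketch of the Gaussian ML estimates on a small hypothetical sample. Note that the ML variance divides by N, not N − 1. The extra log-likelihood function is included only so the estimates can be compared against nearby parameter values.

```python
import math


def gaussian_mle(samples):
    """ML estimates for N(mu, sigma^2): the sample mean and the 1/N (biased) variance."""
    n = len(samples)
    mu_hat = sum(samples) / n
    var_hat = sum((x - mu_hat) ** 2 for x in samples) / n
    return mu_hat, var_hat


def gaussian_log_likelihood(samples, mu, var):
    """sum_i log N(x_i; mu, var)."""
    return sum(-0.5 * math.log(2 * math.pi * var) - (x - mu) ** 2 / (2 * var) for x in samples)


data = [2.0, 4.0, 4.0, 4.0, 5.0, 5.0, 7.0, 9.0]  # hypothetical sample
print(gaussian_mle(data))  # (5.0, 4.0)
```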

How to apply MLE or MAP
- By enumeration, e.g., the disease-testing case
  - can be computationally intractable
- By parametric methods, with a little calculus (maximization), e.g., the coin-tossing case
  - can lead to an inappropriate choice of hypothesis space

Relation between Bayesian Theory & Machine Learning

MLE, MAP to Explain Other Learning Theories
- Up to now, ML (or MAP) has been used as a criterion to select the hypothesis.
  - Parametric methods or enumeration methods can be applied!
- There are links between ML (or MAP) and other learning theories!
  - To be shown: under certain assumptions, any learning algorithm that minimizes the mean squared error outputs an ML hypothesis.
  - MDL and MAP (ML) are related criteria!

![Link between MLE & MSE](http://slidetodoc.com/presentation_image_h2/5cf3a1c1fe3eecc7ab9e7e44d19ef1b9/image-23.jpg)

Link between MLE & MSE (cont.)
- (Figure: the regression line $E[D \mid x] = w x + w_0$.)
- Problem setting:
  - $d_i = f(x_i) + e_i$, where $e_i$ is noise, so $f(x_i) = E[d_i \mid x_i]$ is the noise-free value
  - assumption: the $e_i$ are drawn independently from $\mathcal{N}(0, \sigma^2)$
- What is the most probable f (called h) given $D = (d_i)$?
- By MLE:
  $$h_{ML} = \arg\max_{h \in H} p(D \mid h) = \arg\max_{h \in H} \prod_i \frac{1}{\sqrt{2\pi\sigma^2}} \exp\left[-\frac{(d_i - h(x_i))^2}{2\sigma^2}\right] = \arg\min_{h \in H} \sum_i \big(d_i - h(x_i)\big)^2$$
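The derivation says that minimizing squared error and maximizing the Gaussian-noise likelihood select the same h. A minimal sketch for a linear hypothesis $h(x) = w x + w_0$: fit by closed-form least squares, then check that perturbed lines have a lower Gaussian log likelihood. The data points, $\sigma = 1$ and the function names are hypothetical.

```python
import math


def least_squares_line(xs, ds):
    """Closed-form least-squares fit of h(x) = w * x + w0."""
    n = len(xs)
    x_bar = sum(xs) / n
    d_bar = sum(ds) / n
    sxx = sum((x - x_bar) ** 2 for x in xs)
    sxd = sum((x - x_bar) * (d - d_bar) for x, d in zip(xs, ds))
    w = sxd / sxx
    return w, d_bar - w * x_bar


def gaussian_noise_log_likelihood(xs, ds, w, w0, sigma=1.0):
    """log p(D | h) when d_i = h(x_i) + e_i with e_i ~ N(0, sigma^2) independently."""
    return sum(
        -0.5 * math.log(2 * math.pi * sigma**2) - (d - (w * x + w0)) ** 2 / (2 * sigma**2)
        for x, d in zip(xs, ds)
    )


xs = [0.0, 1.0, 2.0, 3.0, 4.0]
ds = [1.1, 2.9, 5.2, 7.1, 8.8]  # hypothetical noisy targets
w, w0 = least_squares_line(xs, ds)
best = gaussian_noise_log_likelihood(xs, ds, w, w0)
# Any other line has a larger squared error, hence a smaller Gaussian log likelihood
for dw, dw0 in [(0.1, 0.0), (0.0, -0.1), (-0.05, 0.2)]:
    assert gaussian_noise_log_likelihood(xs, ds, w + dw, w0 + dw0) < best
print(w, w0)
```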

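Is noise normally distributed (ND)?
- It would be nice: the normal distribution is easier to analyze mathematically.
- It is likely: ND approximates other distributions.
  - Central Limit Theorem: for independent, identically distributed random variables $Y_1, Y_2, \ldots, Y_n$ governed by an arbitrary probability distribution with mean $\mu$ and finite variance $\sigma^2$, the distribution of the sample mean $\bar{Y}_n = \frac{1}{n}\sum_{i=1}^{n} Y_i$ approaches $\mathcal{N}(\mu, \sigma^2/n)$ as $n \to \infty$ (equivalently, $\sqrt{n}(\bar{Y}_n - \mu)/\sigma$ approaches $\mathcal{N}(0, 1)$).
- Does the CLT apply?
  - Is the noise the outcome of independent random events? Are they identically distributed?
- Note: noise only in $d_i$, not in $x_i$.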
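The CLT can be illustrated by simulation. This minimal sketch draws sample means from a skewed distribution, the exponential with mean 1 and variance 1 (chosen here only as an example of a non-normal distribution). It then checks that their spread is close to $\sigma/\sqrt{n}$ and that roughly 95% of them fall within 1.96 standard errors, as a normal approximation predicts. The sample size, replicate count and seed are arbitrary choices.

```python
import math
import random
import statistics


def sample_means(n, replicates, seed=0):
    """Means of `replicates` iid samples of size n from an exponential distribution (mean 1, variance 1)."""
    rng = random.Random(seed)
    return [statistics.fmean(rng.expovariate(1.0) for _ in range(n)) for _ in range(replicates)]


n = 30
means = sample_means(n, replicates=4000)
se = 1.0 / math.sqrt(n)  # sigma / sqrt(n) with sigma = 1
# Under the normal approximation, about 95% of sample means lie within 1.96 se of the true mean 1
coverage = sum(abs(m - 1.0) < 1.96 * se for m in means) / len(means)
print(statistics.fmean(means), statistics.pstdev(means), se, coverage)
```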

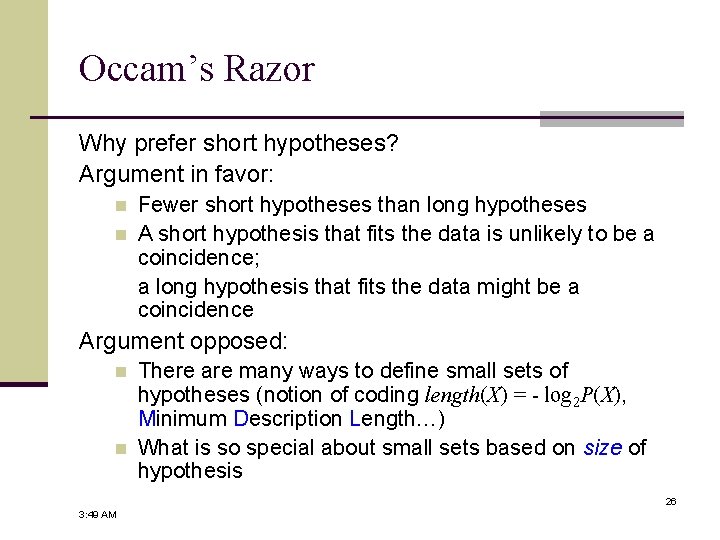

Other than ML & MAP: Occam's Razor, MDL & All That
- We are talking about model selection!
- Clarice Starling: "What did Lecter say about... 'first principles'?" Ardelia Mapp: "Simplicity..." (*The Silence of the Lambs*, 1991)
- Occam's Razor (1285–1349): "One should not increase, beyond what is necessary, the number of entities required to explain anything."
- Minimum Description Length: "Select the hypothesis which minimizes the sum of the length of the description of the hypothesis (also called 'model') and the length of the description of the data relative to the hypothesis." In short, the best theory is $h_{MDL} = \arg\min_h (\text{theory} + \text{exception})$.

Occam's Razor

Why prefer short hypotheses?
- Argument in favor:
  - There are fewer short hypotheses than long hypotheses.
  - A short hypothesis that fits the data is unlikely to be a coincidence; a long hypothesis that fits the data might be a coincidence.
- Argument opposed:
  - There are many ways to define small sets of hypotheses (notion of coding length(X) = $-\log_2 P(X)$, Minimum Description Length, …).
  - What is so special about small sets based on the size of the hypothesis?

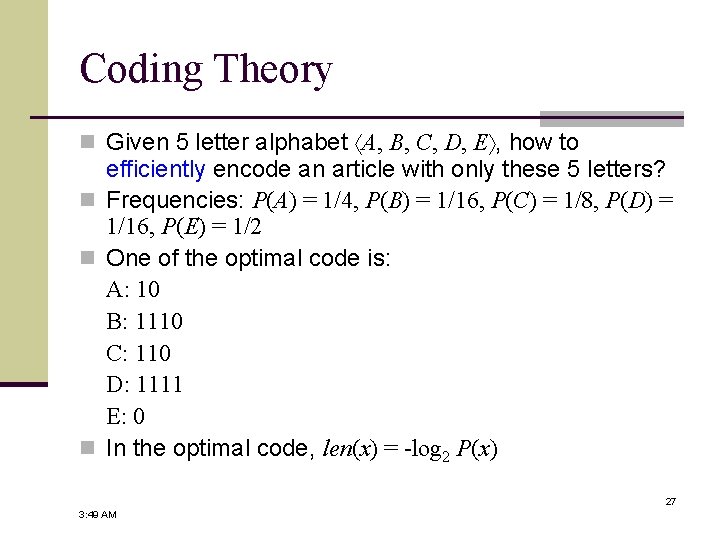

Coding Theory
- Given a 5-letter alphabet {A, B, C, D, E}, how can we efficiently encode an article that uses only these 5 letters?
- Frequencies: P(A) = 1/4, P(B) = 1/16, P(C) = 1/8, P(D) = 1/16, P(E) = 1/2
- One optimal code is: A: 10, B: 1110, C: 110, D: 1111, E: 0
- In the optimal code, len(x) = $-\log_2 P(x)$
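One standard way to obtain an optimal prefix code is Huffman's algorithm, which the slide does not name. Here it is used as an illustrative technique. It repeatedly merges the two least probable nodes. This minimal sketch computes only the codeword lengths for the slide's frequencies. It checks that they equal $-\log_2 P(x)$, match the lengths of the listed code, and give an expected length of 15/8 bits. Fractions keep the arithmetic exact.

```python
import heapq
import itertools
import math
from fractions import Fraction


def huffman_code_lengths(probs):
    """Codeword length per symbol from Huffman's algorithm (merge the two least probable nodes)."""
    tie = itertools.count()  # tie-breaker so heap entries never compare the symbol lists
    heap = [(p, next(tie), [s]) for s, p in probs.items()]
    heapq.heapify(heap)
    lengths = {s: 0 for s in probs}
    while len(heap) > 1:
        p1, _, syms1 = heapq.heappop(heap)
        p2, _, syms2 = heapq.heappop(heap)
        for s in syms1 + syms2:
            lengths[s] += 1  # each merge adds one bit to every codeword below the new node
        heapq.heappush(heap, (p1 + p2, next(tie), syms1 + syms2))
    return lengths


probs = {"A": Fraction(1, 4), "B": Fraction(1, 16), "C": Fraction(1, 8),
         "D": Fraction(1, 16), "E": Fraction(1, 2)}
slide_code = {"A": "10", "B": "1110", "C": "110", "D": "1111", "E": "0"}
lengths = huffman_code_lengths(probs)
print(lengths)  # {'A': 2, 'B': 4, 'C': 3, 'D': 4, 'E': 1}
print(all(lengths[s] == -math.log2(p) for s, p in probs.items()))  # True
print(all(lengths[s] == len(slide_code[s]) for s in probs))  # True
print(sum(p * lengths[s] for s, p in probs.items()))  # 15/8
```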

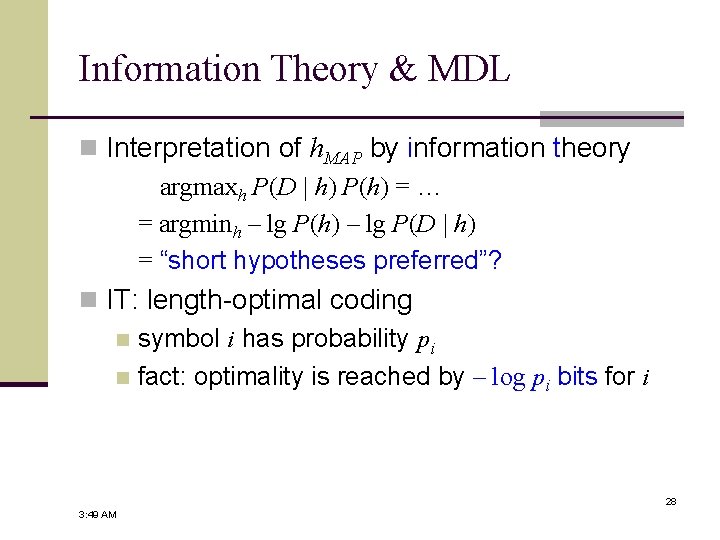

Information Theory & MDL
- Interpretation of $h_{MAP}$ by information theory:
  $$h_{MAP} = \arg\max_h P(D \mid h)\,P(h) = \arg\max_h \big[\log_2 P(D \mid h) + \log_2 P(h)\big] = \arg\min_h \big[-\log_2 P(h) - \log_2 P(D \mid h)\big]$$
  Does this mean "short hypotheses are preferred"?
- IT: length-optimal coding
  - symbol i has probability $p_i$
  - fact: optimality is reached by using $-\log_2 p_i$ bits for symbol i

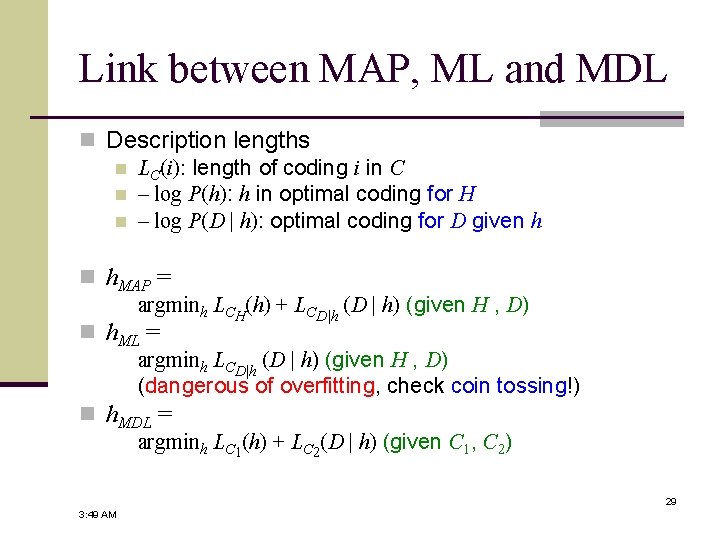

Link between MAP, ML and MDL
- Description lengths
  - $L_C(i)$: length of the code for i in coding scheme C
  - $-\log_2 P(h)$: length of h in the optimal code $C_H$ for H
  - $-\log_2 P(D \mid h)$: length of D in the optimal code $C_{D|h}$ given h
- $h_{MAP} = \arg\min_h L_{C_H}(h) + L_{C_{D|h}}(D \mid h)$ (given H, D)
- $h_{ML} = \arg\min_h L_{C_{D|h}}(D \mid h)$ (given H, D) (danger of overfitting: check the coin-tossing case!)
- $h_{MDL} = \arg\min_h L_{C_1}(h) + L_{C_2}(D \mid h)$ (given $C_1$, $C_2$)

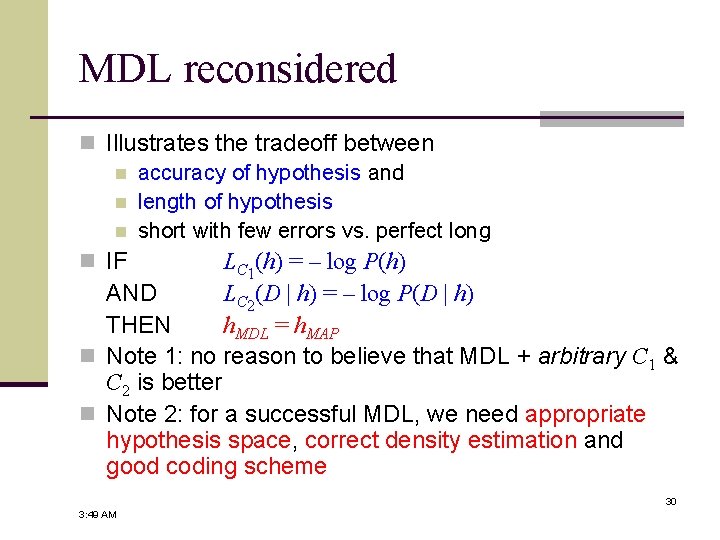

MDL reconsidered
- Illustrates the tradeoff between
  - accuracy of the hypothesis and
  - length of the hypothesis
  - short with few errors vs. perfect but long
- If $L_{C_1}(h) = -\log_2 P(h)$ and $L_{C_2}(D \mid h) = -\log_2 P(D \mid h)$, then $h_{MDL} = h_{MAP}$
- Note 1: there is no reason to believe that MDL with arbitrary $C_1$ and $C_2$ is better
- Note 2: for MDL to succeed, we need an appropriate hypothesis space, correct density estimation, and a good coding scheme
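A minimal sketch of the "$h_{MDL} = h_{MAP}$" statement, reusing the disease-test numbers from the earlier slides. With the optimal codes $L_{C_1}(h) = -\log_2 P(h)$ and $L_{C_2}(D \mid h) = -\log_2 P(D \mid h)$, the hypothesis with the shortest total description also has the largest $P(D \mid h)\,P(h)$. The function and hypothesis names are illustrative.

```python
import math


def description_length_bits(prior, likelihood):
    """L_C1(h) + L_C2(D | h) with optimal codes: -log2 P(h) - log2 P(D | h)."""
    return -math.log2(prior) - math.log2(likelihood)


# Disease-test example: D = a single positive test result
hypotheses = {
    "cancer": (0.008, 0.98),     # (P(h), P(+ | h))
    "no_cancer": (0.992, 0.03),
}
lengths = {h: description_length_bits(p, l) for h, (p, l) in hypotheses.items()}
h_mdl = min(lengths, key=lengths.get)
h_map = max(hypotheses, key=lambda h: hypotheses[h][0] * hypotheses[h][1])
print({h: round(bits, 2) for h, bits in lengths.items()}, h_mdl, h_map)
```

Here "cancer" needs about 7.0 bits and "no_cancer" about 5.1, so both criteria pick "no_cancer". Replacing either code with a non-optimal one breaks this equivalence, which is the point of Note 1.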

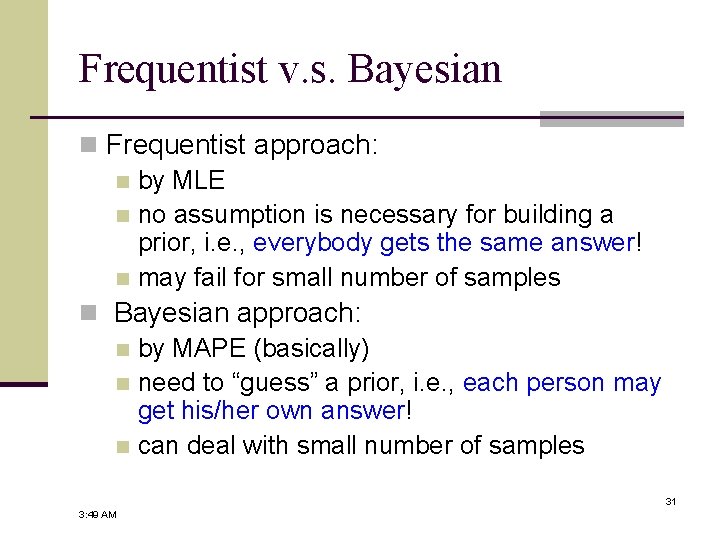

Frequentist vs. Bayesian
- Frequentist approach:
  - by MLE
  - no assumption is needed to build a prior, i.e., everybody gets the same answer!
  - may fail for a small number of samples
- Bayesian approach:
  - by MAP estimation (basically)
  - needs a "guessed" prior, i.e., each person may get his/her own answer!
  - can deal with a small number of samples

Bayesian Decision Theory

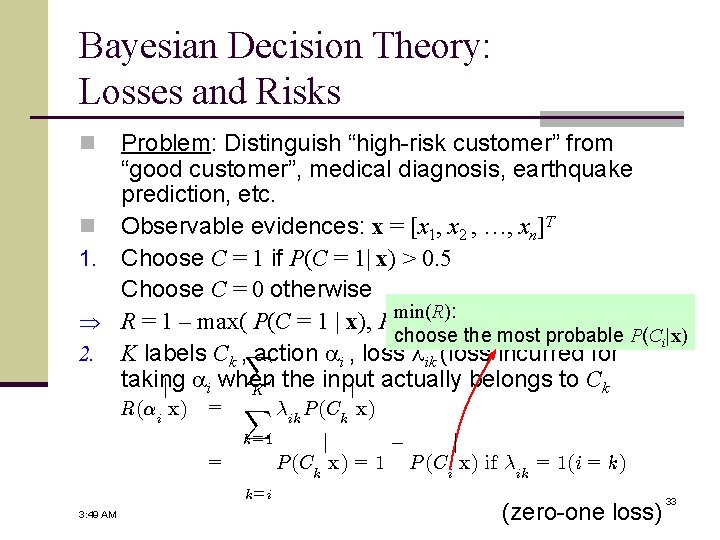

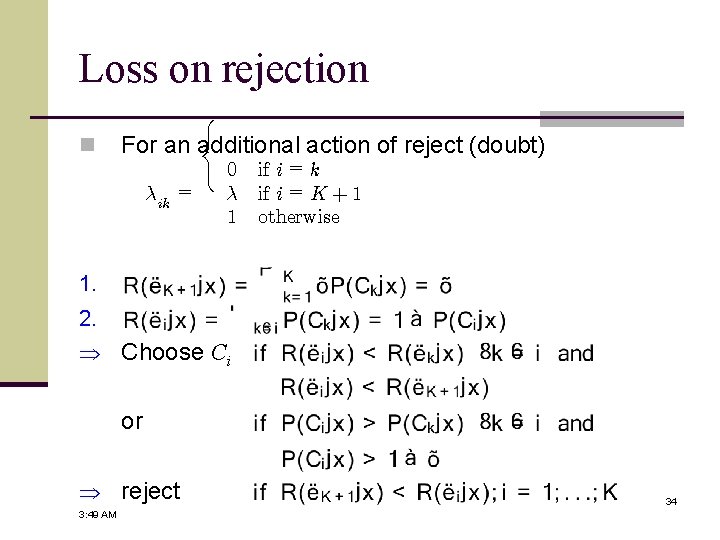

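Bayesian Decision Theory: Losses and Risks
- Problem: distinguish a "high-risk customer" from a "good customer"; similar problems include medical diagnosis, earthquake prediction, etc.
- Observable evidence: $\mathbf{x} = [x_1, x_2, \ldots, x_n]^T$

1. Two classes: choose C = 1 if $P(C = 1 \mid \mathbf{x}) > 0.5$, and C = 0 otherwise. This minimizes the probability of error
   $$R = 1 - \max\big(P(C = 1 \mid \mathbf{x}),\ P(C = 0 \mid \mathbf{x})\big),$$
   i.e., it chooses the class $C_i$ with the largest $P(C_i \mid \mathbf{x})$.
2. K labels $C_k$, actions $\alpha_i$, and losses $\lambda_{ik}$ (the loss incurred for taking action $\alpha_i$ when the input actually belongs to $C_k$). The expected risk of action $\alpha_i$ is
   $$R(\alpha_i \mid \mathbf{x}) = \sum_{k=1}^{K} \lambda_{ik}\,P(C_k \mid \mathbf{x})$$
   With the zero-one loss ($\lambda_{ik} = 0$ if $i = k$ and 1 otherwise),
   $$R(\alpha_i \mid \mathbf{x}) = \sum_{k \neq i} P(C_k \mid \mathbf{x}) = 1 - P(C_i \mid \mathbf{x}),$$
   so minimizing the risk again means choosing the most probable class.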
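A minimal sketch of minimum-risk decisions with a loss matrix `loss[i][k]` $= \lambda_{ik}$, where action i means "assign class i". The posteriors and the asymmetric loss matrix are hypothetical credit-scoring numbers. They show that an unequal loss can change the decision even though the most probable class stays the same.

```python
def expected_risks(posteriors, loss):
    """R(alpha_i | x) = sum_k loss[i][k] * P(C_k | x) for every action i."""
    return [sum(l_ik * p_k for l_ik, p_k in zip(row, posteriors)) for row in loss]


def bayes_action(posteriors, loss):
    """Index of the action with minimum expected risk."""
    risks = expected_risks(posteriors, loss)
    return min(range(len(risks)), key=risks.__getitem__)


# Hypothetical case: class 0 = good customer, class 1 = high-risk customer
posteriors = [0.7, 0.3]
zero_one = [[0, 1], [1, 0]]
costly_miss = [[0, 10], [1, 0]]  # accepting a high-risk customer costs 10x a wrong rejection
print(bayes_action(posteriors, zero_one))     # 0: the most probable class
print(bayes_action(posteriors, costly_miss))  # 1: risks are [3.0, 0.7]
```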

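Loss on rejection
- For an additional action of reject (doubt), $\alpha_{K+1}$:
  $$\lambda_{ik} = \begin{cases} 0 & \text{if } i = k \\ \lambda & \text{if } i = K + 1 \\ 1 & \text{otherwise} \end{cases}$$
  with $0 < \lambda < 1$.
- The risks are then
  1. $R(\alpha_{K+1} \mid \mathbf{x}) = \sum_{k=1}^{K} \lambda\,P(C_k \mid \mathbf{x}) = \lambda$
  2. $R(\alpha_i \mid \mathbf{x}) = \sum_{k \neq i} P(C_k \mid \mathbf{x}) = 1 - P(C_i \mid \mathbf{x})$
- Choose $C_i$ if $P(C_i \mid \mathbf{x}) > P(C_k \mid \mathbf{x})$ for all $k \neq i$ and $P(C_i \mid \mathbf{x}) > 1 - \lambda$; reject otherwise.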
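The reject rule follows from comparing the two kinds of risk. This is a minimal sketch that returns `len(posteriors)` to mean "reject". The function name and the example posteriors are hypothetical. On an exact tie between a class and rejection, `min` keeps the class because it comes first.

```python
def decide_with_reject(posteriors, reject_loss):
    """Zero-one loss plus a reject action with loss lambda (0 < lambda < 1).

    Risks: R(alpha_i | x) = 1 - P(C_i | x) for each class, R(alpha_{K+1} | x) = lambda.
    Returns the index of the chosen class, or len(posteriors) to mean "reject".
    """
    risks = [1.0 - p for p in posteriors] + [reject_loss]
    return min(range(len(risks)), key=risks.__getitem__)


print(decide_with_reject([0.6, 0.4], 0.3))  # 2 (reject): 0.6 is not above 1 - 0.3
print(decide_with_reject([0.6, 0.4], 0.5))  # 0: 0.6 is above 1 - 0.5
```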

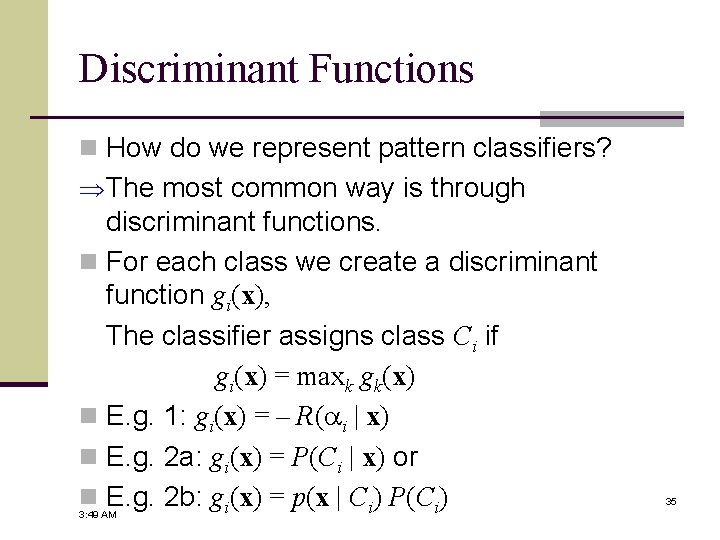

Discriminant Functions
- How do we represent pattern classifiers? The most common way is through discriminant functions.
- For each class we create a discriminant function $g_i(\mathbf{x})$; the classifier assigns class $C_i$ if $g_i(\mathbf{x}) = \max_k g_k(\mathbf{x})$.
  - E.g. 1: $g_i(\mathbf{x}) = -R(\alpha_i \mid \mathbf{x})$
  - E.g. 2a: $g_i(\mathbf{x}) = P(C_i \mid \mathbf{x})$, or
  - E.g. 2b: $g_i(\mathbf{x}) = p(\mathbf{x} \mid C_i)\,P(C_i)$

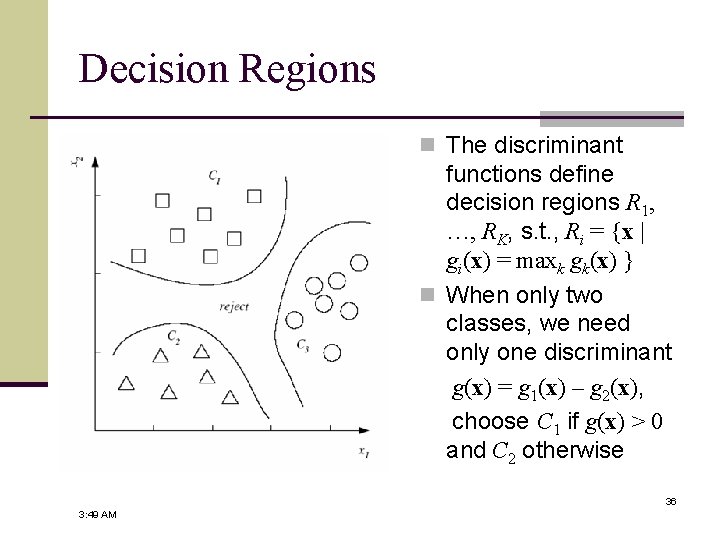

Decision Regions
- The discriminant functions define decision regions $\mathcal{R}_1, \ldots, \mathcal{R}_K$ such that $\mathcal{R}_i = \{\mathbf{x} \mid g_i(\mathbf{x}) = \max_k g_k(\mathbf{x})\}$.
- With only two classes, we need only one discriminant, $g(\mathbf{x}) = g_1(\mathbf{x}) - g_2(\mathbf{x})$: choose $C_1$ if $g(\mathbf{x}) > 0$ and $C_2$ otherwise.
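A minimal two-class sketch. It uses the log of discriminant 2b, $g_i(x) = \log p(x \mid C_i) + \log P(C_i)$, which leaves the argmax unchanged because log is monotonic. The class-conditional densities $\mathcal{N}(0, 1)$ and $\mathcal{N}(2, 1)$ are hypothetical. With equal priors, $g(x) = 2 - 2x$, so the boundary between $\mathcal{R}_1$ and $\mathcal{R}_2$ is x = 1.

```python
import math


def log_gaussian(x, mu, sigma):
    """log N(x; mu, sigma^2)."""
    return -math.log(math.sqrt(2 * math.pi) * sigma) - (x - mu) ** 2 / (2 * sigma**2)


def two_class_discriminant(x, prior1=0.5, prior2=0.5):
    """g(x) = g_1(x) - g_2(x) with g_i(x) = log p(x | C_i) + log P(C_i).

    Hypothetical class conditionals: p(x | C_1) = N(0, 1), p(x | C_2) = N(2, 1).
    """
    g1 = log_gaussian(x, 0.0, 1.0) + math.log(prior1)
    g2 = log_gaussian(x, 2.0, 1.0) + math.log(prior2)
    return g1 - g2


for x in [0.5, 1.5]:
    print(x, "C1" if two_class_discriminant(x) > 0 else "C2")  # 0.5 -> C1, 1.5 -> C2
```

Raising $P(C_1)$ to 0.8 shifts the boundary to $1 + \ln 4 / 2 \approx 1.69$, so x = 1.5 then falls in $\mathcal{R}_1$.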

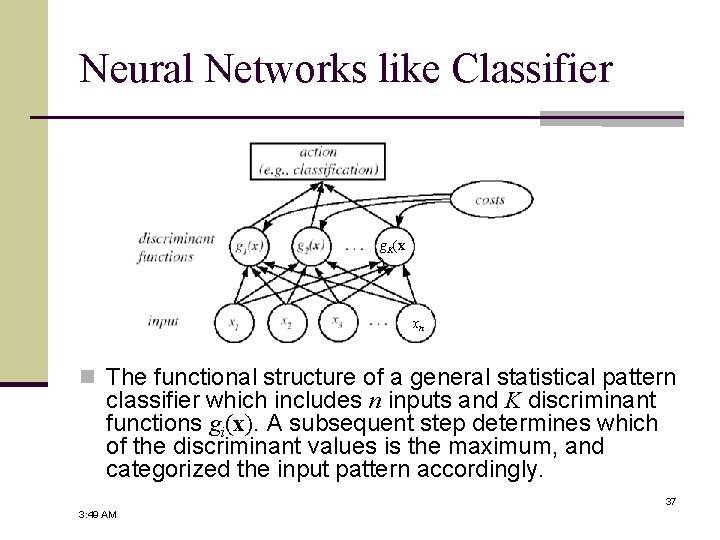

Neural-Network-like Classifier

(Figure: inputs $x_1, \ldots, x_n$ feeding K discriminant functions $g_1(\mathbf{x}), \ldots, g_K(\mathbf{x})$.)
- The functional structure of a general statistical pattern classifier, which includes n inputs and K discriminant functions $g_i(\mathbf{x})$. A subsequent step determines which of the discriminant values is the maximum and categorizes the input pattern accordingly.

Bayesian Classifiers

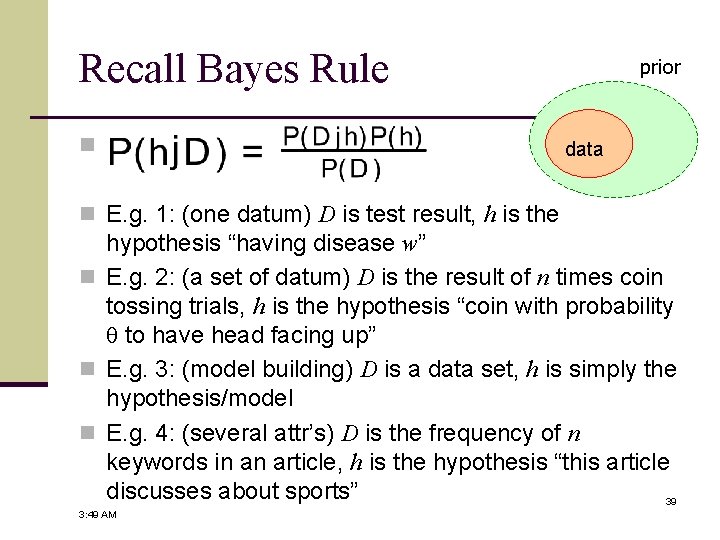

Recall Bayes Rule

$$P(h \mid D) = \frac{P(D \mid h)\,P(h)}{P(D)}$$

with prior P(h) and data D.
- E.g. 1 (one datum): D is a test result; h is the hypothesis "having disease W".
- E.g. 2 (a set of data): D is the result of n coin-tossing trials; h is the hypothesis "the coin lands heads up with probability $\mu$".
- E.g. 3 (model building): D is a data set; h is simply the hypothesis/model.
- E.g. 4 (several attributes): D is the frequencies of n keywords in an article; h is the hypothesis "this article is about sports".

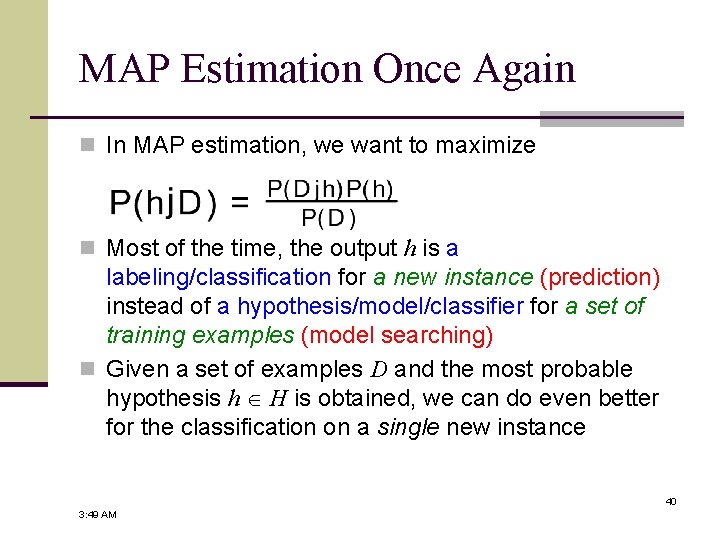

MAP Estimation Once Again
- In MAP estimation, we want to maximize $P(h \mid D) \propto P(D \mid h)\,P(h)$.
- Most of the time, the desired output is a label/classification for a new instance (prediction) rather than a hypothesis/model/classifier for a set of training examples (model search).
- Given a set of examples D and the most probable hypothesis $h \in H$, we can do even better when classifying a single new instance.

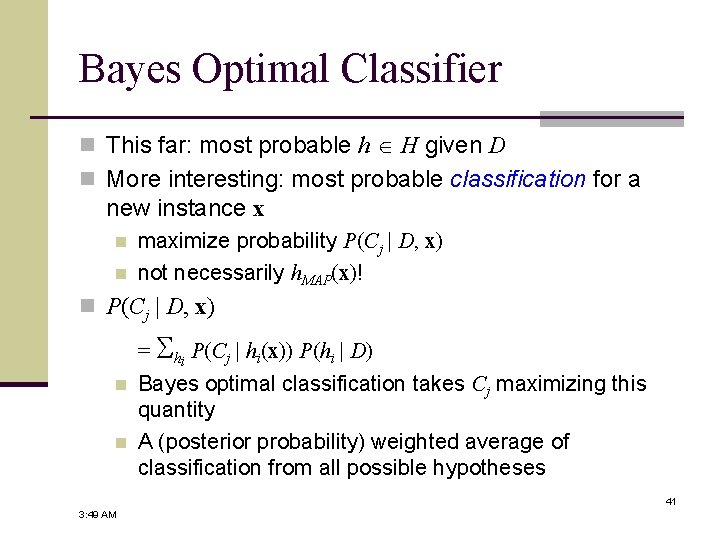

Bayes Optimal Classifier
- So far: the most probable $h \in H$ given D
- More interesting: the most probable classification for a new instance $\mathbf{x}$
  - maximize the probability $P(C_j \mid D, \mathbf{x})$, which is not necessarily given by $h_{MAP}(\mathbf{x})$!
  - $$P(C_j \mid D, \mathbf{x}) = \sum_{h_i \in H} P(C_j \mid h_i(\mathbf{x}))\,P(h_i \mid D)$$
- The Bayes optimal classification takes the $C_j$ maximizing this quantity: a (posterior-probability-)weighted average of the classifications from all possible hypotheses.
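A minimal sketch of the weighted vote. It uses hypothetical numbers: three hypotheses with posteriors 0.4, 0.3 and 0.3, where the first predicts "+" and the other two predict "-". The MAP hypothesis says "+", but the Bayes optimal classification is "-", because "-" collects 0.6 of the posterior mass. Each hypothesis's prediction is given as a dictionary of $P(C_j \mid h_i(\mathbf{x}))$, so deterministic and probabilistic hypotheses are handled the same way.

```python
def bayes_optimal(hypothesis_posteriors, class_probs_per_h):
    """P(C_j | D, x) = sum_i P(C_j | h_i(x)) P(h_i | D); return the argmax class and all scores."""
    scores = {}
    for p_h, class_probs in zip(hypothesis_posteriors, class_probs_per_h):
        for c, p_c in class_probs.items():
            scores[c] = scores.get(c, 0.0) + p_c * p_h
    return max(scores, key=scores.get), scores


# Hypothetical numbers: P(h_i | D) and each hypothesis's prediction for a new x
posteriors = [0.4, 0.3, 0.3]
predictions = [{"+": 1.0}, {"-": 1.0}, {"-": 1.0}]  # each h_i is deterministic here
h_map_prediction = predictions[posteriors.index(max(posteriors))]
label, scores = bayes_optimal(posteriors, predictions)
print(h_map_prediction, label, scores)
```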

Bayes Optimal Classifier (cont.)
- Any system computing $\arg\max_{C_j} P(C_j \mid D, \mathbf{x})$ is called a Bayes optimal classifier.
- No other system using the same hypothesis space H and prior knowledge is better on average.
  - It maximizes the probability that the new $\mathbf{x}$ is classified correctly (given $\mathbf{x}$, H, …).
- The "learned" h may not be in H!
  - It effectively lies in H′, a space of hypotheses that compare linear combinations of the predictions made by the hypotheses in H.

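Gibbs Algorithm
- The optimal method needs too much prior knowledge in practice
  - $P(h_i \mid D)$: linear in |H|
  - $P(C_j \mid h_i)$: linear in |V| · |H|, where V: all possible labelings, H: all hypotheses
- Gibbs: pick a random h and apply it
  - h is drawn according to (an estimate of) P(h | D)
  - surprisingly good: under certain conditions (target concepts drawn according to the prior assumed by the learner), its expected error is at most twice that of the Bayes optimal classifier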
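A minimal sketch of the Gibbs algorithm. Instead of averaging over all hypotheses, it samples one hypothesis in proportion to its posterior and uses that hypothesis's prediction. The posteriors and predictions are hypothetical, and a seeded generator is used so repeated runs agree. Over many draws, "+" should come out about 40% of the time and "-" about 60%, matching the posterior mass behind each label.

```python
import random
from collections import Counter


def gibbs_classify(hypothesis_posteriors, predictions, rng):
    """Draw one hypothesis h ~ P(h | D) and return its prediction (no averaging)."""
    h = rng.choices(range(len(predictions)), weights=hypothesis_posteriors, k=1)[0]
    return predictions[h]


rng = random.Random(42)
posteriors = [0.4, 0.3, 0.3]   # hypothetical P(h_i | D)
predictions = ["+", "-", "-"]  # hypothetical h_i(x)
votes = Counter(gibbs_classify(posteriors, predictions, rng) for _ in range(10_000))
print(votes)
```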

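Naïve Bayes classifier
- Problem setting
  - examples: attribute tuples, with a finite number of classes
  - $h_{MAP} = \arg\max_j P(C_j \mid x_1, \ldots, x_n)$
  - apply Bayes theorem: $h_{MAP} = \arg\max_j P(x_1, \ldots, x_n \mid C_j)\,P(C_j)$
- Estimates
  - $P(C_j)$: frequencies in D
  - $P(x_1, \ldots, x_n \mid C_j)$: can be VERY small for limited samples, because most attribute combinations are rarely or never observed: overfitting!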

Naïve Bayes classifier (cont.)
- Make an assumption
  - $x_1, \ldots, x_n$ are independent given $C_j$
  - $P(x_1, \ldots, x_n \mid C_j) = \prod_i P(x_i \mid C_j)$
- $C_{NB} = \arg\max_j P(C_j) \prod_i P(x_i \mid C_j)$
  - requires estimating considerably fewer probabilities

Naïve Bayes learning
- Compute estimates
  - $P(C_j)$: frequencies in D
  - $P(x_i \mid C_j)$: similarly
- Compute $\arg\max_{C_j}$ for a new $\mathbf{x}$
  - if conditional independence holds, this is the same as MAP classification
- No search, just computation
  - space: the tables $P(C_j)$ and $P(x_i \mid C_j)$
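A minimal sketch of the two steps for categorical attributes. Learning means counting frequencies, and classification means taking the argmax of $P(C_j) \prod_i P(x_i \mid C_j)$. The toy data set and all names are hypothetical.

```python
from collections import Counter, defaultdict


def train_naive_bayes(examples, labels):
    """Frequency estimates of P(C_j) and P(x_i | C_j) from categorical training data."""
    class_counts = Counter(labels)
    priors = {c: count / len(labels) for c, count in class_counts.items()}
    value_counts = defaultdict(Counter)  # (class, attribute index) -> counts of each value
    for x, c in zip(examples, labels):
        for i, v in enumerate(x):
            value_counts[(c, i)][v] += 1

    def cond_prob(c, i, v):
        return value_counts[(c, i)][v] / class_counts[c]

    return priors, cond_prob


def classify_naive_bayes(priors, cond_prob, x):
    """C_NB = argmax_j P(C_j) * prod_i P(x_i | C_j); also returns the unnormalized scores."""
    scores = {}
    for c, prior in priors.items():
        score = prior
        for i, v in enumerate(x):
            score *= cond_prob(c, i, v)
        scores[c] = score
    return max(scores, key=scores.get), scores


# Hypothetical toy data: (outlook, windy) -> play?
examples = [("sunny", "no"), ("sunny", "yes"), ("rain", "no"), ("rain", "yes"), ("sunny", "no")]
labels = ["yes", "no", "yes", "no", "yes"]
priors, cond_prob = train_naive_bayes(examples, labels)
label, scores = classify_naive_bayes(priors, cond_prob, ("sunny", "no"))
print(label, scores)
```

The score for class "no" is exactly 0. The value windy = "no" never occurs with that class in the training data, so a single unseen value wipes out the whole product. This is the rare-value problem that the m-estimate addresses.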

Some subtleties
- Independence assumption
  - is usually violated
  - but the method works anyway: the argmax does not require P(C_j | x) to be correct, only that the correct class gets the highest score
- Missing or rare attribute values
  - add "virtual" examples
  - m-estimate of probability: $\frac{n_c + mp}{n + m}$
    - n: total number of training examples (of class $C_j$)
    - $n_c$: number of those examples with the given attribute value
    - p: prior probability (of the attribute value)
    - m: equivalent sample size
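A minimal sketch of the m-estimate. It acts as if m virtual examples, distributed according to the prior p, had been added to the n real ones. The counts below are hypothetical. With p = 1/k for an attribute with k values and m = k, it reduces to the familiar add-one (Laplace) estimate $(n_c + 1)/(n + k)$.

```python
def m_estimate(n_c, n, p, m):
    """m-estimate of probability: (n_c + m * p) / (n + m).

    n_c: examples (of the class) with the given attribute value; n: examples of the class;
    p: prior estimate of the probability; m: equivalent sample size (number of virtual examples).
    """
    return (n_c + m * p) / (n + m)


# Hypothetical binary attribute, value never seen in 2 examples of a class
print(m_estimate(0, 2, p=0.5, m=2))  # 0.25 instead of the raw frequency 0.0
print(m_estimate(2, 3, p=0.5, m=2))  # 0.6 instead of 2/3
```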

