
Advances in Bayesian Learning and Inference in Bayesian Networks

Irina Rish, IBM T. J. Watson Research Center, rish@us.ibm.com

“Road map”
- Introduction and motivation: What are Bayesian networks and why use them?
- How to use them: Probabilistic inference
- How to learn them: Learning parameters; Learning graph structure
- Summary

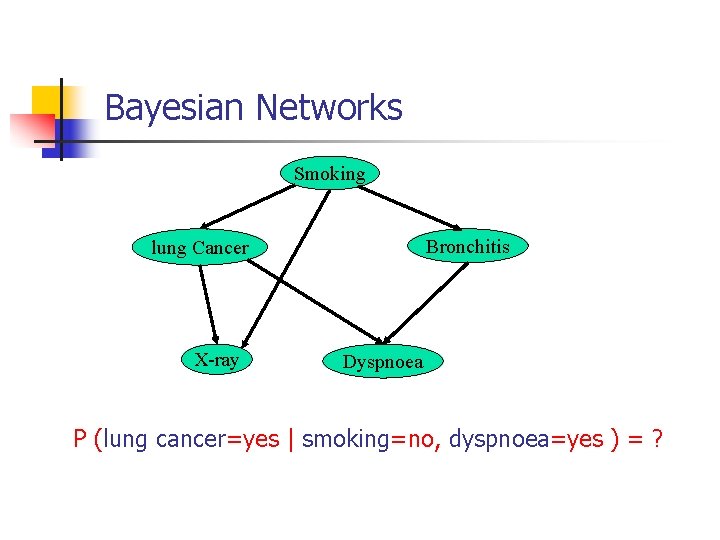

Bayesian Networks

Example network over Smoking, Bronchitis, Lung Cancer, X-ray, and Dyspnoea.

Query: P(lung cancer = yes | smoking = no, dyspnoea = yes) = ?

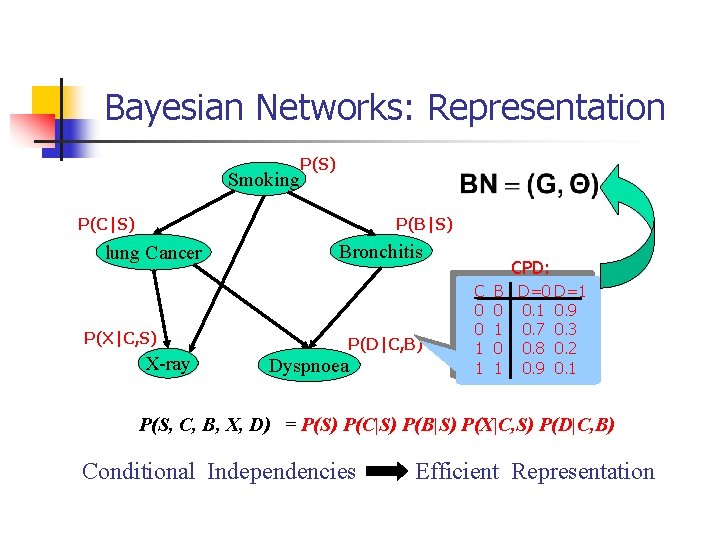

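Bayesian Networks: Representation

Each node stores a conditional probability distribution (CPD) given its parents: Smoking P(S), Lung Cancer P(C|S), Bronchitis P(B|S), X-ray P(X|C,S), Dyspnoea P(D|C,B).

CPD for P(D|C,B):

| C | B | D=0 | D=1 |
|---|---|-----|-----|
| 0 | 0 | 0.1 | 0.9 |
| 0 | 1 | 0.7 | 0.3 |
| 1 | 0 | 0.8 | 0.2 |
| 1 | 1 | 0.9 | 0.1 |

The joint distribution factorizes as

$$P(S,C,B,X,D) = P(S)\,P(C\mid S)\,P(B\mid S)\,P(X\mid C,S)\,P(D\mid C,B)$$

Conditional independencies give an efficient representation.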
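The factorization can be checked numerically. The sketch below assumes binary variables, encodes the five CPDs as Python dicts, evaluates the joint as the product of the factors, and compares free-parameter counts for a full joint table and for the factored form. Only the P(D|C,B) numbers come from the CPD table; every other probability is a hypothetical value chosen for illustration, and the names (`joint`, `bern`, the `P_*` dicts) are our own.

```python
from itertools import product

# Hypothetical CPDs for the five binary variables S, C, B, X, D.
# Only P(D=1 | C, B) is taken from the CPD table; the rest are made-up values.
P_S1 = 0.3                                    # P(S=1), assumed
P_C1 = {0: 0.01, 1: 0.1}                      # P(C=1 | S=s), assumed
P_B1 = {0: 0.05, 1: 0.3}                      # P(B=1 | S=s), assumed
P_X1 = {(0, 0): 0.05, (0, 1): 0.1,            # P(X=1 | C=c, S=s), assumed
        (1, 0): 0.9, (1, 1): 0.95}
P_D1 = {(0, 0): 0.9, (0, 1): 0.3,             # P(D=1 | C=c, B=b), from the table
        (1, 0): 0.2, (1, 1): 0.1}


def bern(p1, value):
    """Probability of a binary value, given p1 = P(value = 1)."""
    return p1 if value == 1 else 1.0 - p1


def joint(s, c, b, x, d):
    """P(S,C,B,X,D) = P(S) P(C|S) P(B|S) P(X|C,S) P(D|C,B)."""
    return (bern(P_S1, s) * bern(P_C1[s], c) * bern(P_B1[s], b)
            * bern(P_X1[(c, s)], x) * bern(P_D1[(c, b)], d))


# Free parameters: one full joint table vs. one P(node=1 | parents) per
# parent configuration (2^|parents| configurations per binary node).
num_parents = {"S": 0, "C": 1, "B": 1, "X": 2, "D": 2}
full_table_params = 2 ** 5 - 1                                  # 31
factored_params = sum(2 ** k for k in num_parents.values())     # 1+2+2+4+4 = 13

# Sanity check: the factored joint sums to 1 over all 32 assignments.
total = sum(joint(*v) for v in product((0, 1), repeat=5))
print(full_table_params, factored_params, round(total, 12))
```

The saving grows quickly with network size: a full table is exponential in the number of variables, while the factored form is exponential only in the number of parents per node.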

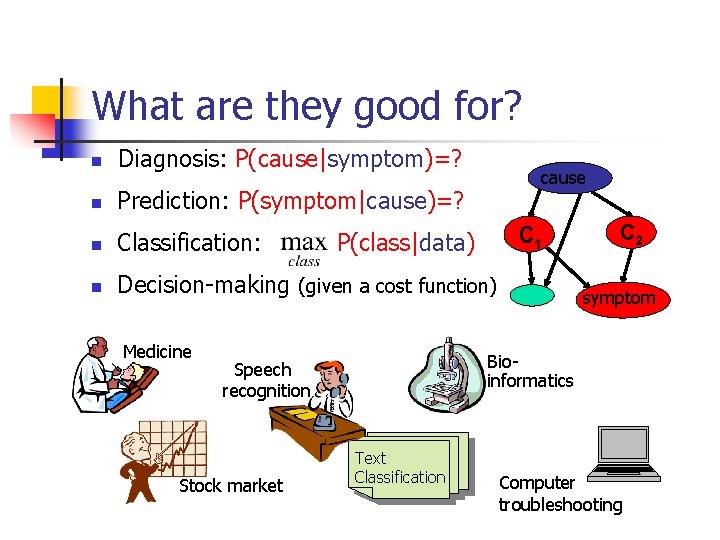

What are they good for?
- Diagnosis: P(cause | symptom) = ?
- Prediction: P(symptom | cause) = ?
- Classification: P(class | data)
- Decision-making (given a cost function)

Application areas: medicine, bioinformatics, speech recognition, stock market, text classification, computer troubleshooting.

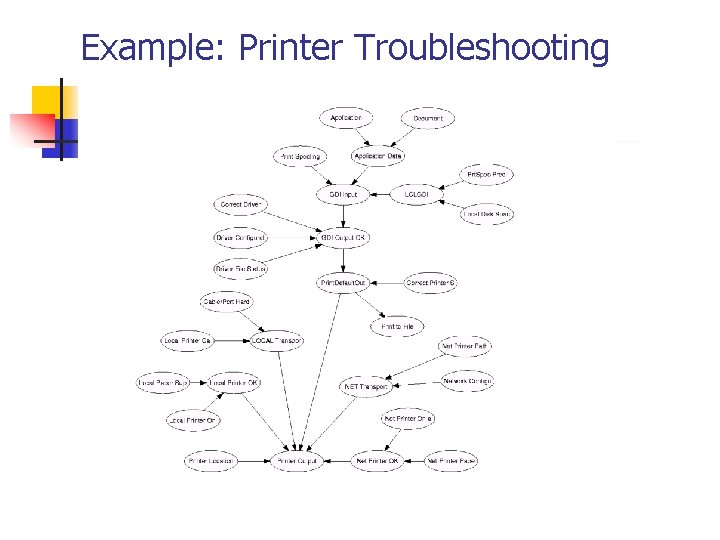

Example: Printer Troubleshooting

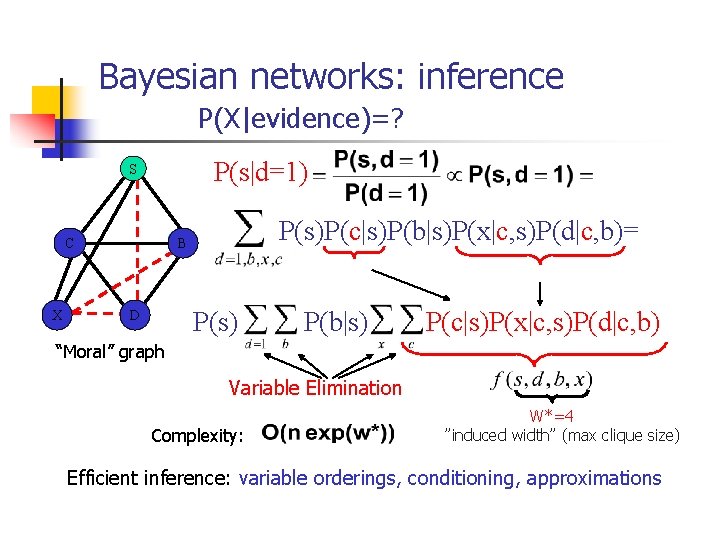

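Bayesian networks: inference

P(X | evidence) = ?

Example: P(s | d=1), computed by variable elimination on the "moral" graph, pushing each sum as far inward as possible:

$$P(s \mid d=1) \propto \sum_{c,b,x} P(s)\,P(c\mid s)\,P(b\mid s)\,P(x\mid c,s)\,P(d=1\mid c,b) = P(s)\sum_{b} P(b\mid s)\sum_{c} P(c\mid s)\,P(d=1\mid c,b)\sum_{x} P(x\mid c,s)$$

Complexity: exponential in the "induced width" W* (max clique size created by the elimination ordering); for the ordering shown, W* = 4.

Efficient inference: variable orderings, conditioning, approximations.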
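The nested-sum form can be turned into a small computation. The sketch below uses hypothetical CPDs (only P(D|C,B) comes from the CPD table), computes P(S | D=1) once by brute-force summation of the joint and once by eliminating X, then C, then B, and checks that the two agree. It is hand-unrolled for this one query and ordering, not a general variable-elimination engine; the function names are illustrative.

```python
from itertools import product

# Hypothetical CPDs; only P(D=1 | C, B) is taken from the slides' CPD table.
P_S1 = 0.3                                                     # P(S=1)
P_C1 = {0: 0.01, 1: 0.1}                                       # P(C=1 | S=s)
P_B1 = {0: 0.05, 1: 0.3}                                       # P(B=1 | S=s)
P_X1 = {(0, 0): 0.05, (0, 1): 0.1, (1, 0): 0.9, (1, 1): 0.95}  # P(X=1 | C=c, S=s)
P_D1 = {(0, 0): 0.9, (0, 1): 0.3, (1, 0): 0.2, (1, 1): 0.1}    # P(D=1 | C=c, B=b)
V = (0, 1)


def bern(p1, value):
    """Probability of a binary value, given p1 = P(value = 1)."""
    return p1 if value == 1 else 1.0 - p1


def normalize(f):
    z = sum(f.values())
    return {k: v / z for k, v in f.items()}


def posterior_brute_force(d=1):
    """P(S | D=d) by summing the full joint over C, B, X."""
    return normalize({
        s: sum(bern(P_S1, s) * bern(P_C1[s], c) * bern(P_B1[s], b)
               * bern(P_X1[(c, s)], x) * bern(P_D1[(c, b)], d)
               for c, b, x in product(V, repeat=3))
        for s in V})


def posterior_variable_elimination(d=1):
    """P(S | D=d), eliminating X, then C, then B."""
    # Eliminate X: lam_x(c, s) = sum_x P(x | c, s)  (always 1, computed anyway)
    lam_x = {(c, s): sum(bern(P_X1[(c, s)], x) for x in V) for c in V for s in V}
    # Eliminate C: lam_c(s, b) = sum_c P(c | s) P(d | c, b) lam_x(c, s)
    lam_c = {(s, b): sum(bern(P_C1[s], c) * bern(P_D1[(c, b)], d) * lam_x[(c, s)]
                         for c in V)
             for s in V for b in V}
    # Eliminate B: lam_b(s) = sum_b P(b | s) lam_c(s, b)
    lam_b = {s: sum(bern(P_B1[s], b) * lam_c[(s, b)] for b in V) for s in V}
    return normalize({s: bern(P_S1, s) * lam_b[s] for s in V})


bf = posterior_brute_force()
ve = posterior_variable_elimination()
print(bf, ve)
```

The largest intermediate factor, `lam_c`, is built while eliminating C, a step that involves C, S, B, and D together; that is the clique of size 4 behind W* = 4 for this ordering.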

“Road map”
- ✓ Introduction and motivation: What are Bayesian networks and why use them?
- ✓ How to use them: Probabilistic inference
- Why and how to learn them: Learning parameters; Learning graph structure
- Summary

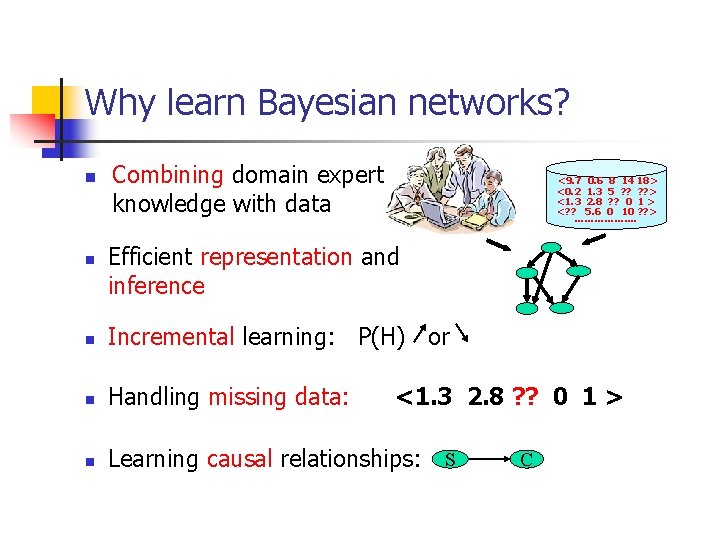

Why learn Bayesian networks?
- Combining domain expert knowledge with data
- Efficient representation and inference
- Incremental learning: P(H) or …
- Handling missing data, e.g. records such as <9.7 0.6 8 14 18>, <0.2 1.3 5 ? ?>, <1.3 2.8 ? ? 0 1>, <? ? 5.6 0 10 ? ?>, … (? = missing value)
- Learning causal relationships, e.g. S → C

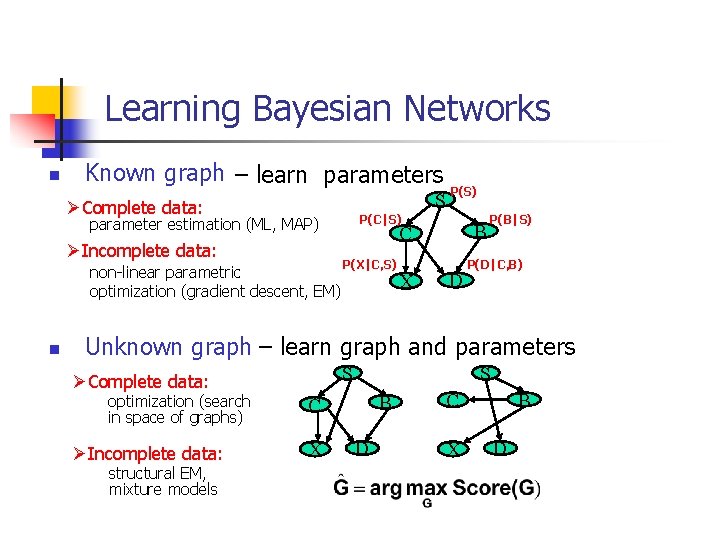

Learning Bayesian Networks
- Known graph: learn the parameters P(S), P(C|S), P(B|S), P(X|C,S), P(D|C,B)
  - Complete data: parameter estimation (ML, MAP)
  - Incomplete data: non-linear parametric optimization (gradient descent, EM)
- Unknown graph: learn graph and parameters
  - Complete data: optimization (search in the space of graphs)
  - Incomplete data: structural EM, mixture models

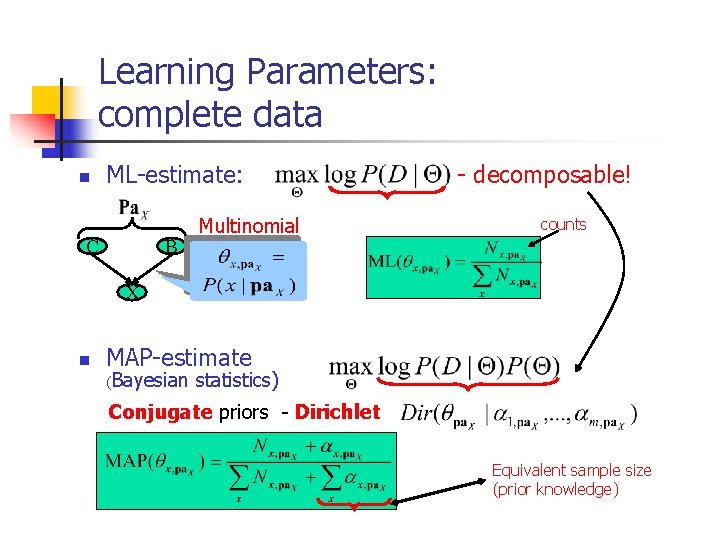

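Learning Parameters: complete data
- ML estimate: the likelihood is a product of multinomials, one per node and parent configuration, so it is decomposable and each CPD is estimated from counts:
  $$\hat\theta_{ijk} = \frac{N_{ijk}}{N_{ij}},$$
  where $N_{ijk}$ is the number of records with $X_i = k$ and the parents of $X_i$ in configuration $j$, and $N_{ij} = \sum_k N_{ijk}$.
- MAP estimate (Bayesian statistics): conjugate Dirichlet priors with hyperparameters $\alpha_{ijk}$, which act as an equivalent sample size encoding prior knowledge. The posterior is again Dirichlet, with mean
  $$\tilde\theta_{ijk} = \frac{N_{ijk} + \alpha_{ijk}}{N_{ij} + \alpha_{ij}}, \qquad \alpha_{ij} = \sum_k \alpha_{ijk}.$$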
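Because the likelihood decomposes, each CPD can be estimated from its own counts. The sketch below is a hypothetical helper, `estimate_cpt`, over dict records with binary values. It returns the ML estimate when `alpha = 0` and the Dirichlet posterior mean (a symmetric pseudo-count `alpha` per cell) otherwise; the strict posterior mode would use `alpha - 1` in place of `alpha`. The six records are made up for illustration.

```python
from collections import Counter
from itertools import product


def estimate_cpt(records, child, parents, alpha=0.0, values=(0, 1)):
    """Estimate P(child | parents) from complete records (dicts).

    alpha = 0: ML estimate N(x, pa) / N(pa).
    alpha > 0: posterior mean under a symmetric Dirichlet(alpha) prior,
               (N(x, pa) + alpha) / (N(pa) + K * alpha), K = number of values.
    """
    n_pa = Counter()      # N(pa): records with this parent configuration
    n_x_pa = Counter()    # N(x, pa): those records that also have child = x
    for r in records:
        pa = tuple(r[p] for p in parents)
        n_pa[pa] += 1
        n_x_pa[(r[child], pa)] += 1
    k = len(values)
    cpt = {}
    for pa in product(values, repeat=len(parents)):
        denom = n_pa[pa] + k * alpha
        for x in values:
            # With alpha = 0 an unseen parent configuration has no estimate.
            cpt[(x, pa)] = (n_x_pa[(x, pa)] + alpha) / denom if denom > 0 else None
    return cpt


# Hypothetical complete data over S and C.
data = [{"S": 1, "C": 1}, {"S": 1, "C": 0}, {"S": 1, "C": 0}, {"S": 1, "C": 0},
        {"S": 0, "C": 0}, {"S": 0, "C": 0}]
ml = estimate_cpt(data, "C", ["S"])               # P(C=1 | S=1) = 1/4
post = estimate_cpt(data, "C", ["S"], alpha=1.0)  # P(C=1 | S=1) = (1+1)/(4+2)
print(ml[(1, (1,))], post[(1, (1,))])
```

The pseudo-counts matter most for rare parent configurations: with `alpha = 0`, P(C=1 | S=0) is exactly 0 because no such record was seen, while `alpha = 1` smooths it to 1/4.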

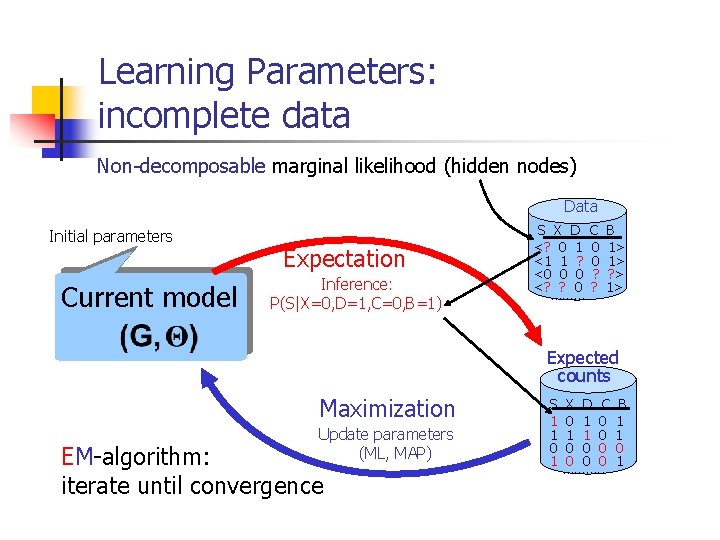

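Learning Parameters: incomplete data

With hidden nodes or missing values the marginal likelihood is non-decomposable. Example data (? = missing value):

| S | X | D | C | B |
|---|---|---|---|---|
| ? | 0 | 1 | 0 | 1 |
| 1 | 1 | ? | 0 | 1 |
| 0 | 0 | 0 | ? | ? |
| ? | ? | 0 | ? | 1 |
| … | … | … | … | … |

EM algorithm: start from initial parameters (the current model) and iterate until convergence:
- Expectation: use inference, e.g. P(S | X=0, D=1, C=0, B=1), to compute expected counts for the missing values.
- Maximization: update the parameters (ML, MAP) from the expected counts.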
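The E and M steps can be illustrated on the smallest interesting case. The sketch below assumes a hypothetical two-node network S → C with binary variables, where C is always observed and S is sometimes missing (`None`). The E-step uses the current parameters to compute P(S=1 | C=c) for each incomplete record and accumulates expected counts; the M-step applies the complete-data ML formulas to those expected counts. The function name and data are illustrative only.

```python
def em_two_node(records, p_s=0.5, p_c_given_s=(0.5, 0.5), max_iters=100, tol=1e-10):
    """EM for a hypothetical binary network S -> C.

    records: (s, c) pairs; s may be None (missing), c is always observed.
    Returns (P(S=1), [P(C=1 | S=0), P(C=1 | S=1)]).
    """
    p_c = list(p_c_given_s)
    n = len(records)
    for _ in range(max_iters):
        # E-step: expected counts, using P(S=1 | C=c) for records with s missing.
        n_s = [0.0, 0.0]      # expected N(S=v)
        n_s_c1 = [0.0, 0.0]   # expected N(S=v, C=1)
        for s, c in records:
            if s is None:
                like1 = p_s * (p_c[1] if c == 1 else 1.0 - p_c[1])
                like0 = (1.0 - p_s) * (p_c[0] if c == 1 else 1.0 - p_c[0])
                w1 = like1 / (like1 + like0) if like1 + like0 > 0 else p_s
                weights = (1.0 - w1, w1)
            else:
                weights = (1.0 - s, float(s))
            for v in (0, 1):
                n_s[v] += weights[v]
                if c == 1:
                    n_s_c1[v] += weights[v]
        # M-step: complete-data ML formulas applied to the expected counts.
        new_p_s = n_s[1] / n
        new_p_c = [n_s_c1[v] / n_s[v] if n_s[v] > 0 else p_c[v] for v in (0, 1)]
        change = abs(new_p_s - p_s) + sum(abs(a - b) for a, b in zip(new_p_c, p_c))
        p_s, p_c = new_p_s, new_p_c
        if change < tol:
            break
    return p_s, p_c


data = [(1, 1), (None, 1), (0, 0), (None, 0), (1, 1), (0, 1)]
print(em_two_node(data, p_s=0.4, p_c_given_s=(0.3, 0.6)))
```

With complete data the E-step is a plain count and EM reduces to the ML estimate after one update. With missing values, EM only guarantees convergence to a local maximum of the likelihood, so the starting parameters can matter.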

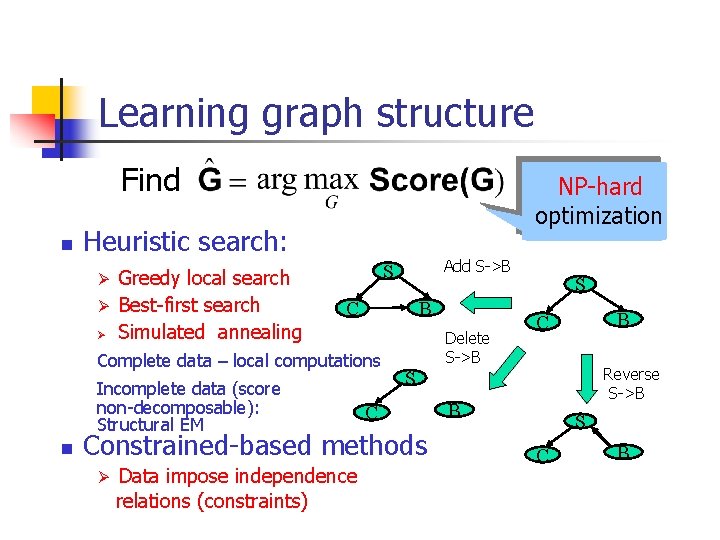

Learning graph structure
- Find $G^* = \arg\max_G \mathrm{Score}(G)$: an NP-hard optimization problem.
- Heuristic search with local moves (e.g., add S→B, delete S→B, reverse S→B):
  - Greedy local search
  - Best-first search
  - Simulated annealing
- Complete data: the score is decomposable, so each move needs only local computations.
- Incomplete data (score non-decomposable): structural EM.
- Constraint-based methods: the data impose independence relations (constraints) on the graph.
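The search loop can be sketched as greedy hill climbing over DAGs, using the three local moves (add, delete, reverse an edge) and a decomposable BIC score, so each family's term depends only on that node and its parents. Everything here is an assumption for illustration: binary variables, complete data, the function names, and the synthetic data sampled from a hypothetical network S → C, S → B. Because BIC gives equal scores to Markov-equivalent graphs, the sketch can recover the skeleton, but the edge directions it returns are only determined up to equivalence.

```python
import math
import random
from collections import Counter


def family_bic(data, child, parents):
    """BIC term for one family (binary variables): max log-likelihood of
    child given parents minus (log N / 2) * free parameters."""
    n = len(data)
    joint = Counter((tuple(r[p] for p in parents), r[child]) for r in data)
    pa = Counter(tuple(r[p] for p in parents) for r in data)
    ll = sum(k * math.log(k / pa[cfg]) for (cfg, _), k in joint.items())
    return ll - 0.5 * math.log(n) * 2 ** len(parents)


def score(data, nodes, edges):
    """Decomposable score: sum of per-family BIC terms."""
    return sum(family_bic(data, v, sorted(a for a, b in edges if b == v))
               for v in nodes)


def is_acyclic(nodes, edges):
    """Kahn's algorithm: a graph is a DAG iff every node can be removed."""
    indeg = {v: 0 for v in nodes}
    for _, b in edges:
        indeg[b] += 1
    ready = [v for v in nodes if indeg[v] == 0]
    removed = 0
    while ready:
        v = ready.pop()
        removed += 1
        for a, b in edges:
            if a == v:
                indeg[b] -= 1
                if indeg[b] == 0:
                    ready.append(b)
    return removed == len(nodes)


def neighbors(nodes, edges):
    """Local moves: delete or reverse an existing edge, or add a new one."""
    for a in nodes:
        for b in nodes:
            if a == b:
                continue
            if (a, b) in edges:
                yield edges - {(a, b)}                 # delete a -> b
                yield (edges - {(a, b)}) | {(b, a)}    # reverse a -> b
            elif (b, a) not in edges:
                yield edges | {(a, b)}                 # add a -> b


def greedy_search(data, nodes):
    """Hill climbing from the empty graph until no move improves the score."""
    edges = frozenset()
    best = score(data, nodes, edges)
    while True:
        moves = [(score(data, nodes, e), e)
                 for e in neighbors(nodes, edges) if is_acyclic(nodes, e)]
        if not moves:
            break
        new_score, new_edges = max(moves, key=lambda m: m[0])
        if new_score <= best + 1e-9:
            break
        best, edges = new_score, new_edges
    return edges, best


# Synthetic data from a hypothetical network S -> C, S -> B.
rng = random.Random(0)
data = []
for _ in range(2000):
    s = int(rng.random() < 0.4)
    data.append({"S": s,
                 "C": int(rng.random() < (0.7 if s else 0.1)),
                 "B": int(rng.random() < (0.6 if s else 0.2))})

edges, best = greedy_search(data, ["S", "C", "B"])
print(sorted(edges), round(best, 2))
```

Since only the families touched by a move change, a practical implementation would rescore just those one or two families per move; this sketch rescores the whole graph for simplicity.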

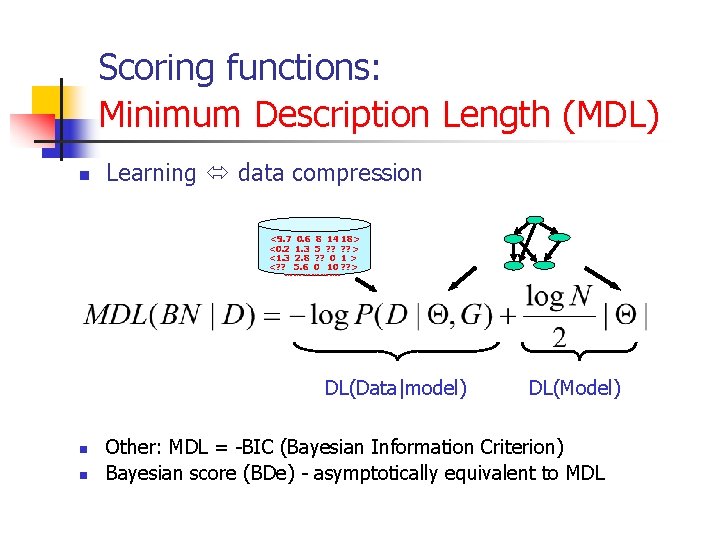

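Scoring functions: Minimum Description Length (MDL)
- Learning as data compression: MDL(BN | D) = DL(Model) + DL(Data | Model), where
  $$\mathrm{MDL}(BN \mid D) = \frac{\log N}{2}\,|\Theta| - \log P(D \mid \hat\Theta, G),$$
  with N the number of records, |Θ| the number of free parameters, and $\hat\Theta$ the ML parameters for graph G.
- Other scores: MDL = −BIC (Bayesian Information Criterion); the Bayesian score (BDe) is asymptotically equivalent to MDL.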
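To make the trade-off concrete, the sketch below scores two candidate structures for a pair of binary variables, no edge versus S → C, on a hypothetical table of 100 counts. It uses the description-length form in bits: (log2 N / 2) bits per free parameter for DL(Model), and −log2 of the maximum-likelihood probability of the data for DL(Data | Model). The counts and the function name are illustrative assumptions.

```python
import math

# Hypothetical counts N(S=s, C=c) for a toy data set of N = 100 records.
counts = {(1, 1): 30, (1, 0): 10, (0, 1): 5, (0, 0): 55}


def mdl_bits(counts, edge_s_to_c):
    """MDL = DL(Model) + DL(Data | Model), in bits, for binary S and C.

    DL(Model)        = (log2 N / 2) * number of free parameters
    DL(Data | Model) = -log2 P(Data | ML parameters of the structure)
    """
    n = sum(counts.values())
    n_s = {s: counts[(s, 0)] + counts[(s, 1)] for s in (0, 1)}
    n_c = {c: counts[(0, c)] + counts[(1, c)] for c in (0, 1)}
    data_bits = 0.0
    for (s, c), k in counts.items():
        if k == 0:
            continue
        p_s = n_s[s] / n                                   # ML estimate of P(s)
        p_c = k / n_s[s] if edge_s_to_c else n_c[c] / n    # P(c | s) or P(c)
        data_bits -= k * math.log2(p_s * p_c)
    n_params = 3 if edge_s_to_c else 2   # P(S) + P(C|S) vs. P(S) + P(C)
    model_bits = 0.5 * math.log2(n) * n_params
    return model_bits + data_bits


mdl_empty = mdl_bits(counts, edge_s_to_c=False)
mdl_edge = mdl_bits(counts, edge_s_to_c=True)
print(round(mdl_empty, 2), round(mdl_edge, 2))
```

Here the S → C structure pays about log2(100)/2 ≈ 3.3 extra bits for its additional parameter but saves considerably more when encoding the data, so it has the lower MDL. With natural logarithms in place of log2, the same expression equals −BIC.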

Summary
- Bayesian networks: graphical probabilistic models
- Efficient representation and inference
- Expert knowledge + learning from data
- Learning:
  - parameters (parameter estimation, EM)
  - structure (optimization with score functions, e.g., MDL)
- Applications/systems: collaborative filtering (MSBN), fraud detection (AT&T), classification (AutoClass (NASA), TANBLT (SRI))
- Future directions: causality, time, model evaluation criteria, approximate inference/learning, on-line learning, etc.
