Bayesian Networks, Lecture 9. Edited by Dan Geiger from Nir Friedman’s slides.

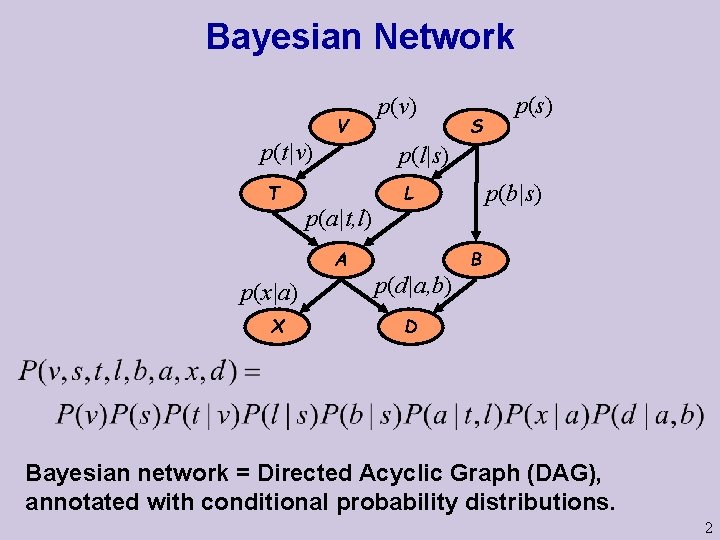

Bayesian Network

Bayesian network = Directed Acyclic Graph (DAG), annotated with conditional probability distributions.

[Figure: DAG over the nodes V, S, T, L, B, A, X, D, annotated with the local distributions p(v), p(s), p(t|v), p(l|s), p(b|s), p(a|t, l), p(x|a), p(d|a, b).]

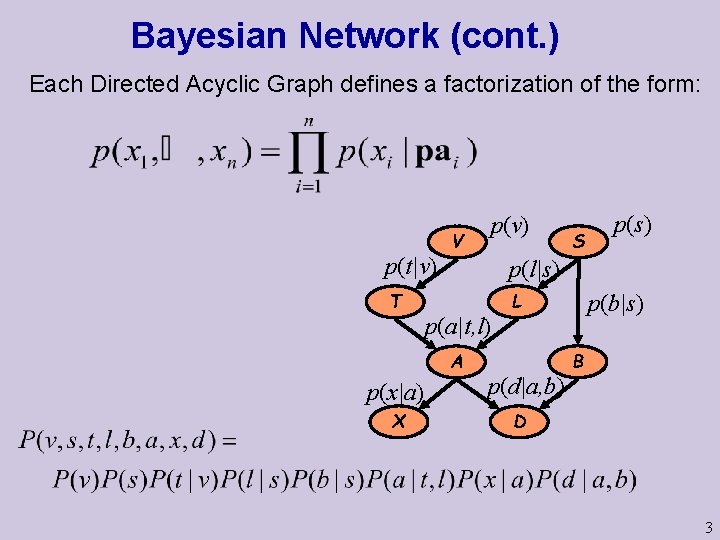

Bayesian Network (cont.)

Each Directed Acyclic Graph defines a factorization of the form:

$$p(v, s, t, l, b, a, x, d) = p(v)\, p(s)\, p(t \mid v)\, p(l \mid s)\, p(b \mid s)\, p(a \mid t, l)\, p(x \mid a)\, p(d \mid a, b)$$

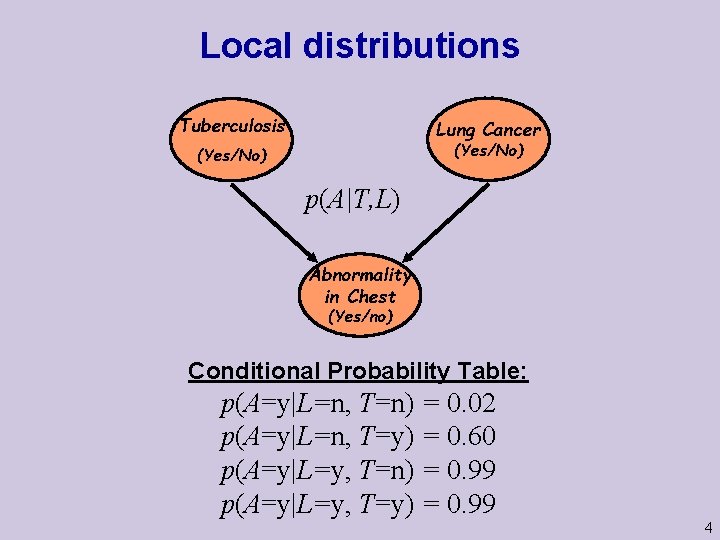

Local distributions

Tuberculosis (T) and Lung Cancer (L), each Yes/No, are the parents of Abnormality in Chest (A), Yes/No, with local distribution p(A|T, L).

Conditional Probability Table:

- p(A=y|L=n, T=n) = 0.02
- p(A=y|L=n, T=y) = 0.60
- p(A=y|L=y, T=n) = 0.99
- p(A=y|L=y, T=y) = 0.99
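A conditional probability table like this one maps naturally onto a lookup table. The following is a minimal sketch using the four values from the slide; the names `P_A_YES` and `p_a_given_tl` are illustrative assumptions, not part of the lecture.

```python
# CPT for p(A | T, L), with the four values taken from the slide.
# Keys are (L, T) pairs; each value is p(A = "y" | L, T).
# The names P_A_YES and p_a_given_tl are hypothetical.
P_A_YES = {
    ("n", "n"): 0.02,
    ("n", "y"): 0.60,
    ("y", "n"): 0.99,
    ("y", "y"): 0.99,
}


def p_a_given_tl(a: str, t: str, l: str) -> float:
    """Return p(A = a | T = t, L = l) for a, t, l in {"y", "n"}."""
    p_yes = P_A_YES[(l, t)]
    # A is binary, so p(A = n | ...) is the complement of p(A = y | ...).
    return p_yes if a == "y" else 1.0 - p_yes
```

Only p(A=y | ...) needs to be stored: since A is binary, each row of the full table is determined by one number, which is why this CPT has four free parameters.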

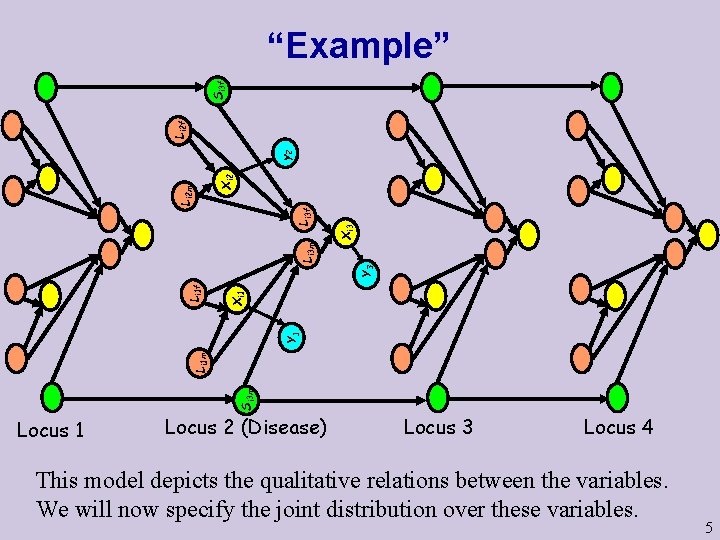

“Example”

[Figure: network over Locus 1, Locus 2 (Disease), Locus 3, and Locus 4, with nodes labeled L, X, S, and Y.]

This model depicts the qualitative relations between the variables. We will now specify the joint distribution over these variables.

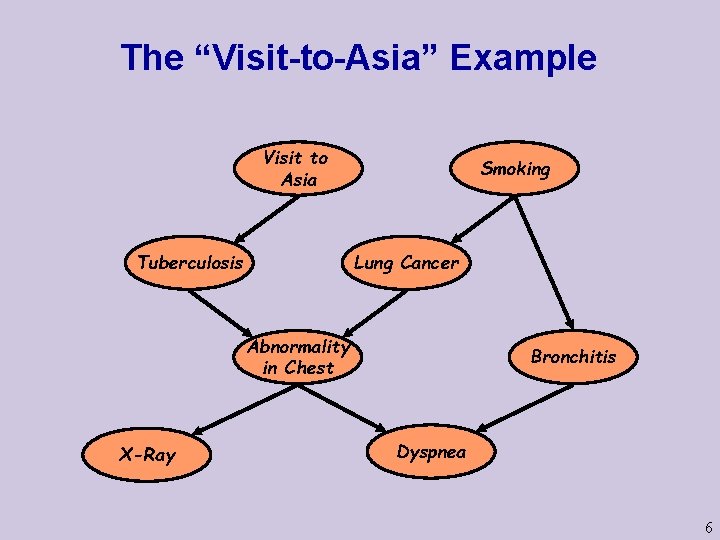

The “Visit-to-Asia” Example

[Figure: network over the nodes Visit to Asia, Smoking, Tuberculosis, Lung Cancer, Bronchitis, Abnormality in Chest, X-Ray, Dyspnea.]

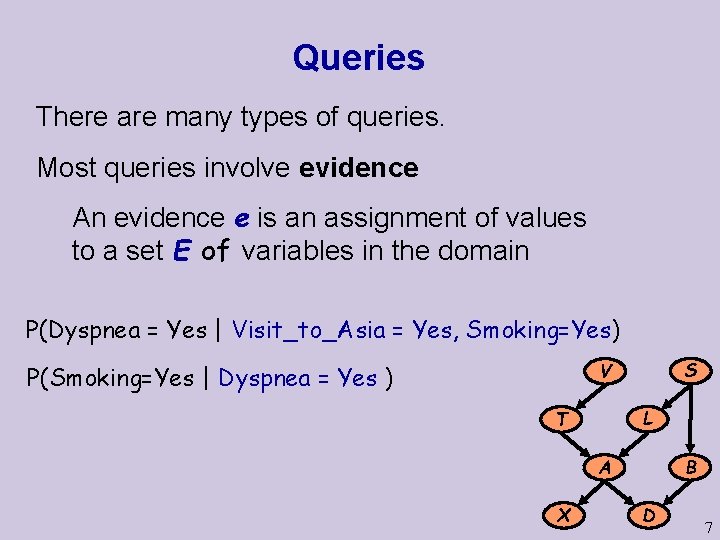

Queries

There are many types of queries. Most queries involve evidence.

An evidence e is an assignment of values to a set E of variables in the domain.

Examples:

- P(Dyspnea = Yes | Visit_to_Asia = Yes, Smoking = Yes)
- P(Smoking = Yes | Dyspnea = Yes)

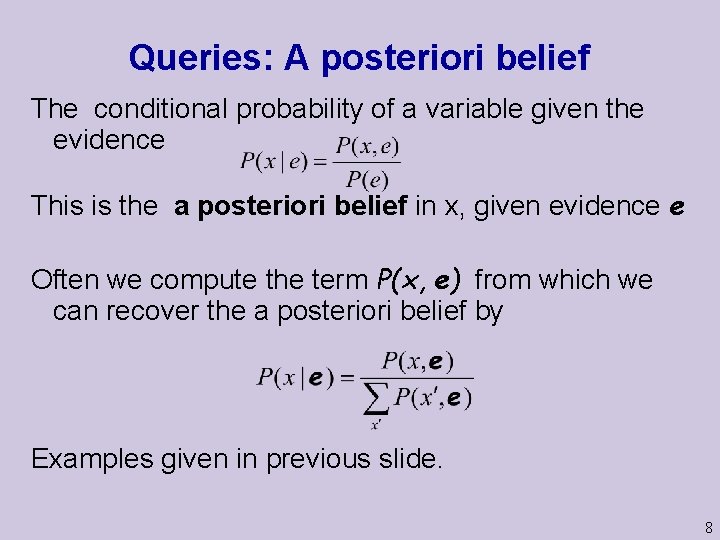

Queries: A posteriori belief

The conditional probability of a variable given the evidence:

$$P(x \mid e) = \frac{P(x, e)}{P(e)}$$

This is the a posteriori belief in x, given evidence e.

Often we compute the term P(x, e), from which we can recover the a posteriori belief by

$$P(x \mid e) = \frac{P(x, e)}{\sum_{x'} P(x', e)}$$

Examples are given in the previous slide.
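The recipe of computing P(x, e) for every value of x and then normalizing can be sketched by brute-force enumeration. The sketch below uses only the fragment T → A ← L. The CPT for A comes from the local-distributions slide, but the priors `P_T` and `P_L` are made-up numbers for illustration only (in the full network T and L depend on V and S).

```python
STATES = ("y", "n")

# Hypothetical priors, NOT from the lecture; chosen only for illustration.
P_T = {"y": 0.01, "n": 0.99}
P_L = {"y": 0.05, "n": 0.95}

# p(A = y | L, T), keyed by (L, T); values from the local-distributions slide.
P_A_YES = {("n", "n"): 0.02, ("n", "y"): 0.60, ("y", "n"): 0.99, ("y", "y"): 0.99}


def joint(t: str, l: str, a: str) -> float:
    """p(t, l, a) = p(t) p(l) p(a | t, l) for the three-node fragment."""
    p_a = P_A_YES[(l, t)]
    return P_T[t] * P_L[l] * (p_a if a == "y" else 1.0 - p_a)


def posterior_t(a_obs: str) -> dict:
    """Return P(T | A = a_obs) by computing P(t, e) and normalizing."""
    # P(t, e): sum the joint over the unobserved variable L.
    unnormalized = {t: sum(joint(t, l, a_obs) for l in STATES) for t in STATES}
    # P(e) is the sum of P(t, e) over all values of t.
    p_e = sum(unnormalized.values())
    return {t: p / p_e for t, p in unnormalized.items()}
```

Under these assumed priors, observing an abnormality (`posterior_t("y")`) raises the belief in tuberculosis above its prior, which is the diagnostic direction of reasoning. Enumeration is exponential in the number of unobserved variables, so this only illustrates the definition.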

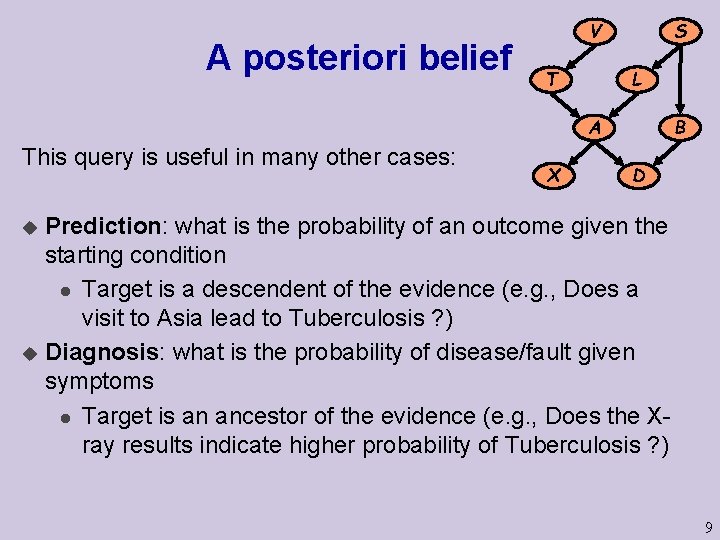

A posteriori belief

This query is useful in many other cases:

- Prediction: what is the probability of an outcome given the starting condition?
  - Target is a descendant of the evidence (e.g., does a visit to Asia lead to Tuberculosis?)
- Diagnosis: what is the probability of disease/fault given symptoms?
  - Target is an ancestor of the evidence (e.g., do the X-ray results indicate a higher probability of Tuberculosis?)

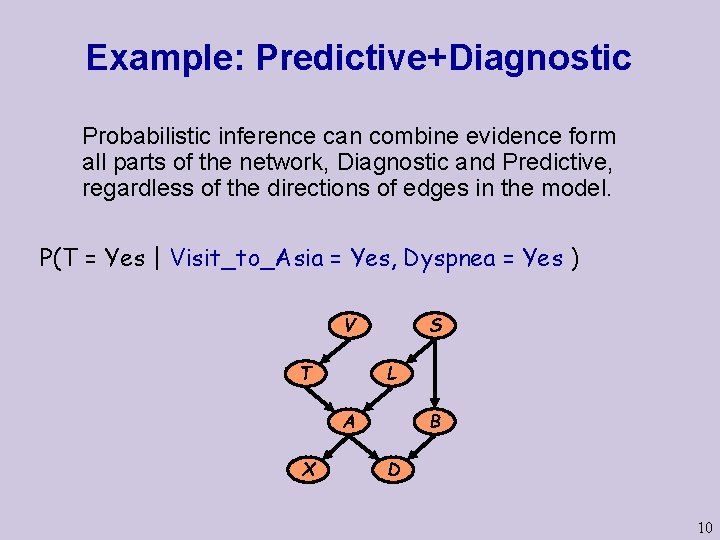

Example: Predictive+Diagnostic

Probabilistic inference can combine evidence from all parts of the network, diagnostic and predictive, regardless of the directions of edges in the model.

P(T = Yes | Visit_to_Asia = Yes, Dyspnea = Yes)

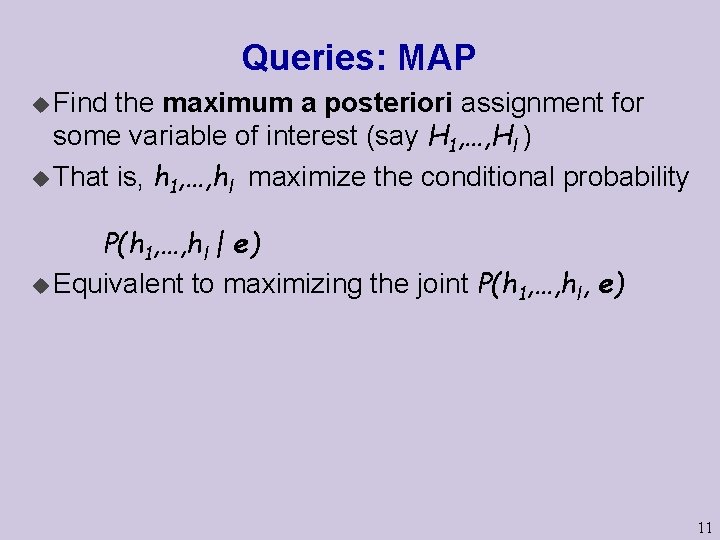

Queries: MAP

- Find the maximum a posteriori assignment for some variables of interest (say H1, …, Hl)
- That is, h1, …, hl maximize the conditional probability P(h1, …, hl | e)
- Equivalent to maximizing the joint P(h1, …, hl, e)
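Because P(e) does not depend on h1, …, hl, the maximizer of P(h | e) is also the maximizer of P(h, e), so no normalization is needed. A minimal sketch, again on the fragment T → A ← L: the priors `P_T` and `P_L` are hypothetical values chosen for illustration, and `map_assignment` is an illustrative name.

```python
from itertools import product

STATES = ("y", "n")

# Hypothetical priors, NOT from the lecture; chosen only for illustration.
P_T = {"y": 0.01, "n": 0.99}
P_L = {"y": 0.05, "n": 0.95}

# p(A = y | L, T), keyed by (L, T); values from the local-distributions slide.
P_A_YES = {("n", "n"): 0.02, ("n", "y"): 0.60, ("y", "n"): 0.99, ("y", "y"): 0.99}


def joint(t: str, l: str, a: str) -> float:
    """p(t, l, a) = p(t) p(l) p(a | t, l) for the three-node fragment."""
    p_a = P_A_YES[(l, t)]
    return P_T[t] * P_L[l] * (p_a if a == "y" else 1.0 - p_a)


def map_assignment(a_obs: str) -> tuple:
    """Return the (t, l) pair that maximizes the joint P(t, l, A = a_obs)."""
    # Maximizing the joint is enough: dividing by P(e) rescales every
    # candidate by the same constant and cannot change the argmax.
    return max(product(STATES, STATES), key=lambda tl: joint(tl[0], tl[1], a_obs))
```

With these assumed numbers, `map_assignment("y")` selects T = n, L = y: lung cancer alone is the most likely joint explanation of an observed abnormality.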

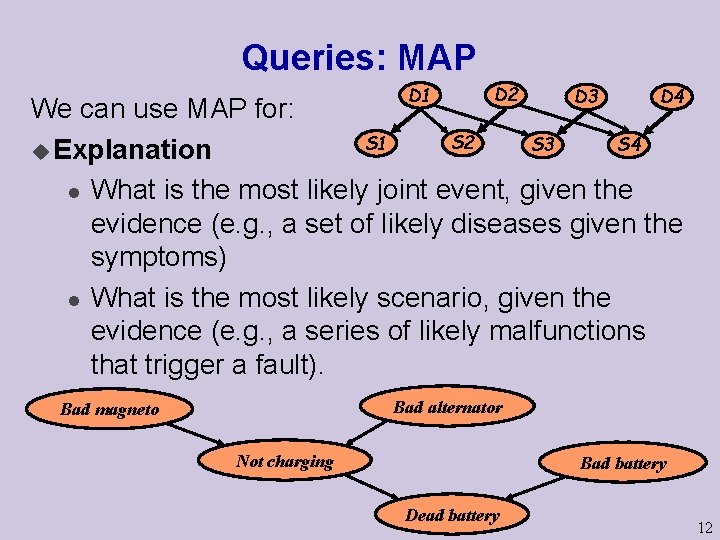

Queries: MAP

We can use MAP for:

- Explanation
  - What is the most likely joint event, given the evidence (e.g., a set of likely diseases given the symptoms)
  - What is the most likely scenario, given the evidence (e.g., a series of likely malfunctions that trigger a fault)

[Figures: diseases D1, …, D4 linked to symptoms S1, …, S4; a fault network over Bad alternator, Bad magneto, Not charging, Bad battery, Dead battery.]

Complexity of Inference

Thm: Computing P(X = x) in a Bayesian network is NP-hard.

Not surprising, since we can simulate Boolean gates.

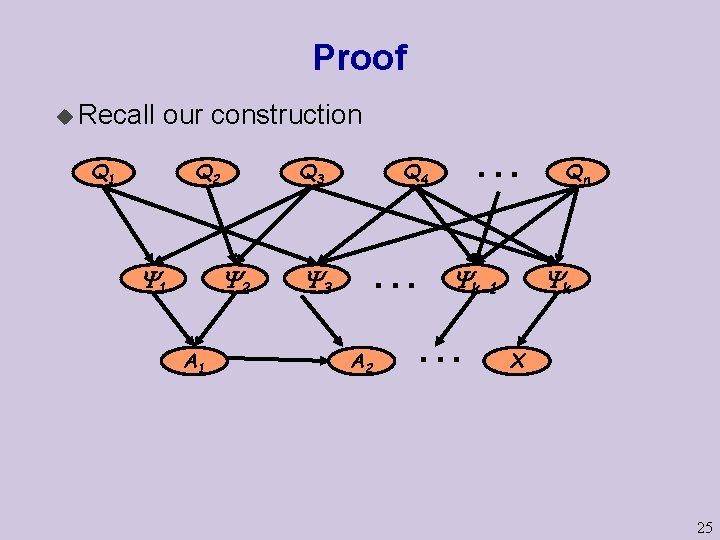

Proof

We reduce 3-SAT to Bayesian network computation.

Assume we are given a 3-SAT problem:

- Q1, …, Qn are propositions,
- φ1, …, φk are clauses, such that φi = li1 ∨ li2 ∨ li3, where each lij is a literal over Q1, …, Qn (e.g., Q1 = true),
- φ = φ1 ∧ … ∧ φk.

We will construct a Bayesian network s.t. P(X = t) > 0 iff φ is satisfiable.

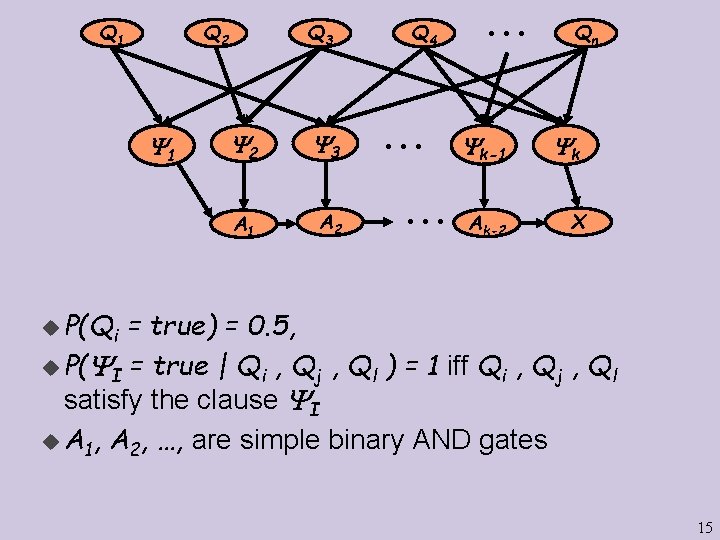

[Figure: propositions Q1, …, Qn feed clause nodes φ1, …, φk, which are combined by AND gates A1, …, Ak-2 into the output node X.]

- P(Qi = true) = 0.5
- P(φI = true | Qi, Qj, Ql) = 1 iff Qi, Qj, Ql satisfy the clause φI
- A1, A2, … are simple binary AND gates

It is easy to check:

- Polynomial number of variables
- Each Conditional Probability Table can be described by a small table (8 parameters at most)
- P(X = true) > 0 if and only if there exists a satisfying assignment to Q1, …, Qn

Conclusion: polynomial reduction of 3-SAT.
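The behavior of the constructed network can be checked on small formulas by brute force. The sketch below does not build the network node by node. It uses the fact that every clause node and AND gate is deterministic, so X = t exactly when the assignment to Q1, …, Qn satisfies every clause. The clause encoding (signed integers, `k` for Qk and `-k` for its negation) and the function names are assumptions made for illustration.

```python
from itertools import product


def clause_satisfied(clause, assignment):
    """True if at least one literal of the clause holds under the assignment."""
    # Literal k > 0 means Qk = true; literal -k means Qk = false.
    return any(assignment[abs(lit) - 1] == (lit > 0) for lit in clause)


def prob_x_true(clauses, n):
    """P(X = t) in the reduction network, by enumerating all 2^n assignments."""
    total = 0.0
    for assignment in product([False, True], repeat=n):
        # Deterministic gates: X = t iff every clause node is true.
        if all(clause_satisfied(c, assignment) for c in clauses):
            # Each assignment has prior probability 0.5^n.
            total += 0.5 ** n
    return total
```

For example, `prob_x_true([(1, 2, 3), (-1, -2, 3)], 3)` is positive because the formula is satisfiable, while a formula containing all eight sign patterns over three variables gives 0. The enumeration takes exponential time, which is consistent with the hardness result: the reduction is polynomial, but the inference it asks for is not expected to be.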

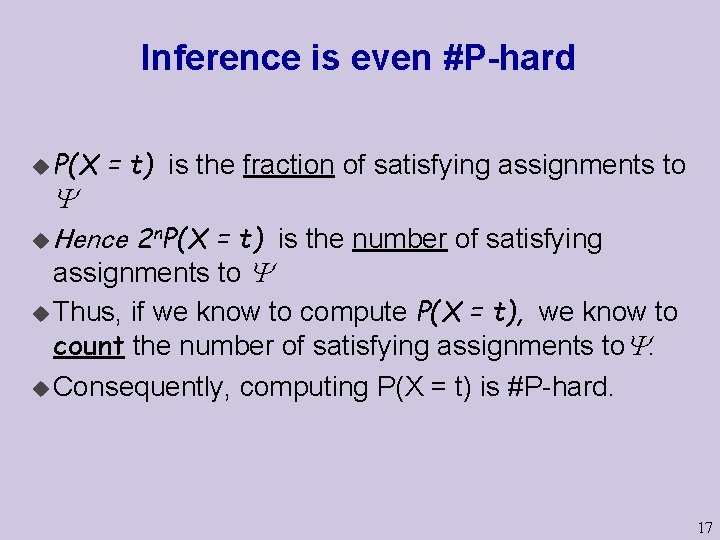

Inference is even #P-hard

- P(X = t) is the fraction of satisfying assignments to φ
- Hence 2^n P(X = t) is the number of satisfying assignments to φ
- Thus, if we know how to compute P(X = t), we know how to count the number of satisfying assignments to φ
- Consequently, computing P(X = t) is #P-hard
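The counting claim follows from summing out the propositions in the reduction network, where φ denotes the 3-SAT formula. A short derivation, assuming the uniform priors P(Qi = true) = 0.5 and deterministic gates from the construction; the notation #SAT(φ) for the number of satisfying assignments is introduced here for convenience (the `align*` environment requires `amsmath`):

```latex
\begin{align*}
P(X = t) &= \sum_{q \in \{t, f\}^n} P(Q = q)\, P(X = t \mid Q = q) \\
         &= \sum_{q \in \{t, f\}^n} 2^{-n}\, \mathbf{1}[\, q \text{ satisfies } \phi \,] \\
         &= \frac{\#\mathrm{SAT}(\phi)}{2^n}
\end{align*}
```

Multiplying both sides by 2^n recovers the number of satisfying assignments.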

Hardness - Notes

- We used deterministic relations in our construction
- The same construction works if we use (1 - ε, ε) instead of (1, 0) in each gate for any ε < 0.5. Homework: Prove it.
- Hardness does not mean we cannot solve inference
  - It implies that we cannot find a general procedure that works efficiently for all networks (unless P = NP)
  - For particular families of networks, we can have provably efficient procedures (e.g., trees, HMMs)
  - Variable elimination algorithms

Approximation

- Until now, we examined exact computation
- In many applications, approximations are sufficient
  - Example: P(X = x|e) = 0.3183098861838
  - Maybe P(X = x|e) ≈ 0.3 is a good enough approximation
  - e.g., we take action only if P(X = x|e) > 0.5
- Can we find good approximation algorithms?

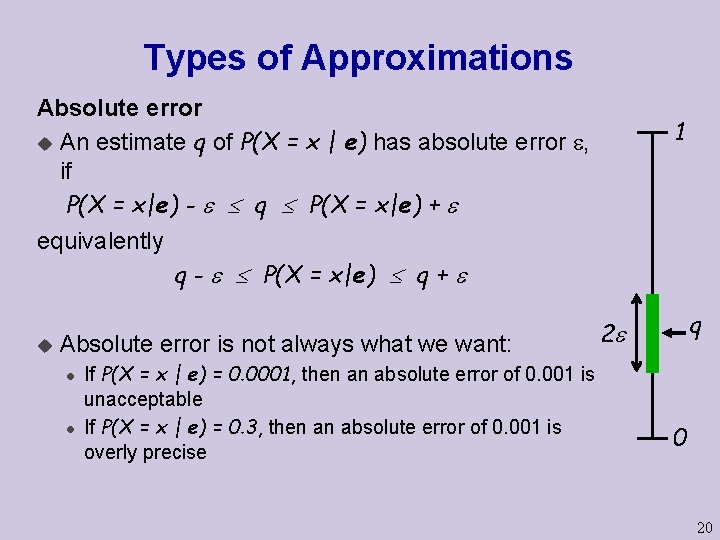

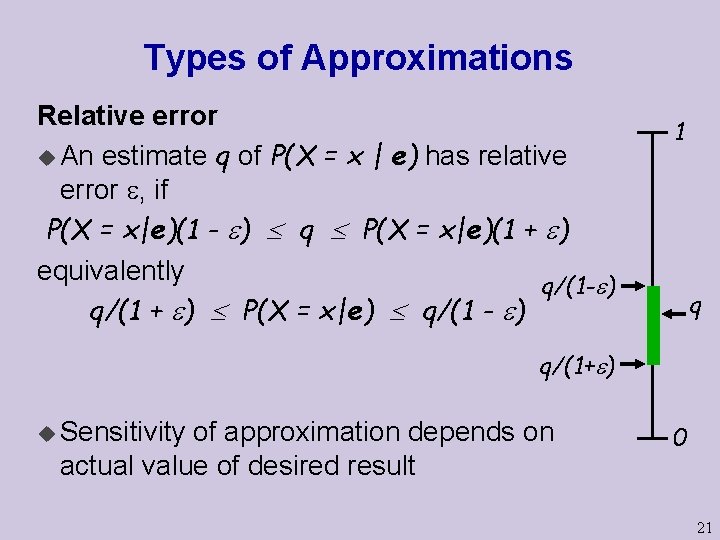

Types of Approximations

Absolute error

- An estimate q of P(X = x | e) has absolute error ε, if P(X = x|e) - ε ≤ q ≤ P(X = x|e) + ε, equivalently q - ε ≤ P(X = x|e) ≤ q + ε
- Absolute error is not always what we want:
  - If P(X = x | e) = 0.0001, then an absolute error of 0.001 is unacceptable
  - If P(X = x | e) = 0.3, then an absolute error of 0.001 is overly precise

[Figure: interval of width 2ε around q on the [0, 1] line.]

Types of Approximations

Relative error

- An estimate q of P(X = x | e) has relative error ε, if P(X = x|e)(1 - ε) ≤ q ≤ P(X = x|e)(1 + ε), equivalently q/(1 + ε) ≤ P(X = x|e) ≤ q/(1 - ε)
- Sensitivity of approximation depends on actual value of desired result

[Figure: interval from q/(1 + ε) to q/(1 - ε) around q on the [0, 1] line.]
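The two error definitions can be compared directly as predicates. This is a minimal sketch; the function names are illustrative, `p` stands for the true value P(X = x | e), `q` for the estimate, and `eps` for ε.

```python
def within_absolute_error(q: float, p: float, eps: float) -> bool:
    """True if estimate q of the true value p has absolute error at most eps."""
    return p - eps <= q <= p + eps


def within_relative_error(q: float, p: float, eps: float) -> bool:
    """True if estimate q of the true value p has relative error at most eps."""
    return p * (1 - eps) <= q <= p * (1 + eps)


# A tenfold overestimate of a small probability passes the absolute test
# with eps = 0.001 but fails the relative test with eps = 0.1.
small_p, bad_q = 0.0001, 0.001
print(within_absolute_error(bad_q, small_p, 0.001))
print(within_relative_error(bad_q, small_p, 0.1))
```

Note that when p = 0 the relative-error interval collapses to the single point 0, so only q = 0 qualifies.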

Complexity

- Exact inference is hard.
- Is approximate inference any easier?

Complexity: Relative Error

- Suppose that q is a relative error estimate of P(X = t).
- If φ is not satisfiable, then P(X = t) = 0. Hence, 0 = P(X = t)(1 - ε) ≤ q ≤ P(X = t)(1 + ε) = 0, namely, q = 0. Thus, if q > 0, then φ is satisfiable.

An immediate consequence:

Thm: Given ε, finding an ε-relative error approximation is NP-hard.

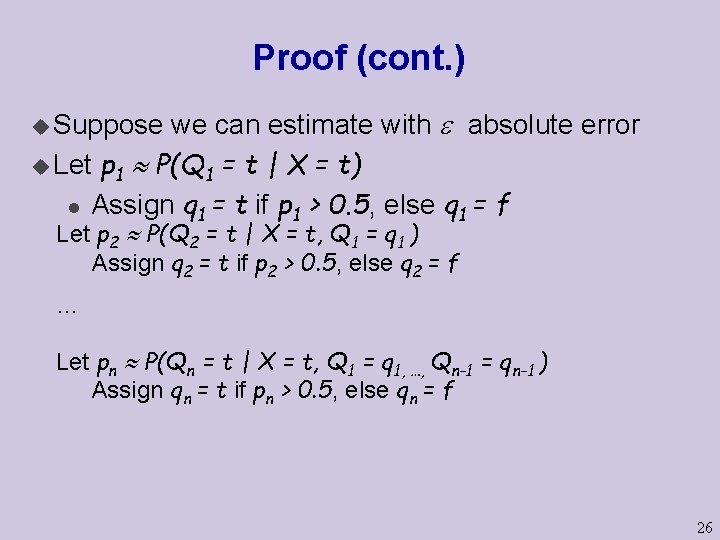

Complexity: Absolute error

- We can find absolute error approximations to P(X = x) with high probability (via sampling).
  - We will see such algorithms next class.
- However, once we have evidence, the problem is harder.

Thm: If ε < 0.5, then finding an estimate of P(X = x|e) with absolute error ε is NP-hard.

Proof

Recall our construction:

[Figure: propositions Q1, …, Qn feed clause nodes φ1, …, φk, which are combined by AND gates A1, A2, … into the output node X.]

Proof (cont.)

Suppose we can estimate with absolute error ε.

- Let p1 ≈ P(Q1 = t | X = t). Assign q1 = t if p1 > 0.5, else q1 = f
- Let p2 ≈ P(Q2 = t | X = t, Q1 = q1). Assign q2 = t if p2 > 0.5, else q2 = f
- …
- Let pn ≈ P(Qn = t | X = t, Q1 = q1, …, Qn-1 = qn-1). Assign qn = t if pn > 0.5, else qn = f

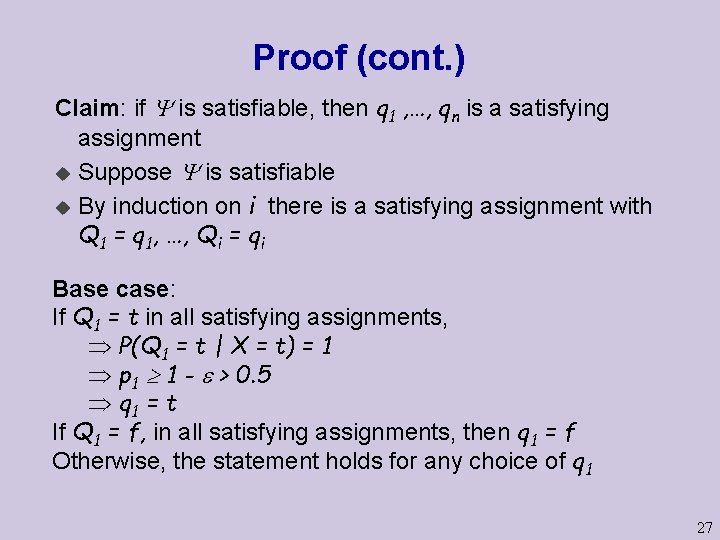

Proof (cont.)

Claim: if φ is satisfiable, then q1, …, qn is a satisfying assignment.

- Suppose φ is satisfiable
- By induction on i, there is a satisfying assignment with Q1 = q1, …, Qi = qi

Base case:

- If Q1 = t in all satisfying assignments, then P(Q1 = t | X = t) = 1 ⇒ p1 ≥ 1 - ε > 0.5 ⇒ q1 = t
- If Q1 = f in all satisfying assignments, then P(Q1 = t | X = t) = 0 ⇒ p1 ≤ ε < 0.5 ⇒ q1 = f
- Otherwise, the statement holds for any choice of q1

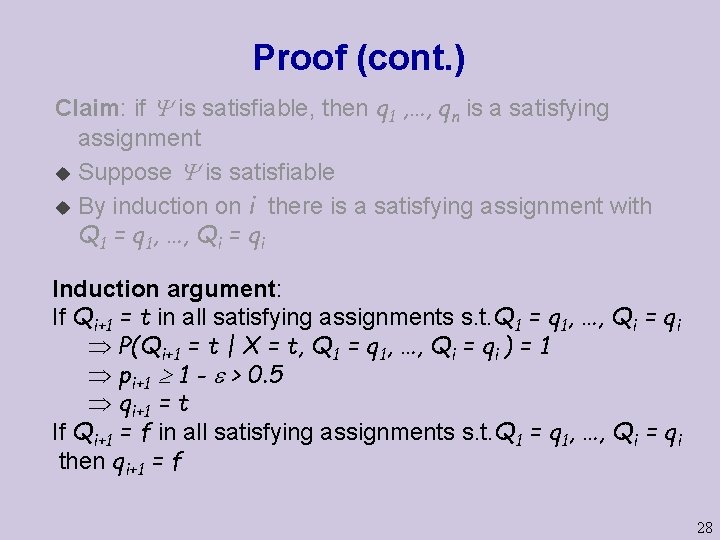

Proof (cont.)

Claim: if φ is satisfiable, then q1, …, qn is a satisfying assignment.

- Suppose φ is satisfiable
- By induction on i, there is a satisfying assignment with Q1 = q1, …, Qi = qi

Induction argument:

- If Qi+1 = t in all satisfying assignments s.t. Q1 = q1, …, Qi = qi, then P(Qi+1 = t | X = t, Q1 = q1, …, Qi = qi) = 1 ⇒ pi+1 ≥ 1 - ε > 0.5 ⇒ qi+1 = t
- If Qi+1 = f in all satisfying assignments s.t. Q1 = q1, …, Qi = qi, then P(Qi+1 = t | X = t, Q1 = q1, …, Qi = qi) = 0 ⇒ pi+1 ≤ ε < 0.5 ⇒ qi+1 = f
- Otherwise, the statement holds for any choice of qi+1

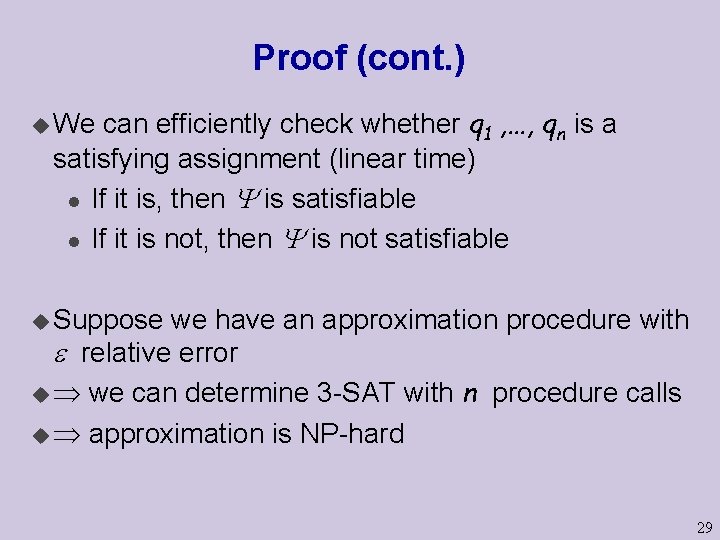

Proof (cont.)

- We can efficiently check whether q1, …, qn is a satisfying assignment (linear time)
  - If it is, then φ is satisfiable
  - If it is not, then φ is not satisfiable
- Suppose we have an approximation procedure with absolute error ε < 0.5
  ⇒ we can determine 3-SAT with n procedure calls
  ⇒ approximation is NP-hard
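The whole argument can be simulated on small formulas. In the sketch below, `noisy_conditional` is a stand-in for the hypothetical approximation procedure. It computes the exact conditional by enumeration and then perturbs it by at most `eps`, which is an assumption made purely so the example runs (a real oracle would not enumerate). Clauses are encoded as tuples of signed integers, `k` for Qk and `-k` for its negation; that encoding is also an assumption.

```python
import random
from itertools import product


def satisfies(clauses, assignment):
    """True if the assignment satisfies every clause."""
    return all(any(assignment[abs(l) - 1] == (l > 0) for l in c) for c in clauses)


def noisy_conditional(clauses, n, prefix, eps, rng):
    """Stand-in oracle for P(Q_i = t | X = t, Q_1..Q_{i-1} = prefix), error <= eps."""
    i = len(prefix)
    count_true = count_all = 0
    for rest in product([False, True], repeat=n - i):
        if satisfies(clauses, tuple(prefix) + rest):
            count_all += 1
            count_true += rest[0]  # rest[0] is the value of Q_i
    # If no satisfying extension exists the conditional is undefined; return 0.
    exact = count_true / count_all if count_all else 0.0
    # The perturbed value is not clamped to [0, 1]; only the 0.5 threshold matters.
    return exact + rng.uniform(-eps, eps)


def decide_sat(clauses, n, eps=0.4, seed=0):
    """Decide satisfiability with n oracle calls followed by one linear check."""
    rng = random.Random(seed)
    prefix = []
    for _ in range(n):
        p = noisy_conditional(clauses, n, prefix, eps, rng)
        prefix.append(p > 0.5)  # assign q_i = t iff the estimate exceeds 0.5
    assignment = tuple(prefix)
    return satisfies(clauses, assignment), assignment
```

Calling `decide_sat([(1, 2, 3), (-1, -2, 3), (1, -2, -3)], 3)` returns a flag and the assignment built by thresholding. Because `eps` is below 0.5, a conditional of exactly 1 or 0 is never pushed across the threshold, which is the step the induction relies on.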
