Artificial Neural Networks

- Slides: 16

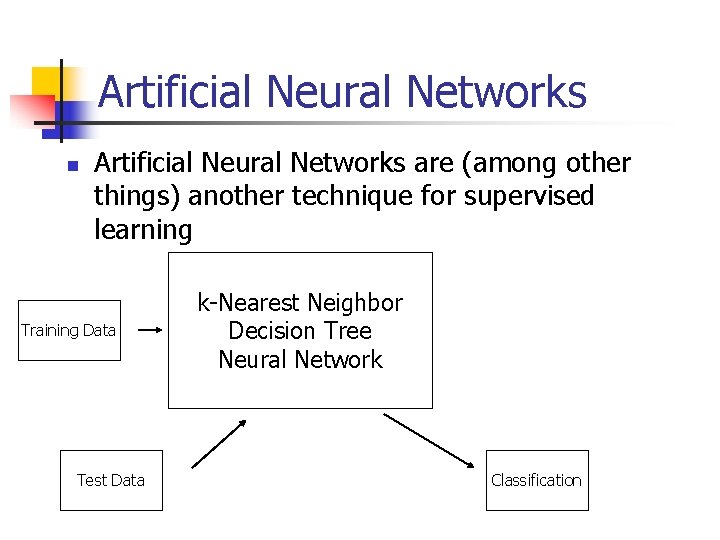

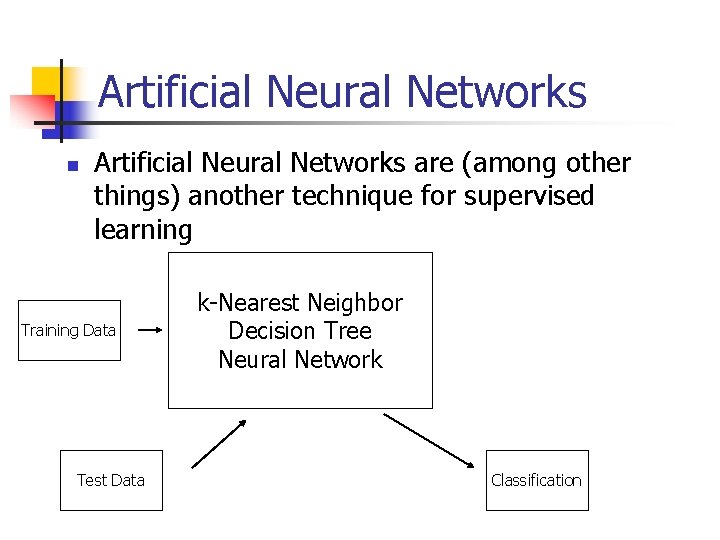

Artificial Neural Networks

- Artificial neural networks are (among other things) another technique for supervised learning.

Figure: Training Data, Test Data; classifiers: k-Nearest Neighbor, Decision Tree, Neural Network; Classification.

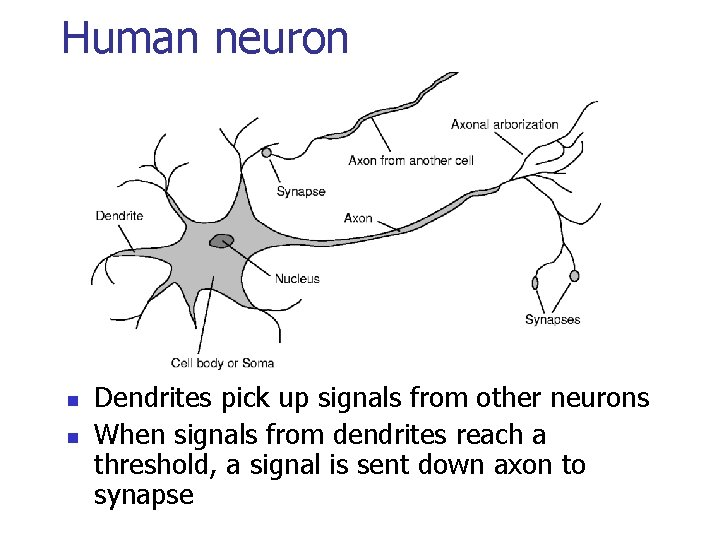

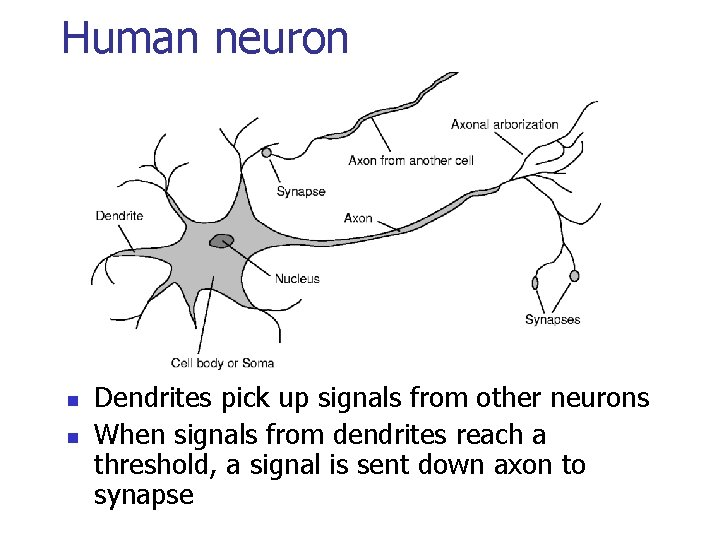

Human neuron

- Dendrites pick up signals from other neurons.
- When the signals from the dendrites reach a threshold, a signal is sent down the axon to the synapse.

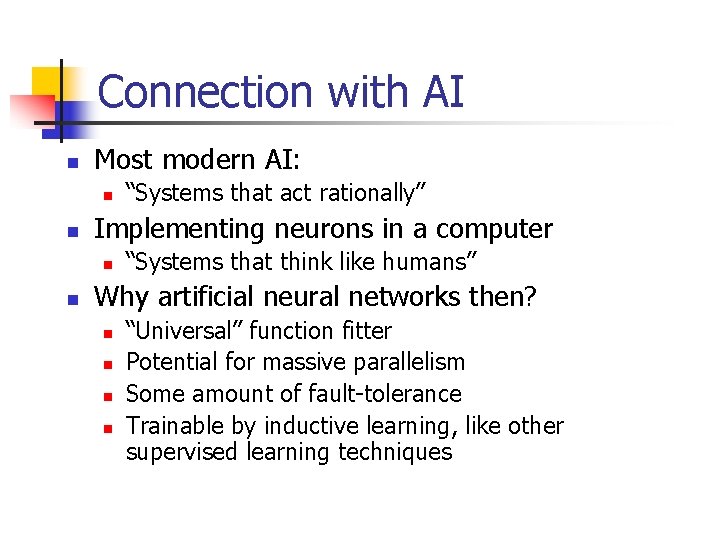

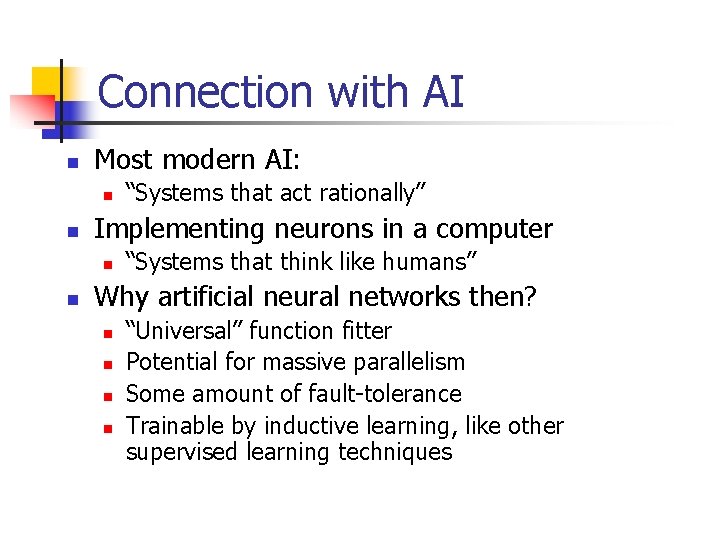

Connection with AI

- Most modern AI: “Systems that act rationally”
- Implementing neurons in a computer: “Systems that think like humans”
- Why artificial neural networks, then?
  - “Universal” function fitter
  - Potential for massive parallelism
  - Some amount of fault tolerance
  - Trainable by inductive learning, like other supervised learning techniques

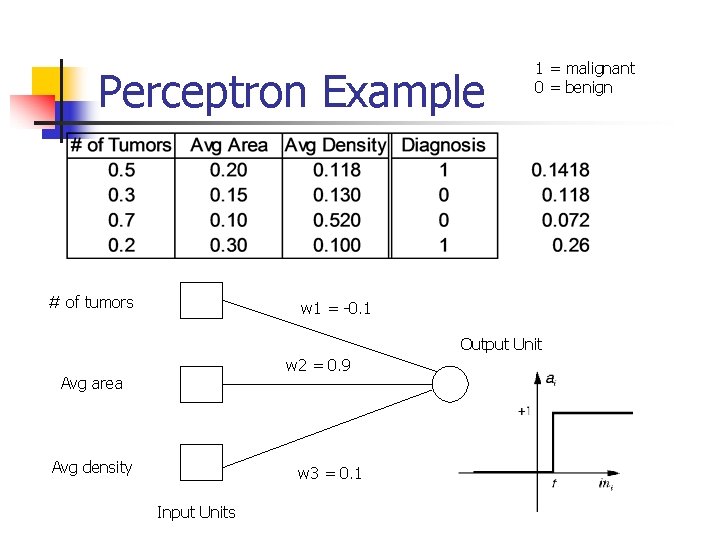

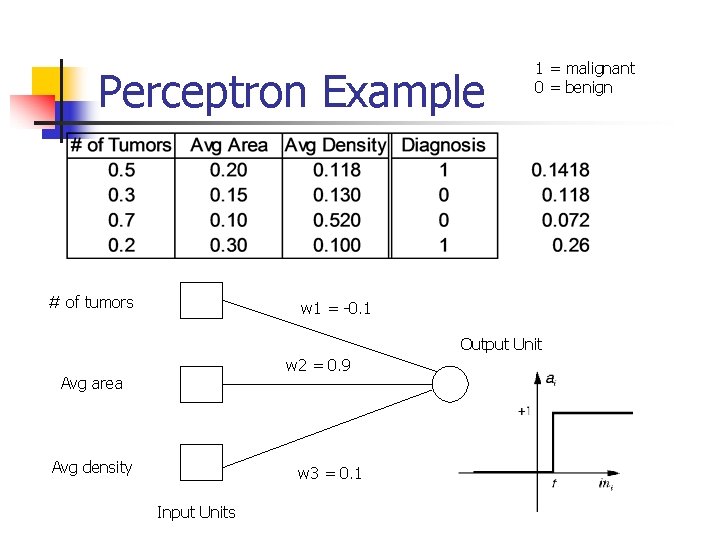

Perceptron Example

Figure: three input units (# of tumors, Avg area, Avg density) connected to one output unit (1 = malignant, 0 = benign) with weights $w_1 = -0.1$, $w_2 = 0.9$, $w_3 = 0.1$.

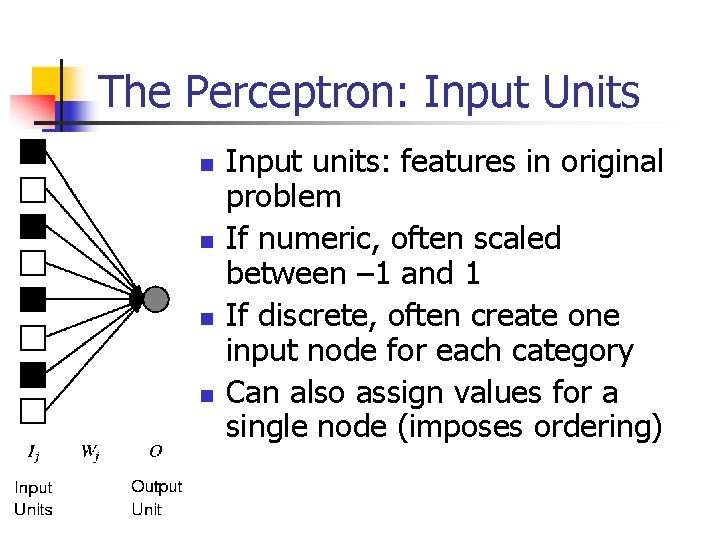

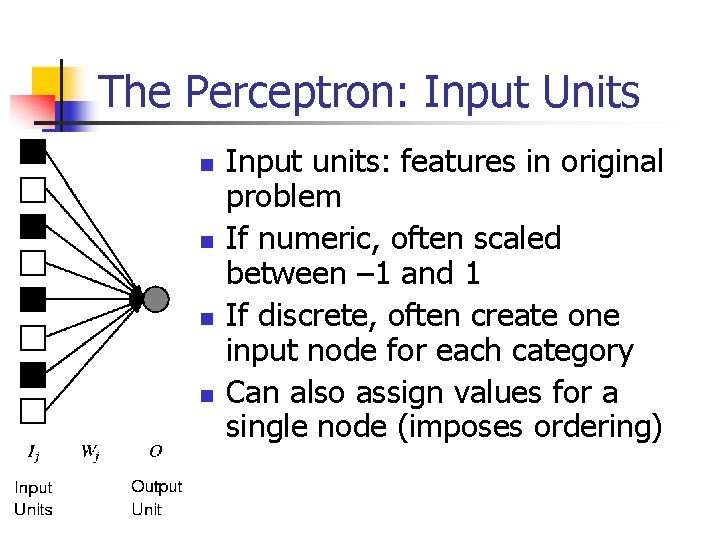

The Perceptron: Input Units

- Input units: the features in the original problem.
- If numeric, often scaled between −1 and 1.
- If discrete, often create one input node for each category.
  - Can also assign values for a single node (imposes an ordering).

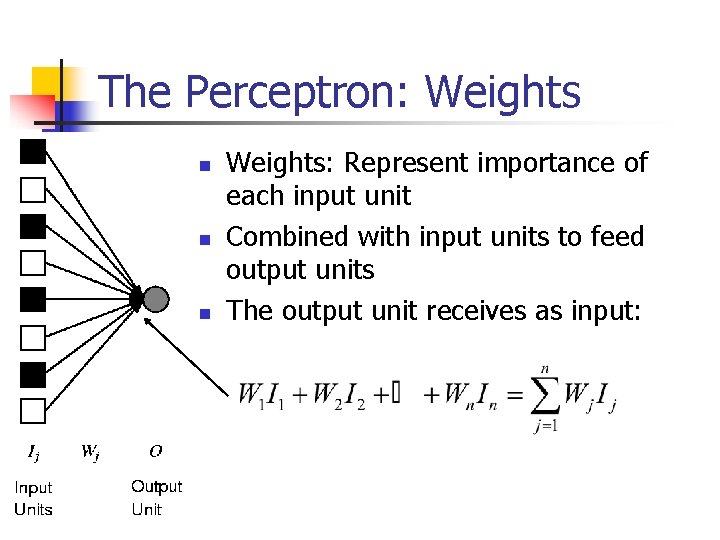

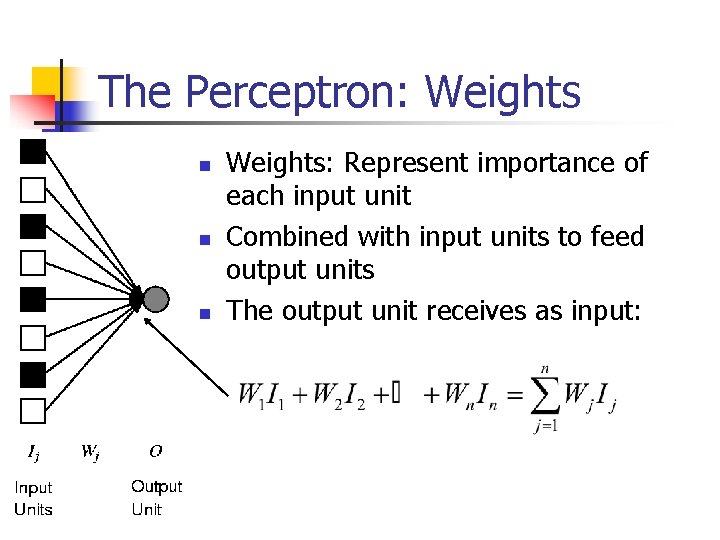
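A minimal sketch of the three encodings above. The helper names (`scale_to_unit_range`, `one_hot`, `ordinal`) are hypothetical, the numeric range `lo`/`hi` is assumed to be known in advance (for example, taken from the training data), and the evenly spaced values in `ordinal` are one illustrative choice among many.

```python
def scale_to_unit_range(value, lo, hi):
    """Linearly map a numeric feature from [lo, hi] onto [-1, 1]."""
    return 2.0 * (value - lo) / (hi - lo) - 1.0


def one_hot(category, categories):
    """One input node per category: 1.0 on the matching node, 0.0 elsewhere."""
    return [1.0 if c == category else 0.0 for c in categories]


def ordinal(category, categories):
    """A single input node with evenly spaced values in [-1, 1].

    This imposes an ordering on the categories (assumes at least two).
    """
    idx = categories.index(category)
    return -1.0 + 2.0 * idx / (len(categories) - 1)


print(scale_to_unit_range(5, 0, 10))               # 0.0
print(one_hot("green", ["red", "green", "blue"]))  # [0.0, 1.0, 0.0]
print(ordinal("high", ["low", "medium", "high"]))  # 1.0
```

One-hot encoding avoids the artificial ordering that `ordinal` introduces, at the cost of one input node per category.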

The Perceptron: Weights

- Weights represent the importance of each input unit.
- They are combined with the input units to feed the output units.
- The output unit receives as input:

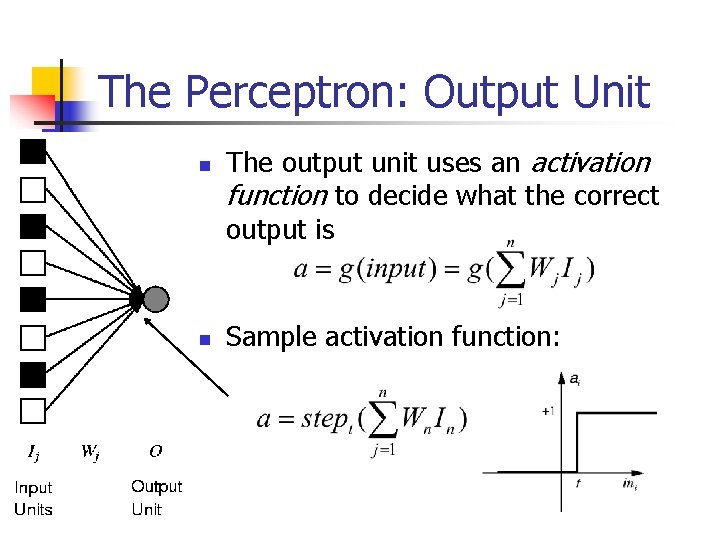

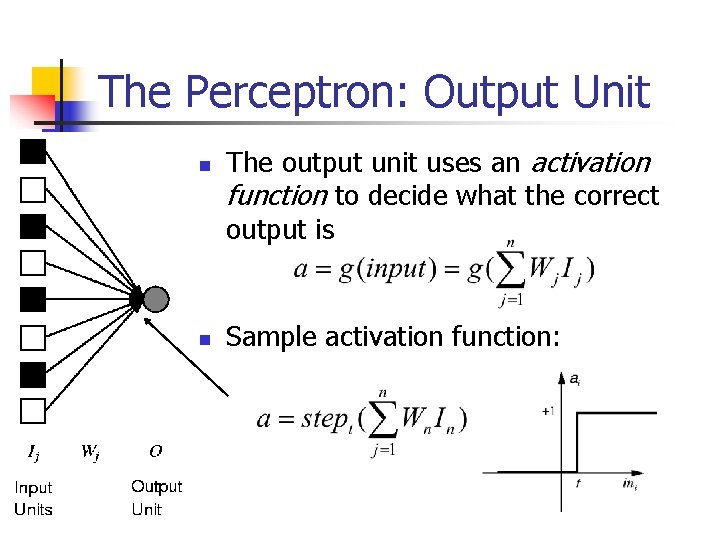
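Assuming the usual formulation, the output unit's input is the weighted sum of the input units, $\mathrm{in} = \sum_i w_i x_i$. As a worked illustration with the tumor example's weights (assuming $w_1, w_2, w_3$ pair with # of tumors, average area, and average density in that order) and a hypothetical, already-scaled patient $x = (0.5, 0.8, -0.2)$:

$$
\mathrm{in} = \sum_{i=1}^{3} w_i x_i = (-0.1)(0.5) + (0.9)(0.8) + (0.1)(-0.2) = -0.05 + 0.72 - 0.02 = 0.65
$$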

The Perceptron: Output Unit

- The output unit uses an activation function to decide what the correct output is.
- Sample activation function:

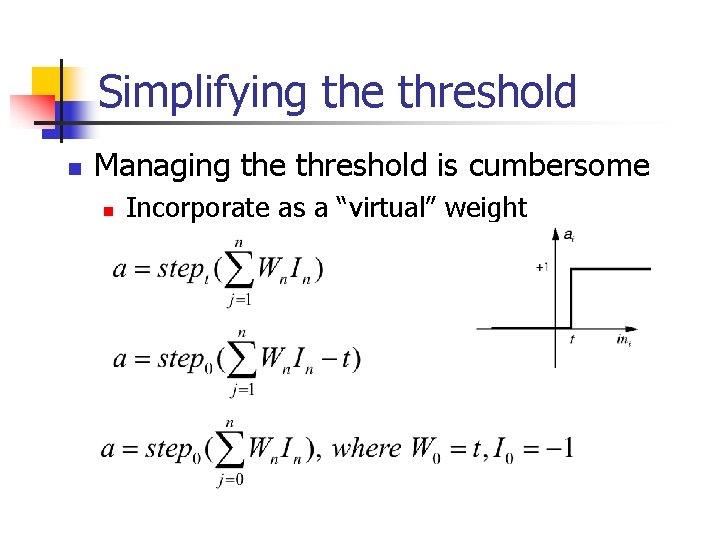

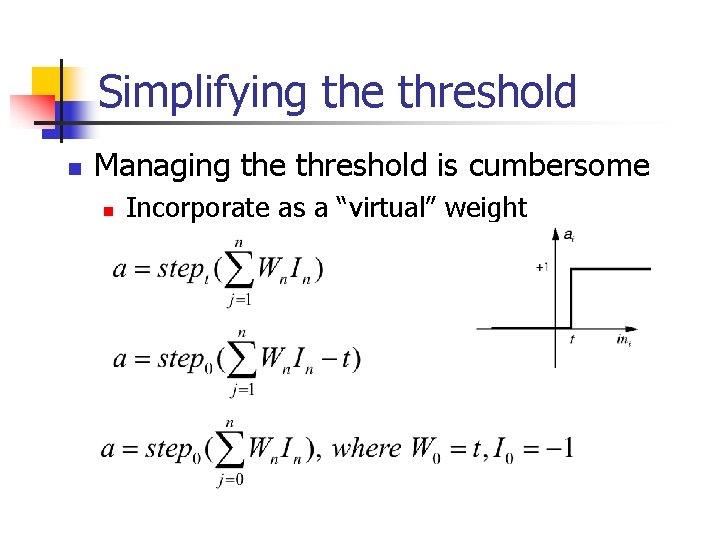
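The sample activation function itself is only in the figure, so this sketch assumes the common step function: output 1 when the weighted input reaches a threshold, otherwise 0. It reuses the tumor example's weights, assuming they pair with # of tumors, average area, and average density in that order; the patient values and both thresholds are hypothetical.

```python
def step(total, threshold):
    """Step activation: output 1 once the weighted input reaches the threshold."""
    return 1 if total >= threshold else 0


def perceptron_output(weights, inputs, threshold):
    """Weighted sum of the inputs, passed through the step activation."""
    total = sum(w * x for w, x in zip(weights, inputs))
    return step(total, threshold)


# Weights from the tumor example (# of tumors, avg area, avg density)
tumor_weights = [-0.1, 0.9, 0.1]
# Hypothetical patient, already scaled to [-1, 1]
patient = [0.5, 0.8, -0.2]

# The weighted sum is about 0.65, so the result depends on the chosen threshold
print(perceptron_output(tumor_weights, patient, threshold=0.5))  # 1 (malignant)
print(perceptron_output(tumor_weights, patient, threshold=0.7))  # 0 (benign)
```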

Simplifying the threshold

- Managing the threshold is cumbersome.
- Incorporate it as a “virtual” weight.

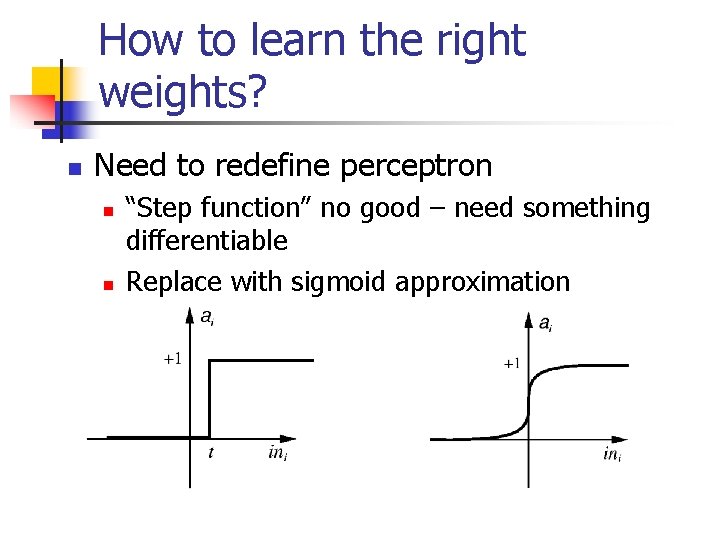

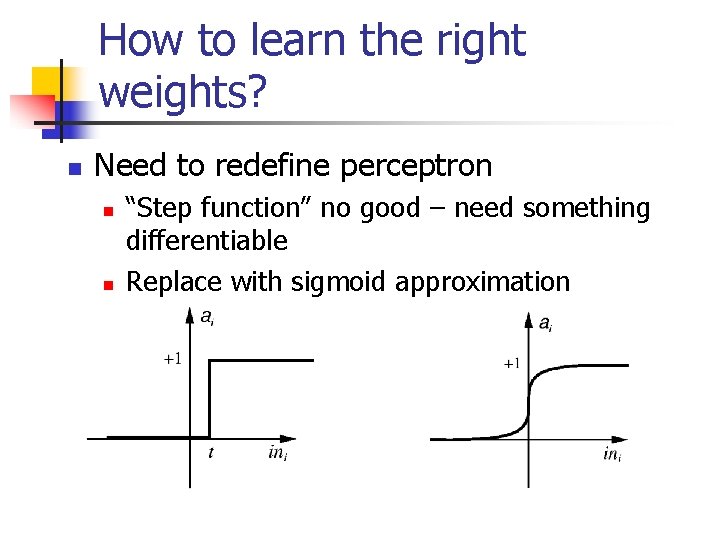
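One common way to fold the threshold $t$ into the weights, sketched under the assumption that the virtual weight is $w_0 = t$ paired with a constant extra input $x_0 = -1$ (some texts use $x_0 = 1$ and $w_0 = -t$ instead). The test $\sum_{i \ge 1} w_i x_i \ge t$ then becomes $\sum_{i \ge 0} w_i x_i \ge 0$, so the unit always compares against 0 and $t$ can be learned like any other weight. Function names and inputs are hypothetical.

```python
def output_with_threshold(weights, inputs, threshold):
    """Original form: compare the weighted sum against an explicit threshold."""
    total = sum(w * x for w, x in zip(weights, inputs))
    return 1 if total >= threshold else 0


def output_with_virtual_weight(weights_with_bias, inputs):
    """Threshold folded in: weights_with_bias[0] is the virtual weight w0 = t,
    paired with a constant input x0 = -1, so the comparison is always against 0."""
    extended_inputs = [-1.0] + list(inputs)
    total = sum(w * x for w, x in zip(weights_with_bias, extended_inputs))
    return 1 if total >= 0 else 0


weights = [-0.1, 0.9, 0.1]
inputs = [0.5, 0.8, -0.2]
for t in (0.5, 0.7):
    # Both forms should agree for each threshold
    print(t, output_with_threshold(weights, inputs, t),
          output_with_virtual_weight([t] + weights, inputs))
```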

How to learn the right weights?

- Need to redefine the perceptron.
  - “Step function” no good: need something differentiable.
  - Replace it with a sigmoid approximation.

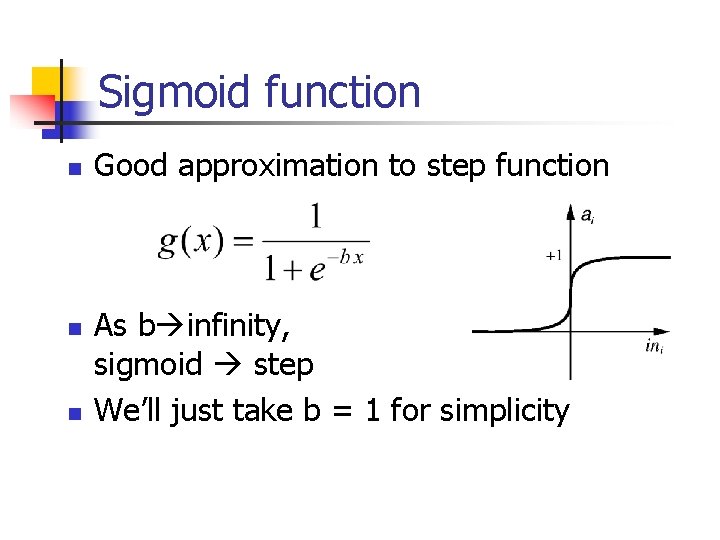

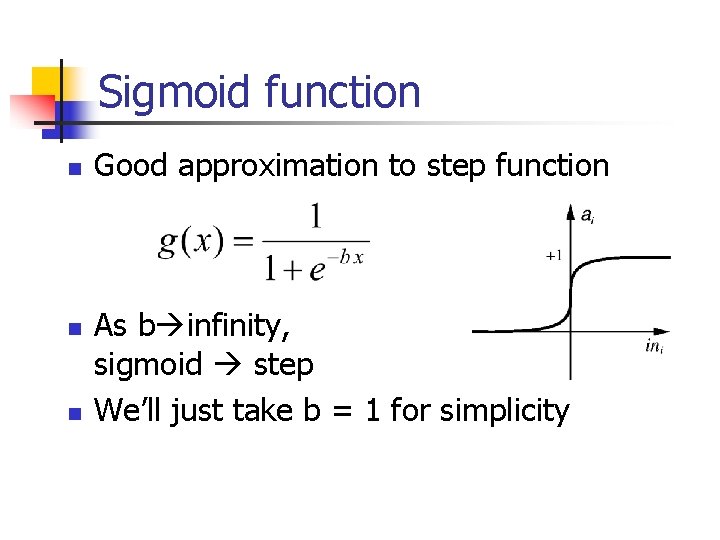

Sigmoid function

- Good approximation to the step function.
- As $b \to \infty$, sigmoid $\to$ step.
- We’ll just take $b = 1$ for simplicity.

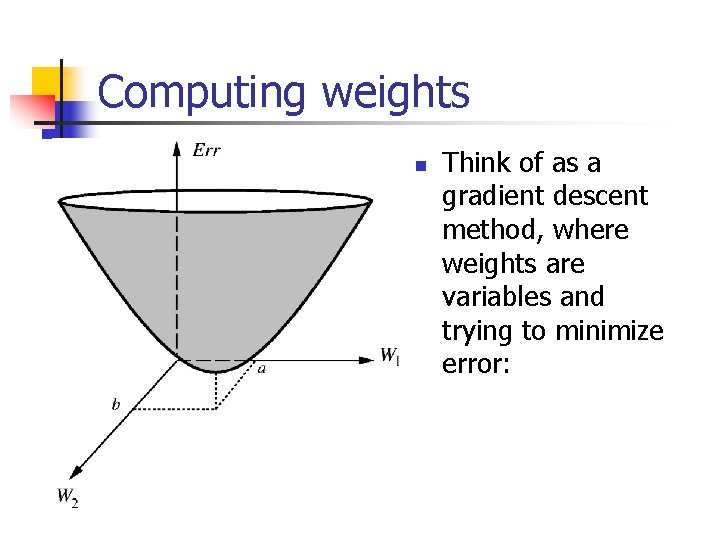

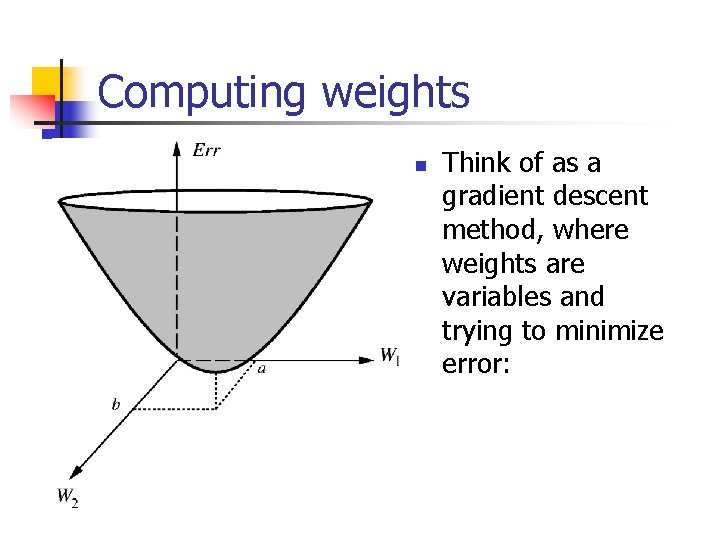
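A small sketch of the sigmoid, assuming the slide's form is $\sigma(x) = 1/(1 + e^{-bx})$ with steepness parameter $b$. It also computes the derivative $b\,\sigma(x)\,(1 - \sigma(x))$, which exists everywhere, unlike the step function's. The function names are illustrative.

```python
import math


def sigmoid(x, b=1.0):
    """1 / (1 + e^(-b*x)), computed so that large |b*x| cannot overflow."""
    z = b * x
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def sigmoid_derivative(x, b=1.0):
    """d/dx sigmoid(x, b) = b * s * (1 - s), where s = sigmoid(x, b)."""
    s = sigmoid(x, b)
    return b * s * (1.0 - s)


# Larger b makes the curve steeper around 0, approaching the step function
for b in (1, 10, 100):
    print(b, round(sigmoid(-0.1, b), 3), round(sigmoid(0.1, b), 3))
```

The two-branch form is a standard guard: evaluating `math.exp(-z)` directly for a large negative `z` would raise `OverflowError`.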

Computing weights

- Think of it as a gradient descent method, where the weights are the variables and we are trying to minimize the error:

The Perceptron Learning Rule: How do we compute weights?

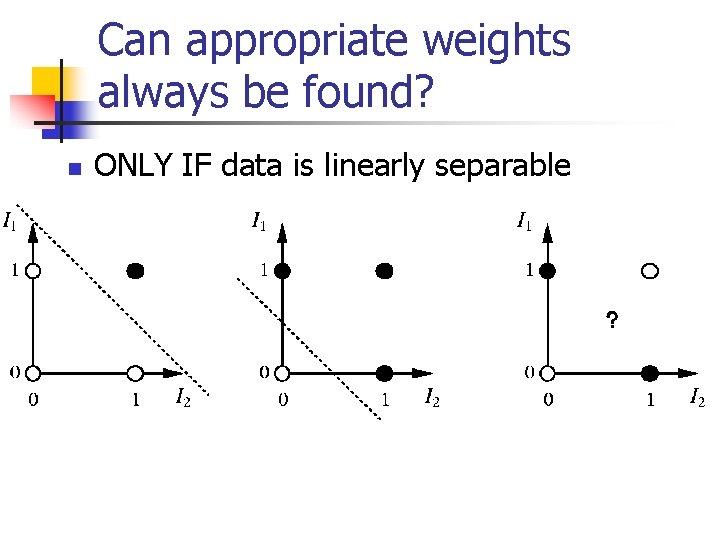

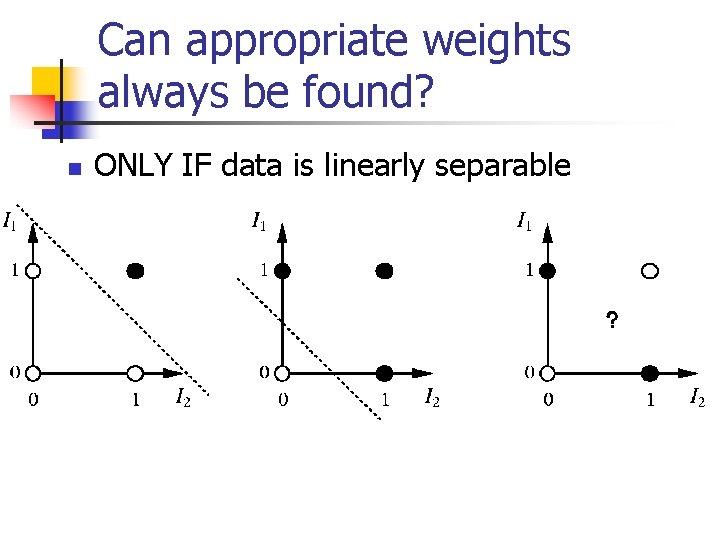
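A minimal sketch of this gradient-descent view for a single sigmoid unit ($b = 1$), assuming the error being minimized is the sum of squared errors $E = \frac{1}{2}\sum_d (t_d - o_d)^2$ over training examples $d$ (the exact rule on the slide is in the figure). Differentiating gives the batch update $w_i \leftarrow w_i + \eta \sum_d (t_d - o_d)\, o_d (1 - o_d)\, x_{i,d}$ with learning rate $\eta$. The training data (logical AND, with a constant $-1$ input for the virtual threshold weight), $\eta$, the epoch count, and all names are illustrative.

```python
import math


def sigmoid(z):
    """Logistic sigmoid with b = 1, written to avoid overflow for large |z|."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def output(weights, x):
    return sigmoid(sum(w * xi for w, xi in zip(weights, x)))


def squared_error(weights, data):
    """E = 1/2 * sum over examples of (t - o)^2."""
    return 0.5 * sum((t - output(weights, x)) ** 2 for x, t in data)


def train(data, eta=0.5, epochs=2000):
    n = len(data[0][0])
    weights = [0.0] * n
    for _ in range(epochs):
        # Accumulate -dE/dw_i over the whole training set (batch gradient descent)
        neg_grad = [0.0] * n
        for x, t in data:
            o = output(weights, x)
            delta = (t - o) * o * (1.0 - o)  # -(dE/d net) for this example
            for i in range(n):
                neg_grad[i] += delta * x[i]
        # Step downhill: move each weight along -dE/dw_i
        weights = [w + eta * g for w, g in zip(weights, neg_grad)]
    return weights


# Logical AND; the leading -1 is the constant input for the virtual weight
AND = [([-1.0, 0.0, 0.0], 0), ([-1.0, 0.0, 1.0], 0),
       ([-1.0, 1.0, 0.0], 0), ([-1.0, 1.0, 1.0], 1)]

w = train(AND)
print(squared_error([0.0, 0.0, 0.0], AND), squared_error(w, AND))
```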

Can appropriate weights always be found?

- ONLY IF the data is linearly separable.

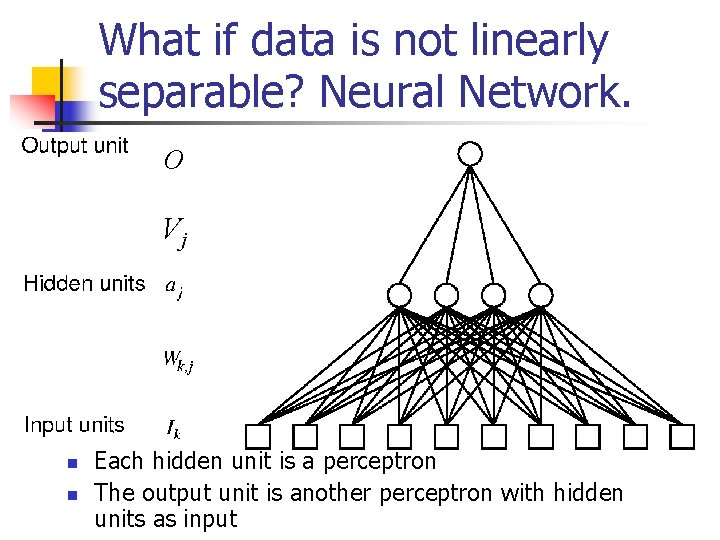

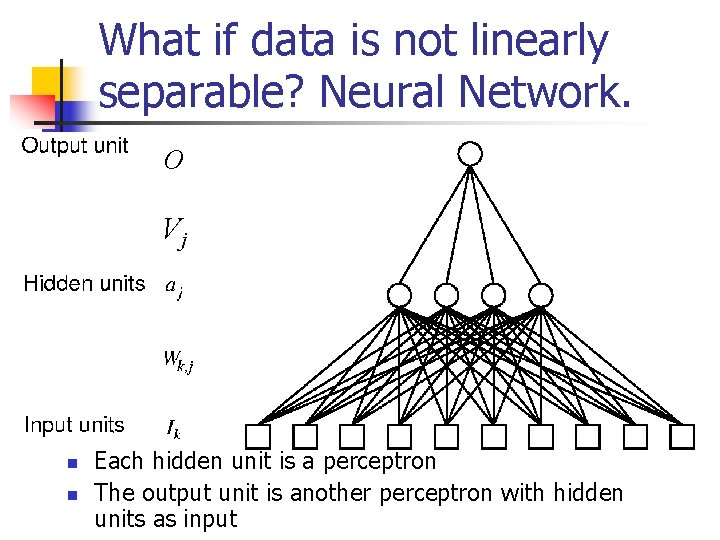
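To see why linear separability matters, this sketch swaps in the classic step-function perceptron rule ($w_i \leftarrow w_i + \eta\,(t - o)\,x_i$, applied only on mistakes) and runs it on AND, which is linearly separable, and XOR, which is not. A constant $-1$ input carries the virtual threshold weight. The names, $\eta = 1$, and the epoch limit are illustrative choices.

```python
def step_output(weights, x):
    return 1 if sum(w * xi for w, xi in zip(weights, x)) >= 0 else 0


def train_perceptron(data, eta=1.0, max_epochs=100):
    """Return (weights, converged); converged means one full pass with no mistakes."""
    weights = [0.0] * len(data[0][0])
    for _ in range(max_epochs):
        mistakes = 0
        for x, t in data:
            o = step_output(weights, x)
            if o != t:
                mistakes += 1
                weights = [w + eta * (t - o) * xi for w, xi in zip(weights, x)]
        if mistakes == 0:
            return weights, True
    return weights, False


# Leading -1 is the constant input for the virtual threshold weight
AND = [([-1.0, 0.0, 0.0], 0), ([-1.0, 0.0, 1.0], 0), ([-1.0, 1.0, 0.0], 0), ([-1.0, 1.0, 1.0], 1)]
XOR = [([-1.0, 0.0, 0.0], 0), ([-1.0, 0.0, 1.0], 1), ([-1.0, 1.0, 0.0], 1), ([-1.0, 1.0, 1.0], 0)]

print(train_perceptron(AND))  # converges: some line separates AND
print(train_perceptron(XOR))  # never converges: no single line separates XOR
```

For separable data the perceptron convergence theorem guarantees a finite number of mistakes; for XOR, every weight vector misclassifies at least one example, so the loop always reaches `max_epochs`.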

What if data is not linearly separable? Neural Network.

Figure: network with hidden units $V_j$ feeding an output unit $O$.

- Each hidden unit is a perceptron.
- The output unit is another perceptron with the hidden units as input.

Backpropagation: How do we compute weights?
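A minimal backpropagation sketch for the network shape described above: sigmoid hidden units $V_j$ feeding one sigmoid output unit $O$, trained on XOR by per-example (stochastic) gradient descent on squared error. The number of hidden units, learning rate, epoch count, random initialization, and all function names are assumptions for illustration. Backprop can stall in a local minimum, so a different seed may give a different result.

```python
import math
import random


def sigmoid(z):
    """Logistic sigmoid (b = 1), written to avoid overflow for large |z|."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def forward(W_hidden, w_out, x):
    """x includes a constant -1 input for each hidden unit's virtual weight."""
    v = [sigmoid(sum(w * xi for w, xi in zip(row, x))) for row in W_hidden]
    v_ext = [-1.0] + v  # constant -1 input for the output unit's virtual weight
    o = sigmoid(sum(w * vj for w, vj in zip(w_out, v_ext)))
    return v_ext, o


def total_error(W_hidden, w_out, data):
    """E = 1/2 * sum over examples of (t - o)^2."""
    return 0.5 * sum((t - forward(W_hidden, w_out, x)[1]) ** 2 for x, t in data)


def init_weights(n_in, n_hidden, seed=0):
    rng = random.Random(seed)
    W_hidden = [[rng.uniform(-1, 1) for _ in range(n_in)] for _ in range(n_hidden)]
    w_out = [rng.uniform(-1, 1) for _ in range(n_hidden + 1)]
    return W_hidden, w_out


def train_backprop(data, W_hidden, w_out, eta=0.5, epochs=5000):
    W_hidden = [row[:] for row in W_hidden]  # work on copies
    w_out = w_out[:]
    for _ in range(epochs):
        for x, t in data:
            v_ext, o = forward(W_hidden, w_out, x)
            # Output delta: -(dE/d net_O) for this example
            delta_out = (t - o) * o * (1.0 - o)
            # Hidden deltas: send delta_out back through the not-yet-updated output weights
            delta_hidden = [
                v_ext[j + 1] * (1.0 - v_ext[j + 1]) * w_out[j + 1] * delta_out
                for j in range(len(W_hidden))
            ]
            w_out = [w + eta * delta_out * vj for w, vj in zip(w_out, v_ext)]
            for j, row in enumerate(W_hidden):
                W_hidden[j] = [w + eta * delta_hidden[j] * xi for w, xi in zip(row, x)]
    return W_hidden, w_out


# XOR with a leading constant -1 input: not linearly separable
XOR = [([-1.0, 0.0, 0.0], 0), ([-1.0, 0.0, 1.0], 1), ([-1.0, 1.0, 0.0], 1), ([-1.0, 1.0, 1.0], 0)]

W0, w0 = init_weights(n_in=3, n_hidden=3)
W, w = train_backprop(XOR, W0, w0)
print(total_error(W0, w0, XOR), total_error(W, w, XOR))
print([round(forward(W, w, x)[1], 2) for x, _ in XOR])
```

The hidden deltas are computed before the output weights change, so every update in a step uses the gradient at the same point.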

Neural Networks and machine learning issues

- Neural networks can represent any training set, if enough hidden units are used.
- How long do they take to train?
- How much memory do they need?
- Does backprop find the best set of weights?
- How do we deal with overfitting?
- How do we interpret the results?