Applied AI and ML Data Science Lab Ryerson

Applied AI and ML
Data Science Lab, Ryerson University
Dr. Ayse Bener
Topology Workshop 2018, Nipissing University
May 23, 2018

Outline
● Big Graph Mining
  ○ Big Data Tools
  ○ Big Data and ML
  ○ Big Data and GM
● Use Cases
  ○ Developer Social Networks
  ○ Taste Graph
  ○ Reinforcement Learning
● Tensor Factorization
● DSL

Big Graph Mining
● Big graphs are everywhere
  ○ social networks
  ○ mobile call networks
  ○ biological networks
  ○ World Wide Web

Big Graph Mining
● Find patterns and anomalies in very large graphs with billions of nodes and edges
● How to mine such big graphs efficiently?

Big Graph Mining
● The past:
  ○ the input graph fits in the memory or on the disks of a single machine.
● Now:
  ○ single-machine algorithms are not tractable for handling big graphs,
  ○ distributed algorithms are needed.

Big Graph Mining
● Traditionally, computation has been CPU bound
  ○ Complex computation on small data
● For decades, the primary push was to increase the computing power of a single machine
  ○ a.k.a. Vertical Scaling / Scaling Up

Big Graph Mining
• Traditional Distributed Problems
• Focuses on distributing the processing workload
  • Complex programming model
  • Partial failures
  • Bandwidth limitations

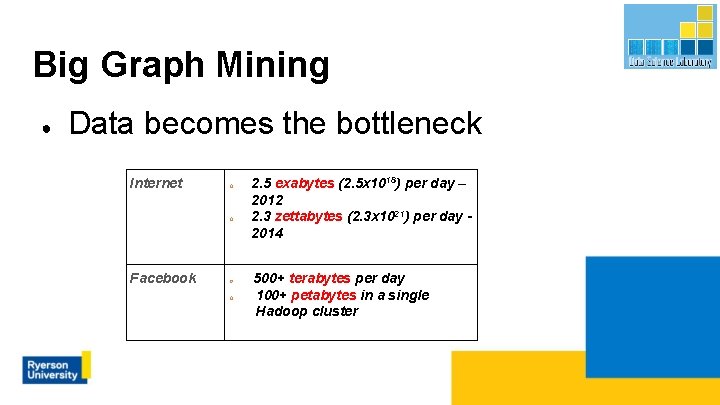

Big Graph Mining
● Data becomes the bottleneck
  ○ Internet
    ■ 2.5 exabytes (2.5 × 10^18 bytes) per day, 2012
    ■ 2.3 zettabytes (2.3 × 10^21 bytes) per day, 2014
  ○ Facebook
    ■ 500+ terabytes per day
    ■ 100+ petabytes in a single Hadoop cluster

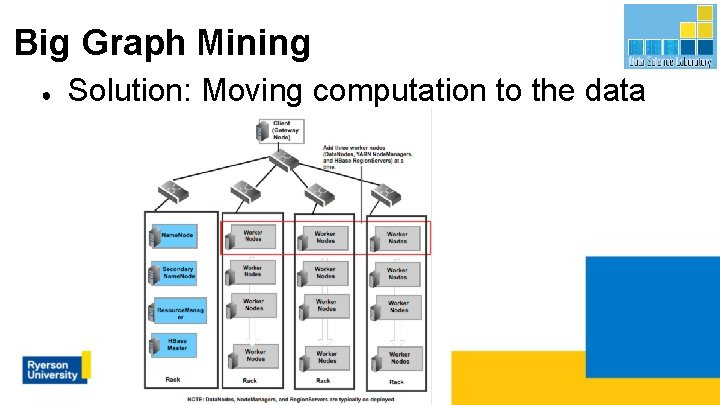

Big Graph Mining ● Solution: Moving computation to the data

Big Data Tools
● Hadoop
● Core large-scale data processing
  ○ Data storage: HDFS
  ○ Data processing: MapReduce

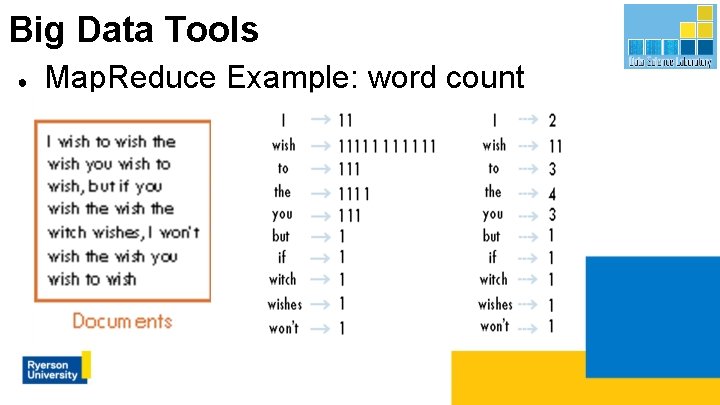

Big Data Tools ● MapReduce example: word count

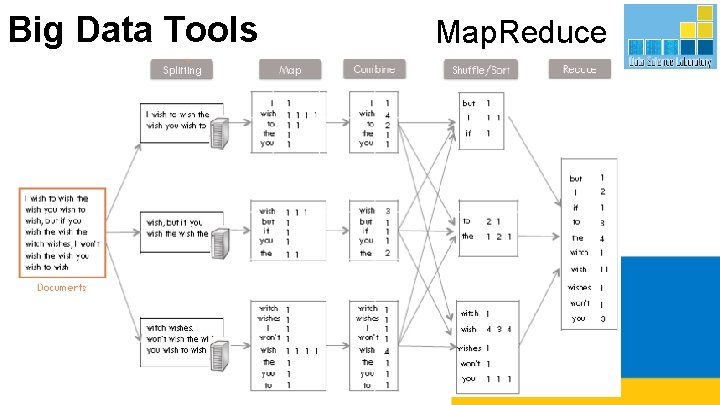
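The word-count example can be sketched in a few lines of plain Python. This is a single-process illustration of the map, shuffle, and reduce phases, not Hadoop code; the function names and the sample input splits are assumptions made for the sketch.

```python
from collections import defaultdict


def map_phase(document):
    """Map: emit a (word, 1) pair for every word in one input split."""
    return [(word.lower(), 1) for word in document.split()]


def shuffle_phase(pairs):
    """Shuffle: group all emitted values by their key (the word)."""
    groups = defaultdict(list)
    for key, value in pairs:
        groups[key].append(value)
    return groups


def reduce_phase(groups):
    """Reduce: sum the list of counts collected for each word."""
    return {word: sum(counts) for word, counts in groups.items()}


def word_count(documents):
    """Run the three phases in sequence over a list of input splits."""
    pairs = []
    for document in documents:
        pairs.extend(map_phase(document))
    return reduce_phase(shuffle_phase(pairs))


# Hypothetical input: each string stands for one split handled by one mapper.
splits = ["deer bear river", "car car river", "deer car bear"]
counts = word_count(splits)
print(counts)
```

In a real cluster each call to `map_phase` would run on the node that stores the split, which is the "moving computation to the data" idea from the earlier slide.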

Big Data Tools: MapReduce

Big Data Tools
● HDFS: Hadoop Distributed File System
  ○ A distributed file system that runs on large clusters of commodity machines
  ○ Based on the Google GFS (2003) paper
  ○ Provides redundant storage for massive datasets

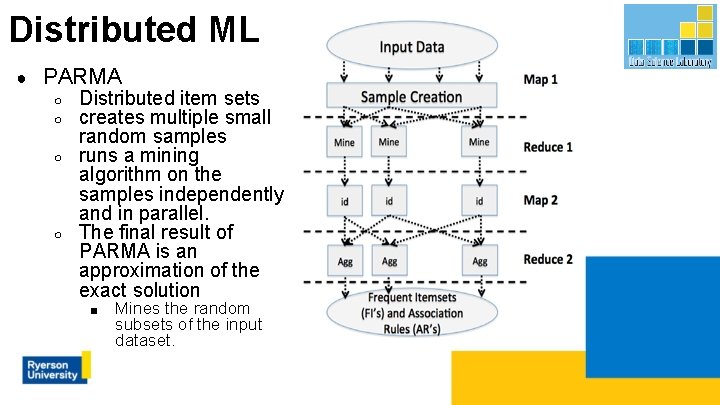

Distributed ML
● PARMA
  ○ Distributed itemset mining: creates multiple small random samples of the input dataset and runs a mining algorithm on the samples independently and in parallel.
  ○ The final result of PARMA is an approximation of the exact solution
    ■ It mines random subsets of the input dataset rather than the whole dataset.

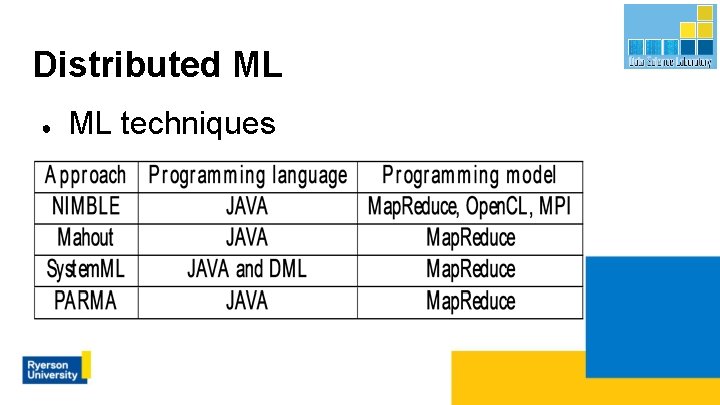
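The sampling idea behind PARMA can be illustrated with a small single-process sketch. It is only loosely in the spirit of PARMA: the brute-force miner, the majority-vote aggregation rule, the median frequency estimate, and every parameter value below are assumptions made for illustration, and the samples are mined in a loop rather than in parallel.

```python
import random
from itertools import combinations
from statistics import median


def frequent_itemsets(transactions, min_freq, max_size=2):
    """Brute-force miner: relative frequency of each itemset up to max_size."""
    counts = {}
    for transaction in transactions:
        items = sorted(set(transaction))
        for size in range(1, max_size + 1):
            for itemset in combinations(items, size):
                counts[itemset] = counts.get(itemset, 0) + 1
    total = len(transactions)
    return {s: c / total for s, c in counts.items() if c / total >= min_freq}


def approximate_mining(transactions, min_freq, num_samples=8,
                       sample_size=200, seed=0):
    """Mine several random samples and combine the per-sample results."""
    rng = random.Random(seed)
    observed = {}
    for _ in range(num_samples):
        # Each sample is drawn with replacement; on a cluster, every sample
        # would be mined by a separate worker.
        sample = [rng.choice(transactions) for _ in range(sample_size)]
        for itemset, freq in frequent_itemsets(sample, min_freq).items():
            observed.setdefault(itemset, []).append(freq)
    # Assumed aggregation rule: keep itemsets that are frequent in a majority
    # of samples and report the median of their estimated frequencies.
    needed = num_samples // 2 + 1
    return {s: median(f) for s, f in observed.items() if len(f) >= needed}


# Hypothetical toy dataset of 100 transactions.
dataset = [["milk", "bread"]] * 60 + [["milk"]] * 20 + [["bread", "eggs"]] * 20
approx = approximate_mining(dataset, min_freq=0.4)
print(approx)
```

The output is an estimate: the reported frequencies vary with the random samples, which is the trade-off the slide describes.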

Distributed ML ● ML techniques

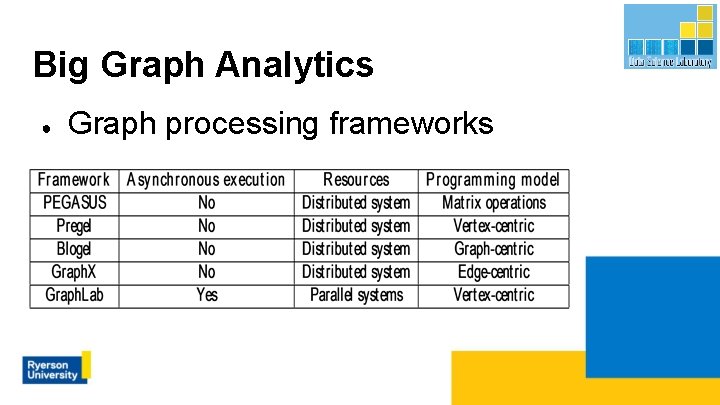

Big Graph Analytics ● Graph processing frameworks

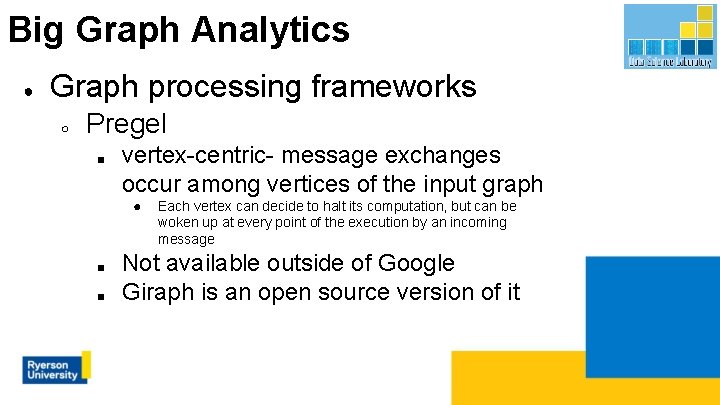

Big Graph Analytics
● Graph processing frameworks
  ○ Pregel
    ■ vertex-centric: message exchanges occur among the vertices of the input graph
      ● Each vertex can decide to halt its computation, but can be woken up at any point of the execution by an incoming message
    ■ Not available outside of Google
    ■ Giraph is an open source version of it

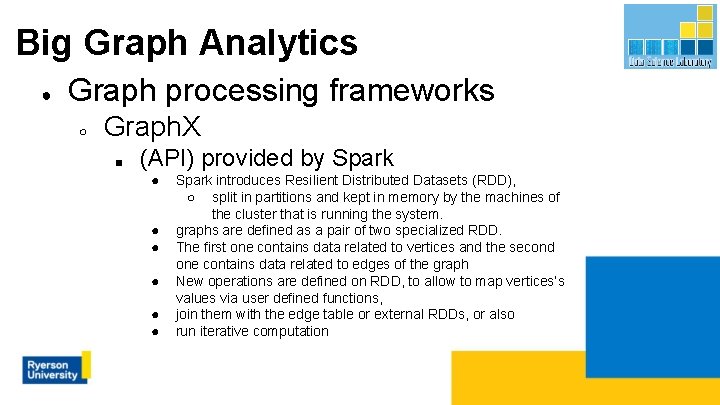
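The vertex-centric model can be sketched as a loop of supersteps in plain Python. The example propagates the maximum value through a graph; it is a single-machine illustration and not the Pregel or Giraph API, and the function name, graph, and initial values are assumptions.

```python
def pregel_max(graph, values, max_supersteps=30):
    """Propagate the maximum vertex value using vertex-centric supersteps.

    graph:  vertex -> list of neighbours that receive its messages
    values: vertex -> initial numeric value
    """
    values = dict(values)
    active = set(graph)                    # every vertex starts active
    inbox = {vertex: [] for vertex in graph}
    for superstep in range(max_supersteps):
        if not active:
            break                          # all vertices have halted
        outbox = {vertex: [] for vertex in graph}
        for vertex in active:
            new_value = max([values[vertex]] + inbox[vertex])
            changed = new_value > values[vertex]
            values[vertex] = new_value
            # Send messages in the first superstep or when the value changed;
            # afterwards the vertex votes to halt.
            if superstep == 0 or changed:
                for neighbour in graph[vertex]:
                    outbox[neighbour].append(new_value)
        inbox = outbox
        # A halted vertex is woken up only by an incoming message.
        active = {vertex for vertex, messages in inbox.items() if messages}
    return values


# Hypothetical path graph a - b - c - d with one value per vertex.
graph = {"a": ["b"], "b": ["a", "c"], "c": ["b", "d"], "d": ["c"]}
result = pregel_max(graph, {"a": 3, "b": 6, "c": 2, "d": 1})
print(result)
```

The computation stops on its own once no messages are in flight, which mirrors the halt and wake-up behaviour described on the slide.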

Big Graph Analytics
● Graph processing frameworks
  ○ GraphX
    ■ A graph API provided by Spark
      ● Spark introduces Resilient Distributed Datasets (RDDs),
        ○ split into partitions and kept in memory by the machines of the cluster that is running the system.
      ● Graphs are defined as a pair of specialized RDDs. The first contains data related to the vertices and the second contains data related to the edges of the graph.
      ● New operations are defined on RDDs to map vertex values via user-defined functions, join them with the edge table or external RDDs, or run iterative computations.
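The "pair of tables" view of a graph can be illustrated without Spark. The sketch below uses a plain dictionary as the vertex table and a list of tuples as the edge table; the helper names are hypothetical and only mimic the kind of operations described above, they are not the GraphX API.

```python
# Vertex table: vertex id -> attribute. Edge table: (source, target, attribute).
vertices = {1: "alice", 2: "bob", 3: "carol"}
edges = [(1, 2, "follows"), (2, 3, "follows"), (1, 3, "follows")]


def map_vertices(vertex_table, fn):
    """Apply a user-defined function to every vertex attribute."""
    return {vid: fn(vid, attr) for vid, attr in vertex_table.items()}


def triplets(vertex_table, edge_table):
    """Join the edge table with the vertex table on both endpoints."""
    return [(vertex_table[src], attr, vertex_table[dst])
            for src, dst, attr in edge_table]


def in_degrees(vertex_table, edge_table):
    """Aggregate over edges: count incoming edges per vertex."""
    degrees = {vid: 0 for vid in vertex_table}
    for _, dst, _ in edge_table:
        degrees[dst] += 1
    return degrees


upper = map_vertices(vertices, lambda vid, name: name.upper())
joined = triplets(vertices, edges)
degrees = in_degrees(vertices, edges)
print(upper, joined, degrees)
```

In Spark both tables would be partitioned across the cluster, so the join and the aggregation run in parallel on the partitions.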

Extracting patterns in big graphs
● Frequent Subgraph Mining (FSM)
  ○ graph-transaction-based FSM
    ■ the input data comprises a collection of medium-size graphs called transactions
  ○ single-graph-based FSM
    ■ the input data comprises one very large graph.

Extracting patterns in big graphs
● Properties of FSM
  ○ Search strategy
    ■ Depth-first
      ● traverses or searches a tree or graph
      ● starts at the root
      ● explores as far as possible along each branch before backtracking
    ■ Breadth-first
      ● visits and inspects a node of the graph,
      ● then gains access to the nodes that neighbor the currently visited node.
  ○ Algorithms
    ■ gSpan, FFSM, CloseGraph, SPIN
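The two search strategies differ only in the order in which nodes are visited. A minimal sketch on a small hypothetical tree, using a stack for depth-first and a queue for breadth-first traversal:

```python
from collections import deque


def depth_first(graph, root):
    """Visit nodes by following each branch as far as possible first."""
    order, seen, stack = [], set(), [root]
    while stack:
        node = stack.pop()
        if node in seen:
            continue
        seen.add(node)
        order.append(node)
        # Push children in reverse so the leftmost child is explored first.
        stack.extend(reversed(graph.get(node, [])))
    return order


def breadth_first(graph, root):
    """Visit a node, then all of its neighbours, level by level."""
    order, seen, queue = [root], {root}, deque([root])
    while queue:
        node = queue.popleft()
        for neighbour in graph.get(node, []):
            if neighbour not in seen:
                seen.add(neighbour)
                order.append(neighbour)
                queue.append(neighbour)
    return order


# Hypothetical search tree: node -> list of children.
tree = {"a": ["b", "c"], "b": ["d", "e"], "c": ["f"]}
dfs_order = depth_first(tree, "a")
bfs_order = breadth_first(tree, "a")
print(dfs_order, bfs_order)
```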

Extracting patterns in big graphs
● Generation of patterns
  ○ Apriori-based
    ■ starts with graphs of small size, and proceeds in a bottom-up manner
    ■ has considerable overhead when two size-k frequent substructures are joined to generate size-(k+1) graph candidates
  ○ Pattern-growth-based
    ■ extends a frequent graph by adding a new edge, in every possible position
    ■ the same graph can be discovered many times.
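The Apriori-style join is easier to see on itemsets than on graphs, so the sketch below uses itemsets as a simplified stand-in: two frequent size-k sets that share k-1 items are joined into a size-(k+1) candidate, and a candidate is kept only if all of its size-k subsets are frequent. For subgraphs the same join additionally requires isomorphism checks, which is where much of the overhead mentioned above comes from. The function name and input are assumptions.

```python
from itertools import combinations


def join_candidates(frequent_k):
    """Join frequent size-k itemsets into pruned size-(k+1) candidates."""
    frequent_k = {frozenset(itemset) for itemset in frequent_k}
    if not frequent_k:
        return set()
    k = len(next(iter(frequent_k)))
    candidates = set()
    for first, second in combinations(frequent_k, 2):
        union = first | second
        if len(union) != k + 1:
            continue  # the two sets do not share exactly k-1 items
        # Apriori pruning: every size-k subset must itself be frequent.
        if all(frozenset(sub) in frequent_k for sub in combinations(union, k)):
            candidates.add(union)
    return candidates


# Hypothetical frequent 2-itemsets.
frequent_2 = [{"a", "b"}, {"a", "c"}, {"b", "c"}, {"b", "d"}]
candidates_3 = join_candidates(frequent_2)
print(candidates_3)
```

Here {a, b, d} and {b, c, d} are generated by the join but pruned, because {a, d} and {c, d} are not frequent.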

Applications of Graph Mining

1. An issue recommender model using developer collaboration network
Caglayan, B., and Bener, A., “Effect of Developer Collaboration Activity on Software Quality In Two Large Scale Projects”, Journal of Systems and Software, 2016, 118, 288-296.

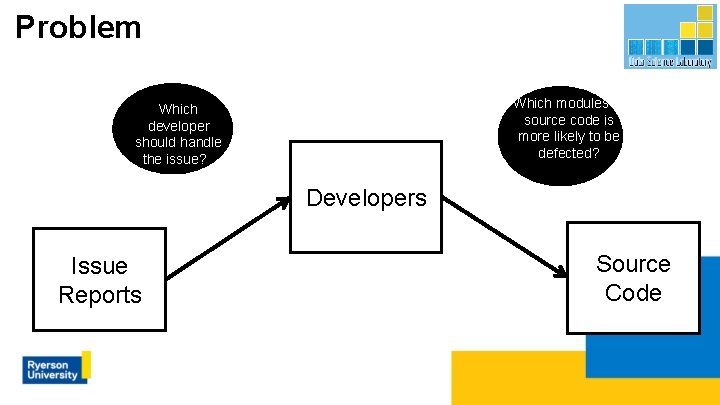

Problem
Which modules of the source code are more likely to be defective?
Which developer should handle the issue?
(Diagram labels: Developers, Issue Reports, Source Code)

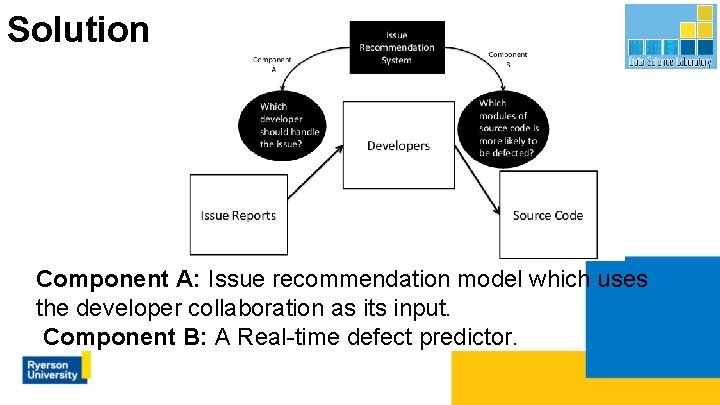

Solution
Component A: An issue recommendation model that uses developer collaboration as its input.
Component B: A real-time defect predictor.

Solution
● Model the software as the combination of the developer collaboration network and the call graph
● Build an issue recommendation system using the developer collaboration network
● Use Kronecker networks to estimate the future collaborations of new developers
● Address the developer workload balance problem using the issue recommendation model

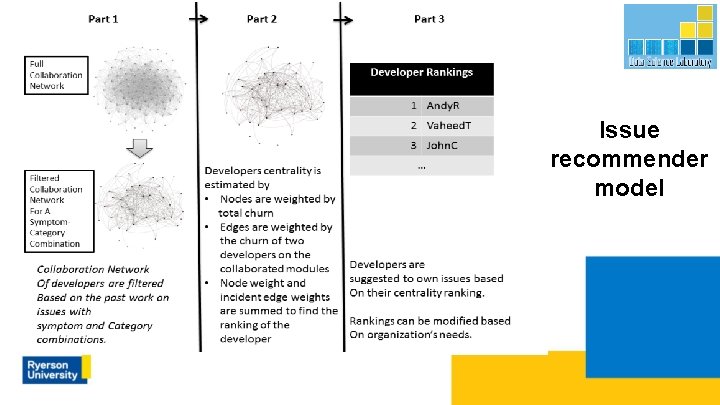

Issue recommender model

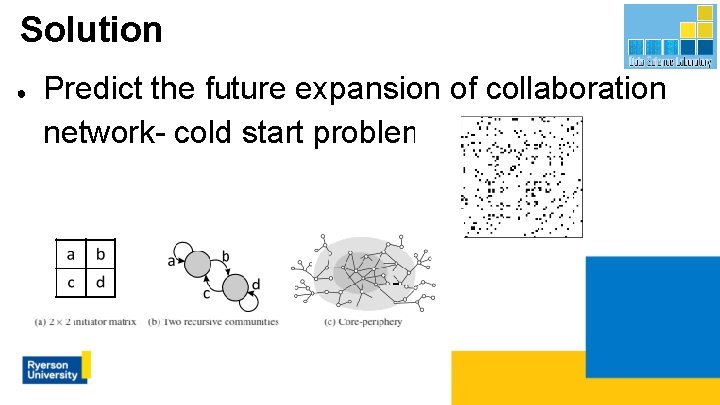

Solution ● Predict the future expansion of the collaboration network (the cold start problem)

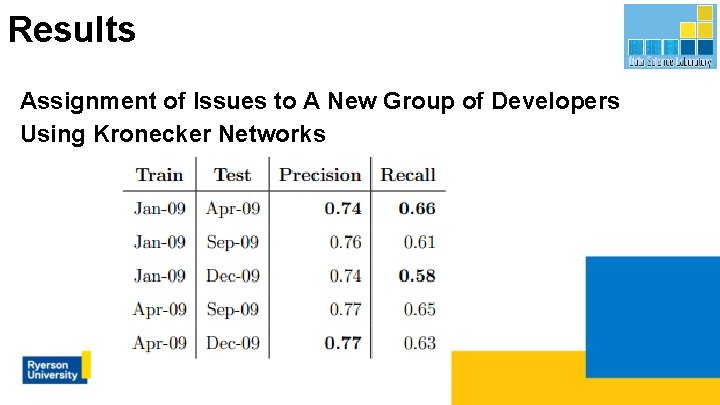

Results Assignment of Issues to A New Group of Developers Using Kronecker Networks
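Kronecker networks grow a large graph from a small initiator matrix by taking repeated Kronecker products. The sketch below assumes the stochastic variant, in which the resulting matrix holds edge probabilities and a graph is sampled from it. The initiator values are made up for illustration and are not the fitted parameters from the study.

```python
import random


def kronecker_product(a, b):
    """Kronecker product of two matrices given as lists of rows."""
    rows_b, cols_b = len(b), len(b[0])
    return [
        [a[i // rows_b][j // cols_b] * b[i % rows_b][j % cols_b]
         for j in range(len(a[0]) * cols_b)]
        for i in range(len(a) * rows_b)
    ]


def kronecker_power(initiator, k):
    """k-th Kronecker power: a (n^k x n^k) matrix of edge probabilities."""
    result = initiator
    for _ in range(k - 1):
        result = kronecker_product(result, initiator)
    return result


def sample_graph(prob_matrix, seed=0):
    """Sample a directed graph: edge (i, j) exists with probability P[i][j]."""
    rng = random.Random(seed)
    size = len(prob_matrix)
    return [(i, j) for i in range(size) for j in range(size)
            if rng.random() < prob_matrix[i][j]]


# Hypothetical 2x2 initiator; three Kronecker powers give an 8-node network.
initiator = [[0.9, 0.5], [0.5, 0.1]]
probabilities = kronecker_power(initiator, 3)
sampled_edges = sample_graph(probabilities)
print(len(probabilities), len(sampled_edges))
```

Raising the power by one multiplies the number of nodes by the initiator size, which is what makes the model convenient for projecting how a collaboration network might expand.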

2. Application Recommendation
Bener, A., Caglayan, B., Henry, A. D., Pralat, P., “Empirical Models of Social Learning in a Large Evolving Network”, PLoS ONE, 2016, 11(10).

Problem ● Recommend relevant and/or novel applications to mobile phone users.

Solution
● Taste graphs
● Understanding the properties of graphs

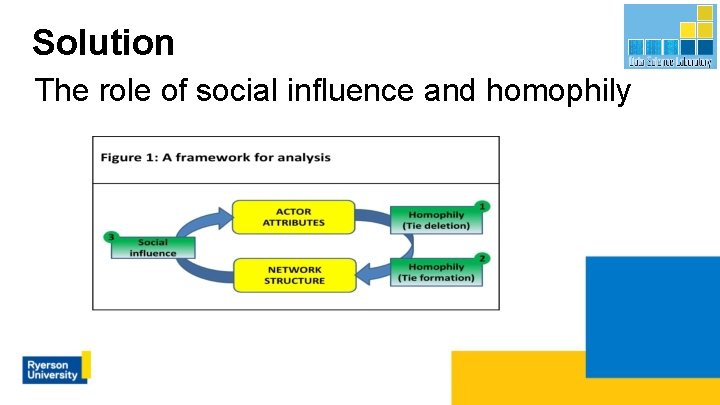

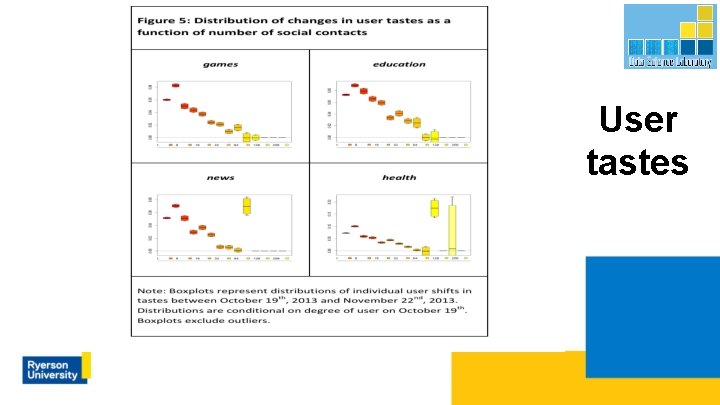

Solution The role of social influence and homophily

User tastes

3. Partially Observable Markov Decision Process to Prioritize Software Defects
Akbarinasaji, S., Kavaklioglu, C., and Bener, A., “Defect Prioritization using Reinforcement Learning”, TSE (under review)

Problem How to prioritize the bug reports by considering the consequence of not fixing the bugs in terms of their relative importance?

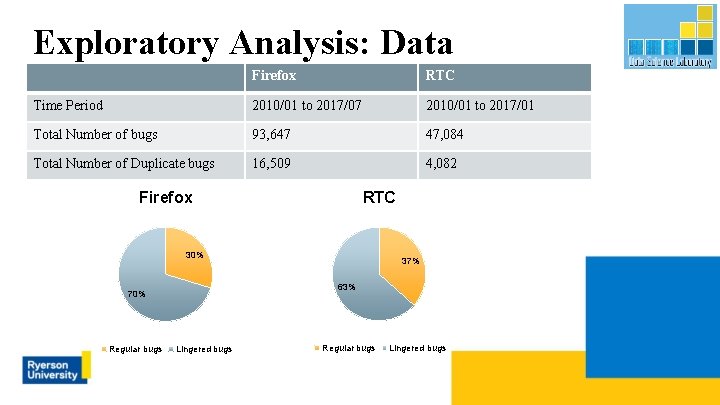

Exploratory Analysis: Data Characteristics

| | Firefox | RTC |
|---|---|---|
| Time period | 2010/01 to 2017/07 | 2010/01 to 2017/01 |
| Total number of bugs | 93,647 | 47,084 |
| Total number of duplicate bugs | 16,509 | 4,082 |

Pie charts (Firefox, RTC): share of regular bugs vs. lingered bugs. Extracted percentages, in source order: 30%, 63%, 70%, 37%.

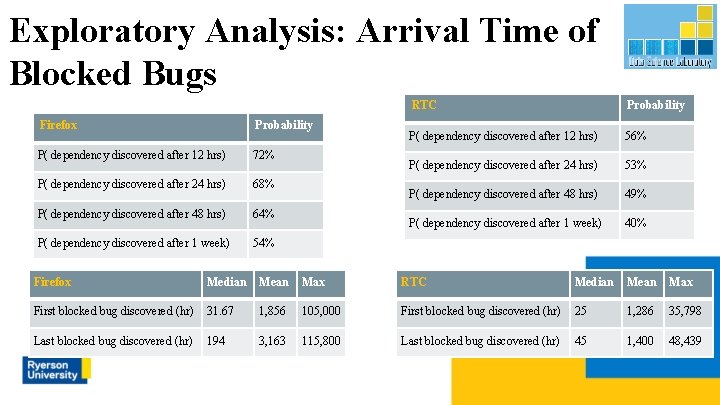

Exploratory Analysis: Arrival Time of Blocked Bugs

| Probability | Firefox | RTC |
|---|---|---|
| P(dependency discovered after 12 hrs) | 72% | 56% |
| P(dependency discovered after 24 hrs) | 68% | 53% |
| P(dependency discovered after 48 hrs) | 64% | 49% |
| P(dependency discovered after 1 week) | 54% | 40% |

| Firefox | Median | Mean | Max |
|---|---|---|---|
| First blocked bug discovered (hr) | 31.67 | 1,856 | 105,000 |
| Last blocked bug discovered (hr) | 194 | 3,163 | 115,800 |

| RTC | Median | Mean | Max |
|---|---|---|---|
| First blocked bug discovered (hr) | 25 | 1,286 | 35,798 |
| Last blocked bug discovered (hr) | 45 | 1,400 | 48,439 |

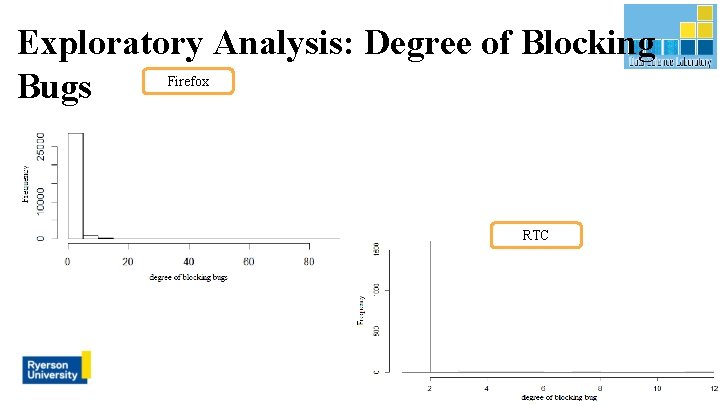

Exploratory Analysis: Degree of Blocking Bugs Firefox RTC

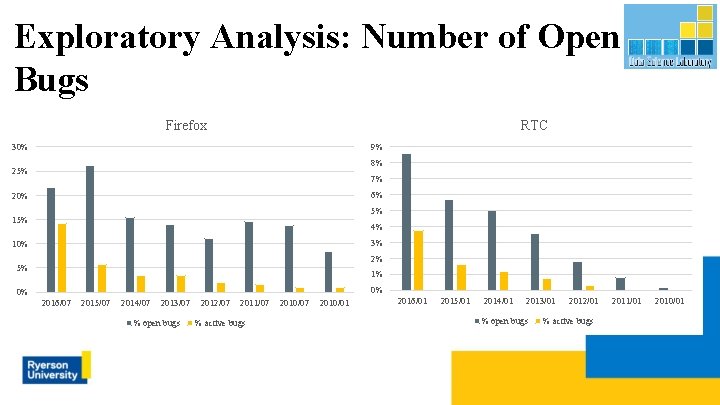

Exploratory Analysis: Number of Open Bugs
Figure panels (Firefox, RTC): % open bugs and % active bugs over time, 2010 to 2016.

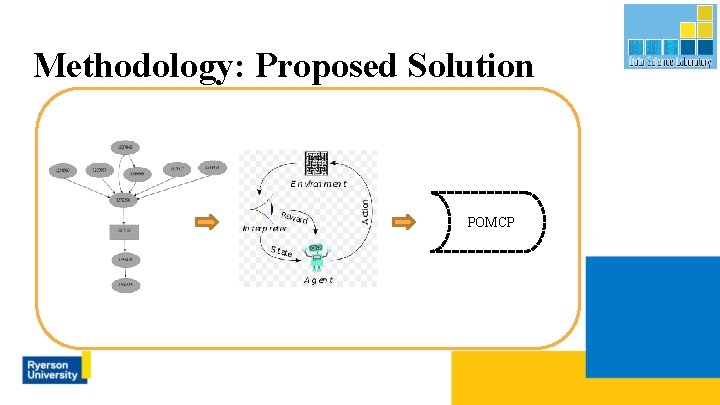

Methodology: Proposed Solution POMCP

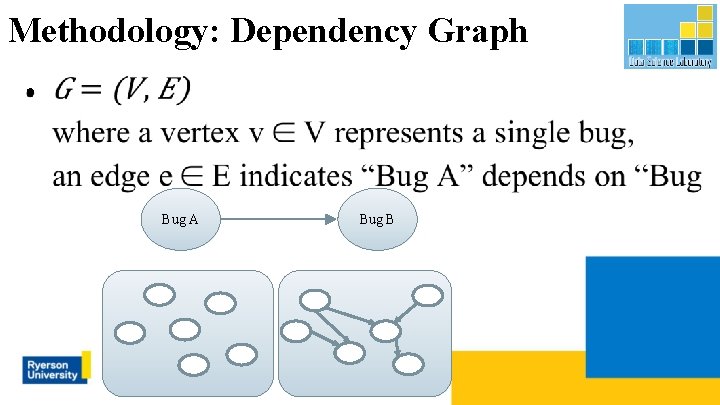

Methodology: Dependency Graph ● Bug A Bug B

Solution
  ○ Topological analysis of the dependency graph
  ○ Quantifying the impact of the bug report
  ○ Proposing a POMDP framework to prioritize the bug reports
  ○ Applying POMCP to solve the POMDP framework
  ○ Prioritization of bugs with respect to their relative importance
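One simple way to read "topological analysis of the dependency graph" is to count, for every bug, how many other bugs it blocks directly or transitively. The sketch below does only that. The impact measure, the function names, and the sample graph are assumptions for illustration; they are not the measure used in the paper, and the POMDP/POMCP steps are not shown.

```python
def blocked_descendants(blocks, bug):
    """Return all bugs blocked by `bug`, directly or transitively."""
    seen, stack = set(), list(blocks.get(bug, []))
    while stack:
        current = stack.pop()
        if current not in seen:
            seen.add(current)
            stack.extend(blocks.get(current, []))
    return seen


def rank_by_impact(blocks):
    """Rank bugs by the number of bugs they block (ties broken by name)."""
    bugs = set(blocks) | {b for targets in blocks.values() for b in targets}
    impact = {bug: len(blocked_descendants(blocks, bug)) for bug in bugs}
    return sorted(impact.items(), key=lambda item: (-item[1], item[0]))


# Hypothetical dependency graph: bug -> bugs it blocks.
blocks = {"A": ["B", "C"], "B": ["D"], "C": ["D"], "E": []}
ranking = rank_by_impact(blocks)
print(ranking)
```

A score of this kind could serve as one input when weighing the consequence of leaving a bug unfixed.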

Future Directions
● Time-evolving graphs
  ○ Many real-world graphs evolve over time;
    ■ interesting discoveries that could not be observed in static graphs.
  ○ Use tensors (multi-dimensional arrays) to model them
    ■ using time as the 3rd dimension,
    ■ find correlations between dimensions using tensor decompositions
    ■ Link prediction
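A time-evolving graph can be stored as a 3-way array indexed by source, target, and time step. The sketch below builds such a tensor from timestamped edges and computes a rank-1 CP-style approximation with alternating power iterations. This is a deliberately small stand-in for tensor decompositions in general; the [source][target][time] layout, the function names, and the toy data are assumptions.

```python
def build_tensor(num_nodes, num_steps, timed_edges):
    """tensor[i][j][t] = 1.0 if edge (i, j) is present at time step t."""
    tensor = [[[0.0] * num_steps for _ in range(num_nodes)]
              for _ in range(num_nodes)]
    for source, target, step in timed_edges:
        tensor[source][target][step] = 1.0
    return tensor


def normalise(vector):
    """Scale a vector to unit length; return (norm, unit vector)."""
    norm = sum(x * x for x in vector) ** 0.5
    return norm, ([x / norm for x in vector] if norm else vector)


def rank_one_cp(tensor, iterations=50):
    """Approximate tensor[i][j][k] by weight * a[i] * b[j] * c[k]."""
    n_i, n_j, n_k = len(tensor), len(tensor[0]), len(tensor[0][0])
    a, b, c = [1.0] * n_i, [1.0] * n_j, [1.0] * n_k
    weight = 0.0
    for _ in range(iterations):
        _, a = normalise([sum(tensor[i][j][k] * b[j] * c[k]
                              for j in range(n_j) for k in range(n_k))
                          for i in range(n_i)])
        _, b = normalise([sum(tensor[i][j][k] * a[i] * c[k]
                              for i in range(n_i) for k in range(n_k))
                          for j in range(n_j)])
        weight, c = normalise([sum(tensor[i][j][k] * a[i] * b[j]
                                   for i in range(n_i) for j in range(n_j))
                               for k in range(n_k)])
    return weight, a, b, c


# Hypothetical data: node 0 links to nodes 1 and 2 at each of three time steps.
timed_edges = [(0, t_node, step) for t_node in (1, 2) for step in range(3)]
tensor = build_tensor(3, 3, timed_edges)
weight, a, b, c = rank_one_cp(tensor)
print(weight * a[0] * b[1] * c[2])
```

The product `a[i] * b[j]` gives a time-independent affinity between nodes `i` and `j`, and `c` describes how active the pattern is at each time step; scores of this kind are one possible basis for link prediction.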

Data Science Lab (DSL) - Ryerson
● A research lab dedicated to Machine Learning applications
● 10+ industry partners including
  ○ Toronto Stock Exchange
  ○ IBM Canada
  ○ St Michael's Hospital
  ○ Communications Research Centre Canada
  ○ Globe and Mail Canada
  ○ Blackberry Canada
  ○ Manulife Canada
  ○ Toronto Police Services
● Numerous grants: federal, provincial, industry, government
● Data Science and Analytics MSc / Certificate Programs

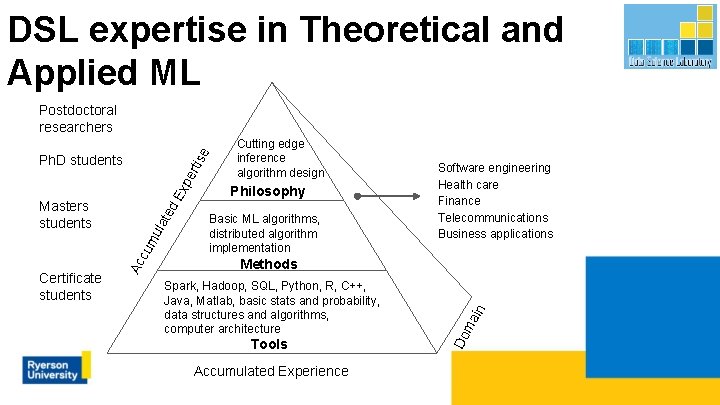

DSL expertise in Theoretical and Applied ML
● People: postdoctoral researchers, PhD students, Masters students, Certificate students
● Domain expertise: software engineering, health care, finance, telecommunications, business applications
● Methods: basic ML algorithms, distributed algorithm implementation, cutting-edge inference algorithm design
● Tools: Spark, Hadoop, SQL, Python, R, C++, Java, Matlab, basic stats and probability, data structures and algorithms, computer architecture
(Other diagram labels: Philosophy, Accumulated Experience)

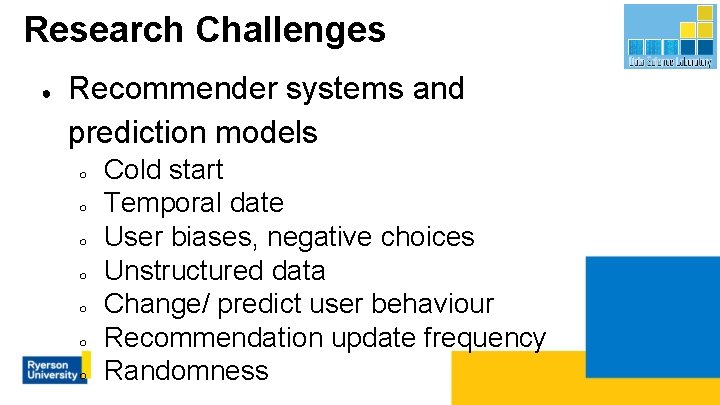

Research Challenges
● Recommender systems and prediction models
  ○ Cold start
  ○ Temporal data
  ○ User biases, negative choices
  ○ Unstructured data
  ○ Change / predict user behaviour
  ○ Recommendation update frequency
  ○ Randomness

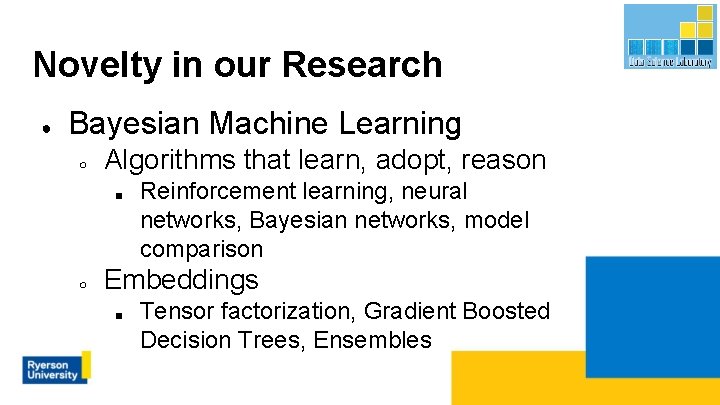

Novelty in our Research
● Bayesian Machine Learning
  ○ Algorithms that learn, adapt, reason
    ■ Reinforcement learning, neural networks, Bayesian networks, model comparison
  ○ Embeddings
    ■ Tensor factorization, Gradient Boosted Decision Trees, Ensembles

Programs in Data Analytics
● Certificate in Data Analytics, Big Data, and Predictive Analytics
● Aligned with CAPS INFORMS
● Launched in September 2014

Programs in Data Analytics
Since 2016
https://www.ryerson.ca/graduate/datascience

References
● Kang, U., Tsourakakis, C., Appel, A. P., Faloutsos, C., and Leskovec, J., “HADI: Fast Diameter Estimation and Mining in Massive Graphs with Hadoop”, 2008.
● Kang, U., Tsourakakis, C. E., and Faloutsos, C., “PEGASUS: A Peta-Scale Graph Mining System Implementation and Observations”, in Proceedings of the 2009 Ninth IEEE International Conference on Data Mining (ICDM ’09), pages 229–238, Washington, DC, USA, 2009. IEEE Computer Society.
● Aridhi, S., and Nguifo, E. M., “Big Graph Mining: Frameworks and Techniques”, arXiv:1602.03072v1 [cs.DC], 9 Feb 2016.
● Acar, E., Kolda, T. G., and Dunlavy, D. M., “Temporal Link Prediction using Matrix and Tensor Factorizations”, ACM Transactions on Knowledge Discovery from Data, 2011.

Thanks for listening
http://www.datasciencelab.ca
