WebBase: Building a Web Warehouse, Hector Garcia-Molina

WebBase: Building a Web Warehouse • Hector Garcia-Molina, Stanford University • Work with: Sergey Brin, Junghoo Cho, Taher Haveliwala, Jun Hirai, Glen Jeh, Andy Kacsmar, Sep Kamvar, Wang Lam, Larry Page, Andreas Paepcke, Sriram Raghavan, Gary Wesley 1

The Web • A universal information resource – Model weak, strong agreement • How to exploit it? web 2

WebBase WEB PAGE 3

WebBase Goals • Manage very large collections of Web pages – Today: 1500 GB HTML, 200 M pages • Enable large-scale Web-related research • Locally provide a significant portion of the Web • Efficient wide-area Web data distribution 4

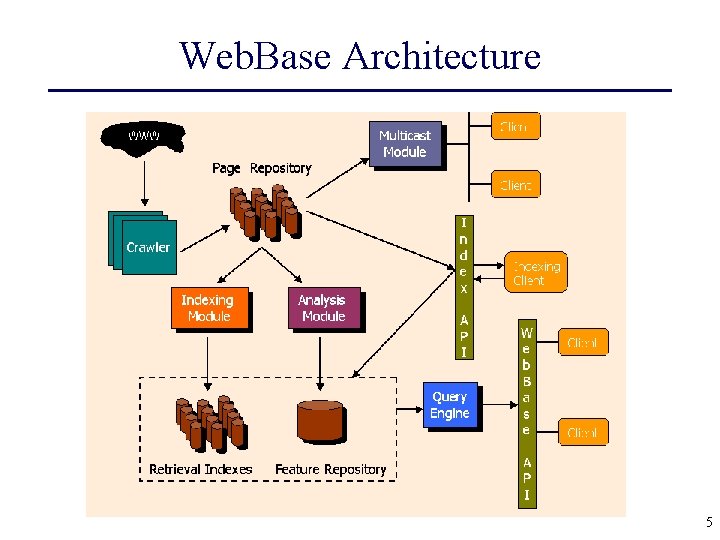

WebBase Architecture 5

WebBase Remote Users • Berkeley • Columbia • U. Washington • Harvey Mudd • Università degli Studi di Milano • U. of Arizona • California Digital Library • Cornell • U. of Houston • Learning Lab Lower Saxony (L3S) • France Telecom • U. Texas 6

Outline • Technical Challenges • WebBase Use • The Future 7

Challenges • Scalability – crawling – archive distribution – index construction – storage • Consistency – freshness – versions • Dissemination • Archiving – “units” – coordination • IP Management – copy access – link access – access control • Hidden Web • Topic-Specific Collection Building 8

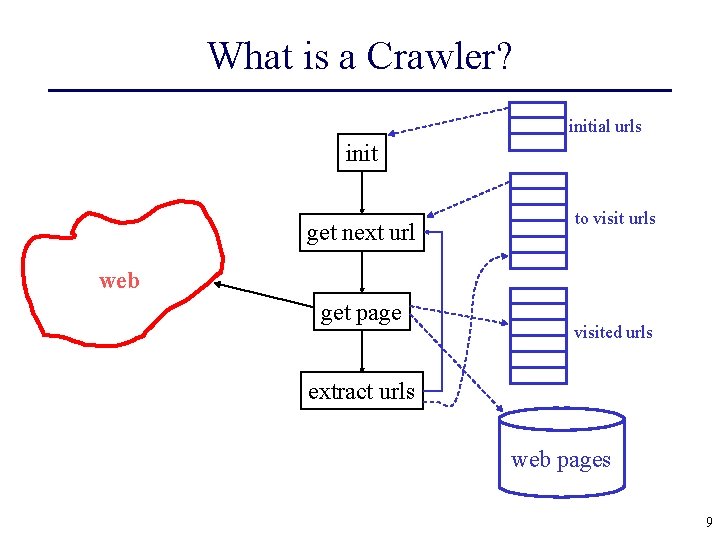

What is a Crawler? [Flowchart labels: initial urls; init; to visit urls; get next url; get page (from the web); extract urls; visited urls; web pages] 9
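The crawl loop named on this slide can be sketched in a few lines. This is a minimal illustration, not the WebBase crawler: the in-memory `TOY_WEB` dictionary and the `get_page` stand-in are assumptions made so the example runs without network access.

```python
from collections import deque

# Hypothetical in-memory "web": URL -> URLs that page links to.
TOY_WEB = {
    "a": ["b", "c"],
    "b": ["d"],
    "c": ["d", "e"],
    "d": [],
    "e": ["a"],
}

def get_page(url):
    """Stand-in for an HTTP fetch; returns the page's outgoing links."""
    return TOY_WEB.get(url, [])

def crawl(initial_urls, max_pages=100):
    to_visit = deque(dict.fromkeys(initial_urls))  # "to visit urls" (init)
    seen = set(to_visit)                           # every URL ever queued
    visited = []                                   # "visited urls", in fetch order
    pages = {}                                     # "web pages" store
    while to_visit and len(visited) < max_pages:
        url = to_visit.popleft()                   # get next url
        links = get_page(url)                      # get page
        visited.append(url)
        pages[url] = links
        for link in links:                         # extract urls
            if link not in seen:
                seen.add(link)
                to_visit.append(link)
    return visited, pages

order, pages = crawl(["a"])
print(order)
```

A FIFO queue gives breadth-first order; swapping in a priority queue is the usual way to favor some URLs over others.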

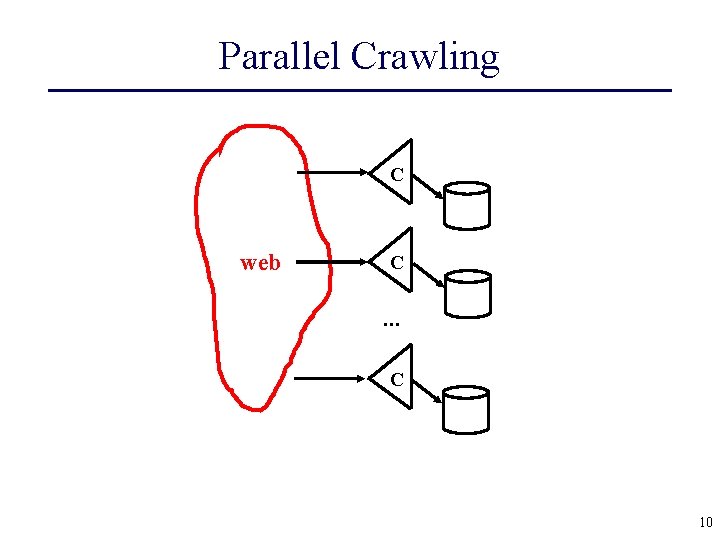

Parallel Crawling [Figure: several crawler processes (C) fetching from the web] 10

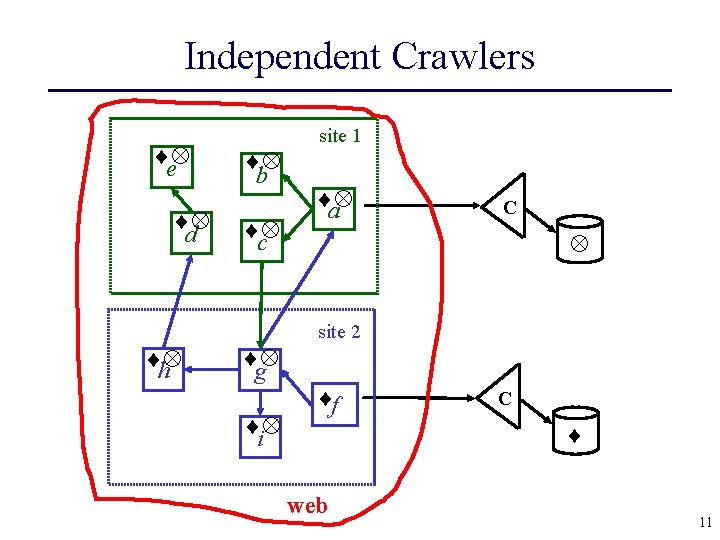

Independent Crawlers [Figure: two crawler processes (C) on the web; site 1 holds pages a to e, site 2 holds pages f to i] 11

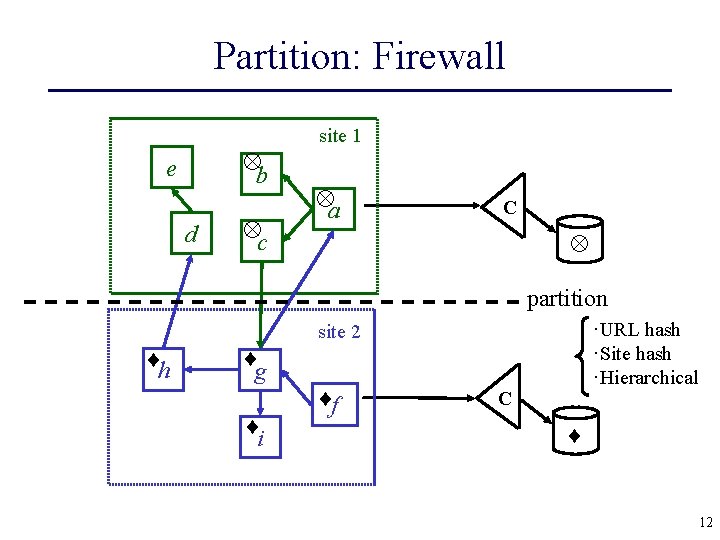

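Partition: Firewall • Partition options: URL hash, site hash, hierarchical [Figure: site 1 (pages a to e) and site 2 (pages f to i), each assigned to one crawler process (C), with a partition boundary between them] 12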
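The URL-hash and site-hash options listed on this slide can be sketched as assignment functions, with firewall mode as a filter on extracted links. This is an illustrative sketch: the function names and the choice of MD5 as a stable hash are assumptions, not details from the slides.

```python
import hashlib
from urllib.parse import urlsplit

def stable_hash(text):
    """Hash that is identical across processes (unlike Python's built-in hash for str)."""
    return int.from_bytes(hashlib.md5(text.encode("utf-8")).digest()[:8], "big")

def url_hash_partition(url, n_crawlers):
    """URL hash: pages of one site may be spread over many crawlers."""
    return stable_hash(url) % n_crawlers

def site_hash_partition(url, n_crawlers):
    """Site hash: every page of a site goes to the same crawler."""
    site = urlsplit(url).hostname or ""
    return stable_hash(site) % n_crawlers

def firewall_filter(links, my_id, n_crawlers, assign=site_hash_partition):
    """Firewall mode: keep only the links that fall in this crawler's partition."""
    return [u for u in links if assign(u, n_crawlers) == my_id]

links = [
    "http://example.edu/a",
    "http://example.edu/b/c",
    "http://example.org/x",
]
for crawler_id in range(3):
    print(crawler_id, firewall_filter(links, crawler_id, 3))
```

Site hashing keeps most links inside one partition, because pages tend to link within their own site, which is why it pairs naturally with firewall mode.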

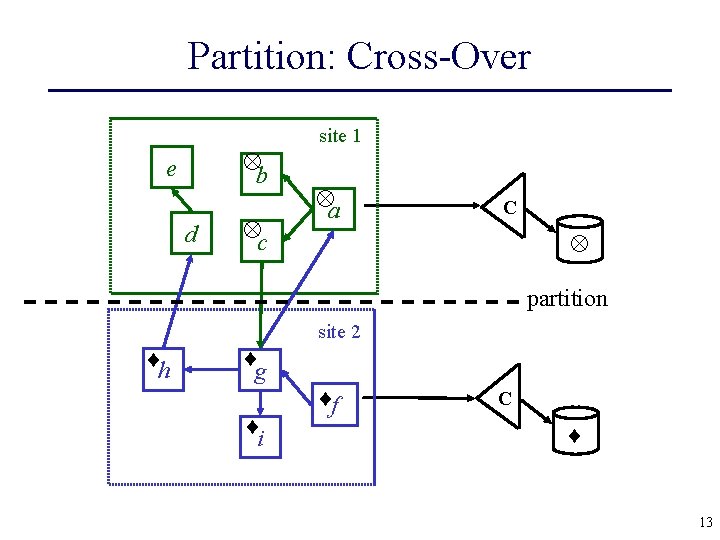

Partition: Cross-Over [Figure: site 1 (pages a to e) and site 2 (pages f to i), each assigned to one crawler process (C), with a partition boundary between them] 13

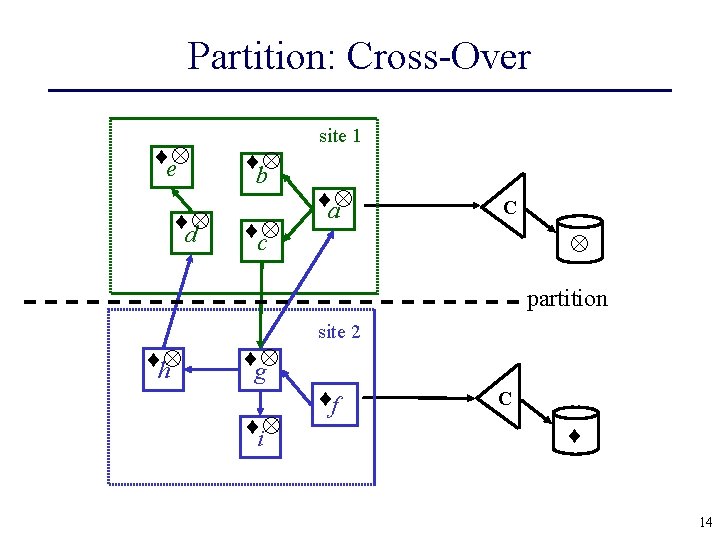

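Partition: Cross-Over [Figure, continued: site 1 (pages a to e) and site 2 (pages f to i), each assigned to one crawler process (C), with a partition boundary between them] 14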

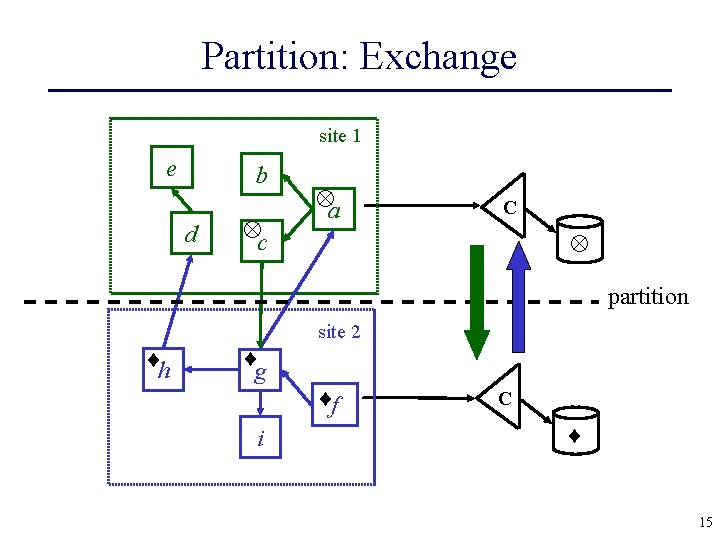

Partition: Exchange [Figure: site 1 (pages a to e) and site 2 (pages f to i), each assigned to one crawler process (C), with a partition boundary between them] 15

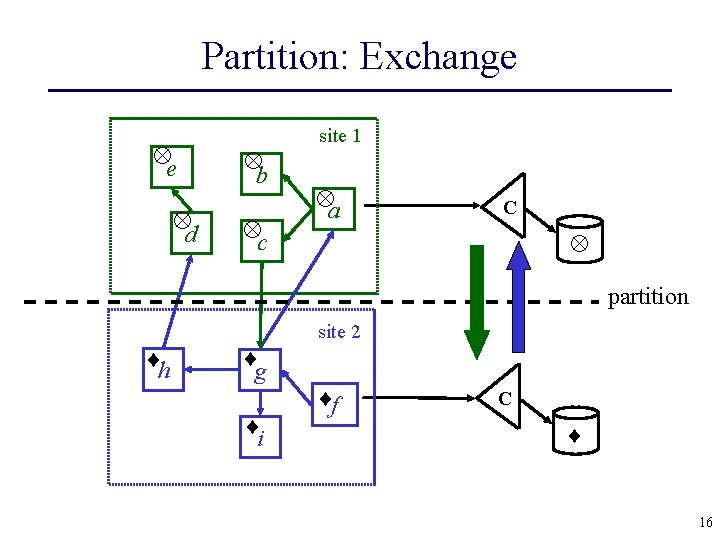

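Partition: Exchange [Figure, continued: site 1 (pages a to e) and site 2 (pages f to i), each assigned to one crawler process (C), with a partition boundary between them] 16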

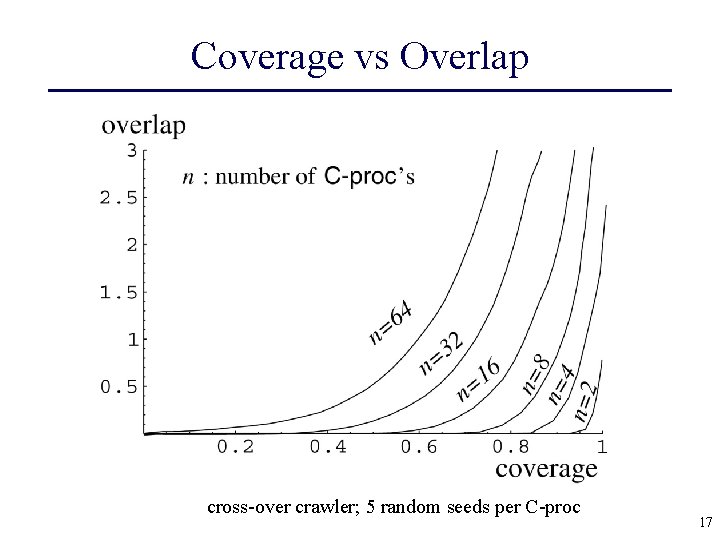

Coverage vs Overlap cross-over crawler; 5 random seeds per C-proc 17
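To make the three partition modes and the two metrics concrete, here is a small simulation. It rests on several assumptions. The link structure in `LINKS` is hypothetical, since the slides do not give the edges. Firewall mode is taken to drop links that leave the partition, cross-over to follow them only once the crawler's own queue is empty, and exchange to hand them to the owning crawler. Coverage is computed as unique pages fetched over all pages, and overlap as redundant downloads over unique pages, which is one common way to define them.

```python
from collections import deque

# Hypothetical links between the pages a..i shown on the slides.
LINKS = {
    "a": ["b", "c"], "b": ["d"], "c": ["i", "g"], "d": [], "e": [],
    "f": ["g", "h"], "g": [], "h": ["e"], "i": [],
}
SITE = {p: (1 if p in "abcde" else 2) for p in LINKS}  # crawler i owns site i

def crawl(mode, seeds):
    own = {c: deque(s) for c, s in seeds.items()}      # URLs in own partition
    foreign = {c: deque() for c in seeds}              # used by cross-over only
    seen = {c: set(s) for c, s in seeds.items()}
    fetched = {c: [] for c in seeds}
    busy = True
    while busy:                                        # round-robin over crawlers
        busy = False
        for c in seeds:
            if own[c]:
                page = own[c].popleft()
            elif foreign[c]:
                page = foreign[c].popleft()            # own partition exhausted
            else:
                continue
            busy = True
            fetched[c].append(page)
            for link in LINKS[page]:
                owner = SITE[link]
                if owner == c:
                    crawler, queue = c, own[c]
                elif mode == "exchange":
                    crawler, queue = owner, own[owner] # hand URL to its owner
                elif mode == "cross-over":
                    crawler, queue = c, foreign[c]     # keep it for later
                else:                                  # firewall: drop the link
                    continue
                if link not in seen[crawler]:
                    seen[crawler].add(link)
                    queue.append(link)
    return fetched

def coverage_and_overlap(fetched):
    downloads = [p for pages in fetched.values() for p in pages]
    unique = set(downloads)
    coverage = len(unique) / len(LINKS)
    overlap = (len(downloads) - len(unique)) / len(unique)
    return coverage, overlap

SEEDS = {1: ["a"], 2: ["f"]}
results = {m: coverage_and_overlap(crawl(m, SEEDS))
           for m in ("firewall", "cross-over", "exchange")}
for mode, (cov, ov) in results.items():
    print(f"{mode:10s} coverage={cov:.2f} overlap={ov:.2f}")
```

On this toy graph, firewall mode misses the pages reachable only through cross-site links, cross-over reaches them at the cost of one duplicate download, and exchange reaches them without duplicates but requires the crawlers to communicate.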

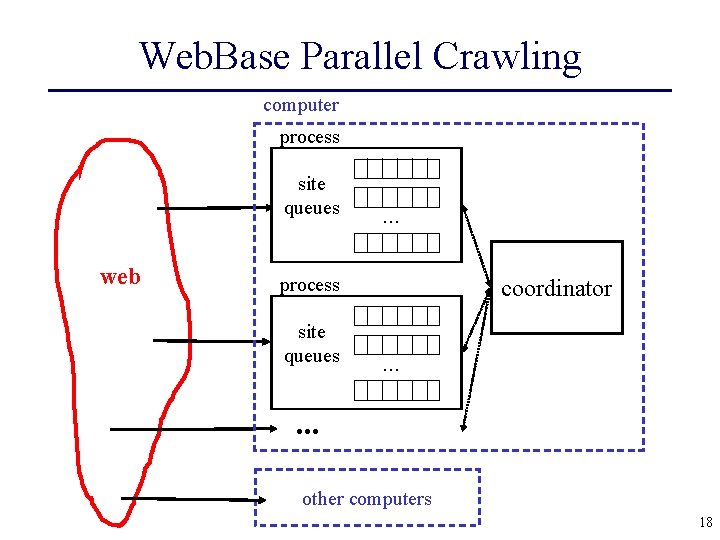

WebBase Parallel Crawling [Figure labels: computer; process; site queues; web; coordinator; other computers] 18

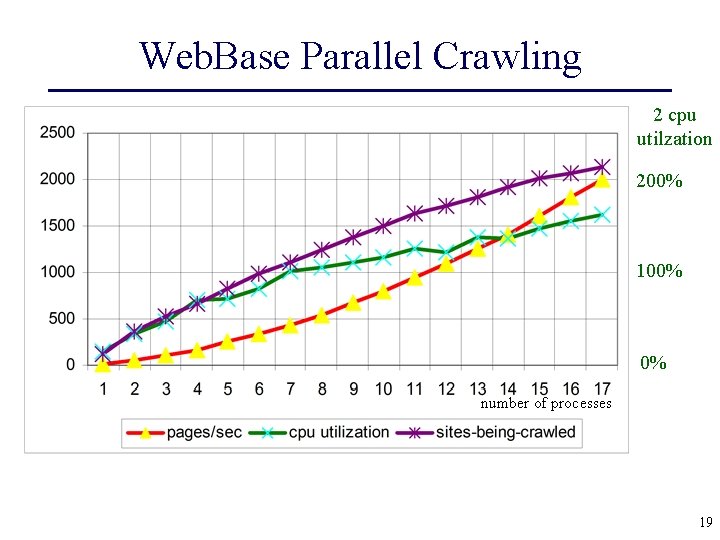

WebBase Parallel Crawling 2 [Plot: CPU utilization (0% to 200%) vs. number of processes] 19

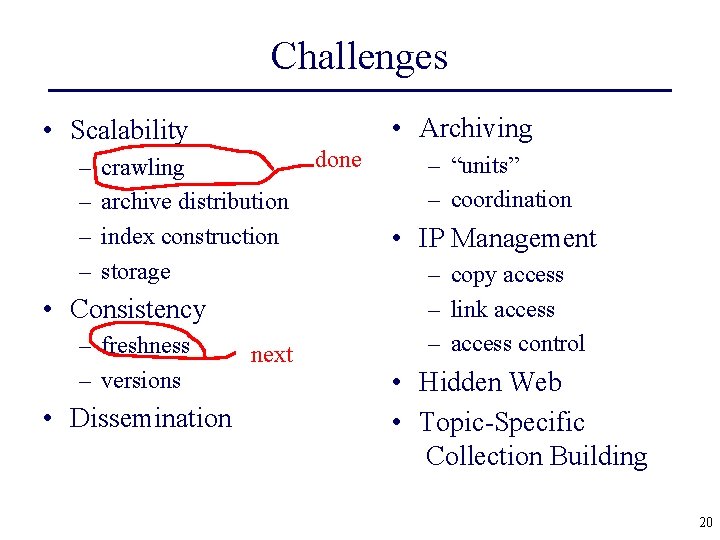

Challenges • Scalability – crawling – archive distribution – index construction – storage • Consistency – freshness – versions • Dissemination • Archiving – “units” – coordination • IP Management – copy access – link access – access control • Hidden Web • Topic-Specific Collection Building [Slide markers: done, next] 20

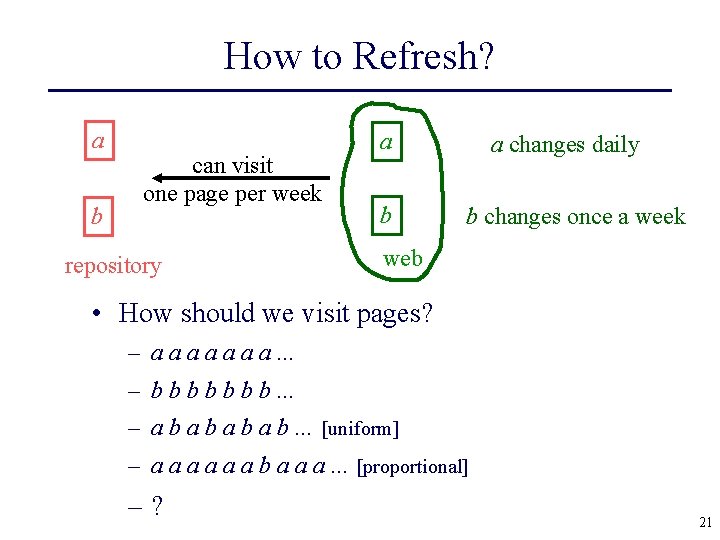

How to Refresh? • Repository holds copies of web pages a and b • Can visit one page per week • a changes daily • b changes once a week • How should we visit pages? – a a a a ... – b b b b ... – a b a b ... [uniform] – a a a b a a a ... [proportional] – ? 21
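One way to compare the candidate schedules is to compute the expected freshness of the repository under each. The sketch below assumes that pages change as a Poisson process and that visits to a page are evenly spaced; neither assumption is stated on the slide. Under those assumptions, a page with change rate λ visited every I weeks is fresh a fraction (1 − e^(−λI)) / (λI) of the time. The 7:1 split used for the proportional policy is also an assumption derived from the stated change rates.

```python
import math

def expected_freshness(change_rate, visit_rate):
    """Long-run fraction of time the local copy is up to date
    (Poisson changes, evenly spaced visits; both are modeling assumptions)."""
    if visit_rate == 0:
        return 0.0                     # never refreshed: stale in the long run
    x = change_rate / visit_rate       # expected changes between two visits
    return (1 - math.exp(-x)) / x

CHANGE_RATE = {"a": 7.0, "b": 1.0}     # changes per week: a daily, b weekly

# Visits per week for each page; every policy spends one visit per week.
POLICIES = {
    "only a":       {"a": 1.0,   "b": 0.0},
    "only b":       {"a": 0.0,   "b": 1.0},
    "uniform":      {"a": 0.5,   "b": 0.5},
    "proportional": {"a": 7 / 8, "b": 1 / 8},
}

def average_freshness(visits):
    total = sum(expected_freshness(CHANGE_RATE[p], visits[p]) for p in CHANGE_RATE)
    return total / len(CHANGE_RATE)

scores = {name: average_freshness(v) for name, v in POLICIES.items()}
for name, score in scores.items():
    print(f"{name:12s} {score:.3f}")
```

In this model the uniform schedule scores higher than the proportional one, because visits spent on the fast-changing page a buy very little freshness.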

Using WebBase • Fast PageRank • Complex Queries 22

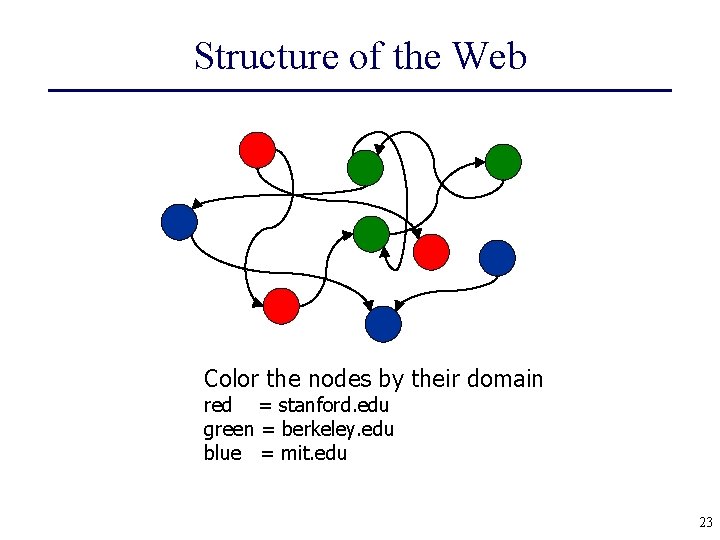

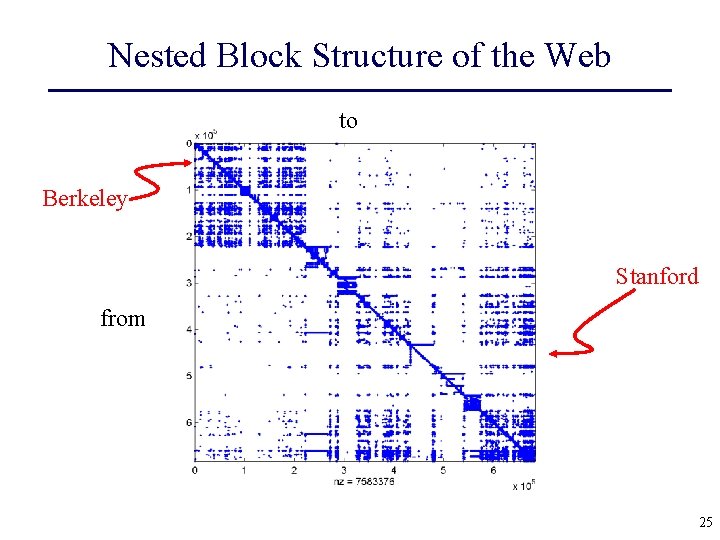

Structure of the Web • Color the nodes by their domain – red = stanford.edu – green = berkeley.edu – blue = mit.edu 23

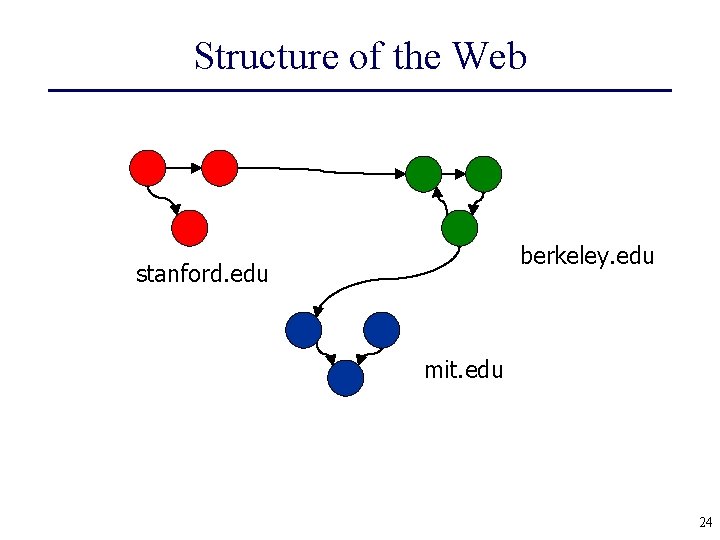

Structure of the Web [Figure labels: berkeley.edu, stanford.edu, mit.edu] 24

Nested Block Structure of the Web [Figure: link matrix with axes labeled from and to; blocks labeled Berkeley and Stanford] 25

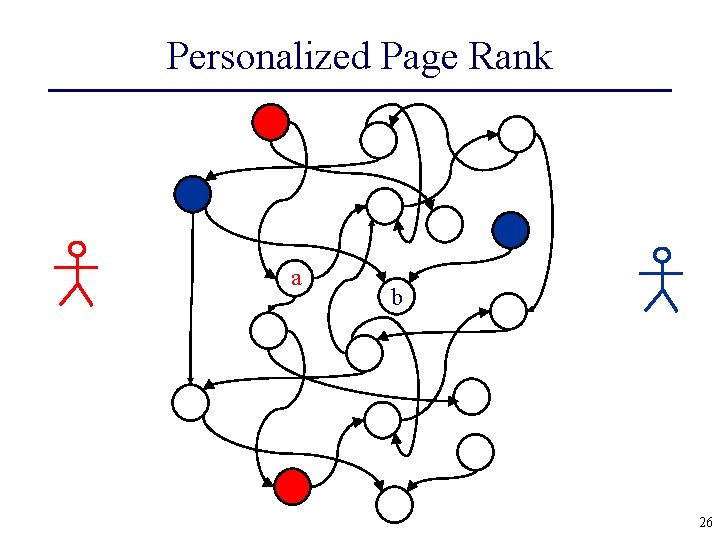

Personalized PageRank a b 26
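As a reminder of what personalization means here, the sketch below runs the standard PageRank power iteration with a non-uniform teleport (preference) vector. It is a generic textbook formulation, not the fast algorithm the talk refers to; the toy graph, the damping factor of 0.85, and the handling of dangling pages are all assumptions.

```python
def personalized_pagerank(links, preference, damping=0.85, iterations=50):
    """Power iteration; `preference` maps pages to teleport probabilities (sum 1)."""
    nodes = list(links)
    rank = {n: preference.get(n, 0.0) for n in nodes}
    for _ in range(iterations):
        nxt = {n: (1 - damping) * preference.get(n, 0.0) for n in nodes}
        for n in nodes:
            out = links[n]
            if out:
                share = damping * rank[n] / len(out)
                for m in out:
                    nxt[m] += share
            else:
                # Dangling page: return its rank through the preference vector.
                for m in nodes:
                    nxt[m] += damping * rank[n] * preference.get(m, 0.0)
        rank = nxt
    return rank

# Hypothetical three-page graph.
TOY_LINKS = {"a": ["b"], "b": ["a", "c"], "c": ["a"]}

rank_for_a = personalized_pagerank(TOY_LINKS, {"a": 1.0})
rank_for_c = personalized_pagerank(TOY_LINKS, {"c": 1.0})
print(rank_for_a)
print(rank_for_c)
```

The same graph yields different rankings for different preference vectors: a user whose teleport set is page c sees c ranked higher than a user whose teleport set is page a.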

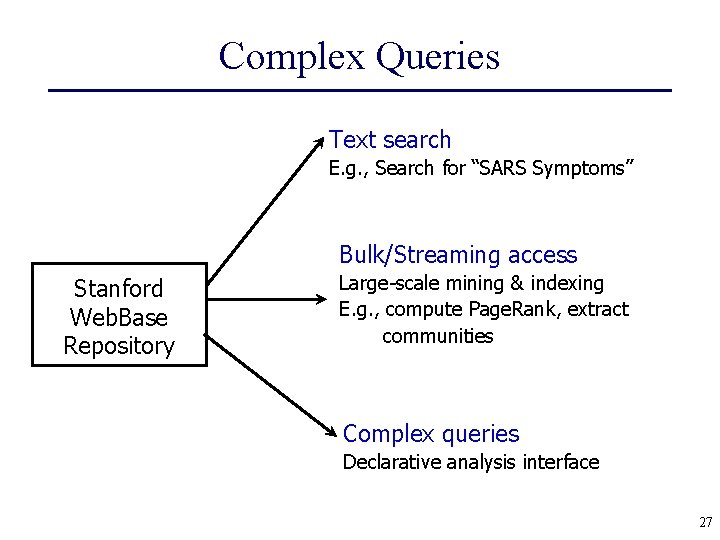

Complex Queries • Stanford WebBase Repository – Text search (e.g., search for “SARS Symptoms”) – Bulk/streaming access for large-scale mining & indexing (e.g., compute PageRank, extract communities) – Complex queries through a declarative analysis interface 27

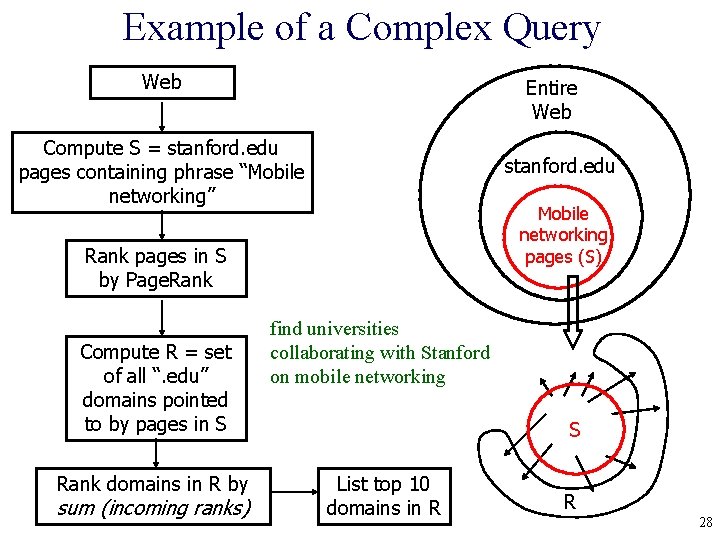

Example of a Complex Query • Goal: find universities collaborating with Stanford on mobile networking • Compute S = stanford.edu pages containing phrase “Mobile networking” • Rank pages in S by PageRank • Compute R = set of all “.edu” domains pointed to by pages in S • Rank domains in R by sum (incoming ranks) • List top 10 domains in R 28
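The query steps on this slide can be traced on a toy repository. Everything in `PAGES` (URLs, text, links, PageRank values) is made up for illustration, and the helper names are hypothetical. The sketch reads "sum (incoming ranks)" as the sum of the PageRank of the pages in S that link into a domain, counting each page once per domain; that reading is an assumption.

```python
from collections import defaultdict
from urllib.parse import urlsplit

# Hypothetical repository: URL -> (text, outgoing links, PageRank).
PAGES = {
    "http://cs.stanford.edu/mobile": (
        "Mobile networking research group",
        ["http://www.berkeley.edu/nets", "http://www.mit.edu/wireless"], 0.30),
    "http://ee.stanford.edu/labs": (
        "Projects on mobile networking and radios",
        ["http://www.berkeley.edu/nets", "http://example.com/vendor"], 0.20),
    "http://www.stanford.edu/news": (
        "Campus news", ["http://www.mit.edu/wireless"], 0.40),
    "http://www.berkeley.edu/nets": (
        "Mobile networking at Berkeley", [], 0.10),
}

def edu_domain(url):
    """Return e.g. 'stanford.edu' for a .edu host, else None."""
    parts = (urlsplit(url).hostname or "").split(".")
    return ".".join(parts[-2:]) if len(parts) >= 2 and parts[-1] == "edu" else None

def complex_query(pages, phrase="mobile networking", top_k=10):
    # S: stanford.edu pages containing the phrase, ranked by PageRank.
    s = [u for u, (text, _, _) in pages.items()
         if edu_domain(u) == "stanford.edu" and phrase in text.lower()]
    s.sort(key=lambda u: pages[u][2], reverse=True)
    # R: .edu domains pointed to by pages in S, scored by summed source rank.
    # Note: stanford.edu itself is not filtered out, as the slide does not say to.
    scores = defaultdict(float)
    for u in s:
        _, links, rank = pages[u]
        for d in {edu_domain(link) for link in links}:
            if d is not None:
                scores[d] += rank
    r = sorted(scores.items(), key=lambda kv: kv[1], reverse=True)
    return s, r[:top_k]

S, top_domains = complex_query(PAGES)
print(S)
print(top_domains)
```

The query mixes text search (the phrase filter), link navigation (following out-links to domains), and ranking, which is why it does not fit a plain keyword-search interface.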

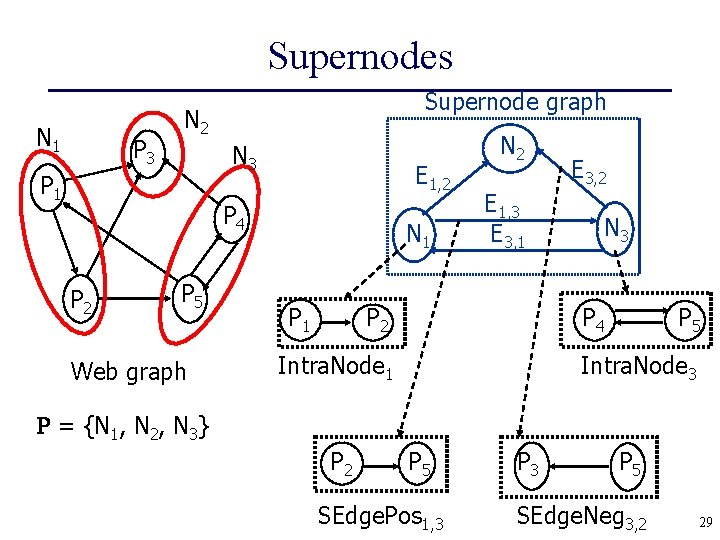

Supernodes [Figure: a web graph over pages P1 to P5 partitioned into {N1, N2, N3}; the supernode graph over N1, N2, N3 with superedges E1,2, E1,3, E3,1, E3,2; intranode graphs IntraNode1 and IntraNode3; superedge graphs SEdgePos1,3 and SEdgeNeg3,2] 29
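The two-level idea in this figure can be sketched as follows: given a page-level graph and a partition of pages into supernodes, collapse links into superedges and keep the page-level detail in small per-node and per-edge structures. The page links and the assignment of pages to N1, N2, N3 below are hypothetical, chosen only so that the resulting superedges match the labels in the figure. The positive and negative superedge encodings (SEdgePos, SEdgeNeg) are not reproduced; the sketch simply stores the page-level links for each superedge.

```python
from collections import defaultdict

# Hypothetical page-level graph and partition.
WEB_GRAPH = {
    "P1": ["P2", "P3"],
    "P2": ["P4"],
    "P3": [],
    "P4": ["P5", "P3"],
    "P5": ["P1"],
}
PARTITION = {"N1": ["P1", "P2"], "N2": ["P3"], "N3": ["P4", "P5"]}

def build_supernode_graph(web_graph, partition):
    node_of = {page: node for node, pages in partition.items() for page in pages}
    superedges = set()                   # edges of the supernode graph
    intranode = defaultdict(list)        # links inside one supernode
    superedge_links = defaultdict(list)  # page-level links behind each superedge
    for src, targets in web_graph.items():
        for dst in targets:
            n_src, n_dst = node_of[src], node_of[dst]
            if n_src == n_dst:
                intranode[n_src].append((src, dst))
            else:
                superedges.add((n_src, n_dst))
                superedge_links[(n_src, n_dst)].append((src, dst))
    return superedges, dict(intranode), dict(superedge_links)

superedges, intranode, superedge_links = build_supernode_graph(WEB_GRAPH, PARTITION)
print(sorted(superedges))
print(intranode)
print(superedge_links)
```

The supernode graph is small enough to stay in memory, so a navigation query can first decide which supernodes are relevant and then load only the matching intranode and superedge structures.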

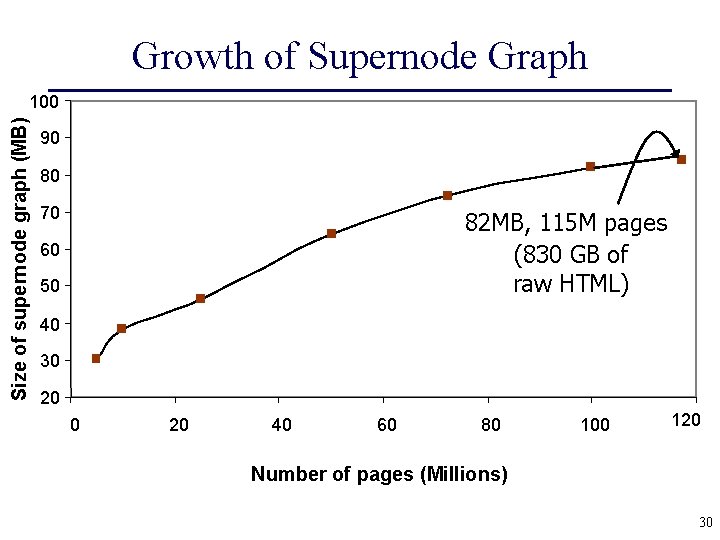

Growth of Supernode Graph [Plot: size of supernode graph (MB) vs. number of pages (millions); annotated point: 82 MB, 115 M pages (830 GB of raw HTML)] 30

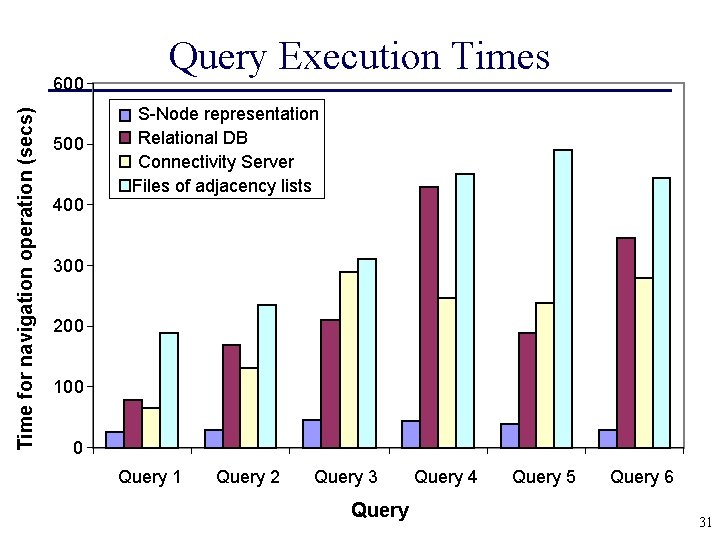

Query Execution Times [Bar chart: time for navigation operation (secs), 0 to 600, for Query 1 through Query 6; series: S-Node representation, Relational DB, Connectivity Server, Files of adjacency lists] 31

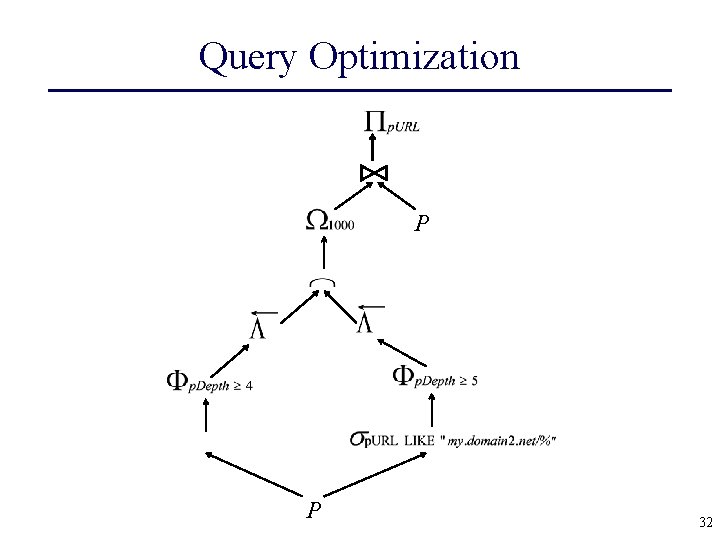

Query Optimization P P 32

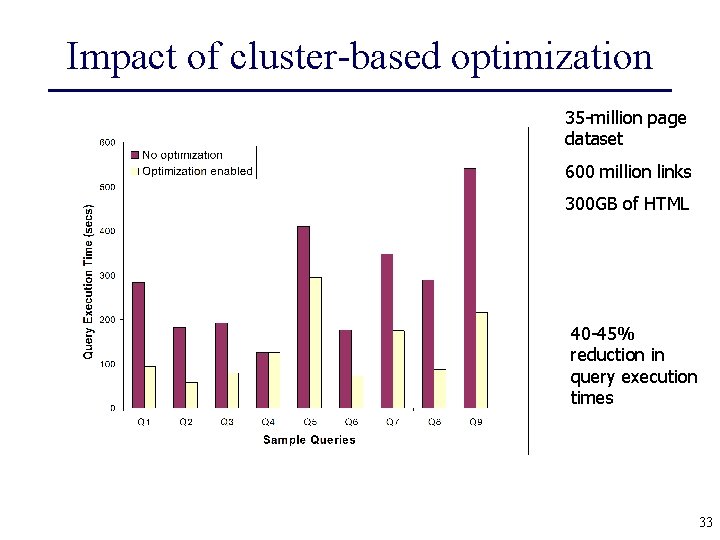

Impact of cluster-based optimization • 35-million page dataset • 600 million links • 300 GB of HTML • 40-45% reduction in query execution times 33

Conclusion (So Far) • Web is universal information resource • WebBase exploits this resource • WebBase Challenges: – scalability, consistency, complex queries... • The Future for WebBase (and clones)?? 34

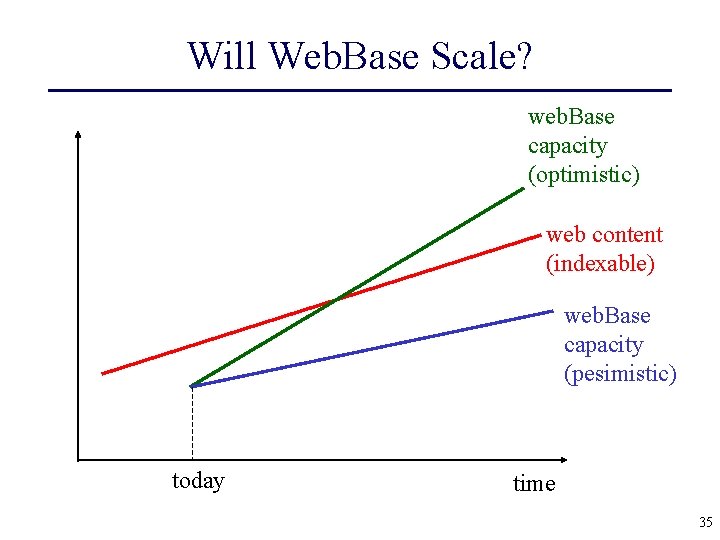

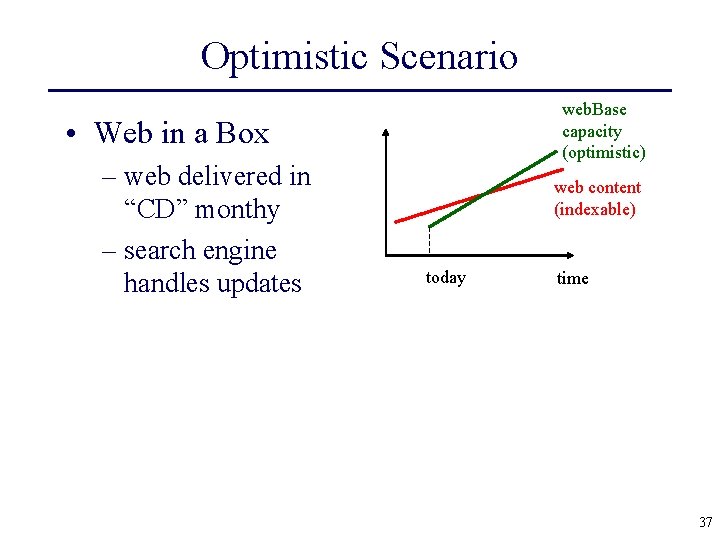

Will WebBase Scale? [Plot over time, with today marked: WebBase capacity (optimistic), web content (indexable), WebBase capacity (pessimistic)] 35

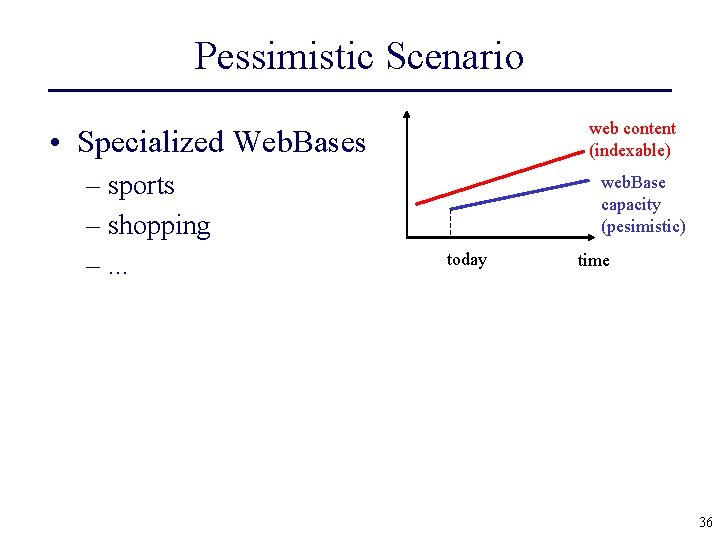

Pessimistic Scenario • Specialized WebBases – sports – shopping – ... [Plot over time, with today marked: web content (indexable) vs. WebBase capacity (pessimistic)] 36

Optimistic Scenario • Web in a Box – web delivered in “CD” monthly – search engine handles updates [Plot over time, with today marked: WebBase capacity (optimistic) vs. web content (indexable)] 37

Legal Issues? • Is WebBase legal? – copies – links, deep linking • International regulations 38

Biasing Results • How long will Google, Altavista, etc. resist “temptations”? • Biasing Crawler • Link and Content Spam 39

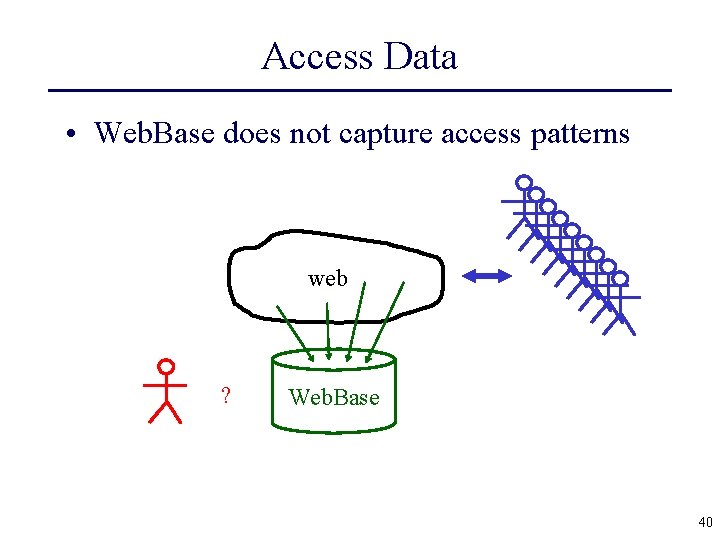

Access Data • WebBase does not capture access patterns [Figure labels: web; ?; WebBase] 40

Semantic Web? • Will tags be generated? • By whom? • Agreement? [Figure labels: semantic tags; web; ?; WebBase] 41

Future Technical Challenges • Incremental Updates • Query Optimization • Crawling Deep Web 42

Final Conclusion • Many challenges ahead... • Additional information: Google: Stanford WebBase WEB PAGE 43

- Slides: 43