Intelligent Crawling

Junghoo Cho, Hector Garcia-Molina
Stanford InfoLab

What is a crawler?

- A program that automatically retrieves pages from the Web.
- Widely used by search engines.

Challenges

- There are many pages on the Web (major search engines have indexed more than 100 M pages).
- The size of the Web is growing enormously.
- Most pages are not very interesting.

In most cases, it is too costly or not worthwhile to visit the entire Web space.

Good crawling strategy

- Make the crawler visit “important pages” first.
  - Saves network bandwidth
  - Saves storage space and management cost
  - Serves quality pages to the client application

Outline

- Importance metrics: what are important pages?
- Crawling models: how is a crawler evaluated?
- Experiments
- Conclusion & future work

Importance metric

The metric for determining whether a page is HOT:

- Similarity to a driving query
- Location metric
- Backlink count
- PageRank

Similarity to a driving query

Examples: “Sports”, “Bill Clinton” (pages related to a specific topic)

- Importance is measured by the closeness of the page to the topic (e.g., the number of occurrences of the topic word in the page).
- Personalized crawler
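
As a rough illustration of the word-count measure mentioned above, here is a minimal sketch. The function names and the `threshold` value are hypothetical (the slides do not specify a cutoff for HOT pages), and whole-word, case-insensitive matching is an assumption.

```python
import re


def topic_similarity(page_text: str, topic: str) -> int:
    """Count case-insensitive, whole-word occurrences of `topic` in `page_text`."""
    pattern = r"\b" + re.escape(topic) + r"\b"
    return len(re.findall(pattern, page_text, flags=re.IGNORECASE))


def is_hot(page_text: str, topic: str, threshold: int = 2) -> bool:
    """Treat a page as HOT when the topic occurs at least `threshold` times.

    The threshold is an assumed parameter, not a value from the slides.
    """
    return topic_similarity(page_text, topic) >= threshold
```

A multi-word query such as "Bill Clinton" is matched as a phrase here; a real crawler would probably use a richer text-similarity measure.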

Backlink-based metric

- Backlink count
  - Number of pages pointing to the page
  - Citation metric
- PageRank
  - Weighted backlink count
  - Weight is iteratively defined

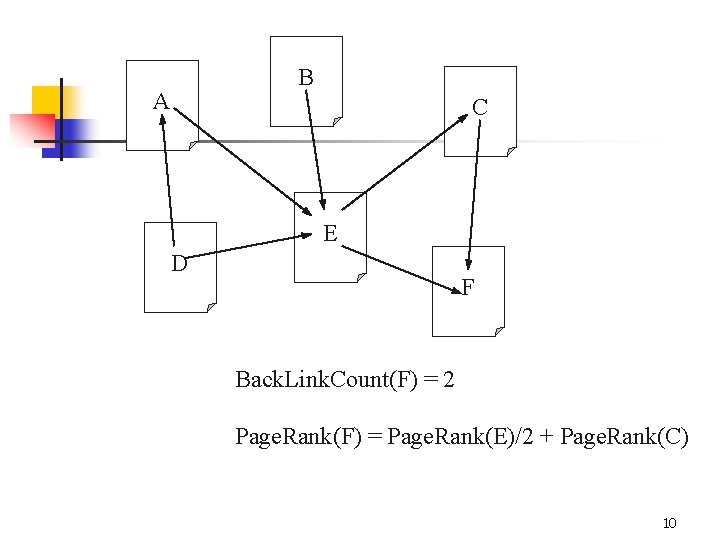

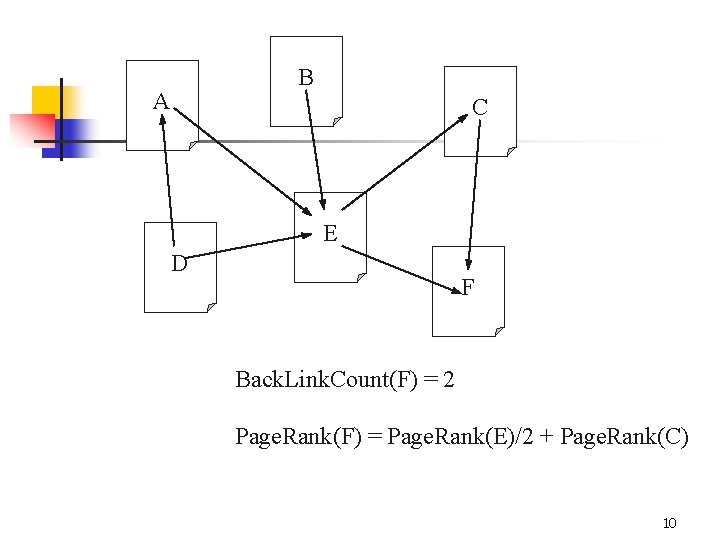

Example link graph with pages A, B, C, D, E, F:

- BacklinkCount(F) = 2
- PageRank(F) = PageRank(E)/2 + PageRank(C)
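
The two backlink-based metrics can be sketched as follows. The slide only tells us that F has two backlinks, that E has two outgoing links and that C has one; every other edge in `LINKS` is a hypothetical choice made so the example runs. The iteration is a simplified PageRank without a damping factor, which is enough to reproduce the relation on the slide but is not necessarily the exact variant the authors used.

```python
# Hypothetical link graph: page -> pages it links to.
# Only "C -> F" and "E -> F" (with E having two outlinks) come from the slide.
LINKS = {
    "A": ["B", "C"],
    "B": ["E"],
    "C": ["F"],
    "D": ["E"],
    "E": ["D", "F"],
    "F": ["A"],
}


def backlink_count(links, page):
    """Number of pages that link to `page`."""
    return sum(page in targets for targets in links.values())


def simple_pagerank(links, iterations=200):
    """Simplified PageRank: each page splits its rank evenly among its outlinks.

    Assumes every page has at least one outlink and omits damping.
    """
    rank = {page: 1.0 / len(links) for page in links}
    for _ in range(iterations):
        new_rank = {page: 0.0 for page in links}
        for source, targets in links.items():
            share = rank[source] / len(targets)
            for target in targets:
                new_rank[target] += share
        rank = new_rank
    return rank
```

Once the iteration has settled, `rank["F"]` equals `rank["E"] / 2 + rank["C"]` up to floating-point error, which is the "iteratively defined" weighting in miniature.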

Ordering metric

- The metric a crawler uses to “estimate” the importance of a page
- The ordering metric can differ from the importance metric

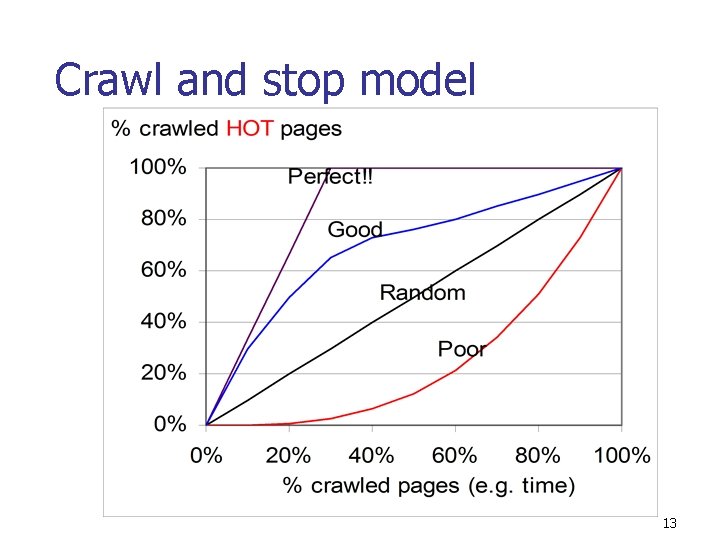

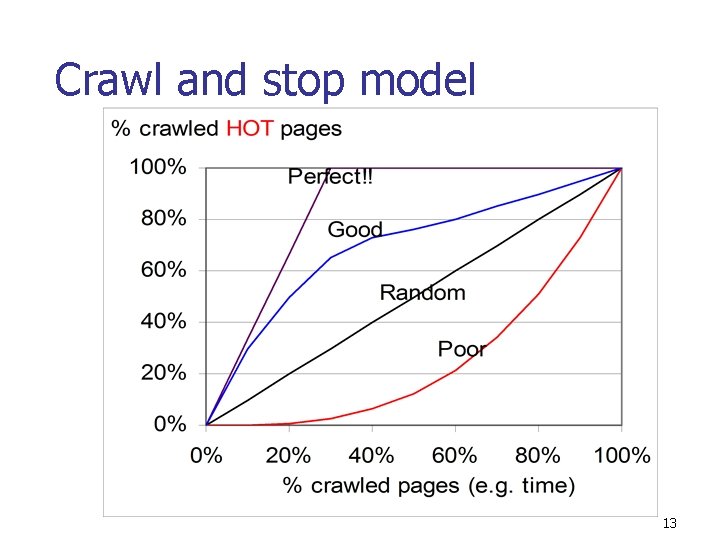

Crawling models

- Crawl and stop
  - Keep crawling until the local disk space is full.
- Limited buffer crawl
  - Keep crawling until the whole Web space is visited, throwing out seemingly unimportant pages.

Crawl and stop model

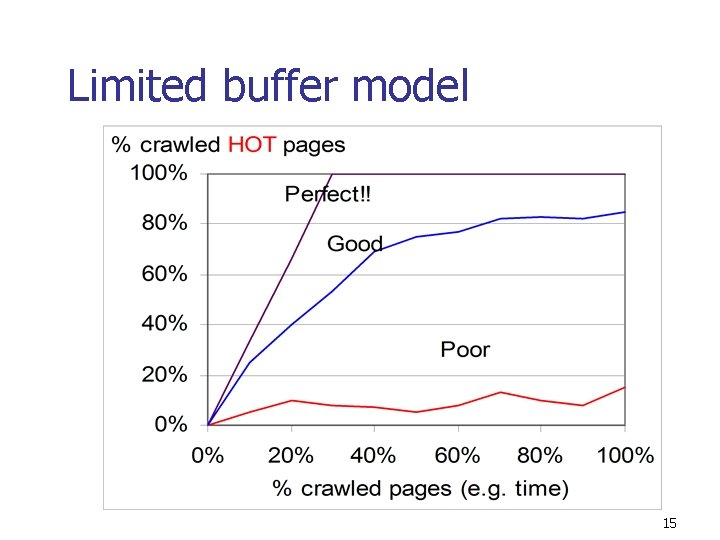

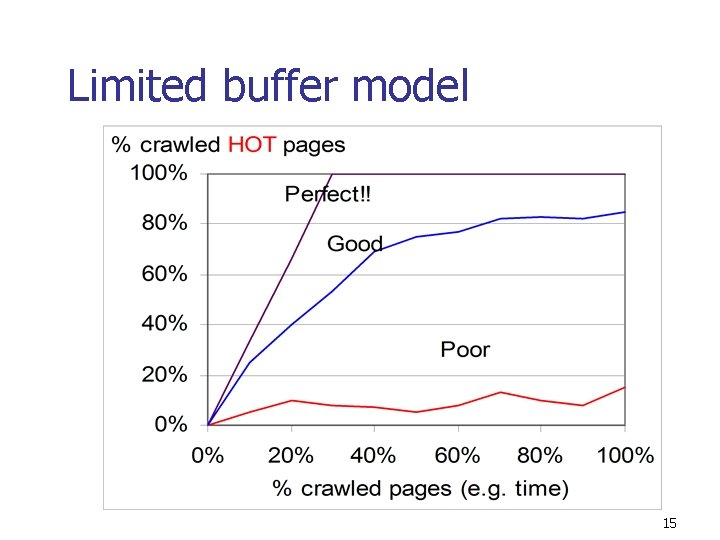
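
A minimal simulation of the crawl and stop model, assuming the Web is an in-memory dictionary and that "disk full" means a fixed page count (`capacity`). The graph `WEB`, the function names, and the tie-breaking rule (earliest discovered URL wins) are all hypothetical choices for illustration.

```python
# Hypothetical toy Web: page -> pages it links to.
WEB = {
    "seed": ["a", "b", "c"],
    "a": ["x"],
    "b": ["hub"],
    "c": ["hub"],
    "x": [],
    "hub": [],
}


def crawl_and_stop(links, seed, capacity, ordering_metric):
    """Crawl until `capacity` pages are stored, always picking the frontier URL
    with the highest ordering-metric value (ties go to the earliest discovered)."""
    crawled = []
    frontier = [seed]
    backlinks_seen = {}  # url -> backlinks observed from pages crawled so far
    while frontier and len(crawled) < capacity:
        url = max(frontier, key=lambda u: ordering_metric(u, backlinks_seen))
        frontier.remove(url)
        crawled.append(url)
        for target in links.get(url, []):
            backlinks_seen[target] = backlinks_seen.get(target, 0) + 1
            if target not in crawled and target not in frontier:
                frontier.append(target)
    return crawled


def by_backlinks(url, backlinks_seen):
    """Ordering metric: backlink count estimated from the crawl so far."""
    return backlinks_seen.get(url, 0)


def breadth_first(url, backlinks_seen):
    """Ordering metric: constant score, so discovery order decides."""
    return 0
```

With `capacity=5`, the backlink ordering picks `hub` (two known backlinks) ahead of `x`, while the breadth-first ordering picks `x` because it was discovered earlier.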

Limited buffer model

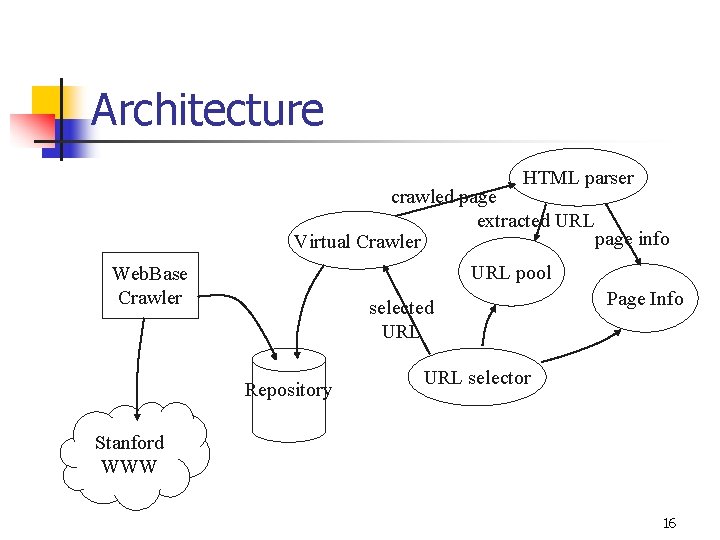

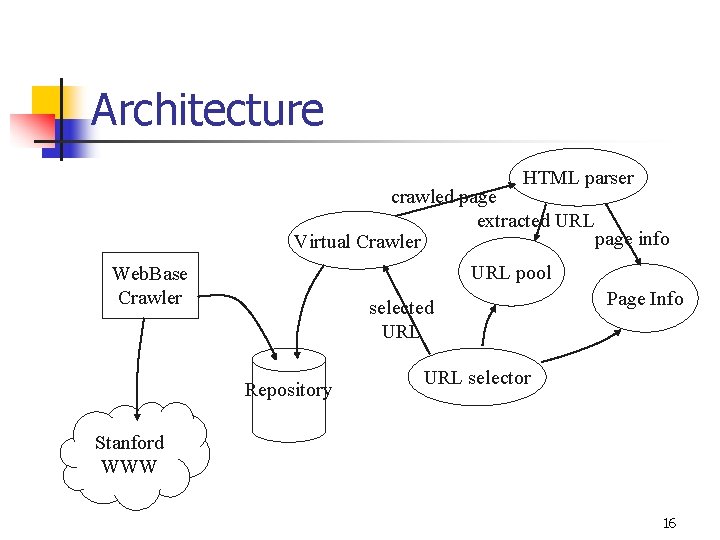
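
The limited buffer model can be sketched in the same spirit: the crawler visits every reachable page but keeps only the `buffer_size` most important ones. The graph, the scores in `SCORES`, and the breadth-first visiting order are assumptions for illustration; in practice the importance of a page would have to be estimated during the crawl.

```python
import heapq
from collections import deque

# Hypothetical toy Web and importance scores.
WEB = {
    "seed": ["a", "b", "c"],
    "a": ["x"],
    "b": ["hub"],
    "c": ["hub"],
    "x": [],
    "hub": [],
}
SCORES = {"seed": 3, "a": 1, "b": 4, "c": 2, "x": 0, "hub": 5}


def limited_buffer_crawl(links, seed, buffer_size, importance):
    """Visit every reachable page, keeping only the `buffer_size` most
    important pages; the least important page is evicted when the buffer overflows."""
    buffer = []  # min-heap of (importance, url): least important page on top
    visited = {seed}
    queue = deque([seed])
    while queue:
        url = queue.popleft()
        heapq.heappush(buffer, (importance(url), url))
        if len(buffer) > buffer_size:
            heapq.heappop(buffer)  # throw out the least important page
        for target in links.get(url, []):
            if target not in visited:
                visited.add(target)
                queue.append(target)
    return sorted(url for _, url in buffer)


kept = limited_buffer_crawl(WEB, "seed", 3, SCORES.get)
```

Here `kept` holds the three highest-scoring pages even though all six were visited.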

Architecture

Diagram labels (figure not reproduced): WebBase Crawler, Virtual Crawler, HTML parser, URL pool, URL selector, Repository, Page Info, Stanford WWW. Labeled data flows: crawled page, extracted URL, page info, selected URL.

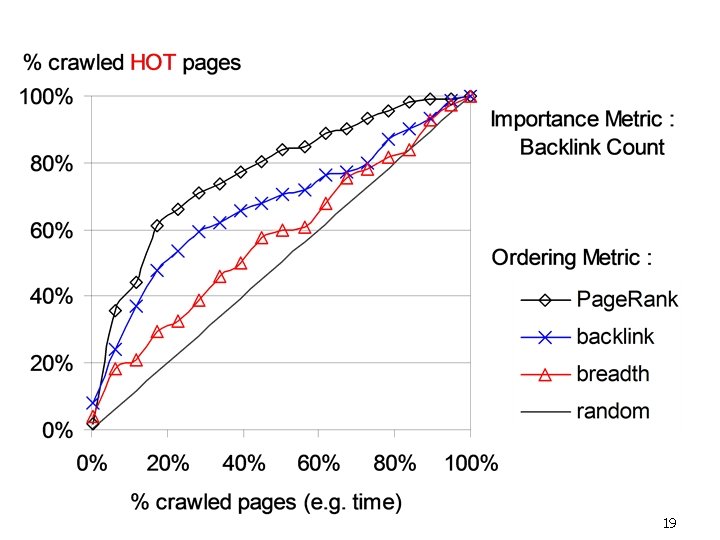

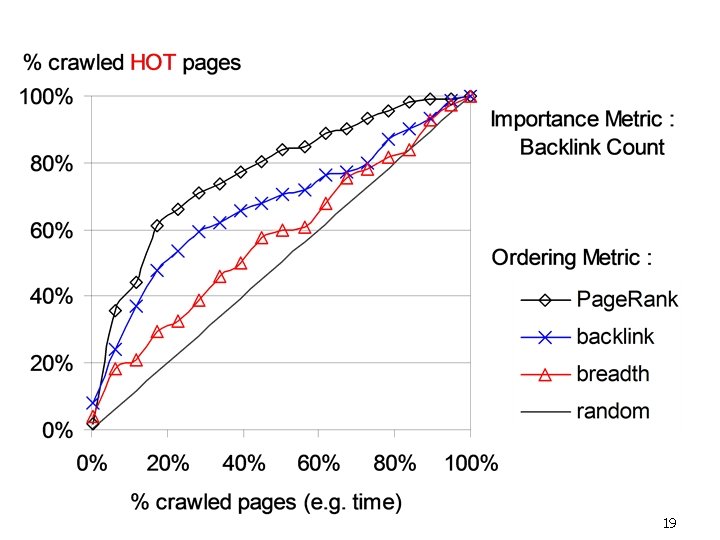

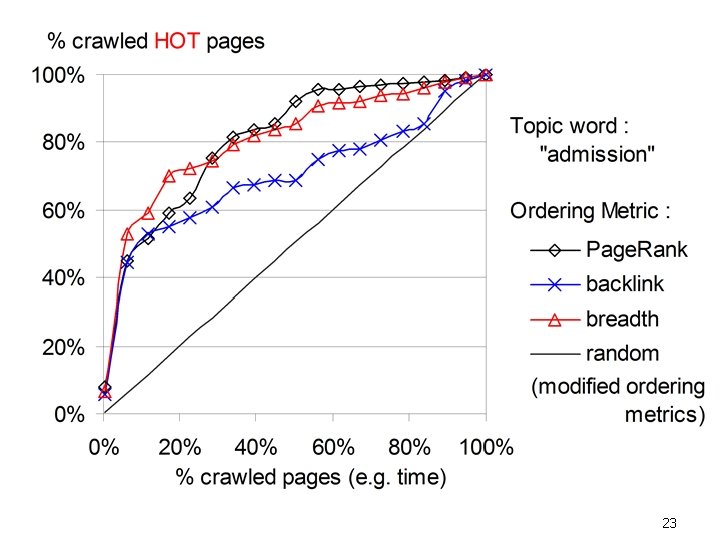

Experiments

- Backlink-based importance metric
  - Backlink count
  - PageRank
- Similarity-based importance metric
  - Similarity to a query word

Ordering metrics in experiments

- Breadth-first order
- Backlink count
- PageRank

Similarity-based crawling

- The content of a page is not available before it is visited.
- Essentially, the crawler has to “guess” the content of the page.
- More difficult than backlink-based crawling.

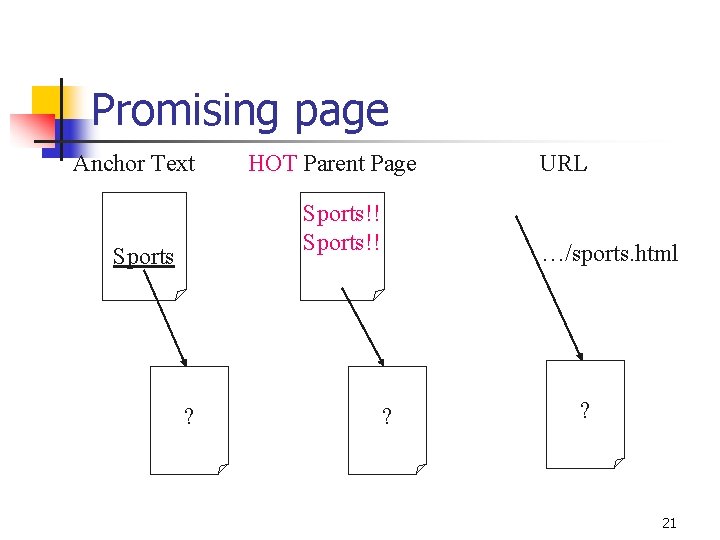

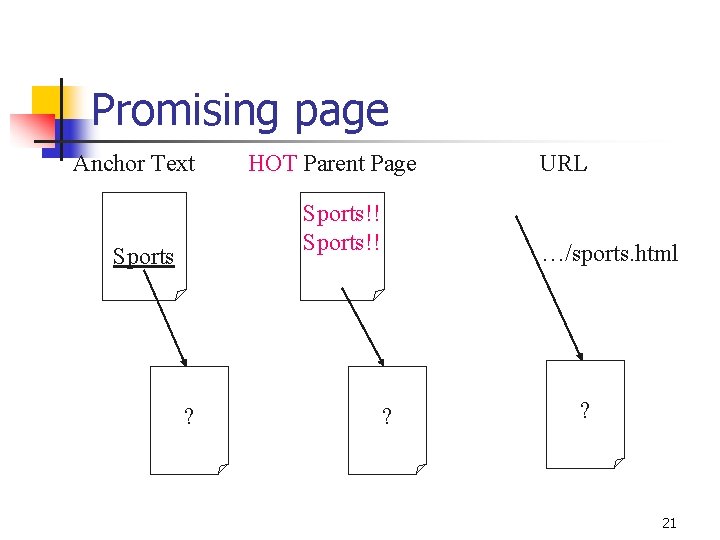

Promising page

Diagram labels (figure not reproduced): a HOT parent page; anchor text “Sports!!”; URL …/sports.html; unvisited pages marked “?”.

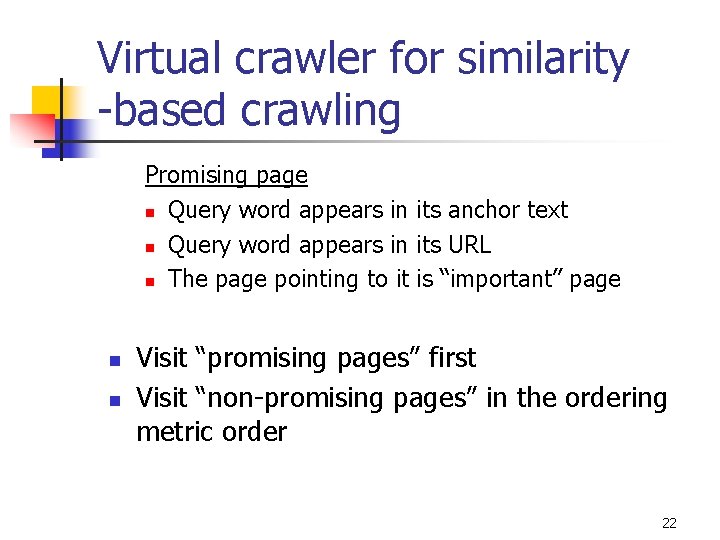

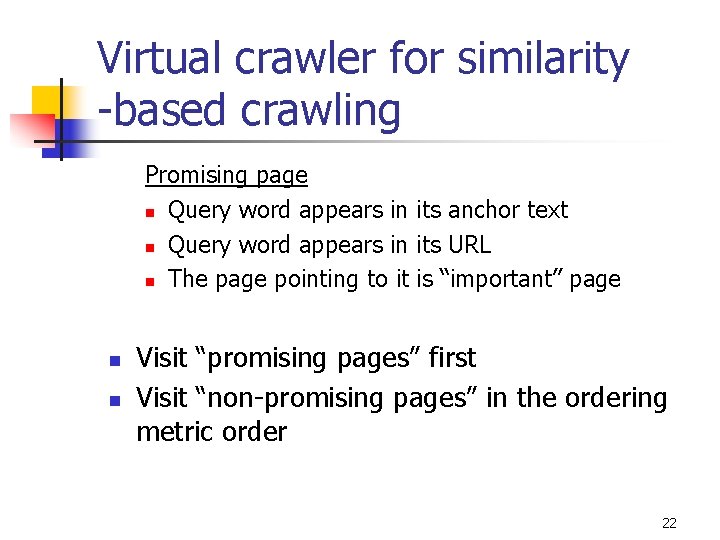

Virtual crawler for similarity-based crawling

Promising page:

- The query word appears in its anchor text
- The query word appears in its URL
- The page pointing to it is an “important” page

Crawling order:

- Visit “promising pages” first
- Visit “non-promising pages” in ordering-metric order
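
The promising-page rule above can be sketched as a frontier-ordering step. The `Candidate` record, its field names, the example URLs, and the use of plain substring matching are hypothetical; the slides do not say how the query word is matched or how promising pages are ordered among themselves (this sketch keeps them in discovery order).

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    """An unvisited URL plus what the crawler knows about it so far."""
    url: str
    anchor_text: str
    parent_is_hot: bool
    backlinks: int  # stand-in for the ordering-metric value


def is_promising(candidate: Candidate, query: str) -> bool:
    """Promising if the query word is in the anchor text or URL,
    or if the page pointing to it is already known to be important."""
    q = query.lower()
    return (
        q in candidate.anchor_text.lower()
        or q in candidate.url.lower()
        or candidate.parent_is_hot
    )


def order_frontier(candidates, query):
    """Promising pages first (discovery order), then the rest by ordering metric."""
    promising = [c for c in candidates if is_promising(c, query)]
    others = [c for c in candidates if not is_promising(c, query)]
    others.sort(key=lambda c: c.backlinks, reverse=True)
    return promising + others


frontier = [
    Candidate("http://example.com/news.html", "Latest news", False, 9),
    Candidate("http://example.com/sports.html", "Scores", False, 1),
    Candidate("http://example.com/a.html", "Sports!!", False, 0),
    Candidate("http://example.com/b.html", "misc", True, 0),
    Candidate("http://example.com/c.html", "misc", False, 3),
]
ordered_urls = [c.url for c in order_frontier(frontier, "sports")]
```

The three promising candidates come first even though `news.html` has the highest backlink count.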

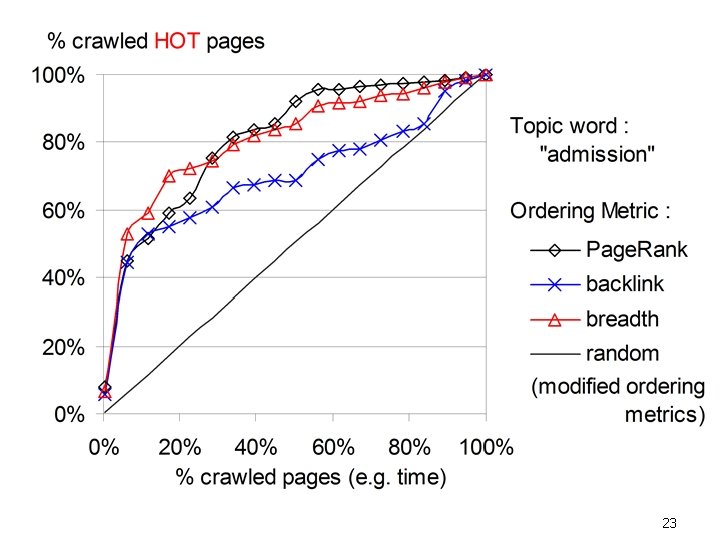

Conclusion

- PageRank is generally good as an ordering metric.
- By applying a good ordering metric, it is possible to gather important pages quickly.