Thinking Outside of the Tera-Scale Box
Piotr Luszczek

Brief History of Tera-flop: 1997 ASCI Red

Brief History of Tera-flop: 2007
• 1997: ASCI Red
• 2007: Intel Polaris

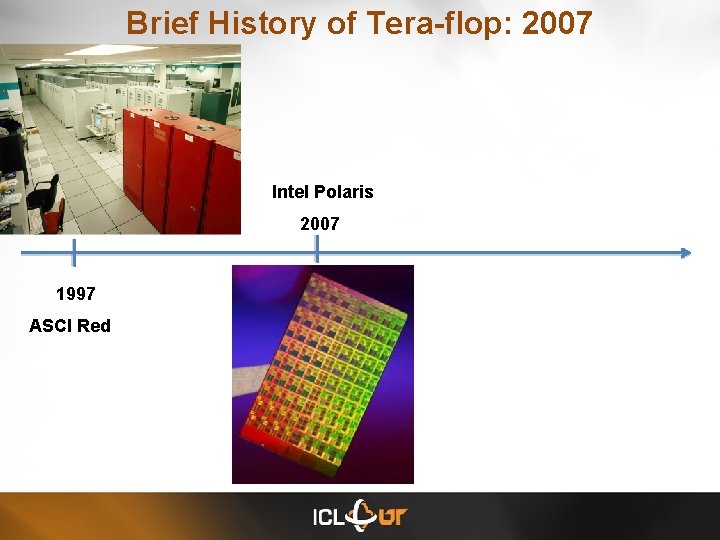

Brief History of Tera-flop: GPGPU
• 1997: ASCI Red
• 2007: Intel Polaris
• 2008: GPGPU

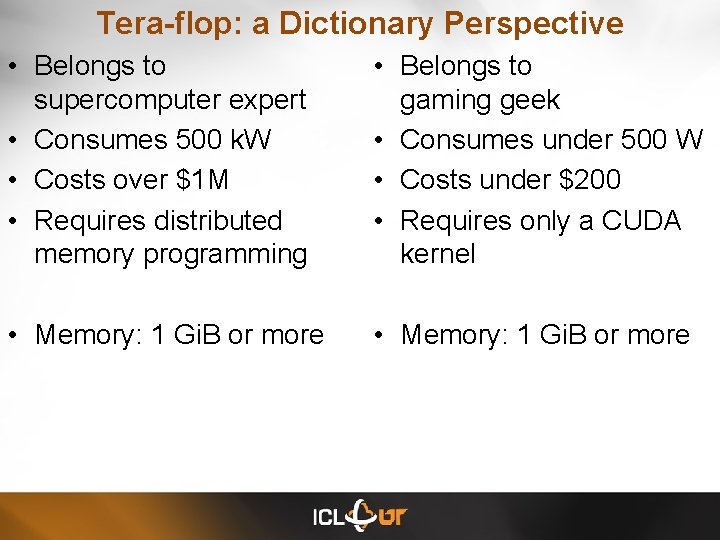

Tera-flop: a Dictionary Perspective

• Belongs to supercomputer expert
• Consumes 500 kW
• Costs over $1M
• Requires distributed memory programming

• Belongs to gaming geek
• Consumes under 500 W
• Costs under $200
• Requires only a CUDA kernel
• Memory: 1 GiB or more

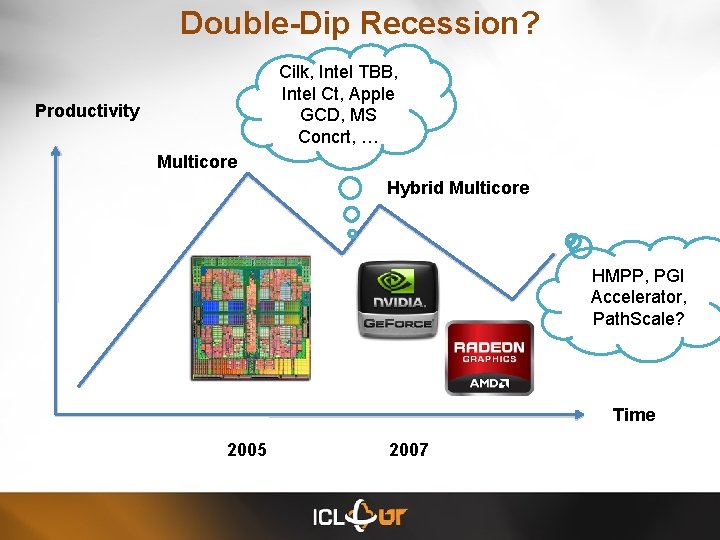

Double-Dip Recession?
Figure: productivity over time, with dips at 2005 and 2007.
• Multicore (2005): Cilk, Intel TBB, Intel Ct, Apple GCD, MS Concrt, …
• Hybrid Multicore (2007): HMPP, PGI Accelerator, PathScale?

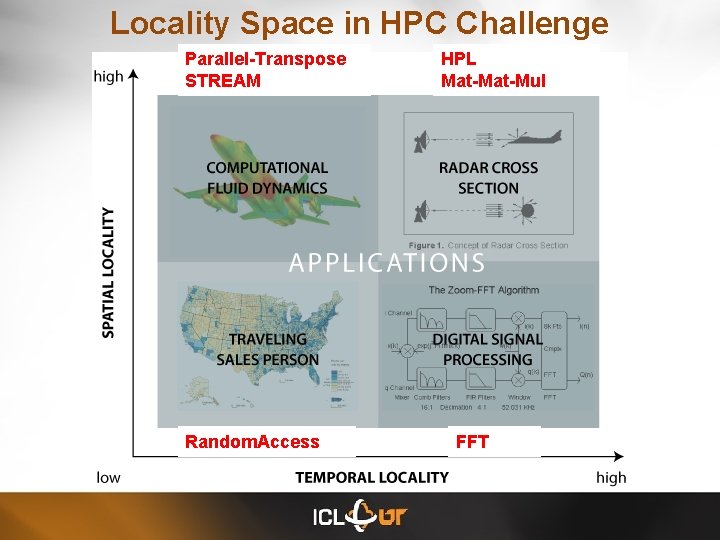

Locality Space in HPC Challenge
Kernels plotted: Parallel-Transpose, STREAM, RandomAccess, HPL, Mat-Mul, FFT

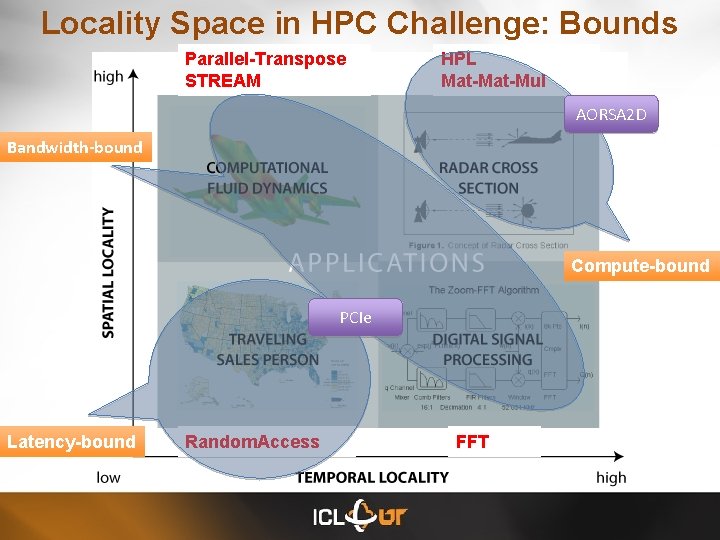

Locality Space in HPC Challenge: Bounds
Kernels plotted: Parallel-Transpose, STREAM, HPL, Mat-Mul, AORSA2D, RandomAccess, FFT
Regions marked: Bandwidth-bound, Compute-bound, PCIe, Latency-bound

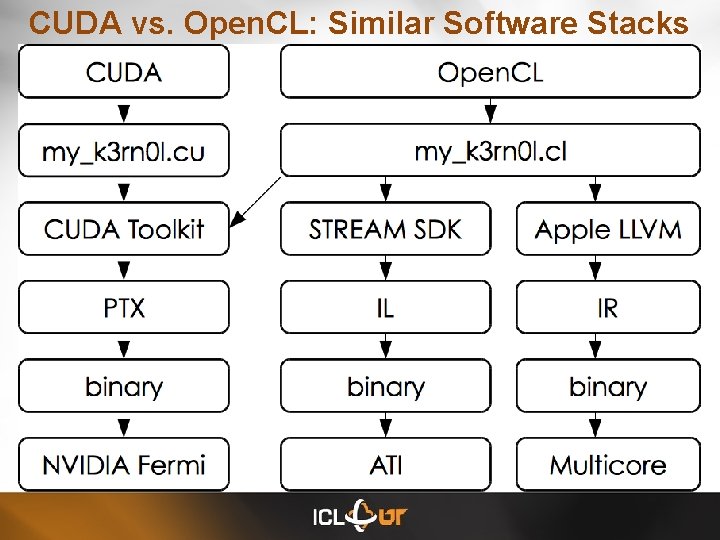

CUDA vs. OpenCL: Similar Software Stacks

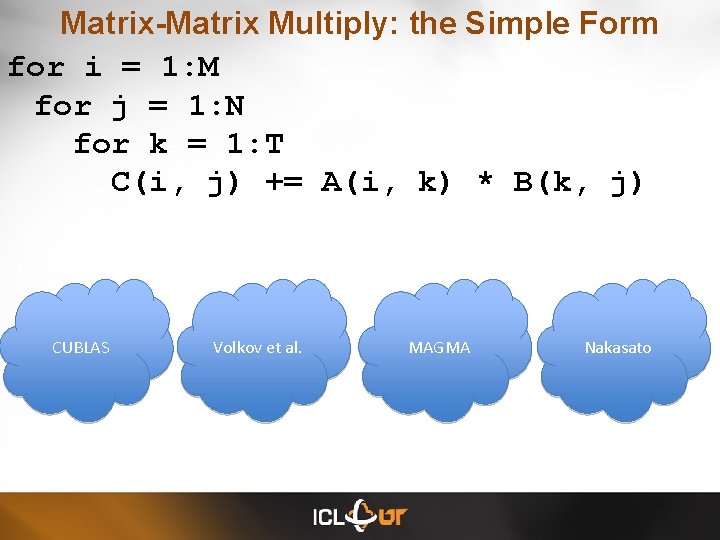

Matrix-Matrix Multiply: the Simple Form

    for i = 1:M
      for j = 1:N
        for k = 1:T
          C(i, j) += A(i, k) * B(k, j)

Implementations shown: CUBLAS, Volkov et al., MAGMA, Nakasato
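The simple form above can be written as a short runnable sketch. It uses plain Python lists rather than a tuned library, and the function name `matmul_simple` is chosen here for illustration. It is the reference triple loop only, not any of the tuned GPU kernels named on the slide.

```python
def matmul_simple(A, B):
    """Return C = A * B using the three nested loops from the slide.

    A is M x T and B is T x N, both given as lists of rows.
    """
    M, T, N = len(A), len(B), len(B[0])
    C = [[0.0] * N for _ in range(M)]
    for i in range(M):
        for j in range(N):
            for k in range(T):
                C[i][j] += A[i][k] * B[k][j]
    return C


A = [[1.0, 2.0], [3.0, 4.0]]
B = [[5.0, 6.0], [7.0, 8.0]]
print(matmul_simple(A, B))  # [[19.0, 22.0], [43.0, 50.0]]
```

The loop performs 2·M·N·T floating-point operations, which is the count behind the Gflop/s figures reported for matrix multiply.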

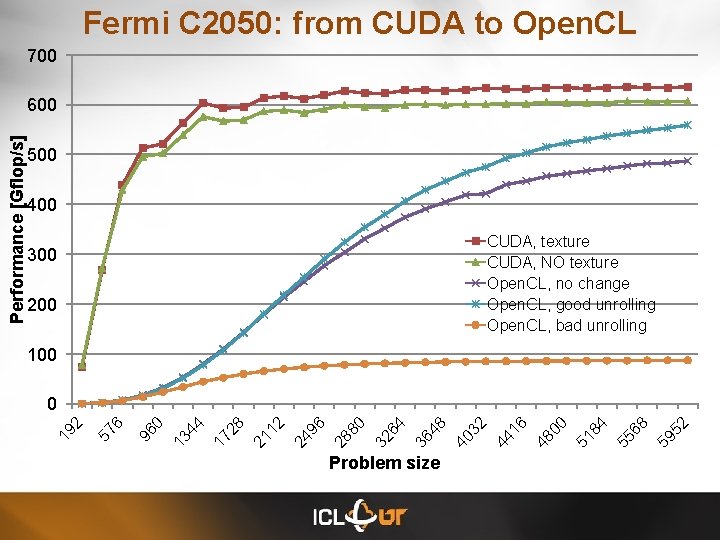

Fermi C2050: from CUDA to OpenCL
Plot: performance [Gflop/s] (0 to 700) versus problem size (192 to 5952).
Series: CUDA, texture; CUDA, NO texture; OpenCL, no change; OpenCL, good unrolling; OpenCL, bad unrolling

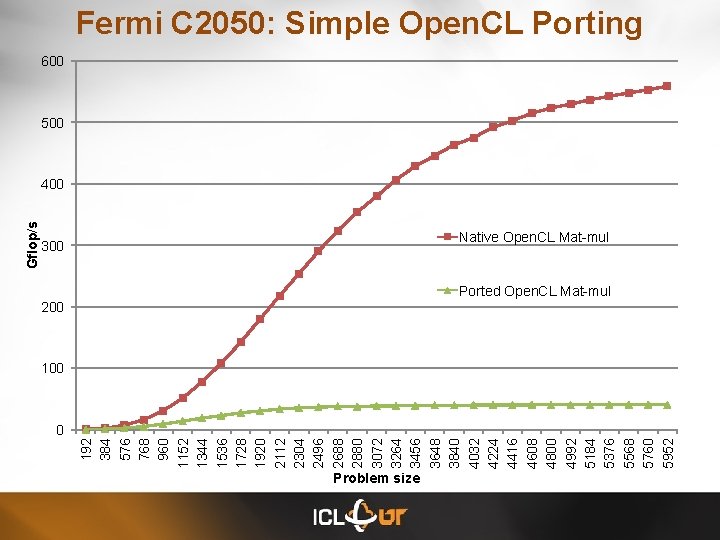

Fermi C2050: Simple OpenCL Porting
Plot: Gflop/s (0 to 600) versus problem size (192 to 5952).
Series: Native OpenCL Mat-mul; Ported OpenCL Mat-mul

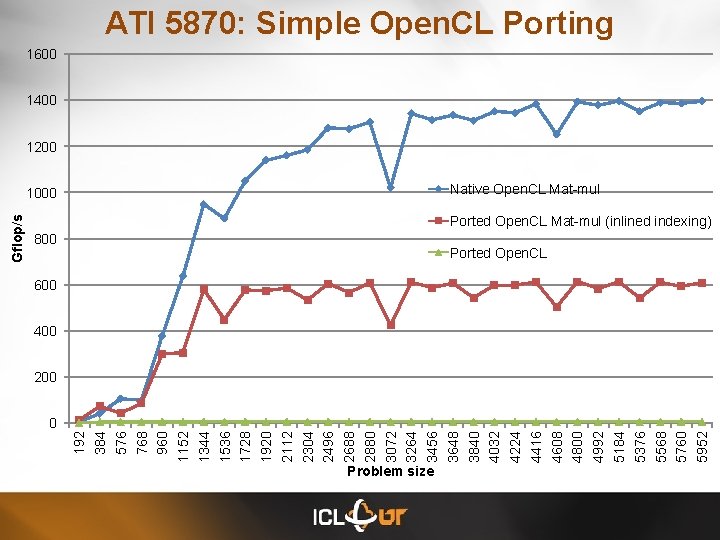

ATI 5870: Simple OpenCL Porting
Plot: Gflop/s (0 to 1600) versus problem size (192 to 5952).
Series: Native OpenCL Mat-mul; Ported OpenCL Mat-mul (inlined indexing); Ported OpenCL

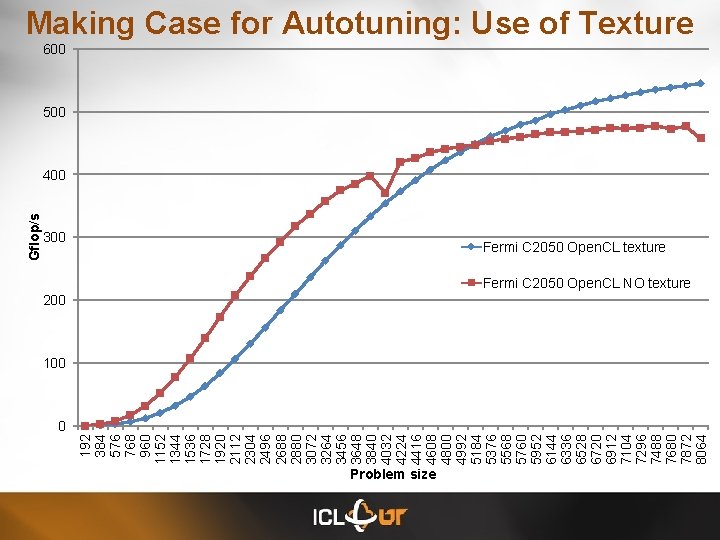

Making Case for Autotuning: Use of Texture
Plot: Gflop/s (0 to 600) versus problem size (192 to 8064).
Series: Fermi C2050 OpenCL texture; Fermi C2050 OpenCL NO texture

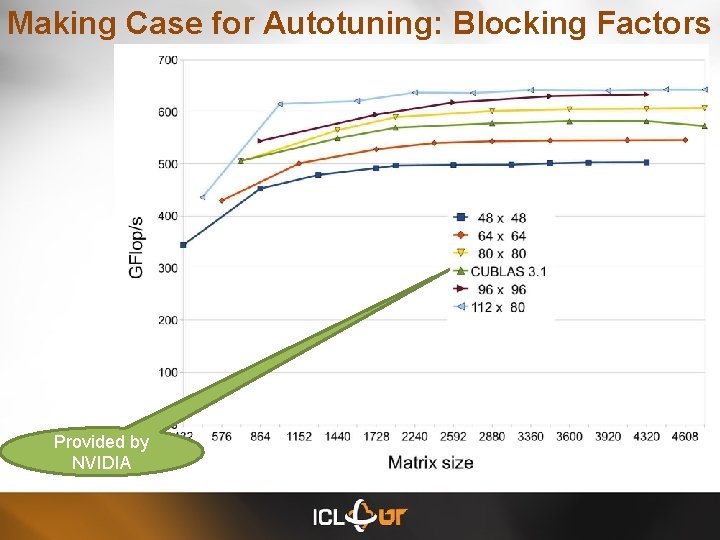

Making Case for Autotuning: Blocking Factors Provided by NVIDIA
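The slide's figure is not reproduced in this transcript, so the following is only a generic sketch of what autotuning a blocking factor means: time the same blocked kernel for several candidate block sizes and keep the fastest. The names `matmul_blocked` and `autotune`, the test matrices, and the candidate list are all assumptions made for illustration; a real GPU autotuner would generate and time CUDA or OpenCL kernels instead of Python loops.

```python
import time


def matmul_blocked(A, B, nb):
    """Square matrix multiply C = A * B, computed in nb x nb blocks."""
    n = len(A)
    C = [[0.0] * n for _ in range(n)]
    for ii in range(0, n, nb):
        for jj in range(0, n, nb):
            for kk in range(0, n, nb):
                # Multiply one block of A by one block of B.
                for i in range(ii, min(ii + nb, n)):
                    for k in range(kk, min(kk + nb, n)):
                        a = A[i][k]
                        for j in range(jj, min(jj + nb, n)):
                            C[i][j] += a * B[k][j]
    return C


def autotune(n, candidates, repeats=3):
    """Time each candidate blocking factor and return (best, timings)."""
    A = [[float(i + j) for j in range(n)] for i in range(n)]
    B = [[float(i - j) for j in range(n)] for i in range(n)]
    timings = {}
    for nb in candidates:
        best = float("inf")
        for _ in range(repeats):
            start = time.perf_counter()
            matmul_blocked(A, B, nb)
            best = min(best, time.perf_counter() - start)
        timings[nb] = best  # keep the fastest of the repeats
    return min(timings, key=timings.get), timings


best_nb, timings = autotune(32, [4, 8, 16, 32])
print("fastest blocking factor on this machine:", best_nb)
```

The winning factor depends on the hardware and the problem size, which is the point of the slide: a factor supplied by the vendor for one configuration need not be the best one elsewhere.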

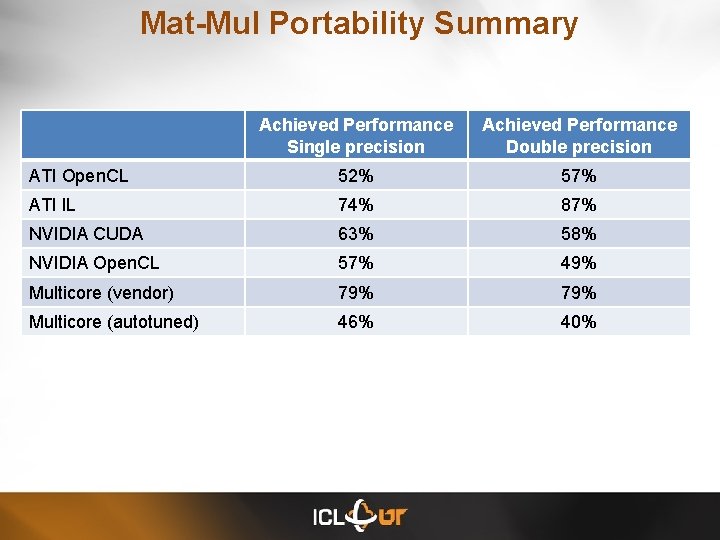

Mat-Mul Portability Summary
Achieved performance (single precision / double precision):
• ATI OpenCL: 52% / 57%
• ATI IL: 74% / 87%
• NVIDIA CUDA: 63% / 58%
• NVIDIA OpenCL: 57% / 49%
• Multicore (vendor): 79%
• Multicore (autotuned): 46% / 40%

Now, let’s think outside of the box

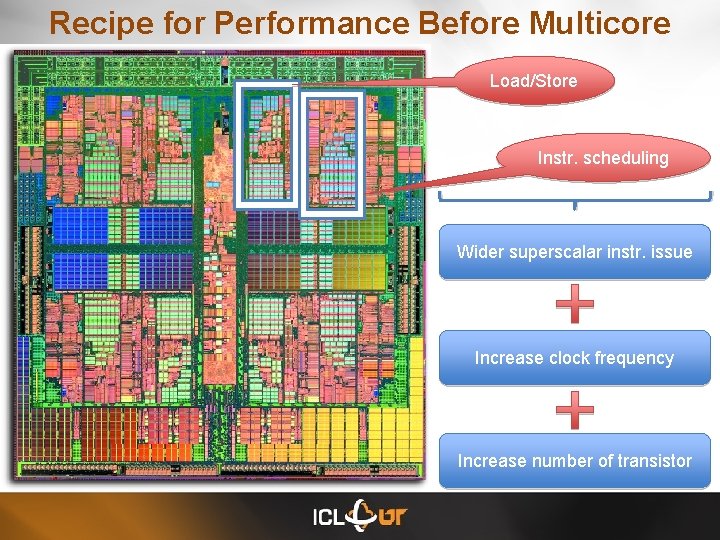

Recipe for Performance Before Multicore
• Load/Store
• Instr. scheduling
• Wider superscalar instr. issue
• Increase clock frequency
• Increase number of transistors

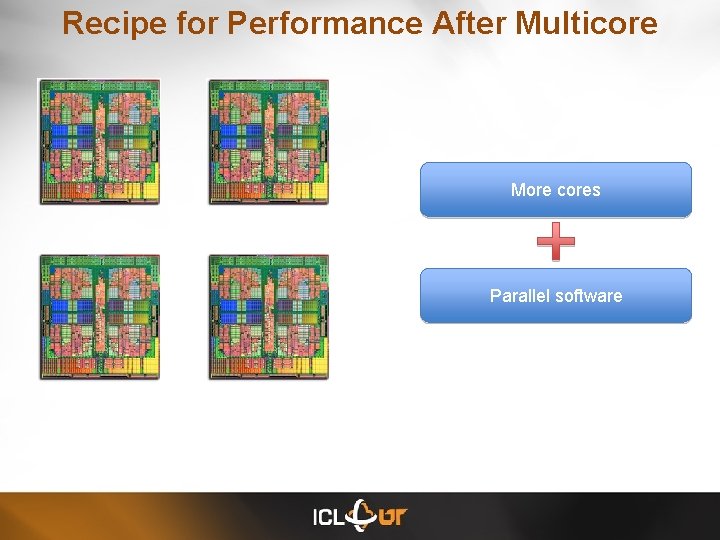

Recipe for Performance After Multicore
• More cores
• Parallel software

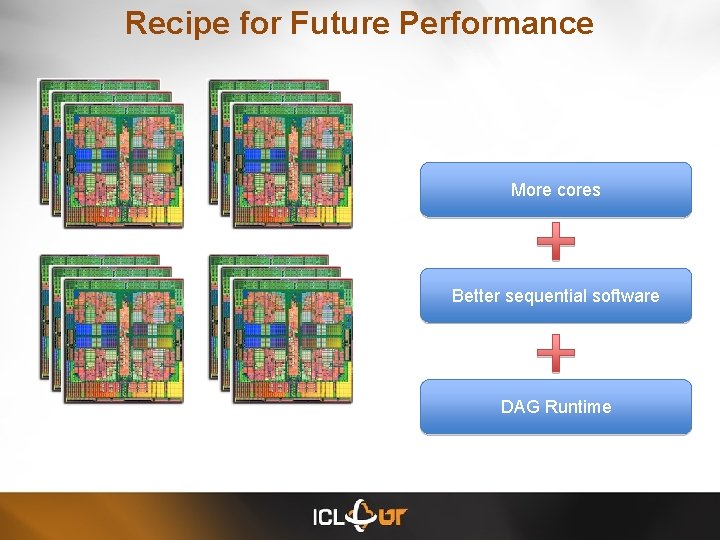

Recipe for Future Performance
• More cores
• Better sequential software
• DAG Runtime

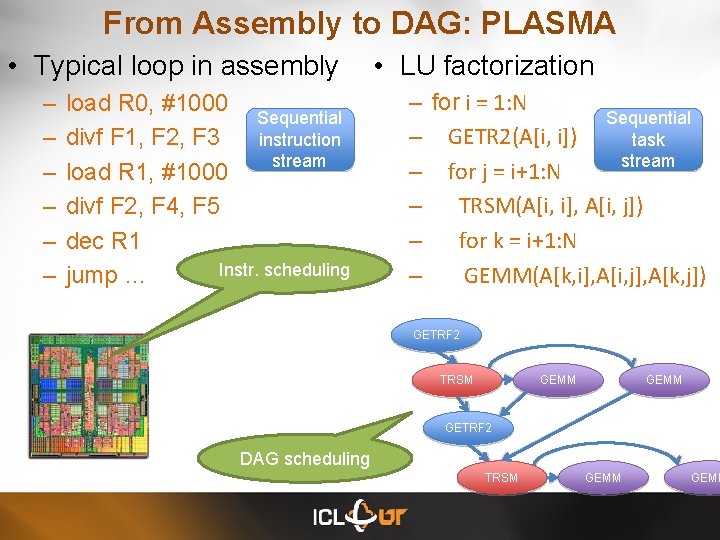

From Assembly to DAG: PLASMA

• Typical loop in assembly (sequential instruction stream; instr. scheduling)

    load R0, #1000
    divf F1, F2, F3
    load R1, #1000
    divf F2, F4, F5
    dec R1
    jump …

• LU factorization (sequential task stream; DAG scheduling)

    for i = 1:N
      GETRF2(A[i, i])
      for j = i+1:N
        TRSM(A[i, i], A[i, j])
        for k = i+1:N
          GEMM(A[k, i], A[i, j], A[k, j])

DAG nodes shown: GETRF2, TRSM, GEMM
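How a sequential task stream becomes a DAG can be sketched as follows. The code replays the slide's LU loop nest, records which tiles each task reads and writes, and adds an edge whenever a task touches a tile that an earlier task wrote (or overwrites a tile an earlier task read). The access modes are assumptions, since the slide does not state them: the last argument of each kernel is taken to be read and written, the others read only. The names `lu_task_stream`, `build_dag`, and `levels` are hypothetical, and this is not the PLASMA runtime's actual interface.

```python
def lu_task_stream(N):
    """Return the tasks of the slide's loop nest as (kernel, reads, updates).

    Tiles are (row, col) pairs, 0-based. 'updates' tiles are read and written.
    """
    tasks = []
    for i in range(N):
        tasks.append(("GETRF2", [], [(i, i)]))
        for j in range(i + 1, N):
            tasks.append(("TRSM", [(i, i)], [(i, j)]))
            for k in range(i + 1, N):
                tasks.append(("GEMM", [(k, i), (i, j)], [(k, j)]))
    return tasks


def build_dag(tasks):
    """Return deps[t] = set of earlier task ids that task t must wait for."""
    last_writer = {}  # tile -> id of the last task that wrote it
    readers = {}      # tile -> ids of tasks that read it since that write
    deps = []
    for tid, (_, reads, updates) in enumerate(tasks):
        d = set()
        for tile in reads + updates:      # read-after-write, write-after-write
            if tile in last_writer:
                d.add(last_writer[tile])
        for tile in updates:              # write-after-read
            d.update(readers.get(tile, []))
        deps.append(d)
        for tile in reads:
            readers.setdefault(tile, []).append(tid)
        for tile in updates:
            last_writer[tile] = tid
            readers[tile] = []
    return deps


def levels(deps):
    """Earliest step at which each task can run, given unlimited cores."""
    level = []
    for d in deps:
        level.append(1 + max((level[p] for p in d), default=-1))
    return level


tasks = lu_task_stream(3)
for (kernel, _, updates), lvl in zip(tasks, levels(build_dag(tasks))):
    print(lvl, kernel, updates[0])
```

Tasks that land on the same level have no dependencies between them, so a DAG scheduler is free to run them concurrently even though the loop nest issued them one after another.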

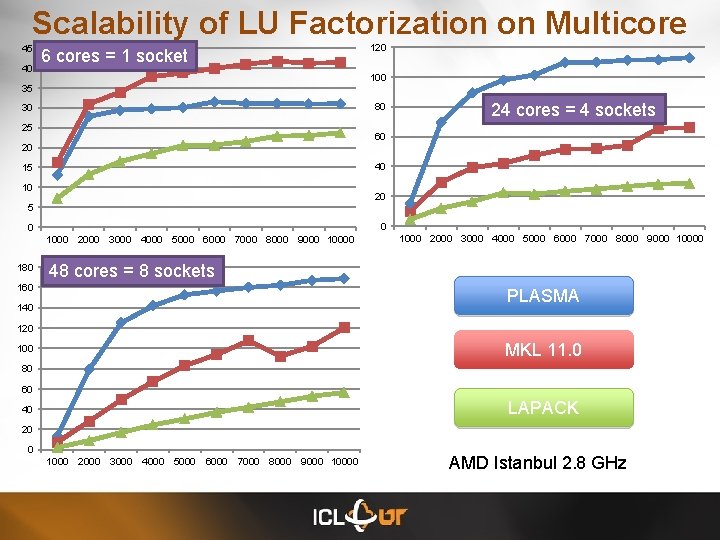

Scalability of LU Factorization on Multicore
AMD Istanbul 2.8 GHz
Panels: 6 cores = 1 socket; 24 cores = 4 sockets; 48 cores = 8 sockets
Series: PLASMA; MKL 11.0; LAPACK
Horizontal axis in each panel: 1000 to 10000

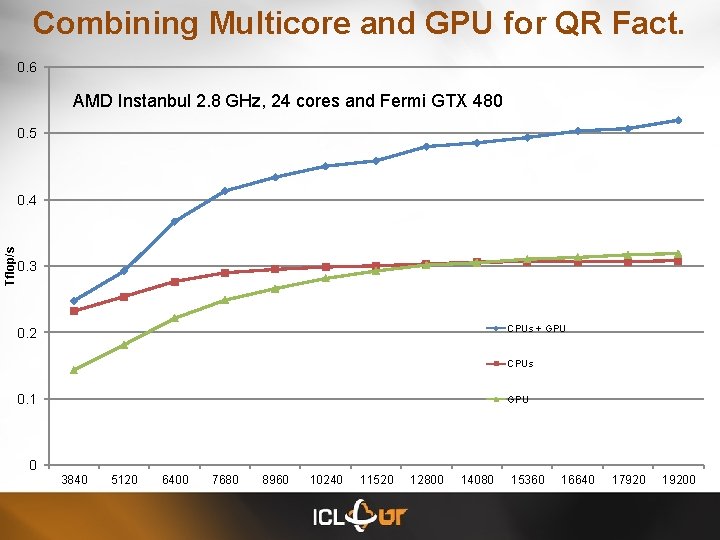

Combining Multicore and GPU for QR Fact.
AMD Istanbul 2.8 GHz, 24 cores and Fermi GTX 480
Plot: Tflop/s (0 to 0.6) versus problem size (3840 to 19200).
Series: CPUs + GPU; CPUs; GPU

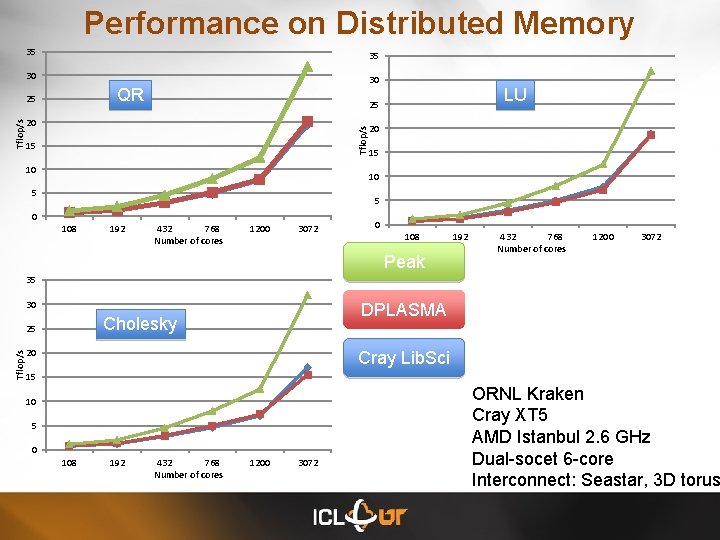

Performance on Distributed Memory
ORNL Kraken Cray XT5, AMD Istanbul 2.6 GHz, dual-socket 6-core; interconnect: SeaStar, 3D torus
Panels: QR; LU; Cholesky
Series: Peak; DPLASMA; Cray LibSci
Axes: Tflop/s (0 to 35) versus number of cores (108, 192, 432, 768, 1200, 3072)

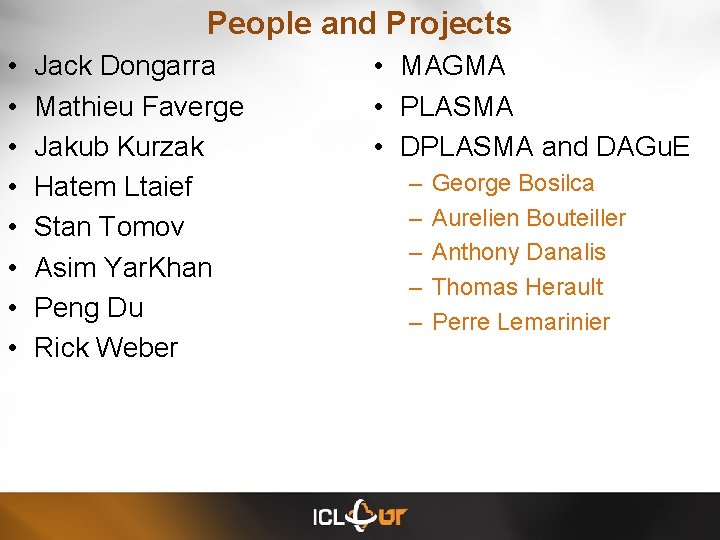

People and Projects

• Jack Dongarra
• Mathieu Faverge
• Jakub Kurzak
• Hatem Ltaief
• Stan Tomov
• Asim YarKhan
• Peng Du
• Rick Weber

• MAGMA
• PLASMA
• DPLASMA and DAGuE
  – George Bosilca
  – Aurelien Bouteiller
  – Anthony Danalis
  – Thomas Herault
  – Pierre Lemarinier
