Super-Scalable Algorithms: Ph.D. Dissertation Defense, Nathan Brunelle

July 31, 2017

- Video of this presentation: https://www.youtube.com/watch?v=GP2rmOz3ebI
- Dissertation PDF: http://www.cs.virginia.edu/~njb2b/Brunelle_phdDissertation_UVACS_2017.pdf
- Slides PDF: http://www.cs.virginia.edu/~njb2b/Brunelle_phdDefense_UVACS_July2017.pdf
- Slides PPT: http://www.cs.virginia.edu/~njb2b/Brunelle_phdDefense_UVACS_July2017.pptx

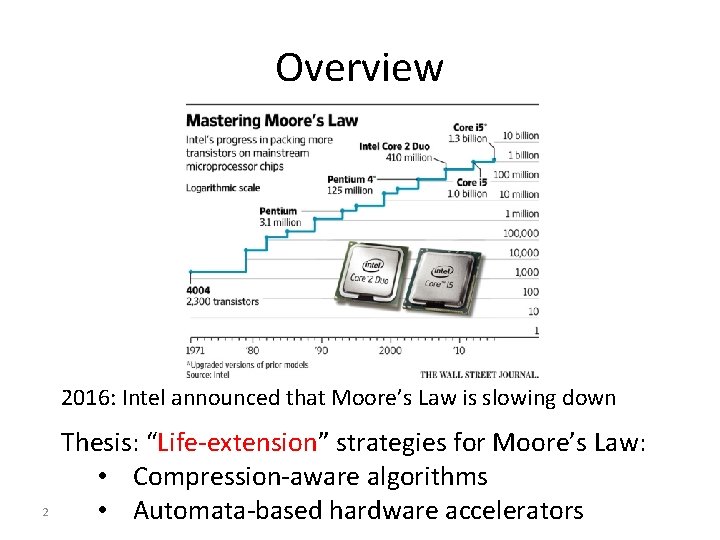

Overview

2016: Intel announced that Moore’s Law is slowing down

Thesis: “Life-extension” strategies for Moore’s Law:

- Compression-aware algorithms
- Automata-based hardware accelerators

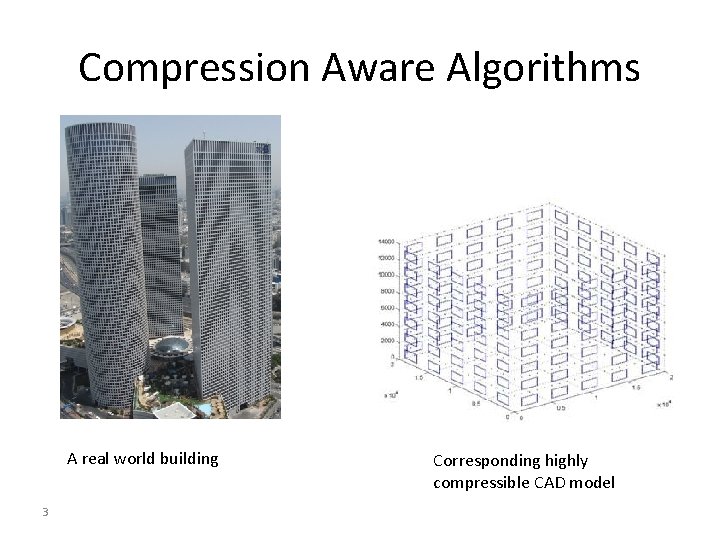

Compression-Aware Algorithms

A real-world building and its corresponding highly compressible CAD model

Compression-Aware Benefits

- A compression’s size grows more slowly than the volume of the data it represents
- Less data for the algorithm to manage

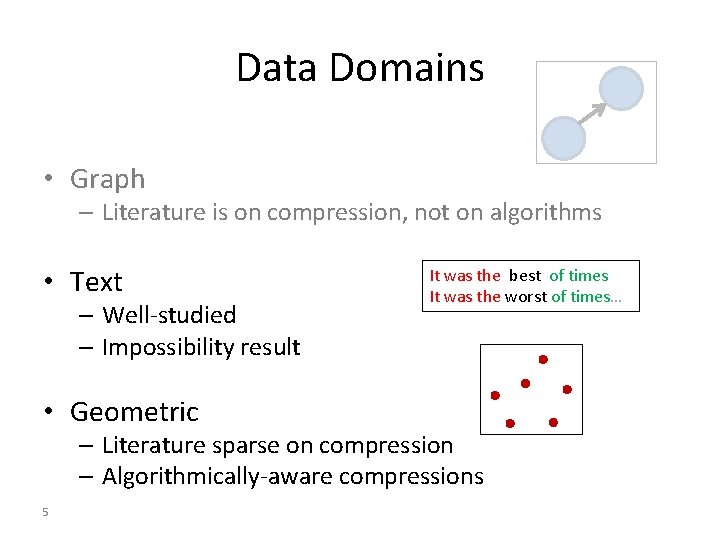

Data Domains

- Graph
  - Literature is on compression, not on algorithms
- Text (example: “It was the best of times, it was the worst of times…”)
  - Well-studied
  - Impossibility result
- Geometric
  - Literature sparse on compression
  - Algorithmically-aware compressions

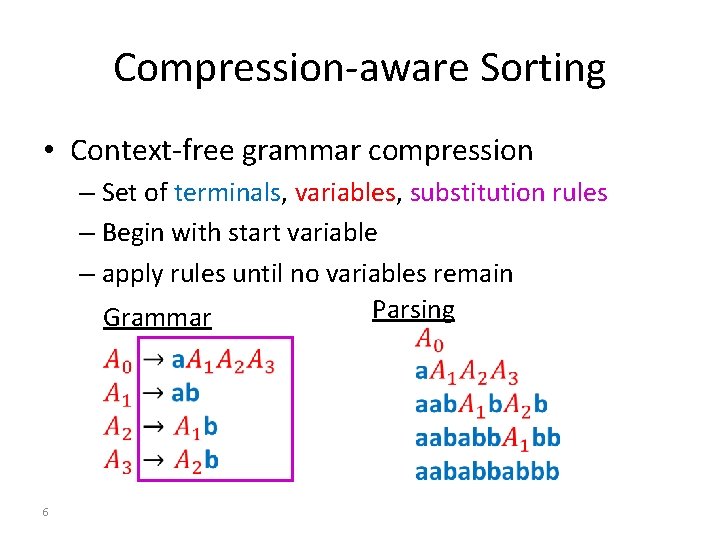

Compression-aware Sorting

- Context-free grammar compression
  - Set of terminals, variables, substitution rules
  - Begin with the start variable and apply rules until no variables remain

Figure labels: Parsing, Grammar
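The grammar-based scheme described on this slide can be illustrated with a minimal sketch. The grammar `RULES` and the helper names `expand` and `expanded_length` are hypothetical and chosen only for illustration; they are not taken from the dissertation. The sketch assumes every variable has exactly one rule, so the grammar derives a single string.

```python
# Hypothetical grammar: keys are variables, values are the right-hand
# sides of their (single) substitution rule. Any symbol that is not a
# key is treated as a terminal.
RULES = {
    "S": ["A", "A", "B"],
    "A": ["B", "B"],
    "B": ["a", "b"],
}


def expand(symbol, rules):
    """Apply substitution rules starting at `symbol` until only terminals remain."""
    if symbol not in rules:  # terminal: nothing left to substitute
        return symbol
    return "".join(expand(s, rules) for s in rules[symbol])


def expanded_length(symbol, rules, memo=None):
    """Length of the decompressed string, computed without building it."""
    if memo is None:
        memo = {}
    if symbol not in rules:
        return len(symbol)
    if symbol not in memo:
        memo[symbol] = sum(expanded_length(s, rules, memo) for s in rules[symbol])
    return memo[symbol]


if __name__ == "__main__":
    print(expand("S", RULES))           # decompress from the start variable
    print(expanded_length("S", RULES))  # same length, no decompression
```

The second function hints at why working on the grammar directly can pay off: it touches each rule once, whereas the decompressed string can be much longer than the grammar.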

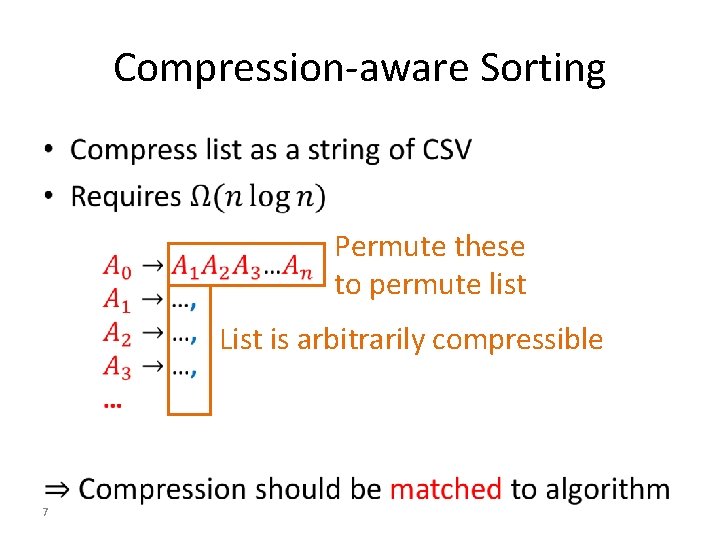

Compression-aware Sorting

- Permute these to permute list
- List is arbitrarily compressible

Algorithmically-Aware Compressions

- Dual of compression-aware algorithms
- Compressions designed for faster algorithms
- Focus on geometric data

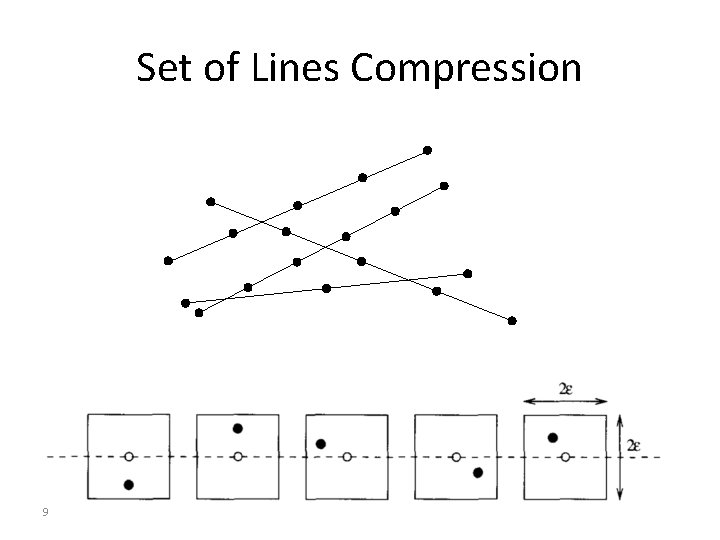

Set of Lines Compression

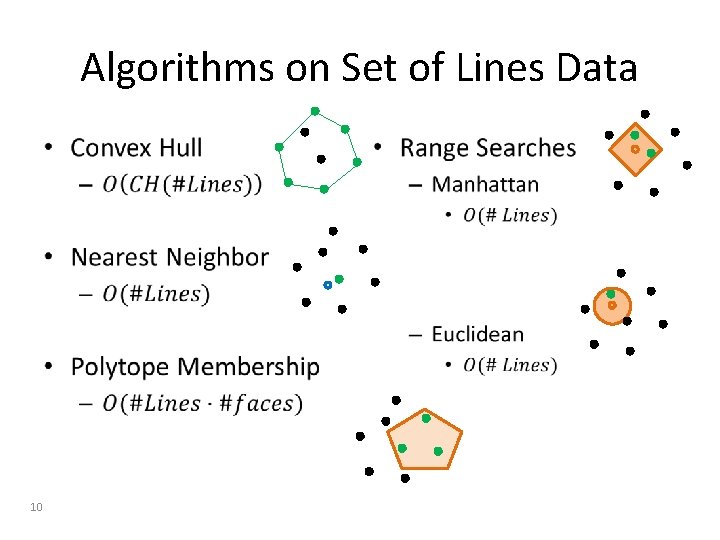

Algorithms on Set of Lines Data

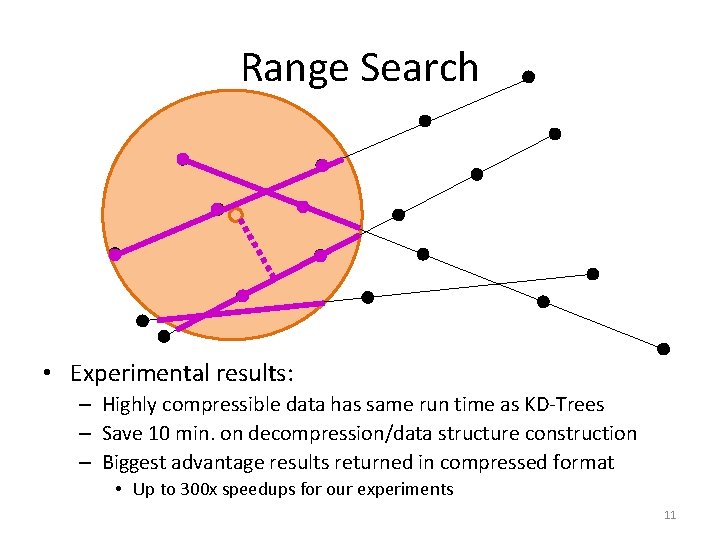

Range Search

- Experimental results:
  - Highly compressible data has the same run time as KD-Trees
  - Save 10 min. on decompression/data structure construction
  - Biggest advantage: results returned in compressed format
- Up to 300x speedups for our experiments

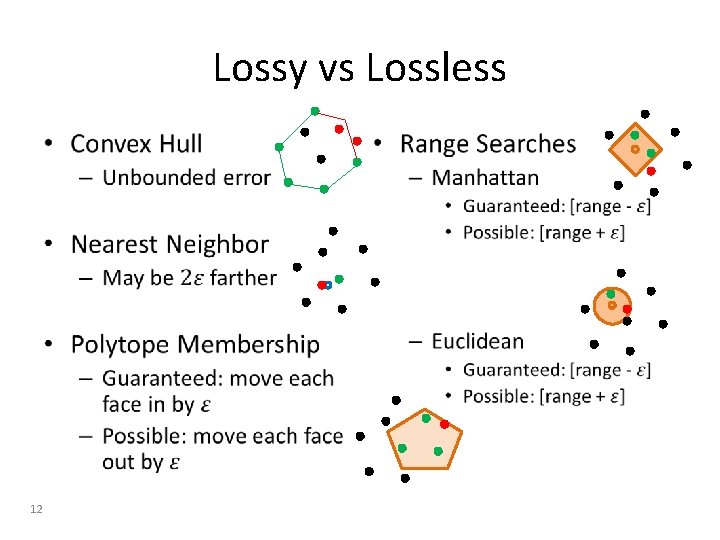

Lossy vs Lossless

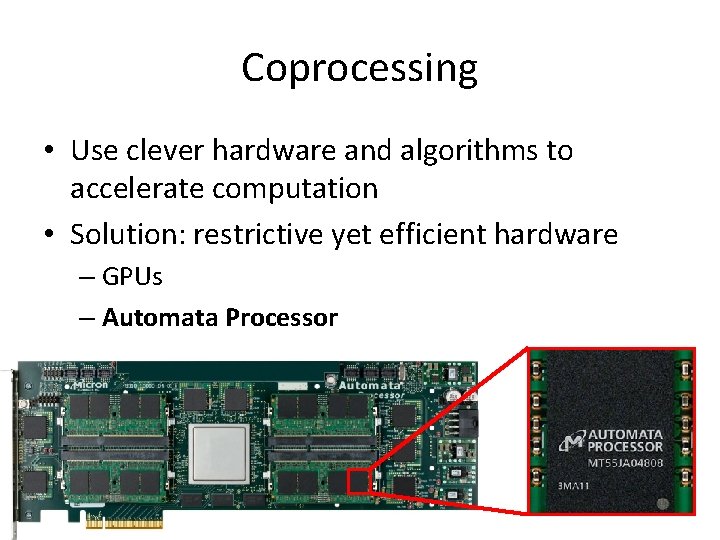

Coprocessing

- Use clever hardware and algorithms to accelerate computation
- Solution: restrictive yet efficient hardware
  - GPUs
  - Automata Processor

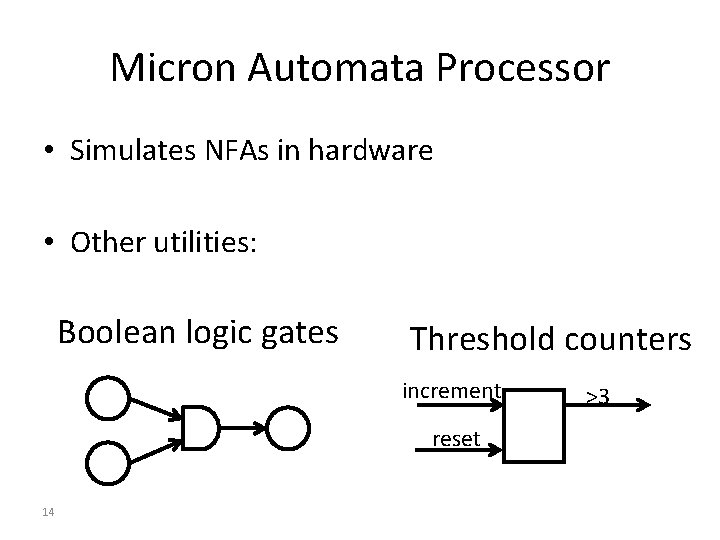

Micron Automata Processor

- Simulates NFAs in hardware
- Other utilities:
  - Boolean logic gates
  - Threshold counters (figure labels: increment, reset, >3)
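As a software analogy for what "simulates NFAs" means, the sketch below tracks the set of active states and updates all of them for each input symbol. This is only an illustration in Python, not the Automata Processor's programming interface; the function name `nfa_accepts` and the example automaton (strings over a, b that end in "ab") are assumptions made for this sketch.

```python
def nfa_accepts(transitions, start_states, accept_states, text):
    """Simulate an NFA by tracking every active state at once.

    transitions maps (state, symbol) -> set of next states.
    """
    active = set(start_states)
    for symbol in text:
        # Every active state follows all of its matching transitions.
        active = {
            nxt
            for state in active
            for nxt in transitions.get((state, symbol), ())
        }
    return bool(active & set(accept_states))


# Hypothetical example NFA: accepts strings over {a, b} that end in "ab".
ENDS_IN_AB = {
    (0, "a"): {0, 1},
    (0, "b"): {0},
    (1, "b"): {2},
}

if __name__ == "__main__":
    for word in ["aab", "aba", "ab", ""]:
        print(repr(word), nfa_accepts(ENDS_IN_AB, {0}, {2}, word))
```

In software the inner loop costs time proportional to the number of active states per symbol; the appeal of dedicated hardware is that this update can happen for all states simultaneously.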

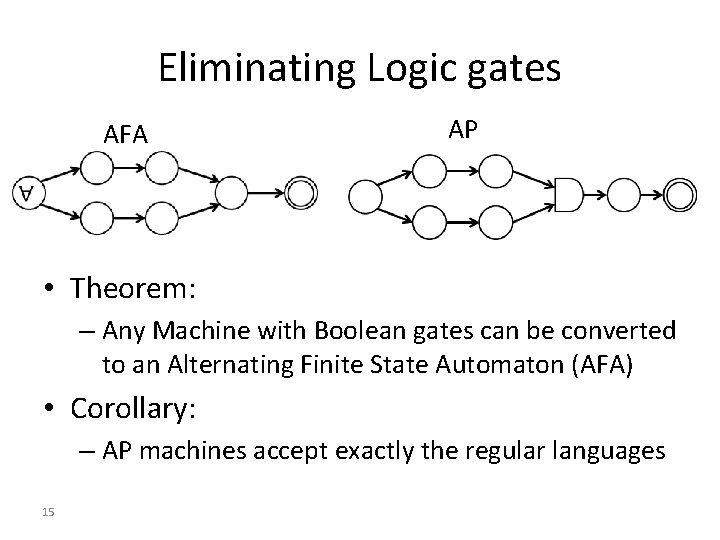

Eliminating Logic Gates

- Theorem:
  - Any machine with Boolean gates can be converted to an Alternating Finite State Automaton (AFA)
- Corollary:
  - AP machines accept exactly the regular languages (AFAs recognize exactly the regular languages)

Figure labels: AFA, AP

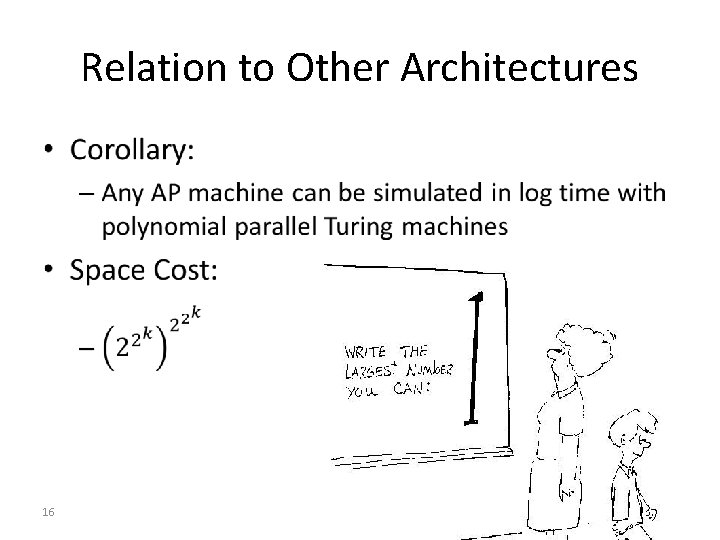

Relation to Other Architectures

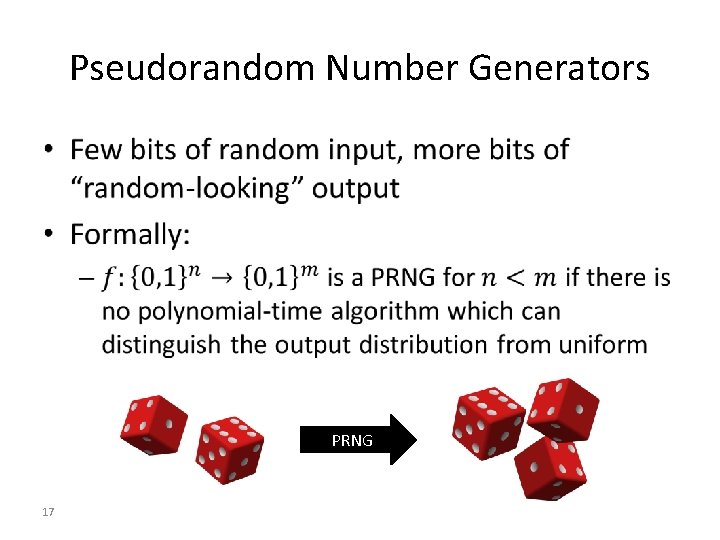

Pseudorandom Number Generators

- PRNG

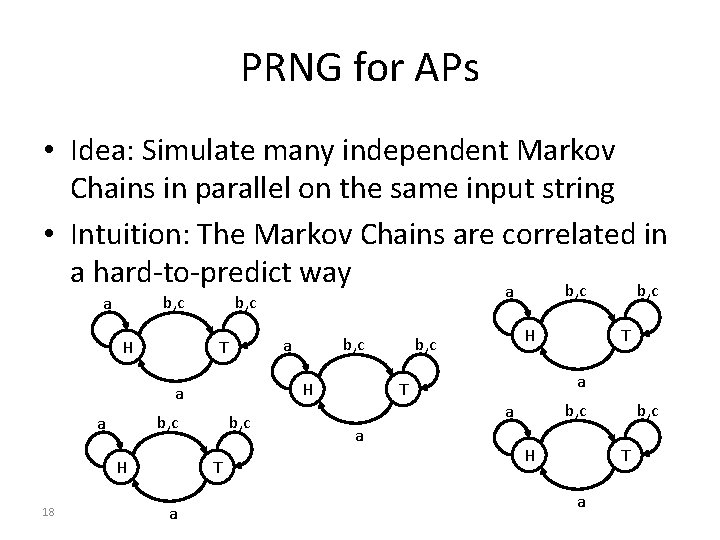

PRNG for APs

- Idea: Simulate many independent Markov Chains in parallel on the same input string
- Intuition: The Markov Chains are correlated in a hard-to-predict way

Figure: Markov chains with states H and T and transitions labeled "a" and "b, c".

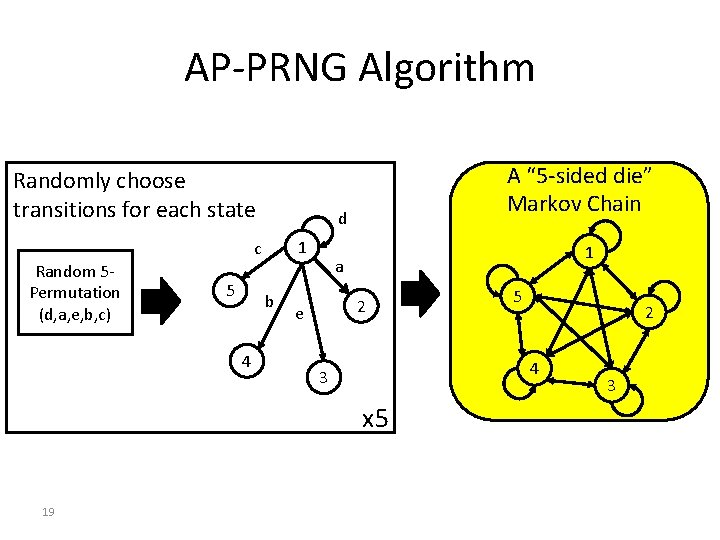

AP-PRNG Algorithm

Randomly choose transitions for each state

Random permutation: (d, a, e, b, c)

A “5-sided die” Markov Chain

Figure: a Markov chain with states 1 to 5 and transitions labeled a to e, annotated "x5".

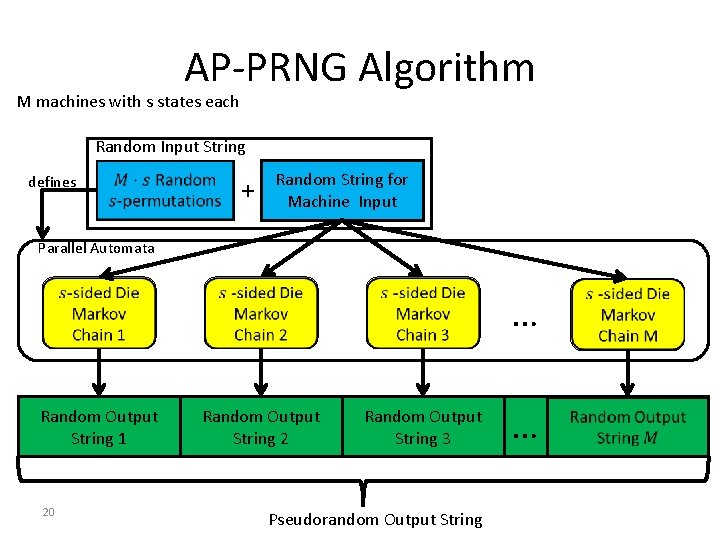

AP-PRNG Algorithm

M machines with s states each

Figure (data flow): Random Input String (defines the machines) + Random String for Machine Input → Parallel Automata → Random Output String 1, Random Output String 2, Random Output String 3, … → Pseudorandom Output String
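The construction on the AP-PRNG slides can be sketched roughly as follows. This is a hedged illustration under several assumptions, not the published algorithm: the alphabet has exactly s symbols, each state's outgoing transitions are assigned by a random permutation of that alphabet, each machine emits its current state index after every input symbol, and the per-machine outputs are merged by simple interleaving. The names `build_machine`, `run_machines`, and `interleave` are hypothetical, and Python's seeded `random.Random` stands in for the random strings in the figure.

```python
import random


def build_machine(num_states, alphabet, rng):
    """One "s-sided die" chain: each state gets a random permutation of the alphabet."""
    assert len(alphabet) == num_states, "sketch assumes one symbol per target state"
    machine = []
    for _ in range(num_states):
        perm = list(alphabet)
        rng.shuffle(perm)
        # perm[j] is the symbol that moves this state to state j.
        machine.append({symbol: nxt for nxt, symbol in enumerate(perm)})
    return machine


def run_machines(machines, input_symbols):
    """Drive all machines with the same input; record each machine's state sequence."""
    states = [0] * len(machines)
    outputs = [[] for _ in machines]
    for symbol in input_symbols:
        for i, machine in enumerate(machines):
            states[i] = machine[states[i]][symbol]
            outputs[i].append(states[i])  # assumption: the output is the state index
    return outputs


def interleave(outputs):
    """Assumed merge step: take one output from each machine per input symbol."""
    return [state for step in zip(*outputs) for state in step]


if __name__ == "__main__":
    rng = random.Random(2017)  # stand-in for a real random source
    alphabet = "abcde"
    machines = [build_machine(5, alphabet, rng) for _ in range(3)]
    input_string = [rng.choice(alphabet) for _ in range(10)]
    print(interleave(run_machines(machines, input_string)))
```

With a uniformly random input symbol, each state moves to a uniformly random next state, which is the "die" behavior; sharing one input across M machines yields M output symbols per input symbol.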

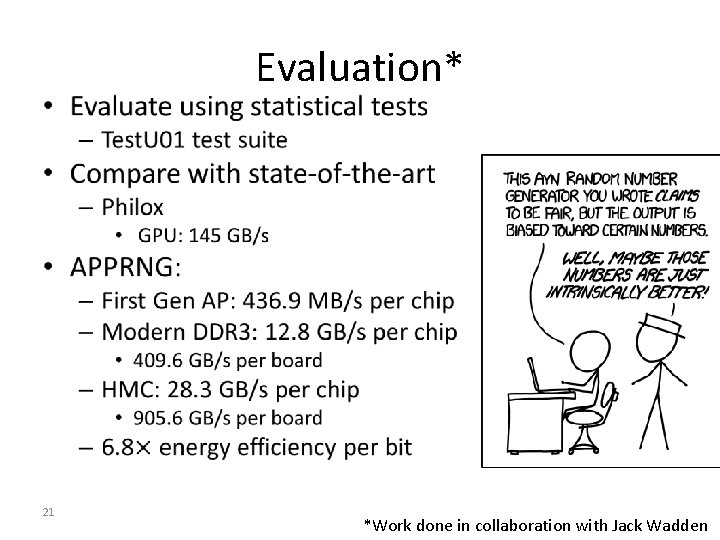

Evaluation*

*Work done in collaboration with Jack Wadden

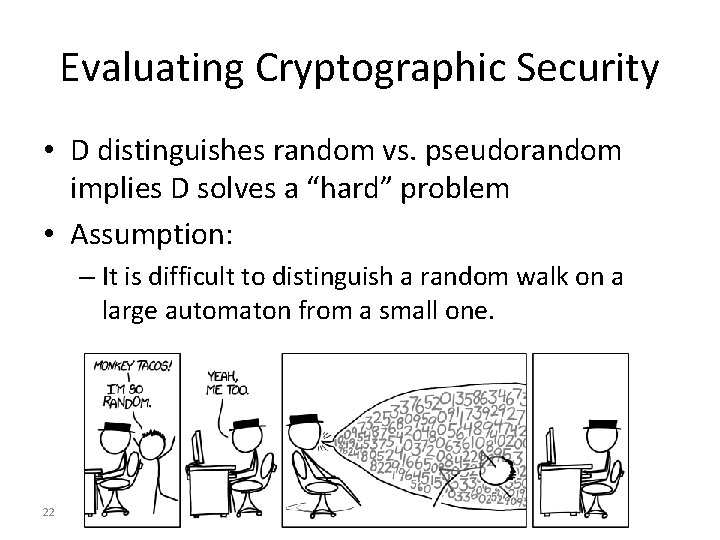

Evaluating Cryptographic Security

- If a distinguisher D can tell random from pseudorandom, then D solves a “hard” problem
- Assumption:
  - It is difficult to distinguish a random walk on a large automaton from a random walk on a small one

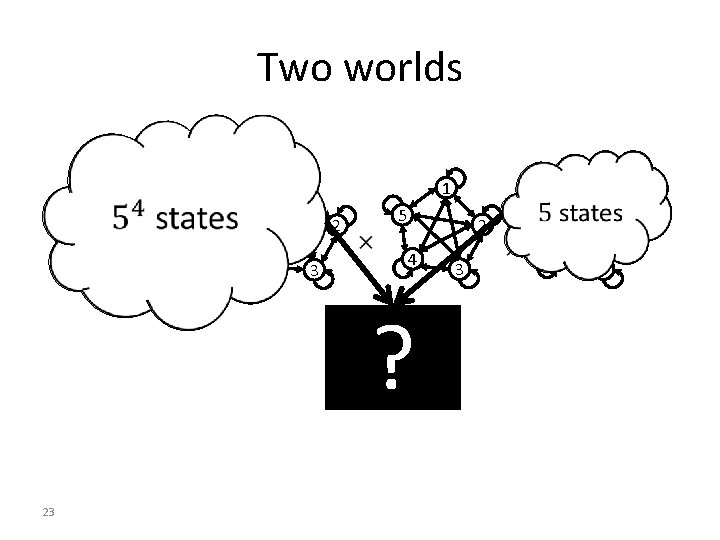

Two worlds

Figure: automata with states numbered 1 to 5, one marked "?".

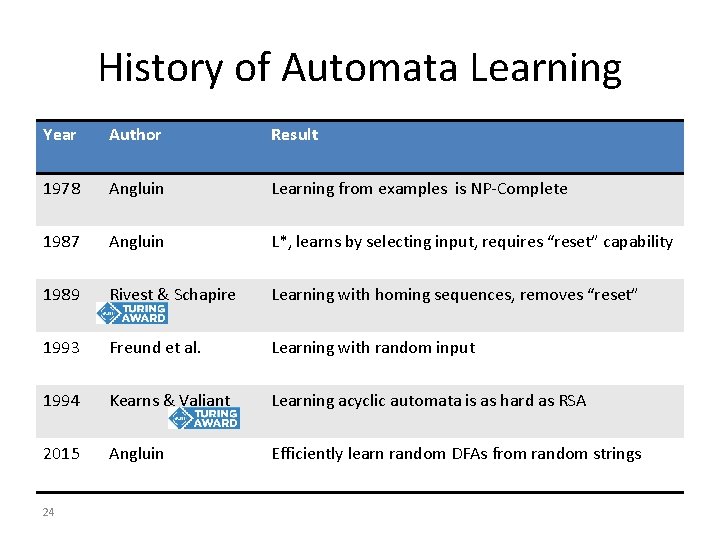

History of Automata Learning

| Year | Author | Result |
|------|--------|--------|
| 1978 | Angluin | Learning from examples is NP-Complete |
| 1987 | Angluin | L*, learns by selecting input, requires “reset” capability |
| 1989 | Rivest & Schapire | Learning with homing sequences, removes “reset” |
| 1993 | Freund et al. | Learning with random input |
| 1994 | Kearns & Valiant | Learning acyclic automata is as hard as RSA |
| 2015 | Angluin | Efficiently learn random DFAs from random strings |

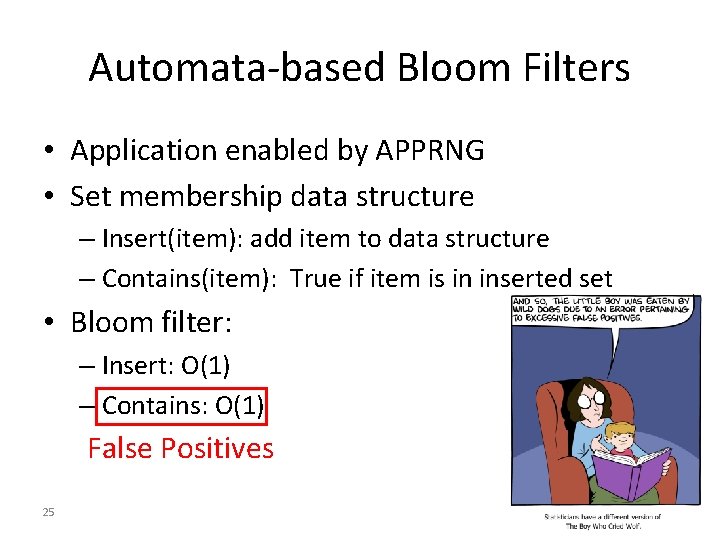

Automata-based Bloom Filters

- Application enabled by AP-PRNG
- Set membership data structure
  - Insert(item): add item to data structure
  - Contains(item): True if item is in inserted set
- Bloom filter:
  - Insert: O(1)
  - Contains: O(1), may return false positives
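For reference, a classical (non-automata) Bloom filter with the Insert and Contains operations listed above might look like the sketch below. The class name, the bit-array size, the number of hash functions, and the use of salted SHA-256 digests as hash functions are all assumptions made for illustration; the automata-based variant from the talk is not reproduced here.

```python
import hashlib


class BloomFilter:
    """Classical Bloom filter: no false negatives, possible false positives."""

    def __init__(self, num_bits=64, num_hashes=3):
        self.num_bits = num_bits
        self.num_hashes = num_hashes
        self.bits = [0] * num_bits

    def _positions(self, item):
        # Derive num_hashes bit positions by salting SHA-256 with an index.
        for i in range(self.num_hashes):
            digest = hashlib.sha256(f"{i}:{item}".encode("utf-8")).digest()
            yield int.from_bytes(digest[:8], "big") % self.num_bits

    def insert(self, item):
        for pos in self._positions(item):
            self.bits[pos] = 1

    def contains(self, item):
        # True only if every position is set; unrelated inserts may have set them all.
        return all(self.bits[pos] for pos in self._positions(item))


if __name__ == "__main__":
    friends = BloomFilter()
    for name in ["Ke", "Jim", "Gabe", "Kevin", "Mircea"]:
        friends.insert(name)
    print(friends.contains("Jim"))     # always True for inserted items
    print(friends.contains("Batman"))  # usually False, but may be a false positive
```

Both operations touch a fixed number of bits regardless of how many items were inserted, which is where the O(1) bounds come from.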

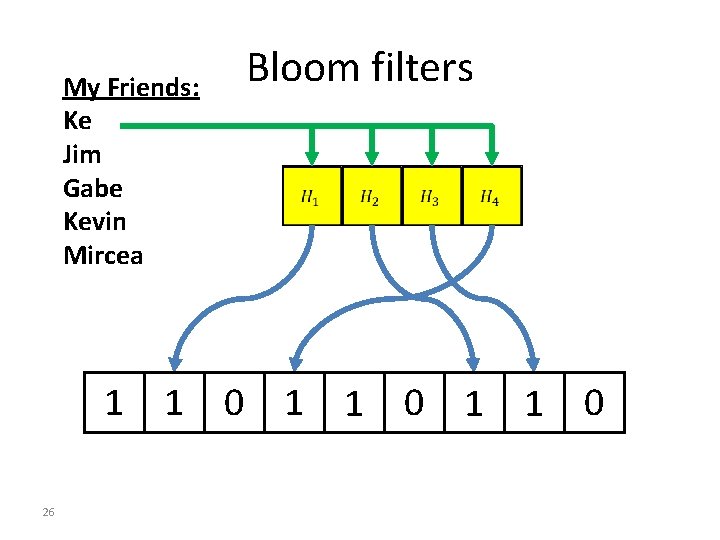

Bloom filters

My Friends: Ke, Jim, Gabe, Kevin, Mircea

Bit array: 0 1 1 0 0 1 0 1 0 0

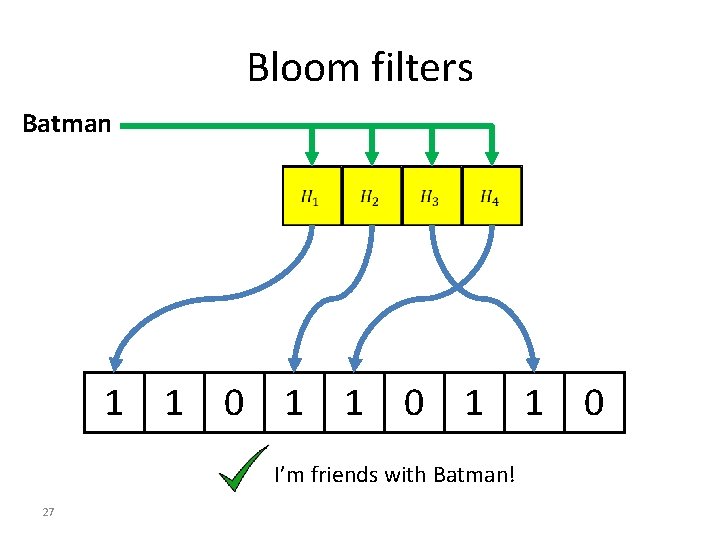

Bloom filters

Batman: 1 1 0

I’m friends with Batman!

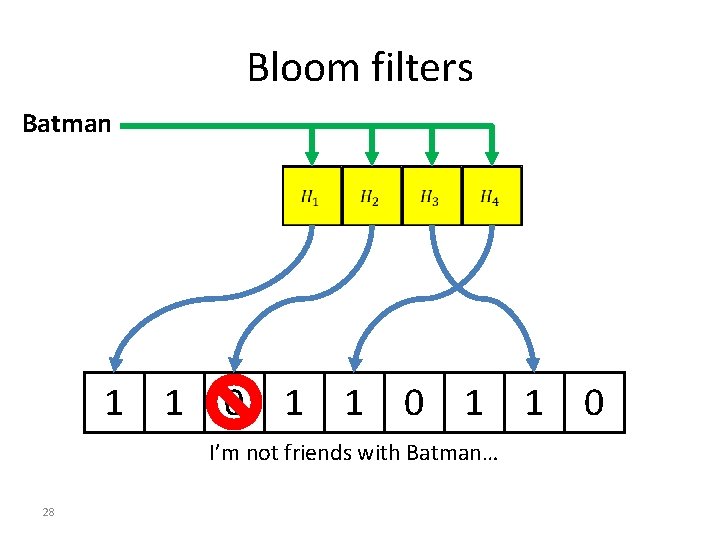

Bloom filters

Batman: 1 1 0

I’m not friends with Batman…

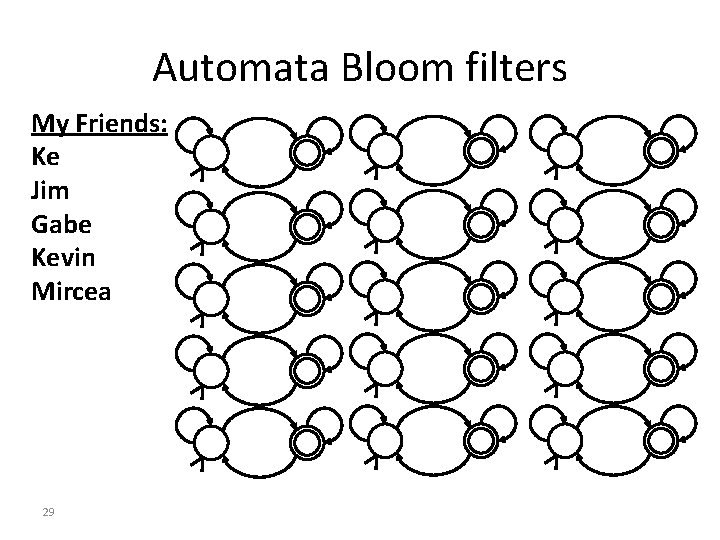

Automata Bloom filters

My Friends: Ke, Jim, Gabe, Kevin, Mircea

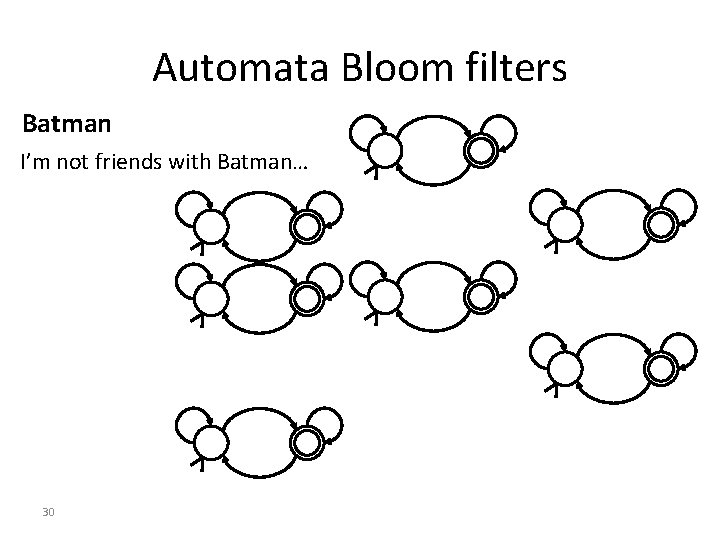

Automata Bloom filters

Batman

I’m not friends with Batman…

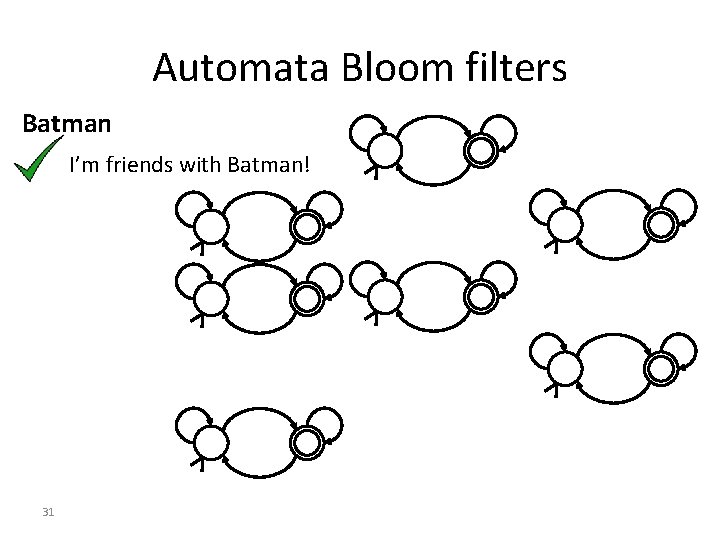

Automata Bloom filters

Batman

I’m friends with Batman!

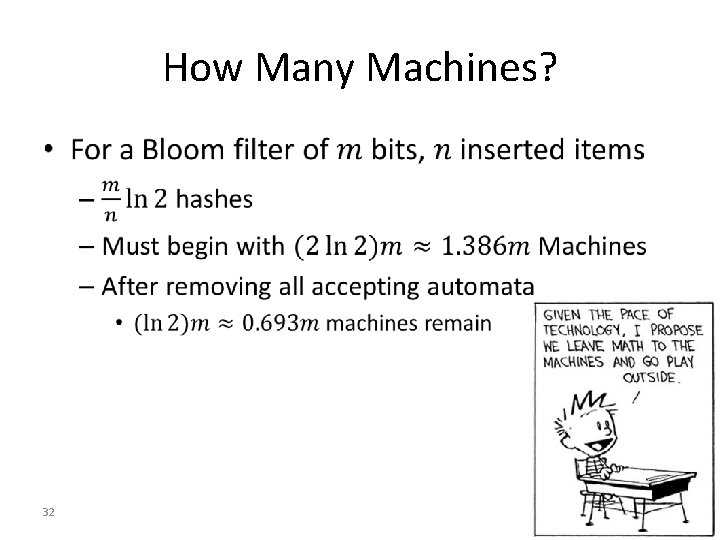

How Many Machines?

Conclusions

- Life-extension techniques for Moore’s Law:
  - Software: Compression-aware algorithms
    - Geometric and text data
    - Lossy and lossless compressions
    - Compression scheme & algorithm codesign
  - Hardware: Automata Processors
    - Efficiently compute regular languages
    - Pseudorandom number generator
    - Bloom filters
    - Longevity?

Future Directions

- Compression-aware data structures
  - Revisit classical data structures w.r.t. compression
  - E.g. compression-aware KD-Trees
- Combine AP with compression-aware algorithms
  - Algorithms for schemes with AP-based decompression
  - Compressed pattern matching
  - E.g. pattern matching on Huffman-coded data
- Explore lossiness in compressions
  - Leverage imprecise hardware
  - Markov Chains vs. imprecision vs. lossiness

Questions?

- J. Hott, N. Brunelle, J. Myers, J. Rassen and a. shelat. KD-Tree Algorithm for Propensity Score Matching With Three or More Treatment Groups. Technical Report Series. Division of Pharmacoepidemiology And Pharmacoeconomics, Department of Medicine, Brigham and Women’s Hospital and Harvard Medical School, 2012.
- N. Brunelle, G. Robins, a. shelat. Compression-Aware Algorithms for Massive Datasets. Data Compression Conference (DCC), 2015.
- N. Brunelle, G. Robins, a. shelat. Algorithms for Compressed Inputs. DCC, 2013.
- T. Tracy, M. Stan, N. Brunelle, J. Wadden, K. Wang, K. Skadron, G. Robins. Nondeterministic Finite Automata in Hardware - the Case of the Levenshtein Automaton. Workshop on Architectures and Systems for Big Data (ASBD), in conjunction with ISCA, 2015.
- J. Wadden, N. Brunelle, K. Wang, M. El-Hadedy, G. Robins, M. Stan, K. Skadron. Generating efficient and high-quality pseudo-random behavior on automata processors. ICCD, 2016.
- J. Wadden, V. Dang, N. Brunelle, T. Tracy II, D. Guo, E. Sadredini, K. Wang, C. Bo, G. Robins, M. Stan, K. Skadron. ANMLZoo: A benchmark suite for exploring bottlenecks in automata processing engines and architectures. IISWC, 2016.
- N. Brunelle, G. Robins, a. shelat. Algorithms for compressed inputs. In preparation for Journal of Discrete Algorithms.
- N. Brunelle, J. Wadden, T. Tracy, M. Wallace, G. Robins, K. Skadron. Pseudorandom Number Generation using Parallel Automata. In preparation for Journal of Experimental Algorithmics.
- J. Wadden, N. Brunelle. System, Method, and Computer-Readable Medium for High Throughput Pseudorandom Number Generation. Patent Application no. 15/091925, Filed April 2015.

- Slides: 35