Sum of squares optimization scalability improvements and applications

Sum of squares optimization: scalability improvements and applications to difference of convex programming. Georgina Hall (Princeton, ORFE). Joint work with Amir Ali Ahmadi (Princeton, ORFE). 1

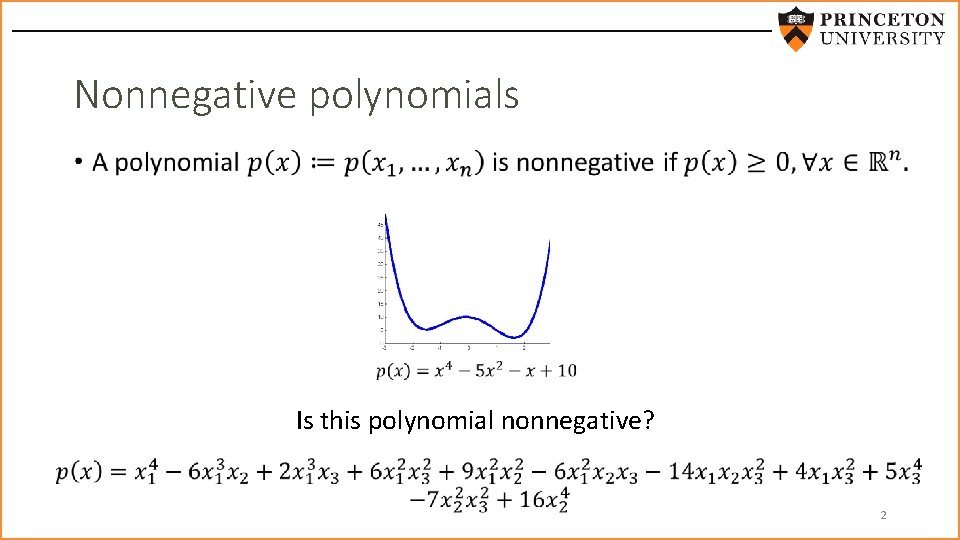

Nonnegative polynomials • Is this polynomial nonnegative? 2

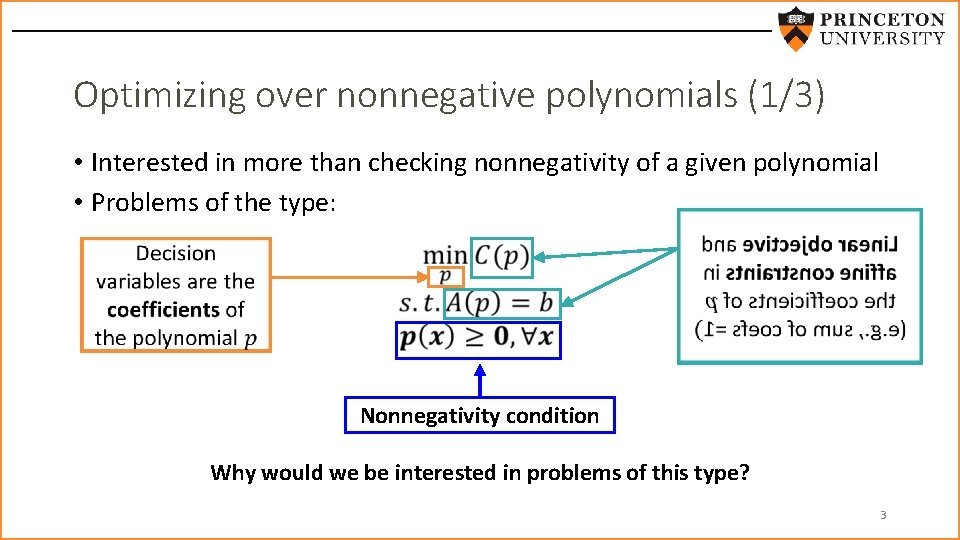

Optimizing over nonnegative polynomials (1/3) • Interested in more than checking nonnegativity of a given polynomial • Problems of the type: Nonnegativity condition Why would we be interested in problems of this type? 3

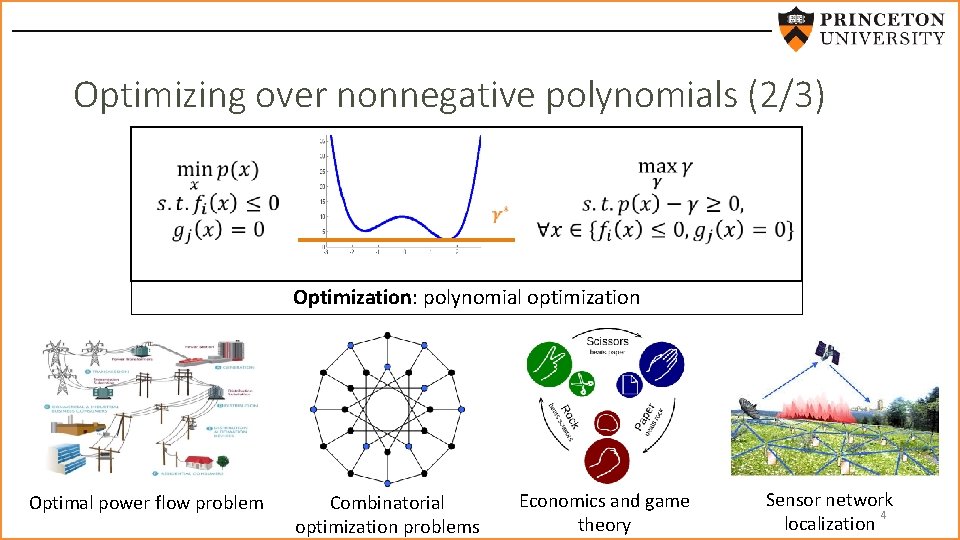

Optimizing over nonnegative polynomials (2/3) Optimization: • Polynomial optimization • Optimal power flow problem • Combinatorial optimization problems • Economics and game theory • Sensor network localization 4

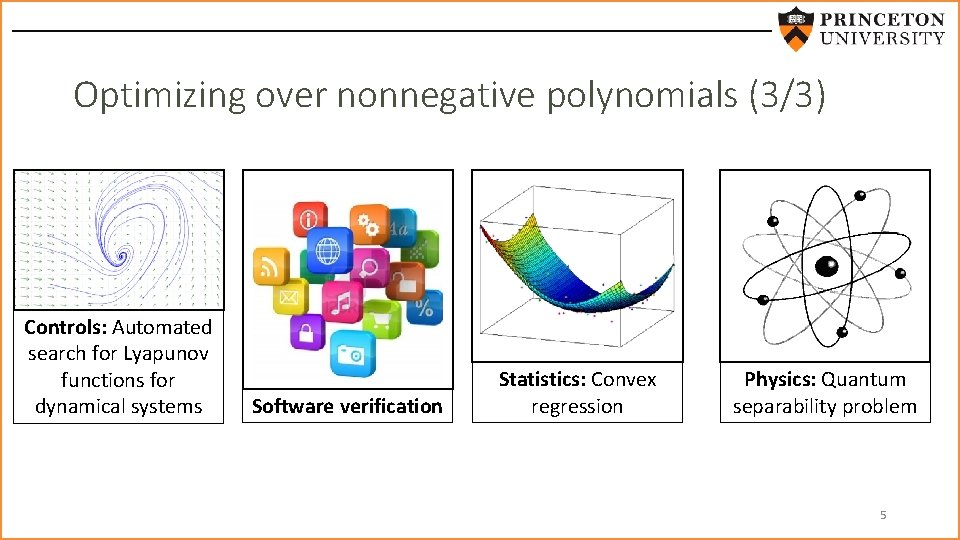

Optimizing over nonnegative polynomials (3/3) • Controls: automated search for Lyapunov functions for dynamical systems, software verification • Statistics: convex regression • Physics: quantum separability problem 5

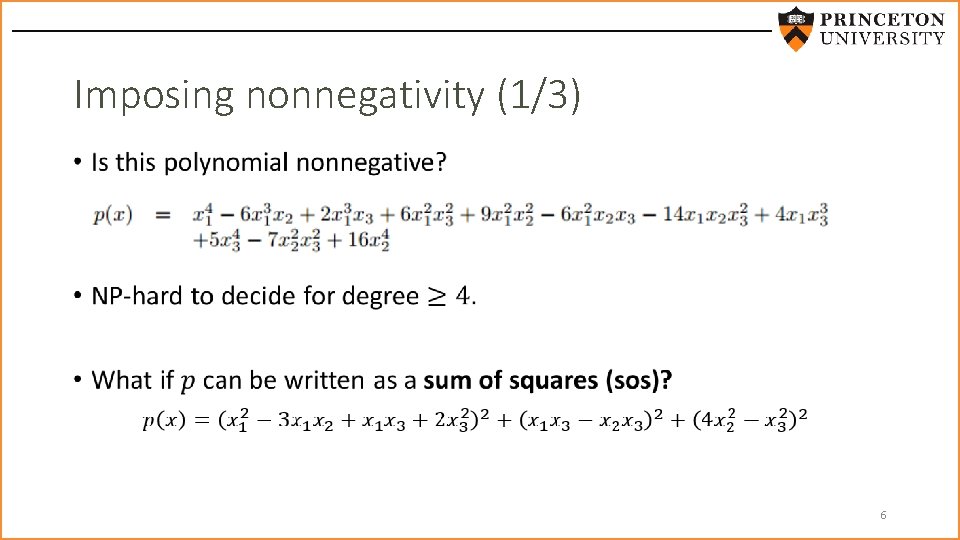

Imposing nonnegativity (1/3) • 6

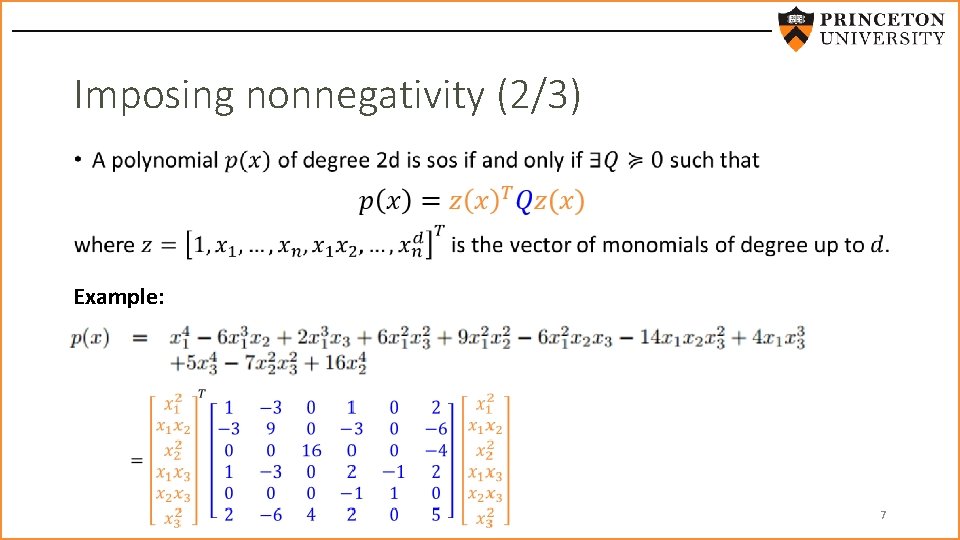

Imposing nonnegativity (2/3) • Example: 7

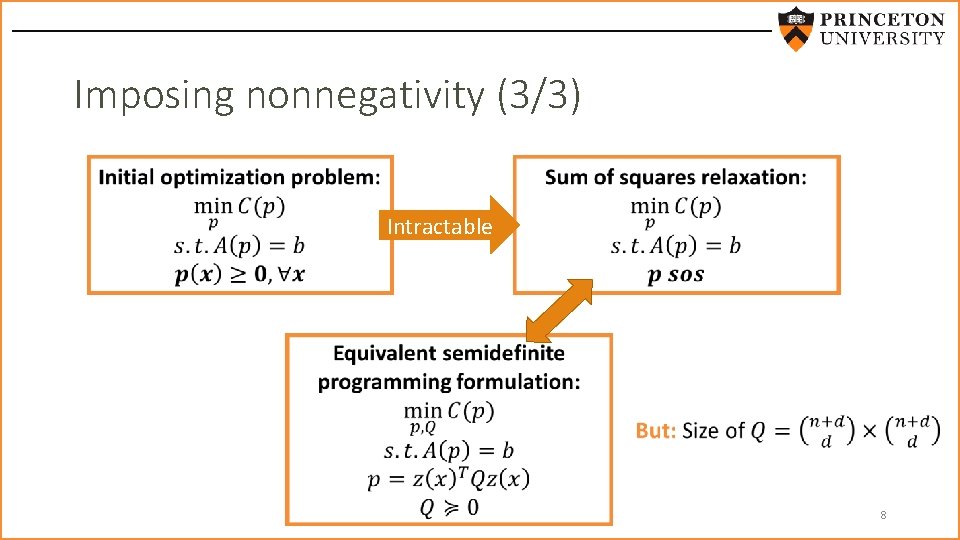
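The formulas on these slides did not survive extraction. As a minimal sketch of the standard Gram-matrix characterization (a polynomial p is sos if and only if p = zᵀQz for some positive semidefinite Q and monomial vector z), the following checks a well-known textbook instance numerically. The polynomial, the matrix `Q`, and the helper names are illustrative assumptions, not taken from the talk.

```python
import numpy as np

# Illustrative example (not from the slides):
# p(x, y) = 2x^4 + 2x^3*y - x^2*y^2 + 5y^4 with monomial vector z = [x^2, y^2, x*y].
Q = np.array([[ 2.0, -3.0, 1.0],
              [-3.0,  5.0, 0.0],
              [ 1.0,  0.0, 5.0]])

def p(x, y):
    """The polynomial written out in the monomial basis."""
    return 2 * x**4 + 2 * x**3 * y - x**2 * y**2 + 5 * y**4

def gram_form(x, y):
    """Evaluate z^T Q z for z = [x^2, y^2, x*y]."""
    z = np.array([x**2, y**2, x * y])
    return float(z @ Q @ z)

def is_psd(M, tol=1e-9):
    """Numerical positive semidefiniteness check via the smallest eigenvalue."""
    return bool(np.min(np.linalg.eigvalsh(M)) >= -tol)
```

If `is_psd(Q)` holds and `gram_form` agrees with `p` everywhere, then Q certifies that p is a sum of squares. Searching for such a Q is a semidefinite program, which is where the SDP cost of sos comes from.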

Imposing nonnegativity (3/3) Intractable 8

This talk • Recent efforts to make sos more scalable by avoiding SDP • Using sum of squares to optimize over convex functions 9

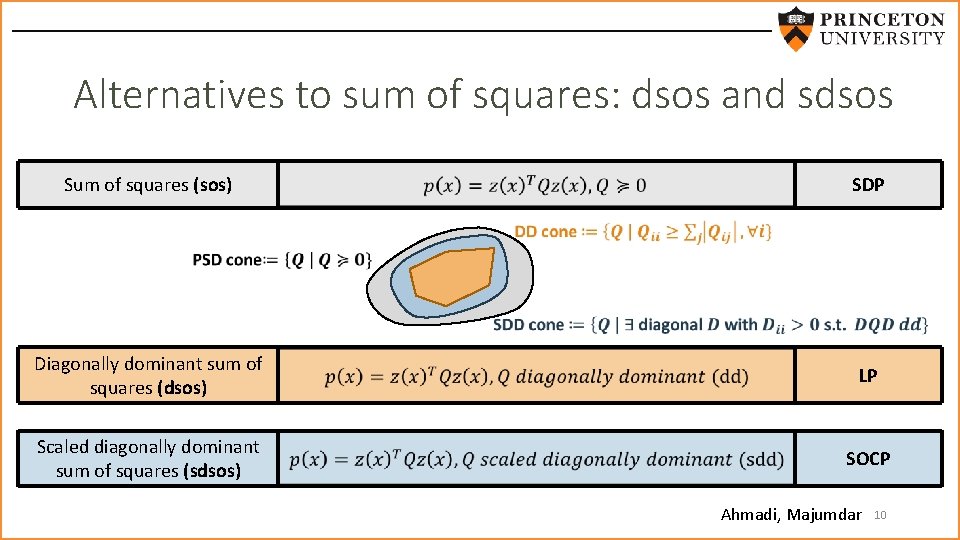

Alternatives to sum of squares: dsos and sdsos • Sum of squares (sos): SDP • Diagonally dominant sum of squares (dsos): LP • Scaled diagonally dominant sum of squares (sdsos): SOCP [Ahmadi, Majumdar] 10

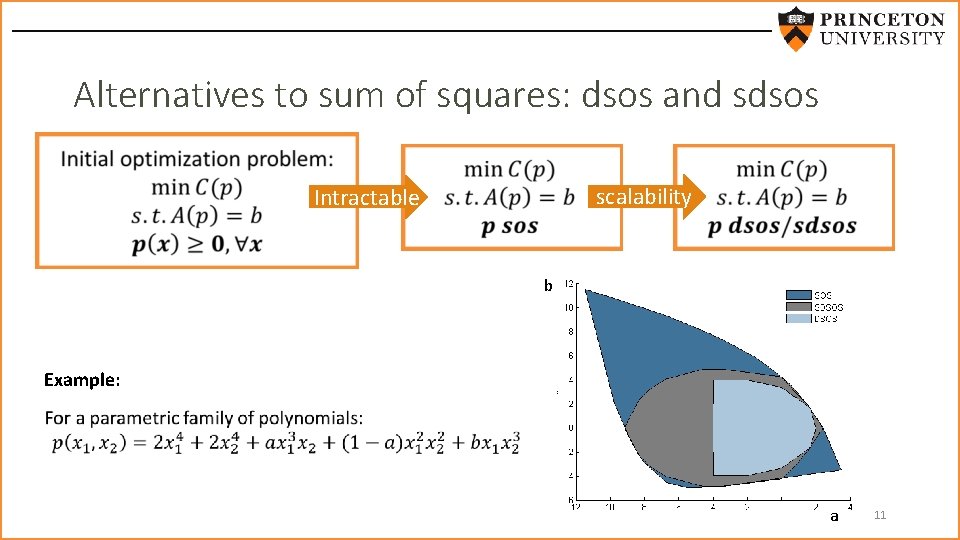
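To make the dsos idea concrete, here is a small sketch of the diagonal dominance test that replaces the psd constraint on the Gram matrix. Diagonal dominance is a set of linear inequalities (hence LP) and implies positive semidefiniteness by Gershgorin's circle theorem. The function name and the matrices `A` and `B` are assumptions chosen for illustration.

```python
import numpy as np

def is_dd(M):
    """True if symmetric M is diagonally dominant: M_ii >= sum_{j != i} |M_ij| for all i."""
    M = np.asarray(M, dtype=float)
    off_diag_sums = np.sum(np.abs(M), axis=1) - np.abs(np.diag(M))
    return bool(np.all(np.diag(M) >= off_diag_sums))

# A is diagonally dominant, hence psd.
A = np.array([[ 3.0, -1.0, 1.0],
              [-1.0,  2.0, 1.0],
              [ 1.0,  1.0, 4.0]])

# B is psd (positive trace and determinant) but not diagonally dominant,
# so a dsos search would miss Gram matrices like this one.
B = np.array([[1.0, 2.0],
              [2.0, 5.0]])
```

The gap between `A`-type and `B`-type matrices is the price paid for replacing an SDP with an LP.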

Alternatives to sum of squares: dsos and sdsos scalability Intractable b Example: a 11

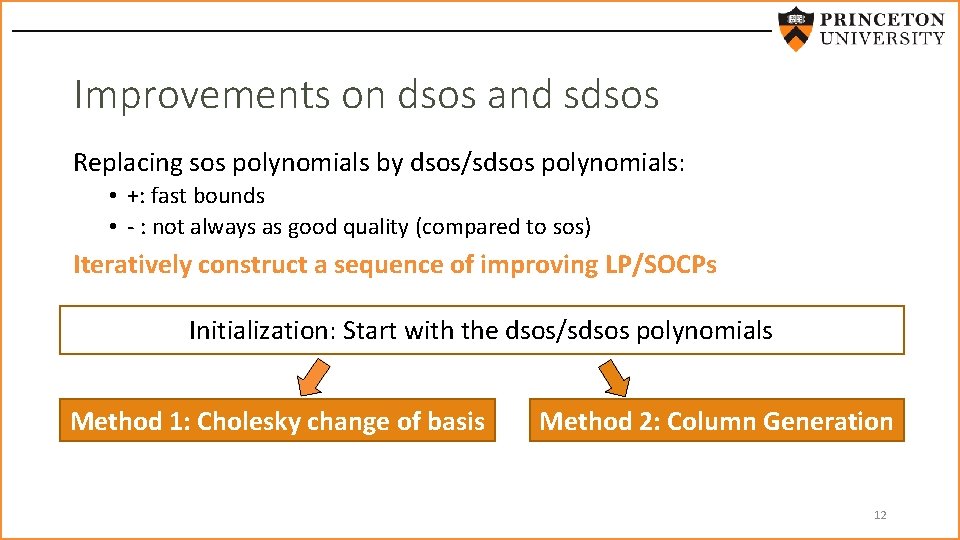

Improvements on dsos and sdsos Replacing sos polynomials by dsos/sdsos polynomials: • +: fast bounds • - : not always as good quality (compared to sos) Iteratively construct a sequence of improving LP/SOCPs Initialization: Start with the dsos/sdsos polynomials Method 1: Cholesky change of basis Method 2: Column Generation 12

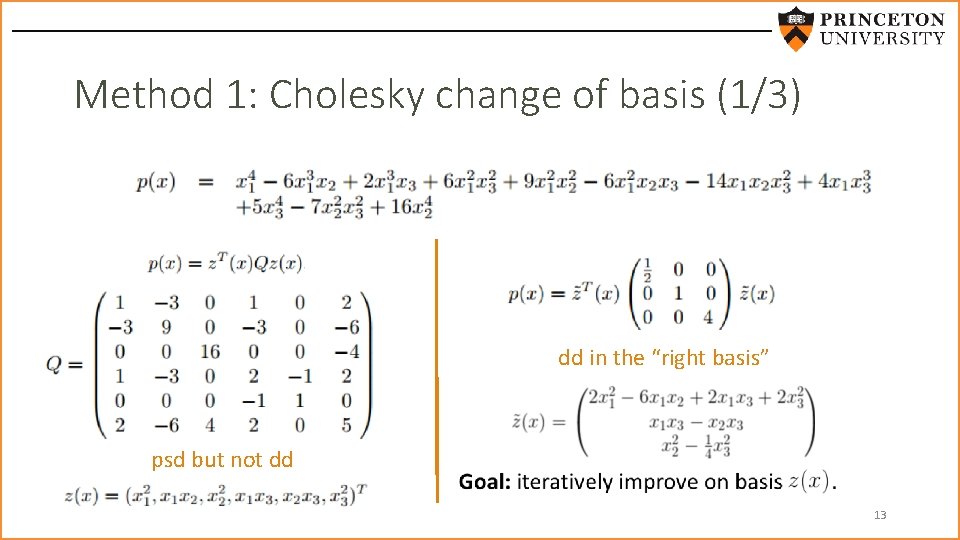

Method 1: Cholesky change of basis (1/3) dd in the “right basis” psd but not dd 13

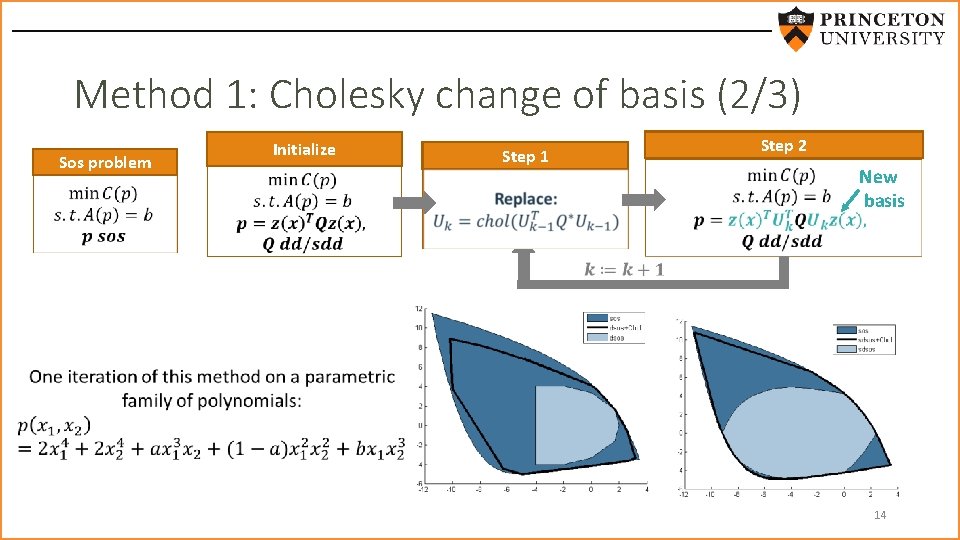
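A minimal numerical sketch of the "right basis" remark, assuming the change of basis comes from a Cholesky factor of the Gram matrix as the slide title suggests. The matrix `B` is an illustrative assumption: it is psd but not dd, yet after writing B = UᵀIU the Gram matrix in the new basis Uz is the identity, which is trivially dd.

```python
import numpy as np

def is_dd(M):
    """True if symmetric M is diagonally dominant."""
    M = np.asarray(M, dtype=float)
    off_diag_sums = np.sum(np.abs(M), axis=1) - np.abs(np.diag(M))
    return bool(np.all(np.diag(M) >= off_diag_sums))

# psd but not diagonally dominant (illustrative choice).
B = np.array([[1.0, 2.0],
              [2.0, 5.0]])

# numpy returns the lower factor L with B = L @ L.T; set U = L.T so that B = U.T @ U.
L = np.linalg.cholesky(B)
U = L.T

# In the basis U z, the quadratic form z^T B z has Gram matrix I, which is dd.
gram_in_new_basis = np.eye(2)
reconstructed = U.T @ gram_in_new_basis @ U
```

In the iterative scheme the factor is only available for the previous iterate, so each step searches over matrices of the form UᵀQU with Q dd, which is still an LP.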

Method 1: Cholesky change of basis (2/3) Sos problem Initialize Step 1 Step 2 New basis 14

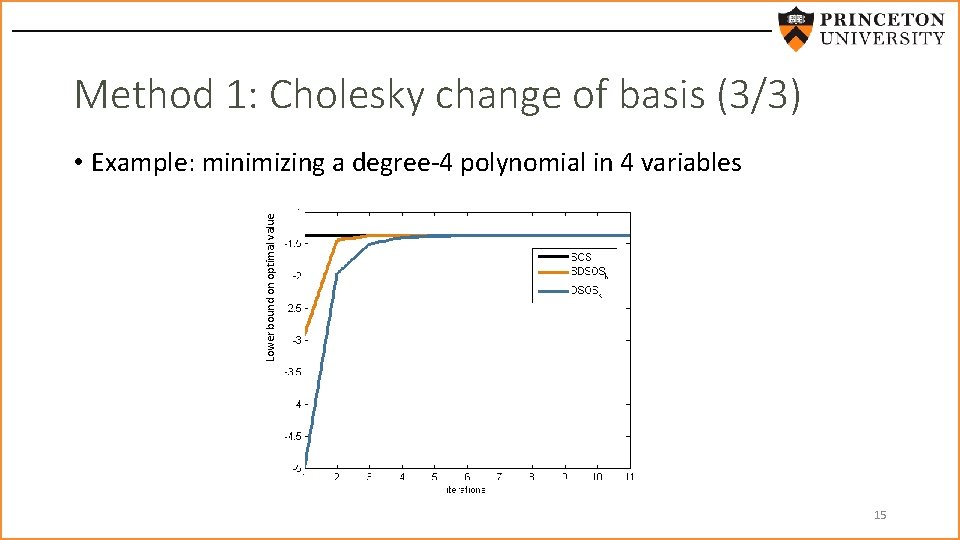

Method 1: Cholesky change of basis (3/3) Lower bound on optimal value • Example: minimizing a degree-4 polynomial in 4 variables 15

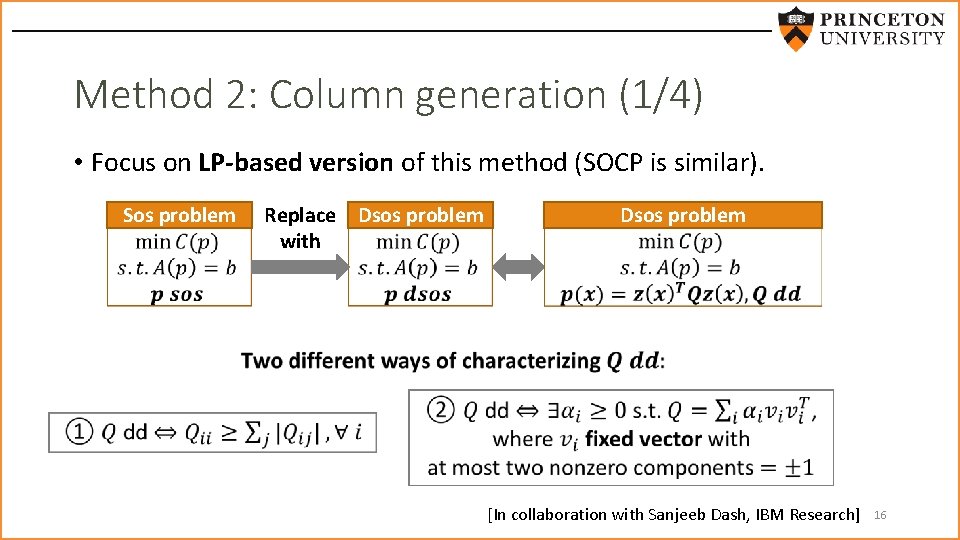

Method 2: Column generation (1/4) • Focus on LP-based version of this method (SOCP is similar). Sos problem Replace Dsos problem with Dsos problem [In collaboration with Sanjeeb Dash, IBM Research] 16

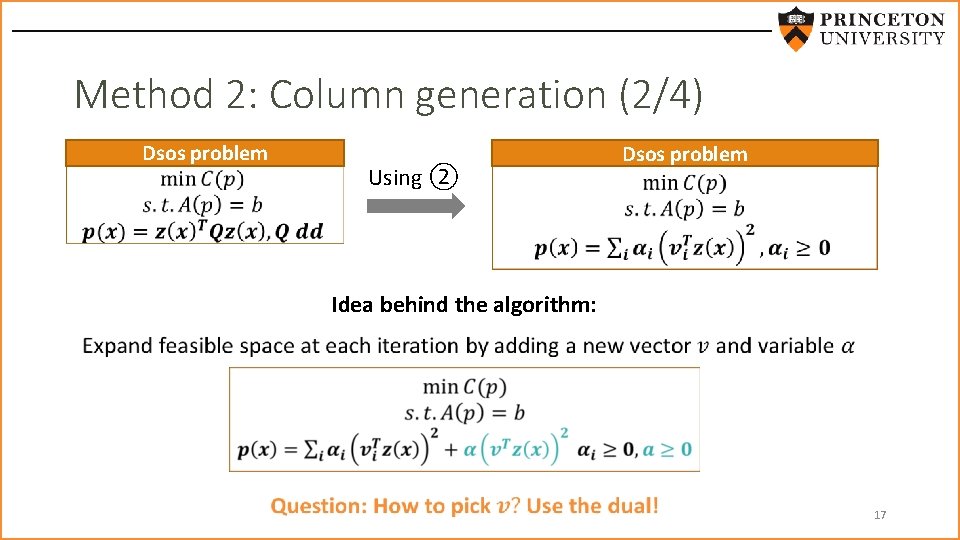

Method 2: Column generation (2/4) Dsos problem Using ② Dsos problem Idea behind the algorithm: 17

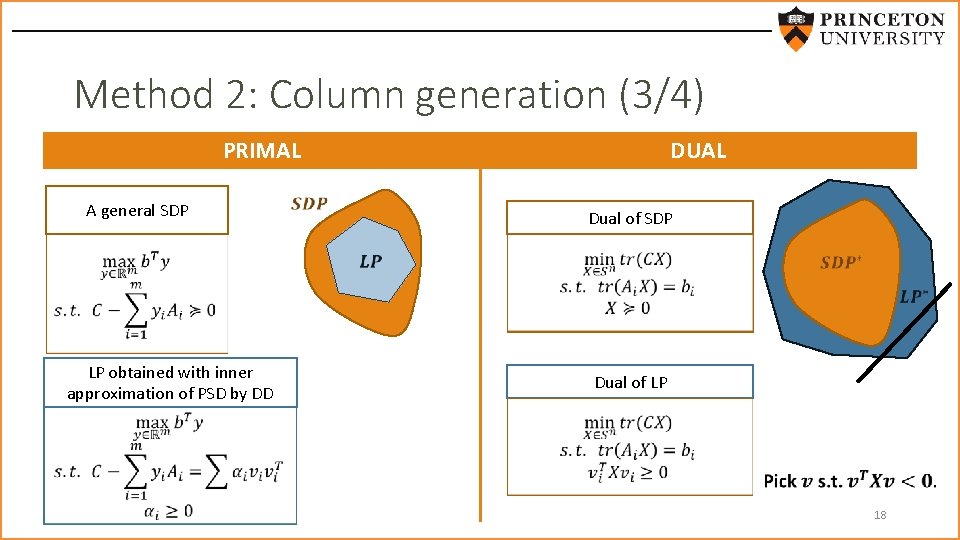

Method 2: Column generation (3/4) PRIMAL A general SDP LP obtained with inner approximation of PSD by DD DUAL Dual of SDP Dual of LP 18

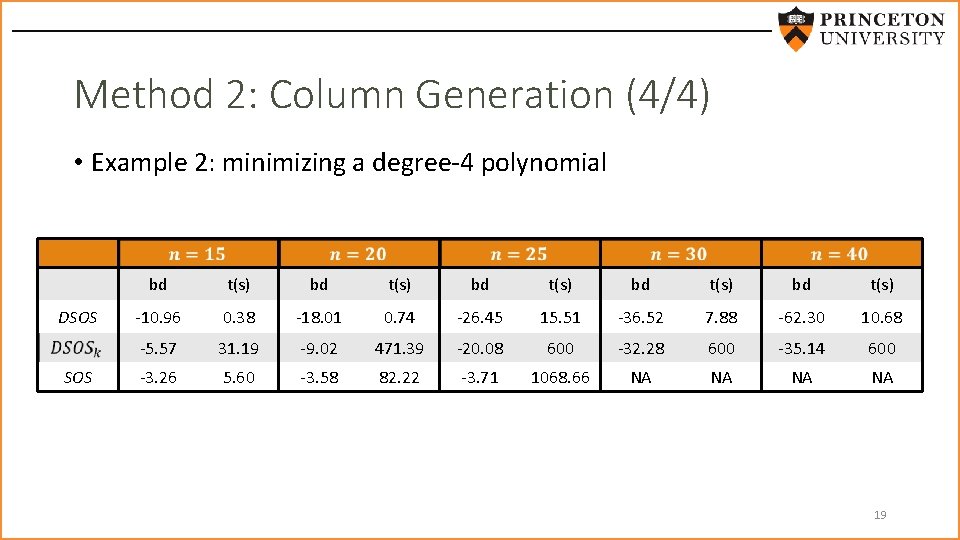
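The primal/dual picture can be sketched numerically. The cone of dd matrices is generated by rank-one atoms vvᵀ where v has at most two nonzero entries, each ±1, so the dual LP only enforces vᵀZv ≥ 0 on those atoms. A new column is any vector that the current dual point violates. The eigenvector-based pricing below is a simplifying assumption for illustration and may differ from the pricing subproblem used in the talk; `Z` and the function names are hypothetical.

```python
import itertools
import numpy as np

def dd_atoms(n):
    """Vectors v with at most two nonzeros (+-1) whose outer products generate the dd cone."""
    atoms = []
    for i in range(n):
        e = np.zeros(n)
        e[i] = 1.0
        atoms.append(e)
    for i, j in itertools.combinations(range(n), 2):
        for sign in (1.0, -1.0):
            v = np.zeros(n)
            v[i], v[j] = 1.0, sign
            atoms.append(v)
    return atoms

def price_new_column(Z, tol=1e-9):
    """Return a vector v with v^T Z v < 0, or None if Z is already psd (assumed pricing rule)."""
    eigvals, eigvecs = np.linalg.eigh(Z)
    if eigvals[0] >= -tol:
        return None
    return eigvecs[:, 0]

# A dual point that satisfies every dd-atom constraint but is not psd.
Z = np.array([[ 1.0, 0.9, -0.9],
              [ 0.9, 1.0,  0.9],
              [-0.9, 0.9,  1.0]])
v_new = price_new_column(Z)
```

Adding `v_new` as an atom tightens the dual (cuts off `Z`) and enlarges the primal inner approximation beyond the dd cone.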

Method 2: Column Generation (4/4) • Example 2: minimizing a degree-4 polynomial DSOS bd t(s) bd t(s): -10.96, 0.38; -18.01, 0.74; -26.45, 15.51; -36.52, 7.88; -62.30, 10.68; -5.57, 31.19; -9.02, 471.39; -20.08, 600; -32.28, 600; -35.14, 600; -3.26, 5.60; -3.58, 82.22; -3.71, 1068.66; NA, NA 19

This talk • Recent efforts to make sos more scalable by avoiding SDP • Using sum of squares to optimize over convex functions 20

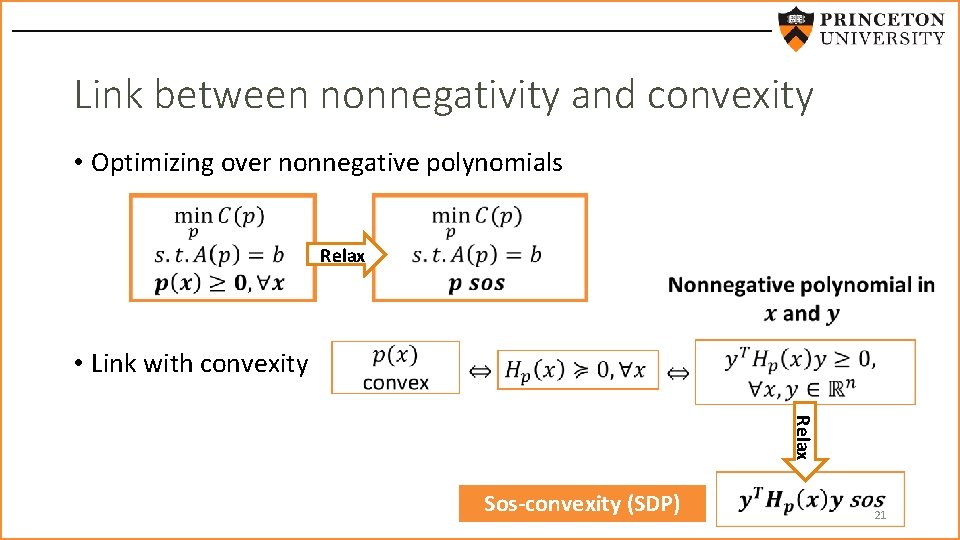

Link between nonnegativity and convexity • Optimizing over nonnegative polynomials Relax • Link with convexity Relax Sos-convexity (SDP) 21
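For reference, the standard definitions behind this slide can be written as follows. The symbols x, y and the Hessian notation are chosen here for illustration since the slide's formulas were lost.

```latex
\begin{align*}
p \text{ convex} &\iff y^{T}\,\nabla^{2}p(x)\,y \ge 0 \quad \forall\, x, y \in \mathbb{R}^{n},\\
p \text{ sos-convex} &\iff y^{T}\,\nabla^{2}p(x)\,y \text{ is a sum of squares in } (x, y),\\
p \text{ sos-convex} &\implies p \text{ convex}.
\end{align*}
```

Convexity is thus a nonnegativity condition on a polynomial in twice as many variables, and relaxing nonnegativity to sos gives a condition that can be checked by SDP.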

Application 1: 3D geometry problems 22

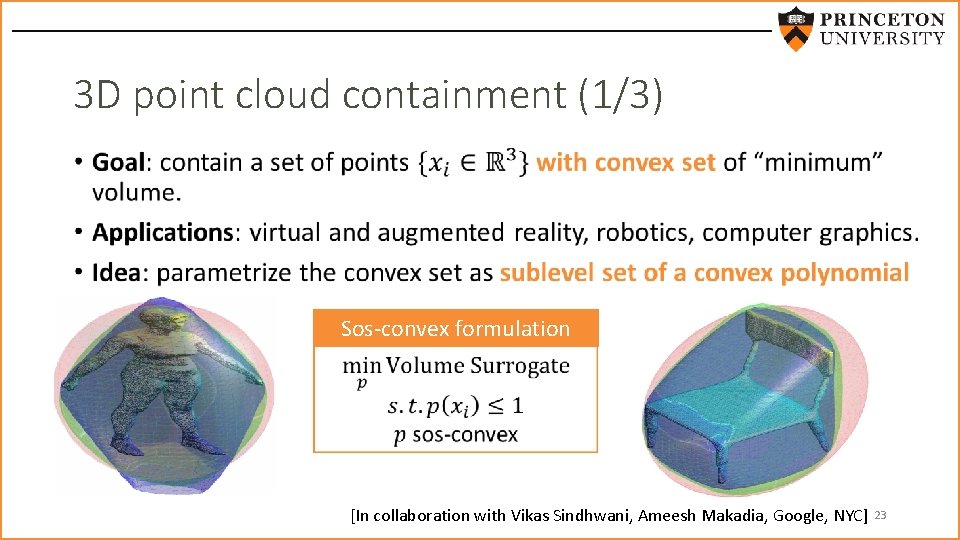

3D point cloud containment (1/3) • Sos-convex formulation [In collaboration with Vikas Sindhwani, Ameesh Makadia, Google, NYC] 23

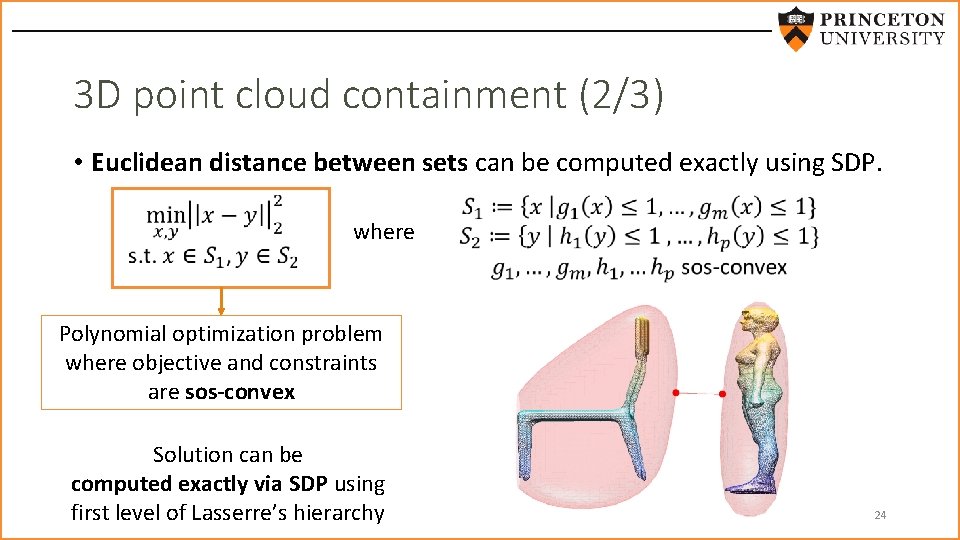

3D point cloud containment (2/3) • Euclidean distance between sets can be computed exactly using SDP. • This is a polynomial optimization problem whose objective and constraints are sos-convex. • The solution can be computed exactly via SDP using the first level of Lasserre’s hierarchy. 24

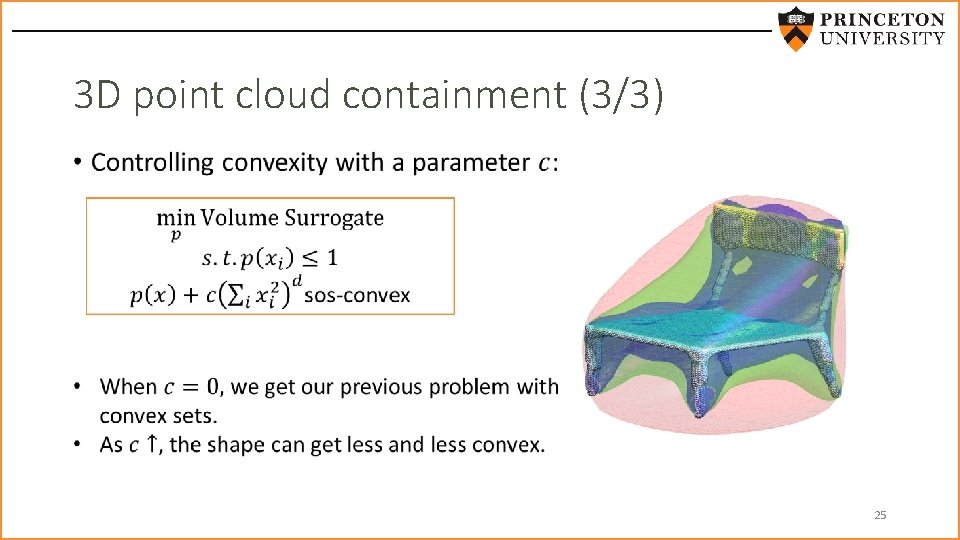

3D point cloud containment (3/3) • 25

![Application 2: Difference of convex programming](http://slidetodoc.com/presentation_image_h2/98f1f0949672043aa9f3823766583606/image-26.jpg)

Application 2: Difference of convex programming [INFORMS Computing Society Best Student Paper Prize 2016] 26

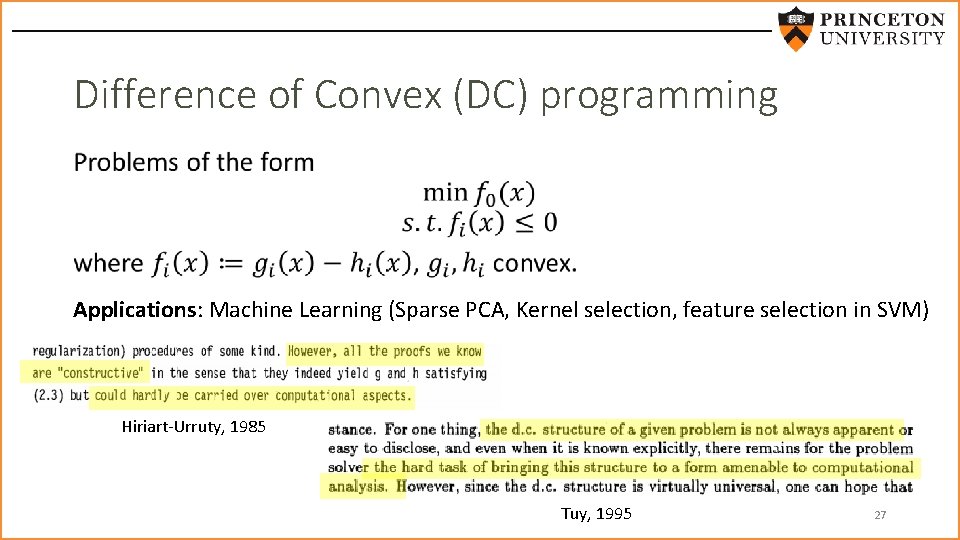

Difference of Convex (DC) programming • Applications: machine learning (sparse PCA, kernel selection, feature selection in SVM) [Hiriart-Urruty, 1985; Tuy, 1995] 27

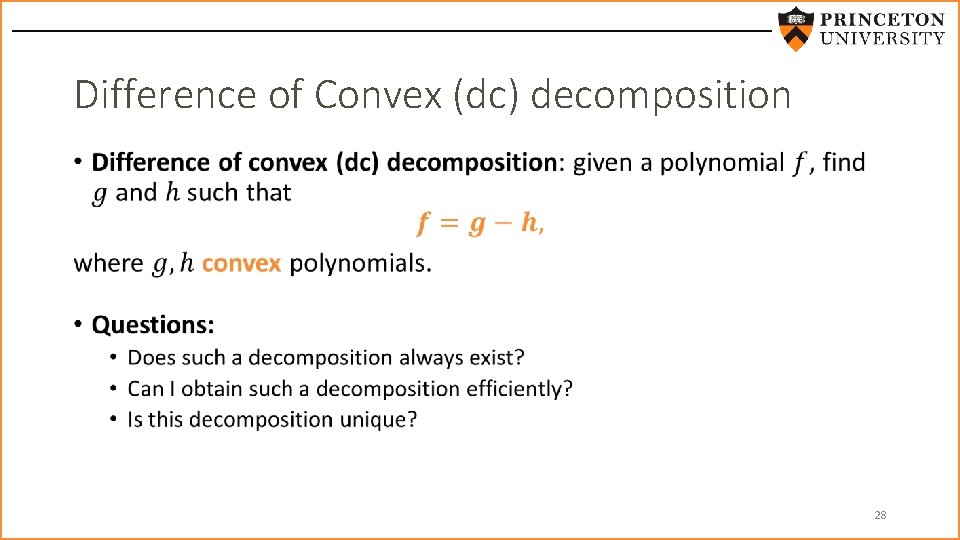

Difference of Convex (dc) decomposition • 28

Existence of dc decomposition (1/5) Theorem: Any polynomial can be written as the difference of two sos-convex polynomials. Corollary: Any polynomial can be written as the difference of two convex polynomials. 29

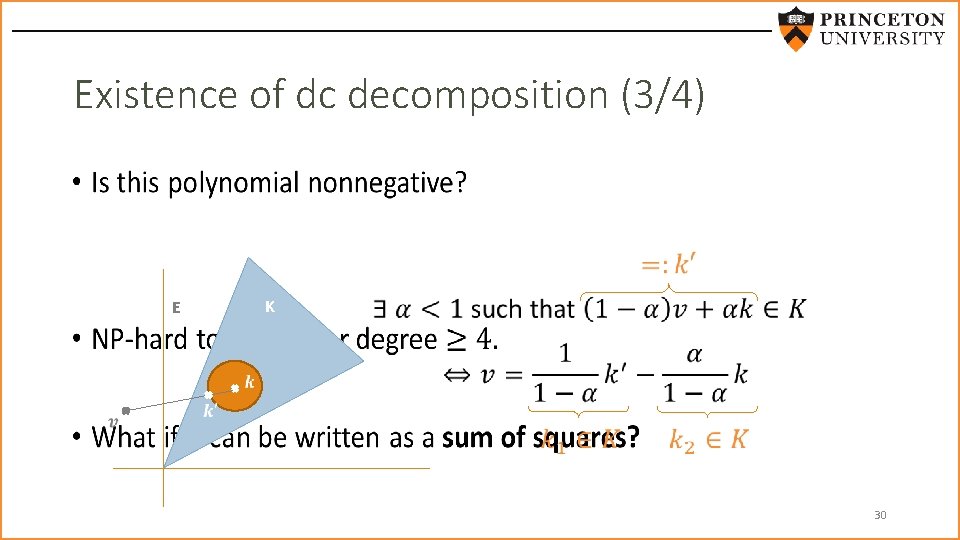
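A small worked instance of the theorem, chosen here for illustration (it is not from the slides): the nonconvex polynomial x₁x₂ written as a difference of convex quadratics in two different ways.

```latex
\begin{align*}
x_1 x_2 &= \tfrac{1}{2}(x_1 + x_2)^2 \;-\; \tfrac{1}{2}\bigl(x_1^2 + x_2^2\bigr)\\
        &= \tfrac{1}{4}(x_1 + x_2)^2 \;-\; \tfrac{1}{4}(x_1 - x_2)^2 .
\end{align*}
```

Each piece is a convex quadratic, and a convex quadratic is sos-convex because its Hessian H is a constant psd matrix, so yᵀHy is a sum of squares. The two lines also show that a dc decomposition is not unique.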

Existence of dc decomposition (3/4) • E K 30

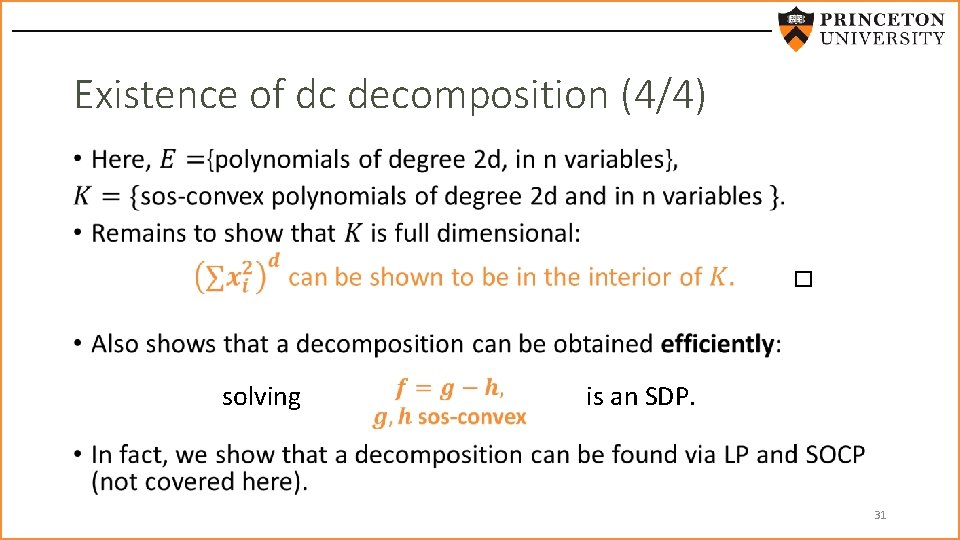

Existence of dc decomposition (4/4) • solving is an SDP. 31

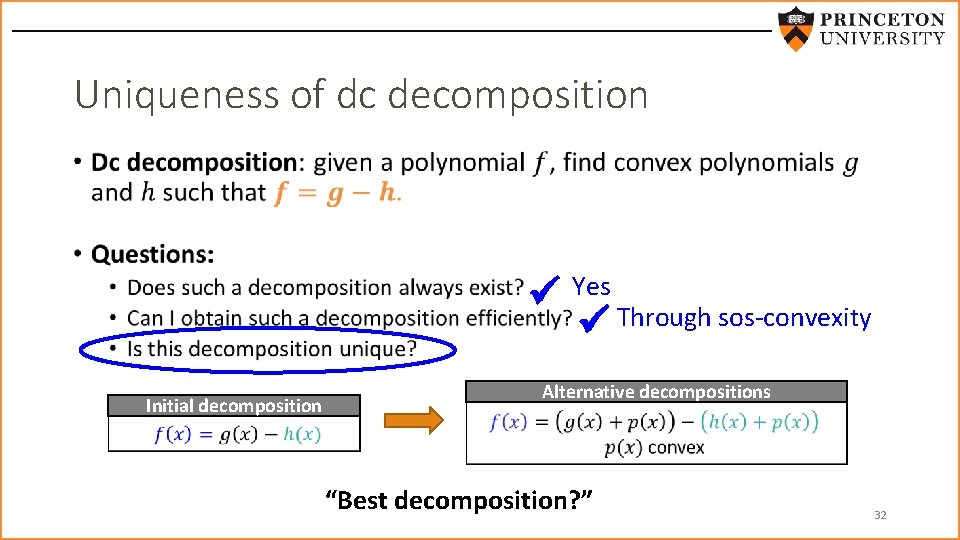

Uniqueness of dc decomposition • Initial decomposition Yes Through sos-convexity Alternative decompositions “Best decomposition? ” 32

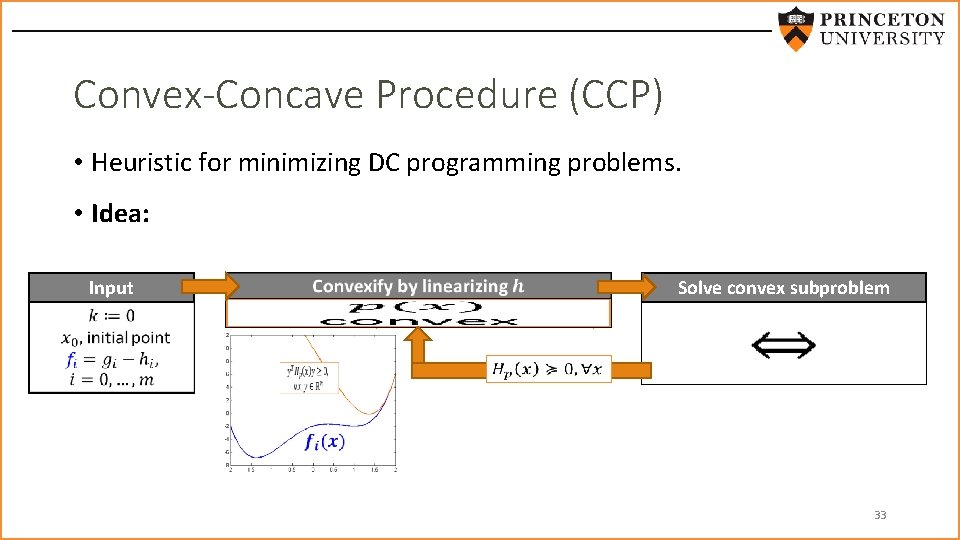

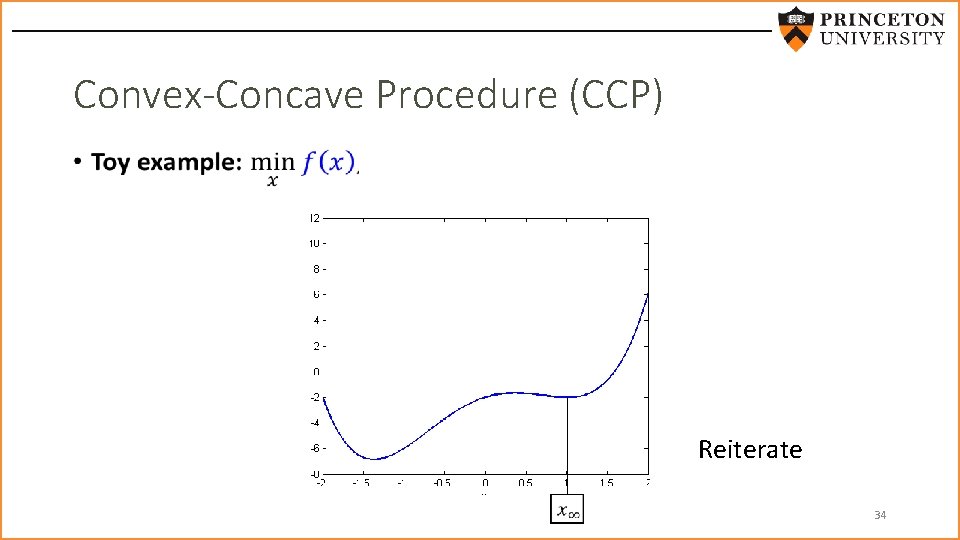

Convex-Concave Procedure (CCP) • Heuristic for solving DC programming problems. • Idea: Input Solve convex subproblem convex affine 33

Convex-Concave Procedure (CCP) • Reiterate 34

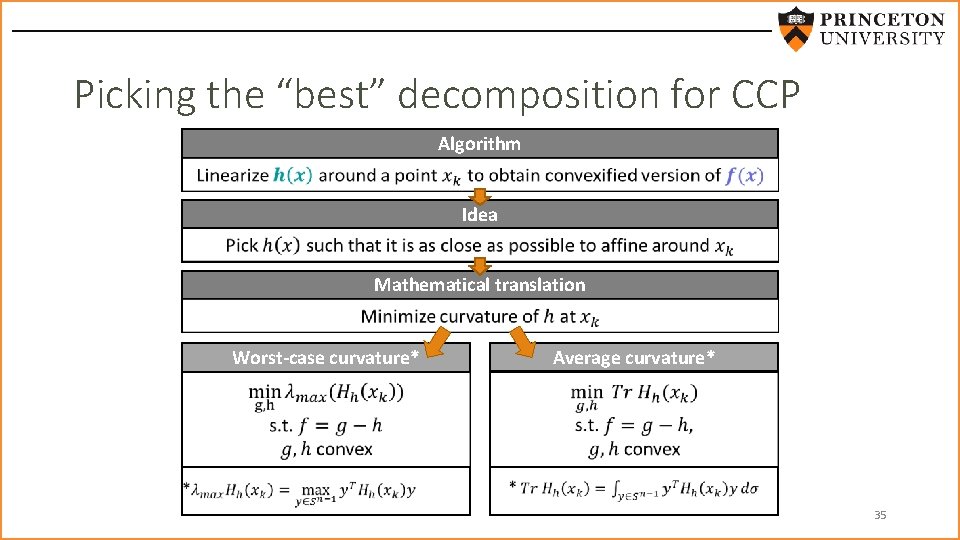
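The CCP loop can be sketched on a one-dimensional toy problem. The function f(x) = x⁴ − 3x², its decomposition g(x) = x⁴, h(x) = 3x², and the starting point are assumptions chosen so the convex subproblem has a closed-form solution. They are not taken from the talk.

```python
import numpy as np

def f(x):
    """Toy DC objective f = g - h with g(x) = x**4 and h(x) = 3*x**2 (illustrative)."""
    return x**4 - 3.0 * x**2

def ccp(x0, iters=50):
    """Convex-concave procedure: linearize h at the current point, minimize the convex surrogate."""
    xs = [float(x0)]
    for _ in range(iters):
        xk = xs[-1]
        # Linearization of h at xk: h(xk) + 6*xk*(x - xk).
        # Surrogate: x**4 - 6*xk*x + const, minimized where 4*x**3 = 6*xk.
        xs.append(float(np.cbrt(1.5 * xk)))
    return xs

iterates = ccp(0.5)
```

Starting from 0.5, the iterates approach the stationary point x = √1.5 of f, and the objective value never increases from one iterate to the next, which is the descent property that makes CCP attractive as a heuristic.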

Picking the “best” decomposition for CCP Algorithm Idea Mathematical translation Worst-case curvature* Average curvature* 35

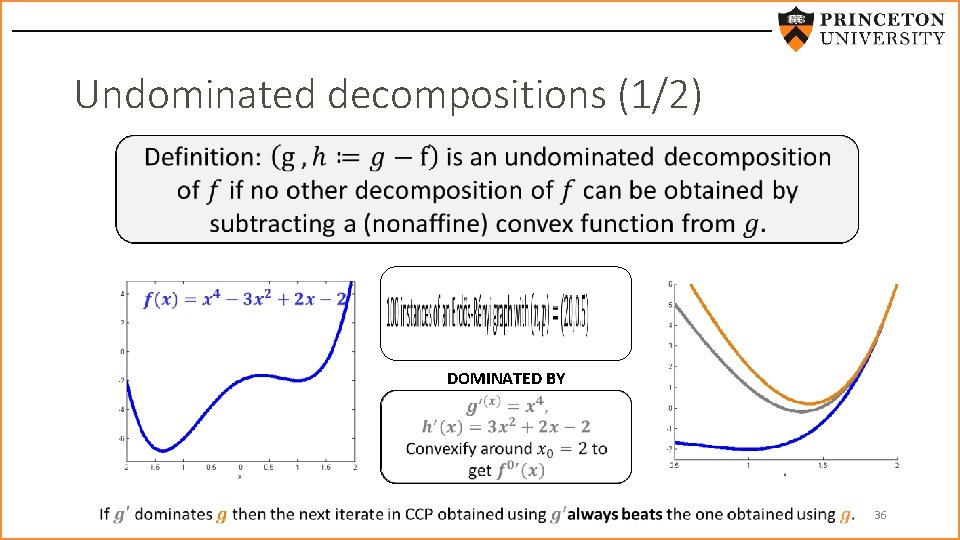

Undominated decompositions (1/2) DOMINATED BY 36

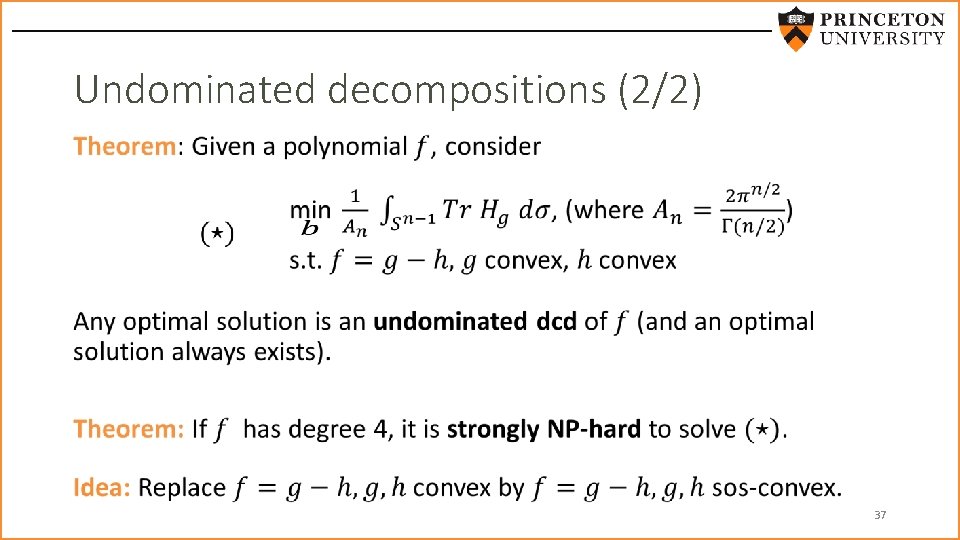

Undominated decompositions (2/2) • 37

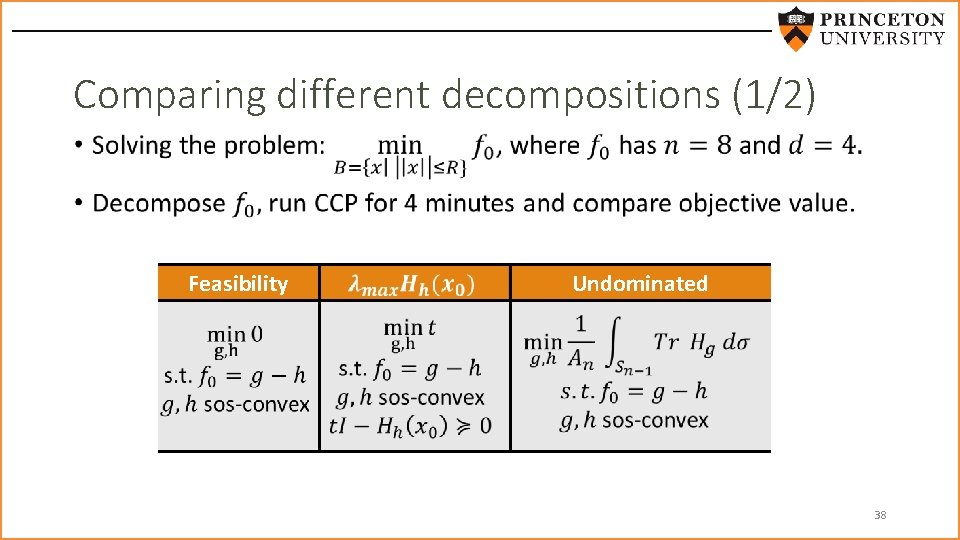

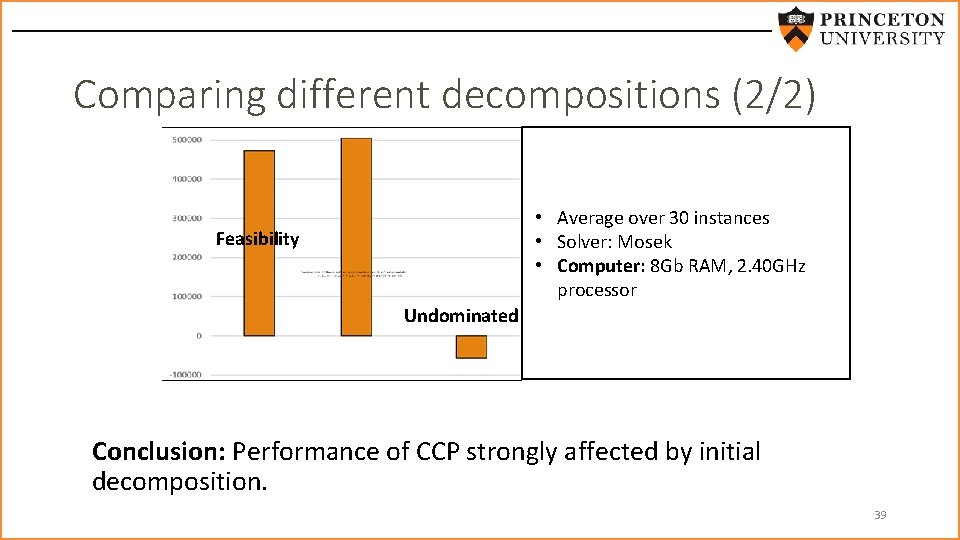

Comparing different decompositions (1/2) • Feasibility Undominated 38

Comparing different decompositions (2/2) • Average over 30 instances • Solver: Mosek • Computer: 8 GB RAM, 2.40 GHz processor Feasibility Undominated Conclusion: Performance of CCP strongly affected by initial decomposition. 39

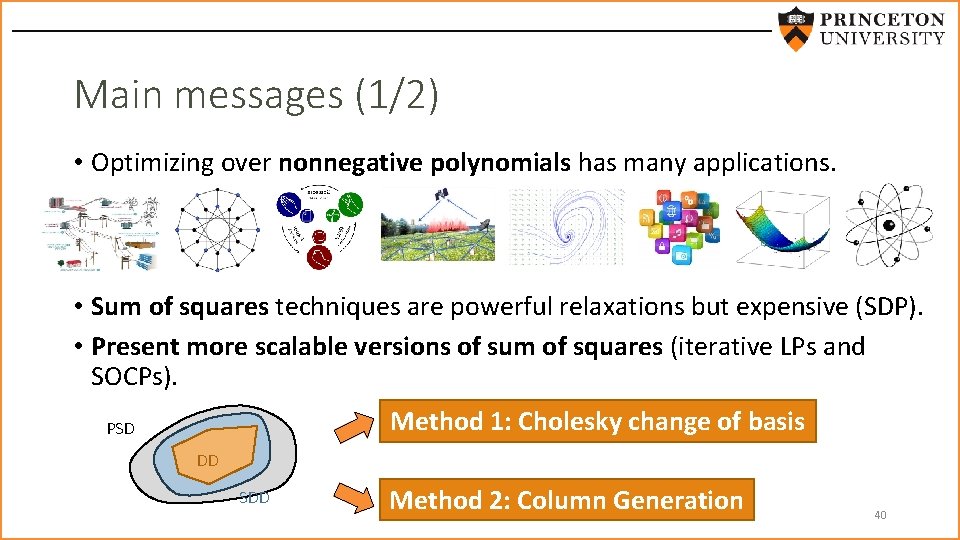
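The sensitivity of CCP to the decomposition can be reproduced on a toy example. This is not the talk's experiment: the family f(x) = (x⁴ + c·x²) − (3 + c)·x² with c ≥ 0, the tolerance, and the function name are all illustrative assumptions. Every member of the family decomposes the same f, but larger c adds curvature to both convex parts.

```python
import numpy as np

def ccp_iterations(c, x0=0.5, tol=1e-10, max_iter=10000):
    """Run CCP on f(x) = x**4 - 3*x**2 with g_c(x) = x**4 + c*x**2, h_c(x) = (3 + c)*x**2.

    Returns the final iterate and the number of iterations until the step size drops below tol.
    """
    x = float(x0)
    for k in range(1, max_iter + 1):
        # Surrogate optimality condition: 4*x**3 + 2*c*x - 2*(3 + c)*x_k = 0.
        roots = np.roots([4.0, 0.0, 2.0 * c, -2.0 * (3.0 + c) * x])
        # For c >= 0 the cubic is increasing, so it has exactly one real root.
        x_new = float(roots[np.argmin(np.abs(roots.imag))].real)
        if abs(x_new - x) < tol:
            return x_new, k
        x = x_new
    return x, max_iter

x_small, iters_small = ccp_iterations(0.0)
x_large, iters_large = ccp_iterations(10.0)
```

Both runs reach the same stationary point, but the decomposition with extra curvature (c = 10) needs noticeably more iterations, in line with the slide's conclusion.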

Main messages (1/2) • Optimizing over nonnegative polynomials has many applications. • Sum of squares techniques are powerful relaxations but expensive (SDP). • Present more scalable versions of sum of squares (iterative LPs and SOCPs). Method 1: Cholesky change of basis PSD DD SDD Method 2: Column Generation 40

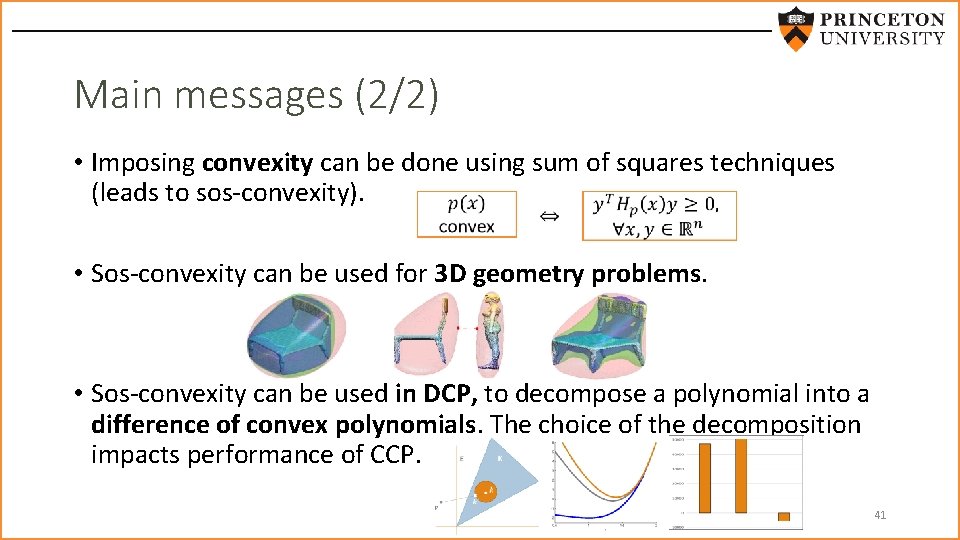

Main messages (2/2) • Imposing convexity can be done using sum of squares techniques (leads to sos-convexity). • Sos-convexity can be used for 3D geometry problems. • Sos-convexity can be used in DC programming, to decompose a polynomial into a difference of convex polynomials. The choice of the decomposition impacts performance of CCP. 41

Thank you for listening. Questions? Want to learn more? http://scholar.princeton.edu/ghall/ 42
