Sampling-based Approximation Algorithms for Multi-stage Stochastic Optimization

Chaitanya Swamy, University of Waterloo
Joint work with David Shmoys, Cornell University

Stochastic Optimization

• Way of modeling uncertainty.
• Exact data is unavailable or expensive – data is uncertain, specified by a probability distribution.
• Want to make the best decisions given this uncertainty in the data.
• Applications in logistics, transportation models, financial instruments, network design, production planning, …
• Dates back to the 1950s and the work of Dantzig.

Stochastic Recourse Models

Given: Probability distribution over inputs.
Stage I: Make some advance decisions – plan ahead or hedge against uncertainty.
Uncertainty evolves through various stages. Learn new information in each stage.
Can take recourse actions in each stage – can augment earlier solution paying a recourse cost.
Choose initial (stage I) decisions to minimize (stage I cost) + (expected recourse cost).

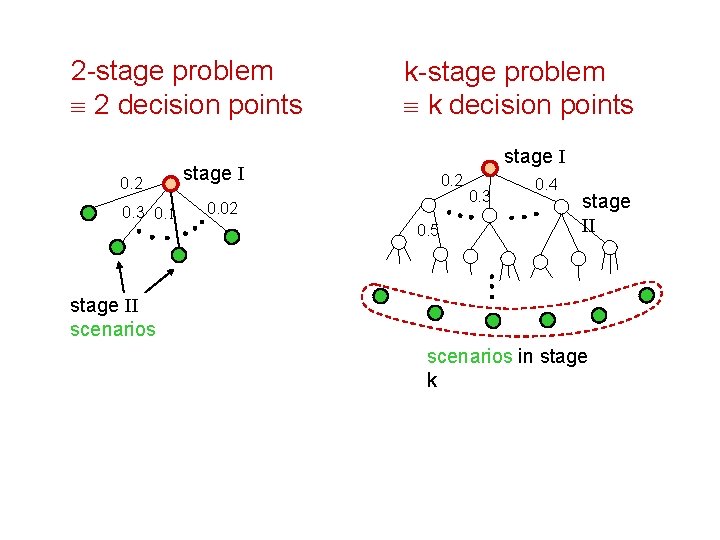

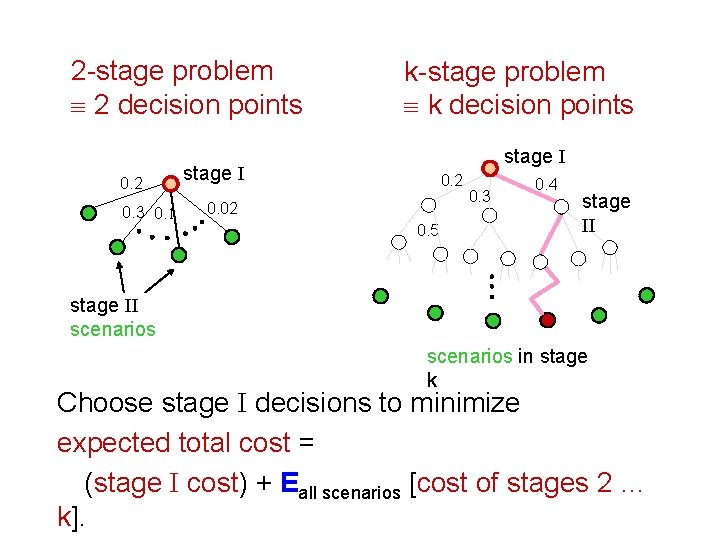

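2-stage problem ≡ 2 decision points; k-stage problem ≡ k decision points.

[Figure: scenario trees for the 2-stage and k-stage problems. Stage I is the root, stage II nodes follow, and the leaves are the scenarios in stage k; edges carry probabilities.]

Choose stage I decisions to minimize expected total cost = (stage I cost) + $\mathbb{E}_{\text{all scenarios}}[\text{cost of stages } 2, \ldots, k]$.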
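The scenario-tree picture can be made concrete with a small sketch. The `Node` class, the tree shape, and the probabilities below are hypothetical and chosen only for illustration; the point is that the probability of a final scenario is the product of the conditional probabilities along its root-to-leaf path.

```python
from dataclasses import dataclass, field


@dataclass
class Node:
    """One node of a scenario tree (hypothetical helper type).

    `prob` is the conditional probability of moving from the parent to this node.
    """
    label: str
    prob: float = 1.0
    children: list = field(default_factory=list)


def leaf_probabilities(node, reach=1.0, path=()):
    """Return {root-to-leaf path: probability} for every final scenario."""
    reach *= node.prob
    path = path + (node.label,)
    if not node.children:
        return {path: reach}
    result = {}
    for child in node.children:
        result.update(leaf_probabilities(child, reach, path))
    return result


# Illustrative 3-stage tree: stage I is the root, stage II has two outcomes,
# and each outcome branches into two stage III scenarios.
tree = Node("root", 1.0, [
    Node("A", 0.5, [Node("A1", 0.2), Node("A2", 0.8)]),
    Node("B", 0.5, [Node("B1", 0.3), Node("B2", 0.7)]),
])
probs = leaf_probabilities(tree)
```

The expected total cost in the objective is then a sum over these leaves of (leaf probability) × (cost incurred along that path).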

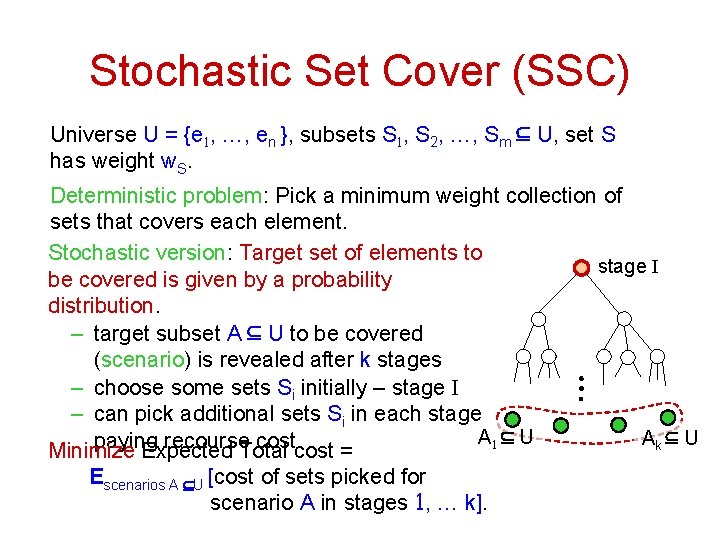

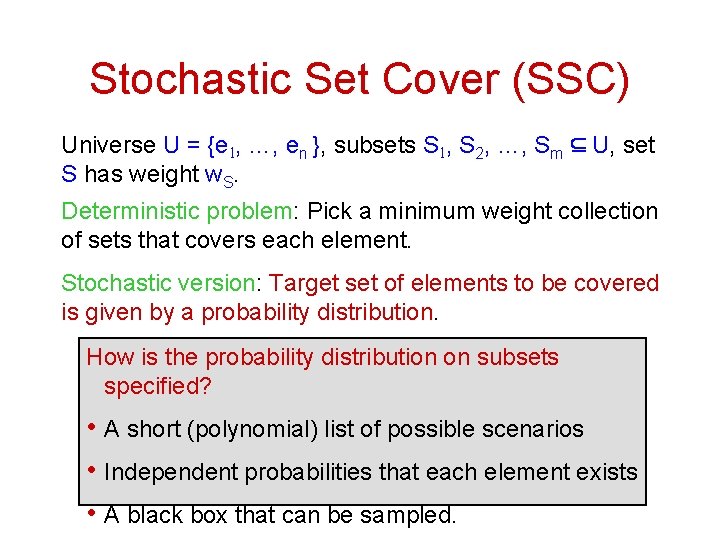

Stochastic Set Cover (SSC)

Universe $U = \{e_1, \ldots, e_n\}$, subsets $S_1, S_2, \ldots, S_m \subseteq U$, set $S$ has weight $w_S$.
Deterministic problem: Pick a minimum weight collection of sets that covers each element.
Stochastic version: Target set of elements to be covered is given by a probability distribution.
– target subset $A \subseteq U$ to be covered (scenario) is revealed after $k$ stages
– choose some sets $S_i$ initially – stage I
– can pick additional sets $S_i$ in each stage paying recourse cost.
Minimize expected total cost = $\mathbb{E}_{\text{scenarios } A \subseteq U}[\text{cost of sets picked for scenario } A \text{ in stages } 1, \ldots, k]$.

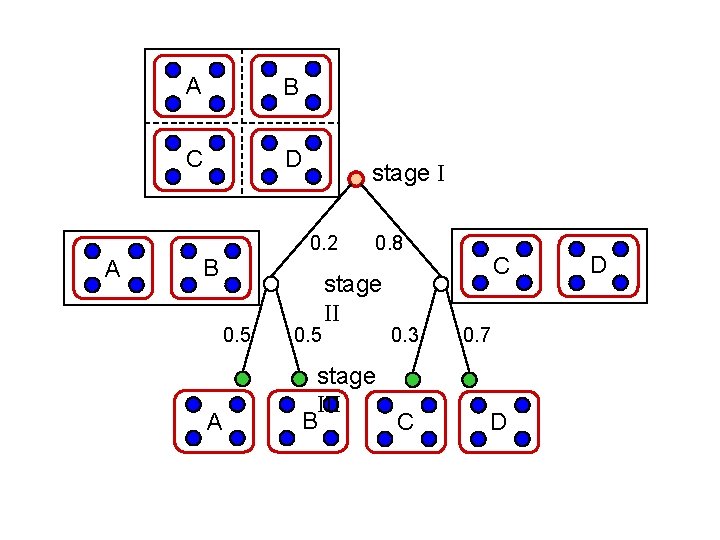

[Figure: three-stage scenario tree (stage I, stage II, stage III) over elements A, B, C, D, with probabilities on the edges.]

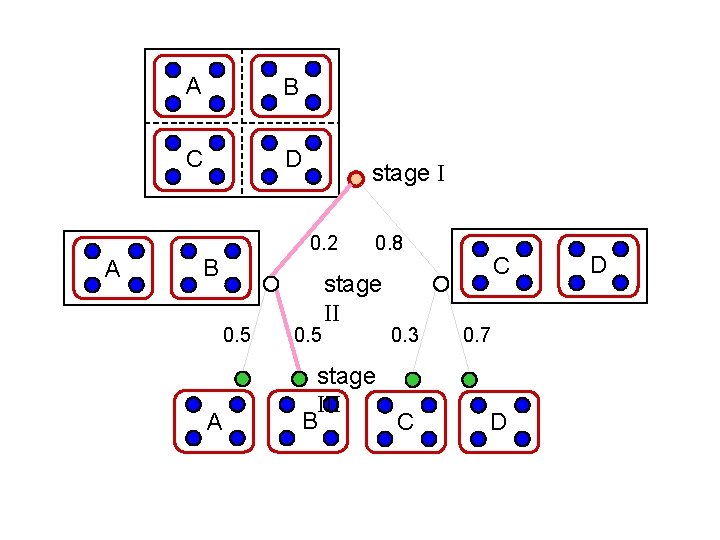

Stochastic Set Cover (SSC)

Universe $U = \{e_1, \ldots, e_n\}$, subsets $S_1, S_2, \ldots, S_m \subseteq U$, set $S$ has weight $w_S$.
Deterministic problem: Pick a minimum weight collection of sets that covers each element.
Stochastic version: Target set of elements to be covered is given by a probability distribution.
How is the probability distribution on subsets specified?
• A short (polynomial) list of possible scenarios
• Independent probabilities that each element exists
• A black box that can be sampled.
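One way to see how the three specifications relate: the first two can each be wrapped as a black box that returns one random scenario per call. The sketch below assumes that reading; the function names and the example probabilities are hypothetical, not part of the talk.

```python
import random


def scenario_list_sampler(scenarios):
    """Black box for the polynomial-scenario model.

    `scenarios` is a list of (frozenset of elements, probability) pairs
    whose probabilities sum to 1.
    """
    subsets = [a for a, _ in scenarios]
    weights = [p for _, p in scenarios]

    def sample(rng):
        return rng.choices(subsets, weights=weights, k=1)[0]

    return sample


def independent_sampler(activation):
    """Black box for the independent-activation model.

    `activation` maps each element to the probability that it must be covered.
    """
    def sample(rng):
        return frozenset(e for e, p in activation.items() if rng.random() < p)

    return sample


rng = random.Random(0)
draw_a = scenario_list_sampler([(frozenset({"e1"}), 0.3),
                                (frozenset({"e1", "e2"}), 0.7)])
draw_b = independent_sampler({"e1": 0.5, "e2": 0.1, "e3": 0.9})
sample_a = draw_a(rng)  # one scenario A, a subset of U
sample_b = draw_b(rng)
```

An algorithm that only calls `sample(rng)` therefore works in all three models, which is why results in the black-box model are the most general.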

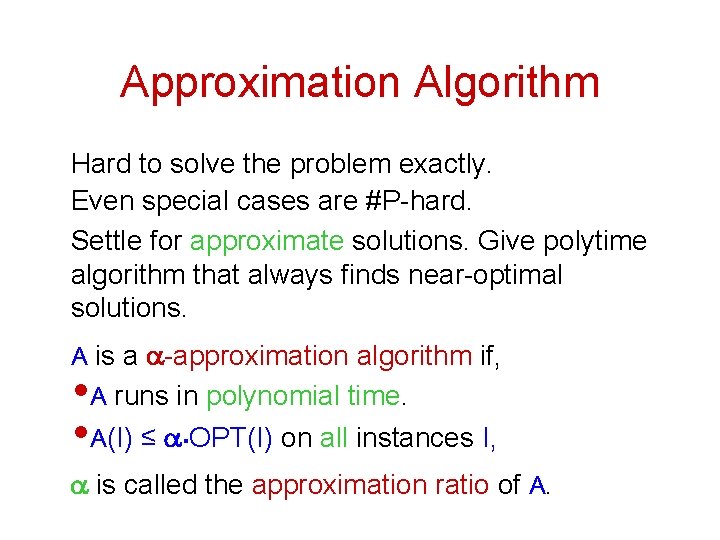

Approximation Algorithm

Hard to solve the problem exactly. Even special cases are #P-hard.
Settle for approximate solutions. Give polytime algorithm that always finds near-optimal solutions.
A is an $\alpha$-approximation algorithm if,
• A runs in polynomial time.
• $A(I) \le \alpha \cdot \mathrm{OPT}(I)$ on all instances $I$.
$\alpha$ is called the approximation ratio of A.

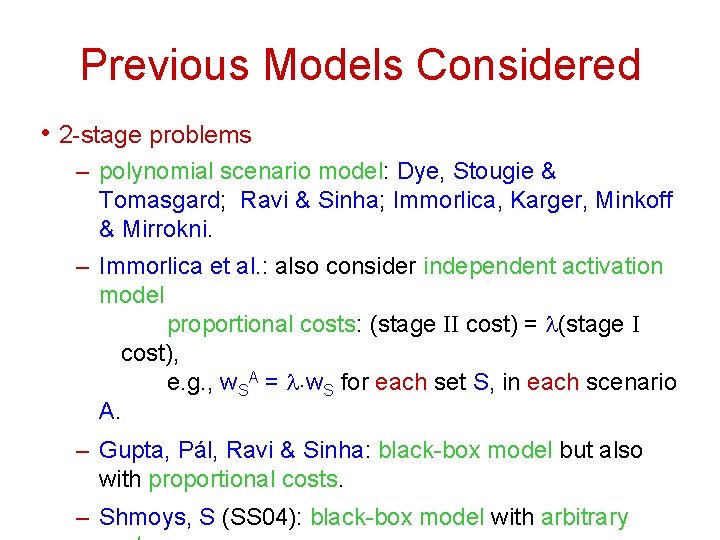

Previous Models Considered

• 2-stage problems
– polynomial scenario model: Dye, Stougie & Tomasgard; Ravi & Sinha; Immorlica, Karger, Minkoff & Mirrokni.
– Immorlica et al.: also consider independent activation model, proportional costs: (stage II cost) = $\lambda$ (stage I cost), e.g., $w_S^A = \lambda\, w_S$ for each set $S$, in each scenario $A$.
– Gupta, Pál, Ravi & Sinha: black-box model but also with proportional costs.
– Shmoys, S (SS04): black-box model with arbitrary

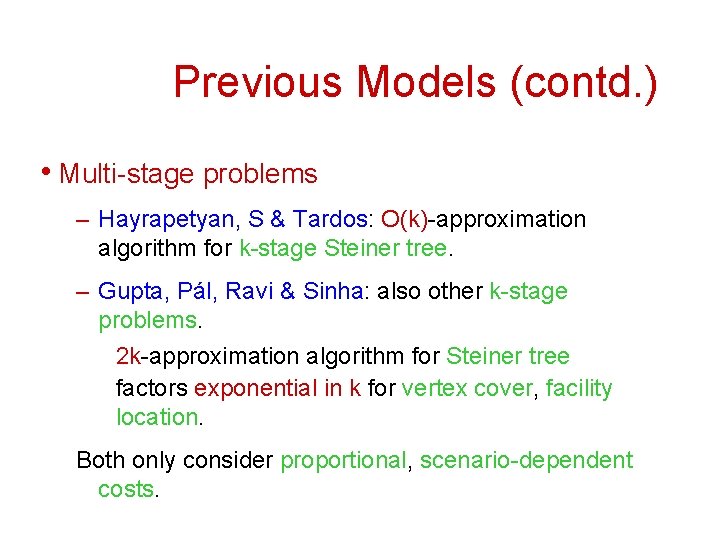

Previous Models (contd.)

• Multi-stage problems
– Hayrapetyan, S & Tardos: O(k)-approximation algorithm for k-stage Steiner tree.
– Gupta, Pál, Ravi & Sinha: also other k-stage problems. 2k-approximation algorithm for Steiner tree; factors exponential in k for vertex cover, facility location.
Both only consider proportional, scenario-dependent costs.

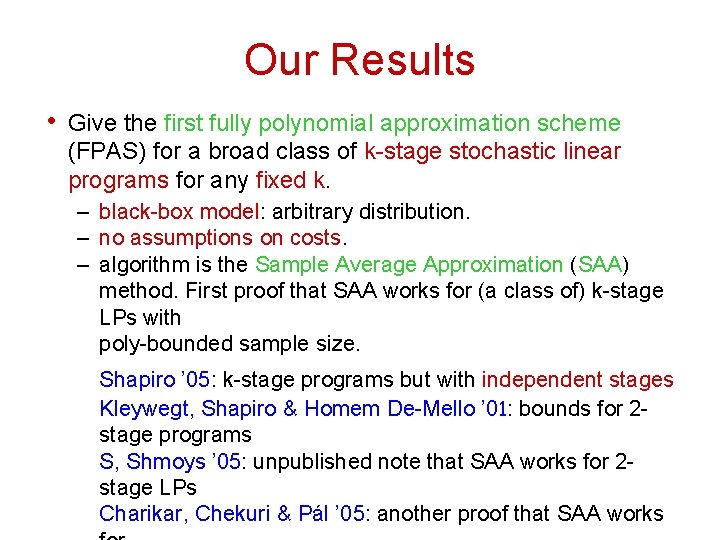

Our Results

• Give the first fully polynomial approximation scheme (FPAS) for a broad class of k-stage stochastic linear programs for any fixed k.
– black-box model: arbitrary distribution.
– no assumptions on costs.
– algorithm is the Sample Average Approximation (SAA) method.
First proof that SAA works for (a class of) k-stage LPs with poly-bounded sample size.
Shapiro ’05: k-stage programs but with independent stages
Kleywegt, Shapiro & Homem-de-Mello ’01: bounds for 2-stage programs
S, Shmoys ’05: unpublished note that SAA works for 2-stage LPs
Charikar, Chekuri & Pál ’05: another proof that SAA works

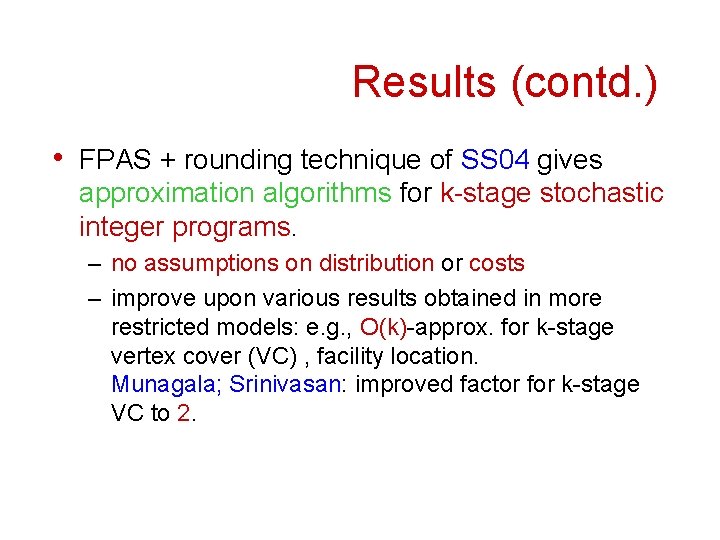

Results (contd.)

• FPAS + rounding technique of SS04 gives approximation algorithms for k-stage stochastic integer programs.
– no assumptions on distribution or costs
– improve upon various results obtained in more restricted models: e.g., O(k)-approx. for k-stage vertex cover (VC), facility location.
Munagala; Srinivasan: improved factor for k-stage VC to 2.

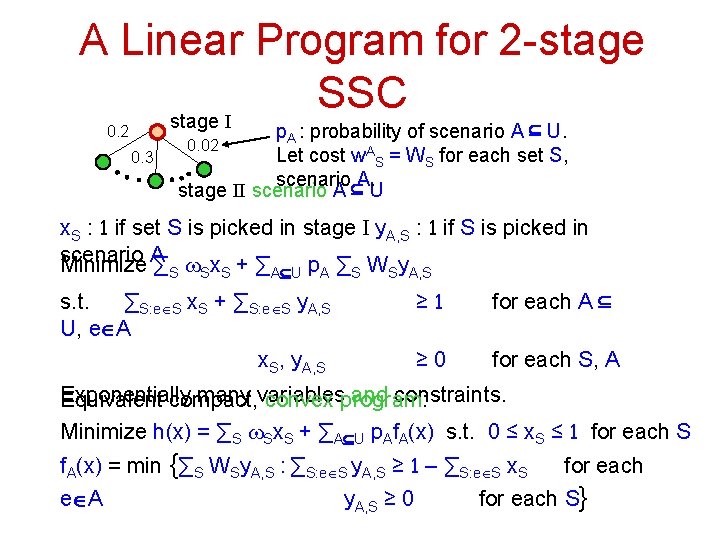

A Linear Program for 2-stage SSC

$p_A$: probability of scenario $A \subseteq U$.
Let cost $w_S^A = W_S$ for each set $S$, scenario $A$.
$x_S$: 1 if set $S$ is picked in stage I.
$y_{A,S}$: 1 if $S$ is picked in scenario $A$.

Minimize $\sum_S w_S x_S + \sum_{A \subseteq U} p_A \sum_S W_S\, y_{A,S}$
s.t. $\sum_{S: e \in S} x_S + \sum_{S: e \in S} y_{A,S} \ge 1$ for each $A \subseteq U$, $e \in A$
$x_S,\ y_{A,S} \ge 0$ for each $S$, $A$.

Exponentially many variables and constraints.

Equivalent compact, convex program:
Minimize $h(x) = \sum_S w_S x_S + \sum_{A \subseteq U} p_A f_A(x)$ s.t. $0 \le x_S \le 1$ for each $S$, where
$f_A(x) = \min \bigl\{ \sum_S W_S\, y_{A,S} : \sum_{S: e \in S} y_{A,S} \ge 1 - \sum_{S: e \in S} x_S \text{ for each } e \in A;\ y_{A,S} \ge 0 \text{ for each } S \bigr\}$
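To make the objective concrete, here is a minimal sketch that evaluates it for an integral solution in the polynomial-scenario model, where the scenarios can be listed explicitly. The function name `two_stage_cost` and the tiny instance are hypothetical; the sketch only checks coverage and adds up costs, it does not solve the LP.

```python
def two_stage_cost(sets, w, W, scenarios, x, y):
    """Expected cost of an integral 2-stage set cover solution.

    sets:      name -> set of elements it covers
    w, W:      stage I and stage II weight of each set
    scenarios: list of (A, p_A) pairs, A a frozenset of elements
    x:         names of sets picked in stage I
    y:         A -> names of sets picked in stage II under scenario A
    """
    total = float(sum(w[s] for s in x))
    for A, p_A in scenarios:
        picked = set(x) | set(y[A])
        # Covering constraint: every e in A lies in some picked set.
        uncovered = [e for e in A if not any(e in sets[s] for s in picked)]
        if uncovered:
            raise ValueError(f"scenario {sorted(A)} leaves {uncovered} uncovered")
        total += p_A * sum(W[s] for s in y[A])
    return total


sets = {"S1": {"a", "b"}, "S2": {"b", "c"}}
w = {"S1": 1.0, "S2": 1.0}   # stage I weights
W = {"S1": 3.0, "S2": 3.0}   # stage II weights (three times more expensive here)
A1, A2 = frozenset({"a"}), frozenset({"a", "c"})
scenarios = [(A1, 0.5), (A2, 0.5)]

# Buy S1 now and S2 only if scenario A2 occurs, versus buying both now.
hedge_partly = two_stage_cost(sets, w, W, scenarios, x={"S1"}, y={A1: set(), A2: {"S2"}})
hedge_fully = two_stage_cost(sets, w, W, scenarios, x={"S1", "S2"}, y={A1: set(), A2: set()})
```

In this instance buying both sets up front is cheaper in expectation, because the recourse price of `S2` weighted by the probability of needing it exceeds its stage I price.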

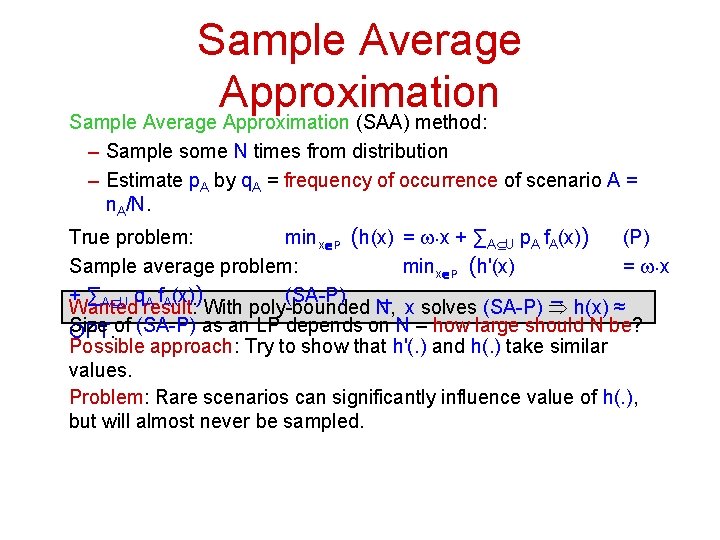

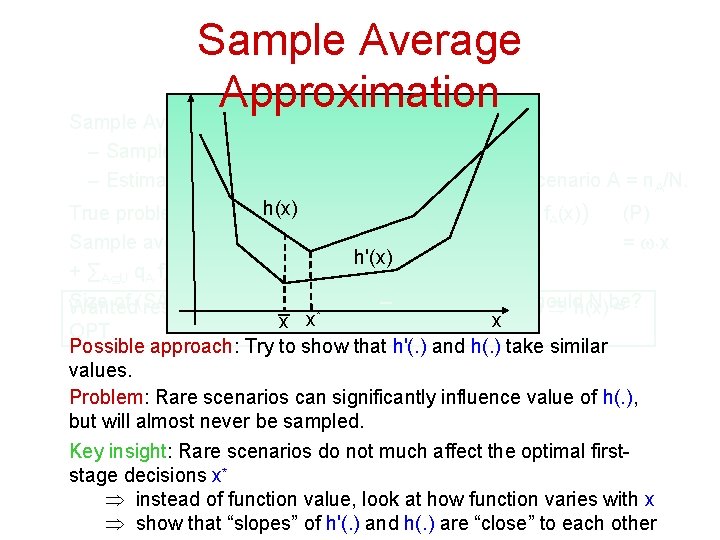

Sample Average Approximation (SAA) method:
– Sample some N times from distribution
– Estimate $p_A$ by $q_A$ = frequency of occurrence of scenario $A$ = $n_A / N$.

True problem: $\min_{x \in P} \bigl( h(x) = w \cdot x + \sum_{A \subseteq U} p_A f_A(x) \bigr)$ (P)
Sample average problem: $\min_{x \in P} \bigl( h'(x) = w \cdot x + \sum_{A \subseteq U} q_A f_A(x) \bigr)$ (SA-P)

Size of (SA-P) as an LP depends on N – how large should N be?
Wanted result: With poly-bounded N, x solves (SA-P) ⇒ h(x) ≈ OPT.
Possible approach: Try to show that h'(.) and h(.) take similar values.
Problem: Rare scenarios can significantly influence value of h(.), but will almost never be sampled.
Key insight: Rare scenarios do not much affect the optimal first-stage decisions x*
⇒ instead of function value, look at how function varies with x
⇒ show that “slopes” of h'(.) and h(.) are “close” to each other.

[Figure: plots of h(x) and h'(x) against x, with the minimizer x* marked.]
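The sampling step of SAA can be sketched in a few lines. The black box below is a hypothetical distribution with one deliberately rare scenario, and `empirical_distribution` is an illustrative helper name; it computes the estimates $q_A = n_A / N$ used in (SA-P).

```python
import random
from collections import Counter


def empirical_distribution(sample, n_samples, rng):
    """Draw n_samples scenarios from the black box and return q_A = n_A / N."""
    counts = Counter(sample(rng) for _ in range(n_samples))
    return {A: n_A / n_samples for A, n_A in counts.items()}


def black_box(rng):
    """Hypothetical distribution over scenarios with one rare outcome."""
    r = rng.random()
    if r < 1e-4:                      # rare scenario, probability 0.0001
        return frozenset({"e1", "e2", "e3"})
    return frozenset({"e1"}) if r < 0.6 else frozenset({"e2"})


q = empirical_distribution(black_box, 1000, random.Random(1))
```

Only scenarios that were actually drawn appear in `q`, so (SA-P) has at most N scenarios. With N = 1000 the rare scenario above is unlikely to be drawn at all, which is the difficulty the slide points out for any argument based on function values.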

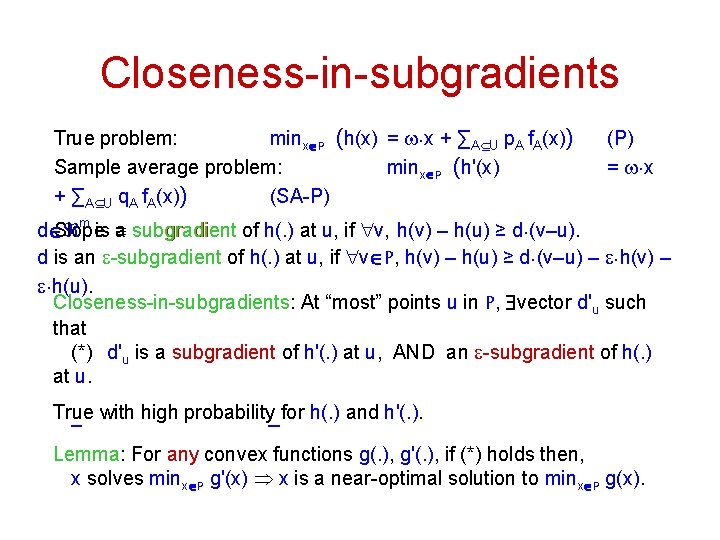

Closeness-in-subgradients

True problem: $\min_{x \in P} \bigl( h(x) = w \cdot x + \sum_{A \subseteq U} p_A f_A(x) \bigr)$ (P)
Sample average problem: $\min_{x \in P} \bigl( h'(x) = w \cdot x + \sum_{A \subseteq U} q_A f_A(x) \bigr)$ (SA-P)

Slope ≡ subgradient.
$d \in \mathbb{R}^m$ is a subgradient of h(.) at u, if $\forall v$, $h(v) - h(u) \ge d \cdot (v - u)$.
d is an $\epsilon$-subgradient of h(.) at u, if $\forall v \in P$, $h(v) - h(u) \ge d \cdot (v - u) - \epsilon\, h(v) - \epsilon\, h(u)$.

Closeness-in-subgradients: At “most” points u in P, $\exists$ vector $d'_u$ such that
(*) $d'_u$ is a subgradient of h'(.) at u, AND an $\epsilon$-subgradient of h(.) at u.
True with high probability for h(.) and h'(.).

Lemma: For any convex functions g(.), g'(.), if (*) holds then, x solves $\min_{x \in P} g'(x)$ ⇒ x is a near-optimal solution to $\min_{x \in P} g(x)$.
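The two definitions can be checked numerically on a toy example. This is only a one-dimensional sketch on a finite grid, with a hypothetical function $h(x) = x^2 + 1$ on $P = [0, 1]$; it illustrates that a slightly wrong slope fails the exact subgradient inequality but still passes the $\epsilon$-relaxed one.

```python
def is_eps_subgradient(h, d, u, points, eps=0.0, tol=1e-12):
    """Check h(v) - h(u) >= d*(v - u) - eps*h(v) - eps*h(u) for all v in points.

    With eps = 0 this is the ordinary subgradient inequality (1-D case).
    `tol` only absorbs floating-point rounding.
    """
    return all(
        h(v) - h(u) >= d * (v - u) - eps * (h(v) + h(u)) - tol
        for v in points
    )


def h(x):
    """Illustrative convex, positive function on P = [0, 1]."""
    return x * x + 1.0


grid = [i / 100 for i in range(101)]   # finite stand-in for P
u = 0.5

exact = is_eps_subgradient(h, 1.0, u, grid)                    # d = h'(u) = 1.0
perturbed_exact = is_eps_subgradient(h, 1.1, u, grid)          # slope off by 0.1
perturbed_eps = is_eps_subgradient(h, 1.1, u, grid, eps=0.1)   # relaxed test
```

Here `exact` and `perturbed_eps` hold while `perturbed_exact` fails, so the perturbed slope 1.1 is an $\epsilon$-subgradient for $\epsilon = 0.1$ without being a subgradient.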

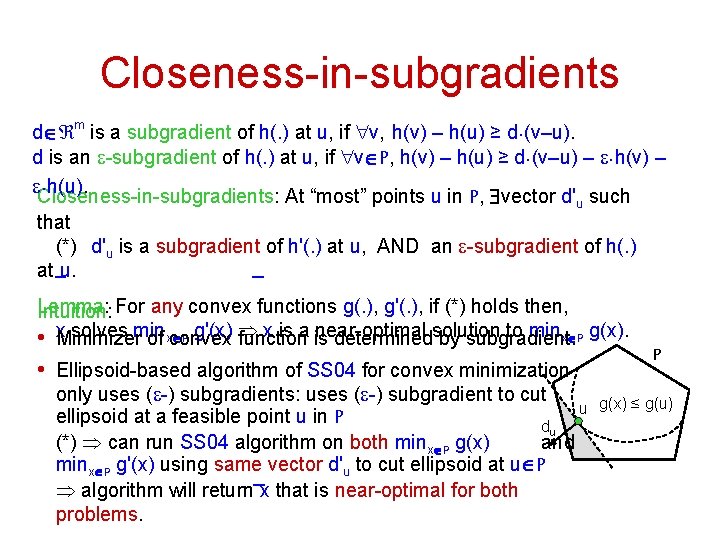

Closeness-in-subgradients

$d \in \mathbb{R}^m$ is a subgradient of h(.) at u, if $\forall v$, $h(v) - h(u) \ge d \cdot (v - u)$.
d is an $\epsilon$-subgradient of h(.) at u, if $\forall v \in P$, $h(v) - h(u) \ge d \cdot (v - u) - \epsilon\, h(v) - \epsilon\, h(u)$.

Closeness-in-subgradients: At “most” points u in P, $\exists$ vector $d'_u$ such that
(*) $d'_u$ is a subgradient of h'(.) at u, AND an $\epsilon$-subgradient of h(.) at u.

Lemma: For any convex functions g(.), g'(.), if (*) holds then, x solves $\min_{x \in P} g'(x)$ ⇒ x is a near-optimal solution to $\min_{x \in P} g(x)$.

Intuition:
• Minimizer of convex function is determined by subgradient.
• Ellipsoid-based algorithm of SS04 for convex minimization only uses ($\epsilon$-)subgradients: uses ($\epsilon$-)subgradient to cut ellipsoid at a feasible point u in P.
(*) ⇒ can run SS04 algorithm on both $\min_{x \in P} g(x)$ and $\min_{x \in P} g'(x)$ using same vector $d'_u$ to cut ellipsoid at $u \in P$
⇒ algorithm will return x that is near-optimal for both problems.

[Figure: polytope P with a point u, the cut direction $d_u$, and the region $g(x) \le g(u)$.]

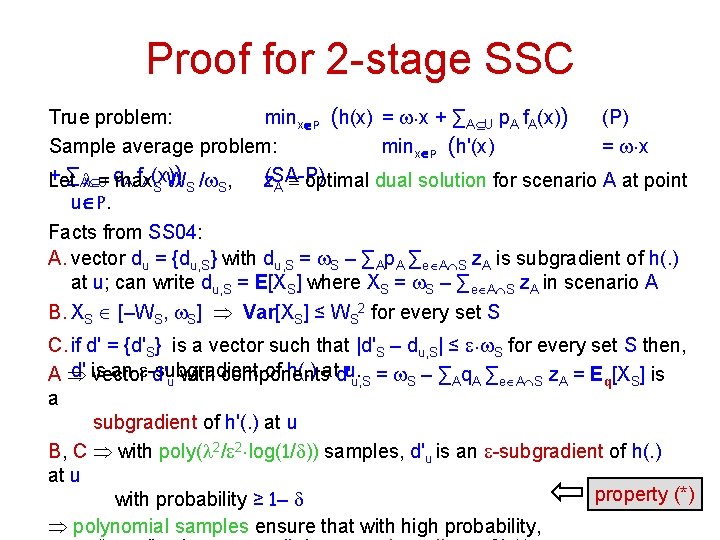

Proof for 2-stage SSC

True problem: $\min_{x \in P} \bigl( h(x) = w \cdot x + \sum_{A \subseteq U} p_A f_A(x) \bigr)$ (P)
Sample average problem: $\min_{x \in P} \bigl( h'(x) = w \cdot x + \sum_{A \subseteq U} q_A f_A(x) \bigr)$ (SA-P)

Let $\lambda = \max_S W_S / w_S$, $z_A \equiv$ optimal dual solution for scenario $A$ at point $u \in P$ (with components $z_{A,e}$).

Facts from SS04:
A. vector $d_u = \{d_{u,S}\}$ with $d_{u,S} = w_S - \sum_A p_A \sum_{e \in A \cap S} z_{A,e}$ is a subgradient of h(.) at u; can write $d_{u,S} = \mathbb{E}[X_S]$ where $X_S = w_S - \sum_{e \in A \cap S} z_{A,e}$ in scenario $A$.
B. $X_S \in [-W_S, w_S]$ ⇒ $\mathrm{Var}[X_S] \le W_S^2$ for every set $S$.
C. if $d' = \{d'_S\}$ is a vector such that $|d'_S - d_{u,S}| \le \epsilon\, w_S$ for every set $S$ then, d' is an $\epsilon$-subgradient of h(.) at u.

A ⇒ vector $d'_u$ with components $d'_{u,S} = w_S - \sum_A q_A \sum_{e \in A \cap S} z_{A,e} = \mathbb{E}_q[X_S]$ is a subgradient of h'(.) at u.
B, C ⇒ with $\mathrm{poly}\bigl(\tfrac{\lambda^2}{\epsilon^2} \cdot \log\tfrac{1}{\delta}\bigr)$ samples, $d'_u$ is an $\epsilon$-subgradient of h(.) at u with probability $\ge 1 - \delta$
⇒ polynomial samples ensure that with high probability, property (*) holds.
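Facts B and C indicate why a sample size polynomial in $\lambda$, $1/\epsilon$ and $\log(1/\delta)$ is plausible. As a hedged illustration for a single set $S$ at a single point $u$ only (the full argument must also cover all sets and all relevant points, which is not reproduced here), Hoeffding's inequality applied to $N$ independent draws $X_S^{(1)}, \ldots, X_S^{(N)}$ of $X_S$ gives:

```latex
% X_S lies in [-W_S, w_S], so its range is at most W_S + w_S <= (1 + \lambda) w_S,
% using W_S <= \lambda w_S from the definition of \lambda.
\Pr\Bigl[\,\Bigl|\frac{1}{N}\sum_{i=1}^{N} X_S^{(i)} - \mathbb{E}[X_S]\Bigr| \ge \epsilon\, w_S \Bigr]
  \;\le\; 2\exp\Bigl(-\frac{2 N \epsilon^2 w_S^2}{(W_S + w_S)^2}\Bigr)
  \;\le\; 2\exp\Bigl(-\frac{2 N \epsilon^2}{(1+\lambda)^2}\Bigr)

% The right-hand side is at most \delta as soon as
N \;\ge\; \frac{(1+\lambda)^2}{2\,\epsilon^2}\,\ln\frac{2}{\delta}
```

The left-hand side is exactly the event $|d'_{u,S} - d_{u,S}| \ge \epsilon\, w_S$ from Fact C, since $d'_{u,S}$ is the sample average of $X_S$ and $d_{u,S}$ is its expectation.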

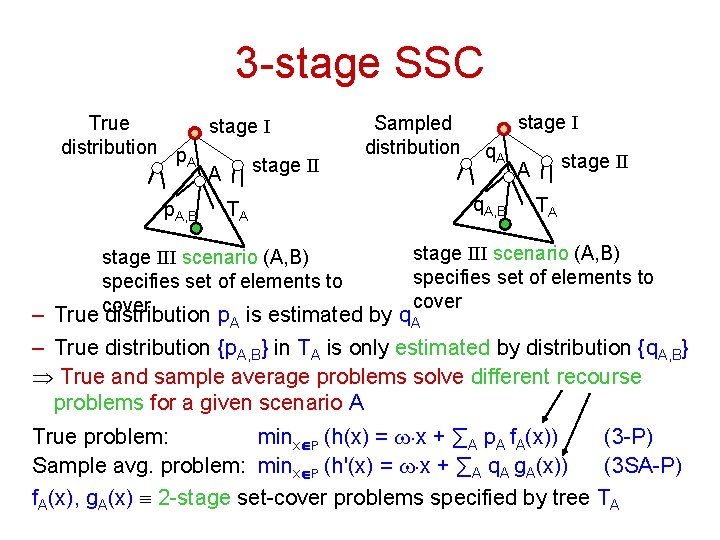

3-stage SSC

[Figure: the true distribution tree (stage I root; stage II node $A$ reached with probability $p_A$; subtree $T_A$ with probabilities $p_{A,B}$) beside the sampled distribution tree (probabilities $q_A$, $q_{A,B}$). A stage III scenario $(A, B)$ specifies the set of elements to cover.]

– True distribution $p_A$ is estimated by $q_A$
– True distribution $\{p_{A,B}\}$ in $T_A$ is only estimated by distribution $\{q_{A,B}\}$
⇒ True and sample average problems solve different recourse problems for a given scenario $A$

True problem: $\min_{x \in P} \bigl( h(x) = w \cdot x + \sum_A p_A f_A(x) \bigr)$ (3-P)
Sample avg. problem: $\min_{x \in P} \bigl( h'(x) = w \cdot x + \sum_A q_A g_A(x) \bigr)$ (3SA-P)
$f_A(x)$, $g_A(x)$ ≡ 2-stage set-cover problems specified by tree $T_A$

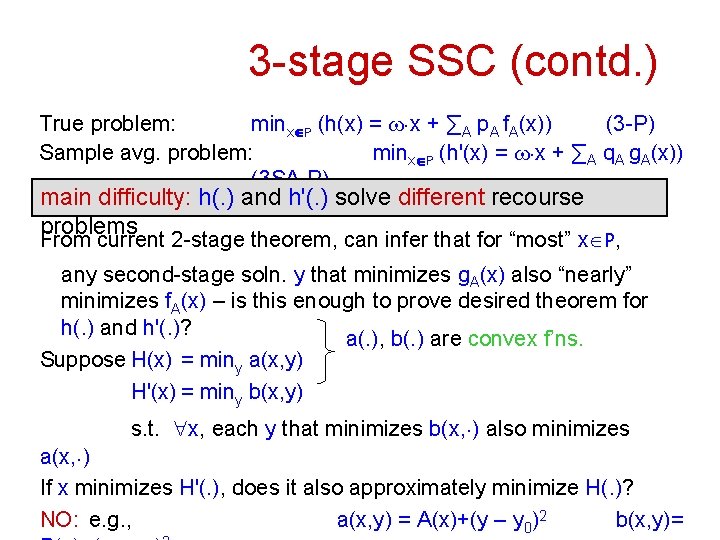

3-stage SSC (contd.)

True problem: $\min_{x \in P} \bigl( h(x) = w \cdot x + \sum_A p_A f_A(x) \bigr)$ (3-P)
Sample avg. problem: $\min_{x \in P} \bigl( h'(x) = w \cdot x + \sum_A q_A g_A(x) \bigr)$ (3SA-P)

Main difficulty: h(.) and h'(.) solve different recourse problems.
From current 2-stage theorem, can infer that for “most” $x \in P$, any second-stage soln. y that minimizes $g_A(x)$ also “nearly” minimizes $f_A(x)$ – is this enough to prove desired theorem for h(.) and h'(.)?

Suppose $H(x) = \min_y a(x, y)$ and $H'(x) = \min_y b(x, y)$, where a(.), b(.) are convex f’ns, s.t. $\forall x$, each y that minimizes $b(x, \cdot)$ also minimizes $a(x, \cdot)$.
If x minimizes H'(.), does it also approximately minimize H(.)?
NO: e.g., $a(x, y) = A(x) + (y - y_0)^2$, $b(x, y) =$

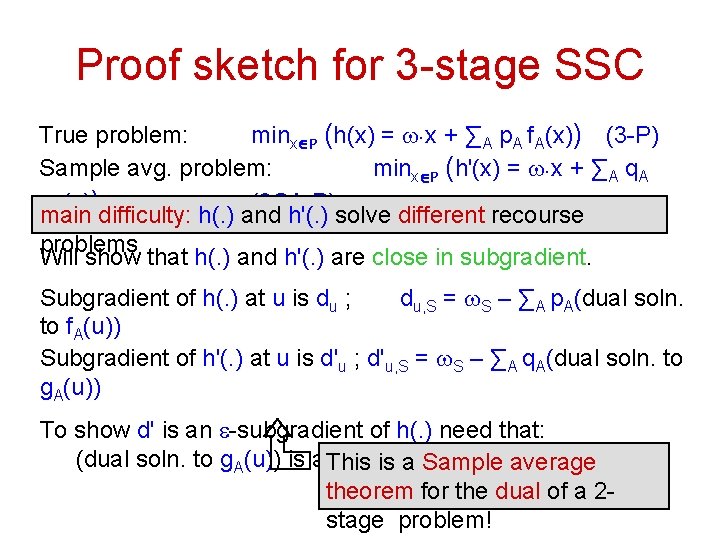

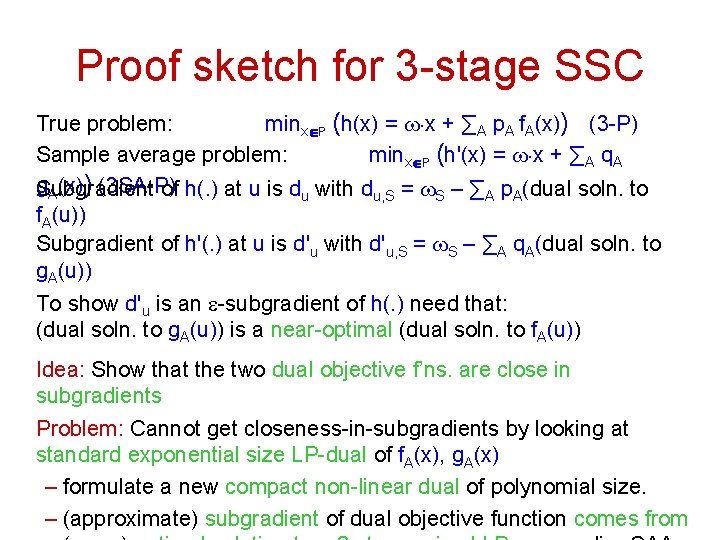

Proof sketch for 3-stage SSC

True problem: $\min_{x \in P} \bigl( h(x) = w \cdot x + \sum_A p_A f_A(x) \bigr)$ (3-P)
Sample avg. problem: $\min_{x \in P} \bigl( h'(x) = w \cdot x + \sum_A q_A g_A(x) \bigr)$ (3SA-P)

Main difficulty: h(.) and h'(.) solve different recourse problems.
Will show that h(.) and h'(.) are close in subgradient.
Subgradient of h(.) at u is $d_u$; $d_{u,S} = w_S - \sum_A p_A$ (dual soln. to $f_A(u)$)
Subgradient of h'(.) at u is $d'_u$; $d'_{u,S} = w_S - \sum_A q_A$ (dual soln. to $g_A(u)$)
To show $d'_u$ is an $\epsilon$-subgradient of h(.) need that: (dual soln. to $g_A(u)$) is a near-optimal (dual soln. to $f_A(u)$).
This is a sample average theorem for the dual of a 2-stage problem!

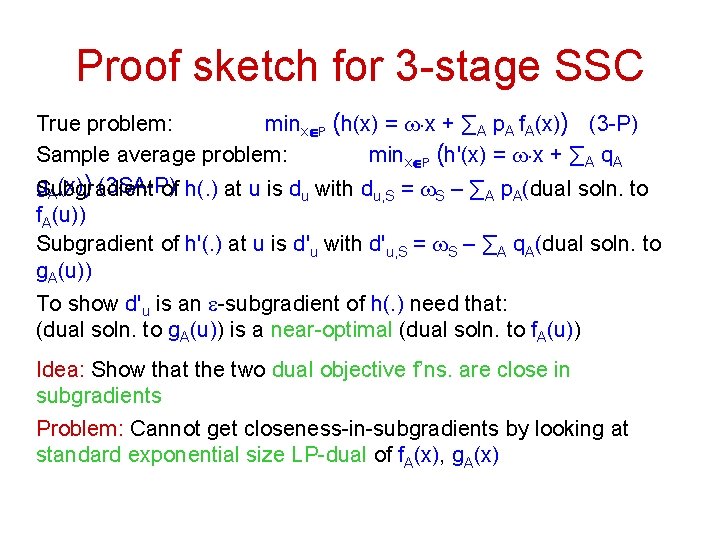

Proof sketch for 3-stage SSC

True problem: $\min_{x \in P} \bigl( h(x) = w \cdot x + \sum_A p_A f_A(x) \bigr)$ (3-P)
Sample average problem: $\min_{x \in P} \bigl( h'(x) = w \cdot x + \sum_A q_A g_A(x) \bigr)$ (3SA-P)

Subgradient of h(.) at u is $d_u$ with $d_{u,S} = w_S - \sum_A p_A$ (dual soln. to $f_A(u)$)
Subgradient of h'(.) at u is $d'_u$ with $d'_{u,S} = w_S - \sum_A q_A$ (dual soln. to $g_A(u)$)
To show $d'_u$ is an $\epsilon$-subgradient of h(.) need that: (dual soln. to $g_A(u)$) is a near-optimal (dual soln. to $f_A(u)$).
Idea: Show that the two dual objective f’ns. are close in subgradients.
Problem: Cannot get closeness-in-subgradients by looking at standard exponential size LP-dual of $f_A(x)$, $g_A(x)$.

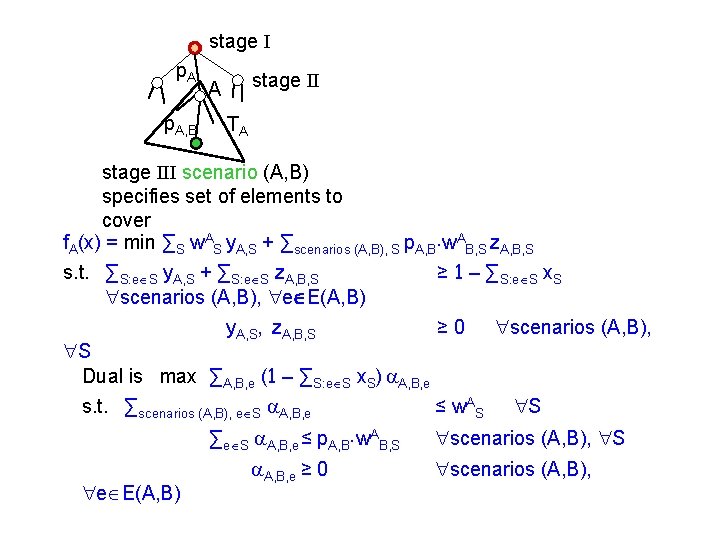

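[Figure: tree $T_A$ below stage II node $A$, with edge probabilities $p_{A,B}$; a stage III scenario $(A, B)$ specifies the set of elements to cover.]

$f_A(x) = \min\ \sum_S w_S^A\, y_{A,S} + \sum_{\text{scenarios } (A,B),\, S} p_{A,B}\, w_S^{A,B}\, z_{A,B,S}$
s.t. $\sum_{S: e \in S} y_{A,S} + \sum_{S: e \in S} z_{A,B,S} \ge 1 - \sum_{S: e \in S} x_S$ $\forall$ scenarios $(A, B)$, $\forall e \in E(A, B)$
$y_{A,S},\ z_{A,B,S} \ge 0$ $\forall$ scenarios $(A, B)$, $\forall S$

Dual is
$\max\ \sum_{A,B,e} \bigl(1 - \sum_{S: e \in S} x_S\bigr)\, \alpha_{A,B,e}$
s.t. $\sum_{\text{scenarios } (A,B),\, e \in S} \alpha_{A,B,e} \le w_S^A$ $\forall S$
$\sum_{e \in S} \alpha_{A,B,e} \le p_{A,B}\, w_S^{A,B}$ $\forall$ scenarios $(A, B)$, $\forall S$
$\alpha_{A,B,e} \ge 0$ $\forall$ scenarios $(A, B)$, $\forall e \in E(A, B)$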

Proof sketch for 3-stage SSC

True problem: $\min_{x \in P} \bigl( h(x) = w \cdot x + \sum_A p_A f_A(x) \bigr)$ (3-P)
Sample average problem: $\min_{x \in P} \bigl( h'(x) = w \cdot x + \sum_A q_A g_A(x) \bigr)$ (3SA-P)

Subgradient of h(.) at u is $d_u$ with $d_{u,S} = w_S - \sum_A p_A$ (dual soln. to $f_A(u)$)
Subgradient of h'(.) at u is $d'_u$ with $d'_{u,S} = w_S - \sum_A q_A$ (dual soln. to $g_A(u)$)
To show $d'_u$ is an $\epsilon$-subgradient of h(.) need that: (dual soln. to $g_A(u)$) is a near-optimal (dual soln. to $f_A(u)$).
Idea: Show that the two dual objective f’ns. are close in subgradients.
Problem: Cannot get closeness-in-subgradients by looking at standard exponential size LP-dual of $f_A(x)$, $g_A(x)$
– formulate a new compact non-linear dual of polynomial size.
– (approximate) subgradient of dual objective function comes from

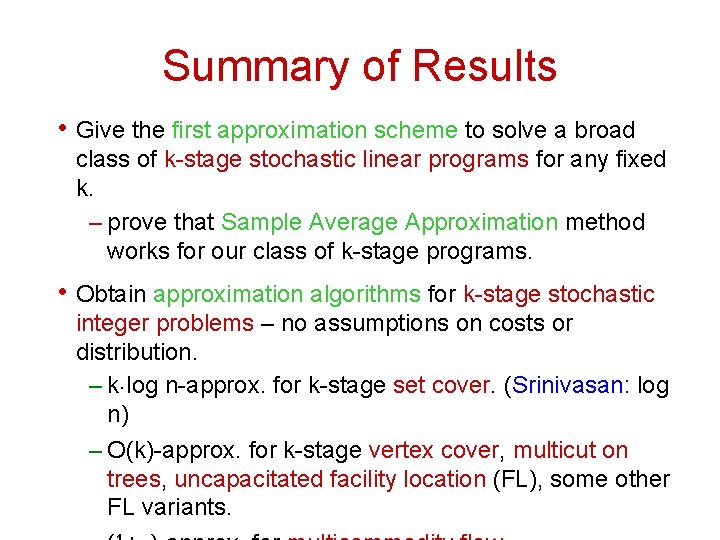

Summary of Results

• Give the first approximation scheme to solve a broad class of k-stage stochastic linear programs for any fixed k.
– prove that Sample Average Approximation method works for our class of k-stage programs.
• Obtain approximation algorithms for k-stage stochastic integer problems
– no assumptions on costs or distribution.
– $k \log n$-approx. for k-stage set cover. (Srinivasan: $\log n$)
– O(k)-approx. for k-stage vertex cover, multicut on trees, uncapacitated facility location (FL), some other FL variants.

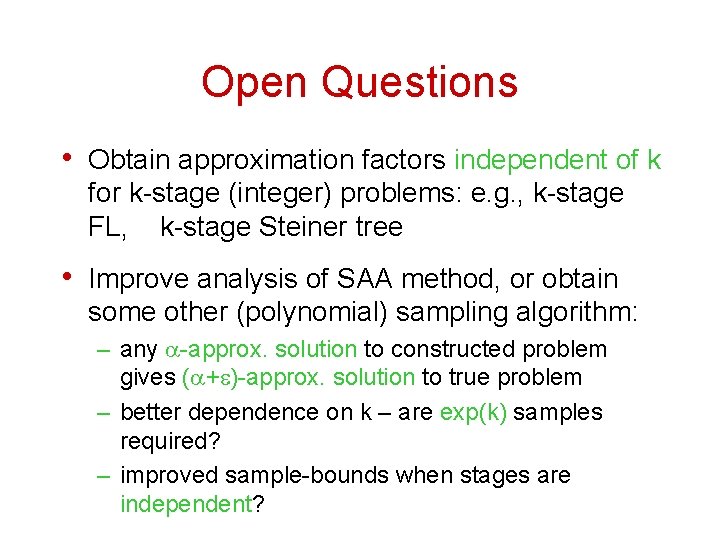

Open Questions

• Obtain approximation factors independent of k for k-stage (integer) problems: e.g., k-stage FL, k-stage Steiner tree
• Improve analysis of SAA method, or obtain some other (polynomial) sampling algorithm:
– any $\alpha$-approx. solution to constructed problem gives $(\alpha + \epsilon)$-approx. solution to true problem
– better dependence on k – are exp(k) samples required?
– improved sample-bounds when stages are independent?

Thank You.
