Sampling Lower Bounds via Information Theory Ziv BarYossef

Sampling Lower Bounds via Information Theory Ziv Bar-Yossef IBM Almaden 1

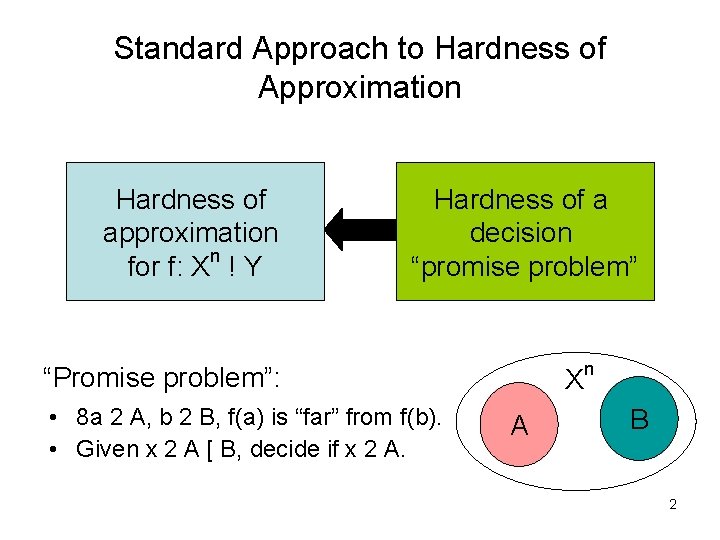

Standard Approach to Hardness of Approximation Hardness of approximation for f: Xn ! Y Hardness of a decision “promise problem” “Promise problem”: • 8 a 2 A, b 2 B, f(a) is “far” from f(b). • Given x 2 A [ B, decide if x 2 A. X A n B 2

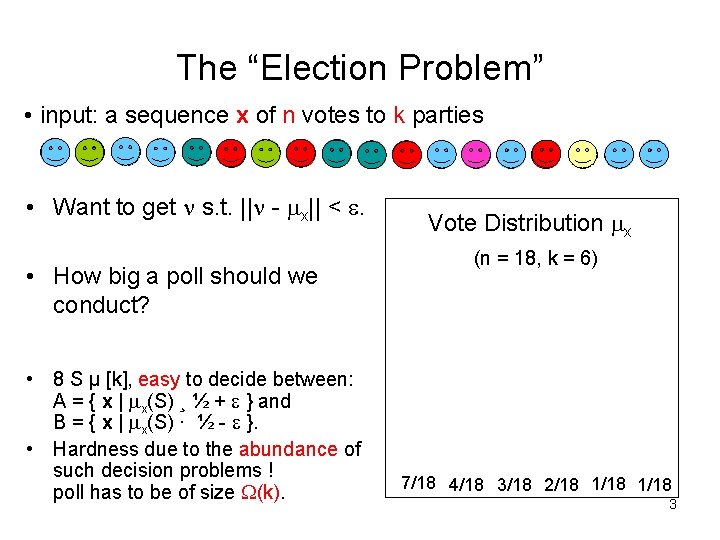

The “Election Problem” • input: a sequence x of n votes to k parties • Want to get s. t. || - x|| < . • How big a poll should we conduct? • 8 S µ [k], easy to decide between: A = { x | x(S) ¸ ½ + } and B = { x | x(S) · ½ - }. • Hardness due to the abundance of such decision problems ! poll has to be of size (k). Vote Distribution x (n = 18, k = 6) 7/18 4/18 3/18 2/18 1/18 3

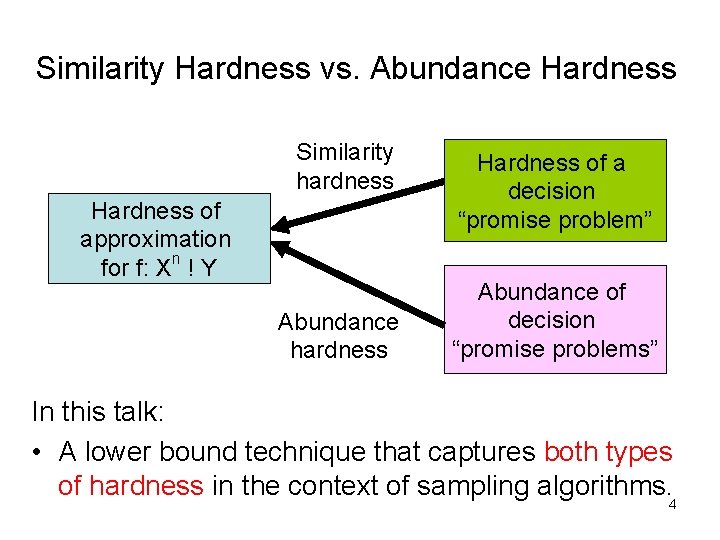

Similarity Hardness vs. Abundance Hardness Similarity hardness Hardness of approximation for f: Xn ! Y Abundance hardness Hardness of a decision “promise problem” Abundance of decision “promise problems” In this talk: • A lower bound technique that captures both types of hardness in the context of sampling algorithms. 4

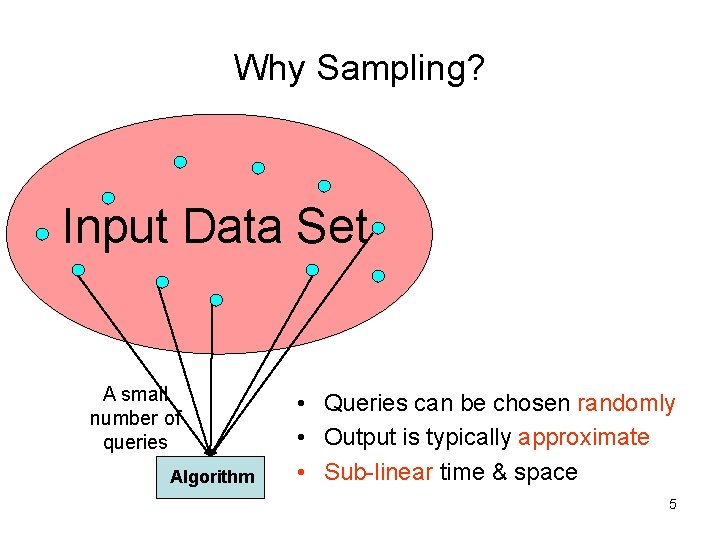

Why Sampling? Input Data Set A small number of queries Algorithm • Queries can be chosen randomly • Output is typically approximate • Sub-linear time & space 5

Some Examples Statistics • Statistical decision and estimation • Statistical learning • … CS • • • PAC and machine learning Property testing Sub-linear time approximation algorithms Extractors and dispersers … 6

Query Complexity Query complexity of a function f: # of queries required to approximate f Examples: • High query complexity: – Parity – # of distinct elements • Low query complexity: – Mean in [0, 1] – Median 7

Our Main Result • A technique for obtaining lower bounds on the query complexity of approximating functions – Template for obtaining specific lower bounds • Arbitrary domain and range • All types of approximation • Usable for wide classes of functions with symmetry properties – Outperforms previous techniques for functions with “abundance hardness” – Matches previous techniques for functions with “similarity hardness” 8

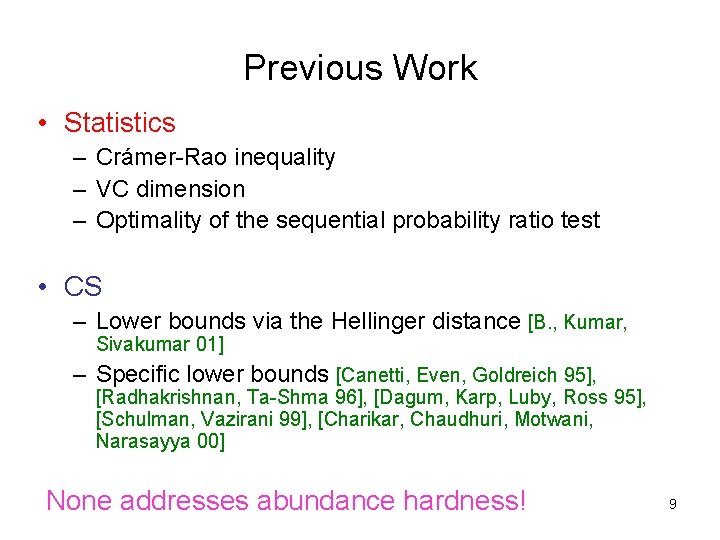

Previous Work • Statistics – Crámer-Rao inequality – VC dimension – Optimality of the sequential probability ratio test • CS – Lower bounds via the Hellinger distance [B. , Kumar, Sivakumar 01] – Specific lower bounds [Canetti, Even, Goldreich 95], [Radhakrishnan, Ta-Shma 96], [Dagum, Karp, Luby, Ross 95], [Schulman, Vazirani 99], [Charikar, Chaudhuri, Motwani, Narasayya 00] None addresses abundance hardness! 9

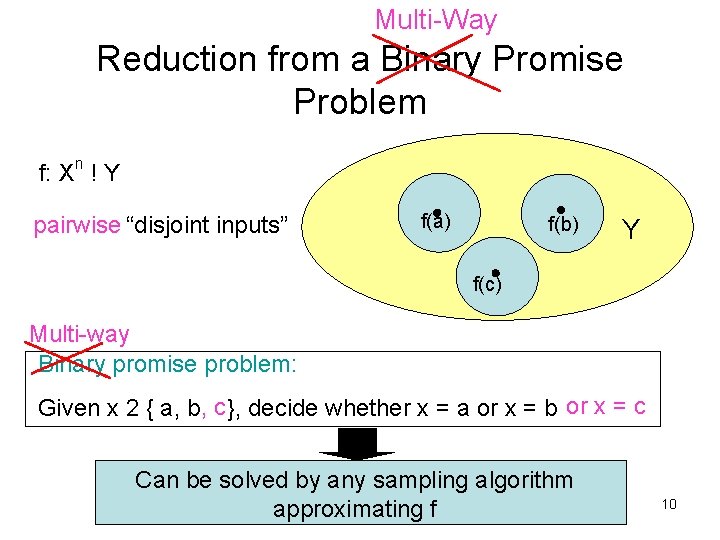

Multi-Way Reduction from a Binary Promise Problem n f: X ! Y pairwise “disjoint inputs” f(a) f(b) Y f(c) Multi-way Binary promise problem: Given x 2 { a, b, c }, decide whether x = a or x = b or x = c Can be solved by any sampling algorithm approximating f 10

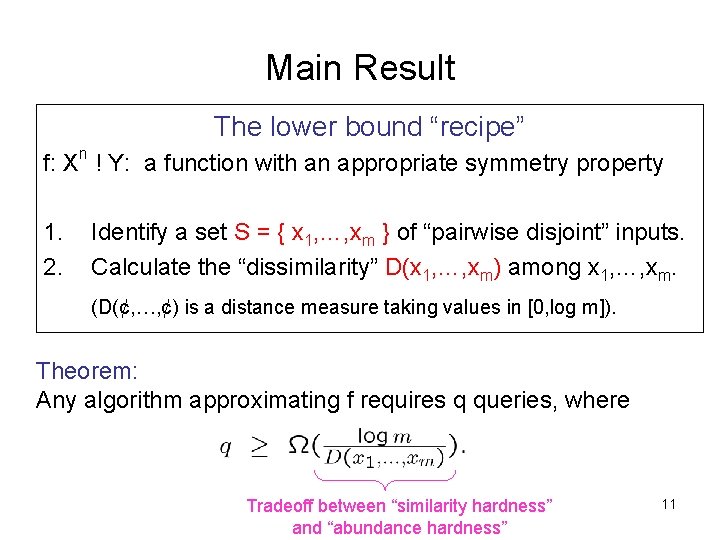

Main Result The lower bound “recipe” f: Xn ! Y: a function with an appropriate symmetry property 1. 2. Identify a set S = { x 1, …, xm } of “pairwise disjoint” inputs. Calculate the “dissimilarity” D(x 1, …, xm) among x 1, …, xm. (D(¢, …, ¢) is a distance measure taking values in [0, log m]). Theorem: Any algorithm approximating f requires q queries, where Tradeoff between “similarity hardness” and “abundance hardness” 11

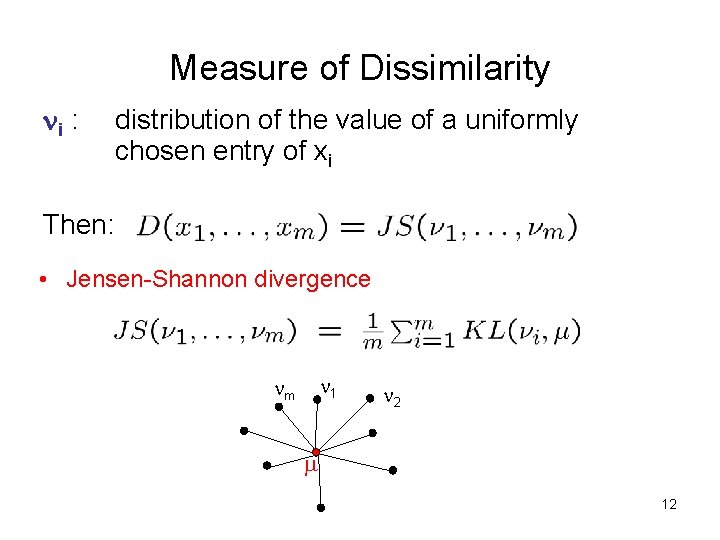

Measure of Dissimilarity i : distribution of the value of a uniformly chosen entry of xi Then: • Jensen-Shannon divergence 1 m 2 12

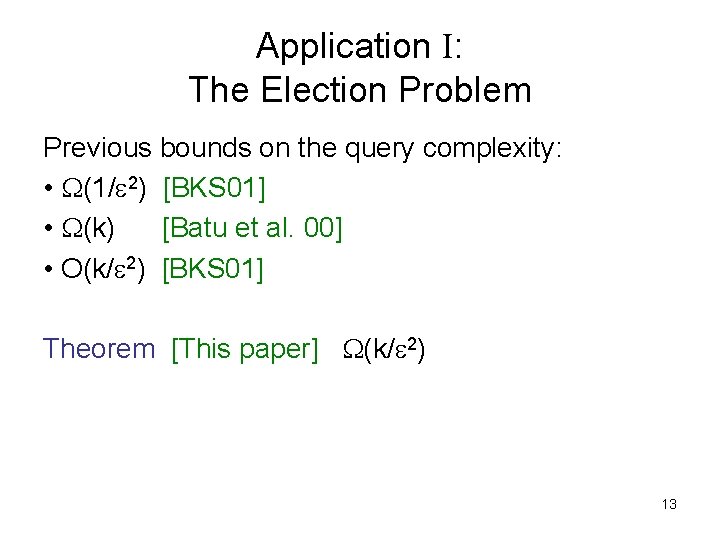

Application I: The Election Problem Previous bounds on the query complexity: • (1/ 2) [BKS 01] • (k) [Batu et al. 00] • O(k/ 2) [BKS 01] Theorem [This paper] (k/ 2) 13

![Combinatorial Designs t-design: B 1 [k] B 3 B 2 Proposition For all k Combinatorial Designs t-design: B 1 [k] B 3 B 2 Proposition For all k](http://slidetodoc.com/presentation_image/77107fbc14245a68376b4a9789b190bd/image-14.jpg)

Combinatorial Designs t-design: B 1 [k] B 3 B 2 Proposition For all k and for all t ¸ 12, there exists a t-design of size m = 2 (k). 14

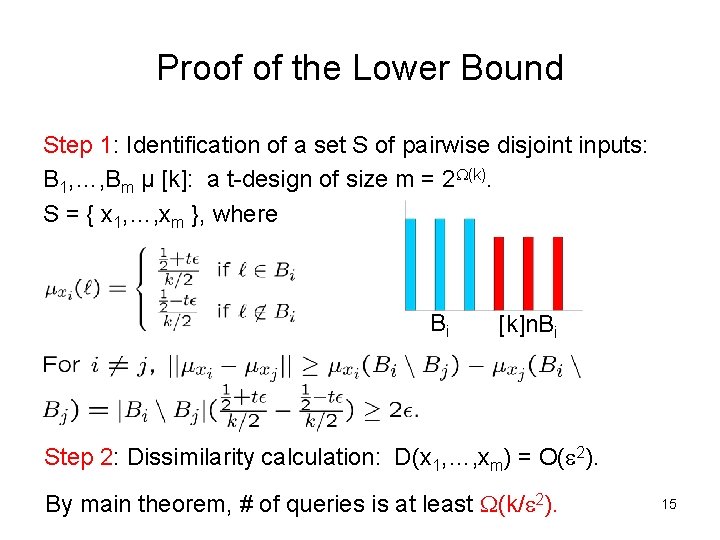

Proof of the Lower Bound Step 1: Identification of a set S of pairwise disjoint inputs: B 1, …, Bm µ [k]: a t-design of size m = 2 (k). S = { x 1, …, xm }, where Bi [k]n. Bi Step 2: Dissimilarity calculation: D(x 1, …, xm) = O( 2). By main theorem, # of queries is at least (k/ 2). 15

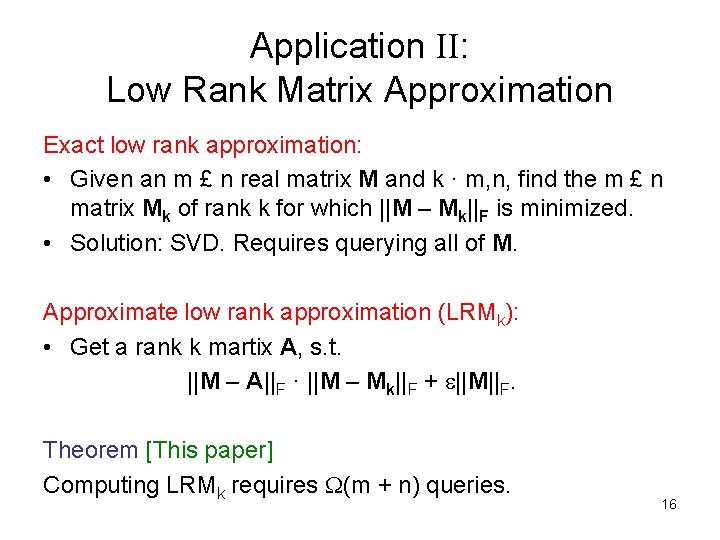

Application II: Low Rank Matrix Approximation Exact low rank approximation: • Given an m £ n real matrix M and k · m, n, find the m £ n matrix Mk of rank k for which ||M – Mk||F is minimized. • Solution: SVD. Requires querying all of M. Approximate low rank approximation (LRMk): • Get a rank k martix A, s. t. ||M – A||F · ||M – Mk||F + ||M||F. Theorem [This paper] Computing LRMk requires (m + n) queries. 16

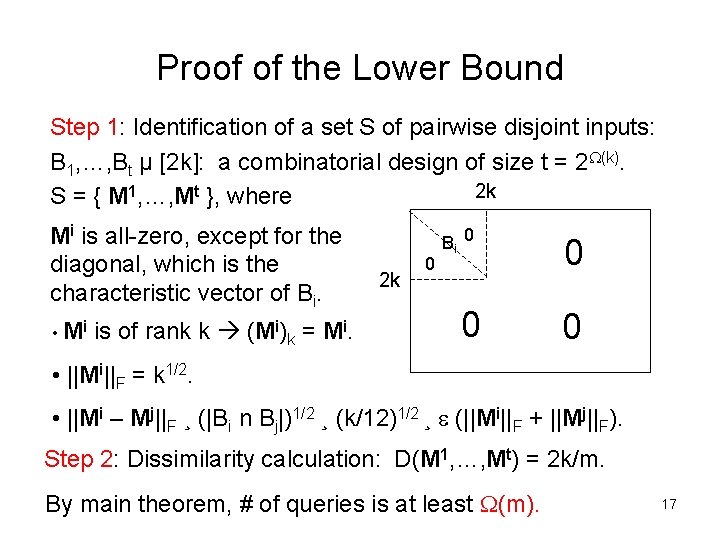

Proof of the Lower Bound Step 1: Identification of a set S of pairwise disjoint inputs: B 1, …, Bt µ [2 k]: a combinatorial design of size t = 2 (k). 2 k S = { M 1, …, Mt }, where Mi is all-zero, except for the diagonal, which is the characteristic vector of Bi. • Mi is of rank k (Mi)k = Mi. 2 k 0 Bi 0 0 • ||Mi||F = k 1/2. • ||Mi – Mj||F ¸ (|Bi n Bj|)1/2 ¸ (k/12)1/2 ¸ (||Mi||F + ||Mj||F). Step 2: Dissimilarity calculation: D(M 1, …, Mt) = 2 k/m. By main theorem, # of queries is at least (m). 17

![Low Rank Matrix Approximation (cont. ) Theorem [Frieze, Kannan, Vempala 98] By querying an Low Rank Matrix Approximation (cont. ) Theorem [Frieze, Kannan, Vempala 98] By querying an](http://slidetodoc.com/presentation_image/77107fbc14245a68376b4a9789b190bd/image-18.jpg)

Low Rank Matrix Approximation (cont. ) Theorem [Frieze, Kannan, Vempala 98] By querying an s £ s submatrix of M chosen using any distributions which “approximate” the row and column weight distributions of M, one can solve LRMk with s = O(k 4/ 3). Theorem [This paper] Solving LRMk by querying an s £ s submatrix of M chosen even according to the exact row and column weight distributions of M requires s = (k/ 2). 18

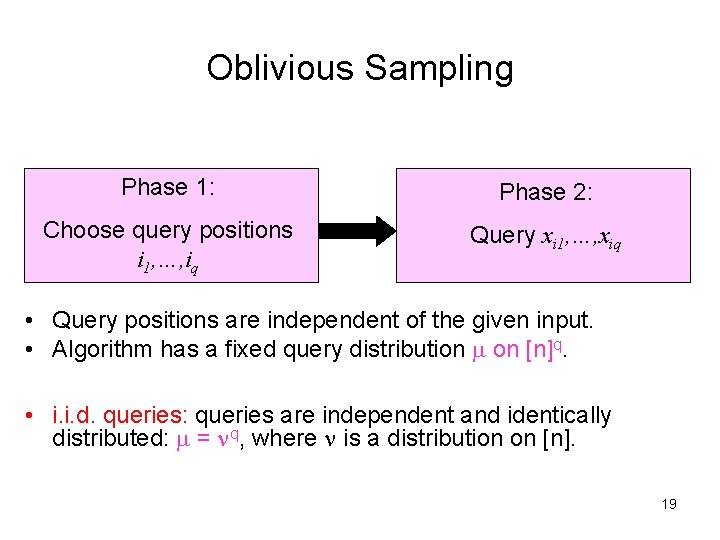

Oblivious Sampling Phase 1: Phase 2: Choose query positions i 1, …, iq Query xi 1, …, xiq • Query positions are independent of the given input. • Algorithm has a fixed query distribution on [n]q. • i. i. d. queries: queries are independent and identically distributed: = q, where is a distribution on [n]. 19

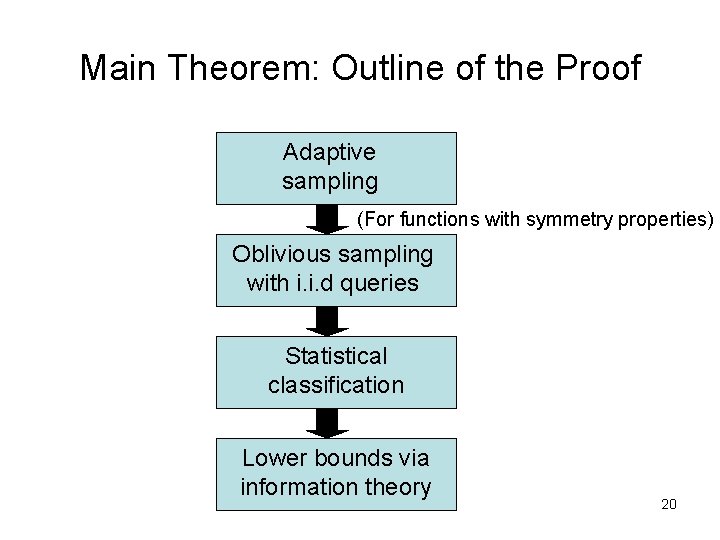

Main Theorem: Outline of the Proof Adaptive sampling (For functions with symmetry properties) Oblivious sampling with i. i. d queries Statistical classification Lower bounds via information theory 20

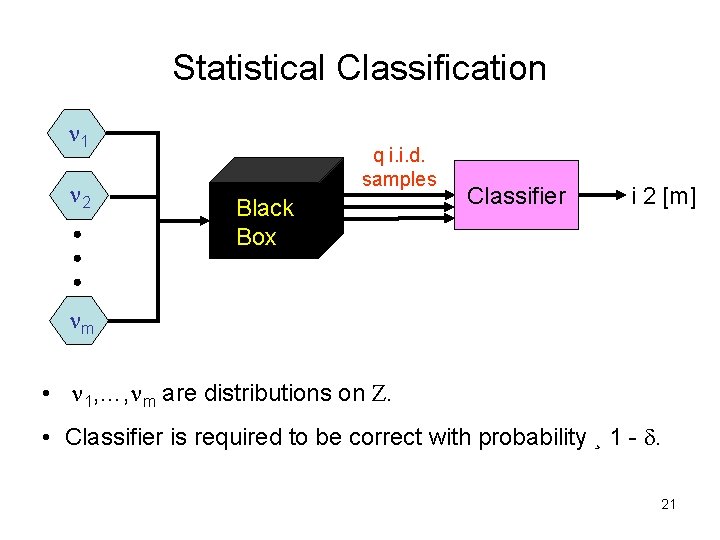

Statistical Classification 1 2 q i. i. d. samples Black Box Classifier i 2 [m] m • 1, …, m are distributions on Z. • Classifier is required to be correct with probability ¸ 1 - . 21

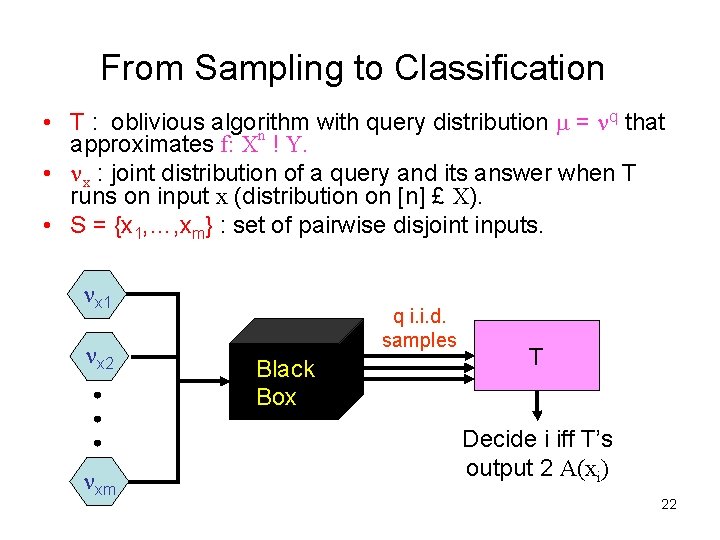

From Sampling to Classification q that • T : oblivious algorithm with query distribution = approximates f: Xn ! Y. • x : joint distribution of a query and its answer when T runs on input x (distribution on [n] £ X). • S = {x 1, …, xm} : set of pairwise disjoint inputs. x 1 x 2 xm q i. i. d. samples Black Box T Decide i iff T’s output 2 A(xi) 22

![Jensen-Shannon Divergence [Lin 91] • KL divergence between distributions , on Z: • Jensen-Shannon Jensen-Shannon Divergence [Lin 91] • KL divergence between distributions , on Z: • Jensen-Shannon](http://slidetodoc.com/presentation_image/77107fbc14245a68376b4a9789b190bd/image-23.jpg)

Jensen-Shannon Divergence [Lin 91] • KL divergence between distributions , on Z: • Jensen-Shannon divergence among distributions 1, …, m on Z: ( = (1/m) i i) 7 6 1 8 5 2 3 4 23

![Main Result Theorem [Classification lower bound] Any -error classifier for 1, …, m requires Main Result Theorem [Classification lower bound] Any -error classifier for 1, …, m requires](http://slidetodoc.com/presentation_image/77107fbc14245a68376b4a9789b190bd/image-24.jpg)

Main Result Theorem [Classification lower bound] Any -error classifier for 1, …, m requires q queries, where Corollary [Query complexity lower bound] For any oblivious algorithm with query distribution = q that ( , )-approximates f, and for any set S = {x 1, …, xm} of “pairwise disjoint” inputs, the number of queries q is at least 24

![Outline of the Proof Lemma 1 [Classification error lower bound] Proof: by Fano’s inequality. Outline of the Proof Lemma 1 [Classification error lower bound] Proof: by Fano’s inequality.](http://slidetodoc.com/presentation_image/77107fbc14245a68376b4a9789b190bd/image-25.jpg)

Outline of the Proof Lemma 1 [Classification error lower bound] Proof: by Fano’s inequality. Lemma 2 [Decomposition of Jensen-Shannon] Proof: By subadditivity of entropy and conditional independence. 25

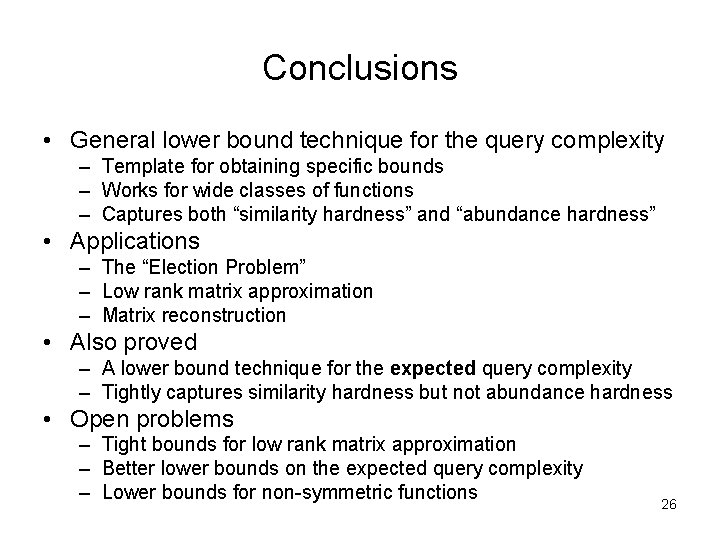

Conclusions • General lower bound technique for the query complexity – Template for obtaining specific bounds – Works for wide classes of functions – Captures both “similarity hardness” and “abundance hardness” • Applications – The “Election Problem” – Low rank matrix approximation – Matrix reconstruction • Also proved – A lower bound technique for the expected query complexity – Tightly captures similarity hardness but not abundance hardness • Open problems – Tight bounds for low rank matrix approximation – Better lower bounds on the expected query complexity – Lower bounds for non-symmetric functions 26

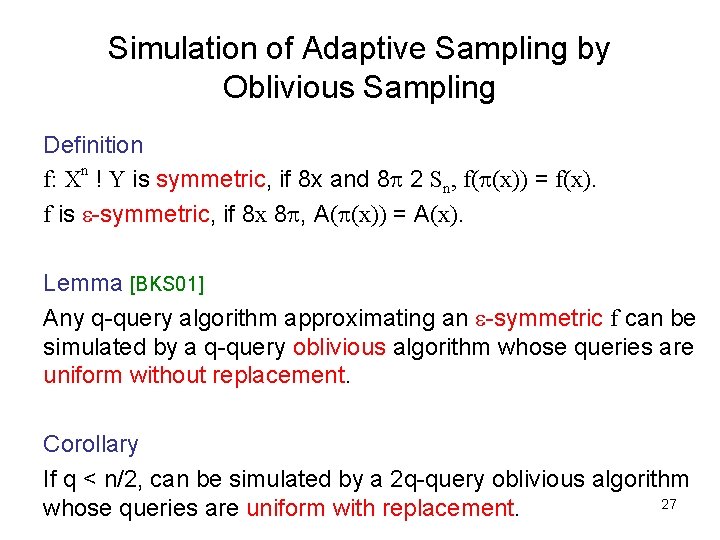

Simulation of Adaptive Sampling by Oblivious Sampling Definition f: Xn ! Y is symmetric, if 8 x and 8 2 Sn, f( (x)) = f(x). f is -symmetric, if 8 x 8 , A( (x)) = A(x). Lemma [BKS 01] Any q-query algorithm approximating an -symmetric f can be simulated by a q-query oblivious algorithm whose queries are uniform without replacement. Corollary If q < n/2, can be simulated by a 2 q-query oblivious algorithm 27 whose queries are uniform with replacement.

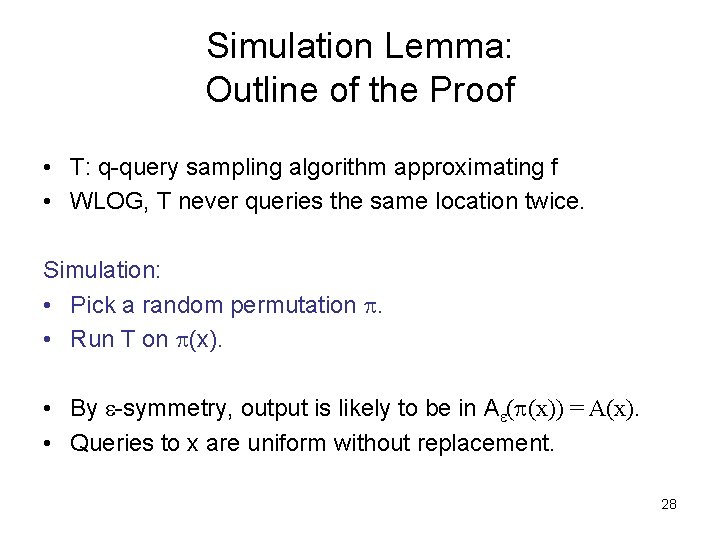

Simulation Lemma: Outline of the Proof • T: q-query sampling algorithm approximating f • WLOG, T never queries the same location twice. Simulation: • Pick a random permutation . • Run T on (x). • By -symmetry, output is likely to be in A ( (x)) = A(x). • Queries to x are uniform without replacement. 28

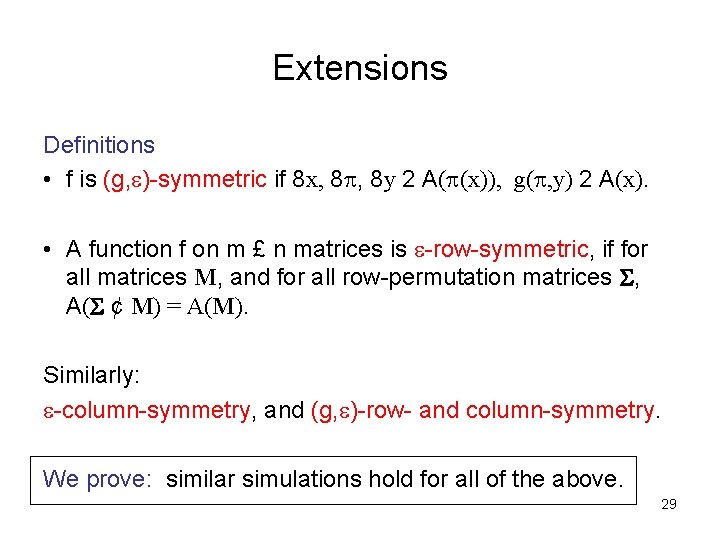

Extensions Definitions • f is (g, )-symmetric if 8 x, 8 , 8 y 2 A( (x)), g( , y) 2 A(x). • A function f on m £ n matrices is -row-symmetric, if for all matrices M, and for all row-permutation matrices , A( ¢ M) = A(M). Similarly: -column-symmetry, and (g, )-row- and column-symmetry. We prove: similar simulations hold for all of the above. 29

- Slides: 29