Realtime Ray Tracing on GPU with BVHbased Packet

Real-time Ray Tracing on GPU with BVH-based Packet Traversal Stefan Popov, Johannes Günther, Hans. Peter Seidel, Philipp Slusallek Stefan Popov High Performance GPU Ray Tracing

Background § GPUs attractive for ray tracing § High computational power § Shading oriented architecture § GPU ray tracers § § § Carr – the ray engine Purcell – Full ray tracing on the GPU, based on grids Ernst – KD trees with parallel stack Carr, Thrane & Simonsen – BVH Foley, Horn, Popov – KD trees - stackless traversal Stefan Popov High Performance GPU Ray Tracing

Motivation § So far § Interactive RT on GPU, but § Limited model size § No dynamic scene support § The G 80 – new approach to the GPU § High performance general purpose processor with graphics extensions § PRAM architecture § BVH allow for § Dynamic/deformable scenes § Small memory footprint § Goal: Recursive ordered traversal of BVH on the G 80 Stefan Popov High Performance GPU Ray Tracing

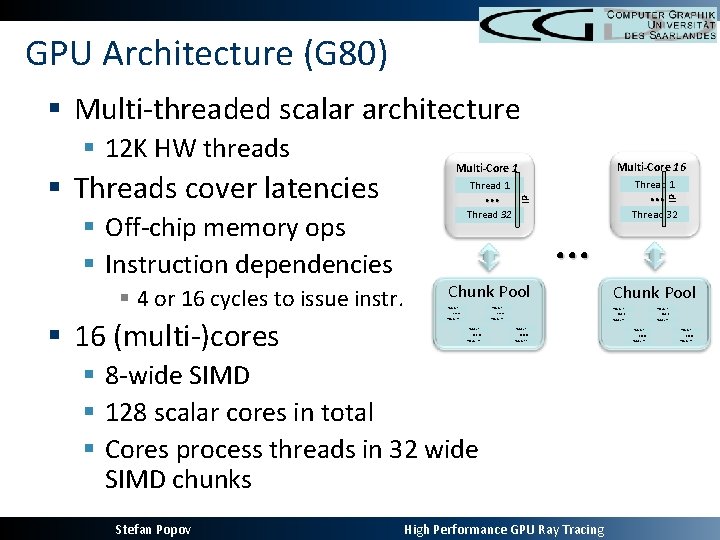

GPU Architecture (G 80) § Multi-threaded scalar architecture § Off-chip memory ops § Instruction dependencies § 4 or 16 cycles to issue instr. § 16 (multi-)cores Thread 1 … … IP § Threads cover latencies Multi-Core 16 Multi-Core 1 IP § 12 K HW threads Thread 32 … Chunk Pool Thread 1 … Thread 1 Thread 32 … … Thread 1 Thread` 32 Thread 32 … … § 8 -wide SIMD § 128 scalar cores in total § Cores process threads in 32 wide SIMD chunks Stefan Popov … High Performance GPU Ray Tracing … …

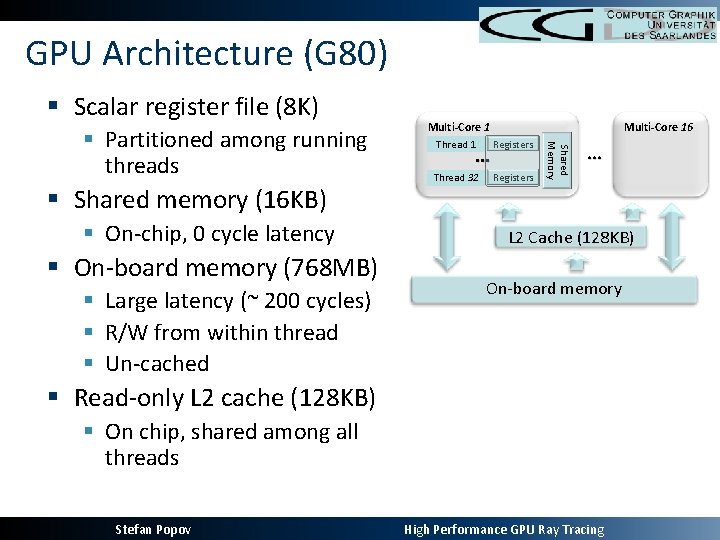

GPU Architecture (G 80) § Scalar register file (8 K) § Shared memory (16 KB) § On-chip, 0 cycle latency § On-board memory (768 MB) § Large latency (~ 200 cycles) § R/W from within thread § Un-cached Thread 1 Registers Thread 32 Registers … Shared Memory § Partitioned among running threads Multi-Core 16 Multi-Core 1 … L 2 Cache (128 KB) On-board memory § Read-only L 2 cache (128 KB) § On chip, shared among all threads Stefan Popov High Performance GPU Ray Tracing

Programming the G 80 § CUDA § C based language with parallel extensions § GPU utilization at 100% only if § Enough threads are present (>> 12 K) § Every thread uses less than 10 registers and 5 words (32 bit) of shared memory § Enough computations per transferred word of data § Bandwidth << computational power § Adequate memory access pattern to allow read combining Stefan Popov High Performance GPU Ray Tracing

Performance Bottlenecks § Efficient per-thread stack implementation § Shared memory too small – will limit parallelism § On-board memory – uncached § Need enough computations between stack ops § Efficient memory access pattern § Use texture caches § However, only few words of cache / thread § Read successive memory locations in successive threads of a chunk § Single roundtrip to memory (read combining) § Cover latency with enough computations Stefan Popov High Performance GPU Ray Tracing

Ray Tracing on the G 80 § Map each ray to one thread § Enough threads to keep the GPU busy § Recursive ray tracing § Use per-thread stack stored on on-board memory § Efficient, since enough computations are present § But how to do the traversal ? § Skip pointers (Thrane) – no ordered traversal § Geometric images (Carr) – single mesh only § Shared stack traversal Stefan Popov High Performance GPU Ray Tracing

SIMD Packet Traversal of BVH § Traverse a node with the whole packet § At an internal node: § Intersect all rays with both children and determine traversal order § Push far child (if any) on a stack and descend to the near one with the packet § At a leaf: § Intersect all rays with contained geometry § Pop next node to visit from the stack Stefan Popov High Performance GPU Ray Tracing

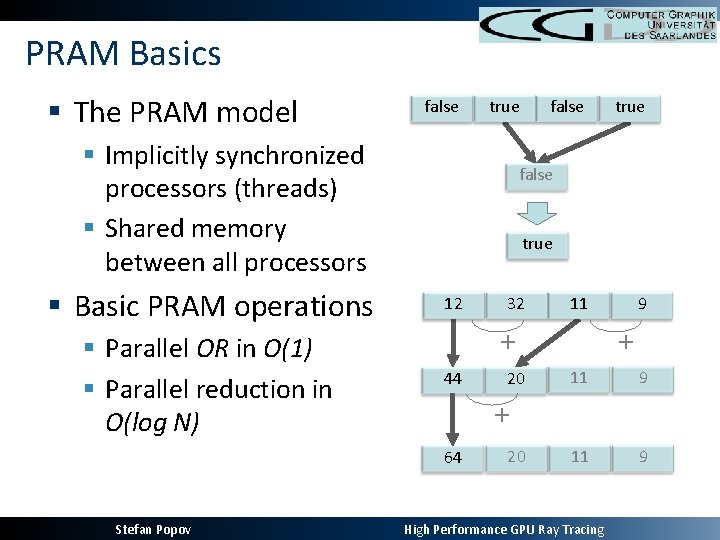

PRAM Basics § The PRAM model false true § Implicitly synchronized processors (threads) § Shared memory between all processors § Basic PRAM operations § Parallel OR in O(1) § Parallel reduction in O(log N) true false true 12 32 11 + 44 20 9 + 11 9 + 64 Stefan Popov false 20 High Performance GPU Ray Tracing

PRAM Packet Traversal of BVH § The G 80 – PRAM machine on chunk level § Map packet chunk, ray thread § Threads behave as in the single ray traversal § At leaf: Intersect with geometry. Pop next node from stack § At node: Decide which children to visit and in what order. Push far child § Difference: § How rays choose which node to visit first § Might not be the one they want to Stefan Popov High Performance GPU Ray Tracing

PRAM Packet Traversal of BVH § Choose child traversal order § PRAM OR to determine if all rays agree on visiting the same node first § The result is stored in shared memory § In case of divergence: choose child with more ray candidates § Use PRAM SUM on +/- 1 for each thread, -1 left node § Look at result’s sign § Guarantees synchronous traversal of BVH Stefan Popov High Performance GPU Ray Tracing

PRAM Packet Traversal of BVH § Stack: § Near & far child – the same for all threads => store once § Keep stack in shared memory. Only few bits per thread! § Only Thread 0 does all stack ops. § Reading data: § All threads work with the same node / triangle § Sequential threads bring in sequential words § Single load operation. Single round trip to memory § Implementable in CUDA Stefan Popov High Performance GPU Ray Tracing

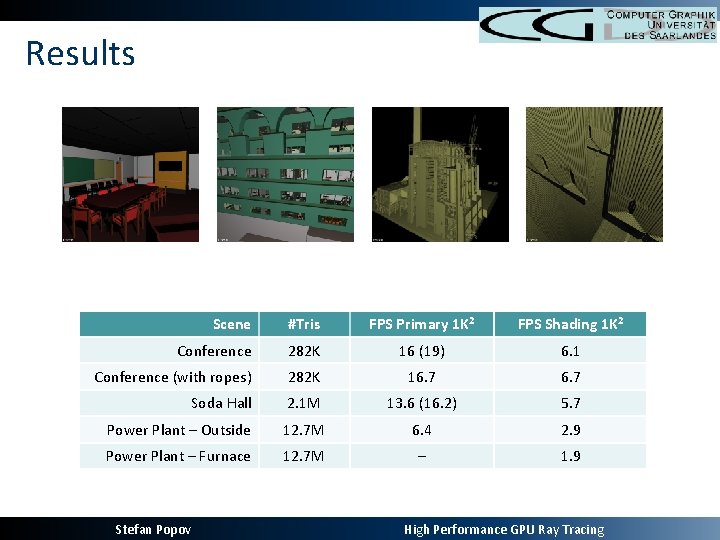

Results Scene #Tris FPS Primary 1 K 2 FPS Shading 1 K 2 Conference 282 K 16 (19) 6. 1 Conference (with ropes) 282 K 16. 7 Soda Hall 2. 1 M 13. 6 (16. 2) 5. 7 Power Plant – Outside 12. 7 M 6. 4 2. 9 Power Plant – Furnace 12. 7 M – 1. 9 Stefan Popov High Performance GPU Ray Tracing

Analysis § Coherent branch decisions / memory access § Small footprint of the data structure § Can trace up to 12 million triangle models § Program becomes compute bound § Determined by over/under-clocking the core/memory § No frustums required § Good for secondary rays, bad for primary § Can use rasterization for primary rays § Implicit SIMD – easy shader programming § Running on a GPU – shading “for free” Stefan Popov High Performance GPU Ray Tracing

Dynamic Scenes § Update parts / whole BVH and geometry on GPU § Use GPU for RT and CPU for BVH construction / refitting § Construct BVH using binning § Similar to Wald RT 07 / Popov RT 06 § Bin all 3 dimensions using SIMD § Results in > 10% better trees § Measured as SAH quality, not FPS § Speed loss is almost negligible Stefan Popov High Performance GPU Ray Tracing

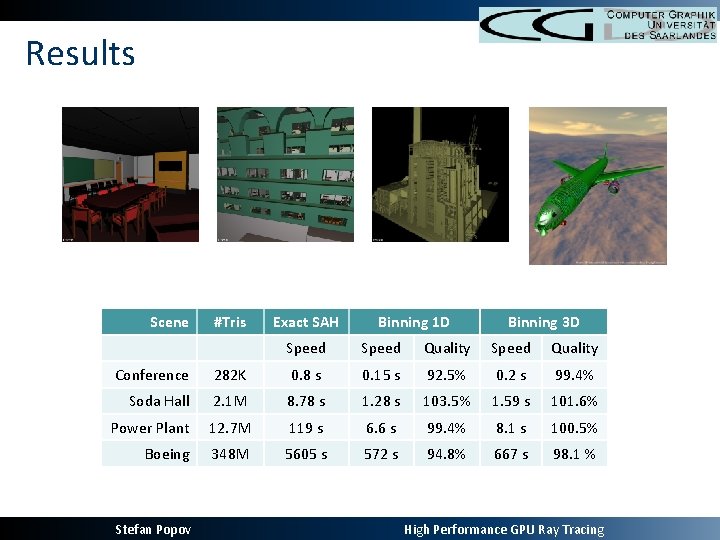

Results Scene #Tris Exact SAH Binning 1 D Binning 3 D Speed Quality Conference 282 K 0. 8 s 0. 15 s 92. 5% 0. 2 s 99. 4% Soda Hall 2. 1 M 8. 78 s 1. 28 s 103. 5% 1. 59 s 101. 6% Power Plant 12. 7 M 119 s 6. 6 s 99. 4% 8. 1 s 100. 5% Boeing 348 M 5605 s 572 s 94. 8% 667 s 98. 1 % Stefan Popov High Performance GPU Ray Tracing

Conclusions § New recursive PRAM BVH traversal algorithm § Very well suited for the new generation of GPUs § No additional pre-computed data required § First GPU ray tracer to handle large models § Previous implementations were limited to < 300 K § Can handle dynamic scenes § By using the CPU to update the geometry / BVH Stefan Popov High Performance GPU Ray Tracing

Future Work § More features § Shaders, adaptive anti-aliasing, … § Global illumination § Code optimizations § Current implementation uses too many registers Stefan Popov High Performance GPU Ray Tracing

Thank you! Stefan Popov High Performance GPU Ray Tracing

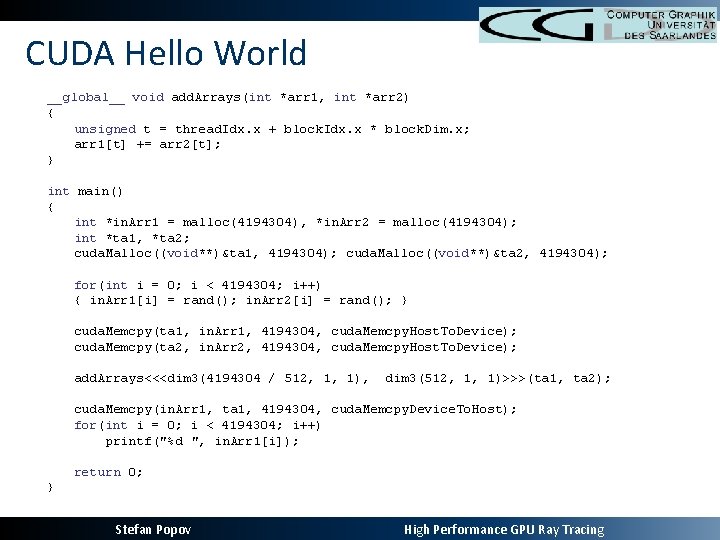

CUDA Hello World __global__ void add. Arrays(int *arr 1, int *arr 2) { unsigned t = thread. Idx. x + block. Idx. x * block. Dim. x; arr 1[t] += arr 2[t]; } int main() { int *in. Arr 1 = malloc(4194304), *in. Arr 2 = malloc(4194304); int *ta 1, *ta 2; cuda. Malloc((void**)&ta 1, 4194304); cuda. Malloc((void**)&ta 2, 4194304); for(int i = 0; i < 4194304; i++) { in. Arr 1[i] = rand(); in. Arr 2[i] = rand(); } cuda. Memcpy(ta 1, in. Arr 1, 4194304, cuda. Memcpy. Host. To. Device); cuda. Memcpy(ta 2, in. Arr 2, 4194304, cuda. Memcpy. Host. To. Device); add. Arrays<<<dim 3(4194304 / 512, 1, 1), dim 3(512, 1, 1)>>>(ta 1, ta 2); cuda. Memcpy(in. Arr 1, ta 1, 4194304, cuda. Memcpy. Device. To. Host); for(int i = 0; i < 4194304; i++) printf("%d ", in. Arr 1[i]); return 0; } Stefan Popov High Performance GPU Ray Tracing

- Slides: 21