PUMA: A Programmable Ultraefficient Memristor-based Accelerator for Machine Learning Inference

Aayush Ankit (1, 3), Izzat El Hajj (2, 4), Sai Rahul Chalamalasetti (3), Geoffrey Ndu (3), Martin Foltin (3), R. Stanley Williams (3), Paolo Faraboschi (3), Wen-mei Hwu (4), John Paul Strachan (3), Kaushik Roy (1), Dejan S Milojicic (3)

Affiliations: (1) Purdue University; (2) American University of Beirut; (3) Hewlett Packard Enterprise; (4) University of Illinois at Urbana-Champaign

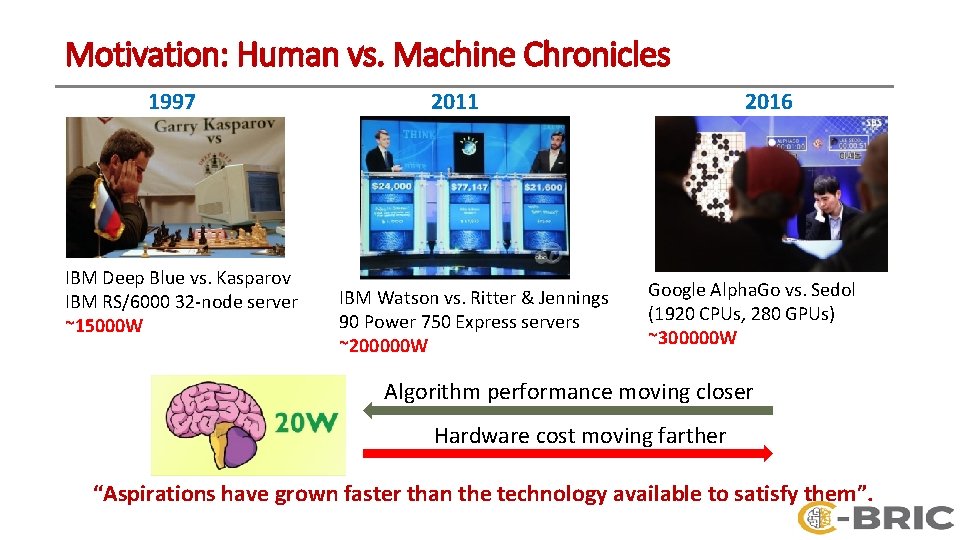

Motivation: Human vs. Machine Chronicles

- 1997: IBM Deep Blue vs. Kasparov. IBM RS/6000 32-node server, ~15000 W.
- 2011: IBM Watson vs. Rutter & Jennings. 90 Power 750 Express servers, ~200000 W.
- 2016: Google AlphaGo vs. Sedol. 1920 CPUs and 280 GPUs, ~300000 W.
- Algorithm performance is moving closer to human level; hardware cost is moving farther away.
- "Aspirations have grown faster than the technology available to satisfy them."

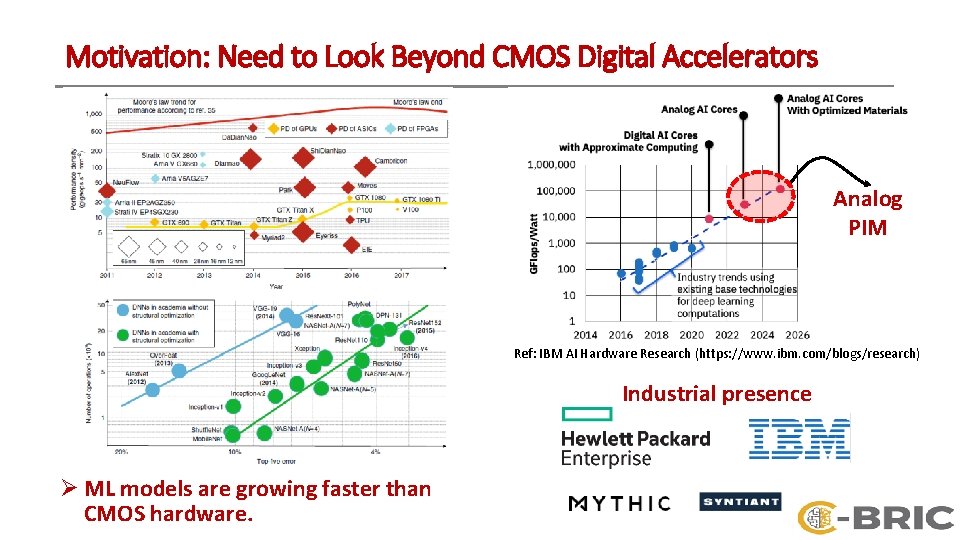

Motivation: Need to Look Beyond CMOS

- ML models are growing faster than CMOS hardware.
- Figure: digital accelerators and analog PIM, with industrial presence. Ref: IBM AI Hardware Research (https://www.ibm.com/blogs/research)

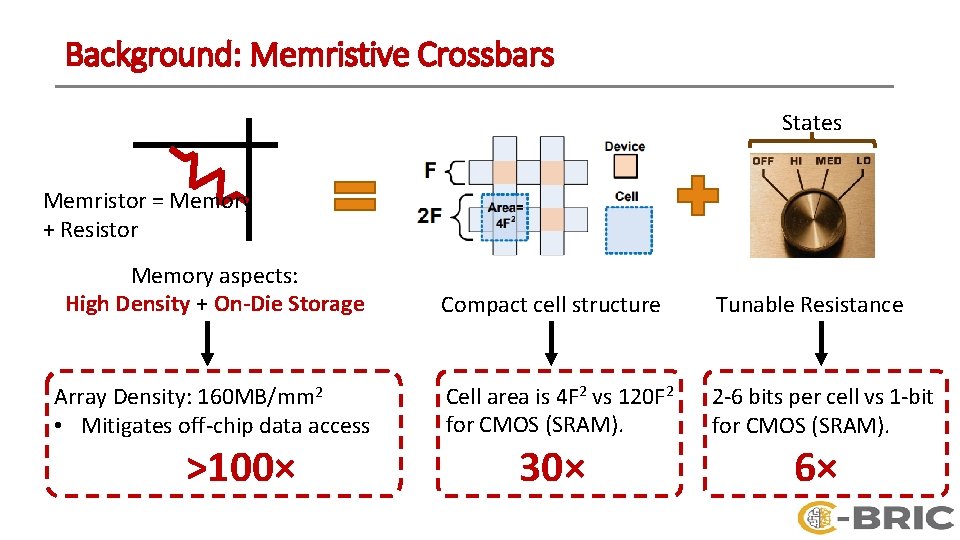

Background: Memristive Crossbars

Memristor = Memory + Resistor

Memory aspects: high density + on-die storage

- Array density: 160 MB/mm² (>100×); mitigates off-chip data access.
- Compact cell structure: cell area is 4F² vs. 120F² for CMOS (SRAM), a 30× difference.
- Tunable resistance: 2-6 bits per cell vs. 1 bit for CMOS (SRAM), up to 6×.

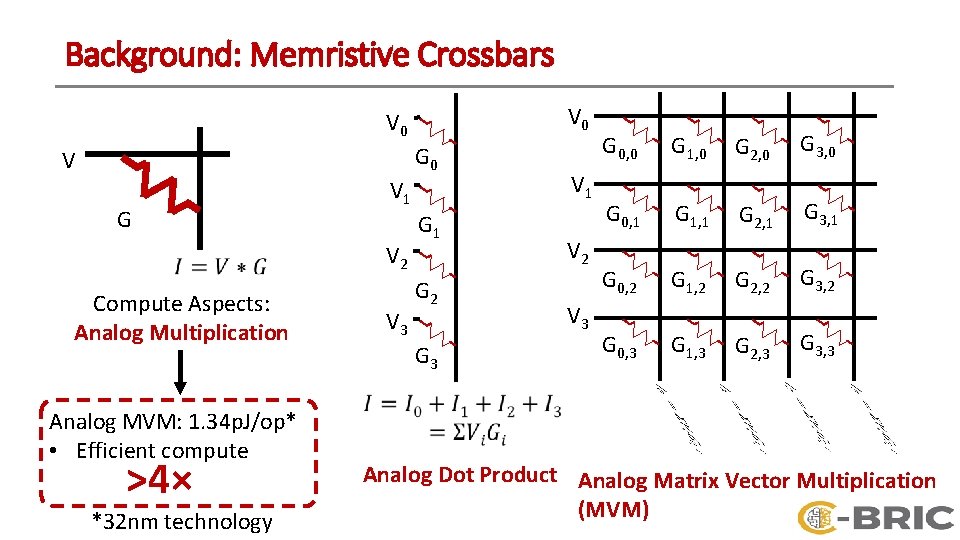

Background: Memristive Crossbars

Compute aspects: analog multiplication

- Analog MVM: 1.34 pJ/op* (>4×); efficient compute.
- Analog dot product: input voltages V0..V3 applied across conductances G0..G3.
- Analog matrix-vector multiplication (MVM): input voltages applied to a 4×4 crossbar of conductances G0,0..G3,3.

*32 nm technology
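
The analog MVM on this slide can be sketched numerically. This is a minimal model of an ideal crossbar, assuming each column current is the sum of input voltage times cell conductance along that column (Ohm's law plus Kirchhoff's current law). The function name, the `G[i][j]` index convention, and the values are illustrative assumptions, not taken from the slides.

```python
def crossbar_mvm(voltages, conductances):
    """Ideal crossbar model: column current I[j] = sum_i V[i] * G[i][j]."""
    rows = len(conductances)
    cols = len(conductances[0])
    if len(voltages) != rows:
        raise ValueError("one input voltage is needed per crossbar row")
    currents = [0.0] * cols
    for i in range(rows):
        for j in range(cols):
            # Each cell contributes V * G to the current of its column.
            currents[j] += voltages[i] * conductances[i][j]
    return currents


# Hypothetical 4-row, 2-column example (arbitrary units).
V = [1.0, 0.5, 0.0, 2.0]
G = [[1.0, 0.0],
     [2.0, 4.0],
     [3.0, 1.0],
     [0.5, 0.5]]
I = crossbar_mvm(V, G)
```

A real array would also show wire resistance, device variation, and noise, none of which this sketch models.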

Background: Past Memristive Based Accelerators

- Past accelerators: RENO [DAC'15], ISAAC and PRIME [ISCA'16] achieve significant benefits over CMOS accelerators, but only for a limited set of applications (1 or 2).
- Memristive crossbars are not a drop-in replacement for traditional memory structures (cache, register file).
  - General-purpose ML architecture?
  - Programmability?
- MVM Unit (MVMU): add peripherals to interface the crossbar with the digital domain (figure labels: DAC, multiplexer, ADC, shift-and-add, INT).
- PUMA is the first general-purpose and programmable accelerator with memristive crossbars.
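
The role of the ADC and shift-and-add peripherals can be illustrated with a bit-sliced integer MVM. This sketch assumes a common scheme for memristive MVM units: inputs are streamed one bit at a time, weights are split into slices of a few bits per cell, and the digitized column sums are recombined by shifting and adding. The slides do not confirm these exact parameters; `input_bits`, `weight_bits`, and `bits_per_cell` are hypothetical, and the ADC is treated as ideal.

```python
def bit_sliced_mvm(inputs, weights, input_bits=8, weight_bits=8, bits_per_cell=2):
    """Unsigned integer MVM computed from 1-bit inputs and small weight slices."""
    rows, cols = len(weights), len(weights[0])
    n_slices = weight_bits // bits_per_cell
    mask = (1 << bits_per_cell) - 1
    result = [0] * cols
    for b in range(input_bits):                  # one input bit per step
        in_bits = [(x >> b) & 1 for x in inputs]
        for s in range(n_slices):                # one column group per weight slice
            shift = s * bits_per_cell
            for j in range(cols):
                # Column sum as the crossbar would produce it, read by an ideal ADC.
                col_sum = sum(in_bits[i] * ((weights[i][j] >> shift) & mask)
                              for i in range(rows))
                # Shift-and-add: weight the partial result by its bit position.
                result[j] += col_sum << (b + shift)
    return result


def reference_mvm(inputs, weights):
    """Plain integer MVM used to check the bit-sliced version."""
    return [sum(inputs[i] * weights[i][j] for i in range(len(weights)))
            for j in range(len(weights[0]))]


x = [3, 200, 17]
W = [[5, 255], [0, 128], [77, 1]]
sliced = bit_sliced_mvm(x, W)
```

The sketch handles unsigned values only; signed weights would need an extra encoding step that is not shown here.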

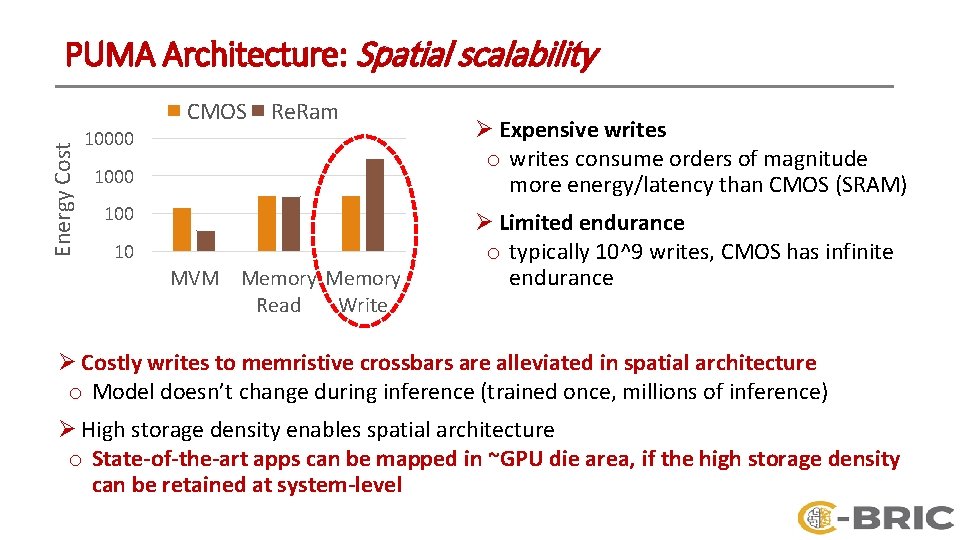

PUMA Architecture: Spatial scalability

Figure: energy cost of MVM, memory read, and write for CMOS vs. ReRAM.

- Expensive writes
  - Writes consume orders of magnitude more energy/latency than CMOS (SRAM).
- Limited endurance
  - Typically 10^9 writes; CMOS endurance is effectively unlimited.
- Costly writes to memristive crossbars are alleviated in a spatial architecture
  - The model doesn't change during inference (trained once, millions of inferences).
- High storage density enables a spatial architecture
  - State-of-the-art apps can be mapped in ~GPU die area, if the high storage density can be retained at the system level.

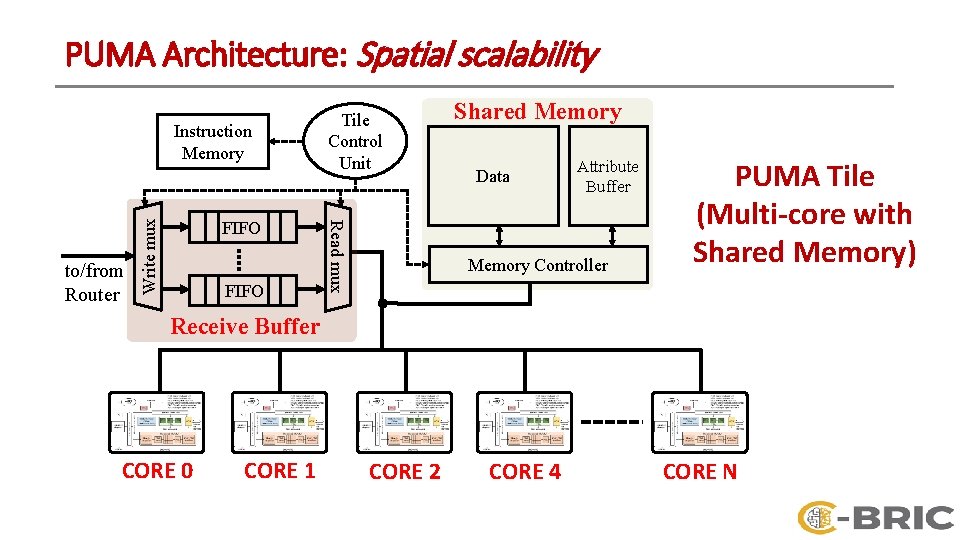

PUMA Architecture: Spatial scalability

PUMA Tile (multi-core with shared memory). Figure labels: Core 0 ... Core N, Shared Memory, Memory Controller, Data Attribute Buffer, Instruction Memory, Tile Control Unit, Receive Buffer, FIFO, read mux and write mux to/from the router.

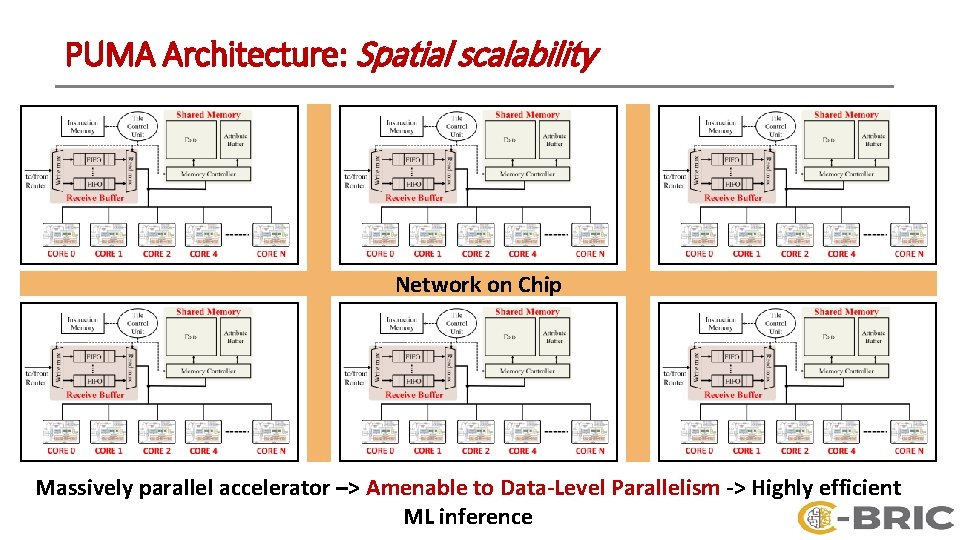

PUMA Architecture: Spatial scalability

Network on chip: massively parallel accelerator -> amenable to data-level parallelism -> highly efficient ML inference.

Key challenges

- Enable a general-purpose and programmable architecture while preserving the crossbar storage density.
  - Memristive technology has orders of magnitude higher area-efficiency than CMOS technology, for both storage and compute.
  - Storage density drops from 160 MB/mm² at array level to 2.6 MB/mm² at MVMU level (ISAAC, ISCA'16)!
- Optimize data movements.
  - Weights remain stationary, but partial sums and activations lead to data movements.
  - Spatiality incurs a high cost for data movement between cores.
- Proposal: Architecture-ISA-Compiler codesign.

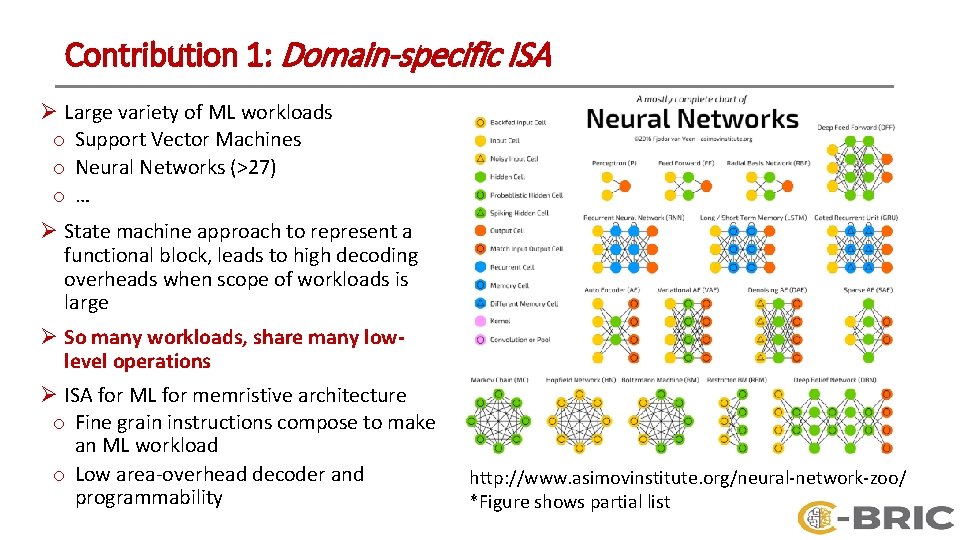

Contribution 1: Domain-specific ISA

- Large variety of ML workloads*
  - Support Vector Machines
  - Neural Networks (>27)
  - ...
- A state-machine approach that represents each functional block leads to high decoding overheads when the scope of workloads is large.
- Many workloads share the same low-level operations.
- ISA for ML on a memristive architecture
  - Fine-grained instructions compose to make an ML workload.
  - Low area-overhead decoder and programmability.

*Figure shows a partial list. Source: http://www.asimovinstitute.org/neural-network-zoo/

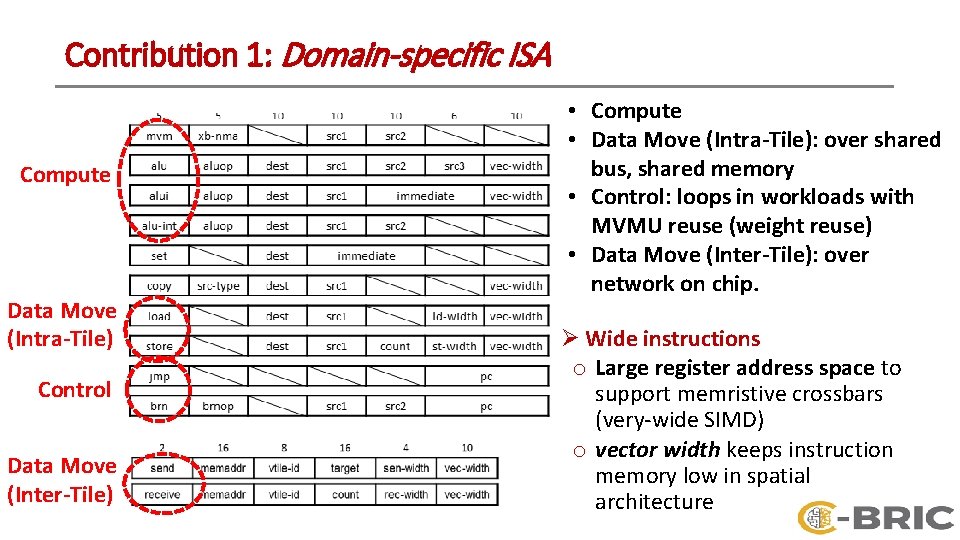

Contribution 1: Domain-specific ISA

Instruction categories:

- Compute
- Data Move (Intra-Tile): over shared bus, shared memory
- Control: loops in workloads with MVMU reuse (weight reuse)
- Data Move (Inter-Tile): over network on chip

Wide instructions:

- Large register address space to support memristive crossbars (very-wide SIMD).
- Vector width keeps instruction memory low in a spatial architecture.

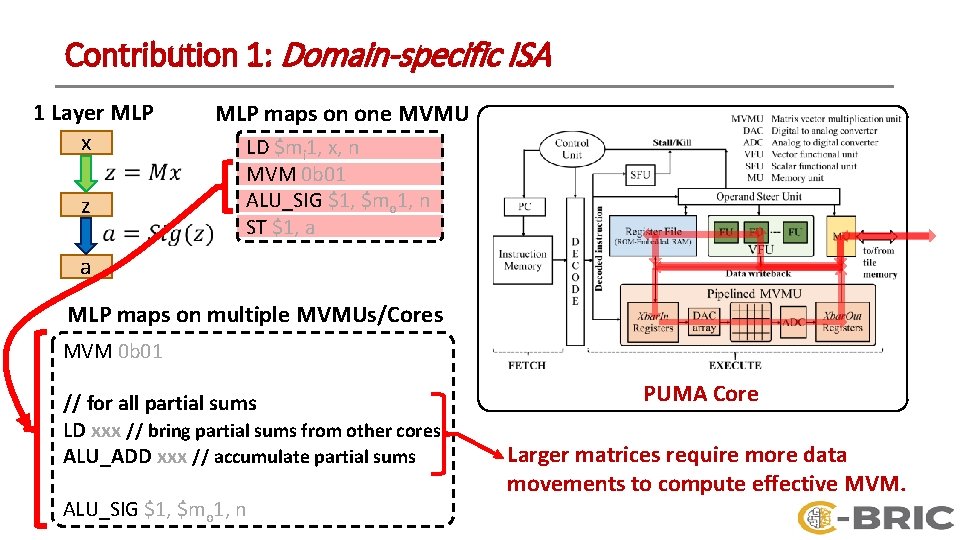

Contribution 1: Domain-specific ISA

Example: 1-layer MLP on a PUMA core (figure labels: x, z, a).

- MLP maps on one MVMU: `LD $mi1, x, n`; `MVM 0b01`; `ALU_SIG $1, $mo1, n`; `ST $1, a`
- MLP maps on multiple MVMUs/cores: `MVM 0b01` (for all partial sums); `LD xxx` (bring partial sums from other cores); `ALU_ADD xxx` (accumulate partial sums); `ALU_SIG $1, $mo1, n`
- Larger matrices require more data movements to compute the effective MVM.
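
The multi-MVMU case can be sketched as a row-partitioned MVM whose partial sums are accumulated before the sigmoid. This is a functional model only: the mapping of Python steps to `MVM`, `LD`, `ALU_ADD`, and `ALU_SIG` in the comments is an assumed reading of the slide, and `rows_per_mvmu` is a hypothetical parameter standing in for the crossbar size.

```python
import math


def mvm(x, W):
    """Dense matrix-vector product: out[j] = sum_i x[i] * W[i][j]."""
    return [sum(x[i] * W[i][j] for i in range(len(W))) for j in range(len(W[0]))]


def partitioned_layer(x, W, rows_per_mvmu):
    """One MLP layer whose weight matrix is split by rows across several MVMUs."""
    partial_sums = []
    for start in range(0, len(W), rows_per_mvmu):
        stop = start + rows_per_mvmu
        # Roughly "MVM": each MVMU multiplies its slice of the input and weights.
        partial_sums.append(mvm(x[start:stop], W[start:stop]))
    acc = [0.0] * len(W[0])
    for p in partial_sums:
        # Roughly "LD" + "ALU_ADD": fetch a partial sum and accumulate it.
        acc = [a + b for a, b in zip(acc, p)]
    # Roughly "ALU_SIG": apply the activation once the full sum is available.
    return [1.0 / (1.0 + math.exp(-z)) for z in acc]


x = [1.0, 2.0, 3.0, 4.0]
W = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0], [2.0, 0.0]]
out = partitioned_layer(x, W, rows_per_mvmu=2)
```

Every extra partition adds one partial-sum transfer and one accumulation, which is the data-movement cost the slide points to for larger matrices.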

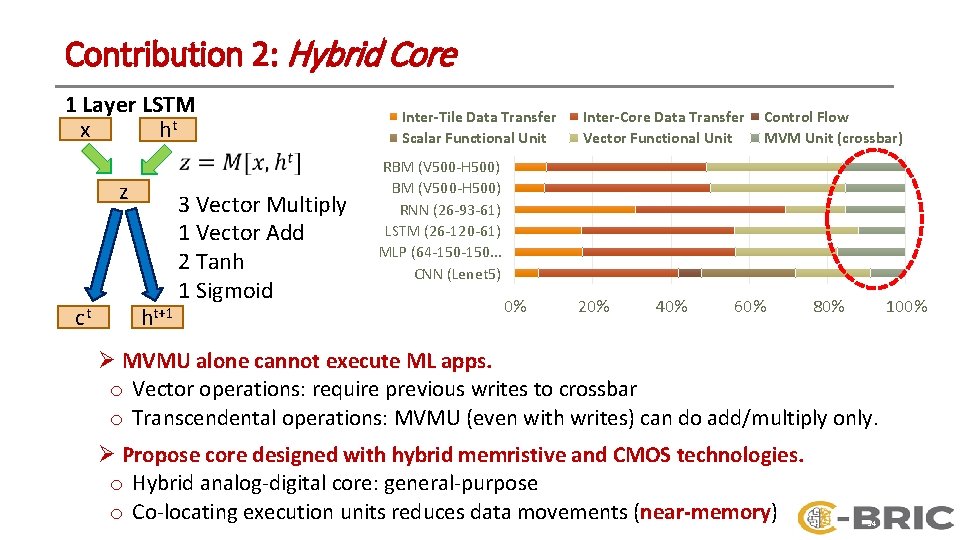

Contribution 2: Hybrid Core

Example: 1-layer LSTM (figure labels: x, h_t, z, c_t, h_t+1) needs 3 vector multiplies, 1 vector add, 2 tanh, and 1 sigmoid.

Figure: percentage breakdown (0% to 100%) for RBM (V500-H500), RNN (26-93-61), LSTM (26-120-61), MLP (64-150...), and CNN (Lenet5), by category: inter-tile data transfer, inter-core data transfer, control flow, scalar functional unit, vector functional unit, MVM unit (crossbar).

- The MVMU alone cannot execute ML apps.
  - Vector operations: require previous writes to the crossbar.
  - Transcendental operations: the MVMU (even with writes) can only add and multiply.
- Proposal: a core designed with hybrid memristive and CMOS technologies.
  - Hybrid analog-digital core: general-purpose.
  - Co-locating execution units reduces data movements (near-memory).

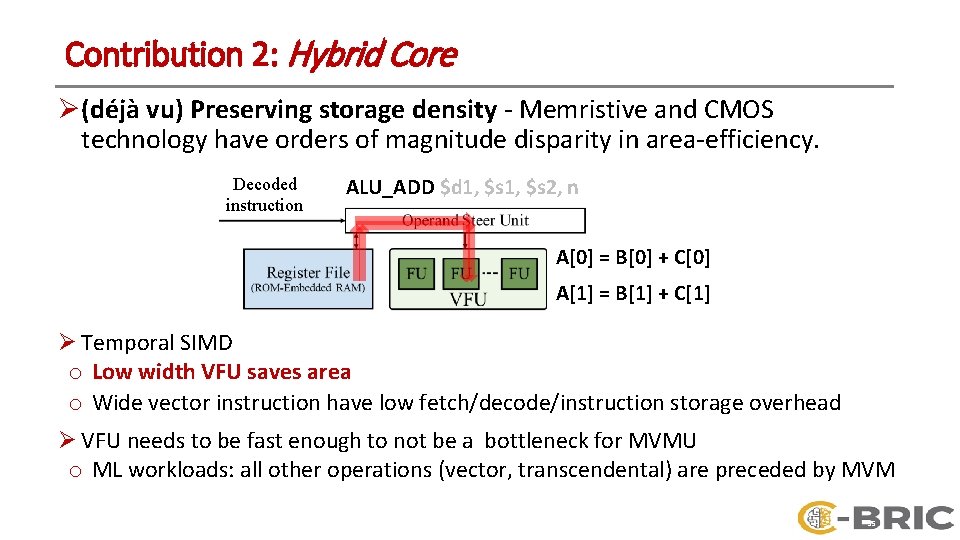

Contribution 2: Hybrid Core

- (Déjà vu) Preserving storage density: memristive and CMOS technologies have orders of magnitude disparity in area-efficiency.
- Temporal SIMD: a decoded vector instruction such as `ALU_ADD $d1, $s2, n` executes over successive steps (A[0] = B[0] + C[0], then A[1] = B[1] + C[1], ...).
  - A low-width VFU saves area.
  - Wide vector instructions have low fetch/decode/instruction-storage overhead.
- The VFU needs to be fast enough not to be a bottleneck for the MVMU.
  - ML workloads: all other operations (vector, transcendental) are preceded by an MVM.
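
Temporal SIMD can be sketched as one wide vector instruction issued once and executed over several passes of a narrow functional unit. In this illustration, `lanes` is a hypothetical VFU width and each pass is assumed to take one cycle; neither value comes from the slides.

```python
def temporal_simd_add(b, c, lanes):
    """Run one n-wide vector add on a VFU with `lanes` lanes.

    Returns the result vector and the number of passes (assumed cycles).
    """
    if len(b) != len(c):
        raise ValueError("operand vectors must have the same length")
    n = len(b)
    out = [0] * n
    cycles = 0
    for start in range(0, n, lanes):
        # One pass: the VFU processes up to `lanes` elements of the vector.
        for k in range(start, min(start + lanes, n)):
            out[k] = b[k] + c[k]
        cycles += 1
    return out, cycles


B = list(range(128))
C = [2 * v for v in B]
result_1, cycles_1 = temporal_simd_add(B, C, lanes=1)
result_4, cycles_4 = temporal_simd_add(B, C, lanes=4)
```

The instruction is fetched and decoded once whatever the lane count, so narrowing the VFU trades execution cycles for area without growing instruction storage.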

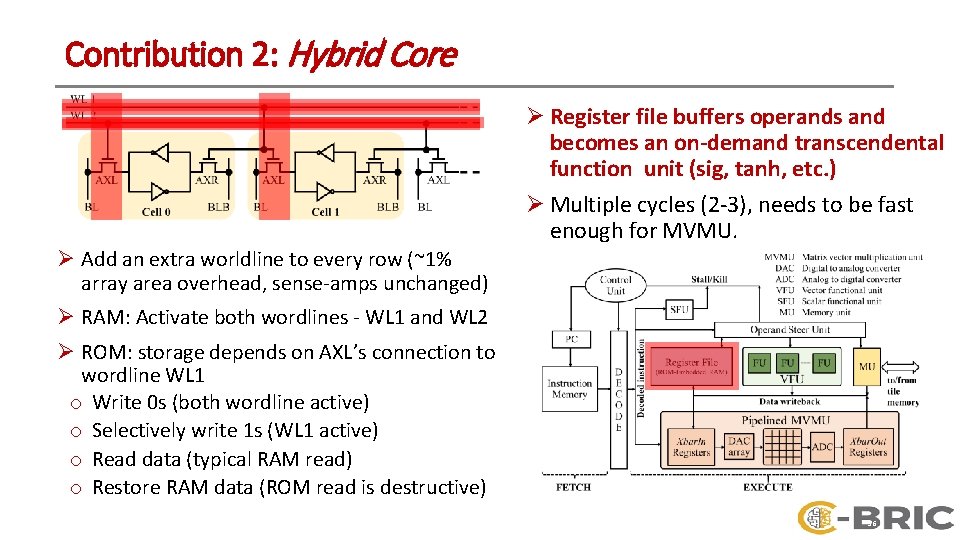

Contribution 2: Hybrid Core

- The register file buffers operands and doubles as an on-demand transcendental function unit (sigmoid, tanh, etc.).
- Transcendental evaluation takes multiple cycles (2-3) and needs to be fast enough for the MVMU.
- Add an extra wordline to every row (~1% array area overhead, sense amps unchanged).
- RAM: activate both wordlines, WL1 and WL2.
- ROM: storage depends on the AXL's connection to wordline WL1.
  - Write 0s (both wordlines active).
  - Selectively write 1s (WL1 active).
  - Read data (typical RAM read).
  - Restore RAM data (ROM read is destructive).
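
A ROM-backed transcendental unit behaves like a lookup table. The sketch below precomputes sigmoid samples and answers queries by nearest-entry lookup. The table size, the input range, and the rounding scheme are illustrative assumptions; the slides do not state how the ROM contents are organized.

```python
import math

# Hypothetical table parameters: 256 entries covering inputs in [-8, 8].
LO, HI, ENTRIES = -8.0, 8.0, 256
STEP = (HI - LO) / (ENTRIES - 1)

# "ROM" contents: sigmoid sampled at evenly spaced input values.
SIG_ROM = [1.0 / (1.0 + math.exp(-(LO + k * STEP))) for k in range(ENTRIES)]


def rom_sigmoid(x):
    """Approximate sigmoid(x) with a nearest-entry table lookup."""
    x = min(max(x, LO), HI)           # saturate inputs outside the table range
    index = round((x - LO) / STEP)    # nearest table entry
    return SIG_ROM[index]


def exact_sigmoid(x):
    """Reference implementation used to measure the lookup error."""
    return 1.0 / (1.0 + math.exp(-x))


samples = [-10.0, -3.3, -0.7, 0.0, 0.4, 2.5, 9.0]
max_error = max(abs(rom_sigmoid(s) - exact_sigmoid(s)) for s in samples)
```

With these parameters the table step is about 0.063 and the sigmoid slope never exceeds 0.25, so the lookup error stays below roughly 0.01.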

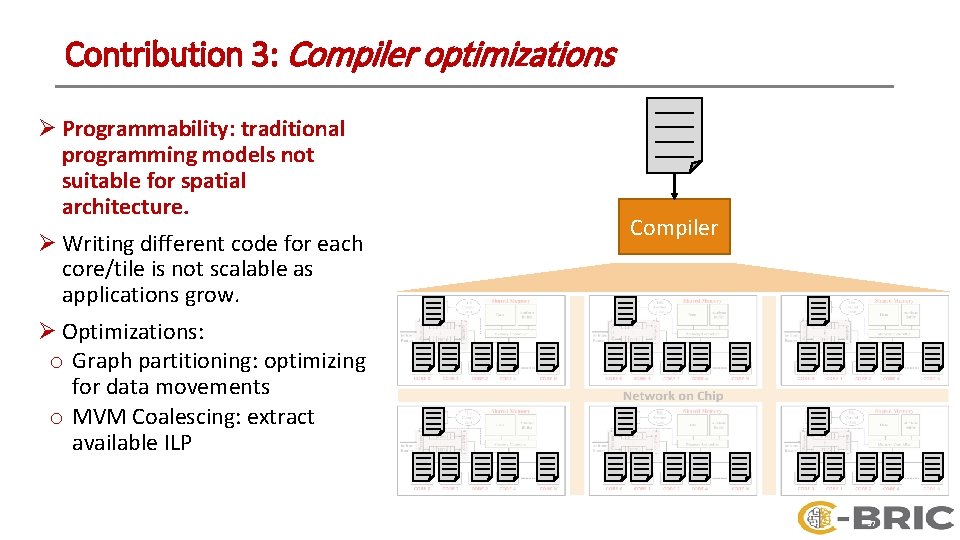

Contribution 3: Compiler optimizations

- Programmability: traditional programming models are not suitable for a spatial architecture.
- Writing different code for each core/tile does not scale as applications grow.
- Compiler optimizations:
  - Graph partitioning: optimizes for data movement.
  - MVM coalescing: extracts available ILP.

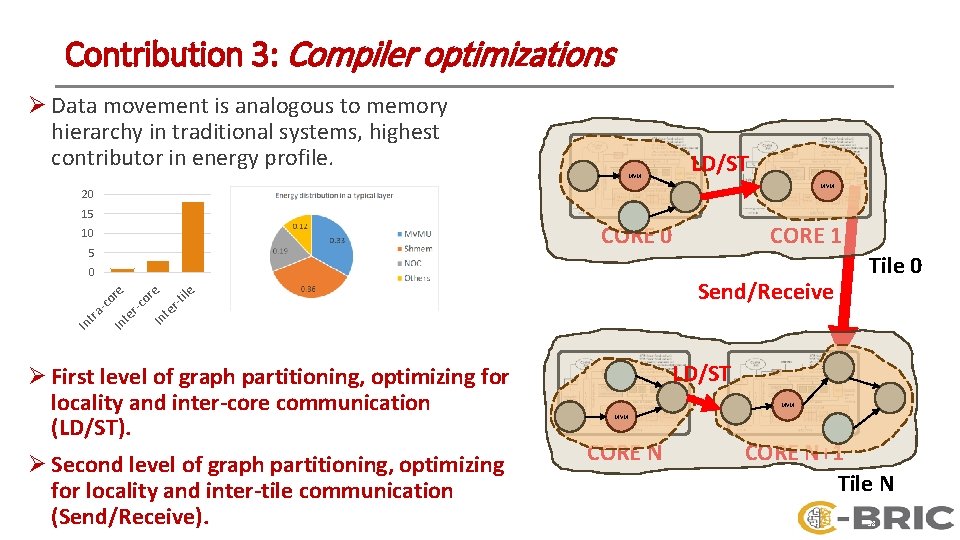

Contribution 3: Compiler optimizations

- Data movement is analogous to the memory hierarchy in traditional systems and is the highest contributor to the energy profile.
- First level of graph partitioning: optimizes for locality and inter-core communication (LD/ST).
- Second level of graph partitioning: optimizes for locality and inter-tile communication (Send/Receive).

Figure: bar chart (axis 0 to 20) comparing intra-core, inter-core, and inter-tile data movement; diagram of cores (Core 0, Core 1, ..., Core N+1) in tiles (Tile 0, ..., Tile N), with MVM inside cores, LD/ST between cores, and Send/Receive between tiles.

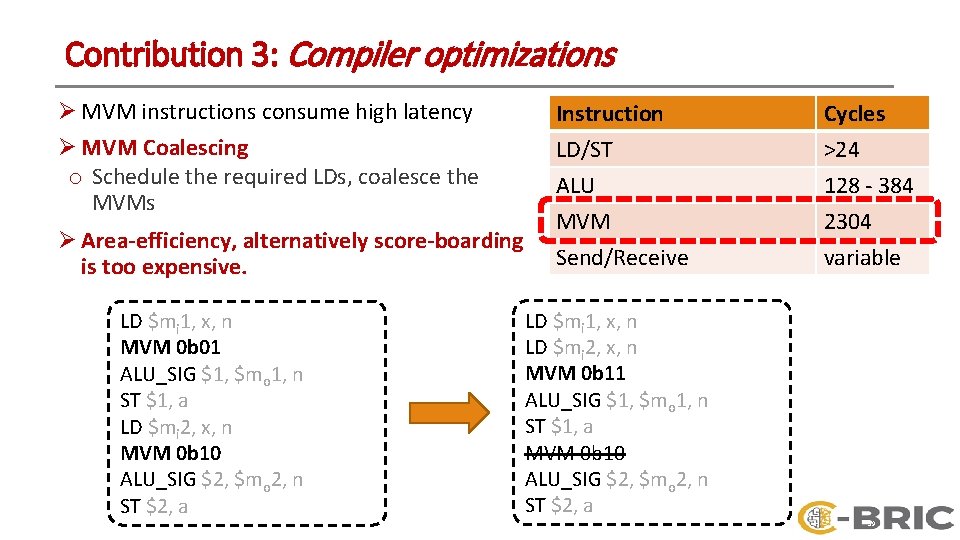

Contribution 3: Compiler optimizations

- MVM instructions have high latency.
- MVM coalescing: schedule the required LDs, then coalesce the MVMs.
- Area-efficiency: the alternative, scoreboarding, is too expensive.

| Instruction | Cycles |
| --- | --- |
| LD/ST | >24 |
| ALU | 128 - 384 |
| MVM | 2304 |
| Send/Receive | variable |

Before coalescing: `LD $mi1, x, n`; `MVM 0b01`; `ALU_SIG $1, $mo1, n`; `ST $1, a`; `LD $mi2, x, n`; `MVM 0b10`; `ALU_SIG $2, $mo2, n`; `ST $2, a`

After coalescing: `LD $mi1, x, n`; `LD $mi2, x, n`; `MVM 0b11`; `ALU_SIG $1, $mo1, n`; `ST $1, a`; `MVM 0b10`; `ALU_SIG $2, $mo2, n`; `ST $2, a`
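
The benefit of coalescing can be estimated with back-of-the-envelope arithmetic. The sketch takes the low end of each latency range from the slide (24 cycles for LD and ST, 128 for ALU, 2304 for MVM). It assumes strictly sequential issue with no overlap, and that a single `MVM 0b11` replaces both individual MVMs at the cost of one MVM latency. These are simplifying assumptions, not measured results.

```python
# Illustrative latencies in cycles, taken from the low end of the slide's ranges.
LATENCY = {"LD": 24, "ST": 24, "ALU": 128, "MVM": 2304}


def sequential_cycles(program):
    """Total cycles if instructions execute one after another with no overlap."""
    return sum(LATENCY[op] for op in program)


# Two independent MVMs issued separately, each with its own load, ALU op, and store.
uncoalesced = ["LD", "MVM", "ALU", "ST",
               "LD", "MVM", "ALU", "ST"]

# Both loads hoisted, then one coalesced MVM (mask 0b11) covering both MVMUs.
coalesced = ["LD", "LD", "MVM",
             "ALU", "ST", "ALU", "ST"]

before = sequential_cycles(uncoalesced)
after = sequential_cycles(coalesced)
saved = before - after
```

Under these assumptions the saving equals one full MVM latency, because the two crossbar operations run in parallel instead of back to back.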

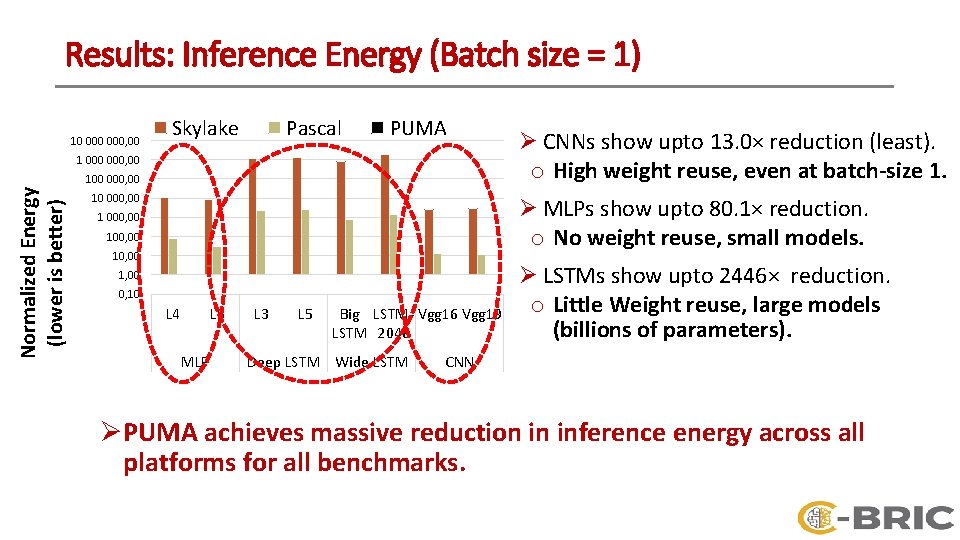

Results: Inference Energy (Batch size = 1)

Figure: normalized energy (lower is better) for Skylake, Pascal, and PUMA on MLP (L4, L5), Deep LSTM (L3, L5), Wide LSTM (BigLSTM, LSTM-2048), and CNN (Vgg16, Vgg19).

- CNNs show up to 13.0× reduction (the least).
  - High weight reuse, even at batch size 1.
- MLPs show up to 80.1× reduction.
  - No weight reuse, small models.
- LSTMs show up to 2446× reduction.
  - Little weight reuse, large models (billions of parameters).
- PUMA achieves massive reductions in inference energy across all platforms for all benchmarks.

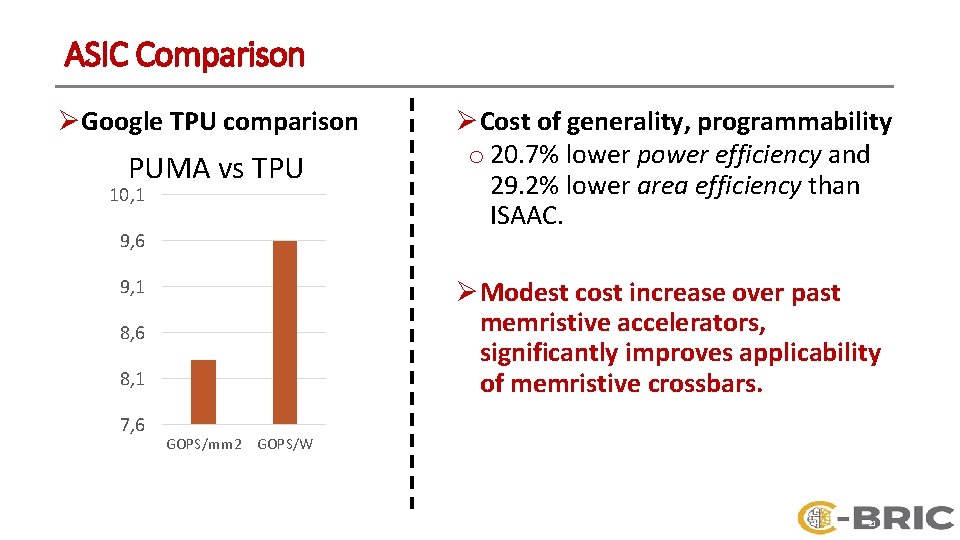

ASIC Comparison

- Google TPU comparison: PUMA vs. TPU (figure axes: GOPS/mm² and GOPS/W).
- A modest cost increase over past memristive accelerators significantly improves the applicability of memristive crossbars.
- Cost of generality and programmability:
  - 20.7% lower power efficiency and 29.2% lower area efficiency than ISAAC.

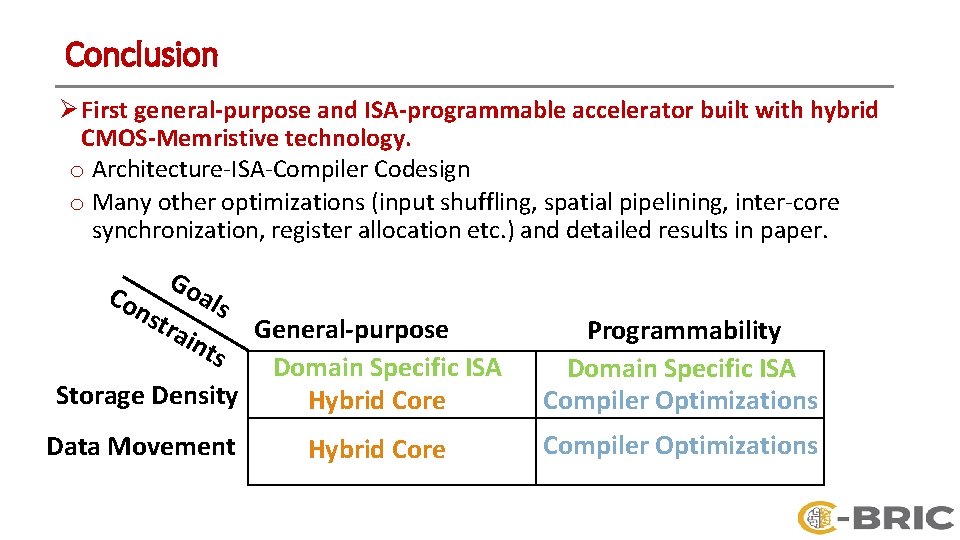

Conclusion

- First general-purpose and ISA-programmable accelerator built with hybrid CMOS-memristive technology.
  - Architecture-ISA-Compiler codesign.
  - Many other optimizations (input shuffling, spatial pipelining, inter-core synchronization, register allocation, etc.) and detailed results are in the paper.
- Goals and constraints: general-purpose, storage density, data movement, programmability.
- Techniques: domain-specific ISA, hybrid core, compiler optimizations.

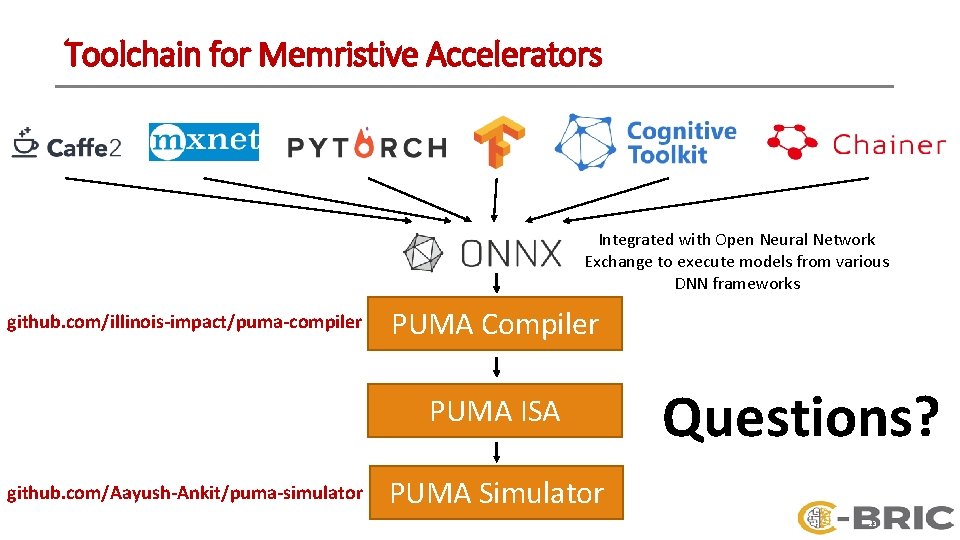

Toolchain for Memristive Accelerators

- PUMA Compiler: github.com/illinois-impact/puma-compiler
- PUMA ISA
- PUMA Simulator: github.com/Aayush-Ankit/puma-simulator
- Integrated with Open Neural Network Exchange to execute models from various DNN frameworks.

Questions?
