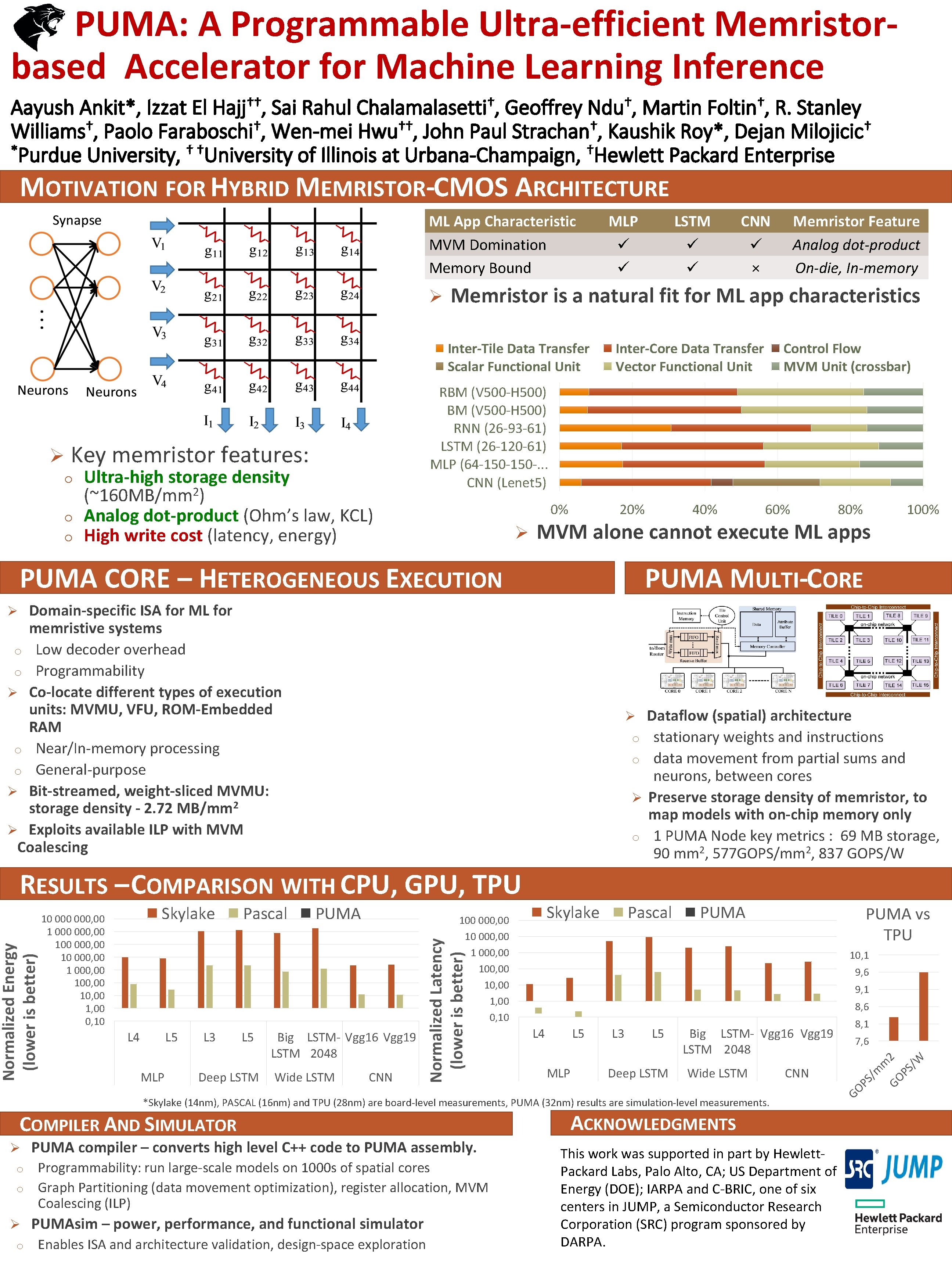

PUMA: A Programmable Ultra-efficient Memristor-based Accelerator for Machine Learning Inference

Aayush Ankit*, Izzat El Hajj††, Sai Rahul Chalamalasetti†, Geoffrey Ndu†, Martin Foltin†, R. Stanley Williams†, Paolo Faraboschi†, Wen-mei Hwu††, John Paul Strachan†, Kaushik Roy*, Dejan Milojicic†

*Purdue University, ††University of Illinois at Urbana-Champaign, †Hewlett Packard Enterprise

MOTIVATION FOR HYBRID MEMRISTOR-CMOS ARCHITECTURE

| ML App Characteristic | Memristor Feature |
| --- | --- |
| MVM domination | Analog dot-product |
| Memory bound | On-die, in-memory |

- Memristor is a natural fit for ML app characteristics
- Key memristor features:
  - Ultra-high storage density (~160 MB/mm²)
  - Analog dot-product (Ohm's law, KCL)
  - High write cost (latency, energy)
- MVM alone cannot execute ML apps

[Figure: per-workload breakdown (0% to 100%) across Inter-Tile Data Transfer, Inter-Core Data Transfer, Scalar Functional Unit, Vector Functional Unit, Control Flow, and MVM Unit (crossbar). Workloads: RBM (V500-H500), RNN (26-93-61), LSTM (26-120-61), MLP (64-150-...), CNN (Lenet5).]

PUMA CORE – HETEROGENEOUS EXECUTION

- Domain-specific ISA for ML for memristive systems
  - Low decoder overhead
  - Programmability
- Co-locate different types of execution units: MVMU, VFU, ROM-Embedded RAM
  - Near/in-memory processing
  - General-purpose
- Bit-streamed, weight-sliced MVMU: storage density of 2.72 MB/mm²
- Exploits available ILP with MVM Coalescing

PUMA MULTI-CORE

- Dataflow (spatial) architecture
  - Stationary weights and instructions
  - Data movement from partial sums and neurons, between cores
- Preserve storage density of memristor, to map models with on-chip memory only
  - 1 PUMA node key metrics: 69 MB storage, 90 mm², 577 GOPS/mm², 837 GOPS/W

RESULTS – COMPARISON WITH CPU, GPU, TPU

[Figure: Normalized Latency (lower is better) and Normalized Energy (lower is better), log scale, for Skylake, Pascal, and PUMA. Workloads: MLP (L4, L5), Deep LSTM (L3, L5), Wide LSTM (Big LSTM, LSTM-2048), CNN (Vgg16, Vgg19).]

[Figure: PUMA vs TPU, GOPS/mm² and GOPS/W.]

*Skylake (14 nm), Pascal (16 nm), and TPU (28 nm) are board-level measurements; PUMA (32 nm) results are simulation-level measurements.

COMPILER AND SIMULATOR

- PUMA compiler: converts high-level C++ code to PUMA assembly
  - Programmability: run large-scale models on 1000s of spatial cores
  - Graph partitioning (data movement optimization), register allocation, MVM Coalescing (ILP)
- PUMAsim: power, performance, and functional simulator
  - Enables ISA and architecture validation, design-space exploration

ACKNOWLEDGMENTS

This work was supported in part by Hewlett Packard Labs, Palo Alto, CA; US Department of Energy (DOE); IARPA; and C-BRIC, one of six centers in JUMP, a Semiconductor Research Corporation (SRC) program sponsored by DARPA.
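The "bit-streamed, weight-sliced MVMU" idea can be illustrated with a small numerical sketch. The poster does not give cell precision or operand widths, so the values below (8-bit unsigned weights and inputs, 2 bits per memristor cell) are assumptions, and the function names are hypothetical. The crossbar is modeled as an ideal, noise-free integer dot product standing in for the analog current summation (Ohm's law per cell, KCL per output line). This is not PUMA's actual implementation, only a functional model of the shift-and-add recombination.

```python
def slice_weights(matrix, weight_bits=8, bits_per_cell=2):
    """Split each unsigned weight into low-precision slices (least significant first).

    Each slice would occupy its own crossbar; bits_per_cell is an assumed value.
    """
    assert weight_bits % bits_per_cell == 0, "weight_bits must divide evenly into cells"
    mask = (1 << bits_per_cell) - 1
    num_slices = weight_bits // bits_per_cell
    return [
        [[(w >> (s * bits_per_cell)) & mask for w in row] for row in matrix]
        for s in range(num_slices)
    ]


def crossbar_dot(slice_matrix, input_bit_vector):
    """Idealized crossbar: every output line sums cell value * input bit."""
    return [
        sum(cell * bit for cell, bit in zip(row, input_bit_vector))
        for row in slice_matrix
    ]


def bit_streamed_mvm(matrix, x, weight_bits=8, input_bits=8, bits_per_cell=2):
    """Compute matrix @ x by streaming input bits over weight slices."""
    slices = slice_weights(matrix, weight_bits, bits_per_cell)
    result = [0] * len(matrix)
    for b in range(input_bits):  # one input bit per step
        bit_vector = [(value >> b) & 1 for value in x]
        for s, slice_matrix in enumerate(slices):  # one crossbar per weight slice
            partial = crossbar_dot(slice_matrix, bit_vector)
            shift = b + s * bits_per_cell  # weight of this partial sum
            for i, p in enumerate(partial):
                result[i] += p << shift  # digital shift-and-add
    return result


def reference_mvm(matrix, x):
    """Plain integer matrix-vector product for comparison."""
    return [sum(w * v for w, v in zip(row, x)) for row in matrix]


if __name__ == "__main__":
    W = [[3, 200], [255, 1]]
    x = [7, 129]
    print(bit_streamed_mvm(W, x))
    print(reference_mvm(W, x))
```

With unsigned operands that fit in the stated bit widths, the recombined result matches the plain integer product exactly. The sketch ignores signed values, ADC quantization, and device noise, all of which a real design has to handle.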

- Slides: 1