
Problem-Solving Examples (Preemptive Case)

Outline
• Preemptive job-shop scheduling problem (P-JSSP)
  – Problem definition
  – Basic search procedure
  – Dominance properties
  – Heuristics
  – Results

P-JSSP: Problem definition
• Set of machines $\{M_1, \ldots, M_m\}$
• Set of jobs $\{J_1, \ldots, J_n\}$
• List of operations $O_{i1}, \ldots, O_{im(i)}$ for each job $J_i$
• Processing time $p_{ij}$ for each operation $O_{ij}$
• Machine $M_{ij}$ for each operation $O_{ij}$


P-JSSP: Problem variables
• Set variable $\mathrm{set}(O)$: set[integer] for each operation $O$
• Integer variables $\mathrm{start}(O)$ and $\mathrm{end}(O)$ for each operation $O$
  – $\mathrm{start}(O) = \min_{t \in \mathrm{set}(O)} t$
  – $\mathrm{end}(O) = \max_{t \in \mathrm{set}(O)} (t + 1)$
• Optimization criterion: $\mathit{makespan} = \max_i \mathrm{end}(O_{im(i)})$

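P-JSSP: Problem constraints
• Temporal constraints
  – $0 \le \mathrm{start}(O_{ij})$
  – $\mathrm{end}(O_{ij}) \le \mathrm{start}(O_{i,j+1})$
• Duration constraints
  – $|\mathrm{set}(O_{ij})| = p_{ij}$
• Exclusion constraints
  – $M_{ij} = M_{kl}$ implies $\forall t \in \mathrm{set}(O_{ij}),\ t \notin \mathrm{set}(O_{kl})$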
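The variables and constraints above can be checked mechanically on a candidate schedule. The following is a minimal sketch, assuming a hypothetical two-job instance (`JOBS`), 0-based indices, processing times of at least 1, and a schedule stored as a dictionary from operation `(i, j)` to its set of unit time slots; none of these names come from the slides.

```python
from itertools import combinations

# Hypothetical instance: JOBS[i][j] = (machine, processing time) of operation O_ij.
JOBS = [
    [("M1", 2), ("M2", 1)],
    [("M1", 1), ("M2", 2)],
]

def start(time_set):
    """start(O) = min of set(O)."""
    return min(time_set)

def end(time_set):
    """end(O) = max over set(O) of t + 1."""
    return max(time_set) + 1

def makespan(jobs, sets):
    """Largest end time among the last operations of all jobs."""
    return max(end(sets[(i, len(job) - 1)]) for i, job in enumerate(jobs))

def is_feasible(jobs, sets):
    """Check the duration, temporal and exclusion constraints."""
    for i, job in enumerate(jobs):
        for j, (_, p) in enumerate(job):
            s = sets[(i, j)]
            # Duration constraint, then non-negative start.
            if len(s) != p or start(s) < 0:
                return False
            # Precedence between consecutive operations of the same job.
            if j + 1 < len(job) and end(s) > start(sets[(i, j + 1)]):
                return False
    # Exclusion: two operations on the same machine never share a time slot.
    for (a, sa), (b, sb) in combinations(sets.items(), 2):
        same_machine = jobs[a[0]][a[1]][0] == jobs[b[0]][b[1]][0]
        if same_machine and sa & sb:
            return False
    return True

# The first operation of job 0 is preempted: it runs at t = 0 and t = 2.
SCHEDULE = {(0, 0): {0, 2}, (0, 1): {4}, (1, 0): {1}, (1, 1): {2, 3}}
```

Representing `set(O)` as a Python `set` of integer slots makes preemption explicit: an operation is preempted exactly when its slots are not contiguous.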

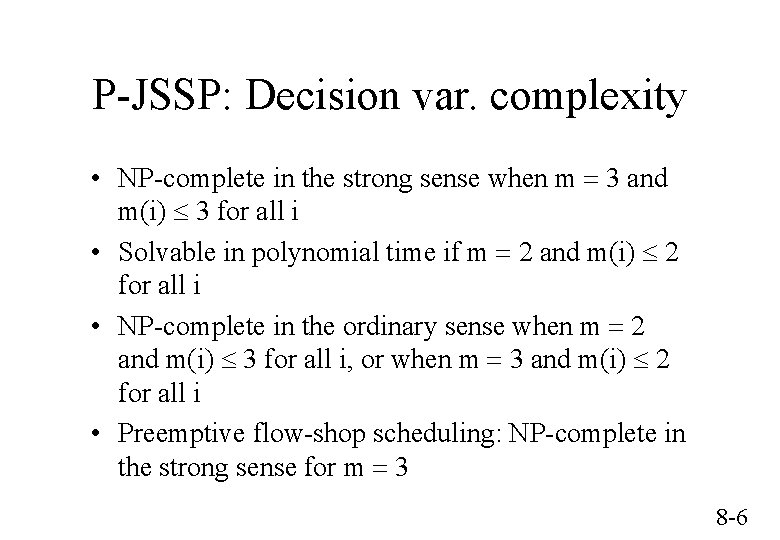

P-JSSP: Decision variant complexity
• NP-complete in the strong sense when $m = 3$ and $m(i) \le 3$ for all $i$
• Solvable in polynomial time if $m = 2$ and $m(i) \le 2$ for all $i$
• NP-complete in the ordinary sense when $m = 2$ and $m(i) \le 3$ for all $i$, or when $m = 3$ and $m(i) \le 2$ for all $i$
• Preemptive flow-shop scheduling: NP-complete in the strong sense for $m = 3$

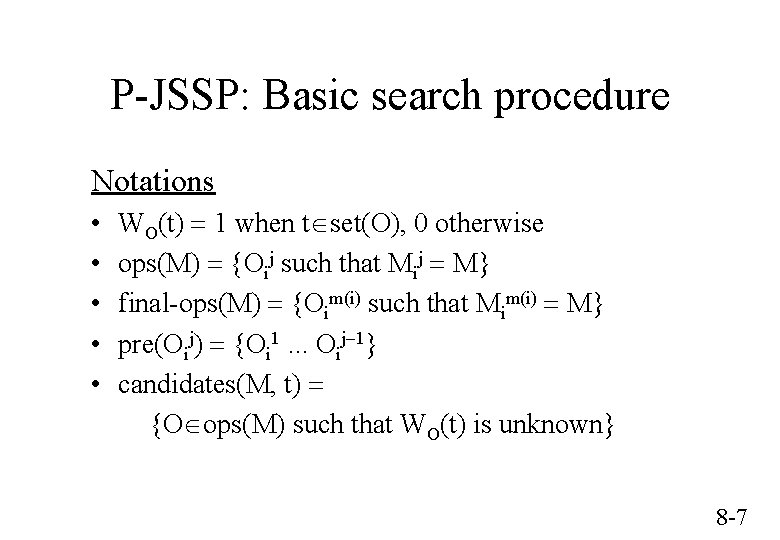

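P-JSSP: Basic search procedure
Notations
• $W_O(t) = 1$ when $t \in \mathrm{set}(O)$, 0 otherwise
• $\mathrm{ops}(M) = \{O_{ij} \text{ such that } M_{ij} = M\}$
• $\text{final-ops}(M) = \{O_{im(i)} \text{ such that } M_{im(i)} = M\}$
• $\mathrm{pre}(O_{ij}) = \{O_{i1}, \ldots, O_{i,j-1}\}$
• $\mathrm{candidates}(M, t) = \{O \in \mathrm{ops}(M) \text{ such that } W_O(t) \text{ is unknown}\}$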
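These notations translate almost directly into small helper functions. The sketch below assumes a hypothetical instance, 0-based `(i, j)` operation indices, and a dictionary `W` in which a missing key means that $W_O(t)$ is still unknown; this storage choice is an illustration, not something the slides specify.

```python
# Hypothetical instance: JOBS[i][j] = (machine, processing time) of operation O_ij.
JOBS = [
    [("M1", 2), ("M2", 1)],
    [("M2", 1), ("M1", 1)],
]

# W[(i, j, t)] is True or False once decided; an absent key means "unknown".
W = {}

def ops(M):
    """Operations that must run on machine M."""
    return [(i, j) for i, job in enumerate(JOBS)
            for j, (machine, _) in enumerate(job) if machine == M]

def final_ops(M):
    """Operations on M that are the last operation of their job."""
    return [(i, j) for (i, j) in ops(M) if j == len(JOBS[i]) - 1]

def pre(op):
    """Operations that precede op in its job."""
    i, j = op
    return [(i, k) for k in range(j)]

def candidates(M, t):
    """Operations on M whose execution status at time t is still unknown."""
    return [(i, j) for (i, j) in ops(M) if (i, j, t) not in W]
```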

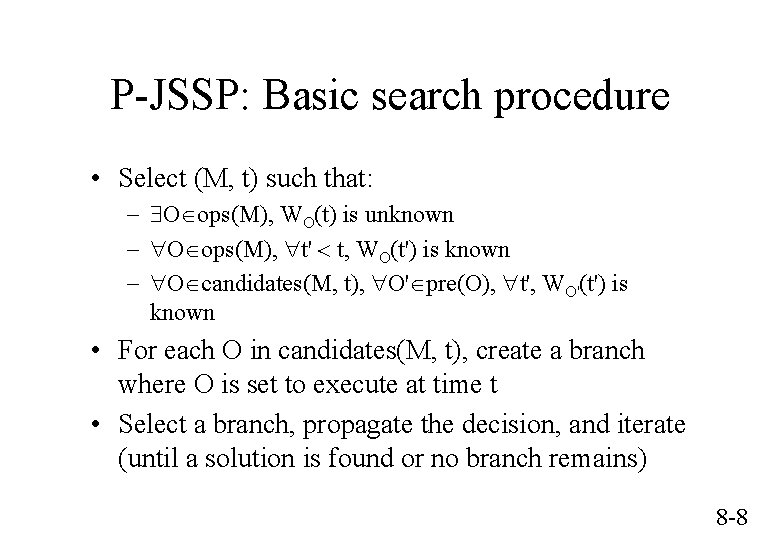

P-JSSP: Basic search procedure
• Select $(M, t)$ such that:
  – $\exists O \in \mathrm{ops}(M)$, $W_O(t)$ is unknown
  – $\forall O \in \mathrm{ops}(M)$, $\forall t' < t$, $W_O(t')$ is known
  – $\forall O \in \mathrm{candidates}(M, t)$, $\forall O' \in \mathrm{pre}(O)$, $\forall t'$, $W_{O'}(t')$ is known
• For each $O$ in $\mathrm{candidates}(M, t)$, create a branch where $O$ is set to execute at time $t$
• Select a branch, propagate the decision, and iterate (until a solution is found or no branch remains)
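The branching scheme above can be sketched as a plain depth-first search. This is a simplified illustration, not the procedure from the slides: it performs no constraint propagation, it visits the pairs $(M, t)$ in chronological order, it treats an operation as a candidate only when its job predecessors are completely finished before $t$, and it assumes a machine is never left idle while a candidate exists. The function name `solve` and the instance are hypothetical.

```python
def solve(jobs, bound):
    """Return {operation: set of time slots} with makespan <= bound, or None."""
    machines = sorted({m for job in jobs for m, _ in job})
    remaining = {(i, j): p for i, job in enumerate(jobs)
                 for j, (_, p) in enumerate(job)}
    sets = {op: set() for op in remaining}

    def available(op, t):
        # op still has work left and every predecessor finished before t.
        i, j = op
        if remaining[op] == 0:
            return False
        return all(remaining[(i, k)] == 0 and max(sets[(i, k)]) < t
                   for k in range(j))

    def search(t, k):
        if all(r == 0 for r in remaining.values()):
            return True                      # every operation is fully scheduled
        if t >= bound:
            return False                     # no time slot left under the bound
        if k == len(machines):
            return search(t + 1, 0)          # all machines decided at time t
        M = machines[k]
        cands = [op for op in remaining
                 if jobs[op[0]][op[1]][0] == M and available(op, t)]
        if not cands:
            return search(t, k + 1)          # nothing can run on M at time t
        for op in cands:                     # one branch per candidate
            remaining[op] -= 1
            sets[op].add(t)
            if search(t, k + 1):
                return True
            remaining[op] += 1               # undo the decision and backtrack
            sets[op].discard(t)
        return False

    return sets if search(0, 0) else None

# Hypothetical instance: JOBS[i][j] = (machine, processing time).
JOBS = [
    [("M1", 2), ("M2", 1)],
    [("M2", 1), ("M1", 1)],
]
```

Calling `solve(JOBS, 3)` returns a schedule, while `solve(JOBS, 2)` returns `None`, since machine M1 alone carries three units of work.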

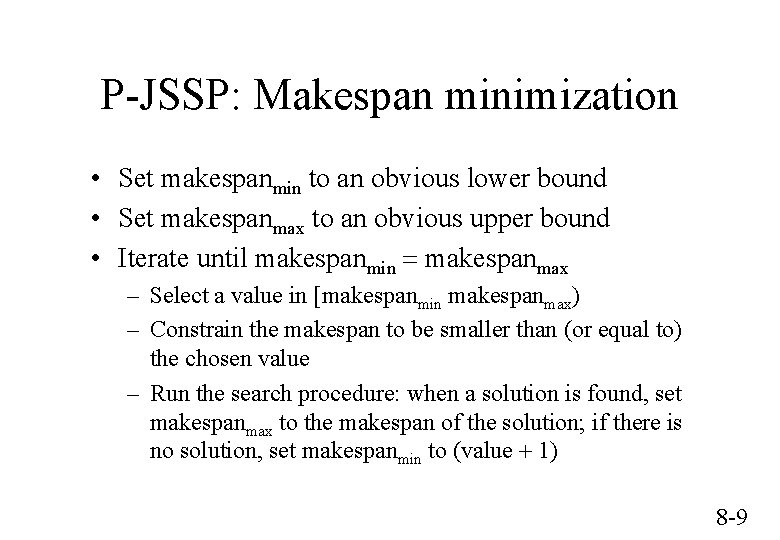

P-JSSP: Makespan minimization
• Set $\mathit{makespan}_{\min}$ to an obvious lower bound
• Set $\mathit{makespan}_{\max}$ to an obvious upper bound
• Iterate until $\mathit{makespan}_{\min} = \mathit{makespan}_{\max}$
  – Select a value in $[\mathit{makespan}_{\min}, \mathit{makespan}_{\max})$
  – Constrain the makespan to be smaller than or equal to the chosen value
  – Run the search procedure: when a solution is found, set $\mathit{makespan}_{\max}$ to the makespan of the solution; if there is no solution, set $\mathit{makespan}_{\min}$ to (value + 1)
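The iteration above can be written as a short loop around any complete search procedure. In this sketch `search` is a hypothetical callable that returns the makespan of a solution respecting the bound, or `None` when no such solution exists; picking the midpoint by default is an assumption of the sketch, since the slide only requires a value in the half-open interval.

```python
def minimize_makespan(search, lower, upper, pick=lambda lo, hi: (lo + hi) // 2):
    """Shrink [lower, upper] until both bounds meet at the optimal makespan.

    search(value) must return the makespan of a solution with
    makespan <= value, or None if no such solution exists.
    pick(lo, hi) must return a value in [lo, hi).
    """
    while lower < upper:
        value = pick(lower, upper)
        found = search(value)
        if found is not None:
            upper = found          # a solution exists: tighten the upper bound
        else:
            lower = value + 1      # proven infeasible: raise the lower bound
    return lower
```

Each iteration strictly shrinks the interval, because a solution has makespan at most `value < upper` and a failure moves `lower` past `value`.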

P-JSSP: Makespan minimization
• Example
  – start with $\mathit{makespan}_{\min}$ obtained by propagation
  – until a solution has been found, increment the tested value by $\min(2^{i-1}, (\mathit{makespan}_{\max} - \mathit{makespan}_{\min}) / 2)$ (after the $i$th iteration)
  – after a solution has been found, set the tested value to the middle of the interval, $\mathit{makespan}_{\min} + (\mathit{makespan}_{\max} - \mathit{makespan}_{\min}) / 2$
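One possible reading of this example strategy is sketched below as a function that computes the next value to test. The integer division, the clamping into $[\mathit{makespan}_{\min}, \mathit{makespan}_{\max})$, and the bisection step after the first solution are assumptions of this sketch; the name `next_value` is hypothetical.

```python
def next_value(value, i, lower, upper, solution_found):
    """Value to test after the i-th iteration (i starts at 1).

    lower and upper are the bounds already updated with the outcome of the
    i-th iteration; value is the value that was tested in that iteration.
    """
    if solution_found:
        # Once a solution is known, bisect the remaining interval.
        return lower + (upper - lower) // 2
    # Before any solution is known, take steps that double each iteration,
    # capped at half the width of the remaining interval.
    step = min(2 ** (i - 1), (upper - lower) // 2)
    # Keep the result inside [lower, upper).
    return max(lower, min(value + step, upper - 1))
```

For instance, with bounds 100 and 200 and three consecutive failures, the tested values are 100, 101, 103 and then 107; if the test at 107 yields a solution of makespan 106, the next tested value is 105.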

P-JSSP: Dominance properties
• Given an optimal schedule $S$, the "due-date" of an operation $O$ in $S$ is defined as:
  – the makespan of $S$ if $O$ is the last operation of its job
  – the start time of the following operation otherwise
• There exists an optimal schedule $J(S)$ such that $\forall M$, $\forall A \in \mathrm{ops}(M)$, $\forall B \in \mathrm{ops}(M) \setminus \{A\}$, $\forall t$, if $A$ executes at $t$ while $B$ is available, then the due-date of $A$ in $S$ is smaller than or equal to the due-date of $B$ in $S$
• Proof (by construction)
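The due-date definition above is easy to compute from a given schedule. The sketch below assumes a schedule stored as a dictionary from 0-based operation indices `(i, j)` to sets of time slots and a list `n_ops` giving the number of operations per job; both names and the example schedule are hypothetical.

```python
def due_dates(n_ops, sets):
    """Due-date of every operation in the schedule `sets`.

    n_ops[i] is the number of operations of job i;
    sets[(i, j)] is the set of time slots in which O_ij executes.
    """
    makespan = max(max(sets[(i, n - 1)]) + 1 for i, n in enumerate(n_ops))
    due = {}
    for i, n in enumerate(n_ops):
        for j in range(n):
            if j == n - 1:
                due[(i, j)] = makespan                # last operation of its job
            else:
                due[(i, j)] = min(sets[(i, j + 1)])   # start of the next operation
    return due

# Hypothetical schedule of two jobs with two operations each.
SCHEDULE = {(0, 0): {0, 2}, (0, 1): {4}, (1, 0): {1}, (1, 1): {2, 3}}
```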

P-JSSP: Dominance properties
• Applications
  – The set $\mathrm{candidates}(M, t)$ is reduced
  – When $O$ is set to execute at time $t$, $O$ is set to execute up to either $\mathrm{end}_{\min}(O)$ or $\mathrm{start}_{\min}(O')$ for an operation $O'$ not available at time $t$
  – A redundant constraint (cut) is added for each operation $O'$ available at time $t$:
    • $\mathrm{end}(O) + \text{remaining-duration}(O') \le \mathrm{end}(O')$
    • $\mathrm{end}(O) \le \mathrm{start}(O')$ if $O'$ is not started at time $t$

P-JSSP: Dominance properties
• There exists an optimal schedule such that $\forall M$, $\forall A = O_{im(i)} \in \mathrm{ops}(M)$, $\forall B \in \mathrm{ops}(M) \setminus \{A\}$ such that either $B \notin \text{final-ops}(M)$ or $B = O_{jm(j)}$ with $j < i$, $\forall t$, $A$ does not execute at $t$ if $B$ is available
• Proof: direct consequence of the previous result

P-JSSP: Dominance properties
• Applications
  – If $\mathrm{candidates}(M, t)$ contains at least one non-final operation, final operations are removed from $\mathrm{candidates}(M, t)$
  – Otherwise, $\mathrm{candidates}(M, t)$ is reduced to a unique final operation
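The two applications above amount to a filter on the candidate set. In this sketch the unique final operation that is kept is the one whose job has the smallest index, which is how the condition $j < i$ in the preceding dominance property is read here; the function name and data layout are hypothetical.

```python
def reduce_candidates(cands, n_ops):
    """Apply the final-operations dominance rule to a candidate list.

    cands is a list of operations (i, j) with 0-based indices;
    n_ops[i] is the number of operations of job i.
    """
    non_final = [(i, j) for (i, j) in cands if j != n_ops[i] - 1]
    if non_final:
        # Final operations wait while any non-final operation is a candidate.
        return non_final
    if cands:
        # Only final operations remain: keep the one with the smallest job index.
        return [min(cands)]
    return []
```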

P-JSSP: Dominance properties
• Combination of the dominance rules
  – Use the "final operations" dominance rules
  – Among non-final operations, use "Jackson's preemptive schedule" dominance rules

P-JSSP: Heuristics
• Selection of the pair $(M, t)$
  – Chronological scheduling
  – Select a machine on which non-final operations remain
• Branch exploration ordering
  – Select the branch on which the operation with the smallest $\mathrm{end}_{\max}$ is scheduled (EDD)
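The EDD branch ordering can be sketched as a sort of the candidates by their latest end time. The dictionary `end_max`, standing for the current latest end time of each operation, is a hypothetical stand-in for whatever the constraint solver maintains.

```python
def edd_order(cands, end_max):
    """Order candidate operations by increasing latest end time (EDD).

    The first element is the branch to explore first.
    """
    return sorted(cands, key=lambda op: end_max[op])
```

Passing a dictionary of due-dates taken from a previous schedule instead of `end_max` gives the alternative ordering described under the heuristic variants.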

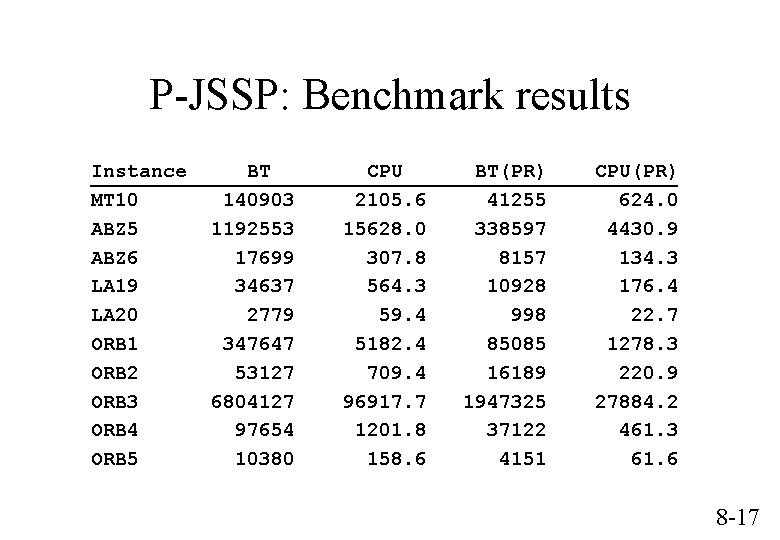

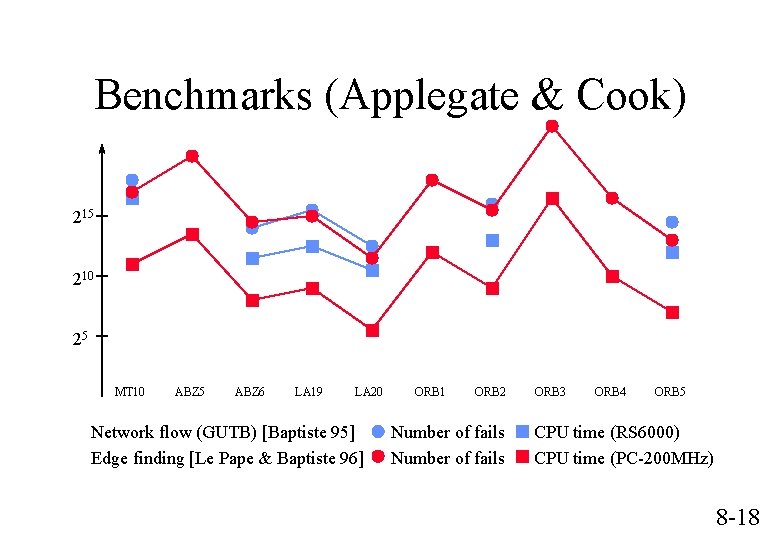

P-JSSP: Benchmark results

| Instance | BT | CPU | BT(PR) | CPU(PR) |
|---|---:|---:|---:|---:|
| MT10 | 140903 | 2105.6 | 41255 | 624.0 |
| ABZ5 | 1192553 | 15628.0 | 338597 | 4430.9 |
| ABZ6 | 17699 | 307.8 | 8157 | 134.3 |
| LA19 | 34637 | 564.3 | 10928 | 176.4 |
| LA20 | 2779 | 59.4 | 998 | 22.7 |
| ORB1 | 347647 | 5182.4 | 85085 | 1278.3 |
| ORB2 | 53127 | 709.4 | 16189 | 220.9 |
| ORB3 | 6804127 | 96917.7 | 1947325 | 27884.2 |
| ORB4 | 97654 | 1201.8 | 37122 | 461.3 |
| ORB5 | 10380 | 158.6 | 4151 | 61.6 |

Benchmarks (Applegate & Cook)
(Figure. Instances: MT10, ABZ5, ABZ6, LA19, LA20, ORB1, ORB2, ORB3, ORB4, ORB5. Series: network flow (GUTB) [Baptiste 95]; edge finding [Le Pape & Baptiste 96]. Axes: number of fails; CPU time (RS 6000); CPU time (PC-200 MHz).)

Heuristic variants (1)
Constraint propagation
• Costly edge-finding algorithm
• Less costly but less effective timetable algorithm

Heuristic variants (2)
Branch exploration ordering heuristic
• EDD as in the exact algorithm
• Use the previous schedule $S$ to guide the search, i.e., use the due-date of $O$ in $S$ rather than the current latest end time of $O$

Heuristic variants (3)
Local optimization
• Restart the search with a new bound on the makespan each time a solution is found
• Each time a new solution is found, use the operator $J$ and its symmetric counterpart $K$ to improve the schedule; restart the search with a new bound on the makespan when $J$ and $K$ become ineffective

Heuristic variants (4)
Overall search strategy
• Use standard chronological backtracking
• Use limited discrepancy search
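For readers unfamiliar with limited discrepancy search, the sketch below shows the general idea on an abstract search tree: the tree is probed repeatedly, each time allowing one more deviation from the branch the ordering heuristic prefers. It is a generic illustration, not the variant used for the reported results; the callables `children` and `is_goal` are hypothetical, and every non-preferred child is counted as one discrepancy.

```python
def lds(root, children, is_goal, max_discrepancies):
    """Limited discrepancy search over an abstract tree.

    children(node) returns the child nodes, best-ranked first.
    Returns (goal_node, discrepancies_allowed) or None.
    """
    def probe(node, k):
        if is_goal(node):
            return node
        for rank, child in enumerate(children(node)):
            cost = 0 if rank == 0 else 1      # deviating from the heuristic costs 1
            if cost > k:
                break                          # no discrepancy budget left
            result = probe(child, k - cost)
            if result is not None:
                return result
        return None

    # Allow 0, then 1, then 2, ... deviations from the heuristic.
    for k in range(max_discrepancies + 1):
        result = probe(root, k)
        if result is not None:
            return result, k
    return None

def binary_children(node, depth=3):
    """Toy tree: nodes are bit tuples, and the heuristic prefers 0 over 1."""
    return [node + (0,), node + (1,)] if len(node) < depth else []
```

In this simple form, the probe with budget `k` revisits the leaves already reached with smaller budgets.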

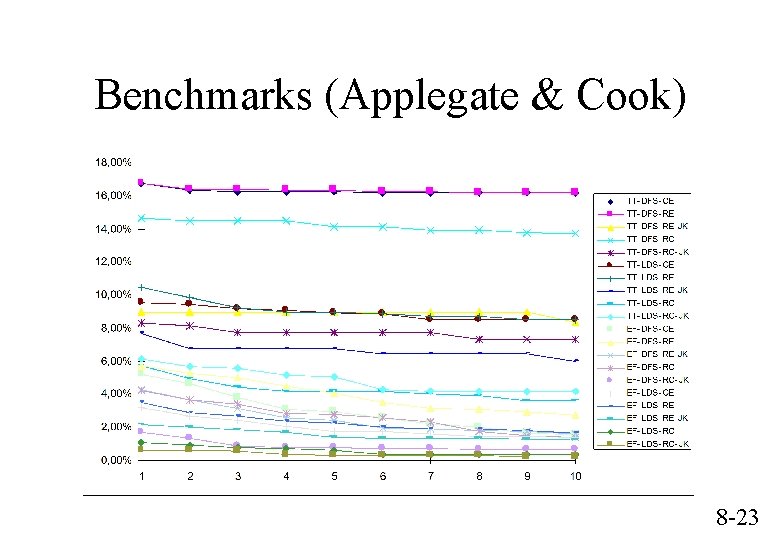

Benchmarks (Applegate & Cook)

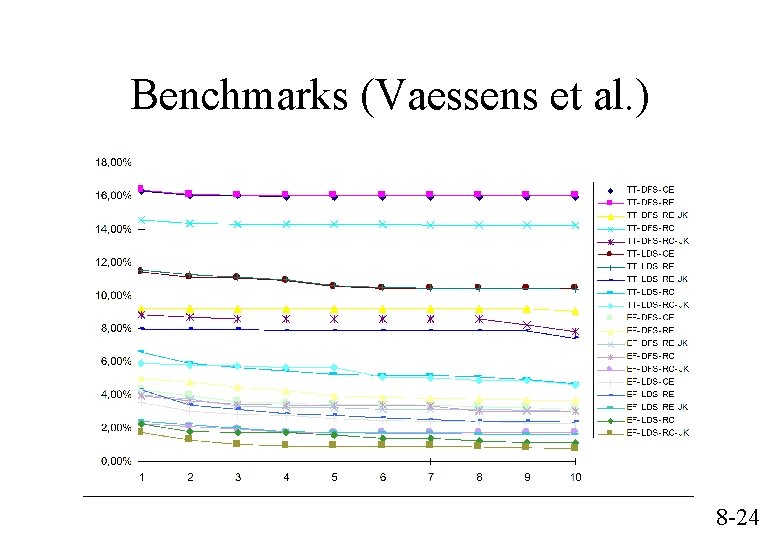

Benchmarks (Vaessens et al.)

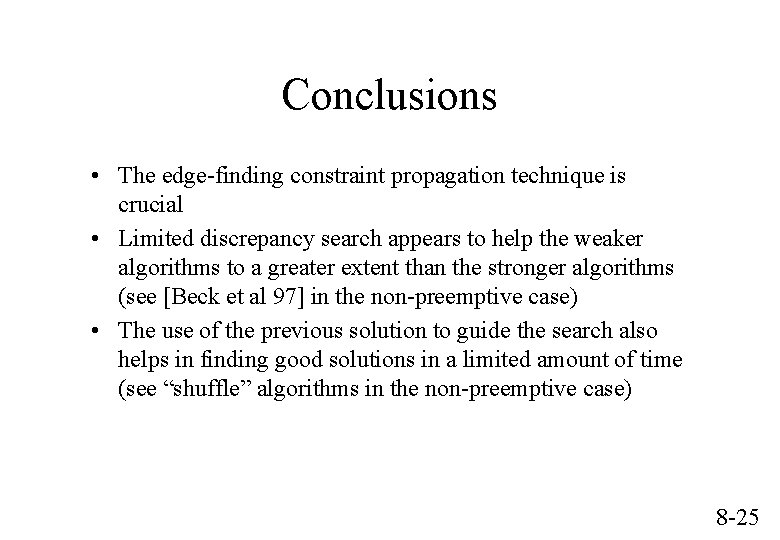

Conclusions
• The edge-finding constraint propagation technique is crucial
• Limited discrepancy search appears to help the weaker algorithms to a greater extent than the stronger algorithms (see [Beck et al 97] in the non-preemptive case)
• The use of the previous solution to guide the search also helps in finding good solutions in a limited amount of time (see “shuffle” algorithms in the non-preemptive case)
