Optimal Lower Bounds for 2-Query Locally Decodable Linear Codes
Kenji Obata

Codes
Error-correcting code $C : \{0,1\}^n \to \{0,1\}^m$ with decoding procedure $A$ such that, for all $y \in \{0,1\}^m$ with $d(y, C(x)) \le \delta m$, $A(y) = x$.

“Locally Decodable” Codes
• Weaken the power of $A$: it can only look at a constant number $q$ of input bits
• Weaken the requirements:
  § $A$ need only recover a single given bit of $x$
  § $A$ can fail with some probability bounded away from ½
Study initiated by Katz and Trevisan [KT00]

“Locally Decodable” Codes
Define a $(q, \delta, \varepsilon)$-locally decodable code:
• $A$ can make $\le q$ queries (w.l.o.g. exactly $q$ queries)
• For all $x \in \{0,1\}^n$, all $y \in \{0,1\}^m$ with $d(y, C(x)) \le \delta m$, and all input bits $i \in \{1, \dots, n\}$: $A(y, i) = x_i$ with probability $\ge \tfrac12 + \varepsilon$

LDC Applications
• Direct: scalable fault-tolerant information storage
• Indirect: lower bounds for certain classes of private information retrieval schemes (more on this later)

Lower Bounds for LDCs
• [KT00] proved a general lower bound $m \ge n^{q/(q-1)}$ (at best $n^2$, whereas the known codes have exponential length)
• For 2-query linear LDCs, Goldreich, Karloff, Schulman, and Trevisan [GKST02] proved an exponential bound $m \ge 2^{\Omega(\varepsilon\delta n)}$

Lower Bounds for LDCs
• The restriction to linear codes is interesting, since the known LDC constructions are linear
• But $2^{\Omega(\varepsilon\delta n)}$ is not quite right:
  – The lower bound should increase arbitrarily as the decoding probability → 1 ($\varepsilon \to \tfrac12$)
  – There is no matching construction

Lower Bounds for LDCs
• In this work, we prove that for 2-query linear LDCs, $m \ge 2^{\Omega(\delta n/(1-2\varepsilon))}$
• Optimal: there is an LDC construction matching this within a constant factor in the exponent

Techniques from [KT00]
• Fact: an LDC is also a “smooth” code ($A$ queries each position with roughly the same probability), so we can study smooth codes
• Connects LDCs to information-theoretic PIR schemes:
  § $q$ queries ↔ $q$ servers
  § smoothness ↔ statistical indistinguishability

Techniques from [KT00]
• For $i \in \{1, \dots, n\}$, define the recovery graph $G_i$ associated with $C$:
  1. The vertex set is $\{1, \dots, m\}$ (the bits of the codeword)
  2. The edges are pairs $(q_1, q_2)$ such that, conditioned on $A$ querying $q_1, q_2$, $A(C(x), i)$ outputs $x_i$ with probability $> \tfrac12$
• Call these edges good edges (their endpoints contain information about $x_i$)

Techniques from [KT00]/[GKST02]
• Theorem: If $C$ is $(2, c, \varepsilon)$-smooth, then $G_i$ contains a matching of size $\ge \varepsilon m / c$.
• Better to work with non-degenerate codes
  § Each bit of the encoding depends on more than one bit of the message
  § For linear codes, good edges are non-trivial linear combinations
• Fact: Any smooth code can be made non-degenerate (with constant loss in parameters).

Core Lemma [GKST02]
Let $q_1, \dots, q_m$ be linear functions on $\{0,1\}^n$ such that for every $i \in \{1, \dots, n\}$ there is a set $M_i$ of at least $\gamma m$ disjoint pairs of indices $(j_1, j_2)$ with $x_i = q_{j_1}(x) + q_{j_2}(x)$. Then $m \ge 2^{\gamma n}$.
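As a sanity check of the lemma's hypothesis, the sketch below verifies it by brute force for the Hadamard code, where $q_a(x) = a \cdot x$ over GF(2) and the pairs $(a, a \oplus e_i)$ recover $x_i$. The function names and the bitmask representation are illustrative assumptions, not part of the slides.

```python
def parity(v):
    """Parity of the set bits of v, i.e. an inner product over GF(2)."""
    return bin(v).count("1") % 2

def matching_for_bit(n, i):
    """Disjoint index pairs (a, a XOR e_i); each index a is a bitmask in {0,1}^n."""
    e_i = 1 << i
    return [(a, a ^ e_i) for a in range(2 ** n) if a < (a ^ e_i)]

def check_core_lemma_hypothesis(n):
    """Return (m, gamma) for the Hadamard code, asserting the lemma's hypothesis."""
    m = 2 ** n
    gamma = 1.0
    for i in range(n):
        pairs = matching_for_bit(n, i)
        used = [j for pair in pairs for j in pair]
        assert len(used) == len(set(used))  # the pairs are disjoint
        for x in range(m):
            x_i = (x >> i) & 1
            for a, b in pairs:
                # q_a(x) + q_b(x) = x_i over GF(2)
                assert (parity(a & x) ^ parity(b & x)) == x_i
        gamma = min(gamma, len(pairs) / m)
    return m, gamma

m, gamma = check_core_lemma_hypothesis(3)
print(m, gamma, m >= 2 ** (gamma * 3))
```

Here every $M_i$ is a perfect matching, so $\gamma = 1/2$, and the conclusion $m = 2^n \ge 2^{n/2}$ holds with room to spare.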

Putting it all together…
• If $C$ is a $(2, c, \varepsilon)$-smooth linear code, then (by reduction to a non-degenerate code + existence of large matchings + core lemma), $m \ge 2^{\varepsilon n/(4c)}$.
• If $C$ is a $(2, \delta, \varepsilon)$-locally decodable linear code, then (by the LDC → smooth reduction), $m \ge 2^{\varepsilon\delta n/8}$.

Putting it all together…
• Summary: locally decodable → smooth → big matchings → exponential size
• This work: locally decodable → big matchings (skip the smoothness reduction and argue directly about LDCs)

The Blocking Game
• Let $G = (V, E)$ be a graph on $n$ vertices, $w$ a probability distribution on $E$, $X_w$ an edge sampled according to $w$, and $S$ a subset of $V$
• Define the blocking probability $\beta_\delta(G) = \min_w \max_{|S| \le \delta n} \Pr(X_w \text{ intersects } S)$
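To make the definition concrete, here is a minimal brute-force sketch of the inner maximum for one fixed distribution $w$. It does not perform the outer minimization over $w$, so it only gives an upper bound on $\beta_\delta(G)$. The name `blocking_value` and the dict-of-edges representation are assumptions made for illustration; `budget` plays the role of $\lfloor \delta n \rfloor$.

```python
from itertools import combinations

def blocking_value(n_vertices, weights, budget):
    """Max over vertex sets S with |S| <= budget of Pr[X_w intersects S].

    weights maps each edge (u, v) to its probability under w.
    Exhaustive search, so only suitable for very small graphs.
    """
    best = 0.0
    for size in range(budget + 1):
        for subset in combinations(range(n_vertices), size):
            s = set(subset)
            hit = sum(p for (u, v), p in weights.items() if u in s or v in s)
            best = max(best, hit)
    return best

# Uniform distribution on a perfect matching of 4 vertices:
# one blocked vertex can hit only one of the two edges.
w = {(0, 1): 0.5, (2, 3): 0.5}
print(blocking_value(4, w, 1))
```

For the perfect matching above with a budget of one vertex, the value is 1/2; with a budget of two vertices, every edge can be hit.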

The Blocking Game
• Want to characterize $\beta_\delta(G)$ in terms of the size of a maximum matching $M(G)$, or equivalently the defect $d(G) = n - 2M(G)$
• Theorem: Let $G$ be a graph with defect $\alpha n$. Then $\beta_\delta(G) \ge \min(\delta/(1-\alpha), 1)$.
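The quantities in the theorem can be computed directly on toy graphs. The sketch below finds the maximum matching size by exhaustive search, derives the defect $d(G) = n - 2M(G)$, and evaluates the stated bound $\min(\delta/(1-\alpha), 1)$. All function names are hypothetical, and the exponential-time search is only meant for tiny examples.

```python
def max_matching_size(edges):
    """Size of a maximum matching, by exhaustive branching (small graphs only)."""
    if not edges:
        return 0
    (u, v), rest = edges[0], edges[1:]
    # Either skip the first edge, or take it and drop every edge touching u or v.
    skip = max_matching_size(rest)
    take = 1 + max_matching_size([e for e in rest if u not in e and v not in e])
    return max(skip, take)

def defect(n_vertices, edges):
    """d(G) = n - 2 M(G): the number of vertices left unmatched."""
    return n_vertices - 2 * max_matching_size(edges)

def blocking_lower_bound(delta, alpha):
    """The theorem's bound min(delta / (1 - alpha), 1)."""
    if delta >= 1 - alpha:
        return 1.0
    return delta / (1 - alpha)

star = [(0, 1), (0, 2), (0, 3)]   # star on 4 vertices, centre 0
alpha = defect(4, star) / 4       # defect 2, so alpha = 0.5
print(alpha, blocking_lower_bound(0.25, alpha))
```

On the star, blocking the centre hits every edge, so the blocking probability with $\delta = 1/4$ is 1, comfortably above the bound of 1/2.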

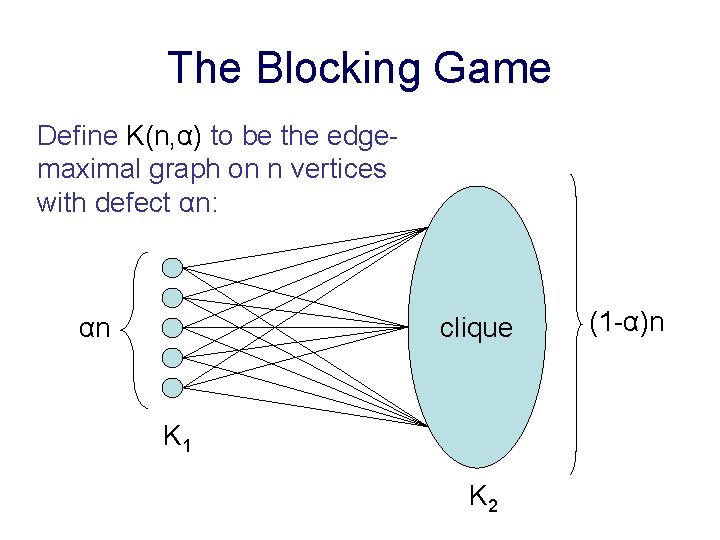

The Blocking Game
Define $K(n, \alpha)$ to be the edge-maximal graph on $n$ vertices with defect $\alpha n$.
[Figure labels: parts $K_1$ and $K_2$; “clique”; sizes $\alpha n$ and $(1-\alpha)n$.]

The Blocking Game
• Optimization on $K(n, \alpha)$ is a relaxation of optimization on any graph with defect $\alpha n$
• If $d(G) \ge \alpha n$ then $\beta_\delta(G) \ge \beta_\delta(K(n, \alpha))$
• So it is enough to think about $K(n, \alpha)$.

The Blocking Game
• Intuitively, the best strategy for player 1 is to spread the distribution as uniformly as possible
• A $(\lambda_1, \lambda_2)$-symmetric distribution:
  § all edges in $(K_1, K_2)$ have weight $\lambda_1$
  § all edges in $(K_2, K_2)$ have weight $\lambda_2$
• Lemma: There exists a $(\lambda_1, \lambda_2)$-symmetric distribution $w$ such that $\beta_\delta(K(n, \alpha)) = \max_{|S| \le \delta n} \Pr(X_w \text{ intersects } S)$.

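The Blocking Game
• Claim: Let $w_1, \dots, w_k$ be distributions such that $\max_{|S| \le \delta n} \Pr(X_{w_i} \text{ intersects } S) = \beta_\delta(G)$. Then for any convex combination $w = \sum_i \gamma_i w_i$, $\max_{|S| \le \delta n} \Pr(X_w \text{ intersects } S) = \beta_\delta(G)$.
• Proof: For any $S \subseteq V$ with $|S| \le \delta n$, the intersection probability under $w$ is $\sum_i \gamma_i \Pr(X_{w_i} \text{ intersects } S) \le \sum_i \gamma_i \beta_\delta(G) = \beta_\delta(G)$. So $\max_{|S| \le \delta n} \Pr(X_w \text{ intersects } S) \le \beta_\delta(G)$. But by the definition of $\beta_\delta(G)$ as a minimum over distributions, this must also be $\ge \beta_\delta(G)$.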

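The Blocking Game
• Proof (of the lemma): Let $w'$ be any distribution optimizing $\beta_\delta(G)$. If $w'$ does, then so does $\pi(w')$ for every $\pi \in \mathrm{Aut}(G) = \Gamma$. By the prior claim, so does $w = \frac{1}{|\Gamma|} \sum_{\pi \in \Gamma} \pi(w')$. For $e \in E$ and $\sigma \in \Gamma$,
  $w(e) = \frac{1}{|\Gamma|} \sum_{\pi \in \Gamma} w'(\pi(e)) = \frac{1}{|\Gamma|} \sum_{\pi \in \Gamma} w'(\pi\sigma(e)) = w(\sigma(e))$.
  So if $e, e'$ are in the same $\Gamma$-orbit, they have the same weight in $w$, and hence $w$ is $(\lambda_1, \lambda_2)$-symmetric.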

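The Blocking Game
• Claim: If $w$ is $(\lambda_1, \lambda_2)$-symmetric, then there exists $S \subseteq V$ with $|S| \le \delta n$ such that $\Pr(X_w \text{ intersects } S) \ge \min(\delta/(1-\alpha), 1)$.
• Proof: If $\delta \ge 1 - \alpha$ then $S$ can cover every edge. Otherwise, let $S$ be any $\delta n$ vertices of $K_2$. Then $\Pr = \delta \left( \frac{1}{1-\alpha} + \tfrac12 n^2 (1 - \alpha - \delta) \lambda_2 \right)$, which for $\delta < 1 - \alpha$ is at least $\delta/(1-\alpha)$ (the minimum is attained at $\lambda_2 = 0$).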

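The Blocking Game
• Theorem: Let $G$ be a graph with defect $\alpha n$. Then $\beta_\delta(G) \ge \min(\delta/(1-\alpha), 1)$.
• Proof: $\beta_\delta(G) \ge \beta_\delta(K(n, \alpha))$. The blocking probability on $K(n, \alpha)$ is optimized by some $(\lambda_1, \lambda_2)$-symmetric distribution. For any such distribution $w$, there exist $\delta n$ vertices blocking $w$ with probability $\ge \min(\delta/(1-\alpha), 1)$.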

Lower Bound for LDLCs
• Still need a degenerate → non-degenerate reduction (this time for LDCs instead of smooth codes)
• Theorem: Let $C$ be a $(2, \delta, \varepsilon)$-locally decodable linear code. Then, for large enough $n$, there exists a non-degenerate $(2, \delta/2.01, \varepsilon)$-locally decodable linear code $C' : \{0,1\}^n \to \{0,1\}^{2m}$.

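Lower Bound for LDLCs
• Theorem: Let $C$ be a $(2, \delta, \varepsilon)$-LDLC. Then, for large enough $n$, $m \ge 2^{\frac{1}{4.03} \cdot \frac{\delta}{1-2\varepsilon} n}$.
Proof:
• Make $C$ non-degenerate
• Local decodability
  ⇒ low blocking probability (at most $\tfrac14 - \tfrac12\varepsilon$)
  ⇒ low defect ($\alpha \le 1 - \frac{\delta/2.01}{1-2\varepsilon}$)
  ⇒ big matching (of size $\tfrac12 \cdot \frac{\delta/2.01}{1-2\varepsilon} \cdot 2m$)
  ⇒ exponentially long encoding ($m \ge 2^{\frac{1}{4.02} \cdot \frac{\delta}{1-2\varepsilon} n - 1}$)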

Matching Upper Bound
• Hadamard code on $\{0,1\}^n$
  § $y_i = a_i \cdot x$ (where $a_i$ runs through $\{0,1\}^n$)
  § 2-query locally decodable
  § Recovery graphs are perfect matchings on the $n$-dimensional hypercube
  § Success parameter $\varepsilon = \tfrac12 - 2\delta$
  § Can use concatenated Hadamard codes (Trevisan):
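The 2-query decoder for the Hadamard code can be sketched as follows: to recover $x_i$, pick a uniformly random $a$, query positions $a$ and $a \oplus e_i$, and XOR the answers. The helper names below (`hadamard_encode`, `decode_bit`, `success_fraction`) are illustrative assumptions; `success_fraction` enumerates all choices of $a$ so that the decoder's success probability on a corrupted word can be computed exactly rather than estimated.

```python
import random

def parity(v):
    """Parity of the set bits of v, i.e. an inner product over GF(2)."""
    return bin(v).count("1") % 2

def hadamard_encode(x_bits):
    """Codeword of length 2^n: position a holds a . x over GF(2)."""
    n = len(x_bits)
    x = sum(bit << k for k, bit in enumerate(x_bits))
    return [parity(a & x) for a in range(2 ** n)]

def decode_bit(y, n, i, rng=random):
    """Two queries: a random position a and its neighbour a XOR e_i."""
    a = rng.randrange(2 ** n)
    return y[a] ^ y[a ^ (1 << i)]

def success_fraction(y, x_bits, i):
    """Exact probability, over the decoder's random a, of outputting x_i."""
    n = len(x_bits)
    good = sum(1 for a in range(2 ** n)
               if (y[a] ^ y[a ^ (1 << i)]) == x_bits[i])
    return good / 2 ** n

x = [1, 0, 1, 1]
y = hadamard_encode(x)
y[3] ^= 1   # corrupt 2 of the 16 positions (delta = 1/8)
y[10] ^= 1
print([success_fraction(y, x, i) for i in range(4)])
```

Each query is individually uniform, so a union bound gives failure probability at most $2\delta$, which is the source of the success parameter $\varepsilon = \tfrac12 - 2\delta$ stated above.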

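Matching Upper Bound
• Set $c = (1-2\varepsilon)/(4\delta)$ (it can be shown that $c \ge 1$ for feasible values of $\delta, \varepsilon$).
• Divide the input into $c$ blocks of $n/c$ bits and encode each block with the Hadamard code on $\{0,1\}^{n/c}$.
• Each block has a fraction $\le c\delta$ of corrupt entries, so the code has recovery parameter $\tfrac12 - 2c\delta = \tfrac12 - 2 \cdot \frac{1-2\varepsilon}{4\delta} \cdot \delta = \varepsilon$
• The code has length $c \cdot 2^{n/c} = \frac{1-2\varepsilon}{4\delta} \, 2^{4\delta n/(1-2\varepsilon)}$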
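A small numeric comparison of the two bounds, as a sketch: it evaluates $\log_2$ of the concatenated Hadamard length $c \cdot 2^{n/c}$ and of the lower bound $2^{\frac{1}{4.03} \cdot \frac{\delta}{1-2\varepsilon} n}$ from the preceding slides. The function names are hypothetical, and $c$ is treated as a real number here, ignoring the requirement that it be an integer dividing $n$.

```python
import math

def concatenated_hadamard_log2_length(n, delta, eps):
    """log2 of c * 2^(n/c), with c = (1 - 2*eps) / (4*delta)."""
    c = (1 - 2 * eps) / (4 * delta)
    return math.log2(c) + n / c

def lower_bound_log2_length(n, delta, eps):
    """log2 of the lower bound: (1/4.03) * delta / (1 - 2*eps) * n."""
    return (1 / 4.03) * delta / (1 - 2 * eps) * n

n, delta, eps = 1000, 0.05, 0.3   # eps <= 1/2 - 2*delta, so c >= 1
upper = concatenated_hadamard_log2_length(n, delta, eps)
lower = lower_bound_log2_length(n, delta, eps)
print(upper, lower, upper / lower)
```

With these sample parameters $c = 2$, so the construction has length $2 \cdot 2^{500}$. The two exponents are both linear in $\delta n/(1-2\varepsilon)$ and differ only by a constant factor (about $4 \times 4.03$), which is the sense in which the bound is optimal.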

Conclusions
• There is a matching upper bound (concatenated Hadamard code)
• New results for 2-query non-linear codes (but using apparently completely different techniques)
• $q > 2$?
  – No analog to the core lemma for more queries
  – But the blocking game analysis might generalize to useful properties other than matching size
