
Optimal lower bounds for Locality Sensitive Hashing (except when q is tiny). Ryan O’Donnell (CMU, IAS). Joint work with Yi Wu (CMU, IBM) and Yuan Zhou (CMU).

![Locality Sensitive Hashing [Indyk–Motwani ’98]](http://slidetodoc.com/presentation_image_h/f5faaa8b26508a3b339ca95015a74b40/image-2.jpg)

Locality Sensitive Hashing [Indyk–Motwani ’98]. h: objects → sketches. H: family of hash functions h s.t. “similar” objects collide w/ high prob. and “dissimilar” objects collide w/ low prob.

Abbreviated history

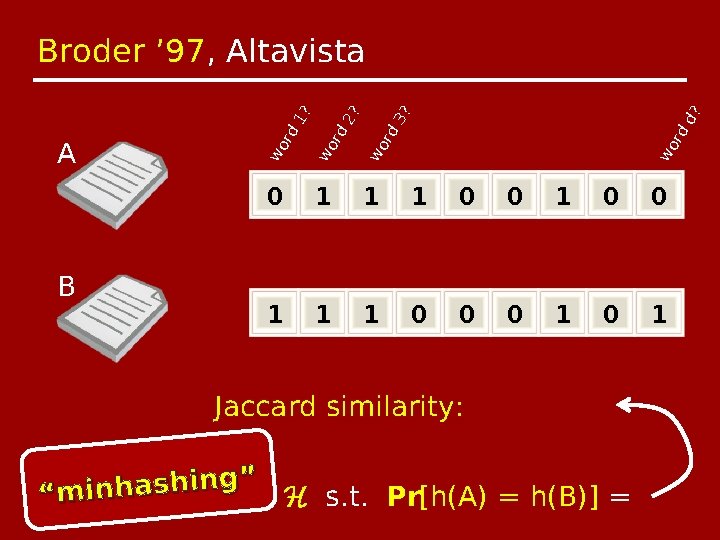

Broder ’97, Altavista. Jaccard similarity of sets A, B: $J(A,B) = |A \cap B| / |A \cup B|$. Invented simple H (“minhashing”) s.t. $\Pr[h(A) = h(B)] = J(A,B)$. (Figure: two word sets A and B, with bit vectors.)
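A minimal sketch of the minhash idea, assuming the standard construction (rank the universe by a random permutation and hash a set to its first element). The function names and the two word sets are illustrative only and are not taken from the slides. It estimates the collision probability empirically and compares it with the exact Jaccard similarity.

```python
import random

def jaccard(a, b):
    """Exact Jaccard similarity |A ∩ B| / |A ∪ B| of two non-empty sets."""
    a, b = set(a), set(b)
    return len(a & b) / len(a | b)

def make_minhash(universe, rng):
    """Draw one h from the family: a uniformly random ranking of the universe.
    h(A) is the element of A that comes first in that ranking."""
    order = list(universe)
    rng.shuffle(order)
    rank = {w: i for i, w in enumerate(order)}
    return lambda s: min(s, key=rank.__getitem__)

def estimate_collision_prob(a, b, trials=20000, seed=0):
    """Monte Carlo estimate of Pr[h(A) = h(B)] over random h."""
    rng = random.Random(seed)
    universe = sorted(set(a) | set(b))  # sorted for reproducibility
    hits = 0
    for _ in range(trials):
        h = make_minhash(universe, rng)
        hits += h(a) == h(b)
    return hits / trials

# Hypothetical example sets: 2 shared words out of 6 distinct words.
A = {"the", "quick", "brown", "fox"}
B = {"the", "lazy", "brown", "dog"}
exact = jaccard(A, B)
approx = estimate_collision_prob(A, B)
print(exact, approx)
```

The two printed numbers should be close: h(A) = h(B) exactly when the first-ranked element of A ∪ B lies in A ∩ B.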

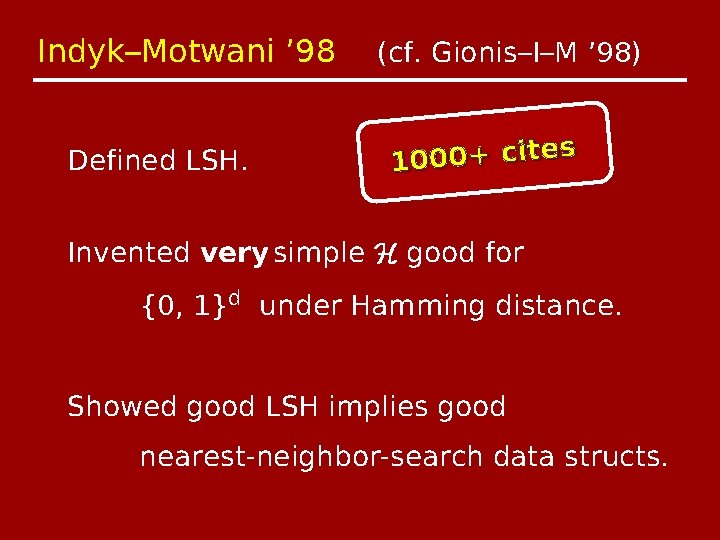

Indyk–Motwani ’98 (cf. Gionis–I–M ’98). 1000+ cites. Defined LSH. Invented very simple H good for $\{0,1\}^d$ under Hamming distance. Showed good LSH implies good nearest-neighbor-search data structs.

Charikar ’02, STOC. Proposed alternate H (“simhash”) for Jaccard similarity. Patented by Google.

Many papers about LSH

![Practice / Theory](http://slidetodoc.com/presentation_image_h/f5faaa8b26508a3b339ca95015a74b40/image-8.jpg)

Practice: Free code base [AI’04]; Sequence comparison in bioinformatics; Association-rule finding in data mining; Collaborative filtering; Clustering nouns by meaning in NLP; Pose estimation in vision; • • •

Theory: [Broder ’97]; [Indyk–Motwani ’98]; [Gionis–Indyk–Motwani ’98]; [Charikar ’02]; [Datar–Immorlica–Indyk–Mirrokni ’04]; [Motwani–Naor–Panigrahi ’06]; [Andoni–Indyk ’06]; [Tenesawa–Tanaka ’07]; [Andoni–Indyk ’08, CACM]; [Neylon ’10]

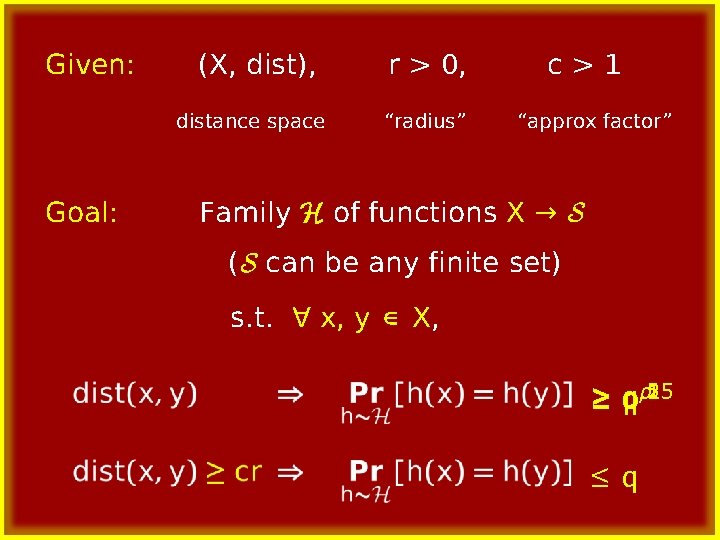

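Given: (X, dist), a distance space; $r > 0$, the “radius”; $c > 1$, the “approx. factor”.

Goal: a family H of functions $X \to S$ (S can be any finite set) s.t. $\forall x, y \in X$:

$\mathrm{dist}(x,y) \le r \Rightarrow \Pr_{h \sim H}[h(x) = h(y)] \ge p = q^{\rho}$,

$\mathrm{dist}(x,y) \ge cr \Rightarrow \Pr_{h \sim H}[h(x) = h(y)] \le q$.

(The slide illustrates with the sample values .5, .25 and .1.)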

![Theorem [IM’98, GIM’98]](http://slidetodoc.com/presentation_image_h/f5faaa8b26508a3b339ca95015a74b40/image-10.jpg)

Theorem [IM’98, GIM’98]: Given LSH family for (X, dist), can solve “(r, cr)-near-neighbor search” for n points with data structure of size $O(n^{1+\rho})$ and query time $\tilde{O}(n^{\rho})$ hash fcn evals.
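To make the data structure in the theorem concrete, here is a rough sketch of the usual multi-table LSH index, instantiated with bit sampling on $\{0,1\}^d$. The class name, the parameters `k` (hashes concatenated per table) and `L` (number of tables), and the toy data are assumptions for illustration. The standard analysis picks k around $\log_{1/q} n$ and L around $n^{\rho}$, which is where the size and query-time bounds come from. This sketch only builds the tables and returns colliding candidates.

```python
import random
from collections import defaultdict

class BitSamplingIndex:
    """Toy LSH index: L hash tables, each keyed by k randomly sampled coordinates."""

    def __init__(self, d, k, L, seed=0):
        rng = random.Random(seed)
        # One list of k sampled coordinates per table.
        self.coords = [[rng.randrange(d) for _ in range(k)] for _ in range(L)]
        self.tables = [defaultdict(list) for _ in range(L)]

    def _key(self, t, x):
        return tuple(x[i] for i in self.coords[t])

    def add(self, x):
        """Store point x (a tuple of bits) in every table."""
        for t, table in enumerate(self.tables):
            table[self._key(t, x)].append(x)

    def candidates(self, x):
        """All stored points that collide with x in at least one table."""
        found = set()
        for t, table in enumerate(self.tables):
            found.update(table.get(self._key(t, x), ()))
        return found

# Hypothetical toy data: 50 random points in {0,1}^16.
d = 16
data_rng = random.Random(42)
points = [tuple(data_rng.randrange(2) for _ in range(d)) for _ in range(50)]
index = BitSamplingIndex(d, k=4, L=8)
for pt in points:
    index.add(pt)

query = points[0]
antipode = tuple(1 - b for b in query)  # differs from query in every coordinate
print(query in index.candidates(query), query in index.candidates(antipode))
```

A real query would then compute exact distances to the candidates and return one within distance cr, if any.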

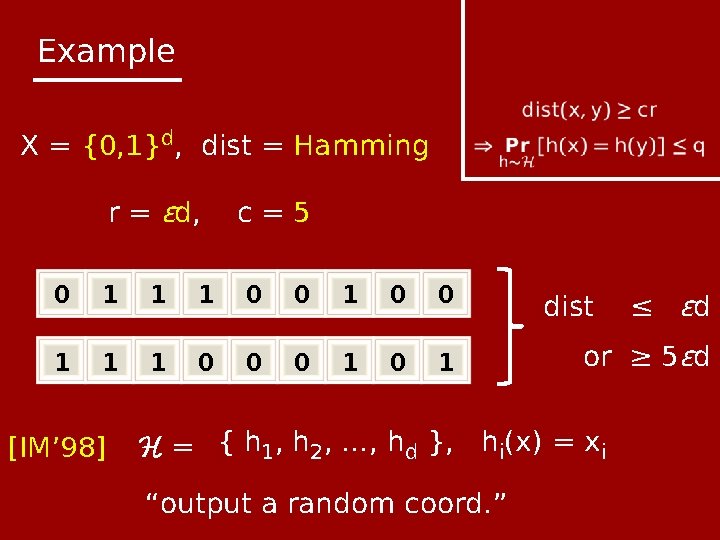

Example [IM’98]: $X = \{0,1\}^d$, dist = Hamming, $r = \epsilon d$, $c = 5$, so dist is either $\le \epsilon d$ or $\ge 5\epsilon d$. $H = \{h_1, h_2, \dots, h_d\}$, $h_i(x) = x_i$: “output a random coord.”
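As a quick numerical check of this example, the sketch below assumes the usual analysis of bit sampling: a random coordinate agrees with probability $1 - \mathrm{dist}(x,y)/d$, so $p = 1 - \epsilon$ and $q = 1 - c\epsilon$, and $\rho = \ln(1/p)/\ln(1/q)$ (equivalently $p = q^{\rho}$). The function names are illustrative.

```python
import math

def rho(p, q):
    """LSH exponent: the rho with p = q**rho, for 0 < q < p < 1."""
    return math.log(1 / p) / math.log(1 / q)

def bit_sampling_rho(eps, c):
    """rho for H = {x -> x_i} on {0,1}^d with r = eps*d (requires c*eps < 1)."""
    p = 1 - eps        # collision prob. when dist(x, y) = eps * d
    q = 1 - c * eps    # collision prob. when dist(x, y) = c * eps * d
    return rho(p, q)

for eps in (0.1, 0.01, 0.001):
    print(eps, bit_sampling_rho(eps, c=5))
```

The printed values stay below 1/c = 0.2 and approach it as ε shrinks, which matches the upper bound ρ ≤ 1/c attributed to [IM’98].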

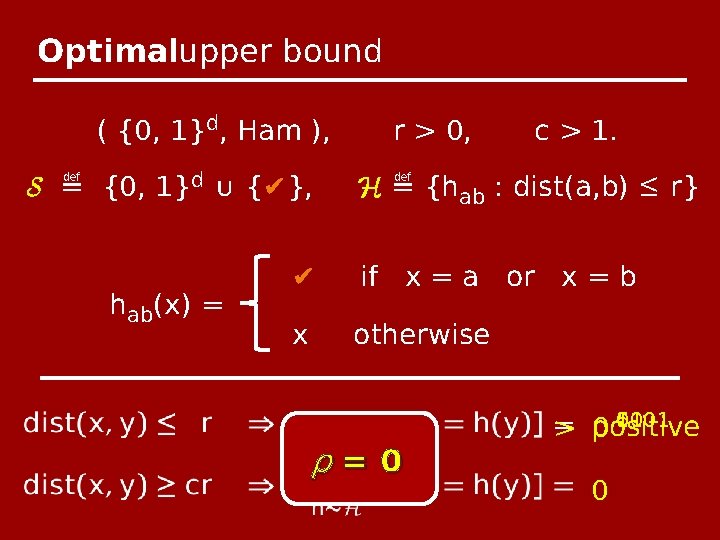

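Optimal upper bound. $(\{0,1\}^d, \mathrm{Ham})$, $r > 0$, $c > 1$. $S \overset{\text{def}}{=} \{0,1\}^d \cup \{\checkmark\}$, $H \overset{\text{def}}{=} \{h_{ab} : \mathrm{dist}(a,b) \le r\}$, where $h_{ab}(x) = \checkmark$ if $x = a$ or $x = b$, and $h_{ab}(x) = x$ otherwise.

Then $\mathrm{dist}(x,y) \le r \Rightarrow$ collision prob. is positive ($> 0$), while $\mathrm{dist}(x,y) \ge cr \Rightarrow$ collision prob. is 0. So $q = 0$ and $\rho = 0$. (Sample values on the slide: .5, .1, .01, .0001, 0.)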
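The trick can be checked exhaustively on a tiny cube. The sketch below assumes d = 4, r = 1, c = 2 (values chosen only for illustration) and draws $h_{ab}$ uniformly from all ordered pairs (a, b) with dist(a, b) ≤ r. It confirms that near pairs collide with small but positive probability and far pairs never collide.

```python
from itertools import product

CHECK = "✔"  # the extra output symbol added to the range S

def hamming(x, y):
    return sum(a != b for a, b in zip(x, y))

def h_ab(a, b, x):
    """The hash h_ab: collapse a and b to the check mark, fix everything else."""
    return CHECK if x in (a, b) else x

def collision_prob(x, y, family):
    """Pr over a uniformly random (a, b) in the family that h_ab(x) = h_ab(y)."""
    return sum(h_ab(a, b, x) == h_ab(a, b, y) for a, b in family) / len(family)

d, r, c = 4, 1, 2  # illustrative parameters, not from the slides
cube = list(product((0, 1), repeat=d))
family = [(a, b) for a in cube for b in cube if hamming(a, b) <= r]

near = [collision_prob(x, y, family)
        for x in cube for y in cube if 0 < hamming(x, y) <= r]
far = [collision_prob(x, y, family)
       for x in cube for y in cube if hamming(x, y) >= c * r]
print(min(near), max(far))
```

The positive probability is tiny (here 2 out of 80 hash functions), which is exactly the loophole the rest of the talk closes by requiring q not to be tiny.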

The End. Any questions?

![Wait, what?](http://slidetodoc.com/presentation_image_h/f5faaa8b26508a3b339ca95015a74b40/image-15.jpg)

Wait, what? Theorem [IM’98, GIM’98]: Given LSH family for (X, dist), can solve “(r, cr)-near-neighbor search” for n points with data structure of size $\tilde{O}(n^{1+\rho})$ and query time $\tilde{O}(n^{\rho})$ hash fcn evals.

![Wait, what?](http://slidetodoc.com/presentation_image_h/f5faaa8b26508a3b339ca95015a74b40/image-16.jpg)

Wait, what? Theorem [IM’98, GIM’98]: Given LSH family for (X, dist), can solve “(r, cr)-near-neighbor search” for n points with data structure of size $\tilde{O}(n^{1+\rho})$ and query time $\tilde{O}(n^{\rho})$ hash fcn evals, assuming $q \ge n^{-o(1)}$ (“not tiny”).

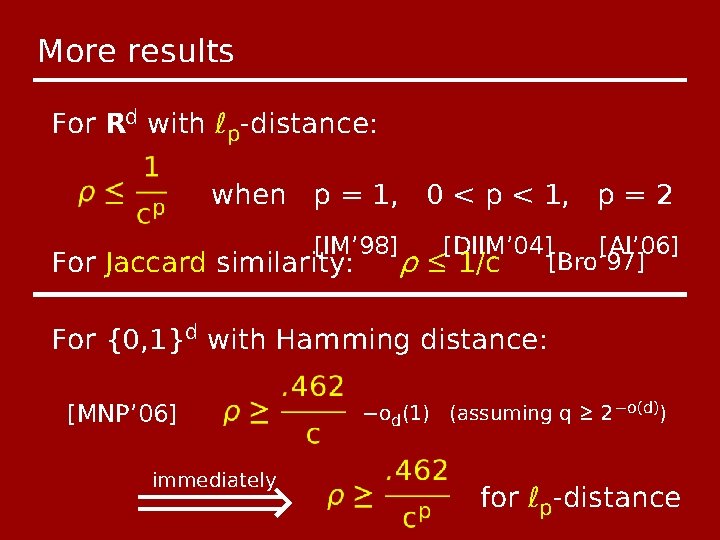

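More results.

For $\mathbb{R}^d$ with $\ell_p$-distance: upper bounds on $\rho$ when $p = 1$ [IM’98], when $0 < p < 1$ [DIIM’04], and when $p = 2$ [AI’06] (the bounds themselves appear only in the slide image).

For Jaccard similarity: $\rho \le 1/c$ [Bro’97].

For $\{0,1\}^d$ with Hamming distance: lower bound on $\rho$ from [MNP’06], up to $-o_d(1)$ (assuming $q \ge 2^{-o(d)}$); it immediately gives a lower bound for $\ell_p$-distance (formulas appear only in the slide image).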

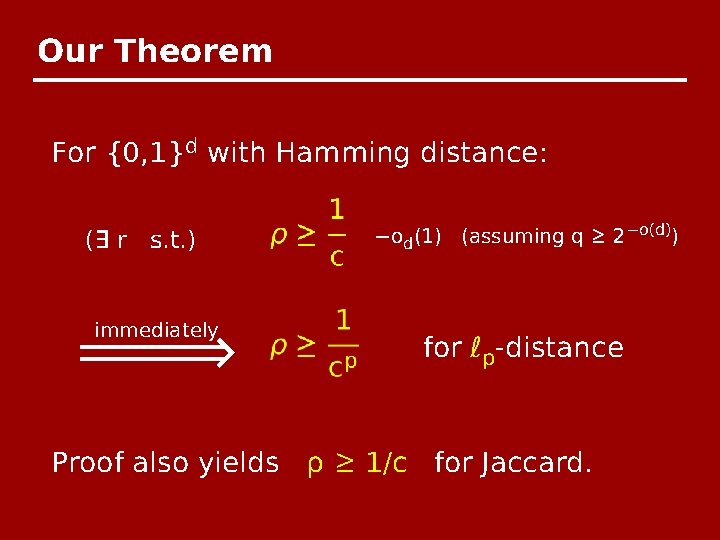

Our Theorem. For $\{0,1\}^d$ with Hamming distance: ($\exists\, r$ s.t.) $\rho \ge 1/c - o_d(1)$ (assuming $q \ge 2^{-o(d)}$). This immediately gives the analogous lower bound for $\ell_p$-distance. Proof also yields $\rho \ge 1/c$ for Jaccard.

Proof:

Proof: Noise-stability is log-convex.

Proof: A definition, and two lemmas.

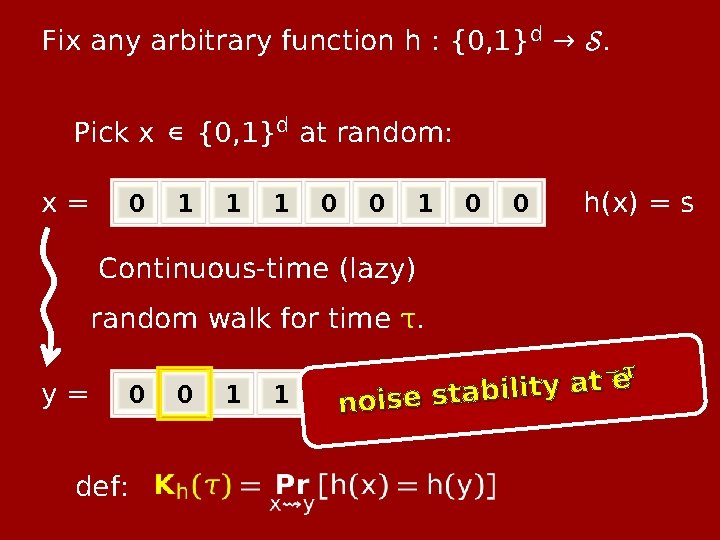

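Fix any arbitrary function $h : \{0,1\}^d \to S$. Pick $x \in \{0,1\}^d$ at random; say $h(x) = s$. Run the continuous-time (lazy) random walk from $x$ for time $\tau$ to reach $y$; say $h(y) = s'$. Write $x \sim_\tau y$ for such a pair.

def: $K_h(\tau) = \Pr_{x \sim_\tau y}[h(x) = h(y)]$, the “noise stability at $e^{-\tau}$”.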

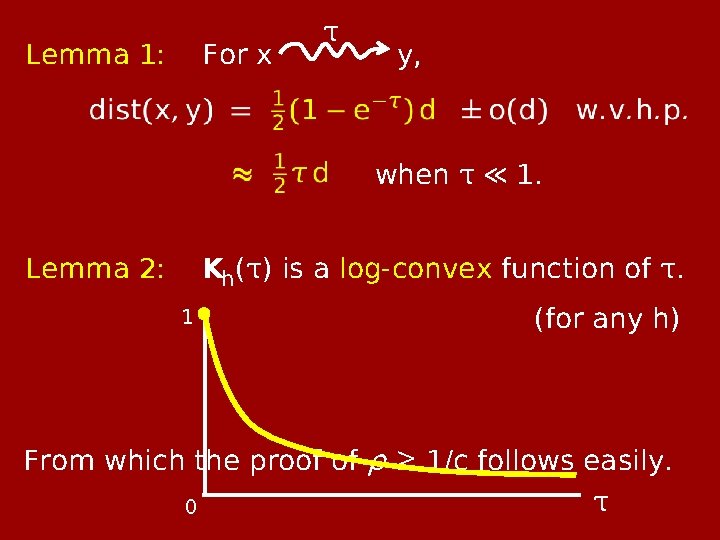

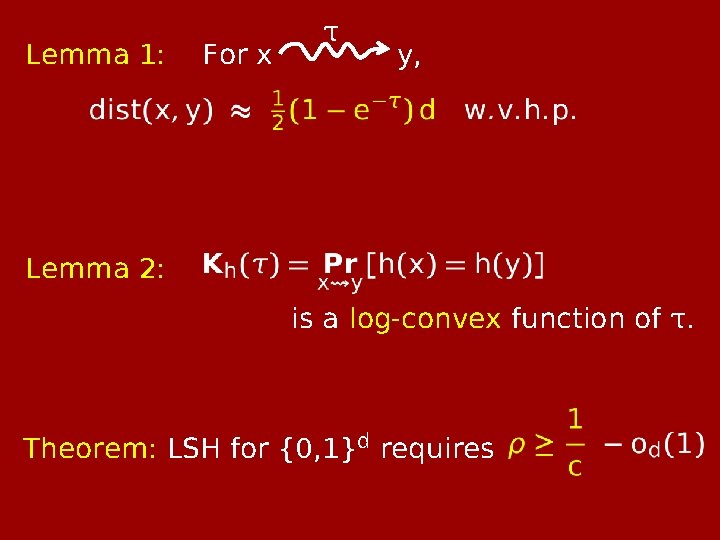

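Lemma 1: For $x \sim_\tau y$ (y reached from a random x by the walk run for time $\tau$), $\mathrm{dist}(x,y) \approx \frac{\tau}{2} d$ w.v.h.p., when $\tau \ll 1$.

Lemma 2: $K_h(\tau)$ is a log-convex function of $\tau$ (for any h).

From which the proof of $\rho \ge 1/c$ follows easily.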

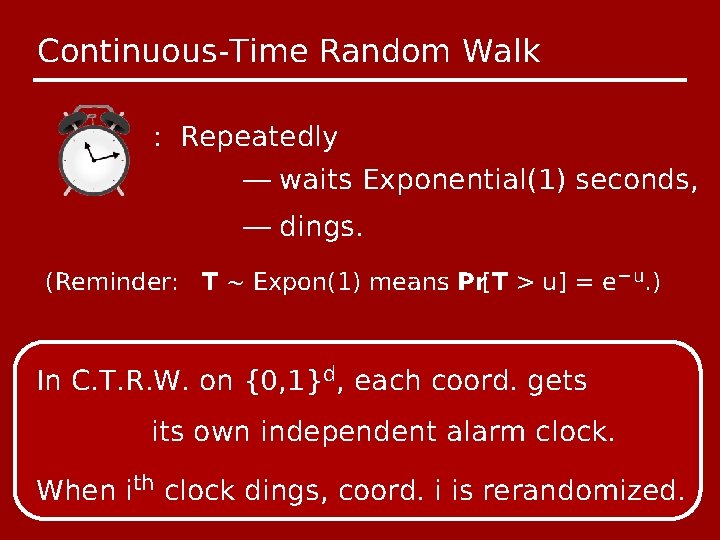

Continuous-Time Random Walk. An alarm clock repeatedly (1) waits Exponential(1) seconds, then (2) dings. (Reminder: $T \sim \mathrm{Expon}(1)$ means $\Pr[T > u] = e^{-u}$.) In the C.T.R.W. on $\{0,1\}^d$, each coord. gets its own independent alarm clock. When the ith clock dings, coord. i is rerandomized.

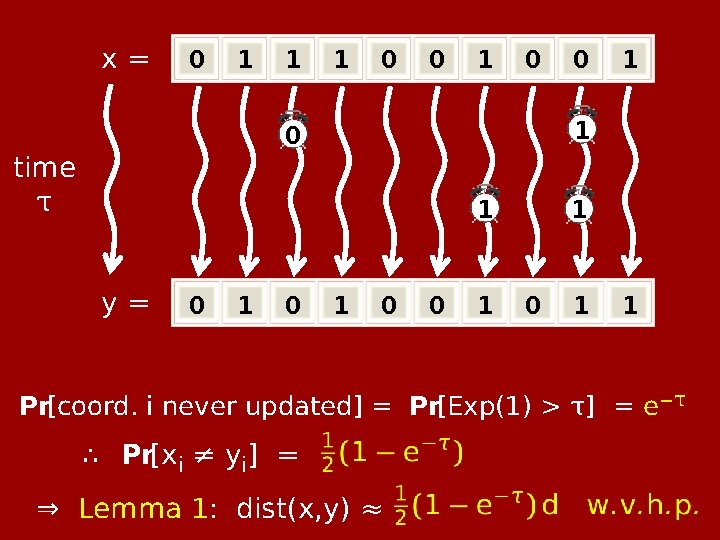

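Start at x, run for time $\tau$, end at y. $\Pr[\text{coord. } i \text{ never updated}] = \Pr[\mathrm{Exp}(1) > \tau] = e^{-\tau}$. An updated coord. is rerandomized, so it ends up different from $x_i$ w.p. 1/2. $\therefore \Pr[x_i \ne y_i] = \frac{1 - e^{-\tau}}{2}$ $\Rightarrow$ Lemma 1: $\mathrm{dist}(x,y) \approx \frac{1 - e^{-\tau}}{2}\, d \approx \frac{\tau}{2}\, d$.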
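A small simulation of the walk, as a sanity check on this calculation. Since a coordinate is left alone with probability $e^{-\tau}$ and otherwise replaced by a fresh random bit, one expects $\Pr[x_i \ne y_i] = (1 - e^{-\tau})/2 \approx \tau/2$. The function names and the parameters d, τ and the trial count are arbitrary choices for illustration.

```python
import math
import random

def ctrw_step(x, tau, rng):
    """Run the continuous-time random walk on {0,1}^d from x for time tau.
    Only whether each coordinate's clock has dinged by time tau matters,
    because rerandomizing a bit several times is the same as doing it once."""
    y = []
    for bit in x:
        dinged = rng.expovariate(1.0) <= tau  # first ding time ~ Exponential(1)
        y.append(rng.randrange(2) if dinged else bit)
    return y

def mean_fraction_flipped(d, tau, trials, seed=0):
    """Average of dist(x, y) / d over random starting points x."""
    rng = random.Random(seed)
    total = 0
    for _ in range(trials):
        x = [rng.randrange(2) for _ in range(d)]
        y = ctrw_step(x, tau, rng)
        total += sum(a != b for a, b in zip(x, y))
    return total / (trials * d)

tau = 0.2
predicted = (1 - math.exp(-tau)) / 2
observed = mean_fraction_flipped(d=200, tau=tau, trials=500)
print(predicted, observed, tau / 2)
```

The observed fraction should sit close to the predicted one, and both are a little below τ/2, consistent with the approximation holding for τ ≪ 1.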

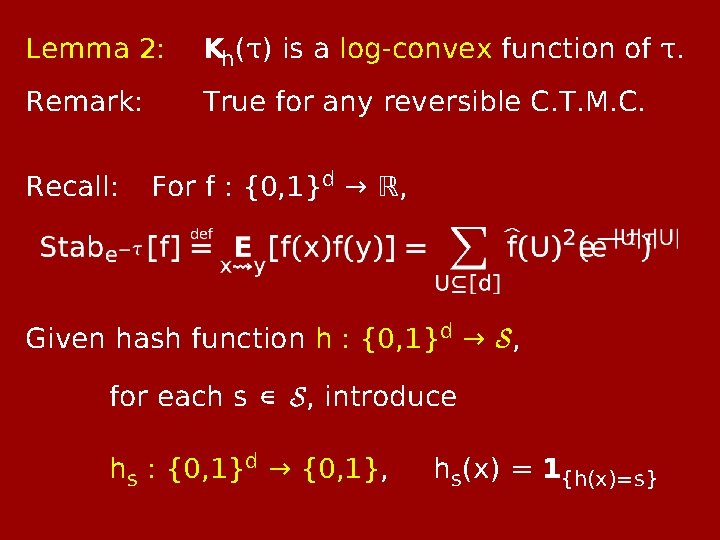

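Lemma 2: $K_h(\tau)$ is a log-convex function of $\tau$. Remark: True for any reversible C.T.M.C.

Recall: For $f : \{0,1\}^d \to \mathbb{R}$, $\mathbf{E}_{x \sim_\tau y}[f(x) f(y)] = \sum_{U \subseteq [d]} \hat{f}(U)^2 e^{-\tau |U|}$.

Given hash function $h : \{0,1\}^d \to S$, for each $s \in S$, introduce $h_s : \{0,1\}^d \to \{0,1\}$, $h_s(x) = 1_{\{h(x) = s\}}$.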

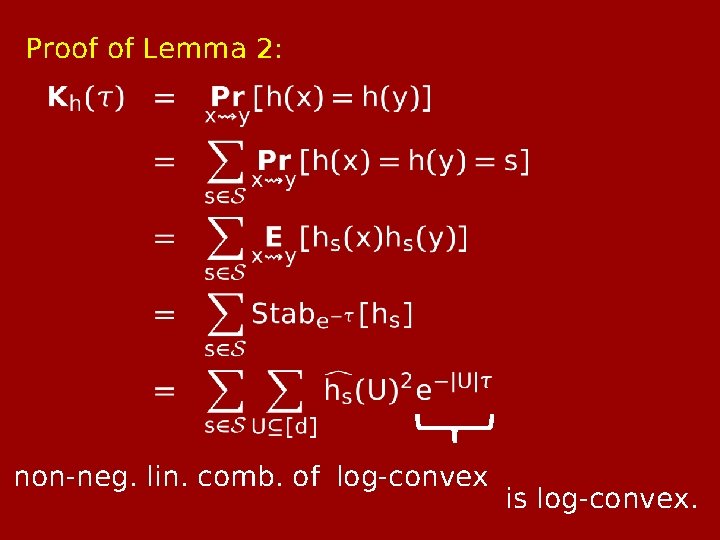

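Proof of Lemma 2: $K_h(\tau) = \sum_{s \in S} \mathbf{E}[h_s(x) h_s(y)] = \sum_{s \in S} \sum_{U \subseteq [d]} \hat{h_s}(U)^2 e^{-\tau |U|}$. Each $e^{-\tau |U|}$ is log-linear, hence log-convex, and a non-neg. lin. comb. of log-convex fcns. is log-convex.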
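Lemma 2 can be spot-checked numerically on a small cube. The sketch below assumes the walk described earlier, in which each coordinate independently ends up different with probability $(1 - e^{-\tau})/2$, and computes $K_h(\tau) = \Pr[h(x) = h(y)]$ exactly by summing over all pairs. The random h with three output values and the grid of τ values are arbitrary illustrative choices.

```python
import math
import random
from itertools import product

def K(h, d, tau):
    """Exact collision probability Pr[h(x) = h(y)] for x uniform and
    y obtained from x by running the walk for time tau."""
    flip = (1 - math.exp(-tau)) / 2  # Pr[y_i != x_i]
    stay = 1 - flip                  # Pr[y_i == x_i]
    cube = list(product((0, 1), repeat=d))
    total = 0.0
    for x in cube:
        for y in cube:
            if h[x] == h[y]:
                k = sum(a != b for a, b in zip(x, y))
                total += flip ** k * stay ** (d - k)
    return total / len(cube)

d = 4
rng = random.Random(1)
h = {x: rng.randrange(3) for x in product((0, 1), repeat=d)}  # arbitrary h

taus = [0.1 * i for i in range(21)]
logK = [math.log(K(h, d, t)) for t in taus]
# On an evenly spaced grid, convexity means non-negative second differences.
second_diffs = [logK[i - 1] - 2 * logK[i] + logK[i + 1]
                for i in range(1, len(logK) - 1)]
print(min(second_diffs))
```

A non-negative minimum (up to rounding) is what log-convexity predicts. It is a check on one function h, not a proof.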

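Lemma 1: For $x \sim_\tau y$, $\mathrm{dist}(x,y) \approx \frac{\tau}{2} d$ w.v.h.p.

Lemma 2: $K_h(\tau)$ is a log-convex function of $\tau$.

Theorem: LSH for $\{0,1\}^d$ requires $\rho \gtrsim 1/c$.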

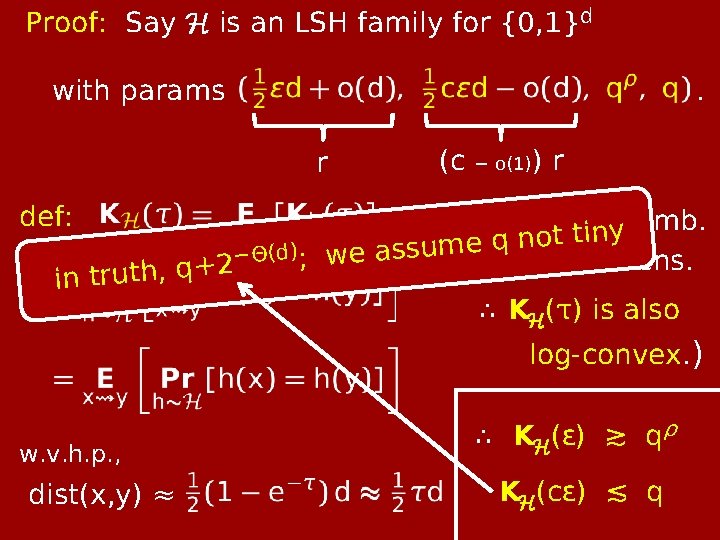

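Proof: Say H is an LSH family for $\{0,1\}^d$ with radii $r$ and $(c - o(1))\, r$ and collision probabilities $q^{\rho}$ and $q$, where $r \approx \frac{\epsilon}{2} d$.

def: $K_H(\tau) = \mathbf{E}_{h \sim H}[K_h(\tau)]$. (Non-neg. lin. comb. of log-convex fcns. $\therefore K_H(\tau)$ is also log-convex.)

For $x \sim_\tau y$, w.v.h.p. $\mathrm{dist}(x,y) \approx \frac{\tau}{2} d$. $\therefore K_H(\epsilon) \gtrsim q^{\rho}$ and $K_H(c\epsilon) \lesssim q$ (in truth, $q + 2^{-\Theta(d)}$; we assume q not tiny).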

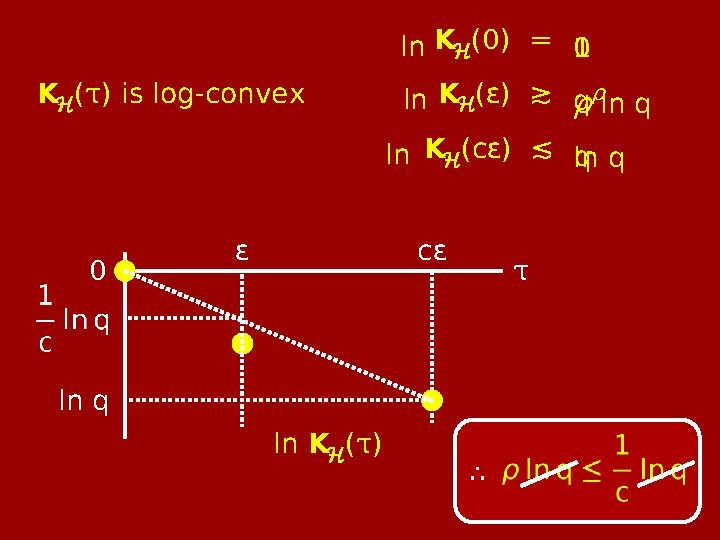

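$K_H(\tau)$ is log-convex, i.e. $\ln K_H(\tau)$ is a convex function of $\tau$. (Plot: $\ln K_H(\tau)$ against $\tau$, marked at $\tau = 0$, $\epsilon$, $c\epsilon$.)

$\ln K_H(0) = 0$; $\ln K_H(\epsilon) \gtrsim \rho \ln q$; $\ln K_H(c\epsilon) \lesssim \ln q$.

$\therefore \rho \ln q \lesssim \frac{1}{c} \ln q$, i.e. $\rho \gtrsim 1/c$ (since $\ln q < 0$).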
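Spelled out, the convexity step can be written as follows. This is a sketch that ignores the error terms hidden in $\gtrsim$ and $\lesssim$; it uses only $\ln K_H(0) = 0$, the two bounds at $\epsilon$ and $c\epsilon$, and the fact that $\epsilon$ is the convex combination $(1 - \tfrac{1}{c}) \cdot 0 + \tfrac{1}{c} \cdot c\epsilon$.

```latex
\begin{align*}
\rho \ln q \;\lesssim\; \ln K_H(\epsilon)
  &\le \Bigl(1 - \tfrac{1}{c}\Bigr) \ln K_H(0) + \tfrac{1}{c} \ln K_H(c\epsilon)
     && \text{(convexity of } \ln K_H\text{)} \\
  &= \tfrac{1}{c} \ln K_H(c\epsilon)
     && (\ln K_H(0) = 0) \\
  &\lesssim \tfrac{1}{c} \ln q .
\end{align*}
% Dividing by \ln q < 0 reverses the inequality:
\[
  \rho \;\gtrsim\; \frac{1}{c} .
\]
```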

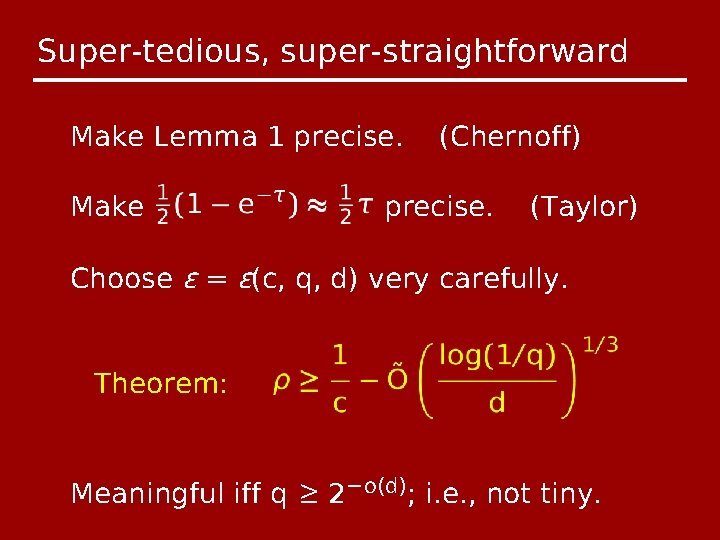

Super-tedious, super-straightforward: Make Lemma 1 precise (Chernoff). Make the $\approx$ estimates precise (Taylor). Choose $\epsilon = \epsilon(c, q, d)$ very carefully.

Theorem: Meaningful iff $q \ge 2^{-o(d)}$; i.e., not tiny.

The End. Any questions?
