Locality Sensitive Hashing Basics and applications A wellknown

Locality Sensitive Hashing Basics and applications

A well-known problem n Given a large collection of documents Identify the near-duplicate documents n Web search engines n n Proliferation of near-duplicate documents n n n Legitimate – mirrors, local copies, updates, … Malicious – spam, spider-traps, dynamic URLs, … 30% of web-pages are near-duplicates [1997]

Natural Approaches n Fingerprinting: n n Edit-distance n n only works for exact matches Karp Rabin (rolling hash) – collision probability guarantees MD 5 – cryptographically-secure string hashes metric for approximate string-matching expensive – even for one pair of documents impossible – for billion web documents Random Sampling n n n sample substrings (phrases, sentences, etc) hope: similar documents similar samples But – even samples of same document will differ

![Basic Idea: Shingling n [Broder 1997] dissect document into q-grams (shingles) T = I Basic Idea: Shingling n [Broder 1997] dissect document into q-grams (shingles) T = I](http://slidetodoc.com/presentation_image_h/01c47ce13def47fd30185d45ebd30357/image-4.jpg)

Basic Idea: Shingling n [Broder 1997] dissect document into q-grams (shingles) T = I leave and study in Pisa, …. If we set q=3 the 3 -grams are: <I leave and><leave and study><and study in><study in Pisa>… n represent documents by sets of hash[shingle] The problem reduces to set intersection among int

![Basic Idea: Shingling Doc A SA [Broder 1997] SB Doc B Set intersection Jaccard Basic Idea: Shingling Doc A SA [Broder 1997] SB Doc B Set intersection Jaccard](http://slidetodoc.com/presentation_image_h/01c47ce13def47fd30185d45ebd30357/image-5.jpg)

Basic Idea: Shingling Doc A SA [Broder 1997] SB Doc B Set intersection Jaccard similarity • Claim: A & B are near-duplicates if sim(SA, SB) is high

Sec. 19. 6 Sketching of a document From each shingle-set we build a “sketch vector” (~200 size) Postulate: Documents that share ≥ t components of their sketch-vectors are claimed to be near duplicates

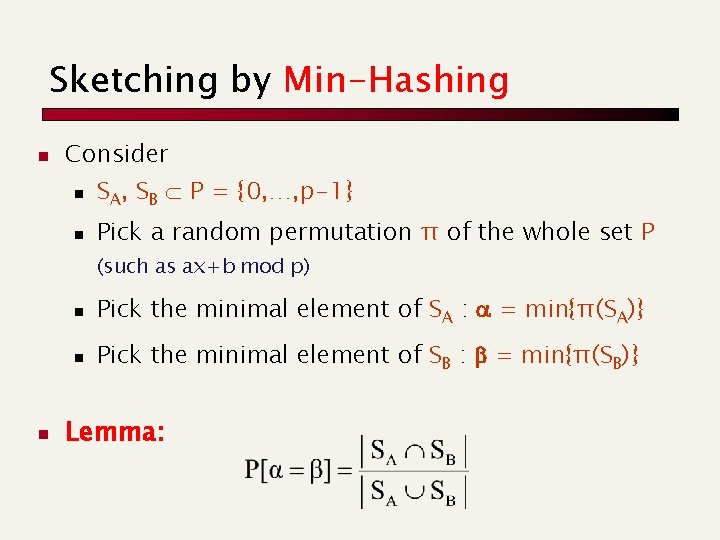

Sketching by Min-Hashing n Consider n SA, SB P = {0, …, p-1} n Pick a random permutation π of the whole set P (such as ax+b mod p) n n Pick the minimal element of SA : = min{π(SA)} n Pick the minimal element of SB : b = min{π(SB)} Lemma:

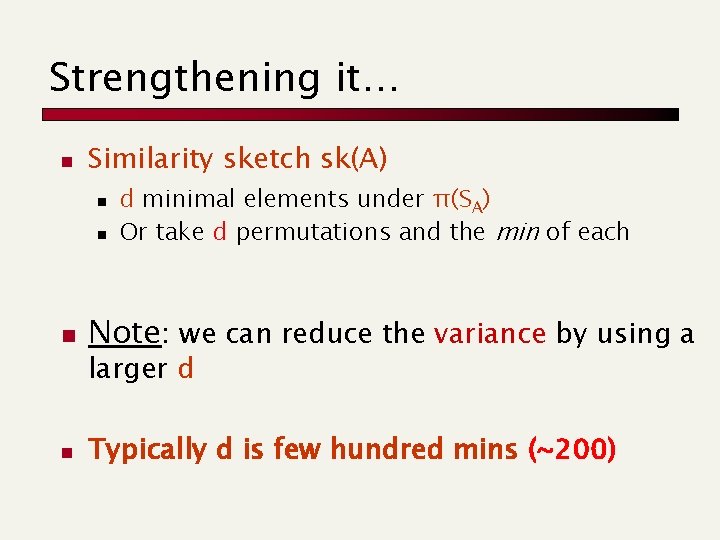

Strengthening it… n Similarity sketch sk(A) n n n d minimal elements under π(SA) Or take d permutations and the min of each Note: we can reduce the variance by using a larger d n Typically d is few hundred mins (~200)

![Sec. 19. 6 Computing Sketch[i] for Doc 1 Document 1 264 Start with 64 Sec. 19. 6 Computing Sketch[i] for Doc 1 Document 1 264 Start with 64](http://slidetodoc.com/presentation_image_h/01c47ce13def47fd30185d45ebd30357/image-9.jpg)

Sec. 19. 6 Computing Sketch[i] for Doc 1 Document 1 264 Start with 64 -bit f(shingles) 264 Permute with pi 264 Pick the min value

![Sec. 19. 6 Test if Doc 1. Sketch[i] = Doc 2. Sketch[i] Document 2 Sec. 19. 6 Test if Doc 1. Sketch[i] = Doc 2. Sketch[i] Document 2](http://slidetodoc.com/presentation_image_h/01c47ce13def47fd30185d45ebd30357/image-10.jpg)

Sec. 19. 6 Test if Doc 1. Sketch[i] = Doc 2. Sketch[i] Document 2 Document 1 A 264 264 B 264 Are these equal? Claim: This happens with probability Size_of_intersection / Size_of_union Use 200 random permutations (minimum), and thus create one 200 -dim vector per document and evaluate the fraction of shared components

It’s even more difficult… So we have squeezed few Kbs of data (web page) into few hundred bytes But you still need a brute-force comparison (quadratic time) to compute all nearly-duplicate documents n This is too much even if it is executed in RAM

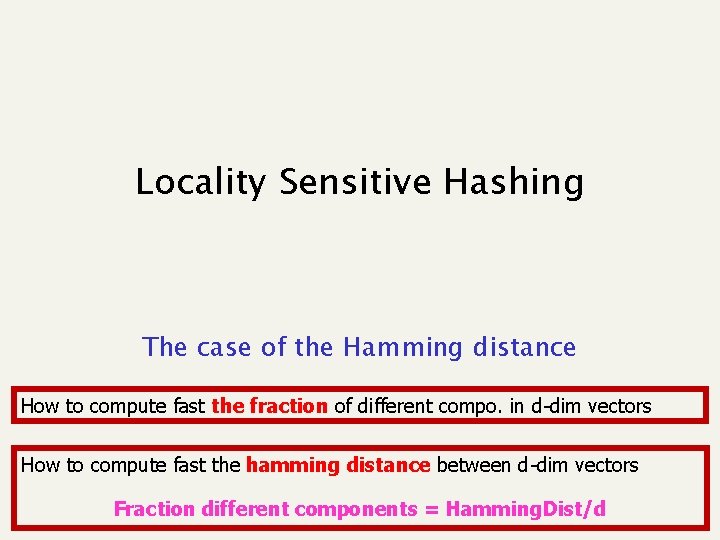

Locality Sensitive Hashing The case of the Hamming distance How to compute fast the fraction of different compo. in d-dim vectors How to compute fast the hamming distance between d-dim vectors Fraction different components = Hamming. Dist/d

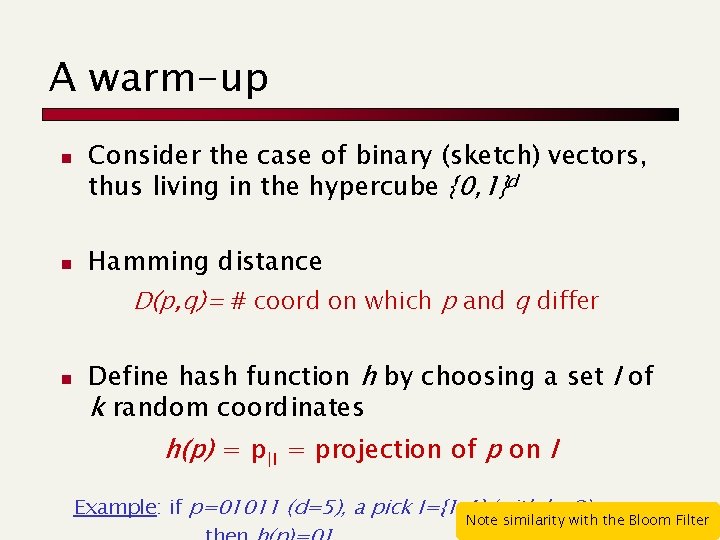

A warm-up n n Consider the case of binary (sketch) vectors, thus living in the hypercube {0, 1}d Hamming distance D(p, q)= # coord on which p and q differ n Define hash function h by choosing a set I of k random coordinates h(p) = p|I = projection of p on I Example: if p=01011 (d=5), a pick I={1, 4} (with k=2), Note similarity with the Bloom Filter

![A key property 1 n 2 …. d Pr[to pick an equal component]= (d A key property 1 n 2 …. d Pr[to pick an equal component]= (d](http://slidetodoc.com/presentation_image_h/01c47ce13def47fd30185d45ebd30357/image-14.jpg)

A key property 1 n 2 …. d Pr[to pick an equal component]= (d - D(p, q))/d equal n We can vary the probability by changing k Pr k=1 Pr k=2 What about False Negatives ? distance

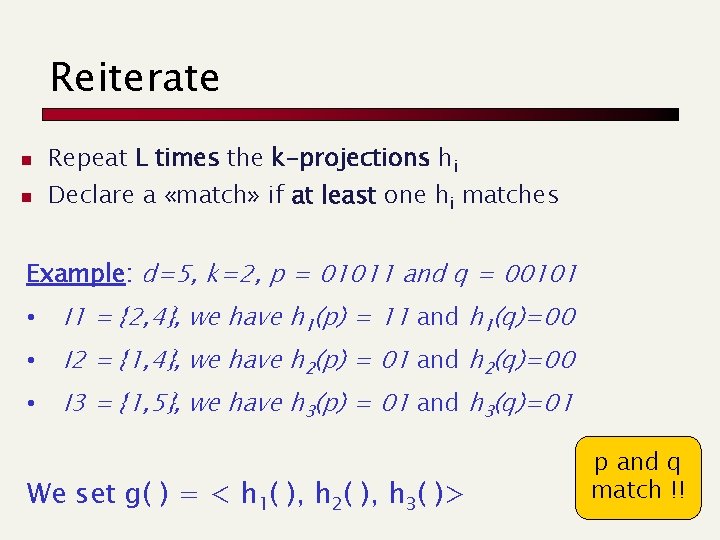

Reiterate n n Repeat L times the k-projections hi Declare a «match» if at least one hi matches Example: d=5, k=2, p = 01011 and q = 00101 • I 1 = {2, 4}, we have h 1(p) = 11 and h 1(q)=00 • I 2 = {1, 4}, we have h 2(p) = 01 and h 2(q)=00 • I 3 = {1, 5}, we have h 3(p) = 01 and h 3(q)=01 We set g( ) = < h 1( ), h 2( ), h 3( )> p and q match !!

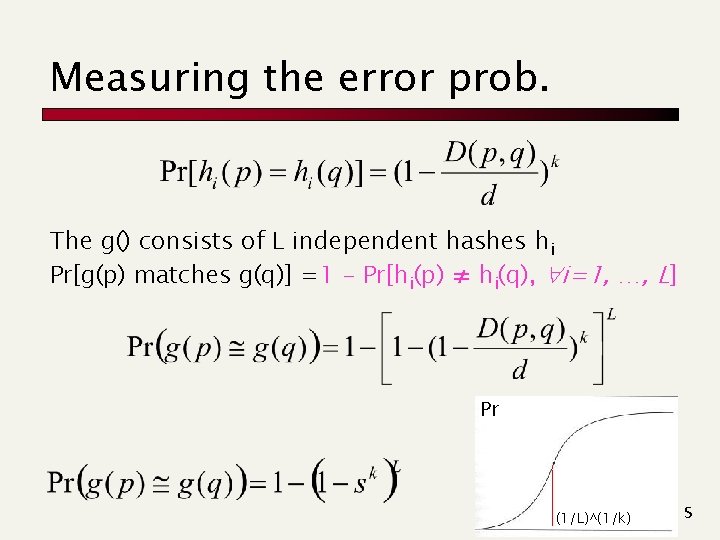

Measuring the error prob. The g() consists of L independent hashes hi Pr[g(p) matches g(q)] =1 - Pr[hi(p) ≠ hi(q), i=1, …, L] Pr (1/L)^(1/k) s

Find groups of similar items n SOL 1: Buckets provide the candidate similar items «Merge» similar sets if they share items p h 1(p) T 1 Points in a bucket are possibly similar objects h 2(p) T 2 h. L(p) TL

Find groups of similar items n SOL 1: Buckets provide the candidate similar items n SOL 2: n n n Sort items by the hi(), and pick as similar candidate the equal ones Repeat L times, for all hi() «Merge» candidate sets if they share items. Check candidates !!! What about clustering ?

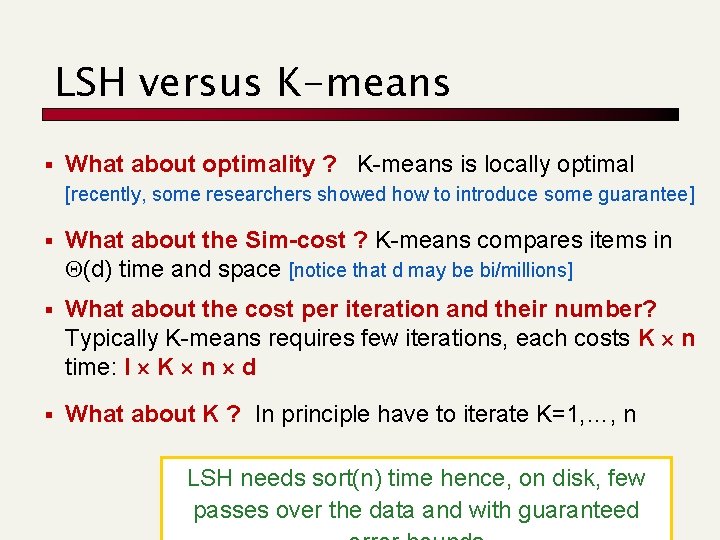

LSH versus K-means § What about optimality ? K-means is locally optimal [recently, some researchers showed how to introduce some guarantee] § What about the Sim-cost ? K-means compares items in Q(d) time and space [notice that d may be bi/millions] § What about the cost per iteration and their number? Typically K-means requires few iterations, each costs K n time: I K n d § What about K ? In principle have to iterate K=1, …, n LSH needs sort(n) time hence, on disk, few passes over the data and with guaranteed

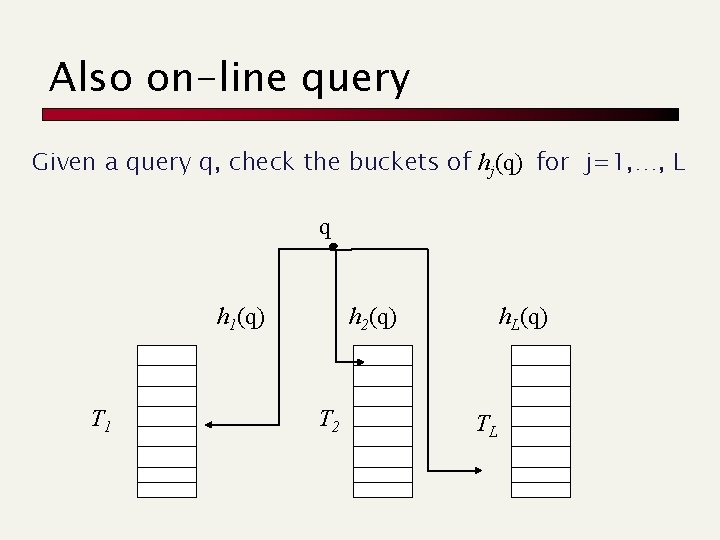

Also on-line query Given a query q, check the buckets of hj(q) for j=1, …, L q h 1(q) T 1 h 2(q) T 2 h. L(q) TL

Locality Sensitive Hashing and its applications More problems, indeed

Another classic problem The problem: Given U users, the goal is to find groups of similar users (or, smilar to user Q) Features = Personal data, preferences, purchases, navigational behavior, followers/ing or +1, … n Ø A feature is typically a numerical value: binary or real 1 2 3 4 5 U 1 0 1 1 U 2 0 1 1 0 0 U 3 0 1 1 Hamming distance: #different components

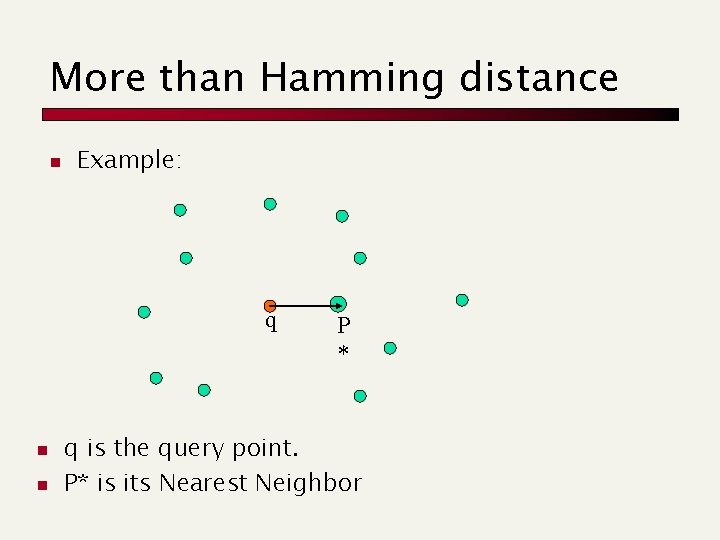

More than Hamming distance n Example: q n n P * q is the query point. P* is its Nearest Neighbor

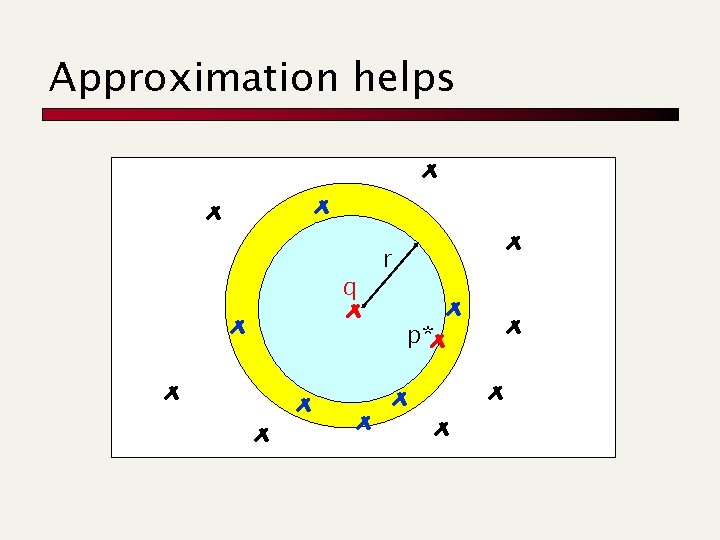

Approximation helps q r p*

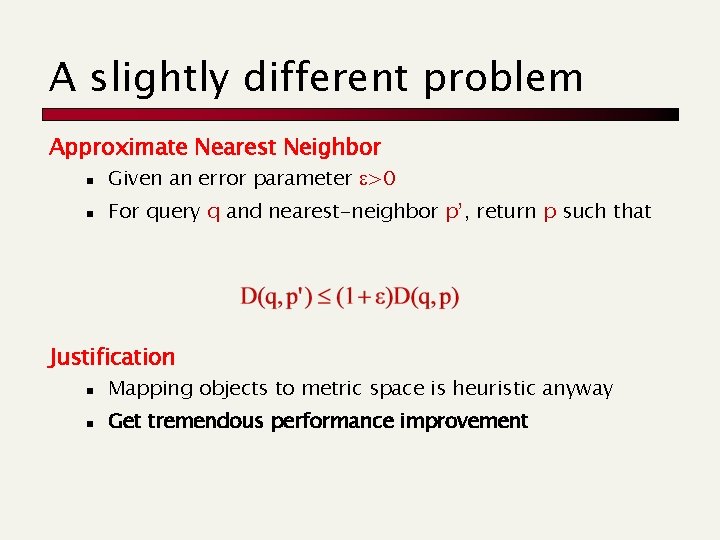

A slightly different problem Approximate Nearest Neighbor n Given an error parameter >0 n For query q and nearest-neighbor p’, return p such that Justification n Mapping objects to metric space is heuristic anyway n Get tremendous performance improvement

A workable approach n Given an error parameter >0, distance threshold t>0 n (t, )-Approximate NN Query n n n If no point p with D(q, p)<t, return FAILURE Else, return any p’ with D(q, p’)< (1+ )t Application: Approximate Nearest Neighbor n Assume maximum distance is T n Run in parallel for n Time/space – O(log 1+ T) overhead

Locality Sensitive Hashing and its applications The analysis

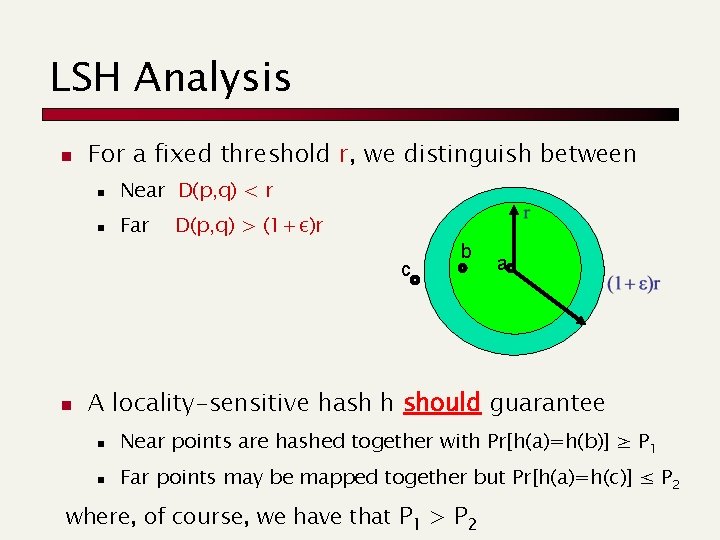

LSH Analysis n For a fixed threshold r, we distinguish between n Near D(p, q) < r n Far D(p, q) > (1+ε)r c n b a A locality-sensitive hash h should guarantee n Near points are hashed together with Pr[h(a)=h(b)] ≥ P 1 n Far points may be mapped together but Pr[h(a)=h(c)] ≤ P 2 where, of course, we have that P 1 > P 2

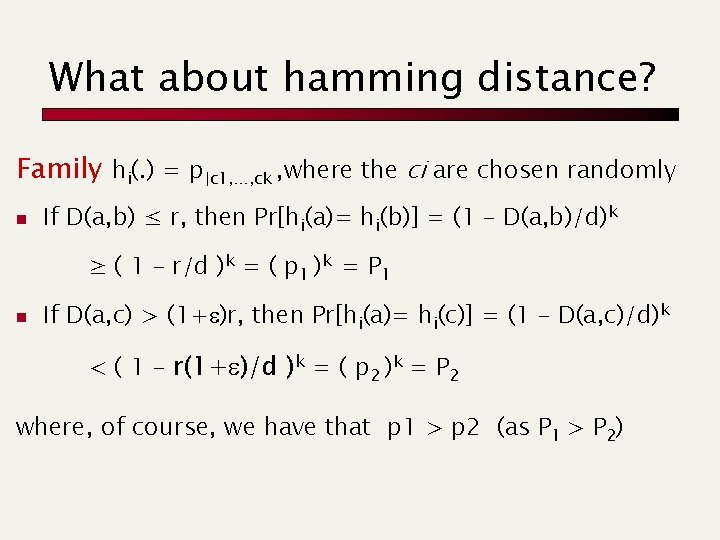

What about hamming distance? Family hi(. ) = p|c 1, …, ck , where the ci are chosen randomly n If D(a, b) ≤ r, then Pr[hi(a)= hi(b)] = (1 – D(a, b)/d)k ≥ ( 1 – r/d )k = ( p 1 )k = P 1 n If D(a, c) > (1+ )r, then Pr[hi(a)= hi(c)] = (1 – D(a, c)/d)k < ( 1 – r(1+ )/d )k = ( p 2 )k = P 2 where, of course, we have that p 1 > p 2 (as P 1 > P 2)

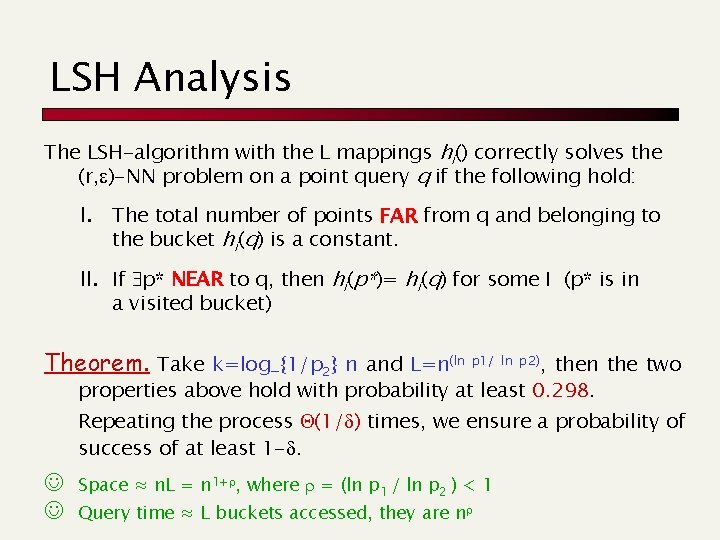

LSH Analysis The LSH-algorithm with the L mappings hi() correctly solves the (r, )-NN problem on a point query q if the following hold: I. The total number of points FAR from q and belonging to the bucket hi(q) is a constant. II. If p* NEAR to q, then hi(p*)= hi(q) for some I (p* is in a visited bucket) Theorem. Take k=log_{1/p 2} n and L=n(ln p 1/ ln p 2), then the two properties above hold with probability at least 0. 298. Repeating the process Q(1/d) times, we ensure a probability of success of at least 1 -d. Space ≈ n. L = n 1+r, where r = (ln p 1 / ln p 2 ) < 1 Query time ≈ L buckets accessed, they are nr

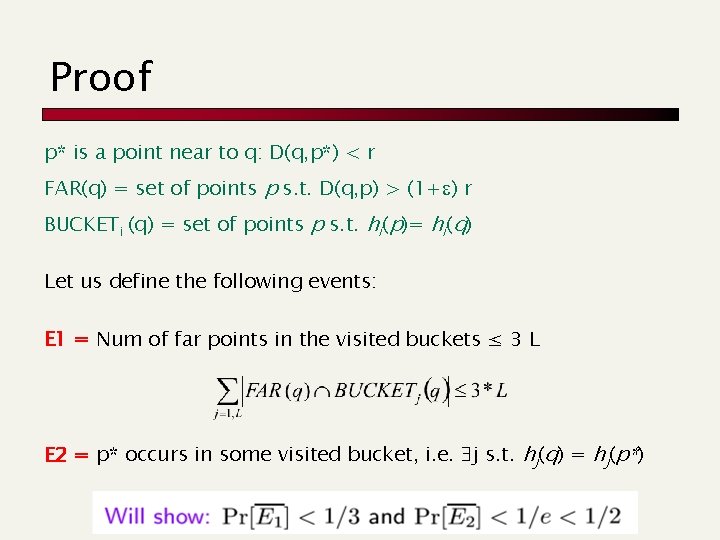

Proof p* is a point near to q: D(q, p*) < r FAR(q) = set of points p s. t. D(q, p) > (1+ ) r BUCKETi (q) = set of points p s. t. hi(p)= hi(q) Let us define the following events: E 1 = Num of far points in the visited buckets ≤ 3 L E 2 = p* occurs in some visited bucket, i. e. j s. t. hj(q) = hj(p*)

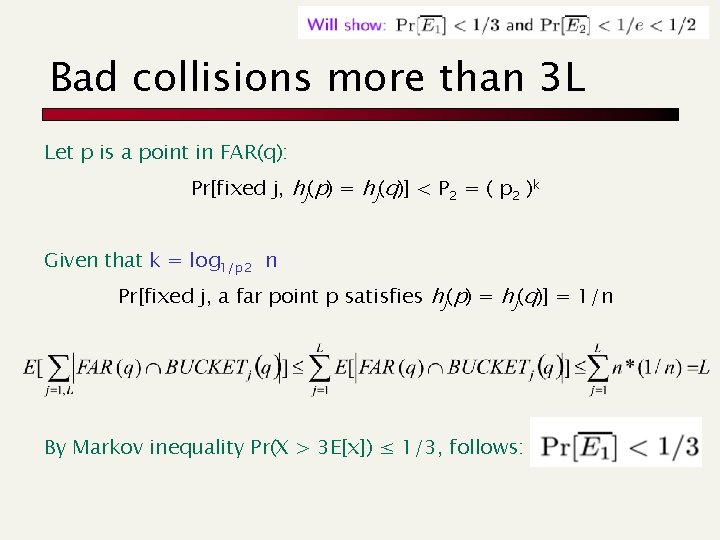

Bad collisions more than 3 L Let p is a point in FAR(q): Pr[fixed j, hj(p) = hj(q)] < P 2 = ( p 2 )k Given that k = log 1/p 2 n Pr[fixed j, a far point p satisfies hj(p) = hj(q)] = 1/n By Markov inequality Pr(X > 3 E[x]) ≤ 1/3, follows:

![Good collision: p* occurs For any hj the probability that Pr[hj(p*) = hj(q)] ≥ Good collision: p* occurs For any hj the probability that Pr[hj(p*) = hj(q)] ≥](http://slidetodoc.com/presentation_image_h/01c47ce13def47fd30185d45ebd30357/image-33.jpg)

Good collision: p* occurs For any hj the probability that Pr[hj(p*) = hj(q)] ≥ P 1 = ( p 1 )k = ( p 1 )^{ log 1/p 2 n } = Given that L=n(ln p 1/ ln p 2), this is actually 1/L. So we have that Pr[not E 2] = Pr[not finding p* in q’s buckets] = = (1 - Pr[hj(p*) = hj(q)])L = (1 – 1/L)L ≤ 1/e Finally Pr[E 1 and E 2] ≥ 1 – Pr[not E 1 OR not E 2] ≥ 1 – (Pr[not E 1] + Pr [not E 2]) ≥ 1 - (1/3) - (1/e) = 0. 298

- Slides: 33