Locally Decodable Codes Uri Nadav Contents What is

Locally Decodable Codes Uri Nadav

Contents • What is Locally Decodable Code (LDC) ? • Constructions • Lower Bounds • Reduction from Private Information Retrieval (PIR) to LDC

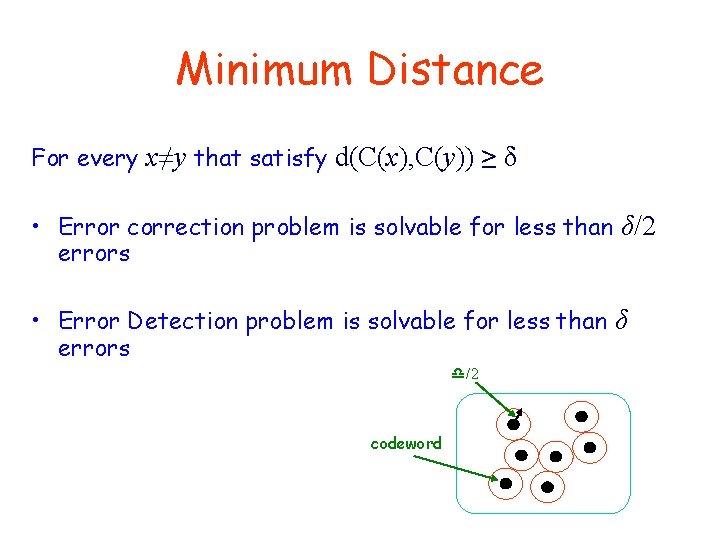

Minimum Distance For every x≠y that satisfy d(C(x), C(y)) ≥ δ • Error correction problem is solvable for less than δ/2 errors • Error Detection problem is solvable for less than δ errors /2 codeword

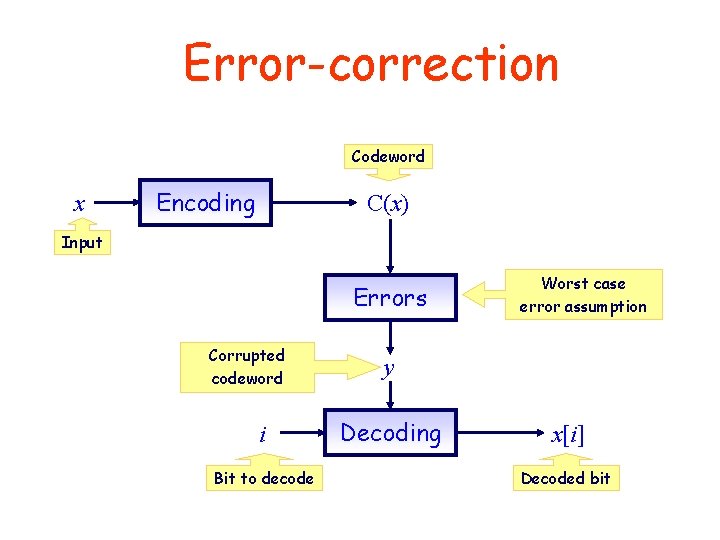

Error-correction Codeword x Encoding C(x) Input Errors Corrupted codeword i Bit to decode Worst case error assumption y Decoding x[i] Decoded bit

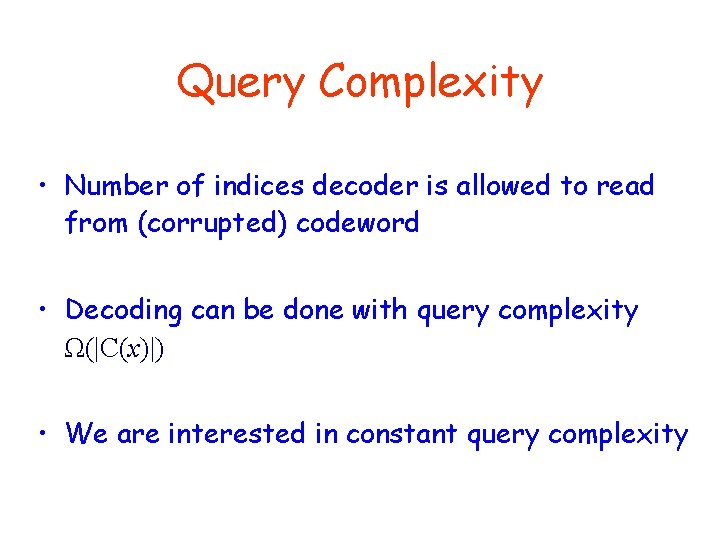

Query Complexity • Number of indices decoder is allowed to read from (corrupted) codeword • Decoding can be done with query complexity Ω(|C(x)|) • We are interested in constant query complexity

Adversarial Model We can view the errors model as an adversary that chooses positions to destroy, and has access to the decoding/encoding scheme (but not to random coins) The adversary is allowed to insert at most m errors

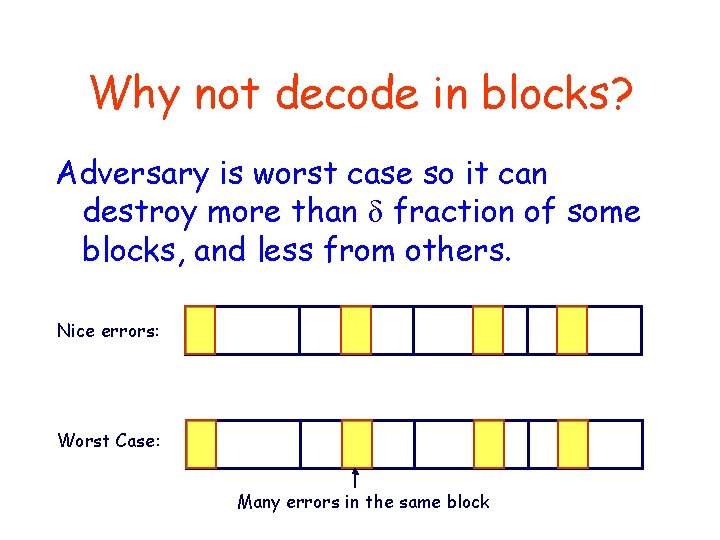

Why not decode in blocks? Adversary is worst case so it can destroy more than δ fraction of some blocks, and less from others. Nice errors: Worst Case: Many errors in the same block

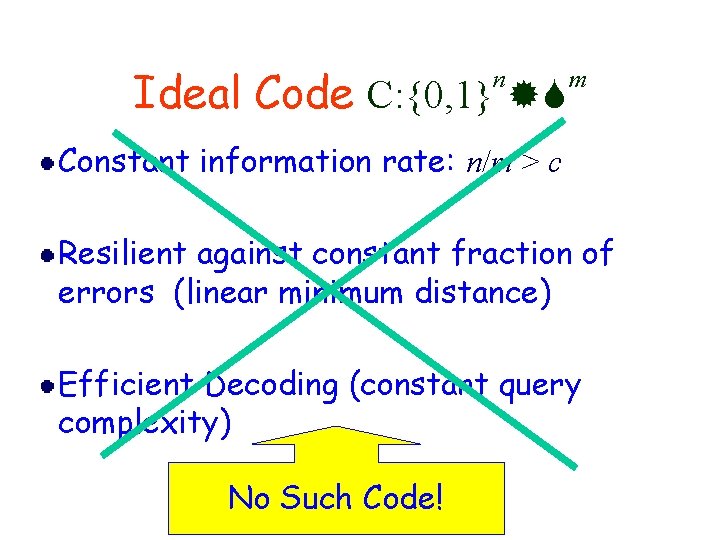

Ideal Code C: {0, 1} n m Constant information rate: n/m > c Resilient against constant fraction of errors (linear minimum distance) Efficient Decoding (constant query complexity) No Such Code!

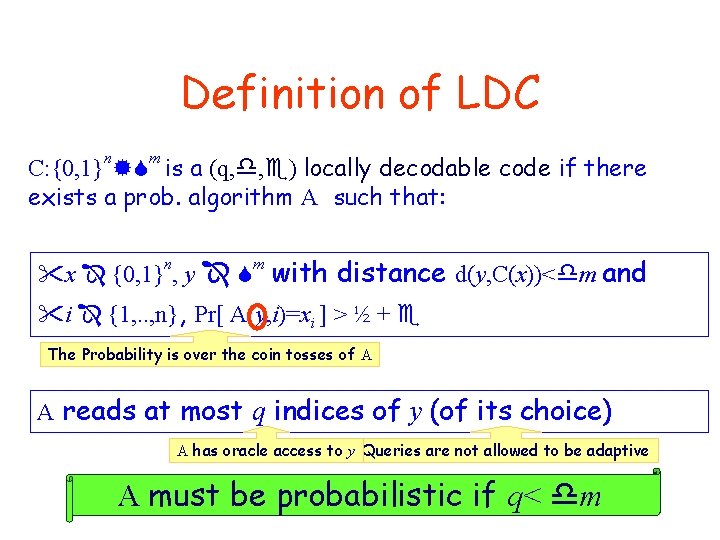

Definition of LDC n m C: {0, 1} is a (q, , ) locally decodable code if there exists a prob. algorithm A such that: x {0, 1} , y with distance d(y, C(x))< m and n m i {1, . . , n}, Pr[ A(y, i)=xi ] > ½ + The Probability is over the coin tosses of A A reads at most q indices of y (of its choice) A has oracle access to y Queries are not allowed to be adaptive A must be probabilistic if q< m

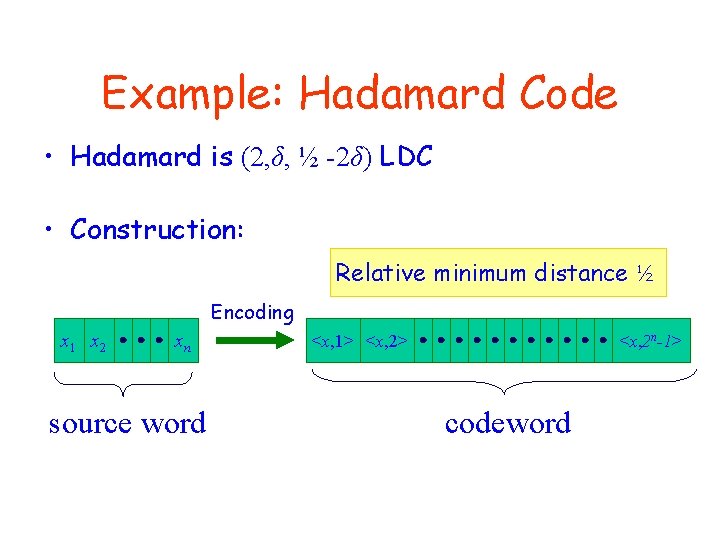

Example: Hadamard Code • Hadamard is (2, δ, ½ -2δ) LDC • Construction: Relative minimum distance ½ Encoding x 1 x 2 xn source word <x, 1> <x, 2 n-1> codeword

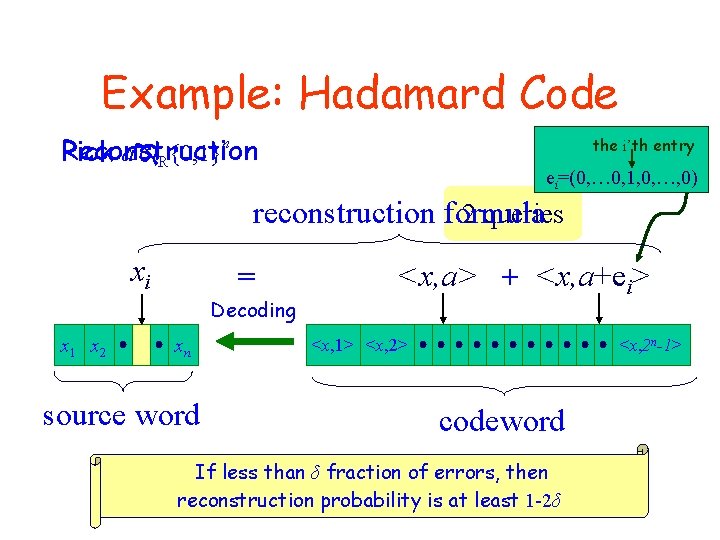

Example: Hadamard Code Reconstruction Pick a R{0, 1}n the i’th entry ei=(0, … 0, 1, 0, …, 0) reconstruction formula 2 queries xi = Decoding x 1 x 2 xn source word <x, a> + <x, a+ei> <x, 1> <x, 2 n-1> codeword If less than δ fraction of errors, then reconstruction probability is at least 1 -2δ

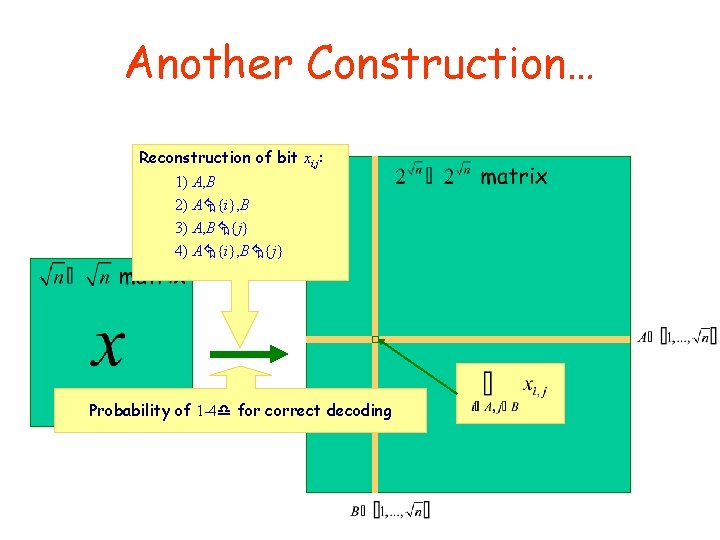

Another Construction… Reconstruction of bit xi, j: 1) A, B 2) A {i}, B 3) A, B {j} 4) A {i}, B {j} Probability of 1 -4 for correct decoding

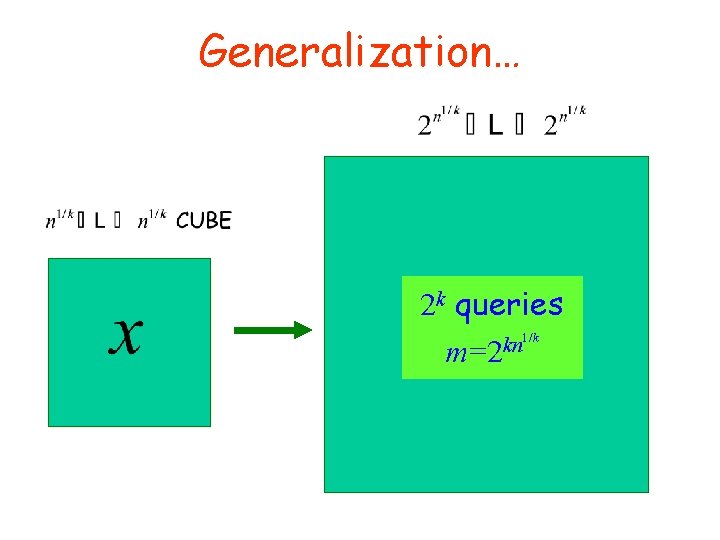

Generalization… 2 k queries 1/k kn m=2

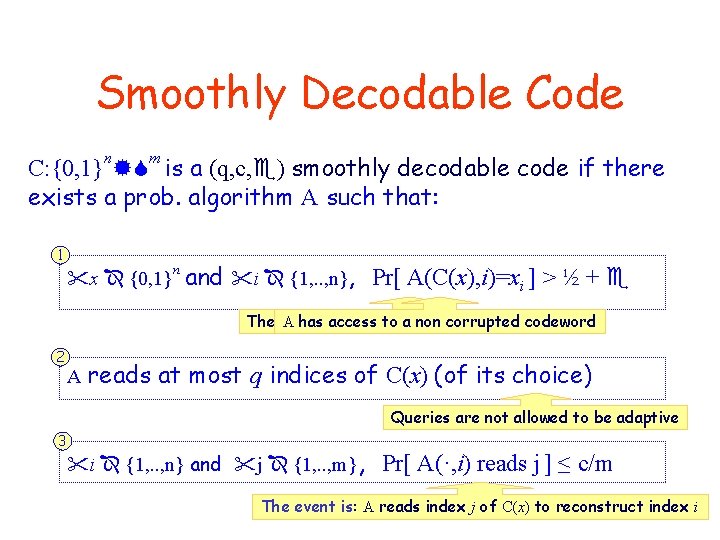

Smoothly Decodable Code n m C: {0, 1} is a (q, c, ) smoothly decodable code if there exists a prob. algorithm A such that: 1 x {0, 1} n and i {1, . . , n}, Pr[ A(C(x), i)=xi ] > ½ + The Probability A has access is to over a non thecorrupted coin tosses codeword of A 2 A reads at most q indices of C(x) (of its choice) Queries are not allowed to be adaptive 3 i {1, . . , n} and j {1, . . , m}, Pr[ A(·, i) reads j ] ≤ c/m The event is: A reads index j of C(x) to reconstruct index i

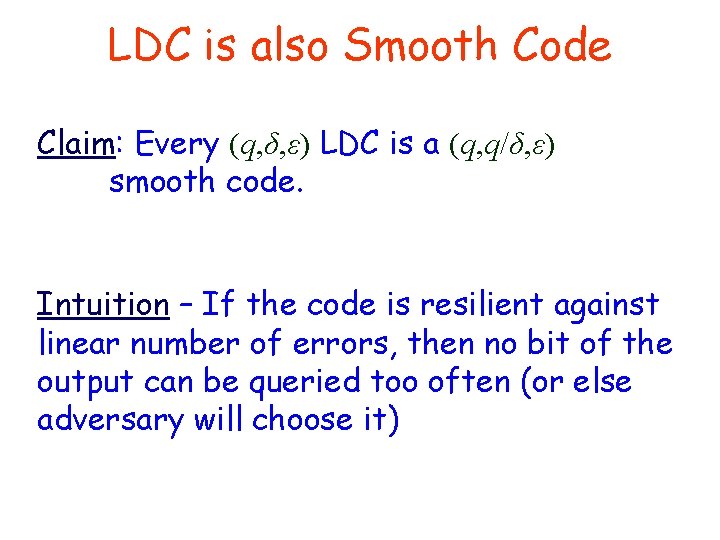

LDC is also Smooth Code Claim: Every (q, δ, ε) LDC is a (q, q/δ, ε) smooth code. Intuition – If the code is resilient against linear number of errors, then no bit of the output can be queried too often (or else adversary will choose it)

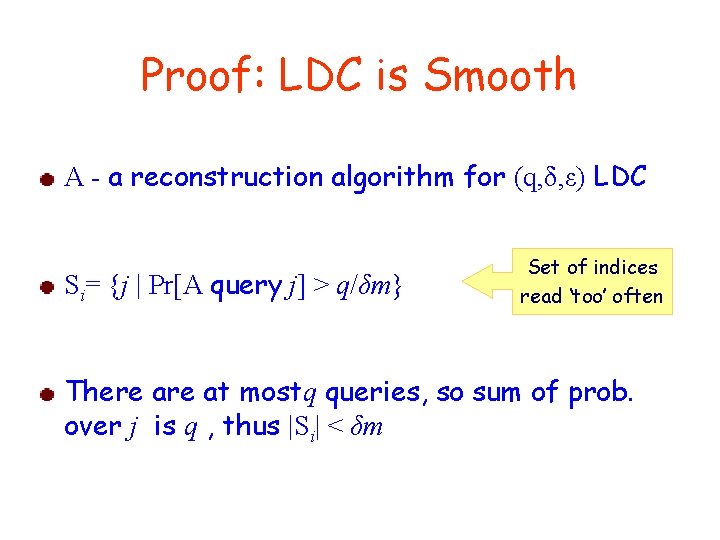

Proof: LDC is Smooth A - a reconstruction algorithm for (q, δ, ε) LDC Si= {j | Pr[A query j] > q/δm} Set of indices read ‘too’ often There at mostq queries, so sum of prob. over j is q , thus |Si| < δm

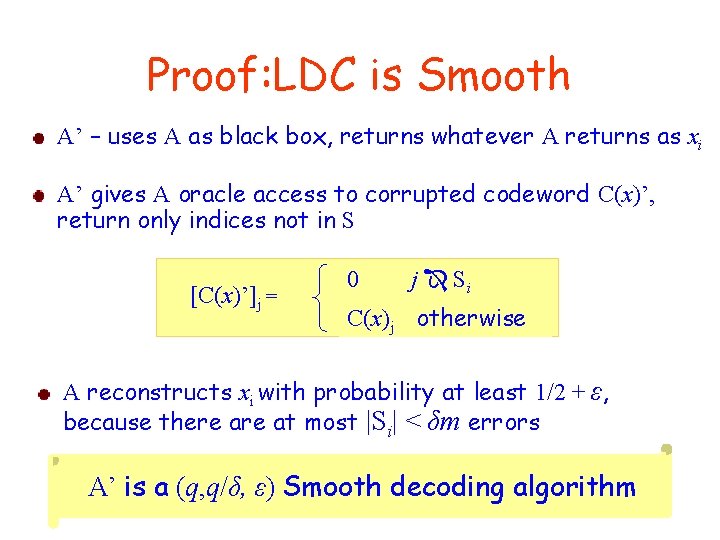

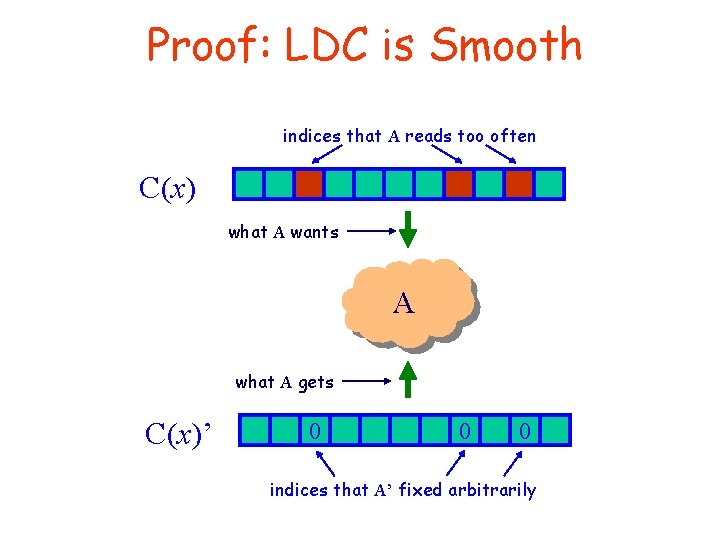

Proof: LDC is Smooth A’ – uses A as black box, returns whatever A returns as xi A’ gives A oracle access to corrupted codeword C(x)’, return only indices not in S [C(x)’]j = 0 j Si C(x)j otherwise A reconstructs xi with probability at least 1/2 + ε, because there at most |Si| < δm errors A’ is a (q, q/δ, ε) Smooth decoding algorithm

Proof: LDC is Smooth indices that A reads too often C(x) what A wants A what A gets C(x)’ 0 0 0 indices that A’ fixed arbitrarily

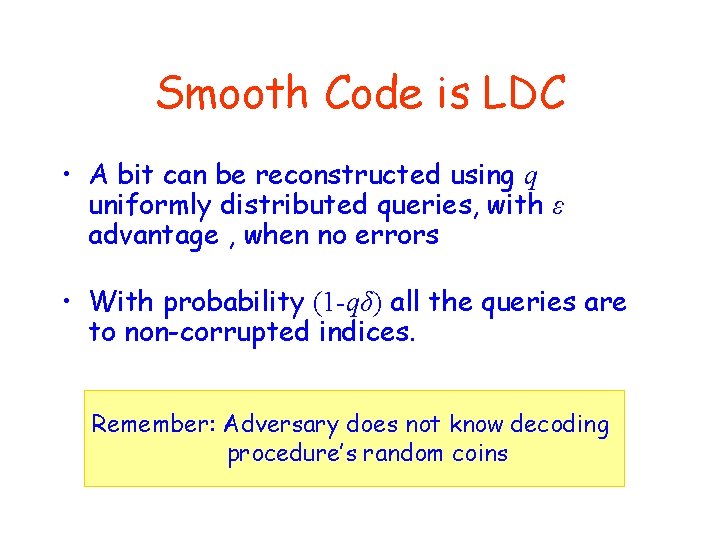

Smooth Code is LDC • A bit can be reconstructed using q uniformly distributed queries, with ε advantage , when no errors • With probability (1 -qδ) all the queries are to non-corrupted indices. Remember: Adversary does not know decoding procedure’s random coins

![Lower Bounds • Non existence for q = 1 [KT] • Non linear rate Lower Bounds • Non existence for q = 1 [KT] • Non linear rate](http://slidetodoc.com/presentation_image_h2/3b08d6d417d7f230e81b3844df0588e5/image-20.jpg)

Lower Bounds • Non existence for q = 1 [KT] • Non linear rate for q ≥ 2 [KT] • Exponential rate for linear code, q=2 [Goldreich et al] • Exponential rate for every code, q=2 [Kerenidis, de Wolf] (using quantum arguments)

![Information Theory basics • Entropy H(x) = -∑Pr[x=i] log(Pr[x=i]) • Mutual Information I(x, y) Information Theory basics • Entropy H(x) = -∑Pr[x=i] log(Pr[x=i]) • Mutual Information I(x, y)](http://slidetodoc.com/presentation_image_h2/3b08d6d417d7f230e81b3844df0588e5/image-21.jpg)

Information Theory basics • Entropy H(x) = -∑Pr[x=i] log(Pr[x=i]) • Mutual Information I(x, y) = H(x)-H(x|y)

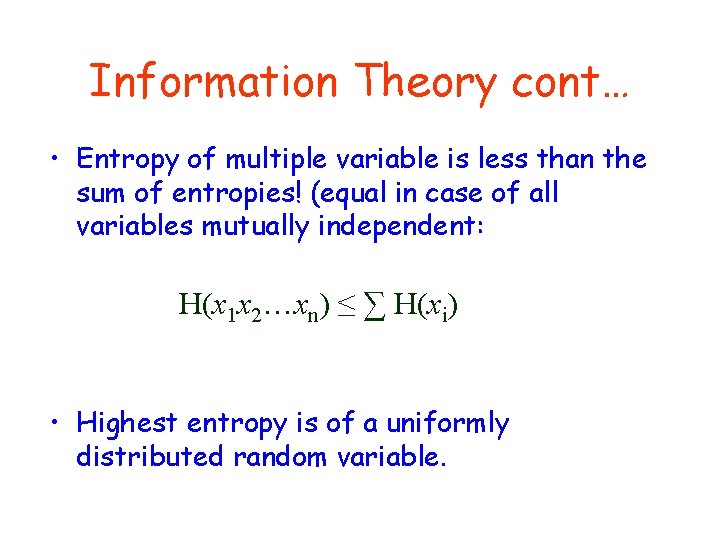

Information Theory cont… • Entropy of multiple variable is less than the sum of entropies! (equal in case of all variables mutually independent: H(x 1 x 2…xn) ≤ ∑ H(xi) • Highest entropy is of a uniformly distributed random variable.

![IT result from [KT] IT result from [KT]](http://slidetodoc.com/presentation_image_h2/3b08d6d417d7f230e81b3844df0588e5/image-23.jpg)

IT result from [KT]

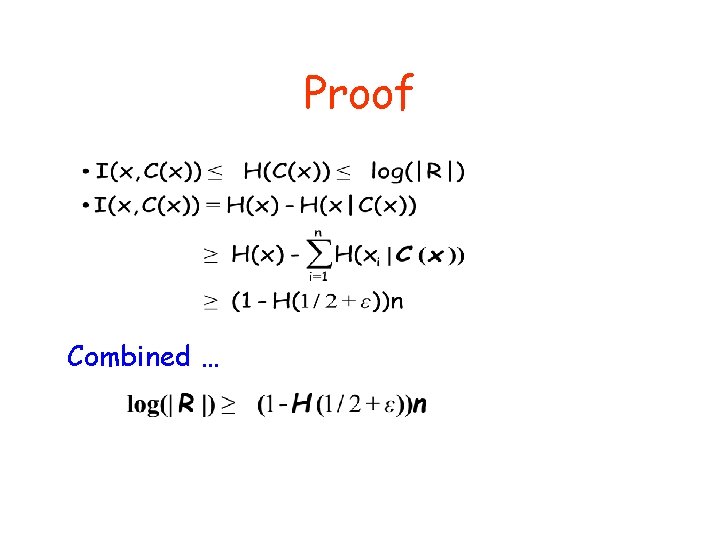

Proof Combined …

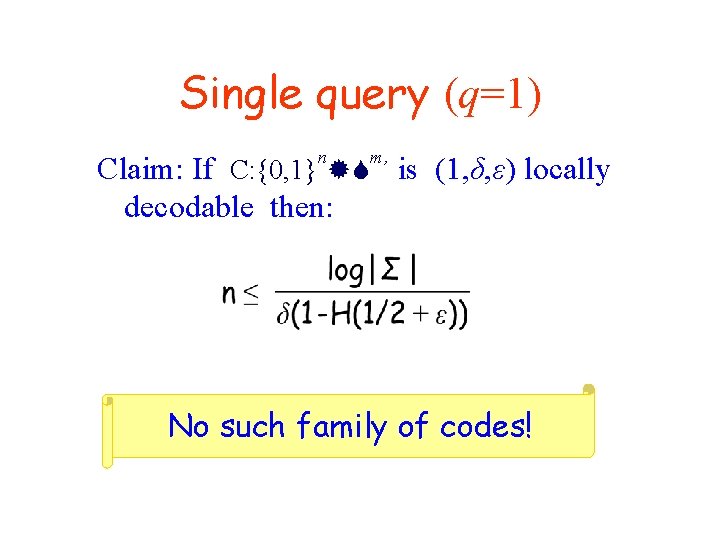

Single query (q=1) n m, Claim: If C: {0, 1} is (1, δ, ε) locally decodable then: No such family of codes!

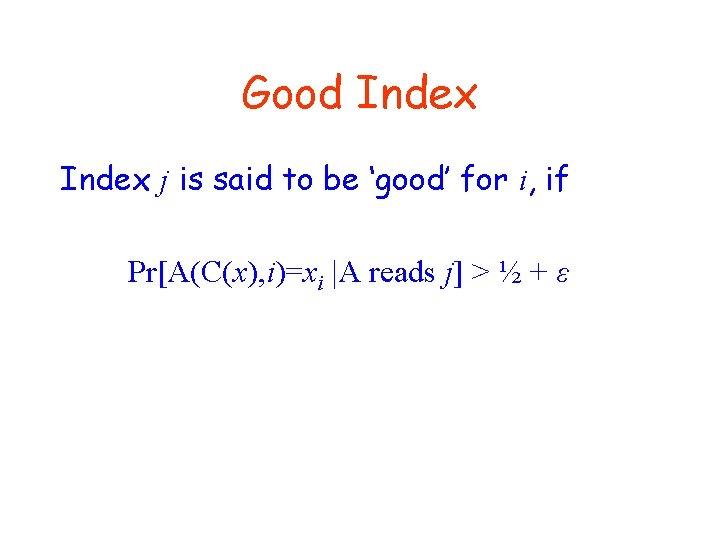

Good Index j is said to be ‘good’ for i, if Pr[A(C(x), i)=xi |A reads j] > ½ + ε

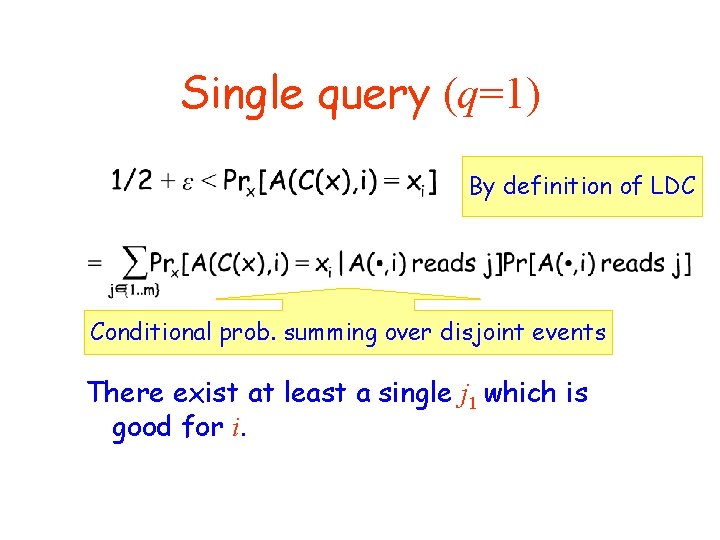

Single query (q=1) By definition of LDC Conditional prob. summing over disjoint events There exist at least a single j 1 which is good for i.

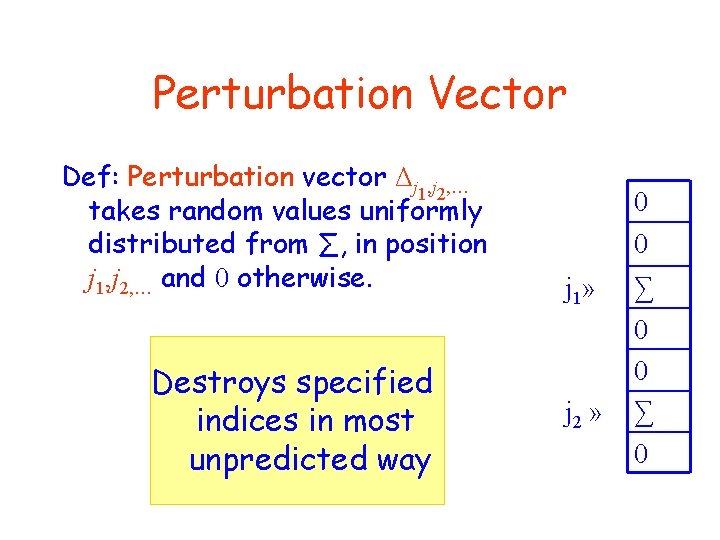

Perturbation Vector Def: Perturbation vector Δj 1, j 2, … takes random values uniformly distributed from ∑, in position j 1, j 2, … and 0 otherwise. Destroys specified indices in most unpredicted way 0 0 j 1» j 2 » ∑ 0 0 ∑ 0

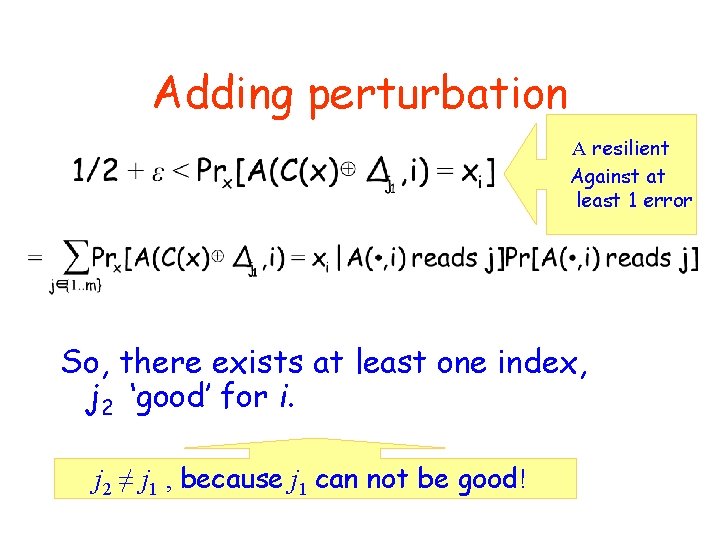

Adding perturbation A resilient Against at least 1 error So, there exists at least one index, j 2 ‘good’ for i. j 2 ≠ j 1 , because j 1 can not be good!

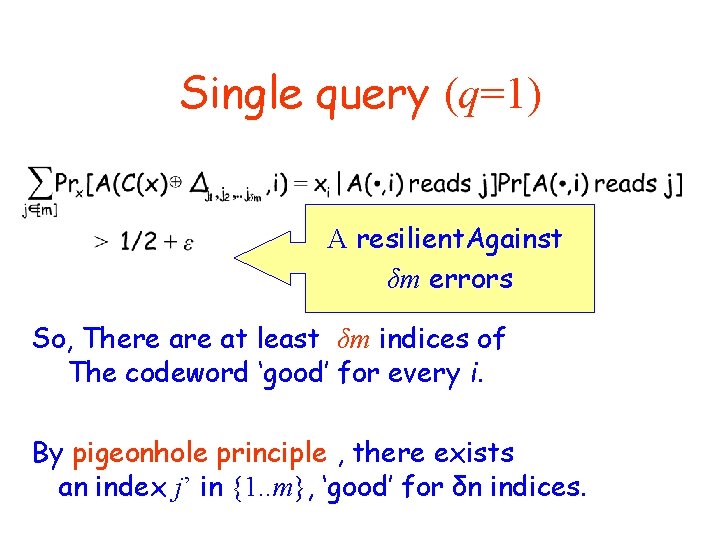

Single query (q=1) A resilient. Against δm errors So, There at least δm indices of The codeword ‘good’ for every i. By pigeonhole principle , there exists an index j’ in {1. . m}, ‘good’ for δn indices.

![Single query (q=1) Think of C(x[1. . δn]) projected on j’ as a function Single query (q=1) Think of C(x[1. . δn]) projected on j’ as a function](http://slidetodoc.com/presentation_image_h2/3b08d6d417d7f230e81b3844df0588e5/image-31.jpg)

Single query (q=1) Think of C(x[1. . δn]) projected on j’ as a function from the δn indices of the input. The range is ∑, and each bit of the input can be reconstructed w. p. ½ + ε. Thus by IT result:

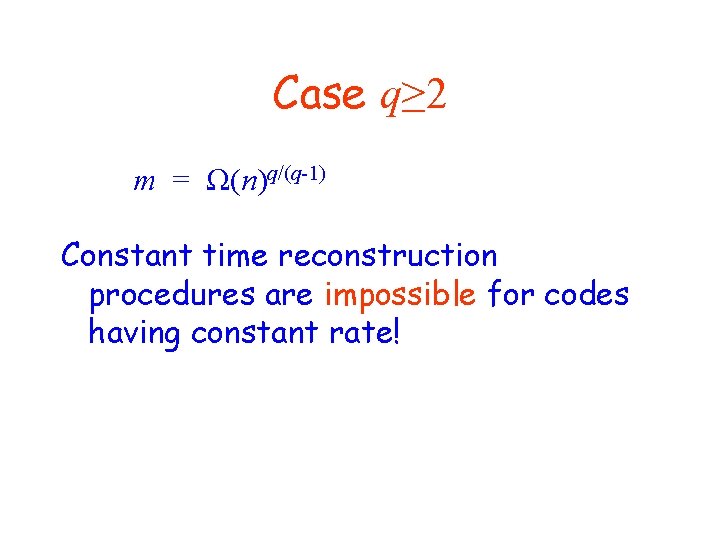

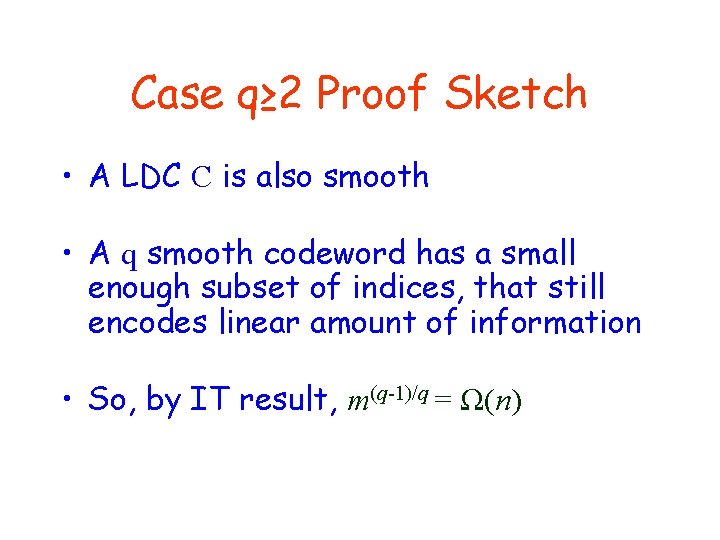

Case q≥ 2 m = Ω(n)q/(q-1) Constant time reconstruction procedures are impossible for codes having constant rate!

Case q≥ 2 Proof Sketch • A LDC C is also smooth • A q smooth codeword has a small enough subset of indices, that still encodes linear amount of information • So, by IT result, m(q-1)/q = Ω(n)

Applications? • Better locally decodable codes have applications to PIR • Applications to the practice of faulttolerant data storage/transmission?

What about Locally Encodable • A ‘Respectable Code’ is resilient against Ω(m) fraction of errors. • We expect a bit of the encoding to depend on many bits of the encoding Otherwise, there exists a bit which influence less than 1/n fraction of the encoding.

Open Issues • Adaptive vs Non-Adaptive Queries guess first q-1 answers with succeess probability ∑q-1 • Closing the gap

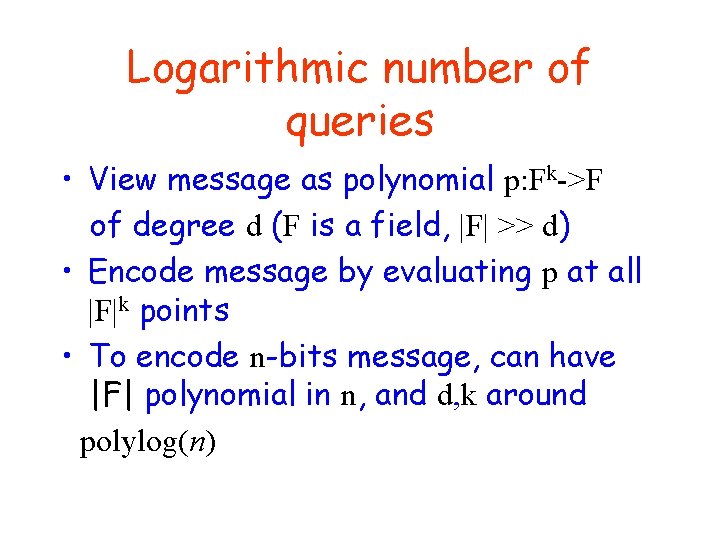

Logarithmic number of queries • View message as polynomial p: Fk->F of degree d (F is a field, |F| >> d) • Encode message by evaluating p at all |F|k points • To encode n-bits message, can have |F| polynomial in n, and d, k around polylog(n)

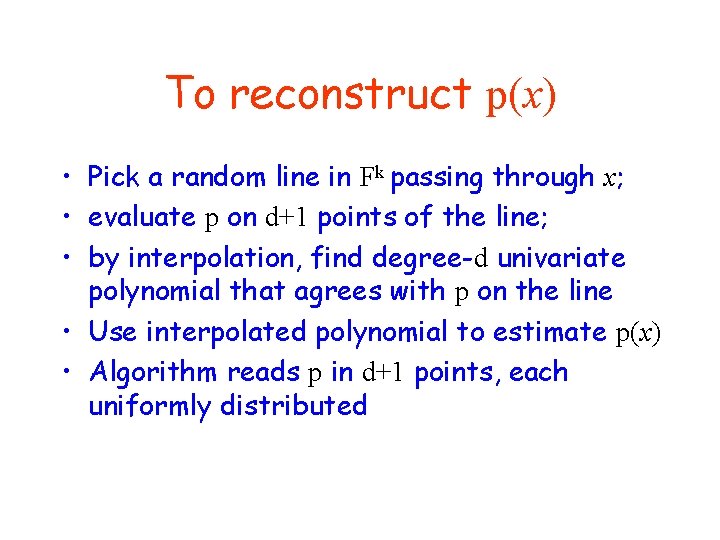

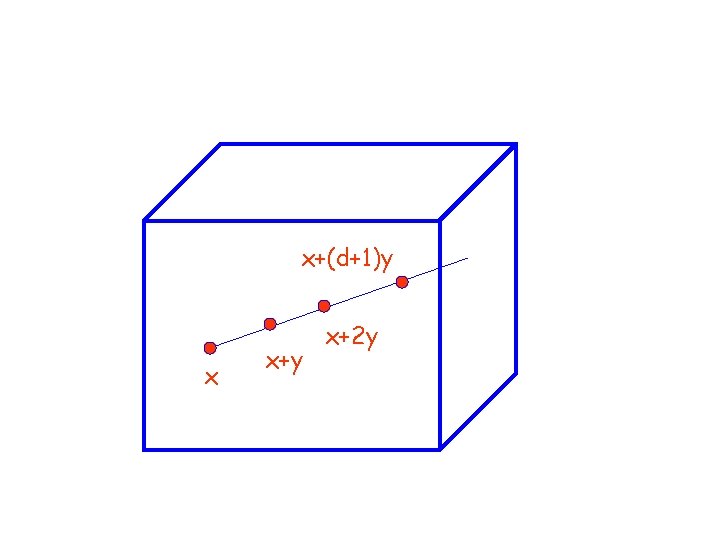

To reconstruct p(x) • Pick a random line in Fk passing through x; • evaluate p on d+1 points of the line; • by interpolation, find degree-d univariate polynomial that agrees with p on the line • Use interpolated polynomial to estimate p(x) • Algorithm reads p in d+1 points, each uniformly distributed

x+(d+1)y x x+y x+2 y

Private Information Retrieval (PIR) • Query a public database, without revealing the queried record. • Example: A broker needs to query NASDAQ database about a stock, but don’t won’t anyone to know he is interested.

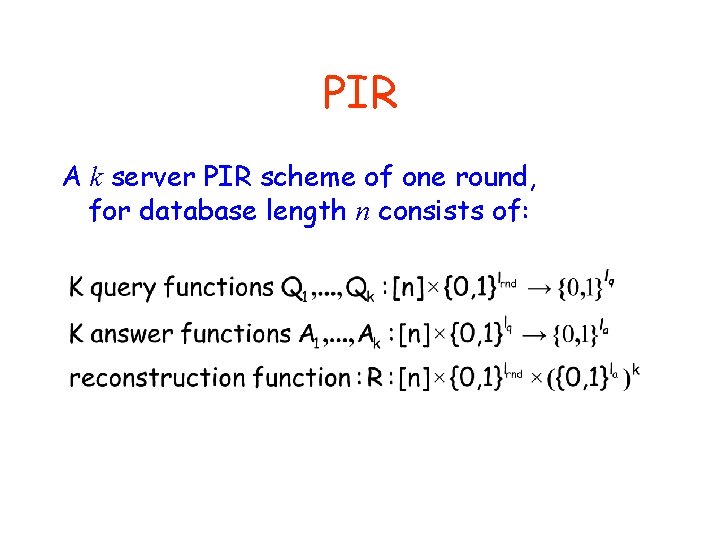

PIR A k server PIR scheme of one round, for database length n consists of:

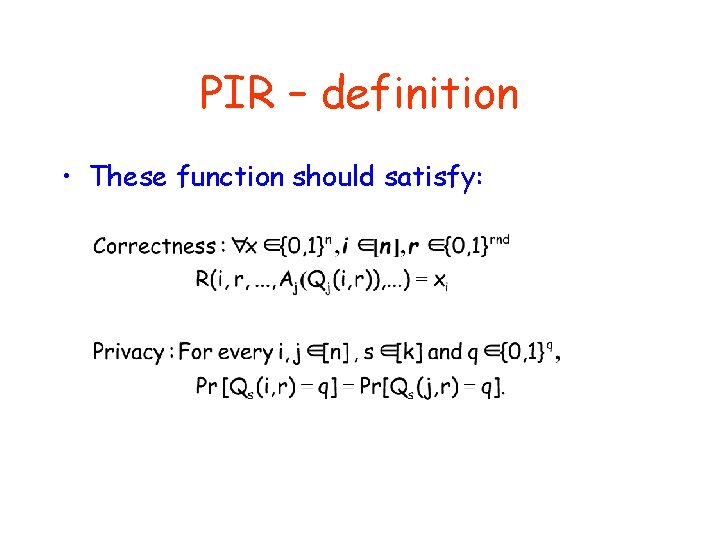

PIR – definition • These function should satisfy:

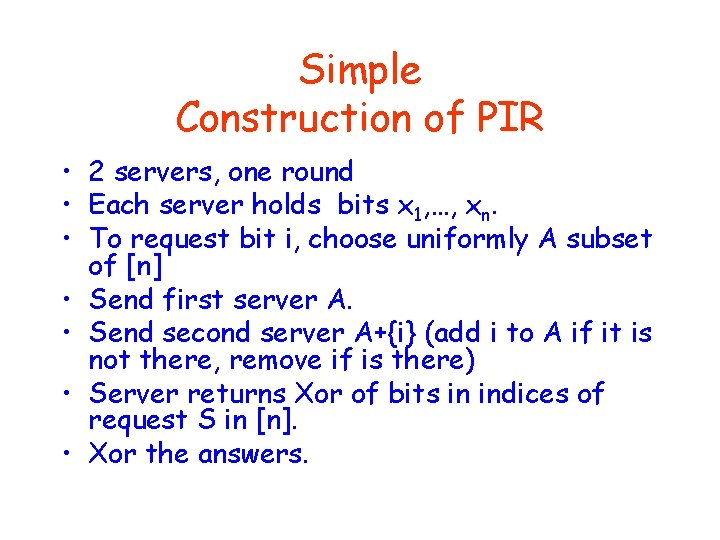

Simple Construction of PIR • 2 servers, one round • Each server holds bits x 1, …, xn. • To request bit i, choose uniformly A subset of [n] • Send first server A. • Send second server A+{i} (add i to A if it is not there, remove if is there) • Server returns Xor of bits in indices of request S in [n]. • Xor the answers.

Lower Bounds On Communication Complexity • To achieve privacy in case of single server, we need n bits message. • (not too far from the one round 2 server scheme we suggested).

Reduction from PIR to LDC • A codeword is a Concatenation of all possible answers from both servers • A query procedure is made of 2 queries to the database

- Slides: 45