Sparse Random Linear Codes are Locally Decodable and Testable

Tali Kaufman (MIT)
Joint work with Madhu Sudan (MIT)

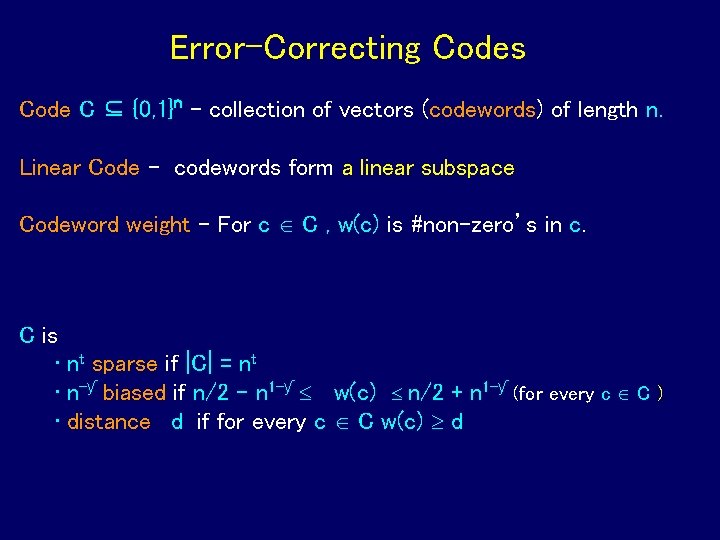

Error-Correcting Codes

Code $C \subseteq \{0,1\}^n$: a collection of vectors (codewords) of length $n$.
Linear code: the codewords form a linear subspace.
Codeword weight: for $c \in C$, $w(c)$ is the number of non-zero coordinates of $c$.

$C$ is
• $n^t$ sparse if $|C| = n^t$
• $n^{-\gamma}$ biased if $n/2 - n^{1-\gamma} \le w(c) \le n/2 + n^{1-\gamma}$ (for every nonzero $c \in C$)
• distance $d$ if $w(c) \ge d$ for every nonzero $c \in C$

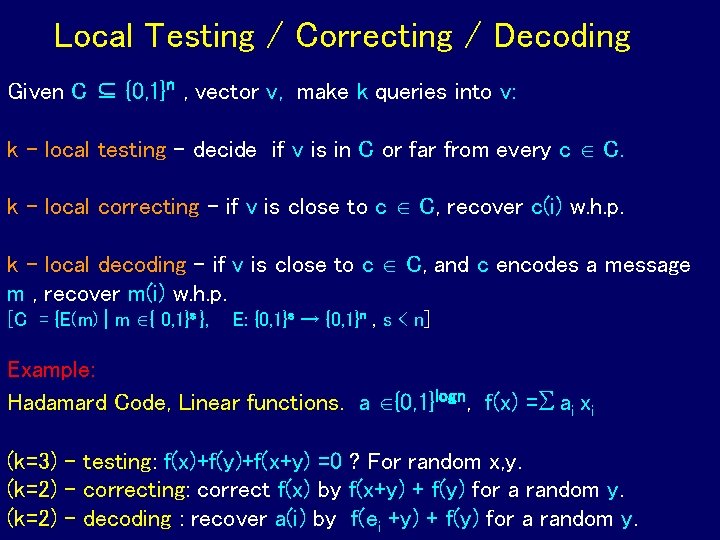

Local Testing / Correcting / Decoding

Given $C \subseteq \{0,1\}^n$ and a vector $v$, make $k$ queries into $v$:
• $k$-local testing: decide if $v$ is in $C$ or far from every $c \in C$.
• $k$-local correcting: if $v$ is close to $c \in C$, recover $c(i)$ w.h.p.
• $k$-local decoding: if $v$ is close to $c \in C$, and $c$ encodes a message $m$, recover $m(i)$ w.h.p. [$C = \{E(m) \mid m \in \{0,1\}^s\}$, $E: \{0,1\}^s \to \{0,1\}^n$, $s < n$]

Example: Hadamard code, linear functions. $a \in \{0,1\}^{\log n}$, $f(x) = \sum_i a_i x_i$.
• ($k=3$) testing: $f(x) + f(y) + f(x+y) = 0$? For random $x, y$.
• ($k=2$) correcting: correct $f(x)$ by $f(x+y) + f(y)$ for a random $y$.
• ($k=2$) decoding: recover $a(i)$ by $f(e_i + y) + f(y)$ for a random $y$.
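The Hadamard example can be sketched in a few lines of Python. This is only an illustration under assumed conventions: a point $x \in \{0,1\}^m$ is stored as an integer whose bit $j$ is $x_j$, the received word is a list indexed by $x$, and the function names (`hadamard_encode`, `linearity_test`, `correct`, `decode`) are hypothetical, not taken from the talk.

```python
import random

def hadamard_encode(a):
    """Truth table of f(x) = sum_i a_i x_i (mod 2) over all x in {0,1}^m."""
    m = len(a)
    a_int = sum(bit << j for j, bit in enumerate(a))
    return [bin(a_int & x).count("1") % 2 for x in range(1 << m)]

def linearity_test(v, m, rng):
    """3-query test: accept iff v(x) + v(y) + v(x+y) = 0 for random x, y."""
    x, y = rng.randrange(1 << m), rng.randrange(1 << m)
    return (v[x] ^ v[y] ^ v[x ^ y]) == 0

def correct(v, m, x, rng):
    """2-query correction of position x: v(x+y) + v(y) for a random y."""
    y = rng.randrange(1 << m)
    return v[x ^ y] ^ v[y]

def decode(v, m, i, rng):
    """2-query decoding of message bit a_i: v(e_i + y) + v(y) for a random y."""
    return correct(v, m, 1 << i, rng)
```

On an uncorrupted codeword every test accepts and every correction or decoding query pair returns the right bit. If a single position of the word is flipped, a decoding attempt errs only when one of its two queries hits that position.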

![Brief History](http://slidetodoc.com/presentation_image_h2/17d92b76ed1a70c74067c113c8ee4121/image-4.jpg)

Brief History

Local Correction: [Blum, Luby, Rubinfeld], in the context of program checking.
Local Testability: [Blum, Luby, Rubinfeld], [Rubinfeld, Sudan], [Goldreich, Sudan]. The core hardness of PCP.
Local Decoding: [Katz, Trevisan], [Yekhanin], in the context of Private Information Retrieval (PIR) schemes.

Most previous results (apart from [K, Litsyn]) focus on specific codes and rely on their “nice” algebraic structure.
This work: results for general codes, based only on their density and distance.

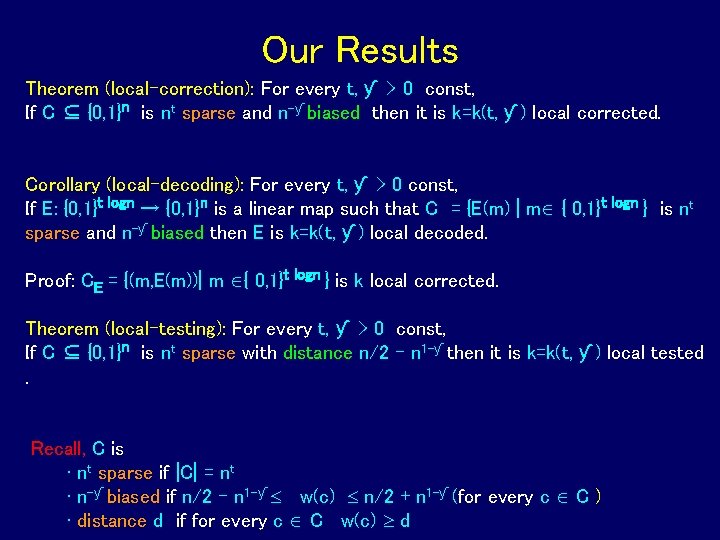

Our Results

Theorem (local correction): For all constants $t, \gamma > 0$, if $C \subseteq \{0,1\}^n$ is $n^t$ sparse and $n^{-\gamma}$ biased, then it is $k = k(t, \gamma)$ locally correctable.

Corollary (local decoding): For all constants $t, \gamma > 0$, if $E: \{0,1\}^{t \log n} \to \{0,1\}^n$ is a linear map such that $C = \{E(m) \mid m \in \{0,1\}^{t \log n}\}$ is $n^t$ sparse and $n^{-\gamma}$ biased, then $E$ is $k = k(t, \gamma)$ locally decodable.
Proof: $C_E = \{(m, E(m)) \mid m \in \{0,1\}^{t \log n}\}$ is $k$ locally correctable.

Theorem (local testing): For all constants $t, \gamma > 0$, if $C \subseteq \{0,1\}^n$ is $n^t$ sparse with distance $n/2 - n^{1-\gamma}$, then it is $k = k(t, \gamma)$ locally testable.

Recall, $C$ is
• $n^t$ sparse if $|C| = n^t$
• $n^{-\gamma}$ biased if $n/2 - n^{1-\gamma} \le w(c) \le n/2 + n^{1-\gamma}$ (for every nonzero $c \in C$)
• distance $d$ if $w(c) \ge d$ for every nonzero $c \in C$

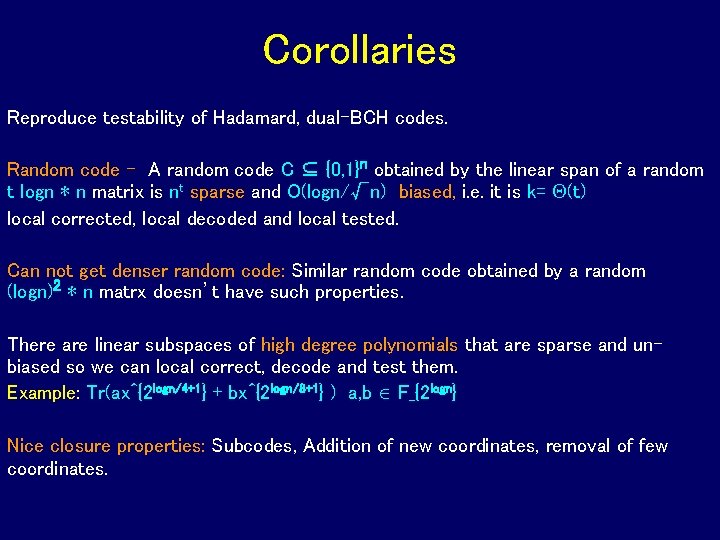

Corollaries

• Reproduces the testability of Hadamard and dual-BCH codes.
• Random code: a random code $C \subseteq \{0,1\}^n$ obtained as the linear span of a random $t \log n \times n$ matrix is $n^t$ sparse and $O(\log n / \sqrt{n})$ biased, i.e. it is locally correctable, locally decodable and locally testable with $k = k(t)$ queries.
• Cannot get denser random codes: a similar random code obtained from a random $(\log n)^2 \times n$ matrix does not have these properties.
• There are linear subspaces of high-degree polynomials that are sparse and unbiased, so we can locally correct, decode and test them. Example: $\mathrm{Tr}(a x^{2^{\log n / 4} + 1} + b x^{2^{\log n / 8} + 1})$, $a, b \in \mathbb{F}_{2^{\log n}}$.
• Nice closure properties: subcodes, addition of new coordinates, removal of a few coordinates.
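The random-code corollary can be explored numerically. The sketch below is an illustration only: it assumes base-2 logarithms, a power-of-two block length small enough to enumerate every linear combination, and hypothetical helper names (`random_code_weights`, `max_bias`).

```python
import math
import random

def random_code_weights(n, t, seed=0):
    """Weights of all linear combinations of a random (t * log2 n) x n binary matrix.

    Rows are stored as n-bit integers; a codeword is the XOR of a subset of rows.
    If the rows happen to be dependent, some codewords are listed more than once.
    """
    rng = random.Random(seed)
    rows = [rng.getrandbits(n) for _ in range(t * int(math.log2(n)))]
    weights = []
    for mask in range(1 << len(rows)):
        word = 0
        for j, row in enumerate(rows):
            if (mask >> j) & 1:
                word ^= row
        weights.append(bin(word).count("1"))
    return weights

def max_bias(n, weights):
    """Largest |w(c) - n/2| / n over the listed codewords of nonzero weight."""
    return max(abs(w - n / 2) for w in weights if w > 0) / n
```

For example, `max_bias(256, random_code_weights(256, 1))` reports how far the weights of one sampled code with $n = 256$ and $t = 1$ stray from $n/2$, which can be compared against $\log n / \sqrt{n}$.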

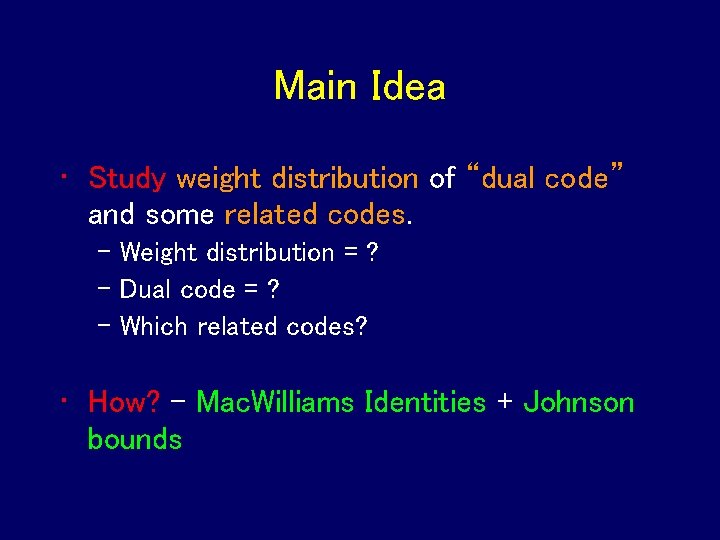

Main Idea

• Study the weight distribution of the “dual code” and some related codes.
  – Weight distribution = ?
  – Dual code = ?
  – Which related codes?
• How?
  – MacWilliams identities + Johnson bounds

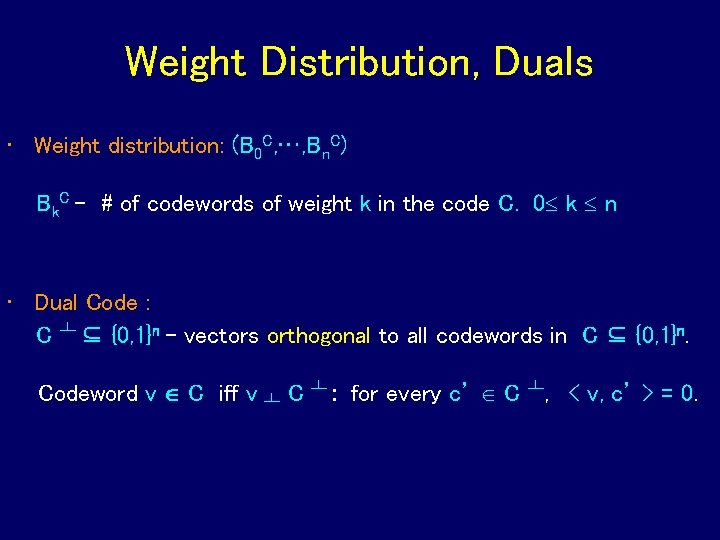

Weight Distribution, Duals

• Weight distribution: $(B_0^C, \dots, B_n^C)$, where $B_k^C$ is the number of codewords of weight $k$ in the code $C$, $0 \le k \le n$.
• Dual code: $C^\perp \subseteq \{0,1\}^n$, the vectors orthogonal to all codewords in $C \subseteq \{0,1\}^n$. A vector $v \in C$ iff $v \perp C^\perp$: for every $c' \in C^\perp$, $\langle v, c' \rangle = 0$.
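Both definitions can be checked by brute force on a tiny code. The sketch below is an illustration with hypothetical helper names (`span`, `dual`, `weight_distribution`); it enumerates all of $\{0,1\}^n$, so it is only meant for very small $n$.

```python
from itertools import product

def span(generators):
    """All codewords (tuples of bits) in the F_2-linear span of the generators."""
    n = len(generators[0])
    words = set()
    for coeffs in product([0, 1], repeat=len(generators)):
        word = tuple(sum(c * g[p] for c, g in zip(coeffs, generators)) % 2
                     for p in range(n))
        words.add(word)
    return words

def dual(code, n):
    """All vectors in {0,1}^n orthogonal (mod 2) to every codeword of `code`."""
    return {u for u in product([0, 1], repeat=n)
            if all(sum(a * b for a, b in zip(u, c)) % 2 == 0 for c in code)}

def weight_distribution(code, n):
    """Return [B_0, ..., B_n], where B_k counts the codewords of weight k."""
    dist = [0] * (n + 1)
    for c in code:
        dist[sum(c)] += 1
    return dist

# Example code (an assumption for illustration): the length-7 punctured
# Hadamard (simplex) code, whose dual is the [7,4] Hamming code.
G = [[(x >> j) & 1 for x in range(1, 8)] for j in range(3)]
C = span(G)
print(weight_distribution(C, 7))           # [1, 0, 0, 0, 7, 0, 0, 0]
print(weight_distribution(dual(C, 7), 7))  # [1, 0, 0, 7, 7, 0, 0, 1]
```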

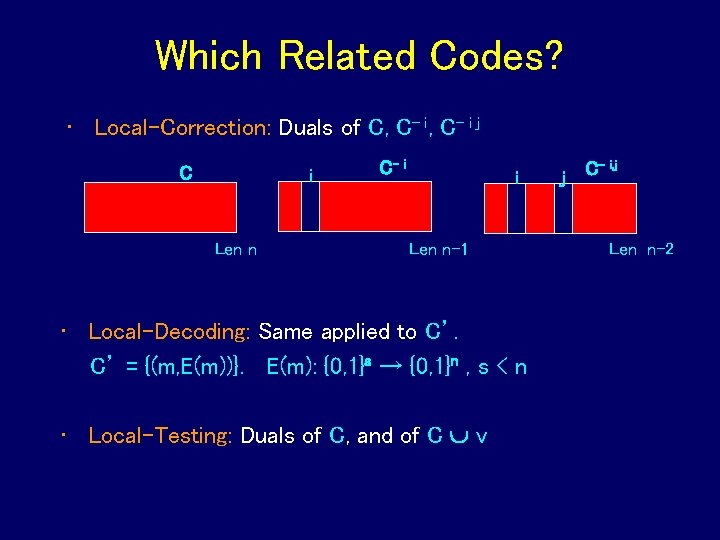

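Which Related Codes?

• Local correction: duals of $C$, $C^{-i}$ and $C^{-i,j}$, where $C$ has length $n$, $C^{-i}$ is $C$ with coordinate $i$ removed (length $n-1$), and $C^{-i,j}$ is $C$ with coordinates $i$ and $j$ removed (length $n-2$).
• Local decoding: the same applied to $C' = \{(m, E(m))\}$, where $E: \{0,1\}^s \to \{0,1\}^n$, $s < n$.
• Local testing: duals of $C$ and of $C_v = C \cup (C + v)$, the code spanned by $C$ and $v$.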

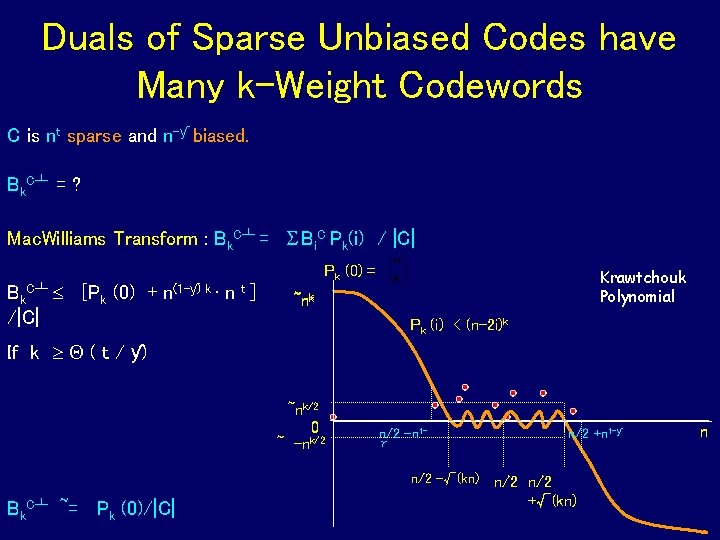

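Duals of Sparse Unbiased Codes have Many k-Weight Codewords

$C$ is $n^t$ sparse and $n^{-\gamma}$ biased. $B_k^{C^\perp} = ?$

MacWilliams transform: $B_k^{C^\perp} = \sum_i B_i^C P_k(i) / |C|$

Krawtchouk polynomial: $P_k(0) = \binom{n}{k} \sim n^k$ and $P_k(i) < (n - 2i)^k$.

Every nonzero codeword has weight within $n^{1-\gamma}$ of $n/2$, so each of the at most $n^t$ nonzero terms has magnitude about $n^{(1-\gamma)k}$:

$B_k^{C^\perp} = [P_k(0) \pm n^{(1-\gamma)k} \cdot n^t] / |C|$

If $k = \Omega(t / \gamma)$: $B_k^{C^\perp} \approx P_k(0) / |C|$

[Figure: plot of $P_k(i)$ for $0 \le i \le n$, with value labels $\sim n^k$ (at $i = 0$), $\sim n^{k/2}$ and $\sim -n^{k/2}$ (around $n/2$). Marked points on the axis: $0$, $n/2 - n^{1-\gamma}$, $n/2 - \sqrt{kn}$, $n/2 + \sqrt{kn}$, $n/2 + n^{1-\gamma}$, $n$.]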
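The MacWilliams transform is easy to evaluate directly for small parameters. The sketch below assumes the standard binary Krawtchouk polynomial $P_k(i) = \sum_j (-1)^j \binom{i}{j} \binom{n-i}{k-j}$; the function names are hypothetical, and the example code (the length-7 simplex code) is chosen only for illustration.

```python
from math import comb

def krawtchouk(n, k, i):
    """Binary Krawtchouk polynomial P_k(i) = sum_j (-1)^j C(i, j) C(n - i, k - j)."""
    return sum((-1) ** j * comb(i, j) * comb(n - i, k - j) for j in range(k + 1))

def macwilliams(dist):
    """Weight distribution of the dual code, given dist = [B_0, ..., B_n] of C."""
    n = len(dist) - 1
    size = sum(dist)  # |C|
    return [sum(dist[i] * krawtchouk(n, k, i) for i in range(n + 1)) // size
            for k in range(n + 1)]

# Length-7 simplex code: one zero word and seven words of weight 4.
print(macwilliams([1, 0, 0, 0, 7, 0, 0, 0]))  # [1, 0, 0, 7, 7, 0, 0, 1]
```

The output is the weight distribution of the [7,4] Hamming code, the dual of the simplex code, and `krawtchouk(n, k, 0)` equals $\binom{n}{k}$, the dominant term in the estimate above.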

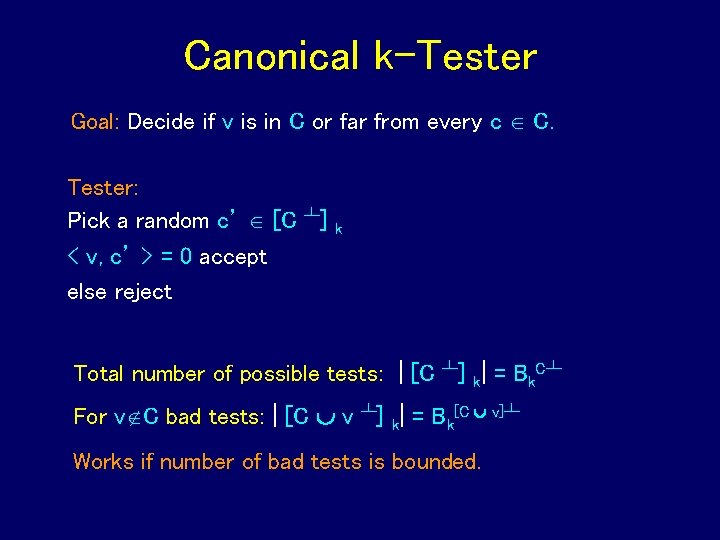

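Canonical k-Tester

Goal: decide if $v$ is in $C$ or far from every $c \in C$.

Tester: pick a random $c' \in [C^\perp]_k$ (the weight-$k$ codewords of $C^\perp$). If $\langle v, c' \rangle = 0$ accept, else reject.

Total number of possible tests: $|[C^\perp]_k| = B_k^{C^\perp}$.

For $v \notin C$, bad tests: $|[C_v^\perp]_k| = B_k^{[C_v]^\perp}$, where $C_v = C \cup (C + v)$ is the code spanned by $C$ and $v$.

Works if the number of bad tests is bounded.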
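A brute-force version of the canonical tester, as a sketch for very small codes. It assumes the code is given by generator rows and enumerates all weight-$k$ supports, which is feasible only for tiny $n$ and $k$; the function names are hypothetical.

```python
import random
from itertools import combinations

def weight_k_dual_words(generators, k):
    """Supports of all weight-k vectors orthogonal to every generator (hence to C)."""
    n = len(generators[0])
    return [s for s in combinations(range(n), k)
            if all(sum(g[p] for p in s) % 2 == 0 for g in generators)]

def run_canonical_tester(v, dual_words, rng):
    """Pick a random weight-k dual word c' and accept iff <v, c'> = 0."""
    support = rng.choice(dual_words)
    return sum(v[p] for p in support) % 2 == 0

def accepting_tests(v, dual_words):
    """Number of weight-k dual words orthogonal to v (the bad tests when v is not in C)."""
    return sum(1 for s in dual_words if sum(v[p] for p in s) % 2 == 0)
```

For the length-7 simplex code with $k = 3$ there are 7 possible tests. Every codeword passes all of them, while a vector of weight 1 passes only 4, so the tester rejects it with probability $3/7$.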

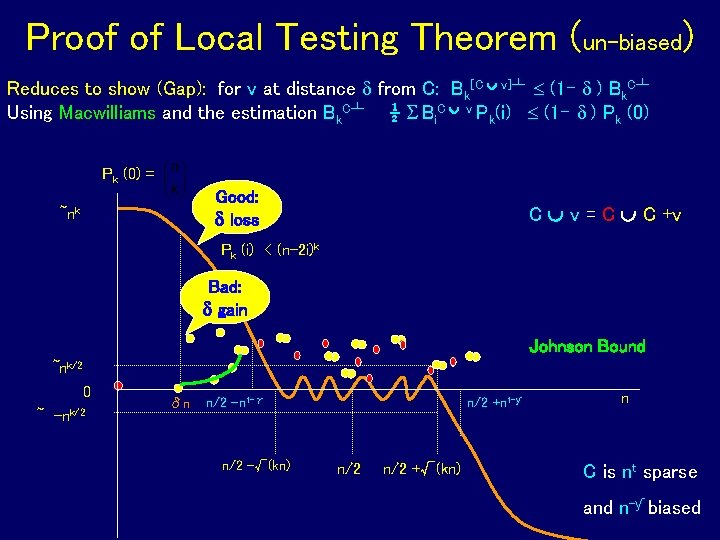

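Proof of Local Testing Theorem (unbiased case)

$C$ is $n^t$ sparse and $n^{-\gamma}$ biased.

Reduces to showing (Gap): for $v$ at distance $\delta$ from $C$: $B_k^{[C_v]^\perp} \le (1 - \epsilon) B_k^{C^\perp}$, where $C_v = C \cup (C + v)$.

Using MacWilliams (with $|C_v| = 2|C|$) and the estimate $B_k^{C^\perp} \approx P_k(0) / |C|$, this becomes:

$\frac{1}{2} \sum_i B_i^{C_v} P_k(i) \le (1 - \epsilon) P_k(0)$, with $P_k(i) < (n - 2i)^k$.

[Figure: plot of $P_k(i)$ for $0 \le i \le n$, annotated “Good: loss”, “Bad: gain” and “Johnson Bound”, with value labels $\sim n^k$, $\sim n^{k/2}$ and $\sim -n^{k/2}$. Marked points on the axis: $0$, $\delta n$, $n/2 - n^{1-\gamma}$, $n/2 - \sqrt{kn}$, $n/2 + \sqrt{kn}$, $n/2 + n^{1-\gamma}$, $n$.]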

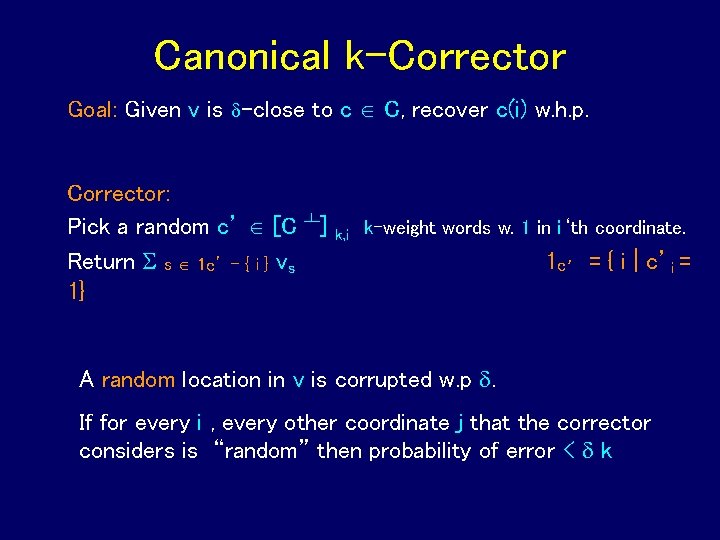

Canonical k-Corrector

Goal: given $v$ that is $\delta$-close to $c \in C$, recover $c(i)$ w.h.p.

Corrector: pick a random $c' \in [C^\perp]_{k,i}$ and return $\sum_{s \in 1_{c'} - \{i\}} v_s$.

$[C^\perp]_{k,i}$: the $k$-weight words of $C^\perp$ with 1 in the $i$'th coordinate. $1_{c'} = \{ s \mid c'_s = 1 \}$.

A random location in $v$ is corrupted w.p. $\delta$. If for every $i$, every other coordinate $j$ that the corrector considers is “random”, then the probability of error is $< k\delta$.
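The corrector can be sketched the same way by brute force. This assumes a tiny code given by generator rows and at least one weight-$k$ dual word through coordinate $i$; the function names are hypothetical.

```python
import random
from itertools import combinations

def dual_words_through(generators, k, i):
    """Supports of the weight-k dual words that have a 1 in coordinate i."""
    n = len(generators[0])
    return [s for s in combinations(range(n), k)
            if i in s and all(sum(g[p] for p in s) % 2 == 0 for g in generators)]

def canonical_correct(v, i, words_through_i, rng):
    """Pick a random dual word c' through i; return the sum of v over supp(c') minus {i}."""
    support = rng.choice(words_through_i)
    return sum(v[p] for p in support if p != i) % 2
```

The corrector never reads $v_i$ itself, so it errs only when an odd number of the other $k - 1$ queried coordinates are corrupted. In the length-7 simplex code with $k = 3$, a single corrupted coordinate $j \ne i$ spoils exactly one of the three dual words through $i$.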

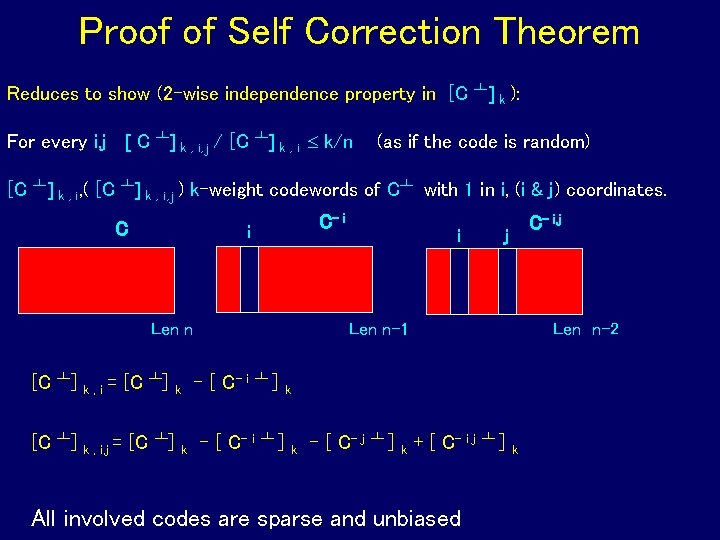

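Proof of Self Correction Theorem

Reduces to showing (2-wise independence property in $[C^\perp]_k$): for every $i, j$: $|[C^\perp]_{k,i,j}| / |[C^\perp]_{k,i}| \approx k/n$ (as if the code is random).

$[C^\perp]_{k,i}$ ($[C^\perp]_{k,i,j}$): the $k$-weight codewords of $C^\perp$ with 1 in the $i$ ($i$ and $j$) coordinates.

[Figure: $C$ (length $n$); $C^{-i}$, with coordinate $i$ removed (length $n-1$); $C^{-i,j}$, with coordinates $i$ and $j$ removed (length $n-2$).]

$|[C^\perp]_{k,i}| = |[C^\perp]_k| - |[(C^{-i})^\perp]_k|$

$|[C^\perp]_{k,i,j}| = |[C^\perp]_k| - |[(C^{-i})^\perp]_k| - |[(C^{-j})^\perp]_k| + |[(C^{-i,j})^\perp]_k|$

All involved codes are sparse and unbiased.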
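The two counting identities can be verified by brute force on a small code. The sketch below assumes the code is given by generator rows, so the punctured codes are spanned by the punctured rows; the helper names are hypothetical.

```python
from itertools import combinations

def count_dual_words(generators, k, must_contain=()):
    """Count weight-k vectors orthogonal to all generators whose support contains must_contain."""
    n = len(generators[0])
    return sum(1 for s in combinations(range(n), k)
               if set(must_contain) <= set(s)
               and all(sum(g[p] for p in s) % 2 == 0 for g in generators))

def puncture(generators, removed):
    """Generators of the code with the coordinates in `removed` deleted."""
    return [[bit for p, bit in enumerate(g) if p not in removed] for g in generators]

def check_inclusion_exclusion(generators, k, i, j):
    """Check both identities for the weight-k dual words through i, and through i and j."""
    b = count_dual_words(generators, k)
    b_i = count_dual_words(puncture(generators, {i}), k)
    b_j = count_dual_words(puncture(generators, {j}), k)
    b_ij = count_dual_words(puncture(generators, {i, j}), k)
    through_i = count_dual_words(generators, k, (i,))
    through_ij = count_dual_words(generators, k, (i, j))
    return through_i == b - b_i and through_ij == b - b_i - b_j + b_ij
```

For the length-7 simplex code with $k = 3$ the counts are 7 dual words in total, 3 through any coordinate $i$, and 1 through any pair $i, j$, so the ratio is $1/3 = (k-1)/(n-1)$, close to the $k/n$ heuristic.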

Open Issues

• Local correction based on distance.
• Obtain general k-local correction, local decoding and local testing results for denser codes. Which denser codes?

Thank You!!!
