New Consistency Orchestrators for Emerging Distributed Systems

Shegufta Bakht Ahsan, 03 April 2020

Doctoral Committee: Prof. Indranil Gupta (Chair), Prof. Klara Nahrstedt, Prof. Nikita Borisov, Dr. Nitin Agrawal

Dependency on Distributed Systems

Consistency, Leader Election, Failure Detection, Membership, …

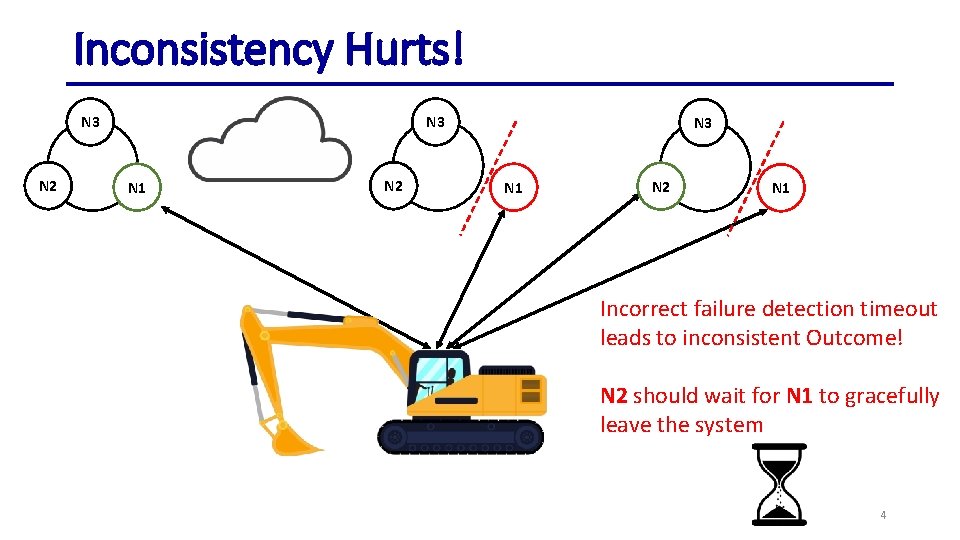

Inconsistency Hurts!

(Figure: nodes N1, N2, and N3.) An incorrect failure-detection timeout leads to an inconsistent outcome: N2 should wait for N1 to gracefully leave the system.

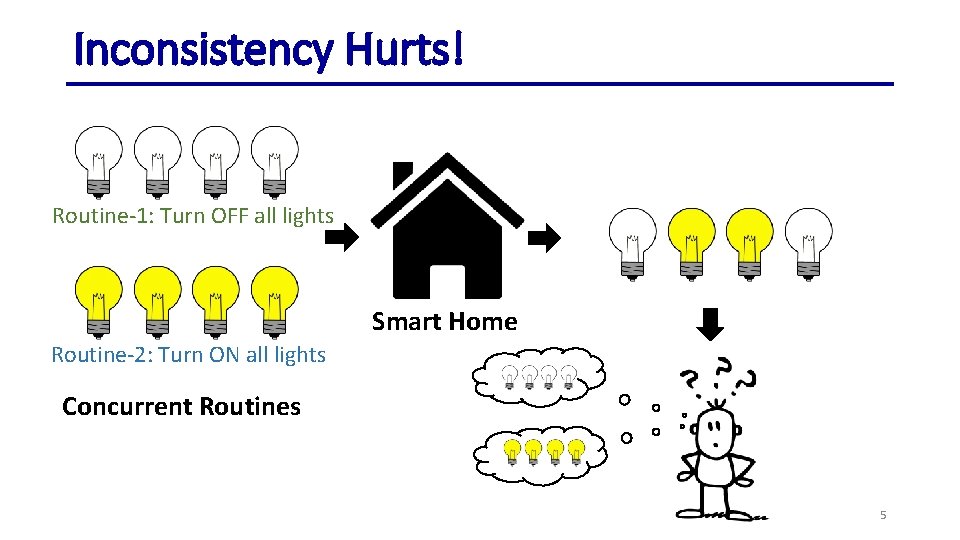

Inconsistency Hurts!

Smart home, concurrent routines: Routine-1 turns OFF all lights while Routine-2 turns ON all lights.

In Search of an Efficient Orchestrator!

Orchestrators
- Dedicated entities that use specialized protocols to coordinate the disparate components of distributed systems
- Typically deployed on multiple coordinating nodes to ensure fault tolerance

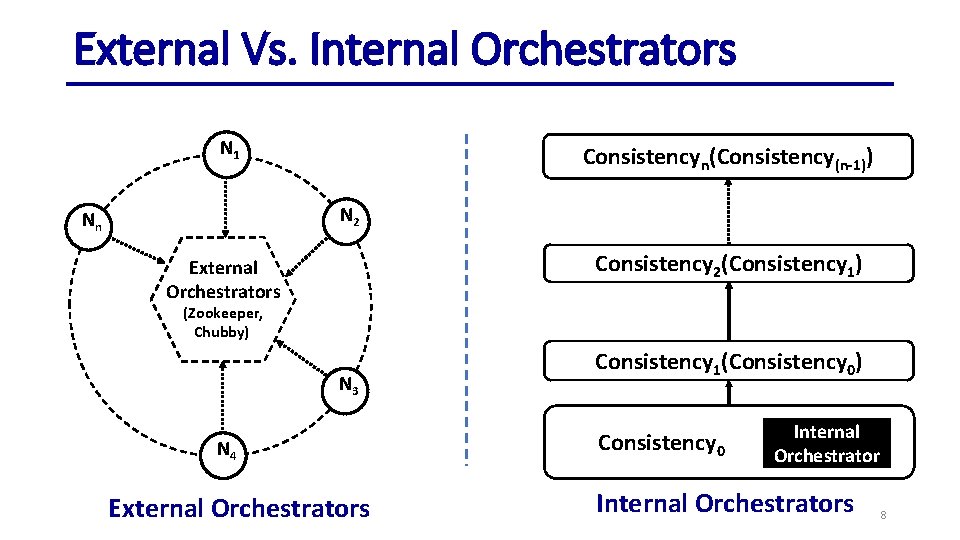

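External vs. Internal Orchestrators

(Figure: nodes N1 … Nn. With external orchestrators such as ZooKeeper and Chubby, consistency is layered. Consistency 0 is provided by the external orchestrator, Consistency 1 is built on Consistency 0, Consistency 2 on Consistency 1, and so on up to Consistency n built on Consistency n-1. This is contrasted with internal orchestrators.)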

Thesis Statement: We present new internal orchestrators for maintaining consistency in both edge-based and cloud-based distributed systems.

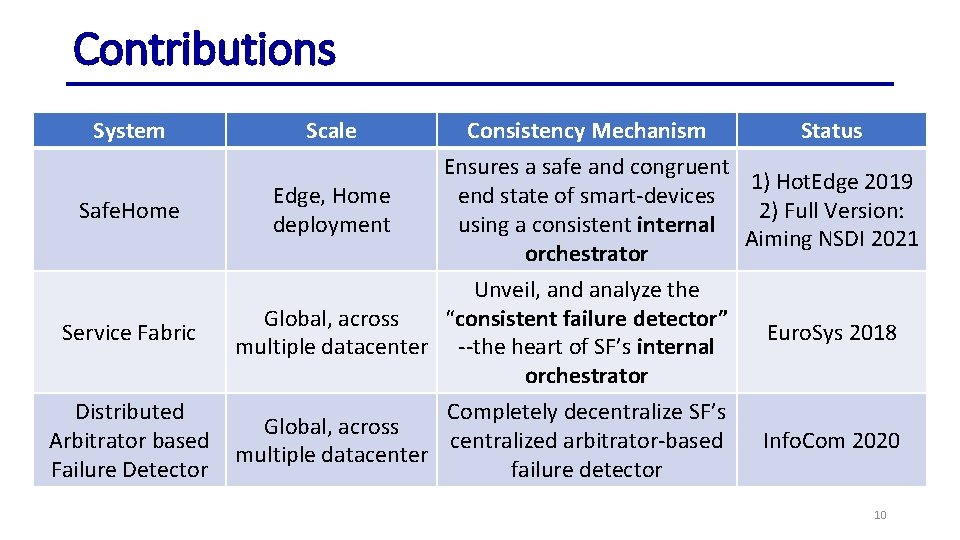

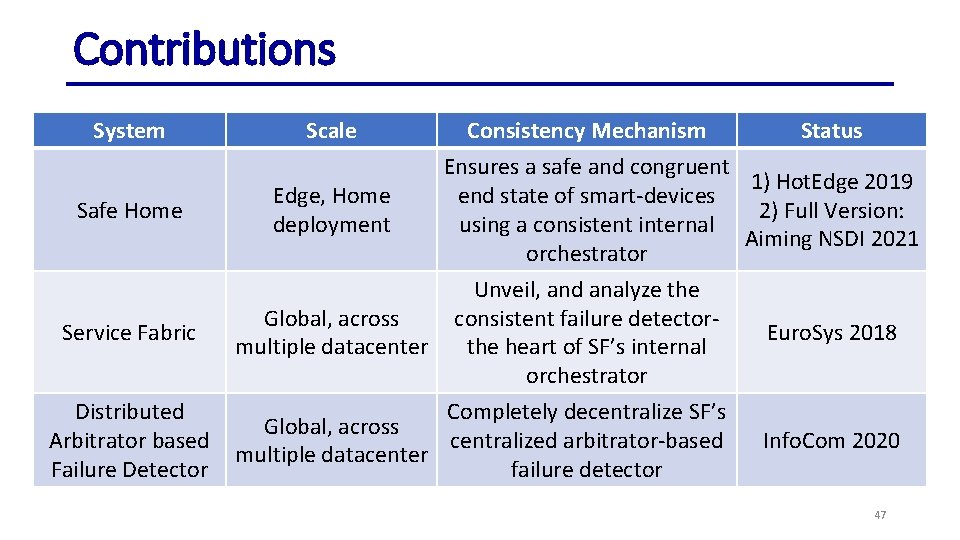

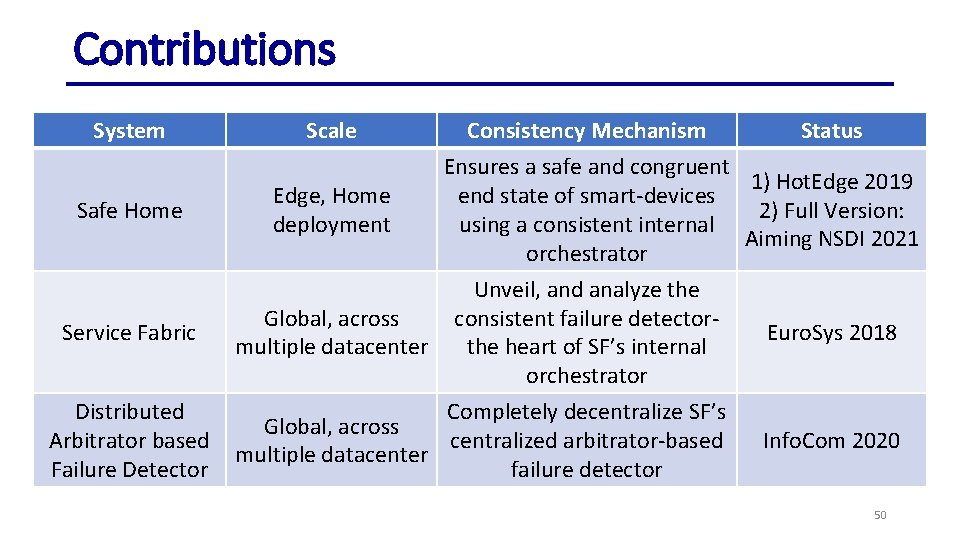

Contributions

| System | Scale | Consistency Mechanism | Status |
|---|---|---|---|
| SafeHome | Edge, home deployment | Ensures a safe and congruent end state of smart devices using a consistent internal orchestrator | 1) HotEdge 2019; 2) Full version: aiming for NSDI 2021 |
| Service Fabric | Global, across multiple datacenters | Unveils and analyzes the "consistent failure detector", the heart of SF's internal orchestrator | EuroSys 2018 |
| Distributed Arbitrator-based Failure Detector | Global, across multiple datacenters | Completely decentralizes SF's centralized arbitrator-based failure detector | INFOCOM 2020 |

Home, SafeHome: Smart Home Reliability with Visibility and Atomicity
- At prelim: proposed the idea
- Workshop version: HotEdge 2019
- Full version: aiming for NSDI 2021

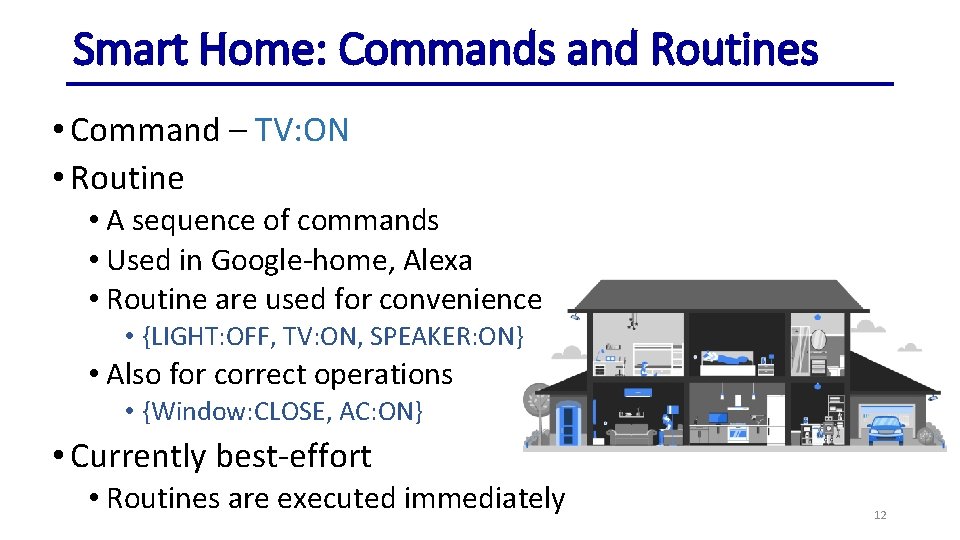

Smart Home: Commands and Routines
- Command: e.g., TV: ON
- Routine: a sequence of commands
  - Used in Google Home and Alexa
  - Routines are used for convenience, e.g., {LIGHT: OFF, TV: ON, SPEAKER: ON}
  - Also for correct operation, e.g., {Window: CLOSE, AC: ON}
- Currently best-effort: routines are executed immediately

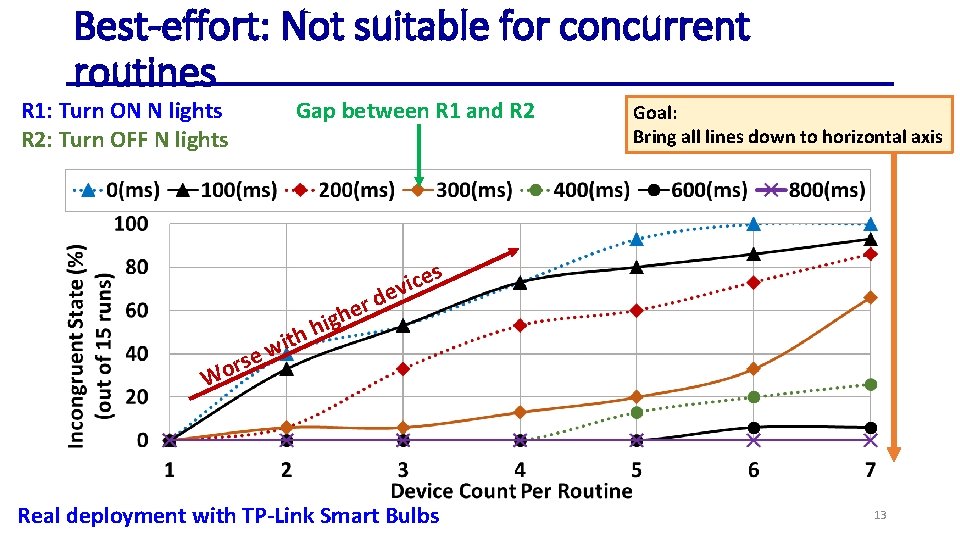

Best-effort: Not suitable for concurrent routines

(Figure: real deployment with TP-Link smart bulbs. R1 turns ON N lights and R2 turns OFF N lights, and the plot shows the gap between R1 and R2. Goal: bring all lines down to the horizontal axis.)
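
The following is a minimal Python sketch of the best-effort problem, not SafeHome code. Two routines issue one command per light with no isolation. A seeded random choice decides whose next command lands first, which is an assumption standing in for network and device delays. Interleavings can leave the lights in a mixed state that no serial order of R1 and R2 would produce.

```python
import random

def run_best_effort(n_lights, seed):
    """Interleave R1 (all lights ON) and R2 (all lights OFF) with no isolation.

    Each routine walks the lights in index order; a seeded random choice
    decides which routine's next command reaches its bulb next.
    """
    rng = random.Random(seed)
    state = ["OFF"] * n_lights
    pending = {"R1": list(range(n_lights)), "R2": list(range(n_lights))}
    action = {"R1": "ON", "R2": "OFF"}
    while pending["R1"] or pending["R2"]:
        routine = rng.choice([r for r in ("R1", "R2") if pending[r]])
        light = pending[routine].pop(0)
        state[light] = action[routine]  # last writer wins on each bulb
    return state

def is_congruent(state):
    """A serial run (R1 then R2, or R2 then R1) leaves every light identical."""
    return len(set(state)) == 1

mixed = [s for s in range(20) if not is_congruent(run_best_effort(10, s))]
print(f"{len(mixed)} of 20 random interleavings ended in a mixed state")
```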

We Argue That…
- A smart home orchestrator should:
  - Properly isolate concurrent routines (serializability)
  - Ensure atomicity (all or nothing): for the routine {Window: CLOSE; AC: ON}, roll back if either of these commands fails
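
Here is a minimal sketch of the all-or-nothing rule. It assumes a hypothetical `Device` stub whose `set` method raises a hypothetical `DeviceError` when the device is unreachable; neither name comes from SafeHome. Commands run in order, and an undo log records each device's previous state. On failure, the applied commands are rolled back in reverse order. Note that the rollback itself is visible: the window closes and then reopens.

```python
class DeviceError(Exception):
    """Raised when a command cannot be applied to a device."""

class Device:
    """Hypothetical device stub that remembers its state."""

    def __init__(self, name, state, reachable=True):
        self.name, self.state, self.reachable = name, state, reachable

    def set(self, value):
        if not self.reachable:
            raise DeviceError(f"{self.name} is unreachable")
        self.state = value

def run_atomic(devices, routine):
    """Apply (device_name, value) commands in order; on failure, undo the
    commands already applied, newest first, then re-raise (all or nothing)."""
    undo_log = []
    try:
        for name, value in routine:
            device = devices[name]
            previous = device.state
            device.set(value)
            undo_log.append((device, previous))
    except DeviceError:
        for device, previous in reversed(undo_log):
            device.set(previous)  # assumes already-touched devices are still reachable
        raise

devices = {"Window": Device("Window", "OPEN"), "AC": Device("AC", "OFF", reachable=False)}
try:
    run_atomic(devices, [("Window", "CLOSE"), ("AC", "ON")])
except DeviceError as err:
    print("rolled back:", err, "| window is", devices["Window"].state)
```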

Challenges
- Every action is immediately visible
  - Needs to consider the human in the loop
  - Should avoid unnecessary on/off/status changes
- Failures:
  - Device crashes and restarts are the norm
  - Reasoning about device failure/restart events is challenging
- Long-running routines:
  - Common in smart homes (e.g., turn the water sprinkler ON for 15 minutes)

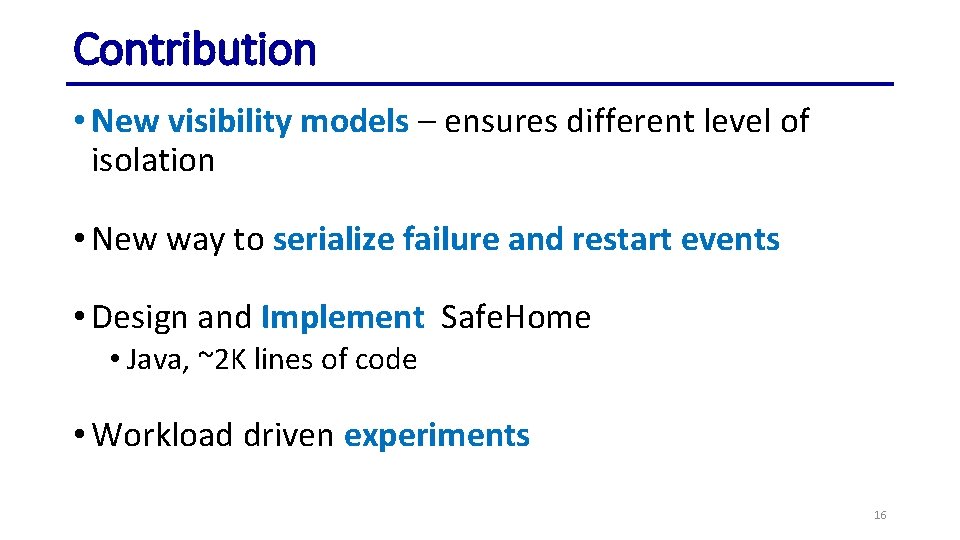

Contributions
- New visibility models that ensure different levels of isolation
- A new way to serialize failure and restart events
- Design and implementation of SafeHome (Java, ~2K lines of code)
- Workload-driven experiments

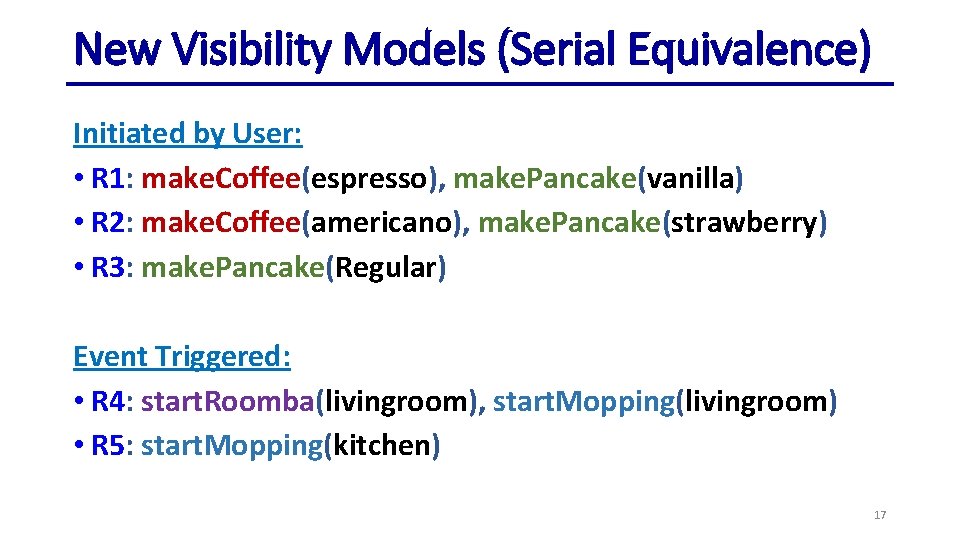

New Visibility Models (Serial Equivalence)

Initiated by the user:
- R1: makeCoffee(espresso), makePancake(vanilla)
- R2: makeCoffee(americano), makePancake(strawberry)
- R3: makePancake(regular)

Event triggered:
- R4: startRoomba(livingroom), startMopping(livingroom)
- R5: startMopping(kitchen)

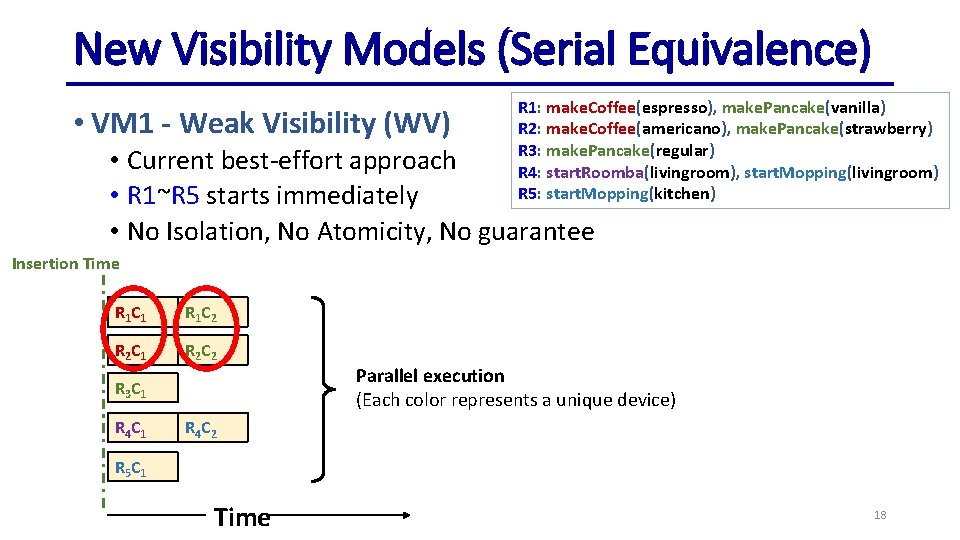

New Visibility Models (Serial Equivalence): VM1 – Weak Visibility (WV)
- Current best-effort approach: R1–R5 (as defined above) start immediately
- No isolation, no atomicity, no guarantees

(Figure: commands R1C1 … R5C1 plotted against insertion time. All routines execute in parallel, and each color represents a unique device.)

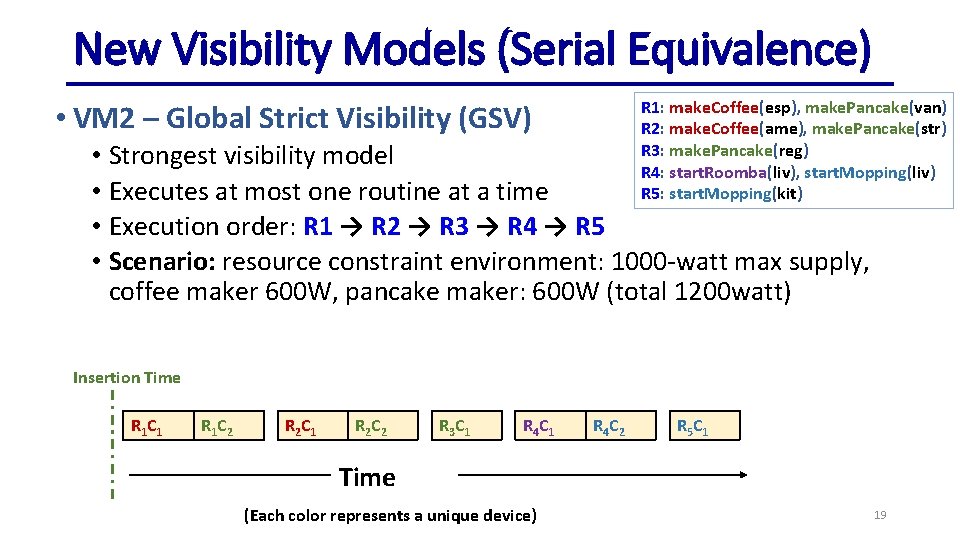

New Visibility Models (Serial Equivalence): VM2 – Global Strict Visibility (GSV)
- Strongest visibility model
- Executes at most one routine at a time
- Execution order: R1 → R2 → R3 → R4 → R5
- Scenario: a resource-constrained environment with a 1000 W maximum supply, where the coffee maker draws 600 W and the pancake maker 600 W (1200 W in total)

(Figure: commands R1C1 … R5C1 plotted against insertion time, executed one routine at a time. Each color represents a unique device.)
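
The resource-constraint scenario can be checked with a small Python sketch. It makes three assumptions that do not come from the slides. First, every command takes one time unit. Second, commands within a routine run back to back. Third, only the coffee maker and the pancake maker draw power; the Roomba and mopper are not modeled. Under WV every routine starts at once, so both 600 W appliances run together. Under GSV only one command runs at a time.

```python
# Routines from the slides, in arrival order: name -> devices touched, in command order.
ROUTINES = {
    "R1": ["coffee", "pancake"],
    "R2": ["coffee", "pancake"],
    "R3": ["pancake"],
    "R4": ["roomba", "mopper"],
    "R5": ["mopper"],
}
POWER_W = {"coffee": 600, "pancake": 600}  # Roomba and mopper not modeled (0 W)
SUPPLY_W = 1000

def wv_schedule(routines):
    """WV (best effort): every routine starts at time 0. Returns (device, start, end)."""
    return [(d, i, i + 1) for devs in routines.values() for i, d in enumerate(devs)]

def gsv_schedule(routines):
    """GSV: one routine at a time in arrival order, one command per time unit."""
    order = [d for devs in routines.values() for d in devs]
    return [(d, t, t + 1) for t, d in enumerate(order)]

def peak_power(slots):
    """Peak draw, counting each busy device once even if double-booked."""
    peak = 0
    for _, start, _ in slots:  # the draw can only rise when some command starts
        busy = {d for d, s, e in slots if s <= start < e}
        peak = max(peak, sum(POWER_W.get(d, 0) for d in busy))
    return peak

for name, schedule in (("WV", wv_schedule), ("GSV", gsv_schedule)):
    peak = peak_power(schedule(ROUTINES))
    print(name, peak, "W", "OK" if peak <= SUPPLY_W else "exceeds supply")
```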

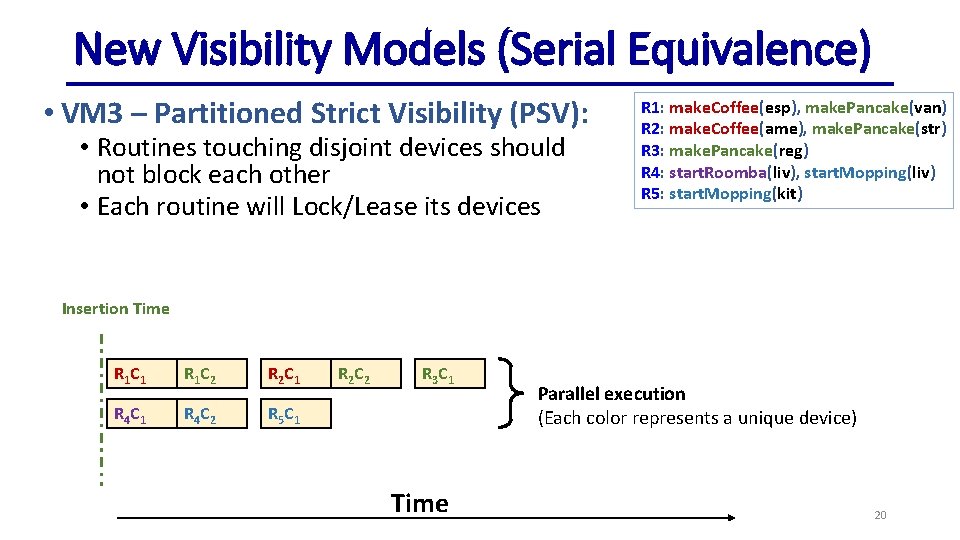

New Visibility Models (Serial Equivalence): VM3 – Partitioned Strict Visibility (PSV)
- Routines touching disjoint devices should not block each other
- Each routine locks (leases) its devices

(Figure: commands R1C1 … R5C1 plotted against insertion time. Routines on disjoint devices execute in parallel, and each color represents a unique device.)
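
Below is a minimal Python sketch of PSV scheduling. For illustration only, it assumes every command takes one time unit and each routine issues one command per device it touches, which holds for R1–R5. A routine locks all of its devices in arrival order (FIFO per device). It starts once every earlier routine sharing a device has finished, so routines on disjoint devices overlap.

```python
# Routines from the slides, in arrival order: (name, devices touched).
ROUTINES = [
    ("R1", ["coffee", "pancake"]),
    ("R2", ["coffee", "pancake"]),
    ("R3", ["pancake"]),
    ("R4", ["roomba", "mopper"]),
    ("R5", ["mopper"]),
]

def psv_schedule(routines, unit=1):
    """Return {routine: (start, end)} under PSV-style device locking."""
    busy_until = {}  # device -> finish time of the last routine that locked it
    times = {}
    for name, devices in routines:
        # Wait for every earlier routine holding one of our devices (FIFO per device).
        start = max((busy_until.get(d, 0) for d in devices), default=0)
        end = start + unit * len(devices)  # commands run back to back
        for d in devices:
            busy_until[d] = end
        times[name] = (start, end)
    return times

for name, (start, end) in psv_schedule(ROUTINES).items():
    print(name, start, end)
```

Here R4 and R5 finish by time 3, while R1–R3 serialize on the shared appliances.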

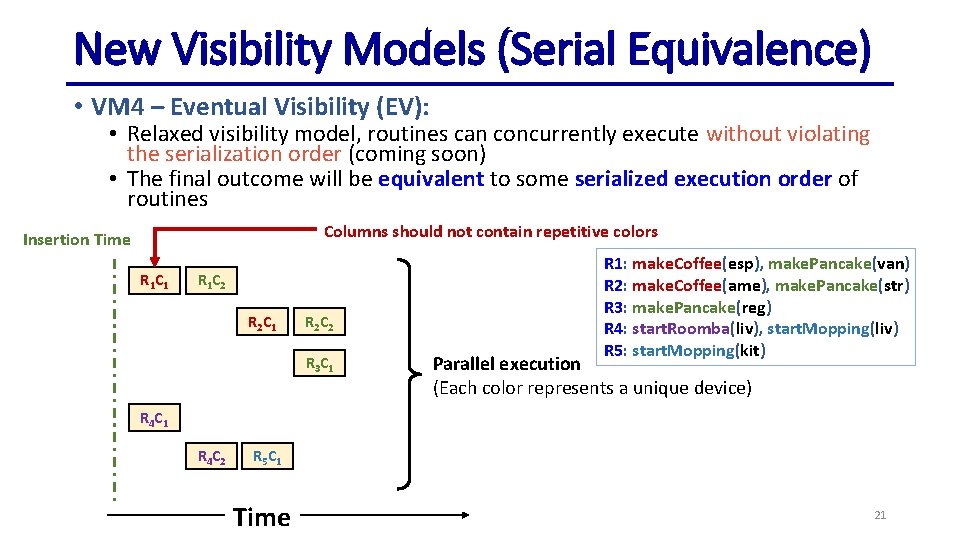

New Visibility Models (Serial Equivalence): VM4 – Eventual Visibility (EV)
- A relaxed visibility model: routines can execute concurrently without violating the serialization order (details follow)
- The final outcome is equivalent to some serial execution order of the routines

(Figure: commands R1C1 … R5C1 plotted against insertion time and executed in parallel. Each color represents a unique device. No column should contain repeated colors, i.e., a device never runs two commands at once.)

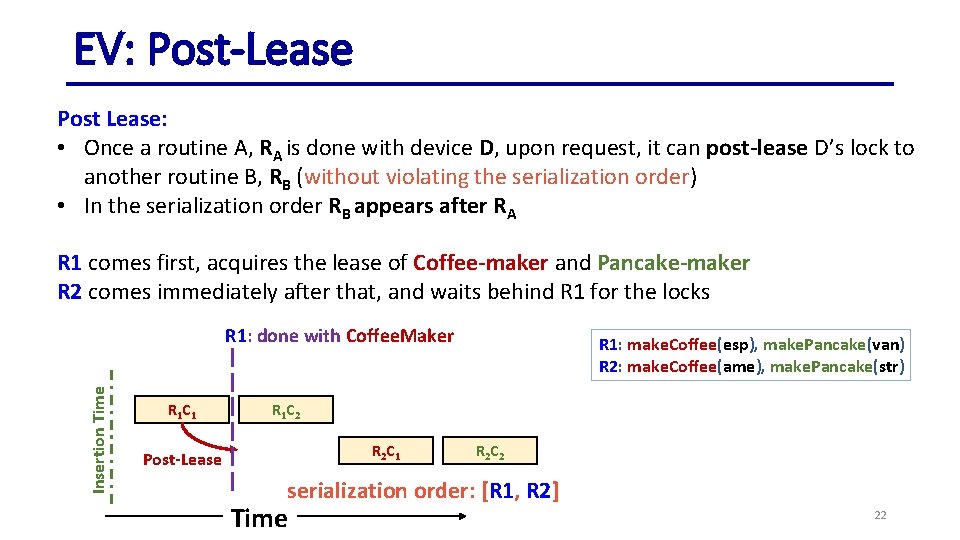

EV: Post-Lease
- Once routine A (RA) is done with device D, it can, upon request, post-lease D's lock to another routine B (RB) without violating the serialization order
- In the serialization order, RB appears after RA

Example: R1: makeCoffee(esp), makePancake(van); R2: makeCoffee(ame), makePancake(str). R1 arrives first and acquires the leases of the coffee maker and the pancake maker. R2 arrives immediately after and waits behind R1 for the locks. Once R1 is done with the coffee maker, it post-leases the coffee maker to R2, so R2C1 can run alongside R1C2. Serialization order: [R1, R2].
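
Here is a minimal sketch of the post-lease check for one device. It assumes a hypothetical `DeviceRow` record, not SafeHome's actual data structure. The record keeps the routines leasing the device in serialization order, plus the set of routines already done with it. A post-lease appends the requester after the holder and yields the precedence edge holder → requester.

```python
from dataclasses import dataclass, field

@dataclass
class DeviceRow:
    """Hypothetical per-device lease record."""
    order: list                              # routines on this device, in serialization order
    done: set = field(default_factory=set)   # routines that have finished using the device

def post_lease(row, requester):
    """Hand the device to `requester` after the current holder, if the holder
    is done with it. Returns the precedence edge (holder, requester), or None
    if the requester must keep waiting."""
    holder = row.order[-1]
    if holder not in row.done:
        return None
    row.order.append(requester)
    return (holder, requester)  # holder precedes requester in the serialization order

coffee = DeviceRow(order=["R1"], done={"R1"})  # R1 finished makeCoffee(esp)
pancake = DeviceRow(order=["R1"])              # R1 still needs the pancake maker
print(post_lease(coffee, "R2"), post_lease(pancake, "R2"))
```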

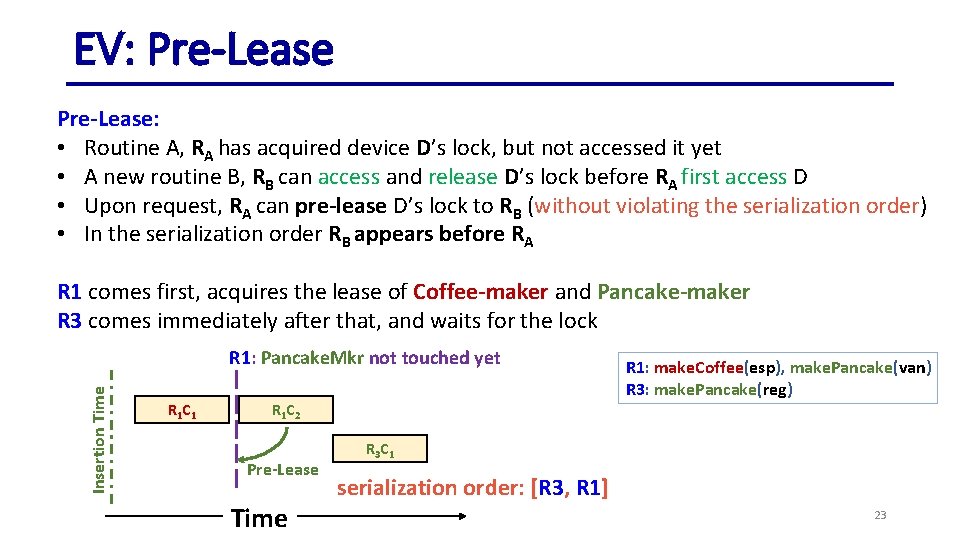

EV: Pre-Lease
- Routine A (RA) has acquired device D's lock but has not accessed D yet
- A new routine B (RB) can access D and release its lock before RA first accesses D
- Upon request, RA can pre-lease D's lock to RB without violating the serialization order
- In the serialization order, RB appears before RA

Example: R1: makeCoffee(esp), makePancake(van); R3: makePancake(reg). R1 arrives first and acquires the leases of the coffee maker and the pancake maker. R3 arrives immediately after and waits for the lock. Because R1 has not touched the pancake maker yet, it pre-leases the pancake maker to R3, so R3C1 runs before R1C2. Serialization order: [R3, R1].
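
A matching sketch for the pre-lease check follows, again using a hypothetical `DeviceRow` record; this variant tracks which routines have already touched the device. If the current holder has not accessed the device yet, the requester is slotted in just before the holder, which yields the edge requester → holder. This local check is necessary but not sufficient: the new edge must still agree with the routines' orderings on other devices.

```python
from dataclasses import dataclass, field

@dataclass
class DeviceRow:
    """Hypothetical per-device lease record."""
    order: list                                 # routines on this device, in serialization order
    touched: set = field(default_factory=set)   # routines that have already accessed the device

def pre_lease(row, requester):
    """Let `requester` use the device before the current holder, if the holder
    has acquired it but not accessed it yet. Returns the precedence edge
    (requester, holder), or None if the requester must wait."""
    holder = row.order[-1]
    if holder in row.touched:
        return None
    row.order.insert(len(row.order) - 1, requester)  # slot in just before the holder
    return (requester, holder)  # requester precedes holder in the serialization order

pancake = DeviceRow(order=["R1"])  # R1 leased the pancake maker but has not used it yet
print(pre_lease(pancake, "R3"), pancake.order)
```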

![Eventual Visibility (EV) – Lineage Table](http://slidetodoc.com/presentation_image/49add1c2023bcbb23128d531bb47131c/image-24.jpg)

Eventual Visibility (EV) – Lineage Table

Entry states: Released [R], Acquired [A], Scheduled [S], Committed [C].

(Figure: per-device rows, with all entries scheduled [S]. Cf.Mkr: R1C1, R2C1; Pnck.Mkr: R3C1, R1C2, R2C2; Roomba: R4C1; Mopper: R5C1, R4C2; post- and pre-lease transitions are marked. As routines arrive, the serialization order evolves as follows: [R1], then [R1, R2], then [R3, R1, R2], then [R3, R1, R2] and [R4], and finally [R3, R1, R2] and [R5, R4].)
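
The serialization order on this slide can be recovered from the per-device rows alone. The following minimal sketch assumes each row lists routines in lineage order and that consecutive entries imply a precedence edge. Kahn's topological sort then yields one total order, with ties broken by routine name (an arbitrary choice), or None when the rows conflict.

```python
from collections import defaultdict

def serialization_order(rows):
    """Derive one serialization order from per-device lineage rows.

    rows: device -> routines in lineage order; each pair of consecutive entries
    on a row gives a precedence edge. Uses Kahn's algorithm, breaking ties by
    routine name, and returns None if the rows conflict (a cycle).
    """
    succ = defaultdict(set)
    indegree = {}
    for routines in rows.values():
        for r in routines:
            indegree.setdefault(r, 0)
        for a, b in zip(routines, routines[1:]):
            if a != b and b not in succ[a]:
                succ[a].add(b)
                indegree[b] += 1
    ready = sorted(r for r, d in indegree.items() if d == 0)
    order = []
    while ready:
        r = ready.pop(0)
        order.append(r)
        for nxt in succ[r]:
            indegree[nxt] -= 1
            if indegree[nxt] == 0:
                ready.append(nxt)
        ready.sort()
    return order if len(order) == len(indegree) else None

ROWS = {
    "Cf.Mkr": ["R1", "R2"],
    "Pnck.Mkr": ["R3", "R1", "R2"],  # R3 pre-leased ahead of R1C2
    "Roomba": ["R4"],
    "Mopper": ["R5", "R4"],          # R5 pre-leased ahead of R4C2
}
print(serialization_order(ROWS))
```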

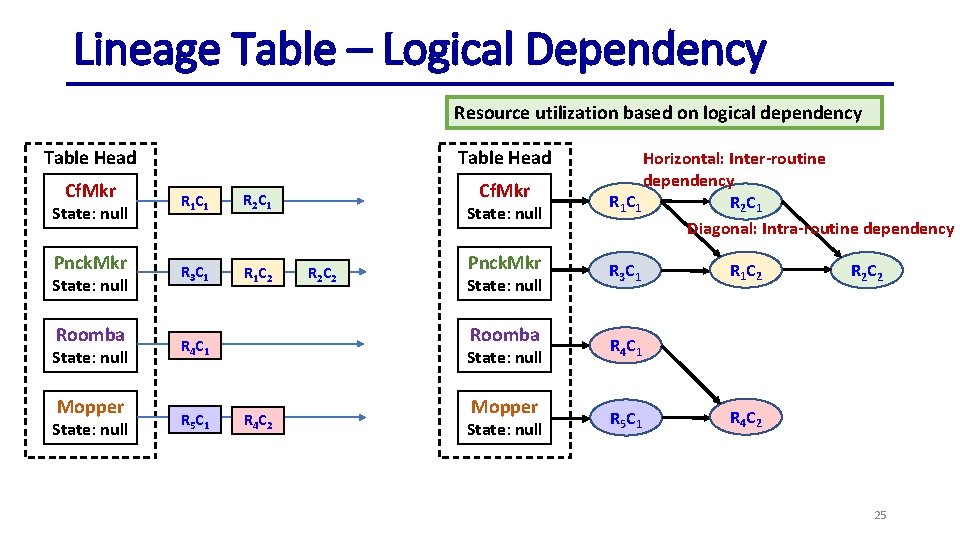

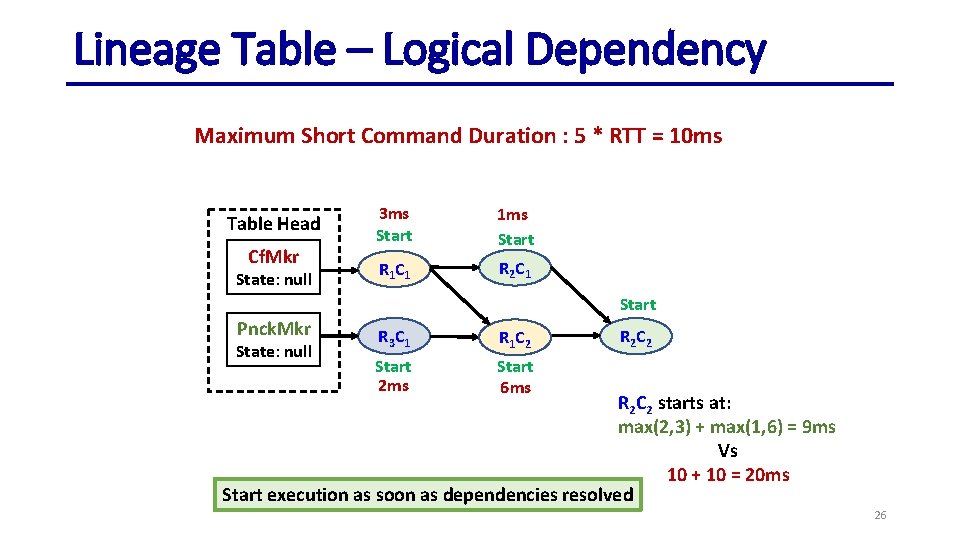

Lineage Table – Logical Dependency

Resource utilization is based on logical dependencies. (Figure: lineage rows per device, each starting from state null. Cf.Mkr: R1C1, R2C1; Pnck.Mkr: R3C1, R1C2, R2C2; Roomba: R4C1; Mopper: R5C1, R4C2. Horizontal edges capture inter-routine dependencies on the same device; diagonal edges capture intra-routine dependencies between consecutive commands of one routine.)

Lineage Table – Logical Dependency

Maximum short-command duration: 5 × RTT = 10 ms. (Figure: on the coffee maker, R1C1 takes 3 ms and R2C1 takes 1 ms; on the pancake maker, R3C1 takes 2 ms and R1C2 takes 6 ms.) R2C2 starts at max(2, 3) + max(1, 6) = 9 ms. If every command had to reserve the maximum duration, it would start at 10 + 10 = 20 ms. In short: start execution as soon as dependencies are resolved.
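
The arithmetic above can be reproduced by computing, for each command, the earliest time all of its dependencies have finished, i.e., the longest path in the dependency DAG. The following minimal sketch assumes the edges implied by the lineage table: horizontal edges link to the previous command on the same device, and diagonal edges link to the previous command of the same routine. Durations are read from the figure.

```python
DURATION_MS = {"R1C1": 3, "R2C1": 1, "R3C1": 2, "R1C2": 6}
DEPS = {
    "R1C1": [],
    "R2C1": ["R1C1"],          # horizontal: after R1C1 on the coffee maker
    "R3C1": [],                # first entry on the pancake maker (pre-leased)
    "R1C2": ["R3C1", "R1C1"],  # horizontal (R3C1) and diagonal (R1C1)
    "R2C2": ["R1C2", "R2C1"],  # horizontal (R1C2) and diagonal (R2C1)
}

def earliest_start(cmd, durations, deps, memo=None):
    """Start a command as soon as every dependency has finished."""
    memo = {} if memo is None else memo
    if cmd not in memo:
        memo[cmd] = max(
            (earliest_start(d, durations, deps, memo) + durations[d] for d in deps[cmd]),
            default=0,
        )
    return memo[cmd]

print(earliest_start("R2C2", DURATION_MS, DEPS))  # 9: dependency-driven start
worst = {cmd: 10 for cmd in DEPS}                  # reserve 5 * RTT = 10 ms per command
print(earliest_start("R2C2", worst, DEPS))         # 20
```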

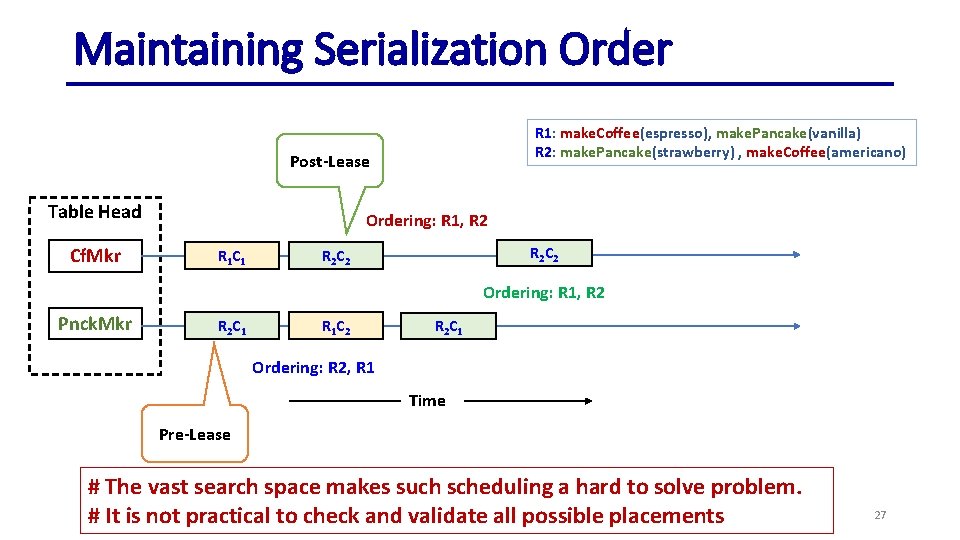

Maintaining Serialization Order

R1: makeCoffee(espresso), makePancake(vanilla); R2: makePancake(strawberry), makeCoffee(americano).

(Figure: on the coffee maker, R2C2 is post-leased after R1C1, giving the ordering R1, R2. On the pancake maker, R2C1 is pre-leased before R1C2, giving the ordering R2, R1. The two orderings conflict.)
- The vast search space makes such scheduling a hard problem
- It is not practical to check and validate all possible placements
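
Why this placement fails can be checked mechanically. Every device row implies edges saying that each earlier entry precedes each later one. A serialization order exists only if those edges are acyclic. The following is a minimal depth-first-search sketch; it is an illustration, not SafeHome's code.

```python
def has_cycle(rows):
    """rows: device -> routines in lineage order. Each earlier entry must precede
    each later one; returns True if these precedence edges form a cycle."""
    succ = {}
    for routines in rows.values():
        for i, a in enumerate(routines):
            succ.setdefault(a, set())
            for b in routines[i + 1:]:
                if b != a:
                    succ[a].add(b)
    state = {r: "new" for r in succ}  # new -> on_stack -> done

    def visit(r):
        state[r] = "on_stack"
        for nxt in succ[r]:
            if state[nxt] == "on_stack" or (state[nxt] == "new" and visit(nxt)):
                return True  # back edge: a routine would have to precede itself
        state[r] = "done"
        return False

    return any(state[r] == "new" and visit(r) for r in list(succ))

# R1: makeCoffee, makePancake; R2: makePancake, makeCoffee (as in the figure)
conflict = {"Cf.Mkr": ["R1", "R2"], "Pnck.Mkr": ["R2", "R1"]}
print(has_cycle(conflict))  # R1 before R2 on one device, R2 before R1 on the other
```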

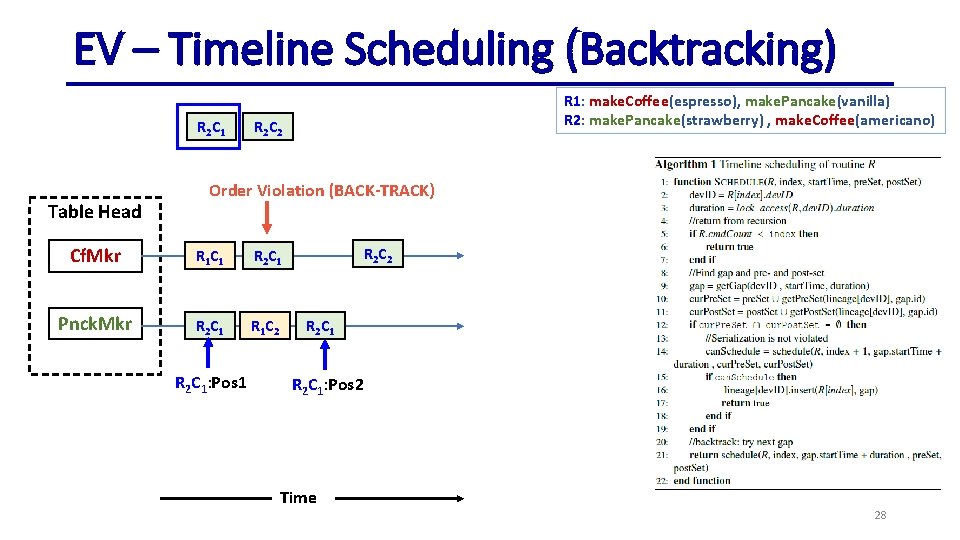

EV – Timeline Scheduling (Backtracking)

(Figure: R1: makeCoffee(espresso), makePancake(vanilla); R2: makePancake(strawberry), makeCoffee(americano). Placing R2C1 at position 1 on the pancake maker, before R1C2, forces R2C2 after R1C1 on the coffee maker. This is an order violation, so the scheduler backtracks and places R2C1 at position 2.)
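
The backtracking step can be sketched as follows. This is a minimal illustration, not SafeHome's scheduler, and it requires Python 3.9+ for `graphlib`. It places a new routine's commands one device at a time, trying the earliest legal position first. Entries that have already started (the hypothetical `started` count) cannot be preceded. If a later command cannot be placed without a cycle, it backtracks. Positions in the code are 0-based, whereas the slide counts from 1.

```python
from graphlib import CycleError, TopologicalSorter

def consistent(rows):
    """True if the per-device rows admit one serialization order (no cycle)."""
    preds = {}
    for routines in rows.values():
        for i, b in enumerate(routines):
            preds.setdefault(b, set()).update(a for a in routines[:i] if a != b)
    try:
        list(TopologicalSorter(preds).static_order())
        return True
    except CycleError:
        return False

def place(rows, started, routine, devices, k=0):
    """Backtracking placement of `routine`'s commands, one device at a time.

    rows: device -> routines in lineage order; started[device]: how many leading
    entries have already begun executing and therefore cannot be preceded.
    Returns the new rows, or None if no consistent placement exists.
    """
    if k == len(devices):
        return rows
    device = devices[k]
    row = rows.get(device, [])
    for pos in range(started.get(device, 0), len(row) + 1):  # earliest position first
        candidate = {**rows, device: row[:pos] + [routine] + row[pos:]}
        if consistent(candidate):
            result = place(candidate, started, routine, devices, k + 1)
            if result is not None:
                return result
    return None  # every position failed: caller backtracks

rows = {"Cf.Mkr": ["R1"], "Pnck.Mkr": ["R1"]}  # R1C1 has started; R1C2 has not
print(place(rows, {"Cf.Mkr": 1}, "R2", ["Pnck.Mkr", "Cf.Mkr"]))
```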

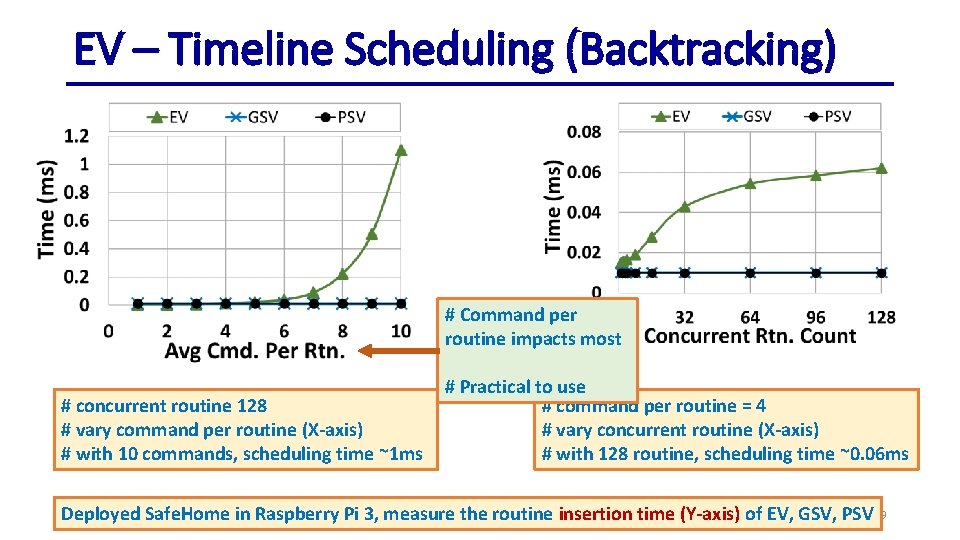

EV – Timeline Scheduling (Backtracking)

We deployed SafeHome on a Raspberry Pi 3 and measured the routine insertion time (Y-axis) of EV, GSV, and PSV.
- The number of commands per routine has the largest impact. With 128 concurrent routines and a varying number of commands per routine (X-axis), scheduling takes ~1 ms with 10 commands per routine, which is practical.
- With 4 commands per routine and a varying number of concurrent routines (X-axis), scheduling takes ~0.06 ms with 128 routines.

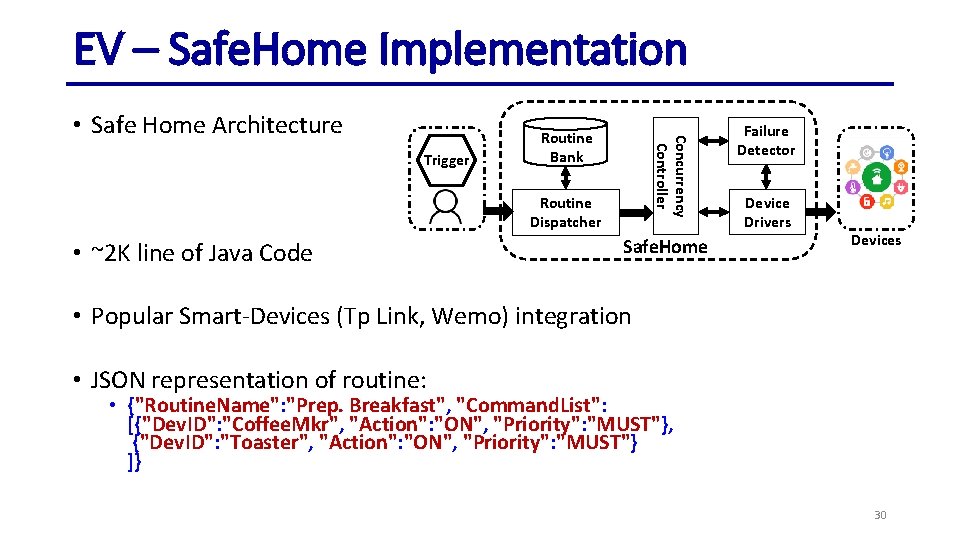

EV – SafeHome Implementation
- SafeHome architecture: Trigger, Routine Bank, Concurrency Controller, Routine Dispatcher, Failure Detector, Device Drivers, Devices
- ~2K lines of Java code
- Integration with popular smart devices (TP-Link, Wemo)
- JSON representation of a routine: `{"RoutineName": "PrepBreakfast", "CommandList": [{"DevID": "CoffeeMkr", "Action": "ON", "Priority": "MUST"}, {"DevID": "Toaster", "Action": "ON", "Priority": "MUST"}]}`

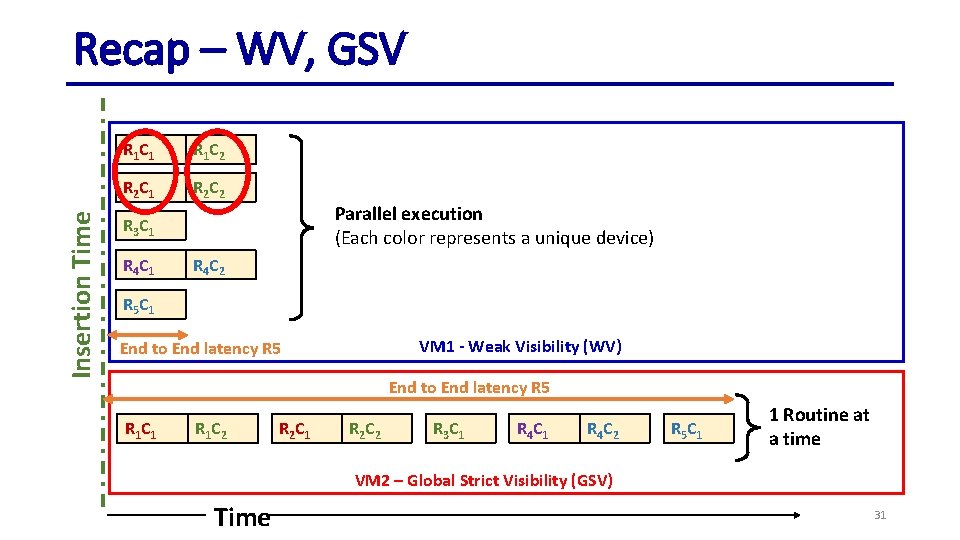

Insertion Time Recap – WV, GSV

(Figure: under VM1 – Weak Visibility (WV), all commands R1C1 … R5C1 execute in parallel from insertion time. Under VM2 – Global Strict Visibility (GSV), one routine runs at a time. The end-to-end latency of R5 is marked for each, and each color represents a unique device.)

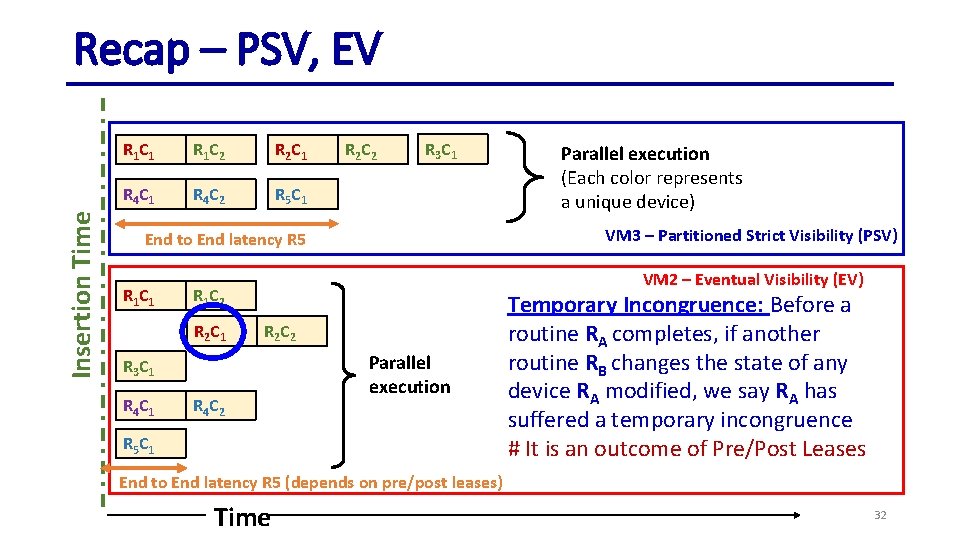

Insertion Time Recap – PSV, EV

(Figure: VM3 – Partitioned Strict Visibility (PSV) and VM4 – Eventual Visibility (EV), each showing parallel execution of commands R1C1 … R5C1 and the end-to-end latency of R5. Under EV, this latency depends on pre- and post-leases. Each color represents a unique device.)

Temporary incongruence: if, before a routine RA completes, another routine RB changes the state of any device that RA modified, we say RA has suffered a temporary incongruence. Temporary incongruence is an outcome of pre- and post-leases.
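
The definition above can be turned into a checker over an execution trace. The following minimal sketch assumes a hypothetical trace format: a list of (time, routine, device) state changes, plus each routine's completion time. The example contrasts two runs. In the post-lease run, R2 uses the coffee maker while R1 is still making pancakes. In the GSV-style serial run, R2 starts only after R1 completes.

```python
def temporarily_incongruent(writes, finish):
    """Return routines RA such that another routine RB changed a device RA had
    already modified, after RA's change and before RA completed."""
    victims = set()
    for t_a, ra, dev_a in writes:
        for t_b, rb, dev_b in writes:
            if rb != ra and dev_b == dev_a and t_a < t_b < finish[ra]:
                victims.add(ra)
    return victims

# Post-lease: R2 takes the coffee maker at t=1 while R1 runs until t=3.
leased = [(0, "R1", "coffee"), (1, "R2", "coffee"), (2, "R1", "pancake"), (3, "R2", "pancake")]
print(temporarily_incongruent(leased, {"R1": 3, "R2": 4}))
# Serial (GSV-like): R2 starts only after R1 completes at t=2.
serial = [(0, "R1", "coffee"), (1, "R1", "pancake"), (2, "R2", "coffee"), (3, "R2", "pancake")]
print(temporarily_incongruent(serial, {"R1": 2, "R2": 4}))
```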

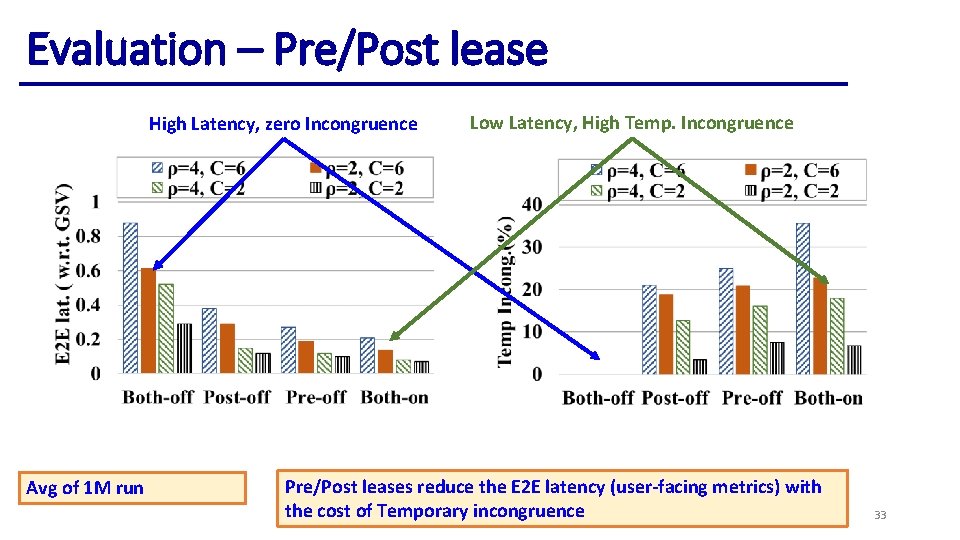

Evaluation – Pre/Post Leases

(Figure, average of 1M runs: configurations range from high latency with zero incongruence to low latency with high temporary incongruence.) Pre- and post-leases reduce the end-to-end latency, a user-facing metric, at the cost of temporary incongruence.

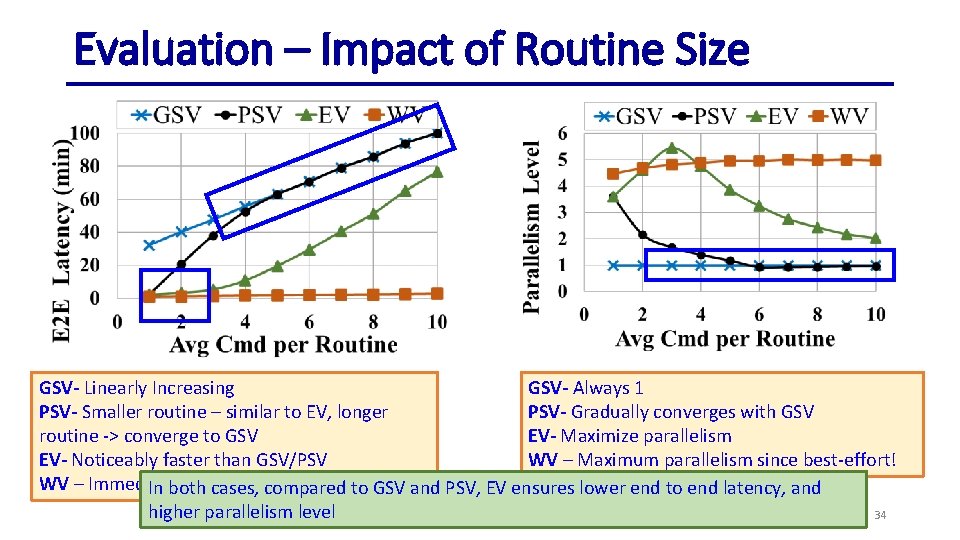

Evaluation – Impact of Routine Size

Latency:
- GSV: linearly increasing
- PSV: similar to EV for smaller routines; converges to GSV for longer routines
- EV: noticeably faster than GSV/PSV
- WV: immediate, since it is best-effort

Parallelism level:
- GSV: always 1
- PSV: gradually converges with GSV
- EV: maximizes parallelism
- WV: maximum parallelism, since it is best-effort

In both cases, EV ensures lower end-to-end latency and a higher parallelism level than GSV and PSV.

![Related Works](http://slidetodoc.com/presentation_image/49add1c2023bcbb23128d531bb47131c/image-35.jpg)

Related Works
- Safety, reliability, and usability
  - Transactuations [ATC'19]: ensures consistency across physical device state and application state
  - Apex [IoTDI'19]: automatically satisfies the preconditions of a user command using a DAG-based approach
  - Rivulet [Middleware'17]: a fault-tolerant distributed platform for running smart-home applications
  - HomeOS [NSDI'12]: an "operating system" for the home that provides centralized, holistic control of heterogeneous smart devices
- Support for routines
  - Alexa, Google Home, iRobot's Imprint (long-running routines)
- Concurrency control in transactional databases
  - Optimistic concurrency control: simulate transactions first, validate at the last stage
  - Pessimistic concurrency control: acquire all locks first, and only then execute the transaction

Conclusion
- Developed SafeHome, a smart home management framework that offers:
  - Various visibility models
  - Failure serialization
  - Atomicity of routine execution
- Workshop version: HotEdge 2019
- Full version: targeting NSDI 2021

Service Fabric: A Distributed Platform for Building Microservices in the Cloud
- ~2 years of collaboration between Microsoft and UIUC
- Published in EuroSys 2018
- At prelim: presented finished work

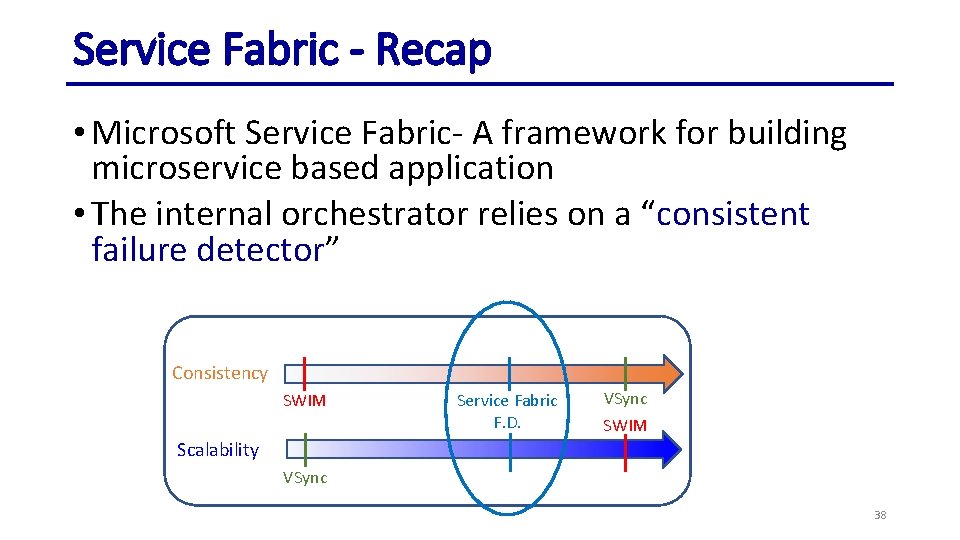

Service Fabric – Recap
- Microsoft Service Fabric: a framework for building microservice-based applications
- Its internal orchestrator relies on a "consistent failure detector"

(Figure: consistency vs. scalability. SWIM is scalable but weakly consistent, VSync is consistent but not scalable, and the Service Fabric failure detector (F.D.) offers both.)

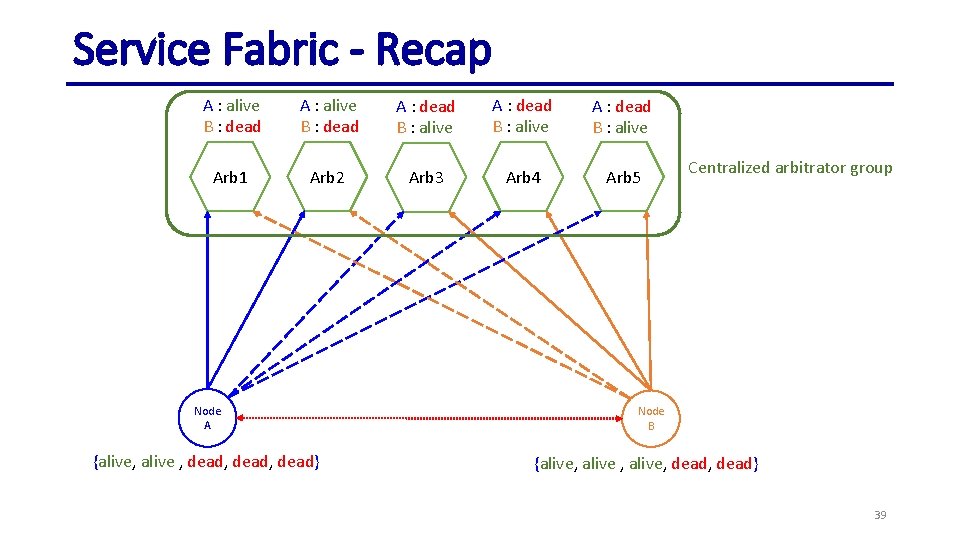

Service Fabric – Recap

(Figure: a centralized arbitrator group, Arb1–Arb5. One report reads "A: alive, B: dead" and the other "A: dead, B: alive". Node A collects {alive, alive, dead, dead} and node B collects {alive, alive, dead} from the arbitrators.)
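
Here is a minimal sketch of why first-come-first-serve arbitration stays consistent. It rests on simplifying assumptions and is not Service Fabric's implementation. Each arbitrator sides with whichever of the two mutual suspicion reports reaches it first. A node must win a strict majority of the arbitrator group to stay. Because each arbitrator votes at most once, both nodes can never win. At worst neither wins and both leave, which is safe.

```python
from itertools import product

def arbitrate(arrivals):
    """First-come-first-serve arbitration between neighbors A and B that suspect each other.

    arrivals: one entry per arbitrator naming whose report ("A" or "B") reached
    it first, or None if that arbitrator is unreachable. A node stays only if a
    strict majority of all arbitrators sided with it; otherwise it leaves.
    """
    votes = {"A": 0, "B": 0}
    for first in arrivals:
        if first is not None:
            votes[first] += 1
    majority = len(arrivals) // 2 + 1
    return {node for node, count in votes.items() if count >= majority}

# Every arrival pattern over five arbitrators (each may also be unreachable).
outcomes = [arbitrate(p) for p in product(("A", "B", None), repeat=5)]
print(max(len(o) for o in outcomes), "survivor(s) at most")
```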

![Service Fabric – Recap](http://slidetodoc.com/presentation_image/49add1c2023bcbb23128d531bb47131c/image-40.jpg)

Service Fabric – Recap

(Figure: Service Fabric's layered architecture.
- Reliability subsystem: Reliable Collections (Queue, Dictionary), which are highly available, fault tolerant, persisted, and transactional; reliable primary selection; consistent replica set; failover management; replicated state machines.
- Federation subsystem: leader election; routing consistency; consistent failure detector; routing token.)

A New Fully-Distributed Arbitration-Based Membership Protocol
- An improvement over Service Fabric's centralized arbitration scheme
- Accepted at INFOCOM 2020
- At prelim: presented partial work

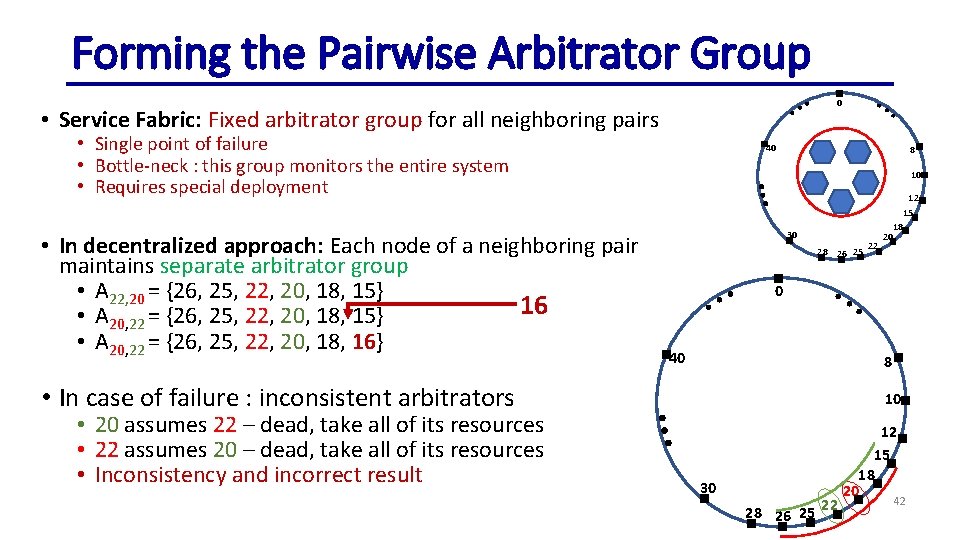

Forming the Pairwise Arbitrator Group
- Service Fabric: a fixed arbitrator group for all neighboring pairs
  - Single point of failure
  - Bottleneck: this group monitors the entire system
  - Requires special deployment
- Decentralized approach: each node of a neighboring pair maintains a separate arbitrator group
  - A(22, 20) = {26, 25, 22, 20, 18, 15} and A(20, 22) = {26, 25, 22, 20, 18, 15}
  - With node 16 present, A(20, 22) = {26, 25, 22, 20, 18, 16}
- In case of failure, the arbitrator groups can be inconsistent:
  - 20 assumes 22 is dead and takes over all of its resources
  - 22 assumes 20 is dead and takes over all of its resources
  - The result is inconsistent and incorrect

(Figure: a ring of nodes 0, 8, 10, 12, 15, 16, 18, 20, 22, 25, 26, 28, 30, 40.)
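
The sets on this slide can be reproduced by one simple rule. This rule is an assumption inferred from the numbers, not necessarily the paper's construction. Under it, the arbitrator group of a neighboring pair (p, q) is the intersection of p's and q's neighborhoods in a given membership view, where each neighborhood is the node itself plus N = 3 neighbors per direction. Two nodes whose views differ by one member (node 16) then compute different groups.

```python
def neighborhood(view, node, k=3):
    """`node` plus its k nearest neighbors in each direction on the sorted ring."""
    ring = sorted(view)
    i = ring.index(node)
    return {ring[(i + d) % len(ring)] for d in range(-k, k + 1)}

def arbitrator_group(view, p, q, k=3):
    """Assumed rule: members of both p's and q's neighborhoods in this view."""
    return neighborhood(view, p, k) & neighborhood(view, q, k)

RING = [0, 8, 10, 12, 15, 18, 20, 22, 25, 26, 28, 30, 40]  # nodes from the figure
print(sorted(arbitrator_group(RING, 20, 22)))         # view without node 16
print(sorted(arbitrator_group(RING + [16], 20, 22)))  # view that includes node 16
```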

Contributions
- Decentralize the arbitrator group
- Safe and consistent arbitrator handoff, which solves the problem shown on the previous slide
- A coherent node join protocol
- Formal proofs of correctness and time bounds for both approaches; neither the original Service Fabric paper nor any of its white papers provides a formal proof or time bound

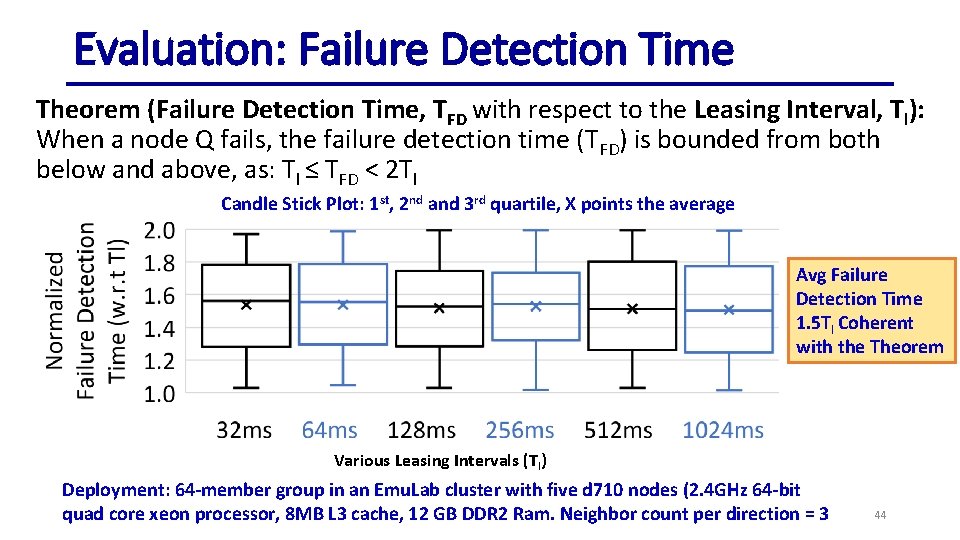

Evaluation: Failure Detection Time

Theorem (failure detection time T_FD with respect to the leasing interval T_l): when a node Q fails, the failure detection time is bounded from below and above as T_l ≤ T_FD < 2T_l.

(Candlestick plot for various leasing intervals T_l: the 1st, 2nd, and 3rd quartiles are shown, and X marks the average.) The average failure detection time is 1.5 T_l, coherent with the theorem.

Deployment: a 64-member group on an Emulab cluster of five d710 nodes (2.4 GHz 64-bit quad-core Xeon processor, 8 MB L3 cache, 12 GB DDR2 RAM). Neighbor count per direction = 3.
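
The theorem's bounds and the 1.5 T_l average are consistent with a simple model. The model is an assumption for illustration, not the paper's proof. It assumes two things: a lease renewed at the start of an interval remains valid until the end of the following interval, and a crash happens uniformly at random within an interval. A crash at offset φ ∈ (0, T_l] is then detected 2T_l − φ later, i.e., within [T_l, 2T_l) and at 1.5 T_l on average.

```python
import random

def detection_time(offset, t_lease):
    """Assumed model: a crash `offset` into a lease interval (0 < offset <= t_lease)
    is detected when the lease expires at the end of the next interval."""
    return 2 * t_lease - offset

def simulate(t_lease, runs, seed=0):
    rng = random.Random(seed)
    # t_lease * (1 - U) with U in [0, 1) gives an offset in (0, t_lease]
    samples = [detection_time(t_lease * (1 - rng.random()), t_lease) for _ in range(runs)]
    return min(samples), max(samples), sum(samples) / runs

lo, hi, avg = simulate(t_lease=1.0, runs=100_000)
print(f"min {lo:.3f}  max {hi:.3f}  avg {avg:.3f}  (in units of T_l)")
```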

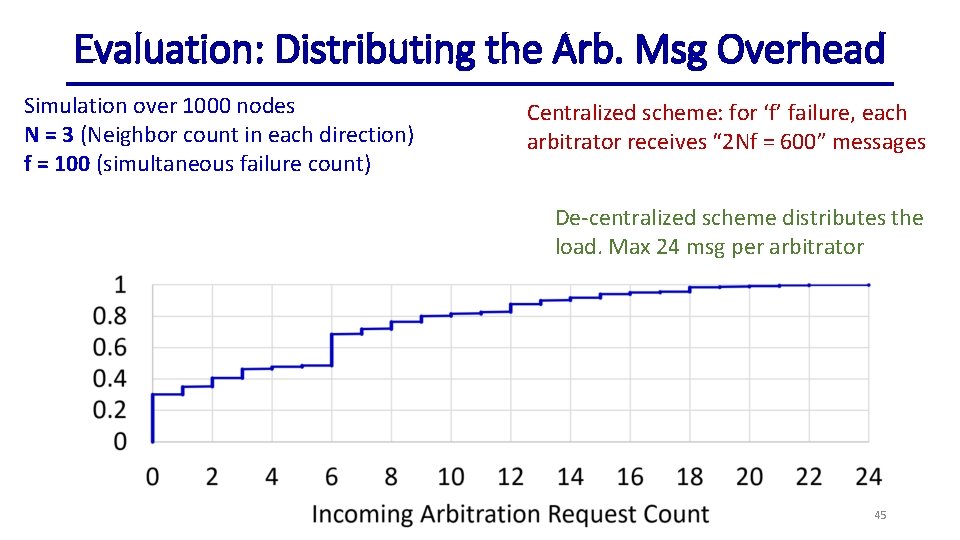

Evaluation: Distributing the Arbitration Message Overhead

Simulation over 1000 nodes, with N = 3 (neighbor count in each direction) and f = 100 (simultaneous failures).
- Centralized scheme: for f failures, each arbitrator receives 2Nf = 600 messages
- Decentralized scheme: distributes the load, with at most 24 messages per arbitrator

Thesis Statement: We present new internal orchestrators for maintaining consistency in both edge-based and cloud-based distributed systems.

Future Work
- Exploring optimistic concurrency control (OCC) for the smart home scenario
  - Lock-based pessimistic concurrency control is slower
  - OCC will bridge the gap between current smart homes and traditional transaction management systems
- Decentralizing the SafeHome orchestrator
  - SafeHome runs on a single device, so it is not fault tolerant
  - Challenge: a decentralized SafeHome needs to sync before executing each command
- Exploring the human-facing side of SafeHome
  - In case of conflict, which routine should be aborted? Different users might have different preferences
  - A user-friendly way to design routines

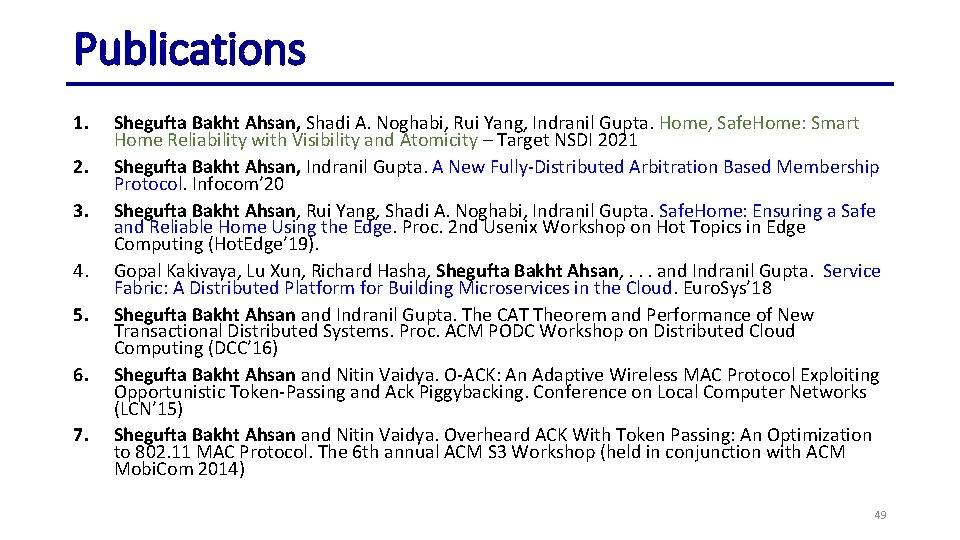

Publications
1. Shegufta Bakht Ahsan, Shadi A. Noghabi, Rui Yang, Indranil Gupta. Home, SafeHome: Smart Home Reliability with Visibility and Atomicity. Target: NSDI 2021.
2. Shegufta Bakht Ahsan, Indranil Gupta. A New Fully-Distributed Arbitration-Based Membership Protocol. INFOCOM'20.
3. Shegufta Bakht Ahsan, Rui Yang, Shadi A. Noghabi, Indranil Gupta. SafeHome: Ensuring a Safe and Reliable Home Using the Edge. Proc. 2nd USENIX Workshop on Hot Topics in Edge Computing (HotEdge'19).
4. Gopal Kakivaya, Lu Xun, Richard Hasha, Shegufta Bakht Ahsan, . . . and Indranil Gupta. Service Fabric: A Distributed Platform for Building Microservices in the Cloud. EuroSys'18.
5. Shegufta Bakht Ahsan and Indranil Gupta. The CAT Theorem and Performance of New Transactional Distributed Systems. Proc. ACM PODC Workshop on Distributed Cloud Computing (DCC'16).
6. Shegufta Bakht Ahsan and Nitin Vaidya. O-ACK: An Adaptive Wireless MAC Protocol Exploiting Opportunistic Token-Passing and Ack Piggybacking. Conference on Local Computer Networks (LCN'15).
7. Shegufta Bakht Ahsan and Nitin Vaidya. Overheard ACK With Token Passing: An Optimization to 802.11 MAC Protocol. The 6th Annual ACM S3 Workshop (held in conjunction with ACM MobiCom 2014).

Disclaimer: some images are collected from the internet.

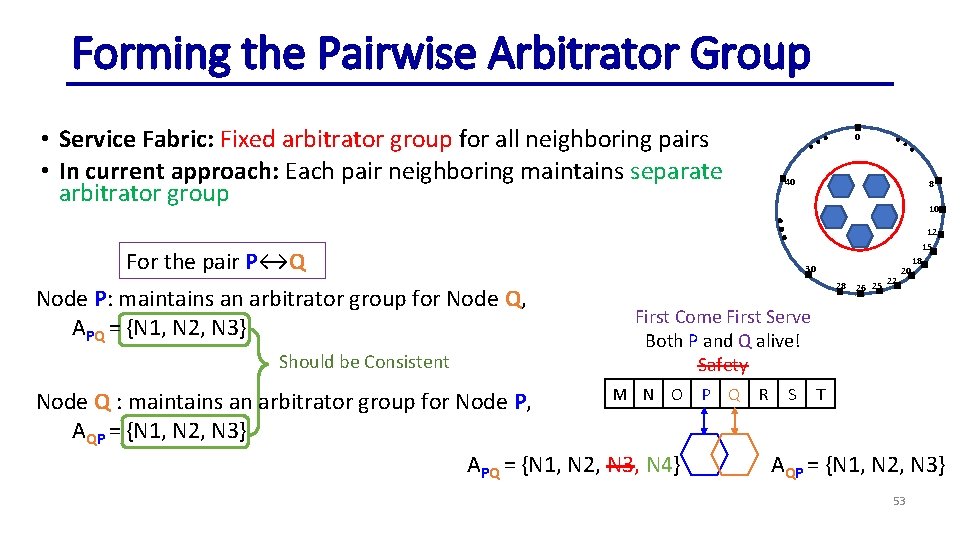

Forming the Pairwise Arbitrator Group
- Service Fabric: a fixed arbitrator group for all neighboring pairs
- Current approach: each neighboring pair maintains a separate arbitrator group

For the pair P↔Q, node P maintains an arbitrator group for node Q, A(P, Q) = {N1, N2, N3}. Node Q maintains an arbitrator group for node P, A(Q, P) = {N1, N2, N3}. These groups should be consistent. (Figure: a ring of nodes 0, 8, 10, 12, 15, 18, 20, 22, 25, 26, 28, 30, 40, and a chain of nodes M, N, O, P, Q, R, S, T. It shows a case where A(P, Q) = {N1, N2, N3, N4} but A(Q, P) = {N1, N2, N3}, with the labels "First Come First Serve", "Both P and Q alive!", and "Safety".)

![Eventual Visibility (EV) – Lineage Table](http://slidetodoc.com/presentation_image/49add1c2023bcbb23128d531bb47131c/image-54.jpg)

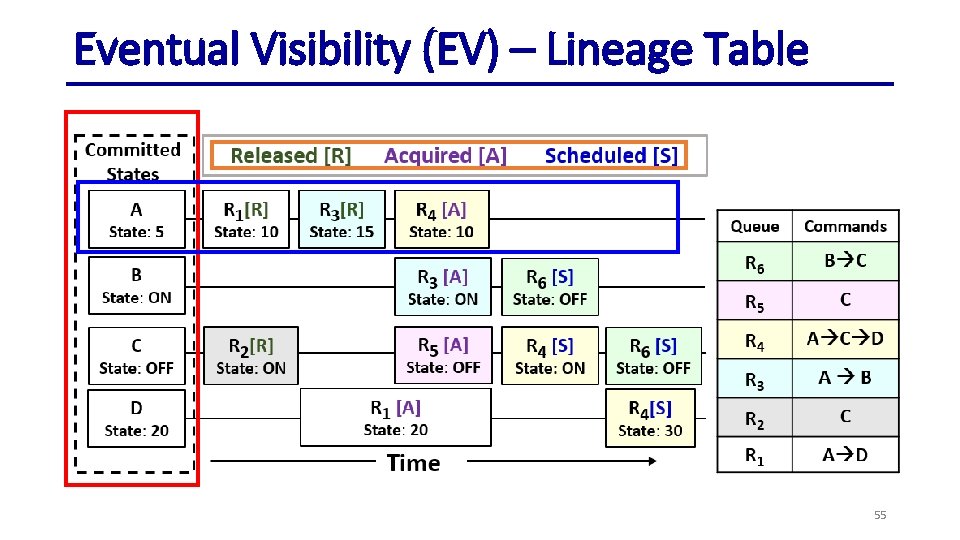

Eventual Visibility (EV) – Lineage Table

(Figure: a lineage table with entry states Released [R], Acquired [A], Scheduled [S], and Committed. It covers devices C = Coffee Maker, P = Pancake Maker, R = Roomba, and M = Mopper, and records the device state at each entry. Routines in this figure are numbered from R0: R0: makeCoffee(espresso), makePancake(vanilla); R1: makeCoffee(americano), makePancake(strawberry); R2: makePancake(regular); R3: startRoomba(livingroom), startMopping(livingroom); R4: startMopping(kitchen).)

Eventual Visibility (EV) – Lineage Table

![Eventual Visibility (EV) – Lineage Table](http://slidetodoc.com/presentation_image/49add1c2023bcbb23128d531bb47131c/image-57.jpg)

Eventual Visibility (EV) – Lineage Table

Entry states: Released [R], Acquired [A], Scheduled [S], Committed [C].

(Figure: the lineage table with device states; every row starts from state null, and all entries are scheduled [S]. Cf.Mkr: R1C1 (esp), R2C1 (ame); Pnck.Mkr: R3C1 (reg), R1C2 (van), R2C2 (str); Roomba: R4C1 (liv); Mopper: R5C1 (kit), R4C2 (liv). Post- and pre-lease transitions are marked. The ordering evolves as follows: [R1], then [R1, R2], then [R3, R1, R2], then [R3, R1, R2] and [R4], and finally [R3, R1, R2] and [R5, R4].)

![EV – State Compaction and Current State](http://slidetodoc.com/presentation_image/49add1c2023bcbb23128d531bb47131c/image-58.jpg)

EV – State Compaction and Current State

Entry states: Released [R], Acquired [A], Scheduled [S], Committed [C].

(Figure, at the current time, with every row starting from state null: Cf.Mkr: R1C1 [C] (state esp), R2C1 [R] (ame); Pnck.Mkr: R3C1 [C] (reg), R1C2 [C] (van), R2C2 [A] (str); Roomba: R4C1 [C] (liv); Mopper: R5C1 [C] (kit), R4C2 [C] (liv).)

![EV – State Compaction and Current State](http://slidetodoc.com/presentation_image/49add1c2023bcbb23128d531bb47131c/image-59.jpg)

EV – State Compaction and Current State

(Figure, after compaction at the current time: Cf.Mkr has current state esp, followed by R2C1 [R] (ame); Pnck.Mkr has current state van, followed by R2C2 [A] (str); Roomba has current state liv; Mopper has current state liv.)
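
Here is a minimal sketch of state compaction. It assumes each row entry is a hypothetical (routine, status, state) tuple, with status letters as in the legend. The committed prefix of a row is folded into one current state. Later entries are kept because their order still matters. Applied to the rows from the previous slide, the sketch yields the compacted table on this slide.

```python
def compact(rows, initial=None):
    """Fold each row's committed ("C") prefix into a single current state.

    rows: device -> list of (routine, status, state) in lineage order.
    Returns device -> (current_state, remaining_entries). Only the prefix is
    folded: a committed entry behind an uncommitted one must keep its place.
    """
    compacted = {}
    for device, entries in rows.items():
        current, i = initial, 0
        while i < len(entries) and entries[i][1] == "C":
            current = entries[i][2]
            i += 1
        compacted[device] = (current, entries[i:])
    return compacted

ROWS = {
    "Cf.Mkr": [("R1", "C", "esp"), ("R2", "R", "ame")],
    "Pnck.Mkr": [("R3", "C", "reg"), ("R1", "C", "van"), ("R2", "A", "str")],
    "Roomba": [("R4", "C", "liv")],
    "Mopper": [("R5", "C", "kit"), ("R4", "C", "liv")],
}
for device, (state, rest) in compact(ROWS).items():
    print(device, state, rest)
```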

![[X] External vs. Internal Orchestrators](http://slidetodoc.com/presentation_image/49add1c2023bcbb23128d531bb47131c/image-60.jpg)

[X] External vs. Internal Orchestrators

External orchestrators:
- Generic
- External communication
- Rigid
- Difficult to build efficient, consistent components
- Preferred for generic tasks

Internal orchestrators:
- Specialized
- Internal communication
- Flexible
- Allow building efficient, consistent components (e.g., Reliable Collections)
- Preferred for specialized tasks

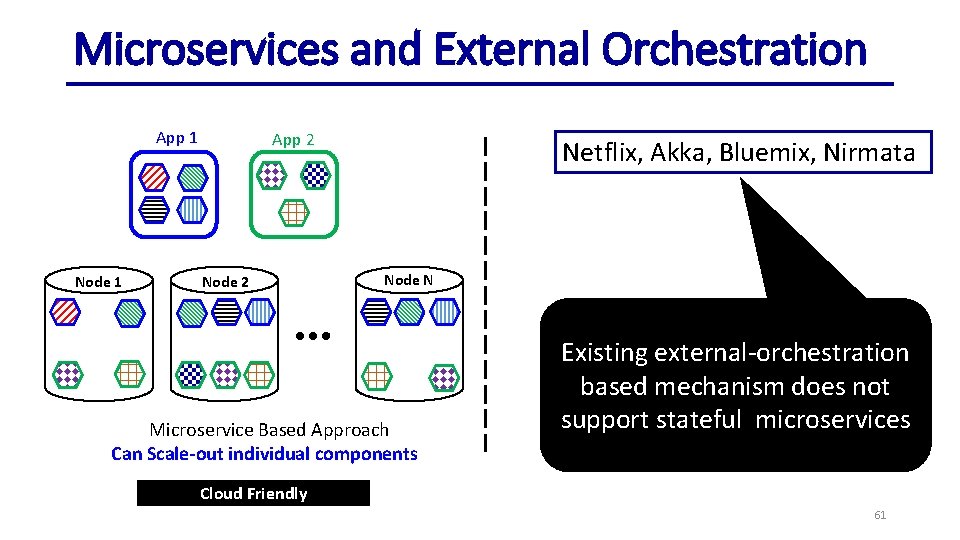

Microservices and External Orchestration

(Figure: App 1 and App 2 spread over Node 1 … Node N. Examples of external orchestration: Netflix, Akka, Bluemix, Nirmata.)
- Microservice-based approach: can scale out individual components and is cloud friendly
- Existing external-orchestration-based mechanisms do not support stateful microservices

"Stateful" Microservices!
- Microsoft Service Fabric is the first to offer stateful microservices
- Provides built-in data structures that are consistent and distributed, e.g., a distributed Dictionary and Queue
- Offers transactional guarantees

Service Fabric's unique internal orchestration mechanism makes this possible!

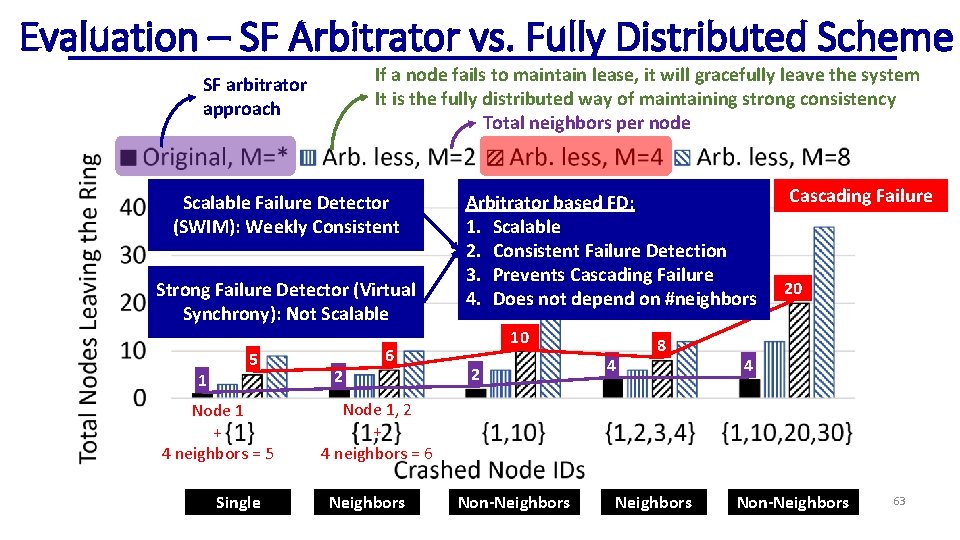

Evaluation – SF Arbitrator vs. Fully Distributed Scheme
- If a node fails to maintain its lease, it gracefully leaves the system; this is the fully distributed way of maintaining strong consistency
- Scalable failure detector (SWIM): weakly consistent
- Strong failure detector (virtual synchrony): not scalable
- Arbitrator-based FD: (1) scalable, (2) consistent failure detection, (3) prevents cascading failure, (4) does not depend on the number of neighbors

(Figure: cascading failures vs. total neighbors per node for the SF arbitrator approach and the fully distributed scheme, with neighbors and non-neighbors marked. Annotations read "Node 1 + 4 neighbors = 5" for a single failure and "Node 1, 2 + 4 neighbors = 6".)

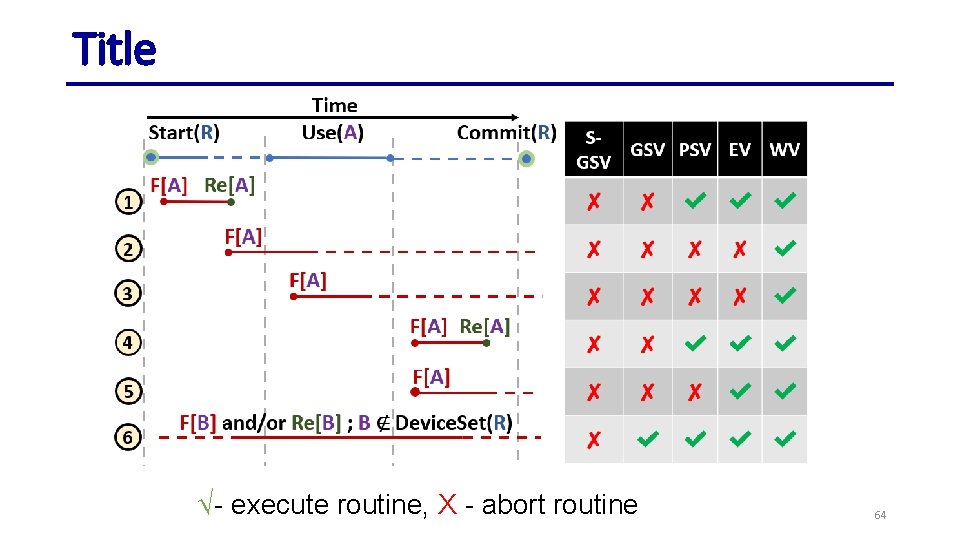

Legend: √ = execute routine, X = abort routine.
