
Multiple Pattern Matching Revisited
Robert Susik¹, Szymon Grabowski¹, Kimmo Fredriksson²
¹ Lodz University of Technology, Institute of Applied Computer Science, Łódź, Poland
² University of Eastern Finland, School of Computing, Kuopio, Finland
PSC, Prague, Sept. 2014

Multiple pattern matching
The problem: given a text T[1..n] and r patterns P[1..m], all over a common integer alphabet of size σ, report all text positions i (1 ≤ i ≤ n) at which one of the r patterns matches T.
Usage:
• antivirus scanning,
• intrusion detection,
• web searches,
• etc.
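As a baseline that pins down the problem statement, the Python sketch below simply checks every alignment of every pattern (O(nrm) time). It is our illustration, not one of the algorithms discussed in these slides; the function name `naive_multi_match` and the convention of reporting 0-based end positions (as Shift-Or does) are assumptions.

```python
def naive_multi_match(text, patterns):
    """Brute-force reference: all (i, j) such that patterns[j] ends at text[i]."""
    matches = []
    for j, p in enumerate(patterns):
        m = len(p)
        # Try every alignment that ends inside the text (0-based end position i).
        for i in range(m - 1, len(text)):
            if text[i - m + 1:i + 1] == p:
                matches.append((i, j))
    return sorted(matches)


print(naive_multi_match("gcatcgcagagat", ["gcaga", "cat"]))  # [(3, 1), (9, 0)]
```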

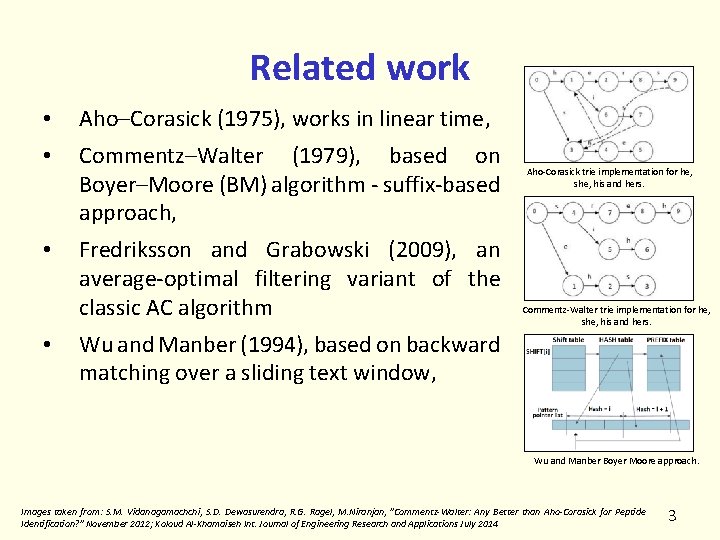

Related work
• Aho–Corasick (1975): works in linear time,
• Commentz–Walter (1979): based on the Boyer–Moore (BM) algorithm; a suffix-based approach,
• Fredriksson and Grabowski (2009): an average-optimal filtering variant of the classic AC algorithm,
• Wu and Manber (1994): based on backward matching over a sliding text window.
Figures: Aho–Corasick trie for the patterns he, she, his and hers; Commentz–Walter trie for he, she, his and hers; the Wu–Manber (Boyer–Moore) approach.
Images taken from: S. M. Vidanagamachchi, S. D. Dewasurendra, R. G. Ragel, M. Niranjan, "Commentz-Walter: Any Better than Aho-Corasick for Peptide Identification?", November 2012; Koloud Al-Khamaiseh, Int. Journal of Engineering Research and Applications, July 2014.
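The Aho–Corasick automaton from the figure can be sketched as follows: a trie over the patterns, failure links computed breadth-first, and output sets merged along failure links. This is a textbook-style Python illustration with dictionary transitions (function names are hypothetical), not any of the implementations benchmarked later.

```python
from collections import deque


def build_aho_corasick(patterns):
    """Build the goto (trie), failure and output tables."""
    goto = [{}]    # goto[state][symbol] -> child state
    out = [set()]  # out[state] -> indices of patterns ending in this state
    for j, p in enumerate(patterns):
        s = 0
        for c in p:
            if c not in goto[s]:
                goto.append({})
                out.append(set())
                goto[s][c] = len(goto) - 1
            s = goto[s][c]
        out[s].add(j)
    fail = [0] * len(goto)
    queue = deque(goto[0].values())  # depth-1 states fail to the root
    while queue:
        s = queue.popleft()
        for c, t in goto[s].items():
            queue.append(t)
            # Follow failure links of the parent until c can be read.
            f = fail[s]
            while f and c not in goto[f]:
                f = fail[f]
            fail[t] = goto[f].get(c, 0)
            out[t] |= out[fail[t]]  # fail[t] is shallower, hence already final
    return goto, fail, out


def aho_corasick_search(text, patterns):
    """Return (i, j) pairs: patterns[j] ends at 0-based text position i."""
    goto, fail, out = build_aho_corasick(patterns)
    s, matches = 0, []
    for i, c in enumerate(text):
        while s and c not in goto[s]:
            s = fail[s]
        s = goto[s].get(c, 0)
        for j in sorted(out[s]):
            matches.append((i, j))
    return matches


print(aho_corasick_search("ushers", ["he", "she", "his", "hers"]))  # [(3, 0), (3, 1), (5, 3)]
```

On the figure's pattern set, "ushers" yields she and he ending at position 3 and hers ending at position 5.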

Related work
• DAWG-match (Crochemore et al., 1999) and MultiBDM (Crochemore & Rytter, 1994): based on backward matching, linear in the worst case, but complex; Multi-BNDM (Navarro & Raffinot, 1998) is a simplified, bit-parallel version,
• Set Backward Oracle Matching (Allauzen & Raffinot, 1999): similar to the above but simpler, and very efficient in practice,
• Succinct Backward DAWG Matching (Fredriksson, 2003): practical for huge pattern sets thanks to the use of a succinct index,
• Faro & Külekci (2012): use of the SSE technology, e.g., the wsfp (wordsize fingerprint instruction) operation, to identify text blocks that may contain a matching pattern,
• Salmela et al. (2006) tried an approach similar to ours (not very successful for short patterns in their tests).

Shift-Or (Baeza-Yates & Gonnet, 1992)
• Shift-Or simulates a nondeterministic finite automaton (NFA) using bit-parallelism.
• Bit-parallelism:
  • frequently used in stringology when the results of single operations are Boolean values or small integers,
  • many (up to w, the computer word size) operations can be performed in parallel,
  • reinvented several times, but the Baeza-Yates & Gonnet (1992) version is the best known.

![Shift-Or: preprocessing and search for P = gcaga](http://slidetodoc.com/presentation_image/1e26bf2914a79483428db7e726206507/image-6.jpg)

Shift-Or at work
T = gcatcgcagagat, P = gcaga
Preprocessing (bit 0 is written leftmost):
B[g] = 01101
B[c] = 10111
B[a] = 11010
Search:
V := ~0; i := 0
while i < n do
    V := (V << 1) | B[T[i]]
    if the (m−1)-th bit of V is 0 then report a match at position i
    i := i + 1
B[ ] is a bit-vector for each alphabet symbol, mσ bits in total.
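A runnable Python rendering of the pseudocode above might look as follows. Python integers are unbounded, so V is explicitly masked to m bits (a C implementation would rely on the machine word instead). The names `shift_or_masks` and `shift_or` are ours, and a non-empty pattern is assumed.

```python
def shift_or_masks(pattern):
    """Preprocessing: bit j of B[c] is 0 iff pattern[j] == c."""
    m = len(pattern)
    mask = (1 << m) - 1
    B = {}
    for j, c in enumerate(pattern):
        B[c] = B.get(c, mask) & ~(1 << j)
    return B, mask


def shift_or(text, pattern):
    """Report 0-based end positions of pattern occurrences in text."""
    m = len(pattern)
    B, mask = shift_or_masks(pattern)
    V = mask                     # V := ~0, restricted to m bits
    matches = []
    for i, c in enumerate(text):
        # Symbols absent from the pattern behave like an all-ones vector.
        V = ((V << 1) | B.get(c, mask)) & mask
        if V & (1 << (m - 1)) == 0:  # (m-1)-th bit is 0: a full match ends at i
            matches.append(i)
    return matches


print(shift_or("gcatcgcagagat", "gcaga"))  # [9]
```

Here `shift_or_masks("gcaga")` gives B[g] = 0b10110, which reads 01101 when bit 0 is written first, as on the slide; the reported position 9 is the end of the occurrence T[5..9].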

Shift-Or
• Pros:
  • fast: $O(n\lceil m/w \rceil)$ time in the worst case,
  • when m = O(w), it runs in linear time.
• Cons:
  • the average case is the same as the worst case, although faster methods are possible.

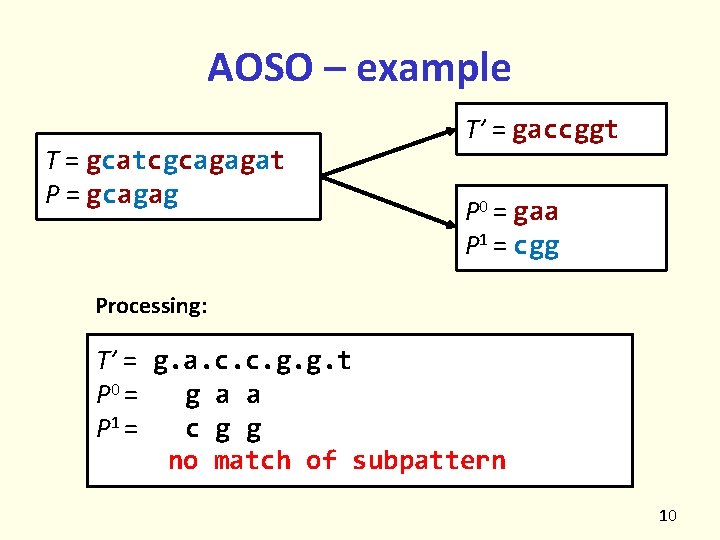

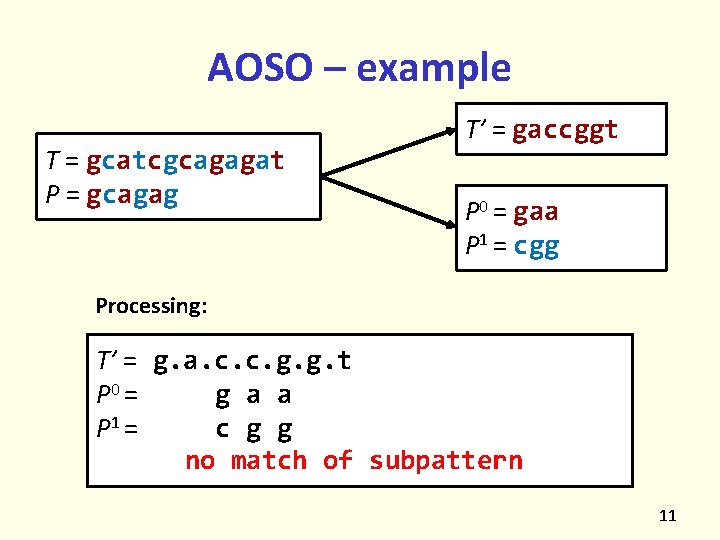

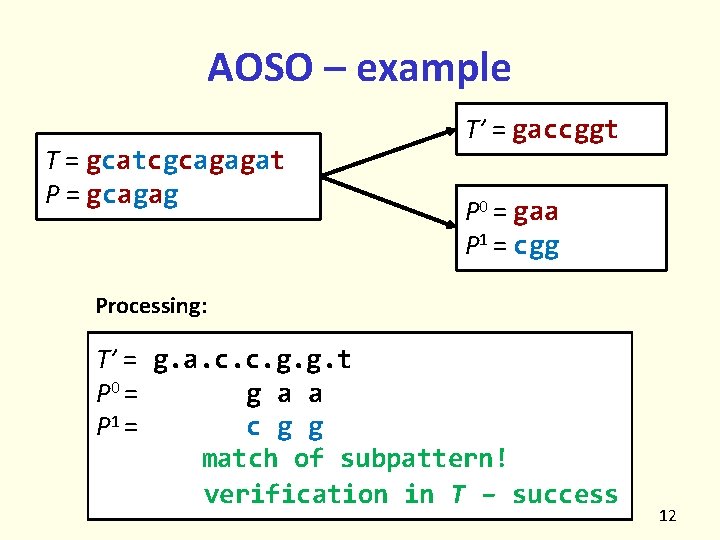

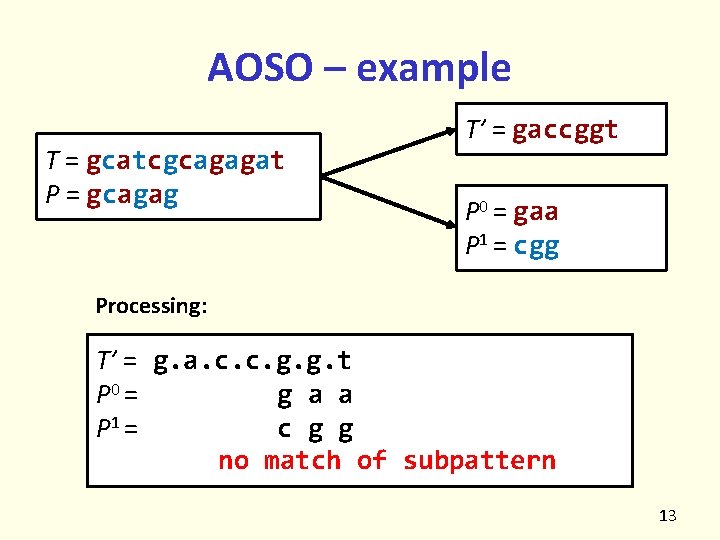

Average Optimal Shift-Or (AOSO) (Fredriksson & Grabowski, 2005, 2009)
• Motivation:
  • improve the average case of Shift-Or.
• Idea:
  • sample T every k symbols: T' = t[0], t[k], t[2k], …
  • match k subpatterns of P: P0, …, Pk−1, each sampled in the same way as T, starting from position 0, 1, …, k−1, respectively,
  • when some subpattern matches, verify whether there is a true match.

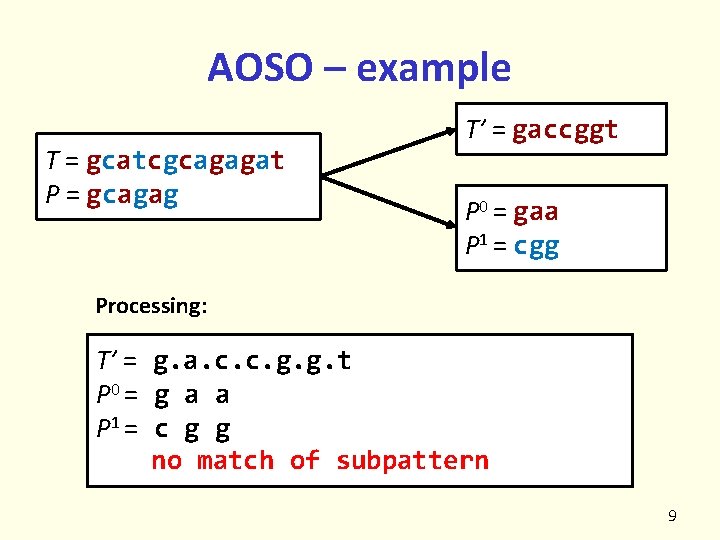

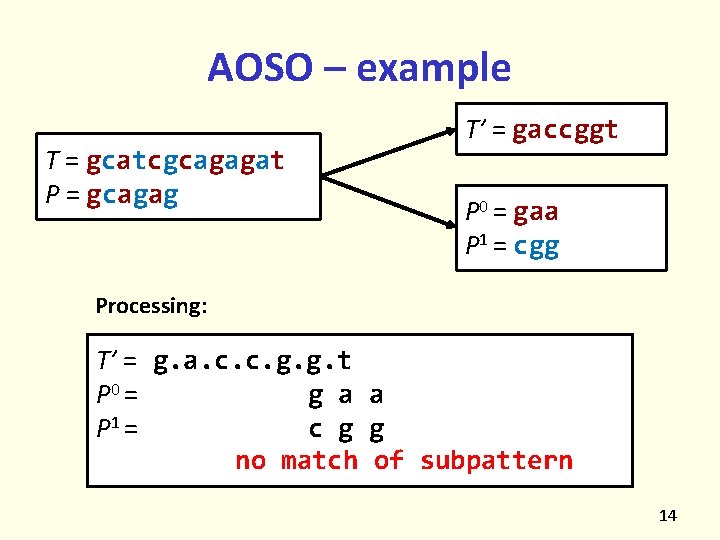

AOSO – example (k = 2)
T = gcatcgcagagat, P = gcagag
T' = gaccggt, P0 = gaa, P1 = cgg
Processing T' = g.a.c.c.g.g.t with P0 = gaa and P1 = cgg:
• first steps: no match of a subpattern,
• P1 = cgg matches T'[3..5] = cgg: match of a subpattern! Verification in T (gcagag at T[5..10]): success,
• remaining steps: no match of a subpattern.
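The AOSO steps above (sampling, Shift-Or over the k subpatterns, verification) can be sketched in Python as below. Packing all k subpatterns into one bit-vector, the subpattern length ⌊m/k⌋ and the function name `aoso` are our assumptions for illustration; the sketch requires 1 ≤ k ≤ m and reports 0-based end positions of verified occurrences.

```python
def aoso(text, pattern, k):
    """AOSO filter: Shift-Or over the k-sampled text, then verification in T."""
    m, n = len(pattern), len(text)
    ms = m // k                                    # subpattern length
    subs = [pattern[l::k][:ms] for l in range(k)]  # P0, ..., P(k-1)
    # Subpattern l occupies bits l*ms .. l*ms+ms-1 of one packed bit-vector.
    mask = (1 << (k * ms)) - 1
    B = {}
    for l, sp in enumerate(subs):
        for j, c in enumerate(sp):
            B[c] = B.get(c, mask) & ~(1 << (l * ms + j))
    first = sum(1 << (l * ms) for l in range(k))          # lowest bit of each P_l
    last = sum(1 << (l * ms + ms - 1) for l in range(k))  # highest bit of each P_l
    V, found = mask, set()
    for jp in range((n + k - 1) // k):  # T' = t[0], t[k], t[2k], ...
        pos = jp * k
        # Shift; each subpattern's lowest bit gets 0 instead of the
        # neighbouring subpattern's top bit.
        V = (((V << 1) & ~first) | B.get(text[pos], mask)) & mask
        hits = ~V & last  # subpatterns whose full match ends at t[pos]
        while hits:
            low = hits & -hits
            hits ^= low
            l = (low.bit_length() - 1) // ms
            # The last symbol of P_l, pattern[l + (ms-1)*k], is aligned with text[pos].
            start = pos - l - (ms - 1) * k
            if start >= 0 and start + m <= n and text[start:start + m] == pattern:
                found.add(start + m - 1)
    return sorted(found)


print(aoso("gcatcgcagagat", "gcagag", 2))  # [10]
```

On the example, the hit comes from P1 = cgg at T'[5] = t[10], and verification confirms gcagag at T[5..10].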

AOSO
• Pros:
  • faster than Shift-Or: $O(n \log_\sigma(m)/m)$ time in the average case.
• Cons:
  • needs verification to exclude false matches (not a big problem in practice).

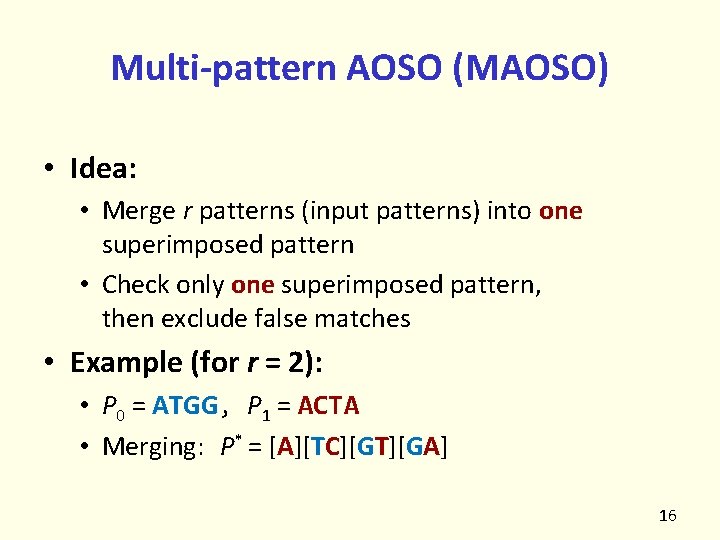

Multi-pattern AOSO (MAOSO)
• Idea:
  • merge the r input patterns into one superimposed pattern,
  • search only for the superimposed pattern, then exclude false matches.
• Example (for r = 2):
  • P0 = ATGG, P1 = ACTA
  • merging: P* = [A][TC][GT][GA]

MAOSO – some details
• Set the bit-vectors (as in Shift-Or) so that a position of the superimposed pattern accepts a symbol if any of the patterns has that symbol at that position.
• Run AOSO on the superimposed pattern.
• Problem: if r is large and (especially) σ is small, there are many verifications.
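A minimal Python sketch of the superimposed-pattern idea, with one simplification: the sampling step of AOSO is omitted (equivalently k = 1), so the filter is plain Shift-Or over P*. Equal-length patterns are assumed, and the names `superimposed_masks` and `maoso_k1` are hypothetical. The function also returns the number of candidates so that false matches become visible.

```python
def superimposed_masks(patterns):
    """Shift-Or bit-vectors for the superimposed pattern P* of equal-length patterns."""
    m = len(patterns[0])
    mask = (1 << m) - 1
    B = {}
    for p in patterns:
        for j, c in enumerate(p):
            # Position j of P* accepts c if c occurs at position j of ANY pattern.
            B[c] = B.get(c, mask) & ~(1 << j)
    return B, mask


def maoso_k1(text, patterns):
    """Shift-Or on P*, then verify each candidate against every pattern.

    Returns ((i, j) matches with 0-based end position i, number of candidates).
    """
    m = len(patterns[0])
    B, mask = superimposed_masks(patterns)
    V, matches, candidates = mask, [], 0
    for i, c in enumerate(text):
        V = ((V << 1) | B.get(c, mask)) & mask
        if V & (1 << (m - 1)) == 0:  # P* matches text[i-m+1..i]
            candidates += 1
            window = text[i - m + 1:i + 1]
            for j, p in enumerate(patterns):  # verification excludes false matches
                if window == p:
                    matches.append((i, j))
    return matches, candidates


print(maoso_k1("ATGGCACTACATTA", ["ATGG", "ACTA"]))  # ([(3, 0), (8, 1)], 3)
```

The third candidate is ATTA, which fits [A][TC][GT][GA] but is neither input pattern; with more patterns or a smaller alphabet such false candidates multiply, which is the problem noted above.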

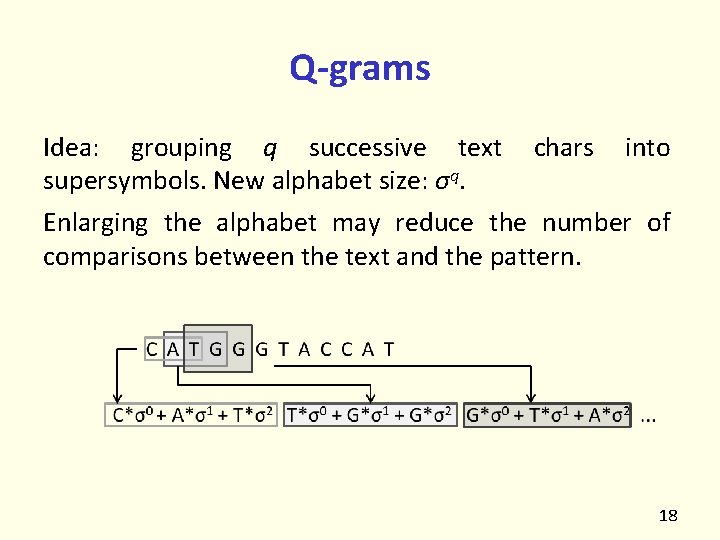

q-grams
Idea: group q successive text characters into supersymbols. New alphabet size: $\sigma^q$. Enlarging the alphabet may reduce the number of comparisons between the text and the pattern.
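A small Python sketch of q-gram supersymbols, under our own assumptions: symbols are already integer ranks in [0, σ), the text is cut into non-overlapping q-grams (so it shrinks to about n/q supersymbols), and each pattern is represented by q shifted q-gram sequences, one per possible alignment. This reading is consistent with the step of q and the r·q factor in the MAG analysis, but it is not taken from the authors' code; function names and the DNA ranks are hypothetical.

```python
def encode_qgrams(seq, q, sigma):
    """Pack non-overlapping q-grams of seq (ints in [0, sigma)) into supersymbols.

    Each q-gram is read as a base-sigma number, so there are sigma**q
    possible supersymbols. A trailing incomplete q-gram is dropped.
    """
    out = []
    for t in range(len(seq) // q):
        v = 0
        for u in range(q):
            v = v * sigma + seq[t * q + u]
        out.append(v)
    return out


def pattern_qgram_versions(pat, q, sigma):
    """The q shifted q-gram encodings of a pattern, one per alignment offset."""
    return [encode_qgrams(pat[off:], q, sigma) for off in range(q)]


idx = {"a": 0, "c": 1, "g": 2, "t": 3}  # example DNA ranks (our choice)
print(encode_qgrams([idx[c] for c in "gcatcgcagagat"], 2, 4))     # [9, 3, 6, 4, 8, 8]
print(pattern_qgram_versions([idx[c] for c in "gcagag"], 2, 4))  # [[9, 2, 2], [4, 8]]
```

The occurrence of gcagag at T[5..10] starts at an odd offset, so it is found through the offset-1 version [4, 8], which appears at q-gram positions 3 and 4 of the encoded text.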

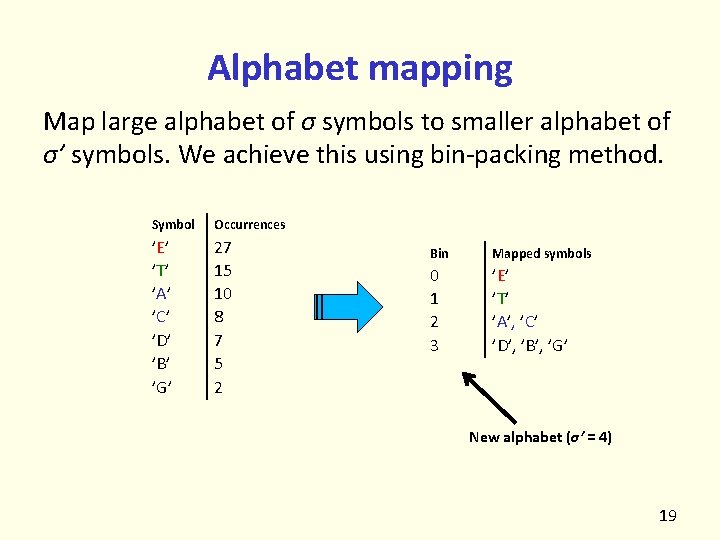

Alphabet mapping
Map a large alphabet of σ symbols to a smaller alphabet of σ' symbols. We achieve this using a bin-packing method.

| Symbol | 'E' | 'T' | 'A' | 'C' | 'D' | 'B' | 'G' |
|---|---|---|---|---|---|---|---|
| Occurrences | 27 | 15 | 10 | 8 | 7 | 5 | 2 |

| Bin | Mapped symbols |
|---|---|
| 0 | 'E' |
| 1 | 'T' |
| 2 | 'A', 'C' |
| 3 | 'D', 'B', 'G' |

New alphabet (σ' = 4).
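The slides do not spell out the exact greedy rule. One rule that reproduces the table above (our assumption, not necessarily the authors' procedure) is sequential bin filling: take symbols by decreasing frequency and keep adding them to the current bin until the next symbol would push its load above total/σ' occurrences (here 74/4 = 18.5); the last bin takes whatever remains. The function name `greedy_binning` is hypothetical.

```python
def greedy_binning(freqs, bins):
    """Map each symbol to one of `bins` groups by greedy sequential bin filling."""
    order = sorted(freqs, key=lambda s: -freqs[s])  # most frequent first (stable)
    capacity = sum(freqs.values()) / bins
    mapping, b, load = {}, 0, 0
    for s in order:
        # Open the next bin if the current one is non-empty and s would overflow it;
        # the last bin accepts all remaining symbols.
        if load > 0 and load + freqs[s] > capacity and b < bins - 1:
            b, load = b + 1, 0
        mapping[s] = b
        load += freqs[s]
    return mapping


freqs = {"E": 27, "T": 15, "A": 10, "C": 8, "D": 7, "B": 5, "G": 2}
print(greedy_binning(freqs, 4))
# {'E': 0, 'T': 1, 'A': 2, 'C': 2, 'D': 3, 'B': 3, 'G': 3}
```

The resulting bin loads are 27, 15, 18 and 14; the very frequent 'E' necessarily gets a bin of its own.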

Multi AOSO on q-Grams (MAG)
• The super-alphabet reduces the number of verifications. The probability of a match is $p = O(qr/\sigma^q)$, so the verification probability is $O(p^{\lfloor m/(kq) \rfloor})$ and the cost of a verification is $O(rqm)$.
• q-gram based search makes the steps bigger (equal to q); in other words, the text becomes shorter ($n/q$ symbols).
• FAOSO runs in $O(n/k \cdot \lceil (m/q)/w \rceil)$ time in our case, where w is the number of bits in a computer word (typically 64).

Simple Multi AOSO on q-Grams (SMAG)
• A simpler version of the method above: the whole text is encoded before the actual search starts, so the search itself is more streamlined.
• Total complexity is Ω(n), the time to encode the text.
• Slightly faster search, but a much longer preprocessing phase.
• Possibly useful if the text is searched multiple times within a short period and there is space to store it in encoded form.

Experimental results
• Hardware: Intel Core i3-2100 3.1 GHz CPU, 128 KB L1, 512 KB L2 and 3 MB L3 cache, 4 GB of 1333 MHz DDR3 RAM
• Compiler: g++ version 4.8.1 with -O3 optimization
• OS: Ubuntu 64-bit with kernel 3.11.0-17
• Texts: taken from the Pizza & Chili Corpus (http://pizzachili.dcc.uchile.cl), 200 MB each
• Tests: all source codes were obtained from their authors and compiled on the same test machine (some of them cannot handle long patterns, i.e., m = 64).

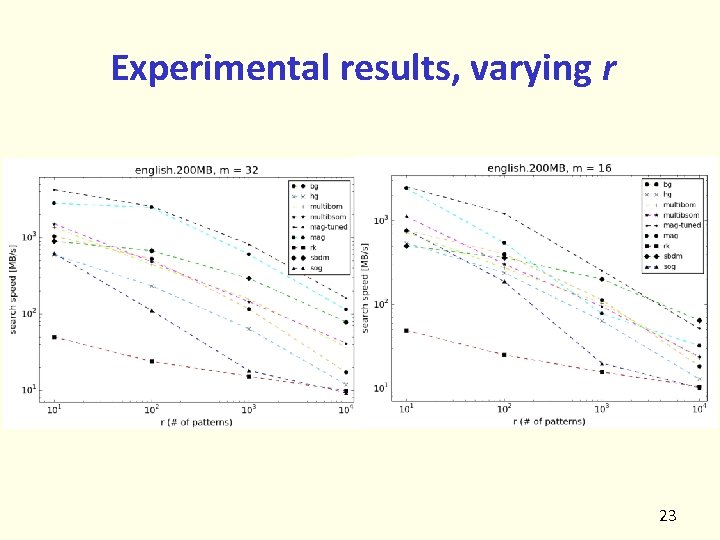

Experimental results, varying r

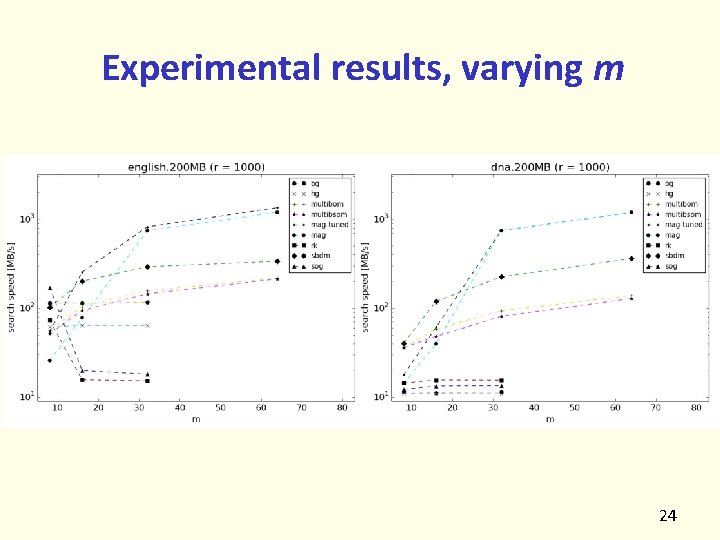

Experimental results, varying m

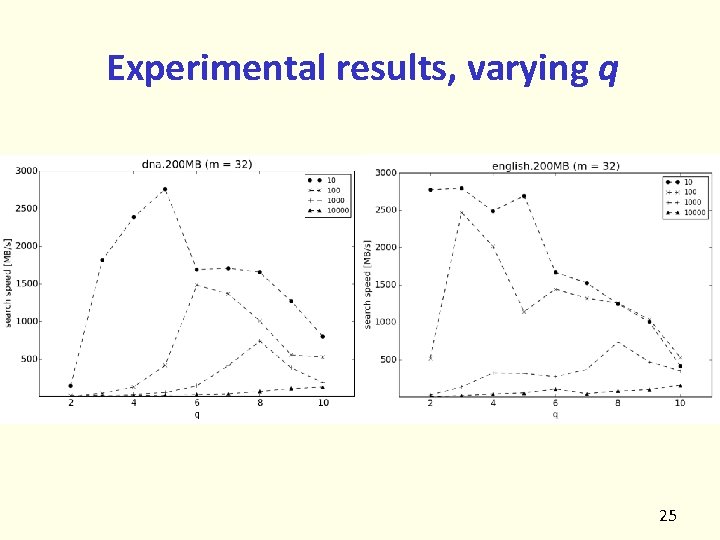

Experimental results, varying q

Conclusions
• Our work can be seen as a new and quite successful combination of known building blocks.
• The presented algorithm, MAG, usually beats its competitors on the three test datasets (English, proteins and DNA).
• One of the key ideas was alphabet quantization (binning), performed greedily after sorting the original alphabet by frequency.

Future work
• Different alphabet mapping techniques could improve efficiency.
• Is it possible to choose the algorithm's parameters so as to reach average optimality (for m = O(w))?
• SSE instructions seem to offer great opportunities, especially for bit-parallel algorithms.
• Dense codes (e.g., ETDC) for words or q-grams not only serve for compressing data (texts), but also enable faster pattern searches (our preliminary results are rather promising).
