Microsoft in the Enterprise Windows Scalability Technology Challenges

Microsoft in the Enterprise

Windows Scalability: Technology, Challenges and Limitations

Andreas Kampert

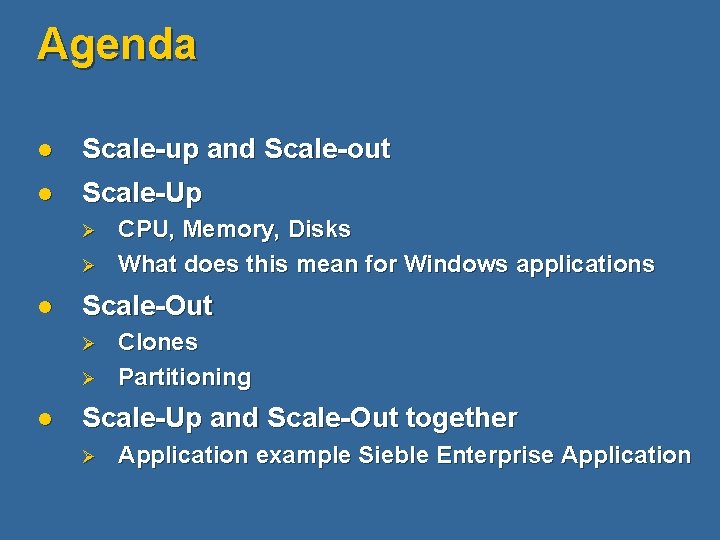

Agenda

- Scale-up and Scale-out
- Scale-Up
  - CPU, Memory, Disks
  - What does this mean for Windows applications
- Scale-Out
  - Clones
  - Partitioning
- Scale-Up and Scale-Out together
  - Application example: Siebel Enterprise Application

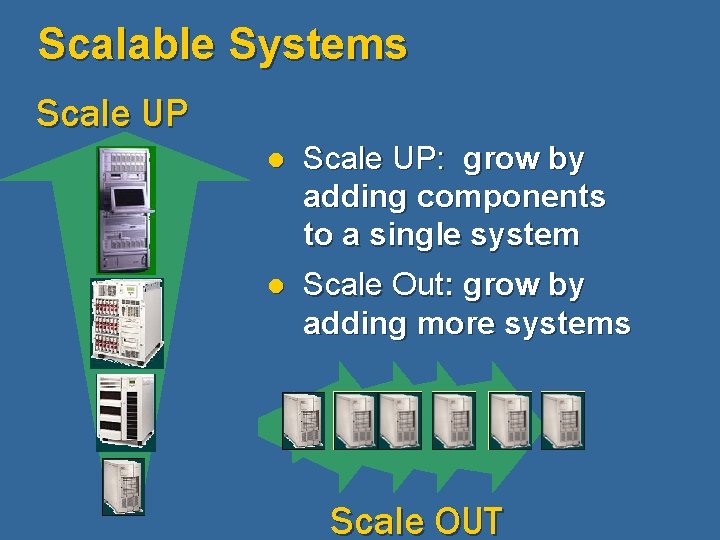

Scalable Systems

- Scale UP: grow by adding components to a single system
- Scale OUT: grow by adding more systems

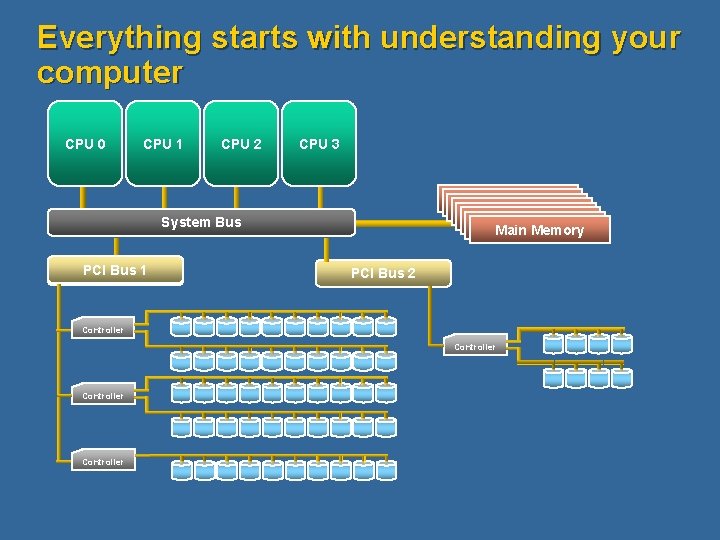

Everything starts with understanding your computer

Diagram labels: CPU 0 to CPU 3, System Bus, Main Memory, PCI Bus 1, PCI Bus 2, Controller.

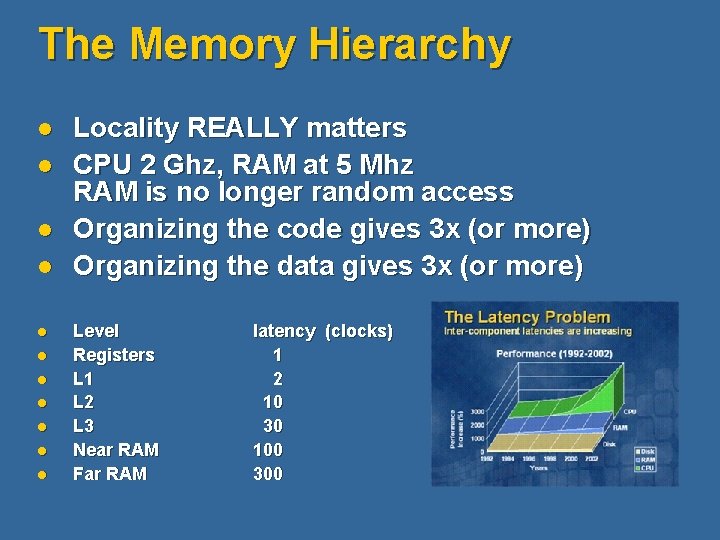

The Memory Hierarchy

- Locality REALLY matters
- CPU 2 GHz, RAM at 5 MHz
- RAM is no longer random access
- Organizing the code gives 3x (or more)
- Organizing the data gives 3x (or more)

| Level | Latency (clocks) |
|---|---|
| Registers | 1 |
| L1 | 2 |
| L2 | 10 |
| L3 | 30 |
| Near RAM | 100 |
| Far RAM | 300 |

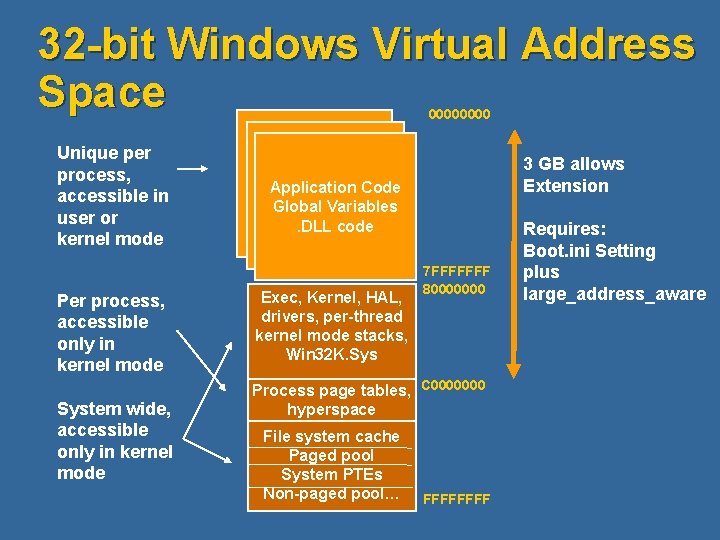

32-bit Windows Virtual Address Space

- 00000000 to 7FFFFFFF: application code, global variables, DLL code. Unique per process, accessible in user or kernel mode.
- From 80000000: Exec, Kernel, HAL, drivers, per-thread kernel-mode stacks, Win32K.Sys. System wide, accessible only in kernel mode.
- From C0000000: process page tables, hyperspace. Per process, accessible only in kernel mode.
- Up to FFFFFFFF: file system cache, paged pool, system PTEs, non-paged pool… System wide, accessible only in kernel mode.
- The user address space can be extended to 3 GB. Requires: Boot.ini setting plus large_address_aware.
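The split described on this slide can be sketched as a small classifier. This is only an illustration of the address ranges: the function name and the `unavailable` label are made up for the example, and it assumes the 3 GB option moves the start of system space from 80000000 to C0000000.

```python
KERNEL_START_DEFAULT = 0x80000000  # start of system space in the default 2 GB / 2 GB split
KERNEL_START_3GB = 0xC0000000      # start of system space when booted with the 3 GB option


def classify_address(addr, boot_3gb=False, large_address_aware=False):
    """Return 'user', 'kernel', or 'unavailable' for a 32-bit virtual address."""
    if not 0 <= addr <= 0xFFFFFFFF:
        raise ValueError("not a 32-bit address")
    kernel_start = KERNEL_START_3GB if boot_3gb else KERNEL_START_DEFAULT
    # A process only gets the extra gigabyte if its image is also marked large-address-aware.
    if boot_3gb and large_address_aware:
        user_end = kernel_start
    else:
        user_end = KERNEL_START_DEFAULT
    if addr >= kernel_start:
        return "kernel"
    if addr < user_end:
        return "user"
    return "unavailable"


print(classify_address(0x7FFFFFFF))  # user
print(classify_address(0x80000000))  # kernel
print(classify_address(0x80000000, boot_3gb=True, large_address_aware=True))  # user
```

The third call shows why both conditions matter: the same address is kernel space by default and becomes user space only when the boot setting and the image flag are both present.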

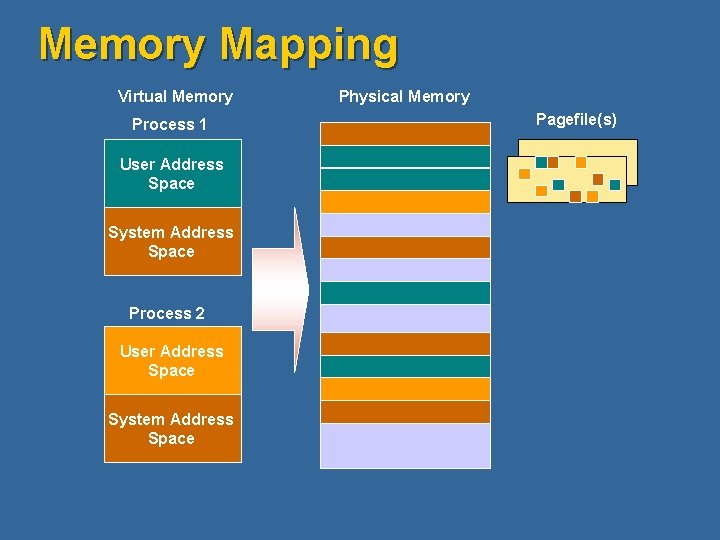

Memory Mapping

Diagram labels: Virtual Memory (Process 1 and Process 2, each with a User Address Space and the System Address Space), Physical Memory, Pagefile(s).

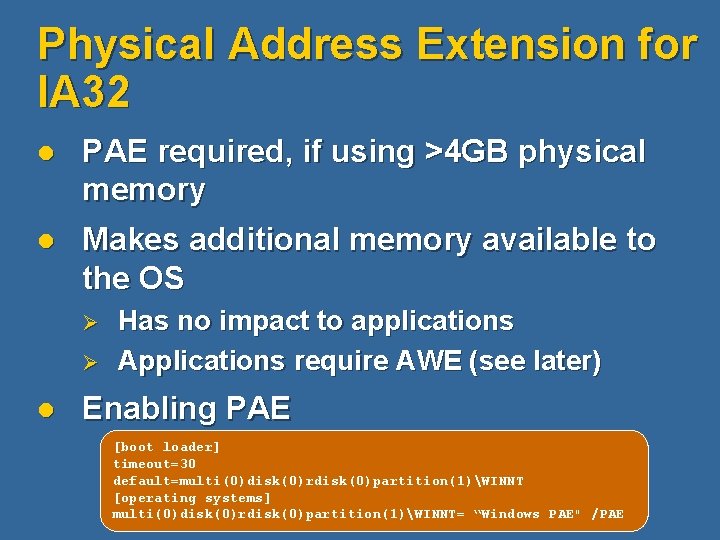

Physical Address Extension for IA-32

- PAE required if using >4 GB physical memory
- Makes additional memory available to the OS
  - Has no impact on applications
  - Applications require AWE (see later)
- Enabling PAE (boot.ini):

    [boot loader]
    timeout=30
    default=multi(0)disk(0)rdisk(0)partition(1)\WINNT
    [operating systems]
    multi(0)disk(0)rdisk(0)partition(1)\WINNT="Windows PAE" /PAE
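The 4 GB threshold follows from the width of a physical address. The sketch below assumes the usual IA-32 figures, which the slide does not state: 32-bit physical addresses without PAE and 36-bit addresses with it.

```python
GIB = 1 << 30  # bytes in one gigabyte (binary)


def addressable_gib(address_bits):
    """Physical memory reachable with the given number of address bits, in GB."""
    return (1 << address_bits) // GIB


print(addressable_gib(32))  # 4
print(addressable_gib(36))  # 64
```

Under that assumption the 64 GB result matches the 32-bit Windows Server 2003 Enterprise Edition limit listed later in the deck.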

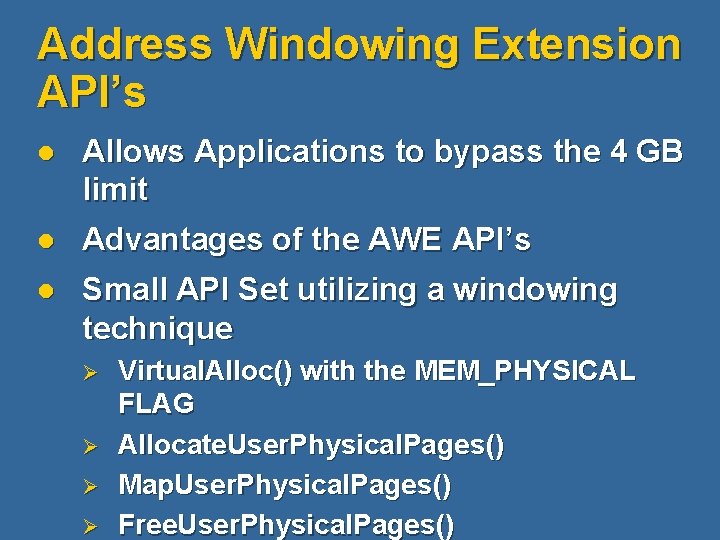

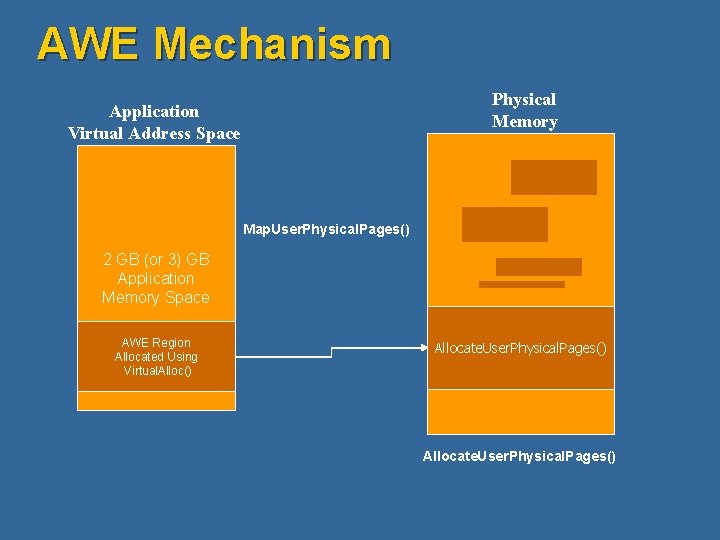

Address Windowing Extensions APIs

- Allow applications to bypass the 4 GB limit
- Advantages of the AWE APIs
- Small API set utilizing a windowing technique
  - VirtualAlloc() with the MEM_PHYSICAL flag
  - AllocateUserPhysicalPages()
  - MapUserPhysicalPages()
  - FreeUserPhysicalPages()

AWE Mechanism

Diagram: inside the 2 GB (or 3 GB) application virtual address space, an AWE region is allocated using VirtualAlloc(). Physical memory is obtained with AllocateUserPhysicalPages() and mapped into the region with MapUserPhysicalPages().
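The windowing idea can be illustrated with a toy model. This sketch does not call the Win32 API. The class `AweWindowModel`, its methods, and the 4096-byte page size are hypothetical stand-ins that only mimic the roles of the functions named on the slide.

```python
PAGE_SIZE = 4096  # assumed page size for the model


class AweWindowModel:
    """Toy model of the AWE windowing idea; it does not call the Win32 API."""

    def __init__(self, window_pages):
        # Stands in for the virtual region reserved with VirtualAlloc() and MEM_PHYSICAL.
        self.window = [None] * window_pages
        self.physical = {}  # page frame number -> page contents
        self._next_pfn = 0

    def allocate_physical_pages(self, count):
        """Stands in for AllocateUserPhysicalPages(): returns new page frame numbers."""
        pfns = list(range(self._next_pfn, self._next_pfn + count))
        for pfn in pfns:
            self.physical[pfn] = bytearray(PAGE_SIZE)
        self._next_pfn += count
        return pfns

    def map_pages(self, first_slot, pfns):
        """Stands in for MapUserPhysicalPages(): point window slots at physical pages."""
        if first_slot < 0 or first_slot + len(pfns) > len(self.window):
            raise ValueError("mapping does not fit in the window")
        for i, pfn in enumerate(pfns):
            if pfn not in self.physical:
                raise KeyError(f"page {pfn} is not allocated")
            self.window[first_slot + i] = pfn

    def free_physical_pages(self, pfns):
        """Stands in for FreeUserPhysicalPages(): release pages and drop their mappings."""
        for pfn in pfns:
            del self.physical[pfn]
        self.window = [None if p in pfns else p for p in self.window]

    def page(self, slot):
        """Return the physical page currently visible through a window slot."""
        pfn = self.window[slot]
        if pfn is None:
            raise RuntimeError("window slot is not mapped")
        return self.physical[pfn]


model = AweWindowModel(window_pages=1)    # a one-page window
pages = model.allocate_physical_pages(3)  # more physical pages than the window holds
model.map_pages(0, [pages[0]])
model.page(0)[0:5] = b"first"
model.map_pages(0, [pages[2]])            # slide the window to another page
model.page(0)[0:5] = b"third"
model.map_pages(0, [pages[0]])            # map the first page back in
print(bytes(model.page(0)[0:5]))          # b'first'
```

The point of the model is that the window stays small while the set of physical pages behind it can be much larger; remapping changes what the window shows without copying data.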

Hot-Add Memory

- Requires
  - Hardware and BIOS support
    - SRAT
    - ACPI 2.0
    - Reporting memory at POST

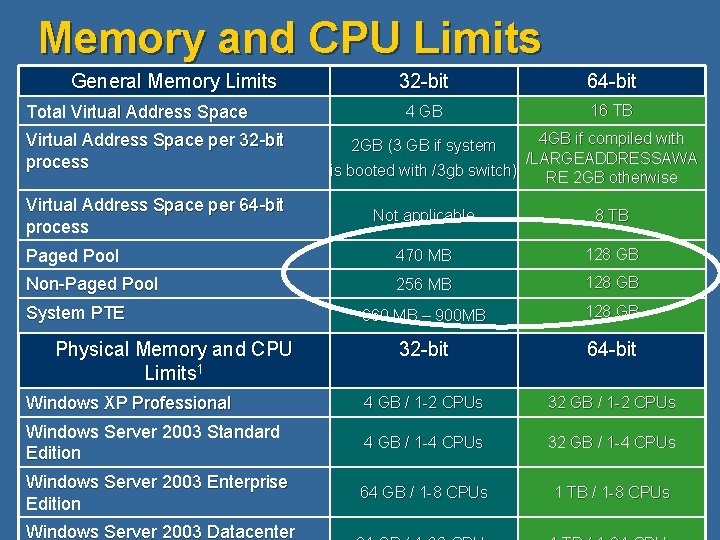

Memory and CPU Limits

General Memory Limits

| | 32-bit | 64-bit |
|---|---|---|
| Total virtual address space | 4 GB | 16 TB |
| Virtual address space per 32-bit process | 2 GB (3 GB if system is booted with /3gb switch) | 4 GB if compiled with /LARGEADDRESSAWARE, 2 GB otherwise |
| Virtual address space per 64-bit process | Not applicable | 8 TB |
| Paged pool | 470 MB | 128 GB |
| Non-paged pool | 256 MB | 128 GB |
| System PTE | 660 MB to 900 MB | 128 GB |

Physical Memory and CPU Limits

| | 32-bit | 64-bit |
|---|---|---|
| Windows XP Professional | 4 GB / 1-2 CPUs | 32 GB / 1-2 CPUs |
| Windows Server 2003 Standard Edition | 4 GB / 1-4 CPUs | 32 GB / 1-4 CPUs |
| Windows Server 2003 Enterprise Edition | 64 GB / 1-8 CPUs | 1 TB / 1-8 CPUs |
| Windows Server 2003 Datacenter | | |

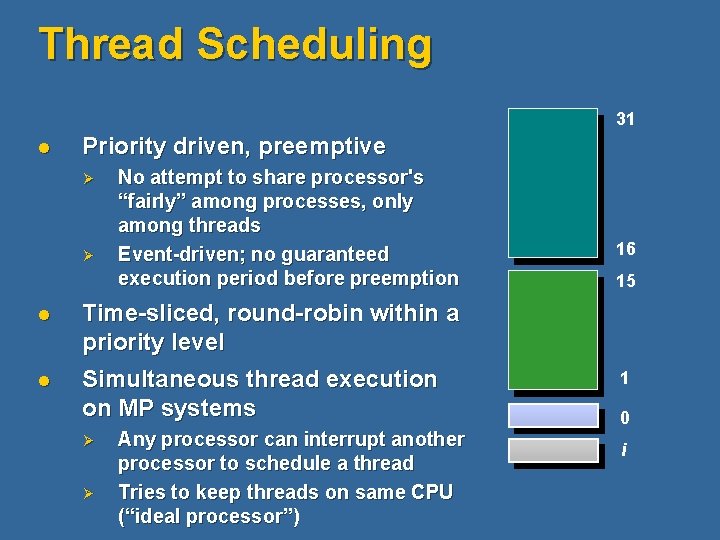

Thread Scheduling

- Priority driven, preemptive
  - No attempt to share processors "fairly" among processes, only among threads
  - Event-driven; no guaranteed execution period before preemption
- Time-sliced, round-robin within a priority level
- Simultaneous thread execution on MP systems
  - Any processor can interrupt another processor to schedule a thread
  - Tries to keep threads on same CPU ("ideal processor")

Diagram: priority scale with the levels 31, 16, 15, 1, 0 and i marked.
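The two rules on this slide, highest priority first and round-robin within a level, can be sketched with a toy single-CPU scheduler. This is a simplified model and not the Windows dispatcher: the function `run_order` is hypothetical, all threads are ready from the start, and priority boosts, preemption by newly ready threads, and multiple processors are left out.

```python
from collections import deque


def run_order(threads):
    """threads: iterable of (name, priority, quanta_needed); returns names in run order."""
    ready = {}  # priority level -> FIFO queue of [name, remaining quanta]
    for name, priority, quanta in threads:
        if not 0 <= priority <= 31:
            raise ValueError("priority must be in 0..31")
        if quanta > 0:
            ready.setdefault(priority, deque()).append([name, quanta])

    order = []
    while ready:
        top = max(ready)          # the highest priority with a ready thread always wins
        queue = ready[top]
        thread = queue.popleft()  # round-robin within the level
        order.append(thread[0])
        thread[1] -= 1            # one time slice consumed
        if thread[1] > 0:
            queue.append(thread)  # back to the tail of its own level
        if not queue:
            del ready[top]
    return order


print(run_order([("A", 8, 2), ("B", 8, 2), ("C", 4, 1)]))
# ['A', 'B', 'A', 'B', 'C']
```

Thread C only runs once both priority-8 threads have finished, which is the "no fairness among priorities" behavior the slide describes.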

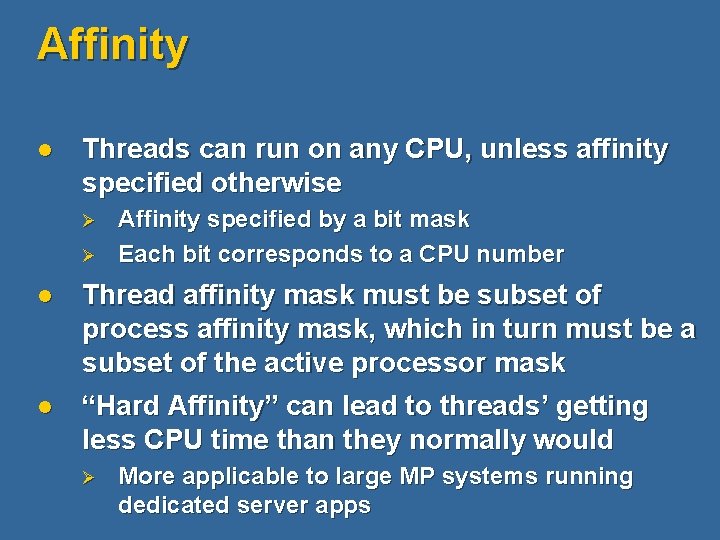

Affinity

- Threads can run on any CPU, unless affinity specifies otherwise
  - Affinity specified by a bit mask
  - Each bit corresponds to a CPU number
- Thread affinity mask must be a subset of the process affinity mask, which in turn must be a subset of the active processor mask
- "Hard affinity" can lead to threads getting less CPU time than they normally would
  - More applicable to large MP systems running dedicated server apps
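The subset rule for affinity masks is plain bit arithmetic. A minimal sketch follows; the helper names are invented for the example and no operating system call is made.

```python
def is_subset(mask, superset):
    """True if every CPU bit set in mask is also set in superset."""
    return mask & ~superset == 0


def cpus_in(mask):
    """List the CPU numbers whose bits are set in the mask."""
    return [cpu for cpu in range(mask.bit_length()) if (mask >> cpu) & 1]


def valid_thread_affinity(thread_mask, process_mask, active_mask):
    """Thread mask within process mask, process mask within active processor mask."""
    return (
        thread_mask != 0
        and is_subset(thread_mask, process_mask)
        and is_subset(process_mask, active_mask)
    )


active = 0xFF   # eight active processors, CPU 0 to CPU 7
process = 0x0F  # process restricted to CPU 0 to CPU 3

print(cpus_in(0b0101))                                   # [0, 2]
print(valid_thread_affinity(0b0101, process, active))    # True
print(valid_thread_affinity(0b10000, process, active))   # False
```

The last call fails because CPU 4 lies outside the process mask, even though it is an active processor.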

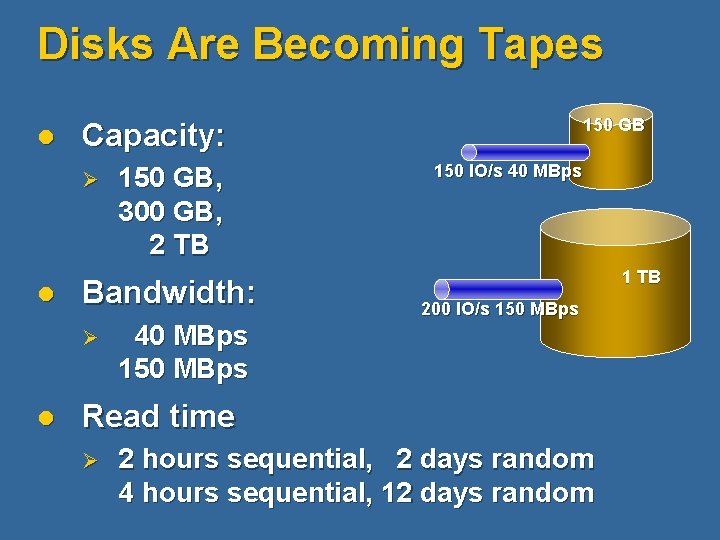

Disks Are Becoming Tapes

- Capacity:
  - 150 GB, 300 GB, 2 TB
- Bandwidth:
  - 150 GB disk: 40 MBps, 150 IO/s
  - 1 TB disk: 150 MBps, 200 IO/s
- Read time
  - 2 hours sequential, 2 days random
  - 4 hours sequential, 12 days random

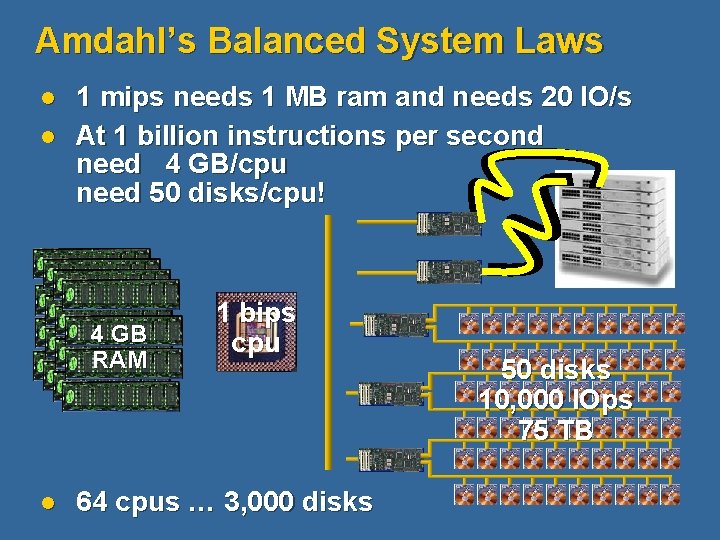

Amdahl's Balanced System Laws

- 1 mips needs 1 MB ram and needs 20 IO/s
- At 1 billion instructions per second
  - need 4 GB/cpu
  - need 50 disks/cpu!
- 64 cpus … 3,000 disks

Diagram labels: 1 bips cpu, 4 GB RAM, 50 disks, 10,000 IOps, 75 TB.

Exchange Server Memory Management

- Exchange Server does not use memory beyond 4 GB efficiently
- Exchange Server 2003 requires /3GB with more than 1 GB RAM
- Exchange Server 2003 gains no advantage from PAE
- AWE is not used by Exchange Server
- Performance counters:
  - MSExchangeIS\VM Largest Block Size
  - MSExchangeIS\VM Total 16MB Free Blocks
  - MSExchangeIS\VM Total Large Free Block Bytes

Exchange Server Processors

- Exchange Server mailbox server scales well up to 8 processors
- With more than 8 processors, hardware partitioning is mostly recommended
- With more than 8 processors, use an affinity mask to restrict Exchange Server 2003 to 8 processors
- Possibly use the additional processors for virus scanner, etc.

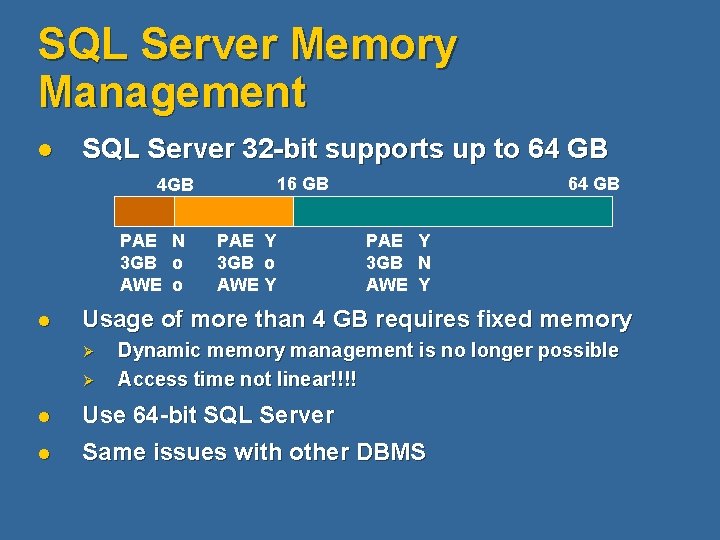

SQL Server Memory Management

- SQL Server 32-bit supports up to 64 GB

| Physical memory | PAE | 3GB | AWE |
|---|---|---|---|
| 4 GB | N | o | o |
| 16 GB | Y | o | Y |
| 64 GB | Y | N | Y |

- Usage of more than 4 GB requires fixed memory
  - Dynamic memory management is no longer possible
  - Access time not linear!
- Use 64-bit SQL Server
- Same issues with other DBMS

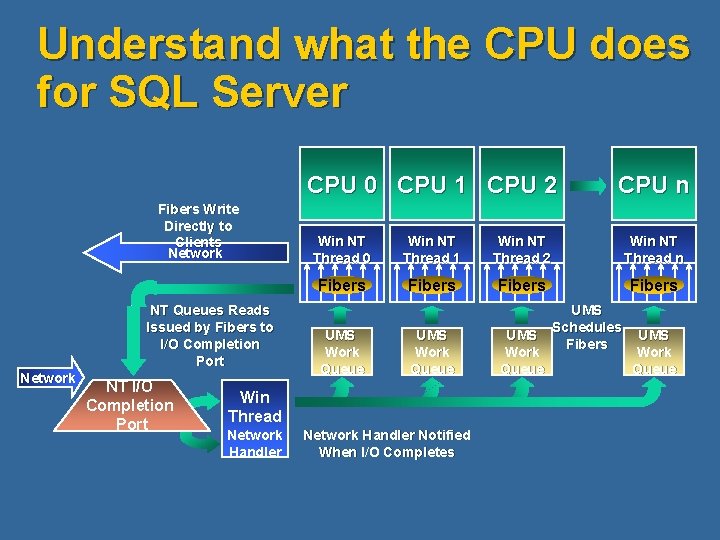

Understand what the CPU does for SQL Server

Diagram labels:

- CPU 0, CPU 1, CPU 2 … CPU n
- Win NT Thread 0, Win NT Thread 1, Win NT Thread 2 … Win NT Thread n
- UMS Work Queue (one per CPU); UMS Schedules Fibers
- Network, NT Queues, NT I/O Completion Port
- Reads Issued by Fibers to I/O Completion Port
- Network Handler Notified When I/O Completes
- Fibers Write Directly to Clients

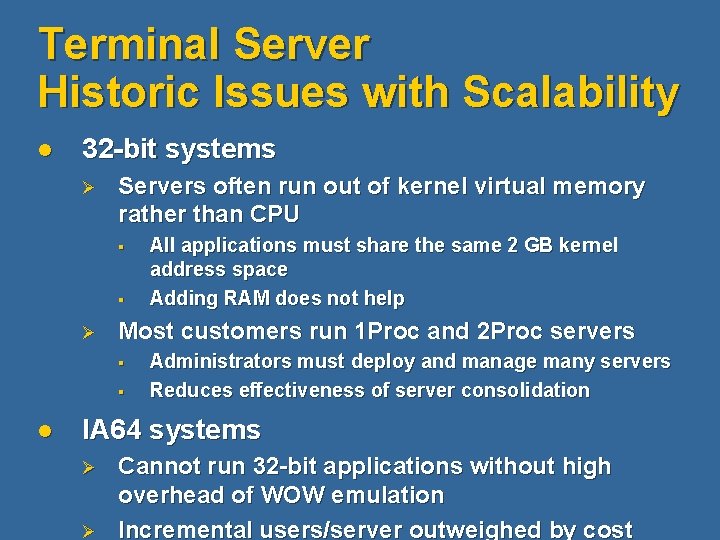

Terminal Server Historic Issues with Scalability

- 32-bit systems
  - Servers often run out of kernel virtual memory rather than CPU
    - All applications must share the same 2 GB kernel address space
    - Adding RAM does not help
  - Most customers run 1 Proc and 2 Proc servers
    - Administrators must deploy and manage many servers
    - Reduces effectiveness of server consolidation
- IA64 systems
  - Cannot run 32-bit applications without high overhead of WOW emulation
  - Incremental users/server outweighed by cost

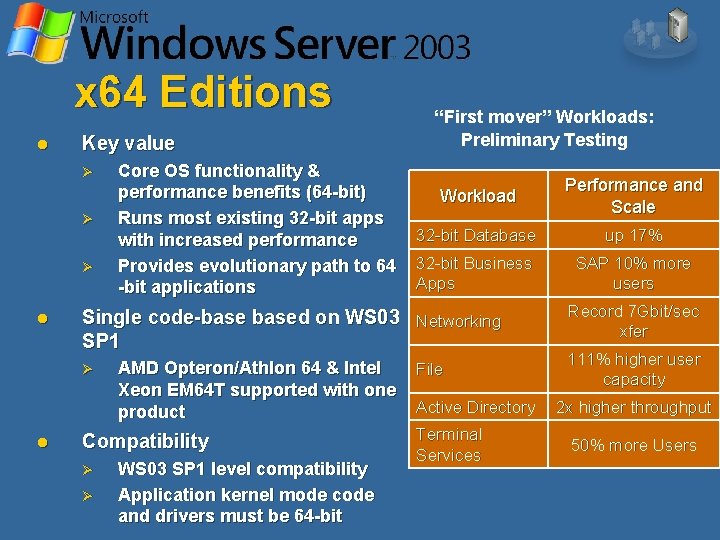

x64 Editions

- Key value
  - Core OS functionality & performance benefits (64-bit)
  - Runs most existing 32-bit apps with increased performance
  - Provides evolutionary path to 64-bit applications
- Single code base, based on WS03 SP1
  - AMD Opteron/Athlon 64 & Intel Xeon EM64T supported with one product
- Compatibility
  - WS03 SP1 level compatibility
  - Application kernel mode code and drivers must be 64-bit

"First mover" Workloads: Preliminary Testing

| Workload | Performance and Scale |
|---|---|
| 32-bit Database | up 17% |
| 32-bit Business Apps (SAP) | 10% more users |
| Networking | Record 7 Gbit/sec xfer |
| File | 111% higher user capacity |
| Active Directory | 2x higher throughput |
| Terminal Services | 50% more users |

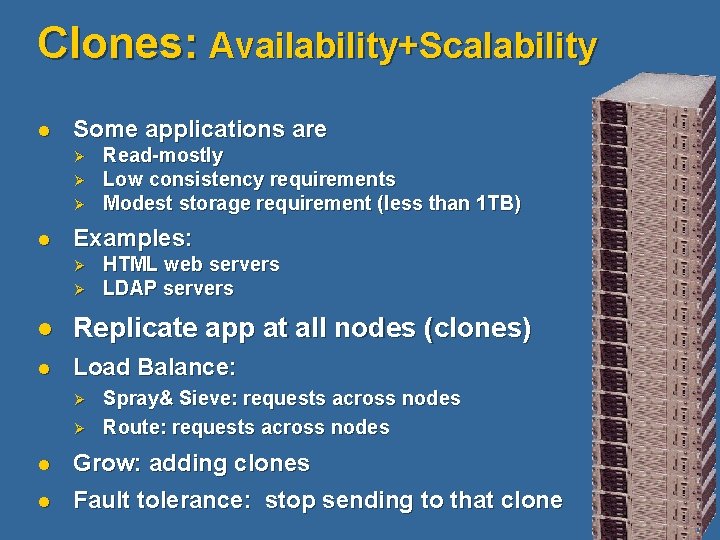

Clones: Availability + Scalability

- Some applications are
  - Read-mostly
  - Low consistency requirements
  - Modest storage requirement (less than 1 TB)
- Examples:
  - HTML web servers
  - LDAP servers
- Replicate app at all nodes (clones)
- Load Balance:
  - Spray & Sieve: requests across nodes
  - Route: requests across nodes
- Grow: adding clones
- Fault tolerance: stop sending to that clone
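The clone pattern (route requests across identical nodes, grow by adding clones, tolerate faults by no longer sending to a failed clone) can be sketched as a small round-robin balancer. The class `CloneBalancer` and the node names are hypothetical; real load balancers also do health checks and session handling, which are not modeled here.

```python
class CloneBalancer:
    """Round-robin request router over interchangeable clones."""

    def __init__(self, clones):
        self.clones = list(clones)
        self._next = 0

    def add_clone(self, name):
        """Grow: a new clone simply joins the rotation."""
        self.clones.append(name)

    def mark_failed(self, name):
        """Fault tolerance: stop sending requests to that clone."""
        self.clones.remove(name)

    def route(self):
        """Pick the clone that should serve the next request."""
        if not self.clones:
            raise RuntimeError("no clones available")
        clone = self.clones[self._next % len(self.clones)]
        self._next += 1
        return clone


balancer = CloneBalancer(["web1", "web2", "web3"])
print([balancer.route() for _ in range(3)])  # ['web1', 'web2', 'web3']
balancer.mark_failed("web2")
print([balancer.route() for _ in range(2)])  # ['web3', 'web1']
```

Because every clone holds the same read-mostly data, any node can serve any request, which is what makes this simple rotation sufficient.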

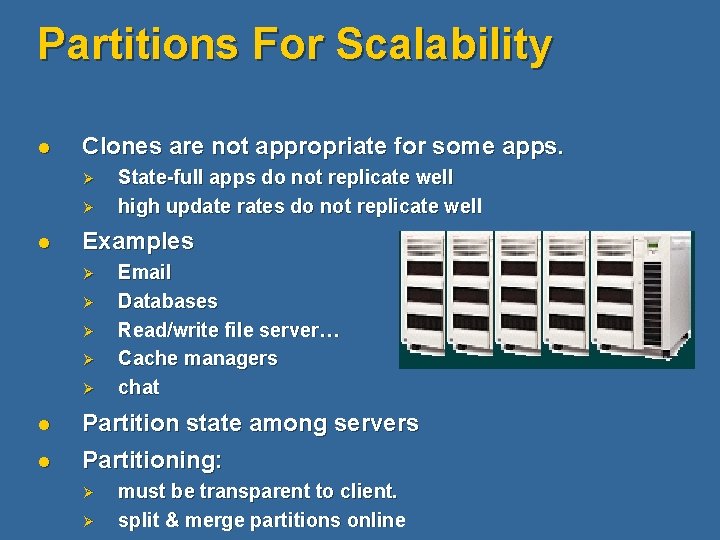

Partitions For Scalability

- Clones are not appropriate for some apps.
  - Stateful apps do not replicate well
  - High update rates do not replicate well
- Examples
  - Email
  - Databases
  - Read/write file server…
  - Cache managers
  - Chat
- Partition state among servers
- Partitioning:
  - must be transparent to client.
  - split & merge partitions online
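One common way to partition state so that clients never see the partitioning is to hide a key-to-server mapping behind a router. The sketch below uses simple hash-modulo placement. The class `PartitionRouter`, the server names, and the choice of CRC32 are assumptions for illustration, and online split and merge of partitions is not modeled.

```python
import zlib


class PartitionRouter:
    """Routes each key to exactly one server; callers only see put() and get()."""

    def __init__(self, servers):
        self.servers = list(servers)
        self.stores = {server: {} for server in self.servers}  # per-server state

    def _server_for(self, key):
        # A stable hash keeps a key on the same server across calls and runs.
        return self.servers[zlib.crc32(key.encode("utf-8")) % len(self.servers)]

    def put(self, key, value):
        self.stores[self._server_for(key)][key] = value

    def get(self, key):
        return self.stores[self._server_for(key)].get(key)


router = PartitionRouter(["mail1", "mail2", "mail3"])
for i in range(100):
    router.put(f"user{i}", f"mailbox-{i}")

print(router.get("user42"))                                 # mailbox-42
print(sum(len(store) for store in router.stores.values()))  # 100
```

Each mailbox lives on exactly one server, so updates touch a single node. The drawback of plain modulo placement is that changing the server count moves most keys, which is why the slide calls out online split and merge as a requirement of its own.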

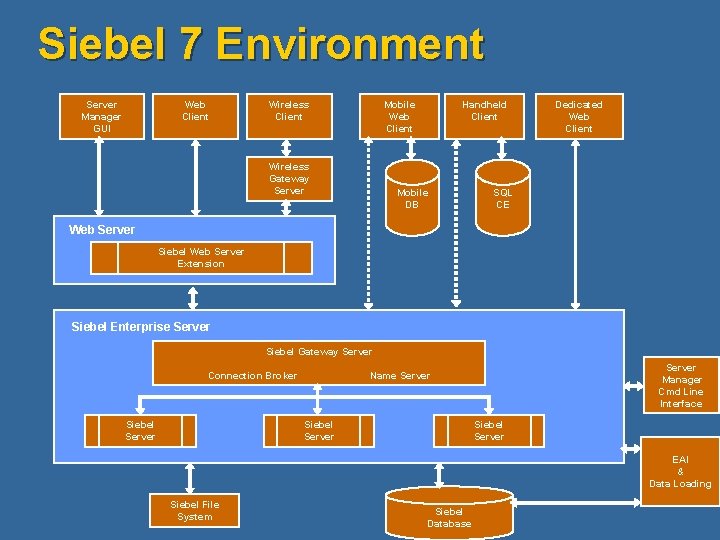

Siebel 7 Environment

Diagram components:

- Clients: Web Client, Wireless Client, Mobile Web Client, Handheld Client, Dedicated Web Client
- Mobile DB, SQL CE
- Wireless Gateway Server
- Web Server, Siebel Web Server Extension
- Siebel Enterprise Server, Siebel Server
- Siebel Gateway Server, Connection Broker, Name Server
- Server Manager GUI, Siebel Server Manager Cmd Line Interface
- EAI & Data Loading
- Siebel File System, Siebel Database

Questions?

© 2004 Microsoft Corporation. All rights reserved. This presentation is for informational purposes only. MICROSOFT MAKES NO WARRANTIES, EXPRESS OR IMPLIED, IN THIS SUMMARY.

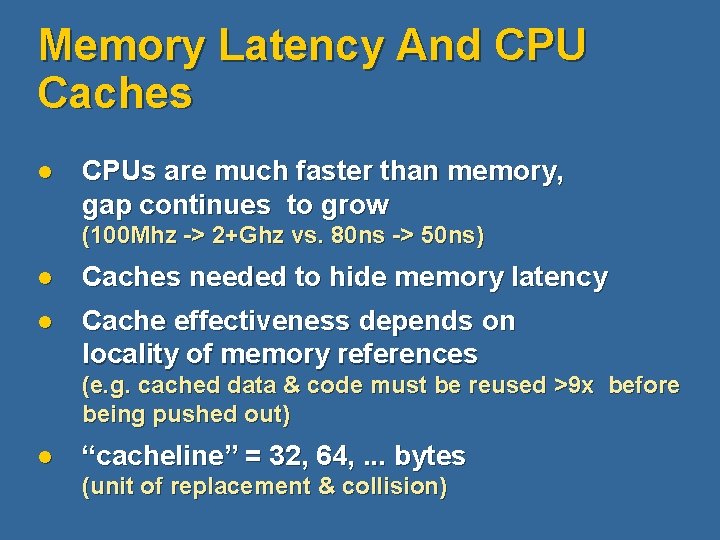

Memory Latency And CPU Caches

- CPUs are much faster than memory, and the gap continues to grow (100 MHz -> 2+ GHz vs. 80 ns -> 50 ns)
- Caches needed to hide memory latency
- Cache effectiveness depends on locality of memory references (e.g. cached data & code must be reused >9x before being pushed out)
- "cacheline" = 32, 64, ... bytes (unit of replacement & collision)

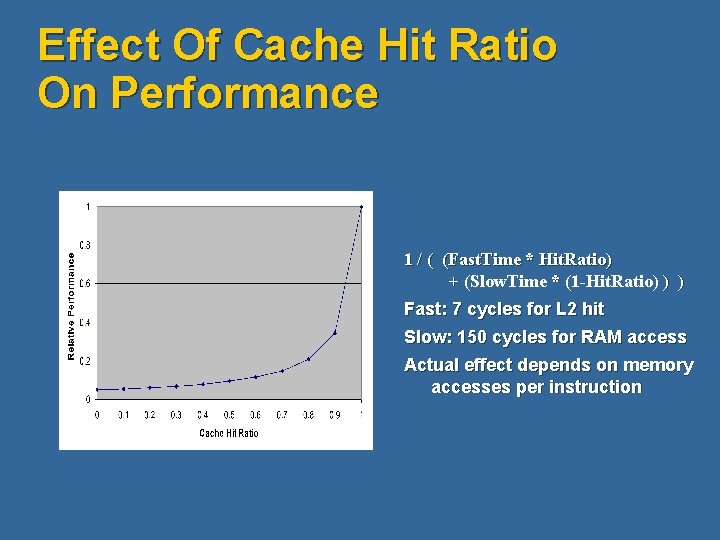

Effect Of Cache Hit Ratio On Performance

1 / ((FastTime * HitRatio) + (SlowTime * (1 - HitRatio)))

- Fast: 7 cycles for L2 hit
- Slow: 150 cycles for RAM access
- Actual effect depends on memory accesses per instruction
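The formula on this slide can be evaluated directly. The sketch below uses the slide's two figures, 7 cycles for an L2 hit and 150 cycles for a RAM access; the function names are made up for the example.

```python
FAST_CYCLES = 7    # L2 hit, from the slide
SLOW_CYCLES = 150  # RAM access, from the slide


def average_access_cycles(hit_ratio, fast=FAST_CYCLES, slow=SLOW_CYCLES):
    """Average cost of one memory access for a given cache hit ratio."""
    if not 0.0 <= hit_ratio <= 1.0:
        raise ValueError("hit ratio must be between 0 and 1")
    return fast * hit_ratio + slow * (1.0 - hit_ratio)


def relative_performance(hit_ratio, fast=FAST_CYCLES, slow=SLOW_CYCLES):
    """The slide's expression: the reciprocal of the average access time."""
    return 1.0 / average_access_cycles(hit_ratio, fast, slow)


for ratio in (0.90, 0.95, 0.99):
    cycles = average_access_cycles(ratio)
    print(f"hit ratio {ratio:.2f}: {cycles:.2f} cycles per access")
```

With these figures the average access costs about 21.3 cycles at a 90% hit ratio and about 8.4 cycles at 99%, so nine points of hit ratio are worth roughly a factor of 2.5 in memory access time.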

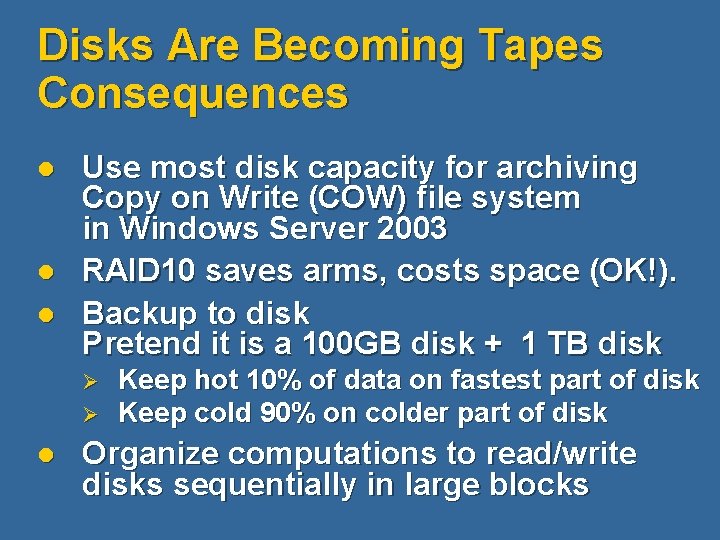

Disks Are Becoming Tapes: Consequences

- Use most disk capacity for archiving
- Copy on Write (COW) file system in Windows Server 2003
- RAID 10 saves arms, costs space (OK!)
- Backup to disk
- Pretend it is a 100 GB disk + 1 TB disk
  - Keep hot 10% of data on fastest part of disk
  - Keep cold 90% on colder part of disk
- Organize computations to read/write disks sequentially in large blocks

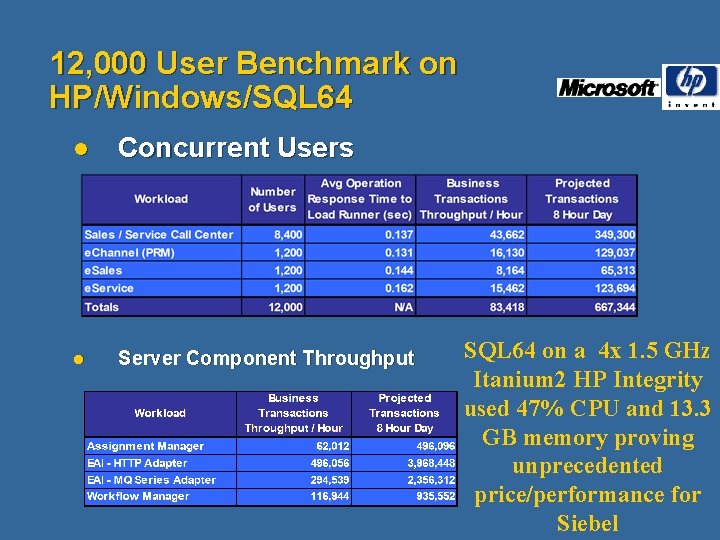

12,000 User Benchmark on HP/Windows/SQL64

- Concurrent Users
- Server Component Throughput

SQL64 on a 4 x 1.5 GHz Itanium 2 HP Integrity used 47% CPU and 13.3 GB memory, proving unprecedented price/performance for Siebel.

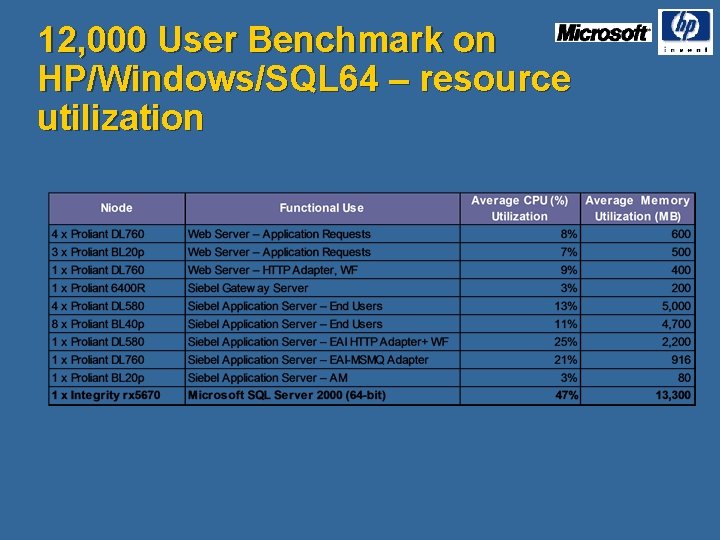

12,000 User Benchmark on HP/Windows/SQL64: resource utilization

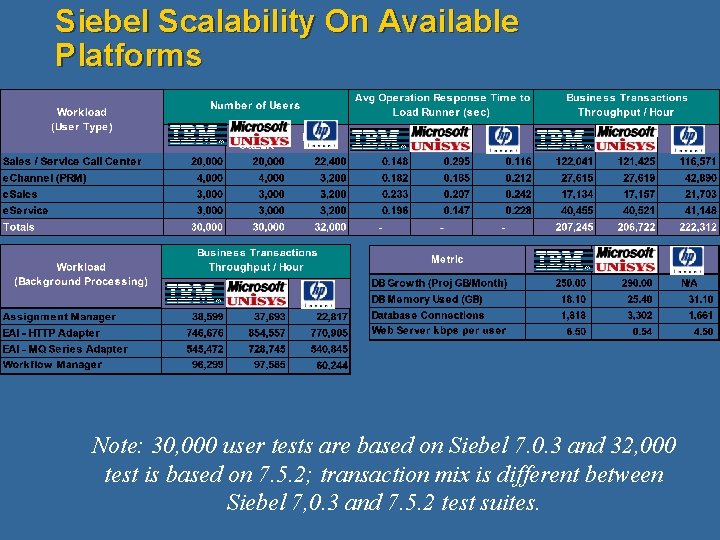

Siebel Scalability On Available Platforms

Note: the 30,000 user tests are based on Siebel 7.0.3 and the 32,000 user test is based on 7.5.2; the transaction mix differs between the Siebel 7.0.3 and 7.5.2 test suites.

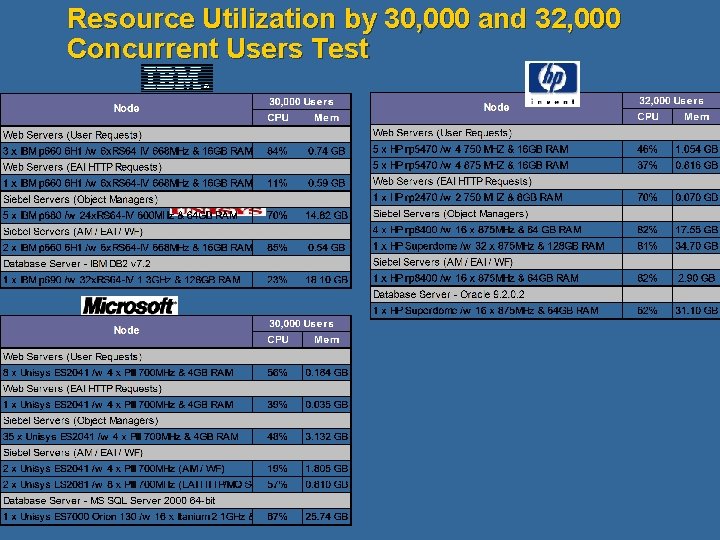

Resource Utilization by 30,000 and 32,000 Concurrent Users Test

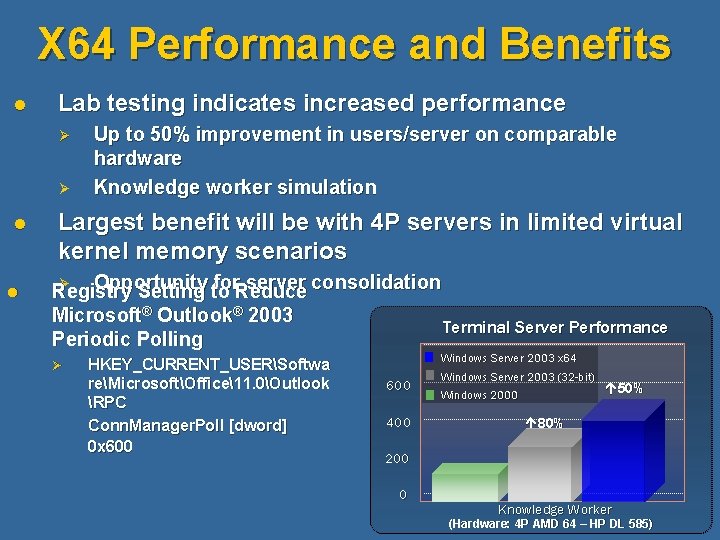

x64 Performance and Benefits

- Lab testing indicates increased performance
  - Up to 50% improvement in users/server on comparable hardware
  - Knowledge worker simulation
- Largest benefit will be with 4P servers in limited virtual kernel memory scenarios
  - Opportunity for server consolidation
- Registry setting to reduce Microsoft® Outlook® 2003 periodic polling
  - Key: HKEY_CURRENT_USER\Software\Microsoft\Office\11.0\Outlook\RPC
  - Value: ConnManagerPoll [dword] 0x600

Chart: Terminal Server Performance, Knowledge Worker (axis 0 to 600), comparing Windows 2000, Windows Server 2003 (32-bit) and Windows Server 2003 x64; labels 50% and 80%. Hardware: 4P AMD64, HP DL585.

- Slides: 38