Lattice Boltzmann for Blood Flow: A Software Engineering Approach

Lattice Boltzmann for Blood Flow: A Software Engineering Approach for a DataFlow SuperComputer

Nenad Korolija, nenadko@etf.rs
Tijana Djukic, tijana@kg.ac.rs
Nenad Filipovic, nfilipov@hsph.harvard.edu
Veljko Milutinovic, vm@etf.rs

My Work in a Nutshell
1. Introduction: Synergy of Physics and Logics
2. Problem: Moving LB to Maxeler
3. Existing Solutions: None :)
4. Essence: Map+Opt (PACT)
5. Details: My PhD
6. Analysis: BaU
7. Conclusions: 1000 (SPC)

Cooperation between BioIRC, UniKG and the School of Electrical Engineering, UniBG

Lattice Boltzmann for Blood Flow: A Software Engineering Approach

Expensive, quiet, fast, electrical, 20 m cord, environment-friendly, big-pack, wide-track, easy handling, repair manual, repair kit, 5-year warranty, service in your town, new-technology high-quality non-rusting heavy-duty precise-cutting recyclable blades, streaming grass only into the bag...

Lattice Boltzmann for Blood Flow: A Software Engineering Approach

Expensive, quiet, electrical, 20 m cord, environment-friendly, big-pack, wide-track, easy handling, repair manual, repair kit, 5-year warranty, service in your town, new-technology high-quality non-rusting heavy-duty precise-cutting recyclable blades, streaming grass only into the bag...

Lattice Boltzmann for Blood Flow: A Software Engineering Approach

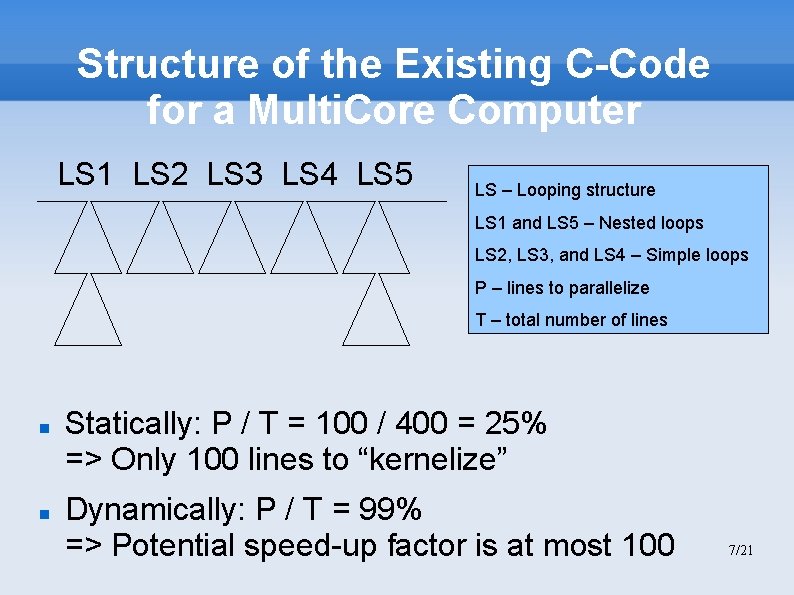

Structure of the Existing C Code for a MultiCore Computer

Looping structures LS1, LS2, LS3, LS4, LS5, where:
- LS: looping structure
- LS1 and LS5: nested loops
- LS2, LS3, and LS4: simple loops
- P: lines to parallelize
- T: total number of lines

Statically: P / T = 100 / 400 = 25%, so only 100 lines need to be “kernelized”.
Dynamically: P / T = 99% of the run time, so the potential speedup factor is at most 1 / (1 - 0.99) = 100 (Amdahl's Law).

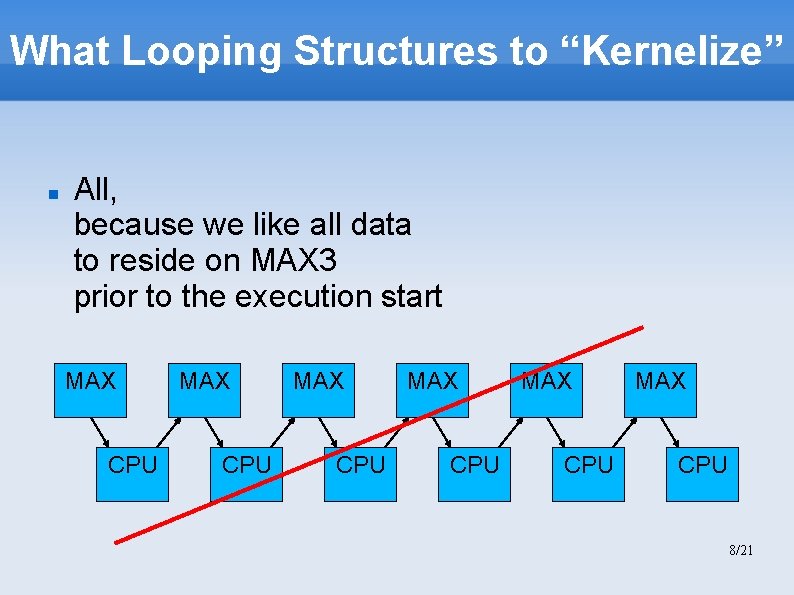

What Looping Structures to “Kernelize”? All of them, because we want all data to reside on the MAX3 before execution starts.
(Figure: data placement on the MAX versus the CPU.)
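One way to see why all loops should move, even the ones with negligible compute, is to count host-to-DFE traffic. The sketch below is a simplified model under stated assumptions: if only some loops run on the DFE, the whole lattice crosses the host link in both directions every time step; if all loops run there, it crosses once before and once after the run. The lattice size, bytes per cell, and step count are hypothetical values, not measurements from this work.

```java
/** Simplified model of host <-> DFE traffic (hypothetical sizes, not measured). */
public class TransferModel {
    // Bytes moved over the host link for a run of `steps` time steps.
    // allOnDfe == true: lattice loaded once before the run and read back once after it.
    // allOnDfe == false: lattice sent to the DFE and back on every time step.
    static long bytesMoved(long steps, long cells, long bytesPerCell, boolean allOnDfe) {
        long lattice = cells * bytesPerCell;
        long roundTrips = allOnDfe ? 1 : steps;
        return 2 * lattice * roundTrips;
    }

    public static void main(String[] args) {
        long cells = 112L * 320L;    // hypothetical lattice size
        long bytesPerCell = 9 * 4;   // assumption: 9 D2Q9 distributions, 32-bit floats each
        long steps = 10_000;         // hypothetical number of time steps
        System.out.println("all loops on DFE:  " + bytesMoved(steps, cells, bytesPerCell, true) + " bytes");
        System.out.println("partial migration: " + bytesMoved(steps, cells, bytesPerCell, false) + " bytes");
    }
}
```

In this model the ratio between the two cases equals the number of time steps, which is why the slide argues for keeping all data resident on the DFE.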

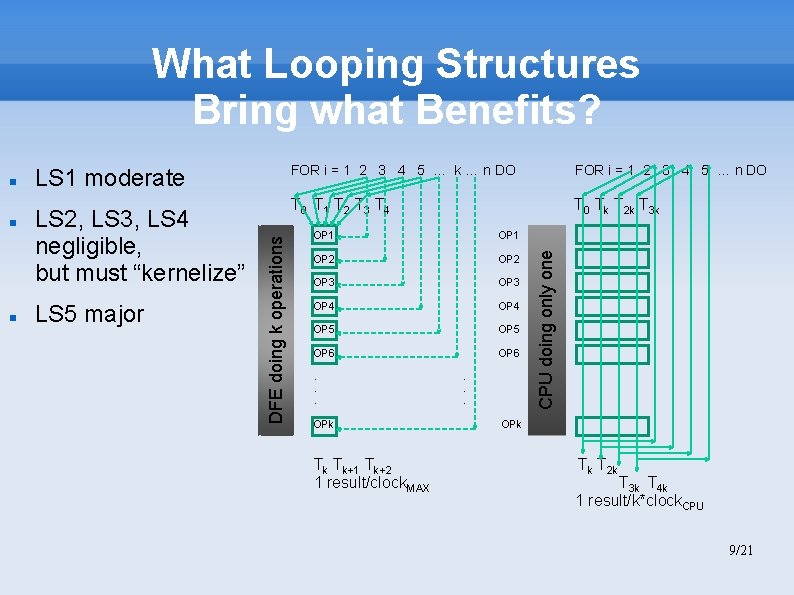

What Looping Structures Bring What Benefits?
- LS2, LS3, LS4: negligible, but must be “kernelized” anyway
- LS5: major
- LS1: moderate

Figure: for a loop FOR i = 1 … n DO with k operations OP1 … OPk per iteration, the CPU performs only one operation at a time, so results appear at Tk, T2k, T3k, T4k, …, i.e., 1 result per k clocks. The DFE (MAX) performs all k operations as a pipeline, so after the first result at Tk further results appear at Tk+1, Tk+2, …, i.e., 1 result per clock.
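The timing diagram can be turned into a small cycle-count model. This is a sketch under a simplifying assumption: the CPU and the DFE are treated as running at the same clock rate, which is not the case in practice. With k operations per iteration, sequential execution needs k clocks per result, while a k-stage pipeline needs k clocks to fill and then delivers one result per clock. The values of n and k in `main` are hypothetical.

```java
/** Cycle-count model: k-stage pipeline vs. one-operation-at-a-time execution (sketch). */
public class PipelineModel {
    // CPU view from the slide: one operation per clock, so k clocks per result.
    static long sequentialClocks(long n, long k) {
        return n * k;
    }

    // DFE view from the slide: k clocks to fill the pipeline, then one result per clock.
    static long pipelinedClocks(long n, long k) {
        return n == 0 ? 0 : k + (n - 1);
    }

    public static void main(String[] args) {
        long n = 1_000_000;  // hypothetical number of loop iterations
        long k = 50;         // hypothetical number of operations per iteration
        long seq = sequentialClocks(n, k);
        long pipe = pipelinedClocks(n, k);
        System.out.println("sequential: " + seq + " clocks");
        System.out.println("pipelined:  " + pipe + " clocks");
        System.out.printf("ratio: %.2f%n", (double) seq / pipe);
    }
}
```

For large n the ratio approaches k, which matches the slide's "1 result/clock" versus "1 result/k clocks".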

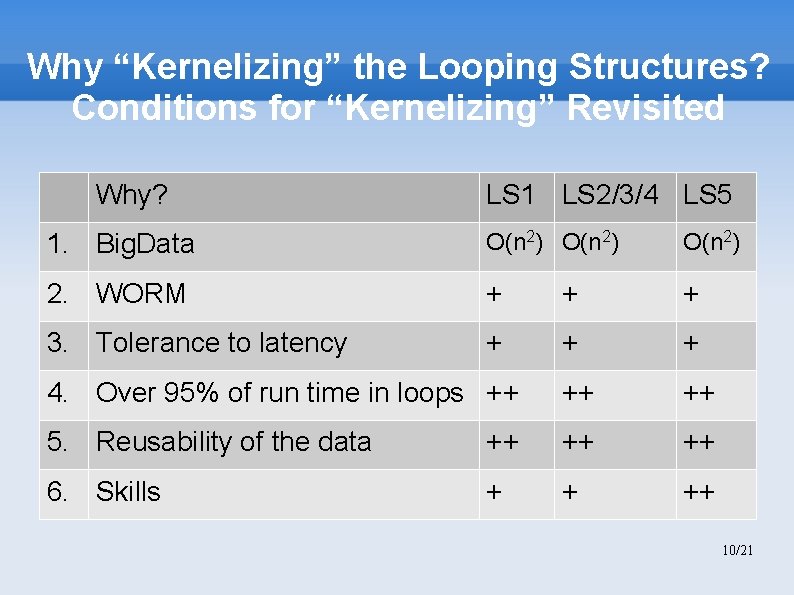

Why “Kernelize” the Looping Structures? Conditions for “Kernelizing” Revisited

| Why? | LS1 | LS2/3/4 | LS5 |
|---|---|---|---|
| 1. BigData: O(n²) | | | |
| 2. WORM (write once, read many) | + | + | + |
| 3. Tolerance to latency | + | + | + |
| 4. Over 95% of run time in loops | ++ | ++ | ++ |
| 5. Reusability of the data | ++ | ++ | ++ |
| 6. Skills | + | + | ++ |

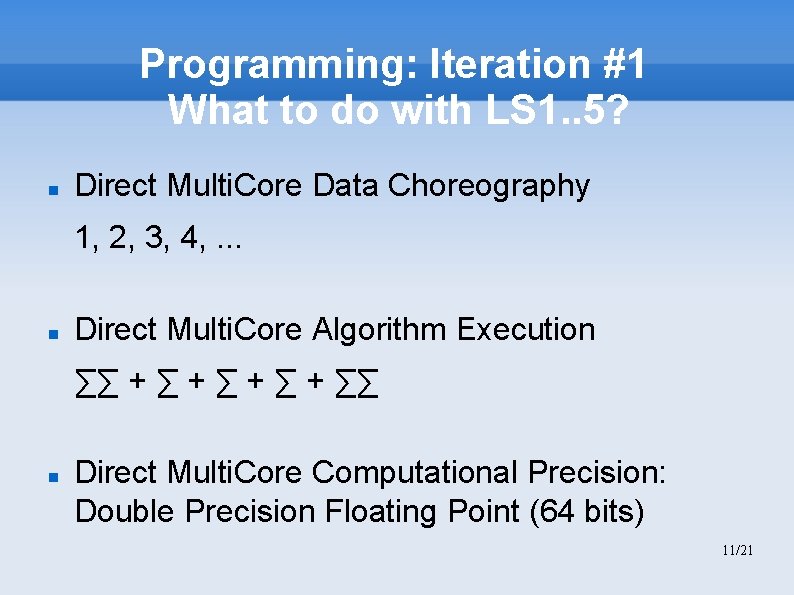

Programming: Iteration #1
What to do with LS1..LS5?
- Data choreography: direct MultiCore order (1, 2, 3, 4, ...)
- Algorithm execution: direct MultiCore (∑∑ + ∑ + ∑∑, i.e., nested loop + simple loops + nested loop)
- Computational precision: direct MultiCore, double-precision floating point (64 bits)

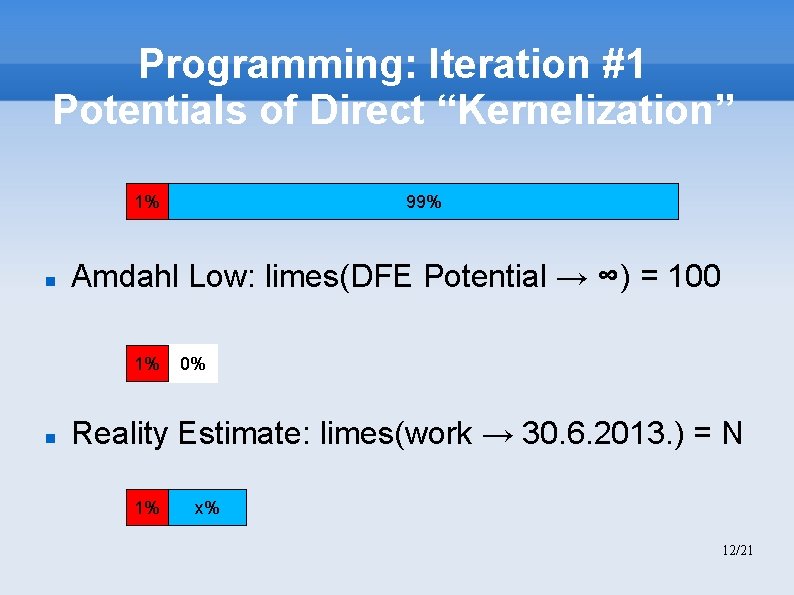

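Programming: Iteration #1
Potentials of Direct “Kernelization”
Amdahl's Law: the run time splits into 1% on the CPU and 99% on the DFE; as the DFE part shrinks toward 0%, lim (DFE potential → ∞) of the speedup = 100.
Reality estimate: the run time shrinks to 1% (CPU) + x% (DFE); lim (work → 30.6.2013) of the speedup = N.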
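The Amdahl bound on the slide follows from the standard formula S(p, s) = 1 / ((1 - p) + p / s), where p is the fraction of run time that is accelerated and s is the DFE speedup on that fraction. The sketch below evaluates it for p = 0.99 (the dynamic fraction from the earlier slide) and a few illustrative values of s; the values of s are not measurements.

```java
/** Amdahl's Law: overall speedup when a fraction p of run time is sped up by a factor s. */
public class AmdahlSketch {
    static double speedup(double p, double s) {
        return 1.0 / ((1.0 - p) + p / s);
    }

    public static void main(String[] args) {
        double p = 0.99;  // 99% of run time in the kernelized loops (from the slides)
        for (double s : new double[] {10, 100, 1000, 1e6}) {  // illustrative DFE speedups
            System.out.printf("s = %.0f -> overall speedup = %.2f%n", s, speedup(p, s));
        }
        // As s grows without bound, p / s vanishes and the speedup tends to 1 / (1 - p).
        System.out.printf("limit s -> infinity: %.2f%n", 1.0 / (1.0 - p));
    }
}
```

Even a DFE speedup of 1000 on the loops yields an overall speedup near 91, which is why the remaining 1% on the CPU dominates the estimate.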

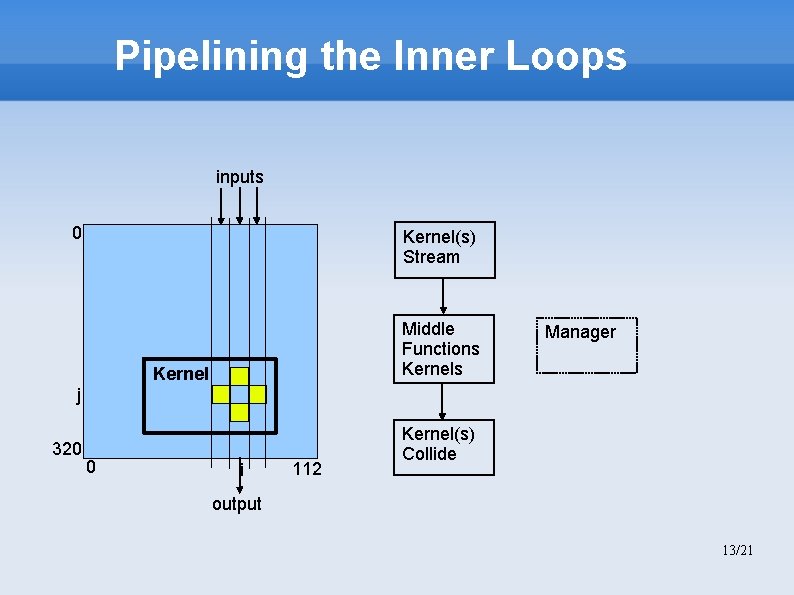

Pipelining the Inner Loops
Figure: the Kernel Manager streams the inputs through the kernel(s) (stream, middle functions, collide) to the output; the lattice axes are labeled i (0 to 112) and j (0 to 320).
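A common way to pipeline a loop nest on a dataflow engine is to flatten the (i, j) nest into a single stream index t, so that one lattice cell enters the kernel per clock tick. The plain-Java sketch below (not MaxJ) checks that the flat row-major index visits cells in the same order as the nested CPU loop. Taking the extents 112 and 320 from the figure's axis labels is an assumption.

```java
/** Sketch: a nested (i, j) loop flattened into one stream index t (plain Java, not MaxJ). */
public class LoopFlattening {
    // Flat, row-major index of lattice cell (i, j) for a lattice nj cells wide.
    static int flat(int i, int j, int nj) {
        return i * nj + j;
    }

    public static void main(String[] args) {
        int ni = 112, nj = 320;  // assumption: extents read off the figure's axis labels
        int t = 0;               // one stream element per clock tick
        for (int i = 0; i < ni; i++) {
            for (int j = 0; j < nj; j++, t++) {
                // The nested CPU loop and the flat stream visit cells in the same order.
                if (flat(i, j, nj) != t) throw new AssertionError("order mismatch at t=" + t);
            }
        }
        // Neighbours become fixed stream offsets (ignoring row ends):
        // (i, j-1) and (i, j+1) at t-1 and t+1; (i-1, j) and (i+1, j) at t-nj and t+nj.
        System.out.println("streamed " + t + " cells; row neighbour offset = " + nj);
    }
}
```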

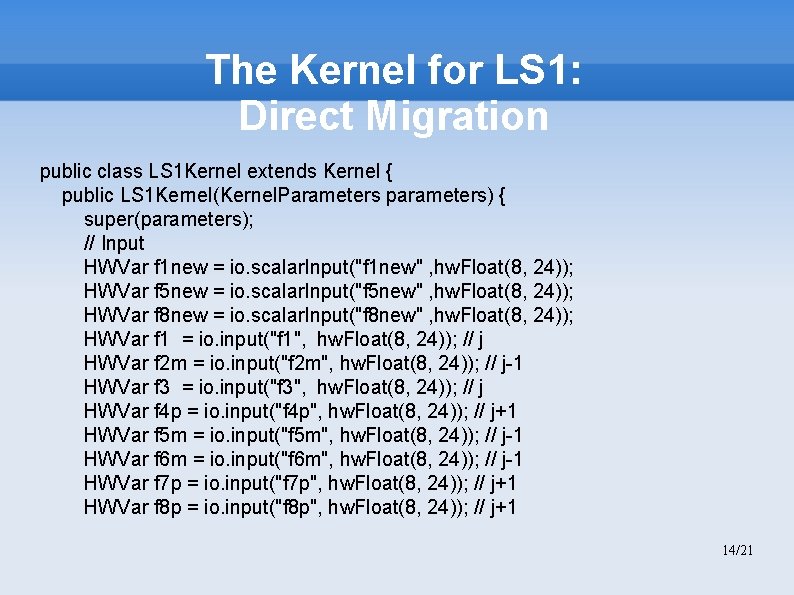

The Kernel for LS1: Direct Migration

    public class LS1Kernel extends Kernel {
        public LS1Kernel(KernelParameters parameters) {
            super(parameters);
            // Input: scalar values set once by the host
            HWVar f1new = io.scalarInput("f1new", hwFloat(8, 24));
            HWVar f5new = io.scalarInput("f5new", hwFloat(8, 24));
            HWVar f8new = io.scalarInput("f8new", hwFloat(8, 24));
            // Input: streams, one element per lattice cell
            HWVar f1  = io.input("f1",  hwFloat(8, 24)); // j
            HWVar f2m = io.input("f2m", hwFloat(8, 24)); // j-1
            HWVar f3  = io.input("f3",  hwFloat(8, 24)); // j
            HWVar f4p = io.input("f4p", hwFloat(8, 24)); // j+1
            HWVar f5m = io.input("f5m", hwFloat(8, 24)); // j-1
            HWVar f6m = io.input("f6m", hwFloat(8, 24)); // j-1
            HWVar f7p = io.input("f7p", hwFloat(8, 24)); // j+1
            HWVar f8p = io.input("f8p", hwFloat(8, 24)); // j+1
            // ... (rest of the kernel not shown on the slide)
        }
    }

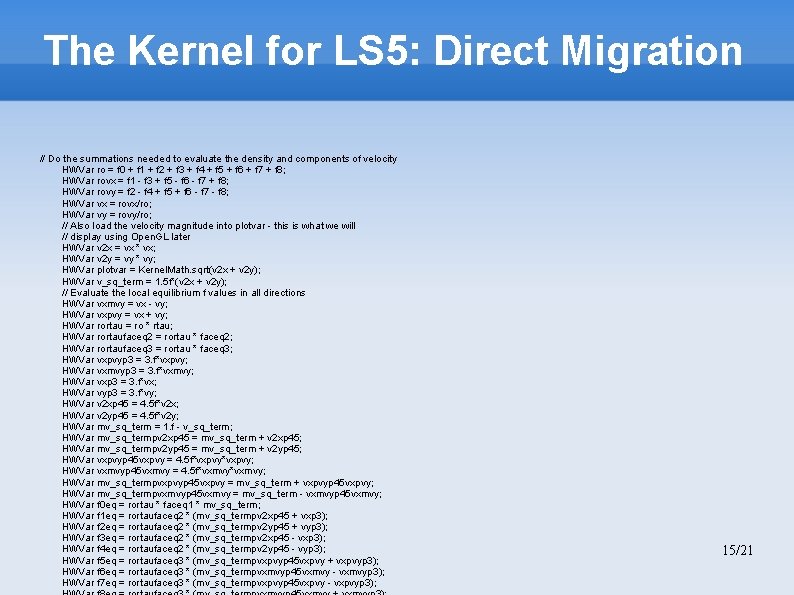

The Kernel for LS5: Direct Migration

    // Do the summations needed to evaluate the density and components of velocity
    HWVar ro = f0 + f1 + f2 + f3 + f4 + f5 + f6 + f7 + f8;
    HWVar rovx = f1 - f3 + f5 - f6 - f7 + f8;
    HWVar rovy = f2 - f4 + f5 + f6 - f7 - f8;
    HWVar vx = rovx / ro;
    HWVar vy = rovy / ro;
    // Also load the velocity magnitude into plotvar - this is what we will
    // display using OpenGL later
    HWVar v2x = vx * vx;
    HWVar v2y = vy * vy;
    HWVar plotvar = KernelMath.sqrt(v2x + v2y);
    HWVar v_sq_term = 1.5f * (v2x + v2y);
    // Evaluate the local equilibrium f values in all directions
    HWVar vxmvy = vx - vy;
    HWVar vxpvy = vx + vy;
    HWVar rortau = ro * rtau;
    HWVar rortaufaceq2 = rortau * faceq2;
    HWVar rortaufaceq3 = rortau * faceq3;
    HWVar vxpvyp3 = 3.f * vxpvy;
    HWVar vxmvyp3 = 3.f * vxmvy;
    HWVar vxp3 = 3.f * vx;
    HWVar vyp3 = 3.f * vy;
    HWVar v2xp45 = 4.5f * v2x;
    HWVar v2yp45 = 4.5f * v2y;
    HWVar mv_sq_term = 1.f - v_sq_term;
    HWVar mv_sq_termpv2xp45 = mv_sq_term + v2xp45;
    HWVar mv_sq_termpv2yp45 = mv_sq_term + v2yp45;
    // Squared terms for the diagonal directions: 4.5 (vx+vy)^2 and 4.5 (vx-vy)^2
    HWVar vxpvyp45vxpvy = 4.5f * vxpvy * vxpvy;
    HWVar vxmvyp45vxmvy = 4.5f * vxmvy * vxmvy;
    HWVar mv_sq_termpvxpvyp45vxpvy = mv_sq_term + vxpvyp45vxpvy;
    HWVar mv_sq_termpvxmvyp45vxmvy = mv_sq_term + vxmvyp45vxmvy;
    HWVar f0eq = rortau * faceq1 * mv_sq_term;
    HWVar f1eq = rortaufaceq2 * (mv_sq_termpv2xp45 + vxp3);
    HWVar f2eq = rortaufaceq2 * (mv_sq_termpv2yp45 + vyp3);
    HWVar f3eq = rortaufaceq2 * (mv_sq_termpv2xp45 - vxp3);
    HWVar f4eq = rortaufaceq2 * (mv_sq_termpv2yp45 - vyp3);
    HWVar f5eq = rortaufaceq3 * (mv_sq_termpvxpvyp45vxpvy + vxpvyp3);
    HWVar f6eq = rortaufaceq3 * (mv_sq_termpvxmvyp45vxmvy - vxmvyp3);
    HWVar f7eq = rortaufaceq3 * (mv_sq_termpvxpvyp45vxpvy - vxpvyp3);
    // ... (not shown on the slide)
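The kernel above evaluates the D2Q9 equilibrium f_i_eq = w_i ρ (1 + 3 e_i·u + 4.5 (e_i·u)² - 1.5 u²), factored into shared subexpressions. The plain-Java sketch below (not MaxJ) computes the same expression directly and checks its two defining properties: the f_i_eq sum to ρ, and their first moment equals ρu. It assumes the standard D2Q9 weights 4/9, 1/9, and 1/36 for faceq1, faceq2, and faceq3, and the direction order implied by the slide's rovx and rovy sums; the rtau factor is left out because it only scales the result for the collision step.

```java
/** D2Q9 equilibrium check in plain Java (assumed standard weights, slide's direction order). */
public class D2Q9Equilibrium {
    // Assumed values of faceq1, faceq2, faceq3: standard D2Q9 weights.
    static final double W0 = 4.0 / 9.0, W1 = 1.0 / 9.0, W2 = 1.0 / 36.0;
    // Directions f0..f8, matching the signs in rovx and rovy on the slide.
    static final int[] EX = {0, 1, 0, -1, 0, 1, -1, -1, 1};
    static final int[] EY = {0, 0, 1, 0, -1, 1, 1, -1, -1};

    static double[] equilibrium(double ro, double vx, double vy) {
        double vSqTerm = 1.5 * (vx * vx + vy * vy);
        double[] feq = new double[9];
        for (int i = 0; i < 9; i++) {
            double w = (i == 0) ? W0 : (i < 5 ? W1 : W2);
            double eu = EX[i] * vx + EY[i] * vy;  // e_i . u
            feq[i] = w * ro * (1.0 + 3.0 * eu + 4.5 * eu * eu - vSqTerm);
        }
        return feq;
    }

    public static void main(String[] args) {
        double ro = 1.2, vx = 0.05, vy = -0.02;  // illustrative cell state
        double[] feq = equilibrium(ro, vx, vy);
        double sum = 0, mx = 0, my = 0;
        for (int i = 0; i < 9; i++) {
            sum += feq[i];
            mx += EX[i] * feq[i];
            my += EY[i] * feq[i];
        }
        System.out.printf("ro = %.6f, rovx = %.6f, rovy = %.6f%n", sum, mx, my);
    }
}
```

Comparing the kernel's factored expressions term by term against this direct form is a quick way to catch sign or missing-factor errors in the diagonal directions f5 to f8.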

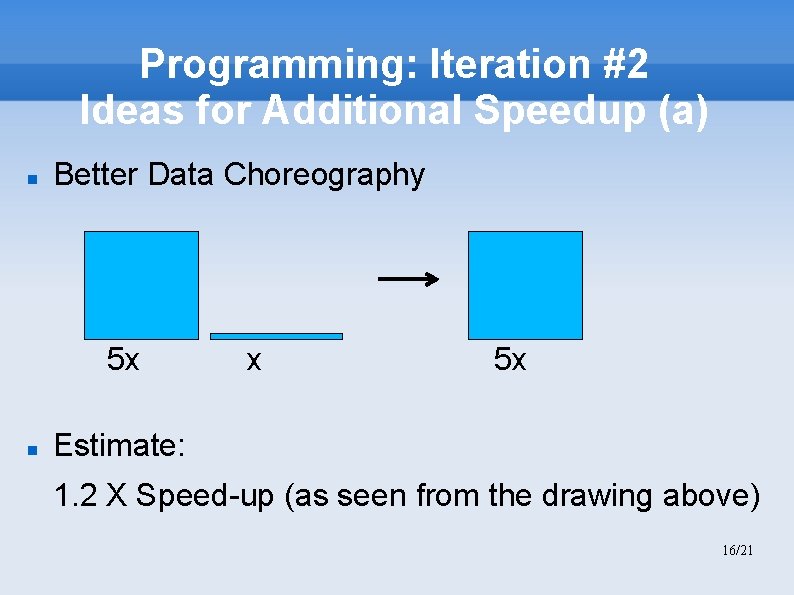

Programming: Iteration #2
Ideas for Additional Speedup (a): Better Data Choreography
Estimate: 1.2x speedup (as seen from the drawing above)

Programming: Iteration #3
Ideas for Additional Speedup (b): Algorithmic Changes: ∑∑ + ∑ + ∑∑ → ∑∑ + ∑∑
Explanation: as seen from the previous drawing, LS2 and LS3 can be integrated with LS1.
Estimate: 1.6x speedup
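Integrating a simple loop into a nested loop is a form of loop fusion. The slides do not show what LS2 and LS3 compute, so the loop bodies below are hypothetical stand-ins: a per-cell update for the nested loop and a boundary-row assignment for the simple loop. The sketch only illustrates the transformation: the special case becomes a condition inside the single nested loop, so the data passes through one loop structure instead of two.

```java
import java.util.Arrays;

/** Loop fusion sketch with hypothetical loop bodies (not the actual LS1/LS2/LS3 code). */
public class LoopFusion {
    // Unfused: a nested pass over the lattice, then a separate simple pass over row 0.
    static double[][] unfused(double[][] f) {
        int ni = f.length, nj = f[0].length;
        double[][] out = new double[ni][nj];
        for (int i = 0; i < ni; i++) {        // nested loop (stands in for LS1)
            for (int j = 0; j < nj; j++) {
                out[i][j] = 0.5 * f[i][j];    // hypothetical per-cell update
            }
        }
        for (int j = 0; j < nj; j++) {        // simple loop (stands in for LS2/LS3)
            out[0][j] = 1.0;                  // hypothetical boundary value
        }
        return out;
    }

    // Fused: the boundary case is a condition inside the single nested loop.
    static double[][] fused(double[][] f) {
        int ni = f.length, nj = f[0].length;
        double[][] out = new double[ni][nj];
        for (int i = 0; i < ni; i++) {
            for (int j = 0; j < nj; j++) {
                out[i][j] = (i == 0) ? 1.0 : 0.5 * f[i][j];
            }
        }
        return out;
    }

    public static void main(String[] args) {
        double[][] f = new double[4][5];
        for (double[] row : f) Arrays.fill(row, 3.0);
        System.out.println("same result: " + Arrays.deepEquals(unfused(f), fused(f)));
    }
}
```

On a DFE the condition becomes a multiplexer in the pipeline rather than a branch, so the fused version keeps streaming one cell per clock.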

Programming: Iteration #4
Ideas for Additional Speedup (c): Precision Changes
LUT(double-precision floating point, 64 bits) = 500
LUT(Maxeler-precision floating point, 24 bits) = 24
Explanation: with less precision, hardware complexity can be reduced by a factor of about 20 (500/24 ≈ 20.8). Increasing the number of iterations 4 times brings approximately similar precision, still much faster.
Estimate: Factor = (500/24)/4 ≈ 5
This is the only action before which a topic expert has to be consulted!
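To get a feel for what the precision change means numerically, the sketch below compares Java's float with double. It assumes that hwFloat(8, 24), used in the LS1 kernel, corresponds to IEEE-754 single precision (8 exponent bits, 24-bit significand including the implicit bit), which is the format of Java's float. Accumulated rounding error in long sums is one reason a topic expert should be consulted; the sketch says nothing about how many extra LB iterations would compensate.

```java
/** Single (24-bit significand) vs. double (53-bit significand) precision in Java. */
public class PrecisionSketch {
    // Add the same increment n times in single precision.
    static float sumFloat(int n, float x) {
        float s = 0.0f;
        for (int k = 0; k < n; k++) s += x;
        return s;
    }

    // Add the same increment n times in double precision.
    static double sumDouble(int n, double x) {
        double s = 0.0;
        for (int k = 0; k < n; k++) s += x;
        return s;
    }

    public static void main(String[] args) {
        // Gap between 1 and the next representable number in each format.
        System.out.println("ulp(1.0f) = " + Math.ulp(1.0f));
        System.out.println("ulp(1.0)  = " + Math.ulp(1.0));
        int n = 1_000_000;
        System.out.println("float  sum of 0.1: " + sumFloat(n, 0.1f));
        System.out.println("double sum of 0.1: " + sumDouble(n, 0.1));
    }
}
```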

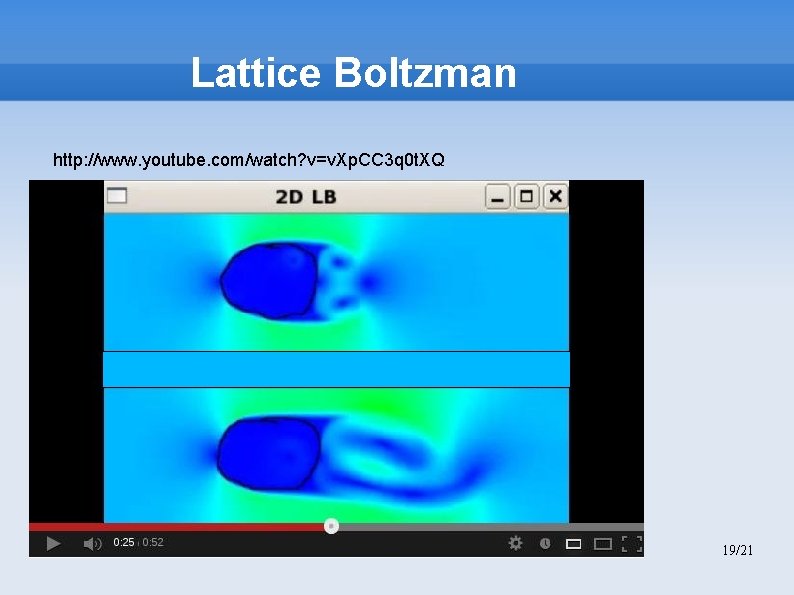

Lattice Boltzmann
http://www.youtube.com/watch?v=vXpCC3q0tXQ

Results: SPTC ≈ 1000x

“Maxeler’s technology enables organizations to speed up processing times by 20-50x, with over 90% reduction in energy usage and over 95% reduction in data centre space.”

- Speedup factor: 1.2 x 1.6 x 5 x N ≈ 10N (precise value: by 30.6.2013)
- Power reduction factor (i7/MAX3) = 17.6 / (MAX2/MAX3) ≈ 10 (precise value: by the WallCord method)
- Transistor count reduction factor = i7/MAX3 (precise value: about 20)
- Cost reduction factor: x (precise value: depends on production volumes)
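Written out, the speedup line multiplies the three estimated improvements from Iterations #2 to #4 with the factor N of direct kernelization. This assumes the improvements are independent, so that they compose multiplicatively:

```latex
S \approx \underbrace{1.2}_{\text{data choreography}}
  \times \underbrace{1.6}_{\text{algorithmic changes}}
  \times \underbrace{5}_{\text{precision changes}}
  \times N = 9.6\,N \approx 10\,N
```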

Q&A: nenadko@etf.rs
(Slide graphic: Tahiti, Hawaii; “10 km/h!” versus “30 km/h!!!”)
