KAIST Computer Architecture Lab.

The Effect of Multi-core on HPC Applications in Virtualized Systems

Jaeung Han¹, Jeongseob Ahn¹, Changdae Kim¹, Youngjin Kwon¹, Young-ri Choi², and Jaehyuk Huh¹
¹ KAIST (Korea Advanced Institute of Science and Technology)
² KISTI (Korea Institute of Science and Technology Information)

Outline
• Virtualization for HPC
• Virtualization on Multi-core
• Virtualization for HPC on Multi-core
• Methodology
• PARSEC – shared memory model
• NPB – MPI model
• Conclusion

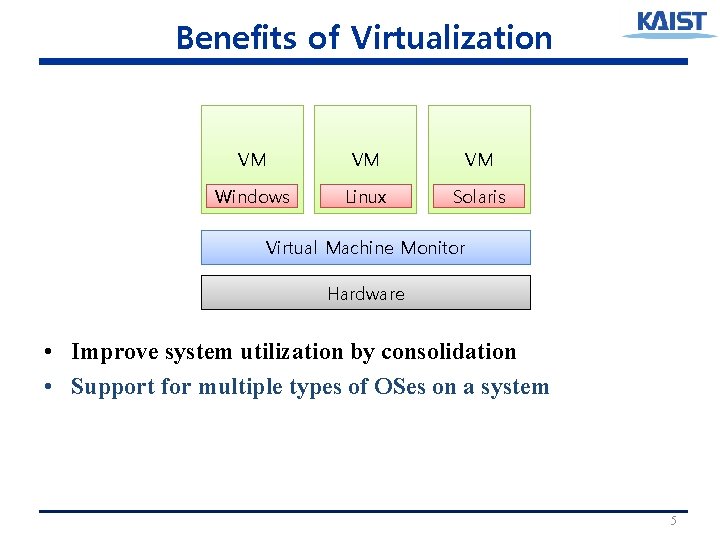

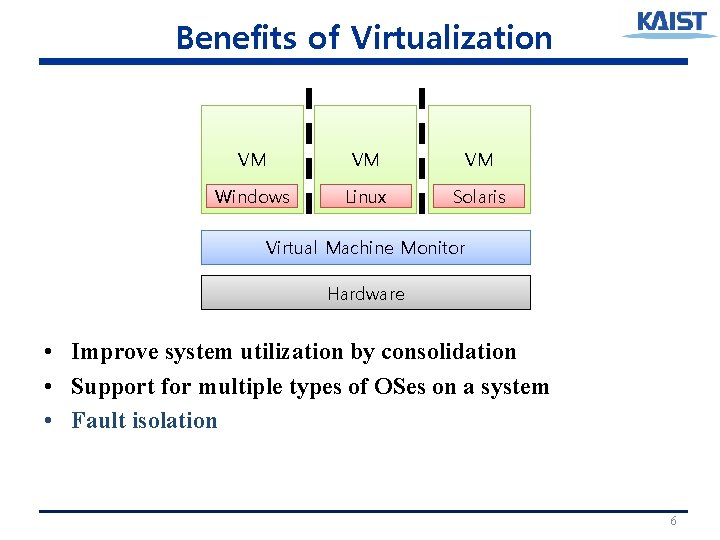

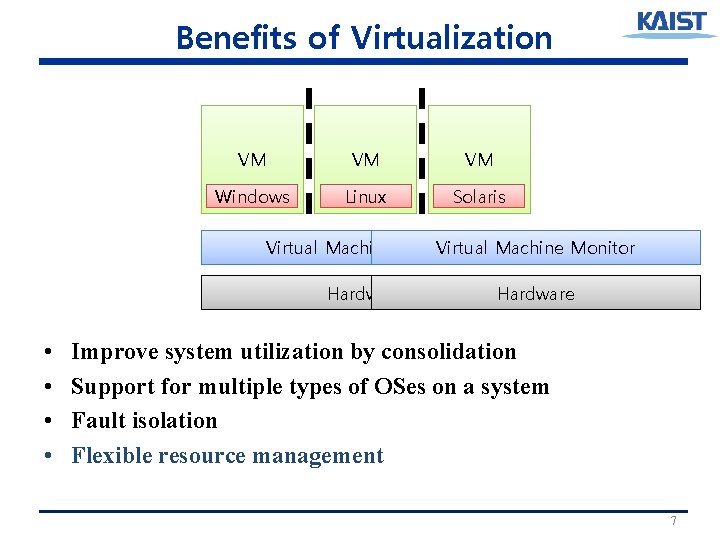

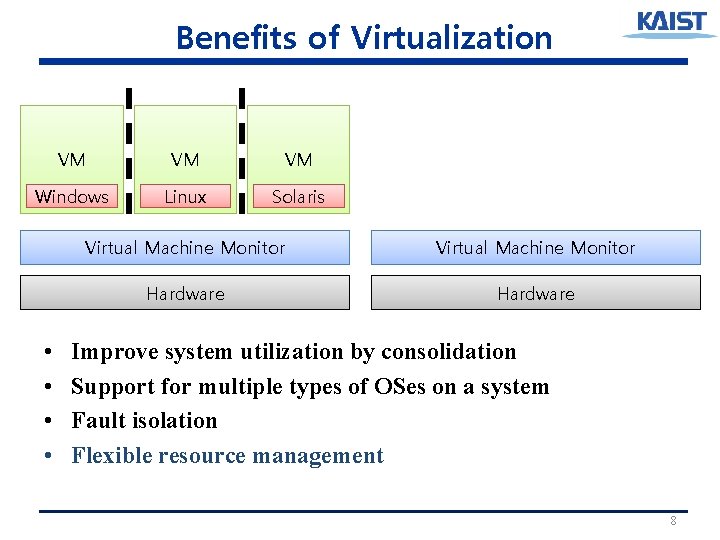

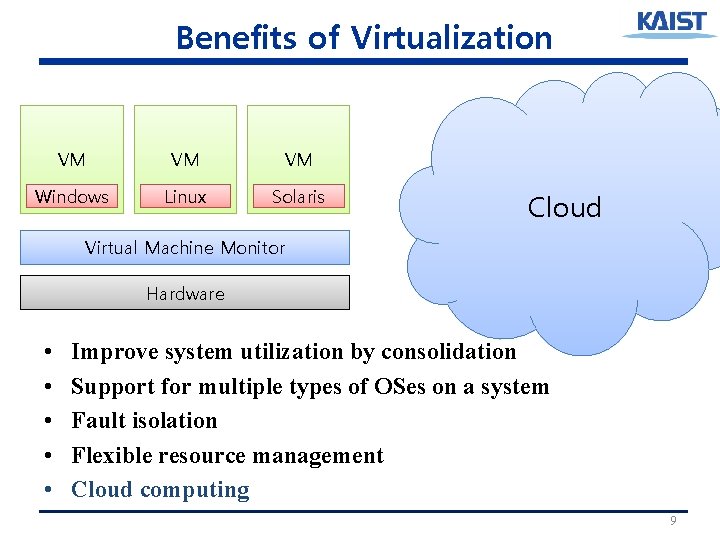

Benefits of Virtualization
[Diagram: three VMs (Windows, Linux, Solaris) on a Virtual Machine Monitor running on the hardware, labeled "Cloud"]
• Improve system utilization by consolidation
• Support for multiple types of OSes on a system
• Fault isolation
• Flexible resource management
• Cloud computing

Virtualization for HPC
• Benefits of virtualization
  – Improve system utilization by consolidation
  – Support for multiple types of OSes on a system
  – Fault isolation
  – Flexible resource management
  – Cloud computing
• HPC is performance-sensitive and resource-sensitive
• Virtualization can help HPC workloads

Virtualization on Multi-core
[Diagram: four VMs running on cores that share a cache and memory]
• More VMs on a physical machine
• More complex memory hierarchy (NUCA, NUMA)

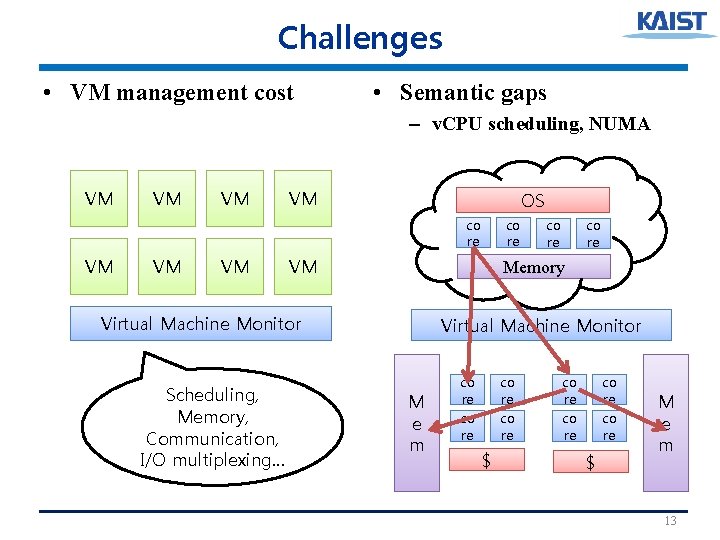

Challenges
• VM management cost
• Semantic gaps
  – vCPU scheduling, NUMA
[Diagram: VMs on a Virtual Machine Monitor that handles scheduling, memory, communication, I/O multiplexing…; the guest OS sees cores and one memory, while the Virtual Machine Monitor manages cores, caches, and multiple memories]

Virtualization for HPC on Multi-core
• Virtualization may help HPC
• Virtualization on multi-core may have some overheads
• For servers, improving system utilization is a key factor
• For HPC, performance is a key factor
• How much overhead is there? Where does it come from?

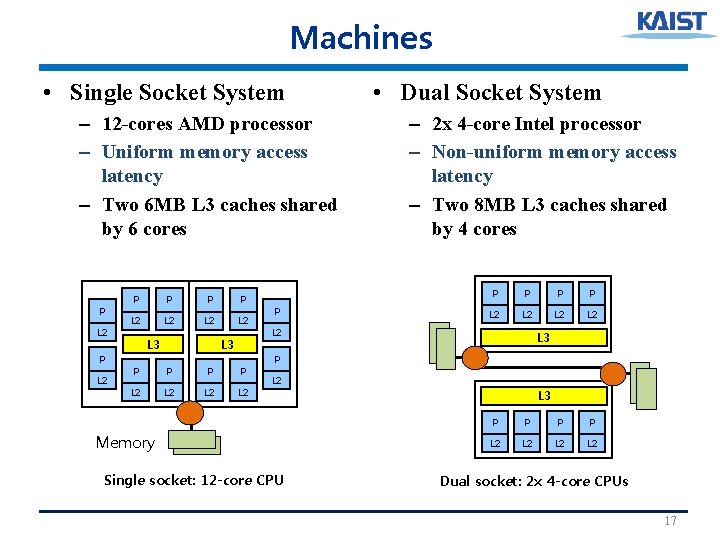

Machines
• Single-socket system
  – 12-core AMD processor
  – Uniform memory access latency
  – Two 6 MB L3 caches, each shared by 6 cores
• Dual-socket system
  – 2 x 4-core Intel processors
  – Non-uniform memory access latency
  – Two 8 MB L3 caches, each shared by 4 cores
[Diagrams: "Single socket: 12-core CPU" and "Dual socket: 2 x 4-core CPUs", showing processors (P) with private L2 caches, shared L3 caches, and memory]

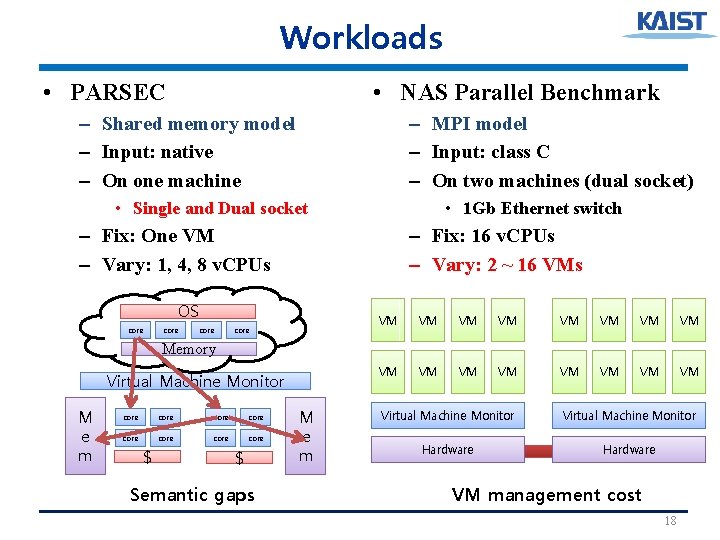

Workloads
• PARSEC
  – Shared memory model
  – Input: native
  – On one machine
    • Single and dual socket
  – Fix: one VM
  – Vary: 1, 4, 8 vCPUs
  – Targets: semantic gaps
• NAS Parallel Benchmark
  – MPI model
  – Input: class C
  – On two machines (dual socket)
    • 1 Gb Ethernet switch
  – Fix: 16 vCPUs
  – Vary: 2 ~ 16 VMs
  – Targets: VM management cost

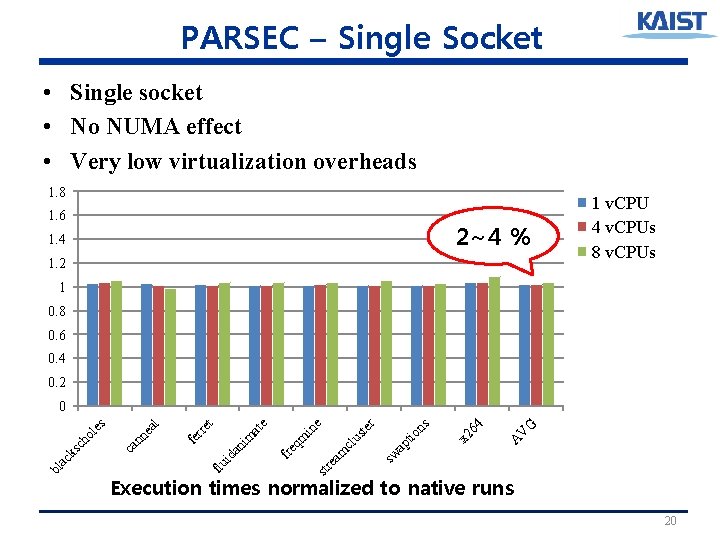

PARSEC – Single Socket
• Single socket
• No NUMA effect
• Very low virtualization overheads
[Figure: execution times normalized to native runs for 1, 4, and 8 vCPUs; benchmarks: blackscholes, canneal, ferret, fluidanimate, freqmine, streamcluster, swaptions, x264, AVG; annotation: 2~4%]
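The charts in this deck report execution times normalized to native runs, so a value of 1.04 corresponds to a 4% overhead. A minimal sketch of that arithmetic follows; the function names and the sample timings are illustrative assumptions, not values taken from the slides.

```python
def normalized_time(virtualized_s: float, native_s: float) -> float:
    """Execution time inside a VM divided by the native execution time."""
    if native_s <= 0:
        raise ValueError("native time must be positive")
    return virtualized_s / native_s


def overhead_percent(virtualized_s: float, native_s: float) -> float:
    """Virtualization overhead expressed as a percentage of the native time."""
    return (normalized_time(virtualized_s, native_s) - 1.0) * 100.0


# Hypothetical timings in seconds (not measurements from the slides).
print(normalized_time(104.0, 100.0))
print(round(overhead_percent(104.0, 100.0), 2))
```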

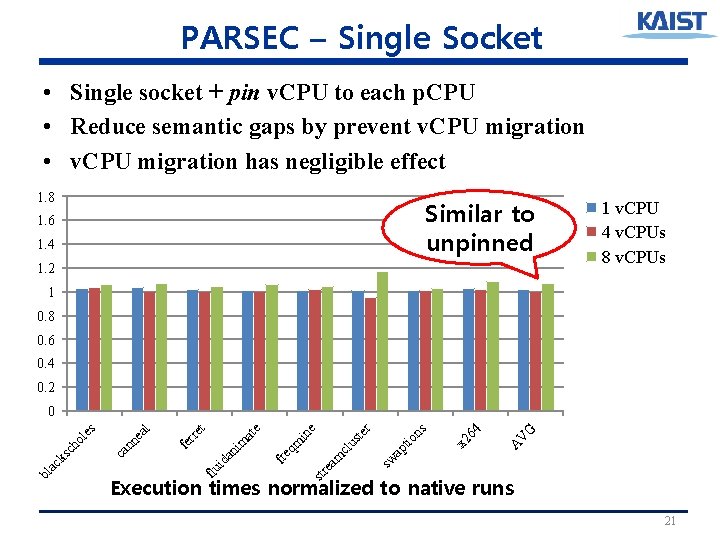

PARSEC – Single Socket
• Single socket + pin each vCPU to a pCPU
• Reduce semantic gaps by preventing vCPU migration
• vCPU migration has negligible effect
[Figure: execution times normalized to native runs for 1, 4, and 8 vCPUs; benchmarks: blackscholes, canneal, ferret, fluidanimate, freqmine, streamcluster, swaptions, x264, AVG; annotation: similar to unpinned]

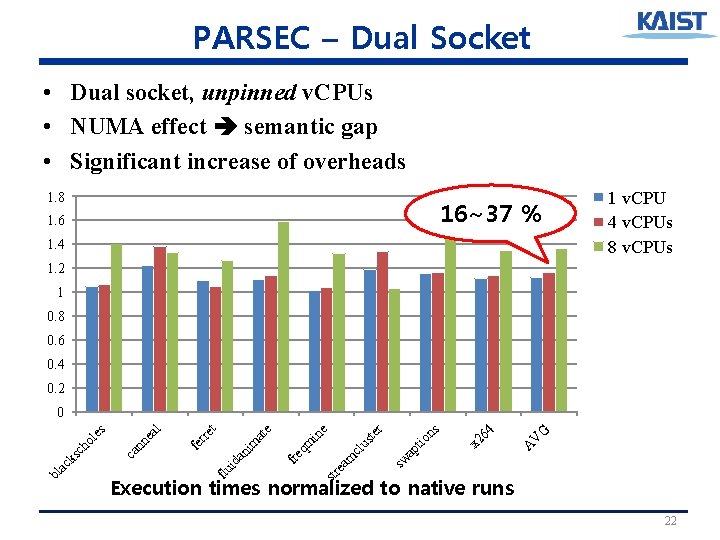

PARSEC – Dual Socket
• Dual socket, unpinned vCPUs
• NUMA effect: a semantic gap
• Significant increase in overheads
[Figure: execution times normalized to native runs for 1, 4, and 8 vCPUs; benchmarks: blackscholes, canneal, ferret, fluidanimate, freqmine, streamcluster, swaptions, x264, AVG; annotation: 16~37%]

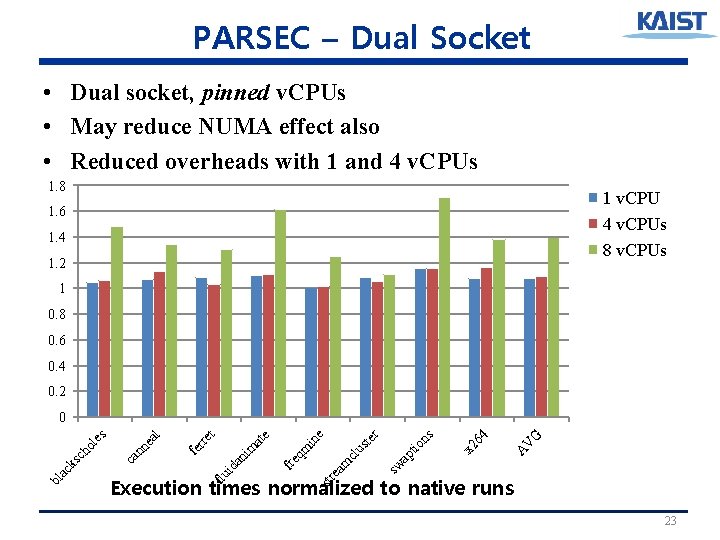

PARSEC – Dual Socket
• Dual socket, pinned vCPUs
• May also reduce the NUMA effect
• Reduced overheads with 1 and 4 vCPUs
[Figure: execution times normalized to native runs for 1, 4, and 8 vCPUs; benchmarks: blackscholes, canneal, ferret, fluidanimate, freqmine, streamcluster, swaptions, x264, AVG]

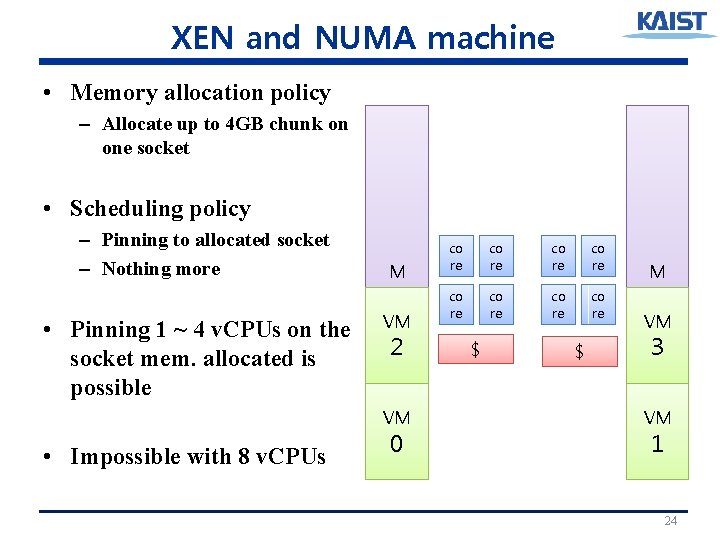

Xen and NUMA Machine
• Memory allocation policy
  – Allocate up to a 4 GB chunk on one socket
• Scheduling policy
  – Pinning to the allocated socket
  – Nothing more
• Pinning 1 ~ 4 vCPUs on the socket where the memory is allocated is possible
• Impossible with 8 vCPUs
[Diagram: VM0 to VM3 placed across two sockets, each with cores, a cache, and local memory]

Mitigating NUMA Effects
• Range pinning
  – Pin the vCPUs of a VM on a socket
  – Works only if # of vCPUs < # of cores on a socket
  – Range-pinned (best): memory of the VM is in the same socket
  – Range-pinned (worst): memory of the VM is in the other socket
• NUMA-first scheduler
  – If there is an idle core in the socket where the VM's memory is allocated, pick it
  – Otherwise, pick any core in the machine
  – Not all vCPUs are active all the time (synchronization or I/O)
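The NUMA-first rule can be sketched as a simple two-step core selection. This is only an illustration of the policy as stated on the slide, not the authors' Xen implementation; the function name, the core numbering, and the data structures are assumptions.

```python
from typing import Dict, List, Optional, Set


def numa_first_pick(
    home_socket: int,
    cores_by_socket: Dict[int, List[int]],
    idle_cores: Set[int],
) -> Optional[int]:
    """Pick a core for a vCPU whose VM memory is allocated on home_socket."""
    # First choice: an idle core on the socket that holds the VM's memory.
    for core in cores_by_socket[home_socket]:
        if core in idle_cores:
            return core
    # Fallback: any idle core in the machine, even on a remote socket.
    for socket in sorted(cores_by_socket):
        for core in cores_by_socket[socket]:
            if core in idle_cores:
                return core
    return None  # no idle core anywhere


# Dual-socket layout from the slides: 2 sockets x 4 cores (core IDs are assumed).
layout = {0: [0, 1, 2, 3], 1: [4, 5, 6, 7]}
print(numa_first_pick(0, layout, {2, 5}))  # a local core is idle
print(numa_first_pick(0, layout, {5, 6}))  # only remote cores are idle
```

The fallback step is what lets this policy handle the 8-vCPU case, where pinning every vCPU to the memory's socket is not possible.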

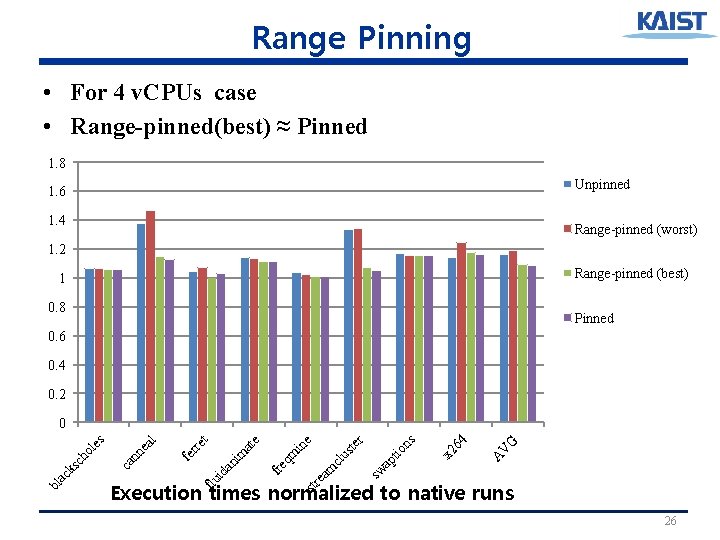

Range Pinning
• For the 4 vCPUs case
• Range-pinned (best) ≈ Pinned
[Figure: execution times normalized to native runs for Unpinned, Range-pinned (worst), Range-pinned (best), and Pinned; benchmarks: blackscholes, canneal, ferret, fluidanimate, freqmine, streamcluster, swaptions, x264, AVG]
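A rough sketch of range pinning, in which every vCPU of a VM may run on any core of one socket, and of the best/worst distinction used in the chart. The contiguous core numbering per socket and all names are assumptions for illustration. The sketch accepts up to one vCPU per core, since the slides apply range pinning to 4 vCPUs on 4-core sockets.

```python
from typing import Dict, List


def socket_cores(socket: int, cores_per_socket: int) -> List[int]:
    """Core IDs of one socket, assuming contiguous numbering per socket."""
    start = socket * cores_per_socket
    return list(range(start, start + cores_per_socket))


def range_pin(num_vcpus: int, socket: int, cores_per_socket: int) -> Dict[int, List[int]]:
    """Map each vCPU to the set of pCPUs it may run on (all on one socket)."""
    if num_vcpus > cores_per_socket:
        raise ValueError("VM does not fit on a single socket")
    allowed = socket_cores(socket, cores_per_socket)
    return {vcpu: allowed for vcpu in range(num_vcpus)}


def placement_kind(pinned_socket: int, memory_socket: int) -> str:
    """'best' if the VM's memory is on the pinned socket, otherwise 'worst'."""
    return "best" if pinned_socket == memory_socket else "worst"


# 4 vCPUs on the dual-socket machine (4 cores per socket).
print(range_pin(4, socket=1, cores_per_socket=4))
print(placement_kind(pinned_socket=1, memory_socket=0))
```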

NUMA-first Scheduler
• For the 8 vCPUs case
• Significant improvement with the NUMA-first scheduler
[Figure: execution times normalized to native runs for Unpinned, Pinned, and NUMA-first; benchmarks: blackscholes, canneal, ferret, fluidanimate, freqmine, streamcluster, swaptions, x264, AVG]

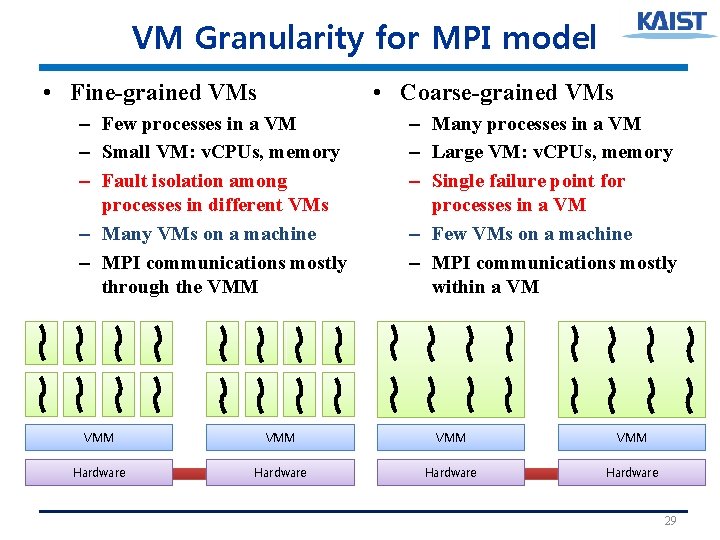

VM Granularity for MPI model
• Fine-grained VMs
  – Few processes in a VM
  – Small VM: vCPUs, memory
  – Fault isolation among processes in different VMs
  – Many VMs on a machine
  – MPI communications mostly through the VMM
• Coarse-grained VMs
  – Many processes in a VM
  – Large VM: vCPUs, memory
  – Single failure point for processes in a VM
  – Few VMs on a machine
  – MPI communications mostly within a VM
[Diagram: many small VMs versus few large VMs on a VMM over the hardware]

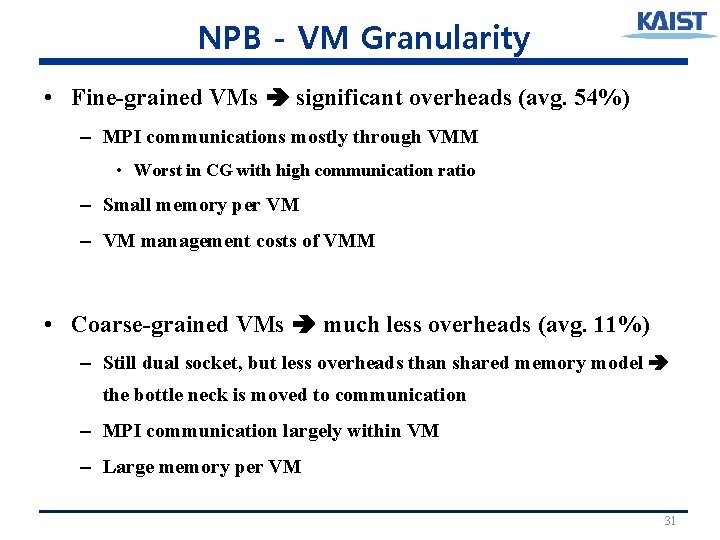

NPB - VM Granularity
• The amount of work is the same for all granularities
• 2 VMs: each VM has 8 vCPUs, 8 MPI processes
• 16 VMs: each VM has 1 vCPU, 1 MPI process
[Figure: execution times normalized to native runs for 2, 4, 8, and 16 VMs; benchmarks: BT, CG, EP, FT, IS, LU, MG, SP, AVG; annotation: 11~54%]
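To make the granularity trade-off concrete, the sketch below enumerates the four configurations with 16 vCPUs in total and one MPI process per vCPU, and estimates how many process pairs share a VM. The uniform-pair model is an assumption for illustration only; real NPB communication patterns differ per benchmark.

```python
from math import comb
from typing import Tuple

TOTAL_VCPUS = 16  # fixed in the NPB runs; one MPI process per vCPU


def vm_configuration(num_vms: int) -> Tuple[int, float]:
    """Return (vCPUs per VM, fraction of MPI process pairs inside the same VM)."""
    if num_vms <= 0 or TOTAL_VCPUS % num_vms != 0:
        raise ValueError("number of VMs must evenly divide the vCPU count")
    per_vm = TOTAL_VCPUS // num_vms
    intra_pairs = num_vms * comb(per_vm, 2)  # pairs that can stay within a VM
    total_pairs = comb(TOTAL_VCPUS, 2)       # all process pairs
    return per_vm, intra_pairs / total_pairs


for n in (2, 4, 8, 16):
    per_vm, frac = vm_configuration(n)
    print(f"{n:2d} VMs: {per_vm} vCPUs each, {frac:.1%} of process pairs share a VM")
```

Under this simplified model, the share of pairs that can communicate within a VM drops to zero with 16 VMs, which is consistent with the slides' point that fine-grained VMs push MPI traffic through the VMM.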

NPB - VM Granularity
• Fine-grained VMs: significant overheads (avg. 54%)
  – MPI communications mostly through the VMM
    • Worst in CG, which has a high communication ratio
  – Small memory per VM
  – VM management costs of the VMM
• Coarse-grained VMs: much lower overheads (avg. 11%)
  – Still dual socket, but lower overheads than with the shared memory model: the bottleneck moves to communication
  – MPI communication largely within a VM
  – Large memory per VM

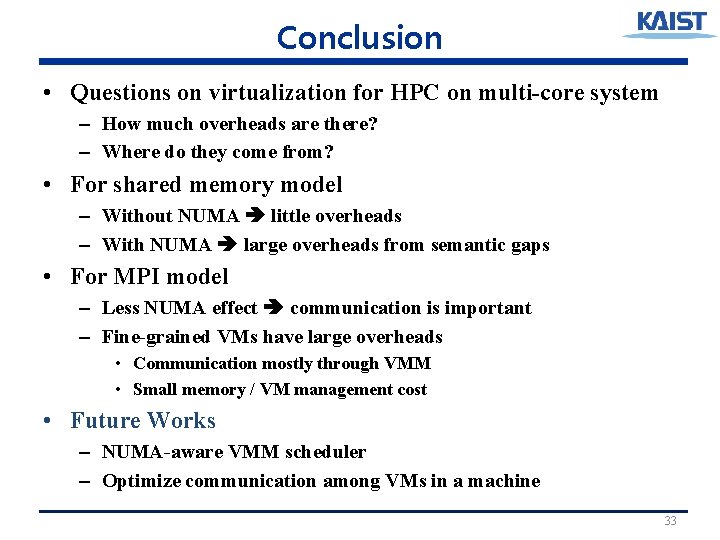

Conclusion
• Questions on virtualization for HPC on multi-core systems
  – How much overhead is there?
  – Where does it come from?
• For the shared memory model
  – Without NUMA: little overhead
  – With NUMA: large overheads from semantic gaps
• For the MPI model
  – Less NUMA effect; communication is what matters
  – Fine-grained VMs have large overheads
    • Communication mostly through the VMM
    • Small memory / VM management cost
• Future work
  – NUMA-aware VMM scheduler
  – Optimize communication among VMs in a machine

Thank you!

Backup slides

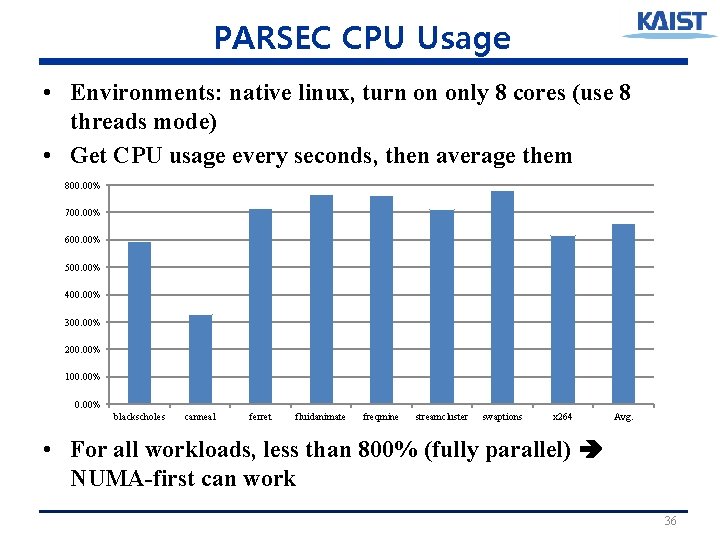

PARSEC CPU Usage
• Environment: native Linux with only 8 cores turned on (8-thread mode)
• CPU usage is sampled every second, then averaged
[Figure: average CPU usage (0% to 800%) for blackscholes, canneal, ferret, fluidanimate, freqmine, streamcluster, swaptions, x264, and the average]
• For all workloads, CPU usage is below 800% (fully parallel), so NUMA-first can work
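The measurement described above amounts to averaging per-second CPU usage samples and comparing the result with the fully parallel ceiling (8 cores × 100%). A minimal sketch, assuming the samples have already been collected as percentages; the sample values and function names are hypothetical, not data from the slides.

```python
from statistics import mean
from typing import Sequence


def average_cpu_usage(samples_percent: Sequence[float]) -> float:
    """Average of per-second CPU usage samples, in percent (100% = one core)."""
    return mean(samples_percent)


def has_idle_capacity(samples_percent: Sequence[float], num_cores: int = 8) -> bool:
    """True if the average usage is below the fully parallel ceiling."""
    return average_cpu_usage(samples_percent) < num_cores * 100.0


# Hypothetical per-second samples for one workload on 8 cores.
samples = [780.0, 640.0, 710.0, 590.0]
print(average_cpu_usage(samples))
print(has_idle_capacity(samples))
```

When the average stays below the ceiling, some cores are idle part of the time, which is the condition the NUMA-first scheduler relies on to find a free core on the preferred socket.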
