Information Bottleneck EM

Gal Elidan and Nir Friedman
School of Engineering & Computer Science, The Hebrew University, Jerusalem, Israel

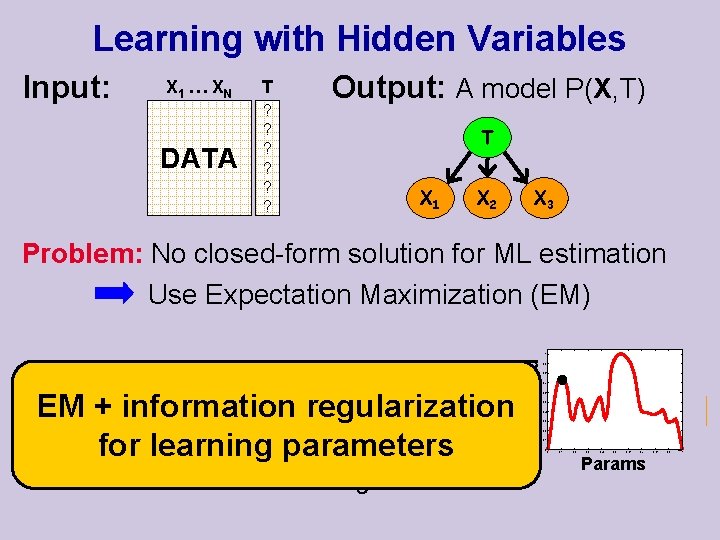

Learning with Hidden Variables

- Input: DATA over X_1 … X_N, with the values of the hidden variable T unobserved ("?")
- Output: a model P(X, T) (network over T, X_1, X_2, X_3)
- Problem: no closed-form solution for ML estimation → use Expectation Maximization (EM)
- Problem: EM gets stuck in inferior local maxima. Options:
  - Random restarts
  - Deterministic or simulated annealing
  - EM + information regularization for learning parameters

[Figure: likelihood (0 to 1) plotted against parameters (0 to 1)]

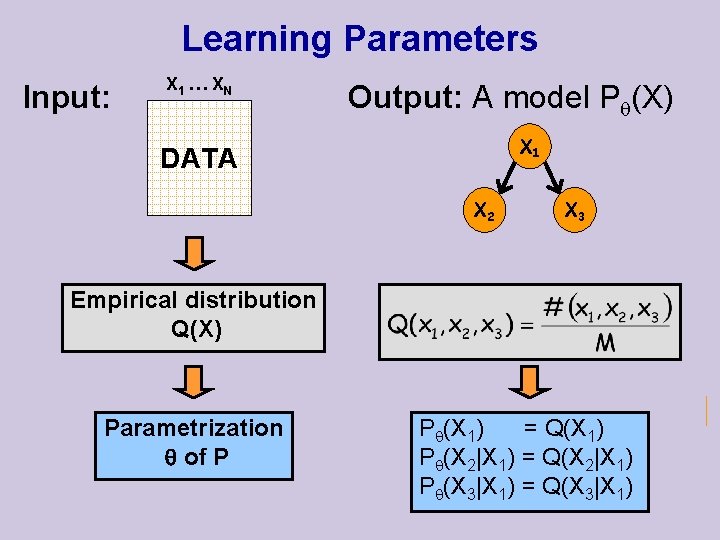

Learning Parameters

- Input: DATA over X_1 … X_N
- Output: a model P(X) (network over X_1, X_2, X_3)
- Empirical distribution: Q(X)
- Parametrization of P:
  - P(X_1) = Q(X_1)
  - P(X_2|X_1) = Q(X_2|X_1)
  - P(X_3|X_1) = Q(X_3|X_1)

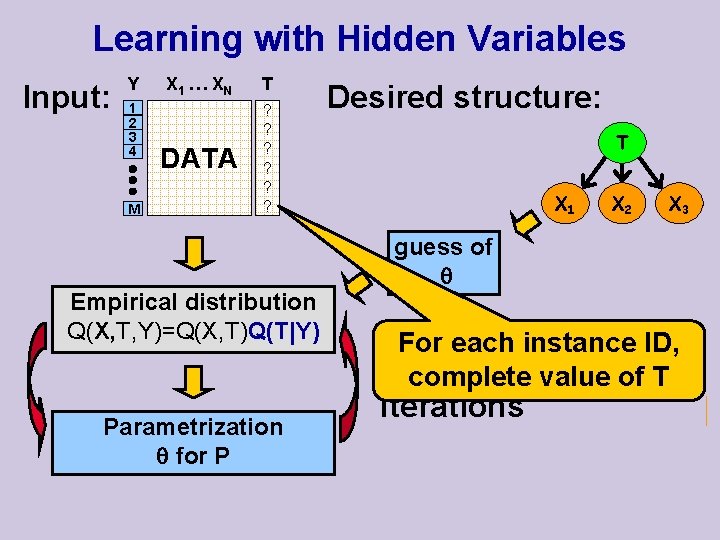

Learning with Hidden Variables

- Input: DATA over X_1 … X_N, indexed by the instance ID Y = 1, 2, 3, 4, …, M, with the values of T unobserved ("?")
- Empirical distribution: Q(X, T, Y) = Q(X, Y) Q(T|Y)
- Q(X, T) = ? It is needed for the parametrization of P
- Desired structure: network over T, X_1, X_2, X_3
- EM iterations: for each instance ID, complete the value of T from the current guess

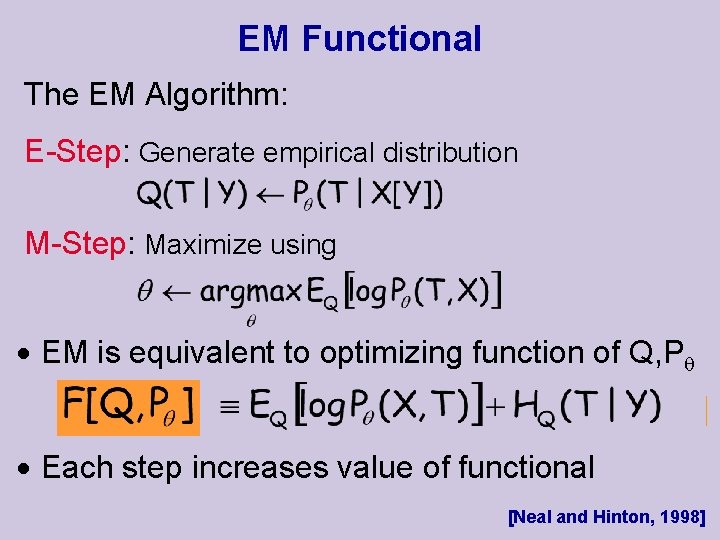

EM Functional

- The EM Algorithm:
  - E-Step: Generate the empirical distribution
  - M-Step: Maximize using that distribution
- EM is equivalent to optimizing a function of Q and P
- Each step increases the value of the functional

[Neal and Hinton, 1998]
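
For reference, the functional from Neal and Hinton can be written out as below. The slide's own formula did not survive in the text, so the notation is an assumption: Y is the instance ID with Q(y) = 1/M, x[y] is the observed row for instance y, and H_Q and D_KL denote conditional entropy and KL divergence.

```latex
% Assumed notation: Q(X, T, Y) = Q(X, Y) Q(T | Y), with Q(y) = 1/M.
F[Q, P] \;=\; \mathbb{E}_{Q}\big[\log P(X, T)\big] \;+\; H_{Q}(T \mid Y)
        \;=\; \frac{1}{M} \sum_{y=1}^{M} \Big[ \log P(x[y])
              \;-\; D_{\mathrm{KL}}\big( Q(T \mid y) \,\big\|\, P(T \mid x[y]) \big) \Big]
```

Read this way, the E-step removes the KL term by setting Q(T|y) = P(T|x[y]), and the M-step raises the expected log-probability term over P, so neither step can decrease F.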

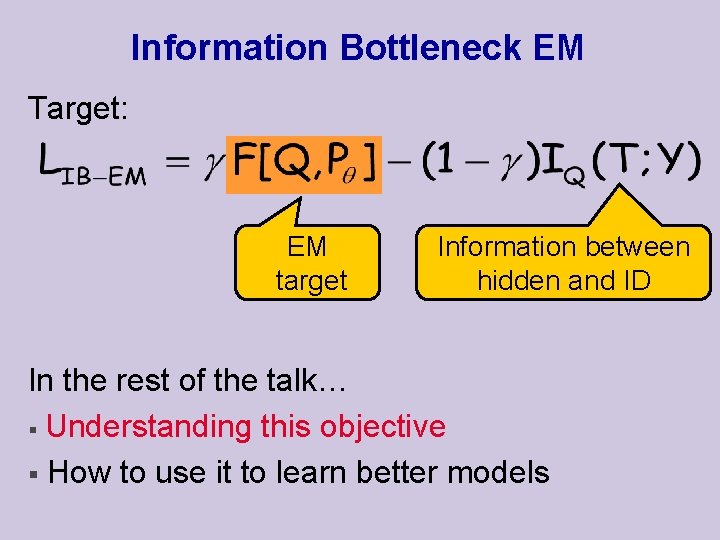

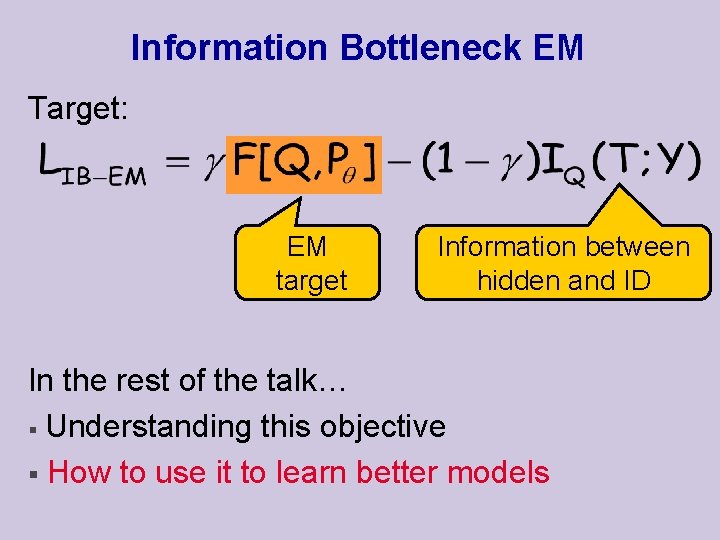

Information Bottleneck EM

- Target: the EM target, traded off against the information between the hidden variable and the instance ID

In the rest of the talk…

- Understanding this objective
- How to use it to learn better models

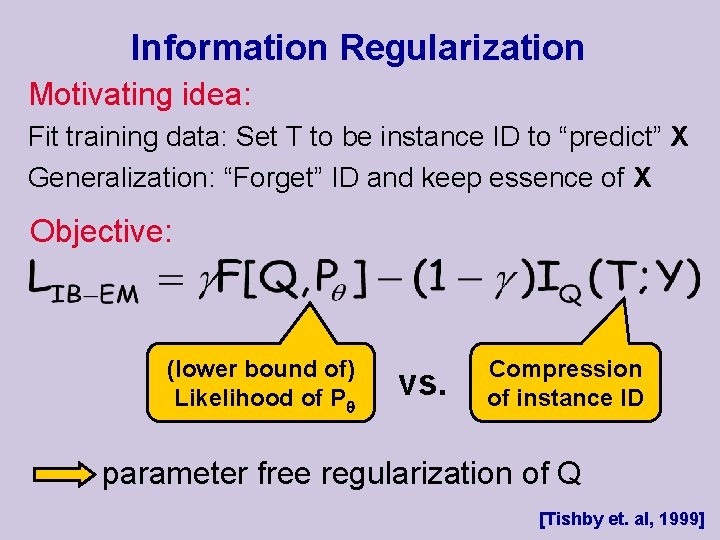

Information Regularization

- Motivating idea:
  - Fit training data: set T to be the instance ID to “predict” X
  - Generalization: “forget” the ID and keep the essence of X
- Objective: (lower bound of) likelihood of P vs. compression of the instance ID
- Parameter-free regularization of Q

[Tishby et al., 1999]

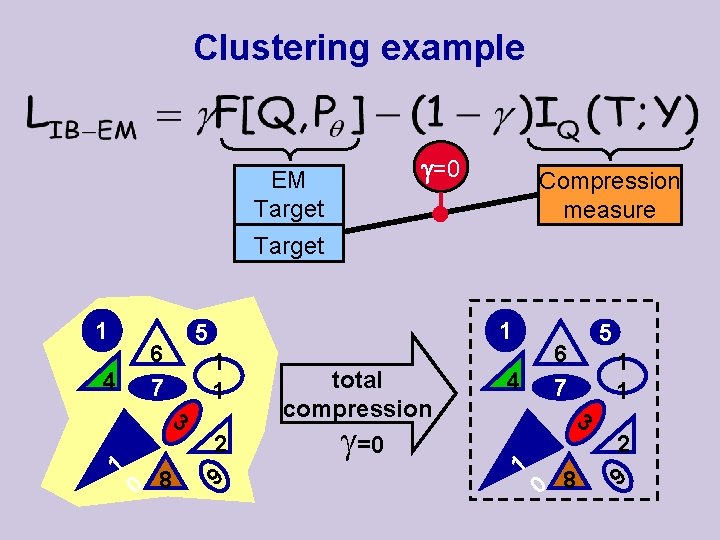

Clustering example: γ = 0

- Total compression

[Figure: instances grouped by T, with the EM target and compression measure terms marked; γ = 0]

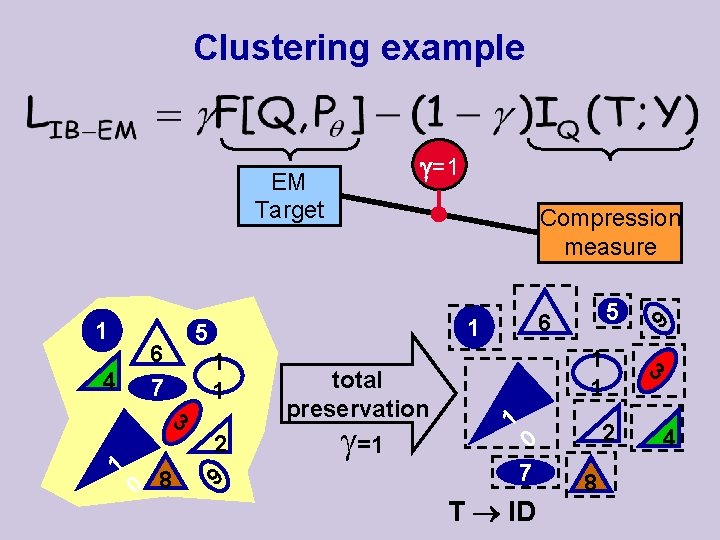

Clustering example: γ = 1

- Total preservation: T ≡ ID

[Figure: instances grouped by T, with the EM target and compression measure terms marked; γ = 1]

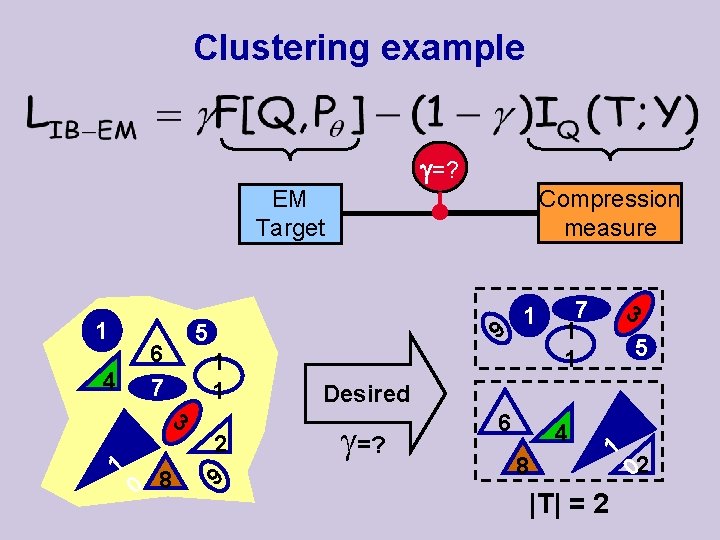

Clustering example: γ = ?

- Desired clustering, |T| = 2

[Figure: instances grouped by T, with the EM target and compression measure terms marked; γ = ?]

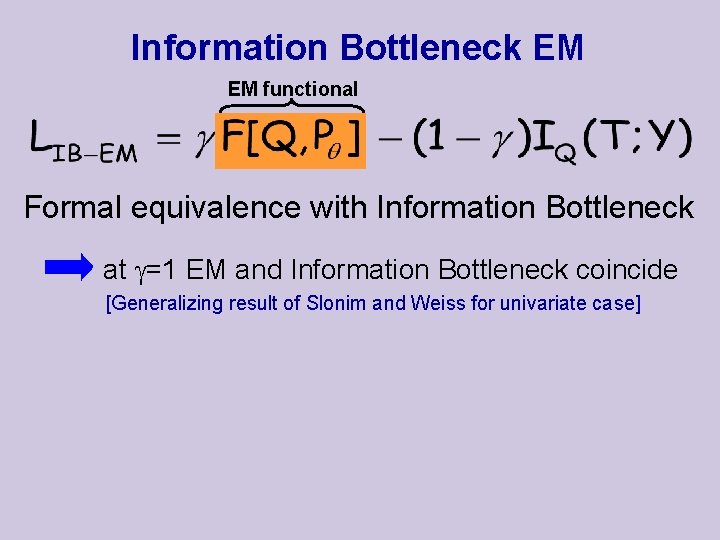

Information Bottleneck EM

- The objective is written in terms of the EM functional
- Formal equivalence with Information Bottleneck
- At γ = 1, EM and Information Bottleneck coincide

[Generalizing result of Slonim and Weiss for univariate case]
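
A worked form of the objective may make the two limiting cases concrete. The trade-off symbol was lost from the slide text and is taken here to be γ, and F[Q, P] stands for the EM functional; both are assumptions, chosen to agree with the later slide that gives L_IB-EM as (Variational EM) minus (1 − γ) times a regularization term.

```latex
\mathcal{L}_{\text{IB-EM}}[Q, P] \;=\; \gamma \, F[Q, P] \;-\; (1 - \gamma)\, I_{Q}(T; Y)

% Limiting cases:
\gamma = 1: \quad \mathcal{L}_{\text{IB-EM}} = F[Q, P]
            \qquad \text{(the EM functional)}
\gamma = 0: \quad \mathcal{L}_{\text{IB-EM}} = -\, I_{Q}(T; Y)
            \qquad \text{(maximized when } T \text{ is independent of } Y \text{)}
```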

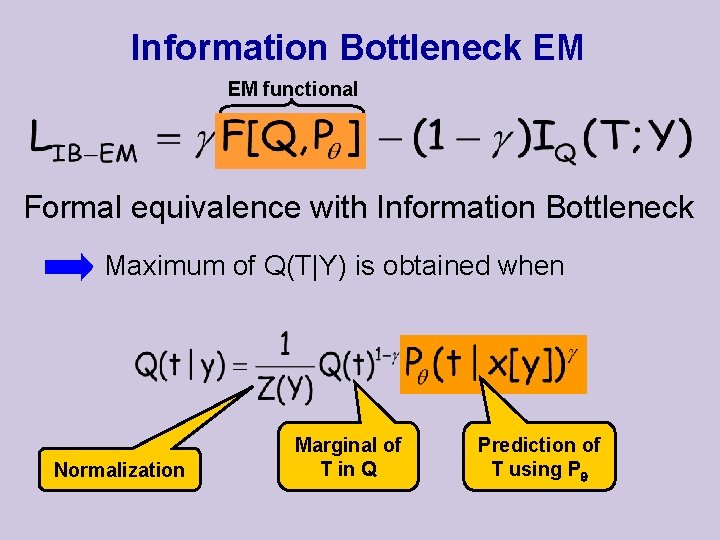

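Information Bottleneck EM

- The objective is written in terms of the EM functional
- Formal equivalence with Information Bottleneck
- The maximum over Q(T|Y) is obtained at a fixed point that combines:
  - A normalization term
  - The marginal of T in Q
  - The prediction of T using P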
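
The fixed point can be sketched for a single instance. This is a minimal illustration that assumes the update has the form Q(t|y) ∝ Q(t)^(1−γ) · P(x[y], t)^γ, which matches the three labelled factors; the function name, the argument names, and the symbol γ are hypothetical, and all inputs are assumed to be strictly positive.

```python
import math

def ib_em_e_step(q_t, joint_p, gamma):
    """One update Q(t|y) ∝ Q(t)^(1-gamma) * P(x[y], t)^gamma for one instance y.

    q_t     -- current marginal Q(t), a list of length |T| (assumed > 0)
    joint_p -- P(x[y], t) for this instance, a list of length |T| (assumed > 0)
    gamma   -- trade-off parameter in [0, 1] (assumed symbol)
    """
    # Work in log space so that small joint probabilities do not underflow.
    log_w = [(1.0 - gamma) * math.log(q) + gamma * math.log(p)
             for q, p in zip(q_t, joint_p)]
    top = max(log_w)
    w = [math.exp(v - top) for v in log_w]
    z = sum(w)  # normalization term, up to the constant shift by `top`
    return [v / z for v in w]

q_t = [0.5, 0.5]
joint_p = [0.08, 0.02]
at_zero = ib_em_e_step(q_t, joint_p, 0.0)  # falls back to the marginal Q(t)
at_one = ib_em_e_step(q_t, joint_p, 1.0)   # the posterior P(t | x[y])
```

At γ = 0 the update ignores the instance entirely, and at γ = 1 it is the usual EM posterior, which is the sense in which the two ends of the range are "total compression" and plain EM.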

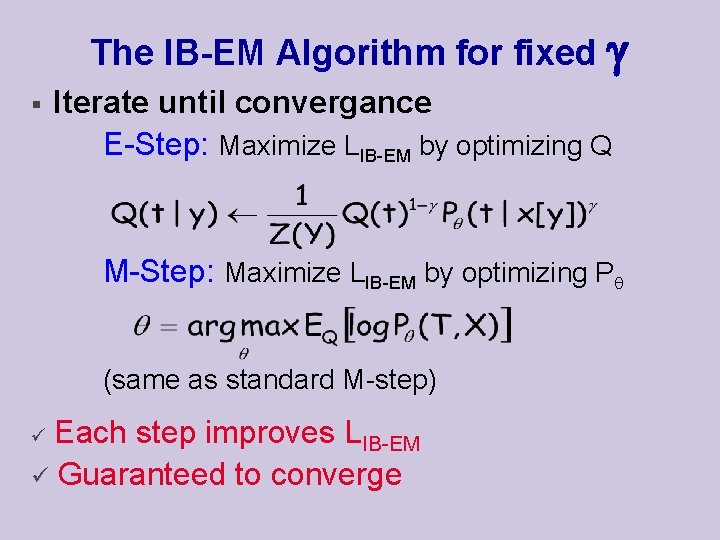

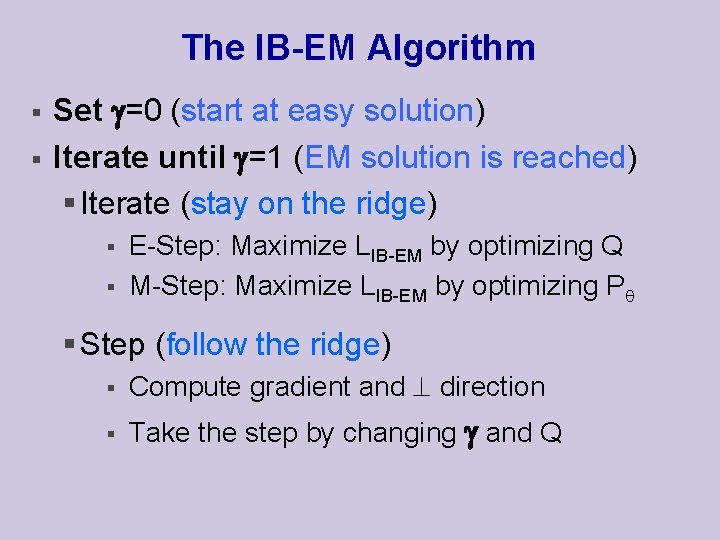

The IB-EM Algorithm for fixed γ

- Iterate until convergence:
  - E-Step: Maximize L_IB-EM by optimizing Q
  - M-Step: Maximize L_IB-EM by optimizing P (same as standard M-step)
- ✓ Each step improves L_IB-EM
- ✓ Guaranteed to converge
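
The loop can be sketched end to end on a toy problem. This is only an illustration under stated assumptions, not the authors' implementation: the model is a naive Bayes network with binary features, the objective is taken to be γ·F[Q, P] − (1 − γ)·I_Q(T;Y), the E-step uses the update Q(t|y) ∝ Q(t)^(1−γ) · P(x[y], t)^γ, and every function name and the toy data are hypothetical.

```python
import math
import random

def safe_log(p):
    # Floor guards against log(0) if a probability underflows (implementation choice).
    return math.log(max(p, 1e-300))

def log_joint(x, t, prior, theta):
    """log P(x, t) under a naive Bayes model with binary features."""
    return safe_log(prior[t]) + sum(
        safe_log(th if xi else 1.0 - th) for xi, th in zip(x, theta[t]))

def marginal(q):
    """Q(t) = (1/M) * sum over y of Q(t|y)."""
    return [sum(col) / len(q) for col in zip(*q)]

def m_step(data, q):
    """Standard M-step: maximum-likelihood parameters from soft counts."""
    prior = marginal(q)
    theta = []
    for t, p_t in enumerate(prior):
        mass = max(p_t * len(data), 1e-300)
        theta.append([sum(q[y][t] * x[i] for y, x in enumerate(data)) / mass
                      for i in range(len(data[0]))])
    return prior, theta

def e_step(data, q, prior, theta, gamma):
    """Set Q(t|y) proportional to Q(t)^(1-gamma) * P(x[y], t)^gamma."""
    q_t = marginal(q)
    new_q = []
    for x in data:
        lw = [(1.0 - gamma) * safe_log(q_t[t])
              + gamma * log_joint(x, t, prior, theta)
              for t in range(len(prior))]
        top = max(lw)
        w = [math.exp(v - top) for v in lw]
        new_q.append([v / sum(w) for v in w])
    return new_q

def objective(data, q, prior, theta, gamma):
    """gamma * F[Q, P] - (1 - gamma) * I_Q(T; Y), with Q(y) = 1/M."""
    q_t, m = marginal(q), len(data)
    f = info = 0.0
    for x, row in zip(data, q):
        for t, q_ty in enumerate(row):
            if q_ty > 0.0:
                f += q_ty * (log_joint(x, t, prior, theta) - math.log(q_ty)) / m
                info += q_ty * math.log(q_ty / q_t[t]) / m
    return gamma * f - (1.0 - gamma) * info

def ib_em_fixed_gamma(data, n_states, gamma, n_iter=30, seed=0):
    """Alternate M- and E-steps for one fixed gamma; returns (Q(t|y), objective trace)."""
    rng = random.Random(seed)
    q = []
    for _ in data:
        row = [rng.random() + 0.1 for _ in range(n_states)]
        q.append([v / sum(row) for v in row])
    history = []
    for _ in range(n_iter):
        prior, theta = m_step(data, q)
        q = e_step(data, q, prior, theta, gamma)
        history.append(objective(data, q, prior, theta, gamma))
    return q, history

# Hypothetical toy data: six instances, three binary features.
data = [(1, 1, 0), (1, 1, 1), (1, 0, 0), (0, 0, 1), (0, 1, 1), (0, 0, 0)]
q, history = ib_em_fixed_gamma(data, n_states=2, gamma=0.5)
```

The `history` list records the objective after each E-step, so the "each step improves" claim can be checked directly by confirming that the trace never decreases. A fixed iteration count stands in for a convergence test to keep the sketch short.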

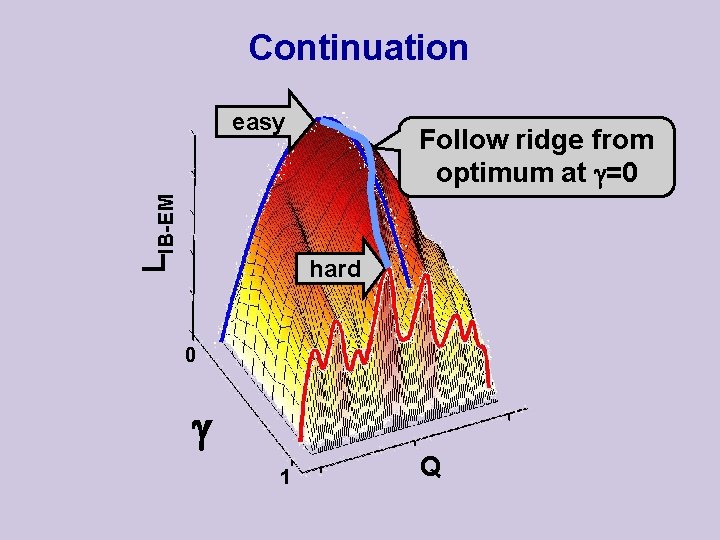

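Continuation

- Follow the ridge of L_IB-EM from the optimum at γ = 0 (easy) towards γ = 1 (hard)

[Figure: L_IB-EM surface over Q and γ, with γ running from 0 (easy) to 1 (hard)]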

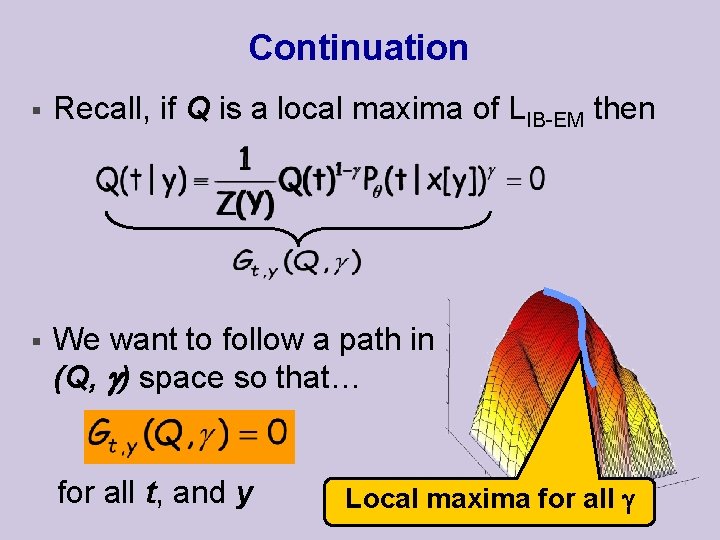

Continuation

- Recall: if Q is a local maximum of L_IB-EM, then the fixed-point condition on Q(T|Y) holds for all t and y
- We want to follow a path in (Q, γ) space so that Q is a local maximum for every γ along the path

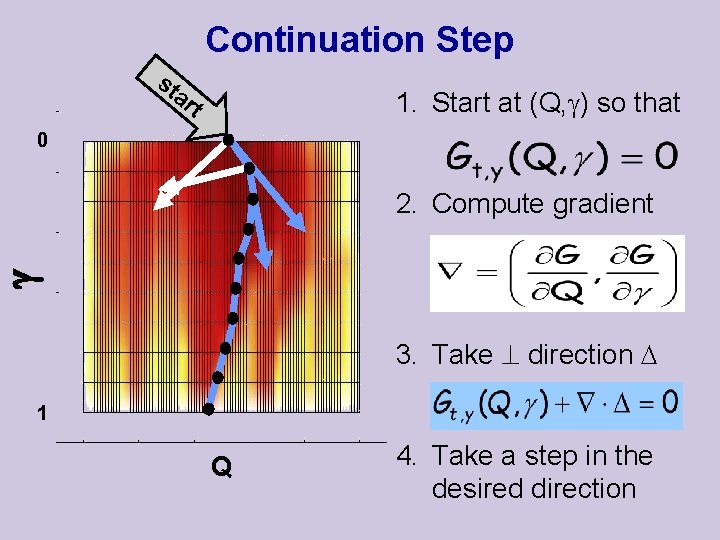

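Continuation Step

1. Start at a point (Q, γ) where the local-maximum condition holds
2. Compute the gradient
3. Take the direction tangent to the path
4. Take a step in the desired direction

[Figure: path in (Q, γ) space from the start at γ = 0 towards γ = 1]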

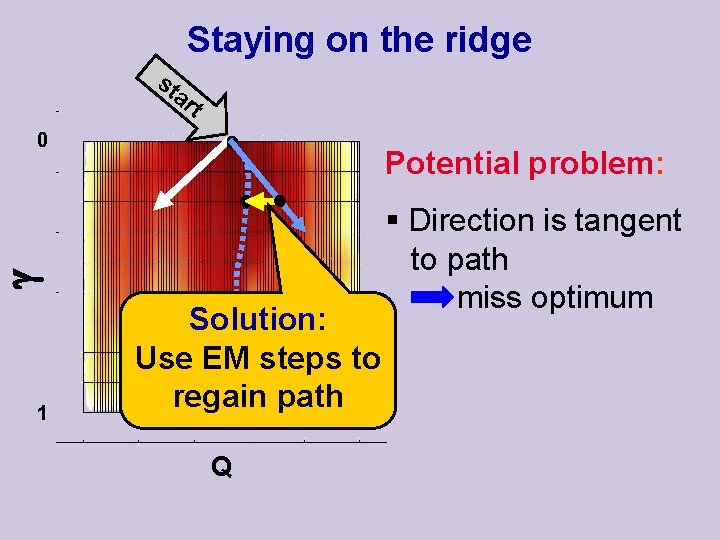

Staying on the ridge

- Potential problem: the direction is tangent to the path, so a step can miss the optimum
- Solution: use EM steps to regain the path

[Figure: path in (Q, γ) space from the start at γ = 0 towards γ = 1]

The IB-EM Algorithm

- Set γ = 0 (start at the easy solution)
- Iterate until γ = 1 (EM solution is reached):
  - Iterate (stay on the ridge):
    - E-Step: Maximize L_IB-EM by optimizing Q
    - M-Step: Maximize L_IB-EM by optimizing P
  - Step (follow the ridge):
    - Compute gradient and direction
    - Take the step by changing γ and Q

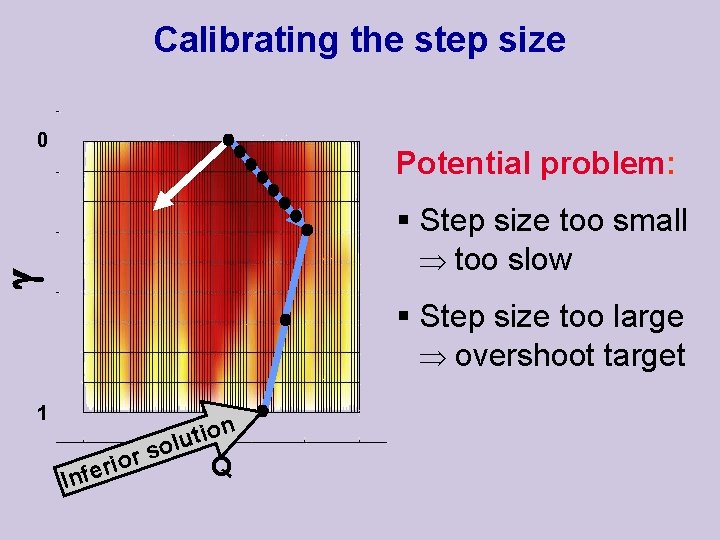

Calibrating the step size

- Potential problem:
  - Step size too small → too slow
  - Step size too large → overshoot the target

[Figure: path in (Q, γ) space from γ = 0 to γ = 1, with an overshooting step ending at an inferior solution]

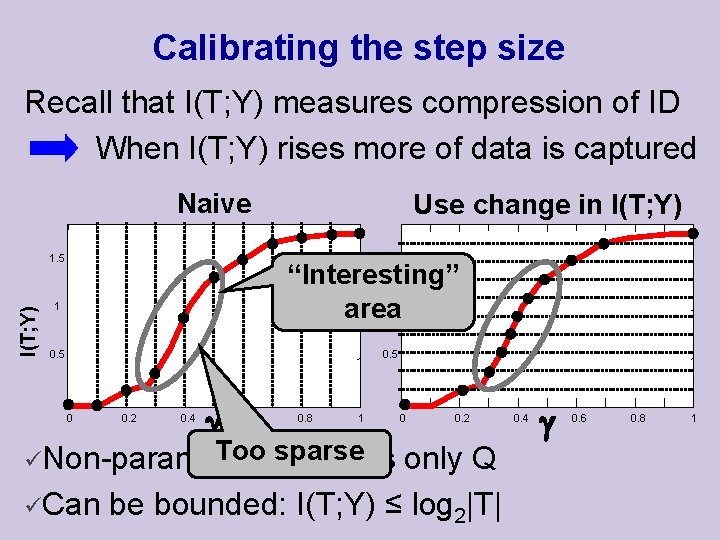

Calibrating the step size

- Recall that I(T;Y) measures compression of the ID
- When I(T;Y) rises, more of the data is captured
- Naive step size: evaluations are too sparse in the “interesting” area
- Use the change in I(T;Y) instead:
  - ✓ Non-parametric: involves only Q
  - ✓ Can be bounded: I(T;Y) ≤ log₂|T|

[Figure: two panels, “Naive” and “Use change in I(T;Y)”, each plotting I(T;Y) (0 to 1.5) over the range 0 to 1]
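
Because I(T;Y) depends only on Q, it is cheap to track between steps. The sketch below computes it in bits from the soft assignments Q(t|y), assuming a uniform Q(y) = 1/M over instance IDs; the function name and the two toy assignment tables are hypothetical.

```python
import math

def mutual_information_bits(q):
    """I(T; Y) in bits for soft assignments q[y][t] = Q(t|y), with Q(y) = 1/M."""
    m, k = len(q), len(q[0])
    q_t = [sum(row[t] for row in q) / m for t in range(k)]  # marginal Q(t)
    info = 0.0
    for row in q:
        for t in range(k):
            if row[t] > 0.0:  # 0 * log 0 is taken as 0
                info += row[t] * math.log2(row[t] / q_t[t]) / m
    return info

compressed = [[0.5, 0.5]] * 4             # T carries nothing about the ID
preserved = [[1.0, 0.0], [0.0, 1.0]] * 2  # T splits the IDs evenly in two
i_low = mutual_information_bits(compressed)
i_high = mutual_information_bits(preserved)
bound = math.log2(len(preserved[0]))      # log2 |T|
```

One way to use this, stated here only as a possibility, is to compare I(T;Y) before and after a candidate step and shrink the step when the change exceeds a chosen fraction of the bound log₂|T|.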

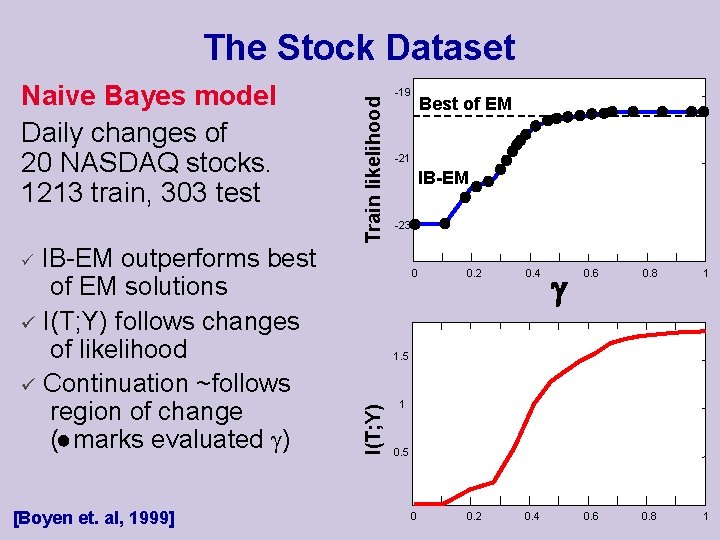

The Stock Dataset [Boyen et al., 1999]

- Naive Bayes model
- Daily changes of 20 NASDAQ stocks: 1213 train, 303 test
- ✓ IB-EM outperforms the best of the EM solutions
- ✓ I(T;Y) follows changes of likelihood
- ✓ Continuation roughly follows the region of change (marks show the evaluated values of γ)

[Figure: train likelihood (about -23 to -19) for IB-EM and best of EM, and I(T;Y) (0.5 to 1.5), both over the range 0 to 1]

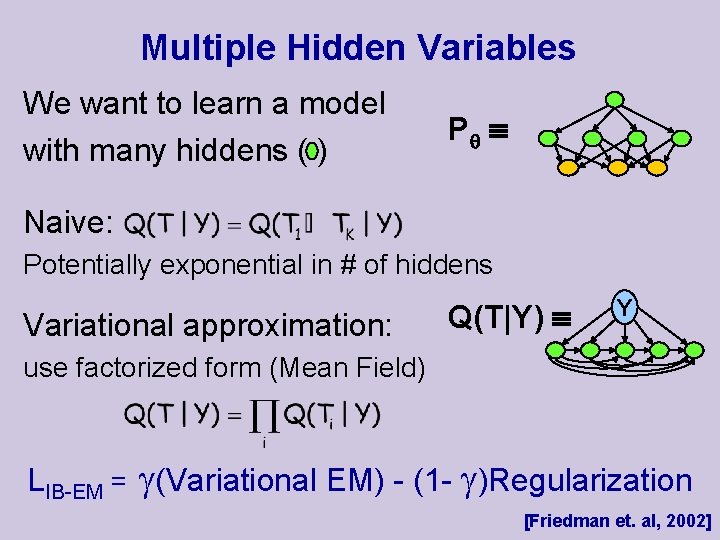

Multiple Hidden Variables

- We want to learn a model P with many hiddens
- Naive: potentially exponential in the number of hiddens
- Variational approximation: use a factorized form for Q(T|Y) (Mean Field)
- L_IB-EM = γ · (Variational EM) − (1 − γ) · Regularization

[Friedman et al., 2002]

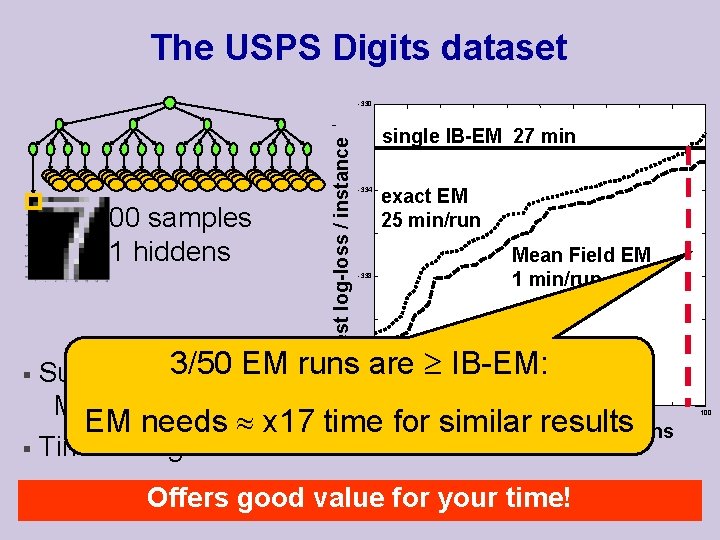

The USPS Digits dataset

- 400 samples, 21 hiddens
- Superior to all Mean Field EM runs
- Time: about that of a single exact EM run
- 3/50 EM runs reach the level of IB-EM: EM needs ×17 the time for similar results
- Offers good value for your time!

| Method | Time |
| --- | --- |
| Single IB-EM | 27 min |
| Exact EM | 25 min/run |
| Mean Field EM | 1 min/run |

[Figure: test log-loss per instance (about -342 to -330) against percentage of random runs (20 to 100)]

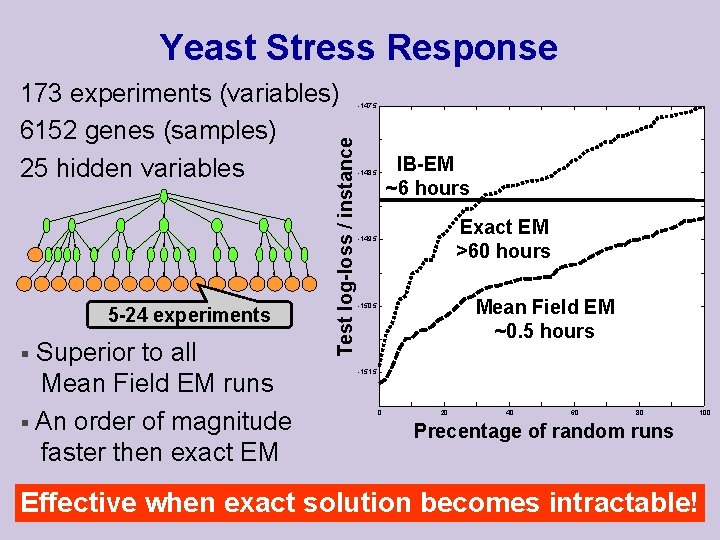

Yeast Stress Response

- 173 experiments (variables), 6152 genes (samples), 25 hidden variables
- Superior to all Mean Field EM runs
- An order of magnitude faster than exact EM
- Effective when the exact solution becomes intractable!

| Method | Time |
| --- | --- |
| IB-EM | ~6 hours |
| Exact EM | >60 hours |
| Mean Field EM | ~0.5 hours |

[Figure: test log-loss per instance (about -151.5 to -147.5) against percentage of random runs (0 to 100); model diagram labeled “5-24 experiments”]

Summary

New framework for learning hidden variables

- ✓ Formal relation of Bottleneck and EM
- ✓ Continuation for bypassing local maxima
- ✓ Flexible: structure / variational approximation

Future Work

- Learn optimal γ ≤ 1 for better generalization
- Explore other approximations of Q(T|Y)
- Model selection: learning cardinality and enriching structure

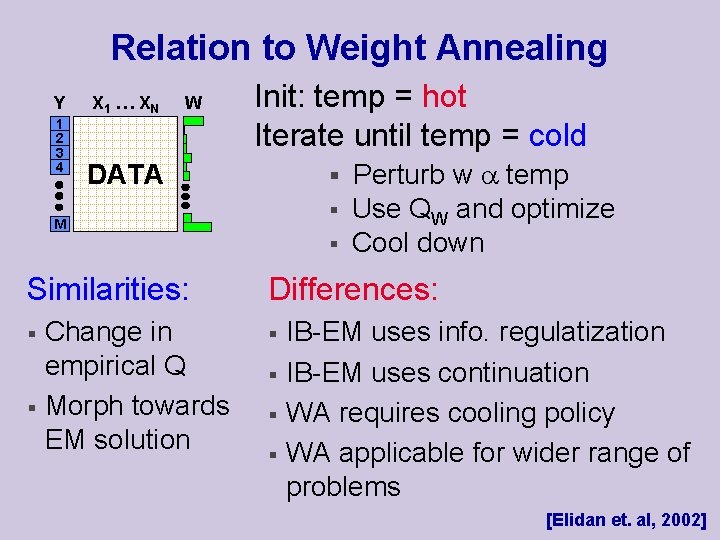

Relation to Weight Annealing [Elidan et al., 2002]

- Algorithm:
  - Init: temp = hot
  - Iterate until temp = cold:
    - Perturb w in proportion to temp
    - Use Q_W and optimize
    - Cool down
- Similarities:
  - Change in empirical Q
  - Morph towards EM solution
- Differences:
  - IB-EM uses info. regularization
  - IB-EM uses continuation
  - WA requires a cooling policy
  - WA applicable for a wider range of problems

[Figure: DATA over X_1 … X_N with instance ID Y = 1, 2, 3, 4, …, M and a weight column W]

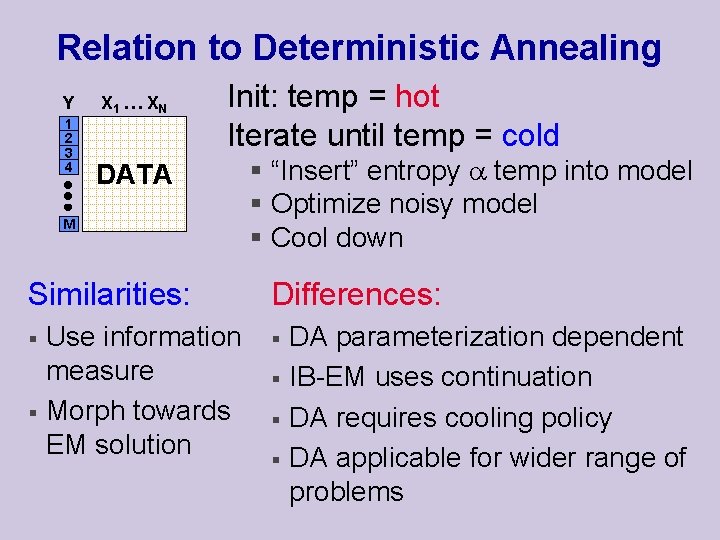

Relation to Deterministic Annealing

- Algorithm:
  - Init: temp = hot
  - Iterate until temp = cold:
    - “Insert” entropy in proportion to temp into the model
    - Optimize the noisy model
    - Cool down
- Similarities:
  - Use information measure
  - Morph towards EM solution
- Differences:
  - DA is parameterization dependent
  - IB-EM uses continuation
  - DA requires a cooling policy
  - DA applicable for a wider range of problems

[Figure: DATA over X_1 … X_N with instance ID Y = 1, 2, 3, 4, …, M]
