Bayesian Learning

Thanks to Nir Friedman, HU.

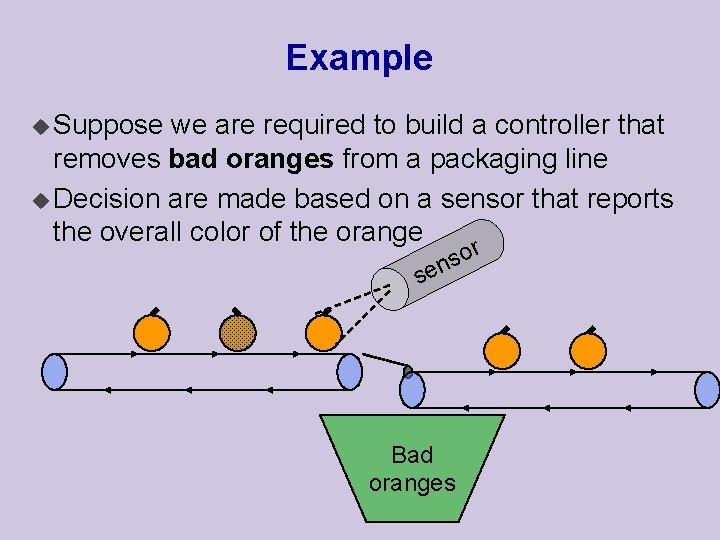

Example

- Suppose we are required to build a controller that removes bad oranges from a packaging line
- Decisions are made based on a sensor that reports the overall color of the orange

[Figure labels: sensor, bad oranges]

Classifying oranges

Suppose we know all the aspects of the problem.

Prior probabilities:

- Probability of good (+1) and bad (-1) oranges
  - P(C = +1) = probability of a good orange
  - P(C = -1) = probability of a bad orange
  - Note: P(C = +1) + P(C = -1) = 1
- Assumption: oranges are independent
  - The occurrence of a bad orange does not depend on previous oranges

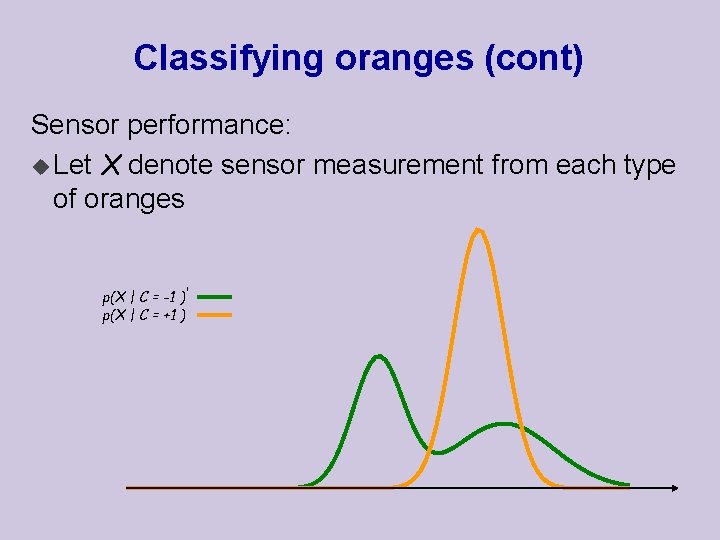

Classifying oranges (cont)

Sensor performance:

- Let X denote the sensor measurement from each type of orange

[Figure: the class-conditional densities p(X | C = -1) and p(X | C = +1)]

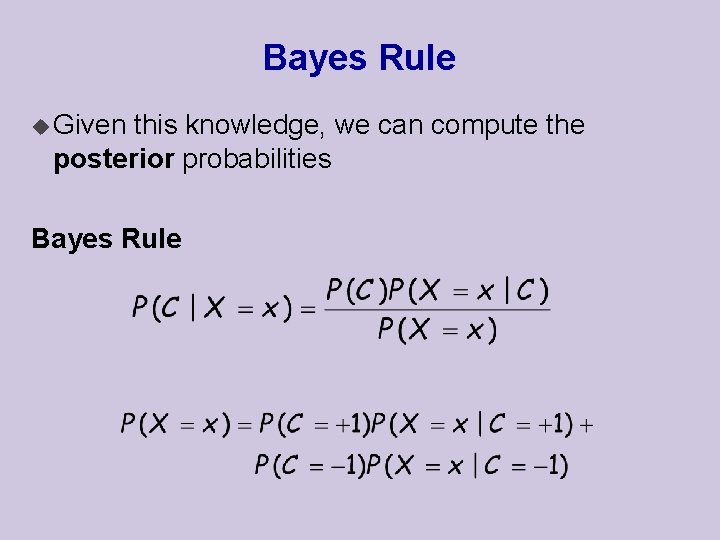

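Bayes Rule

- Given this knowledge, we can compute the posterior probabilities using Bayes rule:

$$
P(C = c \mid X = x) = \frac{P(C = c)\, p(X = x \mid C = c)}{\sum_{c' \in \{-1, +1\}} P(C = c')\, p(X = x \mid C = c')}
$$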

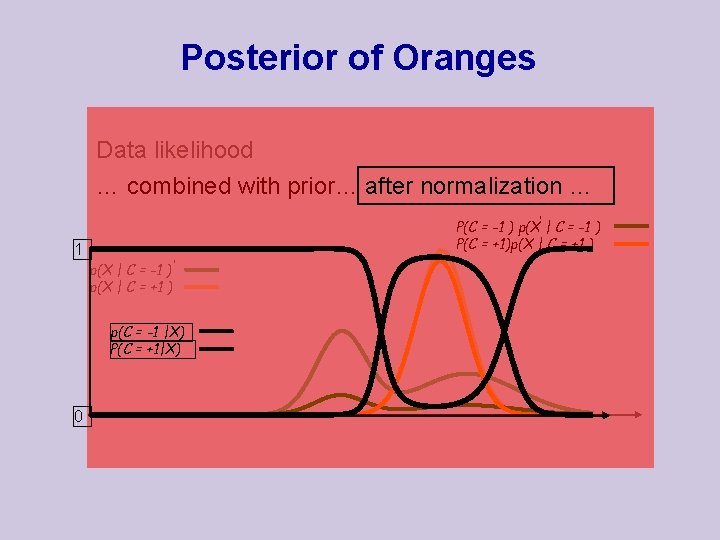

Posterior of Oranges

[Figure, three panels: the data likelihood, p(X | C = -1) and p(X | C = +1); combined with the prior, P(C = -1) p(X | C = -1) and P(C = +1) p(X | C = +1); after normalization, the posteriors P(C = -1 | X) and P(C = +1 | X) on a 0 to 1 scale]

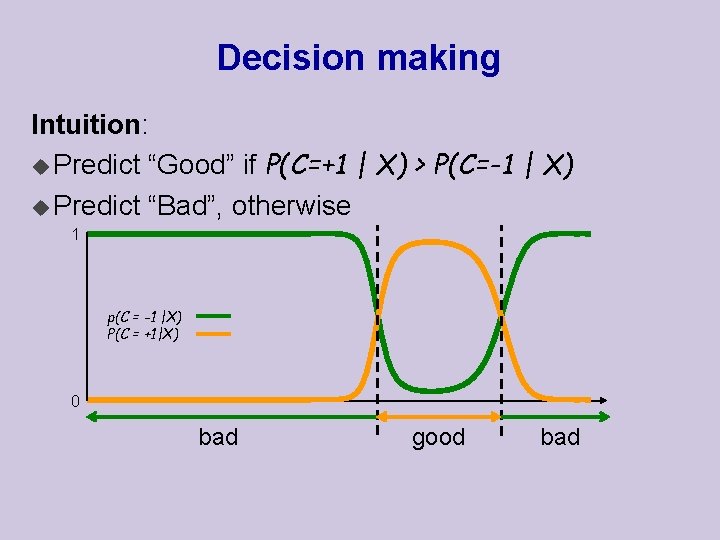

Decision making

Intuition:

- Predict “Good” if P(C = +1 | X) > P(C = -1 | X)
- Predict “Bad” otherwise

[Figure: the posteriors P(C = -1 | X) and P(C = +1 | X) on a 0 to 1 scale; the X axis is split into regions labeled bad, good, bad]

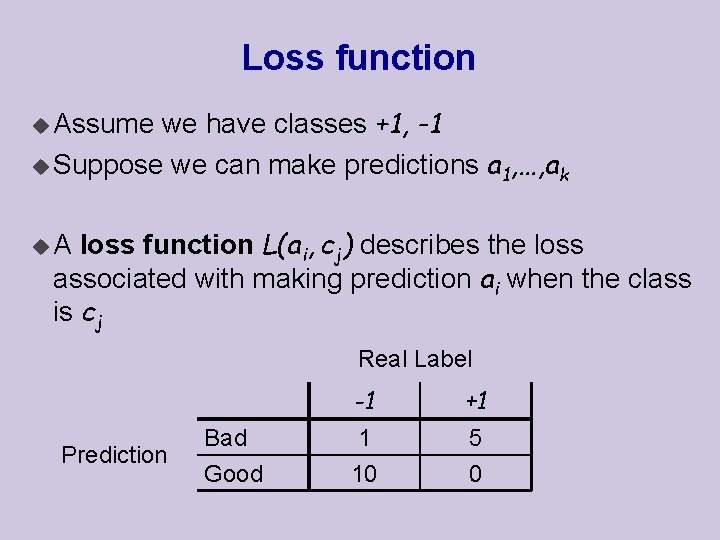
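The posterior computation and the "predict Good if it is more probable" rule can be sketched as follows. The Gaussian sensor model and every number in `PRIOR` and `SENSOR` are assumptions made up for illustration; the slides do not commit to a specific form for p(X | C).

```python
import math


def gaussian_pdf(x, mean, std):
    """Density of the normal distribution N(mean, std^2) at x."""
    z = (x - mean) / std
    return math.exp(-0.5 * z * z) / (std * math.sqrt(2.0 * math.pi))


# Hypothetical values, not taken from the slides.
PRIOR = {+1: 0.9, -1: 0.1}                   # P(C = c)
SENSOR = {+1: (5.0, 1.0), -1: (2.0, 1.5)}    # (mean, std) of p(X | C = c)


def posterior(x):
    """Return {c: P(C = c | X = x)} computed with Bayes rule."""
    joint = {c: PRIOR[c] * gaussian_pdf(x, *SENSOR[c]) for c in PRIOR}
    evidence = sum(joint.values())           # p(X = x), the normalizer
    return {c: joint[c] / evidence for c in joint}


def predict(x):
    """Predict 'Good' when the good class is strictly more probable."""
    post = posterior(x)
    return "Good" if post[+1] > post[-1] else "Bad"


print(predict(5.0))  # Good
print(predict(0.0))  # Bad
```

With these made-up numbers, a reading near the "good" mean is classified as Good even though bad oranges have a wider sensor distribution, because the prior favors good oranges.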

Loss function

- Assume we have classes +1, -1
- Suppose we can make predictions $a_1, \dots, a_k$
- A loss function $L(a_i, c_j)$ describes the loss associated with making prediction $a_i$ when the class is $c_j$

| Prediction | Real label -1 | Real label +1 |
|------------|---------------|---------------|
| Bad        | 1             | 5             |
| Good       | 10            | 0             |

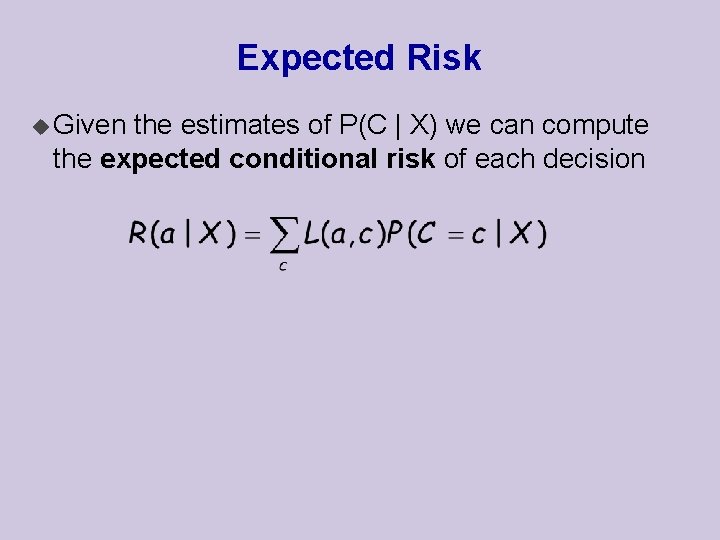

Expected Risk

- Given the estimates of P(C | X), we can compute the expected conditional risk of each decision:

$$
R(a \mid X) = \sum_{c} L(a, c)\, P(C = c \mid X)
$$

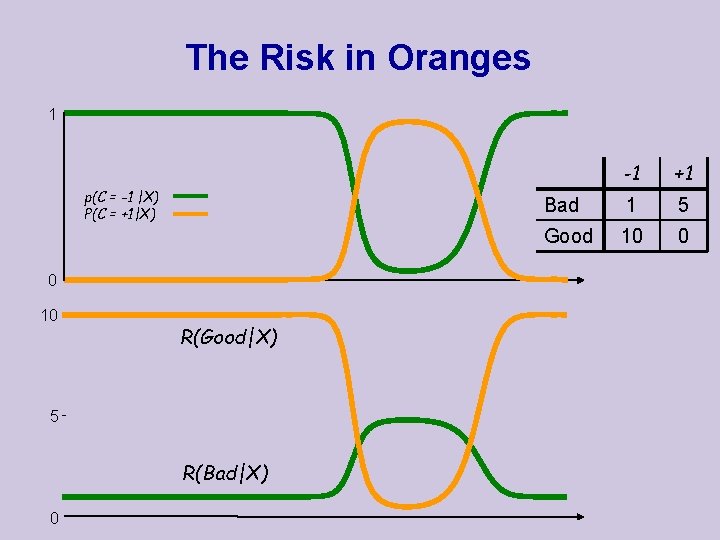

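The Risk in Oranges

[Figure: the posteriors P(C = -1 | X) and P(C = +1 | X) on a 0 to 1 scale, and the resulting risks R(Good | X) and R(Bad | X) on a 0 to 10 scale, computed from the loss table (Bad: 1 and 5; Good: 10 and 0, for real labels -1 and +1)]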

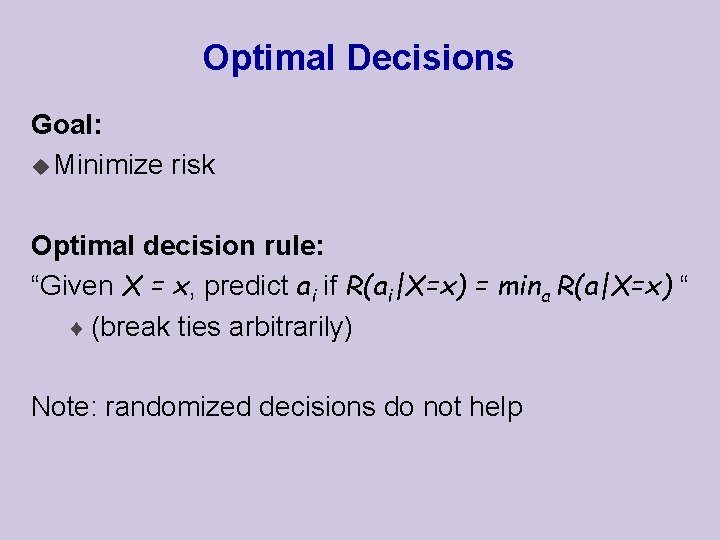

Optimal Decisions

Goal:

- Minimize risk

Optimal decision rule:

- “Given X = x, predict $a_i$ if $R(a_i \mid X = x) = \min_a R(a \mid X = x)$”
  - (break ties arbitrarily)

Note: randomized decisions do not help

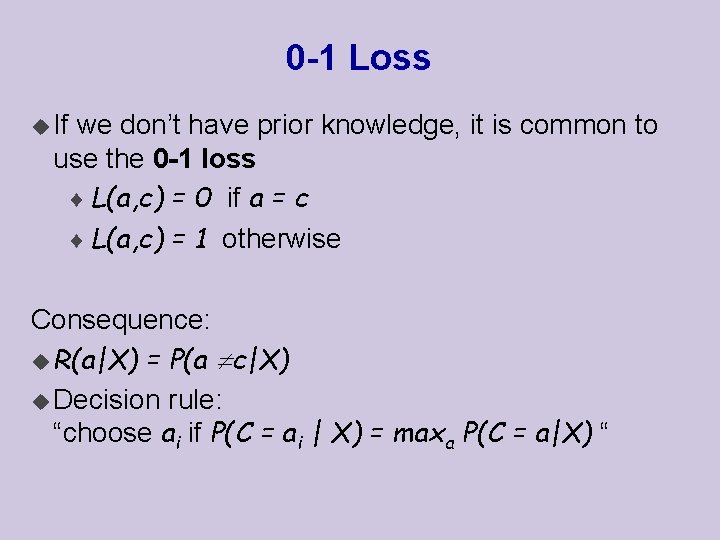
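A minimal sketch of risk-based decisions with the orange loss table (Bad: 1 and 5; Good: 10 and 0). The posterior values in the usage lines are hypothetical, and the helper names `conditional_risk` and `optimal_decision` are introduced here only for illustration.

```python
# Loss table from the slides, indexed as LOSS[prediction][real_label].
LOSS = {
    "Bad":  {-1: 1.0,  +1: 5.0},
    "Good": {-1: 10.0, +1: 0.0},
}


def conditional_risk(action, posterior):
    """R(action | X) = sum over classes c of L(action, c) * P(C = c | X)."""
    return sum(LOSS[action][c] * p for c, p in posterior.items())


def optimal_decision(posterior):
    """Pick the action with minimal conditional risk.

    Ties are broken by dictionary order, which is an arbitrary choice.
    """
    return min(LOSS, key=lambda action: conditional_risk(action, posterior))


# Hypothetical posterior: the orange is probably good, but not certainly.
posterior = {+1: 0.6, -1: 0.4}
print(conditional_risk("Good", posterior))  # 10 * 0.4, about 4.0
print(conditional_risk("Bad", posterior))   # 1 * 0.4 + 5 * 0.6, about 3.4
print(optimal_decision(posterior))          # Bad
```

Because letting a bad orange through costs 10, the rule predicts Bad here even though Good is the more probable class. With this table, Good only wins once P(C = +1 | X) exceeds 9/14.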

0-1 Loss

- If we don’t have prior knowledge, it is common to use the 0-1 loss
  - L(a, c) = 0 if a = c
  - L(a, c) = 1 otherwise

Consequence:

- $R(a \mid X) = P(C \neq a \mid X)$
- Decision rule: “choose $a_i$ if $P(C = a_i \mid X) = \max_a P(C = a \mid X)$”
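A short worked derivation of why the 0-1 loss reduces risk minimization to picking the most probable class. It assumes the expected conditional risk is the posterior-weighted loss, $R(a \mid X) = \sum_c L(a, c)\, P(C = c \mid X)$:

```latex
\begin{aligned}
R(a \mid X) &= \sum_{c} L(a, c)\, P(C = c \mid X) \\
            &= \sum_{c \neq a} 1 \cdot P(C = c \mid X) \;+\; 0 \cdot P(C = a \mid X) \\
            &= 1 - P(C = a \mid X)
\end{aligned}
```

Minimizing $1 - P(C = a \mid X)$ over $a$ is the same as maximizing $P(C = a \mid X)$.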

Bayesian Decisions: Summary

Decisions are based on two components:

- Conditional distribution P(C | X)
- Loss function L(A, C)

Pros:

- Specifies optimal actions in the presence of noisy signals
- Can deal with skewed loss functions

Cons:

- Requires P(C | X)

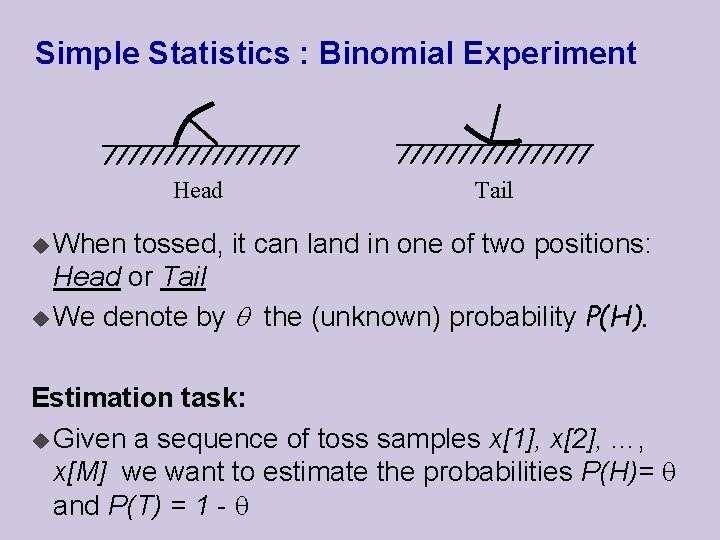

Simple Statistics: Binomial Experiment

- When tossed, a thumbtack can land in one of two positions: Head or Tail
- We denote by $\theta$ the (unknown) probability P(H)

Estimation task:

- Given a sequence of toss samples x[1], x[2], …, x[M], we want to estimate the probabilities $P(H) = \theta$ and $P(T) = 1 - \theta$

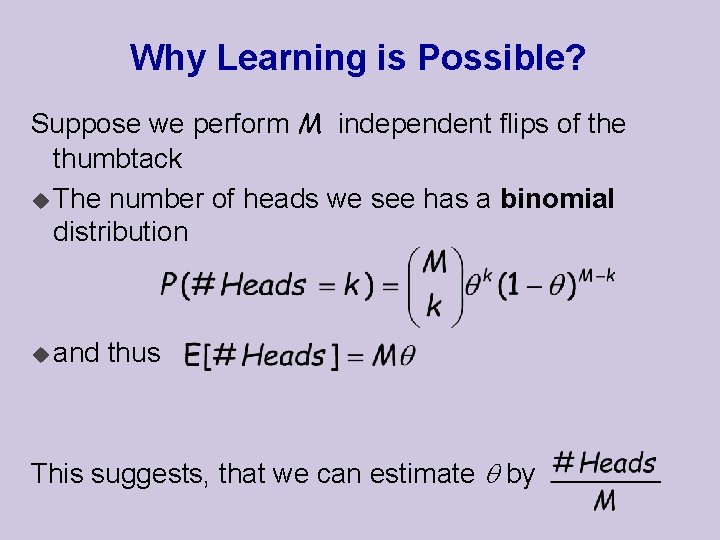

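Why Learning is Possible?

Suppose we perform M independent flips of the thumbtack

- The number of heads $N_H$ we see has a binomial distribution

$$
P(N_H = k) = \binom{M}{k}\, \theta^k (1 - \theta)^{M - k}
$$

- and thus

$$
E[N_H] = M\theta
$$

This suggests that we can estimate $\theta$ by

$$
\hat{\theta} = \frac{N_H}{M}
$$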

Maximum Likelihood Estimation

MLE Principle: learn parameters that maximize the likelihood function

- This is one of the most commonly used estimators in statistics
- Intuitively appealing
- Well-studied properties

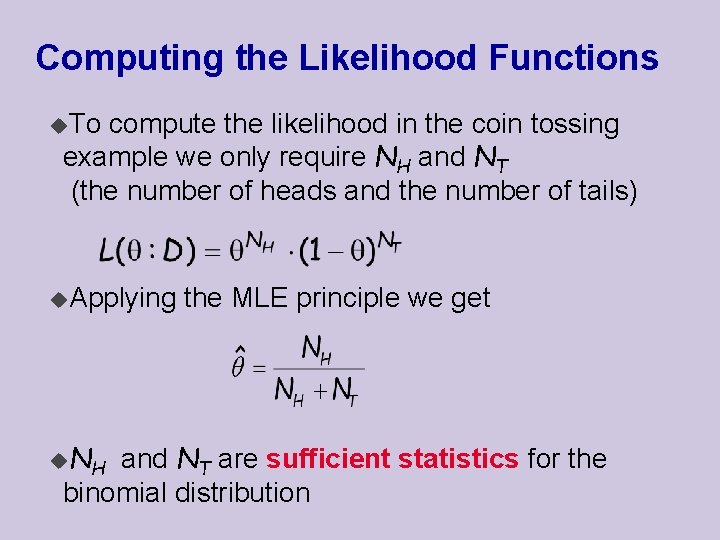

Computing the Likelihood Functions

- To compute the likelihood in the coin tossing example we only require $N_H$ and $N_T$ (the number of heads and the number of tails):

$$
L(\theta \mid D) = \theta^{N_H} (1 - \theta)^{N_T}
$$

- Applying the MLE principle we get

$$
\hat{\theta} = \frac{N_H}{N_H + N_T}
$$

- $N_H$ and $N_T$ are sufficient statistics for the binomial distribution

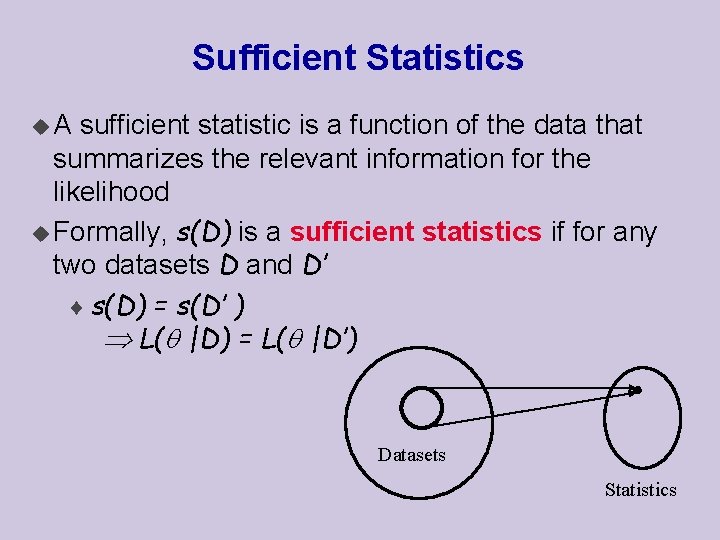
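A minimal sketch of the coin-toss MLE. It reduces a toss sequence to the counts of heads and tails, evaluates the likelihood $\theta^{N_H}(1-\theta)^{N_T}$, and returns the closed-form maximizer $N_H / (N_H + N_T)$. The function names and the sample sequence are illustrative assumptions.

```python
def sufficient_statistics(tosses):
    """Reduce a sequence of 'H'/'T' outcomes to the counts (N_H, N_T)."""
    n_heads = sum(1 for t in tosses if t == "H")
    return n_heads, len(tosses) - n_heads


def likelihood(theta, n_heads, n_tails):
    """L(theta | D) = theta^N_H * (1 - theta)^N_T."""
    return theta ** n_heads * (1.0 - theta) ** n_tails


def mle(n_heads, n_tails):
    """Closed-form maximizer of the likelihood above."""
    return n_heads / (n_heads + n_tails)


n_h, n_t = sufficient_statistics("HTHHT")
print(n_h, n_t)       # 3 2
print(mle(n_h, n_t))  # 0.6
```

A coarse grid search over $\theta$ gives the same maximizer, which is a quick sanity check on the closed form.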

Sufficient Statistics

- A sufficient statistic is a function of the data that summarizes the relevant information for the likelihood
- Formally, s(D) is a sufficient statistic if for any two datasets D and D’
  - $s(D) = s(D') \Rightarrow L(\theta \mid D) = L(\theta \mid D')$

[Figure: a mapping from datasets to statistics]

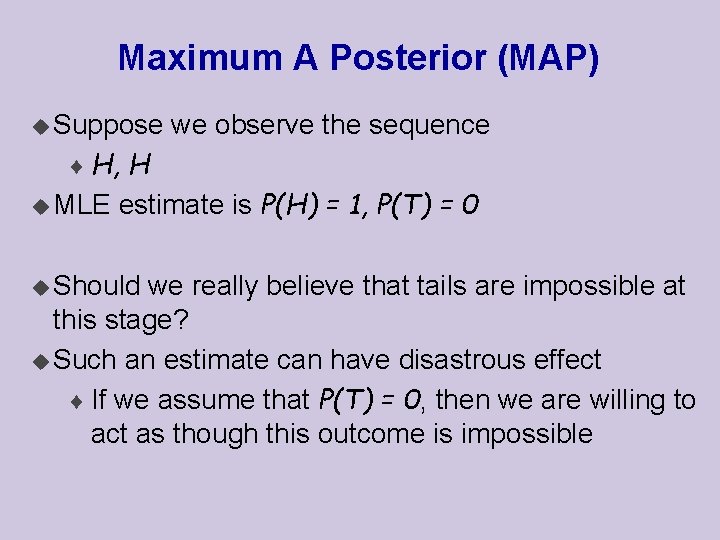

Maximum A Posteriori (MAP)

- Suppose we observe the sequence
  - H, H
- The MLE estimate is P(H) = 1, P(T) = 0
- Should we really believe that tails are impossible at this stage?
- Such an estimate can have a disastrous effect
  - If we assume that P(T) = 0, then we are willing to act as though this outcome is impossible

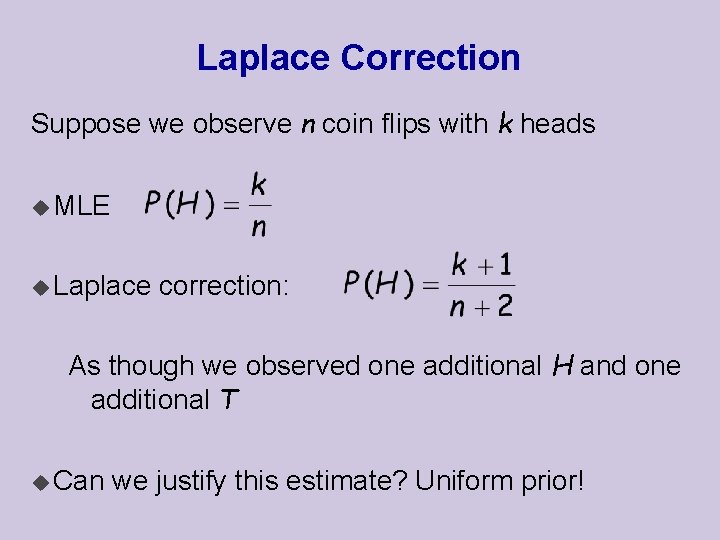

Laplace Correction

Suppose we observe n coin flips with k heads

- MLE: $P(H) = \frac{k}{n}$
- Laplace correction: $P(H) = \frac{k + 1}{n + 2}$
  - As though we observed one additional H and one additional T
- Can we justify this estimate? Uniform prior!

Bayesian Reasoning

- In Bayesian reasoning we represent our uncertainty about the unknown parameter $\theta$ by a probability distribution
- This probability distribution can be viewed as a subjective probability
  - This is a personal judgment of uncertainty

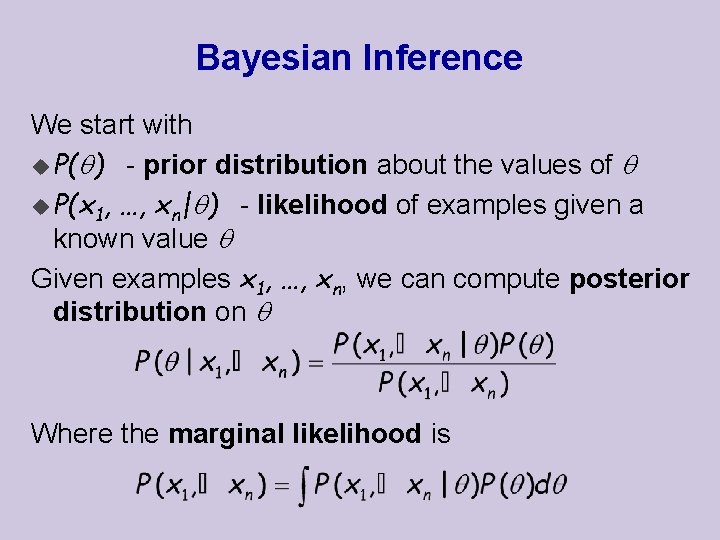

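Bayesian Inference

We start with

- $P(\theta)$: prior distribution about the values of $\theta$
- $P(x_1, \dots, x_n \mid \theta)$: likelihood of examples given a known value $\theta$

Given examples $x_1, \dots, x_n$, we can compute the posterior distribution on $\theta$

$$
P(\theta \mid x_1, \dots, x_n) = \frac{P(x_1, \dots, x_n \mid \theta)\, P(\theta)}{P(x_1, \dots, x_n)}
$$

where the marginal likelihood is

$$
P(x_1, \dots, x_n) = \int P(x_1, \dots, x_n \mid \theta)\, P(\theta)\, d\theta
$$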

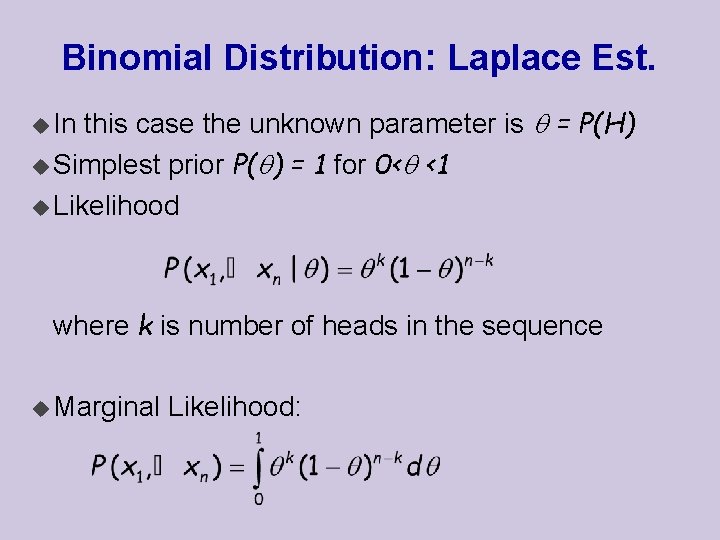

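Binomial Distribution: Laplace Est.

- In this case the unknown parameter is $\theta = P(H)$
- Simplest prior: $P(\theta) = 1$ for $0 < \theta < 1$
- Likelihood:

$$
P(x_1, \dots, x_n \mid \theta) = \theta^k (1 - \theta)^{n - k}
$$

  where k is the number of heads in the sequence
- Marginal likelihood:

$$
P(x_1, \dots, x_n) = \int_0^1 \theta^k (1 - \theta)^{n - k}\, d\theta
$$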

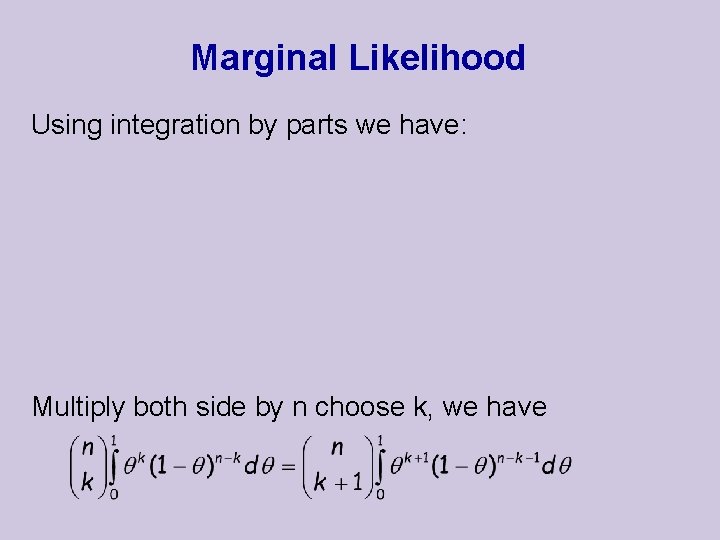

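Marginal Likelihood

Using integration by parts (for k < n, where the boundary term vanishes) we have:

$$
\int_0^1 \theta^k (1 - \theta)^{n - k}\, d\theta
= \left[ \frac{\theta^{k+1}}{k + 1} (1 - \theta)^{n - k} \right]_0^1 + \frac{n - k}{k + 1} \int_0^1 \theta^{k+1} (1 - \theta)^{n - k - 1}\, d\theta
= \frac{n - k}{k + 1} \int_0^1 \theta^{k+1} (1 - \theta)^{n - k - 1}\, d\theta
$$

Multiplying both sides by $\binom{n}{k}$, and using $\binom{n}{k} \frac{n - k}{k + 1} = \binom{n}{k + 1}$, we have

$$
\binom{n}{k} \int_0^1 \theta^k (1 - \theta)^{n - k}\, d\theta = \binom{n}{k + 1} \int_0^1 \theta^{k+1} (1 - \theta)^{n - k - 1}\, d\theta
$$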

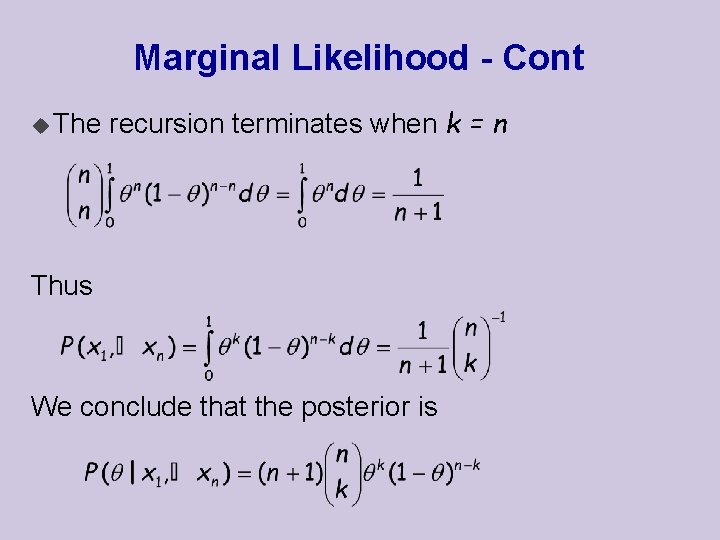

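Marginal Likelihood - Cont

- The recursion terminates when k = n:

$$
\binom{n}{n} \int_0^1 \theta^n\, d\theta = \frac{1}{n + 1}
$$

Thus

$$
P(x_1, \dots, x_n) = \int_0^1 \theta^k (1 - \theta)^{n - k}\, d\theta = \frac{1}{(n + 1) \binom{n}{k}} = \frac{k!\,(n - k)!}{(n + 1)!}
$$

We conclude that the posterior is

$$
P(\theta \mid x_1, \dots, x_n) = \frac{(n + 1)!}{k!\,(n - k)!}\, \theta^k (1 - \theta)^{n - k}
$$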

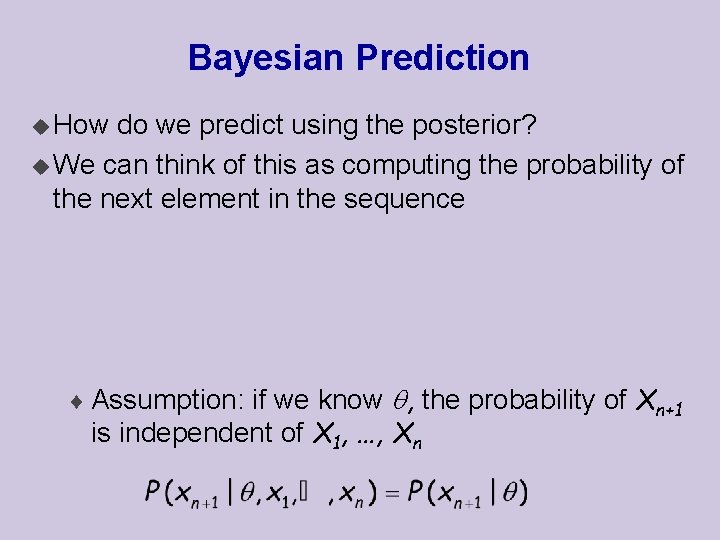
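The closed form for the marginal likelihood under a uniform prior, $\int_0^1 \theta^k (1-\theta)^{n-k}\, d\theta = \frac{1}{(n+1)\binom{n}{k}}$, can be checked exactly with rational arithmetic. This sketch expands $(1-\theta)^{n-k}$ with the binomial theorem and integrates term by term. That route is a verification choice made here, not the integration-by-parts argument of the slides, and the function names are illustrative.

```python
from fractions import Fraction
from math import comb


def beta_integral(a, b):
    """Exact integral of theta^a * (1 - theta)^b over [0, 1].

    Expands (1 - theta)^b = sum_j C(b, j) * (-1)^j * theta^j and uses
    the fact that the integral of theta^(a + j) over [0, 1] is 1 / (a + j + 1).
    """
    return sum(
        Fraction((-1) ** j * comb(b, j), a + j + 1) for j in range(b + 1)
    )


def marginal_likelihood(n, k):
    """P(x_1..x_n) for a sequence with k heads, under the uniform prior."""
    return beta_integral(k, n - k)


print(marginal_likelihood(5, 3))          # 1/60
print(Fraction(1, (5 + 1) * comb(5, 3)))  # 1/60
```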

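Bayesian Prediction

- How do we predict using the posterior?
- We can think of this as computing the probability of the next element in the sequence
  - Assumption: if we know $\theta$, the probability of $X_{n+1}$ is independent of $X_1, \dots, X_n$

$$
P(x_{n+1} \mid x_1, \dots, x_n) = \int P(x_{n+1} \mid \theta)\, P(\theta \mid x_1, \dots, x_n)\, d\theta
$$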

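Bayesian Prediction

- Thus, we conclude that

$$
P(x_{n+1} = H \mid x_1, \dots, x_n) = \int_0^1 \theta\, P(\theta \mid x_1, \dots, x_n)\, d\theta = \frac{(n + 1)!}{k!\,(n - k)!} \cdot \frac{(k + 1)!\,(n - k)!}{(n + 2)!} = \frac{k + 1}{n + 2}
$$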
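As a numerical sanity check, the predictive probability of heads can be approximated by integrating $\theta$ against the posterior under a uniform prior and comparing with the Laplace estimate $(k+1)/(n+2)$. The midpoint rule and the step count are arbitrary choices for this sketch, and the function names are illustrative.

```python
from math import comb


def posterior_density(theta, n, k):
    """Posterior of theta after k heads in n flips, uniform prior on (0, 1)."""
    return (n + 1) * comb(n, k) * theta ** k * (1.0 - theta) ** (n - k)


def predict_heads(n, k, steps=10_000):
    """Midpoint-rule approximation of the integral of theta * posterior."""
    h = 1.0 / steps
    total = 0.0
    for i in range(steps):
        theta = (i + 0.5) * h  # midpoint of the i-th sub-interval
        total += theta * posterior_density(theta, n, k)
    return total * h


# After observing H, H the MLE says P(H) = 1; the Bayesian prediction is 3/4.
print(round(predict_heads(2, 2), 6))  # 0.75
```

The observed H, H sequence no longer makes tails impossible: the prediction matches "one additional H and one additional T".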

Naïve Bayes

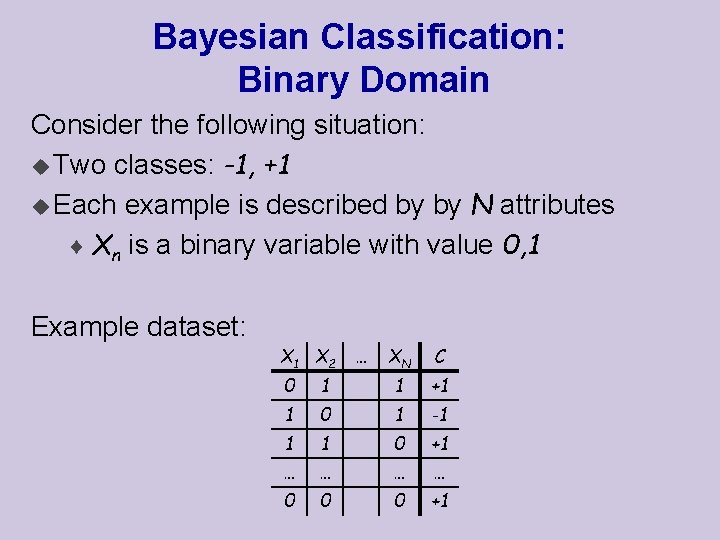

Bayesian Classification: Binary Domain

Consider the following situation:

- Two classes: -1, +1
- Each example is described by N attributes
  - $X_n$ is a binary variable with value 0 or 1

Example dataset:

| X1 | X2 | … | XN | C  |
|----|----|---|----|----|
| 0  | 1  | … | 1  | +1 |
| 1  | 0  | … | 1  | -1 |
| 1  | 1  | … | 0  | +1 |
| …  | …  | … | …  | …  |
| 0  | 0  | … | 0  | +1 |

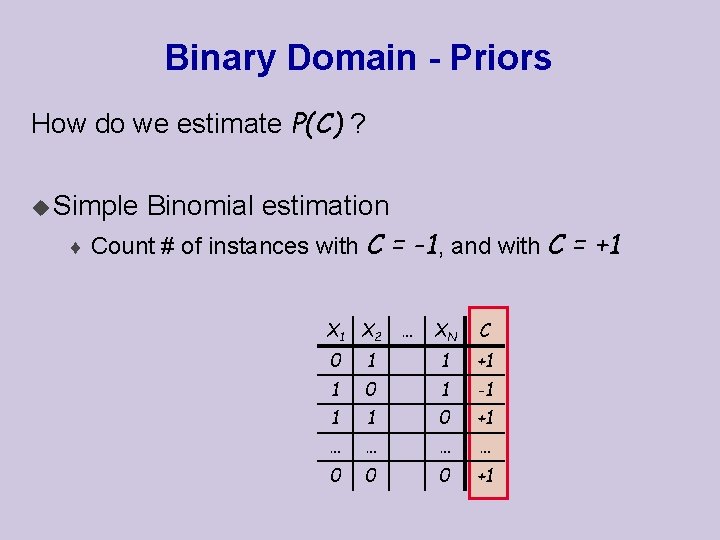

Binary Domain - Priors

How do we estimate P(C)?

- Simple binomial estimation
  - Count the number of instances with C = -1, and with C = +1

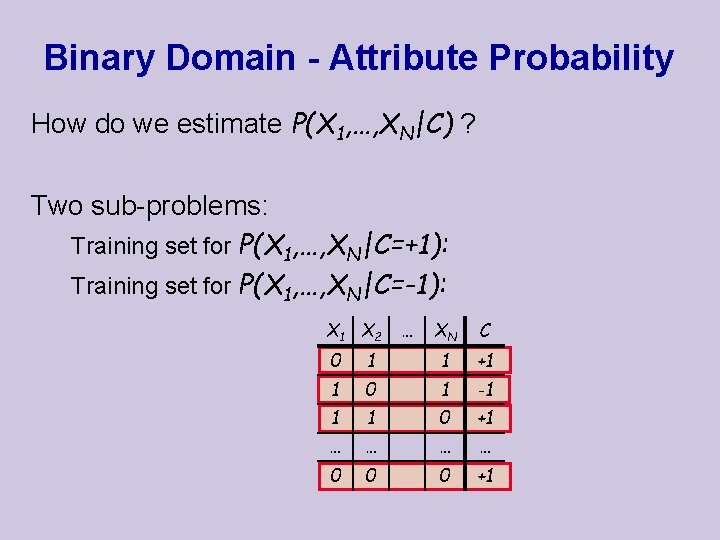

Binary Domain - Attribute Probability

How do we estimate $P(X_1, \dots, X_N \mid C)$?

Two sub-problems:

- Training set for $P(X_1, \dots, X_N \mid C = +1)$: the instances with C = +1
- Training set for $P(X_1, \dots, X_N \mid C = -1)$: the instances with C = -1

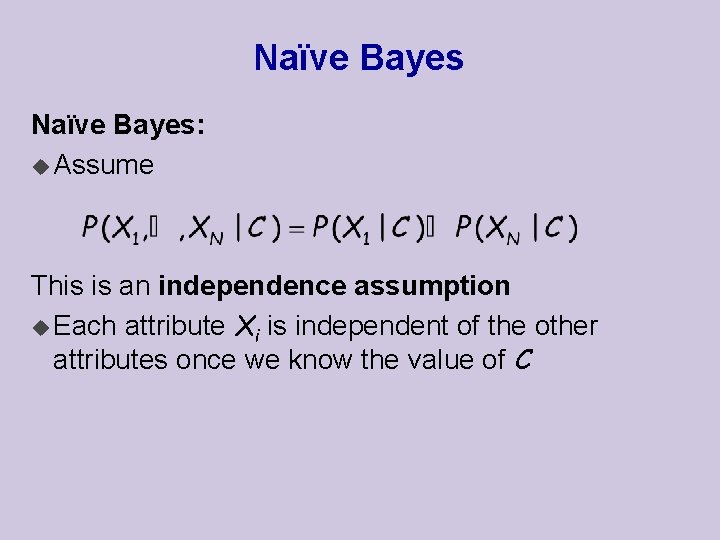

Naïve Bayes:

- Assume

$$
P(X_1, \dots, X_N \mid C) = \prod_{i=1}^{N} P(X_i \mid C)
$$

- This is an independence assumption
  - Each attribute $X_i$ is independent of the other attributes once we know the value of C

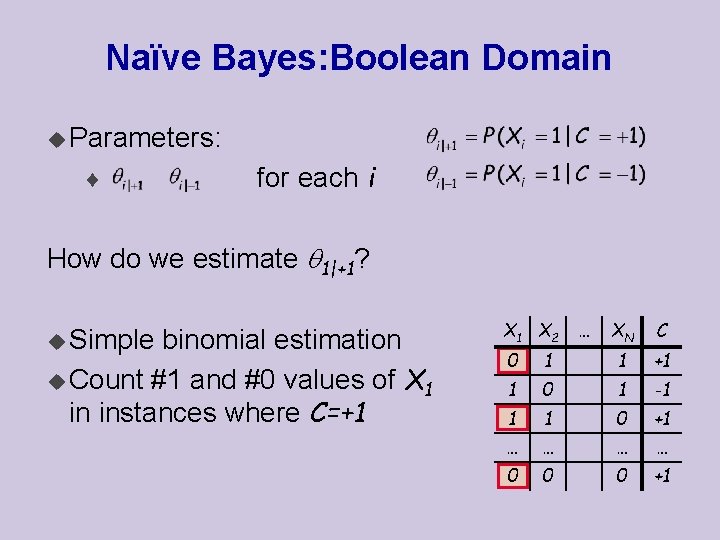

Naïve Bayes: Boolean Domain

- Parameters:
  - $\theta_{i \mid +1} = P(X_i = 1 \mid C = +1)$ and $\theta_{i \mid -1} = P(X_i = 1 \mid C = -1)$ for each i

How do we estimate $\theta_{1 \mid +1}$?

- Simple binomial estimation
- Count the #1 and #0 values of $X_1$ in instances where C = +1
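A minimal sketch of parameter estimation for naïve Bayes over binary attributes, by counting within each class. The toy dataset uses the four rows visible in the example table, treated as having three attributes; this is a simplification for illustration. The `pseudo` argument is an addition of this sketch: 0 gives the plain counts, 1 gives the Laplace correction.

```python
def train_naive_bayes(rows, labels, pseudo=0.0):
    """Estimate P(C = c) and theta[c][i] = P(X_i = 1 | C = c) by counting.

    pseudo=0 is the plain maximum likelihood estimate; pseudo=1 adds one
    imaginary 1 and one imaginary 0 per attribute (Laplace correction).
    """
    n_attrs = len(rows[0])
    prior, theta = {}, {}
    for c in sorted(set(labels)):
        members = [r for r, y in zip(rows, labels) if y == c]
        prior[c] = len(members) / len(rows)
        theta[c] = [
            (sum(r[i] for r in members) + pseudo) / (len(members) + 2 * pseudo)
            for i in range(n_attrs)
        ]
    return prior, theta


# Toy data: (X1, X2, X3) rows with class labels.
rows = [(0, 1, 1), (1, 0, 1), (1, 1, 0), (0, 0, 0)]
labels = [+1, -1, +1, +1]

prior, theta = train_naive_bayes(rows, labels)
print(prior[+1])  # 0.75
print(theta[-1])  # [1.0, 0.0, 1.0]
```

With a single C = -1 instance, the plain estimate produces probabilities of exactly 0 and 1, which is the situation the Laplace correction is meant to soften.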

Interpretation of Naïve Bayes

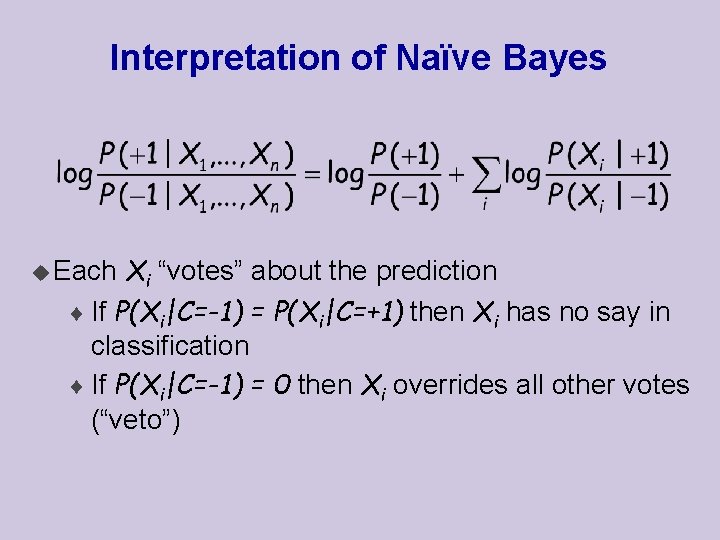

Interpretation of Naïve Bayes

- Each $X_i$ “votes” about the prediction
  - If $P(X_i \mid C = -1) = P(X_i \mid C = +1)$, then $X_i$ has no say in the classification
  - If $P(X_i \mid C = -1) = 0$, then $X_i$ overrides all other votes (“veto”)

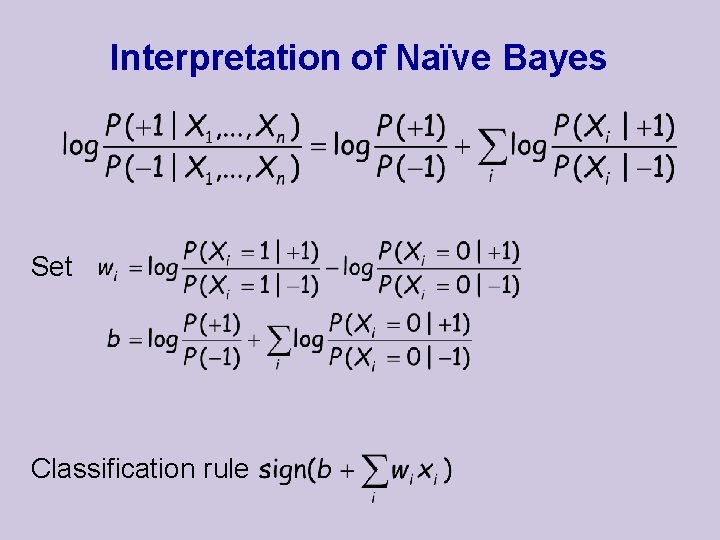

Interpretation of Naïve Bayes

Set (see image 29)

Classification rule (see image 29)

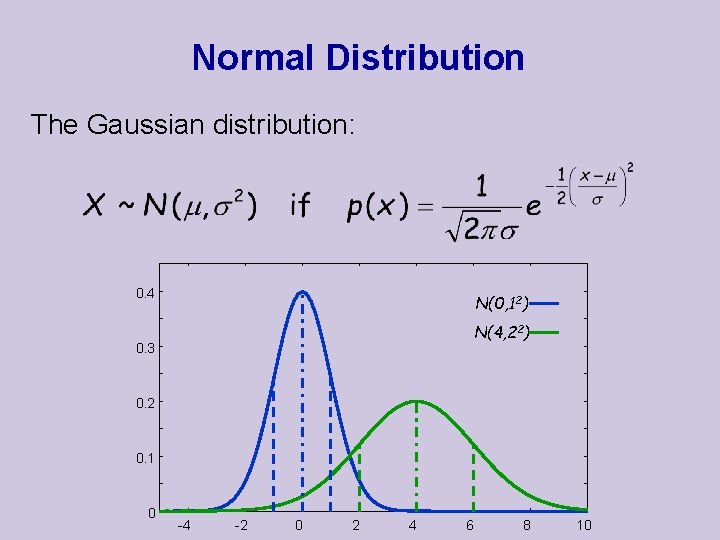
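One common way to make the "voting" picture concrete is to classify by the sign of the log-odds: a bias term from the prior plus one log-ratio vote per attribute. This sketch assumes that form and may differ in notation from the slide's own definitions. All parameter values are hypothetical and kept strictly between 0 and 1, so the "veto" case (a zero probability) does not arise.

```python
import math


def attribute_prob(x_i, theta_i):
    """P(X_i = x_i | C) for a binary attribute with P(X_i = 1 | C) = theta_i."""
    return theta_i if x_i == 1 else 1.0 - theta_i


def votes(x, theta):
    """Per-attribute votes log P(x_i | C = +1) / P(x_i | C = -1)."""
    return [
        math.log(attribute_prob(x_i, theta[+1][i]) / attribute_prob(x_i, theta[-1][i]))
        for i, x_i in enumerate(x)
    ]


def classify(x, prior, theta):
    """Return (label, score); label is +1 when the total log-odds is positive."""
    score = math.log(prior[+1] / prior[-1]) + sum(votes(x, theta))
    return (+1 if score > 0 else -1), score


# Hypothetical parameters, not estimated from any real data.
prior = {+1: 0.5, -1: 0.5}
theta = {+1: [0.8, 0.5, 0.3], -1: [0.2, 0.5, 0.6]}

label, score = classify((1, 0, 1), prior, theta)
print(label)  # 1
```

Here the second attribute has the same distribution in both classes, so its vote is exactly 0 and it has no say in the outcome.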

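Normal Distribution

The Gaussian distribution:

$$
p(x) = \frac{1}{\sqrt{2\pi}\,\sigma} \exp\left( -\frac{(x - \mu)^2}{2\sigma^2} \right), \qquad X \sim N(\mu, \sigma^2)
$$

[Figure: the densities of $N(0, 1^2)$ and $N(4, 2^2)$, plotted for x from -4 to 10 with density values from 0 to 0.4]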

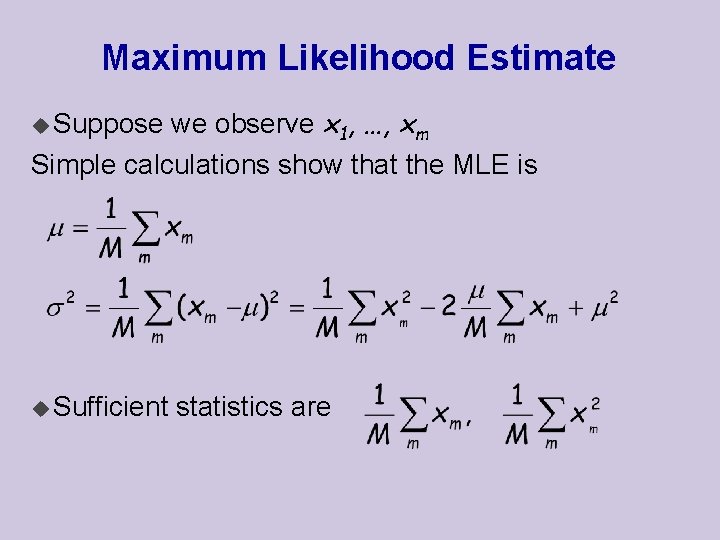

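Maximum Likelihood Estimate

- Suppose we observe $x_1, \dots, x_m$
- Simple calculations show that the MLE is

$$
\hat{\mu} = \frac{1}{m} \sum_{j=1}^{m} x_j, \qquad \hat{\sigma}^2 = \frac{1}{m} \sum_{j=1}^{m} (x_j - \hat{\mu})^2
$$

- Sufficient statistics are $\sum_j x_j$ and $\sum_j x_j^2$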

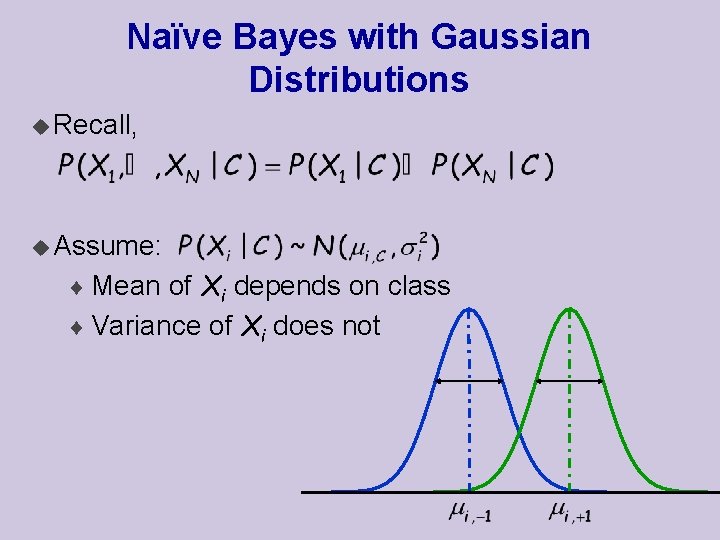
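A minimal sketch of the Gaussian MLE computed only from the two sufficient statistics, the sum of the observations and the sum of their squares. The function name and sample values are made up for illustration. Note that the MLE variance divides by m, not m - 1.

```python
def gaussian_mle(xs):
    """Return (mean, variance) maximizing the Gaussian likelihood of xs."""
    m = len(xs)
    s1 = sum(xs)                 # sufficient statistic: sum of x_j
    s2 = sum(x * x for x in xs)  # sufficient statistic: sum of x_j^2
    mean = s1 / m
    # E[x^2] - mean^2; fine for a sketch, but it can lose precision when
    # the mean is large relative to the spread.
    variance = s2 / m - mean ** 2
    return mean, variance


mean, variance = gaussian_mle([2.0, 4.0, 4.0, 4.0, 5.0, 5.0, 7.0, 9.0])
print(mean, variance)  # 5.0 4.0
```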

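Naïve Bayes with Gaussian Distributions

- Recall the naïve Bayes model
- Assume each $P(X_i \mid C)$ is Gaussian, $X_i \mid C = c \sim N(\mu_{i,c}, \sigma_i^2)$:
  - The mean of $X_i$ depends on the class
  - The variance of $X_i$ does not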

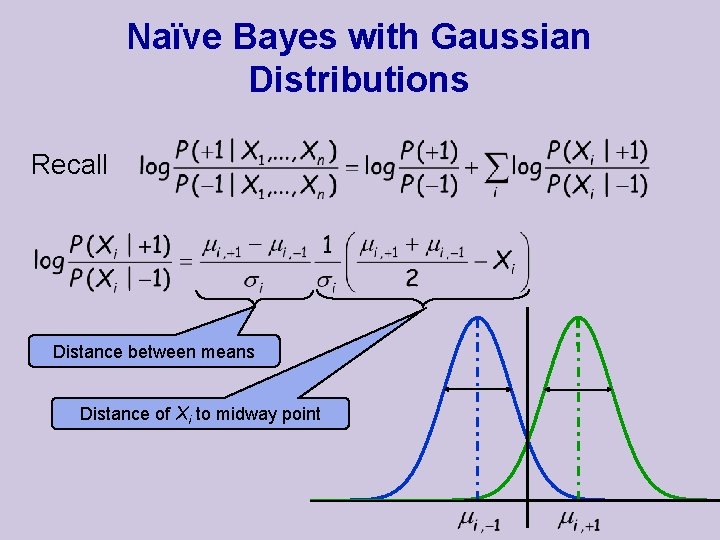

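Naïve Bayes with Gaussian Distributions

Recall that each attribute contributes the log-ratio of its class-conditional densities. With class-dependent means $\mu_{i,+1}$, $\mu_{i,-1}$ and a shared variance $\sigma_i^2$:

$$
\log \frac{p(X_i \mid C = +1)}{p(X_i \mid C = -1)} = \underbrace{\frac{\mu_{i,+1} - \mu_{i,-1}}{\sigma_i}}_{\text{distance between means}} \cdot \underbrace{\frac{X_i - \frac{\mu_{i,+1} + \mu_{i,-1}}{2}}{\sigma_i}}_{\text{distance of } X_i \text{ to midway point}}
$$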

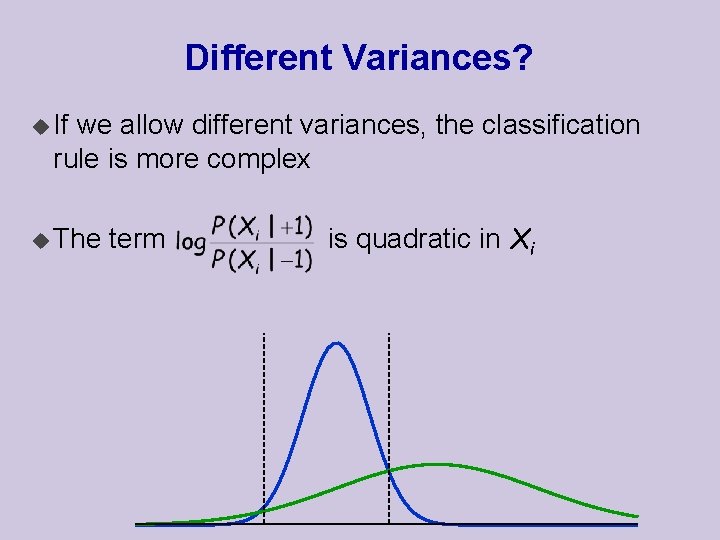
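For a Gaussian attribute whose variance is shared across the two classes, the log-ratio of the class-conditional densities is linear in the attribute value: the scaled distance between the means times the scaled distance of the value to the midway point. The sketch below computes the vote in that form and compares it with the direct difference of log-densities. The means and standard deviation used are hypothetical.

```python
import math


def gaussian_log_pdf(x, mean, std):
    """Log-density of N(mean, std^2) at x."""
    return -0.5 * ((x - mean) / std) ** 2 - math.log(std * math.sqrt(2.0 * math.pi))


def gaussian_vote(x, mean_pos, mean_neg, std):
    """log p(x | C = +1) / p(x | C = -1) when both classes share std."""
    distance_between_means = (mean_pos - mean_neg) / std
    distance_to_midpoint = (x - (mean_pos + mean_neg) / 2.0) / std
    return distance_between_means * distance_to_midpoint


# Hypothetical parameters: class means 5 and 2, shared standard deviation 1.5.
x = 3.0
direct = gaussian_log_pdf(x, 5.0, 1.5) - gaussian_log_pdf(x, 2.0, 1.5)
print(abs(gaussian_vote(x, 5.0, 2.0, 1.5) - direct) < 1e-9)  # True
```

The vote is zero exactly at the midway point between the two means and changes sign on either side of it.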

Different Variances?

- If we allow different variances, the classification rule is more complex
- The term $\log \frac{p(X_i \mid C = +1)}{p(X_i \mid C = -1)}$ is quadratic in $X_i$
