Hierarchical Load Balancing for Charm++ Applications on Large Supercomputers

Gengbin Zheng, Esteban Meneses, Abhinav Bhatele and Laxmikant V. Kale
Parallel Programming Lab, University of Illinois at Urbana-Champaign

Motivations

- Load balancing is key to scalability on very large supercomputers
- Load balancing becomes challenging
  - Increasing machine and problem size leads to more complex and costly load balancing algorithms
  - A considerable amount of resources is needed
  - The load balancing itself must scale

11/26/2020 P2S2-2010

Periodic Load Balancing

- Perform load balancing periodically
  - E.g. stop-and-go scheme
  - Persistent tasks
  - Pay load balancing cost only when it is needed
  - Tasks and data migrate as needed

Charm++

- Parallel C++
  - Objects with methods that can be called remotely
  - Migratable objects
  - Dynamic load balancing
  - Fault tolerance
  - An MPI implementation on Charm++

Principle of Persistence

- Once an application is expressed in terms of interacting objects, object communication patterns and computational loads tend to persist over time
- This holds in spite of dynamic behavior
  - Abrupt and large, but infrequent changes (e.g. AMR)
  - Slow and small changes (e.g. particle migration)
- Parallel analog of the principle of locality
  - A heuristic that holds for most CSE applications

Measurement Based Load Balancing

- Based on the principle of persistence
- Runtime instrumentation (LB Database)
  - Communication volume and computation time
- Measurement-based load balancers
  - Use the database periodically to make new decisions
  - Many alternative strategies can use the database
    - Centralized vs distributed
    - Greedy vs refinement
    - Taking communication into account
    - Taking dependencies into account (more complex)
    - Topology-aware
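
The instrumentation idea can be sketched in a few lines of Python. This is a toy model and not the Charm++ LB database: the class `LoadDatabase` and its method names are hypothetical, chosen only for illustration. It records per-object computation time and per-pair communication volume during one period and, relying on the principle of persistence, uses the last measurement as the prediction for the next period.

```python
from collections import defaultdict


class LoadDatabase:
    """Toy stand-in for a runtime load database (hypothetical API)."""

    def __init__(self):
        self.compute_time = defaultdict(float)  # object id -> seconds measured
        self.comm_bytes = defaultdict(int)      # (sender, receiver) -> bytes sent

    def record_compute(self, obj, seconds):
        self.compute_time[obj] += seconds

    def record_message(self, sender, receiver, nbytes):
        self.comm_bytes[(sender, receiver)] += nbytes

    def predicted_loads(self):
        # Principle of persistence: assume the next period looks like the last.
        return dict(self.compute_time)

    def reset(self):
        # Start a fresh measurement period after each load balancing step.
        self.compute_time.clear()
        self.comm_bytes.clear()


db = LoadDatabase()
db.record_compute("a", 0.5)
db.record_compute("a", 0.25)
db.record_compute("b", 1.0)
db.record_message("a", "b", 4096)
loads = db.predicted_loads()
```

A strategy would read `predicted_loads()` (and, if it is communication-aware, `comm_bytes`) at each load balancing step and then call `reset()`.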

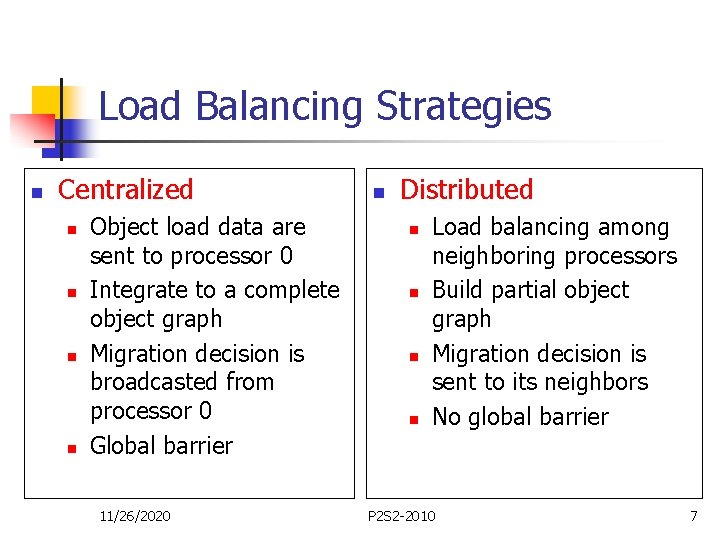

Load Balancing Strategies

- Centralized
  - Object load data are sent to processor 0
  - The data are integrated into a complete object graph
  - Migration decision is broadcast from processor 0
  - Global barrier
- Distributed
  - Load balancing among neighboring processors
  - Build partial object graph
  - Migration decision is sent to its neighbors
  - No global barrier
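
As an illustration of what the central processor might compute once it holds all object loads, here is a minimal greedy sketch in Python. It is an assumption-laden example, not the Charm++ implementation: the function name `greedy_assign` is hypothetical, communication is ignored, and objects are simply placed heaviest-first on the currently least-loaded processor.

```python
import heapq


def greedy_assign(object_loads, num_procs):
    """Assign objects heaviest-first to the least-loaded processor.

    object_loads: dict mapping object id -> measured load.
    Returns (assignment dict object -> processor, list of processor loads).
    """
    heap = [(0.0, p) for p in range(num_procs)]  # (current load, processor)
    heapq.heapify(heap)
    assignment = {}
    # Sort by decreasing load; break ties by object id for determinism.
    for obj, load in sorted(object_loads.items(), key=lambda kv: (-kv[1], kv[0])):
        proc_load, proc = heapq.heappop(heap)
        assignment[obj] = proc
        heapq.heappush(heap, (proc_load + load, proc))
    proc_loads = [0.0] * num_procs
    for obj, proc in assignment.items():
        proc_loads[proc] += object_loads[obj]
    return assignment, proc_loads


loads = {"a": 5.0, "b": 4.0, "c": 3.0, "d": 2.0, "e": 2.0}
assignment, proc_loads = greedy_assign(loads, 2)
```

The whole computation runs on one processor and needs every object's load in its memory, which is why this style of strategy is sensitive to the number of objects and processors.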

Limitations of Centralized Strategies

- Now consider an application with 1M objects on 64K processors
- Limitations (inherently not scalable)
  - Central node: memory/communication bottleneck
  - Decision-making algorithms tend to be very slow

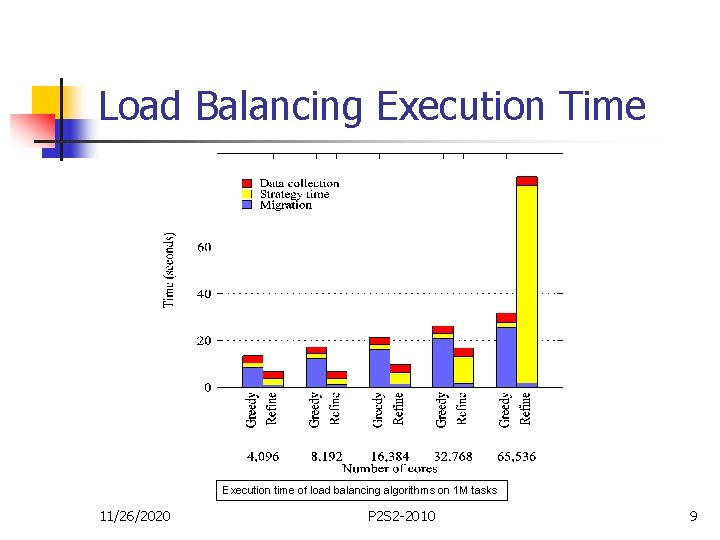

Load Balancing Execution Time

Execution time of load balancing algorithms on 1M tasks

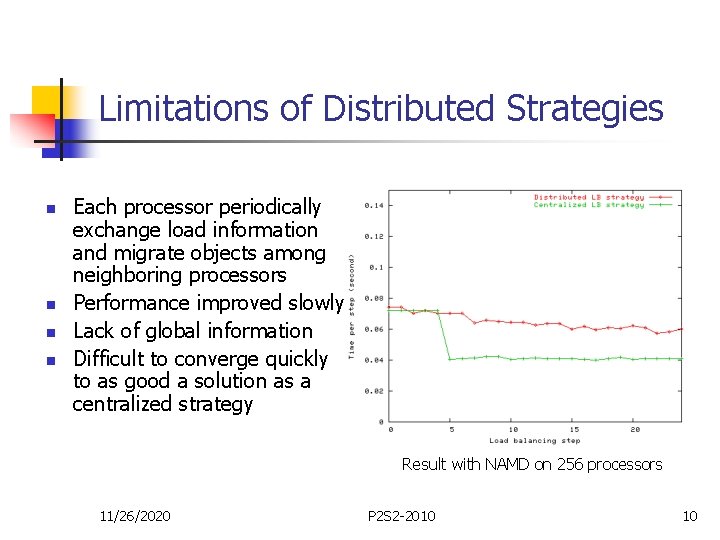

Limitations of Distributed Strategies

- Each processor periodically exchanges load information and migrates objects among neighboring processors
- Performance improves slowly
  - Lack of global information
  - Difficult to converge quickly to as good a solution as a centralized strategy

Result with NAMD on 256 processors
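
The slow convergence can be illustrated with a toy neighbor-exchange model in Python. The slides do not name a specific distributed algorithm, so this sketch assumes a simple first-order diffusion on a ring of processors, with hypothetical function names and an arbitrary exchange fraction `alpha`. Each processor sees only its two neighbors, and the number of steps needed to get close to the average grows quickly with the ring size.

```python
def diffusion_step(loads, alpha=0.25):
    """One neighbor-exchange step on a ring of processors.

    Each processor moves a fraction alpha of the load difference to or from
    each of its two ring neighbors, using only local information.
    """
    n = len(loads)
    new_loads = list(loads)
    for i in range(n):
        for j in ((i - 1) % n, (i + 1) % n):
            new_loads[i] += alpha * (loads[j] - loads[i])
    return new_loads


def steps_to_balance(loads, tolerance=0.05, max_steps=100000):
    """Count steps until the maximum load is within tolerance of the average."""
    average = sum(loads) / len(loads)
    steps = 0
    while max(loads) > (1 + tolerance) * average and steps < max_steps:
        loads = diffusion_step(loads)
        steps += 1
    return steps


# All load starts on one processor; compare a 16-processor and a 64-processor ring.
small = steps_to_balance([16.0] + [0.0] * 15)
large = steps_to_balance([64.0] + [0.0] * 63)
```

In this model `large` is much bigger than `small`, because load can only spread one hop per step and no processor knows the global average.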

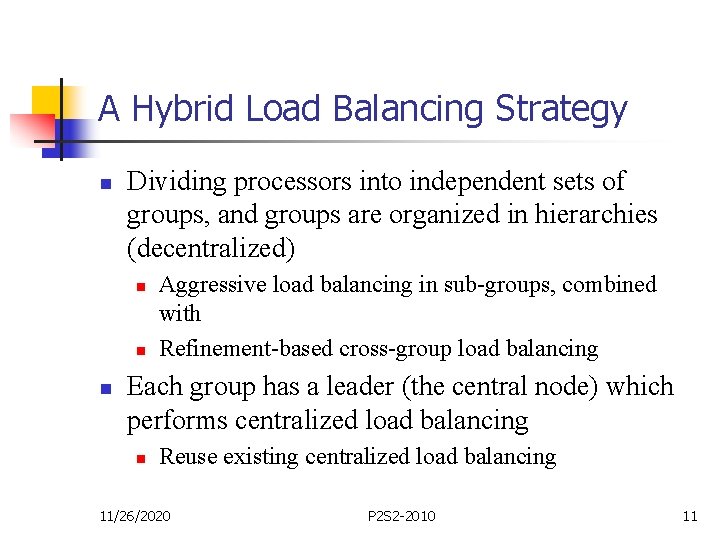

A Hybrid Load Balancing Strategy

- Divide processors into independent groups, and organize the groups in a hierarchy (decentralized)
- Aggressive load balancing within sub-groups, combined with refinement-based cross-group load balancing
- Each group has a leader (the central node) which performs centralized load balancing
  - Reuse existing centralized load balancing
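
A two-level version of this idea can be sketched in Python as follows. This is a simplified illustration under stated assumptions, not the actual hybrid balancer: all function names are hypothetical, communication is ignored, `num_procs` is assumed to be a multiple of `group_size`, and the refinement rule (move the largest object that still narrows the gap between the heaviest and lightest group) is one possible choice among many.

```python
import heapq


def greedy_within_group(objs, object_loads, procs):
    """Centralized greedy inside one group: heaviest object first,
    always onto the currently least-loaded processor of the group."""
    heap = [(0.0, p) for p in procs]
    heapq.heapify(heap)
    placement = {}
    for obj in sorted(objs, key=lambda o: (-object_loads[o], o)):
        load, proc = heapq.heappop(heap)
        placement[obj] = proc
        heapq.heappush(heap, (load + object_loads[obj], proc))
    return placement


def refine_across_groups(group_objs, object_loads, threshold=1.05):
    """Move objects out of the heaviest group into the lightest one until the
    heaviest group is within threshold * average or no move helps."""
    loads = [sum(object_loads[o] for o in objs) for objs in group_objs]
    average = sum(loads) / len(loads)
    while True:
        heavy = max(range(len(loads)), key=loads.__getitem__)
        light = min(range(len(loads)), key=loads.__getitem__)
        if loads[heavy] <= threshold * average:
            break
        gap = loads[heavy] - loads[light]
        # Only moves smaller than the gap strictly reduce the imbalance.
        movable = [o for o in group_objs[heavy] if 0 < object_loads[o] < gap]
        if not movable:
            break
        obj = max(movable, key=lambda o: (object_loads[o], o))
        group_objs[heavy].remove(obj)
        group_objs[light].append(obj)
        loads[heavy] -= object_loads[obj]
        loads[light] += object_loads[obj]
    return group_objs


def hybrid_balance(object_loads, placement, num_procs, group_size):
    """Two-level sketch: refinement between groups, greedy inside each group."""
    num_groups = num_procs // group_size  # assumes an exact division
    group_objs = [[] for _ in range(num_groups)]
    for obj, proc in placement.items():
        group_objs[proc // group_size].append(obj)
    refine_across_groups(group_objs, object_loads)
    new_placement = {}
    for g, objs in enumerate(group_objs):
        procs = range(g * group_size, (g + 1) * group_size)
        new_placement.update(greedy_within_group(objs, object_loads, procs))
    return new_placement


# 4 processors in 2 groups of 2; all eight unit-load objects start on processor 0.
object_loads = {i: 1.0 for i in range(8)}
placement = {i: 0 for i in range(8)}
new_placement = hybrid_balance(object_loads, placement, num_procs=4, group_size=2)
proc_loads = [0.0] * 4
for obj, proc in new_placement.items():
    proc_loads[proc] += object_loads[obj]
```

The point of the split is that each leader only handles the objects of its own group, while the cross-group step works on coarse group totals and moves comparatively few objects.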

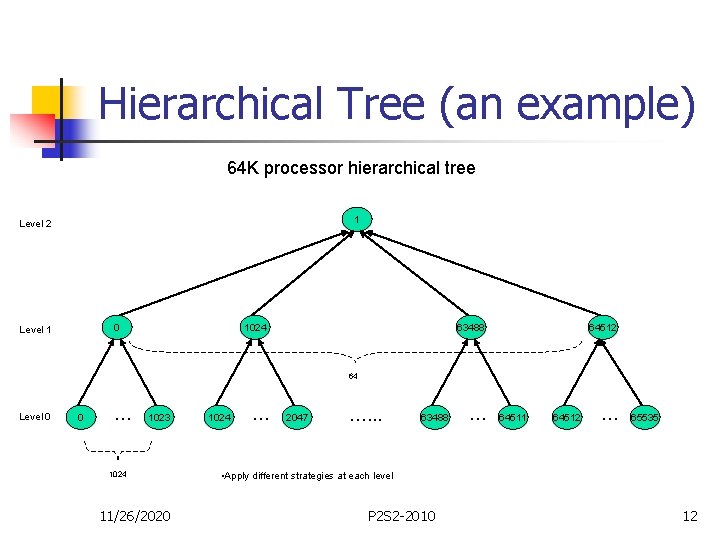

Hierarchical Tree (an example)

Figure: 64K-processor hierarchical tree with three levels. Level 2: 1 root node. Level 1: 64 group leaders (0, 1024, ..., 63488, 64512). Level 0: groups of 1024 processors (0 … 1023, 1024 … 2047, ..., 63488 … 64511, 64512 … 65535).

- Apply different strategies at each level
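
The arithmetic behind the example tree is small enough to write down. The Python sketch below assumes conventions read off the figure labels rather than stated on the slide: each group of 1024 consecutive processors is led by its lowest-numbered member, and processor 0 acts as the root. The function name `tree_path` is hypothetical.

```python
def tree_path(proc, num_procs=65536, group_size=1024):
    """Return the leaders above `proc` in a three-level tree.

    Assumes each group of `group_size` consecutive processors is led by its
    lowest-numbered member and that processor 0 is the root (assumptions).
    """
    if not 0 <= proc < num_procs:
        raise ValueError("processor out of range")
    group = proc // group_size
    leader = group * group_size
    return {"group": group, "level1_leader": leader, "root": 0}


num_groups = 65536 // 1024  # number of level-1 group leaders
path = tree_path(64000)
```

With these numbers a leader handles load data for 1024 processors and the root handles 64 group summaries, instead of one node handling all 65536 processors.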

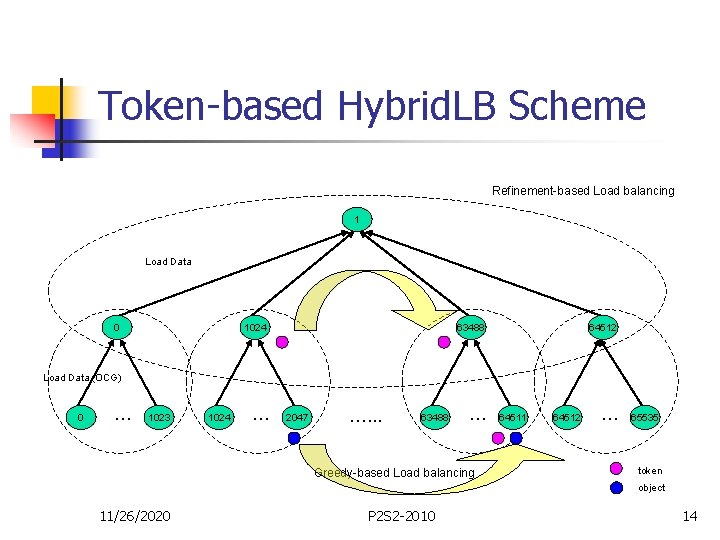

Issues

- Load data reduction
  - Semi-centralized load balancing scheme
- Reducing data movement
  - Token-based local balancing
- Topology-aware tree construction

Token-based HybridLB Scheme

Figure: the 64K-processor tree (root; group leaders 0, 1024, ..., 63488, 64512; processors 0 … 65535) with the labels "Load Data", "Load Data (OCG)", "Refinement-based load balancing" and "Greedy-based load balancing", and a legend for token and object.

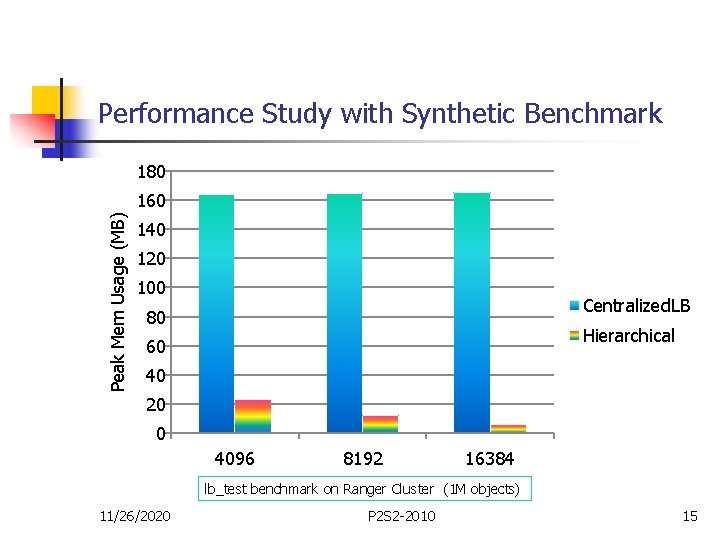

Performance Study with Synthetic Benchmark

Figure: peak memory usage (MB) for CentralizedLB vs. Hierarchical; x-axis ticks 4096, 8192, 16384. lb_test benchmark on Ranger cluster (1M objects).

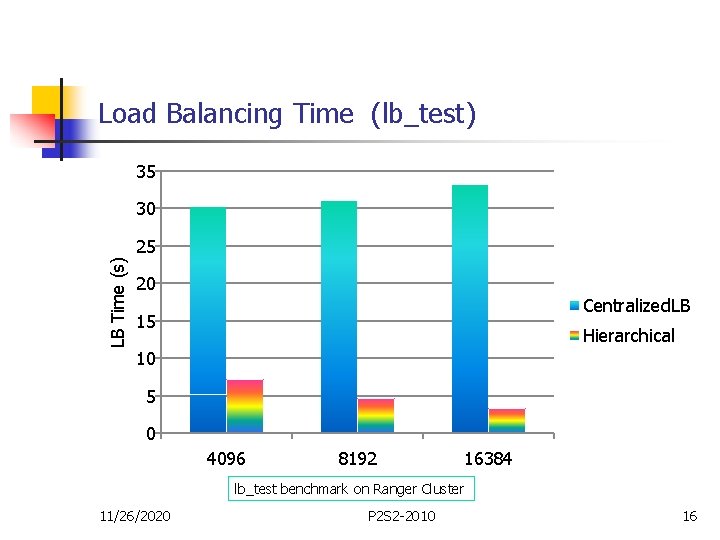

Load Balancing Time (lb_test)

Figure: LB time (s) for CentralizedLB vs. Hierarchical; x-axis ticks 4096, 8192, 16384. lb_test benchmark on Ranger cluster.

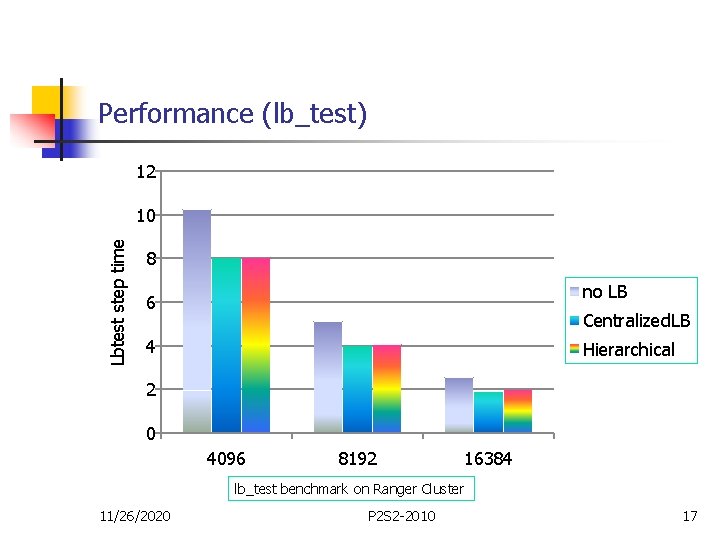

Performance (lb_test)

Figure: lb_test step time for no LB, CentralizedLB and Hierarchical; x-axis ticks 4096, 8192, 16384. lb_test benchmark on Ranger cluster.

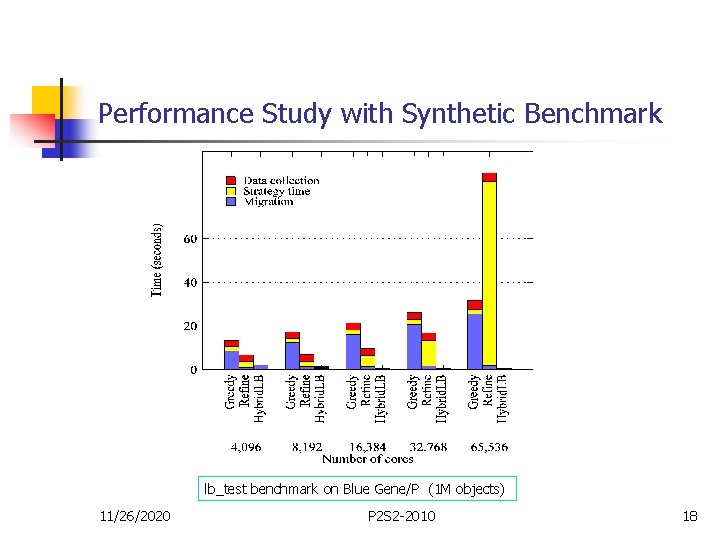

Performance Study with Synthetic Benchmark

lb_test benchmark on Blue Gene/P (1M objects)

NAMD Hierarchical LB

- NAMD implements its own specialized load balancing strategies
  - Based on the Charm++ load balancing framework
- Extended NAMD's comprehensive and refinement-based solutions
  - They now work on a subset of processors

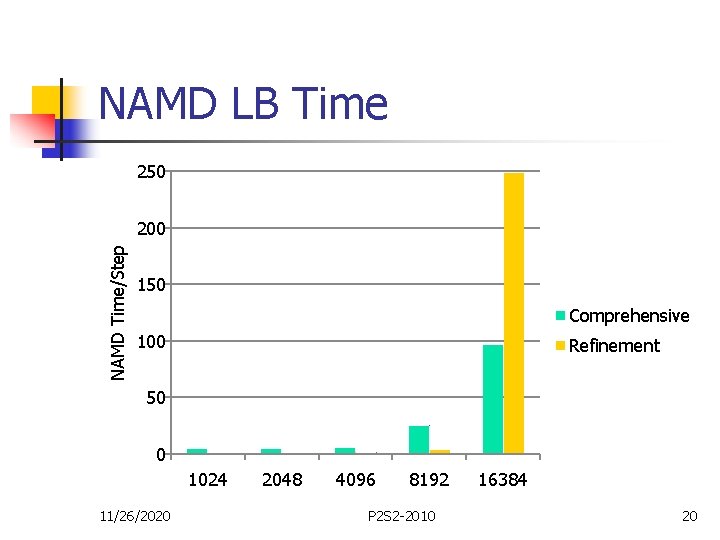

NAMD LB Time

Figure: NAMD time/step for the Comprehensive and Refinement strategies; x-axis ticks 1024, 2048, 4096, 8192, 16384.

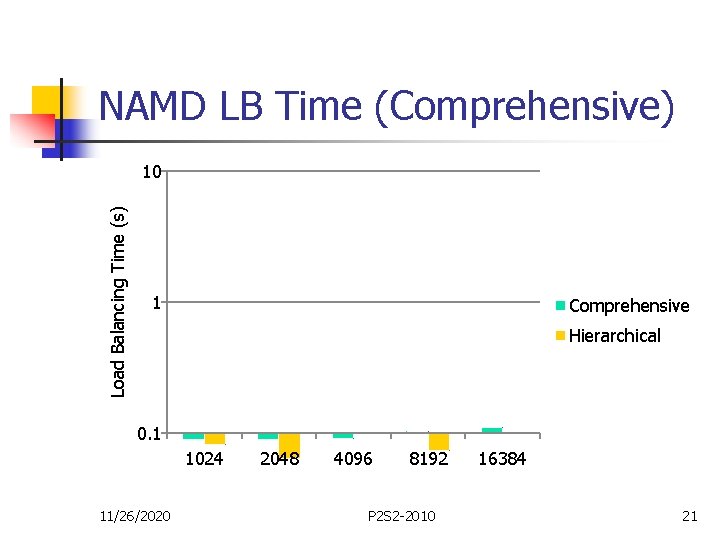

NAMD LB Time (Comprehensive)

Figure: load balancing time (s), axis ticks 0.1, 1, 10, for Comprehensive vs. Hierarchical; x-axis ticks 1024, 2048, 4096, 8192, 16384.

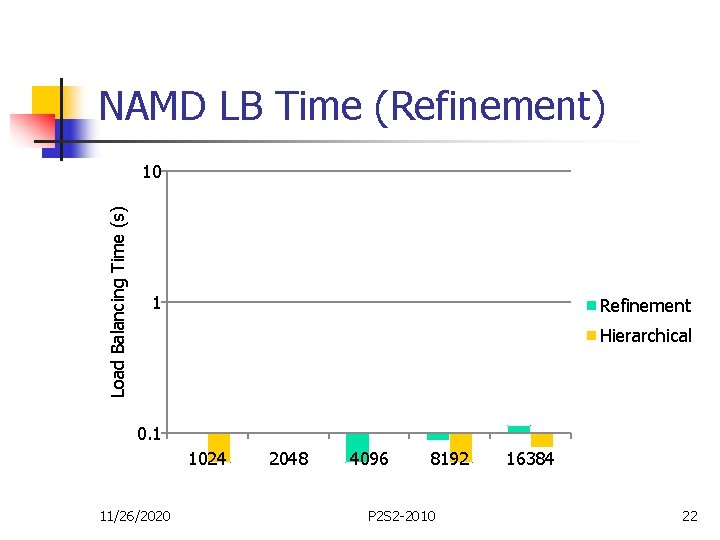

NAMD LB Time (Refinement)

Figure: load balancing time (s), axis ticks 0.1, 1, 10, for Refinement vs. Hierarchical; x-axis ticks 1024, 2048, 4096, 8192, 16384.

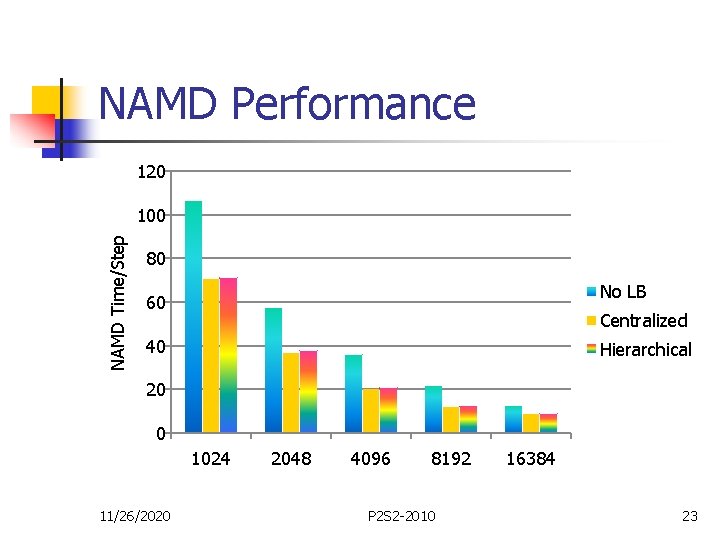

NAMD Performance

Figure: NAMD time/step for No LB, Centralized and Hierarchical; x-axis ticks 1024, 2048, 4096, 8192, 16384.

Conclusions

- Load balancing is challenging and potentially costly on very large machines
- Hierarchical load balancing is effective
  - Using 64K cores with a synthetic benchmark
  - And 16K cores with a real application

Thank you! Any questions?
