
Basic Charm++ and Load Balancing
Gengbin Zheng
charm.cs.uiuc.edu
10/11/2005

Charm++ Basics

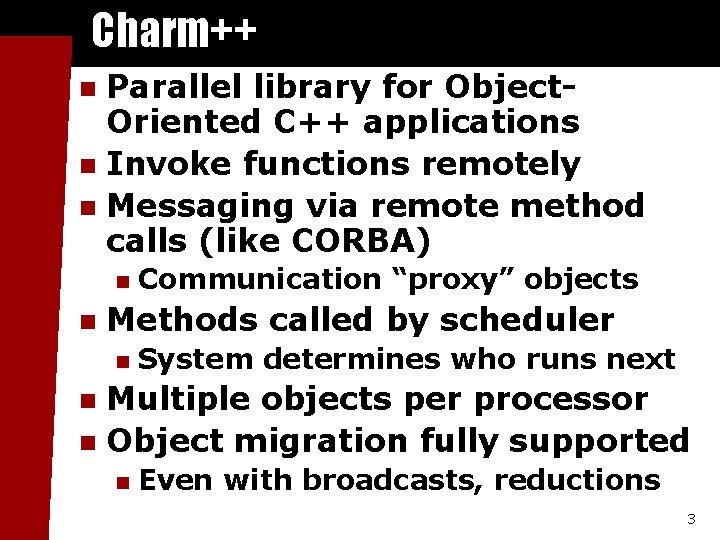

Charm++

- Parallel library for object-oriented C++ applications
- Invoke functions remotely
- Messaging via remote method calls (like CORBA)
  - Communication "proxy" objects
- Methods called by scheduler
  - System determines who runs next
- Multiple objects per processor
- Object migration fully supported
  - Even with broadcasts, reductions

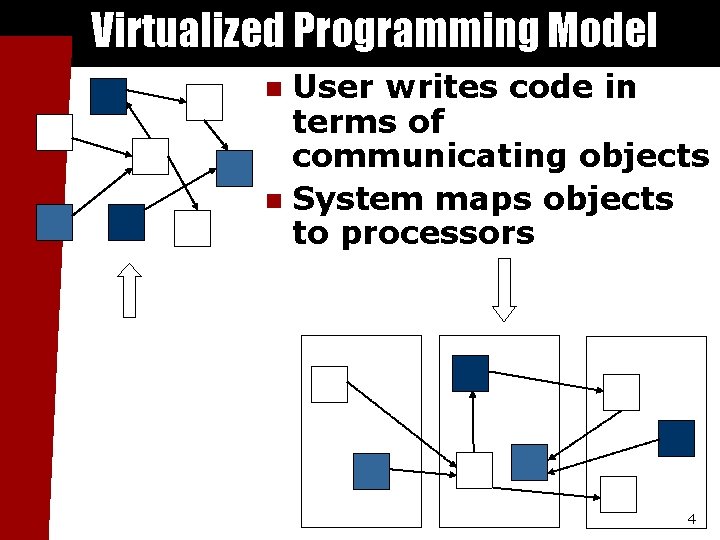

Virtualized Programming Model

- User writes code in terms of communicating objects
- System maps objects to processors

Figure labels: User View, System implementation.

Chares – Concurrent Objects

- Can be dynamically created on any available processor
- Can be accessed from remote processors
- Send messages to each other asynchronously
- Contain "entry methods"

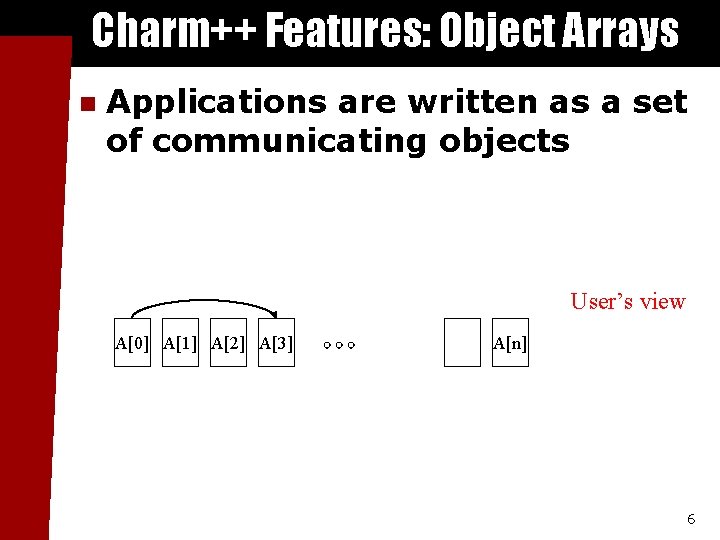

Charm++ Features: Object Arrays

- Applications are written as a set of communicating objects

User's view: A[0] A[1] A[2] A[3] A[n]

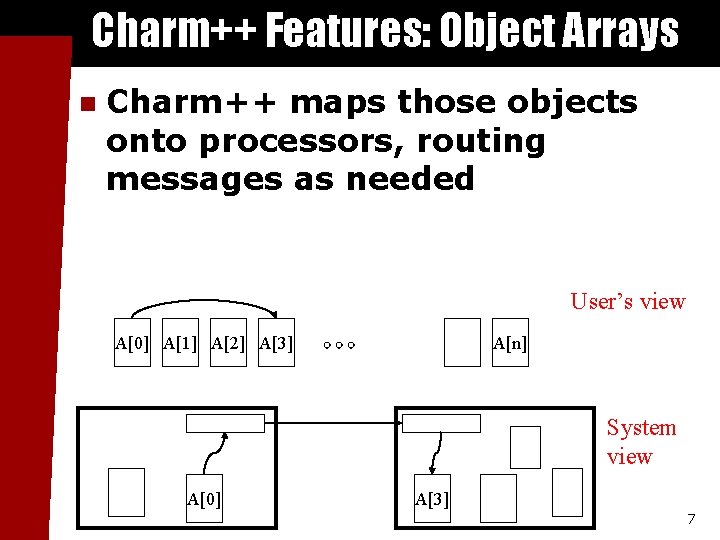

Charm++ Features: Object Arrays

- Charm++ maps those objects onto processors, routing messages as needed

User's view: A[0] A[1] A[2] A[3] A[n]
System view: A[0] A[3]

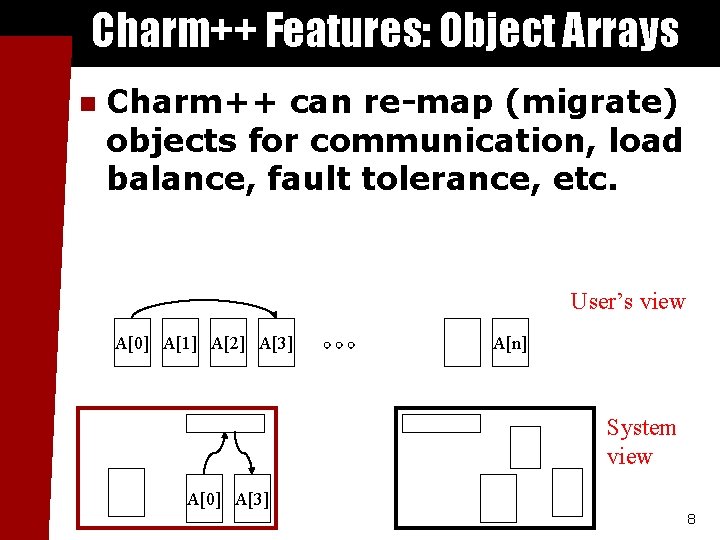

Charm++ Features: Object Arrays

- Charm++ can re-map (migrate) objects for communication, load balance, fault tolerance, etc.

User's view: A[0] A[1] A[2] A[3] A[n]
System view: A[0] A[3]
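The routing idea on these slides can be illustrated with a small stand-alone sketch in plain C++. This is not Charm++ code: `ArrayLocations`, `insert`, `migrate`, and `route` are hypothetical names, and the round-robin initial placement is an assumption made only for illustration. The point is that a sender names an element index and the system looks up where that element currently lives, so migration stays invisible to the sender.

```cpp
#include <cassert>
#include <map>

// Hypothetical location table (not part of Charm++): tracks which processor
// currently holds each array element, so a message addressed to A[i] can be
// routed without the sender knowing the placement.
class ArrayLocations {
    int numProcs_;
    std::map<int, int> where_;  // element index -> processor
public:
    explicit ArrayLocations(int numProcs) : numProcs_(numProcs) {
        assert(numProcs > 0);
    }
    // Create element `index` with an assumed round-robin initial placement.
    void insert(int index) {
        assert(index >= 0);
        where_[index] = index % numProcs_;
    }
    // Re-map (migrate) an existing element to another processor.
    void migrate(int index, int newProc) {
        assert(where_.count(index) == 1);
        assert(newProc >= 0 && newProc < numProcs_);
        where_[index] = newProc;
    }
    // Route a message: the sender names A[index], never a processor.
    int route(int index) const { return where_.at(index); }
};
```

After `migrate(3, 0)`, a later `route(3)` returns the new processor while the sender's code is unchanged, which is the property the user's view versus system view figures describe.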

![Charm++ Array Definition](http://slidetodoc.com/presentation_image/2dc2df83f8e386aea5f22e69d7636871/image-9.jpg)

Charm++ Array Definition

Interface (.ci) file:

    array [1D] foo {
      entry foo(int problemNo);
      entry void bar(int x);
    }

In a .C file:

    class foo : public CBase_foo {
    public:
      // Remote calls
      foo(int problemNo) { ... }
      void bar(int x) { ... }
      // Migration support:
      foo(CkMigrateMessage *m) {}
      void pup(PUP::er &p) { ... }
    };

![Charm++ Remote Method Calls](http://slidetodoc.com/presentation_image/2dc2df83f8e386aea5f22e69d7636871/image-10.jpg)

Charm++ Remote Method Calls

Interface (.ci) file:

    array [1D] foo {
      entry foo(int problemNo);
      entry void bar(int x);
    };

- To call a method on a remote C++ object foo, use the local "proxy" C++ object CProxy_foo generated from the interface file.

In a .C file:

    CProxy_foo someFoo = ...;   // CProxy_foo is the generated class
    someFoo[i].bar(17);         // i'th object, then method and parameters

- This results in a network message, and eventually in a call to the real object's method.

In another .C file:

    void foo::bar(int x) { ... }

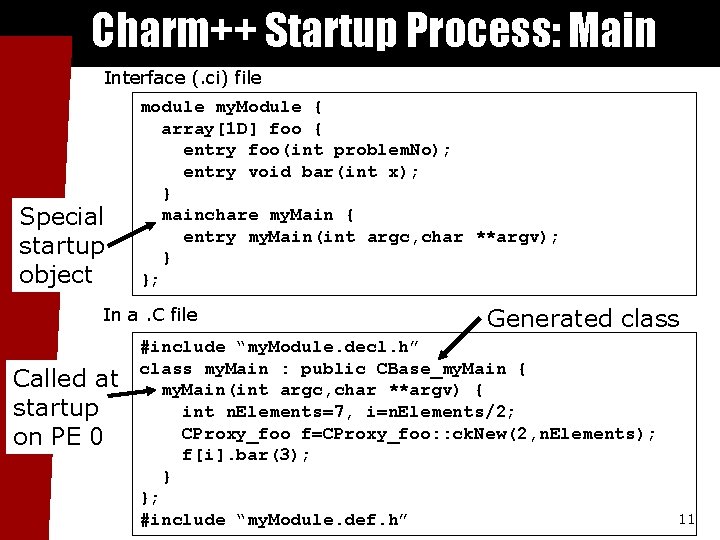

Charm++ Startup Process: Main

Interface (.ci) file (myMain is the special startup object):

    module myModule {
      array [1D] foo {
        entry foo(int problemNo);
        entry void bar(int x);
      }
      mainchare myMain {
        entry myMain(int argc, char **argv);
      }
    };

In a .C file (CBase_myMain is a generated class):

    #include "myModule.decl.h"
    class myMain : public CBase_myMain {
    public:
      // Called at startup on PE 0
      myMain(int argc, char **argv) {
        int nElements = 7, i = nElements / 2;
        CProxy_foo f = CProxy_foo::ckNew(2, nElements);
        f[i].bar(3);
      }
    };
    #include "myModule.def.h"

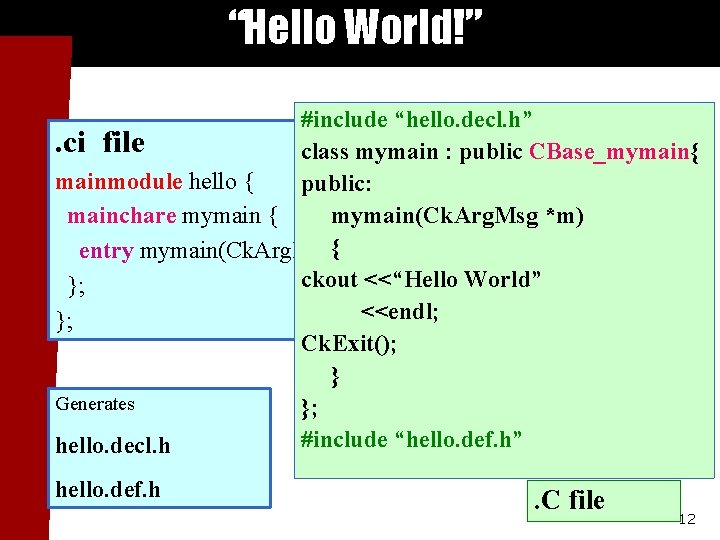

"Hello World!"

.ci file:

    mainmodule hello {
      mainchare mymain {
        entry mymain(CkArgMsg *m);
      };
    };

The .ci file generates hello.decl.h and hello.def.h.

.C file:

    #include "hello.decl.h"
    class mymain : public CBase_mymain {
    public:
      mymain(CkArgMsg *m) {
        ckout << "Hello World" << endl;
        CkExit();
      }
    };
    #include "hello.def.h"

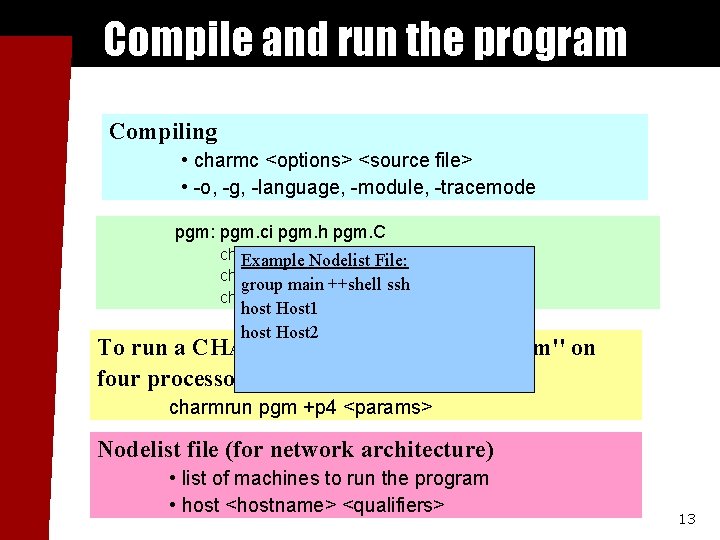

Compile and run the program

Compiling

- charmc <options> <source file>
- Options: -o, -g, -language, -module, -tracemode

Example build rule:

    pgm: pgm.ci pgm.h pgm.C
        charmc pgm.ci
        charmc pgm.C
        charmc -o pgm pgm.o -language charm++

To run a Charm++ program named "pgm" on four processors, type:

    charmrun pgm +p4 <params>

Nodelist file (for network architecture)

- List of machines to run the program
- host <hostname> <qualifiers>

Example nodelist file:

    group main ++shell ssh
    host Host1
    host Host2

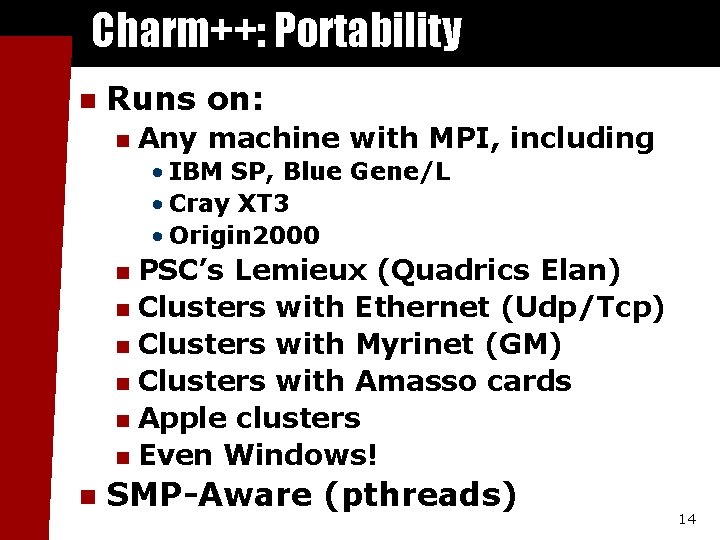

Charm++: Portability

- Runs on:
  - Any machine with MPI, including
    - IBM SP, Blue Gene/L
    - Cray XT3
    - Origin 2000
  - PSC's Lemieux (Quadrics Elan)
  - Clusters with Ethernet (UDP/TCP)
  - Clusters with Myrinet (GM)
  - Clusters with Ammasso cards
  - Apple clusters
  - Even Windows!
- SMP-aware (pthreads)

Build Charm++

- Download from website
  - http://charm.cs.uiuc.edu/download.html
- Build Charm++
  - ./build <target> <version> <options> [compile flags]
    - ./build charm++ net-linux gm -g
  - Parallel make (-j2)
- Compile code using charmc
  - Portable compiler wrapper
  - Link with "-language charm++"
- Run code using charmrun

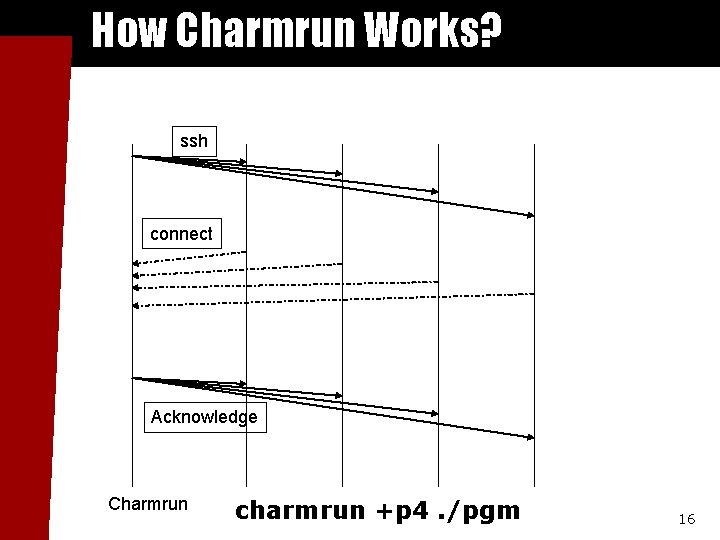

How Charmrun Works

    charmrun +p4 ./pgm

Figure labels: Charmrun, ssh connect, Acknowledge.

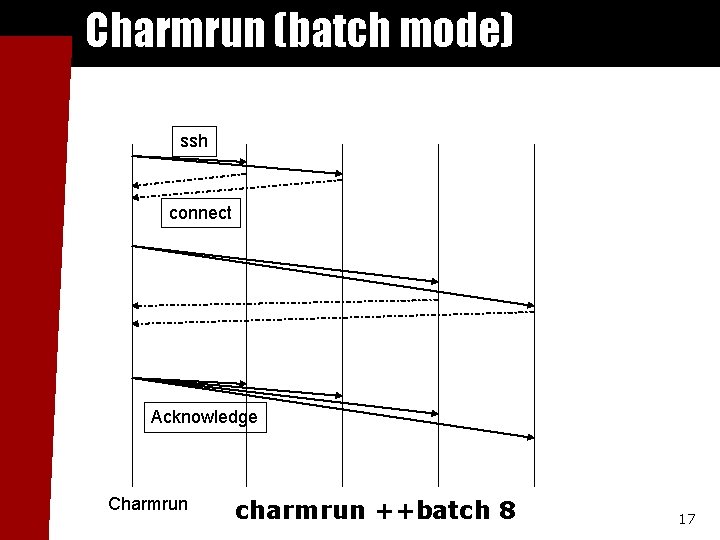

Charmrun (batch mode)

    charmrun ++batch 8

Figure labels: Charmrun, ssh connect, Acknowledge.

Debugging Charm++ Applications

- printf
- gdb
  - Sequentially (standalone mode)
    - gdb ./pgm +vp16
  - Run debugger in xterm
    - charmrun +p4 pgm ++debug-no-pause
- Memory paranoid
- Parallel debugger

Charm++ Features

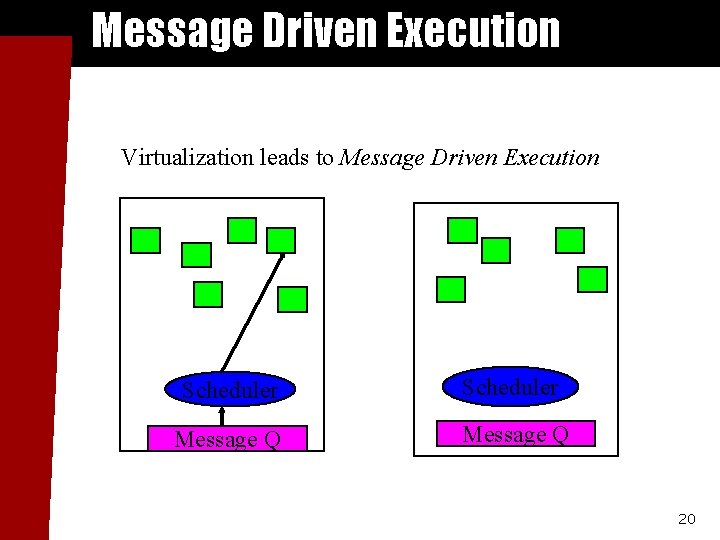

Message Driven Execution

- Virtualization leads to message-driven execution

Figure labels: Scheduler, Message Q.
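As a rough illustration of message-driven execution, the following plain C++ sketch models one processor's scheduler and message queue. It is not the Charm++ runtime: `Scheduler`, `Counter`, `send`, and `run` are hypothetical names, and messages are modeled as closures purely for brevity. Sending only enqueues work, and the scheduler later picks which object runs next.

```cpp
#include <cassert>
#include <cstddef>
#include <deque>
#include <functional>
#include <utility>

// Hypothetical stand-in for a chare: an object with one entry method.
struct Counter {
    int total = 0;
    void add(int x) { total += x; }
};

// Hypothetical per-processor scheduler with a FIFO message queue.
class Scheduler {
    std::deque<std::function<void()>> queue_;
public:
    // "Sending a message": record the call and return immediately.
    void send(std::function<void()> msg) { queue_.push_back(std::move(msg)); }

    std::size_t pending() const { return queue_.size(); }

    // Deliver queued messages one at a time until none are left.
    // An entry method may send further messages while it runs.
    int run() {
        int delivered = 0;
        while (!queue_.empty()) {
            std::function<void()> msg = std::move(queue_.front());
            queue_.pop_front();
            msg();  // invoke the entry method on the target object
            ++delivered;
        }
        return delivered;
    }
};
```

Because no object blocks waiting for a reply, several objects on the same processor can interleave their work, which is what makes many objects per processor practical.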

Prioritized Messages

- Number of priority bits passed during message allocation
  - FooMsg *msg = new (size, nbits) FooMsg;
- Priorities stored at the end of messages
- Signed integer priorities:
  - *CkPriorityPtr(msg) = -1;
  - CkSetQueueing(msg, CK_QUEUEING_IFIFO);
- Unsigned bitvector priorities:
  - CkPriorityPtr(msg)[0] = 0x7fffffff;
  - CkSetQueueing(msg, CK_QUEUEING_BFIFO);
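The effect of integer-priority FIFO queueing can be sketched in plain C++. This is a model, not the Charm++ queue: `PriorityMsgQueue`, `Msg`, and `RunsLater` are hypothetical names, and the sketch assumes that a smaller integer means higher priority and that equal priorities are served in arrival order.

```cpp
#include <cassert>
#include <cstdint>
#include <queue>
#include <string>
#include <vector>

// Hypothetical message record: priority, arrival sequence number, label.
struct Msg {
    int priority;
    std::uint64_t seq;
    std::string name;
};

// Orders the heap so that the message that should run first is on top.
// Assumption: smaller integer = higher priority; ties are FIFO.
struct RunsLater {
    bool operator()(const Msg& a, const Msg& b) const {
        if (a.priority != b.priority) return a.priority > b.priority;
        return a.seq > b.seq;
    }
};

class PriorityMsgQueue {
    std::priority_queue<Msg, std::vector<Msg>, RunsLater> heap_;
    std::uint64_t nextSeq_ = 0;
public:
    void enqueue(int priority, const std::string& name) {
        heap_.push(Msg{priority, nextSeq_++, name});
    }
    bool empty() const { return heap_.empty(); }
    // Remove and return the label of the next message to deliver.
    std::string dequeue() {
        assert(!heap_.empty());
        std::string name = heap_.top().name;
        heap_.pop();
        return name;
    }
};
```

The sequence number is what turns a plain heap into a stable queue; without it, two messages with the same priority could be delivered in either order.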

Advanced Message Features

- Expedited messages
  - Messages do not go through the Charm++ scheduler (faster)
  - Top-priority messages
- Immediate messages
  - Entries are executed in an interrupt or the communication thread
  - Very fast, but tough to get right

Object Migration

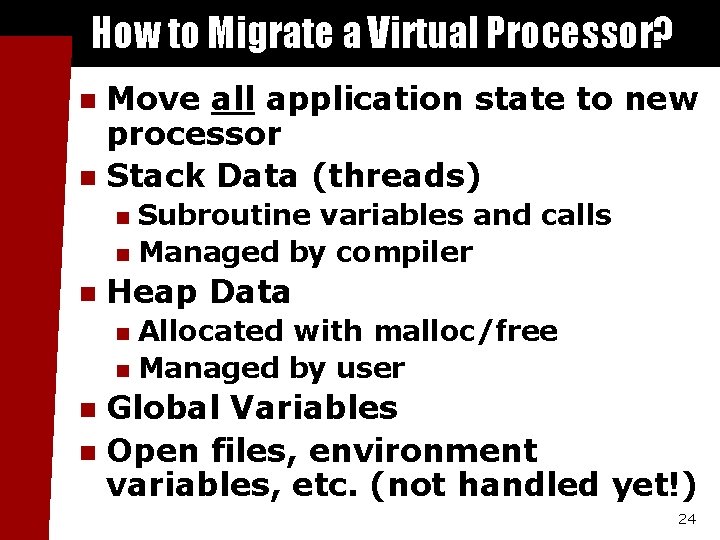

How to Migrate a Virtual Processor?

- Move all application state to new processor
- Stack data (threads)
  - Subroutine variables and calls
  - Managed by compiler
- Heap data
  - Allocated with malloc/free
  - Managed by user
- Global variables
- Open files, environment variables, etc. (not handled yet!)

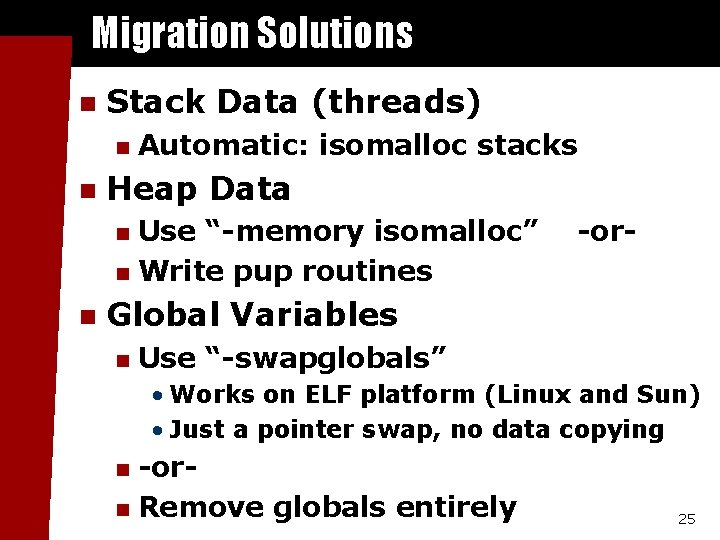

Migration Solutions

- Stack data (threads)
  - Automatic: isomalloc stacks
- Heap data
  - Use "-memory isomalloc"
  - -or-
  - Write pup routines
- Global variables
  - Use "-swapglobals"
    - Works on ELF platforms (Linux and Sun)
    - Just a pointer swap, no data copying
  - -or-
  - Remove globals entirely

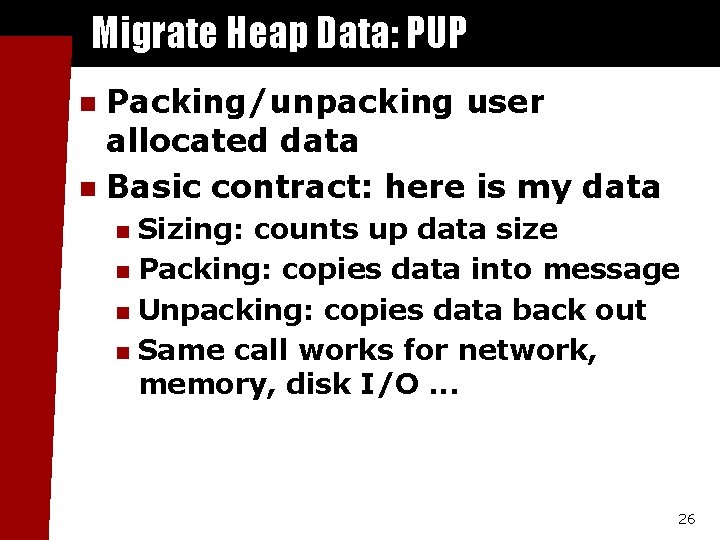

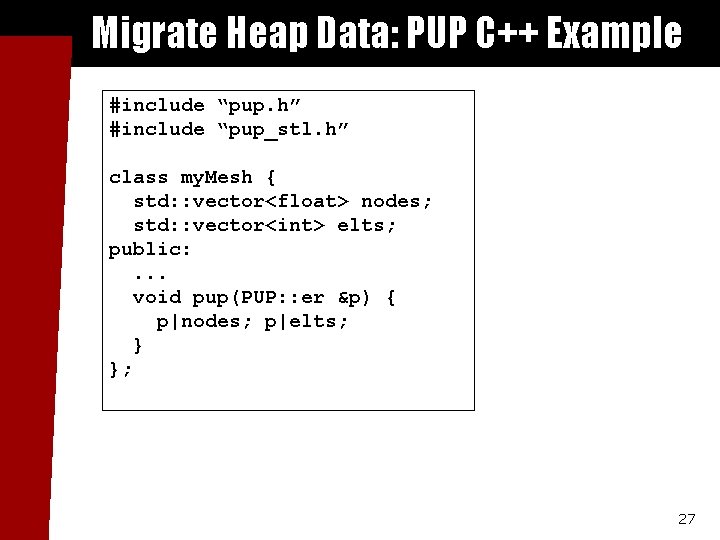

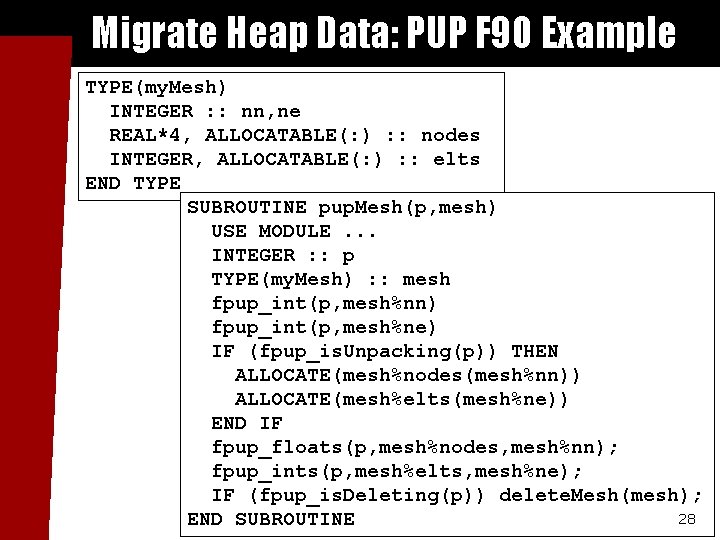

Migrate Heap Data: PUP

- Packing/unpacking user-allocated data
- Basic contract: here is my data
  - Sizing: counts up data size
  - Packing: copies data into message
  - Unpacking: copies data back out
  - Same call works for network, memory, disk I/O ...
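The sizing, packing, and unpacking contract can be imitated in a few lines of stand-alone C++. The sketch below is not the Charm++ `PUP::er` class: `Pupper`, `Mesh`, and `migrate` are hypothetical names, and it assumes trivially copyable element types. What it shares with the slide is the key idea that one `pup` routine serves all three passes.

```cpp
#include <cassert>
#include <cstddef>
#include <cstring>
#include <vector>

// Hypothetical, simplified pupper: one object, three modes.
class Pupper {
public:
    enum Mode { Sizing, Packing, Unpacking };
    explicit Pupper(Mode mode, char* buffer = nullptr)
        : mode_(mode), buf_(buffer) {}

    bool isUnpacking() const { return mode_ == Unpacking; }
    std::size_t size() const { return offset_; }

    // Sizing only counts; packing copies in; unpacking copies out.
    void bytes(void* data, std::size_t n) {
        if (mode_ == Packing) std::memcpy(buf_ + offset_, data, n);
        else if (mode_ == Unpacking) std::memcpy(data, buf_ + offset_, n);
        offset_ += n;
    }
    template <typename T> void value(T& v) { bytes(&v, sizeof(T)); }
    template <typename T> void vec(std::vector<T>& v) {
        std::size_t n = v.size();
        value(n);                       // length travels with the data
        if (isUnpacking()) v.resize(n); // allocate on the receiving side
        if (n > 0) bytes(v.data(), n * sizeof(T));
    }
private:
    Mode mode_;
    char* buf_;
    std::size_t offset_ = 0;
};

struct Mesh {
    std::vector<float> nodes;
    std::vector<int> elts;
    // "Here is my data": the same routine is used in every mode.
    void pup(Pupper& p) { p.vec(nodes); p.vec(elts); }
};

// Size, pack, then unpack into a fresh object, as a migration would.
Mesh migrate(Mesh& src) {
    Pupper sizer(Pupper::Sizing);
    src.pup(sizer);
    std::vector<char> message(sizer.size());
    Pupper packer(Pupper::Packing, message.data());
    src.pup(packer);
    Mesh dst;
    Pupper unpacker(Pupper::Unpacking, message.data());
    dst.pup(unpacker);
    return dst;
}
```

Writing the field list once avoids the classic bug where the pack and unpack routines drift apart after a field is added to the class.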

Migrate Heap Data: PUP C++ Example

    #include "pup.h"
    #include "pup_stl.h"

    class myMesh {
      std::vector<float> nodes;
      std::vector<int> elts;
    public:
      ...
      void pup(PUP::er &p) {
        p|nodes;
        p|elts;
      }
    };

Migrate Heap Data: PUP F90 Example

    TYPE(myMesh)
      INTEGER :: nn, ne
      REAL*4, ALLOCATABLE(:) :: nodes
      INTEGER, ALLOCATABLE(:) :: elts
    END TYPE

    SUBROUTINE pupMesh(p, mesh)
      USE MODULE ...
      INTEGER :: p
      TYPE(myMesh) :: mesh
      fpup_int(p, mesh%nn)
      fpup_int(p, mesh%ne)
      IF (fpup_isUnpacking(p)) THEN
        ALLOCATE(mesh%nodes(mesh%nn))
        ALLOCATE(mesh%elts(mesh%ne))
      END IF
      fpup_floats(p, mesh%nodes, mesh%nn);
      fpup_ints(p, mesh%elts, mesh%ne);
      IF (fpup_isDeleting(p)) deleteMesh(mesh);
    END SUBROUTINE

Automatic Load Balancing

Motivation

- Irregular or dynamic applications
  - Initial static load balancing
  - Application behaviors change dynamically
  - Difficult to implement with good parallel efficiency
- Versatile, automatic load balancers
  - Application independent
  - Little or no user effort is needed for load balancing
  - Work for both Charm++ and Adaptive MPI

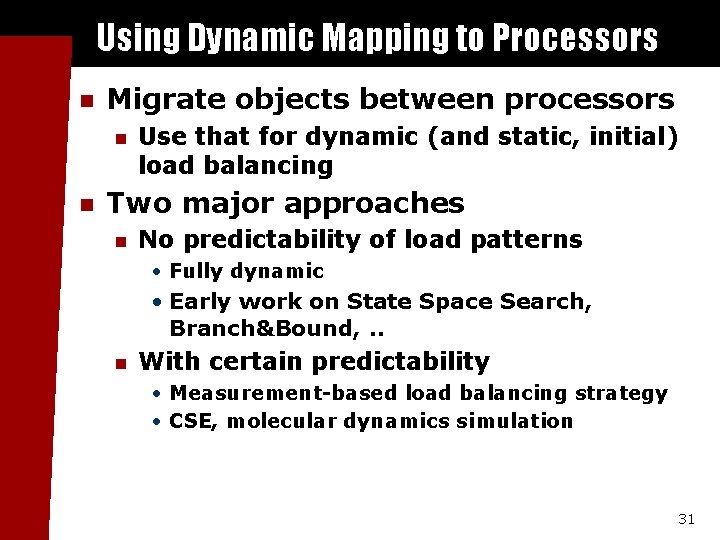

Using Dynamic Mapping to Processors

- Migrate objects between processors
  - Use that for dynamic (and static, initial) load balancing
- Two major approaches
  - No predictability of load patterns
    - Fully dynamic
    - Early work on State Space Search, Branch & Bound, ...
  - With certain predictability
    - Measurement-based load balancing strategy
    - CSE, molecular dynamics simulation

Applications Lacking Predictability

- Flow of tasks: the application generates a continuous flow of tasks
  - The goal of the load balancing strategies is to balance these tasks across the system for fast response time and better throughput
  - Tasks are assigned at creation time, with no migration afterwards

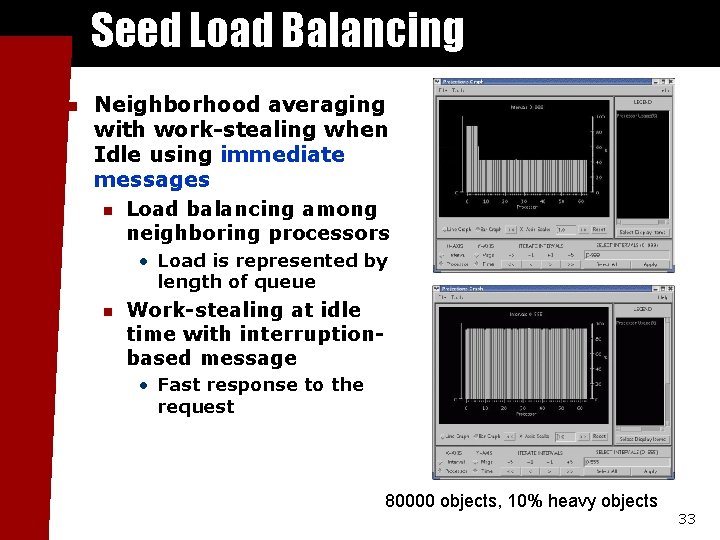

Seed Load Balancing

- Neighborhood averaging with work-stealing when idle, using immediate messages
  - Load balancing among neighboring processors
    - Load is represented by length of queue
  - Work-stealing at idle time with interruption-based messages
    - Fast response to the request

Figure caption: 80000 objects, 10% heavy objects.

Link with a seed load balancer

- Use -balance <random|neighbor>
  - charmc -o pgm pgm.o -balance neighbor
- Specify topology
  - +LBTopo <ring|torus2d|…>

Principle of Persistence

- Once an application is expressed in terms of interacting objects, object communication patterns and computational loads tend to persist over time
  - In spite of dynamic behavior
    - Abrupt and large, but infrequent changes (e.g., AMR)
    - Slow and small changes (e.g., particle migration)
- Parallel analog of the principle of locality
  - A heuristic that holds for most CSE applications
  - Run-time instrumentation is possible

Measurement Based Load Balancing

- Runtime instrumentation
  - Measures CPU load per object
  - Measures communication volume between objects
- Measurement-based load balancers
  - Use the instrumented database periodically to make new decisions
  - A load balancing strategy takes the database as input and generates a new object-to-processor mapping
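To make "database in, mapping out" concrete, here is a minimal sketch of a strategy function in plain C++. It is not a Charm++ load balancer: `greedyMap` and `maxProcLoad` are hypothetical names, the database is reduced to one measured load per object, and communication volume is ignored, which a real strategy would not do.

```cpp
#include <algorithm>
#include <cassert>
#include <cstddef>
#include <numeric>
#include <vector>

// Input: measured load per object. Output: mapping[i] = processor for
// object i. Heaviest objects are placed first, each on the processor that
// is least loaded so far (ties go to the lowest processor number).
std::vector<int> greedyMap(const std::vector<double>& objLoad, int numProcs) {
    assert(numProcs > 0);
    std::vector<std::size_t> order(objLoad.size());
    std::iota(order.begin(), order.end(), std::size_t{0});
    std::stable_sort(order.begin(), order.end(),
                     [&](std::size_t a, std::size_t b) {
                         return objLoad[a] > objLoad[b];
                     });
    std::vector<double> procLoad(numProcs, 0.0);
    std::vector<int> mapping(objLoad.size(), -1);
    for (std::size_t obj : order) {
        int target = static_cast<int>(
            std::min_element(procLoad.begin(), procLoad.end()) -
            procLoad.begin());
        mapping[obj] = target;
        procLoad[target] += objLoad[obj];
    }
    return mapping;
}

// Load of the busiest processor under a given mapping.
double maxProcLoad(const std::vector<double>& objLoad,
                   const std::vector<int>& mapping, int numProcs) {
    std::vector<double> procLoad(numProcs, 0.0);
    for (std::size_t i = 0; i < objLoad.size(); ++i)
        procLoad[mapping[i]] += objLoad[i];
    return *std::max_element(procLoad.begin(), procLoad.end());
}
```

The busiest processor determines the time of a step, so `maxProcLoad` compared against the average load is a simple way to judge a mapping.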

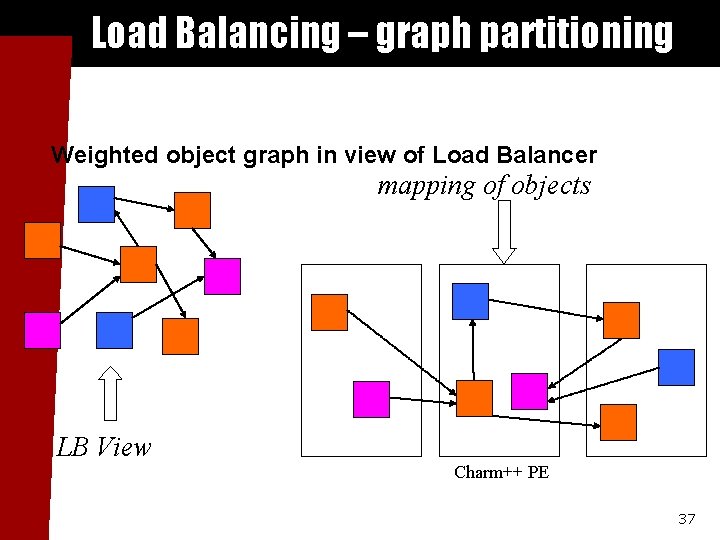

Load Balancing – graph partitioning

Figure labels: Weighted object graph in view of Load Balancer; mapping of objects; LB View; Charm++ PE.

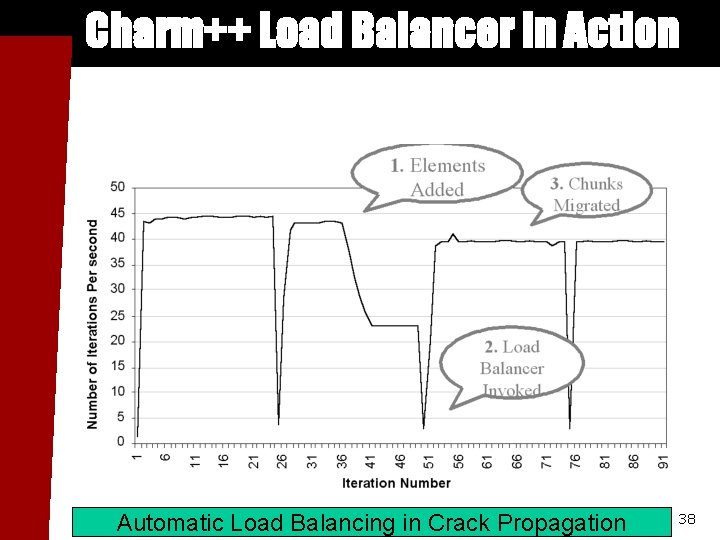

Charm++ Load Balancer in Action

Figure caption: Automatic Load Balancing in Crack Propagation.

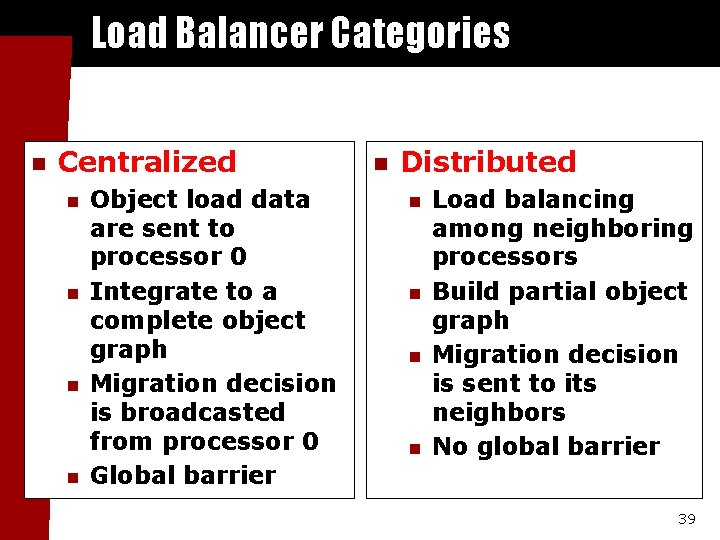

Load Balancer Categories

- Centralized
  - Object load data are sent to processor 0
  - Integrated into a complete object graph
  - Migration decision is broadcast from processor 0
  - Global barrier
- Distributed
  - Load balancing among neighboring processors
  - Build partial object graph
  - Migration decision is sent to its neighbors
  - No global barrier

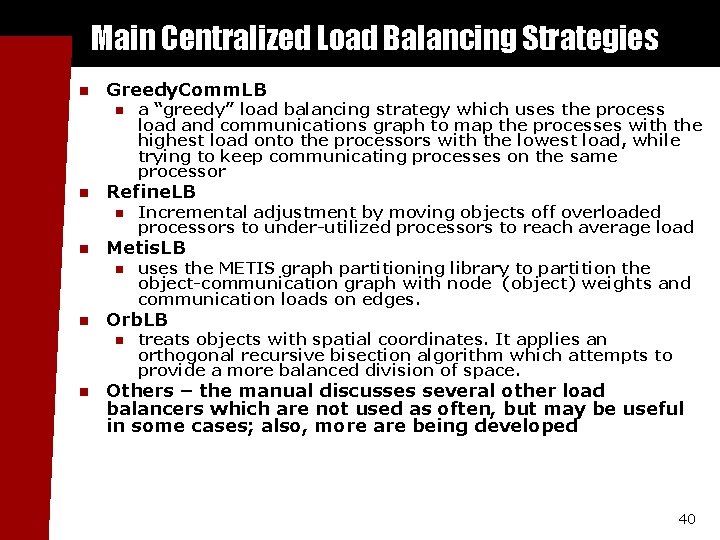

Main Centralized Load Balancing Strategies

- GreedyCommLB
  - A "greedy" load balancing strategy which uses the process load and communications graph to map the processes with the highest load onto the processors with the lowest load, while trying to keep communicating processes on the same processor
- RefineLB
  - Incremental adjustment by moving objects off overloaded processors to under-utilized processors to reach average load
- MetisLB
  - Uses the METIS graph partitioning library to partition the object-communication graph with node (object) weights and communication loads on edges
- OrbLB
  - Treats objects with spatial coordinates. It applies an orthogonal recursive bisection algorithm which attempts to provide a more balanced division of space.
- Others
  - The manual discusses several other load balancers which are not used as often, but may be useful in some cases; also, more are being developed
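The incremental idea behind a refinement strategy can be sketched in plain C++. This is an illustration under stated assumptions, not the RefineLB implementation: `refine` is a hypothetical function, the 1.05 tolerance is an arbitrary default, and the rule "move the largest object that still fits on the least loaded processor" is one simple choice among many.

```cpp
#include <algorithm>
#include <cassert>
#include <cstddef>
#include <numeric>
#include <vector>

// Starts from the current mapping and moves objects off the most loaded
// processor until it is within `tolerance` times the average load, or no
// helpful move remains. Returns the number of migrations performed.
int refine(const std::vector<double>& objLoad, std::vector<int>& mapping,
           int numProcs, double tolerance = 1.05) {
    assert(numProcs > 0);
    assert(mapping.size() == objLoad.size());
    std::vector<double> procLoad(numProcs, 0.0);
    for (std::size_t i = 0; i < objLoad.size(); ++i)
        procLoad[mapping[i]] += objLoad[i];
    const double total = std::accumulate(procLoad.begin(), procLoad.end(), 0.0);
    const double limit = tolerance * total / numProcs;

    int migrations = 0;
    for (;;) {
        const int heavy = static_cast<int>(
            std::max_element(procLoad.begin(), procLoad.end()) - procLoad.begin());
        const int light = static_cast<int>(
            std::min_element(procLoad.begin(), procLoad.end()) - procLoad.begin());
        if (procLoad[heavy] <= limit) break;  // balanced enough

        // Largest object on the heavy processor that fits on the light one.
        int best = -1;
        for (std::size_t i = 0; i < objLoad.size(); ++i) {
            if (mapping[i] != heavy || objLoad[i] <= 0.0) continue;
            if (procLoad[light] + objLoad[i] > limit) continue;
            if (best < 0 || objLoad[i] > objLoad[best]) best = static_cast<int>(i);
        }
        if (best < 0) break;  // nothing can move without overloading `light`

        procLoad[heavy] -= objLoad[best];
        procLoad[light] += objLoad[best];
        mapping[best] = light;
        ++migrations;
    }
    return migrations;
}
```

Compared with rebuilding the mapping from scratch, starting from the existing placement keeps most objects where they are, so fewer objects pay the cost of migration.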

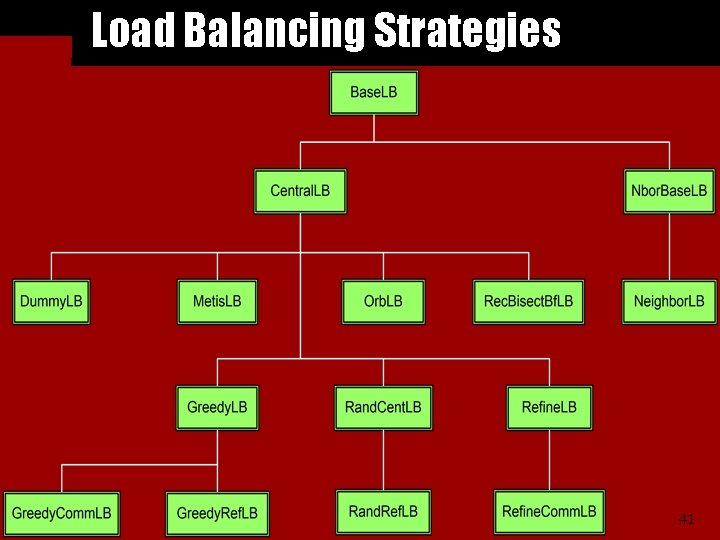

Load Balancing Strategies

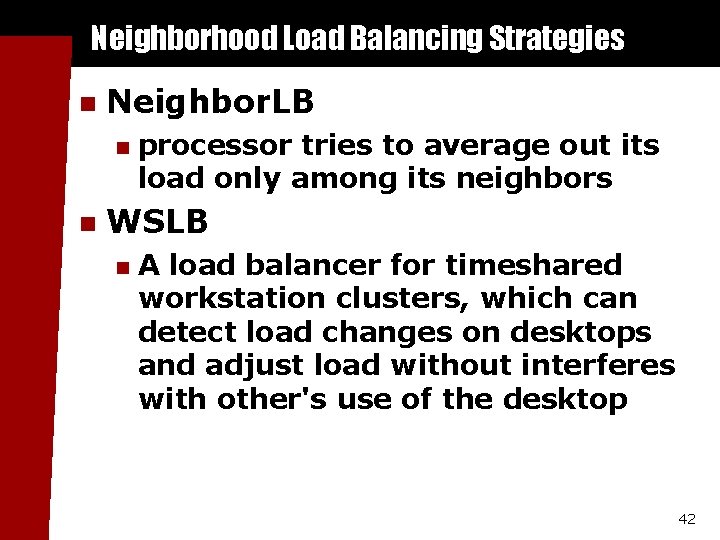

Neighborhood Load Balancing Strategies

- NeighborLB
  - Each processor tries to average out its load only among its neighbors
- WSLB
  - A load balancer for timeshared workstation clusters, which can detect load changes on desktops and adjust load without interfering with others' use of the desktop
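Averaging load only among neighbors can be modeled with a textbook diffusion step on a ring. The plain C++ sketch below is a swapped-in simplification, not the NeighborLB algorithm: `diffuseOnRing` is a hypothetical function, load is treated as a divisible quantity rather than whole objects, and the ring topology with a 1/3 exchange factor is an assumption.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// One synchronous diffusion step on a ring of at least three processors.
// Each processor moves toward the average of itself and its two neighbors:
//   next[i] = load[i] + ((left - load[i]) + (right - load[i])) / 3
// Total load is conserved because every exchange is symmetric.
std::vector<double> diffuseOnRing(const std::vector<double>& load) {
    const std::size_t n = load.size();
    assert(n >= 3);
    std::vector<double> next(n);
    for (std::size_t i = 0; i < n; ++i) {
        const double left = load[(i + n - 1) % n];
        const double right = load[(i + 1) % n];
        next[i] = load[i] + ((left - load[i]) + (right - load[i])) / 3.0;
    }
    return next;
}
```

No processor needs global information or a global barrier here; repeated steps spread a hot spot outward, at the price of converging more slowly than a centralized strategy.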

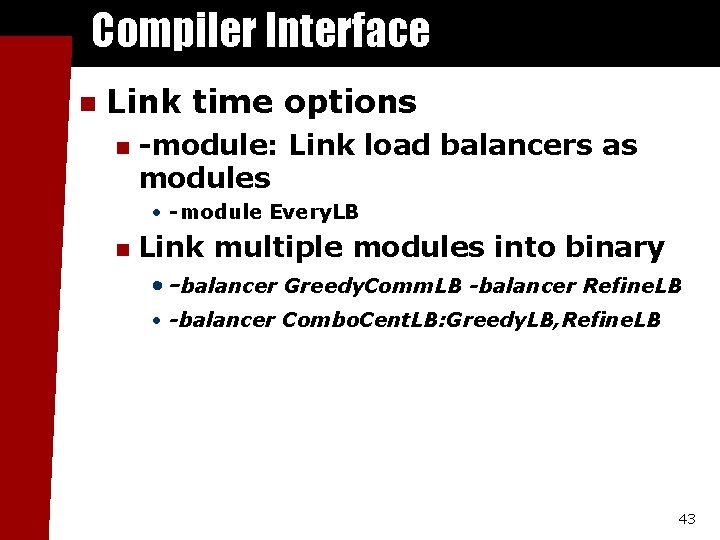

Compiler Interface

- Link-time options
  - -module: link load balancers as modules
    - -module EveryLB
  - Link multiple modules into binary
    - -balancer GreedyCommLB -balancer RefineLB
    - -balancer ComboCentLB:GreedyLB,RefineLB

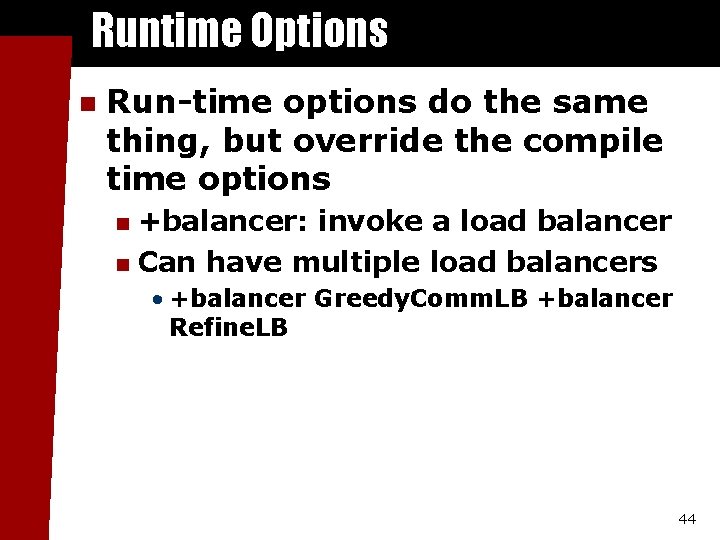

Runtime Options

- Run-time options do the same thing, but override the compile-time options
- +balancer: invoke a load balancer
- Can have multiple load balancers
  - +balancer GreedyCommLB +balancer RefineLB

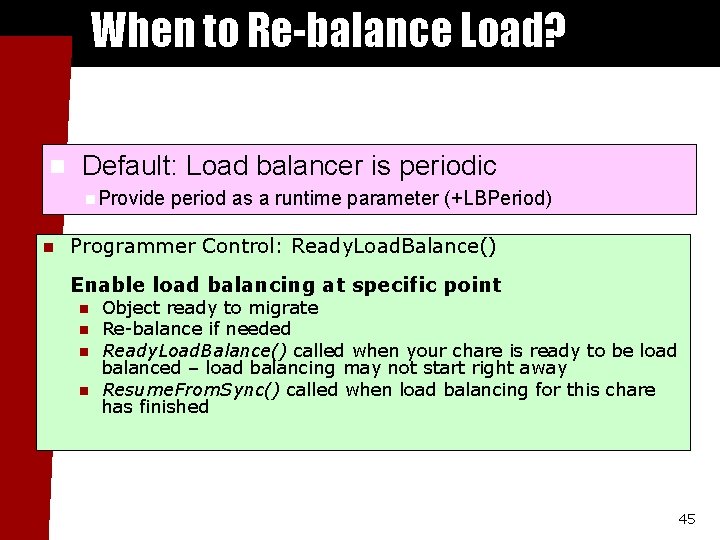

When to Re-balance Load?

- Default: load balancer is periodic
  - Provide the period as a runtime parameter (+LBPeriod)
- Programmer control: ReadyLoadBalance()
  - Enable load balancing at a specific point
    - Object ready to migrate
    - Re-balance if needed
  - ReadyLoadBalance() is called when your chare is ready to be load balanced; load balancing may not start right away
  - ResumeFromSync() is called when load balancing for this chare has finished

Thank You!

Free source, binaries, manuals, and more information at: http://charm.cs.uiuc.edu/

Parallel Programming Lab at University of Illinois
