Fully Automatic Cross-Associations. Deepayan Chakrabarti (CMU), Spiros Papadimitriou (CMU), Dharmendra Modha (IBM), Christos Faloutsos (CMU and IBM) 1

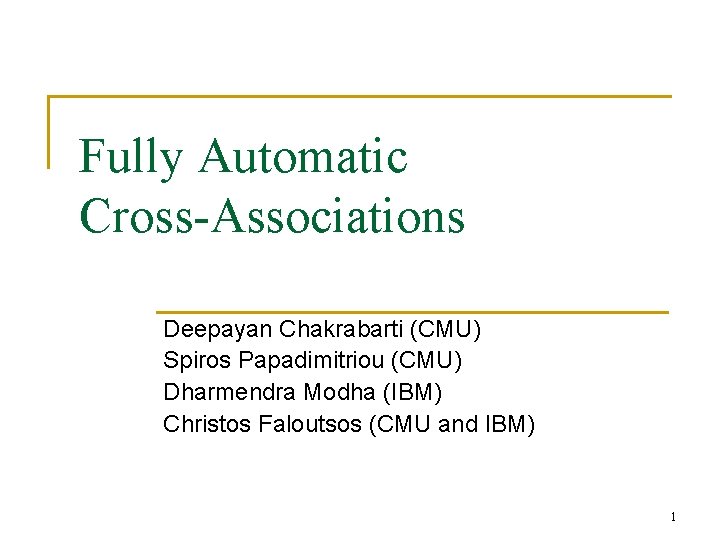

Problem Definition: Simultaneously group customers and products, or documents and words, or users and preferences … (Figure labels: Customers, Products, Customer Groups, Product Groups.) 2

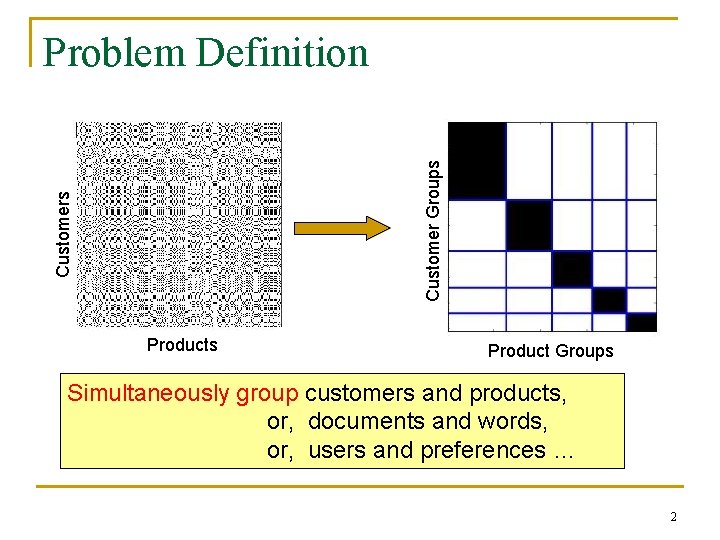

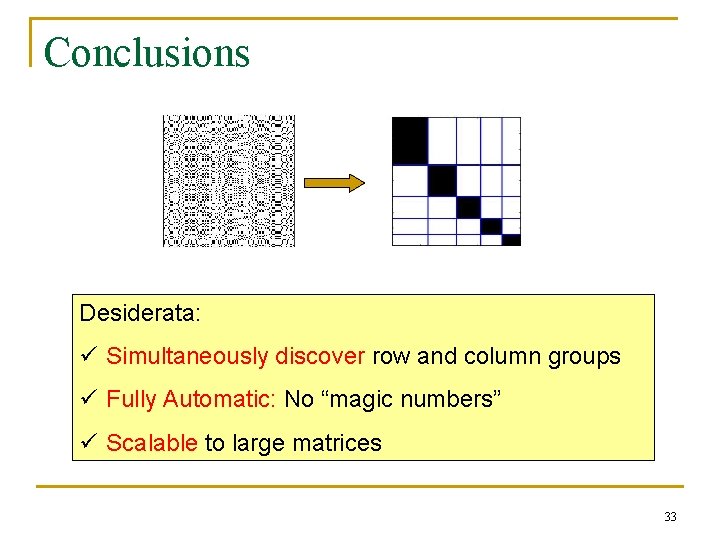

Problem Definition Desiderata: 1. Simultaneously discover row and column groups 2. Fully Automatic: No “magic numbers” 3. Scalable to large matrices 3

![Closely Related Work](http://slidetodoc.com/presentation_image_h2/3c3ade32af9ef17241a94bbcedea807a/image-4.jpg)

Closely Related Work: Information-Theoretic Co-clustering [Dhillon+/2003]: the number of row and column groups must be specified. Desiderata: ✓ Simultaneously discover row and column groups ✗ Fully Automatic: No “magic numbers” ✓ Scalable to large graphs 4

![Other Related Work](http://slidetodoc.com/presentation_image_h2/3c3ade32af9ef17241a94bbcedea807a/image-5.jpg)

Other Related Work: • K-means and variants [Pelleg+/2000, Hamerly+/2003]: do not cluster rows and cols simultaneously • “Frequent itemsets” [Agrawal+/1994]: user must specify “support” • Information Retrieval [Deerwester+/1990, Hofmann/1999]: choosing the number of “concepts” • Graph Partitioning [Karypis+/1998]: number of partitions; measure of imbalance between clusters 5

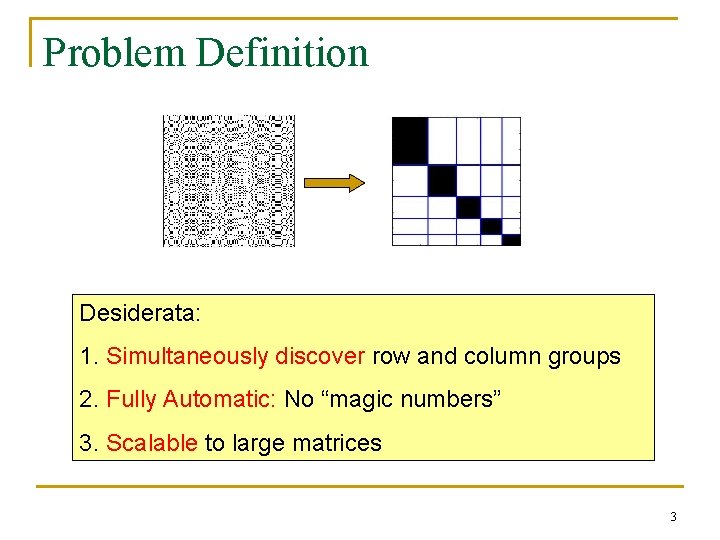

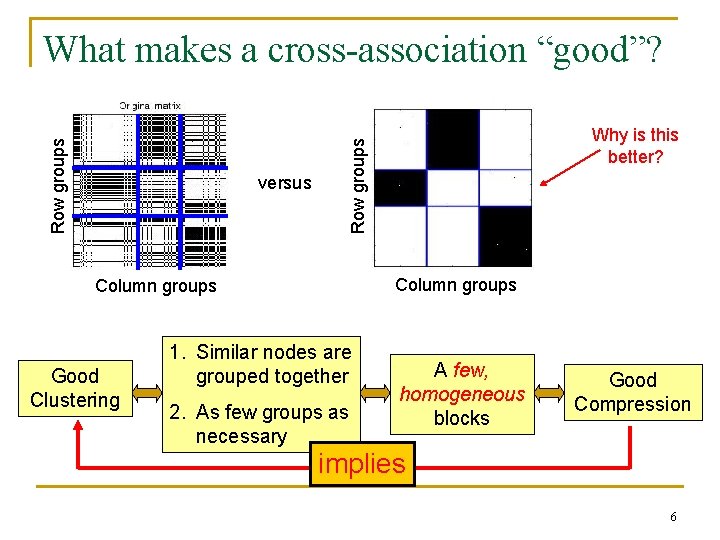

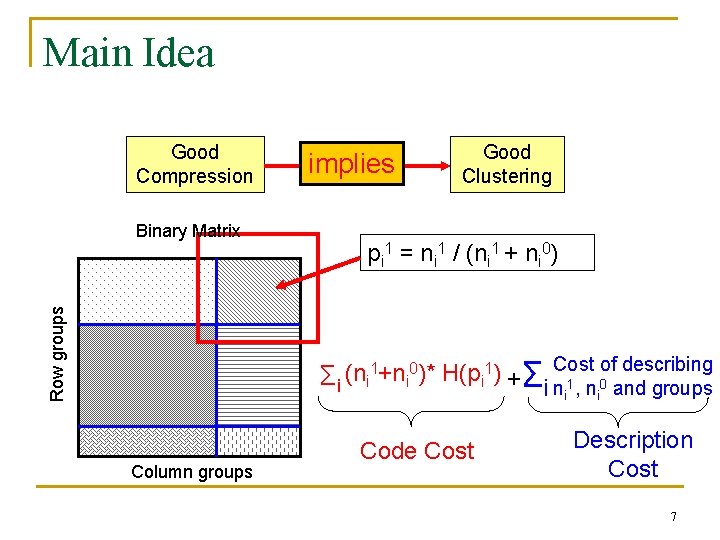

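What makes a cross-association “good”? Why is this better? (Figure: two arrangements of the matrix into row groups and column groups, compared with “versus”.) Good Clustering: 1. Similar nodes are grouped together 2. As few groups as necessary. A few, homogeneous blocks give Good Compression, and Good Compression implies Good Clustering. 6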

Main Idea: Good Compression implies Good Clustering. For a binary matrix arranged into row groups and column groups, $p_i^1 = n_i^1 / (n_i^1 + n_i^0)$, and the cost is $\sum_i (n_i^1 + n_i^0)\, H(p_i^1)$ (Code Cost) $+ \sum_i \left[\text{cost of describing } n_i^1, n_i^0 \text{ and groups}\right]$ (Description Cost). 7
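
A minimal sketch of the Code Cost term, assuming that the index i ranges over the blocks formed by intersecting one row group with one column group (the slide does not define the index explicitly). The names `binary_entropy` and `code_cost` and the list-based group encoding are illustrative choices, not taken from the slides. The Description Cost term is left out because the slides do not spell it out.

```python
import math

def binary_entropy(p):
    """Shannon entropy in bits of a 0/1 source with P(1) = p; 0 for p in {0, 1}."""
    if p <= 0.0 or p >= 1.0:
        return 0.0
    return -p * math.log2(p) - (1.0 - p) * math.log2(1.0 - p)

def code_cost(matrix, row_group, col_group, k, l):
    """Sum over blocks of (n1 + n0) * H(n1 / (n1 + n0)), in bits.

    Assumption: a block is the intersection of one of the k row groups
    with one of the l column groups.
    """
    ones = [[0] * l for _ in range(k)]   # n1 per block
    size = [[0] * l for _ in range(k)]   # n1 + n0 per block
    for r, row in enumerate(matrix):
        for c, value in enumerate(row):
            ones[row_group[r]][col_group[c]] += value
            size[row_group[r]][col_group[c]] += 1
    total = 0.0
    for a in range(k):
        for b in range(l):
            if size[a][b] > 0:
                total += size[a][b] * binary_entropy(ones[a][b] / size[a][b])
    return total

# A 4x4 block-diagonal matrix: two homogeneous groups on each axis.
matrix = [[1, 1, 0, 0],
          [1, 1, 0, 0],
          [0, 0, 1, 1],
          [0, 0, 1, 1]]
print(code_cost(matrix, [0, 0, 0, 0], [0, 0, 0, 0], 1, 1))  # 16.0
print(code_cost(matrix, [0, 0, 1, 1], [0, 0, 1, 1], 2, 2))  # 0.0
```

With a single block, half of the 16 cells are ones, so every cell costs one bit. With the two-by-two grouping every block is homogeneous and the code cost drops to zero.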

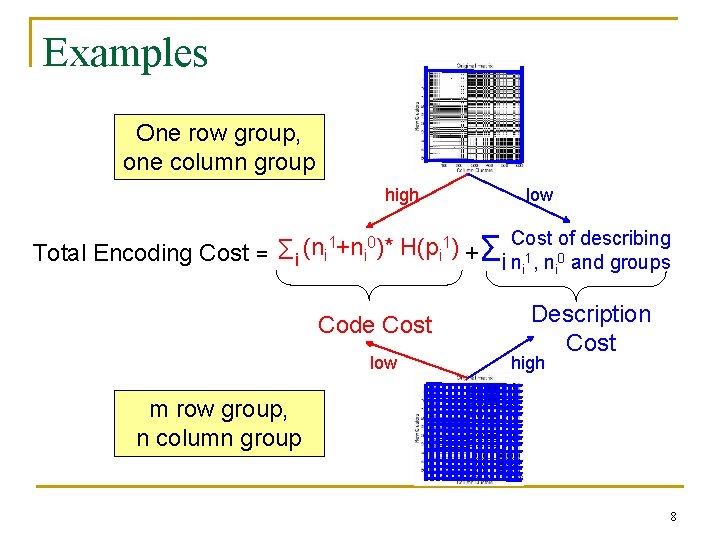

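Examples: Total Encoding Cost = $\sum_i (n_i^1 + n_i^0)\, H(p_i^1)$ (Code Cost) $+ \sum_i \left[\text{cost of describing } n_i^1, n_i^0 \text{ and groups}\right]$ (Description Cost). One row group, one column group: Code Cost high, Description Cost low. m row groups, n column groups: Code Cost low, Description Cost high. 8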
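
As a rough worked illustration of the two extremes, assuming (the slide suggests this but does not state it) that the matrix has $m$ rows and $n$ columns and that $i$ indexes blocks. The symbol $N^1$ for the total number of ones is introduced here only for the illustration.

$$
\begin{aligned}
\text{one row group, one column group:}\quad & \text{Code Cost} = mn \, H\!\left(\tfrac{N^1}{mn}\right), \ \text{with a single pair } (n^1, n^0) \text{ to describe;} \\
m \text{ row groups, } n \text{ column groups:}\quad & \text{Code Cost} = \sum_{i=1}^{mn} 1 \cdot H(p_i^1) = 0 \ \text{since every } p_i^1 \in \{0, 1\}, \ \text{with } mn \text{ pairs to describe.}
\end{aligned}
$$

The first extreme pays in Code Cost and the second in Description Cost, so a low total has to balance the two terms.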

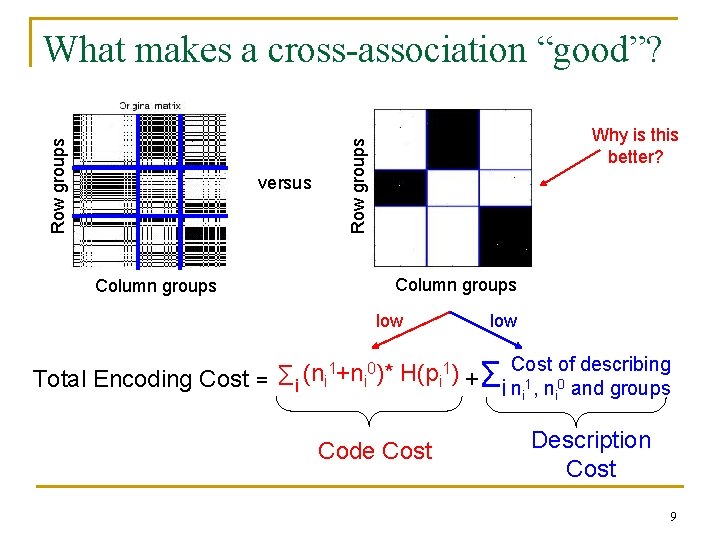

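What makes a cross-association “good”? Why is this better? (Figure: two arrangements of the matrix into row groups and column groups, compared with “versus”.) Total Encoding Cost = $\sum_i (n_i^1 + n_i^0)\, H(p_i^1)$ (Code Cost) $+ \sum_i \left[\text{cost of describing } n_i^1, n_i^0 \text{ and groups}\right]$ (Description Cost); the slide marks the cost as “low”. 9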

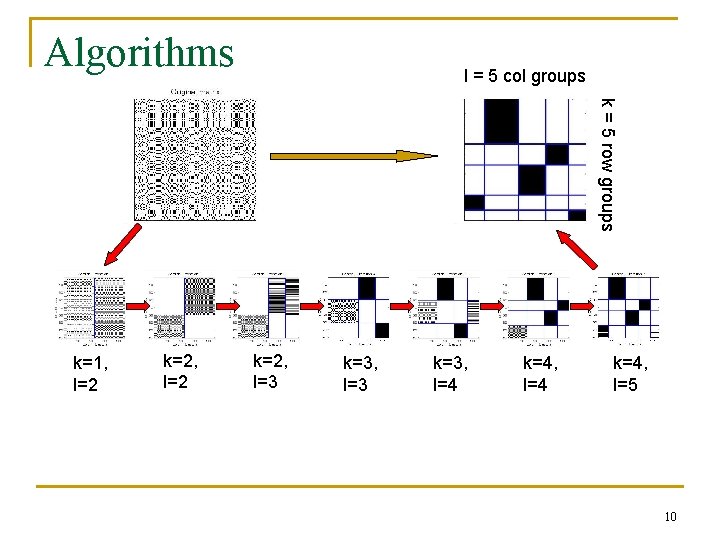

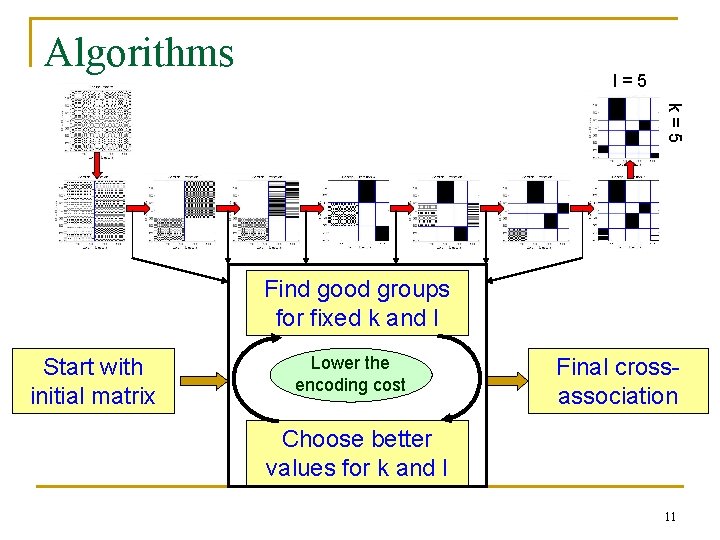

Algorithms (Figure: the search passes through k=1, l=2; k=2, l=3; k=3, l=4; k=4, l=5; the result shown has k = 5 row groups and l = 5 col groups.) 10

Algorithms: Start with initial matrix → Find good groups for fixed k and l ⇄ Choose better values for k and l (lower the encoding cost) → Final cross-association. (Figure: k=5, l=5.) 11

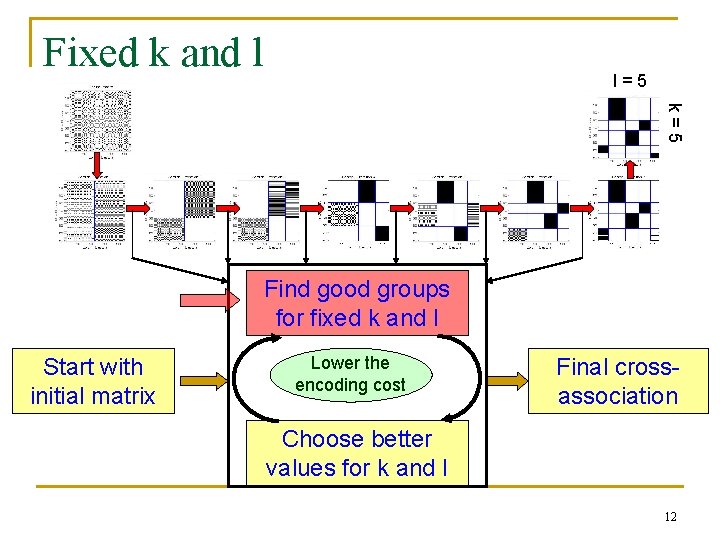

Fixed k and l: Start with initial matrix → Find good groups for fixed k and l ⇄ Choose better values for k and l (lower the encoding cost) → Final cross-association. (Figure: k=5, l=5.) 12

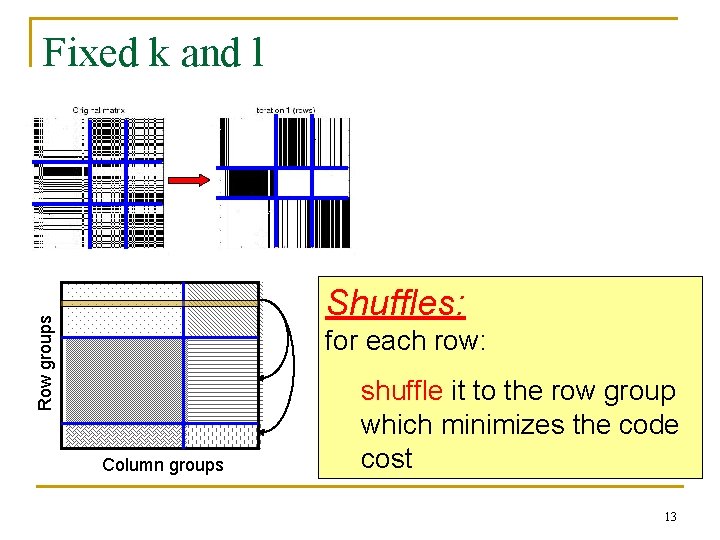

Fixed k and l. Shuffles: for each row, shuffle it to the row group which minimizes the code cost. (Figure labels: Row groups, Column groups.) 13
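
One way to sketch a single pass of row shuffles is shown below. It assumes that "the code cost" of placing a row in a group is the number of bits needed to encode that row using the group's current block densities; the slide does not give this detail. The names `shuffle_rows`, `block_densities` and `bits` are hypothetical.

```python
import math

def block_densities(matrix, row_group, col_group, k, l):
    """Fraction of ones in each (row group, column group) block."""
    ones = [[0] * l for _ in range(k)]
    size = [[0] * l for _ in range(k)]
    for r, row in enumerate(matrix):
        for c, value in enumerate(row):
            ones[row_group[r]][col_group[c]] += value
            size[row_group[r]][col_group[c]] += 1
    return [[ones[a][b] / size[a][b] if size[a][b] else 0.0 for b in range(l)]
            for a in range(k)]

def bits(count, prob):
    """Bits needed to encode `count` symbols that each have probability `prob`."""
    if count == 0:
        return 0.0
    if prob <= 0.0:
        return math.inf  # these symbols cannot occur under this group's density
    return -count * math.log2(prob)

def shuffle_rows(matrix, row_group, col_group, k, l):
    """Return new row assignments; column groups and densities stay fixed."""
    dens = block_densities(matrix, row_group, col_group, k, l)
    new_group = []
    for row in matrix:
        row_ones = [0] * l   # ones of this row inside each column group
        row_size = [0] * l   # cells of this row inside each column group
        for c, value in enumerate(row):
            row_ones[col_group[c]] += value
            row_size[col_group[c]] += 1
        costs = [sum(bits(row_ones[b], dens[a][b]) +
                     bits(row_size[b] - row_ones[b], 1.0 - dens[a][b])
                     for b in range(l))
                 for a in range(k)]
        new_group.append(min(range(k), key=costs.__getitem__))
    return new_group

matrix = [[1, 1, 0, 0],
          [1, 1, 0, 0],
          [0, 0, 1, 1],
          [0, 0, 1, 1]]
# Row 1 starts in the wrong group and is moved next to row 0.
print(shuffle_rows(matrix, [0, 1, 1, 1], [0, 0, 1, 1], 2, 2))  # [0, 0, 1, 1]
```

All rows are scored against the densities computed before the pass, so the result does not depend on the order in which rows are visited.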

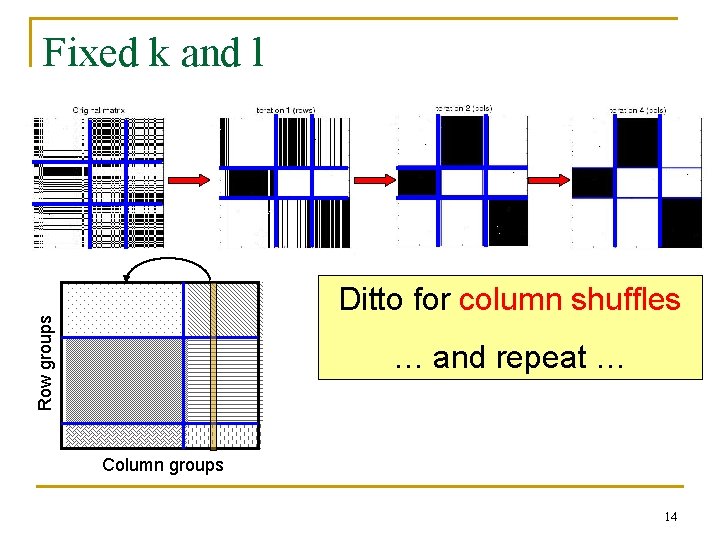

Fixed k and l. Ditto for column shuffles … and repeat … (Figure labels: Row groups, Column groups.) 14
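
The alternation can be sketched as follows, under two assumptions not stated on the slide: a column shuffle is treated as a row shuffle on the transposed matrix, and the loop stops when a full round changes nothing (with a hypothetical `max_rounds` cap). The names `shuffle`, `transpose` and `alternate` are illustrative.

```python
import math

def _bits(count, prob):
    """Bits needed to encode `count` symbols that each have probability `prob`."""
    if count == 0:
        return 0.0
    if prob <= 0.0:
        return math.inf
    return -count * math.log2(prob)

def shuffle(matrix, line_group, cross_group, k, l):
    """One pass over the rows of `matrix`: move each row to its cheapest group.

    `line_group` assigns rows to k groups; `cross_group` assigns columns to l groups.
    """
    ones = [[0] * l for _ in range(k)]
    size = [[0] * l for _ in range(k)]
    for r, row in enumerate(matrix):
        for c, value in enumerate(row):
            ones[line_group[r]][cross_group[c]] += value
            size[line_group[r]][cross_group[c]] += 1
    dens = [[ones[a][b] / size[a][b] if size[a][b] else 0.0 for b in range(l)]
            for a in range(k)]
    result = []
    for row in matrix:
        row_ones = [0] * l
        row_size = [0] * l
        for c, value in enumerate(row):
            row_ones[cross_group[c]] += value
            row_size[cross_group[c]] += 1
        costs = [sum(_bits(row_ones[b], dens[a][b]) +
                     _bits(row_size[b] - row_ones[b], 1.0 - dens[a][b])
                     for b in range(l))
                 for a in range(k)]
        result.append(min(range(k), key=costs.__getitem__))
    return result

def transpose(matrix):
    return [list(column) for column in zip(*matrix)]

def alternate(matrix, row_group, col_group, k, l, max_rounds=20):
    """Alternate row and column shuffles until the groups stop changing."""
    for _ in range(max_rounds):
        new_rows = shuffle(matrix, row_group, col_group, k, l)
        # Columns of the matrix are rows of its transpose, with roles swapped.
        new_cols = shuffle(transpose(matrix), col_group, new_rows, l, k)
        if new_rows == row_group and new_cols == col_group:
            break
        row_group, col_group = new_rows, new_cols
    return row_group, col_group

matrix = [[1, 1, 0, 0],
          [1, 1, 0, 0],
          [0, 0, 1, 1],
          [0, 0, 1, 1]]
print(alternate(matrix, [0, 1, 1, 1], [0, 1, 1, 1], 2, 2))
# ([0, 0, 1, 1], [0, 0, 1, 1])
```

Reusing one routine for both axes keeps the sketch short; a real implementation would likely avoid rebuilding the transpose on every round.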

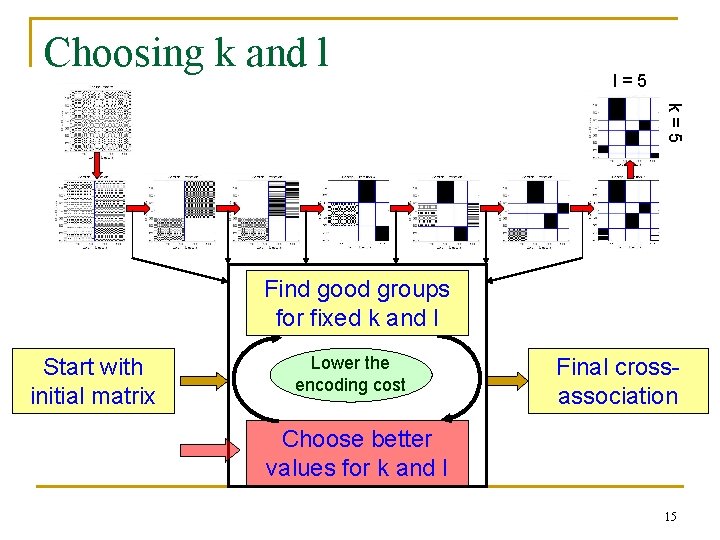

Choosing k and l: Start with initial matrix → Find good groups for fixed k and l ⇄ Choose better values for k and l (lower the encoding cost) → Final cross-association. (Figure: k=5, l=5.) 15

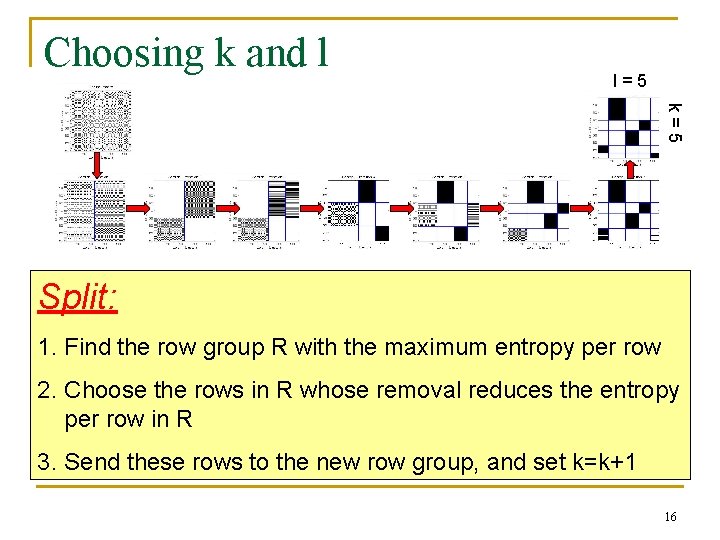

Choosing k and l. Split: 1. Find the row group R with the maximum entropy per row 2. Choose the rows in R whose removal reduces the entropy per row in R 3. Send these rows to the new row group, and set k=k+1. (Figure: k=5, l=5.) 16
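
The three split steps can be sketched as below. Two assumptions go beyond the slide: "entropy per row" is taken to be the code cost of the blocks spanned by a row group divided by its number of rows, and each row is tested on its own against the original group R. The names `entropy_per_row` and `split_rows` are hypothetical.

```python
import math

def binary_entropy(p):
    """Shannon entropy in bits of a 0/1 source with P(1) = p."""
    if p <= 0.0 or p >= 1.0:
        return 0.0
    return -p * math.log2(p) - (1.0 - p) * math.log2(1.0 - p)

def entropy_per_row(matrix, rows, col_group, l):
    """Code cost of the blocks spanned by `rows`, divided by len(rows)."""
    if not rows:
        return 0.0
    ones = [0] * l
    size = [0] * l
    for r in rows:
        for c, value in enumerate(matrix[r]):
            ones[col_group[c]] += value
            size[col_group[c]] += 1
    cost = sum(size[b] * binary_entropy(ones[b] / size[b])
               for b in range(l) if size[b])
    return cost / len(rows)

def split_rows(matrix, row_group, col_group, k, l):
    """Return (new row assignments, new k) after one row split."""
    members = [[r for r, g in enumerate(row_group) if g == a] for a in range(k)]
    scores = [entropy_per_row(matrix, members[a], col_group, l) for a in range(k)]
    # Step 1: the row group R with the maximum entropy per row.
    worst = max(range(k), key=scores.__getitem__)
    new_group = list(row_group)
    moved = False
    for r in members[worst]:
        rest = [x for x in members[worst] if x != r]
        # Step 2: does removing this row lower the entropy per row of R?
        if entropy_per_row(matrix, rest, col_group, l) < scores[worst]:
            new_group[r] = k  # Step 3: send it to the new group (index k)
            moved = True
    return new_group, (k + 1 if moved else k)

matrix = [[1, 1, 0, 0],
          [1, 1, 0, 0],
          [1, 1, 0, 0],
          [0, 0, 1, 1]]
# The last row is the odd one out, so it alone moves to the new group.
print(split_rows(matrix, [0, 0, 0, 0], [0, 0, 1, 1], 1, 2))  # ([0, 0, 0, 1], 2)
```

The sketch leaves k unchanged when no row qualifies, which is a design choice made here so that an empty group is never created.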

Choosing k and l. Split: Similar for column groups too. (Figure: k=5, l=5.) 17

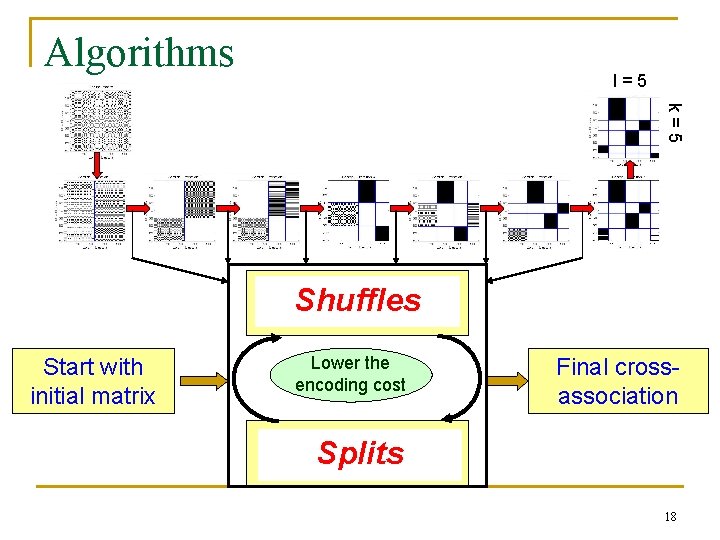

Algorithms: Start with initial matrix → Find good groups for fixed k and l (Shuffles) ⇄ Choose better values for k and l (Splits), lowering the encoding cost → Final cross-association. (Figure: k=5, l=5.) 18

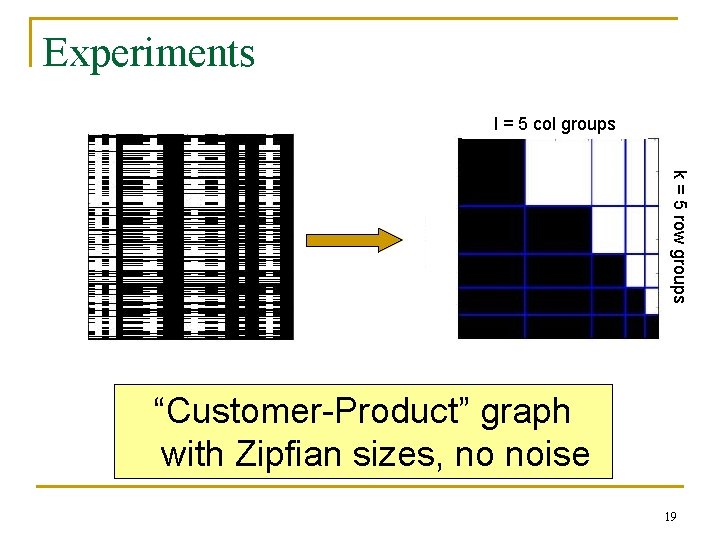

Experiments: “Customer-Product” graph with Zipfian sizes, no noise: k = 5 row groups, l = 5 col groups 19

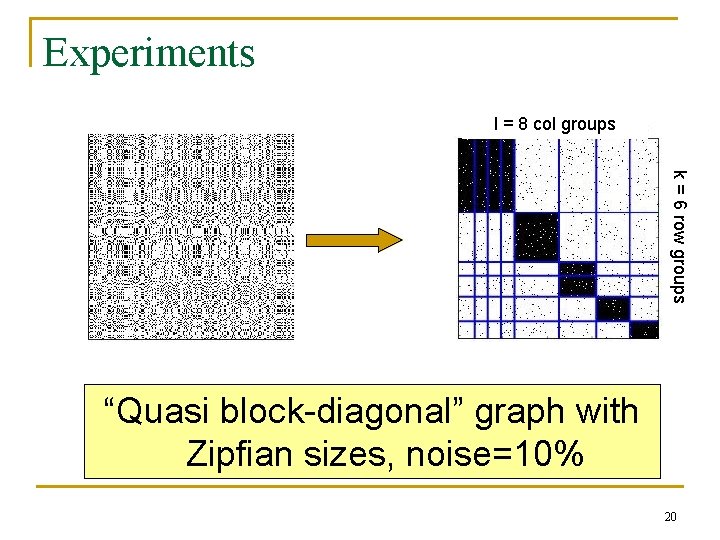

Experiments: “Quasi block-diagonal” graph with Zipfian sizes, noise=10%: k = 6 row groups, l = 8 col groups 20

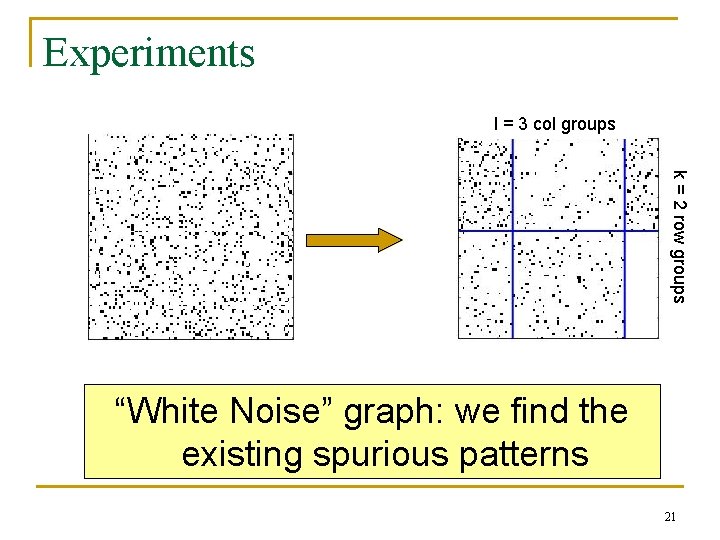

Experiments: “White Noise” graph, k = 2 row groups, l = 3 col groups: we find the existing spurious patterns 21

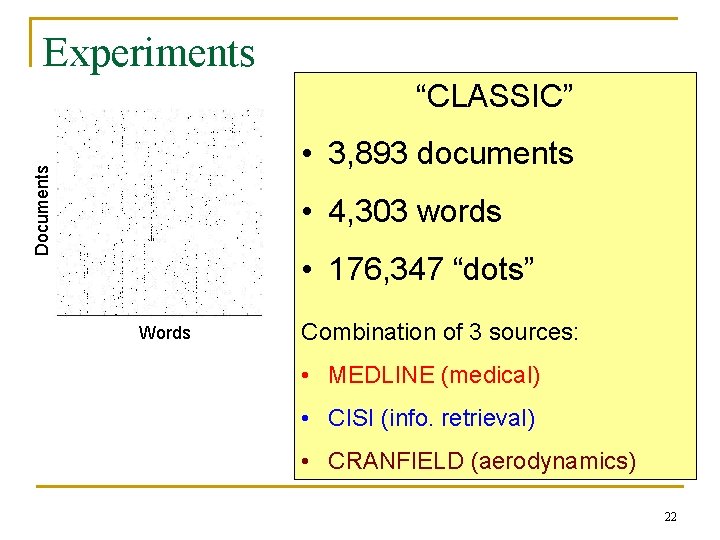

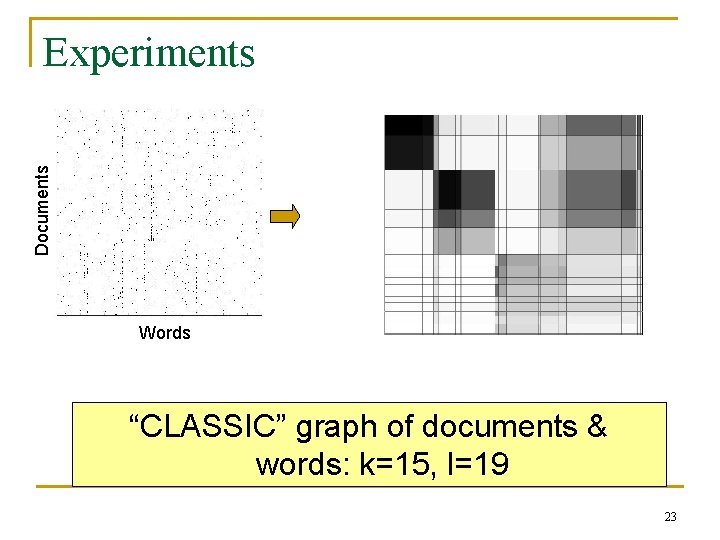

Experiments: “CLASSIC” • 3,893 documents • 4,303 words • 176,347 “dots”. Combination of 3 sources: • MEDLINE (medical) • CISI (info. retrieval) • CRANFIELD (aerodynamics). (Figure axes: Documents, Words.) 22

Experiments: “CLASSIC” graph of documents & words: k=15, l=19. (Figure axes: Documents, Words.) 23

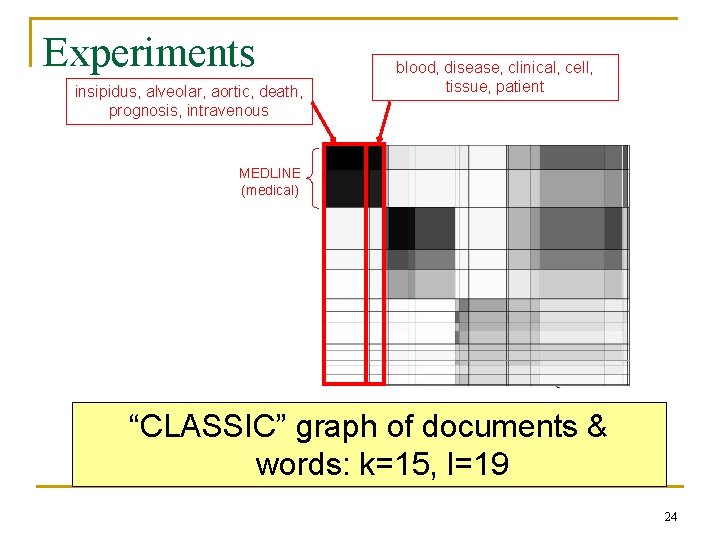

Experiments: “CLASSIC” graph of documents & words: k=15, l=19. Words shown: • insipidus, alveolar, aortic, death, prognosis, intravenous • blood, disease, clinical, cell, tissue, patient. Sources labeled: MEDLINE (medical). 24

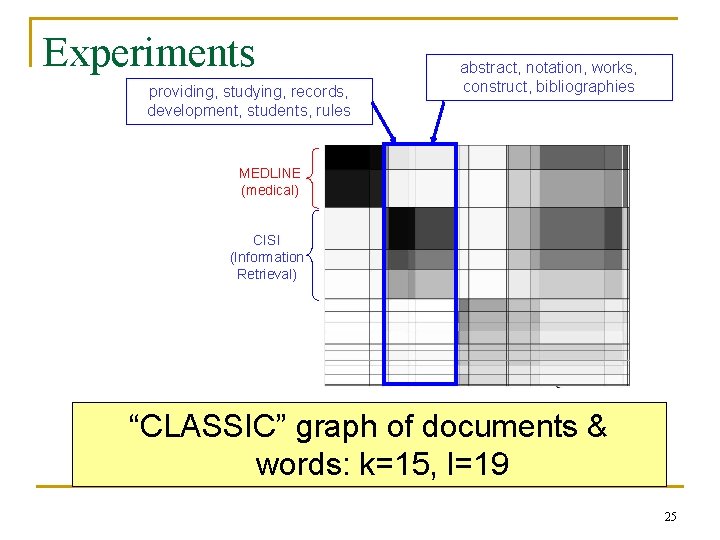

Experiments: “CLASSIC” graph of documents & words: k=15, l=19. Words shown: • providing, studying, records, development, students, rules • abstract, notation, works, construct, bibliographies. Sources labeled: MEDLINE (medical), CISI (Information Retrieval). 25

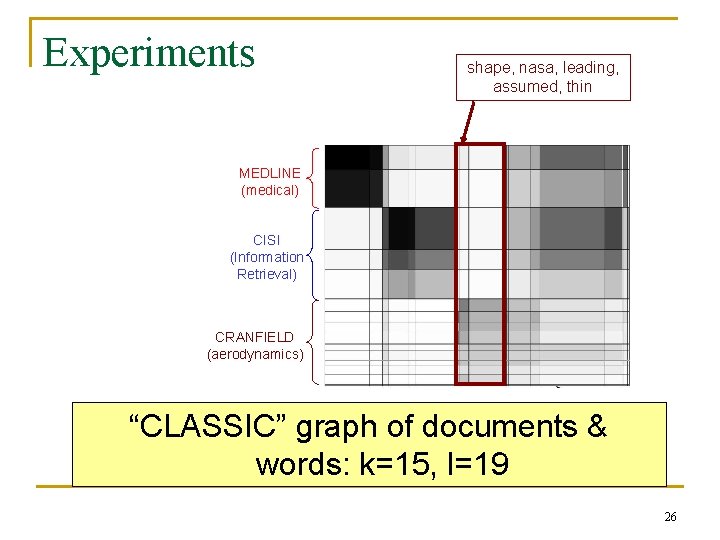

Experiments: “CLASSIC” graph of documents & words: k=15, l=19. Words shown: • shape, nasa, leading, assumed, thin. Sources labeled: MEDLINE (medical), CISI (Information Retrieval), CRANFIELD (aerodynamics). 26

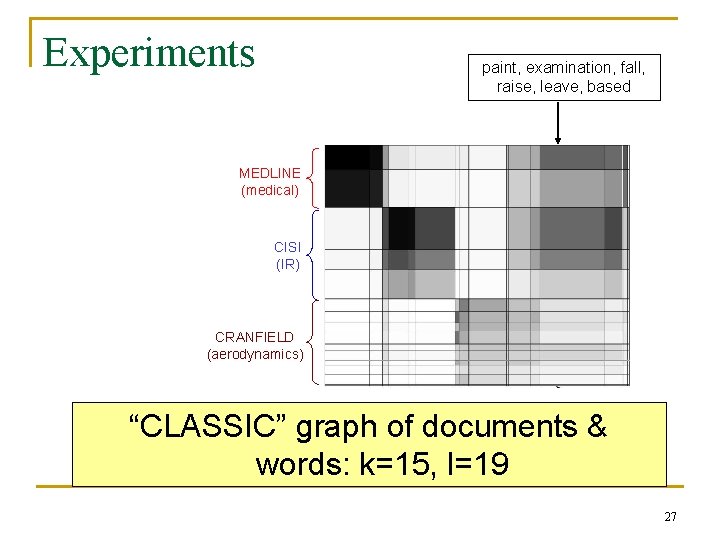

Experiments: “CLASSIC” graph of documents & words: k=15, l=19. Words shown: • paint, examination, fall, raise, leave, based. Sources labeled: MEDLINE (medical), CISI (IR), CRANFIELD (aerodynamics). 27

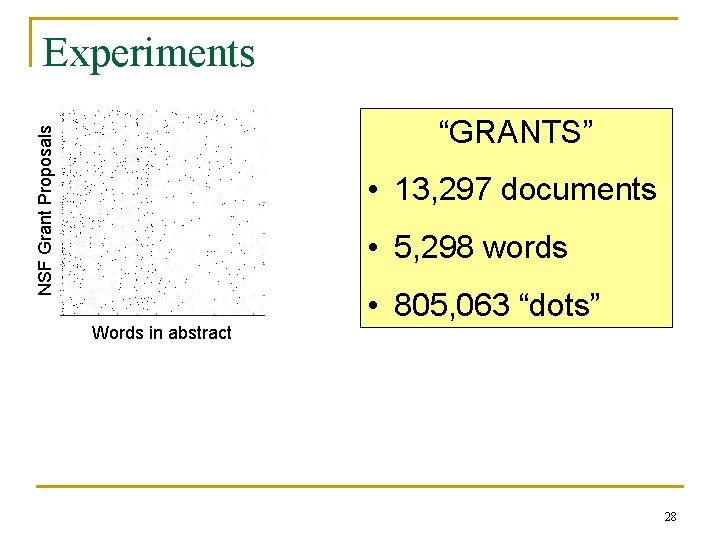

Experiments: “GRANTS” • 13,297 documents • 5,298 words • 805,063 “dots”. (Figure axes: NSF Grant Proposals, Words in abstract.) 28

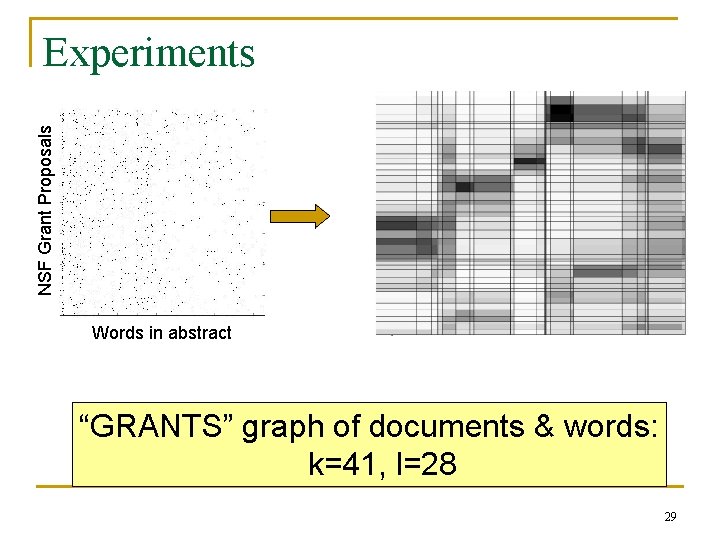

Experiments: “GRANTS” graph of documents & words: k=41, l=28. (Figure axes: NSF Grant Proposals, Words in abstract.) 29

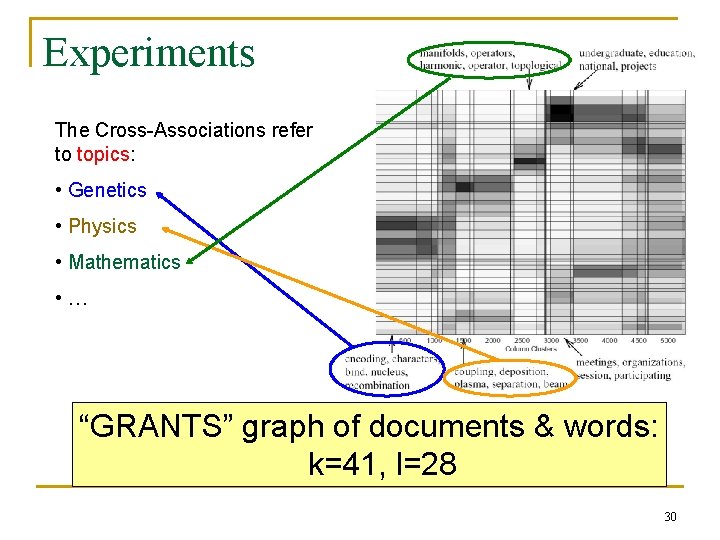

Experiments: “GRANTS” graph of documents & words: k=41, l=28. The cross-associations refer to topics: • Genetics • Physics • Mathematics • … 30

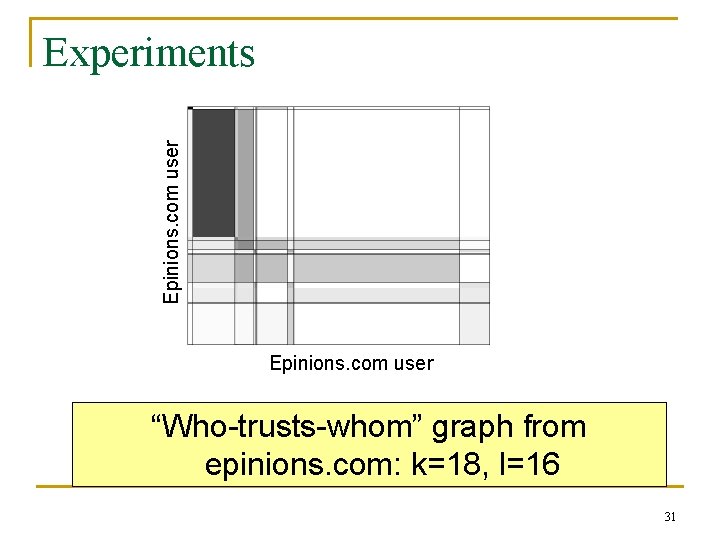

Experiments: “Who-trusts-whom” graph from epinions.com: k=18, l=16. (Figure axes: Epinions.com user, Epinions.com user.) 31

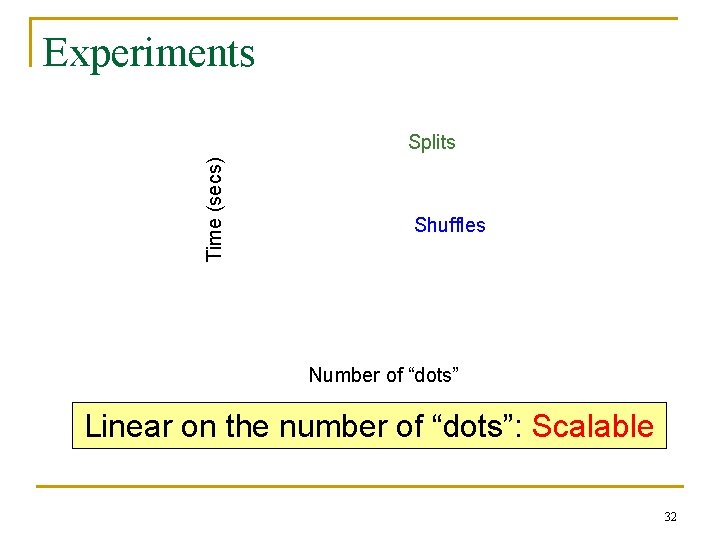

Experiments: Linear on the number of “dots”: Scalable. (Plot: Time (secs) versus Number of “dots”, with series for Splits and Shuffles.) 32

Conclusions. Desiderata: ✓ Simultaneously discover row and column groups ✓ Fully Automatic: No “magic numbers” ✓ Scalable to large matrices 33

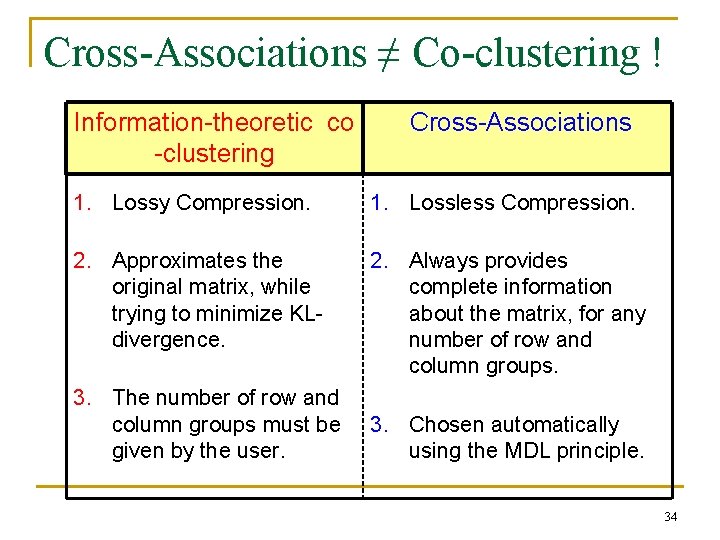

Cross-Associations ≠ Co-clustering! 34

| Information-theoretic co-clustering | Cross-Associations |
|---|---|
| 1. Lossy compression. | 1. Lossless compression. |
| 2. Approximates the original matrix, while trying to minimize KL-divergence. | 2. Always provides complete information about the matrix, for any number of row and column groups. |
| 3. The number of row and column groups must be given by the user. | 3. Chosen automatically using the MDL principle. |

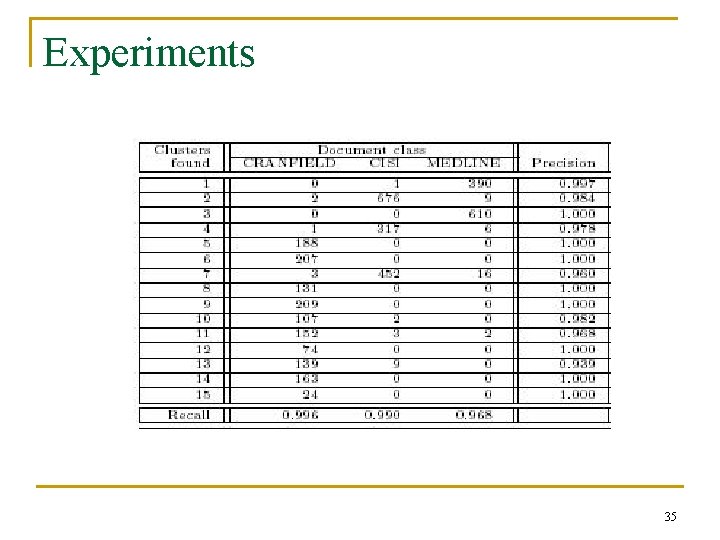

Experiments 35

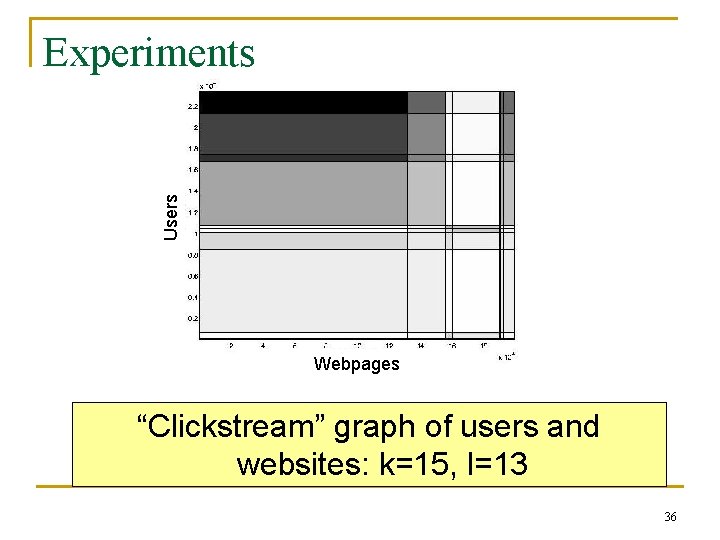

Experiments: “Clickstream” graph of users and websites: k=15, l=13. (Figure axes: Users, Webpages.) 36

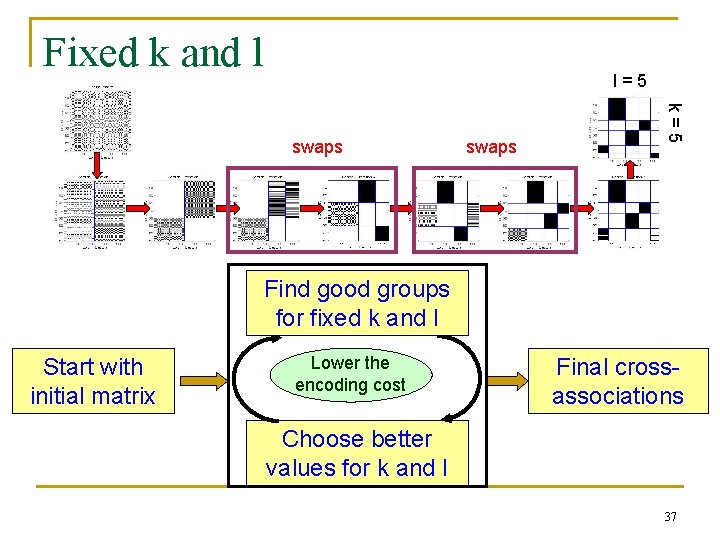

Fixed k and l: Start with initial matrix → Find good groups for fixed k and l ⇄ Choose better values for k and l (lower the encoding cost) → Final cross-associations. (Figure labels: k=5, l=5, swaps.) 37

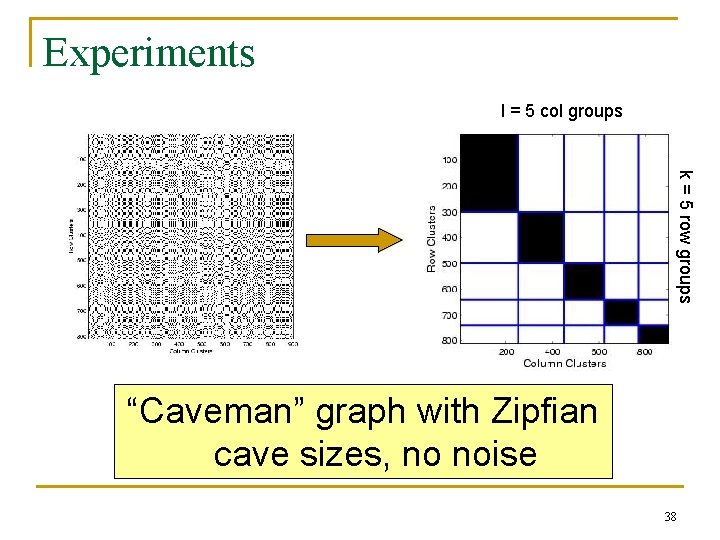

Experiments: “Caveman” graph with Zipfian cave sizes, no noise: k = 5 row groups, l = 5 col groups 38

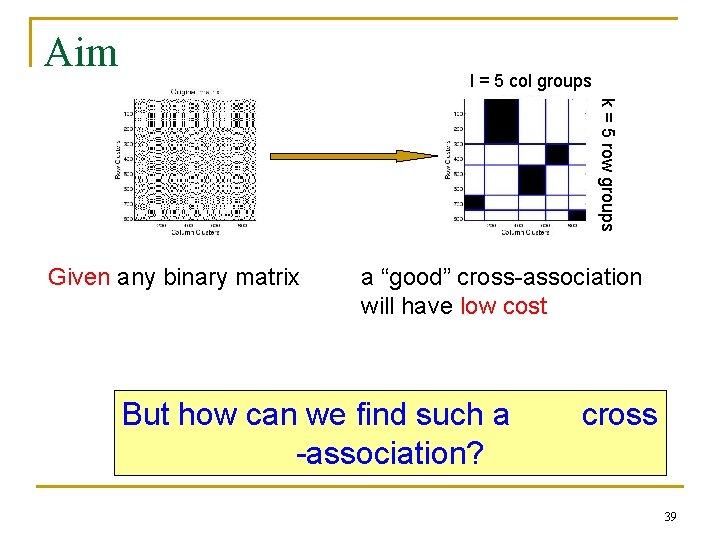

Aim: Given any binary matrix, a “good” cross-association will have low cost. But how can we find such a cross-association? (Figure: k = 5 row groups, l = 5 col groups.) 39

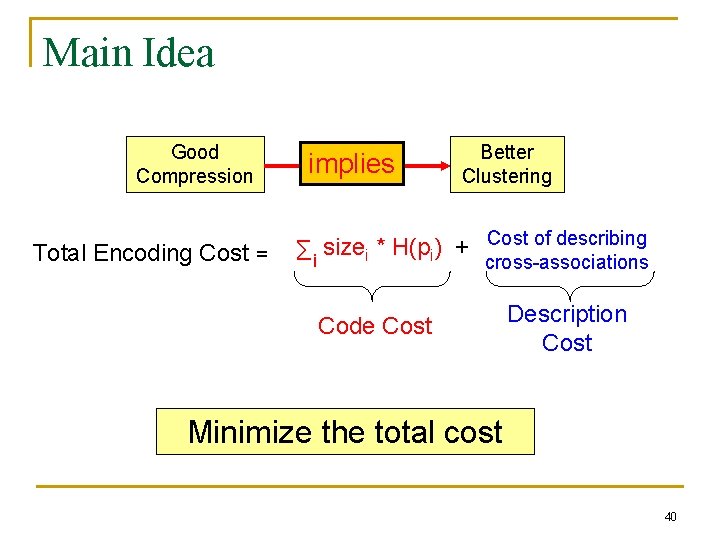

Main Idea: Good Compression implies Better Clustering. Total Encoding Cost = $\sum_i \text{size}_i \, H(p_i)$ (Code Cost) + cost of describing cross-associations (Description Cost). Minimize the total cost. 40

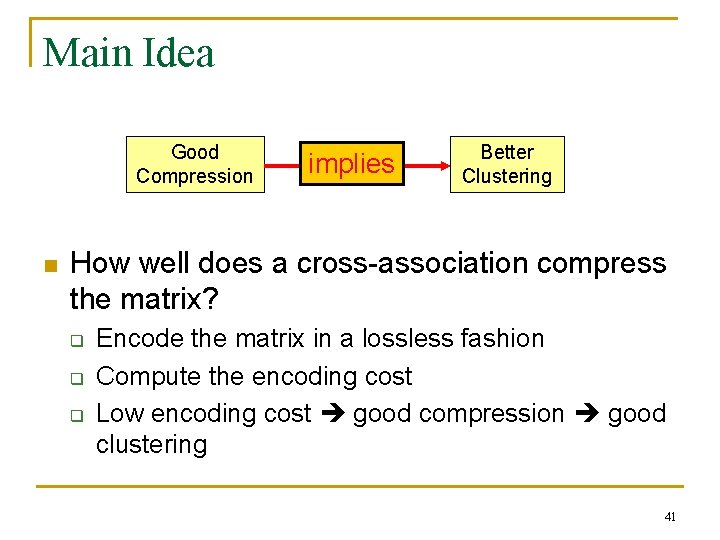

Main Idea: Good Compression implies Better Clustering. How well does a cross-association compress the matrix? • Encode the matrix in a lossless fashion • Compute the encoding cost • Low encoding cost ⇒ good compression ⇒ good clustering 41

- Slides: 41