Mining Stream and Graph Data
Spiros Papadimitriou, IBM T. J. Watson Research Center

Overview @ 12,500 ft
- Data mining: finding patterns / rules / trends in large datasets
- This talk:
  - Numerical streams
  - Graphs and graph streams

Streams and mining
- Streams arise in many application domains, for example:
  - Sensor networks
  - Network monitoring/analysis
  - Pervasive healthcare
  - Environmental monitoring
  - Social interactions

Convert raw “data” into concise “patterns”, on the fly.

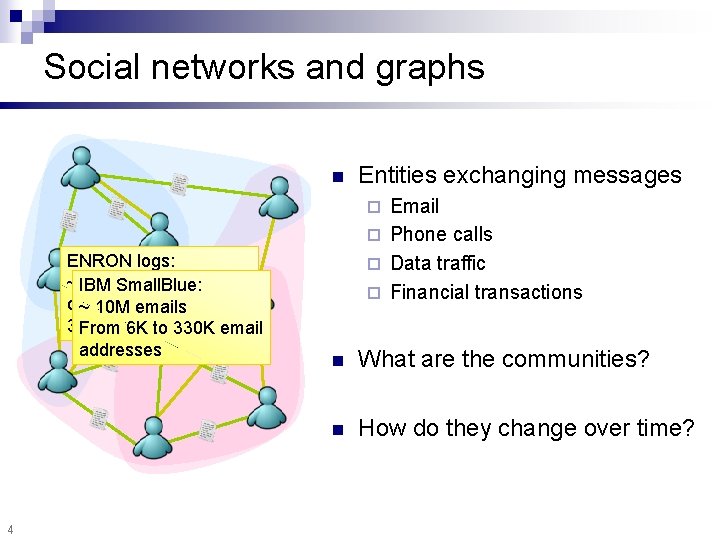

Social networks and graphs
- Entities exchanging messages: email, phone calls, data traffic, financial transactions
- ENRON logs: ~2M emails, 165 weeks, 34K email addresses
- IBM SmallBlue: over ~10M emails, from 6K addresses to 330K email addresses
- What are the communities?
- How do they change over time?

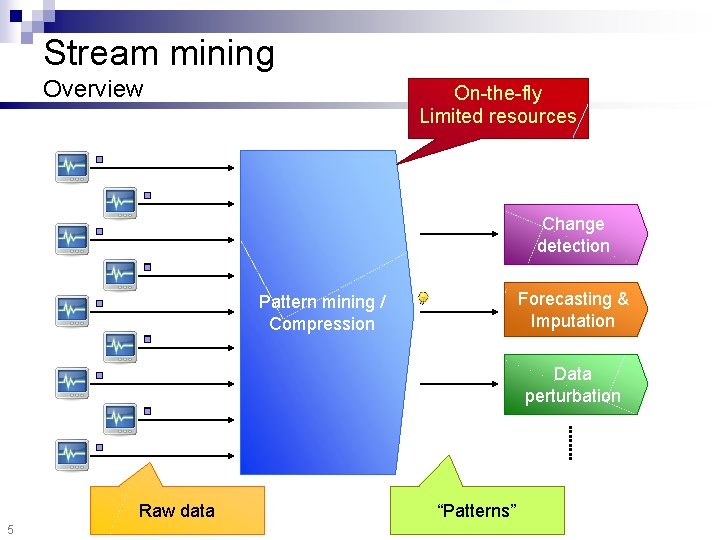

Stream mining overview (figure: raw data processed on the fly, with limited resources, into “patterns”)
- Change detection
- Forecasting & imputation
- Pattern mining / compression
- Data perturbation

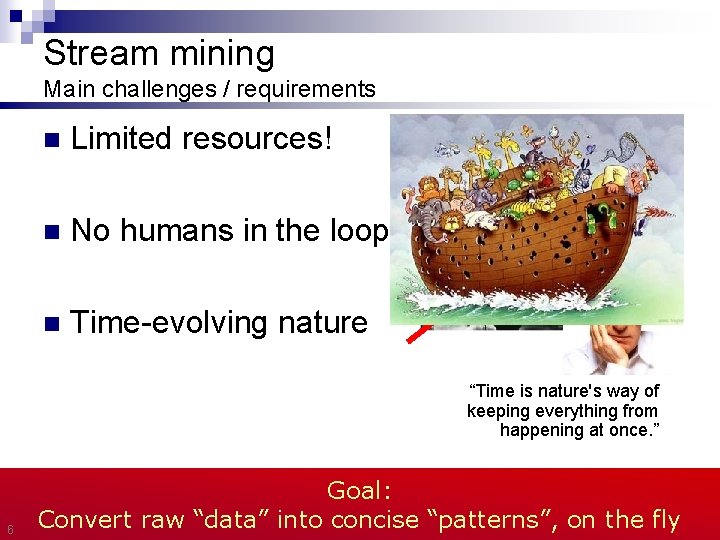

Stream mining: main challenges / requirements
- Limited resources!
- No humans in the loop
- Time-evolving nature: “Time is nature's way of keeping everything from happening at once.”

Goal: convert raw “data” into concise “patterns”, on the fly.

Outline
- Mining numerical streams
- Mining graph streams
- Conclusions

Motivation
- Several settings where many deployed sensors measure some quantity, e.g.:
  - Traffic in a network
  - Temperatures in a large building
  - Chlorine concentration in a water distribution network
- Values are typically correlated

It would be very useful if we could summarize them on the fly.

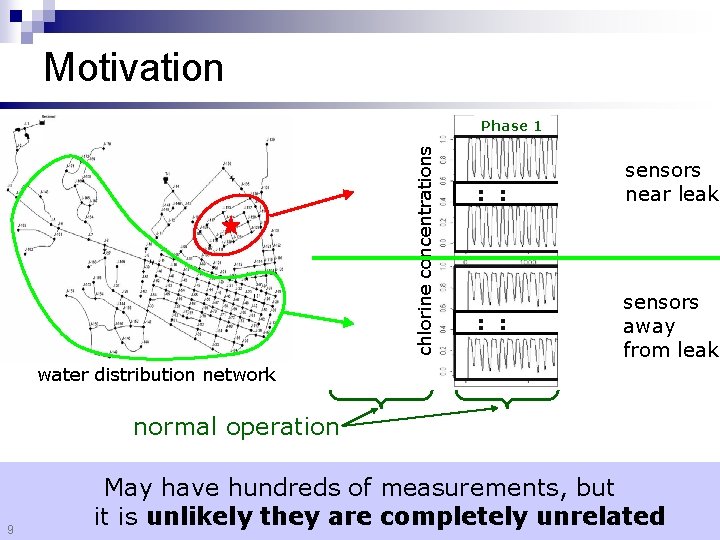

Motivation (figure: chlorine concentrations in a water distribution network, for sensors near the leak and sensors away from the leak; Phase 1: normal operation)
We may have hundreds of measurements, but they are unlikely to be completely unrelated.

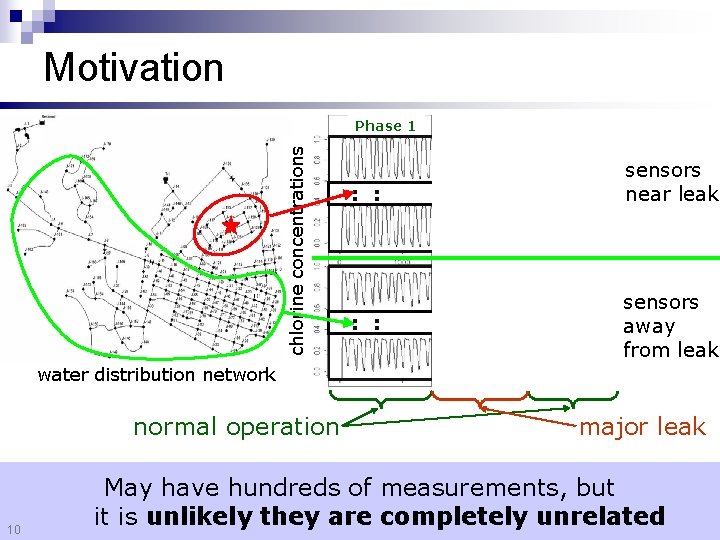

Motivation (figure: chlorine concentrations in a water distribution network, for sensors near the leak and sensors away from the leak; Phase 1: normal operation; Phase 2: major leak)
We may have hundreds of measurements, but they are unlikely to be completely unrelated.

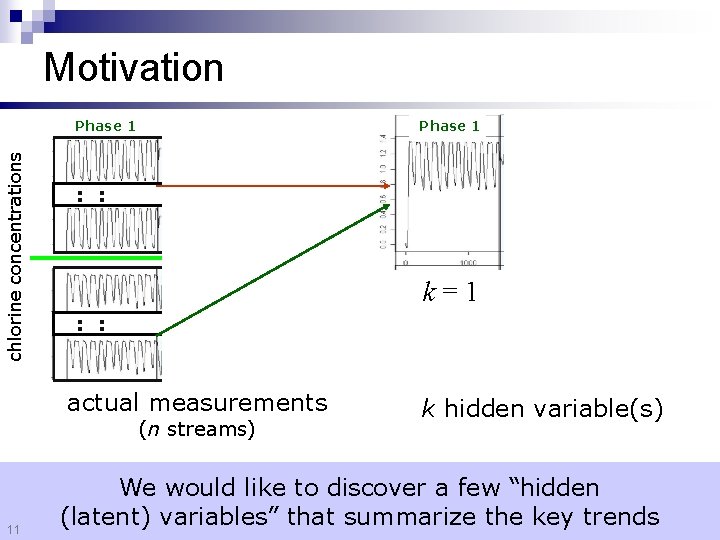

Motivation (figure: chlorine concentrations in Phase 1; n actual measurement streams summarized by k = 1 hidden variable)
We would like to discover a few “hidden (latent) variables” that summarize the key trends.

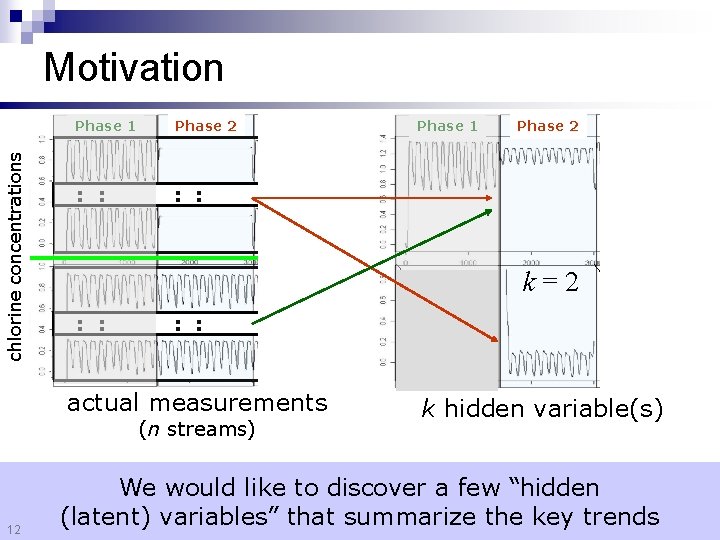

Motivation (figure: chlorine concentrations in Phases 1 and 2; n actual measurement streams summarized by k = 2 hidden variables)
We would like to discover a few “hidden (latent) variables” that summarize the key trends.

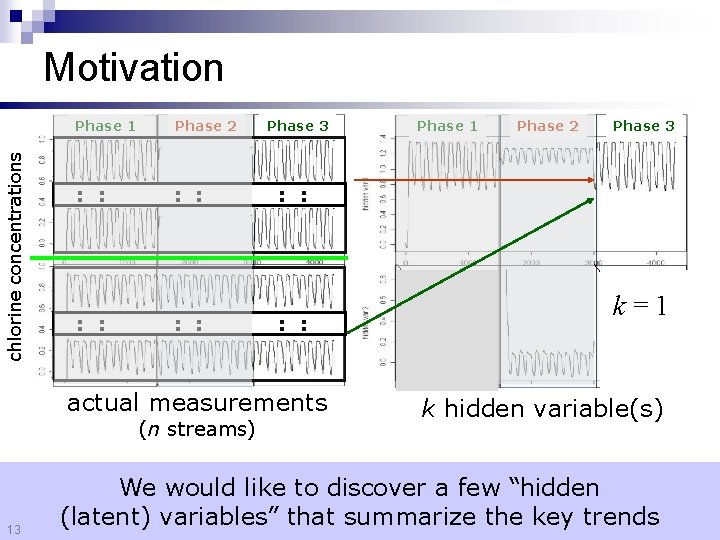

Motivation (figure: chlorine concentrations in Phases 1 to 3; n actual measurement streams summarized by k hidden variable(s), with k changing between phases)
We would like to discover a few “hidden (latent) variables” that summarize the key trends.

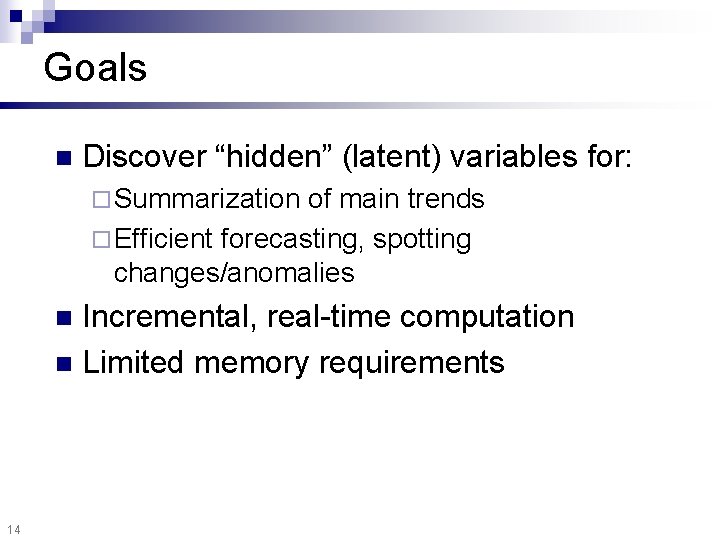

Goals
- Discover “hidden” (latent) variables for:
  - Summarization of main trends
  - Efficient forecasting, spotting changes/anomalies
- Incremental, real-time computation
- Limited memory requirements


Outline
- Mining numerical streams
  - Stream correlations / definitions [VLDB 05]
  - Forecasting and imputation
  - Experiments
  - Distributed estimation
- Mining graph streams
- Conclusions

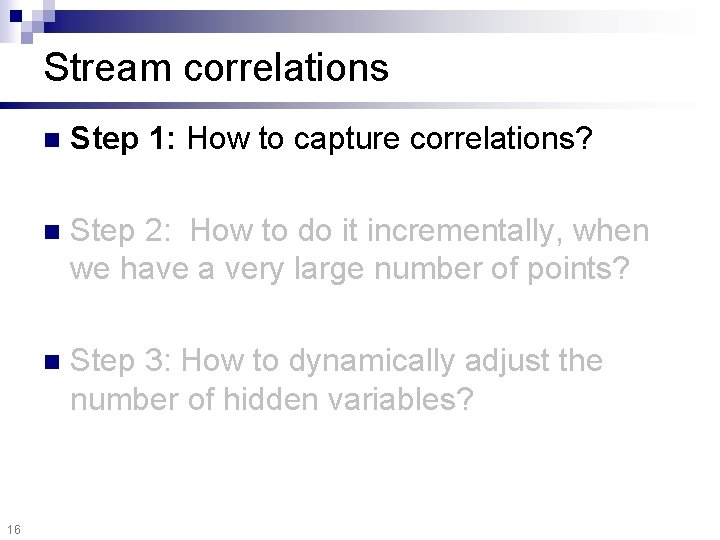

Stream correlations
- Step 1: How to capture correlations?
- Step 2: How to do it incrementally, when we have a very large number of points?
- Step 3: How to dynamically adjust the number of hidden variables?

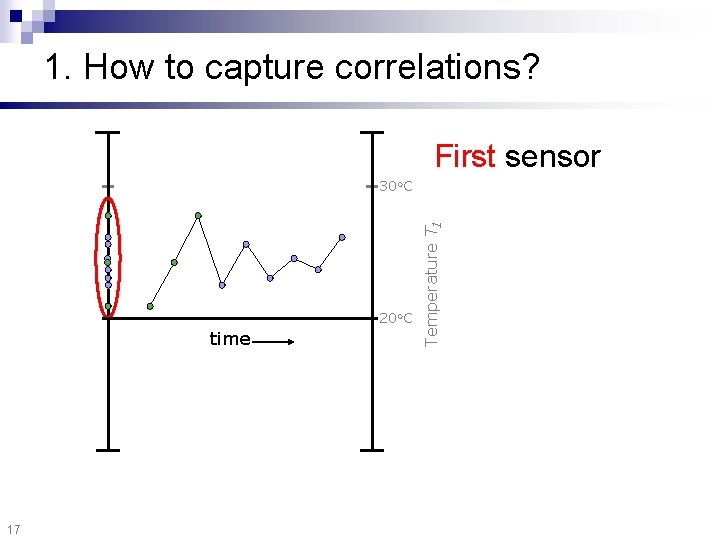

1. How to capture correlations?
(Figure: temperature T1 of the first sensor over time, between 20°C and 30°C.)

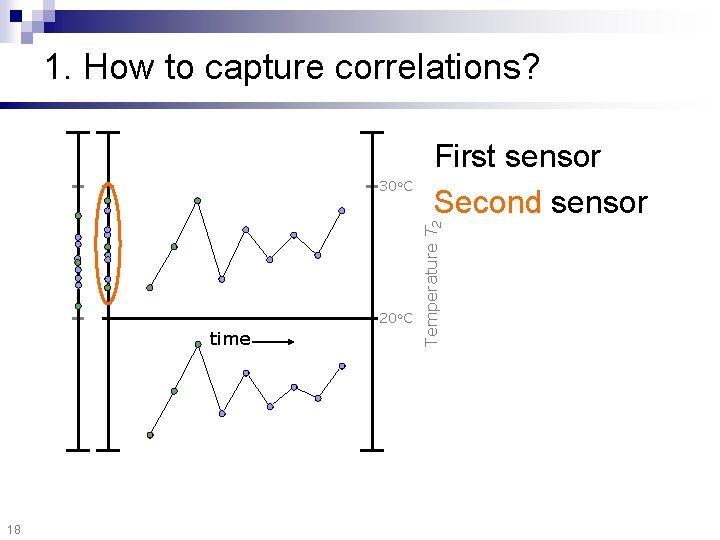

1. How to capture correlations?
(Figure: temperatures T1 and T2 of the first and second sensors over time, between 20°C and 30°C.)

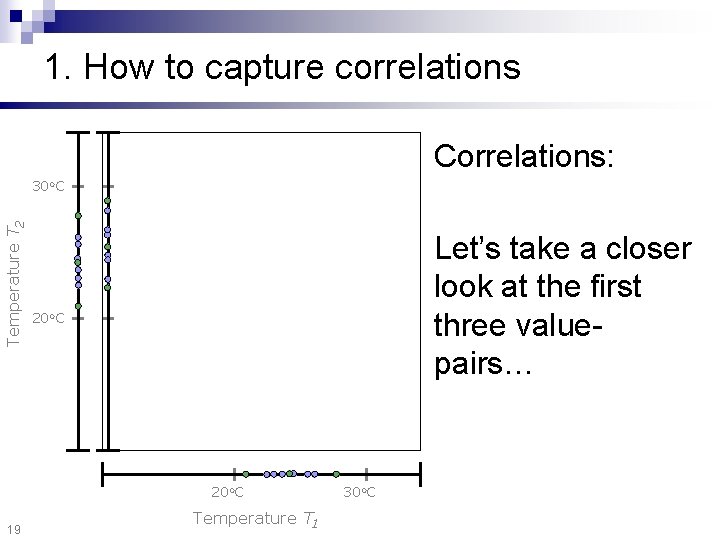

1. How to capture correlations?
Correlations: let’s take a closer look at the first three value pairs… (figure: scatter plot of T2 vs. T1, both between 20°C and 30°C)

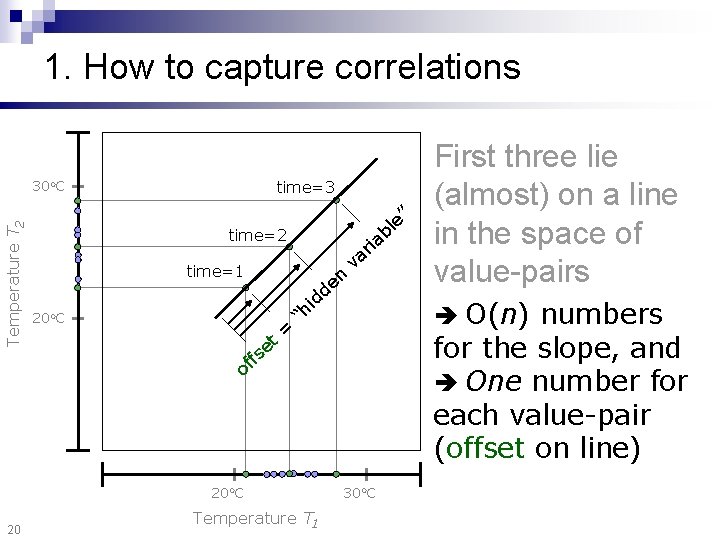

1. How to capture correlations?
The first three value pairs (time = 1, 2, 3) lie (almost) on a line in the space of value pairs (T1, T2). We can describe them with:
- O(n) numbers for the slope, and
- one number for each value pair: its offset on the line, which is the “hidden variable”.

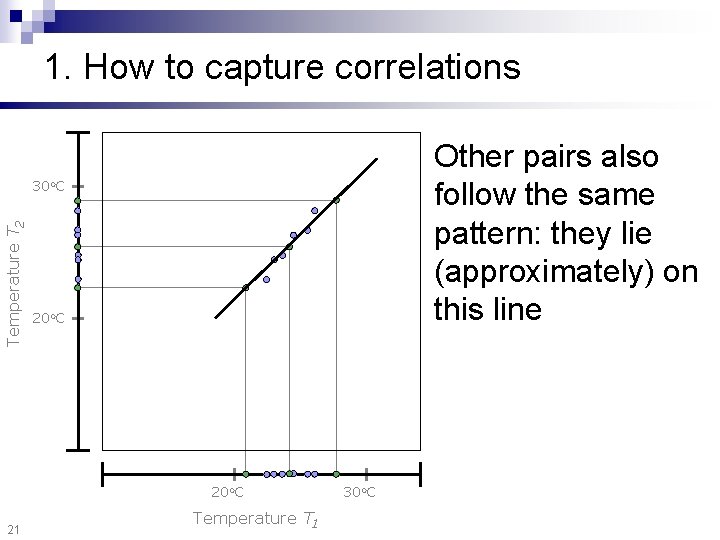

1. How to capture correlations?
Other pairs also follow the same pattern: they lie (approximately) on this line. (figure: T2 vs. T1, both between 20°C and 30°C)


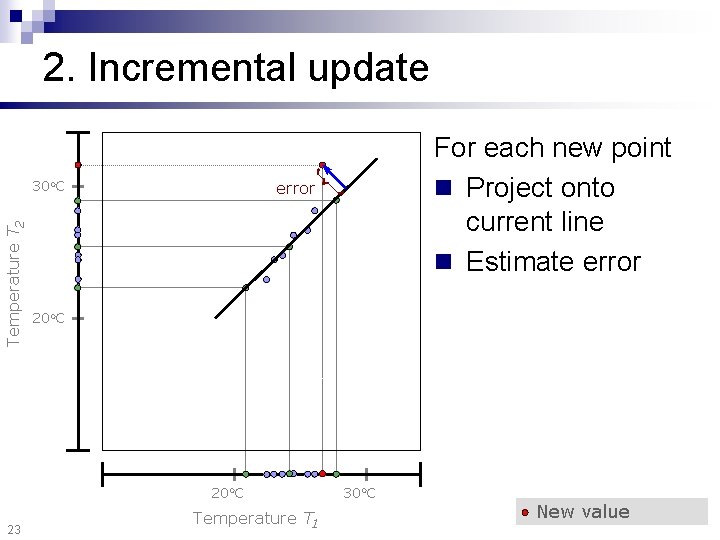


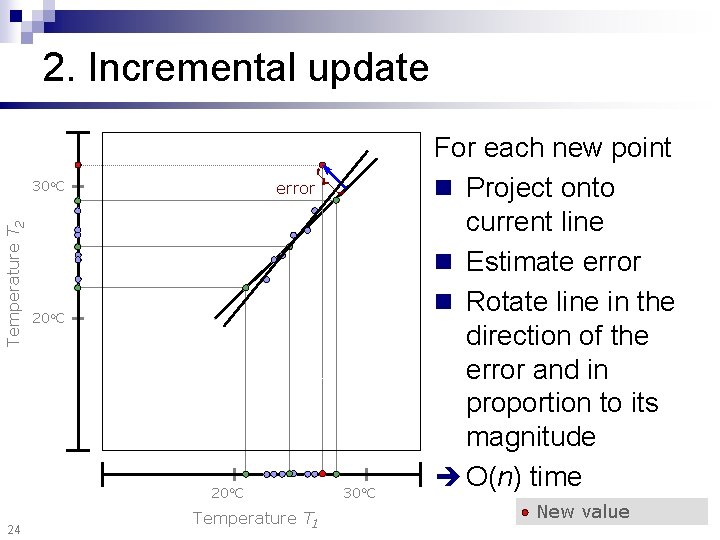

2. Incremental update
For each new point (new value pair):
- Project it onto the current line
- Estimate the error
- Rotate the line in the direction of the error and in proportion to its magnitude

This takes O(n) time per point. (figure: a new value pair in the T2 vs. T1 plane, 20°C to 30°C, and its projection error)

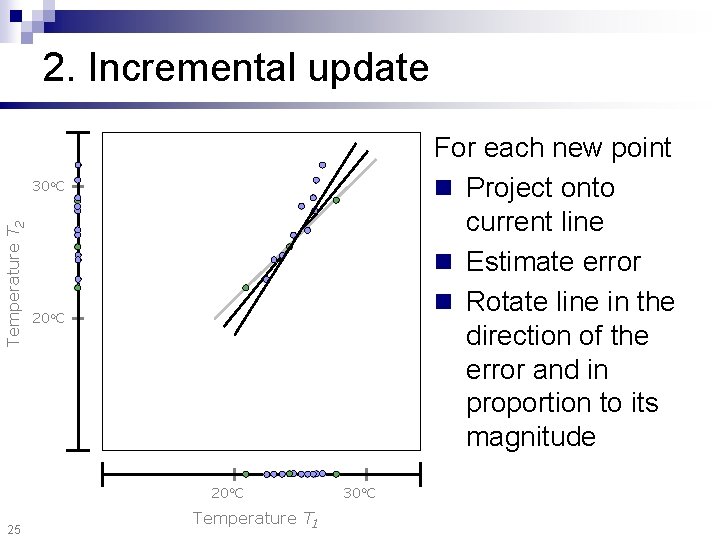


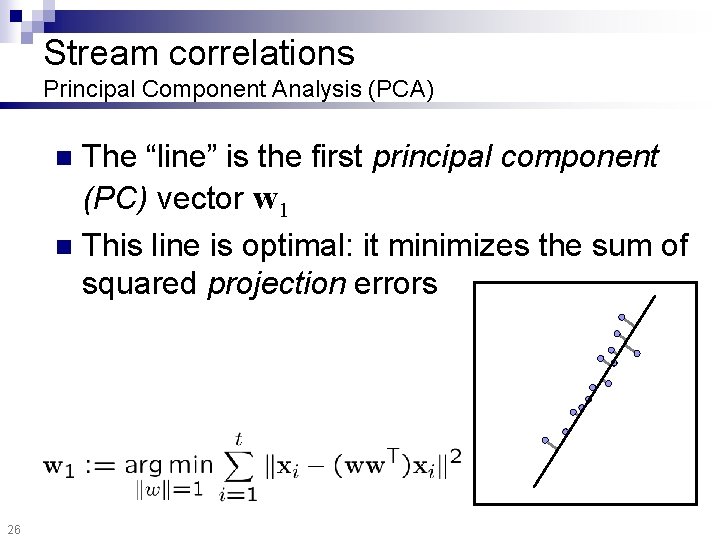

Stream correlations: Principal Component Analysis (PCA)
- The “line” is the first principal component (PC) vector $w_1$
- This line is optimal: it minimizes the sum of squared projection errors
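As a quick check of the optimality claim, the NumPy sketch below (an illustration, not the speaker's code) finds the first principal component with a batch SVD and compares its sum of squared projection errors against other directions. Following the slides, the value pairs are not mean-centered; the helper names `first_pc` and `projection_error` are hypothetical.

```python
import numpy as np

def first_pc(X):
    """Return the first principal direction w_1 (a unit vector) of the rows of X.

    X has shape (t, n): t points (value tuples) in n-dimensional space.
    The data are used as-is (not mean-centered), as on the slides.
    """
    _, _, Vt = np.linalg.svd(X, full_matrices=False)
    return Vt[0]

def projection_error(X, w):
    """Sum of squared distances from the rows of X to the line spanned by w."""
    w = w / np.linalg.norm(w)
    y = X @ w                      # hidden variable: offset of each point on the line
    return float(np.sum((X - np.outer(y, w)) ** 2))

# Two correlated temperature streams, T2 roughly equal to T1
rng = np.random.default_rng(0)
t1 = rng.uniform(20.0, 30.0, size=200)
X = np.column_stack([t1, t1 + rng.normal(0.0, 0.3, size=200)])

w1 = first_pc(X)
print(w1, projection_error(X, w1))
```

Any other direction passed to `projection_error` gives an error at least as large; the batch SVD is used here only as a reference point for the incremental update that follows.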

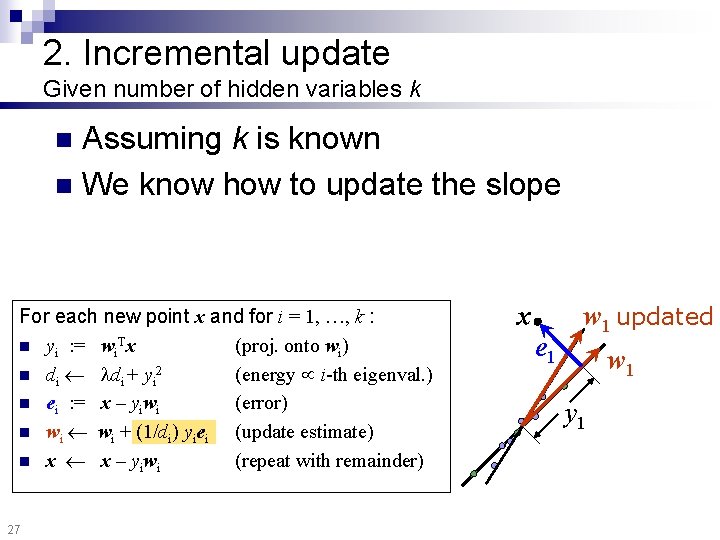

2. Incremental update
Assuming the number of hidden variables k is known, we know how to update the slope. For each new point x and for i = 1, …, k:
- $y_i := w_i^\top x$ (projection onto $w_i$)
- $d_i \leftarrow d_i + y_i^2$ (energy, ∝ i-th eigenvalue)
- $e_i := x - y_i w_i$ (error)
- $w_i \leftarrow w_i + \frac{1}{d_i} y_i e_i$ (update estimate)
- $x \leftarrow x - y_i w_i$ (repeat with remainder)

(figure: point x, its projection $y_1$ onto $w_1$, the error $e_1$, and the updated $w_1$)
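The update rules above translate almost line by line into code. This is a minimal NumPy sketch under our own assumptions: the columns of `W` start as unit vectors, each energy $d_i$ starts at a small positive value so the first division is safe, and the forgetting factor `lam` is an addition not shown on the slide (`lam=1.0` reproduces the rule as written). The function name `incremental_pca_update` is hypothetical.

```python
import numpy as np

def incremental_pca_update(W, d, x, lam=1.0):
    """One incremental update of k hidden-variable directions (in place).

    W:   (n, k) array; column i is the current estimate w_i
    d:   (k,) array of energies d_i (start them at a small positive value)
    x:   (n,) new point
    lam: forgetting factor (assumption; 1.0 gives exactly the slide's rule)
    Returns the k hidden variables y_i for this point.
    """
    x = np.asarray(x, dtype=float).copy()
    y = np.zeros(W.shape[1])
    for i in range(W.shape[1]):
        y[i] = W[:, i] @ x                     # projection onto w_i
        d[i] = lam * d[i] + y[i] ** 2          # energy, proportional to i-th eigenvalue
        e = x - y[i] * W[:, i]                 # error
        W[:, i] += (y[i] / d[i]) * e           # rotate w_i toward the error
        x -= y[i] * W[:, i]                    # repeat with the remainder
    return y

# Two sensors with T2 roughly equal to T1; start from w_1 = [1, 0]
rng = np.random.default_rng(0)
W = np.eye(2, 1)
d = np.full(1, 1e-3)
for t in range(2000):
    s = 25.0 + 5.0 * np.sin(t / 10.0)
    incremental_pca_update(W, d, np.array([s, s]) + rng.normal(0.0, 0.1, 2))
print(W[:, 0])
```

Each call costs O(nk) time, and only `W` and `d` are stored, so memory does not grow with the number of points. With these correlated inputs, $w_1$ should settle along the diagonal direction (T2 ≈ T1) with roughly unit length.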

Stream correlations
- Step 1: How to capture correlations?
- Step 2: How to do it incrementally, when we have a very large number of points?
- Step 3: How to dynamically adjust k, the number of hidden variables?

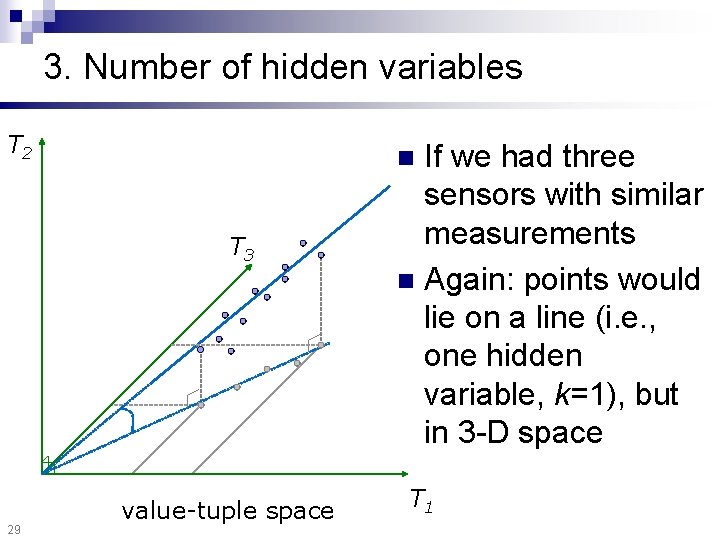

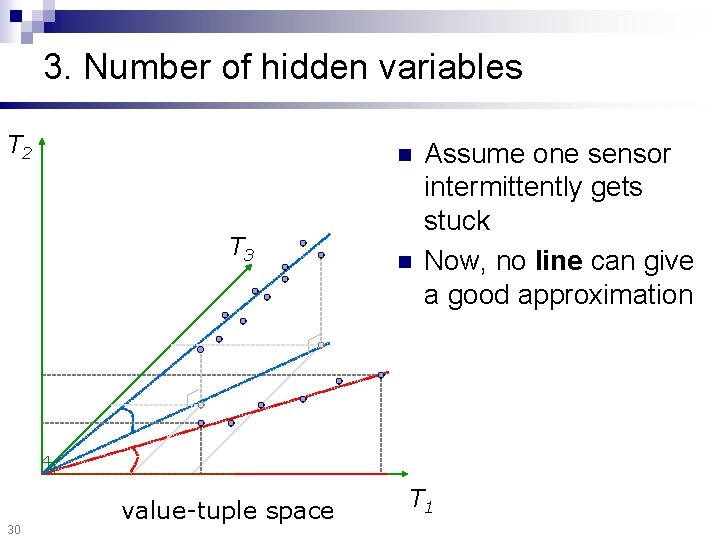

3. Number of hidden variables
If we had three sensors with similar measurements:
- Again, the points would lie on a line (i.e., one hidden variable, k = 1), but in the 3-D value-tuple space (T1, T2, T3)

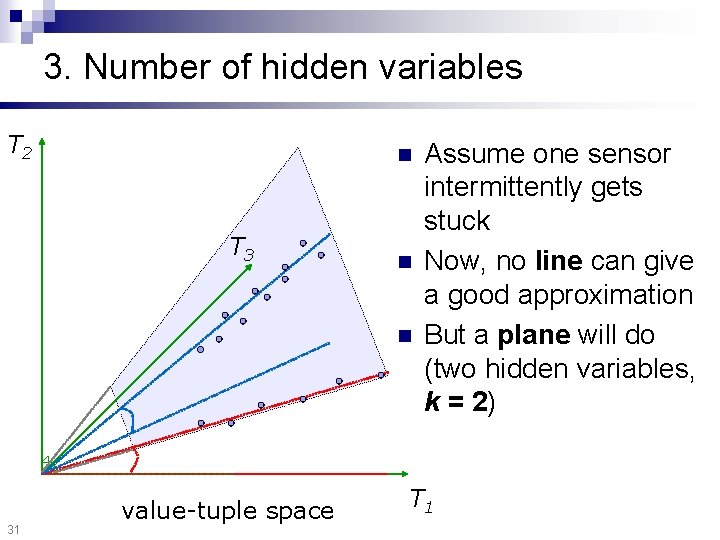


3. Number of hidden variables
Assume one sensor intermittently gets stuck:
- Now, no line can give a good approximation in the value-tuple space (T1, T2, T3)
- But a plane will do (two hidden variables, k = 2)

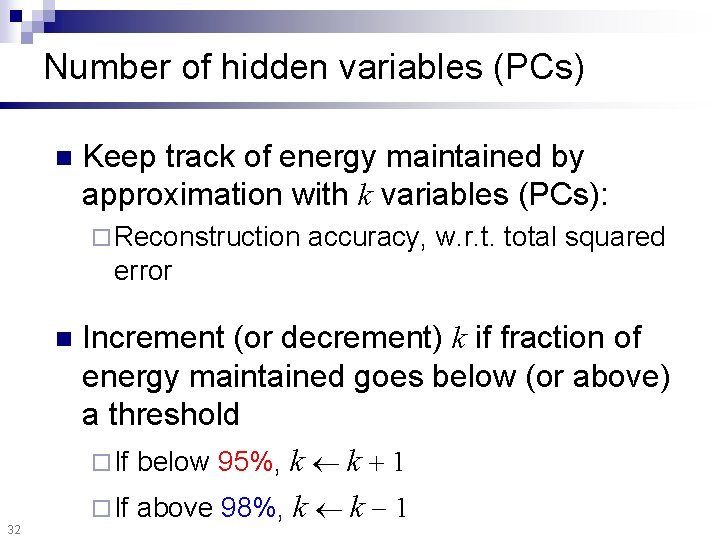

Number of hidden variables (PCs)
- Keep track of the energy maintained by the approximation with k variables (PCs):
  - Reconstruction accuracy, w.r.t. total squared error
- Increment (or decrement) k if the fraction of energy maintained goes below (or above) a threshold:
  - If below 95%, $k \leftarrow k + 1$
  - If above 98%, $k \leftarrow k - 1$
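The energy-threshold rule can be sketched as follows. For clarity this version computes energies over a batch `X` rather than incrementally (an incremental version would keep running sums of $\|x\|^2$ and $\sum_i y_i^2$), and it assumes orthonormal columns in `W`. The function names are hypothetical; the 95% / 98% defaults are the thresholds from the slide.

```python
import numpy as np

def retained_energy_fraction(X, W):
    """Fraction of the total energy of X (t, n) kept by the k hidden variables,
    i.e. by projecting onto the orthonormal columns of W (n, k)."""
    Y = X @ W                                  # hidden variables, shape (t, k)
    return float(np.sum(Y ** 2) / np.sum(X ** 2))

def adjust_k(fraction, k, n, low=0.95, high=0.98):
    """Energy-threshold rule: add a hidden variable below `low`,
    drop one above `high`, keeping 1 <= k <= n."""
    if fraction < low and k < n:
        return k + 1
    if fraction > high and k > 1:
        return k - 1
    return k

# Three sensors with identical readings: one diagonal direction keeps all the energy
X = np.outer(np.arange(1.0, 6.0), np.ones(3))
w_diag = np.ones((3, 1)) / np.sqrt(3)
w_axis = np.array([[1.0], [0.0], [0.0]])
print(retained_energy_fraction(X, w_diag), retained_energy_fraction(X, w_axis))
```

Using two thresholds rather than one leaves a band (95% to 98%) in which k stays put, which keeps k from oscillating when the retained energy hovers near a single cut-off.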

Outline
- Mining numerical streams
  - Stream correlations / definitions
  - Forecasting and imputation
  - Experiments
  - Distributed estimation
- Mining graph streams
- Conclusions

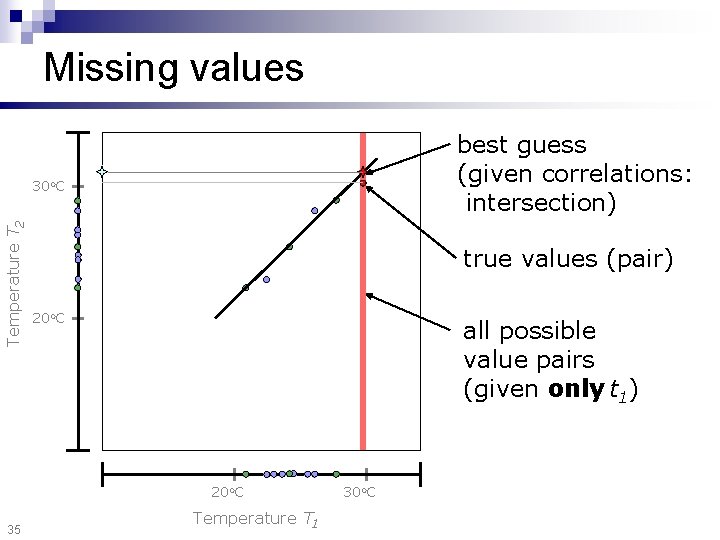

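Missing values
(Figure: T2 vs. T1, both between 20°C and 30°C. Given only the value of T1, all possible value pairs lie on a vertical line; given the correlations, the best guess for the missing T2 is the intersection of that line with the correlation line, shown next to the true value pair.)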
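Imputation via hidden variables can be sketched as a small least-squares problem: estimate the hidden variables from the sensors that did report, then read off the missing sensors from the same line (or subspace). This is a minimal NumPy sketch under our own assumptions (known directions `W` and a boolean mask of observed sensors); `impute_missing` is a hypothetical name.

```python
import numpy as np

def impute_missing(W, x, observed):
    """Fill in the missing entries of x from the hidden variables.

    W:        (n, k) hidden-variable directions
    x:        (n,) values; missing ones may be NaN
    observed: (n,) boolean mask of reported values
    The hidden variables y are estimated by least squares from the observed
    coordinates only; the missing coordinates are then W_missing @ y.
    """
    y, *_ = np.linalg.lstsq(W[observed], x[observed], rcond=None)
    x_hat = np.array(x, dtype=float)           # copy of the input
    x_hat[~observed] = W[~observed] @ y
    return x_hat

# Two correlated sensors (T2 roughly equal to T1): the line is w_1 = [1, 1] / sqrt(2)
W = np.array([[1.0], [1.0]]) / np.sqrt(2)
filled = impute_missing(W, np.array([24.0, np.nan]), np.array([True, False]))
print(filled)
```

With only T1 = 24°C reported, the estimate for T2 is the point on the correlation line above T1, i.e. 24°C.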

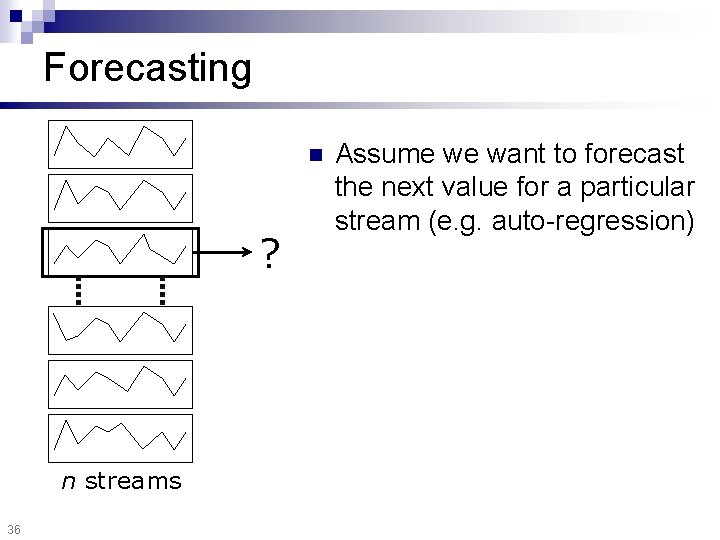

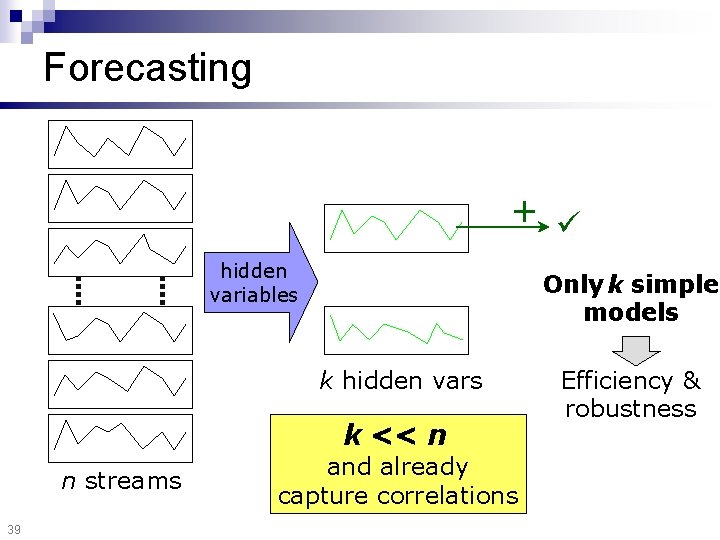

Forecasting
Assume we want to forecast the next value for a particular stream (e.g., by auto-regression). (figure: n streams, with the next value marked “?”)

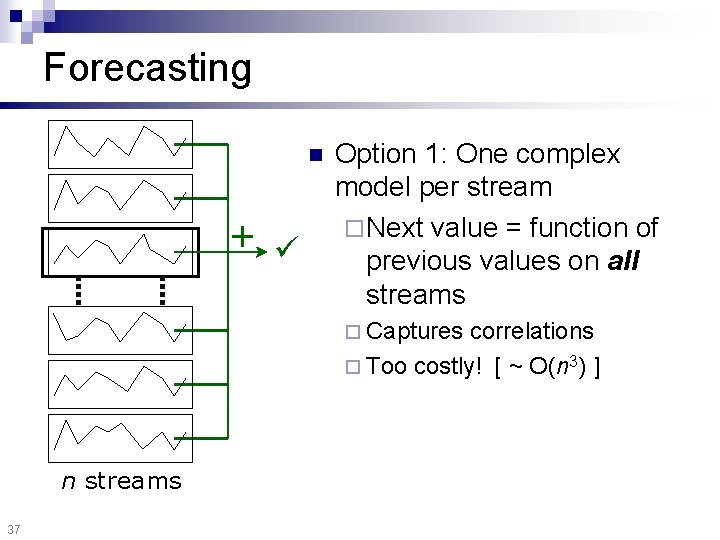

Forecasting
- Option 1: one complex model per stream
  - Next value = function of previous values on all streams
  - Captures correlations
  - Too costly! [~O(n³)]

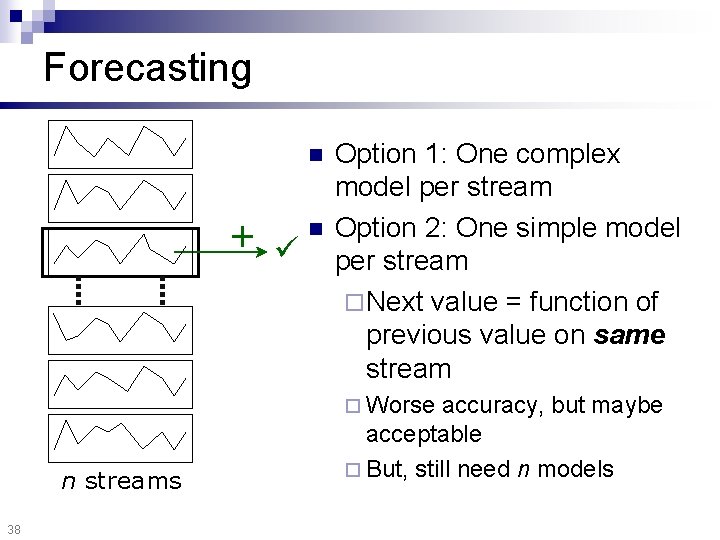

Forecasting
- Option 2: one simple model per stream
  - Next value = function of previous value on the same stream
  - Worse accuracy, but maybe acceptable
  - But still need n models

Forecasting + hidden variables
- Only k simple models (k hidden variables, k ≪ n streams)
- …and they already capture correlations
- Efficiency & robustness
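To illustrate the “k simple models” idea, the sketch below fits one AR(1) model (no intercept) per hidden variable and maps the k forecasts back to all n streams through the hidden-variable directions. AR(1) is our choice of “simple model” for illustration only; the slides do not fix the model, and the function names are hypothetical.

```python
import numpy as np

def ar1_coefficient(series):
    """Least-squares AR(1) coefficient a in y[t+1] ~ a * y[t] (no intercept)."""
    prev, nxt = series[:-1], series[1:]
    return float(prev @ nxt / (prev @ prev))

def forecast_next(Y, W):
    """Forecast the next value of all n streams from k hidden variables.

    Y: (t, k) history of hidden variables; W: (n, k) hidden-variable directions.
    Fits one simple model per hidden variable, then maps back with W.
    """
    coeffs = np.array([ar1_coefficient(Y[:, i]) for i in range(Y.shape[1])])
    y_next = coeffs * Y[-1]          # k one-step-ahead forecasts
    return W @ y_next                # n stream forecasts

# Toy check: one hidden variable decaying by 10% per step, two identical streams
Y = (10.0 * 0.9 ** np.arange(20))[:, None]
W = np.array([[1.0], [1.0]]) / np.sqrt(2)
print(forecast_next(Y, W))
```

Only k coefficients are fitted and updated instead of n (Option 2) or a full cross-stream model (Option 1); the correlations between streams come for free through W.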

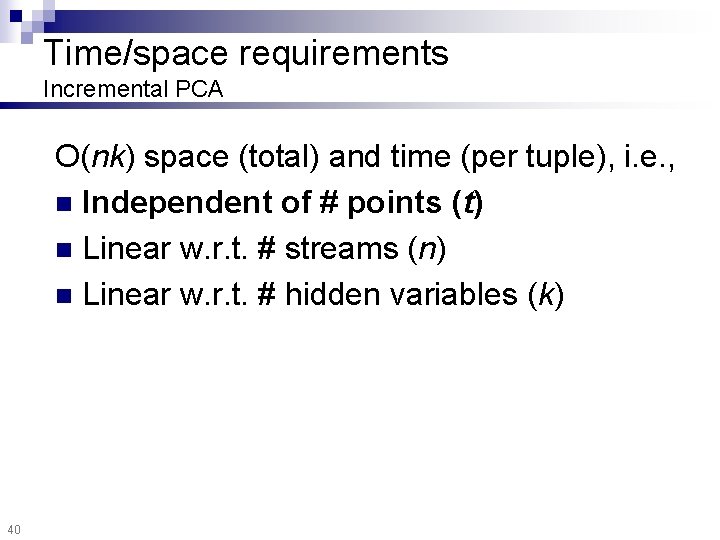

Time/space requirements
Incremental PCA needs O(nk) space (total) and time (per tuple), i.e., it is:
- Independent of the number of points (t)
- Linear w.r.t. the number of streams (n)
- Linear w.r.t. the number of hidden variables (k)

Outline
- Mining numerical streams
  - Stream correlations / definitions
  - Forecasting and imputation
  - Experiments
  - Distributed estimation
- Mining graph streams
- Conclusions

![Experiments: chlorine concentration, measurements vs. reconstruction](http://slidetodoc.com/presentation_image_h/a39bac782bfdf3708734c6abc5fb7aa3/image-41.jpg)

Experiments: chlorine concentration [CMU Civil Engineering]
- 166 streams summarized by 2 hidden variables (~4% error)
- ~80× reduction (figure: measurements vs. reconstruction)

![Experiments: chlorine concentration, hidden variables](http://slidetodoc.com/presentation_image_h/a39bac782bfdf3708734c6abc5fb7aa3/image-42.jpg)

Experiments: chlorine concentration, hidden variables [CMU Civil Engineering]
- Both capture the global, periodic pattern
- Second: ~ first, but “phase-shifted”
- Together they can express any “phase shift”…
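A standard trigonometric identity shows why two hidden variables that look like phase-shifted copies of each other suffice for any phase shift. Idealizing them (as an assumption) as a sine and a cosine of the same period, any shifted version is a fixed linear combination of the two:

```latex
\sin(\omega t + \varphi) = \cos\varphi \,\sin(\omega t) + \sin\varphi \,\cos(\omega t)
```

So a sensor that follows the same periodic pattern with its own delay $\varphi$ is reconstructed with weights $(\cos\varphi, \sin\varphi)$ on the two hidden variables.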

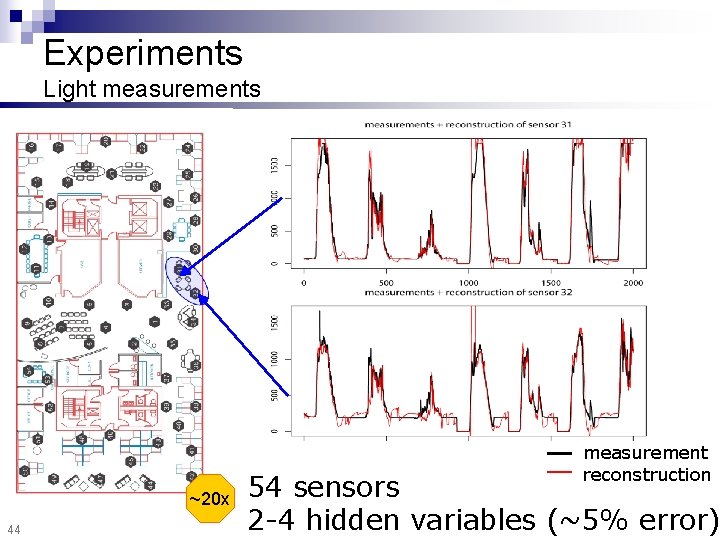

Experiments: light measurements
- 54 sensors summarized by 2-4 hidden variables (~5% error)
- ~20× reduction (figure: measurements vs. reconstruction)

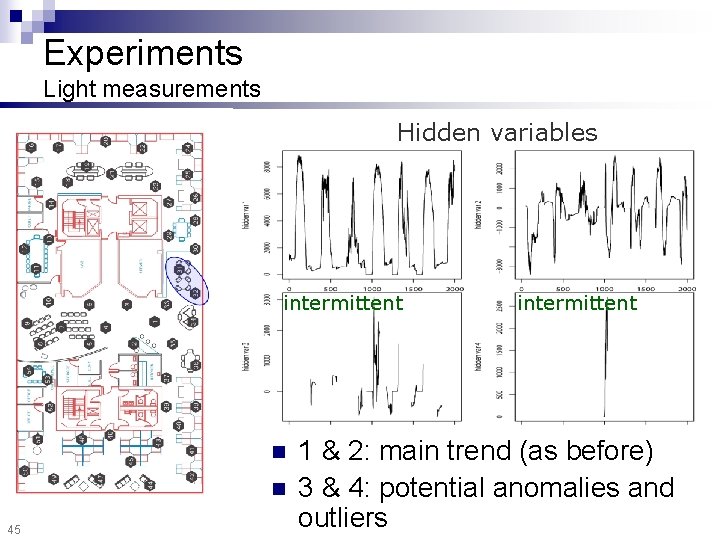

Experiments: light measurements, hidden variables
- 1 & 2: main trend (as before)
- 3 & 4: potential anomalies and outliers (intermittent)

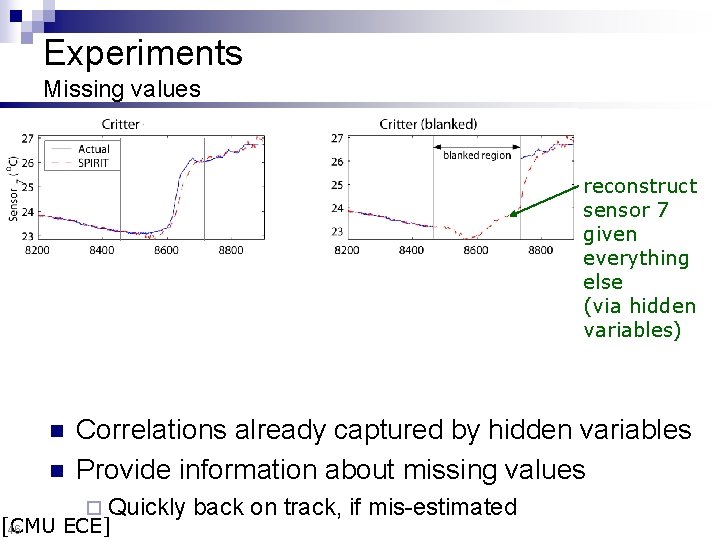

Experiments: missing values [CMU ECE]
Reconstruct sensor 7 given everything else (via hidden variables):
- Correlations are already captured by the hidden variables
- They provide information about missing values
  - Quickly back on track, if mis-estimated

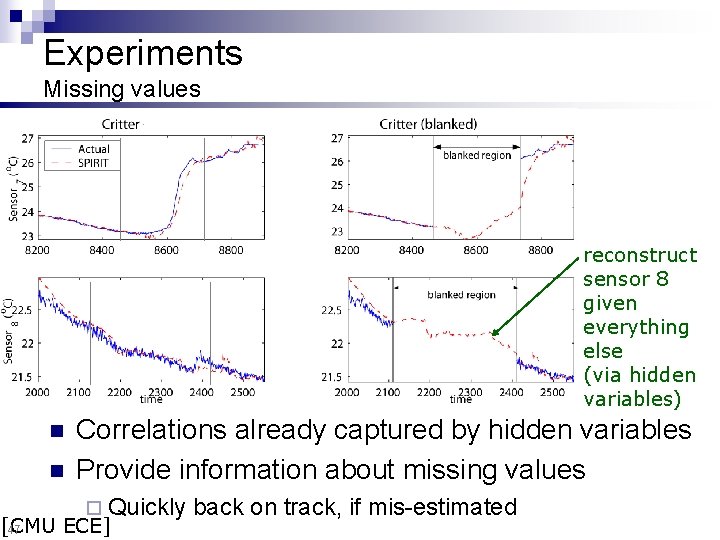

Experiments: missing values [CMU ECE]
Reconstruct sensor 8 given everything else (via hidden variables):
- Correlations are already captured by the hidden variables
- They provide information about missing values
  - Quickly back on track, if mis-estimated

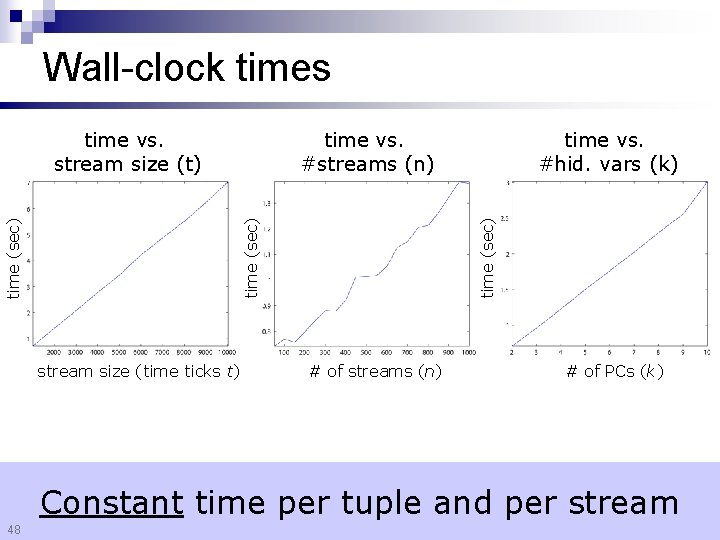

Wall-clock times (figure: time (sec) vs. stream size (time ticks t), vs. number of streams (n), and vs. number of PCs (k))
Constant time per tuple and per stream.

Streaming correlations: summary
- Many settings with hundreds of streams, but:
  - Stream values are, by nature, related
  - In reality, there are only a few variables
- Discover hidden variables for:
  - Summarization of main trends
  - Efficient forecasting, spotting outliers/anomalies
- Incremental, real-time computation
- With limited memory

Convert raw “data” into concise “patterns”, on the fly.

Outline
- Mining numerical streams
  - Stream correlations / definitions
  - Forecasting and imputation
  - Experiments
  - Distributed estimation [PAKDD 06]
- Mining graph streams
- Conclusions

Streaming correlations
- So far we assumed a central base station.

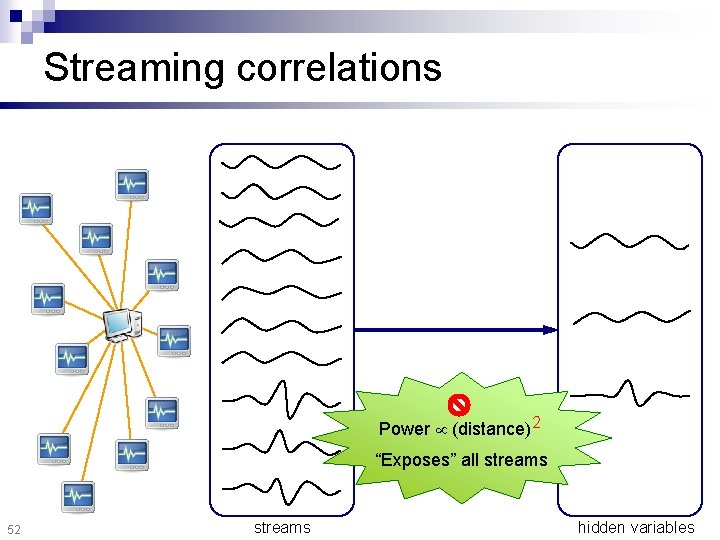

Streaming correlations, centralized (figure: all streams sent to one base station, which computes the hidden variables)
- Power ∝ (distance)²
- “Exposes” all streams

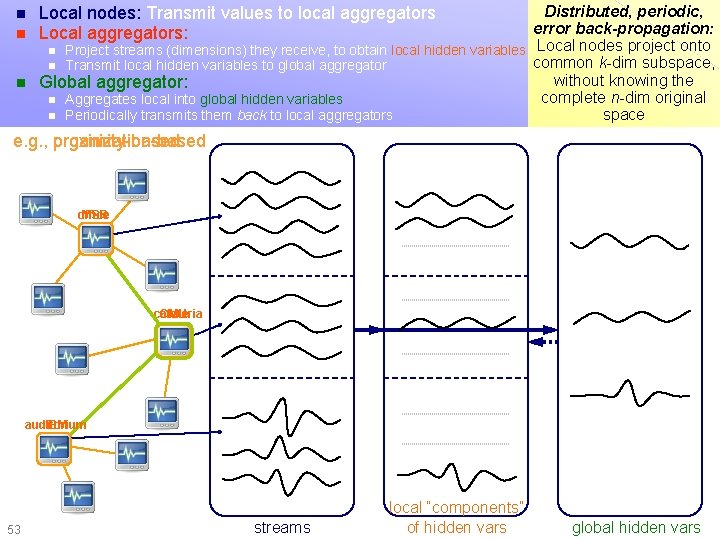

Distributed streaming correlations (e.g., proximity-based or organization-based organization; figure labels: office, cafeteria, auditorium; MSR, CMU, IBM; streams → local “components” of hidden vars → global hidden vars)
- Local nodes: transmit values to local aggregators
- Local aggregators:
  - Project the streams (dimensions) they receive, to obtain local hidden variables
  - Transmit local hidden variables to the global aggregator
- Global aggregator:
  - Aggregates local into global hidden variables
  - Periodically transmits them back to local aggregators

Distributed, periodic, error back-propagation: local nodes project onto a common k-dim subspace, without knowing the complete n-dim original space.
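One way to read “local components of hidden vars”: if the hidden variables are linear projections $y = W^\top x$, each local aggregator can compute the contribution of its own streams, and the global aggregator only has to add these up. The NumPy sketch below checks that this reproduces the centralized projection. The grouping of streams, the function names, and the omission of the periodic back-propagation that updates `W` are all assumptions made for illustration.

```python
import numpy as np

def local_components(W, x, groups):
    """Each local aggregator sees only its own streams x[idx] and the matching
    rows of W, and returns its partial projection W[idx].T @ x[idx]."""
    return [W[idx].T @ x[idx] for idx in groups]

def global_hidden_variables(components):
    """The global aggregator sums the local components."""
    return np.sum(components, axis=0)

rng = np.random.default_rng(1)
n, k = 6, 2
W = rng.normal(size=(n, k))                   # shared k-dim subspace (hypothetical values)
x = rng.normal(size=n)                        # current values of all n streams
groups = [np.arange(0, 3), np.arange(3, 6)]   # two local aggregators
y = global_hidden_variables(local_components(W, x, groups))
print(y, W.T @ x)
```

Each local aggregator only transmits k numbers, not its raw streams, which matches the goals of limiting communication and information disclosure.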

Distributed streaming correlations: summary
- Centralized vs. distributed, hierarchical: the same hidden variables
- Increase concurrency
- Limit communication distance
- Added benefit: limit information disclosure

Outline
- Mining numerical streams
- Mining graph streams
- Conclusions

Social software
- Identity and relations: Facebook, LinkedIn, OpenSocial, …
- Social aggregators: FriendFeed, MyBlogLog, Lijit, …
- Collaboration: Google Docs, Zoho, …
- Geo-location: Dopplr, Loopt, …
- …and many more

Social software
- Rapidly growing
- Fueled by:
  - The internet
  - Standard protocols
  - Convergence of online ↔ “offline” identity
- Becoming ubiquitous:
  - “Giant Global Graph (GGG)” (cf. WWW), http://dig.csail.mit.edu/breadcrumbs/node/215

IBM SmallBlue / Lotus Atlas: how to leverage social networks in the enterprise?

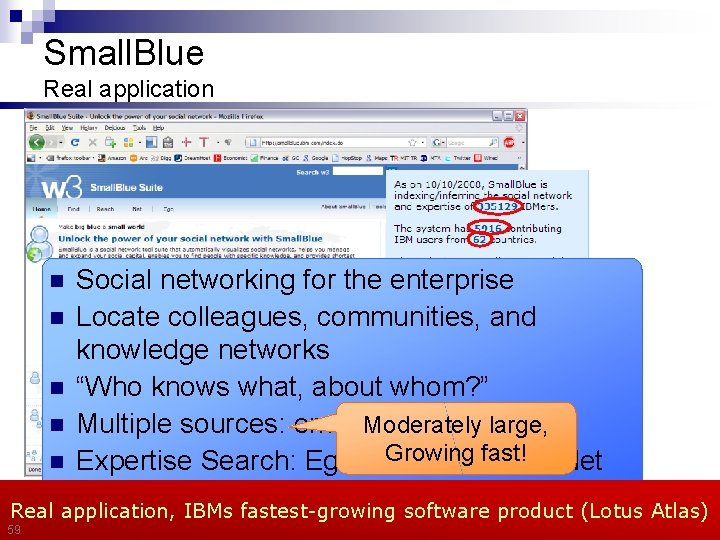

SmallBlue
- Real application: social networking for the enterprise
- Locate colleagues, communities, and knowledge networks
- “Who knows what, about whom?”
- Multiple sources: email / IM / profiles / …
- Moderately large, growing fast!
- Expertise search, social search (Ego / Reach / Find / Net)
- Real application: IBM’s fastest-growing software product (Lotus Atlas)

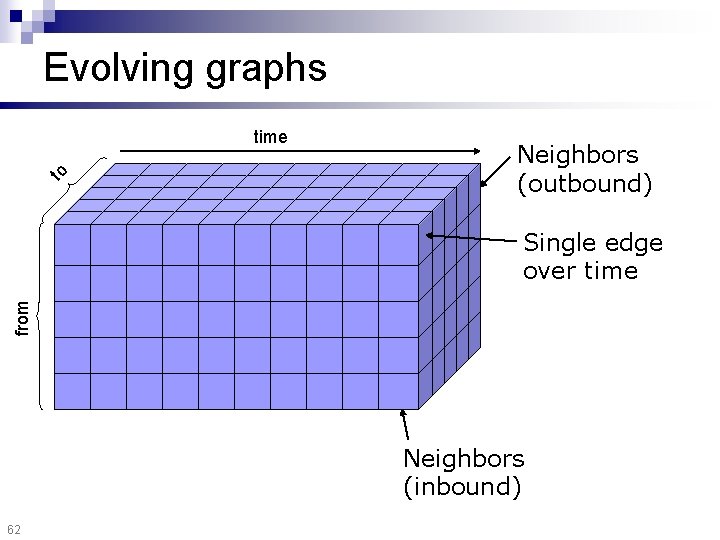

Evolving graphs
- Graphs represent relationships that may evolve over time:
  - Email exchanges
  - Co-authorship
  - etc.
- Formally:
  - New edges appear, old edges disappear
  - Edge weights may change

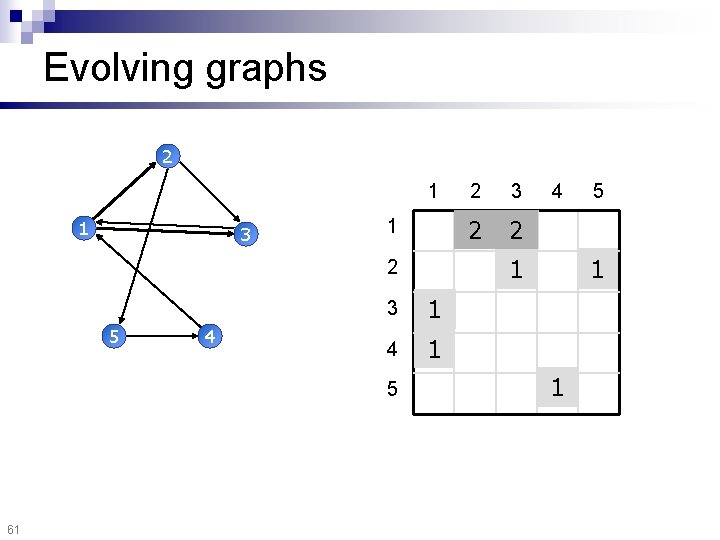

Evolving graphs (figure: a small weighted graph on nodes 1 to 5, shown at successive time points as its edges and weights change)

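Evolving graphs (figure: the graph stream as adjacency matrices over time, indexed by “from” and “to” nodes; a row gives a node's outbound neighbors, a column its inbound neighbors, and a single cell followed across time gives a single edge over time)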

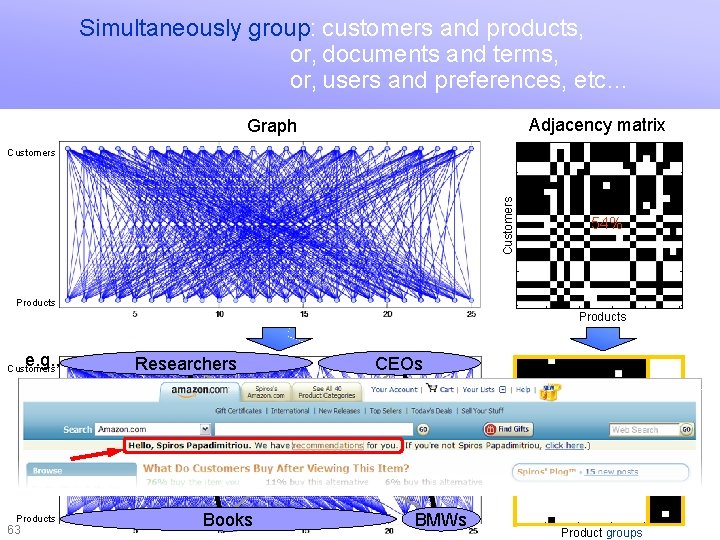

Graph clustering
Simultaneously group customers and products, or documents and terms, or users and preferences, etc. (figure: a customers × products graph and its adjacency matrix, before and after grouping into customer groups, e.g., researchers and CEOs, and product groups, e.g., books and BMWs; each block is annotated with its edge density, from about 3% to about 97%)

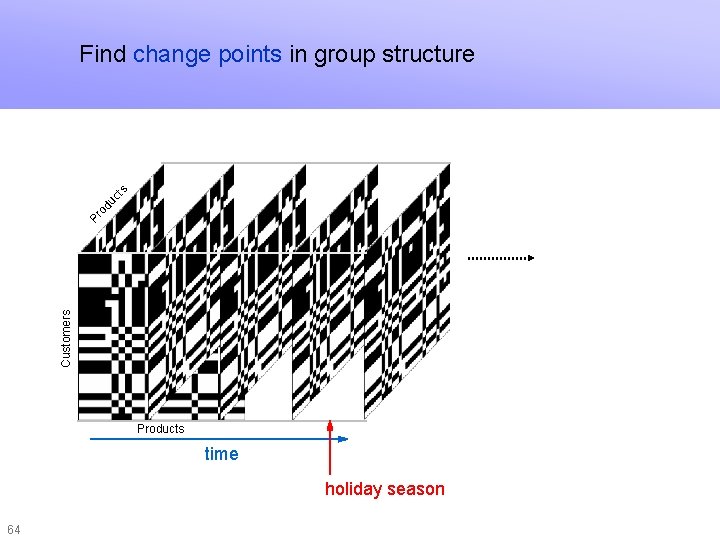

Graph stream clustering
Find change points in group structure. (figure: customers × products matrices over time, with a change at the holiday season)

Goals
- Question 1 (single graph snapshot): organize into a few, homogeneous communities
- Question 2 (stream of snapshots): find changes in community structure
- Scalable
- Parameter-free


Outline
- Mining numerical streams
- Mining graph streams
  - Single timestamp [KDD 04]
  - Multiple timestamps
  - Experiments
  - Hierarchical exploration
  - Proximity tracking
- Conclusions

Graph clustering
- Given a graph of interactions or associations:
  - Customers to products
  - Documents to terms
  - People to people
  - Computer communications
  - Financial transactions
- Find simultaneously:
  - Communities (source and destination)
  - Their number

Graph clustering
- Step 1: How to define a “good” partitioning? Intuition and formalization
- Step 2: How to find it?

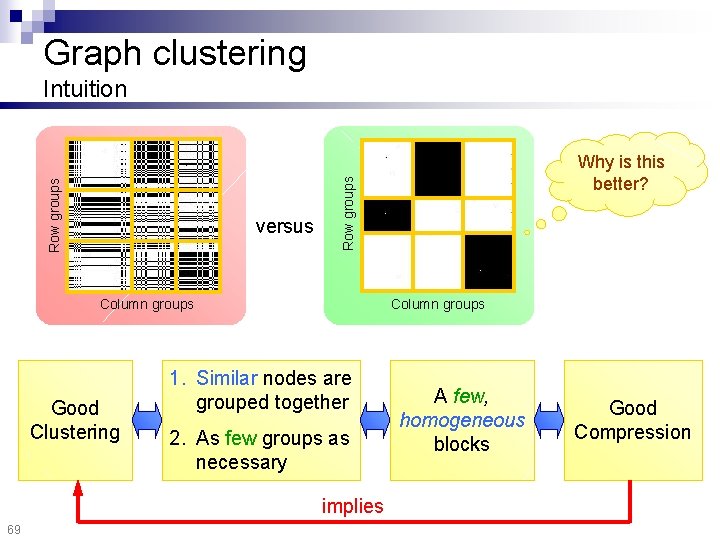


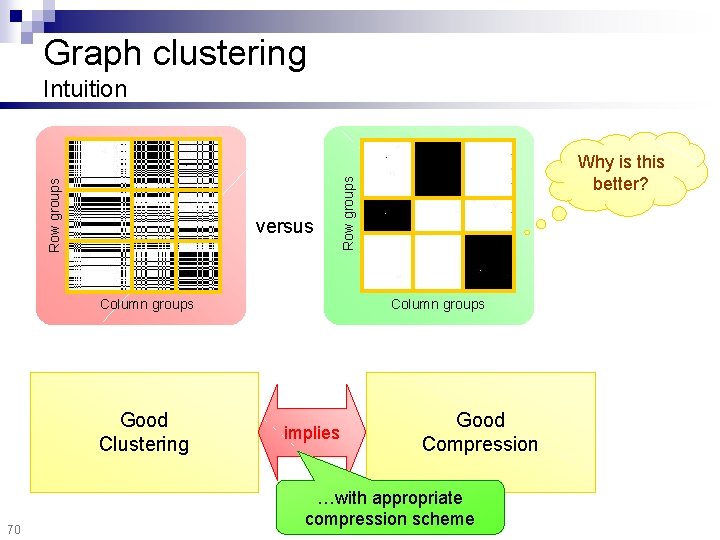

Graph clustering: intuition
- Good clustering (1. similar nodes are grouped together; 2. as few groups as necessary) implies a few, homogeneous blocks (row groups versus column groups), which implies good compression.
- Conversely, with an appropriate compression scheme, good compression implies good clustering.

Why is this better?

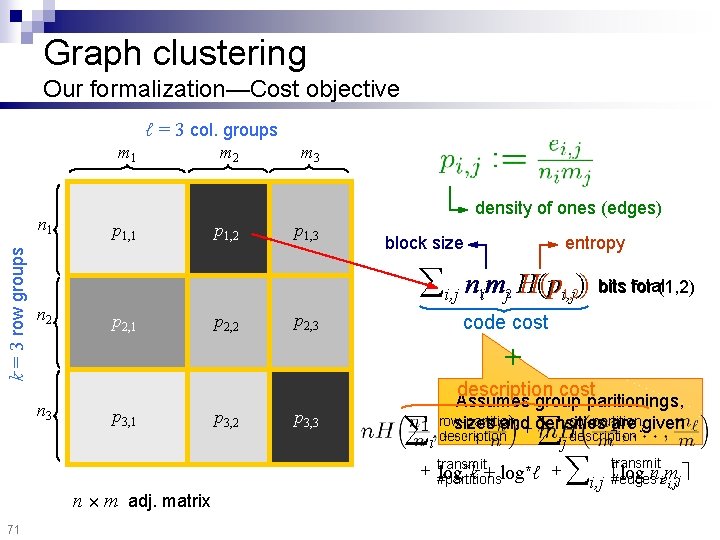

Graph clustering: our formalization, cost objective
(figure: n × m adjacency matrix with k = 3 row groups of sizes $n_1, n_2, n_3$ and ℓ = 3 col groups of sizes $m_1, m_2, m_3$; block (i, j) has density of ones (edges) $p_{i,j}$)
- Code cost, assuming the group partitionings, sizes, and densities are given: $\sum_{i,j} n_i m_j H(p_{i,j})$ bits total, where $n_i m_j$ is the block size and $H(p_{i,j})$ its entropy; e.g., block (1, 2) costs $n_1 m_2 H(p_{1,2})$ bits
- Description cost:
  - Transmit the number of partitions: $\log^* k + \log^* \ell$
  - Transmit the row-partition and col-partition descriptions
  - Transmit the number of edges in each block: $\sum_{i,j} \log(n_i m_j)$
- Total cost = code cost + description cost
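The objective can be evaluated directly for a candidate partition. This NumPy sketch is a simplified illustration, not the authors' implementation: it keeps the code cost and the $\log^* k + \log^* \ell$ and per-block edge-count terms, but omits the row/col-partition description terms, and its `log_star` is a simplified iterated logarithm without the usual normalizing constant. All function names are hypothetical.

```python
import numpy as np

def binary_entropy(p):
    """H(p) in bits; 0 for p in {0, 1}."""
    if p <= 0.0 or p >= 1.0:
        return 0.0
    return float(-p * np.log2(p) - (1 - p) * np.log2(1 - p))

def log_star(x):
    """Simplified iterated logarithm: log2 x + log2 log2 x + ... (positive terms)."""
    total, v = 0.0, float(x)
    while v > 1.0:
        v = np.log2(v)
        total += v
    return total

def total_cost(A, row_groups, col_groups):
    """Code cost + (simplified) description cost of a 0/1 matrix A, given
    row-group labels 0..k-1 and column-group labels 0..l-1."""
    k, l = row_groups.max() + 1, col_groups.max() + 1
    code = 0.0
    desc = log_star(k) + log_star(l)           # transmit the number of groups
    for i in range(k):
        for j in range(l):
            block = A[np.ix_(row_groups == i, col_groups == j)]
            if block.size == 0:
                continue
            code += block.size * binary_entropy(block.mean())  # n_i m_j H(p_ij)
            desc += np.log2(block.size)        # transmit #edges of block (i, j)
    return float(code + desc)

A = np.array([[1, 1, 0, 0],
              [1, 1, 0, 0],
              [0, 0, 1, 1],
              [0, 0, 1, 1]])
two_groups = np.array([0, 0, 1, 1])
one_group = np.zeros(4, dtype=int)
print(total_cost(A, two_groups, two_groups), total_cost(A, one_group, one_group))
```

On this block-diagonal toy matrix, the two-group partition costs 10 bits (all blocks are pure, so only description cost remains) versus 20 bits for a single group (one block of density 0.5), so the objective prefers the partition with homogeneous blocks.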

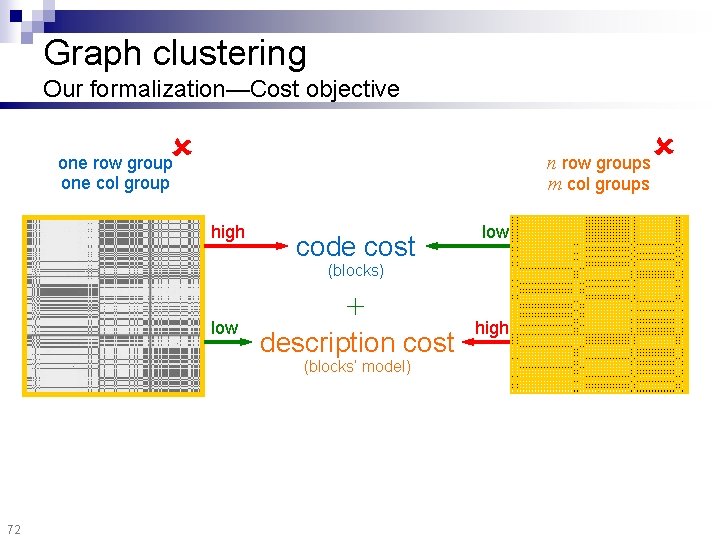

Graph clustering: our formalization, cost objective
- n row groups, m col groups (every node in its own group): low code cost (blocks), but high description cost (blocks’ model)
- One row group, one col group: high code cost (blocks), but low description cost (blocks’ model)

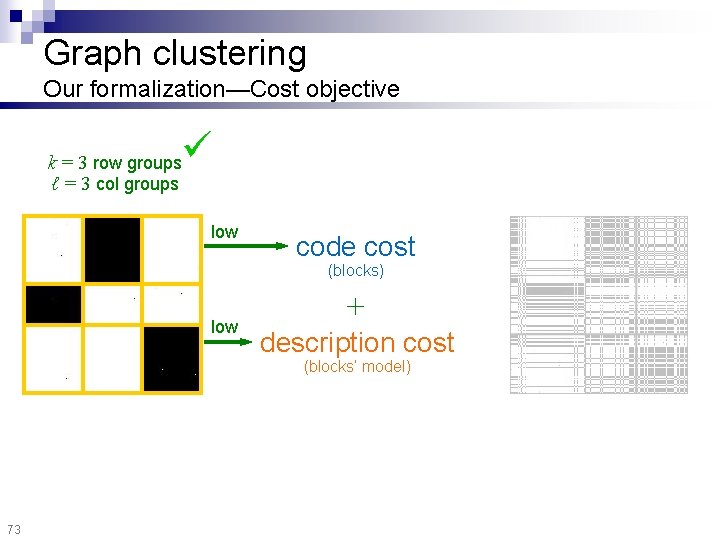

Graph clustering: our formalization, cost objective
- k = 3 row groups, ℓ = 3 col groups: low code cost (blocks) + low description cost (blocks’ model)

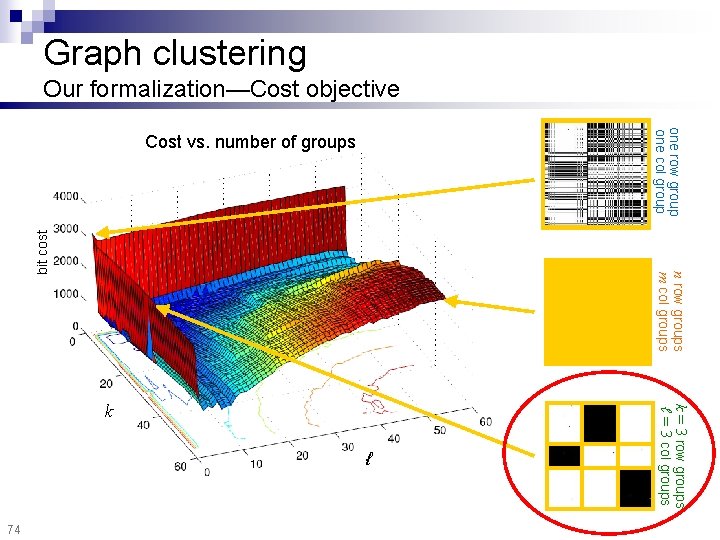

Graph clustering: our formalization, cost objective
Cost vs. number of groups (figure: total bit cost as a function of k and ℓ, ranging from one row group and one col group to n row groups and m col groups, lowest at k = 3 row groups, ℓ = 3 col groups)


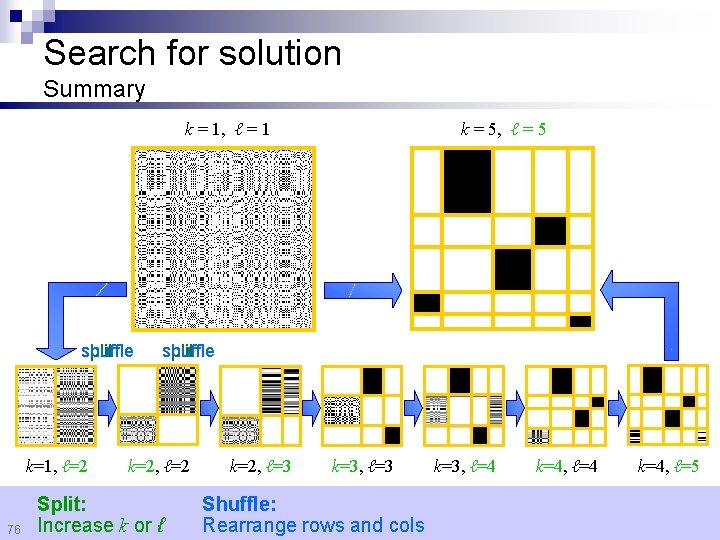

Search for solution: summary
- Split: increase k or ℓ
- Shuffle: rearrange rows and cols

(figure: the search alternates shuffle and split steps, from k = 1, ℓ = 1 up to k = 5, ℓ = 5)

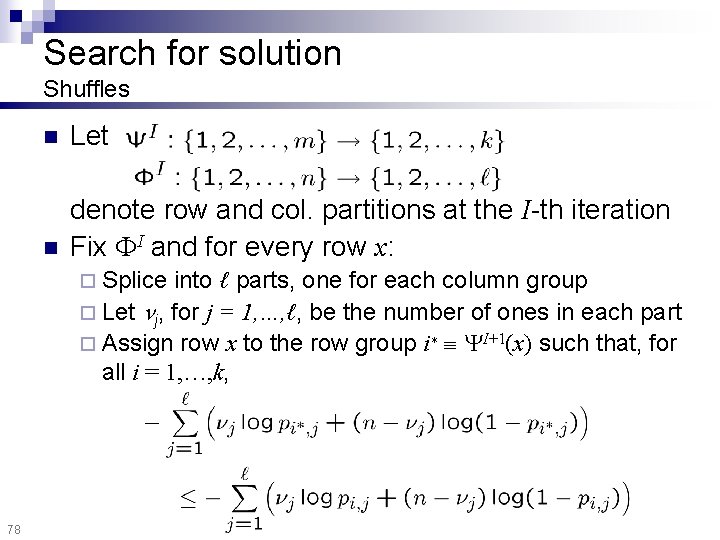

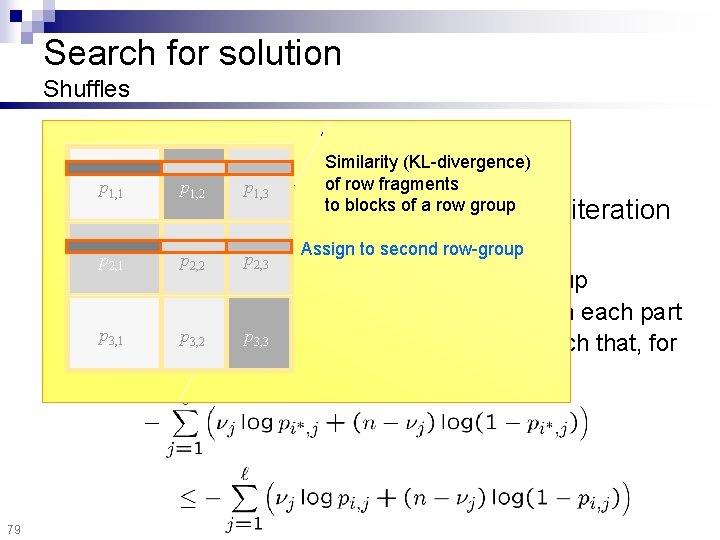

Search for solution: shuffles
- Let $(\Phi^I, \Psi^I)$ denote the row and col. partitions at the I-th iteration
- Fix $\Psi^I$ and, for every row x:
  - Splice it into ℓ parts, one for each column group
  - Let $x_j$, for j = 1, …, ℓ, be the number of ones in each part
  - Assign row x to the row group $i^* = \Phi^{I+1}(x)$ such that, for all i = 1, …, k,

$$\sum_{j=1}^{\ell} \left[ x_j \log\frac{1}{p_{i^*,j}} + (m_j - x_j)\log\frac{1}{1 - p_{i^*,j}} \right] \le \sum_{j=1}^{\ell} \left[ x_j \log\frac{1}{p_{i,j}} + (m_j - x_j)\log\frac{1}{1 - p_{i,j}} \right]$$

(figure: block densities $p_{1,1}, \dots, p_{3,3}$; the row fragments are compared with the blocks of each row group by similarity (KL-divergence), and the row is assigned to the second row group)
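A single row-shuffle pass can be sketched as follows: with the column partition fixed, compute each block density, count each row's ones per column group, and move each row to the row group whose densities encode its fragments in the fewest bits. This is a minimal NumPy illustration under our own assumptions (densities clipped by a small `eps` to avoid log(0); ties go to the lowest group index); `shuffle_rows` is a hypothetical name, and a column shuffle is symmetric.

```python
import numpy as np

def shuffle_rows(A, row_groups, col_groups, k, l, eps=1e-9):
    """One row-shuffle pass with the column partition held fixed.

    Each row is reassigned to the row group whose block densities p[i, j]
    encode its fragments (x_j ones out of m_j cells per column group j) in the
    fewest bits. Returns the new row-group labels.
    """
    m_sizes = np.array([(col_groups == j).sum() for j in range(l)])
    p = np.zeros((k, l))                                  # block densities
    for i in range(k):
        rows = A[row_groups == i]
        for j in range(l):
            cells = rows.shape[0] * m_sizes[j]
            p[i, j] = rows[:, col_groups == j].sum() / cells if cells else 0.0
    p = np.clip(p, eps, 1 - eps)                          # avoid log(0)
    # x[r, j] = number of ones of row r inside column group j
    x = np.stack([A[:, col_groups == j].sum(axis=1) for j in range(l)], axis=1)
    # bits[r, i] = cost of encoding row r with row group i's densities
    bits = x @ np.log2(1 / p).T + (m_sizes - x) @ np.log2(1 / (1 - p)).T
    return np.argmin(bits, axis=1)

A = np.array([[1, 1, 0, 0],
              [1, 1, 0, 0],
              [0, 0, 1, 1],
              [0, 0, 1, 1]])
cols = np.array([0, 0, 1, 1])
start = np.array([0, 0, 0, 1])        # row 2 starts in the wrong group
print(shuffle_rows(A, start, cols, k=2, l=2))
```

Starting with row 2 misassigned, one pass moves it to the group whose blocks it matches, recovering the block-diagonal grouping.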

Outline
- Mining numerical streams
- Mining graph streams
  - Single timestamp
  - Multiple timestamps [KDD 07]
  - Experiments
  - Hierarchical exploration
  - Proximity tracking
- Conclusions

Graph stream clustering
- Given a graph of interactions or associations:
  - Evolving over time
  - One graph snapshot per timestamp
- Find simultaneously:
  - Communities (source and destination)
  - Their number
  - Change points of community structure

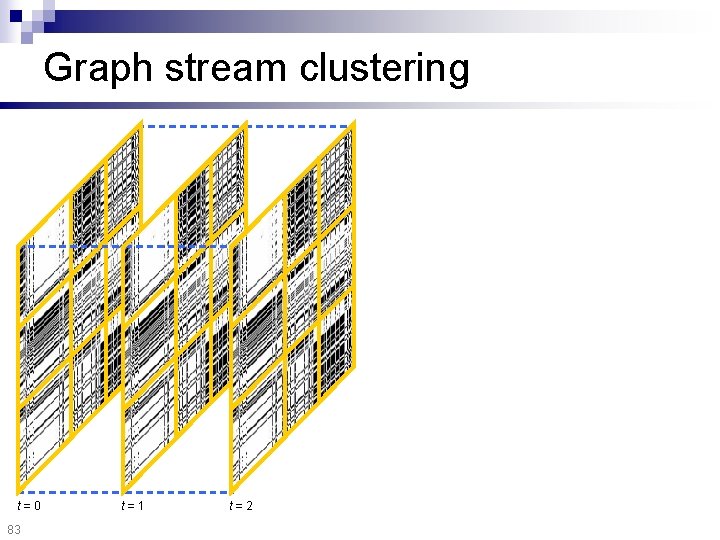

Graph stream clustering (figure: graph snapshots at t = 0, 1, 2)

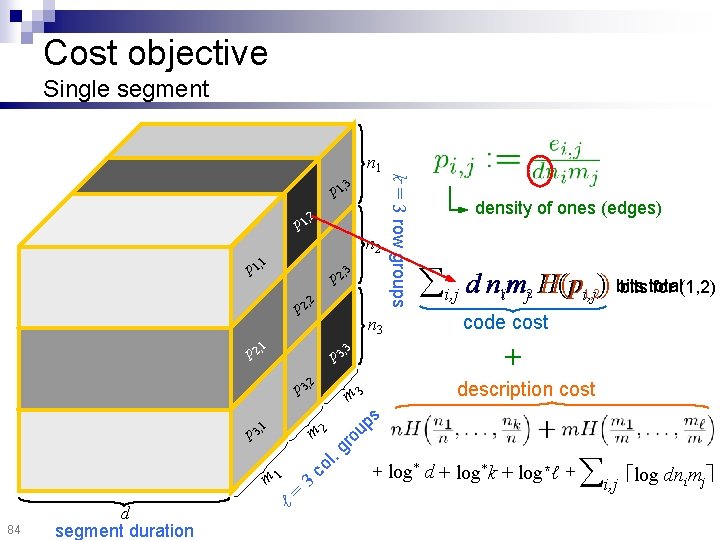

Cost objective: single segment
(figure: a segment of duration d whose snapshots share k = 3 row groups of sizes $n_1, n_2, n_3$ and ℓ = 3 col groups of sizes $m_1, m_2, m_3$; block (i, j) has density of ones (edges) $p_{i,j}$)
- Code cost: $\sum_{i,j} d\, n_i m_j H(p_{i,j})$ bits total
- Description cost: $\log^* d + \log^* k + \log^* \ell + \sum_{i,j} \log(d\, n_i m_j)$
- Total cost = code cost + description cost

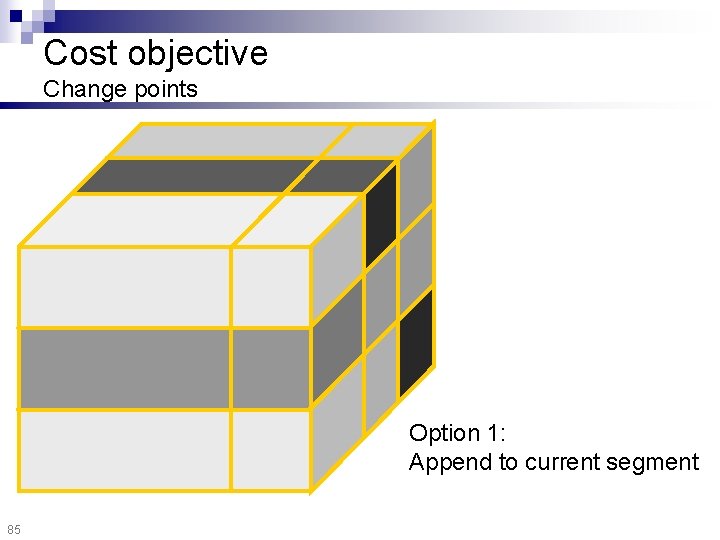

Cost objective: change points
- Option 1: append the new snapshot to the current segment

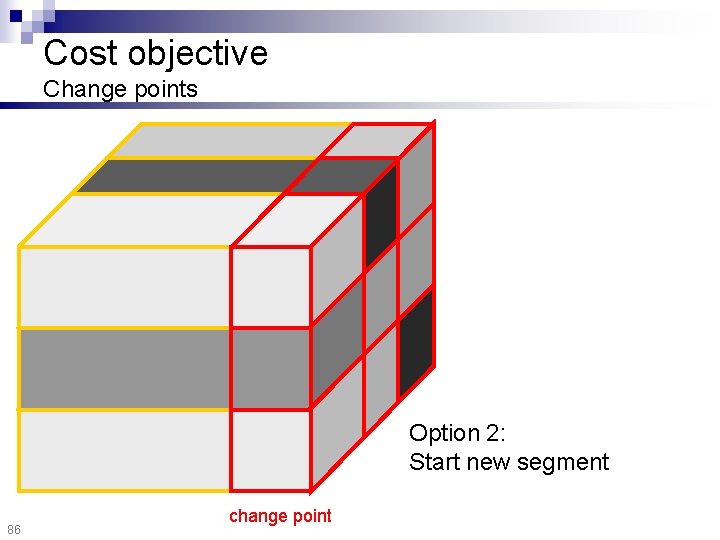

Cost objective: change points
- Option 2: start a new segment (change point)

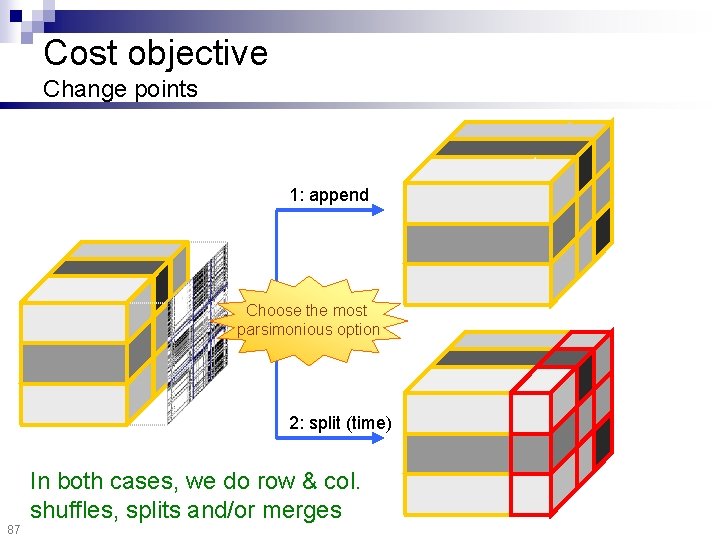

Cost objective: change points
- Choose the most parsimonious option: (1) append, or (2) split (in time)
- In both cases, we do row & col. shuffles, splits and/or merges
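The append-or-split decision can be sketched by comparing segment costs. To keep the example short, this NumPy version holds the row and column partitions fixed (the actual method also re-runs shuffles, splits, and merges in both cases) and omits partition-description terms; the helpers repeat the simplified entropy and `log_star` definitions so the block runs on its own. All names are hypothetical.

```python
import numpy as np

def binary_entropy(p):
    """H(p) in bits; 0 for p in {0, 1}."""
    if p <= 0.0 or p >= 1.0:
        return 0.0
    return float(-p * np.log2(p) - (1 - p) * np.log2(1 - p))

def log_star(x):
    """Simplified iterated logarithm: log2 x + log2 log2 x + ... (positive terms)."""
    total, v = 0.0, float(x)
    while v > 1.0:
        v = np.log2(v)
        total += v
    return total

def segment_cost(snapshots, row_groups, col_groups):
    """Bits to encode d snapshots that share one row/column partition."""
    S = np.stack(snapshots)                  # shape (d, n, m)
    d = S.shape[0]
    k, l = row_groups.max() + 1, col_groups.max() + 1
    cost = log_star(d) + log_star(k) + log_star(l)
    for i in range(k):
        for j in range(l):
            block = S[:, row_groups == i][:, :, col_groups == j]
            size = block.size                # d * n_i * m_j
            if size:
                cost += size * binary_entropy(block.mean()) + np.log2(size)
    return float(cost)

def is_change_point(segment, G, row_groups, col_groups):
    """True if starting a new segment at snapshot G is cheaper than appending it."""
    append = segment_cost(segment + [G], row_groups, col_groups)
    split = (segment_cost(segment, row_groups, col_groups)
             + segment_cost([G], row_groups, col_groups))
    return split < append

B = np.array([[1, 1, 0, 0],
              [1, 1, 0, 0],
              [0, 0, 1, 1],
              [0, 0, 1, 1]])
groups = np.array([0, 0, 1, 1])
print(is_change_point([B, B], B, groups, groups),        # same structure
      is_change_point([B, B], 1 - B, groups, groups))    # structure flips
```

A snapshot with the same block structure is cheaper to append, while one whose blocks flip makes the merged blocks inhomogeneous, so starting a new segment (a change point) is the more parsimonious option.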

Outline
- Mining numerical streams
- Mining graph streams
  - Single timestamp
  - Multiple timestamps
  - Experiments
  - Hierarchical exploration
  - Proximity tracking
- Conclusions

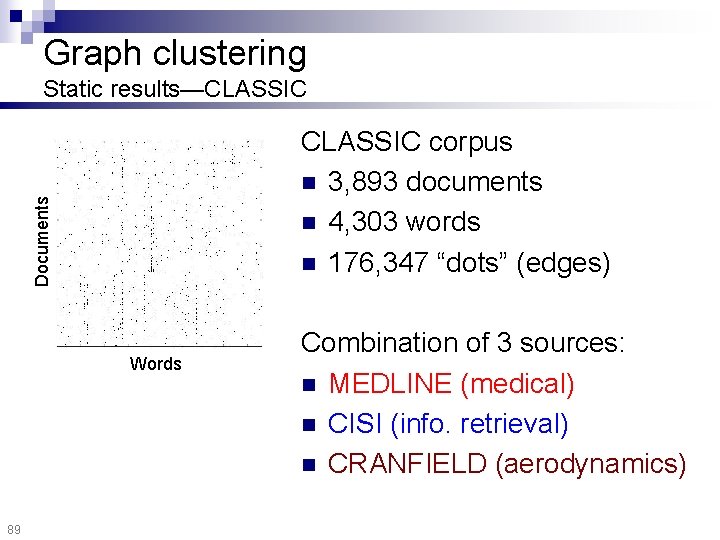

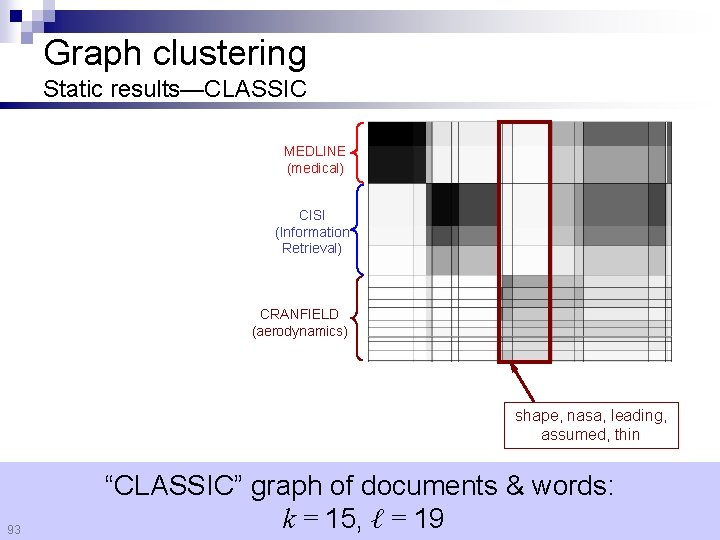

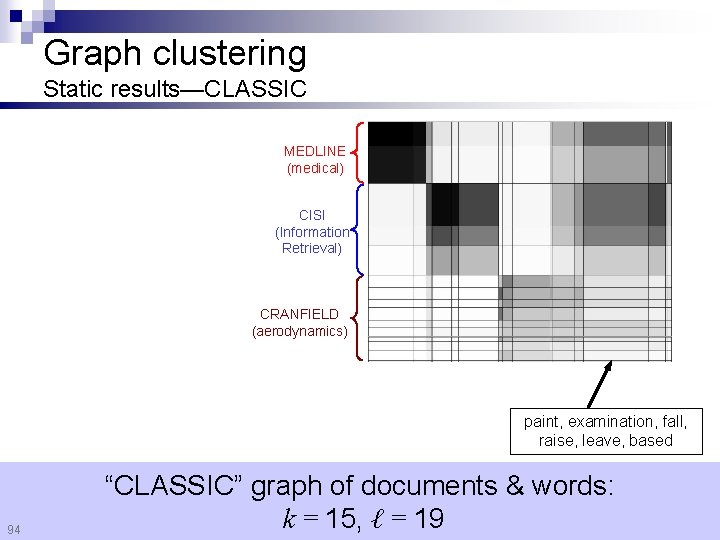

Graph clustering, static results: CLASSIC
- CLASSIC corpus (documents × words):
  - 3,893 documents
  - 4,303 words
  - 176,347 “dots” (edges)
- Combination of 3 sources:
  - MEDLINE (medical)
  - CISI (info. retrieval)
  - CRANFIELD (aerodynamics)

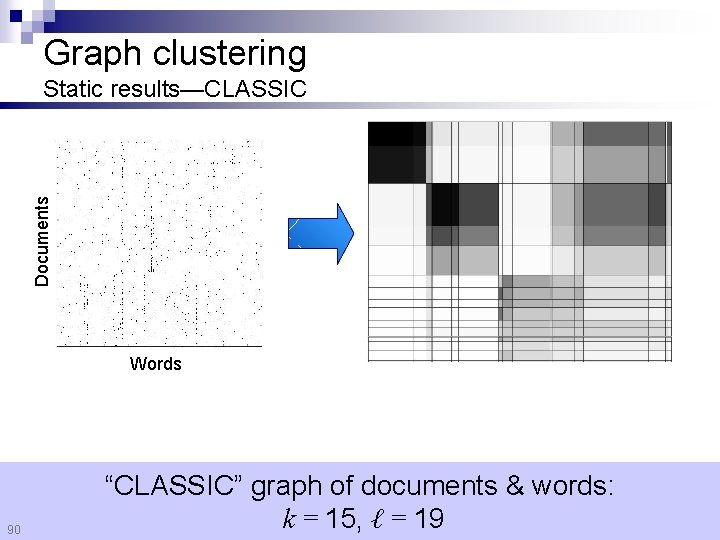

Graph clustering, static results: CLASSIC
“CLASSIC” graph of documents & words: k = 15, ℓ = 19 (figure: documents × words matrix after clustering)

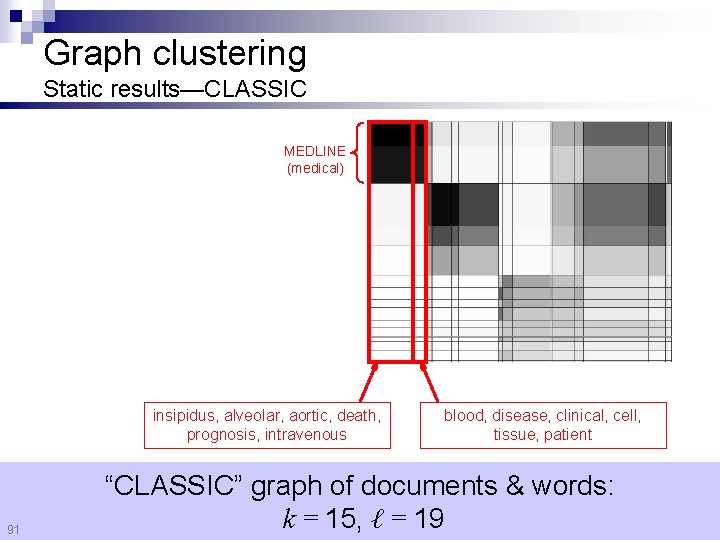

Graph clustering, static results: CLASSIC (“CLASSIC” graph of documents & words: k = 15, ℓ = 19)
- MEDLINE (medical) word groups:
  - insipidus, alveolar, aortic, death, prognosis, intravenous
  - blood, disease, clinical, cell, tissue, patient

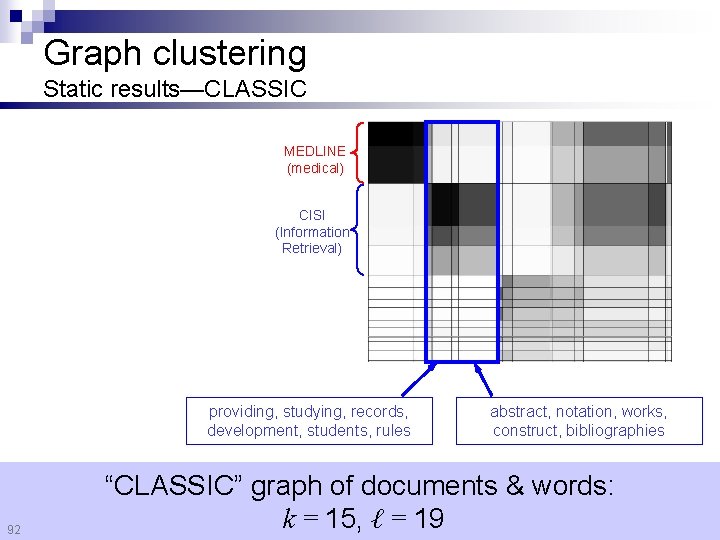

Graph clustering, static results: CLASSIC (“CLASSIC” graph of documents & words: k = 15, ℓ = 19)
- CISI (information retrieval) word groups:
  - providing, studying, records, development, students, rules
  - abstract, notation, works, construct, bibliographies

Graph clustering, static results: CLASSIC (“CLASSIC” graph of documents & words: k = 15, ℓ = 19)
- CRANFIELD (aerodynamics) word group: shape, nasa, leading, assumed, thin

Graph clustering, static results: CLASSIC (“CLASSIC” graph of documents & words: k = 15, ℓ = 19)
- Highlighted word group: paint, examination, fall, raise, leave, based

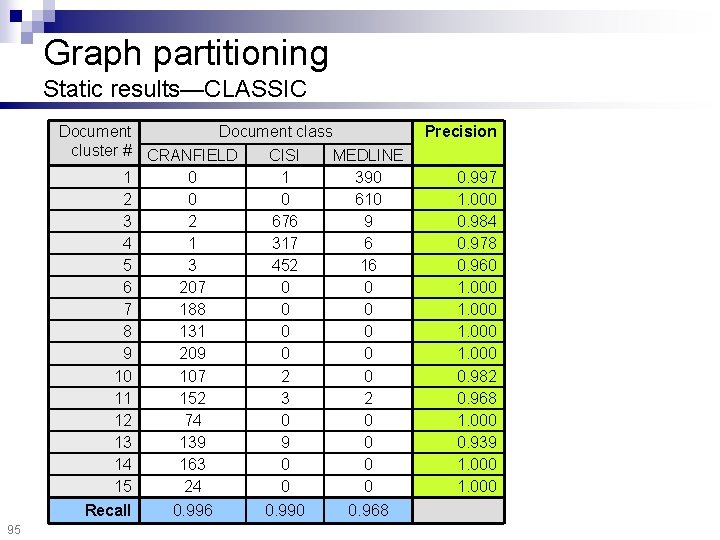

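Graph partitioning, static results: CLASSIC

| Cluster # | CRANFIELD | CISI | MEDLINE | Precision |
|---|---|---|---|---|
| 1 | 0 | 1 | 390 | 0.997 |
| 2 | 0 | 0 | 610 | 1.000 |
| 3 | 2 | 676 | 9 | 0.984 |
| 4 | 1 | 317 | 6 | 0.978 |
| 5 | 3 | 452 | 16 | 0.960 |
| 6 | 207 | 0 | 0 | 1.000 |
| 7 | 188 | 0 | 0 | 1.000 |
| 8 | 131 | 0 | 0 | 1.000 |
| 9 | 209 | 0 | 0 | 1.000 |
| 10 | 107 | 2 | 0 | 0.982 |
| 11 | 152 | 3 | 2 | 0.968 |
| 12 | 74 | 0 | 0 | 1.000 |
| 13 | 139 | 9 | 0 | 0.939 |
| 14 | 163 | 0 | 0 | 1.000 |
| 15 | 24 | 0 | 0 | 1.000 |
| Recall | 0.996 | 0.990 | 0.968 | |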
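The precision and recall figures can be recomputed from the cluster counts. The sketch below assigns each cluster to its majority document class, which is our assumption about how the table was scored; it is consistent with every number shown.

```python
import numpy as np

# Rows: clusters 1-15; columns: CRANFIELD, CISI, MEDLINE (counts from the table)
counts = np.array([
    [0, 1, 390], [0, 0, 610], [2, 676, 9], [1, 317, 6], [3, 452, 16],
    [207, 0, 0], [188, 0, 0], [131, 0, 0], [209, 0, 0], [107, 2, 0],
    [152, 3, 2], [74, 0, 0], [139, 9, 0], [163, 0, 0], [24, 0, 0],
])

majority = counts.argmax(axis=1)                      # class each cluster is assigned to
precision = counts.max(axis=1) / counts.sum(axis=1)  # per cluster
recall = np.array([counts[majority == c, c].sum()    # per class
                   for c in range(counts.shape[1])]) / counts.sum(axis=0)
print(np.round(precision, 3), np.round(recall, 3))
```

For example, MEDLINE recall is 1000 / 1033 ≈ 0.968: clusters 1 and 2 hold 1,000 of the 1,033 MEDLINE documents. The class totals (1,400 + 1,460 + 1,033) add up to the 3,893 documents in the corpus.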

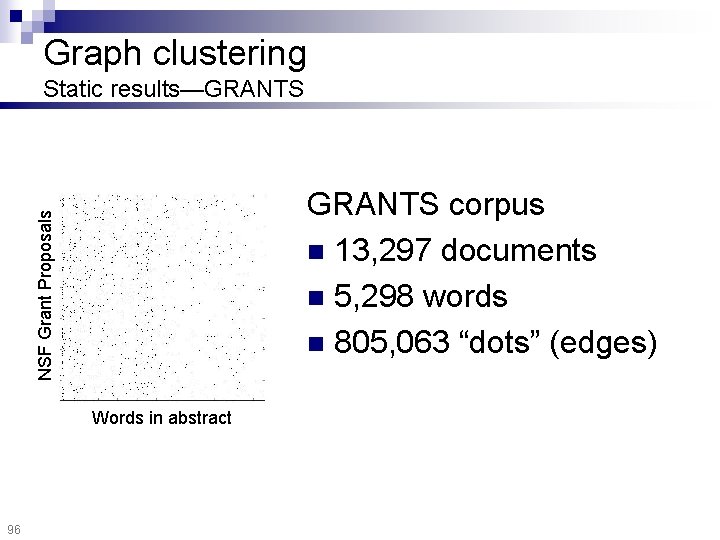

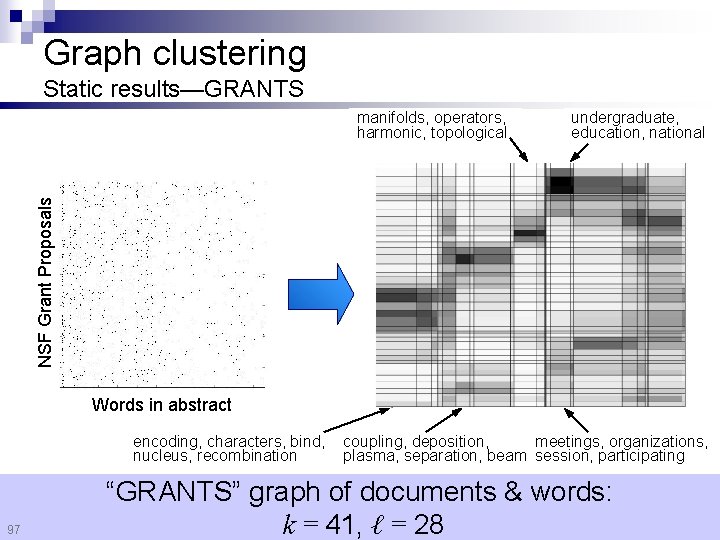

Graph clustering, static results: GRANTS
- GRANTS corpus (NSF grant proposals × words in abstract):
  - 13,297 documents
  - 5,298 words
  - 805,063 “dots” (edges)

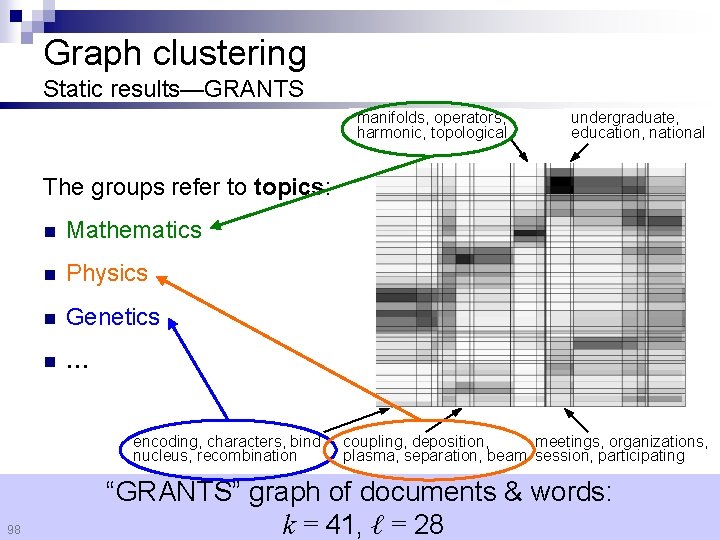


Graph clustering, static results: GRANTS (“GRANTS” graph of documents & words: k = 41, ℓ = 28)
- Example word groups:
  - manifolds, operators, harmonic, topological
  - undergraduate, education, national
  - encoding, characters, bind, nucleus, recombination
  - coupling, deposition, plasma, separation, beam
  - meetings, organizations, session, participating
- The groups refer to topics: mathematics, physics, genetics, …

Graph clustering, static results: DBLP
- Prolific authors on DBLP: 3,152 authors, 25,900 edges
- Live… [http://www.bitquill.net/trac/wiki/HCC/Start]

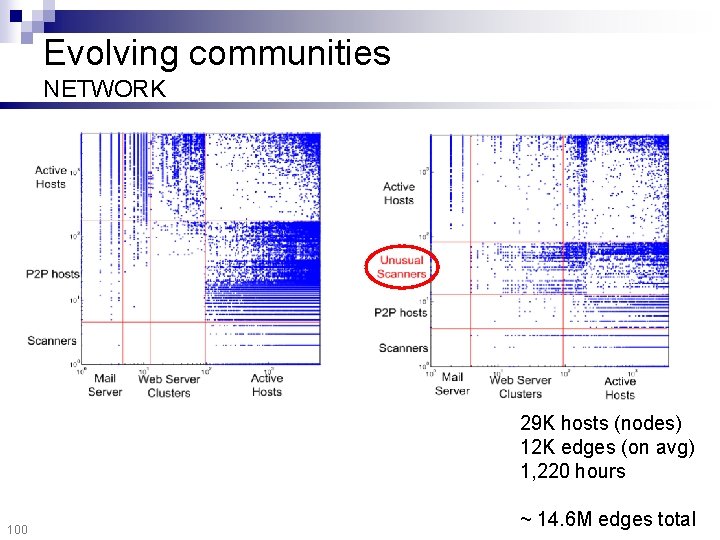

Evolving communities: NETWORK
- 29K hosts (nodes)
- 12K edges (on avg)
- 1,220 hours
- ~14.6M edges total

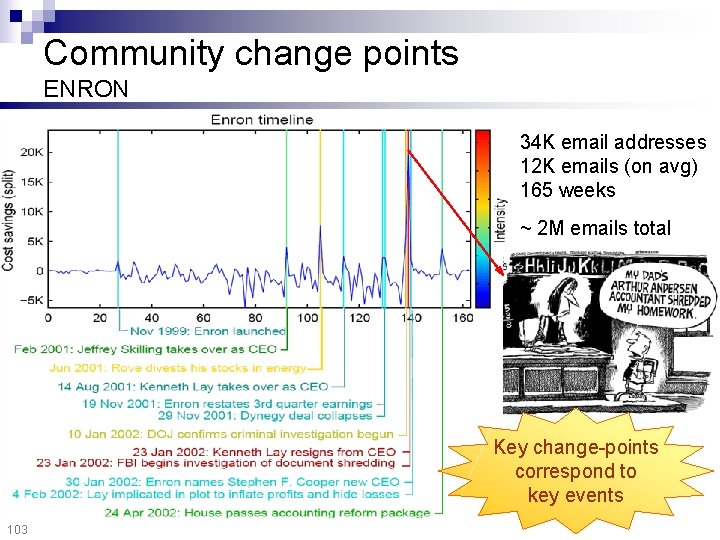

Community change points: ENRON
- 34K email addresses
- 12K emails (on avg)
- 165 weeks
- ~2M emails total

Key change points correspond to key events.

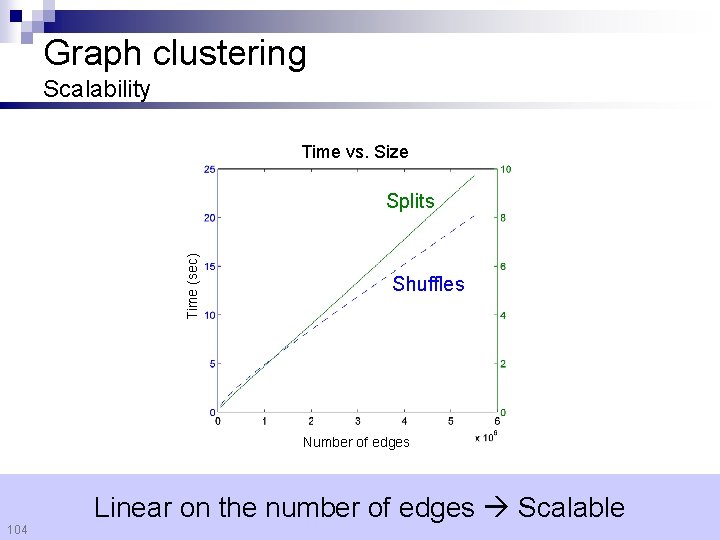

Graph clustering: scalability (figure: time (sec) vs. number of edges, for splits and shuffles)
- Linear in the number of edges: scalable

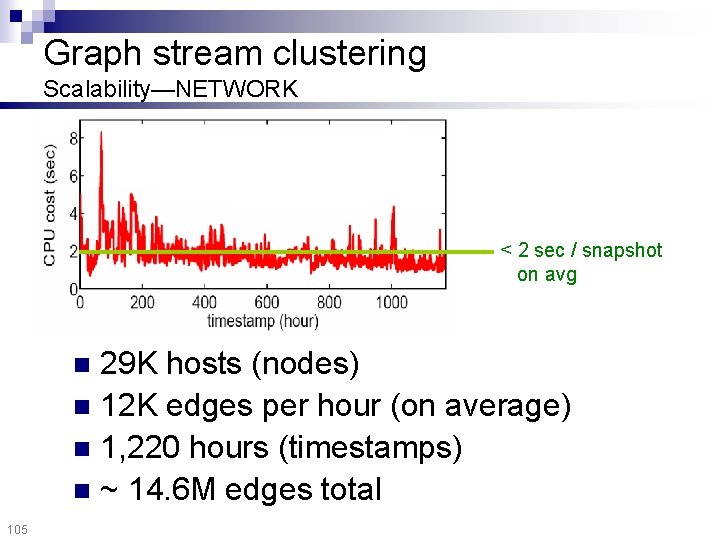

Graph stream clustering: scalability, NETWORK
- < 2 sec per snapshot on average
- 29K hosts (nodes)
- 12K edges per hour (on average)
- 1,220 hours (timestamps)
- ~14.6M edges total

Summary
- Organize into a few, homogeneous communities
- Find changes in community structure
- Scalable
- Parameter-free

Convert raw “data” into concise “patterns”, on the fly.

Outline
- Mining numerical streams
- Mining graph streams
  - Single timestamp
  - Multiple timestamps
  - Experiments
  - Hierarchical exploration [PKDD 08]
  - Proximity tracking
- Conclusions

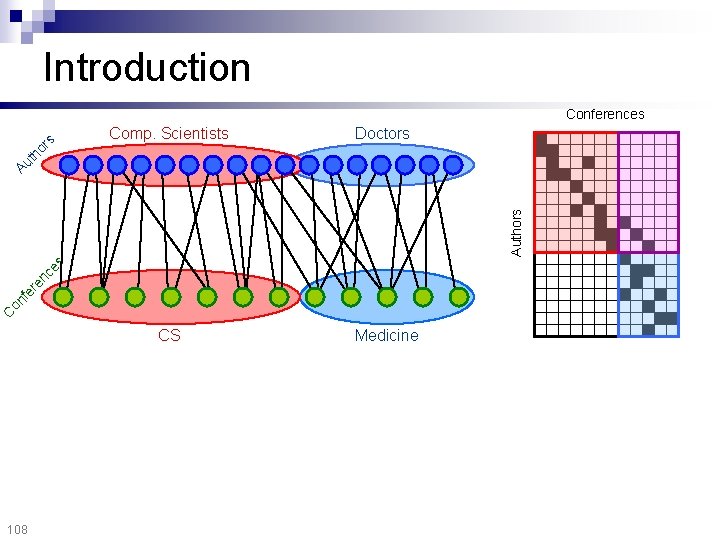

Introduction (figure: authors × conferences matrix; authors split into “Comp. Scientists” and “Doctors”, conferences into “CS” and “Medicine”)

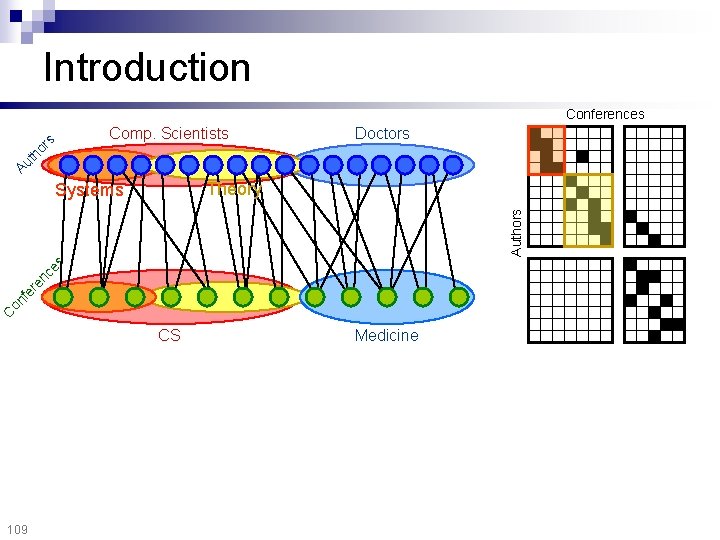

Introduction (figure: within the Comp. Scientists × CS block, conferences split further into “Theory” and “Systems”)

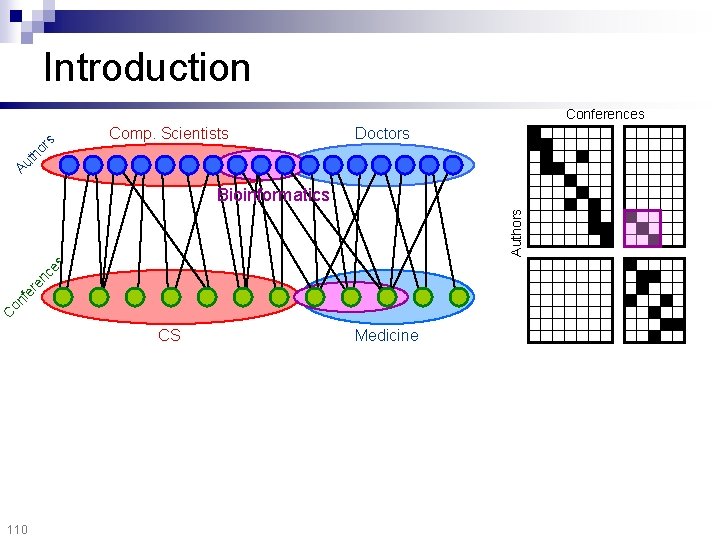

Introduction (figure: the Comp. Scientists × Medicine block reveals a “Bioinformatics” group)

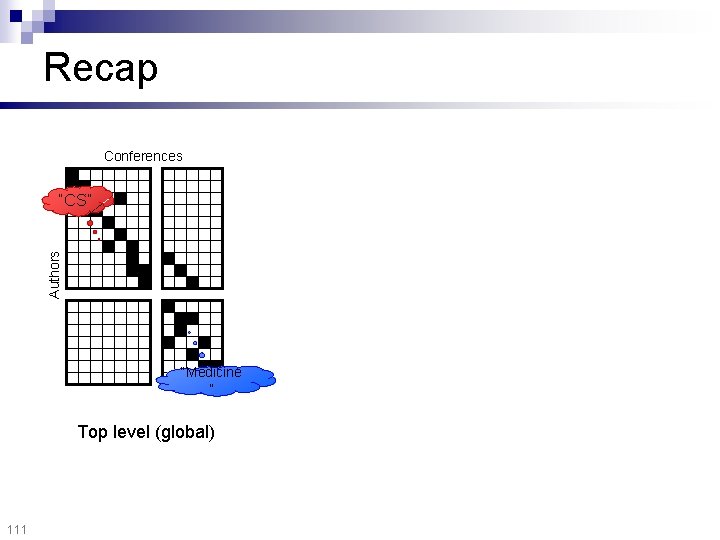

Recap (figure: top level (global) authors × conferences, with “CS” and “Medicine” groups)

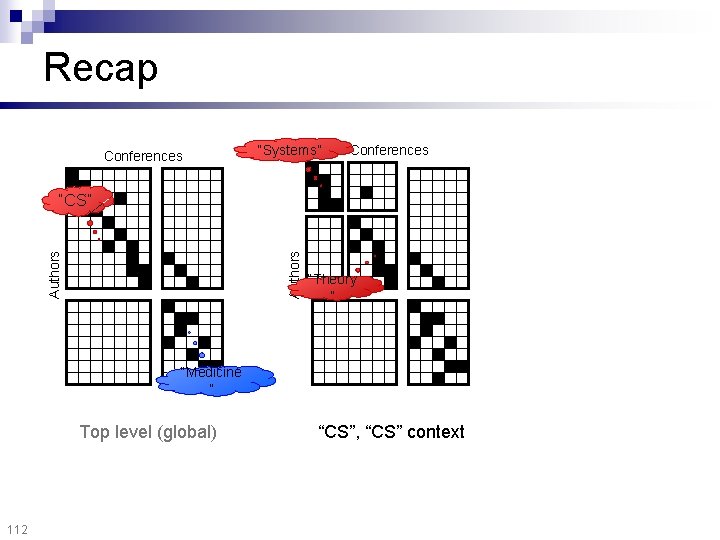

Recap (figure: top level (global) plus the (“CS”, “CS”) context, where conferences split into “Systems” and “Theory”)

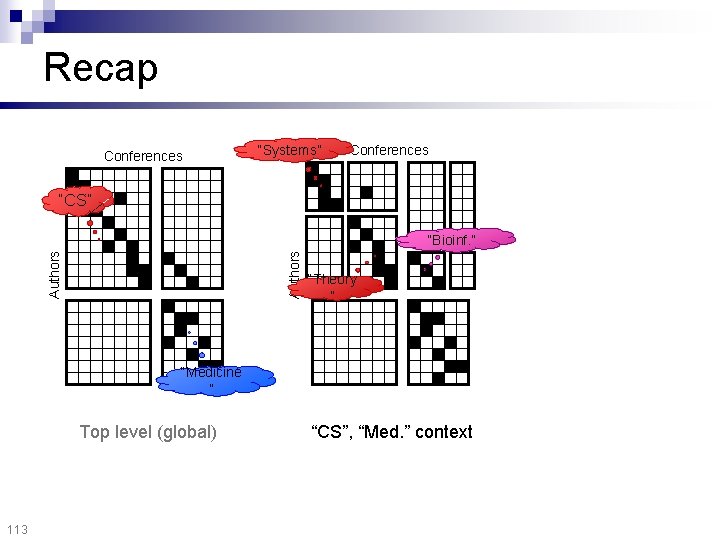

Recap (figure: top level (global), the (“CS”, “CS”) context with “Systems” and “Theory”, and the (“CS”, “Med.”) context with a “Bioinf.” group)

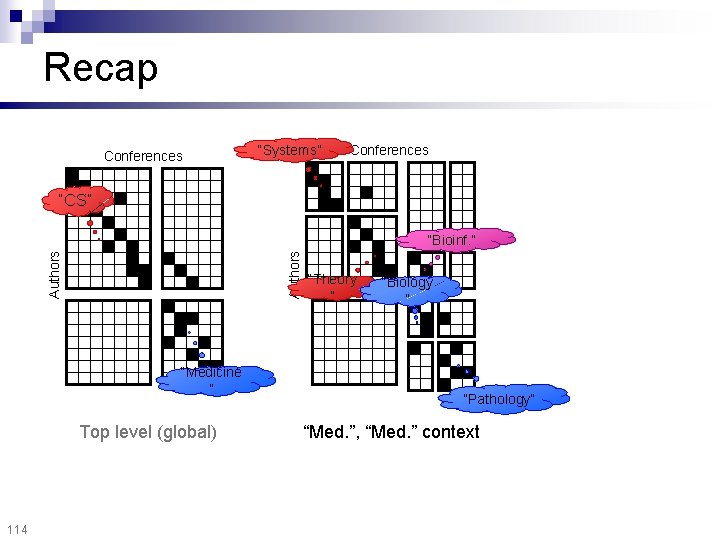

Recap (figure: the previous contexts plus the (“Med.”, “Med.”) context, with “Biology” and “Pathology” groups)

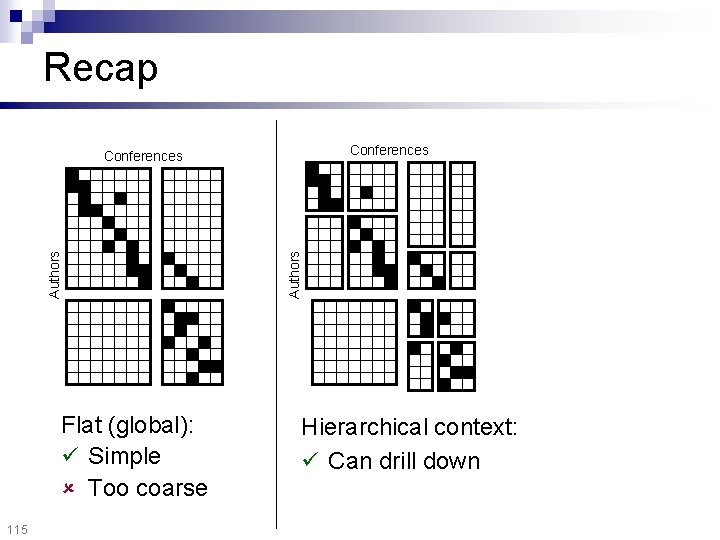

Recap
- Flat (global): simple, but too coarse
- Hierarchical context: can drill down

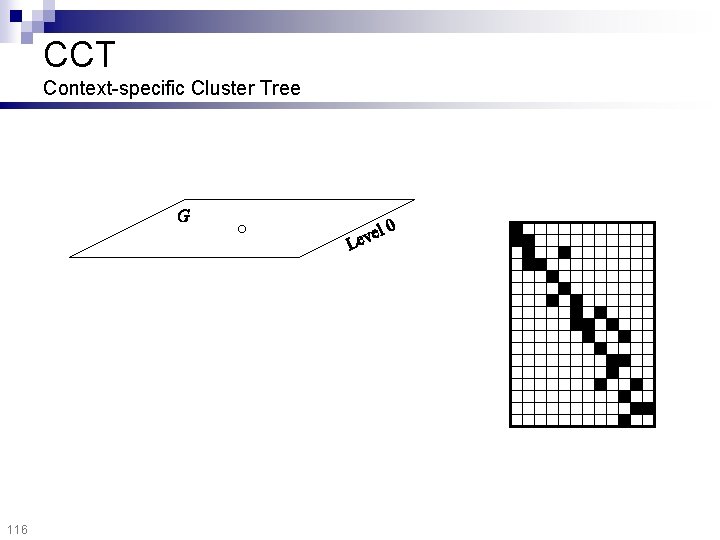

CCT: Context-specific Cluster Tree

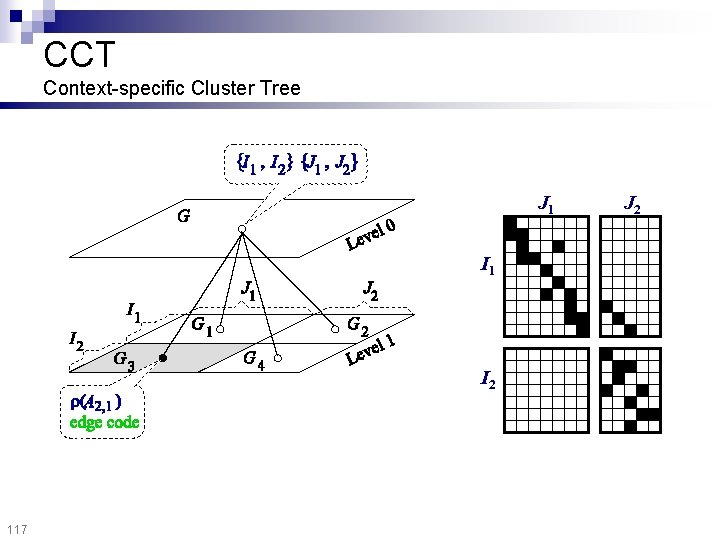

CCT: Context-specific Cluster Tree (figure labels: $I_2$, $J_1$, $J_2$)

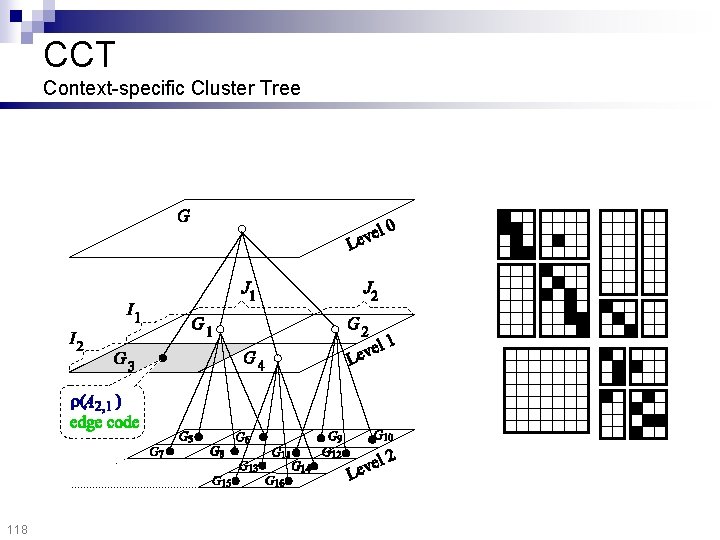


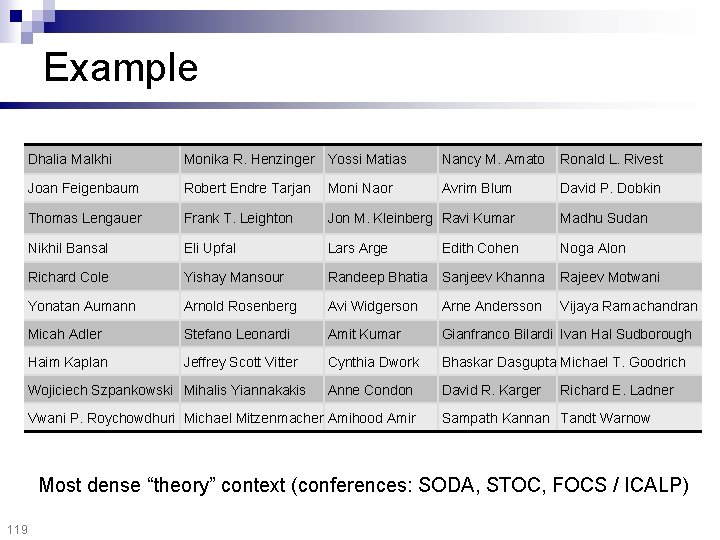

Example: most dense “theory” context (conferences: SODA, STOC, FOCS / ICALP)
Authors: Dahlia Malkhi, Monika R. Henzinger, Yossi Matias, Nancy M. Amato, Ronald L. Rivest, Joan Feigenbaum, Robert Endre Tarjan, Moni Naor, Avrim Blum, David P. Dobkin, Thomas Lengauer, Frank T. Leighton, Jon M. Kleinberg, Ravi Kumar, Madhu Sudan, Nikhil Bansal, Eli Upfal, Lars Arge, Noga Alon, Richard Cole, Yishay Mansour, Randeep Bhatia, Sanjeev Khanna, Rajeev Motwani, Yonatan Aumann, Arnold Rosenberg, Avi Wigderson, Arne Andersson, Vijaya Ramachandran, Micah Adler, Stefano Leonardi, Amit Kumar, Gianfranco Bilardi, Ivan Hal Sudborough, Haim Kaplan, Jeffrey Scott Vitter, Cynthia Dwork, Bhaskar Dasgupta, Michael T. Goodrich, Anne Condon, David R. Karger, Wojciech Szpankowski, Mihalis Yannakakis, Vwani P. Roychowdhury, Michael Mitzenmacher, Amihood Amir, Edith Cohen, Richard E. Ladner, Sampath Kannan, Tandy Warnow

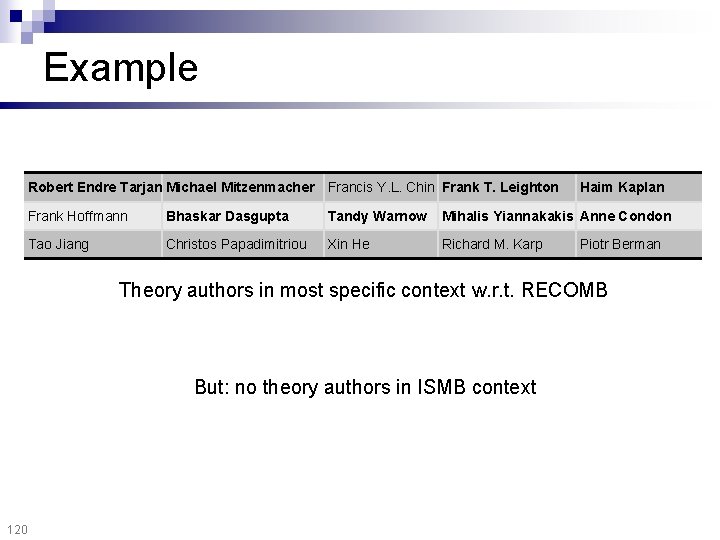

Example: theory authors in the most specific context w.r.t. RECOMB
Authors: Robert Endre Tarjan, Michael Mitzenmacher, Francis Y. L. Chin, Frank T. Leighton, Haim Kaplan, Frank Hoffmann, Bhaskar Dasgupta, Tandy Warnow, Mihalis Yannakakis, Anne Condon, Tao Jiang, Christos Papadimitriou, Xin He, Richard M. Karp, Piotr Berman
But: no theory authors in the ISMB context.

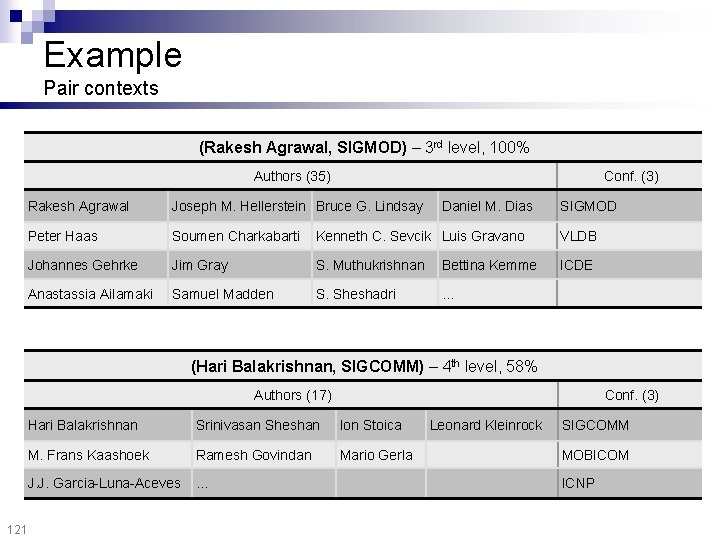

Example: pair contexts
- (Rakesh Agrawal, SIGMOD): 3rd level, 100%
  - Authors (35): Rakesh Agrawal, Joseph M. Hellerstein, Bruce G. Lindsay, Daniel M. Dias, Peter Haas, Soumen Chakrabarti, Kenneth C. Sevcik, Luis Gravano, Johannes Gehrke, Jim Gray, S. Muthukrishnan, Bettina Kemme, Anastassia Ailamaki, Samuel Madden, S. Seshadri, …
  - Conf. (3): SIGMOD, VLDB, ICDE
- (Hari Balakrishnan, SIGCOMM): 4th level, 58%
  - Authors (17): Hari Balakrishnan, Srinivasan Seshan, Ion Stoica, M. Frans Kaashoek, Ramesh Govindan, Mario Gerla, J. J. Garcia-Luna-Aceves, …, Leonard Kleinrock
  - Conf. (3): SIGCOMM, MOBICOM, ICNP

Outline
- Mining numerical streams
- Mining graph streams
  - Single timestamp
  - Multiple timestamps
  - Experiments
  - Hierarchical exploration
  - Proximity tracking [SDM 08]
- Conclusions

Proximity measures
- Many possible measures, e.g.:
  - Shortest path
  - Max-flow
  - Random walk(s)
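As an example of a random-walk proximity measure, the sketch below computes random walk with restart (RWR) scores from a source node by solving the linear system $r = (1 - c) P r + c\,e$ directly. RWR is one common choice picked here for illustration; the slides do not say which random-walk variant was used for tracking, and the restart probability 0.15 and the name `rwr_proximity` are assumptions.

```python
import numpy as np

def rwr_proximity(A, source, restart=0.15):
    """Random walk with restart proximity of every node to `source`.

    Solves r = (1 - c) P r + c e exactly, where P is the column-normalized
    adjacency matrix and c = `restart`. Scores sum to 1 when no node is dangling.
    """
    A = np.asarray(A, dtype=float)
    n = A.shape[0]
    deg = A.sum(axis=0)
    P = A / np.where(deg == 0, 1.0, deg)        # column-stochastic transition matrix
    e = np.zeros(n)
    e[source] = 1.0                             # restart at the source node
    return restart * np.linalg.solve(np.eye(n) - (1 - restart) * P, e)

# Path graph 0 - 1 - 2 - 3
A = np.array([[0, 1, 0, 0],
              [1, 0, 1, 0],
              [0, 1, 0, 1],
              [0, 0, 1, 0]])
r = rwr_proximity(A, source=0)
print(np.round(r, 3))
```

Unlike shortest-path distance, RWR accounts for all paths between two nodes, so it rewards pairs connected by many short routes; recomputing it on each graph snapshot is one way to track proximity over time.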

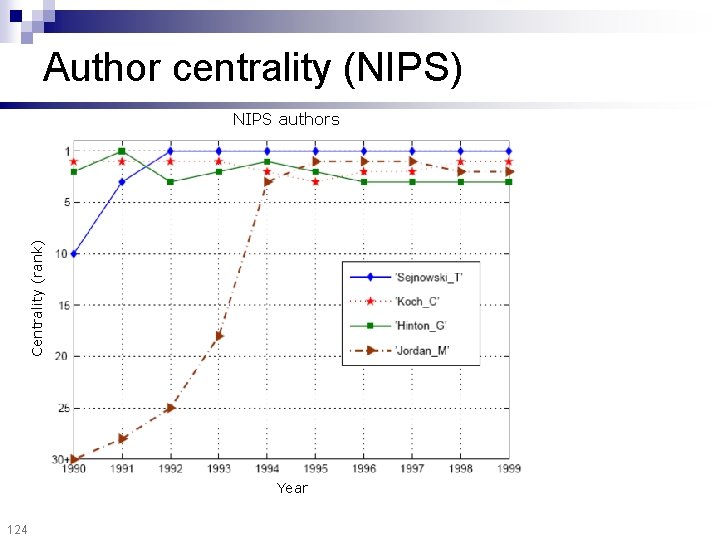

Author centrality (NIPS) (figure: centrality rank of NIPS authors by year)

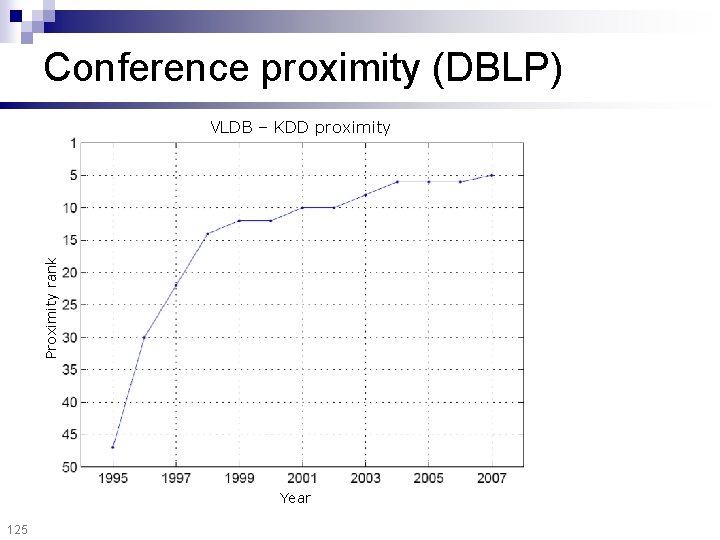

Conference proximity (DBLP) (figure: VLDB-KDD proximity rank by year)

Outline
- Mining numerical streams
- Mining graph streams
- Conclusions

Stream mining: summary
- Limited resources
- No humans in the loop
- Time-evolving nature

Applied to: numerical streams; graphs.

