Front End vs Back End of a Compiler

The phases of a compiler are collected into a front end and a back end. The front end consists of those phases that depend primarily on the source language and are largely independent of the target machine. These normally include lexical and syntactic analysis, semantic analysis, and the generation of intermediate code.

A certain amount of code optimization can be done by the front end as well.

The back end includes those portions of the compiler that depend on the target machine: the code optimization phase and the final code generation phase, along with the necessary error handling and symbol table operations.

The front end analyzes the source program and produces intermediate code, while the back end produces the target program from the intermediate code.

It is also tempting to compile several different languages into the same intermediate language and use a common back end for the different front ends, thereby obtaining several compilers for one machine.
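
The idea of several front ends sharing one back end can be sketched in a few lines of Python. Everything here is invented for illustration: the two toy source notations, the tuple-based intermediate form `(op, dest, arg1, arg2)`, and the pseudo-assembly mnemonics are assumptions, not part of any real compiler.

```python
# Hypothetical intermediate code: a list of (op, dest, arg1, arg2) tuples.
OPS = {"+": "add", "*": "mul"}

def frontend_infix(src):
    """Toy front end for statements such as 'x = a + b'."""
    dest, expr = [part.strip() for part in src.split("=")]
    a, op, b = expr.split()
    return [(OPS[op], dest, a, b)]

def frontend_prefix(src):
    """Toy front end for statements such as '(set x (+ a b))'."""
    _, dest, op, a, b = src.replace("(", " ").replace(")", " ").split()
    return [(OPS[op], dest, a, b)]

def backend(ir):
    """Shared back end: emits pseudo-assembly for an invented machine."""
    out = []
    for op, dest, a, b in ir:
        out += [f"LOAD R1, {a}", f"{op.upper()} R1, {b}", f"STORE R1, {dest}"]
    return out
```

Both `backend(frontend_infix("x = a + b"))` and `backend(frontend_prefix("(set x (+ a b))"))` pass through the same intermediate form, so only the front end has to be rewritten for a new source language.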

Passes

In an implementation of a compiler, portions of one or more phases are combined into a module (unit) called a pass.

Several phases of a compiler are usually implemented in a single pass consisting of reading an input file and writing an output file. It is common for several phases to be grouped into one pass and for the activity of these phases to be interleaved during the pass.

For example, lexical analysis, syntax analysis, semantic analysis, and intermediate code generation might be grouped into one pass. If so, the token stream after lexical analysis may be translated directly into intermediate code.

A pass reads the source program or the output of the previous pass, makes the transformations specified by its phases, and writes its output into an intermediate file, which may then be read by a subsequent pass.

Multi Pass Compiler

A multi-pass compiler can be made to use less space than a single-pass compiler, since the space occupied by the compiler program for one pass can be reused by the following pass.

A multi-pass compiler is of course slower than a single-pass compiler, because each pass reads and writes an intermediate file.

Thus compilers running on computers with small memory would normally use several passes, while on a computer with a large random-access memory a compiler with fewer passes would be possible.

Reducing the Number of Passes

It is desirable to have relatively few passes, since it takes time to read and write intermediate files. On the other hand, if we group several phases into one pass, we may be forced to keep the entire program in memory, because one phase may need information in a different order than a previous phase produces it.

The internal form of the program may be considerably larger than either the source program or the target program, so this space may not be a trivial matter.

For some phases, grouping into one pass presents few problems. For example, the interface between the lexical and syntactic analyzers can often be limited to a single token.
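
A single-token interface can be illustrated with a Python generator: the parser asks for the next token only when it needs one, so the two phases are interleaved and no complete token list is ever stored. The grammar (sums of integers) and the names `lex` and `parse_sum` are made up for this sketch.

```python
import re

# Hypothetical token pattern: an integer or a plus sign, after optional blanks.
TOKEN = re.compile(r"\s*(?:(\d+)|(\+))")

def lex(src):
    """Lexical analyzer: yields one (kind, text) token each time it is asked."""
    src = src.rstrip()
    pos = 0
    while pos < len(src):
        m = TOKEN.match(src, pos)
        if m is None:
            raise SyntaxError(f"unexpected character at position {pos}")
        pos = m.end()
        if m.group(1) is not None:
            yield ("num", m.group(1))
        else:
            yield ("op", m.group(2))
    yield ("eof", "")

def parse_sum(src):
    """Parser for  num ('+' num)* ; it holds only one token at a time."""
    stream = lex(src)
    kind, text = next(stream)
    if kind != "num":
        raise SyntaxError("expected a number")
    total = int(text)
    kind, text = next(stream)
    while kind == "op":
        kind, text = next(stream)
        if kind != "num":
            raise SyntaxError("expected a number after '+'")
        total += int(text)
        kind, text = next(stream)
    return total
```

Calling `parse_sum("1 + 20 + 3")` evaluates the sum while scanning; the only data shared between the two phases is the current `(kind, text)` pair.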

On the other hand, it is often very hard to perform code generation until the intermediate representation has been completely generated.

For example, languages like PL/I and Algol 68 permit variables to be used before they are declared. We cannot generate the target code for a construct if we do not know the types of the variables involved in that construct.

Similarly, most languages allow gotos that jump forward in the code. We cannot determine the target address of such a jump until we have seen the intervening source code and generated target code for it.

In some cases, it is possible to leave a blank slot for missing information and fill in the slot when the information becomes available. In particular, intermediate and target code generation can often be merged into one pass using a technique called "backpatching".
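
A minimal sketch of backpatching for forward gotos follows, written in Python. The input notation (`label:` and `goto label` lines), the `("JMP", address)` instruction form, and the function name `assemble` are assumptions chosen for the example; a real compiler would patch its own instruction format.

```python
def assemble(lines):
    """Translate 'label:', 'goto label' and ordinary statements in one pass."""
    code = []      # emitted target instructions
    labels = {}    # label name -> address, once the label has been seen
    pending = {}   # label name -> indexes of jumps still waiting for it
    for line in lines:
        line = line.strip()
        if line.endswith(":"):
            name = line[:-1]
            labels[name] = len(code)
            # Backpatch: fill every blank slot left for this label.
            for index in pending.pop(name, []):
                code[index] = ("JMP", labels[name])
        elif line.startswith("goto "):
            name = line[5:].strip()
            if name in labels:
                code.append(("JMP", labels[name]))   # backward jump: known
            else:
                pending.setdefault(name, []).append(len(code))
                code.append(("JMP", None))           # blank slot for now
        else:
            code.append(("OP", line))
    if pending:
        raise ValueError(f"undefined labels: {sorted(pending)}")
    return code
```

For the input `["goto end", "x = 1", "end:", "y = 2"]` the jump is first emitted with an empty address and is patched to address 2 as soon as `end:` is reached, so no second pass over the code is required.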

Compiler Construction Tools

A number of tools have been developed specifically to help construct compilers. These tools, variously called compiler-compilers, compiler-generators, or translator-writing systems, produce a compiler from some form of specification of a source language and target machine language.

Largely, they are oriented around a particular model of languages, and they are most suitable for generating compilers of languages similar to the model.

For example, it is tempting to assume that lexical analyzers for all languages are essentially the same, except for the particular keywords and signs recognized.

Many compiler-compilers do in fact produce fixed lexical analysis routines for use in the generated compiler.

Some general tools have been created for the automatic design of specific compiler components.

The following is a list of some useful compiler construction tools:

1) Parser Generators
2) Scanner Generators
3) Syntax-Directed Translation Engines
4) Automatic Code Generators
5) Data Flow Engines

1) Parser Generators

These produce syntax analyzers, normally from input that is based on a context-free grammar. In early compilers, syntax analysis consumed not only a large fraction of the running time of a compiler but also a large fraction of the intellectual effort of writing a compiler.

This phase is now considered one of the easiest to implement. Many parser generators utilize powerful parsing algorithms that are too complex to be carried out by hand.

2) Scanner Generators

These automatically generate lexical analyzers, normally from a specification based on regular expressions.
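
As a rough illustration of the idea, the Python sketch below builds a scanner function from a list of (token name, regular expression) pairs. The name `make_scanner`, the `SKIP` convention, and the sample specification are all hypothetical. Unlike Lex-style tools, which pick the longest match, Python's `re` alternation takes the first alternative that matches, so the order of the rules matters here.

```python
import re

def make_scanner(spec):
    """Build a scanner function from (token_name, regex) pairs."""
    pattern = re.compile("|".join(f"(?P<{name}>{rx})" for name, rx in spec))

    def scan(text):
        pos, tokens = 0, []
        while pos < len(text):
            m = pattern.match(text, pos)
            if m is None:
                raise SyntaxError(f"unexpected character {text[pos]!r} at {pos}")
            if m.lastgroup != "SKIP":          # drop whitespace tokens
                tokens.append((m.lastgroup, m.group()))
            pos = m.end()
        return tokens

    return scan

# Hypothetical specification for a tiny expression language.
SPEC = [
    ("NUM", r"\d+"),
    ("ID", r"[A-Za-z_]\w*"),
    ("OP", r"[+\-*/=]"),
    ("SKIP", r"\s+"),
]
scan = make_scanner(SPEC)
```

With this specification, `scan("x = 42 + y")` returns the tokens for `x`, `=`, `42`, `+`, and `y`, each tagged with the name of the rule that matched.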

3) Syntax-Directed Translation Engines

These produce collections of routines that walk the parse tree, generating intermediate code.

4) Automatic Code Generators

Such a tool takes a collection of rules that define the translation of each operation of the intermediate language into the machine language for the target machine.

The rules must include sufficient detail that we can handle the different possible access methods for data; e.g., variables may be in registers, in a fixed (static) location in memory, or may be allocated a position on a stack.
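
The following Python sketch shows one way such rules might look: a table maps each intermediate operation to an instruction template, and a separate helper renders each operand according to where the variable lives. The rule table, the mnemonics, the addressing syntax, and the `locations` map are all invented for the example and do not describe any particular tool.

```python
# Hypothetical rule table: intermediate operation -> instruction template.
RULES = {
    "add": "ADD {dst}, {a}, {b}",
    "mul": "MUL {dst}, {a}, {b}",
    "copy": "MOV {dst}, {a}",
}

def operand(name, locations):
    """Render an operand according to the access method of the variable."""
    kind, where = locations[name]
    if kind == "reg":
        return where               # held in a register, e.g. R1
    if kind == "static":
        return f"[{where}]"        # fixed (static) memory location
    if kind == "stack":
        return f"[SP+{where}]"     # offset from the stack pointer
    raise ValueError(f"unknown access method: {kind}")

def generate(ir, locations):
    """Apply the rule for each (op, dst, args...) intermediate instruction."""
    out = []
    for op, dst, *args in ir:
        fields = {"dst": operand(dst, locations)}
        for key, arg in zip("ab", args):
            fields[key] = operand(arg, locations)
        out.append(RULES[op].format(**fields))
    return out
```

For instance, with `x` in register `R1`, `y` at static address `0x1000`, and `z` at stack offset 8, the instruction `("add", "x", "y", "z")` becomes `ADD R1, [0x1000], [SP+8]`.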

5) Data Flow Engines

Much of the information needed to perform good code optimization involves "data flow analysis", the gathering of information about how values are transmitted from one part of the program to each other part.
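
One common instance of data flow analysis is live-variable analysis, chosen here only as an example; the slides do not name a specific analysis. The Python sketch below iterates the usual equations (live-out is the union of the successors' live-in sets, and live-in is use plus live-out minus def) until nothing changes. The block names and the dictionary-based flow graph are assumptions of the sketch.

```python
def liveness(blocks, succ):
    """Iterative live-variable analysis.

    blocks: block name -> (use set, def set)
    succ:   block name -> list of successor block names
    Returns (live_in, live_out) dictionaries keyed by block name.
    """
    live_in = {b: set() for b in blocks}
    live_out = {b: set() for b in blocks}
    changed = True
    while changed:                       # repeat until a fixed point
        changed = False
        for b, (use, define) in blocks.items():
            out = set().union(*(live_in[s] for s in succ.get(b, [])))
            inn = use | (out - define)
            if out != live_out[b] or inn != live_in[b]:
                live_out[b], live_in[b] = out, inn
                changed = True
    return live_in, live_out

# Hypothetical flow graph:  B1: x = 1   B2: y = x + 1   B3: print(y)
BLOCKS = {
    "B1": (set(), {"x"}),
    "B2": ({"x"}, {"y"}),
    "B3": ({"y"}, set()),
}
SUCC = {"B1": ["B2"], "B2": ["B3"]}
live_in, live_out = liveness(BLOCKS, SUCC)
```

Here `x` is live on exit from `B1` and `y` is live on exit from `B2`, which is the kind of fact an optimizer can use, for example, when deciding which values must stay in registers.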

The Phases of a Compiler

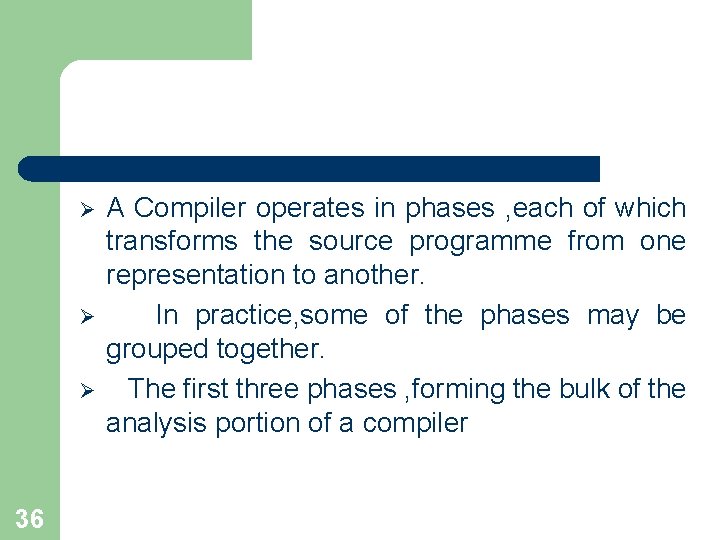

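Figure: phases of a compiler. The Source Program passes through the Lexical Analyzer, Syntax Analyzer, Semantic Analyzer, Intermediate Code Generator, Code Optimizer, and Code Generator to yield the Target Program; the Symbol Table Manager and the Error Handler interact with all six phases.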

A compiler operates in phases, each of which transforms the source program from one representation to another. In practice, some of the phases may be grouped together. The first three phases form the bulk of the analysis portion of a compiler.

Two other activities, symbol table management and error handling, are shown interacting with the six phases of lexical analysis, syntax analysis, semantic analysis, intermediate code generation, code optimization, and code generation. Informally, we shall also call the symbol table manager and error handler "phases".
