ExTensor: An Accelerator for Sparse Tensor Algebra

Kartik Hegde¹, Hadi Asghari-Moghaddam¹, Michael Pellauer², Neal Crago², Aamer Jaleel², Edgar Solomonik¹, Joel Emer²,³, Christopher W. Fletcher¹
¹UIUC ²NVIDIA ³MIT

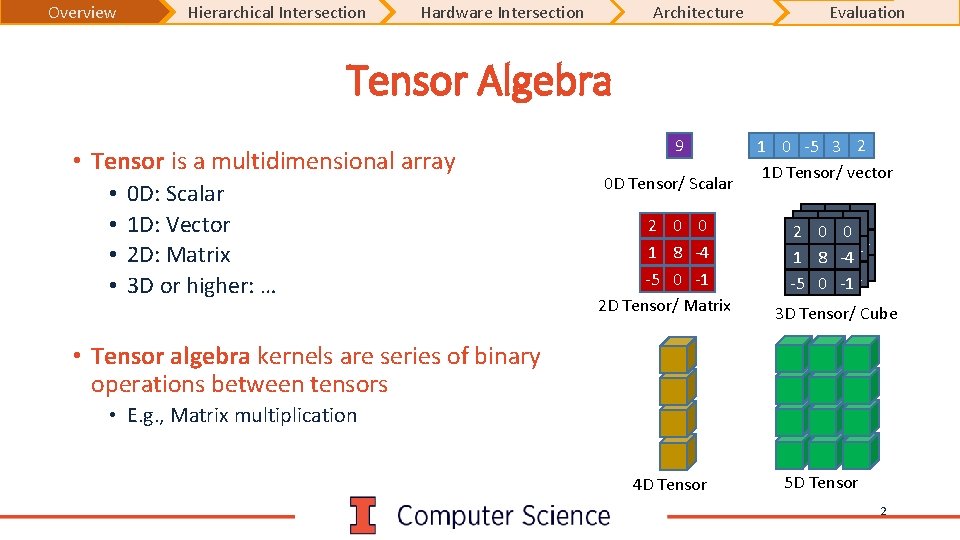

Outline: Overview, Hierarchical Intersection, Hardware Intersection, Architecture, Evaluation

Tensor Algebra
• A tensor is a multidimensional array
  • 0D: scalar
  • 1D: vector
  • 2D: matrix
  • 3D or higher: …
• Tensor algebra kernels are series of binary operations between tensors
  • E.g., matrix multiplication

[Figure: example tensors of increasing order: 0D tensor (scalar), 1D tensor (vector), 2D tensor (matrix), 3D tensor (cube), 4D tensor, 5D tensor]

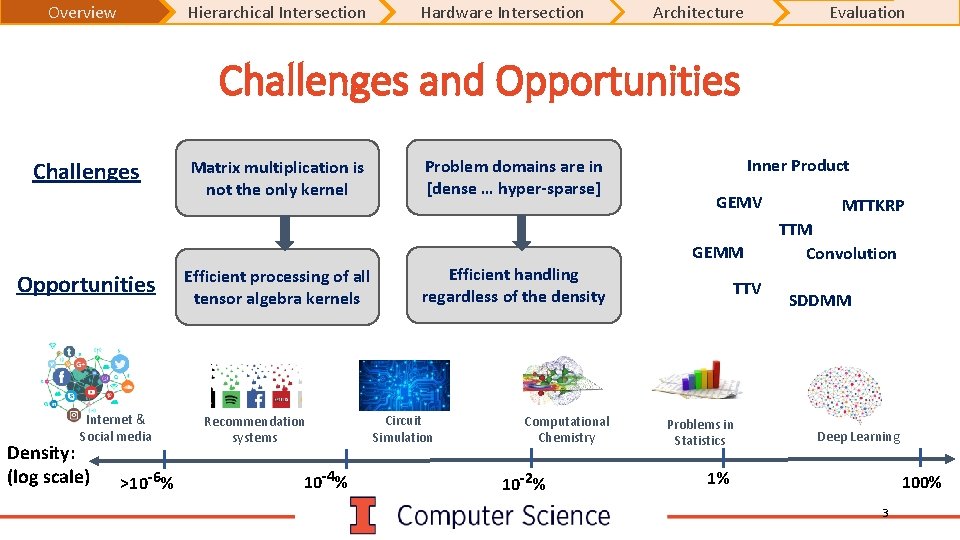

Challenges and Opportunities

Challenges:
• Matrix multiplication is not the only kernel
• Problem domains range from dense to hyper-sparse

Opportunities:
• Efficient processing of all tensor algebra kernels
• Efficient handling regardless of density

[Figure: application domains placed along a density axis (log scale; ticks at >10^-6%, 10^-4%, 10^-2%, 1%, 100%): Internet & social media, recommendation systems, circuit simulation, computational chemistry, problems in statistics, deep learning. Kernels shown: inner product, GEMV, GEMM, TTV, TTM, MTTKRP, convolution, SDDMM]

ExTensor Contributions
• ExTensor: the first accelerator for sparse tensor algebra
• Key idea: hierarchical intersection
  • Removing operations by exploiting sparsity
• Novel intersection algorithm and hardware design

Intersection Opportunities

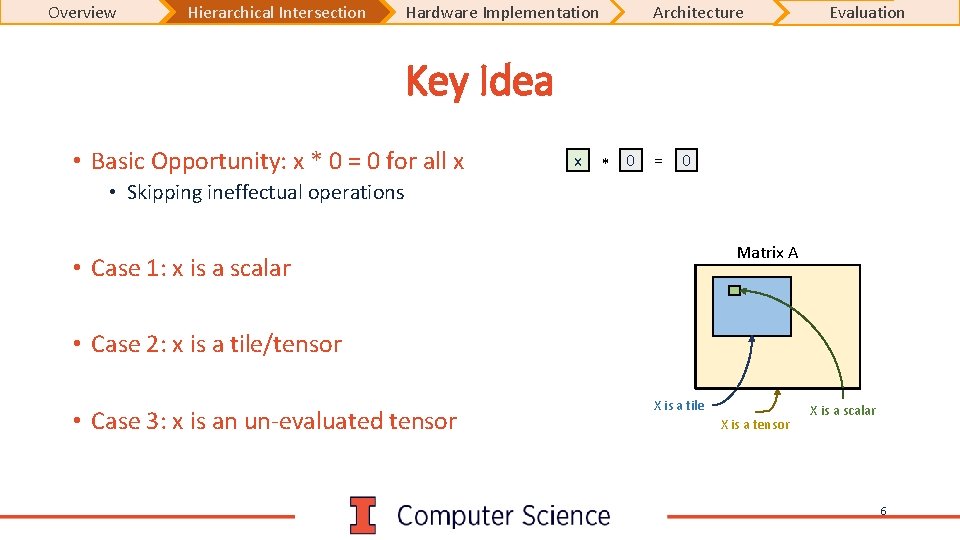

Key Idea
• Basic opportunity: x * 0 = 0 for all x
• Skipping ineffectual operations
  • Case 1: x is a scalar
  • Case 2: x is a tile/tensor
  • Case 3: x is an un-evaluated tensor

[Figure: matrix A annotated with the cases "x is a scalar", "x is a tile", "x is a tensor"]

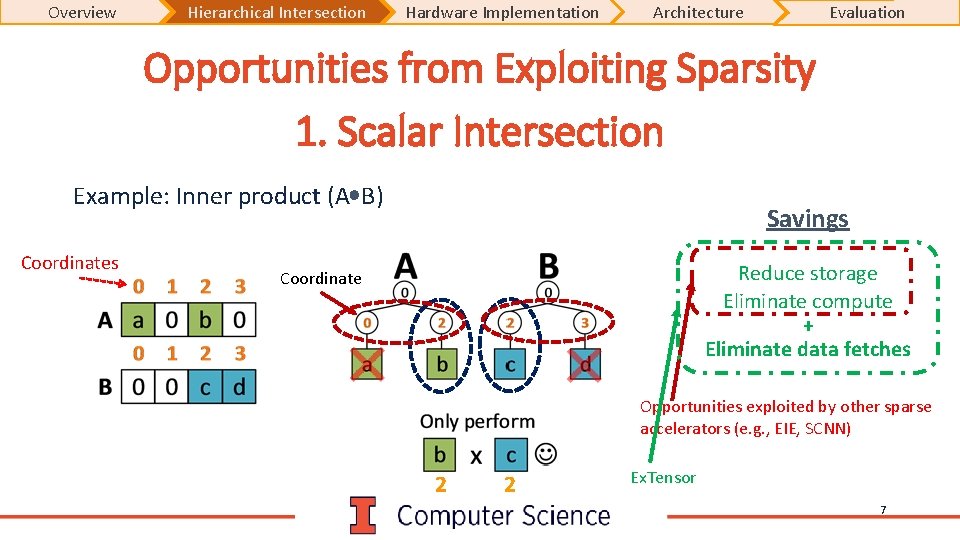

Opportunities from Exploiting Sparsity
1. Scalar Intersection

Example: inner product (A · B)

Savings:
• Reduce storage
• Eliminate compute
• Eliminate data fetches

[Figure: coordinate intersection for the inner-product example. Labels: opportunities exploited by other sparse accelerators (e.g., EIE, SCNN); ExTensor]
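
The scalar case can be illustrated with a minimal software sketch. It assumes each sparse vector is stored as a hypothetical `{coordinate: value}` dictionary, which is an illustration format and not the representation used by the hardware. Only coordinates present in both operands produce a multiply; every other product would be `x * 0`.

```python
def sparse_dot(a, b):
    """Inner product of two sparse vectors stored as {coordinate: value}."""
    # Intersect the coordinate sets: only shared coordinates are effectual.
    common = a.keys() & b.keys()
    return sum(a[c] * b[c] for c in common), sorted(common)


a = {0: 2.0, 2: 3.0, 5: 1.0}   # nonzeros of A (illustrative values)
b = {2: 4.0, 3: 7.0}           # nonzeros of B (illustrative values)
value, coords = sparse_dot(a, b)
print(value, coords)
```

Here a single multiply is performed, at coordinate 2, instead of one per vector position.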

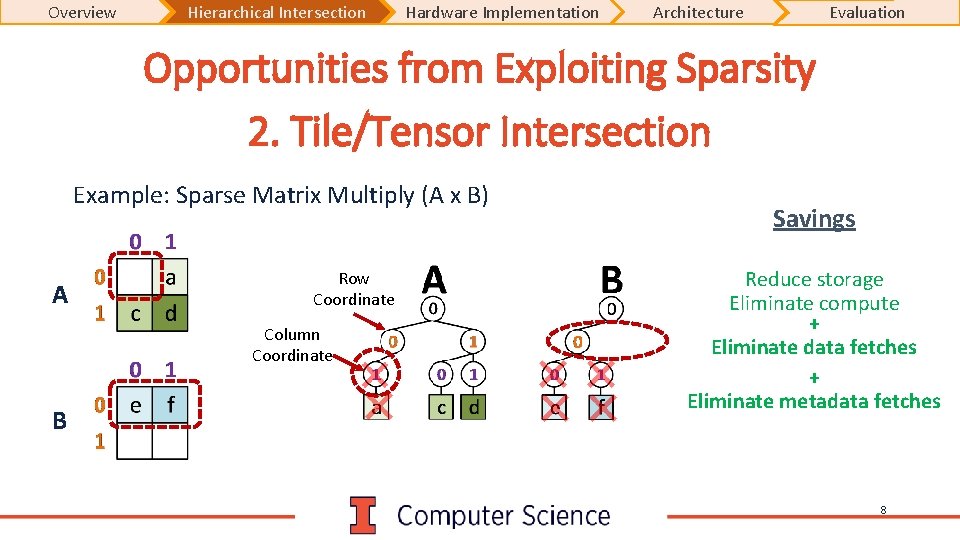

Opportunities from Exploiting Sparsity
2. Tile/Tensor Intersection

Example: sparse matrix multiply (A x B)

Savings:
• Reduce storage
• Eliminate compute
• Eliminate data fetches
• Eliminate metadata fetches

[Figure: tiles of A and B labeled with row and column coordinates]
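
The same idea applied at tile granularity might look like the sketch below. It assumes each matrix is summarized by the set of `(tile_row, tile_col)` coordinates of its non-empty tiles; the function name and data layout are hypothetical. A tile of A at `(i, k)` only needs to be fetched and multiplied if B has a non-empty tile in tile-row `k`.

```python
from collections import defaultdict


def tile_pairs_for_matmul(a_tiles, b_tiles):
    """List the (A tile, B tile) pairs that contribute to A x B.

    a_tiles, b_tiles: sets of (tile_row, tile_col) for non-empty tiles.
    """
    # Index B's non-empty tiles by their row coordinate.
    b_by_row = defaultdict(list)
    for k, j in b_tiles:
        b_by_row[k].append(j)

    work = []
    for i, k in sorted(a_tiles):
        # A's column coordinate must match B's row coordinate.
        for j in sorted(b_by_row.get(k, [])):
            work.append(((i, k), (k, j)))
    return work


a_tiles = {(0, 0), (0, 2), (1, 1)}
b_tiles = {(0, 1), (1, 0), (3, 3)}
print(tile_pairs_for_matmul(a_tiles, b_tiles))
```

In this example the A tile at `(0, 2)` and the B tile at `(3, 3)` are never paired, so neither their data nor their internal metadata needs to be read.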

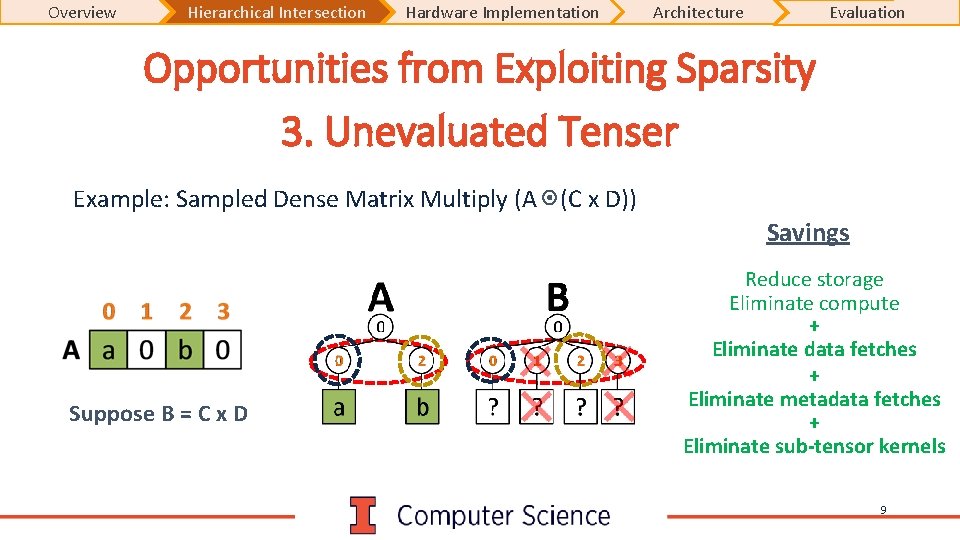

Opportunities from Exploiting Sparsity
3. Unevaluated Tensor

Example: sampled dense matrix multiply (A ∘ (C x D))
Suppose B = C x D

Savings:
• Reduce storage
• Eliminate compute
• Eliminate data fetches
• Eliminate metadata fetches
• Eliminate sub-tensor kernels
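
A small sketch of the unevaluated-tensor case follows, assuming the sampling matrix A is stored as a hypothetical `{(i, j): value}` dictionary and C, D are dense lists of lists. Entries of B = C x D are computed only at coordinates where A is nonzero, so the rest of B is never evaluated.

```python
def sddmm(a, c, d):
    """Sampled dense-dense matrix multiply: A elementwise-times (C x D).

    a: {(i, j): value} holding the nonzeros of the sampling matrix A.
    c: dense M x K matrix, d: dense K x N matrix (lists of lists).
    """
    out = {}
    for (i, j), a_ij in a.items():
        # Evaluate B[i][j] = sum_k C[i][k] * D[k][j] only where A is nonzero.
        b_ij = sum(c[i][k] * d[k][j] for k in range(len(d)))
        out[(i, j)] = a_ij * b_ij
    return out


a = {(0, 1): 2.0, (1, 0): 1.0}
c = [[1, 2], [3, 4]]
d = [[5, 6], [7, 8]]
print(sddmm(a, c, d))
```

Only two of the four entries of C x D are computed here; in a hyper-sparse A the fraction skipped is far larger.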

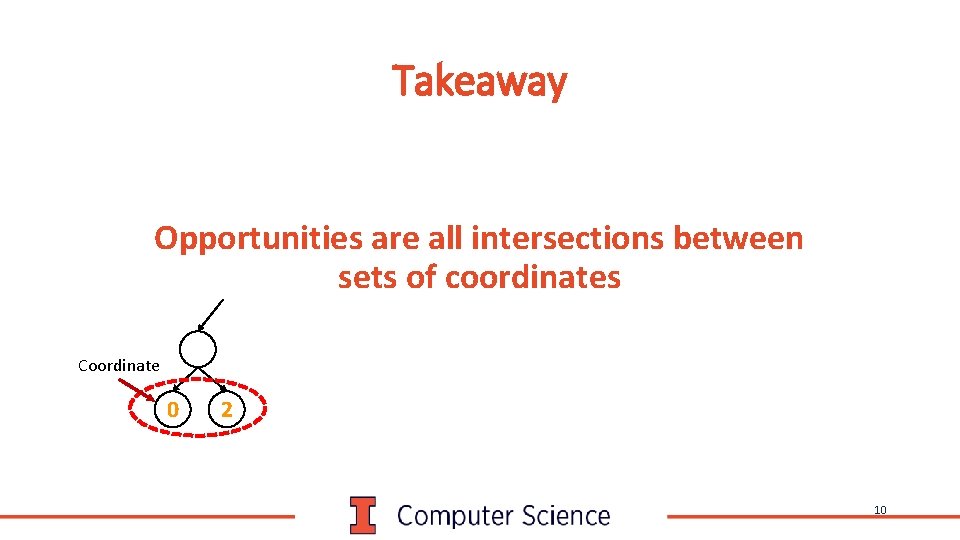

Takeaway
Opportunities are all intersections between sets of coordinates.

Hardware for Optimized Intersection

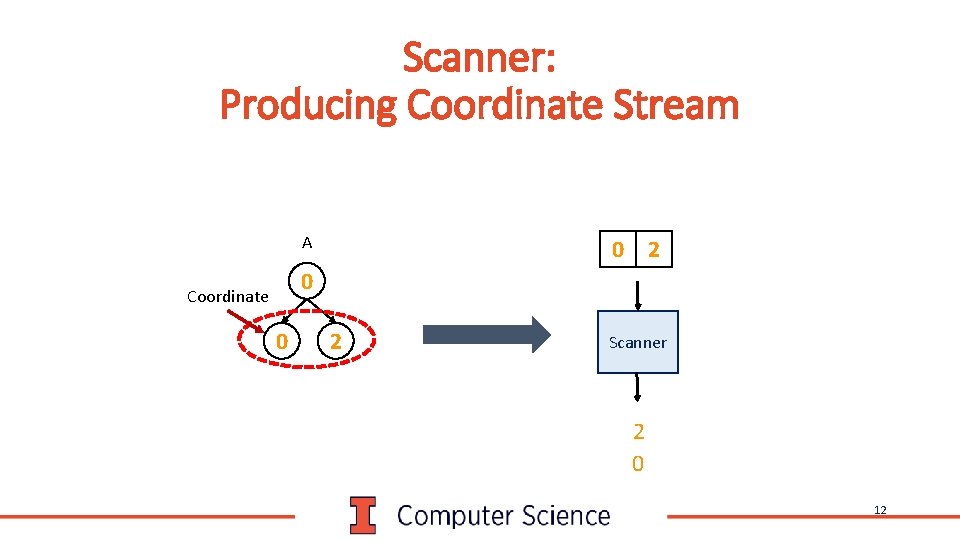

Scanner: Producing Coordinate Stream

[Figure: a scanner reads tensor A and emits a stream of coordinates (0, 2)]
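
As a rough software analogue, a scanner can be modeled as a generator over coordinates. The sketch assumes the scanner emits the coordinates of nonzero entries in increasing order and, for simplicity, reads a dense list; a real scanner would more plausibly walk compressed metadata, which the slide does not detail.

```python
def scan(values):
    """Yield the coordinates of the nonzero entries of a 1D fiber, in order."""
    for coord, v in enumerate(values):
        if v != 0:
            yield coord


print(list(scan([7, 0, 3])))   # coordinates 0 and 2 hold nonzeros
```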

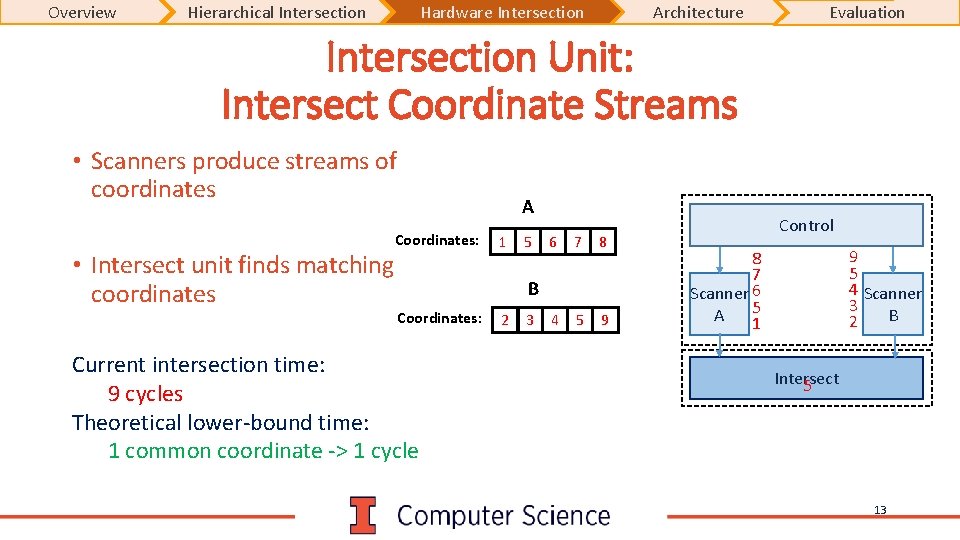

Intersection Unit: Intersect Coordinate Streams
• Scanners produce streams of coordinates
• The intersect unit finds matching coordinates

A coordinates: 1 5 6 7 8
B coordinates: 2 3 4 5 9

Current intersection time: 9 cycles
Theoretical lower-bound time: 1 common coordinate -> 1 cycle

[Figure: scanners for A and B feed the intersect unit under a shared control block; output coordinate: 5]
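
The baseline behavior can be sketched as a two-pointer merge. The cost model here is an assumption: one step per coordinate consumed (both heads on a match), counted until both streams are drained. Under that assumption the slide's example takes 9 steps, in line with the 9 cycles quoted above.

```python
def merge_intersect(a, b):
    """Intersect two sorted coordinate streams, consuming one head per step."""
    i = j = steps = 0
    matches = []
    while i < len(a) or j < len(b):
        steps += 1
        if j == len(b) or (i < len(a) and a[i] < b[j]):
            i += 1                    # A's head is smaller (or B is drained)
        elif i == len(a) or b[j] < a[i]:
            j += 1                    # B's head is smaller (or A is drained)
        else:
            matches.append(a[i])      # heads match: emit and advance both
            i += 1
            j += 1
    return matches, steps


A = [1, 5, 6, 7, 8]
B = [2, 3, 4, 5, 9]
print(merge_intersect(A, B))
```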

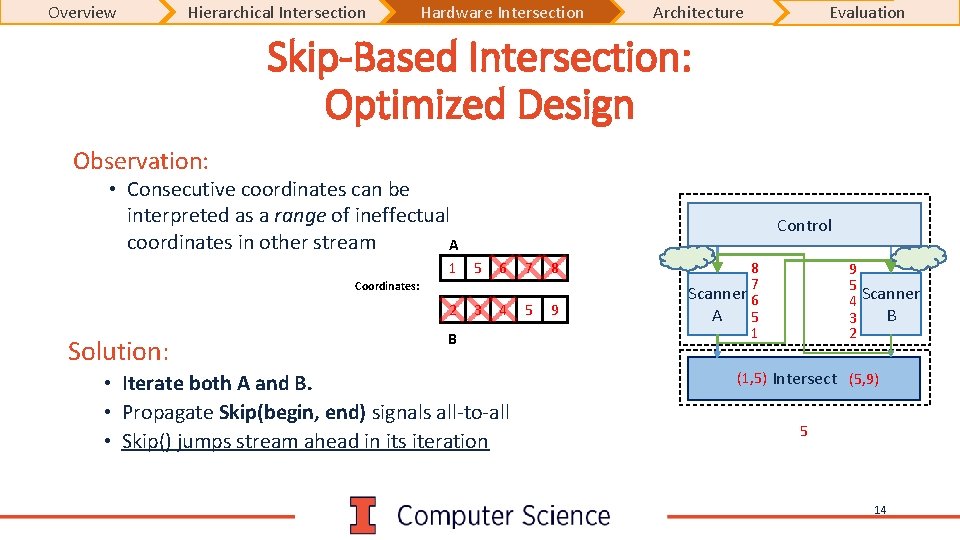

Skip-Based Intersection: Optimized Design

Observation:
• Consecutive coordinates in one stream can be interpreted as a range of ineffectual coordinates in the other stream

Solution:
• Iterate both A and B
• Propagate Skip(begin, end) signals all-to-all
• Skip() jumps a stream ahead in its iteration

A coordinates: 1 5 6 7 8
B coordinates: 2 3 4 5 9

[Figure: scanners for A and B exchange skip ranges (1, 5) and (5, 9) through the intersect unit; output coordinate: 5]
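
A software analogue of skipping is sketched below. It is not the hardware's Skip(begin, end) protocol: it approximates the effect by letting the lagging stream jump, via binary search, directly to the first coordinate not smaller than the other stream's head. The step counter is an illustrative metric, not a cycle count.

```python
from bisect import bisect_left


def skip_intersect(a, b):
    """Intersect two sorted coordinate lists, jumping over ineffectual ranges."""
    i = j = steps = 0
    matches = []
    while i < len(a) and j < len(b):
        steps += 1
        if a[i] == b[j]:
            matches.append(a[i])
            i += 1
            j += 1
        elif a[i] < b[j]:
            # Nothing in A below b[j] can match: skip A ahead.
            i = bisect_left(a, b[j], i)
        else:
            # Nothing in B below a[i] can match: skip B ahead.
            j = bisect_left(b, a[i], j)
    return matches, steps


A = [1, 5, 6, 7, 8]
B = [2, 3, 4, 5, 9]
print(skip_intersect(A, B))
```

On the slide's example this visits far fewer coordinates than a plain merge, because the runs 2, 3, 4 in B and 6, 7, 8 in A are each jumped over in one step.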

ExTensor: Accelerator based on Hierarchical Intersection

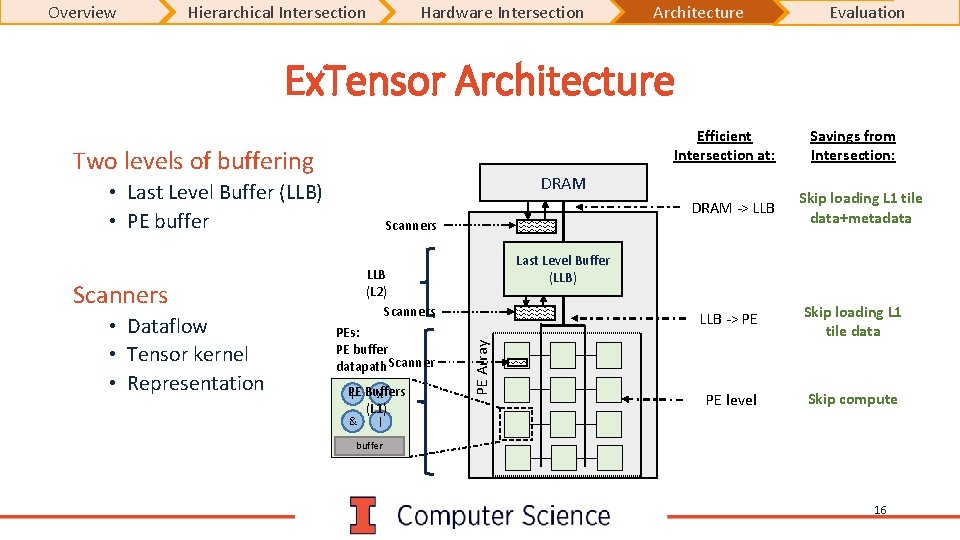

ExTensor Architecture

Two levels of buffering:
• Last Level Buffer (LLB)
• PE buffer

Efficient intersection at:
• DRAM -> LLB
• LLB -> PE
• PE level

Savings from intersection:
• Skip loading L1 tile data + metadata
• Skip loading L1 tile data
• Skip compute

[Figure: DRAM, the Last Level Buffer (LLB, L2) with scanners, and the PE array; each PE has a scanner, a PE buffer (L1), and a multiply-add (x, +) datapath. Labels: Dataflow, Tensor kernel, Representation]
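
The hierarchical idea can be sketched in software with two nested intersections. The layout is an assumption made for illustration: each sparse vector is a hypothetical `{tile_coord: {element_coord: value}}` dictionary. The outer intersection decides which tiles are loaded at all; the inner one decides which multiplies are performed.

```python
def hierarchical_dot(a, b):
    """Two-level sparse inner product over {tile: {coord: value}} operands."""
    total = 0.0
    tiles_loaded = 0
    multiplies = 0
    # Outer level: only tiles that are non-empty in both operands are loaded.
    for t in sorted(a.keys() & b.keys()):
        tiles_loaded += 1
        # Inner level: only coordinates present in both tiles are multiplied.
        for c in a[t].keys() & b[t].keys():
            total += a[t][c] * b[t][c]
            multiplies += 1
    return total, tiles_loaded, multiplies


a = {0: {1: 2.0, 3: 1.0}, 2: {0: 5.0}}
b = {0: {3: 4.0}, 1: {2: 9.0}}
print(hierarchical_dot(a, b))
```

Tiles 1 and 2 are rejected at the outer level, so their contents are never examined; within tile 0, only coordinate 3 reaches the multiplier.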

Evaluation

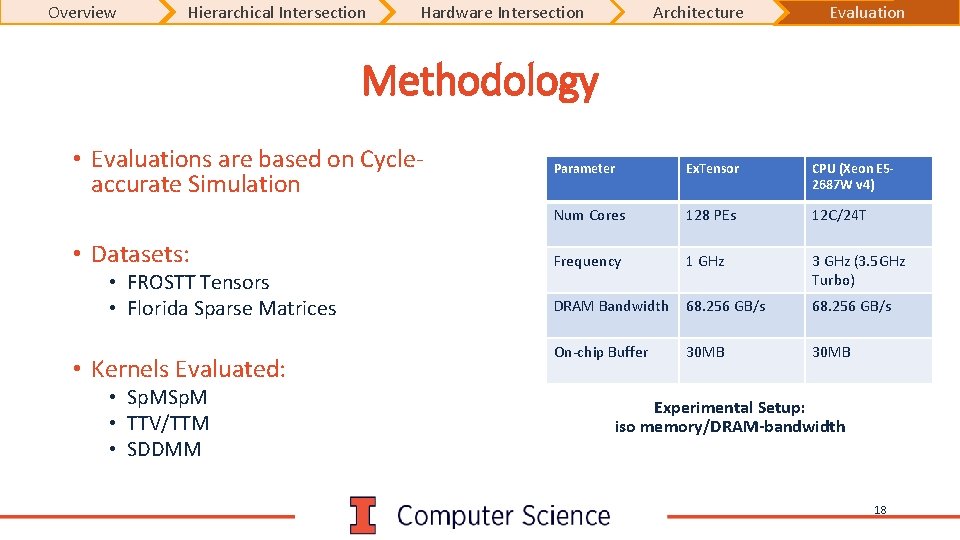

Methodology
• Evaluations are based on cycle-accurate simulation
• Datasets:
  • FROSTT tensors
  • Florida sparse matrices
• Kernels evaluated:
  • SpM
  • TTV/TTM
  • SDDMM

Experimental setup: iso-memory and iso-DRAM-bandwidth

| Parameter | ExTensor | CPU (Xeon E5-2687W v4) |
| --- | --- | --- |
| Num cores | 128 PEs | 12C/24T |
| Frequency | 1 GHz | 3 GHz (3.5 GHz Turbo) |
| DRAM bandwidth | 68.256 GB/s | 68.256 GB/s |
| On-chip buffer | 30 MB | 30 MB |

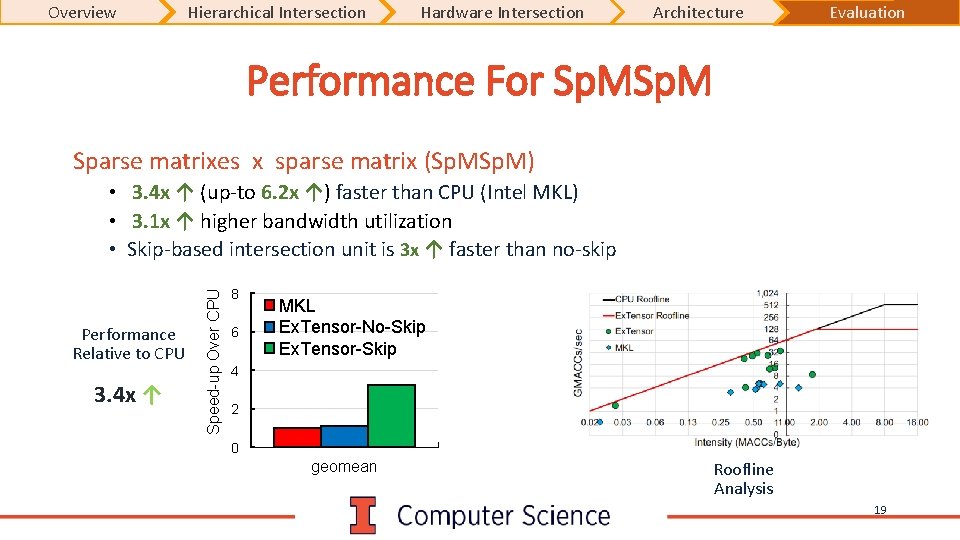

Performance for SpM
Sparse matrix x sparse matrix (SpM)
• 3.4x (up to 6.2x) faster than CPU (Intel MKL)
• 3.1x higher bandwidth utilization
• Skip-based intersection unit is 3x faster than no-skip

[Figure: speed-up over CPU (performance relative to CPU) for MKL, ExTensor-No-Skip, and ExTensor-Skip, with a geomean of 3.4x; roofline analysis]

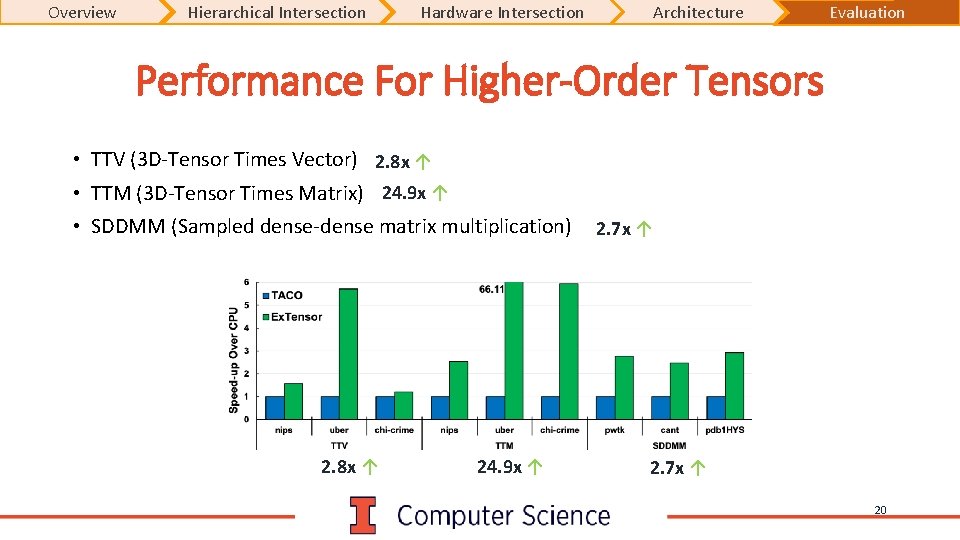

Performance for Higher-Order Tensors
• TTV (3D tensor times vector): 2.8x
• TTM (3D tensor times matrix): 24.9x
• SDDMM (sampled dense-dense matrix multiplication): 2.7x

Conclusion
• Sparse tensor algebra is growing in importance across domains
  • Variety of kernels featuring a range of sparsity
• ExTensor is:
  • A programmable accelerator for sparse tensor algebra
  • Based on hierarchical intersection to eliminate ineffectual work
• Demonstrated significant speed-up over state-of-the-art approaches
  • SpM: 3.4x over CPU

Questions

Backup Slides

- Slides: 23