Sparse Partitions Presented by Meiduo Wu Sparse Partitions

Overview
• Introduce some algorithms for coarsening a given partition S of a given unweighted graph G.
• The general picture is similar to that of sparse covers introduced in the previous chapter, but the problem is harder and optimal bounds on the radius-sparsity trade-off can't always be achieved.
2/11/2022 Sparse Partitions

Low average-degree partitions
• Two related algorithms:
(1) The average cluster-degree partition algorithm Av_PARTc
(2) The average vertex-degree partition algorithm Av_PARTv
• Both algorithms are obtained by modifying algorithm Av_COVER (Chapter 12).

The average cluster-degree partition algorithm Av_PARTc
• Algorithm Av_PARTc coarsens a given partition of an unweighted graph into a partition with low average cluster-degree.
– The clusters of S don't overlap.
– Sparsity is measured by the number of neighboring clusters.
– Average cluster-degree measure (Section 11.4.2): $\bar{\Delta}_c(\mathcal{S}) = \frac{1}{n} \cdot |\Gamma_c(\mathcal{S})|$

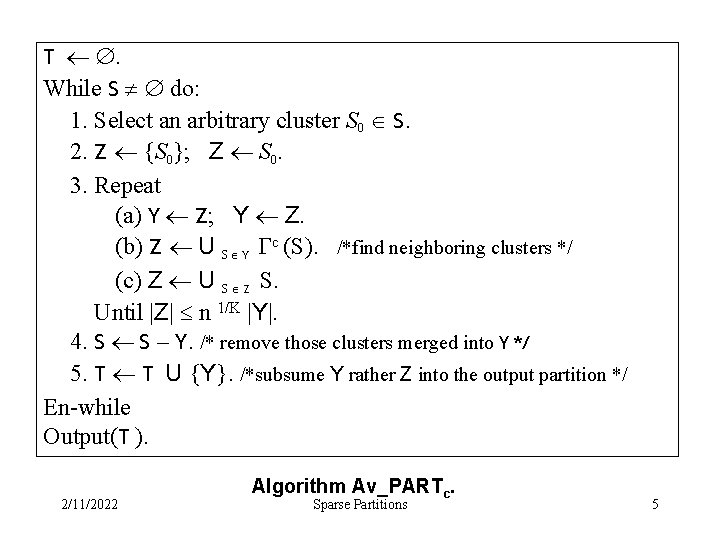

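Algorithm Av_PARTc
$\mathcal{T} \leftarrow \emptyset$.
While $\mathcal{S} \neq \emptyset$ do:
1. Select an arbitrary cluster $S_0 \in \mathcal{S}$.
2. $\mathcal{Z} \leftarrow \{S_0\}$; $Z \leftarrow S_0$.
3. Repeat
(a) $\mathcal{Y} \leftarrow \mathcal{Z}$; $Y \leftarrow Z$.
(b) $\mathcal{Z} \leftarrow \bigcup_{S \in \mathcal{Y}} \Gamma_c(S)$. /* find neighboring clusters */
(c) $Z \leftarrow \bigcup_{S \in \mathcal{Z}} S$.
Until $|Z| \le n^{1/k} |Y|$.
4. $\mathcal{S} \leftarrow \mathcal{S} - \mathcal{Y}$. /* remove those clusters merged into Y */
5. $\mathcal{T} \leftarrow \mathcal{T} \cup \{Y\}$. /* add Y rather than Z to the output partition */
End-while
Output($\mathcal{T}$).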
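The loop of Av_PARTc can be sketched in Python as follows. This is a minimal sketch under stated assumptions, not the slides' own code: the graph is an adjacency-set dictionary, the function name `av_part_c` is hypothetical, the cluster-neighborhood of a cluster is taken to include the cluster itself, and the stopping rule compares vertex counts of Z and Y (rewritten with integer powers to avoid floating-point roots).

```python
from typing import Dict, FrozenSet, List, Set

Graph = Dict[int, Set[int]]  # adjacency sets of an undirected, unweighted graph


def av_part_c(graph: Graph, partition: List[FrozenSet[int]], k: int) -> List[FrozenSet[int]]:
    """Coarsen `partition` by growing merged clusters layer by layer (sketch)."""
    n = len(graph)
    owner = {v: c for c in partition for v in c}  # vertex -> its input cluster
    remaining = set(partition)                    # the shrinking collection S
    output: List[FrozenSet[int]] = []
    while remaining:
        seed = next(iter(remaining))              # step 1: arbitrary cluster S0
        z_coll, z_set = {seed}, set(seed)         # step 2
        while True:
            y_coll, y_set = z_coll, z_set         # step 3(a)
            # step 3(b): clusters of Y plus their neighboring clusters still in S
            z_coll = set(y_coll)
            for v in y_set:
                for u in graph[v]:
                    if owner[u] in remaining:
                        z_coll.add(owner[u])
            z_set = set().union(*z_coll)          # step 3(c)
            # stop when |Z| <= n^(1/k) * |Y|, i.e. |Z|^k <= n * |Y|^k
            if len(z_set) ** k <= n * len(y_set) ** k:
                break
        remaining -= y_coll                       # step 4
        output.append(frozenset(y_set))           # step 5: keep Y, not Z
    return output


# Usage: a 6-vertex path with singleton input clusters.
path = {0: {1}, 1: {0, 2}, 2: {1, 3}, 3: {2, 4}, 4: {3, 5}, 5: {4}}
singles = [frozenset({i}) for i in range(6)]
coarse = av_part_c(path, singles, k=2)
```

Because the seed is picked arbitrarily, different runs may return different coarsenings; only the partition and coarsening properties are fixed.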

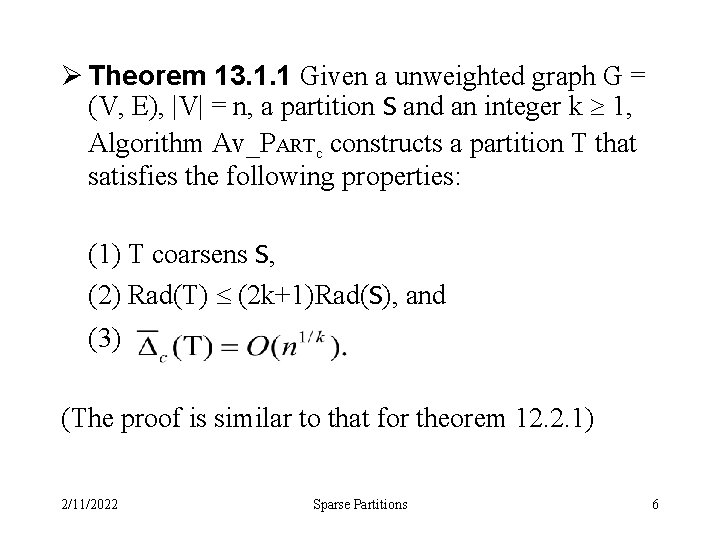

Theorem 13.1.1 Given an unweighted graph G = (V, E), |V| = n, a partition S and an integer k ≥ 1, Algorithm Av_PARTc constructs a partition T that satisfies the following properties:
(1) T coarsens S,
(2) $\mathrm{Rad}(\mathcal{T}) \le (2k+1)\,\mathrm{Rad}(\mathcal{S})$, and
(3) [average cluster-degree bound; formula missing in source]
(The proof is similar to that of Theorem 12.2.1.)

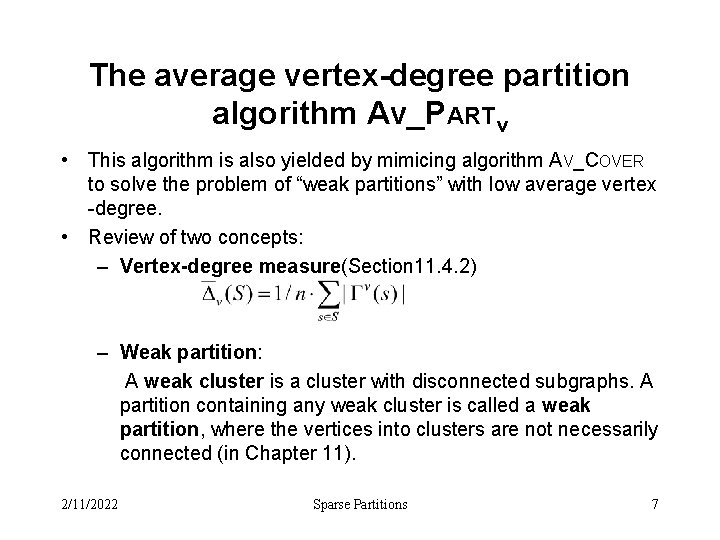

The average vertex-degree partition algorithm Av_PARTv
• This algorithm is also obtained by mimicking algorithm AV_COVER, and it solves the problem of "weak partitions" with low average vertex-degree.
• Review of two concepts:
– Vertex-degree measure (Section 11.4.2)
– Weak partition: a weak cluster is a cluster whose induced subgraph may be disconnected. A partition containing any weak cluster is called a weak partition; the vertices grouped into a cluster are not necessarily connected (Chapter 11).

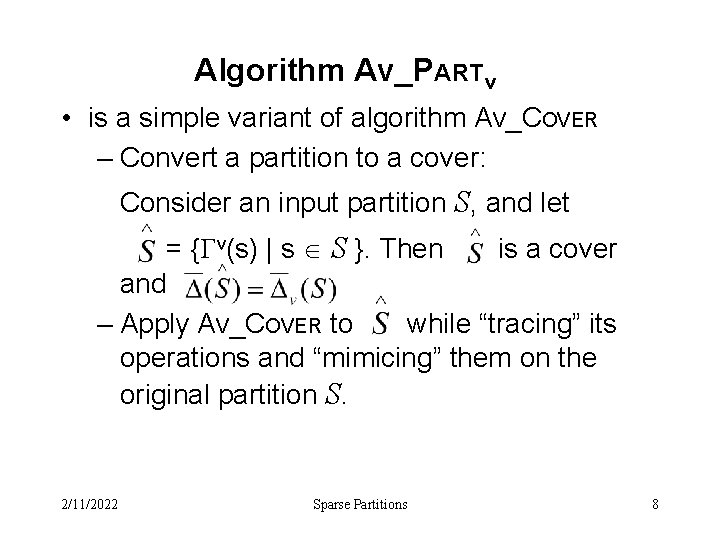

Algorithm Av_PARTv
• A simple variant of algorithm AV_COVER:
– Convert the partition to a cover: given an input partition S, form the collection of vertex-neighborhoods $\{\Gamma_v(S) \mid S \in \mathcal{S}\}$. This collection is a cover [accompanying relation missing in source].
– Apply AV_COVER to this cover while "tracing" its operations and "mimicking" them on the original partition S.
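The partition-to-cover conversion can be illustrated with a short Python sketch. The function names are hypothetical, and the vertex-neighborhood of a cluster is assumed here to contain the cluster itself together with every vertex adjacent to it.

```python
from typing import Dict, FrozenSet, List, Set

Graph = Dict[int, Set[int]]  # adjacency sets of an undirected, unweighted graph


def vertex_neighborhood(graph: Graph, cluster: FrozenSet[int]) -> FrozenSet[int]:
    """The cluster plus all vertices adjacent to it (closed neighborhood, an assumption)."""
    out = set(cluster)
    for v in cluster:
        out |= graph[v]
    return frozenset(out)


def neighborhood_cover(graph: Graph, partition: List[FrozenSet[int]]) -> List[FrozenSet[int]]:
    """One neighborhood per input cluster; the sets may overlap, so this is a cover."""
    return [vertex_neighborhood(graph, c) for c in partition]


# Usage: the path 0-1-2-3 split into two clusters.
path = {0: {1}, 1: {0, 2}, 2: {1, 3}, 3: {2}}
cover = neighborhood_cover(path, [frozenset({0, 1}), frozenset({2, 3})])
```

In this example the two neighborhoods share vertices 1 and 2, which is exactly why the result is a cover rather than a partition.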

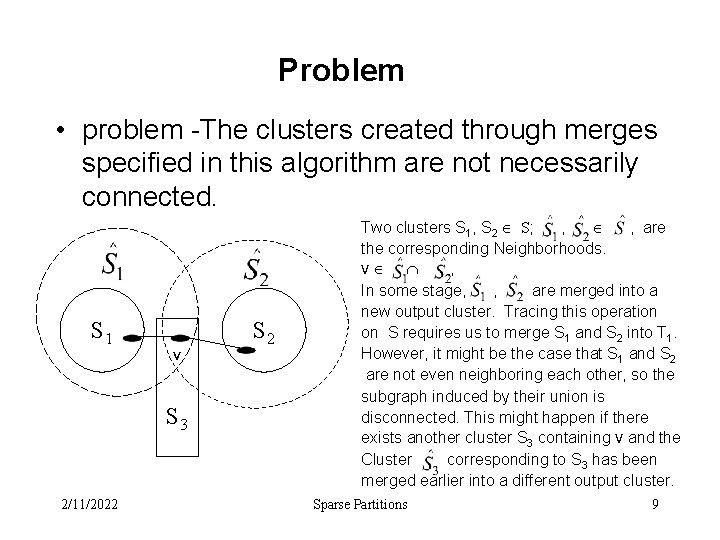

Problem
• The clusters created through the merges specified in this algorithm are not necessarily connected.
[Figure: clusters S1, S2, S3 and a vertex v]
• Consider two clusters $S_1, S_2 \in \mathcal{S}$ whose corresponding neighborhoods $\Gamma_v(S_1)$ and $\Gamma_v(S_2)$ both contain the vertex v.
• At some stage, $\Gamma_v(S_1)$ and $\Gamma_v(S_2)$ are merged into a new output cluster. Tracing this operation on S requires us to merge S1 and S2 into T1.
• However, S1 and S2 might not even be neighbors of each other, in which case the subgraph induced by their union is disconnected.
• This can happen if there exists another cluster S3 containing v, and the cluster corresponding to S3 has been merged earlier into a different output cluster.
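A three-vertex example makes the connectivity problem concrete. The sketch below is illustrative only: the path graph, the cluster names and the helper `is_connected` are assumptions chosen for the example, and neighborhoods are taken to include the cluster itself.

```python
from typing import Dict, Iterable, Set

Graph = Dict[int, Set[int]]


def is_connected(graph: Graph, vertices: Iterable[int]) -> bool:
    """True if the subgraph induced by `vertices` is connected (DFS restricted to the set)."""
    vertices = set(vertices)
    if not vertices:
        return True
    start = next(iter(vertices))
    seen, stack = {start}, [start]
    while stack:
        v = stack.pop()
        for u in graph[v]:
            if u in vertices and u not in seen:
                seen.add(u)
                stack.append(u)
    return seen == vertices


# Path 0 - 1 - 2 with S1 = {0}, S3 = {1}, S2 = {2}; vertex v = 1 lies in S3.
path = {0: {1}, 1: {0, 2}, 2: {1}}
s1, s2, s3 = {0}, {2}, {1}
gamma1 = s1 | path[0]   # neighborhood of S1
gamma2 = s2 | path[2]   # neighborhood of S2

overlap = gamma1 & gamma2                     # the neighborhoods meet at v
merged_ok = is_connected(path, s1 | s2)       # but S1 and S2 alone are not adjacent
```

The neighborhoods intersect at v, so a cover algorithm may merge them, yet the traced merge of S1 and S2 yields a disconnected vertex set once S3 is assigned elsewhere.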

AV_PARTv (cont.)
• Due to the problem described, this algorithm solves a weaker problem in which the output clusters are allowed to be disconnected and cluster radii are measured in the weak sense.
• We refer to this problem as the problem of "weak partitions" with low average vertex-degree.

Theorem established by mimicking the proof of the average cover theorem (Theorem 12.2.1)
Theorem 13.1.2 Given an unweighted graph G = (V, E), |V| = n, a partition S and an integer k ≥ 1, Algorithm Av_PARTv constructs a weak partition T that satisfies the following properties:
(1) T coarsens S,
(2) $\mathrm{WRad}(\mathcal{T}) \le (2k+1)\,\mathrm{Rad}(\mathcal{S})$, and
(3) [average vertex-degree bound; formula missing in source]

Low maximum-degree partitions
Here we discuss algorithms for constructing sparse coarsening partitions according to the maximum vertex-degree and cluster-degree measures.
– Start with a partition S.
– Proceed in phases, where each phase attempts to handle many clusters in parallel.
– In each phase, the clusters of the current partition are merged to form larger clusters, thus creating a new partition.
– This is repeated until a satisfactory partition is reached.

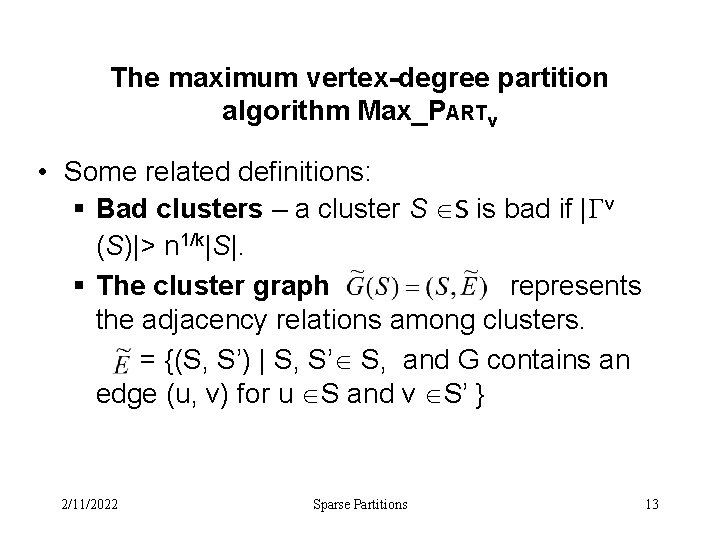

The maximum vertex-degree partition algorithm Max_PARTv
• Some related definitions:
– Bad clusters: a cluster $S \in \mathcal{S}$ is bad if $|\Gamma_v(S)| > n^{1/k}|S|$.
– The cluster graph represents the adjacency relations among clusters. Its vertices are the clusters of S and its edge set is $\{(S, S') \mid S, S' \in \mathcal{S},\ \text{and } G \text{ contains an edge } (u, v) \text{ for } u \in S \text{ and } v \in S'\}$.
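Both definitions translate directly into code. The following Python sketch uses hypothetical function names, identifies clusters by their index in the input list, and assumes that the vertex-neighborhood of a cluster includes the cluster itself; the badness test is written with integer powers so that no floating-point root is needed.

```python
from typing import Dict, FrozenSet, List, Set

Graph = Dict[int, Set[int]]  # adjacency sets of an undirected, unweighted graph


def cluster_graph(graph: Graph, partition: List[FrozenSet[int]]) -> Dict[int, Set[int]]:
    """Adjacency between clusters (by index): two clusters are adjacent if G has an edge between them."""
    owner = {v: i for i, c in enumerate(partition) for v in c}
    adj: Dict[int, Set[int]] = {i: set() for i in range(len(partition))}
    for v, nbrs in graph.items():
        for u in nbrs:
            if owner[u] != owner[v]:
                adj[owner[v]].add(owner[u])
    return adj


def bad_clusters(graph: Graph, partition: List[FrozenSet[int]], k: int) -> List[int]:
    """Indices of clusters S with |Gamma_v(S)| > n^(1/k) * |S|, tested as |Gamma_v(S)|^k > n * |S|^k."""
    n = len(graph)
    bad = []
    for i, c in enumerate(partition):
        gamma = set(c).union(*(graph[v] for v in c))  # closed neighborhood (assumption)
        if len(gamma) ** k > n * len(c) ** k:
            bad.append(i)
    return bad


# Usage: a star with center 0 and eight leaves, singleton clusters, k = 2.
star = {0: set(range(1, 9)), **{i: {0} for i in range(1, 9)}}
singles = [frozenset({i}) for i in range(9)]
bad = bad_clusters(star, singles, k=2)
adjacency = cluster_graph(star, singles)
```

Here only the center cluster is bad: its neighborhood has 9 vertices against a threshold of 3, while each leaf has a neighborhood of size 2.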

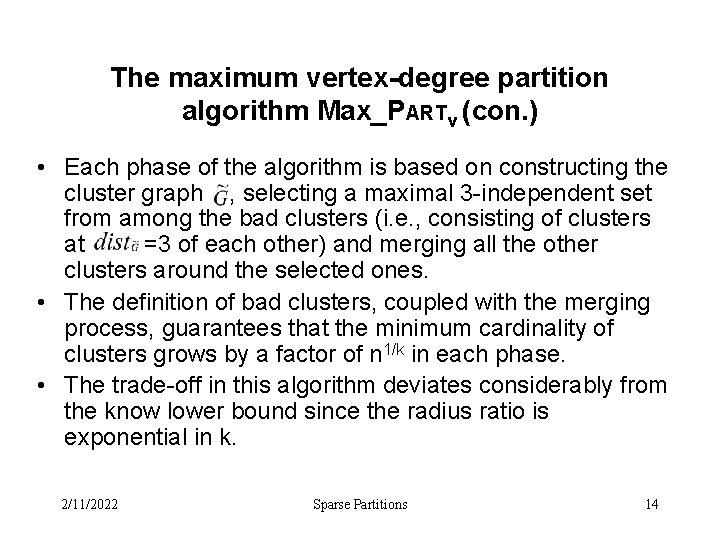

The maximum vertex-degree partition algorithm Max_PARTv (cont.)
• Each phase of the algorithm is based on constructing the cluster graph, selecting a maximal 3-independent set from among the bad clusters (i.e., a set of clusters at distance at least 3 from each other), and merging all the other clusters around the selected ones.
• The definition of bad clusters, coupled with the merging process, guarantees that the minimum cardinality of clusters grows by a factor of $n^{1/k}$ in each phase.
• The trade-off in this algorithm deviates considerably from the known lower bound, since the radius ratio is exponential in k.

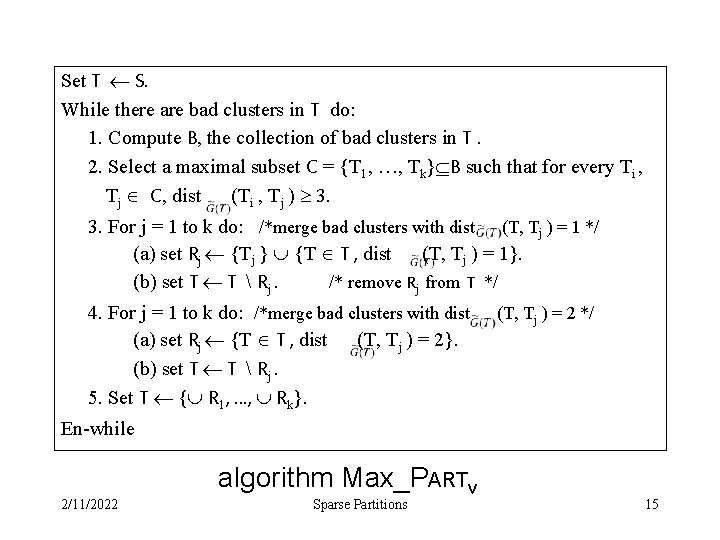

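Algorithm Max_PARTv
Set $\mathcal{T} \leftarrow \mathcal{S}$.
While there are bad clusters in $\mathcal{T}$ do:
1. Compute $\mathcal{B}$, the collection of bad clusters in $\mathcal{T}$.
2. Select a maximal subset $\mathcal{C} = \{T_1, \ldots, T_k\} \subseteq \mathcal{B}$ such that for every $T_i, T_j \in \mathcal{C}$, $\mathrm{dist}(T_i, T_j) \ge 3$.
3. For j = 1 to k do: /* merge clusters with dist(T, T_j) = 1 */
(a) set $R_j \leftarrow \{T_j\} \cup \{T \in \mathcal{T} \mid \mathrm{dist}(T, T_j) = 1\}$.
(b) set $\mathcal{T} \leftarrow \mathcal{T} - R_j$. /* remove R_j from T */
4. For j = 1 to k do: /* merge clusters with dist(T, T_j) = 2 */
(a) set $R_j \leftarrow R_j \cup \{T \in \mathcal{T} \mid \mathrm{dist}(T, T_j) = 2\}$.
(b) set $\mathcal{T} \leftarrow \mathcal{T} - R_j$.
5. Set $\mathcal{T} \leftarrow \mathcal{T} \cup \{R_1, \ldots, R_k\}$, where each collection $R_j$ is merged into a single cluster.
End-while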

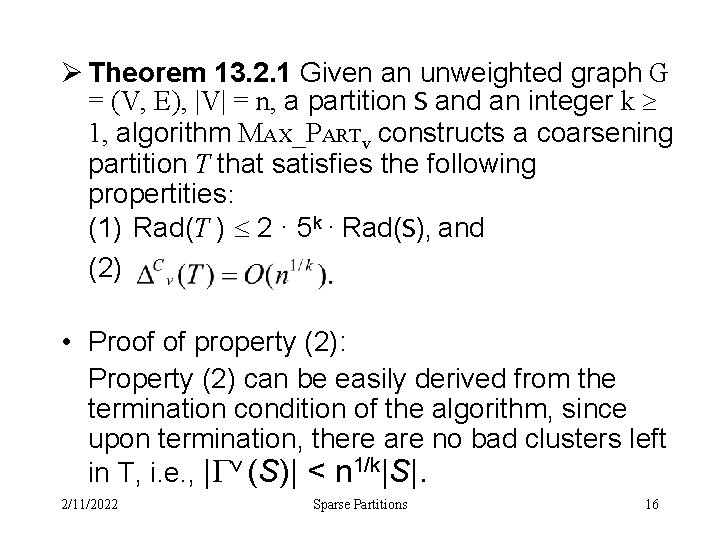

Theorem 13.2.1 Given an unweighted graph G = (V, E), |V| = n, a partition S and an integer k ≥ 1, algorithm MAX_PARTv constructs a coarsening partition T that satisfies the following properties:
(1) $\mathrm{Rad}(\mathcal{T}) \le 2 \cdot 5^{k} \cdot \mathrm{Rad}(\mathcal{S})$, and
(2) [maximum vertex-degree bound; formula missing in source]
• Proof of property (2): Property (2) follows directly from the termination condition of the algorithm: upon termination there are no bad clusters left in T, i.e., every cluster S of T satisfies $|\Gamma_v(S)| \le n^{1/k}|S|$.

• Proof of property (1): Denote the collection T created by the ith iteration of the main loop by $\mathcal{T}_i$. The input partition S is denoted $\mathcal{T}_0$. Let $\mathcal{B}_i$ denote the subcollection of bad clusters computed from $\mathcal{T}_i$ in step 1.
– Lemma 13.2.2 For every i, the resulting collection $\mathcal{T}_i$ is a partition of G.
Proof by induction on i: the case i = 0 is immediate, so we assume that $\mathcal{T}_{i-1}$ is a partition and consider the ith iteration. Every set $R_j$ constructed by the algorithm is a cluster, and together the $R_j$ form a partial partition. Since $\mathcal{T}_{i-1}$ is a partition and every cluster in it was merged into $\mathcal{T}_i$, the clusters in $\mathcal{T}_i$ contain all vertices of V.

– Lemma 13.2.3 For every i ≥ 0, $|\mathcal{B}_i| \le n^{1 - i/k}$ and $\mathrm{Rad}(\mathcal{T}_i) \le 5^{i} \cdot \mathrm{Rad}(\mathcal{S}) + (5^{i} - 1)/2$.
Proof by induction on i: the claims are immediate for i = 0. Assuming the claims for i-1, it remains to prove that
$|\mathcal{B}_i| \le n^{-1/k}\,|\mathcal{B}_{i-1}|$ (13.1)
and
$\mathrm{Rad}(\mathcal{T}_i) \le 5 \cdot \mathrm{Rad}(\mathcal{T}_{i-1}) + 2$. (13.2)
• Proof of (13.1): every cluster of $\mathcal{B}_{i-1}$ is merged in the ith iteration, every collection $R_j$ consists of at least $n^{1/k}$ bad clusters from $\mathcal{B}_{i-1}$, and a good cluster remains good.
• Proof of (13.2): each collection $R_j$ is concentrated around a single cluster $T_j$, in the sense that every old cluster from $\mathcal{T}_{i-1}$ merged into $R_j$ neighbors either $T_j$ or some other cluster neighboring $T_j$.
• The two inequalities imply the claims of the lemma for i.

Completing the proof of Property (1)
– Lemma 13.2.3 For every i ≥ 0, $|\mathcal{B}_i| \le n^{1 - i/k}$ and $\mathrm{Rad}(\mathcal{T}_i) \le 5^{i} \cdot \mathrm{Rad}(\mathcal{S}) + (5^{i} - 1)/2$.
• The first claim of the lemma implies that the main loop is performed for at most k iterations.
• The second claim implies that $\mathrm{Rad}(\mathcal{T}_k) \le 5^{k} \cdot (\mathrm{Rad}(\mathcal{S}) + \tfrac{1}{2}) \le 2 \cdot 5^{k} \cdot \mathrm{Rad}(\mathcal{S})$. This establishes Property (1).
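The inductive step for the radius bound can be written out explicitly. The derivation below is a worked sketch that uses only inequality (13.2) and the induction hypothesis; the last line additionally assumes $\mathrm{Rad}(\mathcal{S}) \ge 1/2$ (in particular, any input partition with integer radius at least 1).

```latex
\begin{align*}
\mathrm{Rad}(\mathcal{T}_i)
  &\le 5\,\mathrm{Rad}(\mathcal{T}_{i-1}) + 2 \\
  &\le 5\Bigl(5^{\,i-1}\,\mathrm{Rad}(\mathcal{S}) + \tfrac{5^{\,i-1}-1}{2}\Bigr) + 2 \\
  &= 5^{\,i}\,\mathrm{Rad}(\mathcal{S}) + \tfrac{5^{\,i}-5}{2} + 2
   = 5^{\,i}\,\mathrm{Rad}(\mathcal{S}) + \tfrac{5^{\,i}-1}{2}, \\[4pt]
\mathrm{Rad}(\mathcal{T}_k)
  &\le 5^{\,k}\,\mathrm{Rad}(\mathcal{S}) + \tfrac{5^{\,k}-1}{2}
   \le 5^{\,k}\bigl(\mathrm{Rad}(\mathcal{S}) + \tfrac12\bigr)
   \le 2\cdot 5^{\,k}\,\mathrm{Rad}(\mathcal{S}).
\end{align*}
```

The final inequality is equivalent to $\tfrac12 \le \mathrm{Rad}(\mathcal{S})$, which is where the assumption on the input radius enters.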

The maximum cluster-degree partition algorithm MAX_PARTc
• Proceeds in phases, each merging the clusters of the current partition into larger clusters, thus creating a new partition.
• The main change compared to MAX_PARTv is in the definition of badness, which now relies on cluster-neighborhoods rather than vertex-neighborhoods. That is, a cluster $S \in \mathcal{S}$ is bad if $|\Gamma_c(S)| > n^{1/k}|S|$. We use the same definitions for the auxiliary cluster graph and its distance.
• The polynomial radius ratio guaranteed by this algorithm stems from its stricter criterion for cluster "badness", which guarantees that the cardinality of clusters at least squares in each phase; hence the number of phases is at most log k.

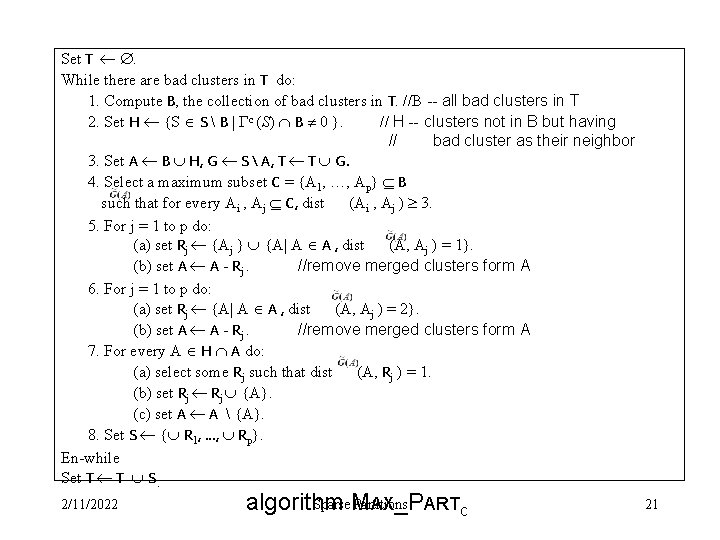

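Algorithm MAX_PARTc
Set $\mathcal{T} \leftarrow \emptyset$.
While there are bad clusters in $\mathcal{S}$ do:
1. Compute $\mathcal{B}$, the collection of bad clusters in $\mathcal{S}$. // B: all bad clusters
2. Set $\mathcal{H} \leftarrow \{S \in \mathcal{S} - \mathcal{B} \mid \Gamma_c(S) \cap \mathcal{B} \neq \emptyset\}$. // H: clusters not in B that have a bad cluster as a neighbor
3. Set $\mathcal{A} \leftarrow \mathcal{B} \cup \mathcal{H}$, $\mathcal{G} \leftarrow \mathcal{S} - \mathcal{A}$, $\mathcal{T} \leftarrow \mathcal{T} \cup \mathcal{G}$.
4. Select a maximal subset $\mathcal{C} = \{A_1, \ldots, A_p\} \subseteq \mathcal{B}$ such that for every $A_i, A_j \in \mathcal{C}$, $\mathrm{dist}(A_i, A_j) \ge 3$.
5. For j = 1 to p do:
(a) set $R_j \leftarrow \{A_j\} \cup \{A \mid A \in \mathcal{A},\ \mathrm{dist}(A, A_j) = 1\}$.
(b) set $\mathcal{A} \leftarrow \mathcal{A} - R_j$. // remove merged clusters from A
6. For j = 1 to p do:
(a) set $R_j \leftarrow R_j \cup \{A \mid A \in \mathcal{A},\ \mathrm{dist}(A, A_j) = 2\}$.
(b) set $\mathcal{A} \leftarrow \mathcal{A} - R_j$. // remove merged clusters from A
7. For every $A \in \mathcal{H} \cap \mathcal{A}$ do:
(a) select some $R_j$ such that $\mathrm{dist}(A, R_j) = 1$.
(b) set $R_j \leftarrow R_j \cup \{A\}$.
(c) set $\mathcal{A} \leftarrow \mathcal{A} - \{A\}$.
8. Set $\mathcal{S} \leftarrow \{R_1, \ldots, R_p\}$.
End-while
Set $\mathcal{T} \leftarrow \mathcal{T} \cup \mathcal{S}$.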
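Steps 2 and 3 of this algorithm split the current collection into clusters that take part in the phase and clusters that are set aside. A minimal Python sketch of that split is given below; the function name `split_by_badness` is hypothetical, clusters are identified by integer labels, and the set of bad clusters is taken as an input so that no particular reading of the badness test is assumed.

```python
from typing import Dict, Iterable, Set, Tuple


def split_by_badness(
    adj: Dict[int, Set[int]], bad: Iterable[int]
) -> Tuple[Set[int], Set[int], Set[int]]:
    """Return (H, A, G) for a cluster graph `adj` and its bad clusters B.

    H: good clusters with at least one bad neighbor
    A: B together with H (the clusters handled in this phase)
    G: all remaining clusters, which are moved to the output as they are
    """
    bad_set = set(bad)
    halo = {s for s in adj if s not in bad_set and adj[s] & bad_set}
    active = bad_set | halo
    settled = set(adj) - active
    return halo, active, settled


# Usage: cluster graph forming a path 0 - 1 - 2 - 3, with cluster 0 bad.
cluster_adj = {0: {1}, 1: {0, 2}, 2: {1, 3}, 3: {2}}
halo, active, settled = split_by_badness(cluster_adj, bad=[0])
```

Setting the clusters of G aside early is what keeps good clusters that are far from any bad cluster out of the merging steps.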

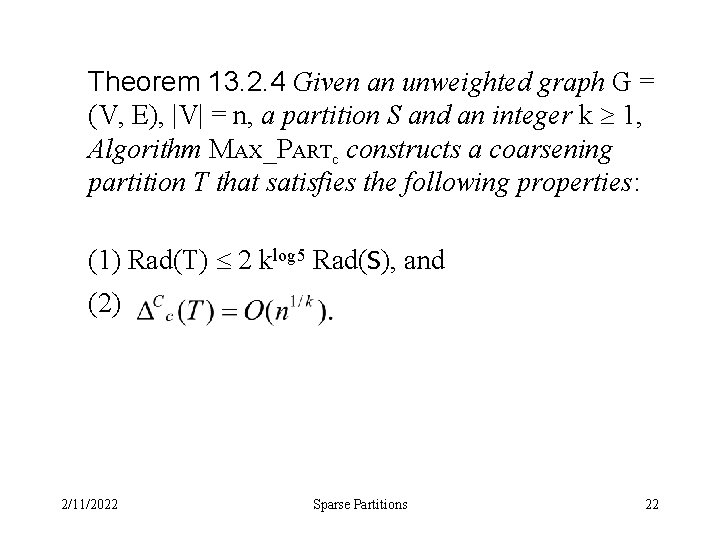

Theorem 13.2.4 Given an unweighted graph G = (V, E), |V| = n, a partition S and an integer k ≥ 1, Algorithm MAX_PARTc constructs a coarsening partition T that satisfies the following properties:
(1) $\mathrm{Rad}(\mathcal{T}) \le 2 k^{\log 5} \cdot \mathrm{Rad}(\mathcal{S})$, and
(2) [maximum cluster-degree bound; formula missing in source]

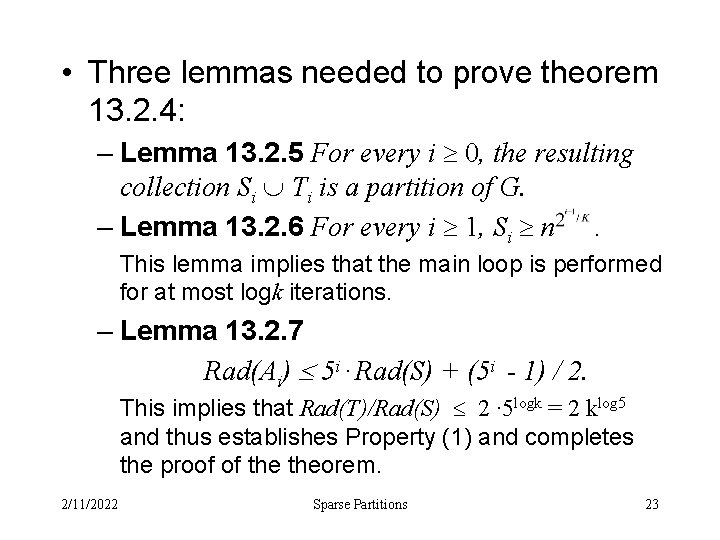

• Three lemmas are needed to prove Theorem 13.2.4:
– Lemma 13.2.5 For every i ≥ 0, the resulting collection $\mathcal{S}_i \cup \mathcal{T}_i$ is a partition of G.
– Lemma 13.2.6 For every i ≥ 1, [bound on $\mathcal{S}_i$ in terms of n; formula missing in source]. This lemma implies that the main loop is performed for at most log k iterations.
– Lemma 13.2.7 $\mathrm{Rad}(\mathcal{A}_i) \le 5^{i} \cdot \mathrm{Rad}(\mathcal{S}) + (5^{i} - 1)/2$. This implies that $\mathrm{Rad}(\mathcal{T})/\mathrm{Rad}(\mathcal{S}) \le 2 \cdot 5^{\log k} = 2 k^{\log 5}$, which establishes Property (1) and completes the proof of the theorem.
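The identity behind the last step, and the gap between the two radius-ratio bounds, can be checked numerically. The Python sketch below uses hypothetical function names and assumes the logarithm is taken base 2; the identity itself holds for any base.

```python
import math


def max_part_v_ratio(k: int) -> float:
    """Radius-ratio bound 2 * 5^k stated for the vertex-degree algorithm."""
    return 2.0 * 5 ** k


def max_part_c_ratio(k: int) -> float:
    """Radius-ratio bound 2 * 5^(log k) = 2 * k^(log 5), with log base 2 (assumption)."""
    return 2.0 * 5 ** math.log2(k)


for k in (2, 4, 8, 16):
    # a^(log b) = b^(log a), so the exponential-looking form is polynomial in k
    assert math.isclose(5 ** math.log2(k), k ** math.log2(5))
    print(k, max_part_v_ratio(k), round(max_part_c_ratio(k), 1))
```

Since log 5 is a constant a little above 2, the cluster-degree bound grows polynomially in k, whereas the vertex-degree bound grows exponentially.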

End of Sparse Partitions
