End-to-End Computing at ORNL

Presented by Scott A. Klasky, Computing and Computational Science Directorate, Center for Computational Sciences

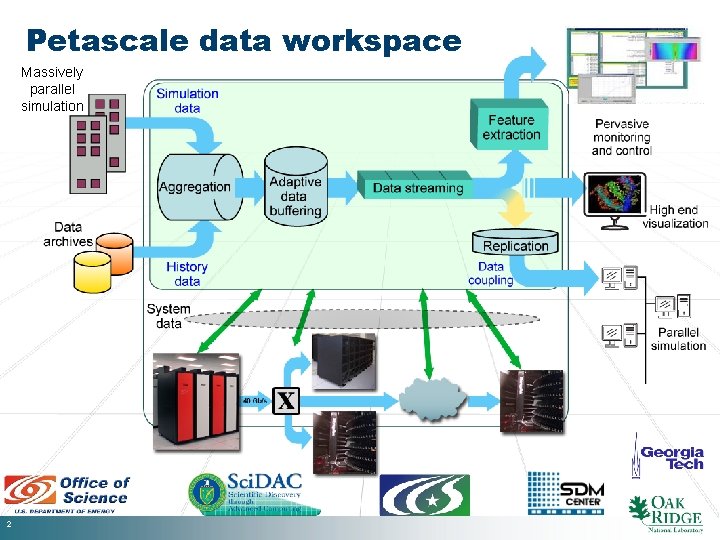

Petascale data workspace; massively parallel simulation

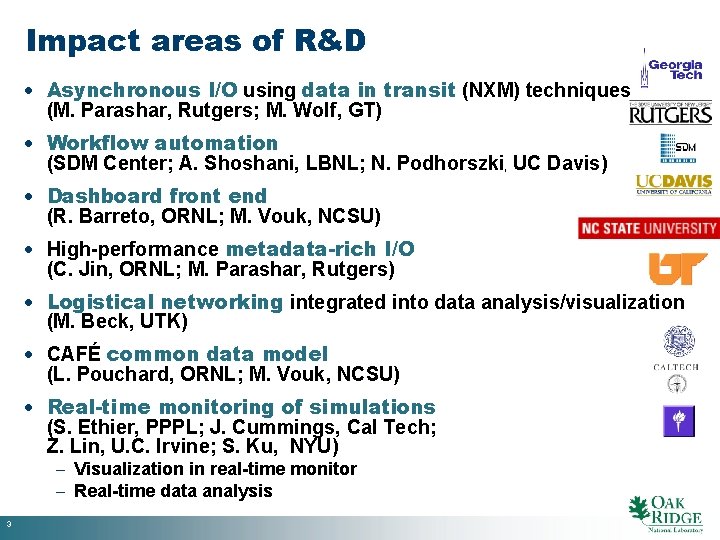

Impact areas of R&D

- Asynchronous I/O using data-in-transit (NxM) techniques (M. Parashar, Rutgers; M. Wolf, GT)
- Workflow automation (SDM Center; A. Shoshani, LBNL; N. Podhorszki, UC Davis)
- Dashboard front end (R. Barreto, ORNL; M. Vouk, NCSU)
- High-performance metadata-rich I/O (C. Jin, ORNL; M. Parashar, Rutgers)
- Logistical networking integrated into data analysis/visualization (M. Beck, UTK)
- CAFÉ common data model (L. Pouchard, ORNL; M. Vouk, NCSU)
- Real-time monitoring of simulations (S. Ethier, PPPL; J. Cummings, Caltech; Z. Lin, UC Irvine; S. Ku, NYU)
  - Visualization in real-time monitor
  - Real-time data analysis

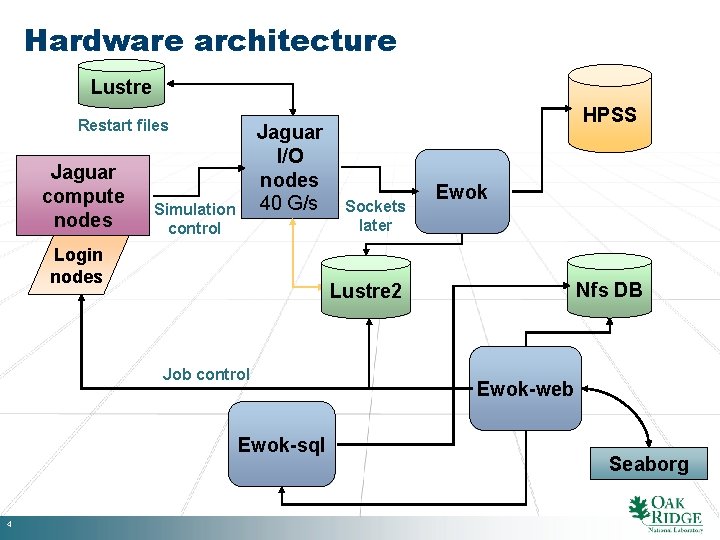

Hardware architecture

Diagram labels: Jaguar compute nodes; Jaguar I/O nodes; Lustre; restart files; 40 G/s; simulation control; login nodes; sockets later; Ewok; NFS; DB; Lustre 2; job control; Ewok-sql; HPSS; Ewok-web; Seaborg

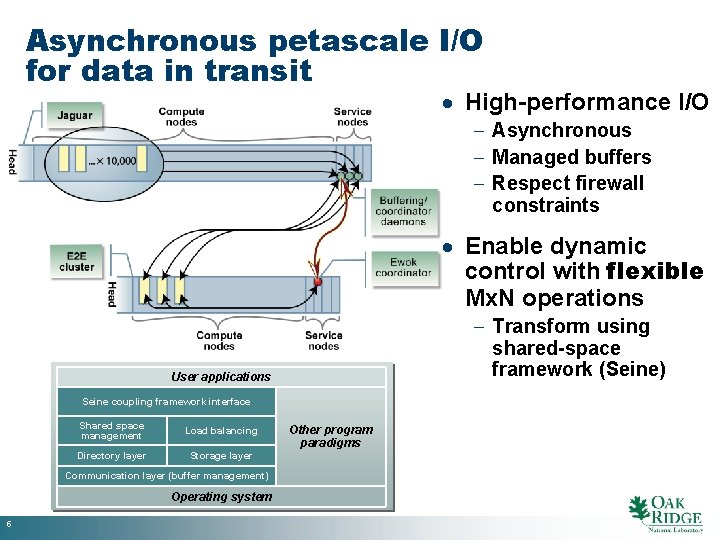

Asynchronous petascale I/O for data in transit

- High-performance I/O
  - Asynchronous
  - Managed buffers
  - Respect firewall constraints
- Enable dynamic control with flexible MxN operations
  - Transform using shared-space framework (Seine)

Diagram labels: user applications; Seine coupling framework interface; shared space management; load balancing; directory layer; storage layer; communication layer (buffer management); operating system; other program paradigms
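The "asynchronous" and "managed buffers" bullets can be illustrated with a minimal sketch. It is not the Seine framework or the Rutgers/GT routines: `AsyncWriter`, `sink`, and `max_buffers` are hypothetical names, and a Python thread with a bounded queue stands in for the real transport. The point is only that the producer returns as soon as its data is buffered, while a background thread drains the buffers.

```python
import queue
import threading


class AsyncWriter:
    """Hypothetical helper: passes chunks to a background thread via a bounded buffer."""

    def __init__(self, sink, max_buffers=4):
        self._sink = sink                        # callable that performs the slow write
        self._queue = queue.Queue(max_buffers)   # bounded: put() blocks when all buffers are in use
        self._thread = threading.Thread(target=self._drain, daemon=True)
        self._thread.start()

    def write(self, chunk):
        # Returns once the chunk is buffered, so the simulation can keep computing.
        self._queue.put(bytes(chunk))

    def _drain(self):
        while True:
            chunk = self._queue.get()
            if chunk is None:                    # sentinel: no more data will arrive
                break
            self._sink(chunk)

    def close(self):
        self._queue.put(None)
        self._thread.join()


# Usage: the list stands in for a remote staging area (an assumption for this sketch).
received = []
writer = AsyncWriter(received.append, max_buffers=2)
for step in range(5):
    writer.write(f"step {step}".encode())
writer.close()
```

The bounded queue is the design choice that matters: it caps the memory taken from the simulation and applies back-pressure when the consumer falls behind.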

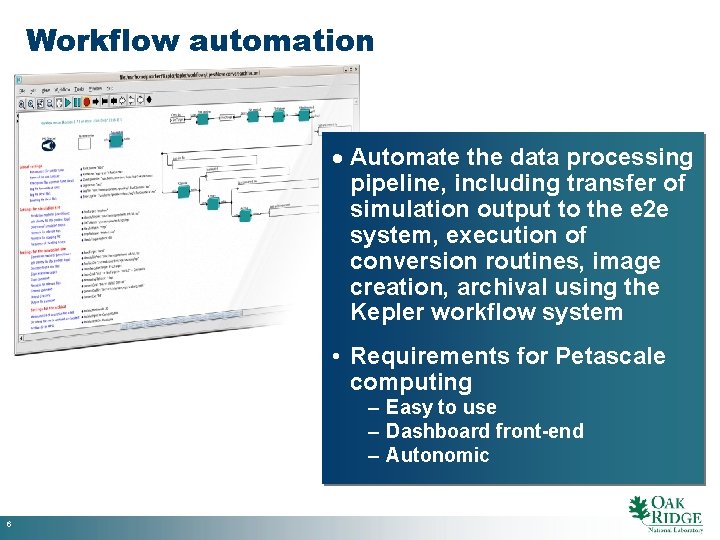

Workflow automation

- Automate the data processing pipeline using the Kepler workflow system, including transfer of simulation output to the e2e system, execution of conversion routines, image creation, and archival
- Requirements for petascale computing
  - Easy to use
  - Dashboard front end
  - Autonomic
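The pipeline stages named on this slide (transfer, conversion, image creation, archival) can be sketched as a chain of functions. This is plain Python, not Kepler, which is a separate workflow system; every function name and dictionary key below is a hypothetical placeholder, and each stage only records what a real stage would do.

```python
def transfer(run):
    # Placeholder for moving simulation output to the e2e system.
    return {**run, "location": "e2e"}


def convert(run):
    # Placeholder for running the conversion routines.
    return {**run, "converted": True}


def make_images(run):
    # Placeholder for image creation.
    return {**run, "images": [f"{run['name']}.png"]}


def archive(run):
    # Placeholder for archival.
    return {**run, "archived": True}


PIPELINE = [transfer, convert, make_images, archive]


def run_pipeline(run, stages=PIPELINE):
    """Apply each stage in order and keep a log of what ran."""
    log = []
    for stage in stages:
        run = stage(run)
        log.append(stage.__name__)
    return run, log


result, log = run_pipeline({"name": "output_0001"})
```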

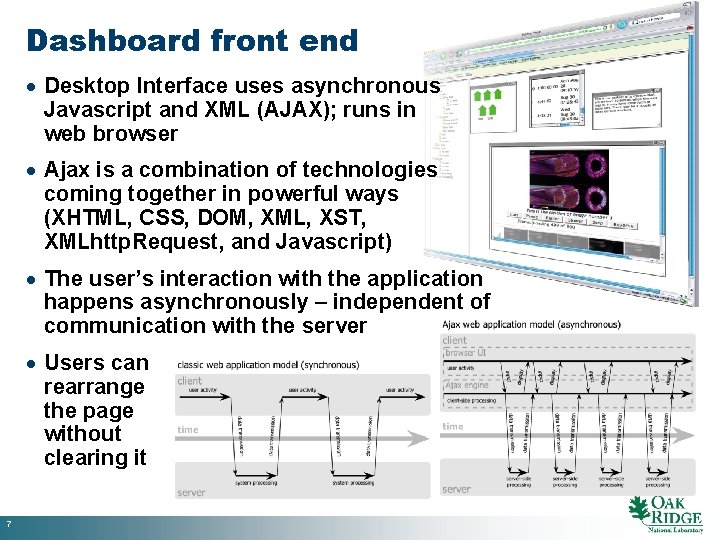

Dashboard front end

- Desktop interface uses Asynchronous JavaScript and XML (Ajax); runs in a web browser
- Ajax is a combination of technologies coming together in powerful ways (XHTML, CSS, DOM, XML, XSLT, XMLHttpRequest, and JavaScript)
- The user's interaction with the application happens asynchronously, independent of communication with the server
- Users can rearrange the page without clearing it

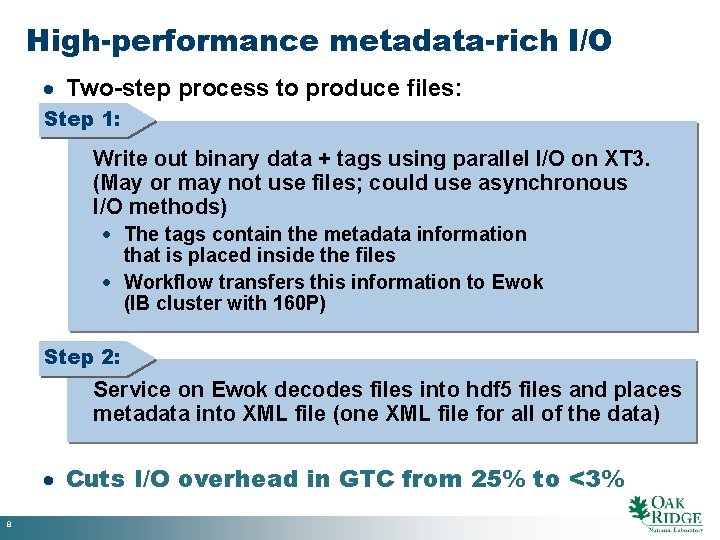

High-performance metadata-rich I/O

- Two-step process to produce files
  - Step 1: Write out binary data + tags using parallel I/O on the XT3 (may or may not use files; could use asynchronous I/O methods)
    - The tags contain the metadata information that is placed inside the files
    - Workflow transfers this information to Ewok (IB cluster with 160 P)
  - Step 2: Service on Ewok decodes files into HDF5 files and places metadata into an XML file (one XML file for all of the data)
- Cuts I/O overhead in GTC from 25% to <3%
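The two-step idea can be sketched with the Python standard library. The record layout here (name length, name, value count, float64 payload) is invented for illustration and is not the format used on the XT3. To stay self-contained, step 2 decodes into Python lists rather than HDF5 files, while still collecting the metadata into a single XML document.

```python
import io
import struct
import xml.etree.ElementTree as ET


def write_record(stream, name, values):
    """Step 1 (sketch): write a small tag, then the raw float64 payload."""
    tag = name.encode("utf-8")
    stream.write(struct.pack("<I", len(tag)))        # tag: length of the variable name
    stream.write(tag)                                # tag: the variable name
    stream.write(struct.pack("<I", len(values)))     # tag: number of values
    stream.write(struct.pack(f"<{len(values)}d", *values))


def decode(stream):
    """Step 2 (sketch): recover the arrays and gather all metadata in one XML tree."""
    arrays = {}
    root = ET.Element("run")
    while True:
        header = stream.read(4)
        if not header:                               # end of stream
            break
        (name_len,) = struct.unpack("<I", header)
        name = stream.read(name_len).decode("utf-8")
        (count,) = struct.unpack("<I", stream.read(4))
        arrays[name] = list(struct.unpack(f"<{count}d", stream.read(8 * count)))
        ET.SubElement(root, "variable", name=name, count=str(count), dtype="float64")
    return arrays, ET.tostring(root, encoding="unicode")


# Usage with made-up variable names and values.
buf = io.BytesIO()
write_record(buf, "density", [1.0, 2.5, 4.0])
write_record(buf, "potential", [0.25, -0.5])
buf.seek(0)
arrays, xml_text = decode(buf)
```

Keeping the writer this simple is the point of the split: the compute side only appends bytes, and the more expensive formatting work happens later on a different machine.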

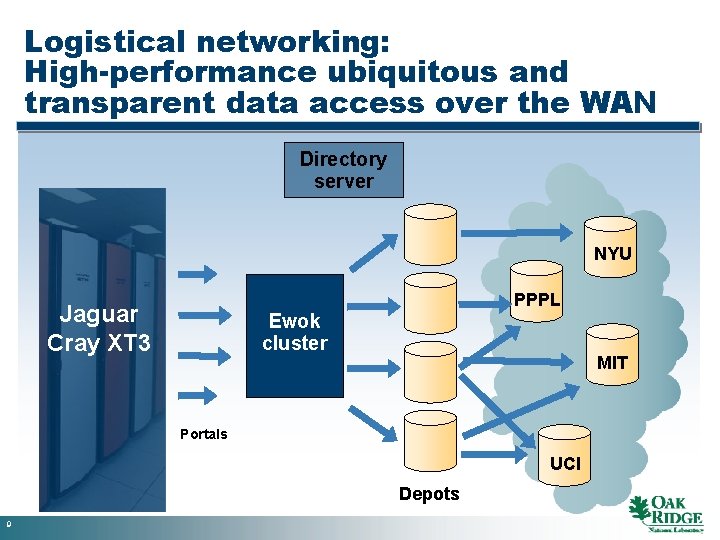

Logistical networking: High-performance, ubiquitous, and transparent data access over the WAN

Diagram labels: directory server; NYU; Jaguar Cray XT3; PPPL; Ewok cluster; MIT; portals; UCI; depots

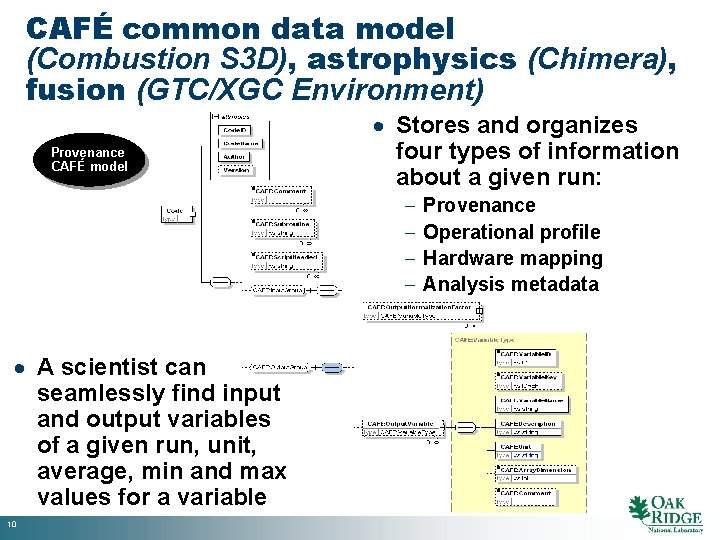

CAFÉ common data model

Combustion (S3D), Astrophysics (Chimera), Fusion (GTC/XGC) Environment

- Stores and organizes four types of information about a given run:
  - Provenance
  - Operational profile
  - Hardware mapping
  - Analysis metadata
- A scientist can seamlessly find the input and output variables of a given run, and the unit, average, min, and max values for a variable
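One way to picture a record with these four kinds of information is a small data class. This is a sketch, not the CAFÉ schema: the field names, the choice to store per-variable summaries under the analysis metadata, and the sample variable are all assumptions made for illustration.

```python
from dataclasses import dataclass, field
from statistics import mean


@dataclass
class VariableSummary:
    name: str
    unit: str
    average: float
    minimum: float
    maximum: float


@dataclass
class RunRecord:
    # The four information types named on the slide; the dict contents are hypothetical.
    provenance: dict = field(default_factory=dict)
    operational_profile: dict = field(default_factory=dict)
    hardware_mapping: dict = field(default_factory=dict)
    analysis_metadata: dict = field(default_factory=dict)  # variable name -> VariableSummary

    def summarize(self, name, unit, values):
        """Store and return the unit, average, min, and max for one variable."""
        summary = VariableSummary(name, unit, mean(values), min(values), max(values))
        self.analysis_metadata[name] = summary
        return summary


# Usage with made-up values.
run = RunRecord(provenance={"code": "GTC"})
summary = run.summarize("temperature", "keV", [1.0, 2.0, 3.0])
```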

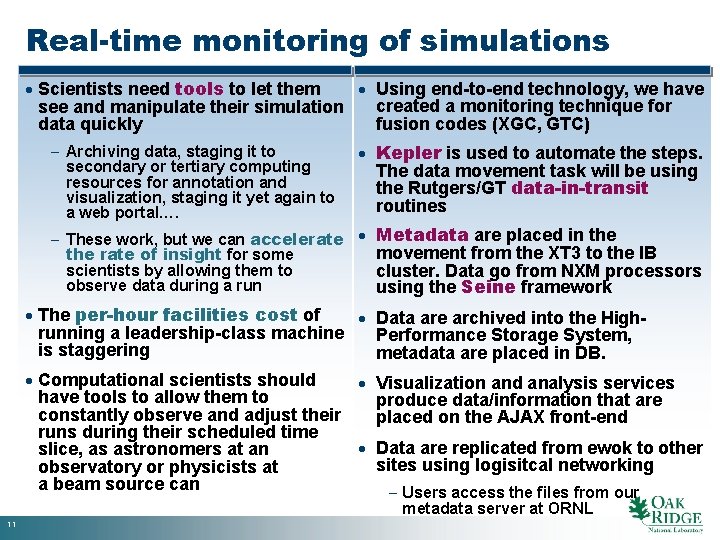

Real-time monitoring of simulations

- Scientists need tools to let them see and manipulate their simulation data quickly
  - Archiving data, staging it to secondary or tertiary computing resources for annotation and visualization, staging it yet again to a web portal…
  - These work, but we can accelerate the rate of insight for some scientists by allowing them to observe data during a run
- The per-hour facilities cost of running a leadership-class machine is staggering
- Computational scientists should have tools to allow them to constantly observe and adjust their runs during their scheduled time slice, as astronomers at an observatory or physicists at a beam source can

- Using end-to-end technology, we have created a monitoring technique for fusion codes (XGC, GTC)
- Kepler is used to automate the steps. The data movement task will be using the Rutgers/GT data-in-transit routines
- Metadata are placed in the movement from the XT3 to the IB cluster. Data go from NxM processors using the Seine framework
- Data are archived into the High-Performance Storage System; metadata are placed in DB
- Visualization and analysis services produce data/information that are placed on the Ajax front end
- Data are replicated from Ewok to other sites using logistical networking
  - Users access the files from our metadata server at ORNL

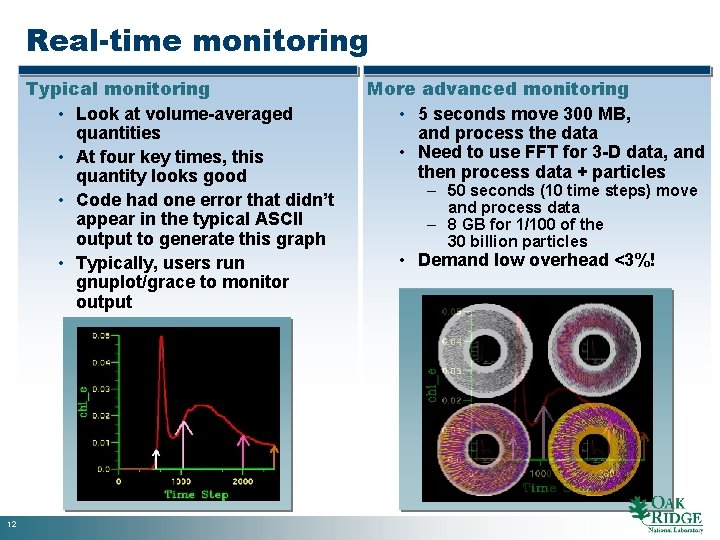

Real-time monitoring

Typical monitoring

- Look at volume-averaged quantities
- At four key times, this quantity looks good
- Code had one error that didn't appear in the typical ASCII output to generate this graph
- Typically, users run gnuplot/grace to monitor output

More advanced monitoring

- 5 seconds: move 300 MB, and process the data
- Need to use FFT for 3-D data, and then process data + particles
  - 50 seconds (10 time steps): move and process data
  - 8 GB for 1/100 of the 30 billion particles
- Demand low overhead: <3%!
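The figures under "More advanced monitoring" imply sustained data rates that are easy to work out. The short calculation below assumes decimal units (1 MB = 10^6 bytes, 1 GB = 10^9 bytes), which the slide does not specify, and it assumes the full time window is available for data movement.

```python
MB = 10**6   # assumption: decimal megabytes
GB = 10**9   # assumption: decimal gigabytes

# 300 MB of 3-D data moved every 5 seconds.
field_rate = 300 * MB / 5

# 8 GB of particle data moved every 50 seconds (10 time steps).
particle_rate = 8 * GB / 50

# The 8 GB covers 1/100 of the 30 billion particles.
sampled_particles = 30 * 10**9 // 100
bytes_per_particle = 8 * GB / sampled_particles

print(f"3-D data rate: {field_rate / MB:.0f} MB/s")
print(f"Particle rate: {particle_rate / MB:.0f} MB/s")
print(f"Sampled particles: {sampled_particles:,}")
print(f"Bytes per sampled particle: {bytes_per_particle:.1f}")
```

Under these assumptions the 3-D data needs about 60 MB/s and the particle sample about 160 MB/s, with roughly 27 bytes per sampled particle.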

Contact

Scott A. Klasky
Lead, End-to-End Solutions
Center for Computational Sciences
(865) 241-9980
klasky@ornl.gov

Klasky_E2E_0611

- Slides: 13