CSCI 5593 Team 6 Branch Prediction Strategies Simulation

CSCI 5593 Team #6 Branch Prediction Strategies Simulation and Comparison Presented by: Shengchao Huangfu, Yi Li, Xiaozheng He Spring 2016

Outline • Introduction • Static Prediction – Always Taken – Always Not Taken • Dynamic Prediction – Bimodal 1-bit & 2-bit – GShare – Tournament Prediction – Loop Prediction • Neural Branch Prediction – Perceptron • Our Project • Reference

Why do we need Branch Prediction? • Instruction-level parallelism (ILP) and pipelining are widely used to speed up instruction execution [N. P Jouppi 89]. • However, as the degree of instruction-level parallelism increases, CPU performance can suffer from stalls caused by unresolved branches. • For a conditional branch, the target instruction cannot be fetched until the target address has been calculated and the branch condition has been resolved; an unconditional branch is resolved as soon as its target address has been calculated. In either case, the pipeline may stall for the number of cycles needed to resolve the branch, and CPU performance drops as the number of pipeline bubbles grows.

Why do we need Branch Prediction? • Branch prediction is a way to reduce the execution penalty due to branches by predicting, prefetching and initiating execution of the branch target before the branch is resolved.

Branch Prediction Subproblems • The branch performance problem can be divided into two subproblems. • 1. The branch direction must be predicted. • 2. For taken branches, the instructions from the branch target must be available for execution with minimal delay. One way to provide the target instructions quickly is to use a Branch Target Buffer, a special instruction cache designed to store the target instructions (Lee and Smith [LS 84]).

Project Goal • The goal of our project: simulate different types of predictors and compare the different branch prediction techniques.

Branch Prediction Schemes • Many branch prediction schemes have been used to achieve better CPU performance. • These schemes can be categorized as program-based vs. profile-based predictors, or as static vs. dynamic schemes.

Branch Prediction Schemes • Static: – Always Taken – Always Not Taken • Dynamic: – Bimodal Prediction with 1-Bit History – Bimodal Prediction with 2-Bit History – Gshare Prediction – Loop Prediction – Tournament Prediction

Static Branch Prediction • Decided before runtime – Always Taken – Always Not Taken • Unfortunately, static strategies reach at most 68 percent accuracy (Lee and Smith [LS 84]).

Static VS. Dynamic • Su and Zhou [SZ 95] concluded that the static predictor has the worst performance. • All of the dynamic predictors perform much better than static predictors and can achieve at least 90 percent accuracy, as reported by McFarling [M 93].

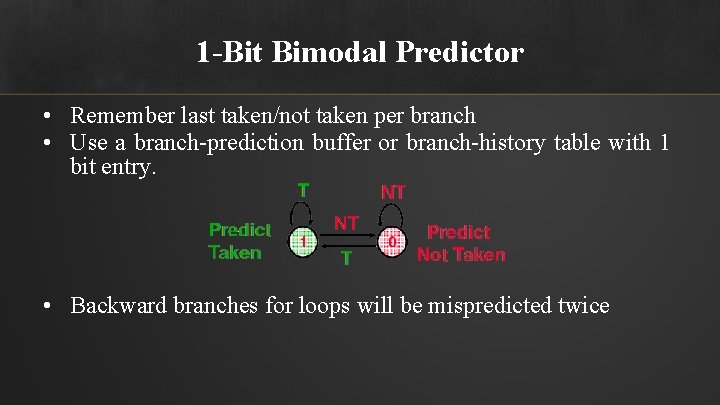

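1-Bit Bimodal Predictor • Remembers the last outcome (taken/not taken) of each branch • Uses a branch-prediction buffer (branch-history table) with 1-bit entries • The backward branch of a loop is mispredicted twice per loop execution: once at the loop exit and once on the first iteration of the next execution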
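
The two mispredictions per loop execution can be reproduced with a minimal sketch. Python is an arbitrary choice here (the slides do not name an implementation language), and `OneBitPredictor`, `count_mispredictions`, and the 3-taken-then-exit loop are hypothetical names and values chosen only for illustration.

```python
class OneBitPredictor:
    """One history bit for a single branch: predict whatever happened last time."""

    def __init__(self, initial_taken=True):
        # Assumption: the bit starts out as "taken".
        self.last_taken = initial_taken

    def predict(self):
        return self.last_taken

    def update(self, taken):
        self.last_taken = taken


def count_mispredictions(predictor, outcomes):
    """Feed a sequence of branch outcomes and count wrong predictions."""
    misses = 0
    for taken in outcomes:
        if predictor.predict() != taken:
            misses += 1
        predictor.update(taken)
    return misses


# Backward loop branch: taken 3 times, then not taken at the loop exit.
# The whole loop is executed 10 times.
loop_outcomes = [True, True, True, False] * 10
one_bit_misses = count_mispredictions(OneBitPredictor(), loop_outcomes)
```

The first execution of the loop misses only at the exit; each of the nine later executions misses at re-entry and again at the exit.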

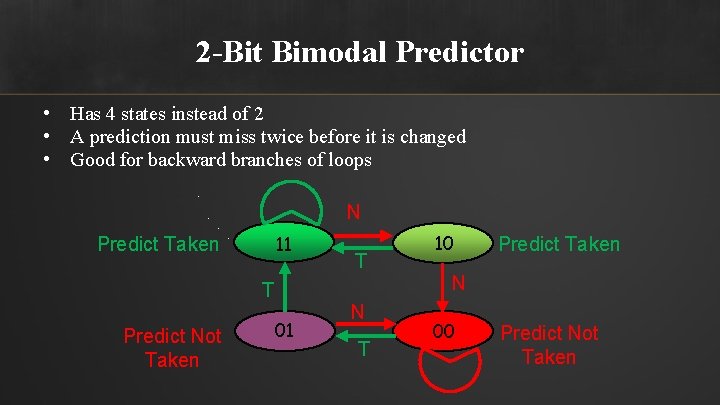

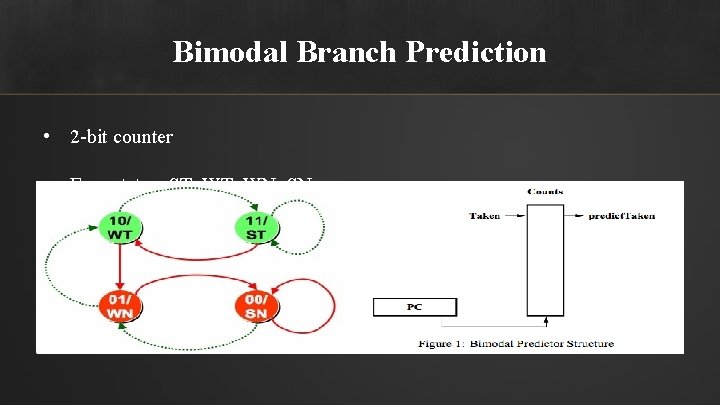

2-Bit Bimodal Predictor • Lee and Smith [LS 84] proposed a better structure. • McFarling [M 93] referred to it as bimodal branch prediction. • Hennessy and Patterson [HP 96] introduced it as the two-bit prediction scheme. • Counters with three or more bits do not perform significantly better than two-bit counters.

2-Bit Bimodal Predictor • Has 4 states instead of 2 • A prediction must miss twice before it is changed • Good for backward branches of loops • [State diagram, transitions labeled T (taken) and N (not taken): 11 Predict Taken, 10 Predict Taken, 01 Predict Not Taken, 00 Predict Not Taken]
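
A minimal Python sketch of the four-state scheme follows. It assumes the common saturating up/down counter encoding (state 3 = `11`, state 0 = `00`, predict taken when the state is 2 or 3); the exact transition arrows of the slide's diagram may differ, and the class and variable names are hypothetical.

```python
class TwoBitCounter:
    """Saturating 2-bit counter: states 3 (11) and 2 (10) predict taken,
    states 1 (01) and 0 (00) predict not taken."""

    def __init__(self, state=3):
        self.state = state  # assumption: start in state 11

    def predict(self):
        return self.state >= 2

    def update(self, taken):
        if taken:
            self.state = min(3, self.state + 1)  # saturate at 11
        else:
            self.state = max(0, self.state - 1)  # saturate at 00


def count_mispredictions(predictor, outcomes):
    misses = 0
    for taken in outcomes:
        if predictor.predict() != taken:
            misses += 1
        predictor.update(taken)
    return misses


# Same kind of loop branch as before: taken 3 times, then not taken; run 10 times.
loop_outcomes = [True, True, True, False] * 10
two_bit_misses = count_mispredictions(TwoBitCounter(), loop_outcomes)
```

The single not-taken outcome at the loop exit only moves the counter from 11 to 10, so the next execution of the loop is still predicted taken and only the exit itself is missed.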

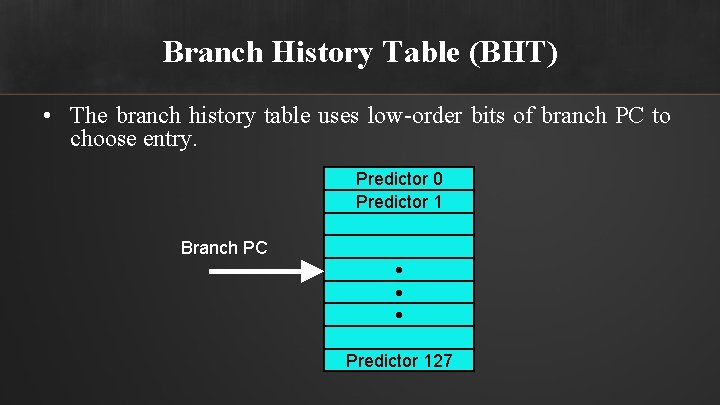

Branch History Table (BHT) • The branch history table uses the low-order bits of the branch PC to choose an entry. • [Figure: the branch PC selects one of the entries Predictor 0, Predictor 1, …, Predictor 127]
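
The indexing step can be sketched as below. The 128-entry size comes from the slide; the function name `bht_index` and the assumption of fixed 4-byte, aligned instructions (so the two lowest PC bits are dropped first) are illustrative only.

```python
NUM_ENTRIES = 128  # Predictor 0 .. Predictor 127, as on the slide


def bht_index(pc, num_entries=NUM_ENTRIES, instr_bytes=4):
    """Pick a table entry from the low-order bits of the branch PC.

    Assumes num_entries is a power of two and that instructions are
    instr_bytes wide and aligned, so the byte offset carries no information.
    """
    word_address = pc // instr_bytes
    return word_address & (num_entries - 1)


first = bht_index(0x400000)
second = bht_index(0x400004)
aliased = bht_index(0x400000 + 4 * NUM_ENTRIES)  # maps to the same entry as `first`
```

Because only the low-order bits are used and the table has no tags, two branches whose addresses differ by a multiple of the table size share one entry.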

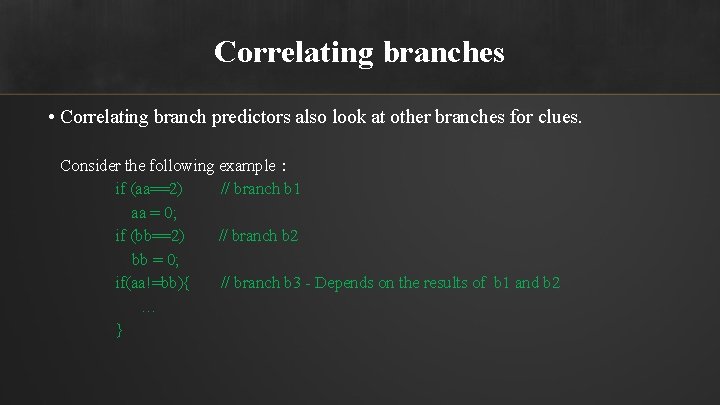

Correlating branches • Correlating branch predictors also look at other branches for clues. Consider the following example:

    if (aa==2)      // branch b1
        aa = 0;
    if (bb==2)      // branch b2
        bb = 0;
    if (aa!=bb) {   // branch b3: depends on the results of b1 and b2
        …
    }

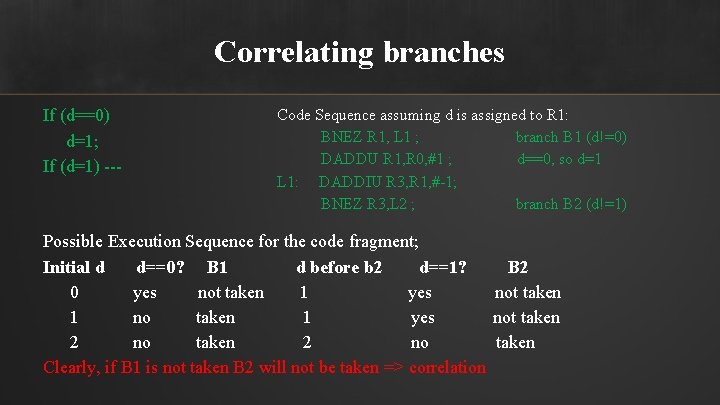

Correlating branches

    if (d==0)
        d = 1;
    if (d==1)
        …

Code sequence, assuming d is assigned to R1:

    BNEZ   R1, L1          ; branch B1 (d!=0)
    DADDIU R1, R0, #1      ; d==0, so d=1
    L1: DADDIU R3, R1, #-1
    BNEZ   R3, L2          ; branch B2 (d!=1)

Possible execution sequences for the code fragment:

| Initial d | d==0? | B1 | d before B2 | d==1? | B2 |
|---|---|---|---|---|---|
| 0 | yes | not taken | 1 | yes | not taken |
| 1 | no | taken | 1 | yes | not taken |
| 2 | no | taken | 2 | no | taken |

Clearly, if B1 is not taken, B2 will not be taken => correlation

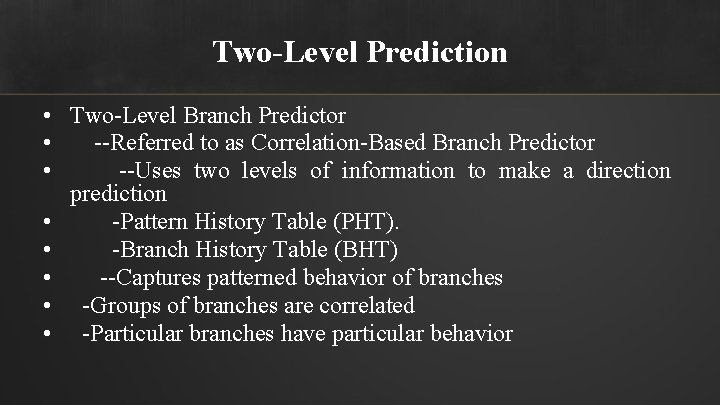

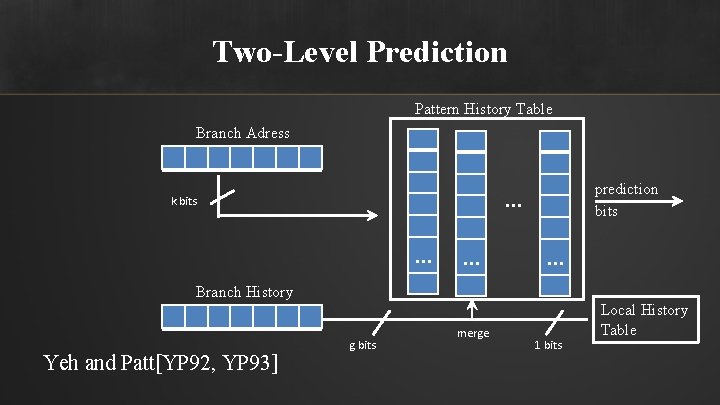

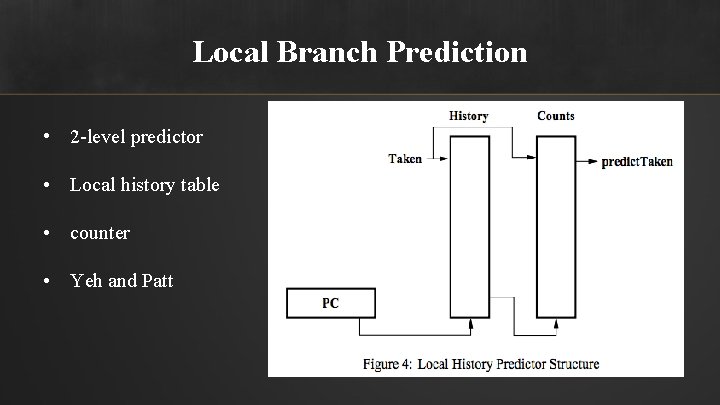

Two-Level Prediction • Two-Level Branch Predictor – Also referred to as a Correlation-Based Branch Predictor – Uses two levels of information to make a direction prediction: the Pattern History Table (PHT) and the Branch History Table (BHT) – Captures patterned behavior of branches: groups of branches are correlated, and particular branches have particular behavior

Two-Level Prediction • [Figure: two-level predictor after Yeh and Patt [YP 92, YP 93]. Labels: Branch Address (k bits), Branch History (g bits), merge, Local History Table, Pattern History Table, prediction bits]

Gshare Prediction • The gshare scheme is similar to the bimodal predictor (branch history table). • However, like correlation-based prediction, it records the history of recent branches in a shift register. It then XORs the address of the branch instruction with the branch history (the shift register) to form the table index.

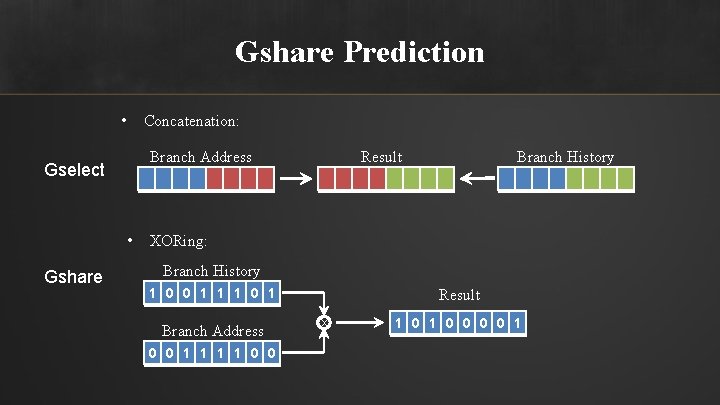

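Gshare Prediction • Concatenation (gselect): the result is formed by concatenating branch address bits with branch history bits • XORing (gshare): the result is the bitwise XOR of the branch history and the branch address • [Figure: bit-level example of the two index computations]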
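
The two index computations can be compared with a short Python sketch. The function names, the 4+4 bit split for gselect, and the 8-bit example values are assumptions made for illustration, not values read from the slide's figure.

```python
def gselect_index(pc, history, addr_bits, hist_bits):
    """Concatenate the low addr_bits of the address with the low hist_bits of history."""
    addr = pc & ((1 << addr_bits) - 1)
    hist = history & ((1 << hist_bits) - 1)
    return (addr << hist_bits) | hist


def gshare_index(pc, history, index_bits):
    """XOR the address with the history and keep index_bits bits."""
    return (pc ^ history) & ((1 << index_bits) - 1)


# Two situations that differ only in the oldest history bit.
addr = 0b11111111
hist_a = 0b00000000
hist_b = 0b10000000

gselect_a = gselect_index(addr, hist_a, 4, 4)  # 0b11110000
gselect_b = gselect_index(addr, hist_b, 4, 4)  # 0b11110000 (same counter)
gshare_a = gshare_index(addr, hist_a, 8)       # 0b11111111
gshare_b = gshare_index(addr, hist_b, 8)       # 0b01111111 (different counter)
```

With the same 8-bit index, gselect keeps only 4 bits of each input and cannot tell the two situations apart, while gshare uses all 8 bits of both and separates them.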

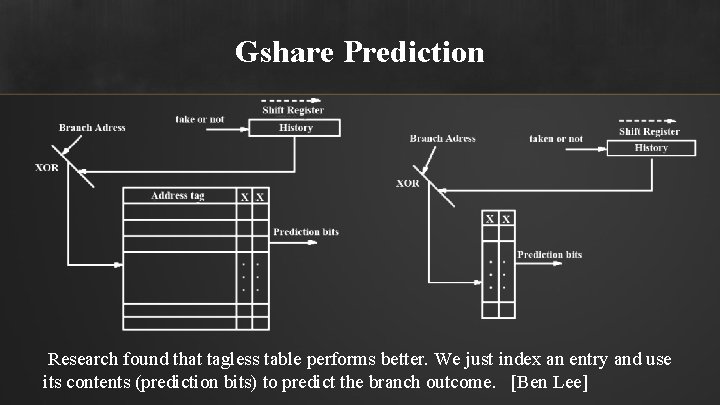

Gshare Prediction Research found that tagless table performs better. We just index an entry and use its contents (prediction bits) to predict the branch outcome. [Ben Lee]
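
A tagless gshare table can be sketched in a few lines of Python. This is an illustrative sketch, not the project's simulator: the class name, the 4-bit index, the 2-bit counters initialised to weakly not taken, and the dropping of the two lowest PC bits are all assumptions.

```python
class GsharePredictor:
    """Tagless table of 2-bit counters indexed by (branch address XOR global history)."""

    def __init__(self, index_bits=4):
        self.mask = (1 << index_bits) - 1
        self.counters = [1] * (1 << index_bits)  # assumption: start weakly not taken
        self.history = 0                          # global history shift register

    def _index(self, pc):
        # Assumption: 4-byte aligned instructions, so drop the two lowest PC bits.
        return ((pc >> 2) ^ self.history) & self.mask

    def predict(self, pc):
        # No tag check: whatever counter the index selects makes the prediction.
        return self.counters[self._index(pc)] >= 2

    def update(self, pc, taken):
        i = self._index(pc)
        if taken:
            self.counters[i] = min(3, self.counters[i] + 1)
        else:
            self.counters[i] = max(0, self.counters[i] - 1)
        self.history = ((self.history << 1) | int(taken)) & self.mask


# A single branch that alternates taken / not taken.
predictor = GsharePredictor()
outcomes = [True, False] * 20
missed = []
for taken in outcomes:
    missed.append(predictor.predict(0x40) != taken)
    predictor.update(0x40, taken)

total_misses = sum(missed)
late_misses = sum(missed[-20:])
```

An alternating branch defeats a per-address 2-bit counter, but here the two histories `0101` and `1010` select different counters, so the predictor stops missing after a short warm-up.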

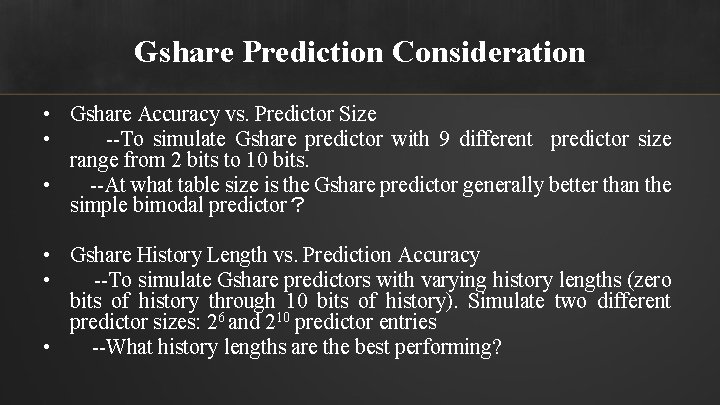

Gshare Prediction Consideration • Gshare Accuracy vs. Predictor Size – Simulate the gshare predictor with 9 different predictor sizes, ranging from 2 bits to 10 bits. – At what table size is the gshare predictor generally better than the simple bimodal predictor? • Gshare History Length vs. Prediction Accuracy – Simulate gshare predictors with varying history lengths (0 through 10 bits of history) for two predictor sizes: 2^6 and 2^10 predictor entries. – Which history lengths perform best?

Tournament Prediction • Combining Branch Predictor • Bimodal Branch predictor • Local Branch predictor • Global Branch predictor

Bimodal Branch Prediction • 2-bit counter • Four states: ST, WT, WN, SN (strongly taken, weakly taken, weakly not taken, strongly not taken)

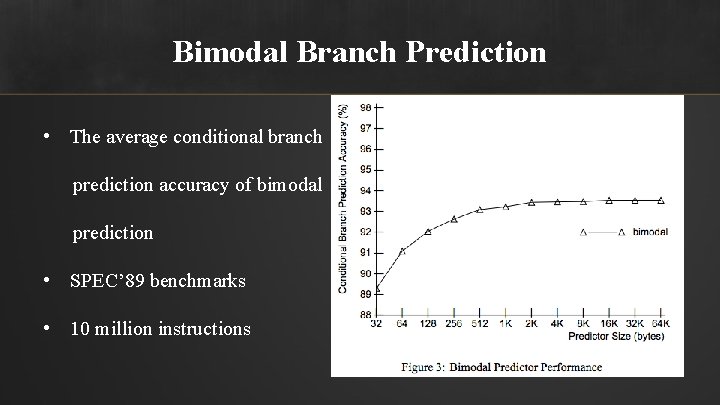

Bimodal Branch Prediction • The average conditional branch prediction accuracy of bimodal prediction • SPEC’ 89 benchmarks • 10 million instructions

Local Branch Prediction • 2-level predictor • Local history table • Counter • Yeh and Patt

Local Branch Prediction • Example: for(i = 1; i <= 4; i++){...} The pattern is (1110)^n • Two sources of contention if there are more branches in the program: – The branch history may reflect a mix of the histories of all the branches that map to the same history entry. – Patterns may conflict: with 3 bits of history, (1110)^n and (0110)^n both reach history 110 but are followed by different outcomes.
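
How a local two-level predictor learns the (1110)^n pattern can be sketched as follows. The class name, the table sizes, the 3-bit history, and the counters initialised to weakly not taken are assumptions for illustration.

```python
class LocalPredictor:
    """Two-level local predictor: per-branch history selects a 2-bit counter."""

    def __init__(self, history_bits=3, table_entries=16):
        self.history_mask = (1 << history_bits) - 1
        self.table_entries = table_entries
        self.histories = [0] * table_entries       # level 1: local history table
        self.counters = [1] * (1 << history_bits)  # level 2: 2-bit counters

    def _slot(self, pc):
        # Assumption: 4-byte aligned instructions.
        return (pc >> 2) % self.table_entries

    def predict(self, pc):
        return self.counters[self.histories[self._slot(pc)]] >= 2

    def update(self, pc, taken):
        slot = self._slot(pc)
        hist = self.histories[slot]
        if taken:
            self.counters[hist] = min(3, self.counters[hist] + 1)
        else:
            self.counters[hist] = max(0, self.counters[hist] - 1)
        self.histories[slot] = ((hist << 1) | int(taken)) & self.history_mask


# Loop branch with pattern (1110)^n: taken three times, then not taken.
predictor = LocalPredictor()
outcomes = [True, True, True, False] * 20
missed = []
for taken in outcomes:
    missed.append(predictor.predict(0x40) != taken)
    predictor.update(0x40, taken)

total_misses = sum(missed)
late_misses = sum(missed[-40:])
```

With 3 bits of history, each of the four histories that occur in (1110)^n is always followed by the same outcome, so the predictor becomes exact once the counters are trained. A second branch with pattern (0110)^n sharing the same counters would fight over the counter for history 110.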

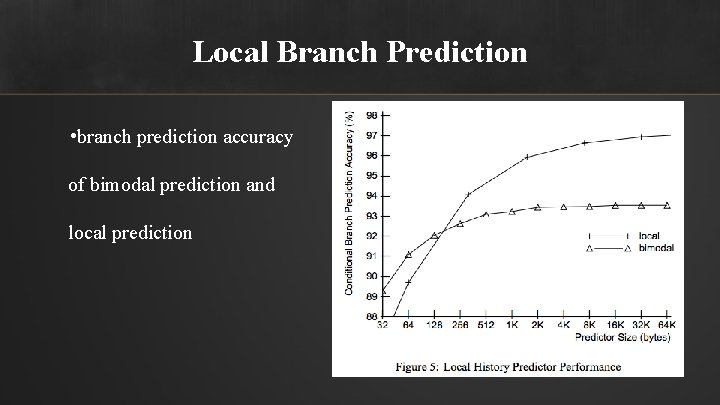

Local Branch Prediction • branch prediction accuracy of bimodal prediction and local prediction

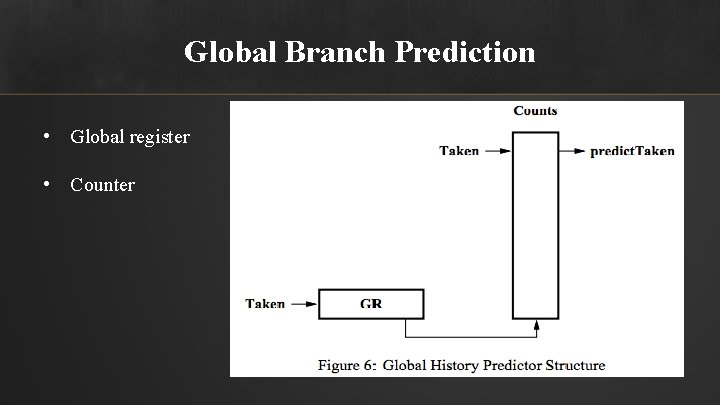

Global Branch Prediction • Global register • Counter

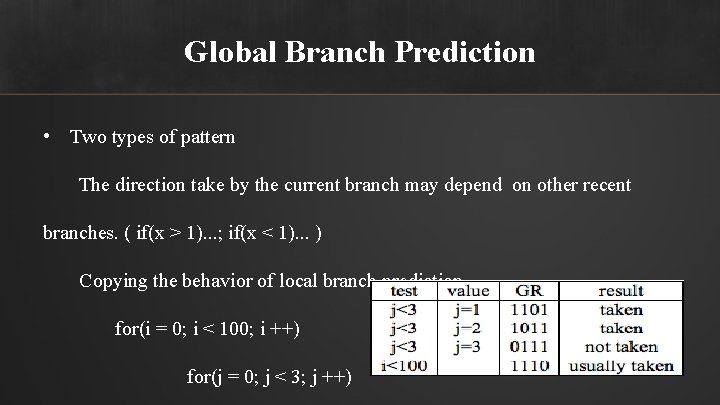

Global Branch Prediction • Two types of pattern: – The direction taken by the current branch may depend on other recent branches, e.g. if(x > 1)...; if(x < 1)... – Global history can duplicate the behavior of local branch prediction, e.g. for(i = 0; i < 100; i++) for(j = 0; j < 3; j++)

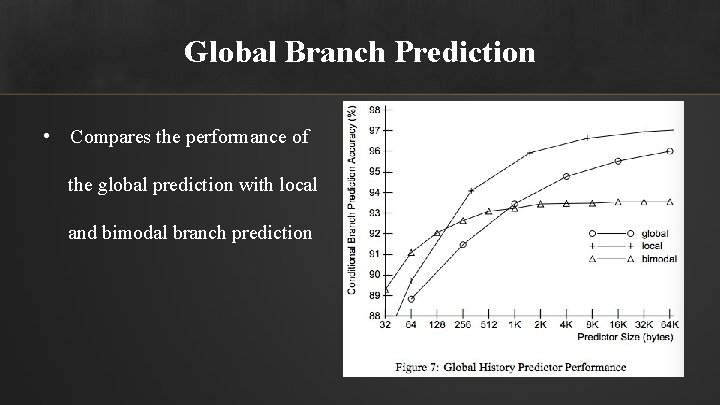

Global Branch Prediction • Compares the performance of the global prediction with local and bimodal branch prediction

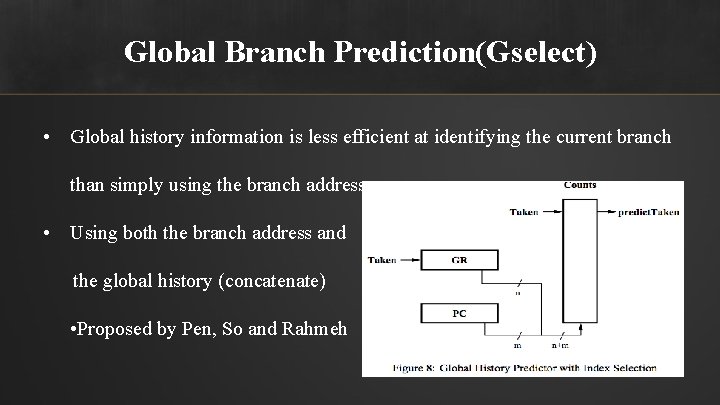

Global Branch Prediction(Gselect) • Global history information is less efficient at identifying the current branch than simply using the branch address • Gselect uses both the branch address and the global history (concatenated) • Proposed by Pan, So and Rahmeh

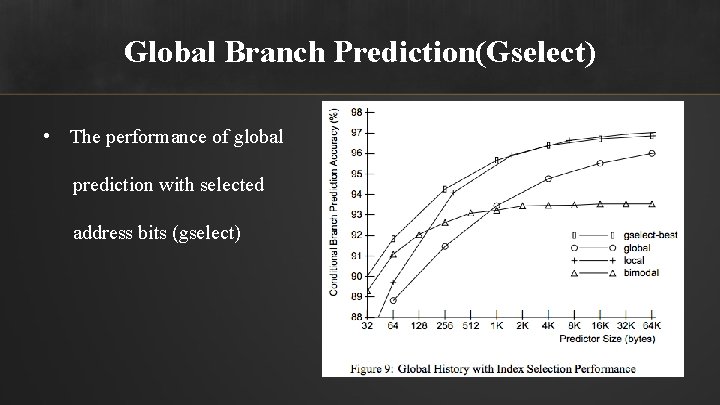

Global Branch Prediction(Gselect) • The performance of global prediction with selected address bits (gselect)

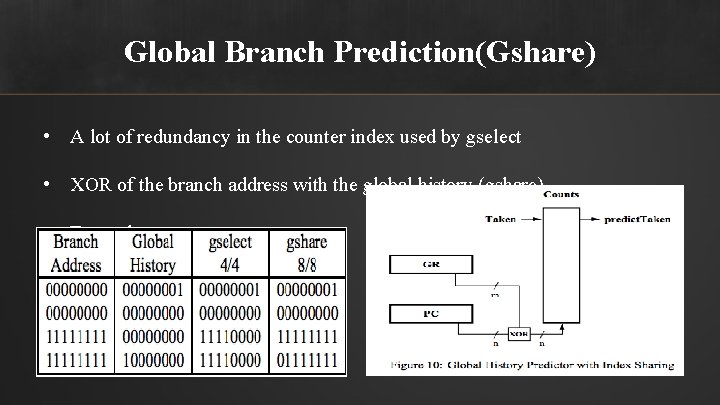

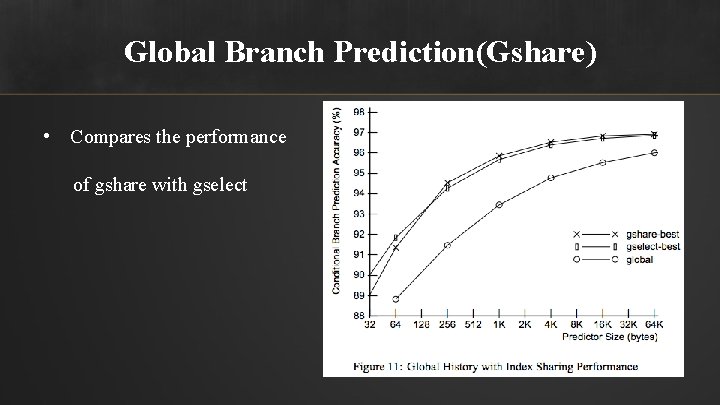

Global Branch Prediction(Gshare) • A lot of redundancy in the counter index used by gselect • XOR of the branch address with the global history (gshare) • Example

Global Branch Prediction(Gshare) • Compares the performance of gshare with gselect

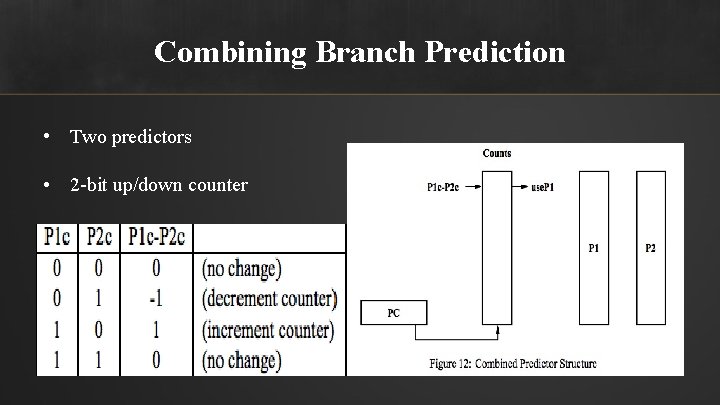

Combining Branch Prediction • Two predictors • 2-bit up/down counter
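
One way the 2-bit up/down counter can select between the two predictors is sketched below. The update rule (move toward whichever predictor was right when exactly one of them was right) is the commonly described policy and is an assumption here, as are the class and variable names.

```python
class Chooser:
    """2-bit up/down counter that selects between two component predictors."""

    def __init__(self, state=2):
        self.state = state  # 0..3; states 2 and 3 mean "trust predictor 1"

    def select(self, pred1, pred2):
        return pred1 if self.state >= 2 else pred2

    def update(self, pred1, pred2, taken):
        ok1 = pred1 == taken
        ok2 = pred2 == taken
        if ok1 and not ok2:
            self.state = min(3, self.state + 1)  # count up toward predictor 1
        elif ok2 and not ok1:
            self.state = max(0, self.state - 1)  # count down toward predictor 2
        # If both are right or both are wrong, the counter does not change.


chooser = Chooser()
# Predictor 1 is wrong twice while predictor 2 is right.
for _ in range(2):
    chooser.update(pred1=True, pred2=False, taken=False)
final_choice = chooser.select(pred1=True, pred2=False)
```

After the two updates the chooser has moved from state 2 to state 0, so `final_choice` follows predictor 2.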

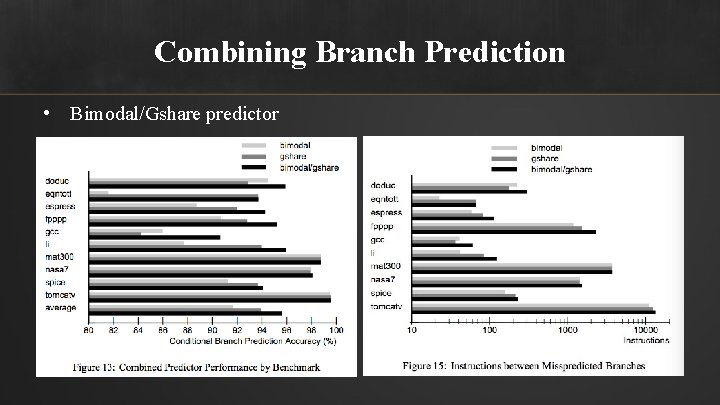

Combining Branch Prediction • Bimodal/Gshare predictor

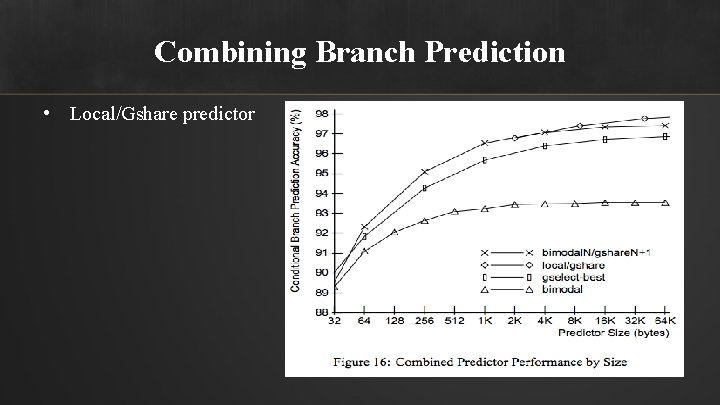

Combining Branch Prediction • Local/Gshare predictor

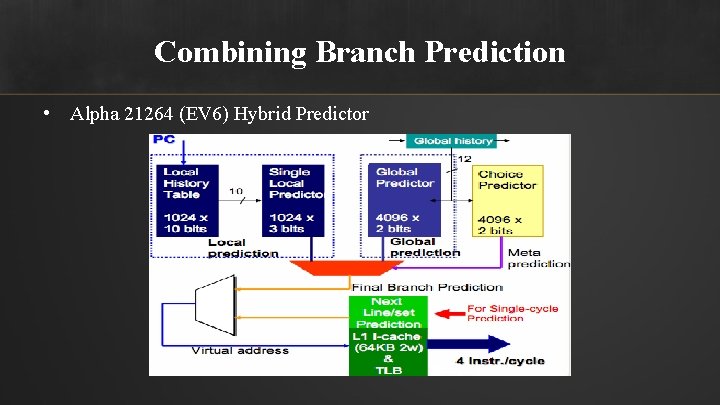

Combining Branch Prediction • Alpha 21264 (EV6) Hybrid Predictor

Loop Prediction • Part of a hybrid predictor in which a meta-predictor detects whether the conditional jump has loop behavior • Example: for(i = 0; i < 6; i++) – A conditional jump at the bottom of the loop: (111110) – A conditional jump at the top of the loop: (000001) • Loop counter

Loop Prediction • Used in many microprocessors • The PM (Pentium M) and Core 2 have a loop counter with 6 bits, allowing loops with a maximum period of 64 to be predicted perfectly
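
The idea of a loop counter can be sketched in Python as follows. This is a simplified illustration, not a description of the Pentium M or Core 2 hardware: the class name, the learning rule (remember how many taken outcomes preceded the last exit), and the handling of loops that are too long for the counter are assumptions.

```python
class LoopPredictor:
    """Learns how many taken outcomes precede the loop exit of one bottom-of-loop branch."""

    def __init__(self, counter_bits=6):
        self.max_count = (1 << counter_bits) - 1  # 63 taken + 1 exit = period 64
        self.trip = None   # learned number of taken outcomes per loop execution
        self.count = 0     # taken outcomes seen in the current loop execution

    def predict(self):
        if self.trip is None:
            return True                    # nothing learned yet: guess taken
        return self.count != self.trip     # predict the exit exactly at the learned count

    def update(self, taken):
        if taken:
            self.count += 1
        else:
            # Loop exit: remember the trip count only if the counter can hold it.
            self.trip = self.count if self.count <= self.max_count else None
            self.count = 0


def run(predictor, outcomes):
    misses = 0
    for taken in outcomes:
        if predictor.predict() != taken:
            misses += 1
        predictor.update(taken)
    return misses


# for (i = 0; i < 6; i++): the bottom-of-loop branch follows the pattern 111110.
short_loop_misses = run(LoopPredictor(), ([True] * 5 + [False]) * 10)
# A loop with period 64 (63 taken, then the exit) still fits a 6-bit counter.
period_64_misses = run(LoopPredictor(), ([True] * 63 + [False]) * 3)
```

In both runs the only miss is the first loop exit, before the trip count has been learned.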

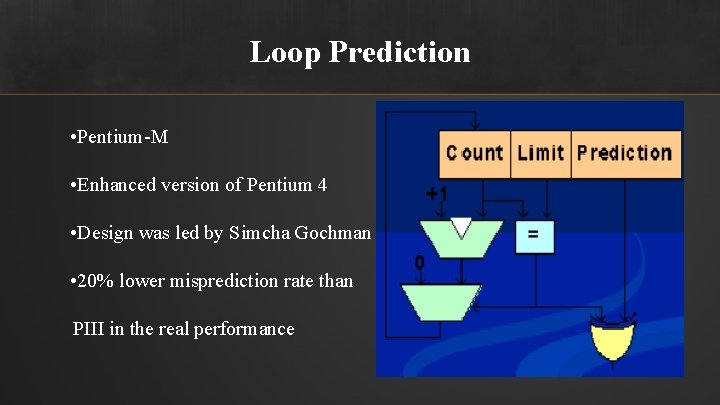

Loop Prediction • Pentium M • Enhanced version of the Pentium III (P6) design • Design was led by Simcha Gochman • 20% lower misprediction rate than the PIII in practice

Neural Branch Prediction • Currently normal branch prediction – Static • Always taken, not taken, etc… – Dynamic • Gshare, Tournament Prediction, etc… • Machine learning techniques – Provides a new approach to branch prediction – Apply neural networks to prediction

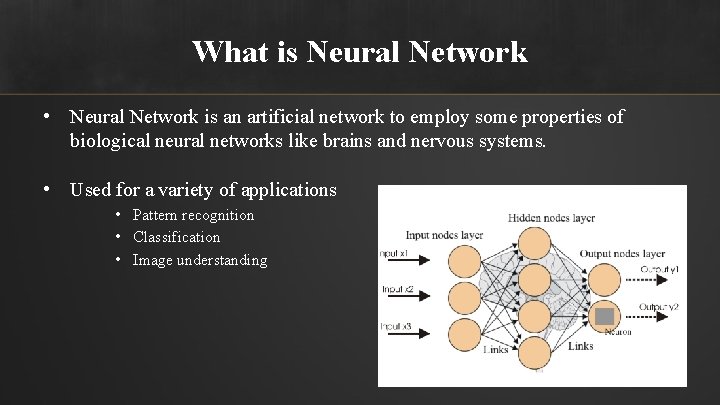

What is Neural Network • A neural network is an artificial network that employs some properties of biological neural networks such as brains and nervous systems. • Used for a variety of applications: • Pattern recognition • Classification • Image understanding

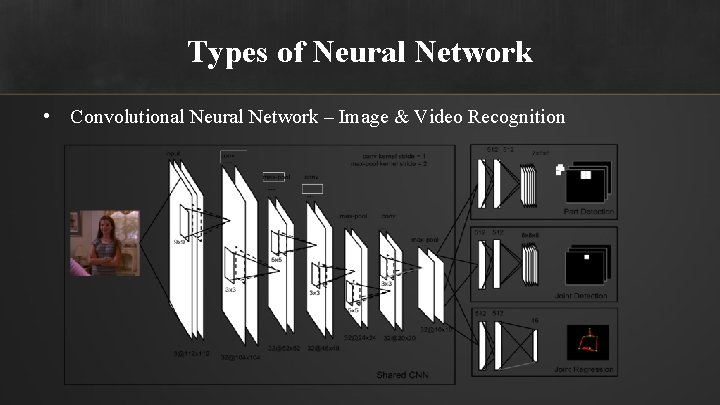

Types of Neural Network • Convolutional Neural Network – Image & Video Recognition

Types of Neural Network • Google DeepMind – Deep Neural Network + Monte Carlo Tree Search

Types of Neural Network • AlphaGo – Beat Lee Sedol in a five-game match.

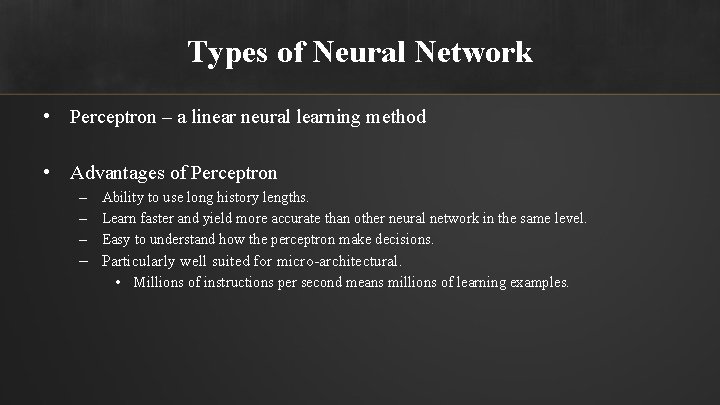

Types of Neural Network • Perceptron – a linear neural learning method • Advantages of Perceptron – Able to use long history lengths. – Learns faster and is more accurate than other neural networks of comparable complexity. – Easy to understand how the perceptron makes its decisions. – Particularly well suited to microarchitectural prediction. • Millions of instructions per second means millions of learning examples.

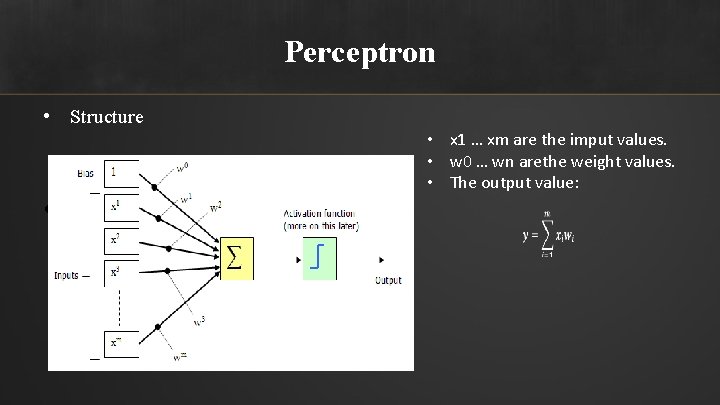

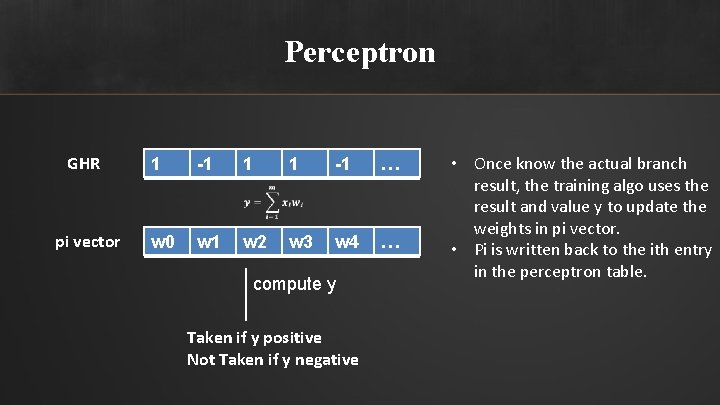

Perceptron • Structure • x_1 … x_n are the input values. • w_0 … w_n are the weight values. • The output value: $y = w_0 + \sum_{i=1}^{n} x_i w_i$

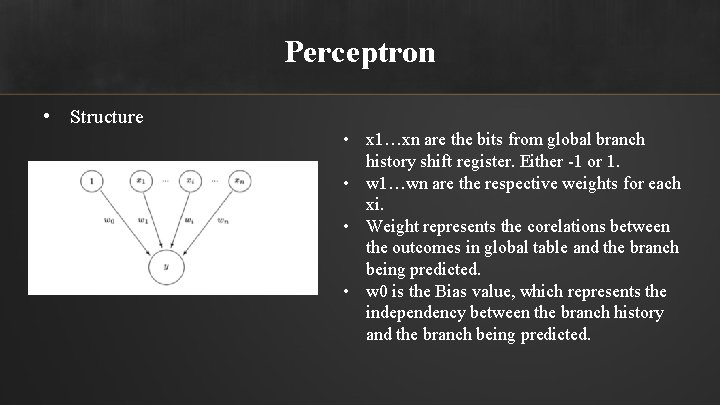

Perceptron • Structure • x_1 … x_n are the bits from the global branch history shift register, each either -1 or 1. • w_1 … w_n are the respective weights for each x_i. • Each weight represents the correlation between an outcome in the global history and the branch being predicted. • w_0 is the bias weight, which captures the behavior of the branch that is independent of the branch history.

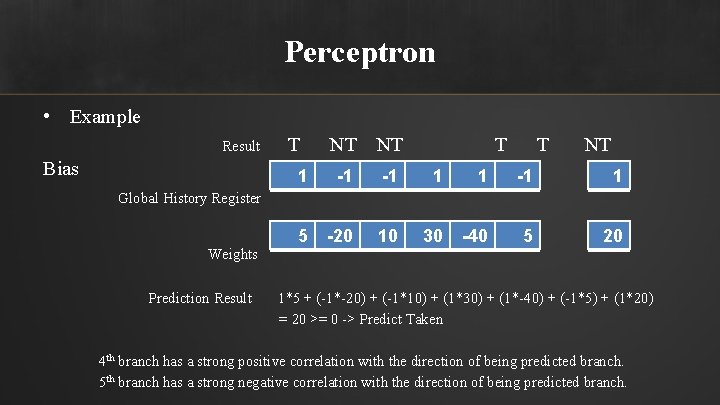

Perceptron • Example

| | Bias | x1 | x2 | x3 | x4 | x5 | x6 |
|---|---|---|---|---|---|---|---|
| Global history register (input) | 1 | -1 | -1 | 1 | 1 | -1 | 1 |
| Weights | 5 | -20 | 10 | 30 | -40 | 5 | 20 |

Prediction result: 1*5 + (-1*-20) + (-1*10) + (1*30) + (1*-40) + (-1*5) + (1*20) = 20 >= 0 -> Predict Taken

• The 4th term (weight 30) indicates a strong positive correlation with the direction of the branch being predicted. • The 5th term (weight -40) indicates a strong negative correlation with the direction of the branch being predicted.
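
The arithmetic of this example can be checked with a few lines of Python. The function name `perceptron_output` is hypothetical; the weights and history values are the ones used in the sum above, with the bias input fixed at 1.

```python
def perceptron_output(weights, history):
    """weights[0] is the bias weight w0; history holds x1..xn as +1 (taken) or -1 (not taken)."""
    inputs = [1] + list(history)  # the bias input is always 1
    return sum(w * x for w, x in zip(weights, inputs))


weights = [5, -20, 10, 30, -40, 5, 20]
history = [-1, -1, 1, 1, -1, 1]

y = perceptron_output(weights, history)
predict_taken = y >= 0  # non-negative output means "predict taken"
```

Here `y` evaluates to 20, so the branch is predicted taken.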

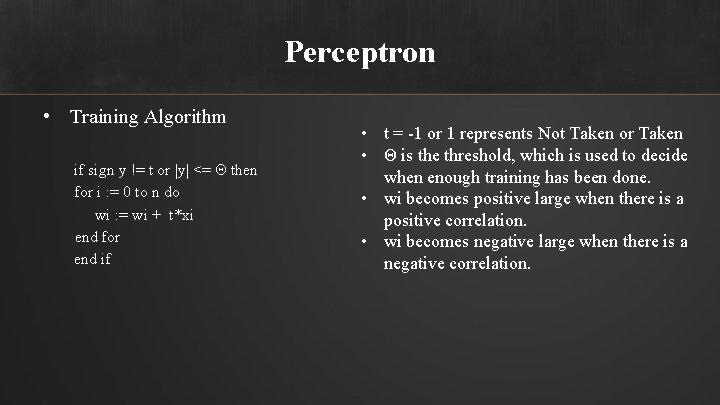

Perceptron • Training Algorithm

    if sign(y) != t or |y| <= Θ then
        for i := 0 to n do
            w_i := w_i + t * x_i
        end for
    end if

• t = -1 or 1 represents Not Taken or Taken • Θ is the threshold, which is used to decide when enough training has been done. • w_i becomes large and positive when there is a positive correlation. • w_i becomes large and negative when there is a negative correlation.
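
The training rule translates almost directly into Python. In this sketch the function name `train` and the threshold values 25 and 10 are hypothetical, chosen only to show one case where training happens and one where it does not; the weights and history reuse a 6-bit history with a bias weight.

```python
def train(weights, history, y, t, theta):
    """Return the updated weights after the branch outcome t (+1 taken, -1 not taken) is known.

    weights[0] is the bias weight; its input x0 is always 1.
    """
    inputs = [1] + list(history)
    predicted = 1 if y >= 0 else -1
    if predicted != t or abs(y) <= theta:
        # Move each weight toward agreement between its input and the outcome.
        return [w + t * x for w, x in zip(weights, inputs)]
    return list(weights)  # confident and correct: leave the weights alone


weights = [5, -20, 10, 30, -40, 5, 20]
history = [-1, -1, 1, 1, -1, 1]
y = sum(w * x for w, x in zip(weights, [1] + history))  # 20

# Correct prediction (taken) but |y| <= theta: the weights are still reinforced.
reinforced = train(weights, history, y, t=1, theta=25)
# Correct prediction and |y| > theta: no update.
unchanged = train(weights, history, y, t=1, theta=10)
# Wrong prediction (branch was not taken): always update.
corrected = train(weights, history, y, t=-1, theta=10)
```

Weights whose input agreed with the outcome grow, and the others shrink, which is how strongly correlated history bits end up with large positive or negative weights.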

Perceptron

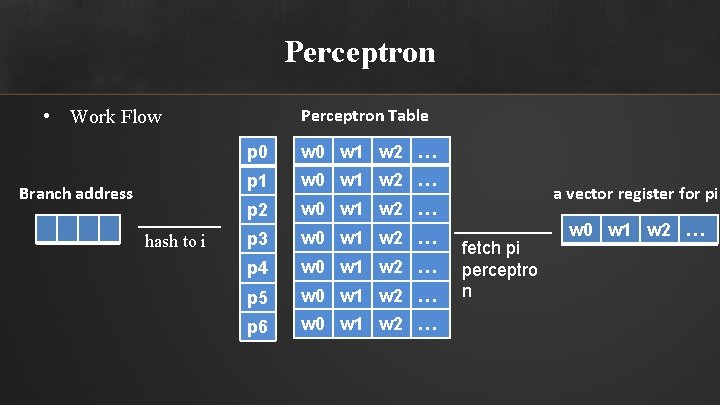

Perceptron Table • Work Flow • The branch address is hashed to an index i into the perceptron table (entries p0, p1, …, p6, each holding a weight vector w0, w1, w2, …) • The perceptron pi is fetched into a vector register holding w0, w1, w2, …

Perceptron • The GHR values (1, -1, 1, 1, -1, …) and the pi weight vector (w0, w1, w2, w3, w4, …) are used to compute y • Taken if y is non-negative, Not Taken if y is negative • Once the actual branch result is known, the training algorithm uses the result and the value y to update the weights in the pi vector. • pi is written back to the i-th entry in the perceptron table.

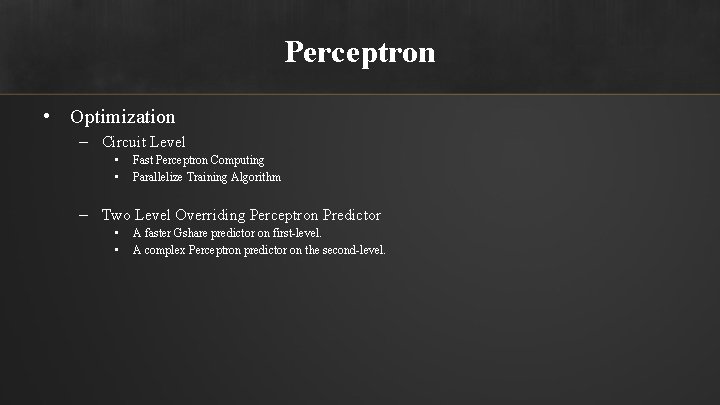

Perceptron • Optimization – Circuit Level • Fast perceptron computation • Parallelized training algorithm – Two-Level Overriding Perceptron Predictor • A faster gshare predictor at the first level • A complex perceptron predictor at the second level

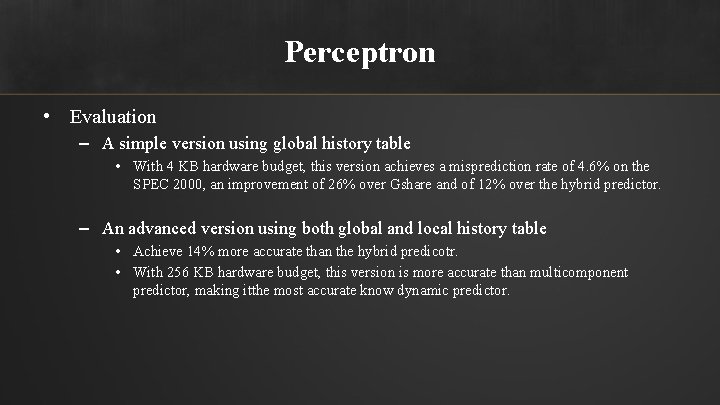

Perceptron • Evaluation – A simple version using the global history table • With a 4 KB hardware budget, this version achieves a misprediction rate of 4.6% on SPEC 2000, an improvement of 26% over gshare and of 12% over the hybrid predictor. – An advanced version using both global and local history tables • 14% more accurate than the hybrid predictor. • With a 256 KB hardware budget, this version is more accurate than the multicomponent predictor, making it the most accurate known dynamic predictor.

Our Project • We are going to implement the following branch prediction strategies: – Static prediction: Always Taken – Static prediction: Always Not Taken – Bimodal prediction, 1-bit and 2-bit, with different table sizes – Gshare predictor with different global register sizes – Tournament predictor with fixed table size – May implement the Perceptron predictor • Test and evaluate the accuracy of each predictor.

References
[1] N. P. Jouppi and D. Wall, "Available Instruction-Level Parallelism for Superscalar and Superpipelined Machines," Proc. 3rd Int'l Conf. Architectural Support for Programming Languages and Operating Systems, 1989, pp. 272-282.
[2] J. K. F. Lee and A. J. Smith, "Branch Prediction Strategies and Branch Target Buffer Design," Computer, 17(1), January 1984.
[3] Zhendong Su and Min Zhou, "A Comparative Analysis of Branch Prediction Schemes," University of California at Berkeley, Computer Architecture Project, 1995.
[4] S. McFarling, "Combining Branch Predictors," WRL Technical Note TN-36, Digital Equipment Corporation, June 1993.
[5] J. Hennessy and D. Patterson, "Computer Architecture: A Quantitative Approach, 2nd Edition," Morgan Kaufmann Publishers, Inc., 1996.
[6] T.-Y. Yeh and Y. N. Patt, "Two-Level Adaptive Branch Prediction," 24th ACM/IEEE International Symposium on Microarchitecture, Nov. 1991.
[7] T.-Y. Yeh and Y. N. Patt, "A Comparison of Dynamic Branch Predictors that Use Two Levels of Branch History," Proc. 20th Int. Sym. on Computer Architecture, pages 257–266, May 1993.

References
[8] Scott McFarling, "Combining Branch Predictors" (introduces combined predictors), Western Research Laboratory, 1993.
[9] Agner Fog, "The microarchitecture of Intel, AMD and VIA CPUs," Technical University of Denmark. Copyright © 1996-2016. Last updated 2016-01-16. Retrieved 2009-10-01.
[10] Daniel A. Jiménez and Calvin Lin, "Dynamic Branch Prediction with Perceptrons," Department of Computer Sciences, The University of Texas at Austin.

Thank You! • Questions?
