Branch Prediction J. Nelson Amaral

Why Branch Prediction?
• One in every 5 to 7 instructions of a program is a branch.
• Not predicting, or mispredicting, is very costly in architectures with deep pipelines or with many functional units.
Baer p. 129

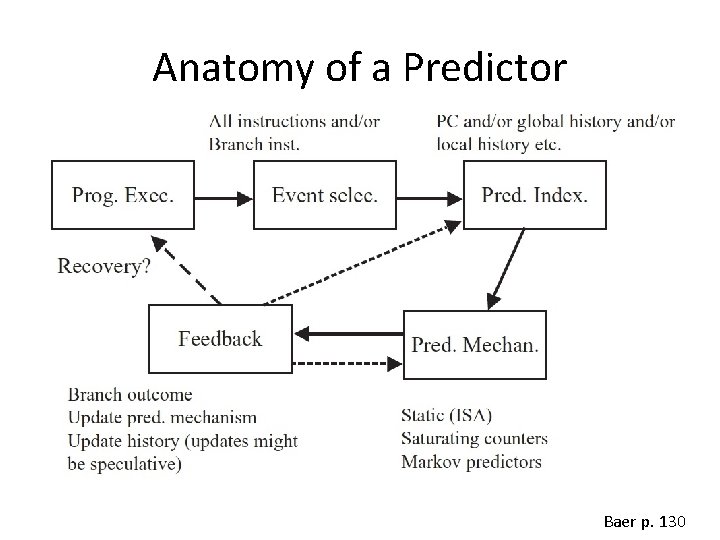

Anatomy of a Predictor Baer p. 130

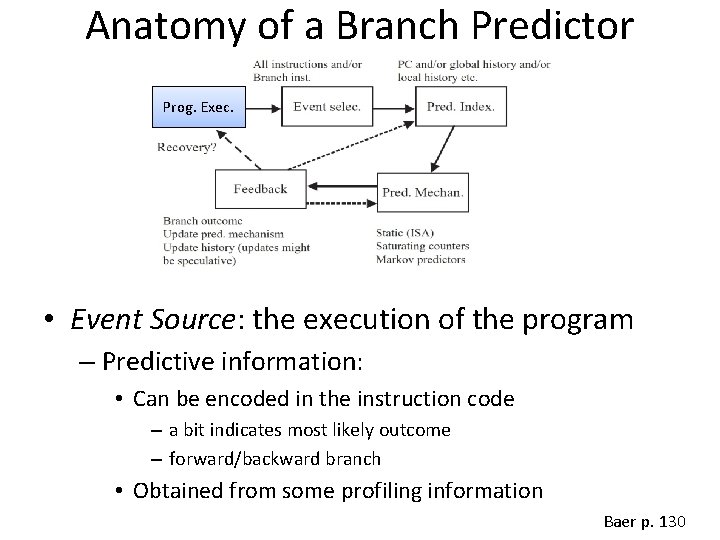

Anatomy of a Branch Predictor
• Event Source: the execution of the program
  – Predictive information:
    • Can be encoded in the instruction code
      – a bit indicates the most likely outcome
      – forward/backward branch
    • Obtained from some profiling information
Baer p. 130

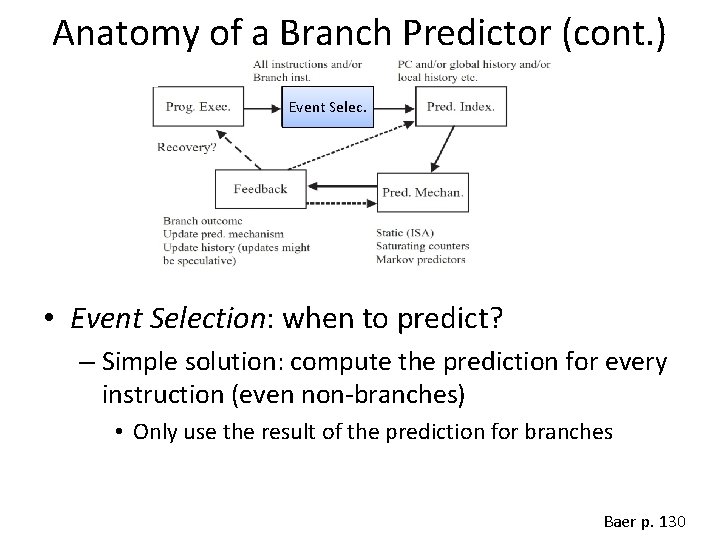

Anatomy of a Branch Predictor (cont.)
• Event Selection: when to predict?
  – Simple solution: compute the prediction for every instruction (even non-branches)
    • Only use the result of the prediction for branches
Baer p. 130

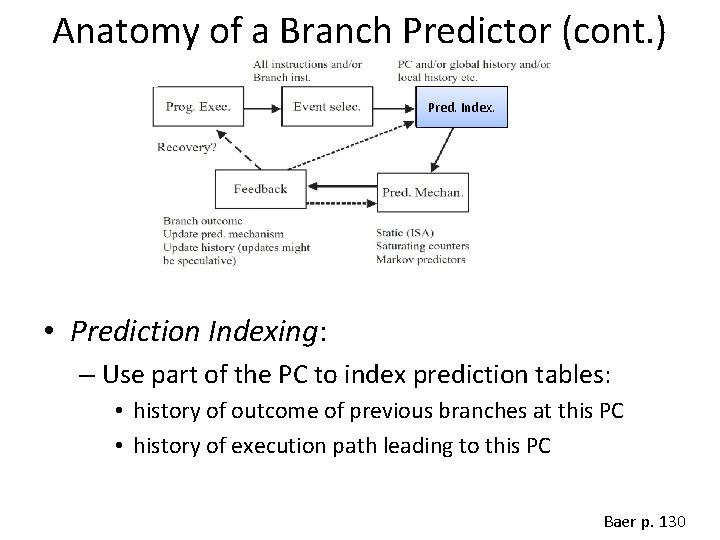

Anatomy of a Branch Predictor (cont.)
• Prediction Indexing:
  – Use part of the PC to index prediction tables:
    • history of the outcomes of previous branches at this PC
    • history of the execution path leading to this PC
Baer p. 130

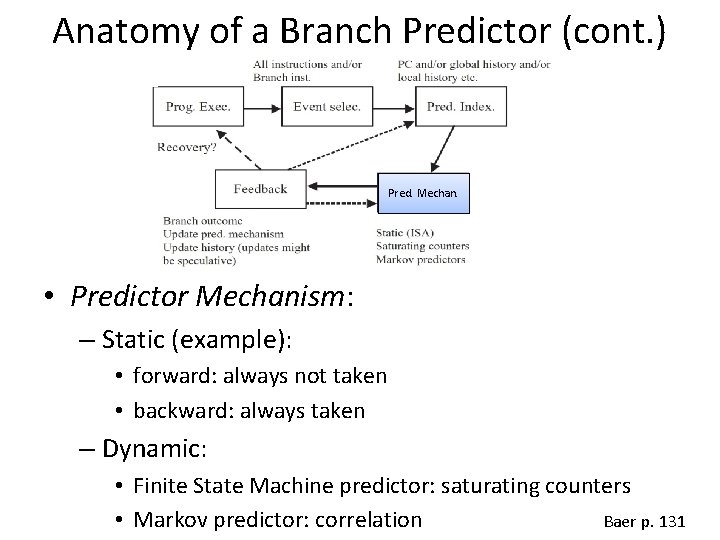

Anatomy of a Branch Predictor (cont.)
• Predictor Mechanism:
  – Static (example):
    • forward: always not taken
    • backward: always taken
  – Dynamic:
    • Finite State Machine predictor: saturating counters
    • Markov predictor: correlation
Baer p. 131

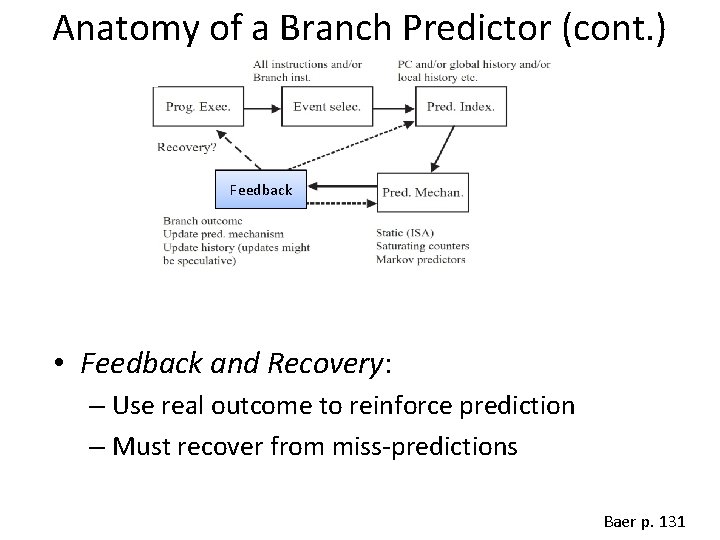

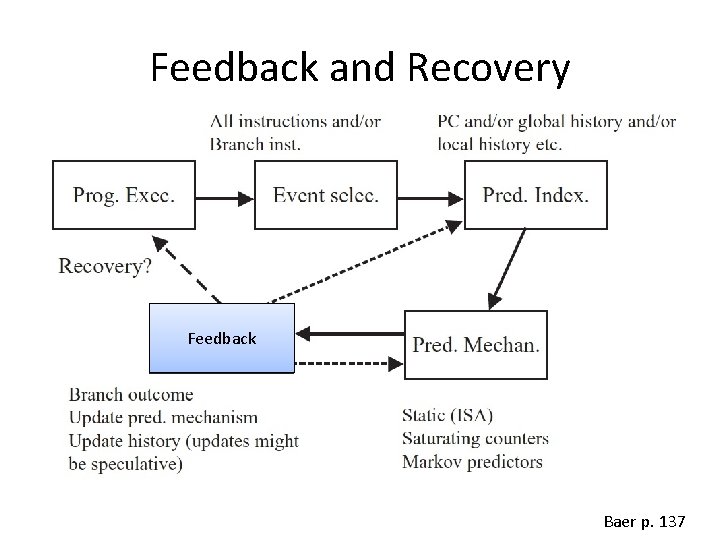

Anatomy of a Branch Predictor (cont.)
• Feedback and Recovery:
  – Use the real outcome to reinforce the prediction
  – Must recover from mispredictions
Baer p. 131

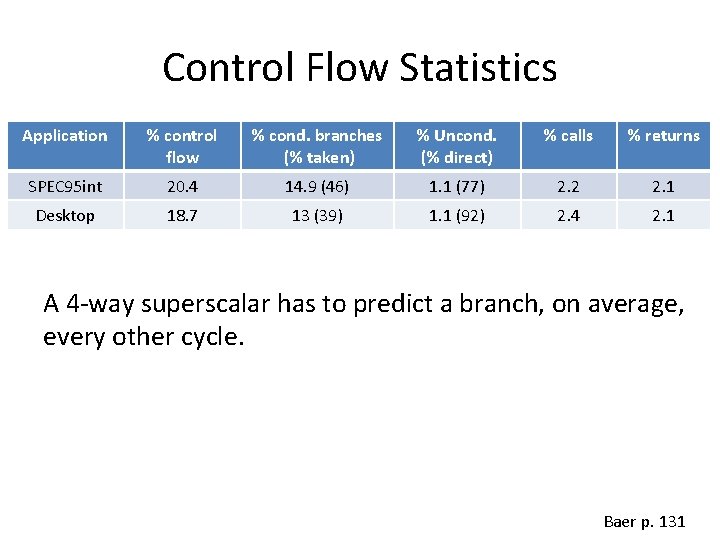

Control Flow Statistics

| Application | % control flow | % cond. branches (% taken) | % uncond. (% direct) | % calls | % returns |
| --- | --- | --- | --- | --- | --- |
| SPEC 95 int | 20.4 | 14.9 (46) | 1.1 (77) | 2.2 | 2.1 |
| Desktop | 18.7 | 13 (39) | 1.1 (92) | 2.4 | 2.1 |

A 4-way superscalar has to predict a branch, on average, every other cycle.
Baer p. 131

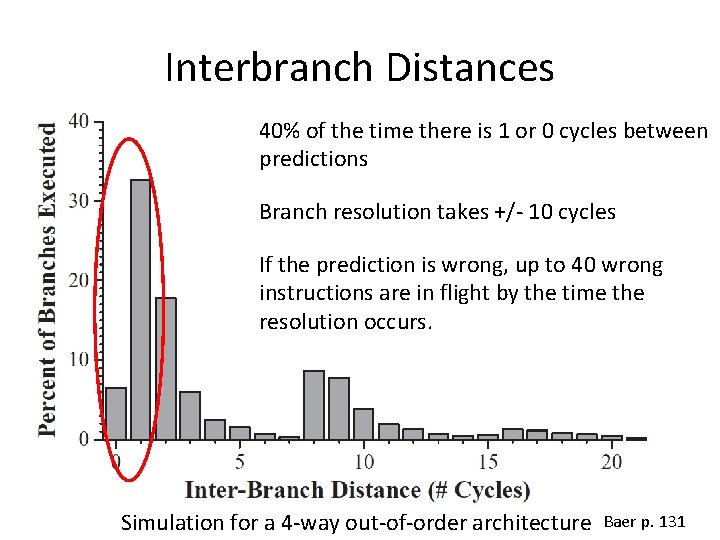

Interbranch Distances
• 40% of the time there is 1 or 0 cycles between predictions.
• Branch resolution takes about 10 cycles.
• If the prediction is wrong, up to 40 wrong-path instructions (4 per cycle × 10 cycles) are in flight by the time the resolution occurs.
Simulation for a 4-way out-of-order architecture.
Baer p. 131

Static Predictions: Always Taken OR Always Not Taken
Baer p. 132

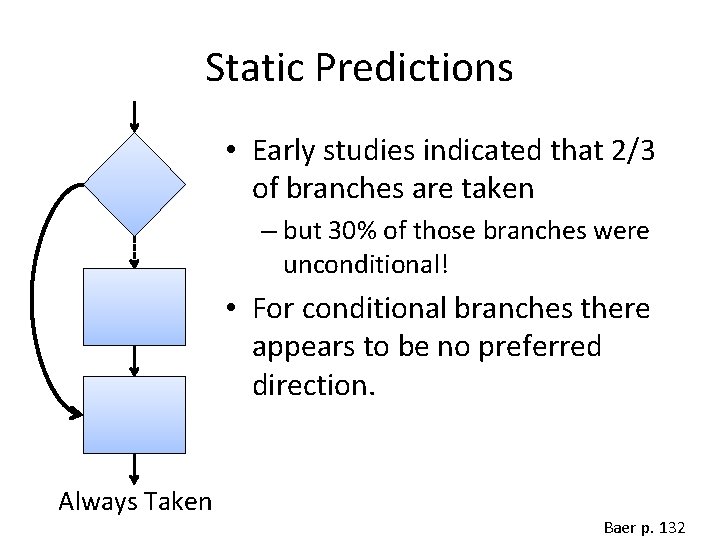

Static Predictions: Always Taken
• Early studies indicated that 2/3 of branches are taken
  – but 30% of those branches were unconditional!
• For conditional branches there appears to be no preferred direction.
Baer p. 132

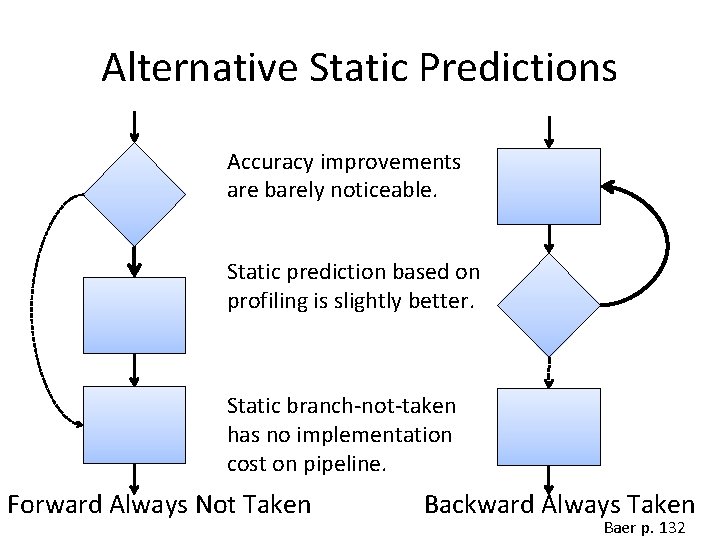

Alternative Static Predictions
Forward: always not taken. Backward: always taken.
Accuracy improvements are barely noticeable.
Static prediction based on profiling is slightly better.
Static branch-not-taken has no implementation cost on the pipeline.
Baer p. 132

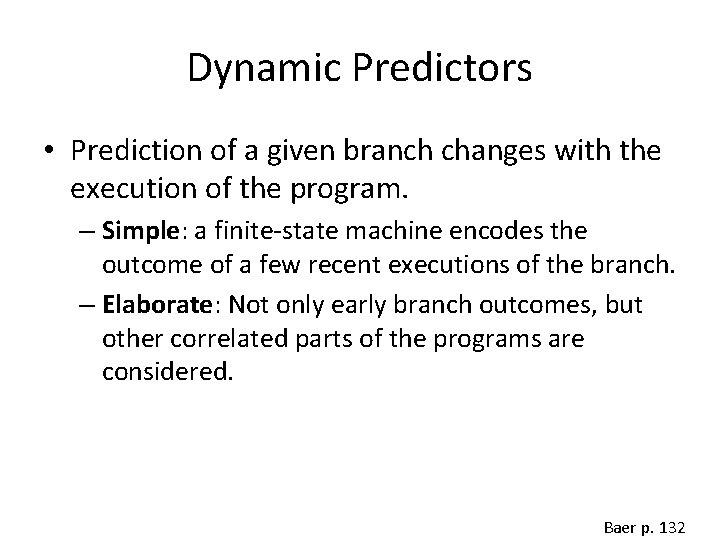

Dynamic Predictors
• The prediction of a given branch changes with the execution of the program.
  – Simple: a finite-state machine encodes the outcome of a few recent executions of the branch.
  – Elaborate: not only the earlier outcomes of the branch, but also other correlated parts of the program are considered.
Baer p. 132

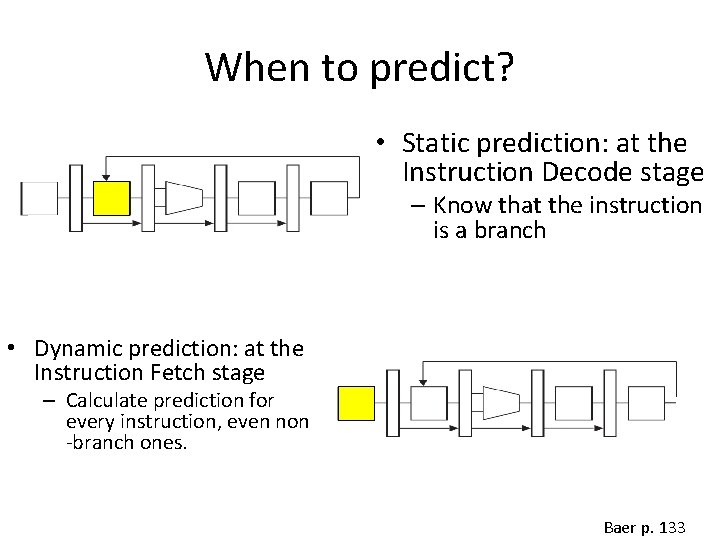

When to predict?
• Static prediction: at the Instruction Decode stage
  – Know that the instruction is a branch
• Dynamic prediction: at the Instruction Fetch stage
  – Calculate the prediction for every instruction, even non-branch ones.
Baer p. 133

What to Predict?
• Branch Direction: is the branch taken or not?
• Branch Target: address of the next instruction for a taken branch
Baer p. 133

Predicting Direction
• Where do we find the prediction? Look at the recent past: what was the direction the last time this same branch was executed?
• How do we encode the prediction? A single bit encodes the prediction: Prediction bit is set at prediction time.
Baer p. 133

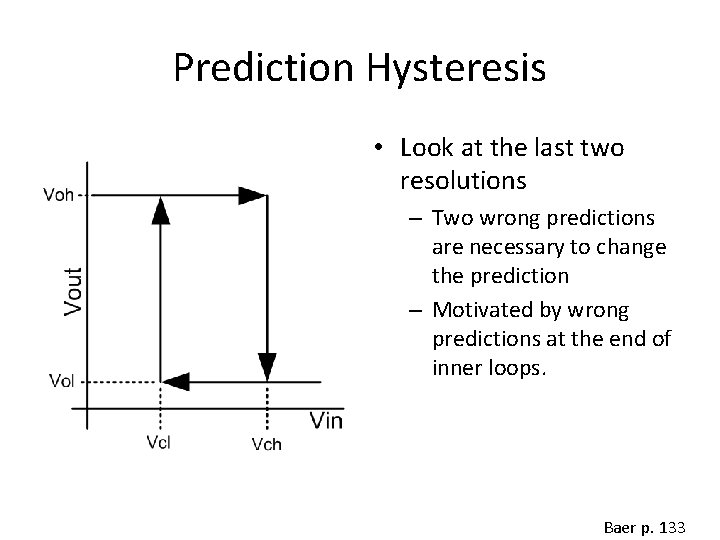

Prediction Hysteresis
• Look at the last two resolutions
  – Two wrong predictions are necessary to change the prediction
  – Motivated by wrong predictions at the end of inner loops.
Baer p. 133

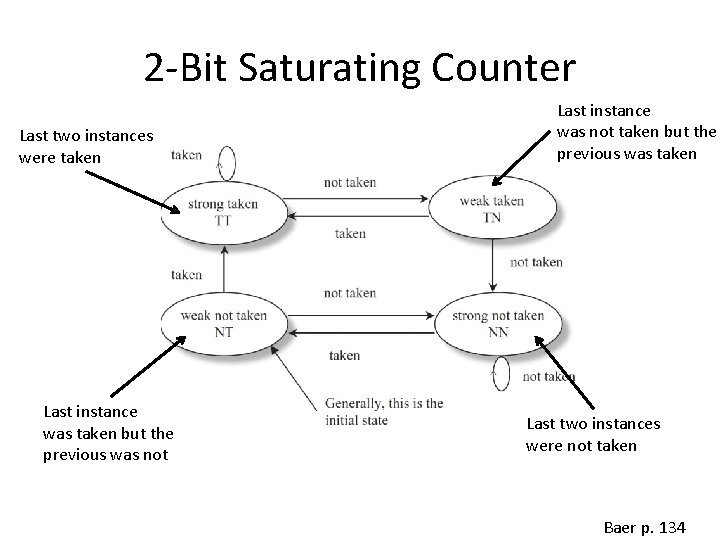

2-Bit Saturating Counter
State labels in the figure:
• Last two instances were taken
• Last instance was taken but the previous was not
• Last instance was not taken but the previous was taken
• Last two instances were not taken
Baer p. 134

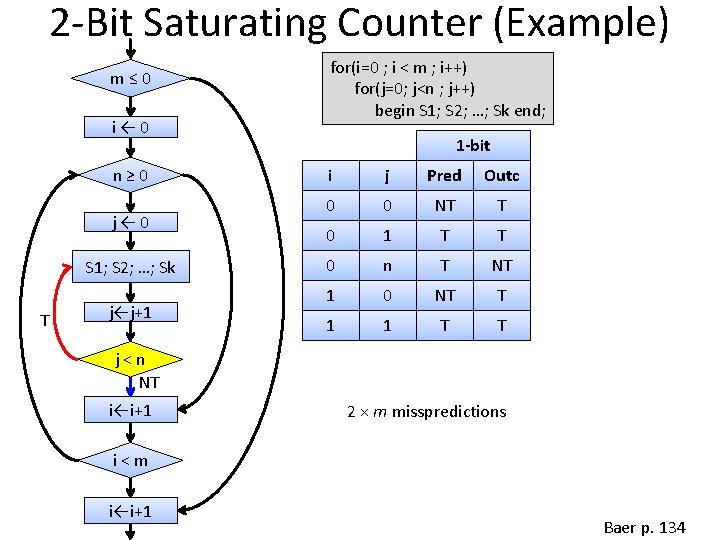
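The counter on this slide can be sketched in a few lines of C. This is a minimal illustration, not the textbook's exact state machine: the numeric encoding (0 = strong not-taken up to 3 = strong taken) and the names `counter2_t`, `counter2_predict`, and `counter2_update` are assumptions made for the example.

```c
#include <stdbool.h>
#include <stdint.h>

/* Assumed encoding: 0 = strong not-taken, 1 = weak not-taken,
   2 = weak taken, 3 = strong taken. */
typedef uint8_t counter2_t;

/* Predict taken when the counter is in its upper half. */
static bool counter2_predict(counter2_t c) { return c >= 2; }

/* Move one step toward the actual outcome, saturating at 0 and 3. */
static counter2_t counter2_update(counter2_t c, bool taken) {
    if (taken) return (c < 3) ? (counter2_t)(c + 1) : 3;
    return (c > 0) ? (counter2_t)(c - 1) : 0;
}
```

Starting from strong taken, a single not-taken outcome moves the counter to weak taken and the prediction stays "taken"; a second not-taken outcome is needed to flip it, which is the hysteresis described on the previous slide.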

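2-Bit Saturating Counter (Example)

    for (i = 0; i < m; i++)
      for (j = 0; j < n; j++)
      begin S1; S2; …; Sk end;

[Figure: flowchart of the nested loop with i←0, test i<m, j←0, test j<n (T: S1; S2; …; Sk; j←j+1; NT: i←i+1).]

1-bit predictor for the inner-loop branch (j<n):

| i | j | Pred | Outc |
| --- | --- | --- | --- |
| 0 | 0 | NT | T |
| 0 | 1 | T | T |
| 0 | n | T | NT |
| 1 | 0 | NT | T |
| 1 | 1 | T | T |

1-bit: 2 × m mispredictions
Baer p. 134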

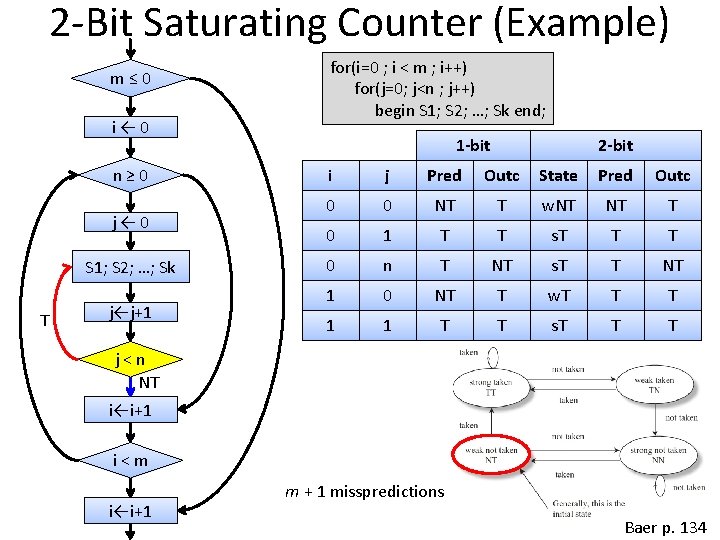

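2-Bit Saturating Counter (Example)

    for (i = 0; i < m; i++)
      for (j = 0; j < n; j++)
      begin S1; S2; …; Sk end;

[Figure: flowchart of the nested loop with i←0, test i<m, j←0, test j<n (T: S1; S2; …; Sk; j←j+1; NT: i←i+1).]

1-bit and 2-bit predictors for the inner-loop branch (j<n); w. = weak, s. = strong:

| i | j | 1-bit Pred | Outc | 2-bit State | 2-bit Pred | Outc |
| --- | --- | --- | --- | --- | --- | --- |
| 0 | 0 | NT | T | w.NT | NT | T |
| 0 | 1 | T | T | s.T | T | T |
| 0 | n | T | NT | s.T | T | NT |
| 1 | 0 | NT | T | w.T | T | T |
| 1 | 1 | T | T | s.T | T | T |

2-bit: m + 1 mispredictions
Baer p. 134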

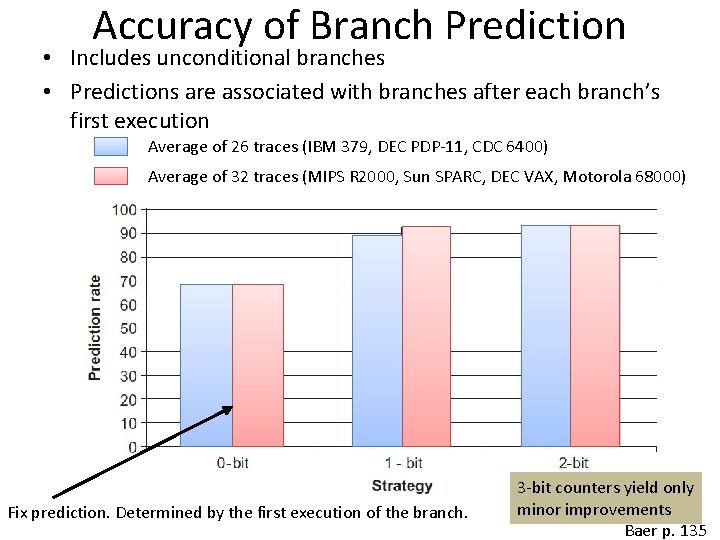
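The misprediction counts for the nested loop can be checked with a small simulation. The sketch below is an illustration under stated assumptions: the function name `inner_loop_mispredictions` is hypothetical, the 2-bit predictor is modeled as a plain saturating counter starting at weak not-taken (so intermediate states may differ from the table, which depends on the state-machine variant), and n is assumed to be at least 2.

```c
#include <stdbool.h>

/* Count mispredictions of the inner-loop branch (j < n) over m outer
   iterations. bits = 1 models a single prediction bit, bits = 2 a
   saturating counter. Both start out predicting not taken. */
static int inner_loop_mispredictions(int m, int n, int bits) {
    int max = (bits == 1) ? 1 : 3;        /* largest counter value */
    int threshold = (bits == 1) ? 1 : 2;  /* predict taken at or above this */
    int state = (bits == 1) ? 0 : 1;      /* NT, or weak NT */
    int misses = 0;
    for (int i = 0; i < m; i++) {
        for (int j = 0; j <= n; j++) {    /* the branch executes n + 1 times */
            bool taken = (j < n);         /* taken n times, then falls out */
            bool predicted = (state >= threshold);
            if (predicted != taken) misses++;
            if (taken && state < max) state++;
            if (!taken && state > 0) state--;
        }
    }
    return misses;
}
```

With m = 10 and n = 4, the 1-bit predictor misses twice per outer iteration (20 in total), while the 2-bit counter misses once at the very start and once at each loop exit (11 in total), matching 2 × m and m + 1.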

Accuracy of Branch Prediction
• Includes unconditional branches
• Predictions are associated with branches after each branch’s first execution
[Figure: prediction accuracy for two data sets: average of 26 traces (IBM 370, DEC PDP-11, CDC 6400) and average of 32 traces (MIPS R2000, Sun SPARC, DEC VAX, Motorola 68000).]
Fixed prediction: determined by the first execution of the branch.
3-bit counters yield only minor improvements.
Baer p. 135

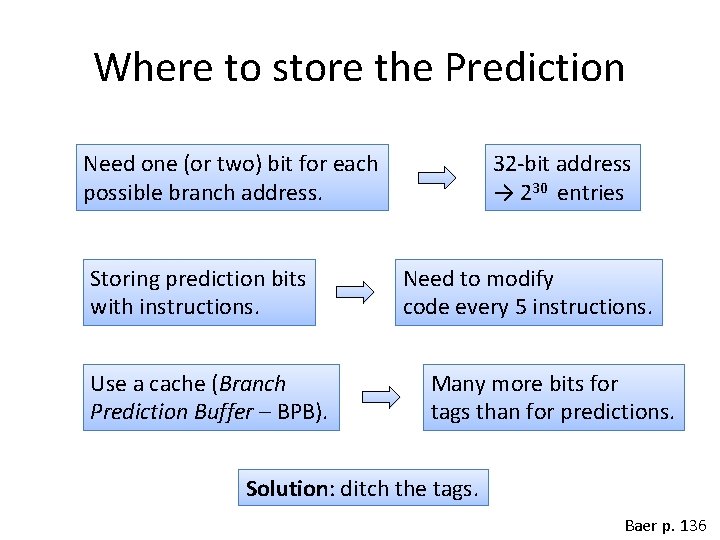

Where to Store the Prediction
• Need one (or two) bits for each possible branch address: a 32-bit address → 2^30 entries.
• Storing prediction bits with instructions: need to modify code every 5 instructions.
• Use a cache (Branch Prediction Buffer, BPB): many more bits for tags than for predictions.
• Solution: ditch the tags.
Baer p. 136

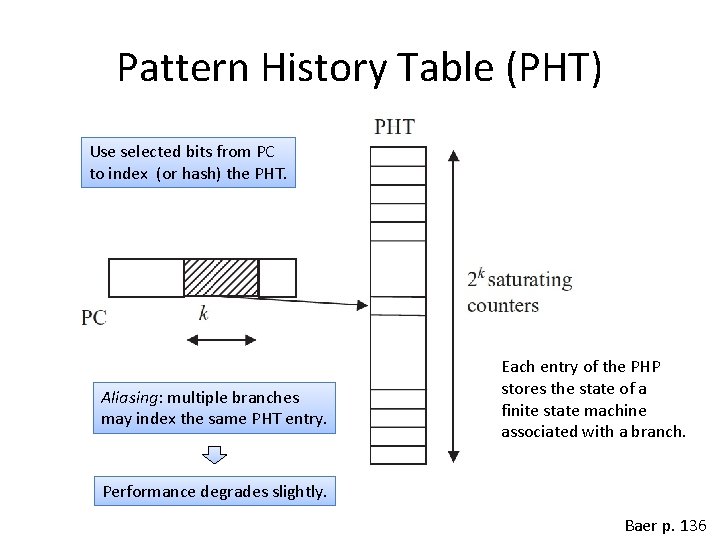

Pattern History Table (PHT)
Use selected bits from the PC to index (or hash) the PHT.
Each entry of the PHT stores the state of a finite state machine associated with a branch.
Aliasing: multiple branches may index the same PHT entry. Performance degrades slightly.
Baer p. 136

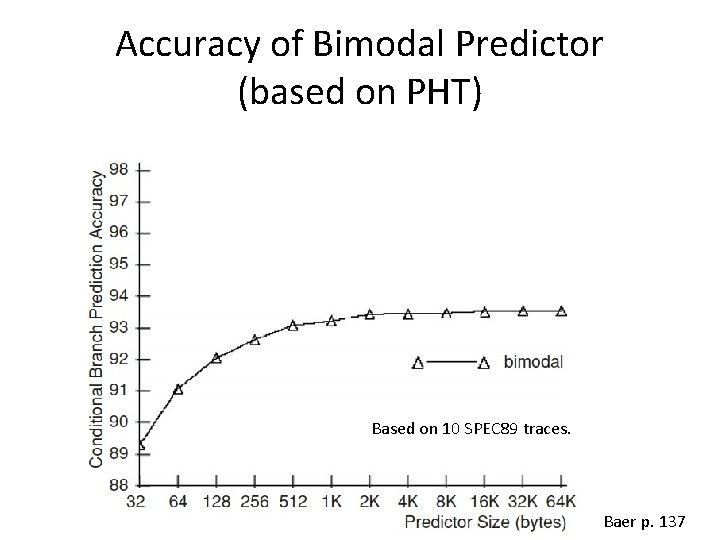
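A tagless PHT indexed by PC bits might look like the following sketch. The table size (`PHT_BITS`), the choice of dropping the two low-order PC bits (assuming 4-byte aligned instructions), and the names `pht_t`, `pht_index`, `pht_predict`, and `pht_update` are all assumptions for illustration.

```c
#include <stdbool.h>
#include <stdint.h>

#define PHT_BITS 10                   /* assumed: 1024 counters */
#define PHT_SIZE (1u << PHT_BITS)

typedef struct { uint8_t counter[PHT_SIZE]; } pht_t;  /* 2-bit counters */

/* Drop the 2 low bits (assuming 4-byte aligned instructions) and keep
   the next PHT_BITS bits of the PC. No tag is stored or checked. */
static uint32_t pht_index(uint32_t pc) {
    return (pc >> 2) & (PHT_SIZE - 1);
}

static bool pht_predict(const pht_t *t, uint32_t pc) {
    return t->counter[pht_index(pc)] >= 2;
}

static void pht_update(pht_t *t, uint32_t pc, bool taken) {
    uint8_t *c = &t->counter[pht_index(pc)];
    if (taken && *c < 3) (*c)++;
    if (!taken && *c > 0) (*c)--;
}
```

Because there are no tags, two branches whose PCs differ only in the bits above the index (for example 0x10001000 and 0x10002000 with these parameters) share one counter; this is the aliasing the slide mentions.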

Accuracy of Bimodal Predictor (based on PHT) Based on 10 SPEC 89 traces. Baer p. 137

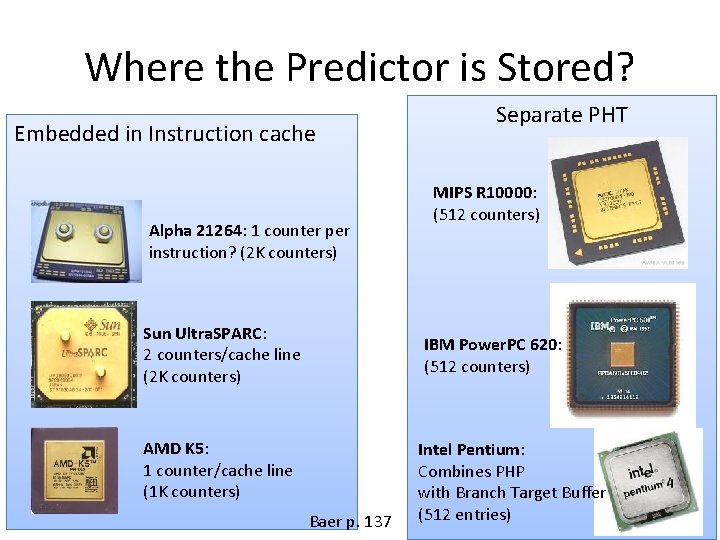

Where Is the Predictor Stored?
Embedded in the instruction cache:
• Alpha 21264: 1 counter per instruction? (2K counters)
• Sun UltraSPARC: 2 counters/cache line (2K counters)
• AMD K5: 1 counter/cache line (1K counters)
Separate PHT:
• MIPS R10000: (512 counters)
• IBM PowerPC 620: (512 counters)
Intel Pentium: combines the PHT with the Branch Target Buffer (512 entries)
Baer p. 137

Feedback and Recovery
Baer p. 137

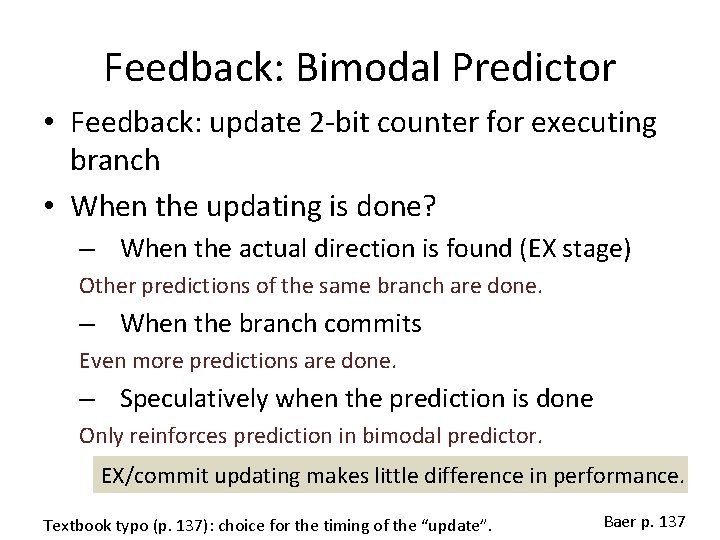

Feedback: Bimodal Predictor
• Feedback: update the 2-bit counter for the executing branch
• When is the update done?
  – When the actual direction is found (EX stage): other predictions of the same branch may already have been made.
  – When the branch commits: even more predictions may have been made.
  – Speculatively, when the prediction is made: this only reinforces the prediction in a bimodal predictor.
EX/commit updating makes little difference in performance.
Textbook typo (p. 137): choice for the timing of the “update”.
Baer p. 137

Local × Global Predictor
• Local:
  – Only use the history of the branch to be predicted
• Global:
  – Use the history of other branches that precede the branch to be predicted.
Baer p. 138

Motivation for Global Prediction
• Example from the SPEC program eqntott:

    if (aa == 2) aa = 0;     /* b1 */
    if (bb == 2) bb = 0;     /* b2 */
    if (aa != bb) { …. }     /* b3 */

If b1 and b2 are taken, then b3 is not taken.
Baer p. 138

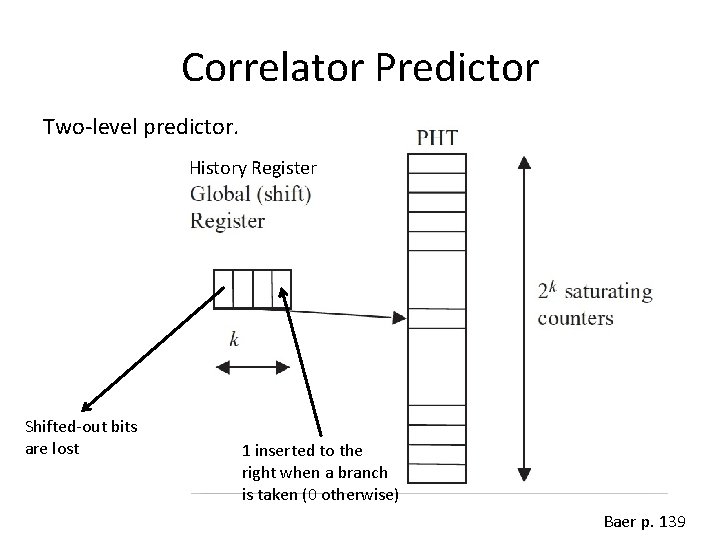

Correlator Predictor
Two-level predictor.
History Register: a 1 is inserted to the right when a branch is taken (0 otherwise). Shifted-out bits are lost.
Baer p. 139

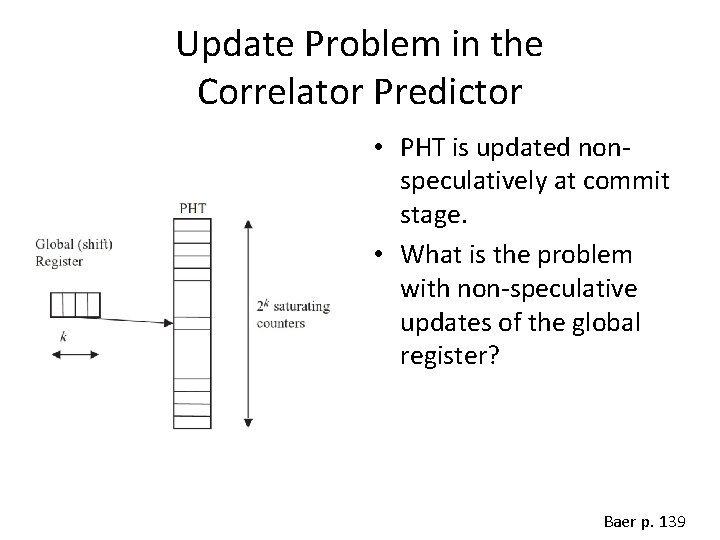
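The history register update described above is a shift-and-mask operation. A minimal sketch, assuming a 4-bit register (`HIST_BITS`) and a hypothetical function name `history_shift`:

```c
#include <stdbool.h>
#include <stdint.h>

#define HIST_BITS 4   /* assumed history length */

/* Shift left, insert the newest outcome at the right (1 = taken),
   and drop the bit that falls off the left end. */
static uint32_t history_shift(uint32_t gr, bool taken) {
    return ((gr << 1) | (taken ? 1u : 0u)) & ((1u << HIST_BITS) - 1);
}
```

Starting from 0, the outcomes T, T, NT, T leave 1101 in the register; one more taken branch gives 1011, and the oldest outcome is lost. In a two-level predictor this value (alone or combined with PC bits) selects the PHT entry.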

Update Problem in the Correlator Predictor
• The PHT is updated nonspeculatively at the commit stage.
• What is the problem with nonspeculative updates of the global register?
Baer p. 139

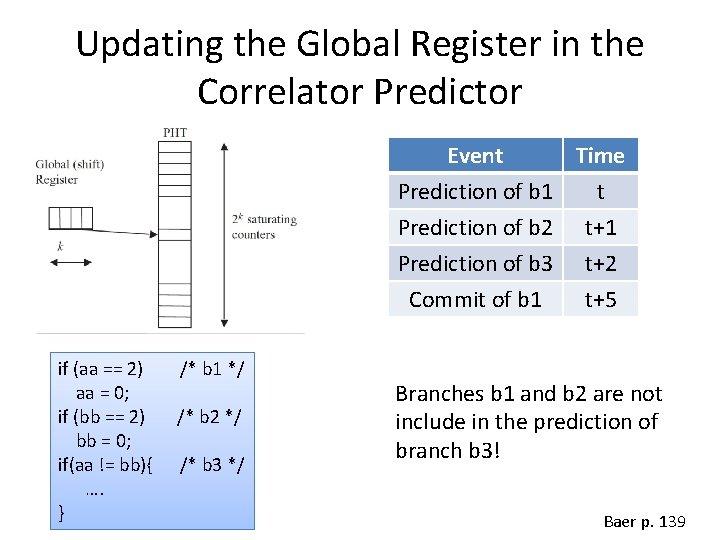

Updating the Global Register in the Correlator Predictor

    if (aa == 2) aa = 0;     /* b1 */
    if (bb == 2) bb = 0;     /* b2 */
    if (aa != bb) { …. }     /* b3 */

| Event | Time |
| --- | --- |
| Prediction of b1 | t |
| Prediction of b2 | t+1 |
| Prediction of b3 | t+2 |
| Commit of b1 | t+5 |

With a nonspeculative update, branches b1 and b2 are not included in the prediction of branch b3!
Baer p. 139

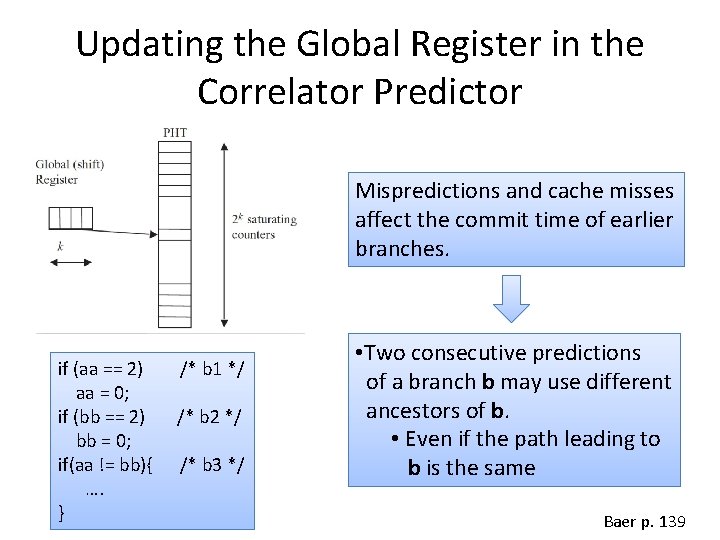

Updating the Global Register in the Correlator Predictor

    if (aa == 2) aa = 0;     /* b1 */
    if (bb == 2) bb = 0;     /* b2 */
    if (aa != bb) { …. }     /* b3 */

Mispredictions and cache misses affect the commit time of earlier branches.
• Two consecutive predictions of a branch b may use different ancestors of b,
• even if the path leading to b is the same.
Baer p. 139

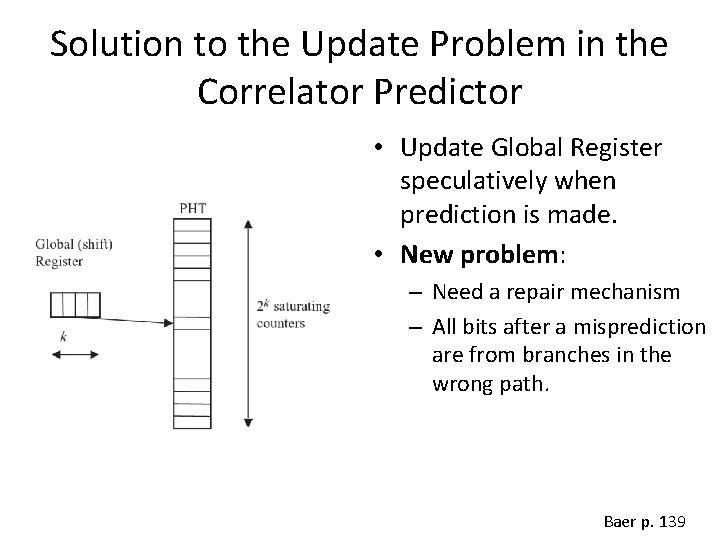

Solution to the Update Problem in the Correlator Predictor
• Update the Global Register speculatively when the prediction is made.
• New problem:
  – Need a repair mechanism
  – All bits after a misprediction are from branches in the wrong path.
Baer p. 139

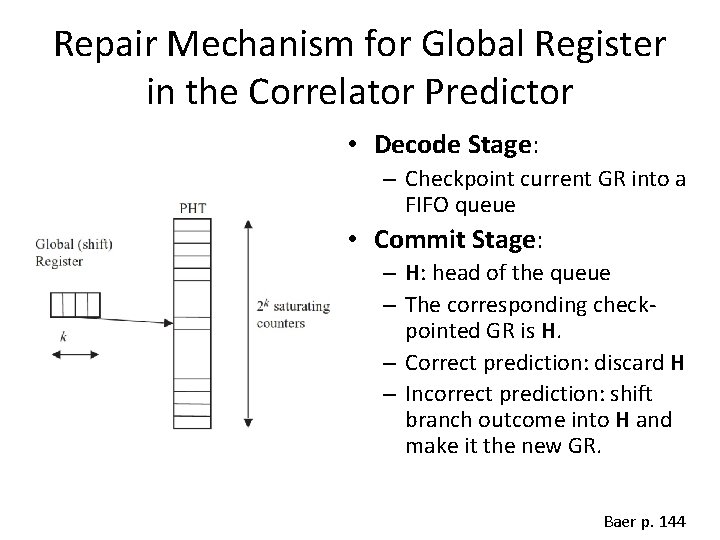

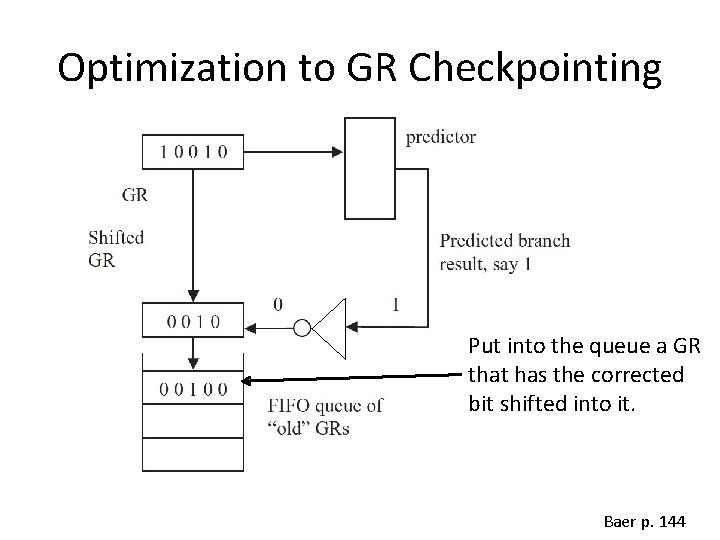

Repair Mechanism for Global Register in the Correlator Predictor
• Decode Stage:
  – Checkpoint the current GR into a FIFO queue
• Commit Stage:
  – H: head of the queue, which holds the GR checkpointed for the committing branch
  – Correct prediction: discard H
  – Incorrect prediction: shift the branch outcome into H and make it the new GR.
Baer p. 144

Optimization to GR Checkpointing Put into the queue a GR that has the corrected bit shifted into it. Baer p. 144

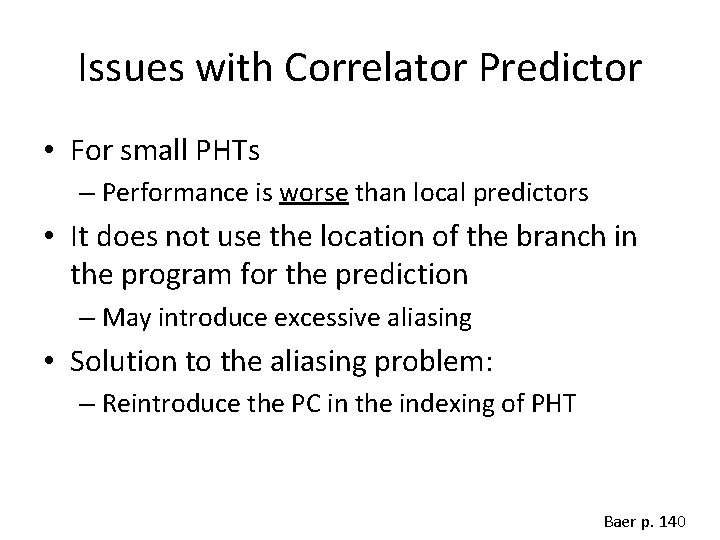
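The checkpoint-and-repair scheme can be sketched as a small circular queue of saved GR values. This is an illustration under several assumptions: an 8-bit register (`HIST_MASK`), a 16-entry queue (`QUEUE_CAP`), checkpointing and speculative update folded into one step, and hypothetical names `ghist_t`, `ghist_predict`, and `ghist_commit`. It implements the basic scheme, not the optimized variant that enqueues an already corrected GR.

```c
#include <stdbool.h>
#include <stdint.h>

#define HIST_MASK 0xFFu   /* assumed 8-bit global register */
#define QUEUE_CAP 16      /* assumed maximum number of branches in flight */

typedef struct {
    uint32_t gr;                 /* speculative global register */
    uint32_t queue[QUEUE_CAP];   /* checkpoints, oldest at head */
    int head, count;
} ghist_t;

/* At prediction: checkpoint the GR, then shift in the predicted bit.
   Returns false when the queue is full (the caller would have to stall). */
static bool ghist_predict(ghist_t *g, bool predicted_taken) {
    if (g->count == QUEUE_CAP) return false;
    g->queue[(g->head + g->count) % QUEUE_CAP] = g->gr;
    g->count++;
    g->gr = ((g->gr << 1) | (predicted_taken ? 1u : 0u)) & HIST_MASK;
    return true;
}

/* At commit of the oldest branch: H is the checkpoint at the head. */
static void ghist_commit(ghist_t *g, bool predicted_taken, bool taken) {
    if (g->count == 0) return;            /* nothing in flight */
    uint32_t h = g->queue[g->head];
    g->head = (g->head + 1) % QUEUE_CAP;
    g->count--;
    if (predicted_taken != taken) {
        /* Younger history bits came from the wrong path: rebuild from H. */
        g->gr = ((h << 1) | (taken ? 1u : 0u)) & HIST_MASK;
        g->head = 0;
        g->count = 0;                     /* discard younger checkpoints */
    }
}
```

On a correct prediction the head is simply dropped and the speculative GR stays as it is. On a misprediction the GR is rebuilt from the checkpoint plus the real outcome, and every younger checkpoint is thrown away because it belongs to the wrong path.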

Issues with Correlator Predictor
• For small PHTs
  – Performance is worse than that of local predictors
• It does not use the location of the branch in the program for the prediction
  – May introduce excessive aliasing
• Solution to the aliasing problem:
  – Reintroduce the PC in the indexing of the PHT
Baer p. 140

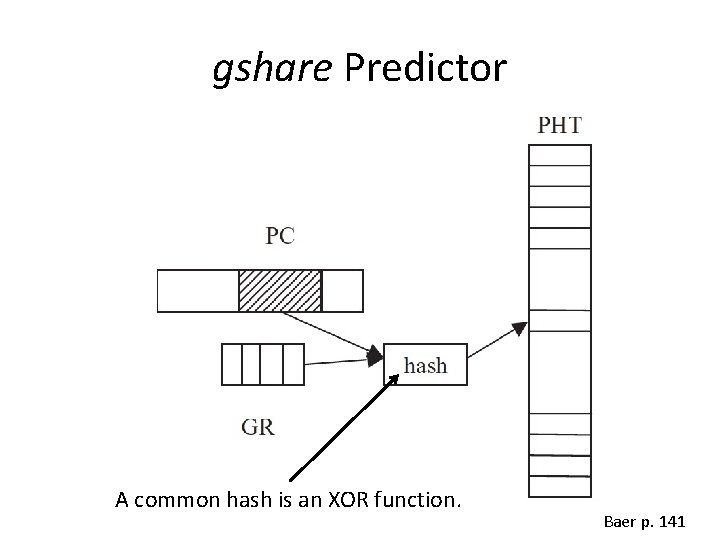

gshare Predictor A common hash is an XOR function. Baer p. 141

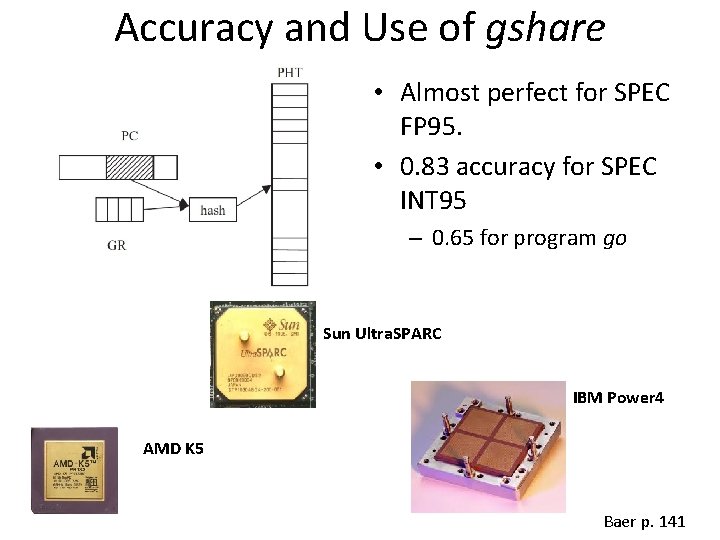
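The XOR hash used by gshare can be written as a one-line index function. A minimal sketch, assuming a 4096-entry PHT (`GSHARE_BITS`), 4-byte aligned instructions, and a hypothetical function name `gshare_index`:

```c
#include <stdint.h>

#define GSHARE_BITS 12   /* assumed: 4096-entry PHT */

/* Combine the branch address with the global history so that the same
   branch reached through different paths uses different PHT entries. */
static uint32_t gshare_index(uint32_t pc, uint32_t global_history) {
    uint32_t mask = (1u << GSHARE_BITS) - 1;
    return ((pc >> 2) ^ global_history) & mask;
}
```

With these parameters, the branch at 0x10001000 maps to entry 0x400 under an all-zero history and to entry 0x40F when the last four branches were all taken.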

Accuracy and Use of gshare
• Almost perfect for SPEC FP 95.
• 0.83 accuracy for SPEC INT 95
  – 0.65 for the program go
Used in: Sun UltraSPARC, IBM Power4, AMD K5
Baer p. 141

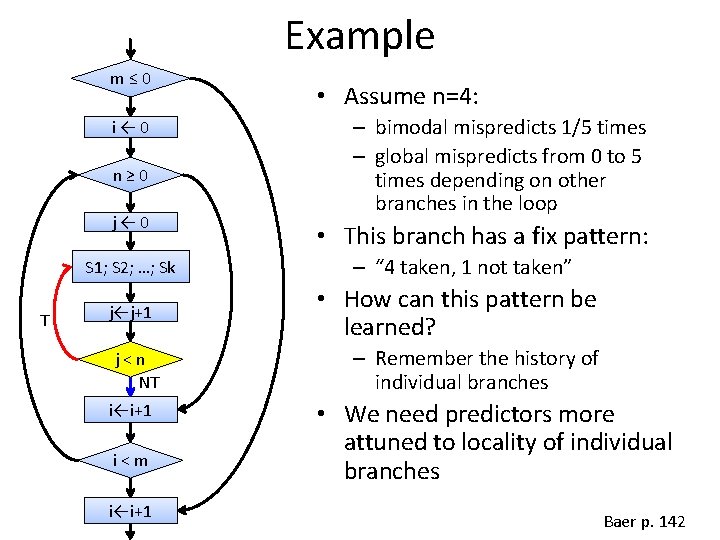

Example
[Figure: flowchart of the nested loop with i←0, test i<m, j←0, test j<n (T: S1; S2; …; Sk; j←j+1; NT: i←i+1).]
• Assume n=4:
  – bimodal mispredicts 1 out of 5 times
  – global mispredicts from 0 to 5 times depending on other branches in the loop
• This branch has a fixed pattern:
  – “4 taken, 1 not taken”
• How can this pattern be learned?
  – Remember the history of individual branches
• We need predictors more attuned to the locality of individual branches
Baer p. 142

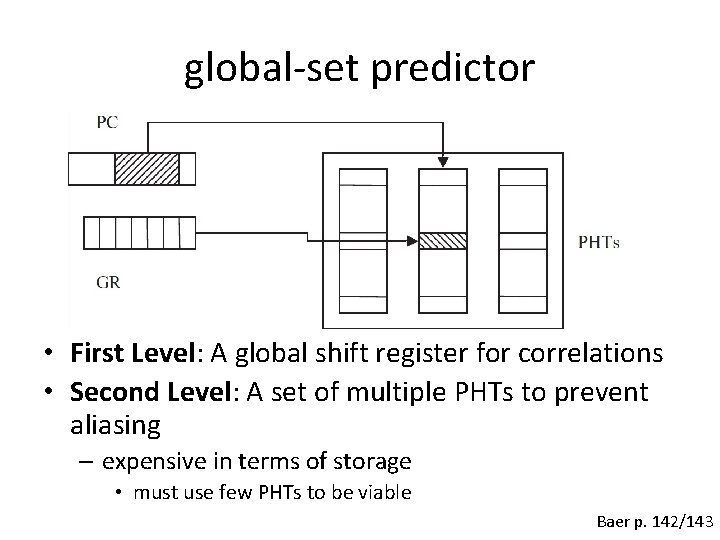

global-set predictor
• First Level: a global shift register for correlations
• Second Level: a set of multiple PHTs to prevent aliasing
  – expensive in terms of storage
    • must use few PHTs to be viable
Baer p. 142/143

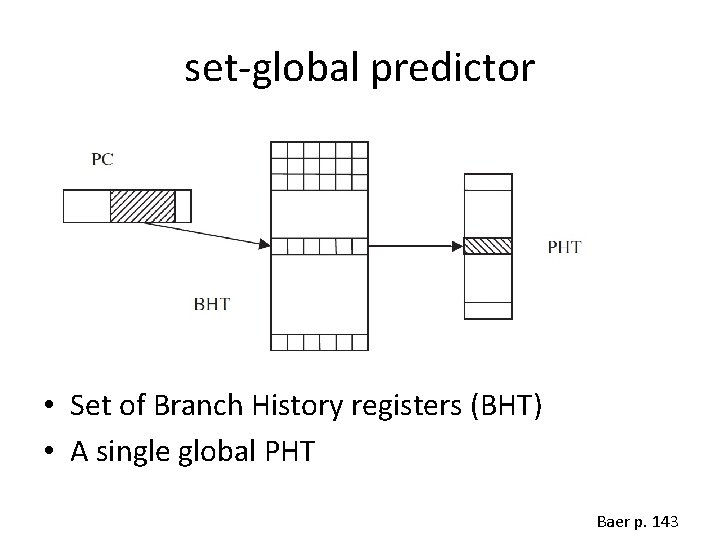

set-global predictor
• A set of branch history registers (BHT)
• A single global PHT
Baer p. 143

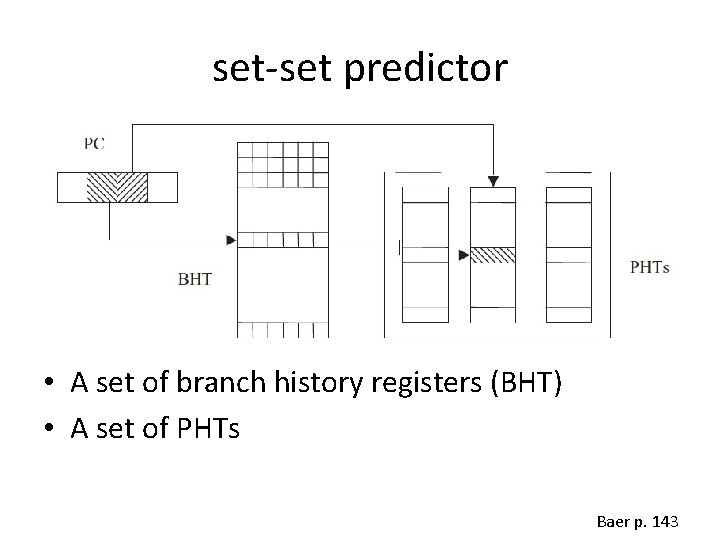

set-set predictor
• A set of branch history registers (BHT)
• A set of PHTs
Baer p. 143

Predicting the Branch Target
• When is the target of a branch computed?
  – In a superscalar architecture (e.g., the IA-32 Intel P6), after several pipeline stages.
• What is the point of predicting the direction early if we don’t know where the branch goes?
  – Need to also predict the branch target address.
Baer p. 145

Branch Target Buffer (BTB)
• A cache-like storage that records branch addresses and their associated targets
• If there is a hit in the BTB for a branch predicted taken:
  – PC ← target in the BTB for the branch
Baer p. 146

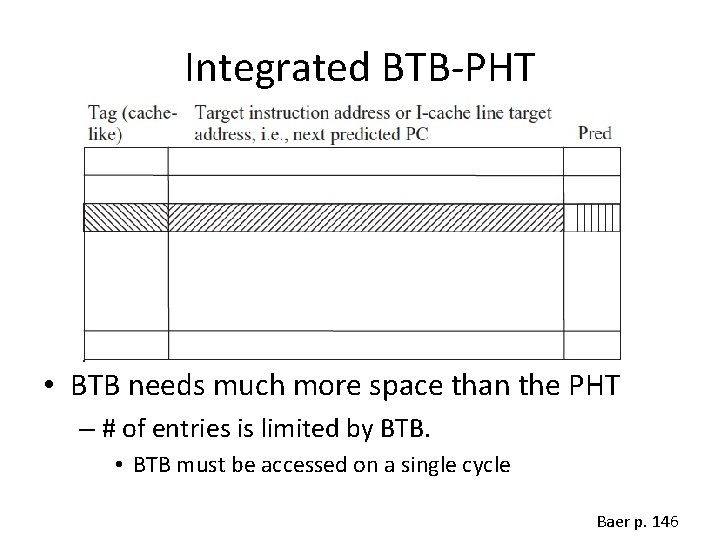
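The BTB lookup rule above can be sketched as a small tagged table. The organization shown here is an assumption made for brevity: a direct-mapped, 512-entry table that stores the full branch address as its tag, with hypothetical names `btb_t`, `btb_insert`, and `next_pc`.

```c
#include <stdbool.h>
#include <stdint.h>

#define BTB_ENTRIES 512   /* assumed size, direct-mapped for simplicity */

typedef struct {
    bool     valid;
    uint32_t branch_pc;   /* full branch address used as the tag */
    uint32_t target;
} btb_entry_t;

typedef struct { btb_entry_t entry[BTB_ENTRIES]; } btb_t;

static uint32_t btb_slot(uint32_t pc) { return (pc >> 2) % BTB_ENTRIES; }

/* Record a resolved taken branch and its target. */
static void btb_insert(btb_t *b, uint32_t pc, uint32_t target) {
    btb_entry_t *e = &b->entry[btb_slot(pc)];
    e->valid = true;
    e->branch_pc = pc;
    e->target = target;
}

/* Next fetch address: the BTB target on a hit for a branch predicted
   taken, otherwise the fall-through address pc + 4. */
static uint32_t next_pc(const btb_t *b, uint32_t pc, bool predicted_taken) {
    const btb_entry_t *e = &b->entry[btb_slot(pc)];
    if (predicted_taken && e->valid && e->branch_pc == pc) return e->target;
    return pc + 4;
}
```

Unlike the tagless PHT, the tag comparison matters here: redirecting fetch to the target of a different branch would send the front end to an unrelated address, so a miss falls back to sequential fetch.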

Integrated BTB-PHT
• The BTB needs much more space than the PHT
  – the number of entries is limited by the BTB.
• The BTB must be accessed in a single cycle
Baer p. 146

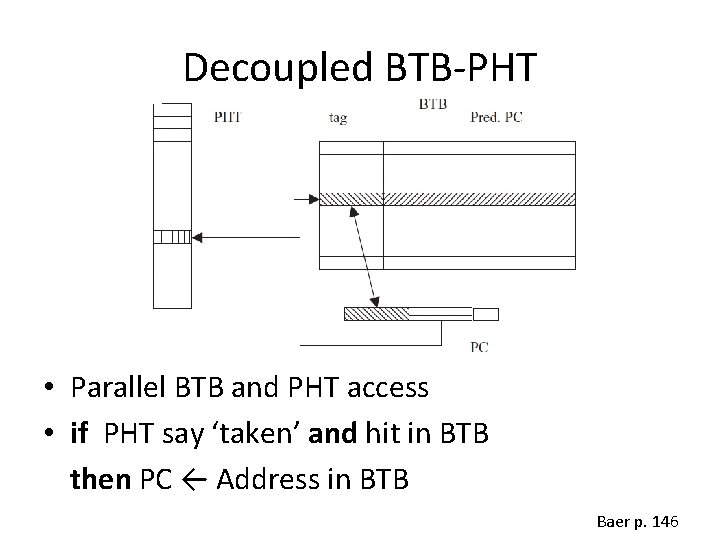

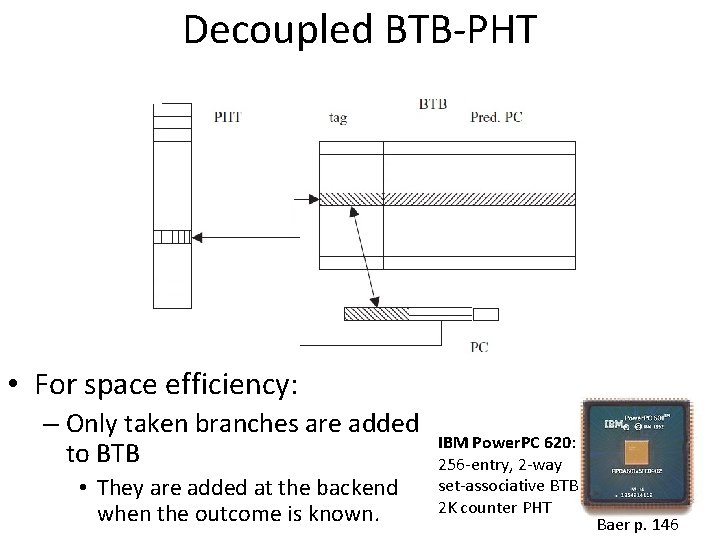

Decoupled BTB-PHT
• Parallel BTB and PHT access
• If the PHT says ‘taken’ and there is a hit in the BTB, then PC ← address in the BTB
Baer p. 146

Decoupled BTB-PHT
• For space efficiency:
  – Only taken branches are added to the BTB
    • They are added at the back end when the outcome is known.
IBM PowerPC 620: 256-entry, 2-way set-associative BTB; 2K-counter PHT
Baer p. 146

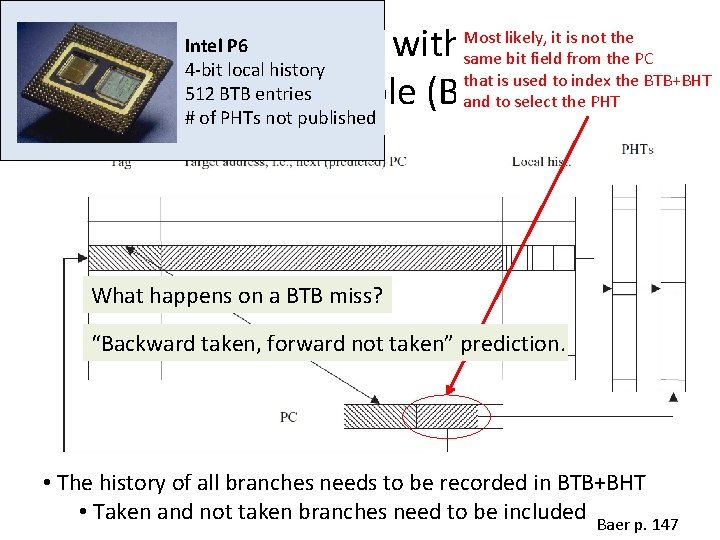

Integrating the BTB with the Branch History Table (BHT)
Intel P6: 4-bit local history, 512 BTB entries, number of PHTs not published.
Most likely, the bit field from the PC used to index the BTB+BHT is not the same as the one used to select the PHT.
What happens on a BTB miss? “Backward taken, forward not taken” prediction.
• The history of all branches needs to be recorded in the BTB+BHT
• Taken and not-taken branches need to be included
Baer p. 147

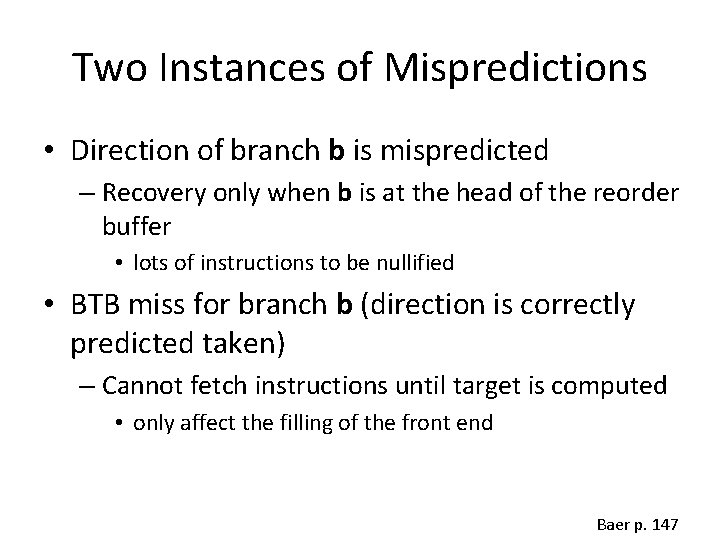

Two Instances of Mispredictions
• The direction of branch b is mispredicted
  – Recovery only when b is at the head of the reorder buffer
    • lots of instructions to be nullified
• BTB miss for branch b (direction is correctly predicted taken)
  – Cannot fetch instructions until the target is computed
    • only affects the filling of the front end
Baer p. 147

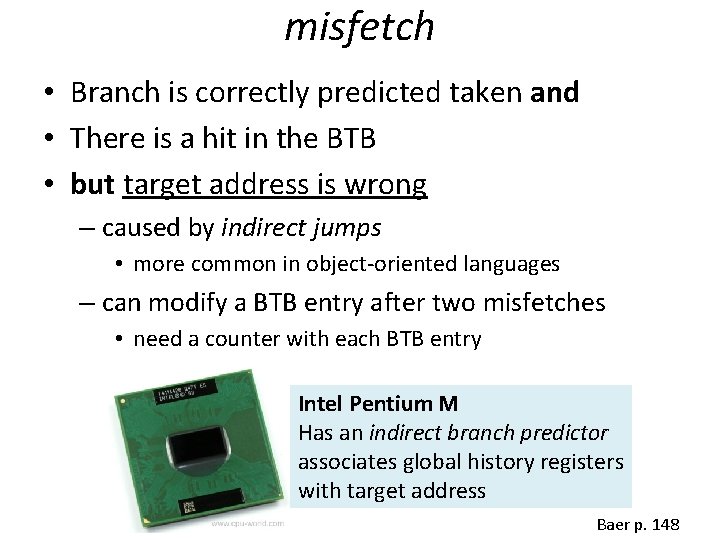

misfetch
• The branch is correctly predicted taken, and
• there is a hit in the BTB,
• but the target address is wrong
  – caused by indirect jumps
    • more common in object-oriented languages
  – can modify a BTB entry after two misfetches
    • need a counter with each BTB entry
Intel Pentium M: has an indirect branch predictor that associates global history registers with target addresses.
Baer p. 148

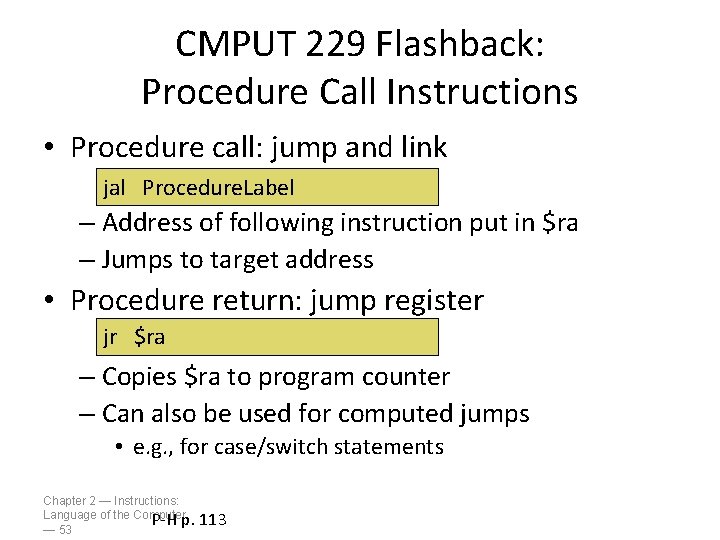

CMPUT 229 Flashback: Procedure Call Instructions
• Procedure call: jump and link

    jal ProcedureLabel

  – The address of the following instruction is put in $ra
  – Jumps to the target address
• Procedure return: jump register

    jr $ra

  – Copies $ra to the program counter
  – Can also be used for computed jumps
    • e.g., for case/switch statements
Chapter 2, Instructions: Language of the Computer (P-H p. 113)

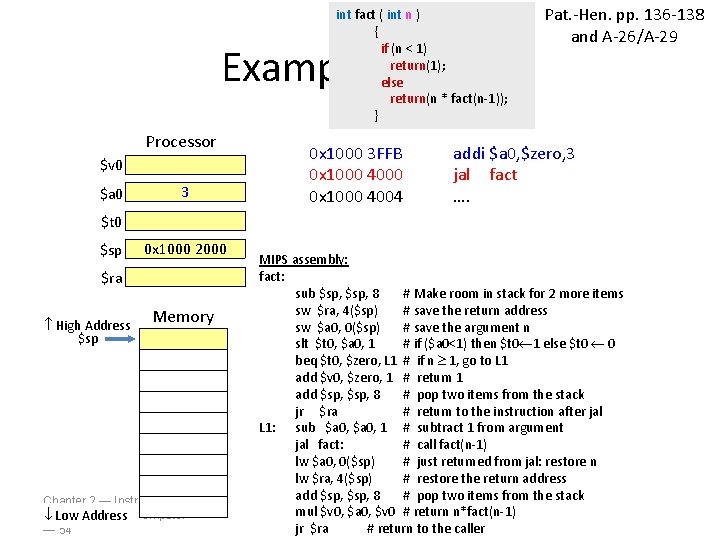

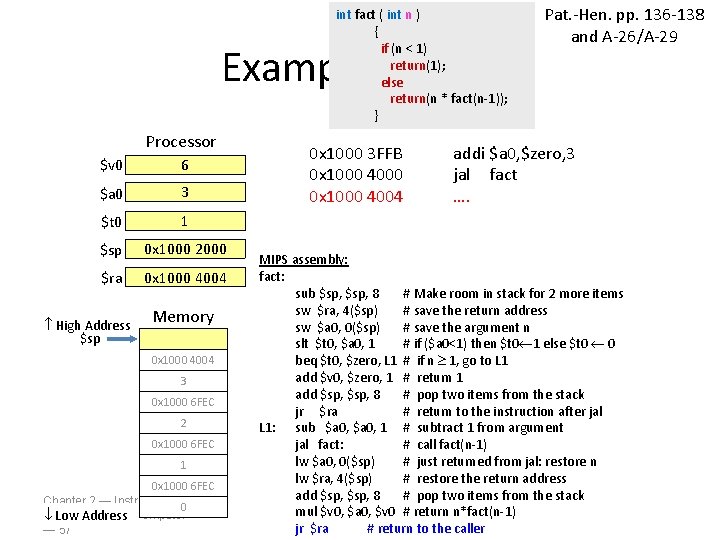

Example: fact(3)

    int fact(int n)
    {
        if (n < 1) return 1;
        else return n * fact(n - 1);
    }

Caller (instruction addresses on the left):

    0x10003FFC  addi $a0, $zero, 3
    0x10004000  jal  fact
    0x10004004  ….

Processor state before the call: $a0 = 3, $sp = 0x10002000; $v0, $t0, and $ra are not yet set.

MIPS assembly:

    fact: addi $sp, $sp, -8     # make room on the stack for 2 more items
          sw   $ra, 4($sp)      # save the return address
          sw   $a0, 0($sp)      # save the argument n
          slti $t0, $a0, 1      # if ($a0 < 1) then $t0 = 1 else $t0 = 0
          beq  $t0, $zero, L1   # if n >= 1, go to L1
          addi $v0, $zero, 1    # return 1
          addi $sp, $sp, 8      # pop two items from the stack
          jr   $ra              # return to the instruction after jal
    L1:   addi $a0, $a0, -1     # subtract 1 from the argument
          jal  fact             # call fact(n-1)
          lw   $a0, 0($sp)      # just returned from jal: restore n
          lw   $ra, 4($sp)      # restore the return address
          addi $sp, $sp, 8      # pop two items from the stack
          mul  $v0, $a0, $v0    # return n * fact(n-1)
          jr   $ra              # return to the caller

Pat.-Hen. pp. 136-138 and A-26/A-29

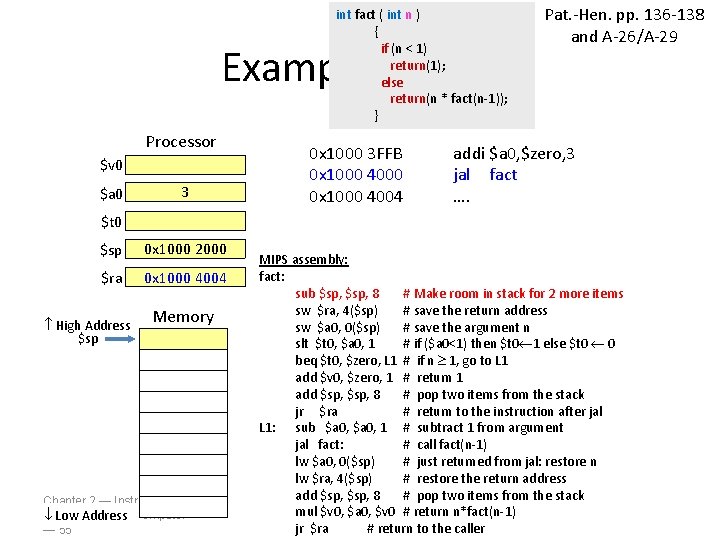

Example: fact(3) (cont.)
After jal fact executes at 0x10004000: $a0 = 3, $sp = 0x10002000, $ra = 0x10004004 (the address of the instruction that follows the jal).
The C source and MIPS assembly are the same as on the previous slide.
Pat.-Hen. pp. 136-138 and A-26/A-29

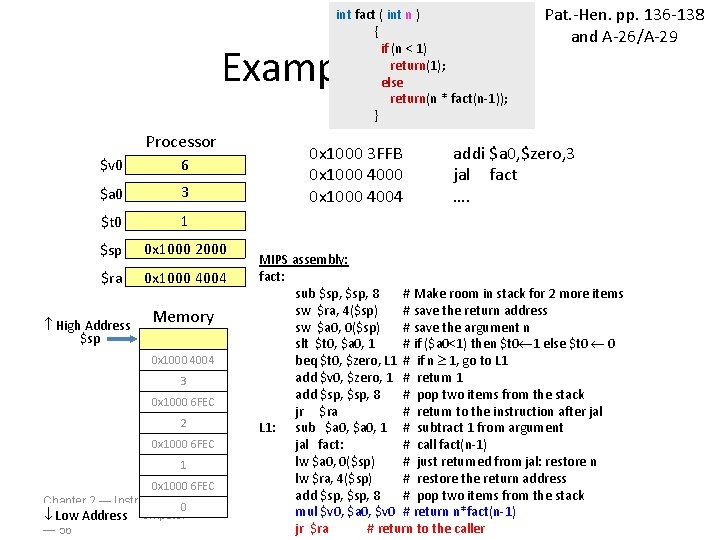

Example: fact(3) (cont.)
Final processor state: $v0 = 6, $a0 = 3, $t0 = 1, $sp = 0x10002000, $ra = 0x10004004.
Stack contents left in memory, from high address to low address:

| Call | Saved $ra | Saved $a0 |
| --- | --- | --- |
| fact(3) | 0x10004004 | 3 |
| fact(2) | 0x10006FEC | 2 |
| fact(1) | 0x10006FEC | 1 |
| fact(0) | 0x10006FEC | 0 |

The C source and MIPS assembly are the same as on the first fact(3) slide.
Pat.-Hen. pp. 136-138 and A-26/A-29

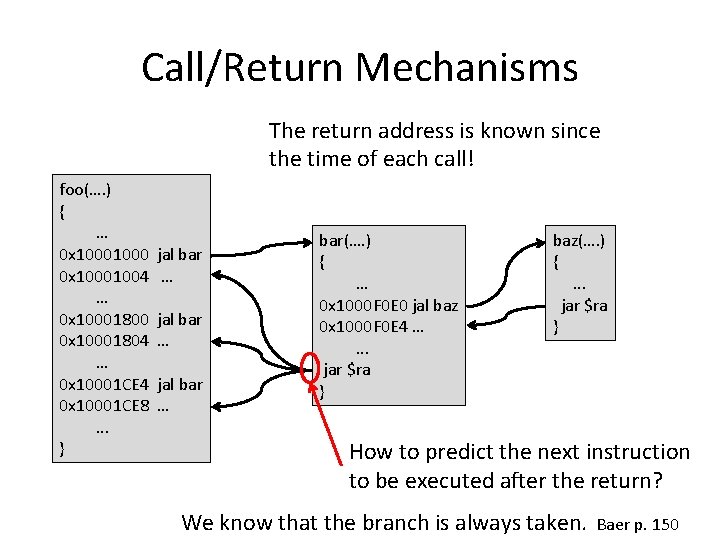

Call/Return Mechanisms
The return address is known from the time of each call!
[Figure: foo(…) spans addresses 0x10001004 … 0x10001CE8 and calls bar with jal bar; bar(…) calls baz with jal baz at 0x1000F0E0 (next instruction at 0x1000F0E4) and returns with jr $ra; baz(…) returns with jr $ra.]
How do we predict the next instruction to be executed after the return? We know that the branch is always taken.
Baer p. 150

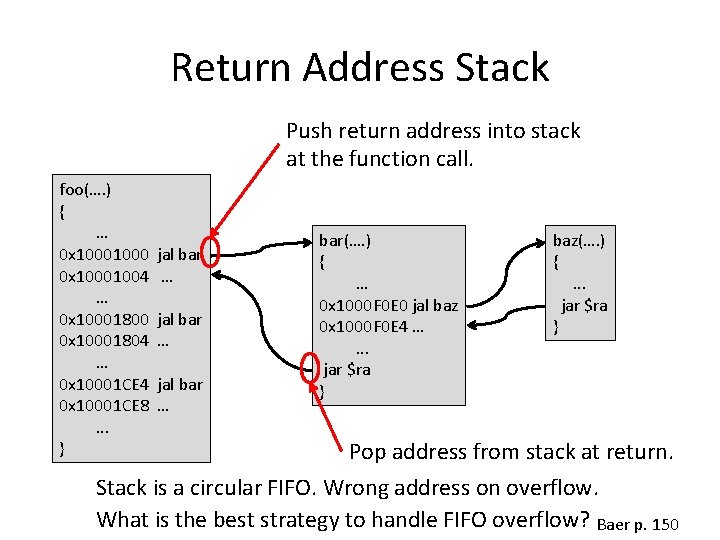

Return Address Stack
Push the return address onto the stack at the function call.
Pop the address from the stack at the return.
The stack is implemented as a circular buffer: wrong address on overflow.
What is the best strategy to handle overflow?
[Figure: foo(…) calls bar with jal bar; bar(…) calls baz with jal baz at 0x1000F0E0 (next instruction at 0x1000F0E4) and returns with jr $ra; baz(…) returns with jr $ra.]
Baer p. 150

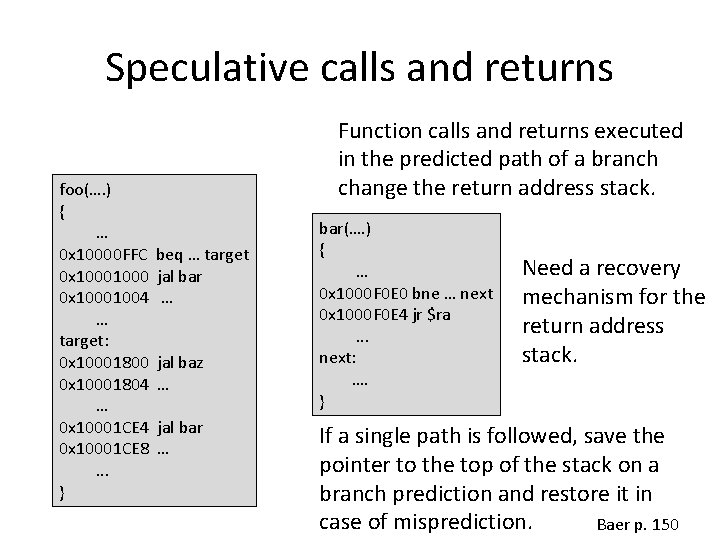
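A return address stack with wrap-around can be sketched as follows. The depth (`RAS_ENTRIES`, kept small so that overflow is easy to observe) and the names `ras_t`, `ras_push`, and `ras_pop` are assumptions for illustration; overflow here silently overwrites the oldest entry, which is only one of the possible strategies.

```c
#include <stdint.h>

#define RAS_ENTRIES 4   /* assumed depth, small to make overflow visible */

typedef struct {
    uint32_t addr[RAS_ENTRIES];
    unsigned top;       /* index of the next free slot, wraps around */
} ras_t;

/* On a call (jal): push the address of the instruction after the call. */
static void ras_push(ras_t *r, uint32_t call_pc) {
    r->addr[r->top] = call_pc + 4;
    r->top = (r->top + 1) % RAS_ENTRIES;   /* overflow overwrites the oldest */
}

/* On a return (jr $ra): pop the predicted return address. */
static uint32_t ras_pop(ras_t *r) {
    r->top = (r->top + RAS_ENTRIES - 1) % RAS_ENTRIES;
    return r->addr[r->top];
}
```

With nesting no deeper than the stack, each pop returns the address that follows the matching call. After five nested calls on this 4-entry stack, the first four returns are predicted correctly and the fifth pop yields a stale address, which is the "wrong address on overflow" case.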

Speculative calls and returns
Function calls and returns executed in the predicted path of a branch change the return address stack.
[Figure: foo(…), with addresses 0x10000FFC, 0x10001004, target: 0x10001800, 0x10001804, 0x10001CE4, 0x10001CE8, contains beq … target followed by jal bar, jal baz, and jal bar; bar(…) contains bne … next at 0x1000F0E0 and jr $ra at 0x1000F0E4, followed by the label next.]
Need a recovery mechanism for the return address stack.
If a single path is followed, save the pointer to the top of the stack on a branch prediction and restore it in case of misprediction.
Baer p. 150
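Saving and restoring the top-of-stack pointer can be sketched as below. The stack layout and the names `spec_ras_t`, `spec_ras_checkpoint`, and `spec_ras_restore` are assumptions for illustration. Only the pointer is saved, as the slide suggests, so entries overwritten by wrong-path calls are not recovered.

```c
#include <stdint.h>

#define SPEC_RAS_ENTRIES 8   /* assumed depth */

typedef struct {
    uint32_t addr[SPEC_RAS_ENTRIES];
    unsigned top;            /* index of the next free slot */
} spec_ras_t;

static void spec_ras_push(spec_ras_t *r, uint32_t call_pc) {
    r->addr[r->top] = call_pc + 4;
    r->top = (r->top + 1) % SPEC_RAS_ENTRIES;
}

static uint32_t spec_ras_pop(spec_ras_t *r) {
    r->top = (r->top + SPEC_RAS_ENTRIES - 1) % SPEC_RAS_ENTRIES;
    return r->addr[r->top];
}

/* Taken when a branch is predicted: remember where the top was. */
static unsigned spec_ras_checkpoint(const spec_ras_t *r) { return r->top; }

/* Applied when that branch turns out to be mispredicted. */
static void spec_ras_restore(spec_ras_t *r, unsigned saved_top) {
    r->top = saved_top;
}
```

If the wrong path executes a return, the pop moves the pointer but leaves the entry in place, so restoring the pointer is enough. If the wrong path pops and then pushes, the original entry is overwritten and a fuller checkpoint would be needed.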

Return Stacks
• MIPS R10000: 1-entry return stack
• DEC Alpha 21164: 12-entry return stack
• Intel Pentium III: 16-entry return stack
Baer p. 151

A different way of doing things…
Don’t know which way to go? “Some people go both ways.” (Scarecrow, The Wizard of Oz)
Baer p. 151

IBM System 360/91
• Upon decoding a branch:
  – fetch, decode, and enqueue both the taken and the not-taken paths into separate buffers
• Upon branch resolution:
  – one buffer becomes the execution path
  – the other is discarded
Baer p. 151

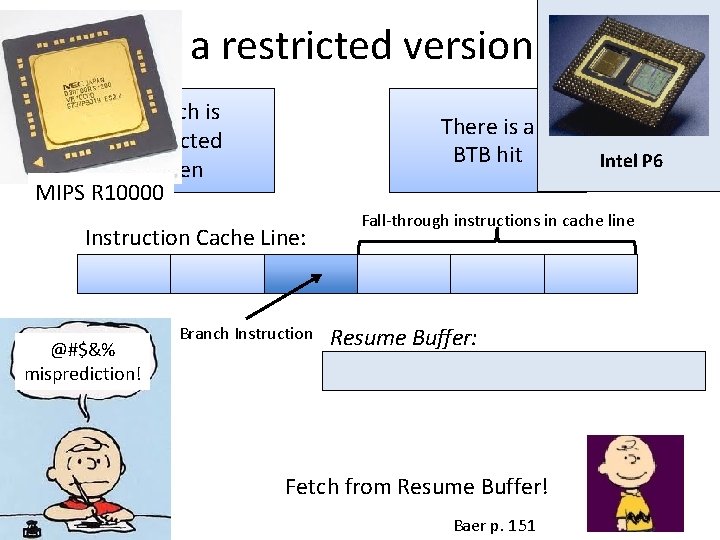

In a restricted version … (MIPS R10000, Intel P6)
• The branch is predicted taken and there is a BTB hit.
• Instruction cache line: the fall-through instructions that follow the branch instruction in the cache line are kept in a Resume Buffer.
• On a misprediction: fetch from the Resume Buffer!
Baer p. 151

Loop Detector
• A separate loop predictor detects loop patterns:
  – TTTTTTTNTTTTTTTNTT….
• Uses a separate counter for each recognized loop
Used in: Intel Pentium M
Baer p. 151

Sophisticated Predictors
• Tension:
  – Branch Correlation (global information) × Individual Branch Patterns (local information)
• neutral aliasing
  – between branches biased the same way
• destructive aliasing
  – between branches with opposite bias
• bias bit
  – added to the BTB
  – the PHT predicts whether the direction agrees with the bias bit
    • two branches with strong opposite biases that alias do not destroy each other’s predictions.
Baer p. 152

skewed predictor
• Goal: reduce aliasing
• Use three PHTs
  – a different hashing function for each PHT
  – take a majority vote
Baer p. 153

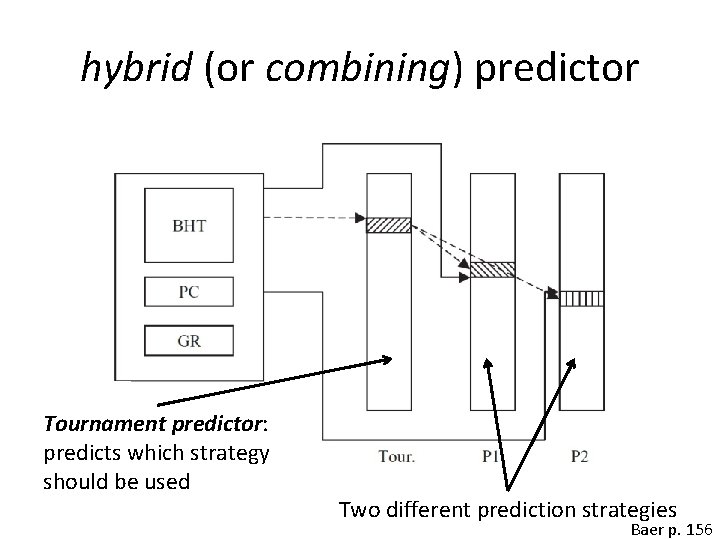
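The majority vote over three differently hashed PHTs can be sketched as follows. The bank size, the three hash functions in `skew_hash`, and the policy of updating all three banks on every outcome are assumptions chosen for illustration; they are not the functions of any particular design.

```c
#include <stdbool.h>
#include <stdint.h>

#define BANK_BITS 8
#define BANK_SIZE (1u << BANK_BITS)

typedef struct { uint8_t bank[3][BANK_SIZE]; } skewed_t;  /* 2-bit counters */

/* Three illustrative hash functions (assumed, one per bank). */
static uint32_t skew_hash(int k, uint32_t pc, uint32_t hist) {
    uint32_t x = pc >> 2;
    switch (k) {
    case 0:  return (x ^ hist) & (BANK_SIZE - 1);
    case 1:  return (x ^ (hist << 1) ^ (x >> BANK_BITS)) & (BANK_SIZE - 1);
    default: return ((x >> 1) ^ hist ^ (x >> (BANK_BITS + 1))) & (BANK_SIZE - 1);
    }
}

/* Each bank votes with its own counter; the majority wins. */
static bool skewed_predict(const skewed_t *s, uint32_t pc, uint32_t hist) {
    int votes = 0;
    for (int k = 0; k < 3; k++)
        votes += s->bank[k][skew_hash(k, pc, hist)] >= 2;
    return votes >= 2;
}

/* Assumed policy: train all three banks on every outcome. */
static void skewed_update(skewed_t *s, uint32_t pc, uint32_t hist, bool taken) {
    for (int k = 0; k < 3; k++) {
        uint8_t *c = &s->bank[k][skew_hash(k, pc, hist)];
        if (taken && *c < 3) (*c)++;
        if (!taken && *c > 0) (*c)--;
    }
}
```

If another branch destroys this branch's counter in one bank, the other two banks still outvote it. The prediction only flips when two of the three entries are disturbed, which is unlikely when the hash functions spread aliases differently.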

hybrid (or combining) predictor
• Two different prediction strategies
• Tournament predictor: predicts which strategy should be used
Baer p. 156

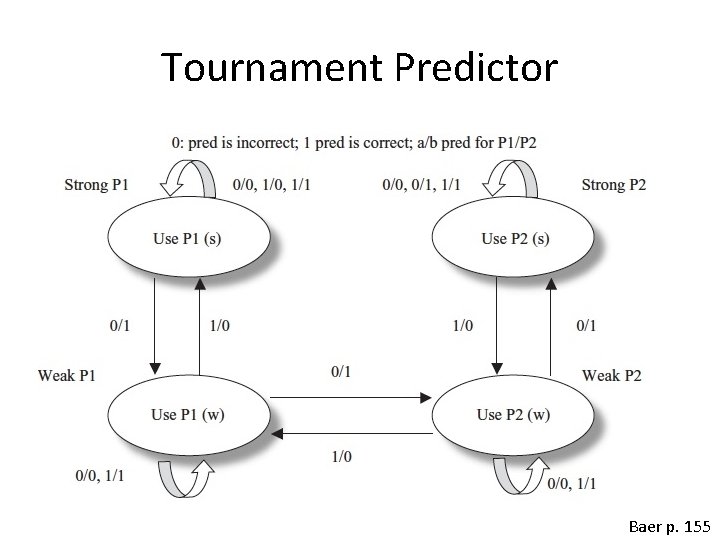
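The selection step of a tournament predictor can be sketched with a single 2-bit chooser. This is a simplification under stated assumptions: a real design would keep a table of choosers indexed by the PC or by history, and the names `tournament_predict` and `tournament_update` as well as the rule of training only on disagreement are choices made for this example.

```c
#include <stdbool.h>
#include <stdint.h>

/* 2-bit chooser: values 0..1 select predictor A, 2..3 select predictor B. */
static bool tournament_predict(uint8_t chooser, bool pred_a, bool pred_b) {
    return (chooser >= 2) ? pred_b : pred_a;
}

/* Train the chooser only when the two predictors disagree:
   move toward the one that was right, saturating at 0 and 3. */
static uint8_t tournament_update(uint8_t chooser, bool pred_a, bool pred_b,
                                 bool taken) {
    if (pred_a == pred_b) return chooser;
    if (pred_b == taken) return (chooser < 3) ? (uint8_t)(chooser + 1) : 3;
    return (chooser > 0) ? (uint8_t)(chooser - 1) : 0;
}
```

When both component predictors agree, the chooser has nothing to learn and is left alone. When they disagree, it drifts toward whichever strategy has been right more often for this branch.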

Tournament Predictor Baer p. 155
