CS 6501: Vision and Language
Word Embeddings

Today
• Distributional Semantics
• Word2Vec

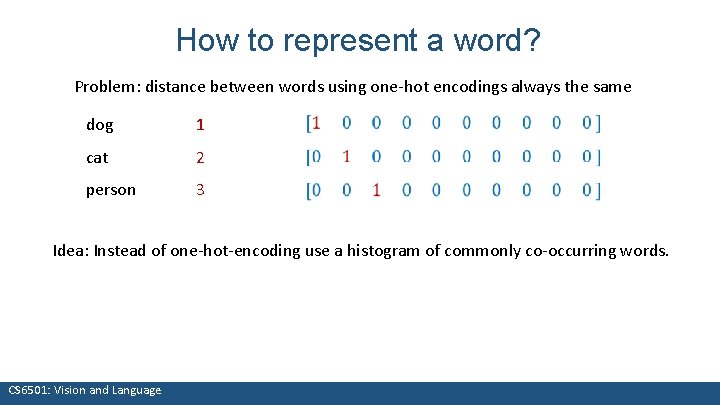

How to represent a word?
Problem: with one-hot encodings, the distance between any two distinct words is always the same.
dog: 1, cat: 2, person: 3
Idea: instead of a one-hot encoding, use a histogram of commonly co-occurring words.

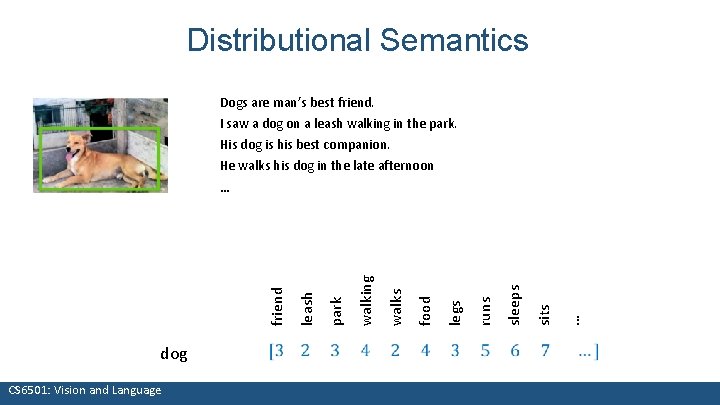
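To make the one-hot problem concrete, here is a minimal sketch using the three words from the slide. The use of NumPy and of Euclidean distance are assumptions for illustration; the slide does not name a specific distance.

```python
import itertools
import numpy as np

vocab = ["dog", "cat", "person"]  # toy vocabulary taken from the slide
one_hot = np.eye(len(vocab))      # row i is the one-hot vector of vocab[i]

# Euclidean distance between every pair of distinct words
# (the choice of Euclidean distance is an assumption).
distances = {
    (a, b): float(np.linalg.norm(one_hot[i] - one_hot[j]))
    for (i, a), (j, b) in itertools.combinations(enumerate(vocab), 2)
}

for pair, d in distances.items():
    print(pair, round(d, 4))
```

Every pair comes out at the same distance, so the encoding cannot express that "dog" is closer to "cat" than to "person".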

Distributional Semantics
Dogs are man’s best friend.
I saw a dog on a leash walking in the park.
His dog is his best companion.
He walks his dog in the late afternoon.
dog: … sits, sleeps, runs, legs, food, walks, walking, park, leash, friend …

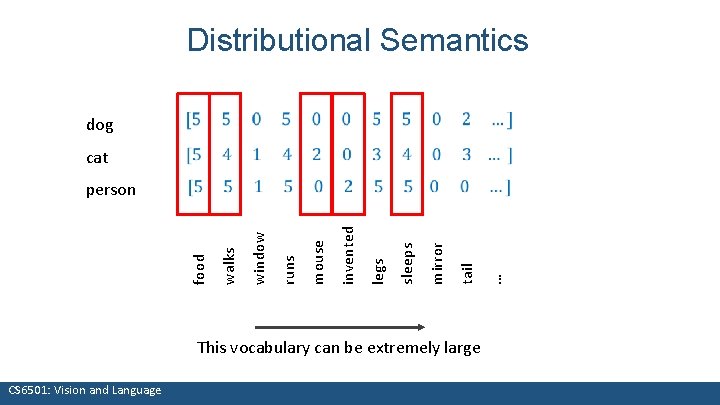
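The histogram idea can be sketched by counting the words that appear in the same sentence as "dog" in the four example sentences. The tokenizer, the sentence-level context, and treating "dogs" as the same word as "dog" are all simplifying assumptions made here, not choices stated on the slide.

```python
import re
from collections import Counter

sentences = [
    "Dogs are man's best friend.",
    "I saw a dog on a leash walking in the park.",
    "His dog is his best companion.",
    "He walks his dog in the late afternoon.",
]

# Assumption: singular and plural count as the same target word.
TARGET_FORMS = {"dog", "dogs"}


def tokenize(text):
    """Lowercase the text and keep runs of letters and apostrophes."""
    return re.findall(r"[a-z']+", text.lower())


def cooccurrence_histogram(sentences, target_forms):
    """Count words sharing a sentence with any of the target forms."""
    counts = Counter()
    for sentence in sentences:
        tokens = tokenize(sentence)
        if any(t in target_forms for t in tokens):
            counts.update(t for t in tokens if t not in target_forms)
    return counts


dog_hist = cooccurrence_histogram(sentences, TARGET_FORMS)
print(dog_hist.most_common(5))
```

In practice the counts would come from a large corpus and usually from a fixed-size window rather than whole sentences.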

Distributional Semantics
This vocabulary can be extremely large:
… tail, mirror, sleeps, legs, invented, person, mouse, runs, cat, window, food, dog, walks

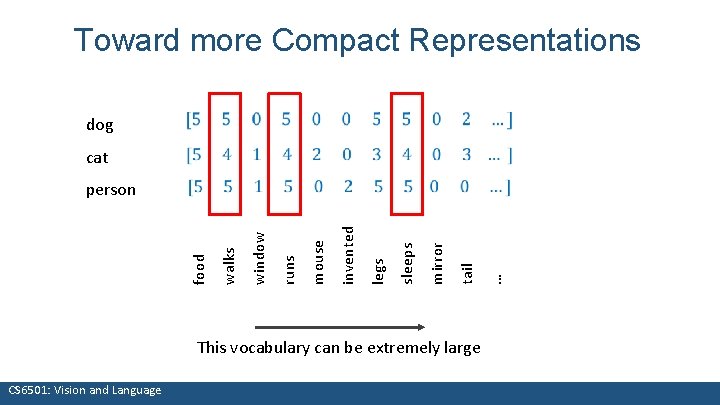

Toward more Compact Representations
This vocabulary can be extremely large:
… tail, mirror, sleeps, legs, invented, person, mouse, runs, cat, window, food, dog, walks

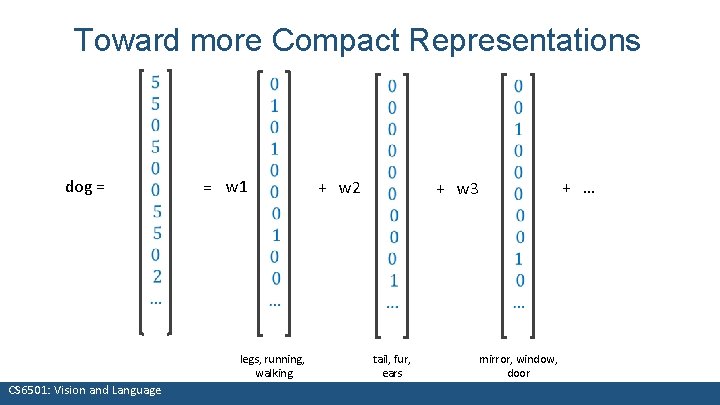

Toward more Compact Representations
$$\text{dog} = w_1\,[\text{legs, running, walking}] + w_2\,[\text{tail, fur, ears}] + w_3\,[\text{mirror, window, door}] + \dots$$

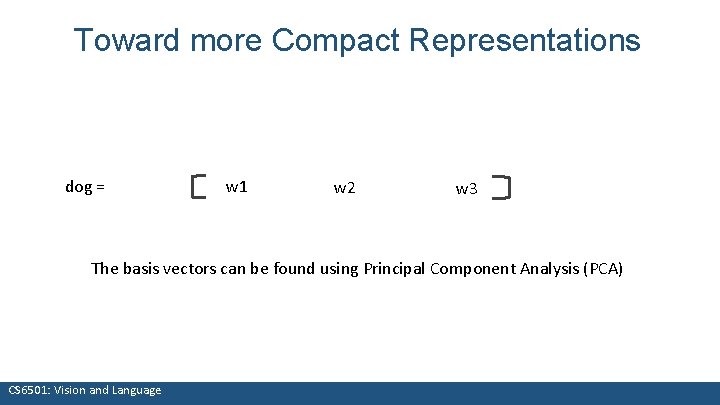

Toward more Compact Representations
$$\text{dog} = [w_1, w_2, w_3]$$
The basis vectors can be found using Principal Component Analysis (PCA).

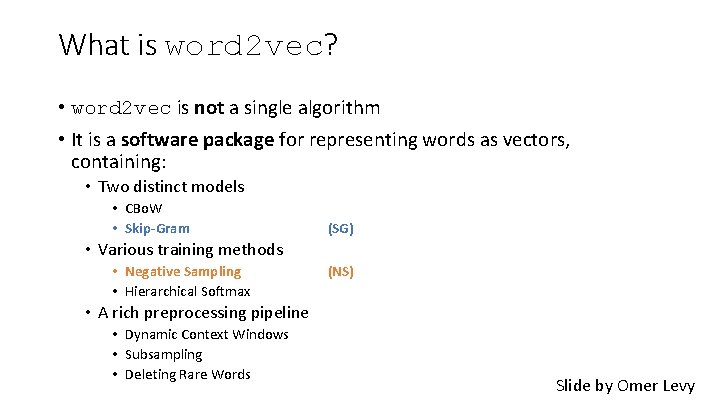
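As a rough illustration of finding basis vectors with PCA, the sketch below applies an SVD to a small centered count matrix. The counts, the word and context lists, and the helper name `pca` are all hypothetical; only the technique (PCA) comes from the slide.

```python
import numpy as np

words = ["dog", "cat", "person", "window"]
contexts = ["legs", "walks", "tail", "fur", "mirror", "door"]

# Hypothetical co-occurrence counts (rows: words, columns: contexts).
X = np.array([
    [4, 5, 3, 3, 0, 1],
    [4, 2, 4, 5, 1, 0],
    [5, 4, 0, 0, 2, 3],
    [0, 0, 0, 0, 4, 5],
], dtype=float)


def pca(X, k):
    """Return (coords, basis): k-dim coordinates and the k basis vectors."""
    Xc = X - X.mean(axis=0)               # center each context column
    _, _, Vt = np.linalg.svd(Xc, full_matrices=False)
    basis = Vt[:k]                         # top-k principal directions
    coords = Xc @ basis.T                  # weights w_1..w_k for each word
    return coords, basis


coords, basis = pca(X, k=3)
print("dog =", np.round(coords[words.index("dog")], 3))
```

Each row of `coords` plays the role of the compact vector [w_1, w_2, w_3] for one word, and each row of `basis` is a weighted group of context words.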

What is word2vec?
• word2vec is not a single algorithm
• It is a software package for representing words as vectors, containing:
  • Two distinct models
    • CBoW
    • Skip-Gram (SG)
  • Various training methods
    • Negative Sampling (NS)
    • Hierarchical Softmax
  • A rich preprocessing pipeline
    • Dynamic Context Windows
    • Subsampling
    • Deleting Rare Words
Slide by Omer Levy

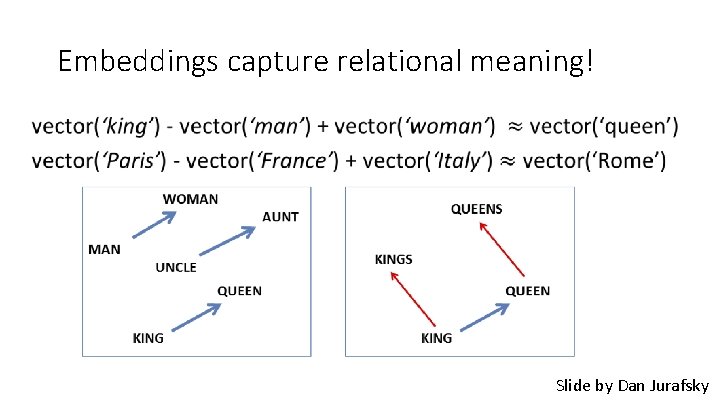
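The three preprocessing steps listed above can be sketched as small helper functions. This is an illustration under stated assumptions, not the package's actual code: the function names and default values are hypothetical, the subsampling rule follows the form given in the word2vec paper (discard a word with probability 1 - sqrt(t / f), where f is its relative frequency), and the dynamic window is assumed to be drawn uniformly between 1 and the maximum size.

```python
import math
import random
from collections import Counter


def delete_rare_words(tokens, min_count):
    """Drop every token whose corpus count is below min_count."""
    counts = Counter(tokens)
    return [t for t in tokens if counts[t] >= min_count]


def keep_probability(freq, threshold=1e-5):
    """Probability of keeping a word with relative frequency `freq`.

    Assumes the paper's rule: discard with probability 1 - sqrt(t / f).
    """
    return min(1.0, math.sqrt(threshold / freq))


def dynamic_window(max_window, rng):
    """Sample the effective window size uniformly from 1..max_window."""
    return rng.randint(1, max_window)


rng = random.Random(0)
tokens = "the dog saw the cat and the dog ran".split()
print(delete_rare_words(tokens, min_count=2))
print([dynamic_window(5, rng) for _ in range(5)])
```

Frequent words are kept less often, and nearby context words are used more often than distant ones because small windows are sampled as often as large ones.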

Embeddings capture relational meaning!
Slide by Dan Jurafsky

Skip-Grams with Negative Sampling (SGNS)
Marco saw a furry little wampimuk hiding in the tree.
“word2vec Explained…” Goldberg & Levy, arXiv 2014

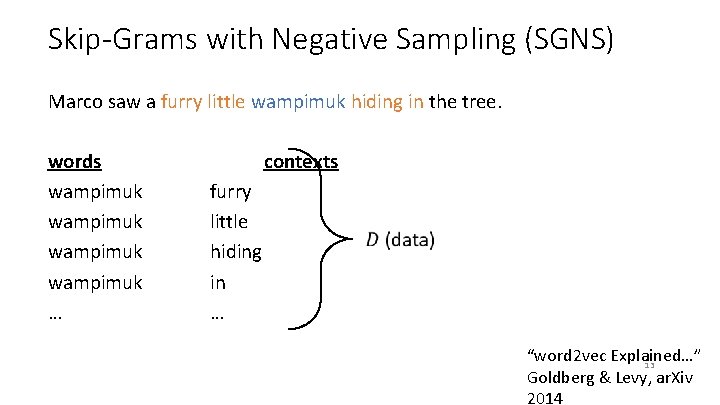

Skip-Grams with Negative Sampling (SGNS)
Marco saw a furry little wampimuk hiding in the tree.
words: wampimuk …
contexts: furry, little, hiding, in …
“word2vec Explained…” Goldberg & Levy, arXiv 2014

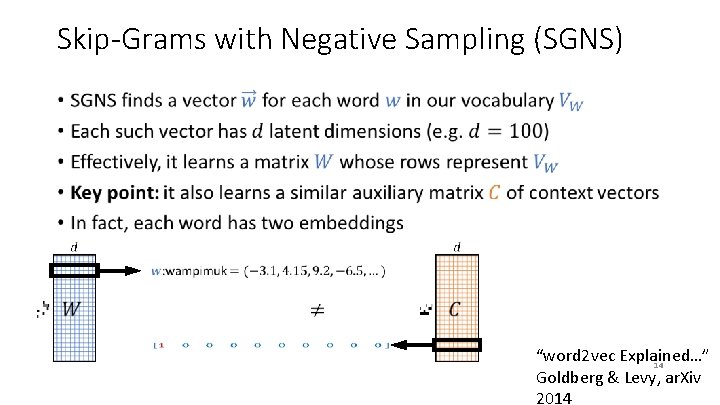
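The word and context lists above can be reproduced with a short sketch that pairs each word with its neighbors inside a fixed window. The window size of 2 and the function name `skipgram_pairs` are assumptions chosen so that the contexts of "wampimuk" match the slide.

```python
def skipgram_pairs(tokens, window):
    """Return (word, context) pairs for every token and its neighbors."""
    pairs = []
    for i, word in enumerate(tokens):
        lo = max(0, i - window)
        hi = min(len(tokens), i + window + 1)
        for j in range(lo, hi):
            if j != i:  # a word is not its own context
                pairs.append((word, tokens[j]))
    return pairs


sentence = "Marco saw a furry little wampimuk hiding in the tree"
tokens = sentence.lower().split()

pairs = skipgram_pairs(tokens, window=2)  # window=2 is an assumption
wampimuk_contexts = [c for w, c in pairs if w == "wampimuk"]
print(wampimuk_contexts)
```

These (word, context) pairs are the positive training examples; negative sampling adds randomly drawn contexts as negative examples.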

Skip-Grams with Negative Sampling (SGNS)
“word2vec Explained…” Goldberg & Levy, arXiv 2014

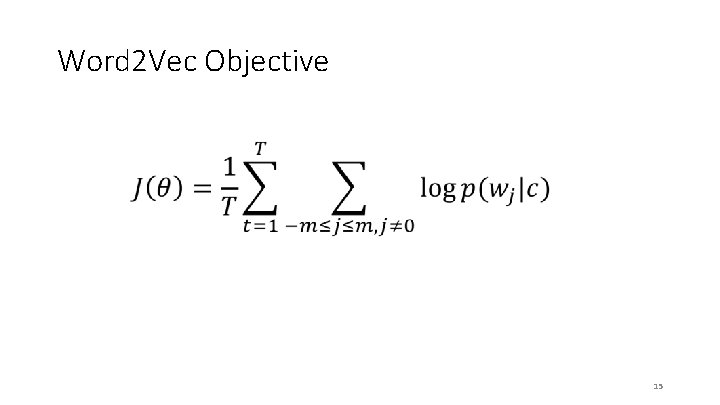

Word2Vec Objective

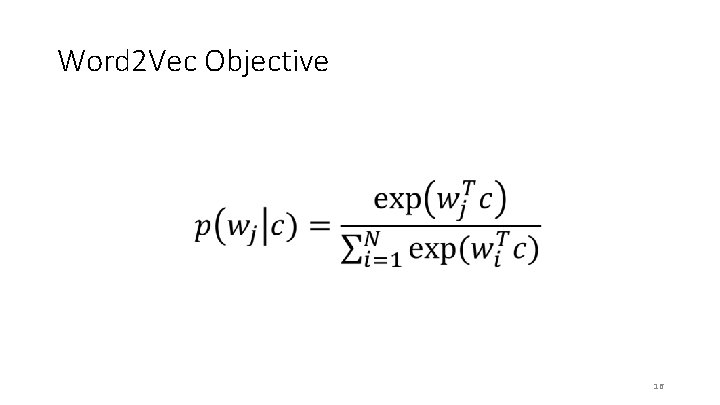

Word2Vec Objective

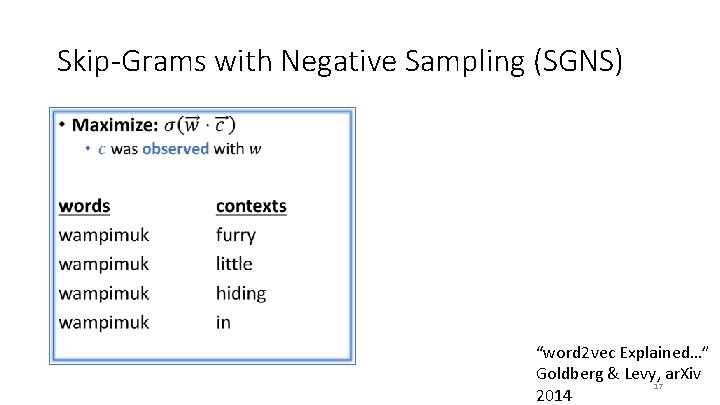
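The objective itself is only shown in the slide images, so the following is a sketch of the per-pair SGNS objective in the form commonly given in the cited Goldberg & Levy note: $\log \sigma(v_c \cdot v_w) + \sum_{i=1}^{k} \log \sigma(-v_{c_i} \cdot v_w)$, where $c_1, \dots, c_k$ are sampled negative contexts. The function names, the vector dimension, the number of negatives, and the random vectors are all illustrative assumptions.

```python
import numpy as np


def log_sigmoid(x):
    """Numerically stable log(sigmoid(x)) = -log(1 + exp(-x))."""
    return -np.logaddexp(0.0, -x)


def sgns_pair_objective(w_vec, c_vec, neg_vecs):
    """Objective for one (word, context) pair with k negative contexts.

    w_vec:    word vector, shape (d,)
    c_vec:    observed context vector, shape (d,)
    neg_vecs: sampled negative context vectors, shape (k, d)
    """
    positive = log_sigmoid(w_vec @ c_vec)                 # pull true pair together
    negative = log_sigmoid(-(neg_vecs @ w_vec)).sum()     # push sampled pairs apart
    return float(positive + negative)


rng = np.random.default_rng(0)
dim, k = 8, 5  # hypothetical embedding size and number of negatives
w = rng.normal(scale=0.1, size=dim)
c = rng.normal(scale=0.1, size=dim)
negs = rng.normal(scale=0.1, size=(k, dim))

print(sgns_pair_objective(w, c, negs))
```

Training maximizes the sum of this quantity over all observed pairs, typically with stochastic gradient ascent on both the word and the context vectors.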

Skip-Grams with Negative Sampling (SGNS)
“word2vec Explained…” Goldberg & Levy, arXiv 2014

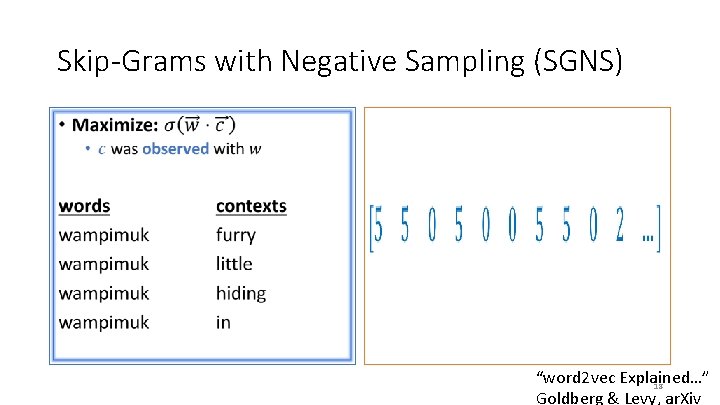

Skip-Grams with Negative Sampling (SGNS)
“word2vec Explained…” Goldberg & Levy, arXiv 2014

Skip-Grams with Negative Sampling (SGNS)

Questions?
