
CS 6501: Vision and Language Deep Learning

About the Course

• Instructor: Vicente Ordonez
• Email: vicente@virginia.edu
• Website: http://www.cs.virginia.edu/~vicente/vislang
• Location: Thornton Hall E316
• Times: Tuesday - Thursday 12:30 PM - 1:45 PM
• Faculty office hours: Tuesdays 3 - 4 pm (Rice 310)
• Discuss in Piazza: http://piazza.com/virginia/spring2017/cs6501004

Today

• Quick review of Machine Learning
• Linear Regression
• Neural Networks
• Backpropagation

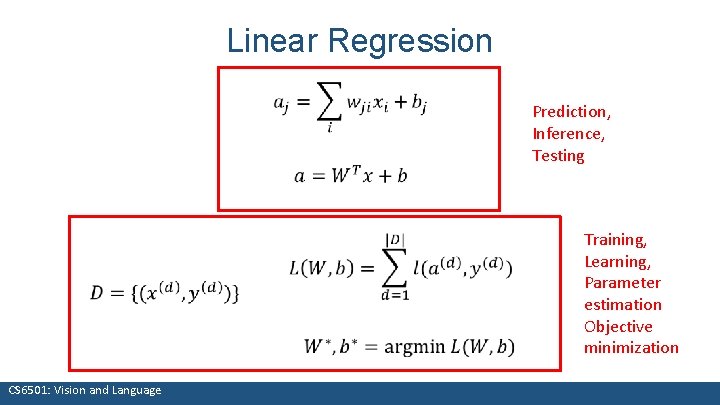

Linear Regression

• Prediction, Inference, Testing
• Training, Learning, Parameter estimation, Objective minimization

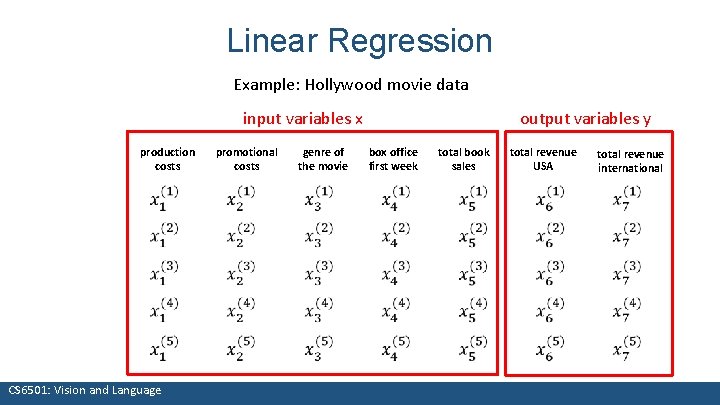

Linear Regression

Example: Hollywood movie data

• Input variables x: production costs, promotional costs, genre of the movie
• Output variables y: box office first week, total book sales, total revenue USA, total revenue international

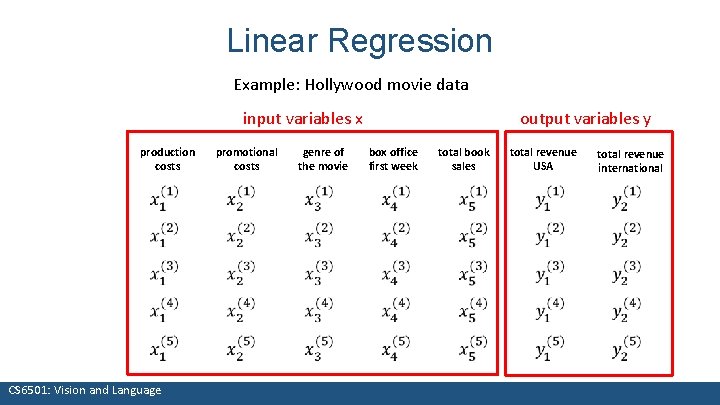


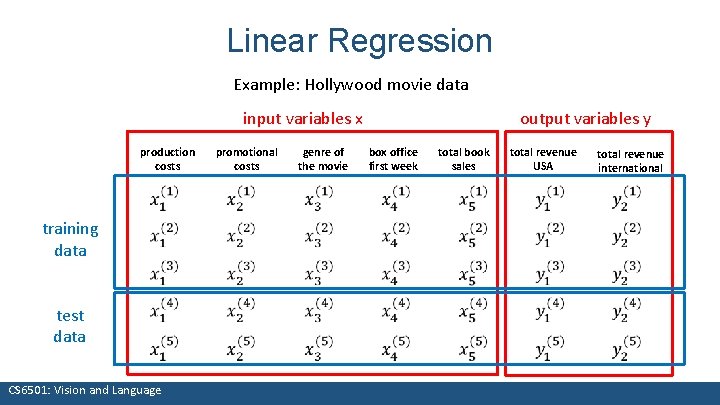

Linear Regression

Example: Hollywood movie data, split into training data and test data

• Input variables x: production costs, promotional costs, genre of the movie
• Output variables y: box office first week, total book sales, total revenue USA, total revenue international

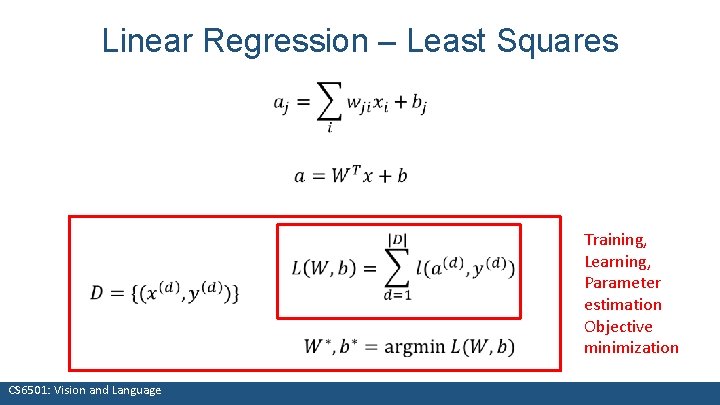

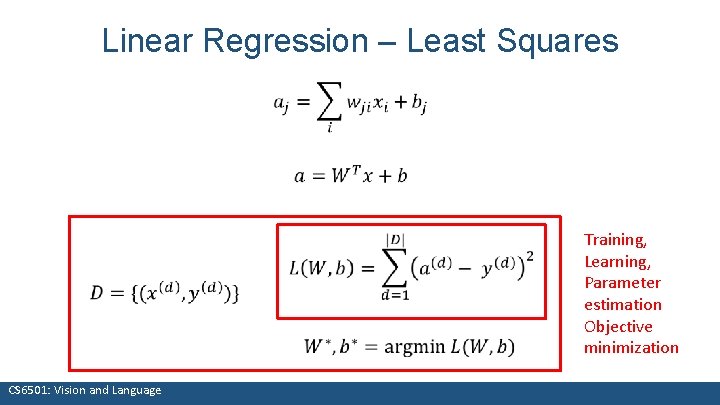

Linear Regression – Least Squares

• Training, Learning, Parameter estimation
• Objective minimization


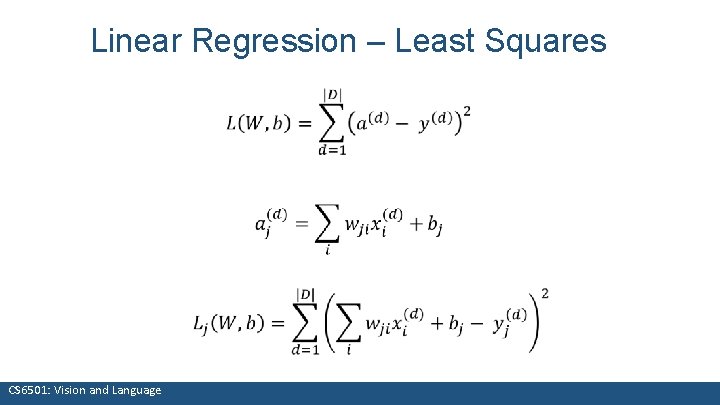

Linear Regression – Least Squares

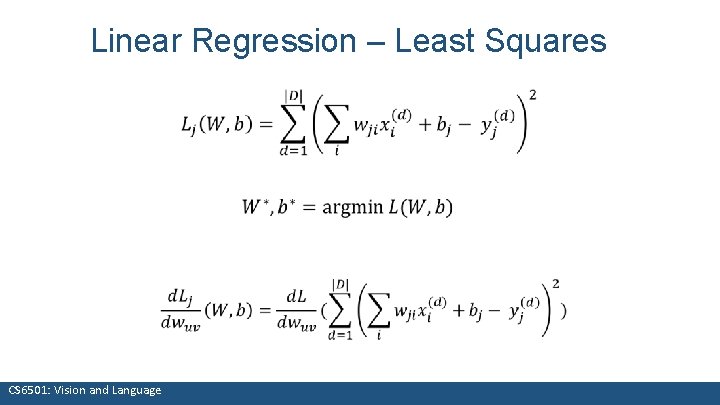

Linear Regression – Least Squares

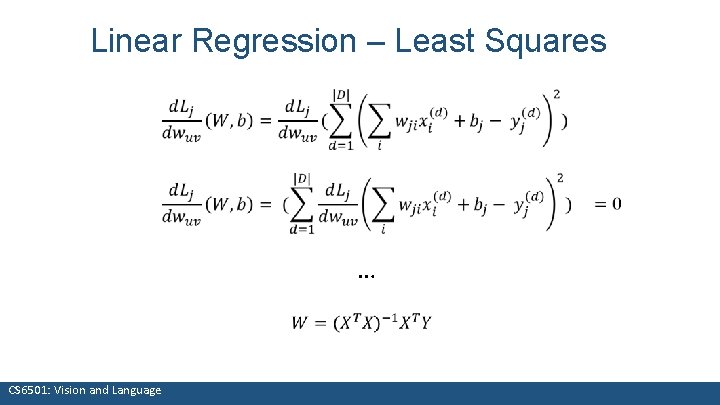

Linear Regression – Least Squares …

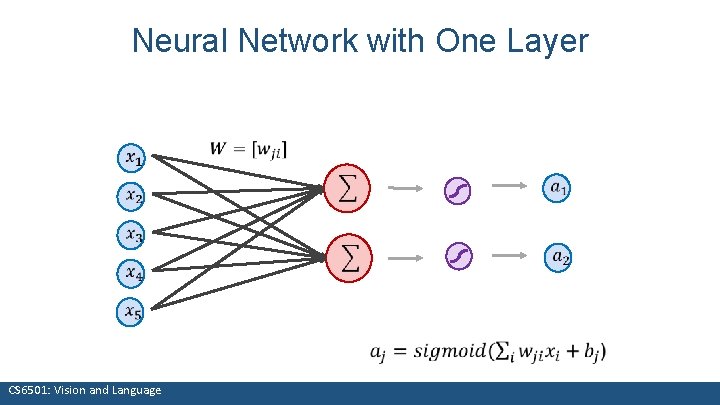
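The least-squares slides carry their formulas in the figures, so the following is only a minimal sketch of what a least-squares fit can look like in code. It assumes a linear model y = w·x + b fitted by minimizing the sum of squared errors; the function name `fit_least_squares` and the toy data are hypothetical and are not taken from the course material.

```python
import numpy as np

def fit_least_squares(X, y):
    """Fit y ~ X @ w + b by minimizing the sum of squared errors."""
    # Append a column of ones so the bias b is learned as an extra weight.
    X_aug = np.hstack([X, np.ones((X.shape[0], 1))])
    # np.linalg.lstsq solves the least-squares problem in closed form.
    theta, *_ = np.linalg.lstsq(X_aug, y, rcond=None)
    return theta[:-1], theta[-1]

# Hypothetical toy data generated from y = 2x + 1 (not the movie data).
X = np.array([[1.0], [2.0], [3.0], [4.0]])
y = np.array([3.0, 5.0, 7.0, 9.0])
w, b = fit_least_squares(X, y)
print(w, b)
```

Because the toy data is noise-free, the fit should recover the slope and intercept used to generate it, up to floating-point error.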

Neural Network with One Layer

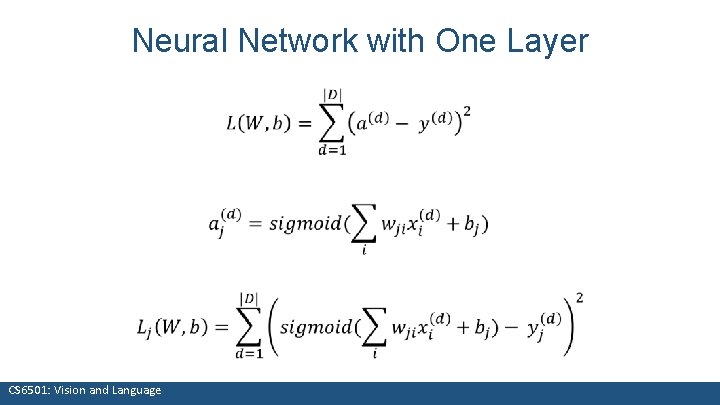

Neural Network with One Layer

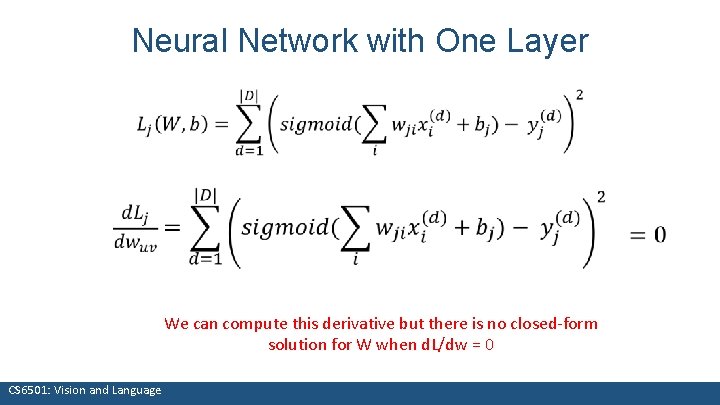

Neural Network with One Layer

We can compute this derivative, but there is no closed-form solution for W when dL/dw = 0.

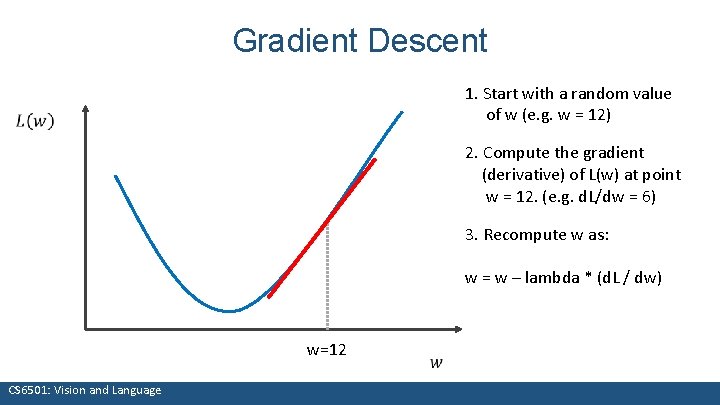

Gradient Descent

1. Start with a random value of w (e.g. w = 12).
2. Compute the gradient (derivative) of L(w) at the point w = 12 (e.g. dL/dw = 6).
3. Recompute w as: w = w – lambda * (dL/dw)

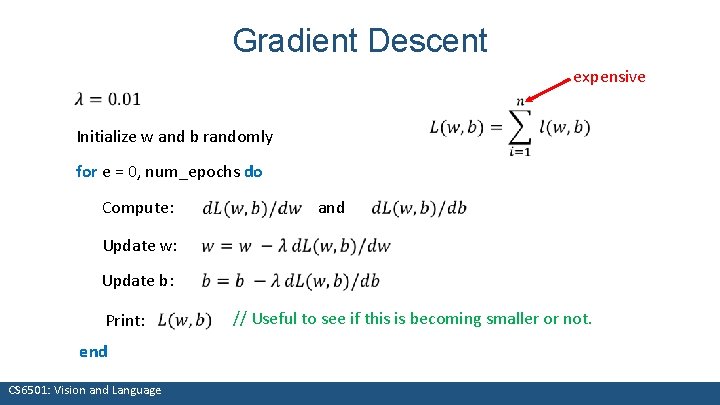
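The three gradient descent steps can be sketched in a few lines of Python. The objective L(w) = (w - 9)² is a hypothetical choice, picked only because its derivative at w = 12 equals 6 as in the slide's example; the slide does not say which L is plotted. The step size is called `learning_rate` here because `lambda` is a reserved word in Python.

```python
def loss(w):
    # Hypothetical objective: L(w) = (w - 9)^2, minimized at w = 9.
    return (w - 9.0) ** 2

def grad(w):
    # Derivative dL/dw = 2 * (w - 9); equals 6 at w = 12.
    return 2.0 * (w - 9.0)

def gradient_descent(w, learning_rate=0.1, num_steps=100):
    """Repeat the update w = w - learning_rate * dL/dw."""
    for _ in range(num_steps):
        w = w - learning_rate * grad(w)
    return w

w_start = 12.0
w_one_step = gradient_descent(w_start, num_steps=1)
w_final = gradient_descent(w_start)
print(grad(w_start), w_one_step, w_final)
```

With this step size each update moves w a fixed fraction of the way toward the minimizer, so after enough steps w should sit very close to 9.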

Gradient Descent

Initialize w and b randomly
for e = 0, num_epochs do
  Compute: dL/dw and dL/db (expensive)
  Update w: w = w – lambda * (dL/dw)
  Update b: b = b – lambda * (dL/db)
  Print: L(w, b) // Useful to see if this is becoming smaller or not.
end

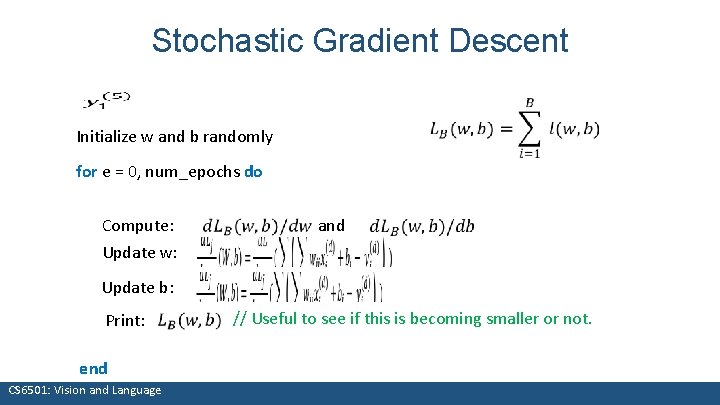
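As a rough illustration of the loop above, here is a sketch of full-batch gradient descent for a one-dimensional linear model. It assumes the loss is the mean squared error between w·x + b and y, which the extracted slide text does not state explicitly; the function name and the toy data are likewise hypothetical.

```python
import numpy as np

def batch_gradient_descent(x, y, learning_rate=0.1, num_epochs=500, seed=0):
    """Full-batch gradient descent on the mean squared error (assumed loss)."""
    rng = np.random.default_rng(seed)
    w, b = rng.normal(), rng.normal()  # initialize w and b randomly
    losses = []
    for epoch in range(num_epochs):
        residual = w * x + b - y
        # Both gradients use every training example, hence "expensive".
        dL_dw = 2.0 * np.mean(residual * x)
        dL_db = 2.0 * np.mean(residual)
        losses.append(np.mean(residual ** 2))  # track whether the loss shrinks
        w -= learning_rate * dL_dw
        b -= learning_rate * dL_db
    return w, b, losses

# Hypothetical noise-free data generated from y = 2x + 0.5.
x = np.linspace(-1.0, 1.0, 50)
y = 2.0 * x + 0.5
w, b, losses = batch_gradient_descent(x, y)
print(w, b, losses[0], losses[-1])
```

Recording the loss at every epoch plays the role of the `Print` step: if the values stop decreasing, the step size is probably too large.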

Stochastic Gradient Descent

Initialize w and b randomly
for e = 0, num_epochs do
  Compute: dL/dw and dL/db on a randomly sampled example or mini-batch
  Update w: w = w – lambda * (dL/dw)
  Update b: b = b – lambda * (dL/db)
  Print: L(w, b) // Useful to see if this is becoming smaller or not.
end

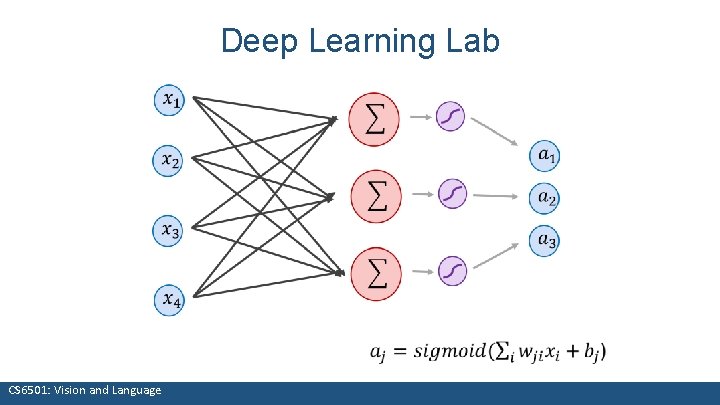
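A mini-batch variant of the same loop might look like the sketch below. The batch size, the per-epoch shuffling, and the mean squared error loss are all assumptions made for illustration; the slide's own formulas are in the figure and may differ.

```python
import numpy as np

def sgd(x, y, learning_rate=0.1, num_epochs=200, batch_size=10, seed=0):
    """Mini-batch stochastic gradient descent on the mean squared error."""
    rng = np.random.default_rng(seed)
    w, b = rng.normal(), rng.normal()  # initialize w and b randomly
    n = len(x)
    for epoch in range(num_epochs):
        order = rng.permutation(n)  # visit the data in a new random order
        for start in range(0, n, batch_size):
            idx = order[start:start + batch_size]
            residual = w * x[idx] + b - y[idx]
            # Gradients are estimated from the mini-batch only, which is cheap.
            w -= learning_rate * 2.0 * np.mean(residual * x[idx])
            b -= learning_rate * 2.0 * np.mean(residual)
    return w, b

# Hypothetical noise-free data generated from y = 2x + 0.5.
x = np.linspace(-1.0, 1.0, 50)
y = 2.0 * x + 0.5
w_sgd, b_sgd = sgd(x, y)
print(w_sgd, b_sgd)
```

Each update is noisier than a full-batch step but far cheaper, and many more updates happen per pass over the data.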

Deep Learning Lab

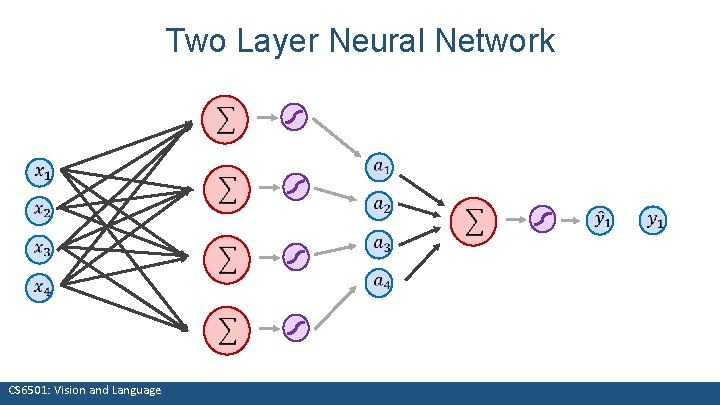

Two Layer Neural Network

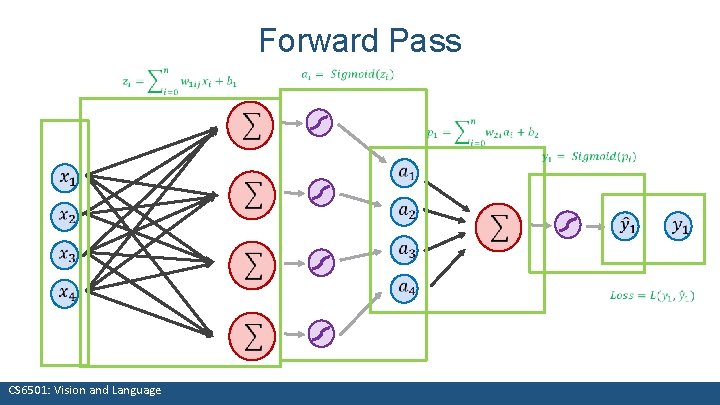

Forward Pass

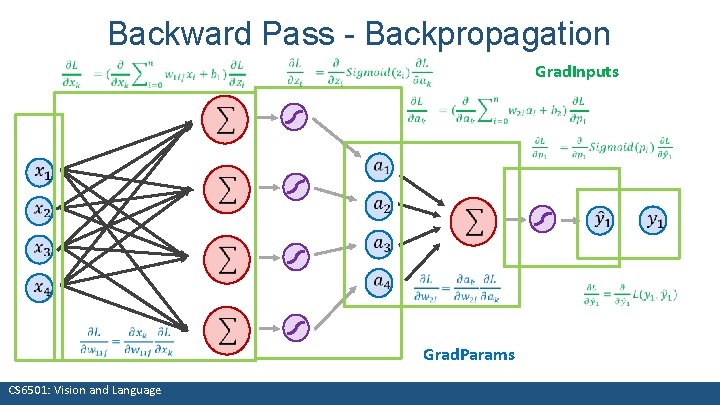

Backward Pass - Backpropagation

• Grad. Inputs
• Grad. Params

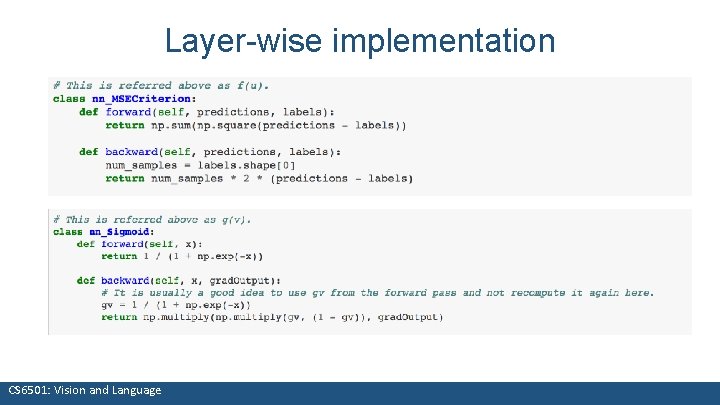
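To make the forward and backward passes concrete, here is a sketch of a two-layer network with hand-written backpropagation. It assumes sigmoid activations in both layers and a squared-error loss, which may not match the figures; all function and variable names are hypothetical. The backward pass returns both kinds of gradients named on the slide: gradients with respect to the parameters and the gradient with respect to the input.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def forward(x, y, W1, b1, W2, b2):
    """Forward pass: two sigmoid layers followed by a squared-error loss."""
    a1 = sigmoid(W1 @ x + b1)
    a2 = sigmoid(W2 @ a1 + b2)
    loss = 0.5 * np.sum((a2 - y) ** 2)
    return loss, (x, a1, a2)

def backward(y, W1, W2, cache):
    """Backward pass: apply the chain rule from the loss back to the input."""
    x, a1, a2 = cache
    dz2 = (a2 - y) * a2 * (1.0 - a2)   # through the loss and second sigmoid
    dW2, db2 = np.outer(dz2, a1), dz2  # grad. params of layer 2
    da1 = W2.T @ dz2                   # grad. input of layer 2
    dz1 = da1 * a1 * (1.0 - a1)        # through the first sigmoid
    dW1, db1 = np.outer(dz1, x), dz1   # grad. params of layer 1
    dx = W1.T @ dz1                    # grad. input of layer 1
    return dW1, db1, dW2, db2, dx

# Hypothetical sizes: 3 inputs, 4 hidden units, 2 outputs.
rng = np.random.default_rng(0)
x, y = rng.normal(size=3), np.array([1.0, 0.0])
W1, b1 = rng.normal(size=(4, 3)), np.zeros(4)
W2, b2 = rng.normal(size=(2, 4)), np.zeros(2)
loss, cache = forward(x, y, W1, b1, W2, b2)
dW1, db1, dW2, db2, dx = backward(y, W1, W2, cache)
print(loss, dW1.shape, dW2.shape, dx.shape)
```

A common sanity check for code like this is to compare one analytic gradient entry against a finite-difference estimate of the loss.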

Layer-wise implementation

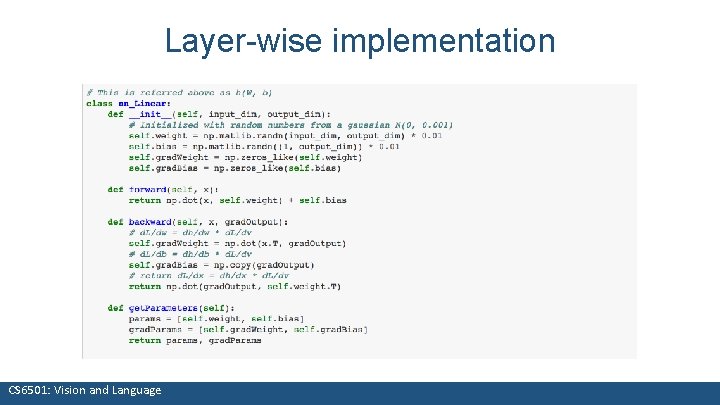

Layer-wise implementation
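One way to organize a layer-wise implementation is to give every layer its own `forward` and `backward` method, so that a network is just a list of layers. The sketch below is illustrative only: the class names, method names, and sizes are hypothetical and are not the interface of any particular library or of the course lab.

```python
import numpy as np

class Linear:
    def __init__(self, n_in, n_out, rng):
        self.W = rng.normal(scale=0.1, size=(n_out, n_in))
        self.b = np.zeros(n_out)

    def forward(self, x):
        self.x = x  # cache the input for the backward pass
        return self.W @ x + self.b

    def backward(self, grad_output):
        # Grad. params: stored on the layer for a later update step.
        self.grad_W = np.outer(grad_output, self.x)
        self.grad_b = grad_output
        # Grad. inputs: passed on to the previous layer.
        return self.W.T @ grad_output

class Sigmoid:
    def forward(self, x):
        self.out = 1.0 / (1.0 + np.exp(-x))
        return self.out

    def backward(self, grad_output):
        return grad_output * self.out * (1.0 - self.out)

def run_forward(layers, x):
    for layer in layers:
        x = layer.forward(x)
    return x

def run_backward(layers, grad):
    for layer in reversed(layers):
        grad = layer.backward(grad)
    return grad

rng = np.random.default_rng(0)
layers = [Linear(3, 4, rng), Sigmoid(), Linear(4, 2, rng), Sigmoid()]
x, y = np.array([0.5, -1.0, 2.0]), np.array([1.0, 0.0])
out = run_forward(layers, x)
# For the assumed loss 0.5 * ||out - y||^2, the gradient w.r.t. out is (out - y).
grad_x = run_backward(layers, out - y)
print(out, grad_x)
```

The appeal of this design is that adding a new kind of layer only requires writing its own `forward` and `backward`; the loops over the layer list stay unchanged.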

Automatic Differentiation

You only need to write code for the forward pass; the backward pass is computed automatically.

https://github.com/nlintz/TensorFlow-Tutorials/blob/master/03_net.ipynb
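To give a feel for how the backward pass can be derived automatically, here is a toy reverse-mode automatic differentiation sketch in plain Python. It is not how TensorFlow or any real framework is implemented; the `Value` class is a hypothetical helper that supports only `+` and `*` between `Value` objects.

```python
class Value:
    """A scalar that remembers which values it was computed from."""

    def __init__(self, data, parents=(), local_grads=()):
        self.data = data
        self.grad = 0.0
        self._parents = parents
        self._local_grads = local_grads  # d(self)/d(parent) for each parent

    def __add__(self, other):
        return Value(self.data + other.data, (self, other), (1.0, 1.0))

    def __mul__(self, other):
        return Value(self.data * other.data, (self, other),
                     (other.data, self.data))

    def backward(self):
        # Order the graph so each node is processed after everything that uses it.
        order, seen = [], set()

        def visit(v):
            if id(v) not in seen:
                seen.add(id(v))
                for p in v._parents:
                    visit(p)
                order.append(v)

        visit(self)
        self.grad = 1.0
        for v in reversed(order):
            for p, g in zip(v._parents, v._local_grads):
                p.grad += g * v.grad  # chain rule, accumulated over all uses

# Only the forward computation is written by hand: L = (w * x + b)^2.
w, x, b = Value(3.0), Value(2.0), Value(1.0)
y = w * x + b
L = y * y
L.backward()
print(L.data, w.grad, x.grad, b.grad)
```

Here the derivatives dL/dw, dL/dx and dL/db come out of `backward()` without any gradient formulas being written for this particular expression, which is the point the slide makes.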

Questions?
