Specializing Word Embeddings for Parsing by Information Bottleneck

Specializing Word Embeddings (for Parsing) by Information Bottleneck: Threshing Word Embeddings. Xiang Lisa Li and Jason Eisner

Pre-trained word embeddings (BERT, ELMo) contain syntactic knowledge.

How to extract syntax automatically? Specialize the embeddings for the task (parsing)!

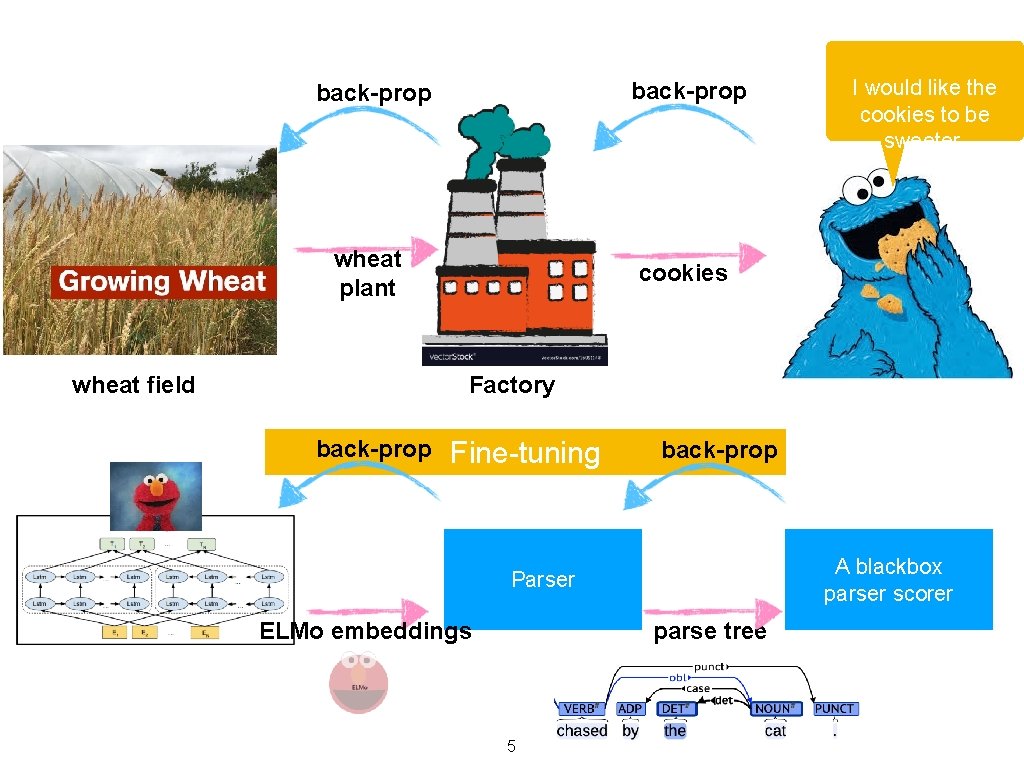

[Figure: fine-tuning as a cookie factory. Labels: wheat field, wheat plant, factory, cookies, back-prop. Customer feedback: "I would like the cookies to be sweeter."]

[Figure: the same fine-tuning picture alongside the parsing pipeline. Labels: ELMo embeddings, parser, parse tree, a blackbox parser scorer, back-prop.]

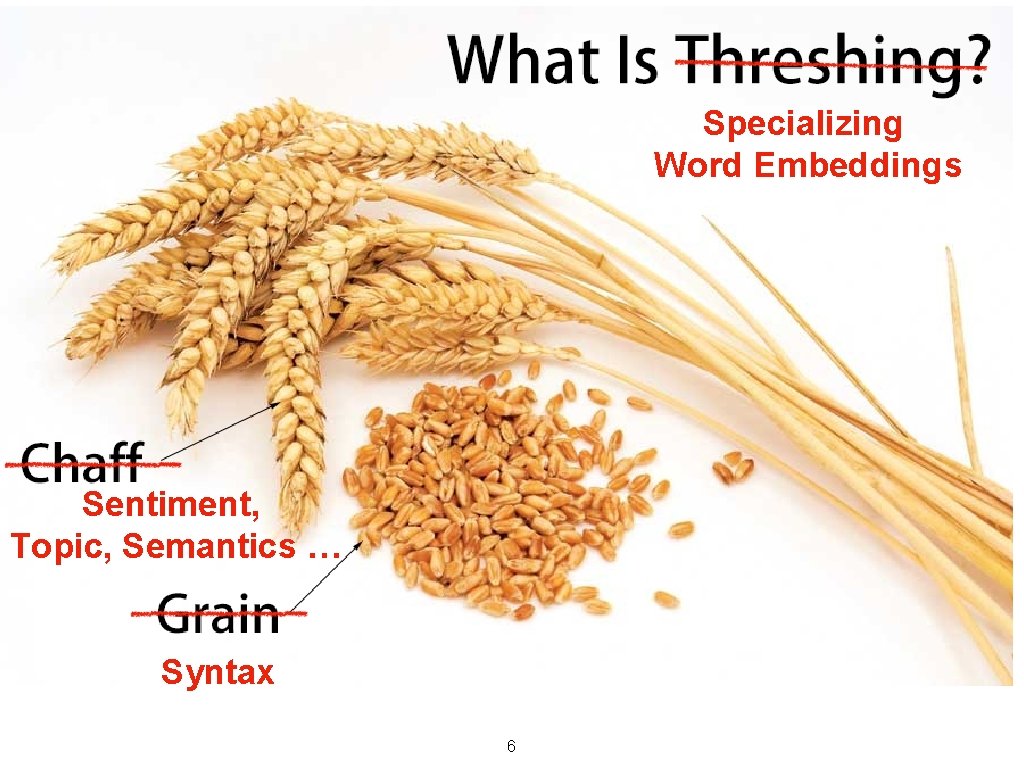

Specializing Word Embeddings: Sentiment, Topic, Semantics, … Syntax

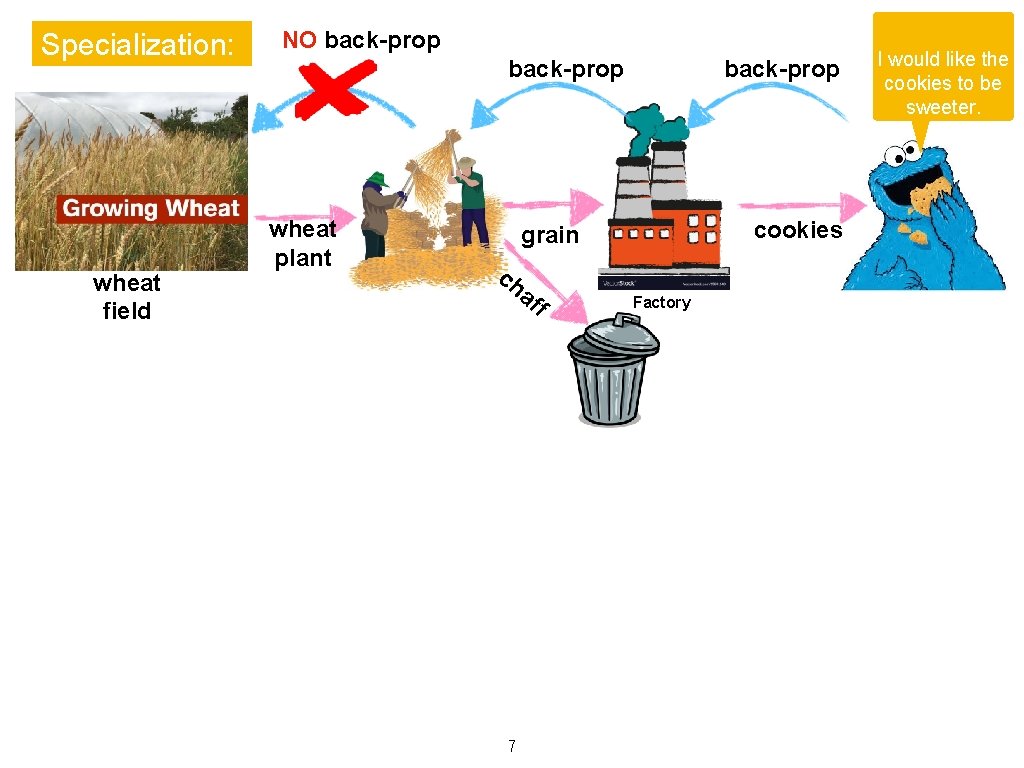

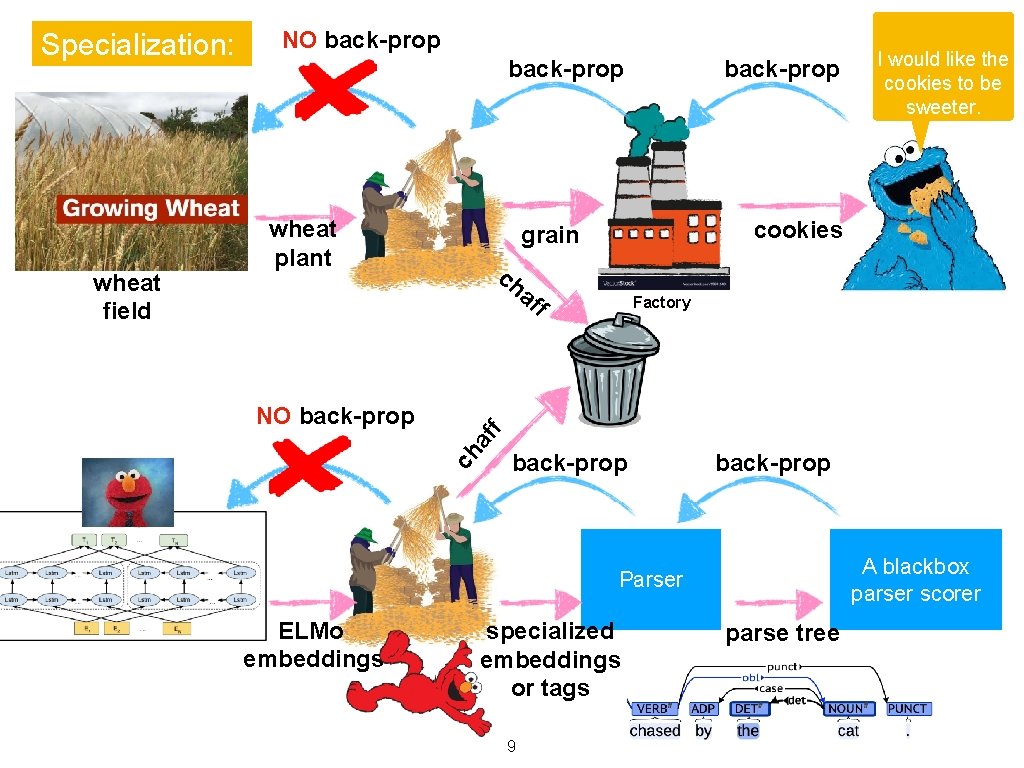

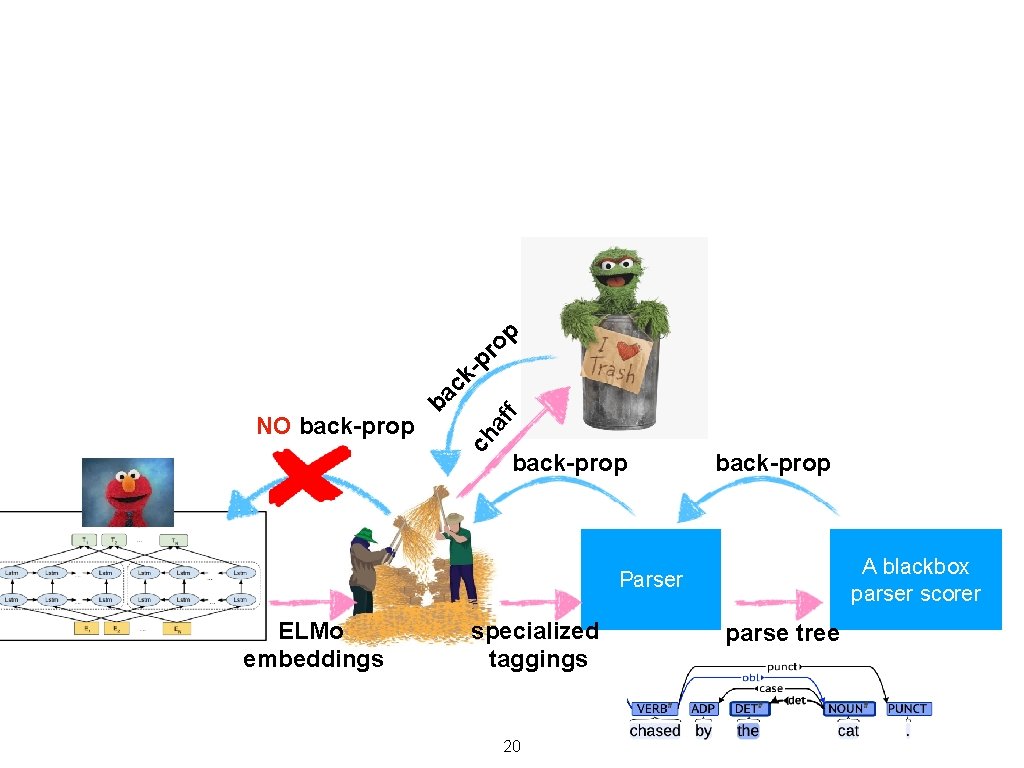

[Figure: specialization in the factory picture. Labels: wheat field (NO back-prop), wheat plant, grain, chaff, factory, cookies, back-prop. Customer feedback: "I would like the cookies to be sweeter."]

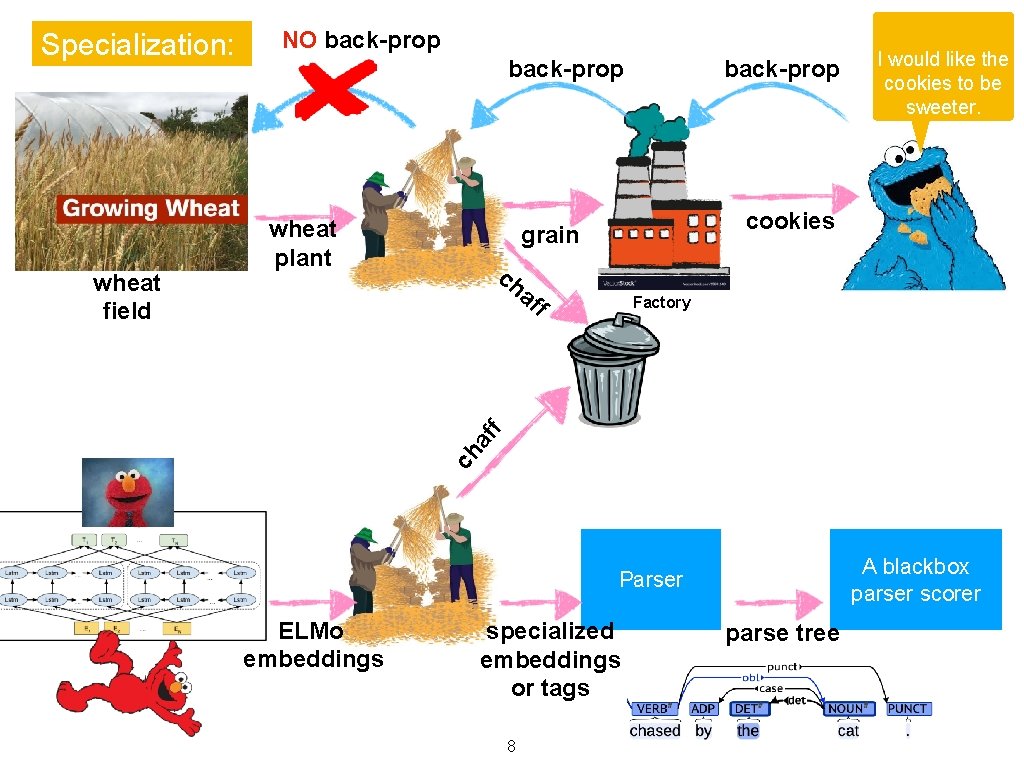

[Figure: specialization alongside the parsing pipeline. Labels: ELMo embeddings (NO back-prop), specialized embeddings or tags, parser, parse tree, a blackbox parser scorer, back-prop. Factory labels: wheat field (NO back-prop), wheat plant, grain, chaff, factory, cookies.]

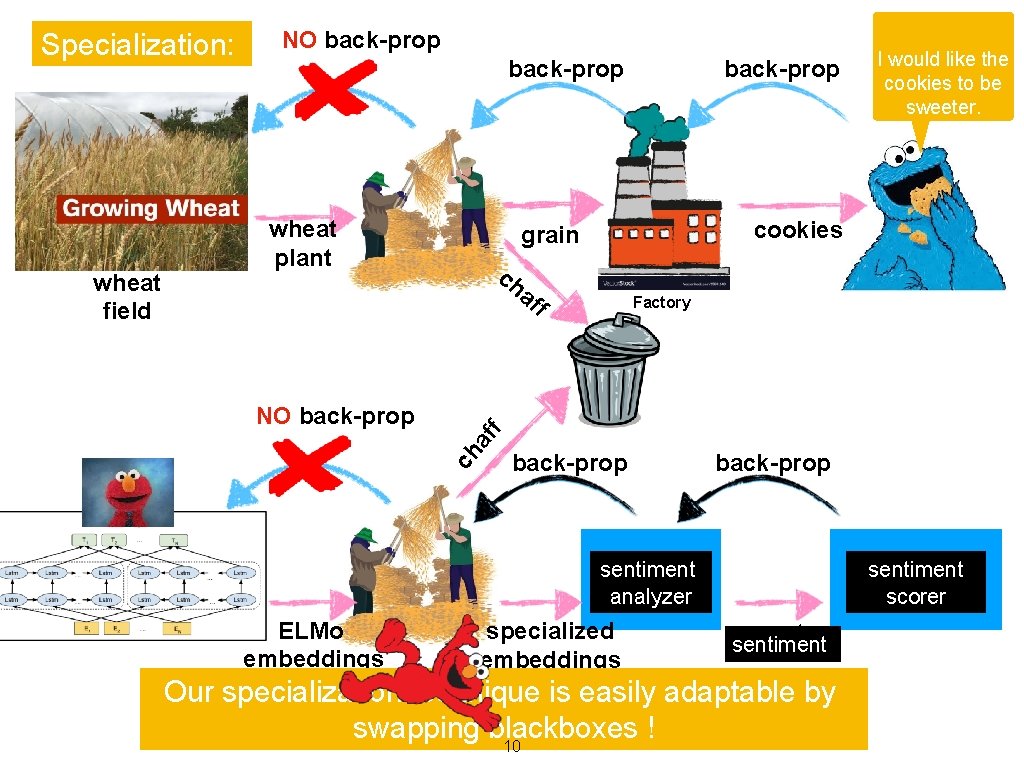

[Figure: the specialization picture with the blackbox parser scorer swapped for a blackbox sentiment scorer, the parser for a sentiment analyzer, and the parse tree for a sentiment label.]

Our specialization technique is easily adaptable by swapping blackboxes!

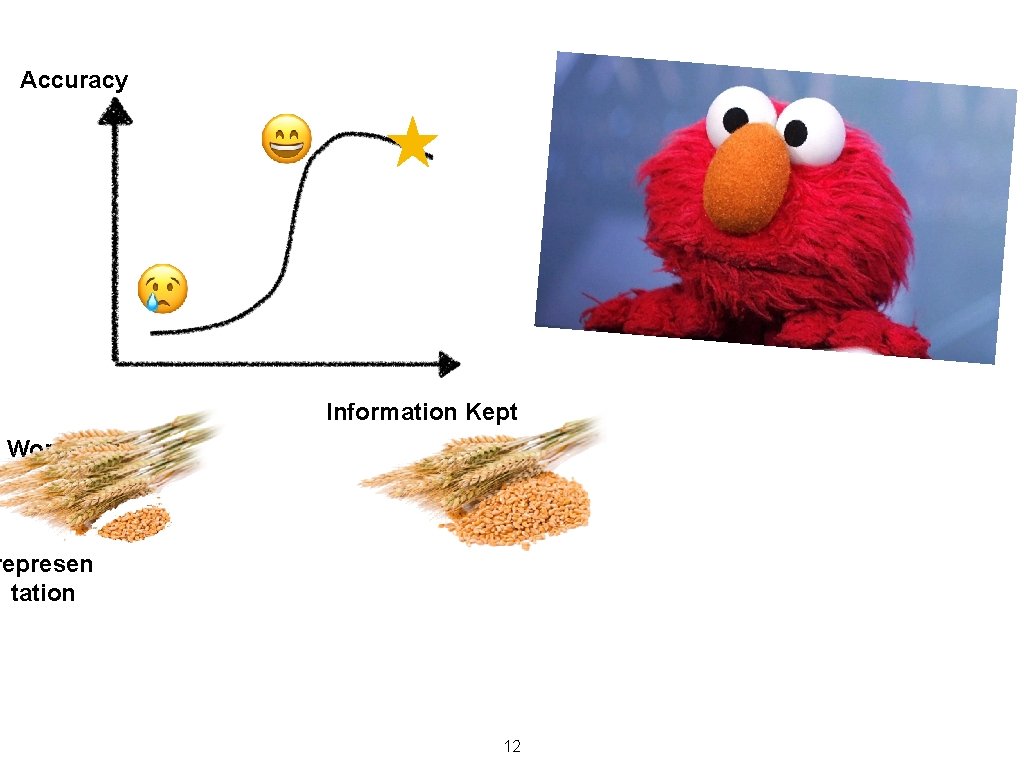

Pros of Specialization vs. Fine-Tuning
• Faster: trains on 100 sentences per second.
• Better generalization: few parameters, so harder to overfit.

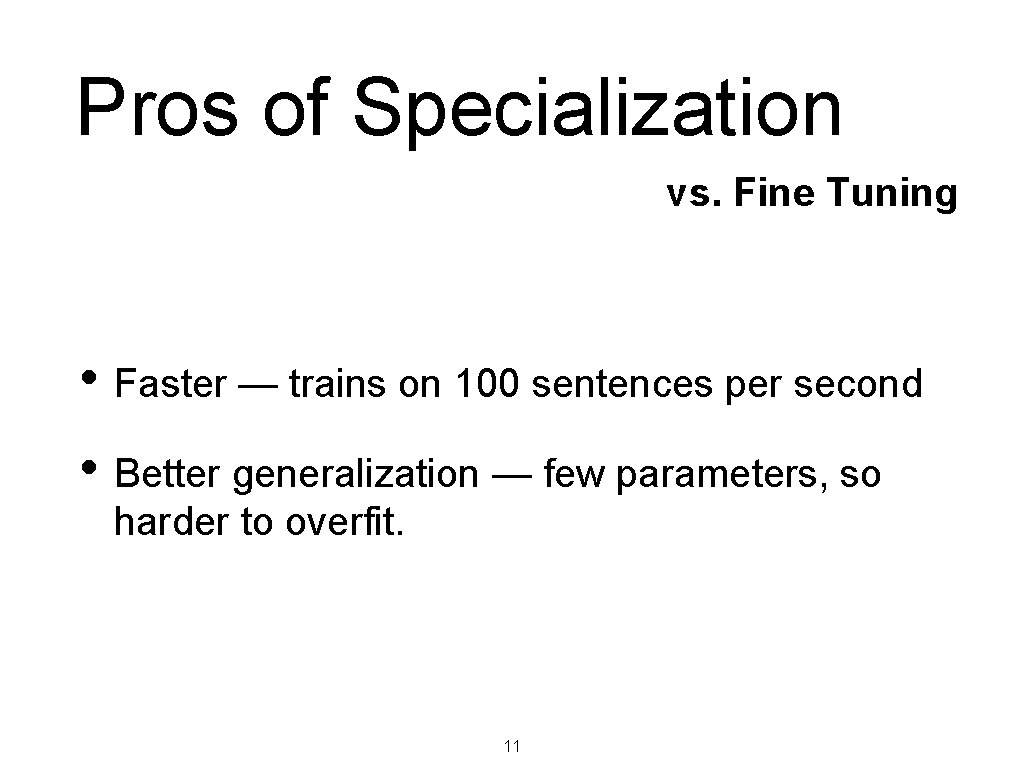

[Plot: accuracy (y-axis) vs. information kept (x-axis). Labels: worse accuracy (smaller representation), best accuracy, baseline accuracy, worse accuracy (bigger representation).]

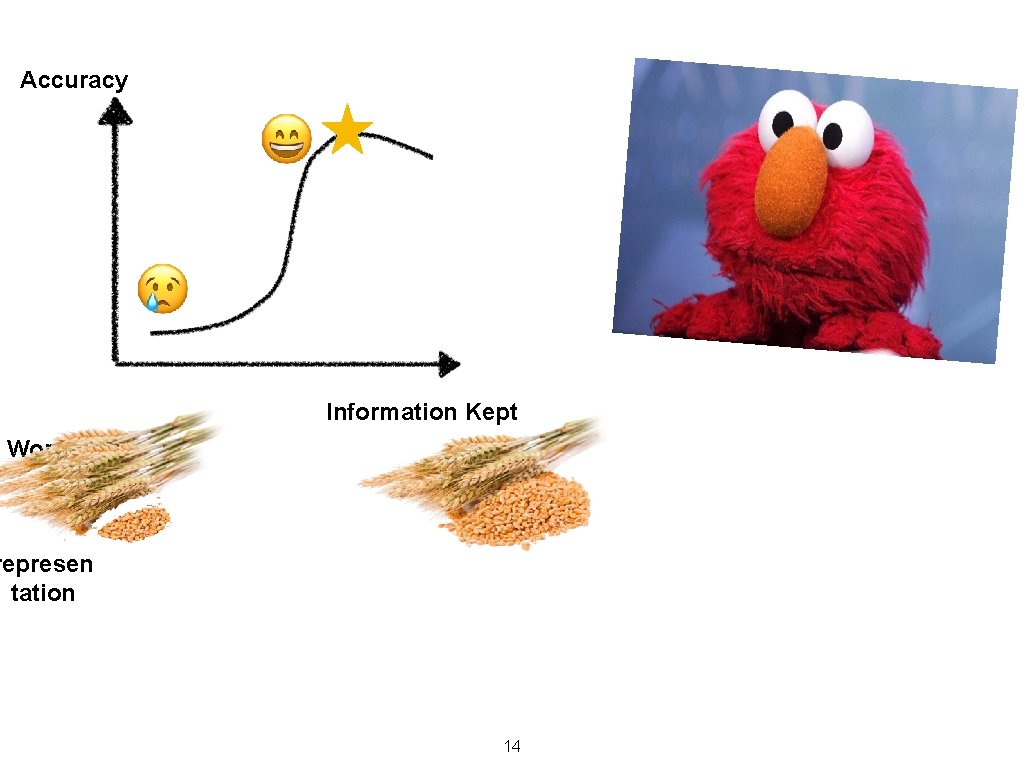

Accuracy Information Kept Worse Accurac Best Accuracy y Smaller represen tation Baseline Accuracy Worse Accuracy Bigger representation Smaller representation 14

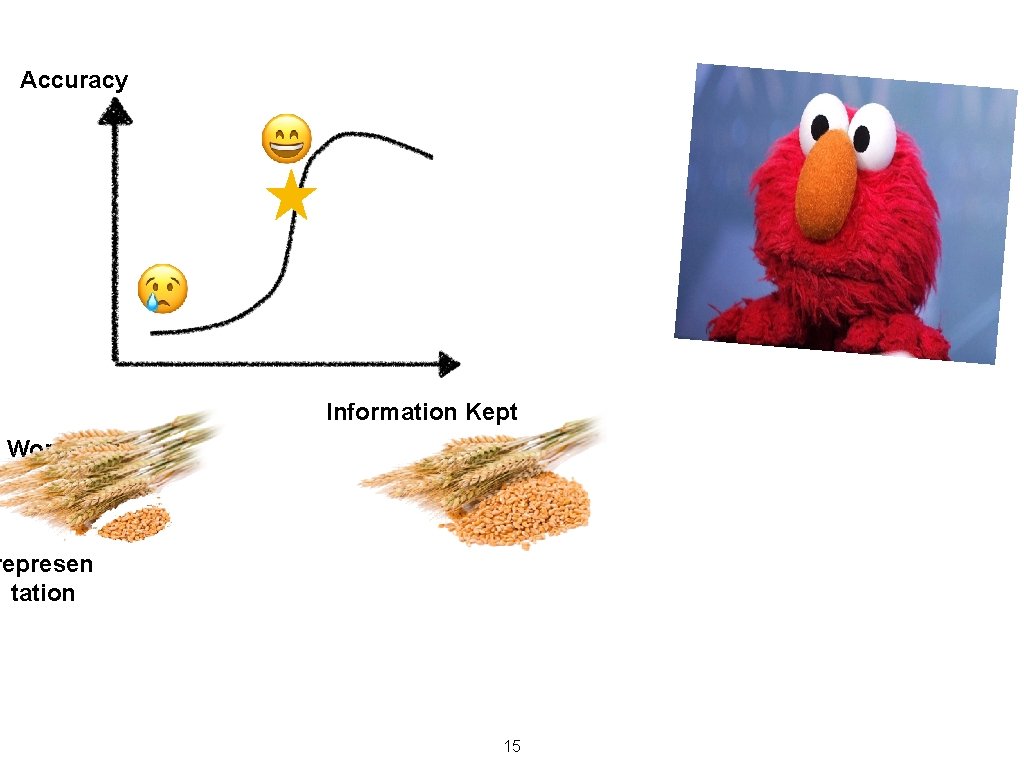

Accuracy Information Kept Worse Accurac Best Accuracy y Smaller represen tation Baseline Accuracy Worse Accuracy Bigger representation Smaller representation 15

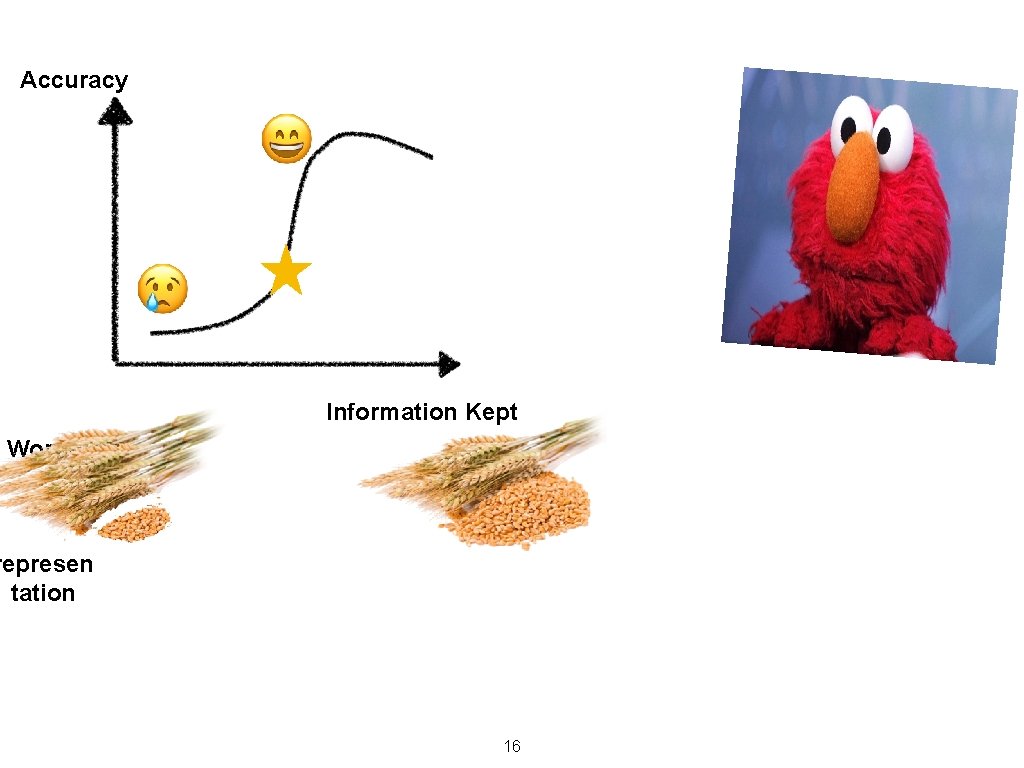

Accuracy Information Kept Worse Accurac Best Accuracy y Smaller represen tation Baseline Accuracy Worse Accuracy Bigger representation Smaller representation 16

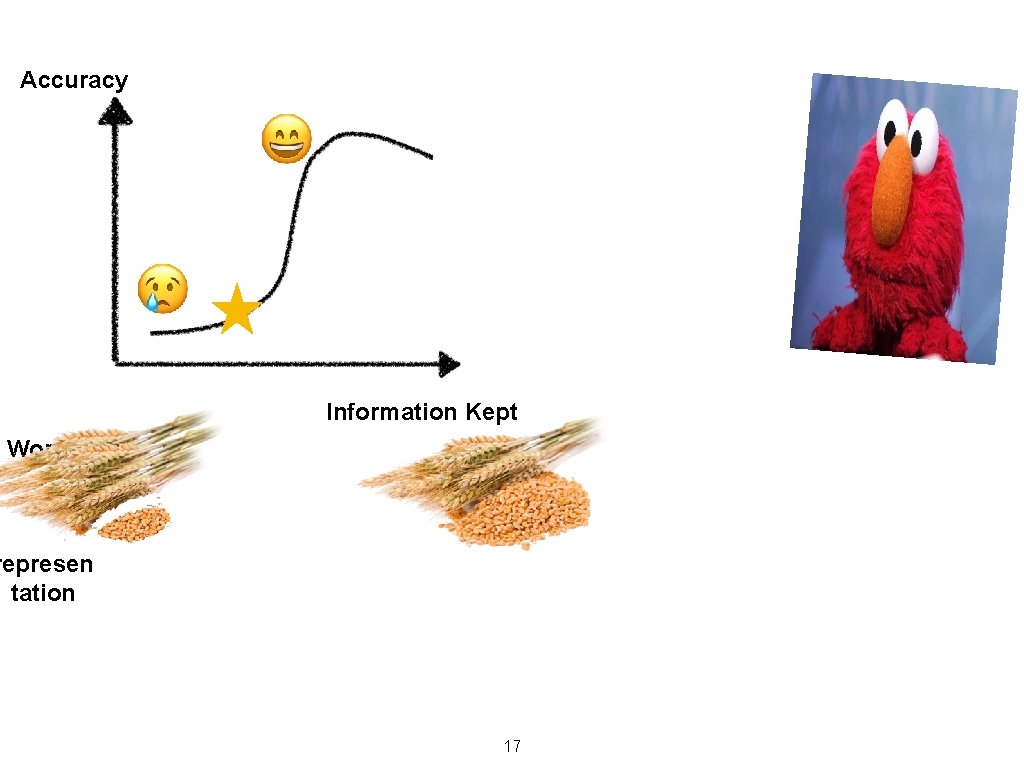

Accuracy Information Kept Worse Accurac Best Accuracy y Smaller represen tation Baseline Accuracy Worse Accuracy Bigger representation Smaller representation 17

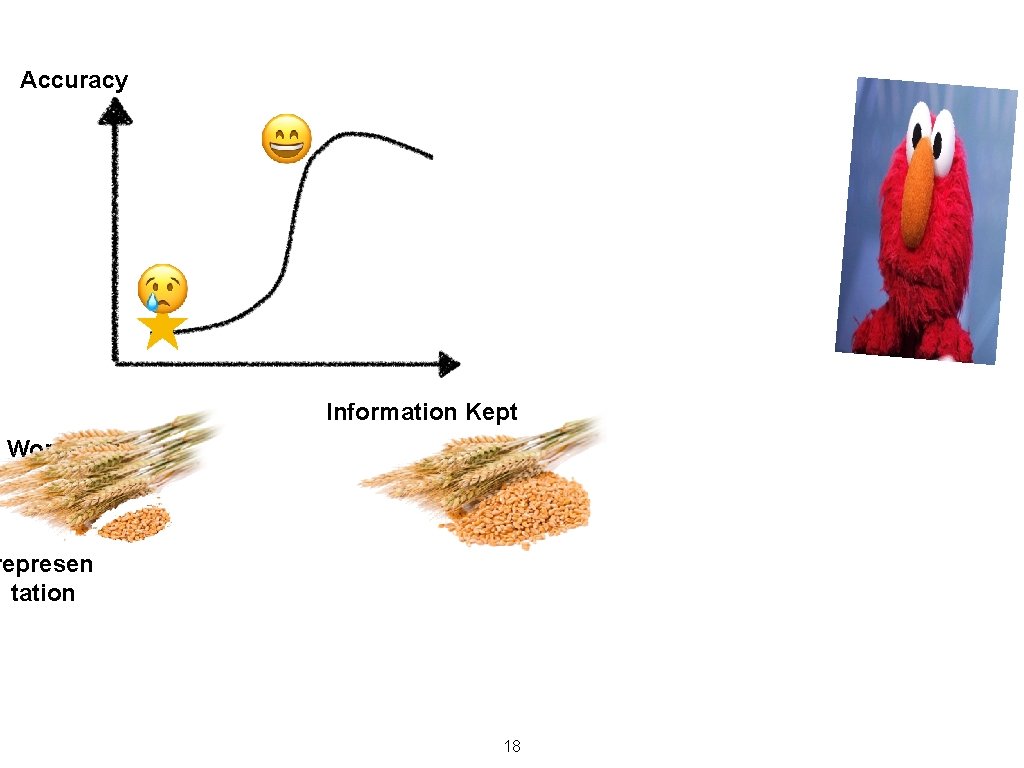

Accuracy Information Kept Worse Accurac Best Accuracy y Smaller represen tation Baseline Accuracy Worse Accuracy Bigger representation Smaller representation 18

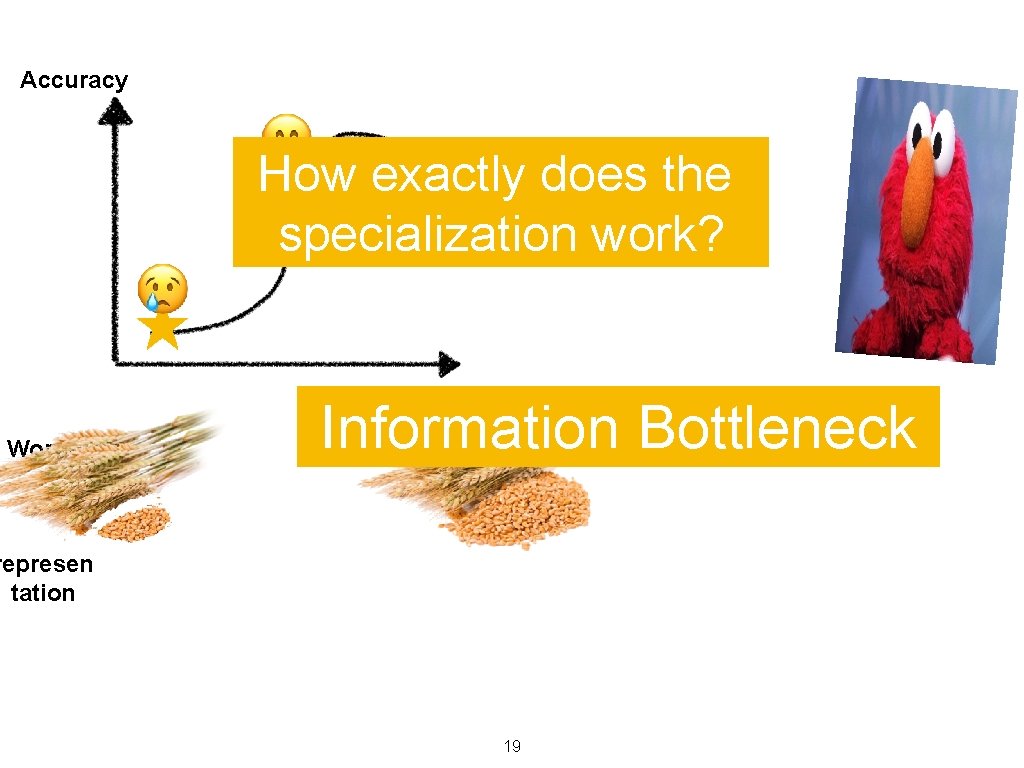

How exactly does the specialization work? Information Bottleneck.

[Figure: the specialization pipeline. Labels: ELMo embeddings (NO back-prop), specialized taggings, chaff, parser, parse tree, a blackbox parser scorer, back-prop.]

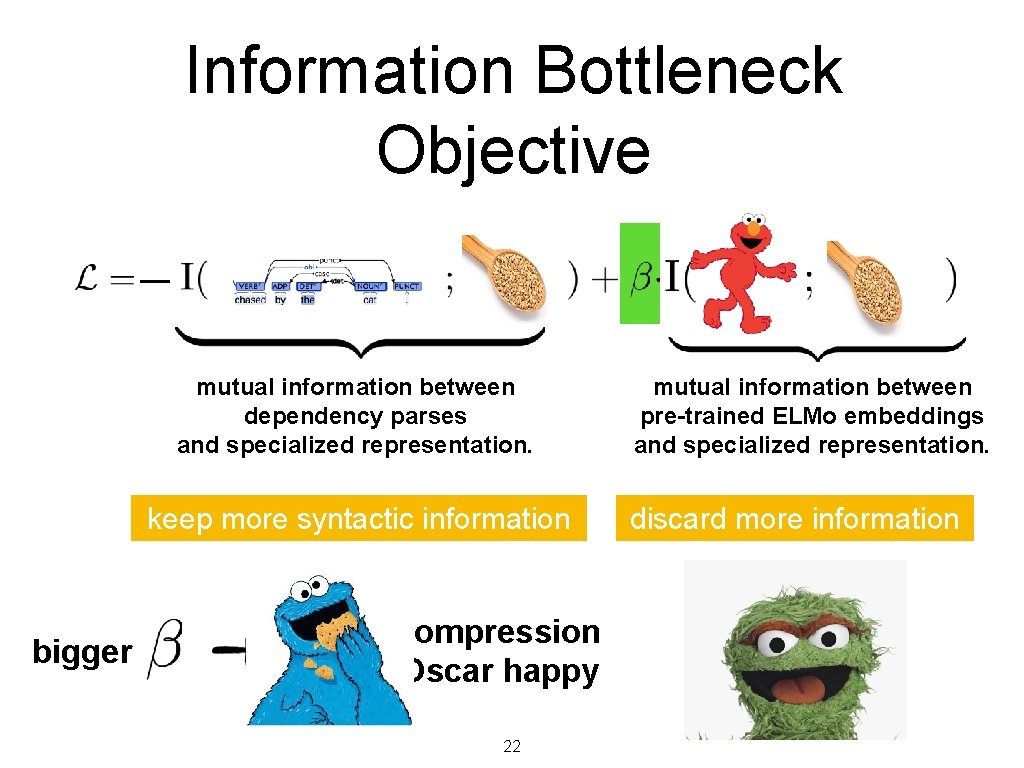

Information Bottleneck Objective
• Mutual information between the dependency parses and the specialized representation: keep more syntactic information.
• Mutual information between the pre-trained ELMo embeddings and the specialized representation: discard more information (make Oscar happy).
• A bigger trade-off weight means more compression.
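The formula itself did not survive in this transcript. A sketch of the objective in its standard information-bottleneck form follows, under assumed notation: $X$ for the pre-trained ELMo embeddings, $Y$ for the dependency parse, $T$ for the specialized representation, and $\beta \ge 0$ for the trade-off weight. These symbols are assumptions, not taken from the slides.

```latex
\mathcal{L}_{\mathrm{IB}} \;=\; -\,I(Y;T) \;+\; \beta \, I(X;T)
```

Minimizing the first term keeps information about the parse in $T$; minimizing the second discards information about $X$, and a bigger $\beta$ puts more weight on that compression.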

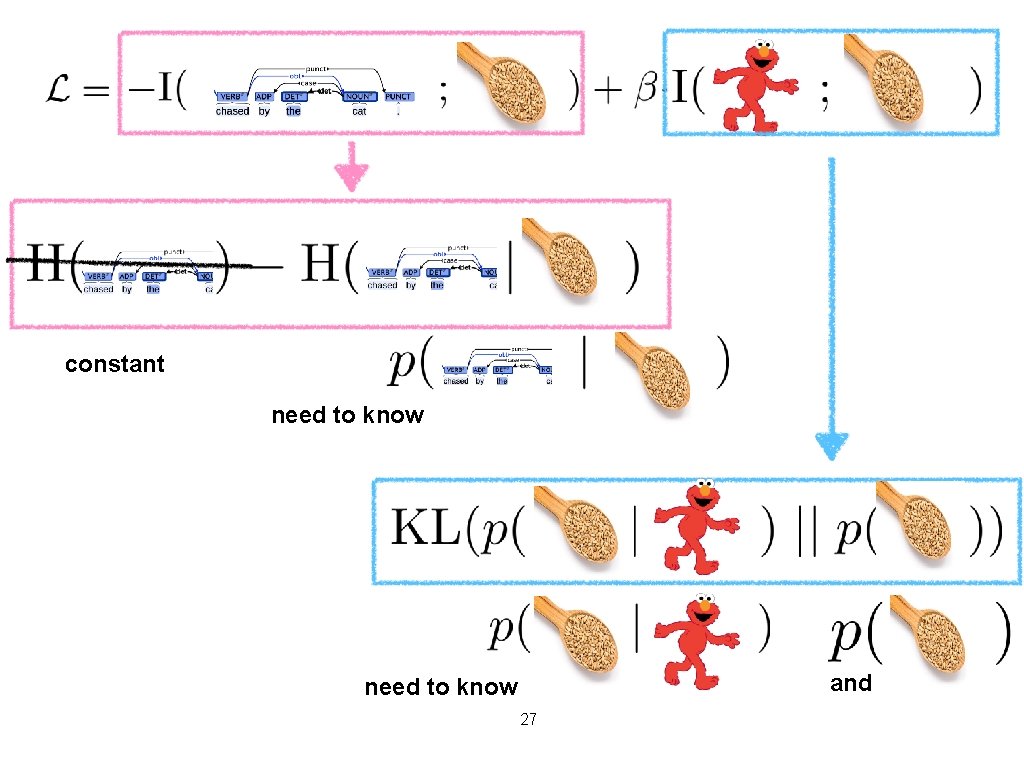

[Equation annotations: one term is constant; for the others we need to know … and need to know …]

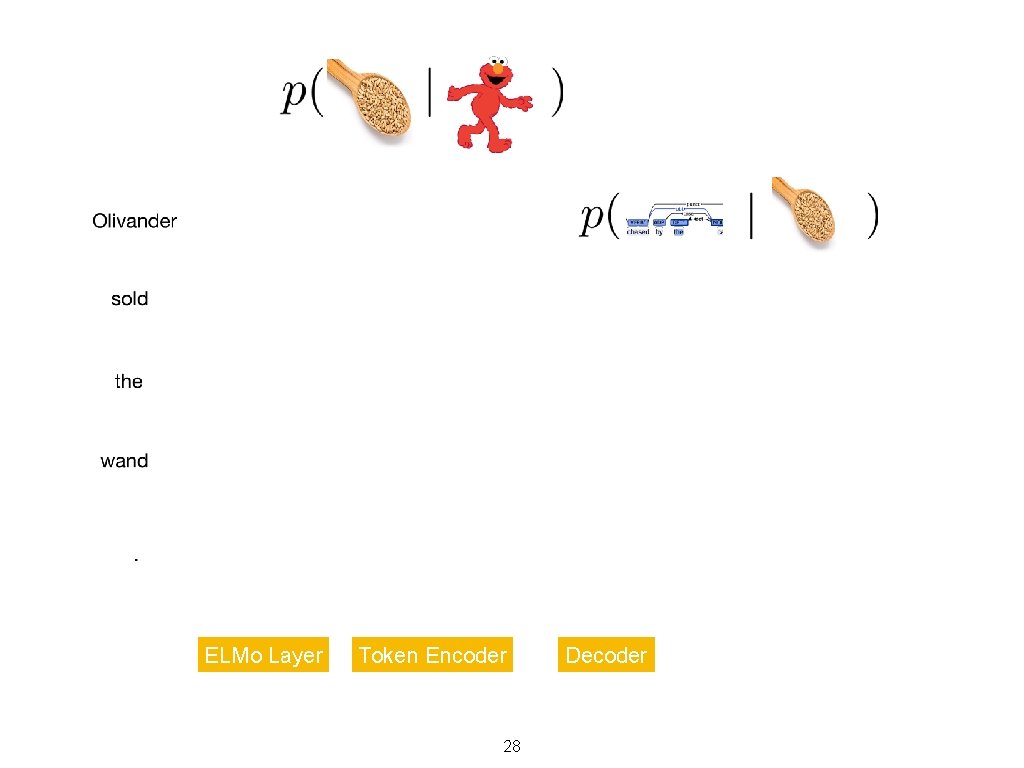

[Diagram: token → ELMo layer → encoder → decoder.]

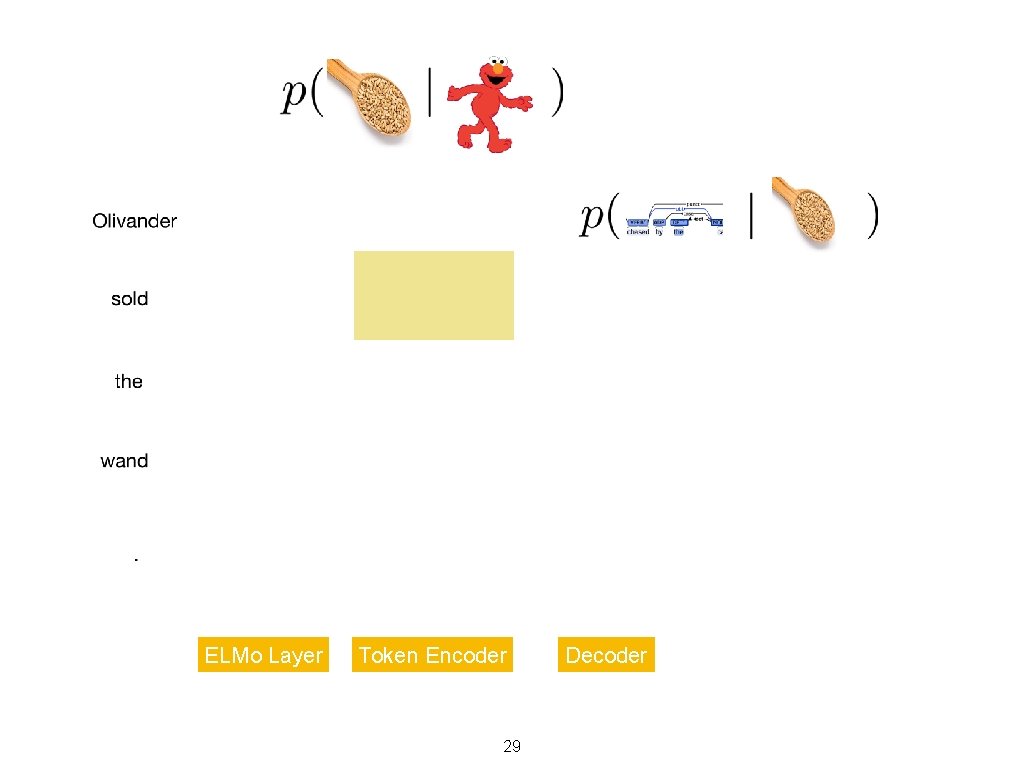


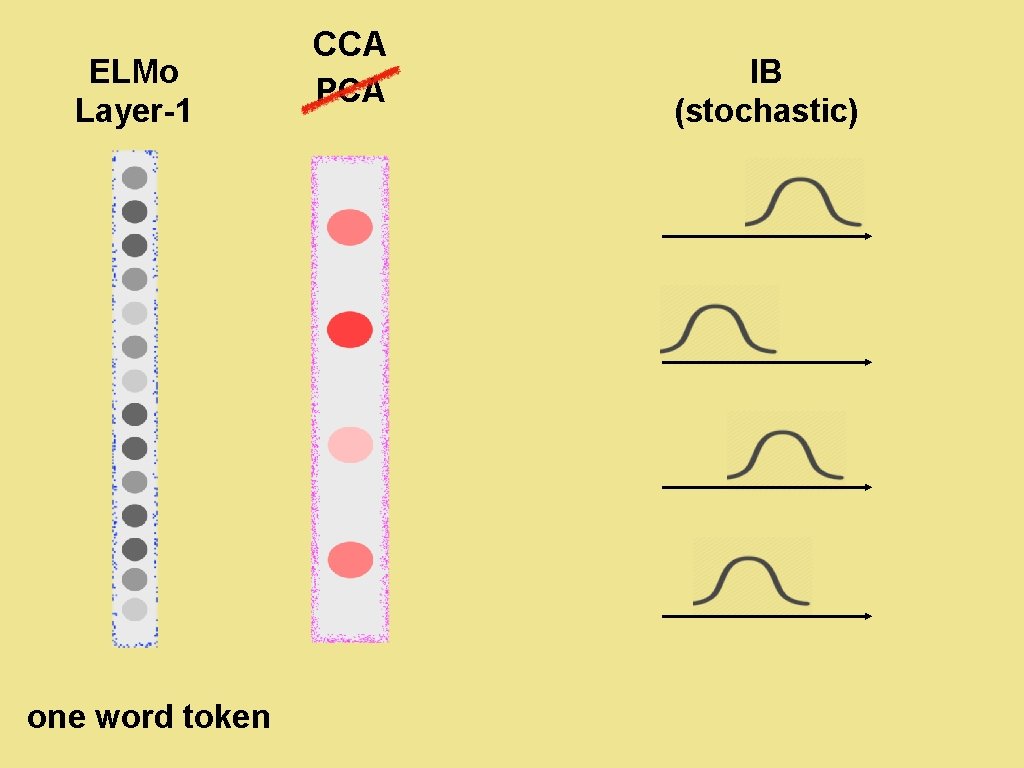

[Figure: ELMo layer 1 for one word token, processed by PCA, CCA, or IB (stochastic).]

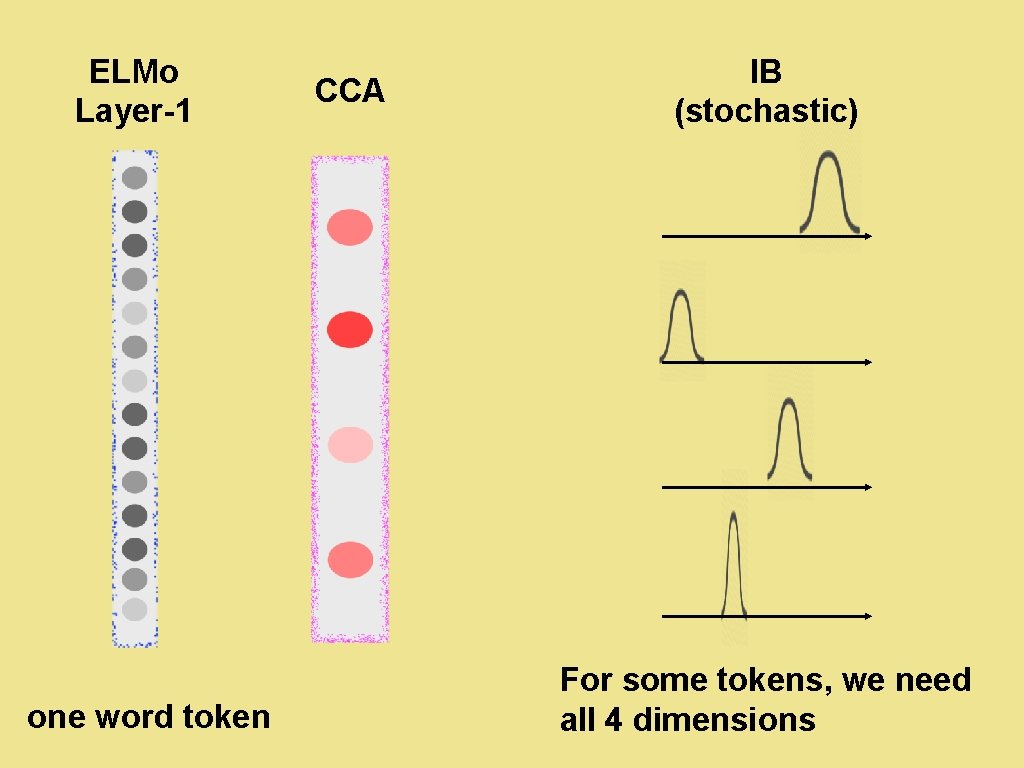

[Figure: ELMo layer 1 for one word token, CCA vs. IB (stochastic).] For some tokens, we need all 4 dimensions.

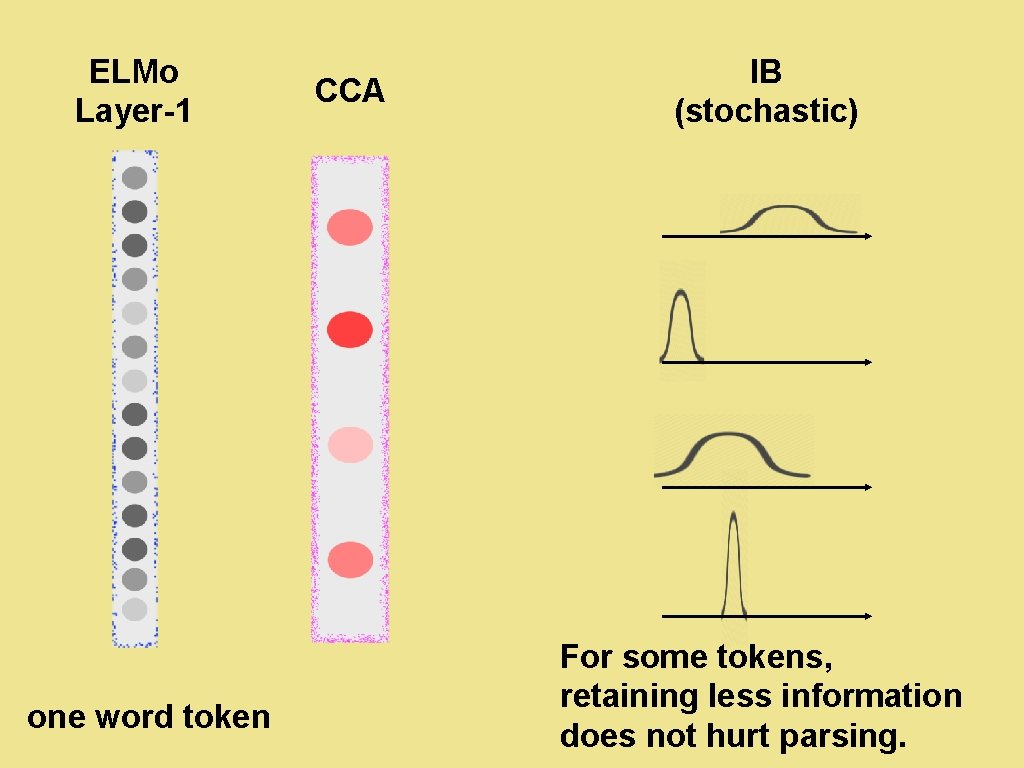

[Figure: ELMo layer 1 for one word token, CCA vs. IB (stochastic).] For some tokens, retaining less information does not hurt parsing.

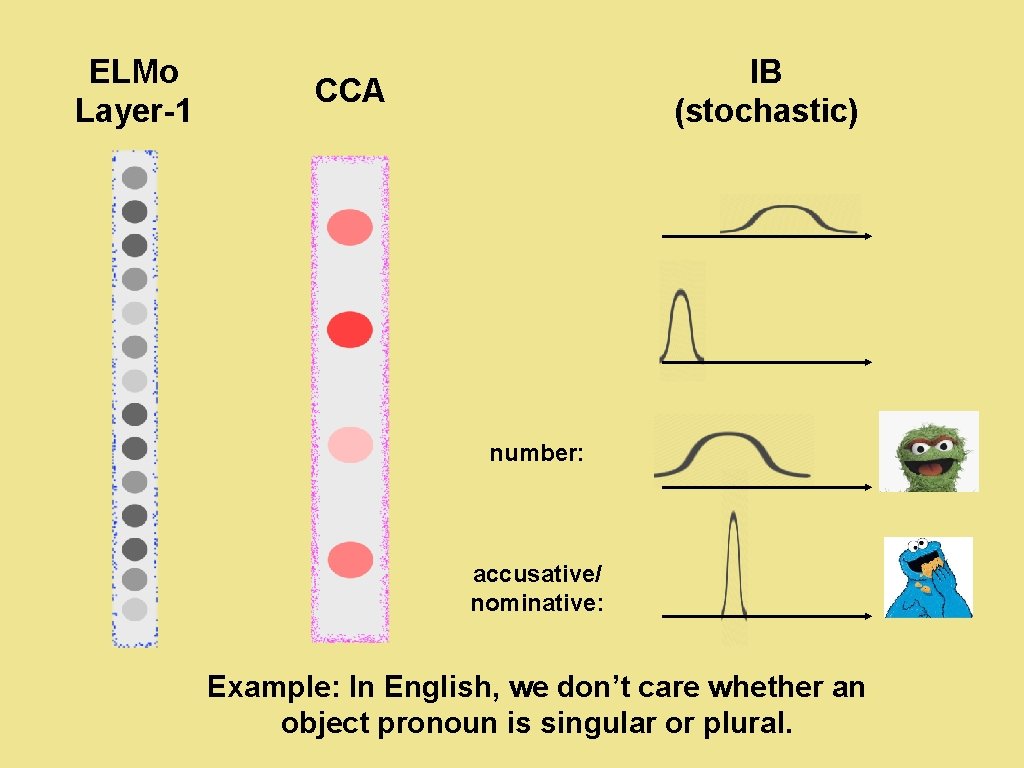

[Figure: ELMo layer 1, CCA vs. IB (stochastic), with dimensions labeled "number" and "accusative/nominative".] Example: In English, we don’t care whether an object pronoun is singular or plural.

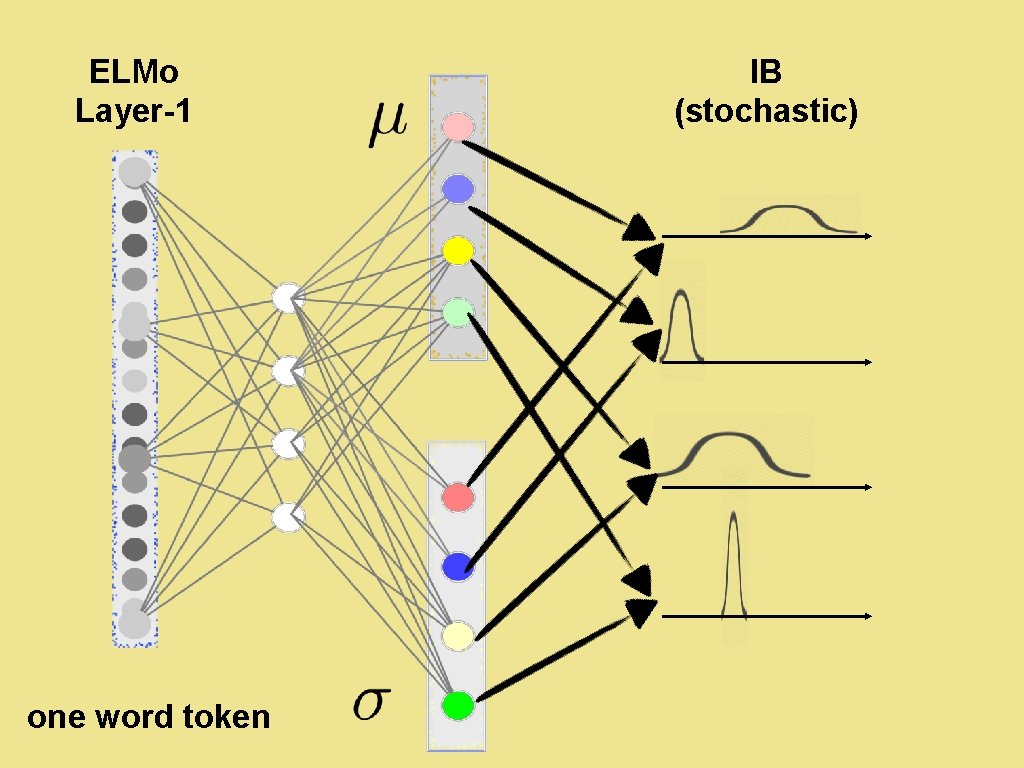

[Figure: ELMo layer 1 for one word token, IB (stochastic).]

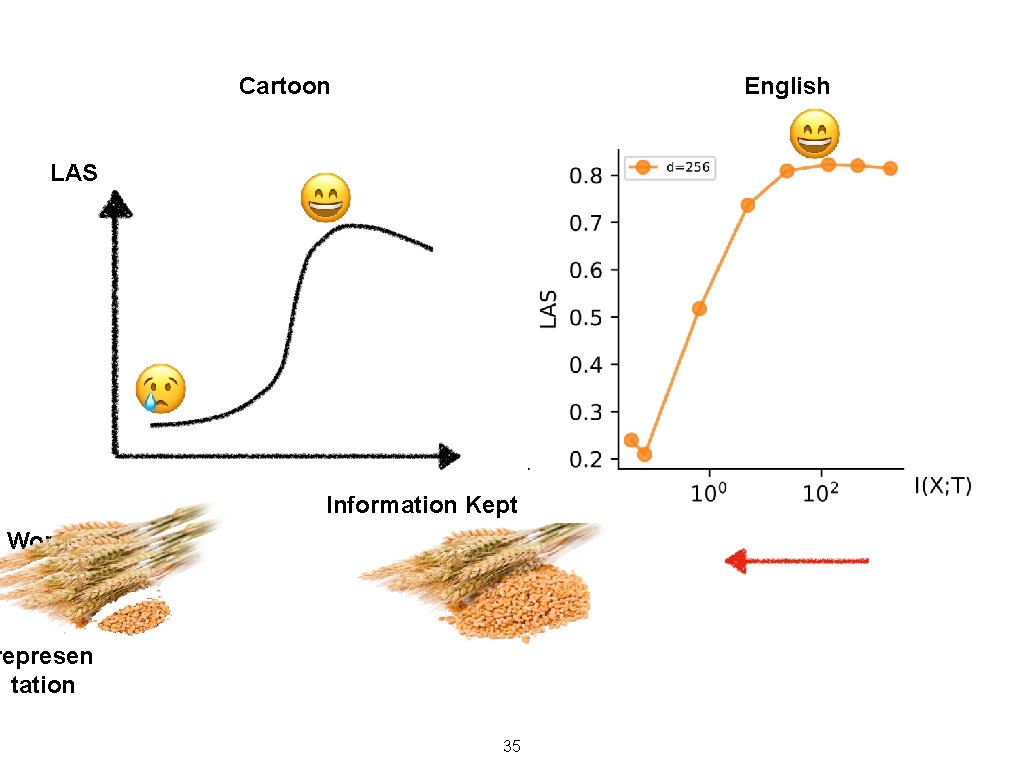

[Plot: the cartoon curve against English LAS, as a function of information kept. Label: worse accuracy (smaller representation).]

Q: Is our specialization contextual?
A: Yes, because we compress ELMo layer 1, which depends on the context.

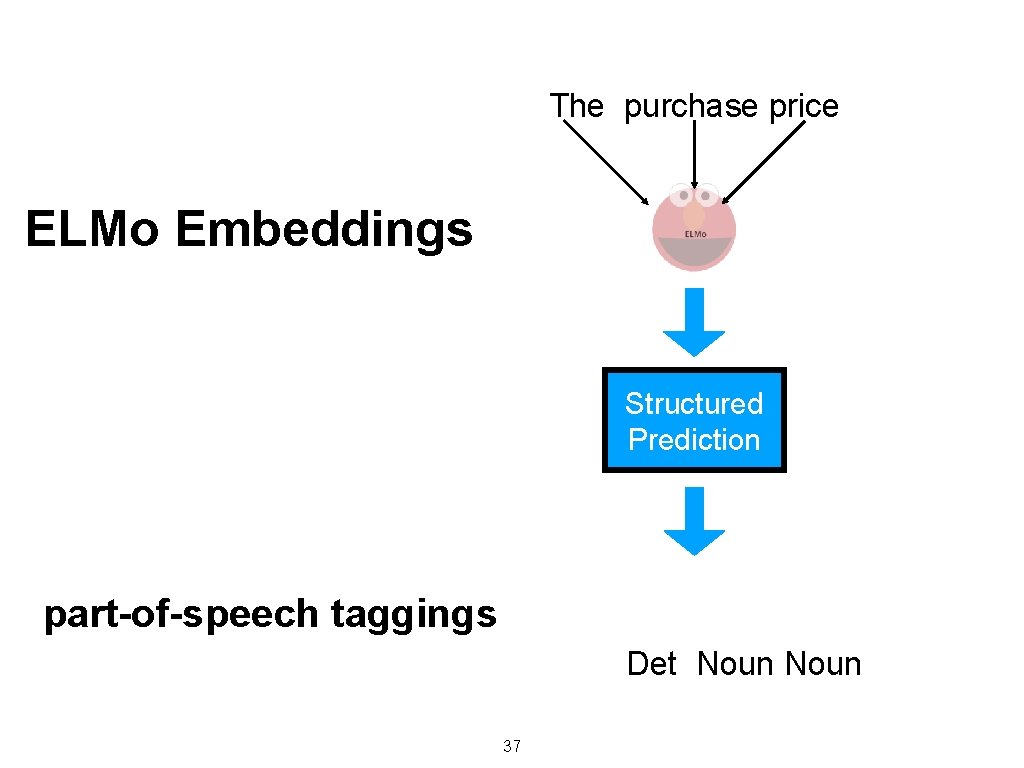

[Figure: "The purchase price" → ELMo embeddings → taggings → structured prediction. Part-of-speech taggings: Det, Noun.]

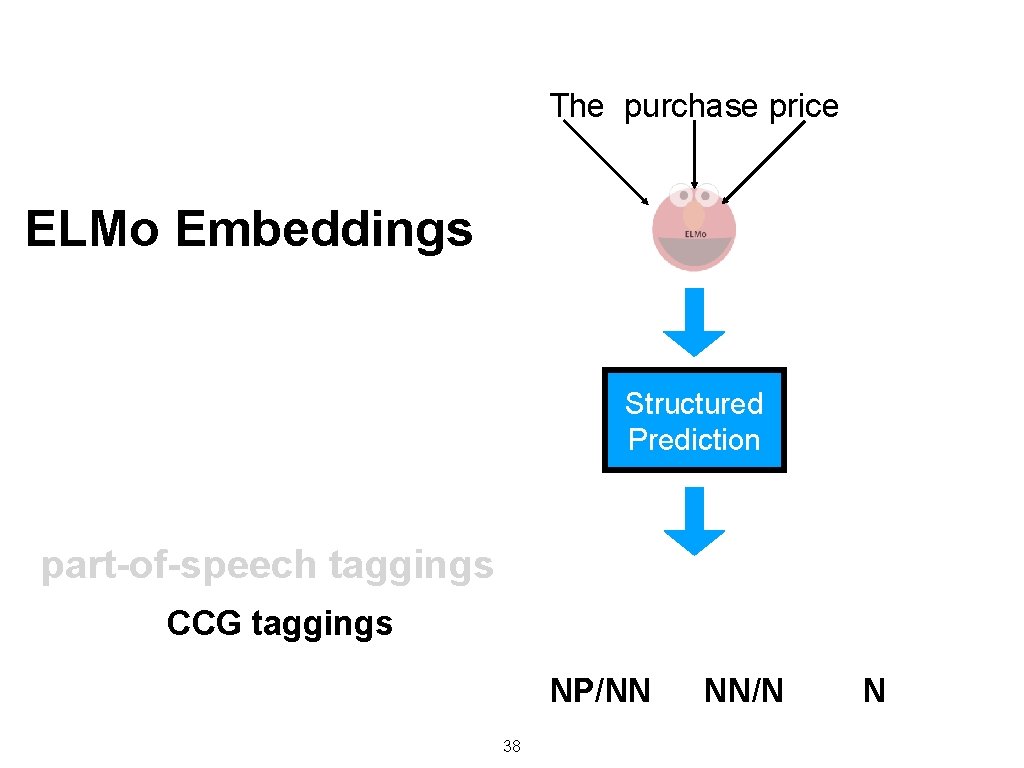

[Figure: "The purchase price" → ELMo embeddings → taggings → structured prediction. Taggings shown: part-of-speech taggings and CCG taggings (NP/NN, NN/N, N).]

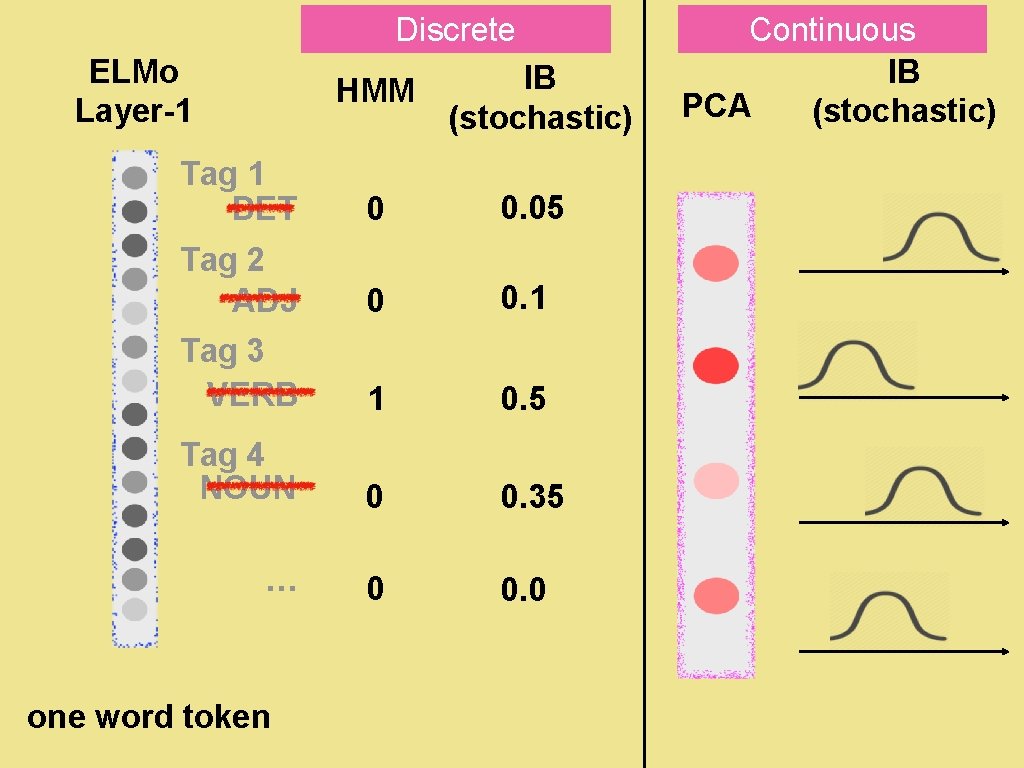

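Discrete: ELMo layer 1 for one word token, tagged by an HMM or by IB (stochastic).

| Tag | Label | HMM | IB (stochastic) |
|-------|-------|-----|-----------------|
| Tag 1 | DET | 0 | 0.05 |
| Tag 2 | ADJ | 0 | 0.1 |
| Tag 3 | VERB | 1 | 0.5 |
| Tag 4 | NOUN | 0 | 0.35 |
| … | | 0 | 0.0 |

Continuous: PCA or IB (stochastic).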

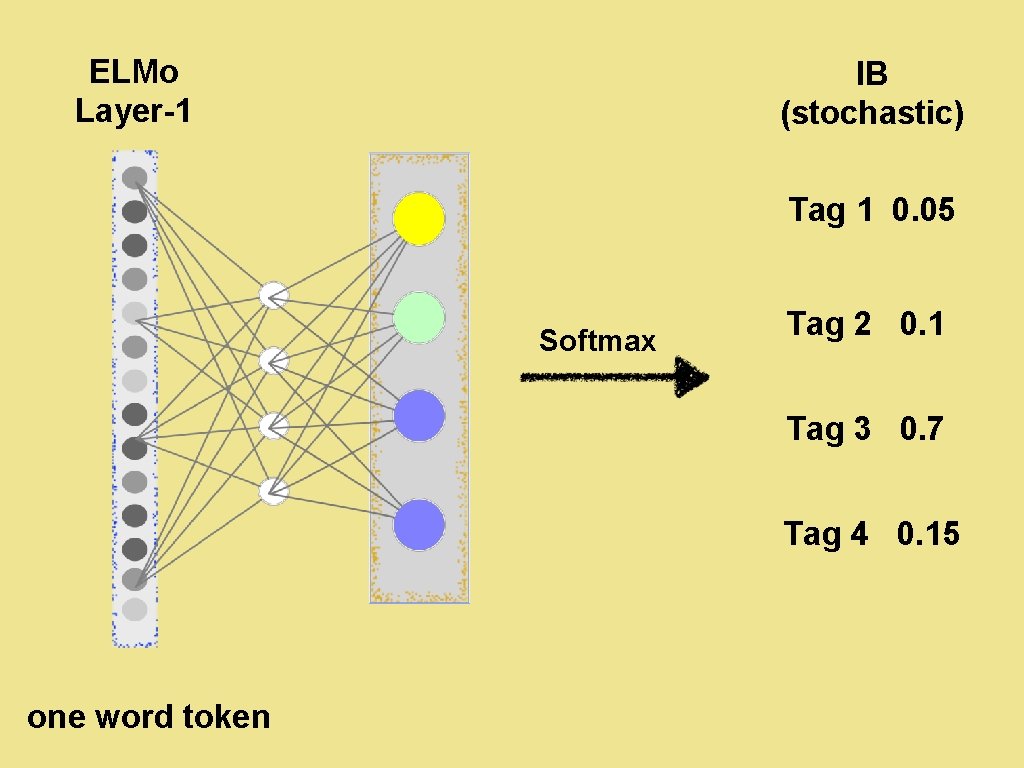

ELMo layer 1 for one word token → IB (stochastic) → softmax over tags: Tag 1: 0.05, Tag 2: 0.1, Tag 3: 0.7, Tag 4: 0.15.
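A softmax layer followed by a stochastic tag choice can be sketched as below. This is a minimal illustration, not the authors' encoder: the logits are hypothetical values chosen so that the softmax reproduces the probabilities on the slide, and `softmax` and `sample_tag` are illustrative helper names.

```python
import math
import random


def softmax(logits):
    """Turn real-valued scores into a probability distribution."""
    m = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def sample_tag(probs, rng):
    """Draw one tag index from the categorical distribution `probs`."""
    return rng.choices(range(len(probs)), weights=probs, k=1)[0]


# Hypothetical logits: log-probabilities of the slide's example distribution.
slide_probs = [0.05, 0.1, 0.7, 0.15]
logits = [math.log(p) for p in slide_probs]
probs = softmax(logits)

rng = random.Random(0)
tag = sample_tag(probs, rng)  # an index in 0..3; index 2 (Tag 3) is the most likely
```

Because the tag is sampled rather than taken as the argmax, the same token can receive different tags on different draws, which is what "stochastic" refers to here.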

[Diagram: token → ELMo layer → encoder → decoder.]

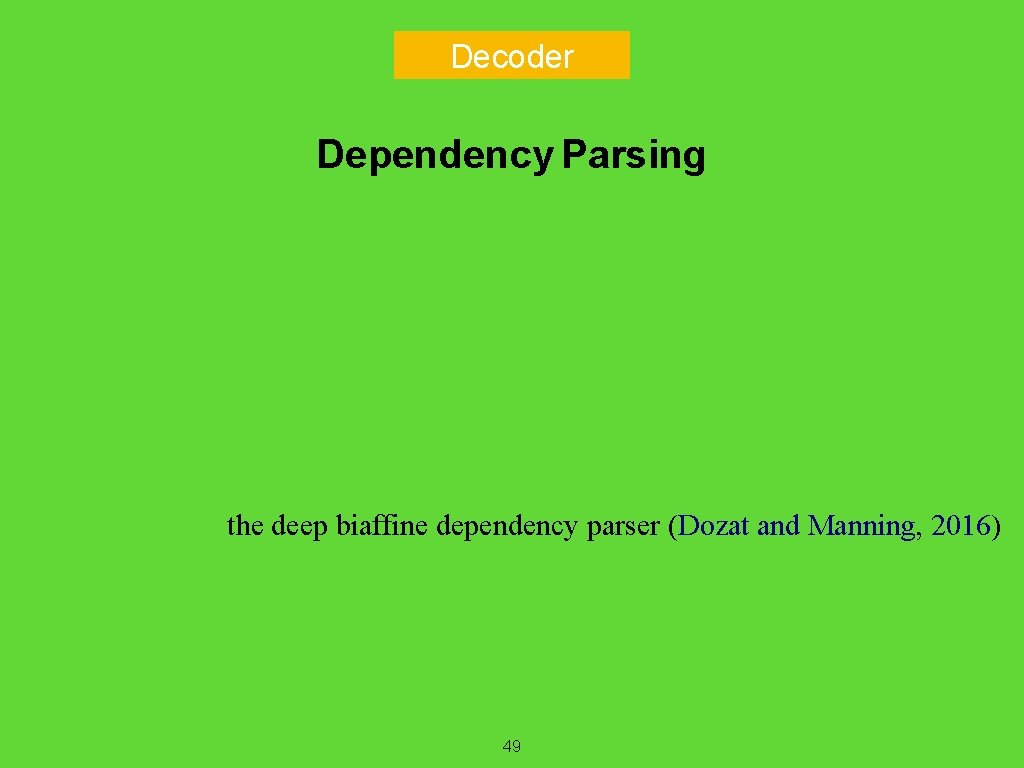

Decoder: dependency parsing with the deep biaffine dependency parser (Dozat and Manning, 2016).

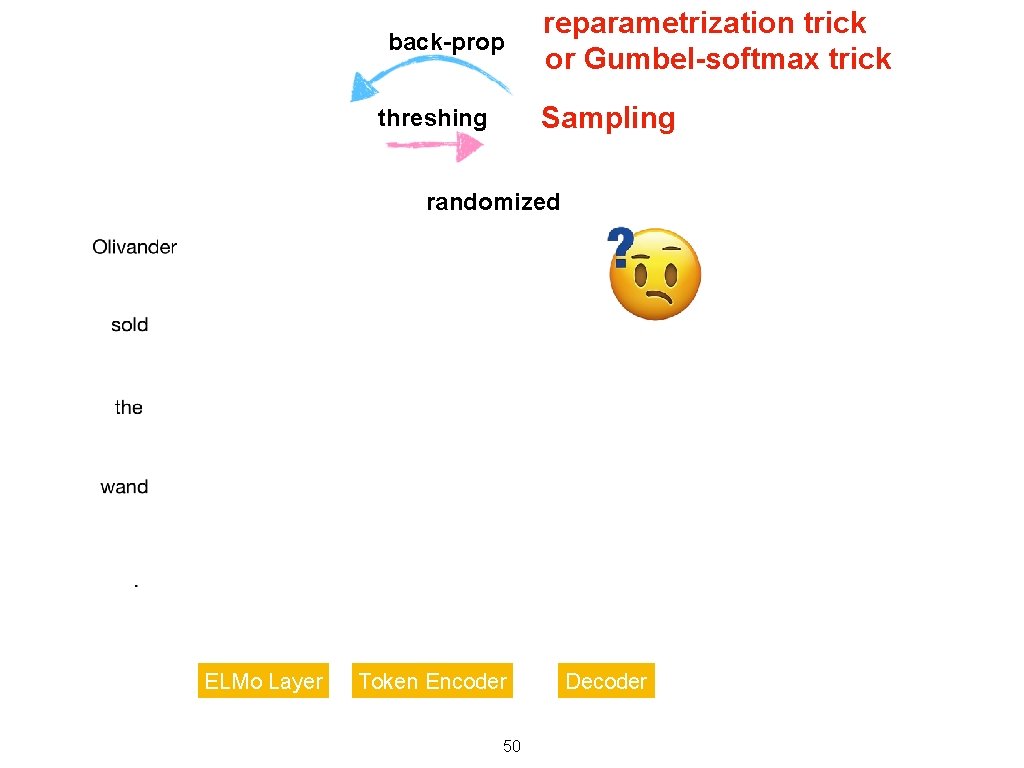

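Training: threshing is randomized, so the encoder samples its output. To back-prop through the sampling step, use the reparametrization trick or the Gumbel-softmax trick. [Diagram: token → ELMo layer → encoder → (sampling) → decoder.]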
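Both tricks move the randomness into a noise variable so that the sample becomes a differentiable function of the encoder's outputs. In general, the reparametrization trick applies to continuous (for example Gaussian) samples and Gumbel-softmax gives a relaxed version of a categorical sample. The sketch below shows only the sampling arithmetic in plain Python; the function names, the diagonal-Gaussian form, and the temperature value are assumptions for illustration, and no gradients are computed here.

```python
import math
import random


def reparameterized_gaussian(mean, log_std, rng):
    """Sample t = mean + std * eps with eps ~ N(0, 1), one value per dimension.

    The randomness lives in eps, so t is a deterministic, differentiable
    function of mean and log_std once eps is drawn.
    """
    return [m + math.exp(s) * rng.gauss(0.0, 1.0) for m, s in zip(mean, log_std)]


def gumbel_softmax(logits, temperature, rng):
    """Relaxed one-hot sample: softmax((logits + Gumbel noise) / temperature)."""
    noisy = []
    for z in logits:
        # Keep u strictly inside (0, 1) so both logarithms are defined.
        u = min(max(rng.random(), 1e-12), 1.0 - 1e-12)
        gumbel = -math.log(-math.log(u))  # Gumbel(0, 1) noise
        noisy.append((z + gumbel) / temperature)
    m = max(noisy)
    exps = [math.exp(v - m) for v in noisy]
    total = sum(exps)
    return [e / total for e in exps]


rng = random.Random(0)
# Continuous case: a hypothetical 2-dimensional Gaussian encoder output.
t_continuous = reparameterized_gaussian([0.0, 1.0], [0.0, -1.0], rng)
# Discrete case: a relaxed sample over 4 tags.
tag_logits = [math.log(p) for p in (0.05, 0.1, 0.7, 0.15)]
t_discrete = gumbel_softmax(tag_logits, 0.5, rng)
```

A lower temperature pushes the relaxed sample closer to a one-hot vector, at the cost of noisier gradients in an actual autodiff setting.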

Variational Upper Bound
• Some terms of the IB objective are intractable, so we cannot minimize it directly.
• So we instead minimize a variational upper bound,
• which is what the previous slide estimated by sampling.
For mathematical details, please refer to our paper ;)
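For orientation, the standard variational bound on an information-bottleneck objective looks as follows. This is a sketch under assumed notation, not a transcription of the paper: $X$ is the ELMo embedding, $Y$ the parse, $T$ the specialized representation, $\mathcal{L}_{\mathrm{IB}} = -I(Y;T) + \beta\, I(X;T)$, $p_\theta(t \mid x)$ the encoder, $q_\phi(y \mid t)$ the decoder, and $r_\psi(t)$ a variational stand-in for the marginal of $T$.

```latex
\begin{aligned}
I(X;T) &\le \mathbb{E}_{x}\Big[\mathrm{KL}\big(p_\theta(t \mid x)\,\big\|\,r_\psi(t)\big)\Big], \\
-\,I(Y;T) &\le -\,\mathbb{E}_{x,y}\,\mathbb{E}_{t \sim p_\theta(\cdot \mid x)}\big[\log q_\phi(y \mid t)\big] \;-\; H(Y), \\
\mathcal{L}_{\mathrm{IB}} &\le \mathbb{E}_{x,y}\Big[\mathbb{E}_{t \sim p_\theta(\cdot \mid x)}\big[-\log q_\phi(y \mid t)\big]
  + \beta\,\mathrm{KL}\big(p_\theta(t \mid x)\,\big\|\,r_\psi(t)\big)\Big] \;-\; H(Y).
\end{aligned}
```

The entropy $H(Y)$ does not depend on the model parameters, so it can be dropped during optimization; the inner expectation over $t$ is the part that is estimated by sampling.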

Do our specialized tags correlate with tradition… Yes!

Results

![What do specialized tags look like? [Continuous]](http://slidetodoc.com/presentation_image_h2/fa46a773a8bcded9c810d089b5d4e96b/image-41.jpg)

What do specialized tags look like? [Continuous] Panels along the LAS vs. information kept curve: too much compression, moderate compression, all information kept.

![What do our specialized tags look like? [Discrete]](http://slidetodoc.com/presentation_image_h2/fa46a773a8bcded9c810d089b5d4e96b/image-42.jpg)

What do our specialized tags look like? [Discrete] Labels in the figure: ADJ, VERB, NOUN, ADV, poss (NOUN), NOM (NOUN), ACC (NOUN), PUNCT, anaphors, PREP, CONJ.

Do our specialized tags correlate with POS tags? Yes!
Our results agree with the intuition that POS keeps information abou…
POS is contextual. Our taggings are contextual as well!
Wait: the word type (without context) should also be a strong predictor of POS. How do we specialize so that we mostly depend on type information?
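One common way to quantify how strongly two taggings agree is the empirical mutual information between them. The slides do not say which measure was used, so the function below is only an illustrative sketch: `empirical_mutual_information` is a hypothetical helper and the toy sequences are made up.

```python
import math
from collections import Counter


def empirical_mutual_information(tags, pos_tags):
    """Plug-in estimate of I(tag; POS) in bits from paired label sequences."""
    assert len(tags) == len(pos_tags) and tags
    n = len(tags)
    joint = Counter(zip(tags, pos_tags))
    tag_counts = Counter(tags)
    pos_counts = Counter(pos_tags)
    mi = 0.0
    for (t, p), c in joint.items():
        # p(t, p) * log2( p(t, p) / (p(t) * p(p)) ), written with raw counts
        mi += (c / n) * math.log2(c * n / (tag_counts[t] * pos_counts[p]))
    return mi


# Toy data (made up for illustration): induced tag ids vs. gold POS tags.
induced = [0, 1, 1, 2, 0, 1]
gold = ["DET", "NOUN", "NOUN", "VERB", "DET", "NOUN"]
mi_bits = empirical_mutual_information(induced, gold)
```

In this toy case the induced tags determine the gold tags exactly, so the estimate equals the entropy of the gold tags; for statistically independent taggings it would be zero.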

1. We could use ELMo layer 0, which is based on a character-level convolutional network. Thus specializing is to extract from existing embeddings, which is also non-c…
2. We could do a softer version: make the specialized tagging depe…

![Parsing Performance [continuous]](http://slidetodoc.com/presentation_image_h2/fa46a773a8bcded9c810d089b5d4e96b/image-45.jpg)

Parsing Performance [continuous] (LAS, English shown)
• ELMo: train on the 1024-dimensional ELMo layer 2.
• PCA: train on 256-dimensional embeddings after applying PCA to ELMo layer 2.
• MLP: train a non-linear cleanup layer after the ELMo embeddings jointly with the parser.
• VIBc: our method ;)
VIBc wins or ties across 9 languages, with a 1.0 point improvement on average.
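LAS (labeled attachment score) is the fraction of tokens whose predicted head and dependency label both match the gold parse. A minimal sketch of the computation follows; `attachment_scores` is an illustrative helper, the example parse is hypothetical, and real evaluation scripts differ on details such as punctuation handling.

```python
def attachment_scores(gold, predicted):
    """Return (UAS, LAS) for tokens given as (head_index, dependency_label) pairs.

    UAS counts tokens with the correct head; LAS also requires the correct label.
    """
    assert len(gold) == len(predicted) and gold
    n = len(gold)
    correct_heads = sum(g[0] == p[0] for g, p in zip(gold, predicted))
    correct_both = sum(g == p for g, p in zip(gold, predicted))
    return correct_heads / n, correct_both / n


# "The purchase price" with a hypothetical gold parse (head 0 = root).
gold = [(3, "det"), (3, "compound"), (0, "root")]
pred = [(3, "det"), (3, "amod"), (0, "root")]
uas, las = attachment_scores(gold, pred)  # all heads correct, one label wrong
```

On this example every head is right but one label is wrong, so UAS is 1.0 and LAS is 2/3.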

![Parsing Performance [discrete]](http://slidetodoc.com/presentation_image_h2/fa46a773a8bcded9c810d089b5d4e96b/image-46.jpg)

Parsing Performance [discrete] (LAS)
• POS: train the parser on gold POS tags.
• VIBd: our discrete version.

![Parsing Performance [continuous], with fine-tuning](http://slidetodoc.com/presentation_image_h2/fa46a773a8bcded9c810d089b5d4e96b/image-47.jpg)

Parsing Performance [continuous] (English)
• ELMo: train on the 1024-dimensional ELMo layer 2.
• PCA: train on 256-dimensional embeddings after applying PCA to ELMo layer 2.
• MLP: train a non-linear cleanup layer after the ELMo embeddings jointly with the parser.
• VIBc: our method ;)
• finetune: finetune + mild hyperparameter tuning.

Thanks
