
Concurrent Probabilistic Temporal Planning (CPTP)
Mausam
Joint work with Daniel S. Weld
University of Washington, Seattle

Motivation
- Three features of real-world planning domains:
  - Durative actions: all actions (navigation between sites, placing instruments, etc.) take time.
  - Concurrency: some instruments may warm up while others perform their tasks and others shut down to save power.
  - Uncertainty: all actions (picking up the rock, sending data, etc.) have a probability of failure.

Motivation (contd.)
- Concurrent temporal planning (widely studied with deterministic effects):
  - extends classical planning;
  - doesn't easily extend to probabilistic outcomes.
- Concurrent planning with uncertainty (Concurrent MDPs, AAAI '04):
  - handles combinations of actions over an MDP;
  - actions take unit time.
- Few planners handle all three in concert!

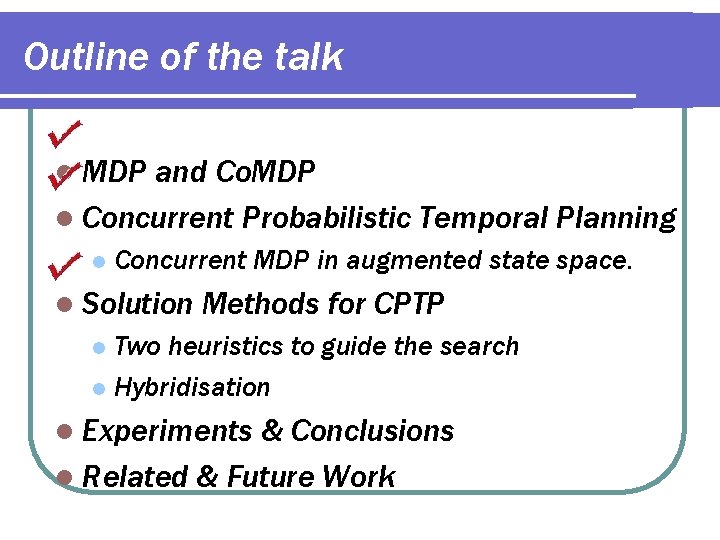

Outline of the talk
- MDP and CoMDP
- Concurrent Probabilistic Temporal Planning
  - Concurrent MDP in augmented state space
- Solution methods for CPTP
  - Two heuristics to guide the search
  - Hybridisation
- Experiments & conclusions
- Related & future work

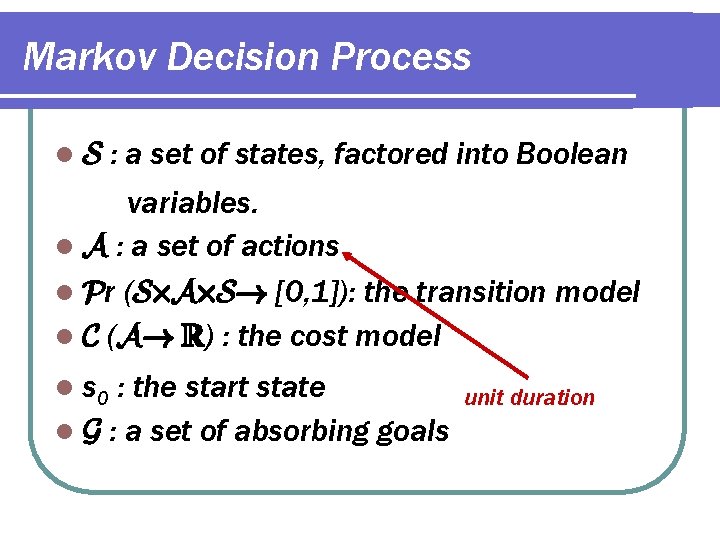

Markov Decision Process (all actions have unit duration)
- S: a set of states, factored into Boolean variables
- A: a set of actions
- Pr: S × A × S → [0, 1], the transition model
- C: A → ℝ, the cost model
- s0: the start state
- G: a set of absorbing goals

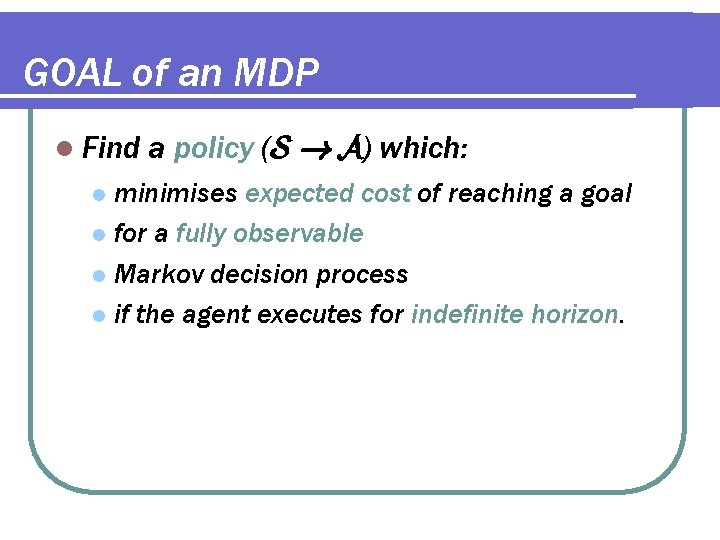

Goal of an MDP
- Find a policy π: S → A which minimises the expected cost of reaching a goal
  - for a fully observable Markov decision process,
  - if the agent executes for an indefinite horizon.

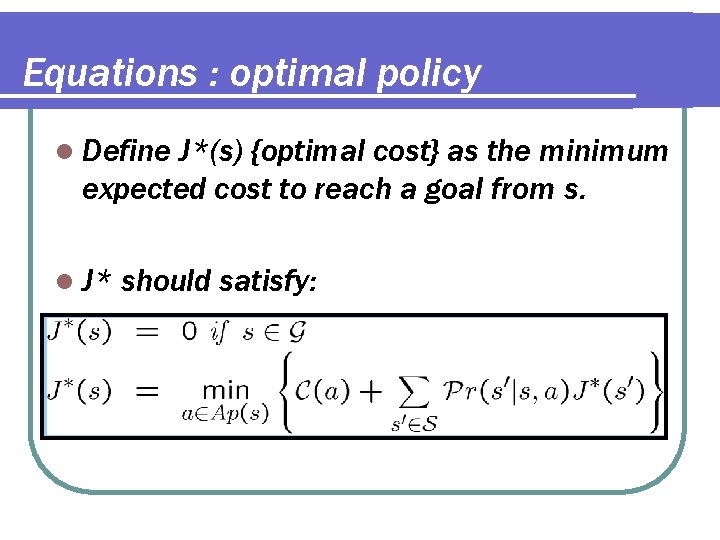

Equations: optimal policy
- Define J*(s) (the optimal cost) as the minimum expected cost to reach a goal from s.
- J* should satisfy:

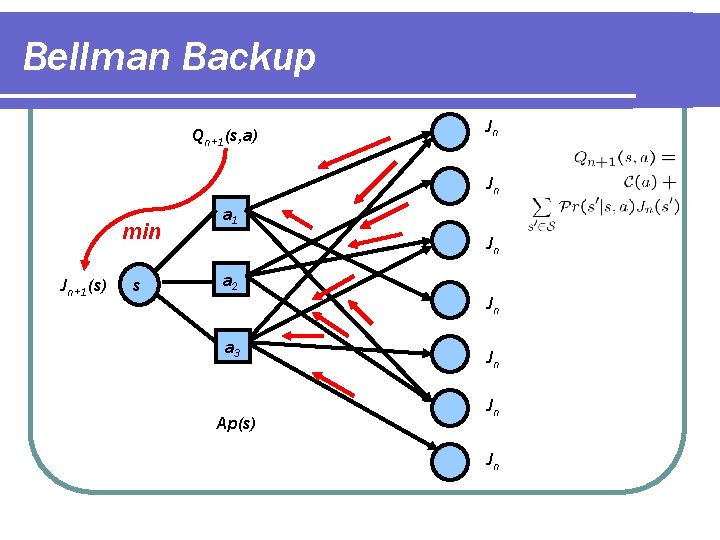

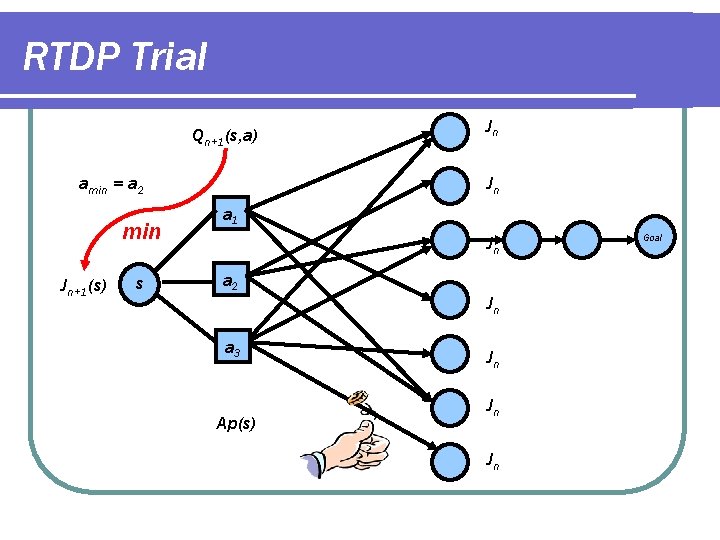
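For reference, in the notation of the MDP definition (S, A, Pr, C, s0, G) and writing Ap(s) for the actions applicable in s, the optimality condition for this goal-directed, cost-minimising setting is the standard Bellman equation. This is our own rendering, since the slide's equation survives only as an image:

```latex
J^*(s) =
\begin{cases}
  0, & s \in G \\[4pt]
  \displaystyle\min_{a \in Ap(s)} \Big[ C(a) + \sum_{s' \in S} \Pr(s' \mid s, a)\, J^*(s') \Big], & \text{otherwise}
\end{cases}
```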

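Bellman Backup
[Diagram: from state s, each applicable action a1, a2, a3 ∈ Ap(s) leads to successor states valued by Jn. For each action, Qn+1(s, a) = C(a) + Σs' Pr(s' | s, a) Jn(s'); then Jn+1(s) = min over a ∈ Ap(s) of Qn+1(s, a).]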
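A minimal Python sketch of a single Bellman backup, assuming the model is supplied as plain callables. The names `applicable`, `cost`, `transitions` and `goals` are our own illustrative choices, not an API from the talk.

```python
from typing import Callable, Dict, Hashable, Iterable, List, Optional, Set, Tuple

State = Hashable
Action = Hashable
# transitions(s, a) -> list of (successor, probability) pairs (hypothetical interface)
Transitions = Callable[[State, Action], List[Tuple[State, float]]]


def q_value(s: State, a: Action, J: Dict[State, float],
            cost: Callable[[Action], float], transitions: Transitions) -> float:
    """Qn+1(s, a) = C(a) + sum over s' of Pr(s' | s, a) * Jn(s')."""
    return cost(a) + sum(p * J[s2] for s2, p in transitions(s, a))


def bellman_backup(s: State, J: Dict[State, float],
                   applicable: Callable[[State], Iterable[Action]],
                   cost: Callable[[Action], float],
                   transitions: Transitions,
                   goals: Set[State]) -> Optional[Action]:
    """Update J in place at s and return the greedy (cost-minimising) action."""
    if s in goals:
        J[s] = 0.0  # absorbing goals incur no further cost
        return None
    qs = {a: q_value(s, a, J, cost, transitions) for a in applicable(s)}
    best = min(qs, key=qs.get)
    J[s] = qs[best]  # Jn+1(s) = min over a in Ap(s) of Qn+1(s, a)
    return best
```

In a toy domain where `a1` costs 1 and reaches the goal with probability 0.5 (else staying put) while `a2` costs 3 and always succeeds, one backup from an all-zero J picks `a1` and sets J(s) = 1.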

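RTDP Trial
[Diagram: the same backup at s gives Jn+1(s) = min over a ∈ Ap(s) of Qn+1(s, a), here attained by amin = a2; the trial executes a2, samples a successor state, and repeats until it reaches the Goal.]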

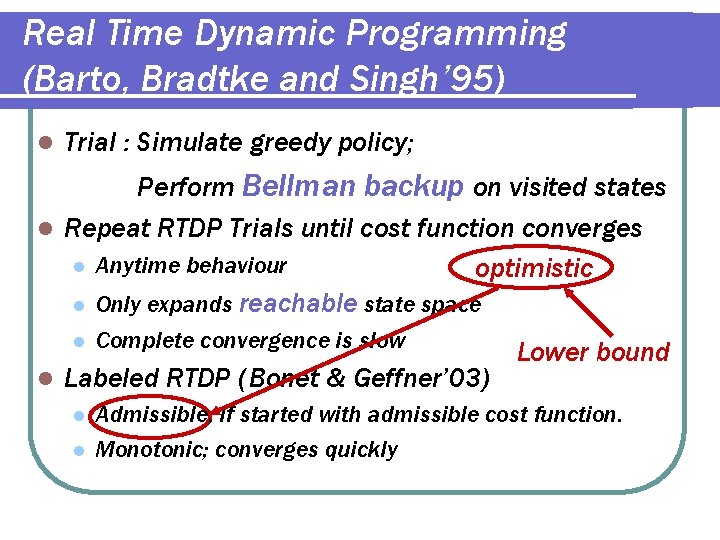

Real Time Dynamic Programming (Barto, Bradtke and Singh '95)
- Trial: simulate the greedy policy; perform a Bellman backup on each visited state.
- Repeat RTDP trials until the cost function converges.
- Anytime behaviour.
- Only expands the reachable state space.
- Complete convergence is slow.
- Labeled RTDP (Bonet & Geffner '03):
  - admissible (an optimistic lower bound), if started with an admissible cost function;
  - monotonic; converges quickly.
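The trial loop can be sketched in Python as follows, assuming the same hypothetical callable interface as a plain Bellman backup. Unseen states start at 0, which is admissible when all costs are non-negative, and a fixed trial budget stands in for a real convergence test. This is plain RTDP, not the labelling scheme of Labeled RTDP.

```python
import random
from typing import Dict, Hashable

State = Hashable


def rtdp_trial(s0, J, applicable, cost, transitions, goals, rng, max_steps=1000):
    """One trial: follow the greedy policy from s0, backing up each visited state."""
    s = s0
    for _ in range(max_steps):
        if s in goals:
            return
        # Bellman backup at the visited state (unseen states default to 0)
        qs = {a: cost(a) + sum(p * J.get(s2, 0.0) for s2, p in transitions(s, a))
              for a in applicable(s)}
        best = min(qs, key=qs.get)
        J[s] = qs[best]
        # Simulate the greedy action by sampling one successor state
        successors, probs = zip(*transitions(s, best))
        s = rng.choices(successors, weights=probs)[0]


def rtdp(s0, applicable, cost, transitions, goals, n_trials=200, seed=0):
    """Repeat trials from s0; n_trials is a stand-in for a convergence check."""
    rng = random.Random(seed)
    J: Dict[State, float] = {}
    for _ in range(n_trials):
        rtdp_trial(s0, J, applicable, cost, transitions, goals, rng)
    return J
```

On the toy domain where `a1` costs 1 and succeeds with probability 0.5, J*(s) solves J = 1 + J/2, i.e. J*(s) = 2. Starting from 0, the successive backups give 1, 1.5, 1.75, ..., approaching 2 from below, which illustrates the monotone lower-bound behaviour noted above.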

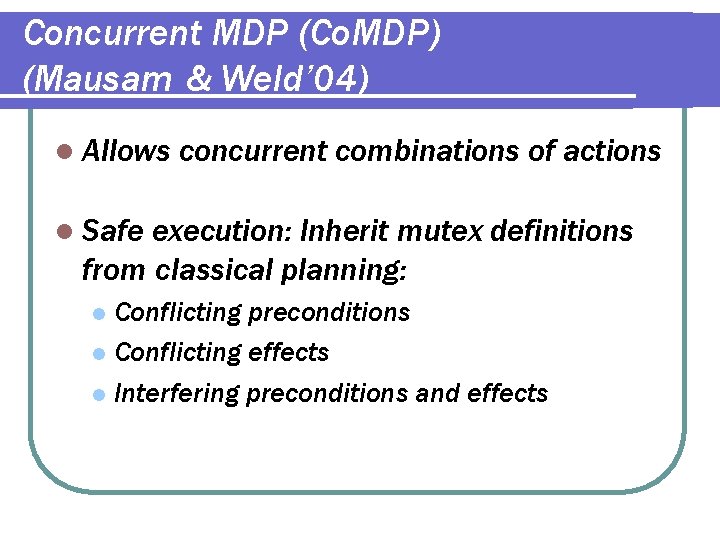

Concurrent MDP (CoMDP) (Mausam & Weld '04)
- Allows concurrent combinations of actions.
- Safe execution: inherit mutex definitions from classical planning:
  - conflicting preconditions
  - conflicting effects
  - interfering preconditions and effects
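The three mutex rules can be sketched as pairwise checks. This is a simplified, Graphplan-style reading under our own assumptions: preconditions and effects are partial assignments to Boolean variables, and all probabilistic outcomes of an action are merged into one `eff` map. The `Act` type and its fields are hypothetical.

```python
from dataclasses import dataclass
from itertools import combinations
from typing import Dict, Iterable


@dataclass
class Act:
    name: str
    pre: Dict[str, bool]  # variable values required by the action
    eff: Dict[str, bool]  # variable values the action may set (outcomes merged)


def _clash(x: Dict[str, bool], y: Dict[str, bool]) -> bool:
    """True if the two partial assignments disagree on some shared variable."""
    return any(v in y and y[v] != val for v, val in x.items())


def mutex(a: Act, b: Act) -> bool:
    if _clash(a.pre, b.pre):   # conflicting preconditions
        return True
    if _clash(a.eff, b.eff):   # conflicting effects
        return True
    # interfering preconditions and effects: one may falsify what the other needs
    return _clash(a.eff, b.pre) or _clash(b.eff, a.pre)


def safe_combination(actions: Iterable[Act]) -> bool:
    """A combination is safe to execute concurrently if no pair is mutex."""
    return all(not mutex(a, b) for a, b in combinations(list(actions), 2))
```

For example, warming up an instrument (which needs power) is mutex with shutting that power off, but can run alongside an unrelated move action.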

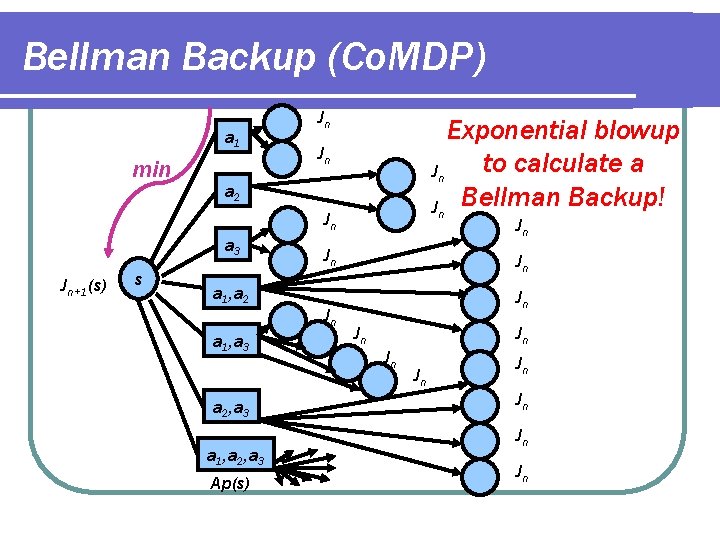

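Bellman Backup (CoMDP)
[Diagram: from state s, Ap(s) now contains every action combination: {a1}, {a2}, {a3}, {a1, a2}, {a1, a3}, {a2, a3} and {a1, a2, a3}, each leading to successors valued by Jn; Jn+1(s) is the minimum over all of them.]
- Exponential blowup to calculate a Bellman backup!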
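To see where the blowup comes from, here is a small Python sketch that enumerates the applicable combinations a full CoMDP backup must evaluate. The `mutex` argument is any pairwise predicate (a hypothetical parameter); with n pairwise-compatible actions there are 2^n - 1 non-empty combinations, e.g. 7 for the three actions in the diagram and 1023 for ten.

```python
from itertools import combinations
from typing import Callable, FrozenSet, Hashable, List, Sequence

Action = Hashable


def applicable_combinations(actions: Sequence[Action],
                            mutex: Callable[[Action, Action], bool]) -> List[FrozenSet[Action]]:
    """All non-empty subsets of `actions` that contain no mutex pair."""
    combos = []
    for size in range(1, len(actions) + 1):
        for combo in combinations(actions, size):
            if all(not mutex(a, b) for a, b in combinations(combo, 2)):
                combos.append(frozenset(combo))
    return combos
```

Each returned combination needs its own Q-value computation in a full backup, so mutexes prune the set but do not remove the exponential worst case.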

Sampled RTDP
- RTDP with stochastic (partial) backups; approximate:
  - always try the last best combination;
  - randomly sample a few other combinations.
- In practice:
  - close to optimal solutions;
  - converges very fast.
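A sketch of one sampled (partial) backup, assuming a hypothetical `q(s, combo)` callable that returns the Q-value of a combination. Only the previous best combination plus a few random ones are evaluated, so the result is approximate: the true best combination may be missed in a given backup.

```python
import random
from typing import Callable, Dict, FrozenSet, Hashable, List, Optional

State = Hashable
Combo = FrozenSet[Hashable]


def sampled_backup(s: State, J: Dict[State, float], combos: List[Combo],
                   q: Callable[[State, Combo], float],
                   last_best: Optional[Combo], n_samples: int,
                   rng: random.Random) -> Combo:
    """Approximate Bellman backup over a random subset of action combinations."""
    candidates = set(rng.sample(combos, min(n_samples, len(combos))))
    if last_best is not None:
        candidates.add(last_best)  # always re-evaluate the last best combination
    qs = {c: q(s, c) for c in candidates}
    best = min(qs, key=qs.get)
    J[s] = qs[best]
    return best
```

Keeping the previous best combination in every sample means a good combination, once found, is not lost between backups.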


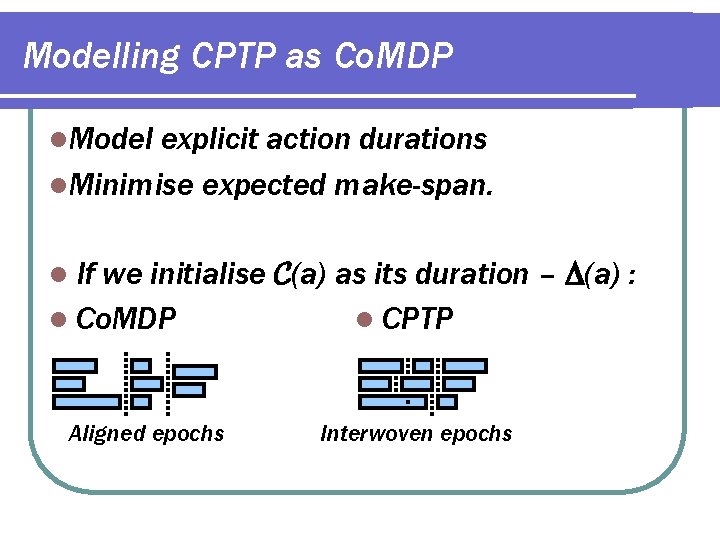

Modelling CPTP as CoMDP
- Model explicit action durations.
- Minimise expected make-span.
- If we initialise C(a) as its duration Δ(a):
  - CoMDP: aligned epochs
  - CPTP: interwoven epochs
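A small illustration of why aligned epochs can be sub-optimal, using a hypothetical deterministic example: `a` takes 3 units, `b` and `c` take 1 each, and `c` can only start after `b` finishes. In the aligned model each decision epoch lasts until every action of the chosen combination has finished (so a combination costs the maximum Δ of its actions); in the interwoven model `c` may start while `a` is still running.

```python
from typing import Dict, Hashable, Iterable, List, Set

Action = Hashable


def aligned_makespan(plan: List[Set[Action]], duration: Dict[Action, int]) -> int:
    """Each epoch lasts as long as the slowest action in its combination."""
    return sum(max(duration[a] for a in combo) for combo in plan)


def interwoven_makespan(starts: Dict[Action, int], duration: Dict[Action, int]) -> int:
    """Actions may start while others are running; make-span = latest finish time."""
    return max(t + duration[a] for a, t in starts.items())
```

With these durations, the aligned plan [{a, b}, {c}] has make-span 3 + 1 = 4, whereas starting a and b at time 0 and c at time 1 finishes at time 3.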

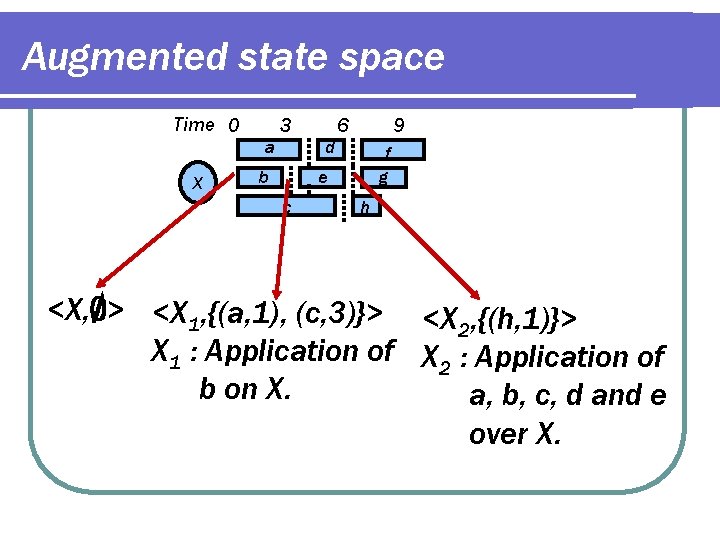

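Augmented state space
[Diagram: timeline 0, 3, 6, 9 with actions a to h executing over time, starting from world state X. Augmented states shown: ⟨X, ∅⟩, ⟨X1, {(a, 1), (c, 3)}⟩ and ⟨X2, {(h, 1)}⟩, where X1 is the result of applying b on X and X2 the result of applying a, b, c, d and e over X.]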

Simplifying assumptions
- All actions have deterministic durations.
- All action durations are integers.
- Action model:
  - preconditions must hold until the end of the action;
  - effects are usable only at the end of the action.
- Properties:
  - mutex rules are still required;
  - it is sufficient to consider only epochs when an action ends.

Completing the CoMDP
- Redefine:
  - the applicability set
  - the transition function
  - the start and goal states
- Example: the transition function is redefined so that the agent moves forward in time to an epoch where some action completes.
- Start state: ⟨s0, ∅⟩; and so on.
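A Python sketch of an interwoven (augmented) state ⟨X, Y⟩ and the redefined transition that jumps to the next epoch where some action completes. Several simplifications are our assumptions, not the talk's: effects are deterministic STRIPS-style (`effects[a] = (add_set, delete_set)`), each pair in Y is read as (action, time already elapsed), and mutex checks are left to the caller. `AugState` and `advance` are hypothetical names.

```python
from dataclasses import dataclass
from typing import Dict, FrozenSet, Iterable, Set, Tuple


@dataclass(frozen=True)
class AugState:
    world: FrozenSet[str]                # X: facts true in the world state
    running: FrozenSet[Tuple[str, int]]  # Y: (action, time already elapsed) pairs


def start_state(s0: Iterable[str]) -> AugState:
    return AugState(frozenset(s0), frozenset())  # <s0, ∅>


def advance(state: AugState, new_actions: Iterable[str],
            duration: Dict[str, int],
            effects: Dict[str, Tuple[Set[str], Set[str]]]) -> Tuple[AugState, int]:
    """Start new_actions, then move forward to the next epoch where some action ends."""
    running = dict(state.running)
    for a in new_actions:
        running[a] = 0
    # Time until the earliest completion (raises ValueError if nothing is running)
    delay = min(duration[a] - t for a, t in running.items())
    world = set(state.world)
    still_running = {}
    for a, t in running.items():
        t += delay
        if t == duration[a]:
            # Effects become usable only at the end of the action
            add, delete = effects[a]
            world = (world - delete) | add
        else:
            still_running[a] = t
    return AugState(frozenset(world), frozenset(still_running.items())), delay
```

The returned delay is the time (and hence make-span cost) elapsed between the two decision epochs; with integer durations, only these completion epochs are ever visited.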

Solution
- CPTP = CoMDP in the interwoven state space.
- Thus one may use our sampled RTDP (etc.).
- Problem: exponential blowup in the size of the state space.

Outline of the talk
- MDP and CoMDP
- Concurrent Probabilistic Temporal Planning
  - Concurrent MDP in augmented state space
- Solution methods for CPTP
  - Solution 1: two heuristics to guide the search
  - Solution 2: hybridisation
- Experiments & conclusions
- Related & future work

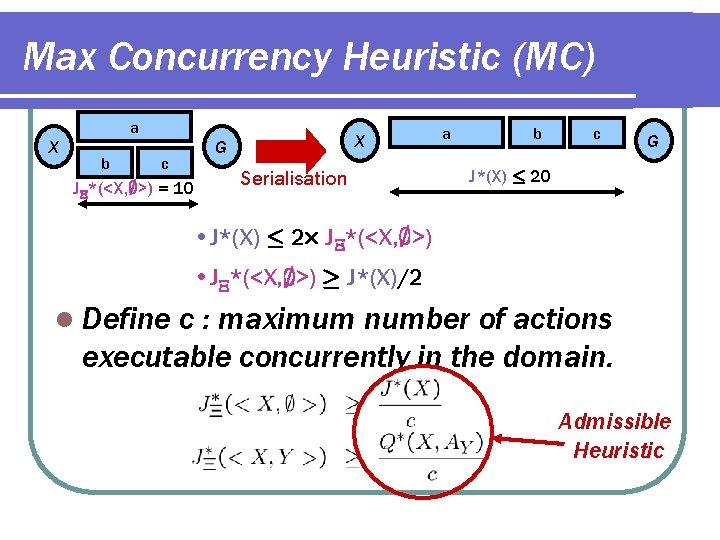

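Max Concurrency Heuristic (MC)
[Diagram: actions a, b and c lead from X to G. Executed concurrently, J*(⟨X, ∅⟩) = 10; serialised (a, then b, then c, reaching G), J*(X) ≤ 20.]
- Hence J*(X) ≤ 2 × J*(⟨X, ∅⟩), i.e. J*(⟨X, ∅⟩) ≥ J*(X)/2.
- Define c: the maximum number of actions executable concurrently in the domain. In general, J*(⟨X, ∅⟩) ≥ J*(X)/c.
- Admissible heuristic.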
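A sketch of the MC heuristic for states ⟨X, ∅⟩, assuming a hypothetical serial model where costs are action durations. Because at most c actions run at once, serialising an optimal concurrent policy multiplies its make-span by at most c, so J*(X)/c never overestimates. The serial values here come from plain value iteration, our own choice of solver.

```python
from typing import Callable, Dict, Hashable, Iterable, List, Set, Tuple

State = Hashable
Action = Hashable


def serial_values(states: List[State],
                  applicable: Callable[[State], Iterable[Action]],
                  duration: Dict[Action, float],
                  transitions: Callable[[State, Action], List[Tuple[State, float]]],
                  goals: Set[State], iters: int = 1000) -> Dict[State, float]:
    """Value iteration on the serial (one action at a time) MDP; cost = duration."""
    J = {s: 0.0 for s in states}
    for _ in range(iters):
        for s in states:
            if s in goals:
                continue
            J[s] = min(duration[a] + sum(p * J[t] for t, p in transitions(s, a))
                       for a in applicable(s))
    return J


def max_concurrency_heuristic(J_serial: Dict[State, float], c: int) -> Dict[State, float]:
    """h(<X, ∅>) = J*(X) / c: an admissible estimate for the concurrent problem."""
    return {X: v / c for X, v in J_serial.items()}
```

For a deterministic chain X → Y → G of two 10-unit actions, J*(X) = 20 and, with c = 2, the heuristic gives 10, matching the numbers on the slide.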

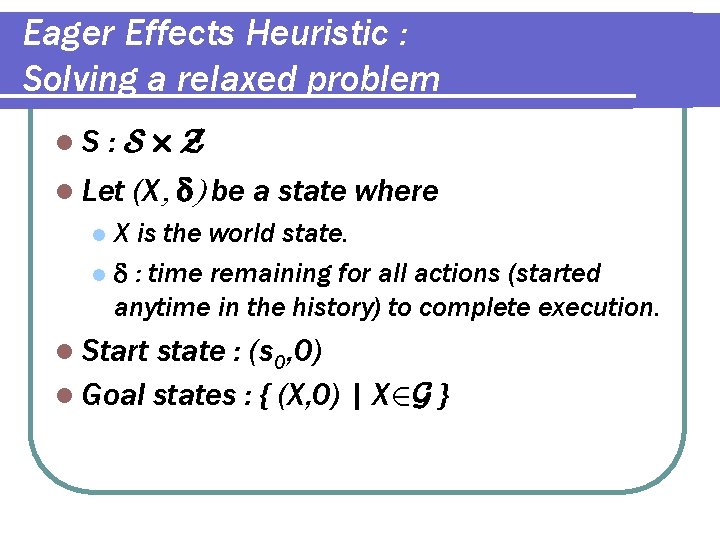

Eager Effects Heuristic: solving a relaxed problem
- State space: S × ℤ
- Let (X, d) be a state, where:
  - X is the world state;
  - d is the time remaining for all actions (started any time in the history) to complete execution.
- Start state: (s0, 0)
- Goal states: {(X, 0) | X ∈ G}

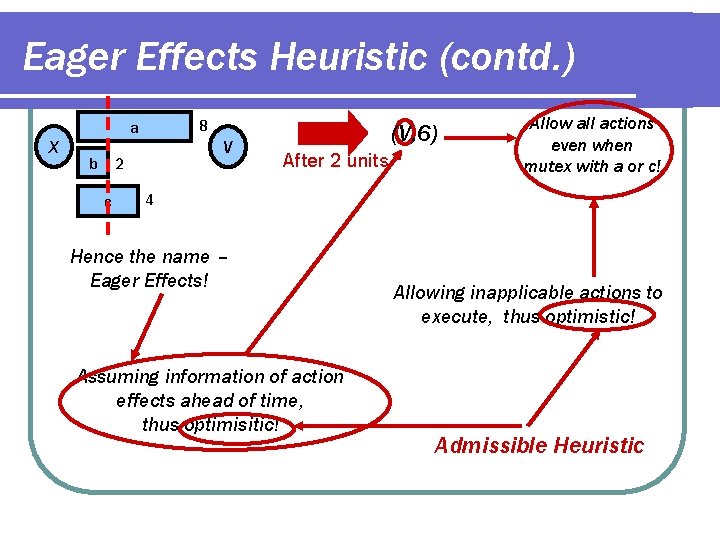

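Eager Effects Heuristic (contd.)
[Diagram: from X, actions a (duration 8), b (duration 2) and c (duration 4) start together; after 2 units, when b completes, the relaxed state is (V, 6), where V already contains the effects of a, b and c.]
- Effects of all started actions are available as soon as the first one ends; hence the name Eager Effects!
- Allow all actions, even when mutex with a or c!
- Assumes information about action effects ahead of time, thus optimistic!
- Allows inapplicable actions to execute, thus optimistic!
- Admissible heuristic.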
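A sketch of one relaxed transition, reproducing the slide's example. Assumptions of ours: effects are deterministic add/delete sets, all deletes are applied before all adds (so conflicting effects resolve optimistically), and the clock advances to the earliest completion among the newly started actions. `RelaxedAction` and `eager_step` are hypothetical names.

```python
from dataclasses import dataclass
from typing import FrozenSet, Iterable, List, Tuple


@dataclass(frozen=True)
class RelaxedAction:
    name: str
    duration: int
    add: FrozenSet[str]
    delete: FrozenSet[str] = frozenset()


def eager_step(world: FrozenSet[str], d: int,
               started: Iterable[RelaxedAction]) -> Tuple[FrozenSet[str], int, int]:
    """Relaxed transition from (world, d); returns (new world, new d, time elapsed)."""
    started: List[RelaxedAction] = list(started)
    if not started:
        raise ValueError("at least one action must be started")
    # Optimistic: every started action's effects apply immediately, ignoring mutexes
    new_world = set(world)
    for a in started:
        new_world -= a.delete
    for a in started:
        new_world |= a.add
    # Time until every action, old or new, has finished
    horizon = max([d] + [a.duration for a in started])
    # Assumption: the clock advances to the earliest completion among new actions
    elapsed = min(a.duration for a in started)
    return frozenset(new_world), horizon - elapsed, elapsed
```

Starting a (8), b (2) and c (4) from (X, 0) yields (V, 6) after 2 units, where V holds all three actions' effects.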

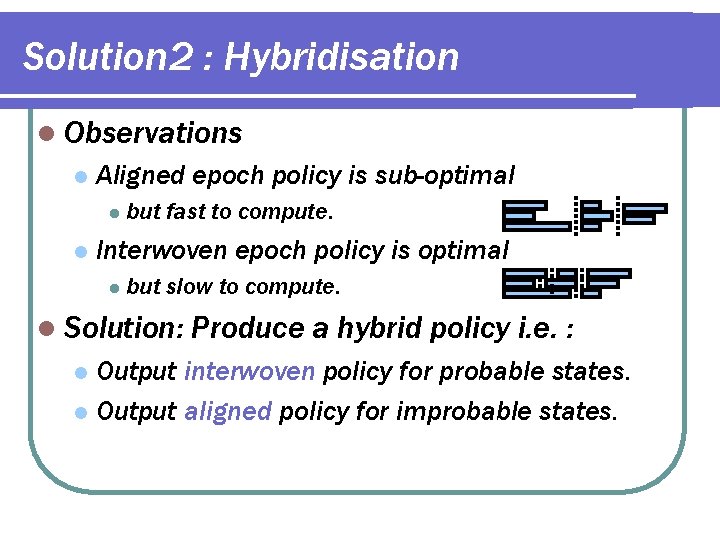

Solution 2: Hybridisation
- Observations:
  - the aligned epoch policy is sub-optimal, but fast to compute;
  - the interwoven epoch policy is optimal, but slow to compute.
- Solution: produce a hybrid policy, i.e.:
  - output the interwoven policy for probable states;
  - output the aligned policy for improbable states.

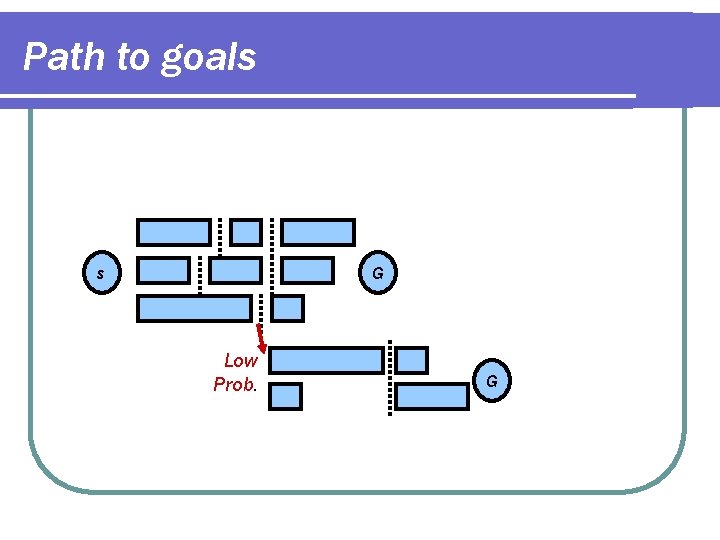

Path to goals
[Diagram: paths from s to goal states G, one of them through a low-probability branch.]

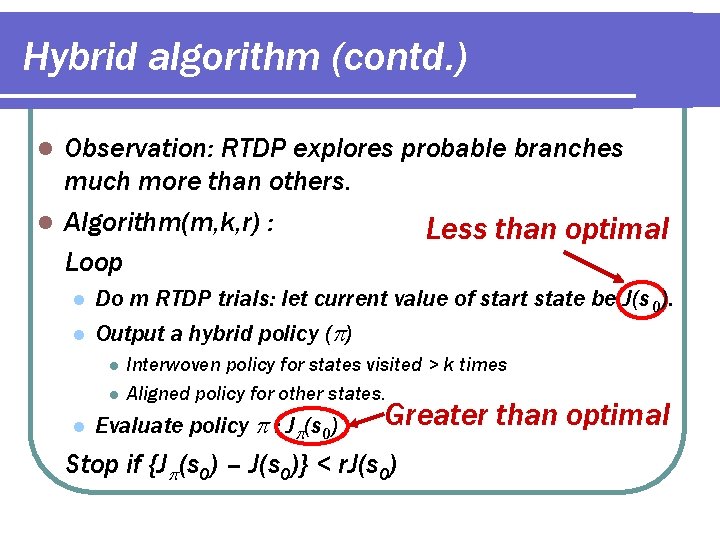

Hybrid algorithm (contd.)
- Observation: RTDP explores probable branches much more than others.
- Algorithm(m, k, r):
  - Loop:
    - Do m RTDP trials; let the current value of the start state be J(s0) (less than optimal).
    - Output a hybrid policy π:
      - the interwoven policy for states visited more than k times;
      - the aligned policy for other states.
    - Evaluate the policy: Jπ(s0) (greater than optimal).
    - Stop if Jπ(s0) - J(s0) < r·J(s0).
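The Algorithm(m, k, r) loop can be sketched with its components injected as callables. All names here (`run_trials`, `interwoven`, `aligned`, `evaluate`) are hypothetical stand-ins: `run_trials(m)` is assumed to run m more RTDP trials on the interwoven model and return the current cost function and per-state visit counts, and `evaluate(policy)` to return the expected cost of following the policy from s0.

```python
from typing import Callable, Dict, Hashable, Tuple

State = Hashable


def make_hybrid_policy(visits: Dict[State, int], k: int,
                       interwoven: Callable[[State], object],
                       aligned: Callable[[State], object]) -> Callable[[State], object]:
    """Interwoven action for states visited more than k times, aligned elsewhere."""
    def policy(s: State) -> object:
        return interwoven(s) if visits.get(s, 0) > k else aligned(s)
    return policy


def hybridise(m: int, k: int, r: float,
              run_trials: Callable[[int], Tuple[Dict[State, float], Dict[State, int]]],
              s0: State, interwoven, aligned, evaluate, max_rounds: int = 1000):
    for _ in range(max_rounds):
        J, visits = run_trials(m)   # m more RTDP trials
        lower = J[s0]               # J(s0): below optimal, given an admissible start
        policy = make_hybrid_policy(visits, k, interwoven, aligned)
        upper = evaluate(policy)    # Jπ(s0): cost of the hybrid policy, above optimal
        if upper - lower < r * lower:
            return policy, lower, upper
    raise RuntimeError("optimality ratio not reached within max_rounds")
```

Because the RTDP value bounds the optimum from below and the evaluated hybrid policy bounds it from above, stopping when the gap falls under r·J(s0) guarantees the returned policy is within a factor (1 + r) of optimal under those assumptions.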

Hybridisation
- Outputs a proper policy:
  - the policy is defined at all states reachable under it;
  - the policy is guaranteed to take the agent to a goal.
- Has an optimality ratio parameter (r):
  - controls the balance between optimality and running time;
  - can be used as an anytime algorithm.
- Is general: we can hybridise two algorithms in other cases too, e.g. in solving the original concurrent MDP.


Experiments
- Domains: Rover, MachineShop, Artificial
- State variables: 14 to 26
- Durations: 1 to 20

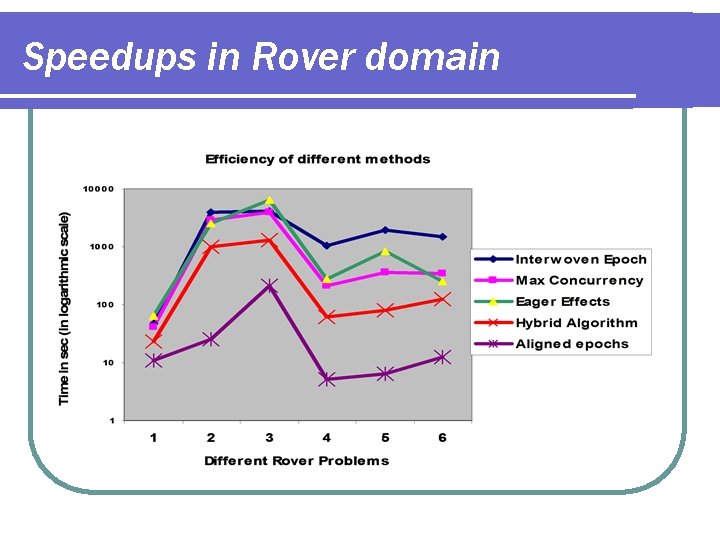

Speedups in Rover domain

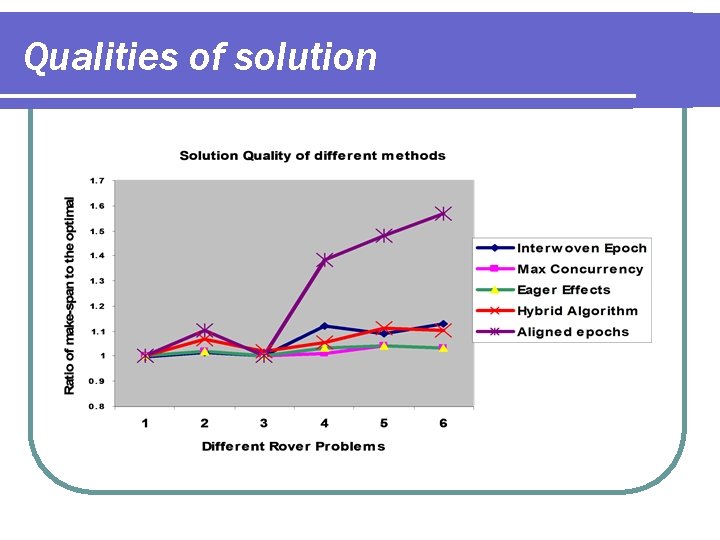

Quality of solutions

Experiments: Summary
- Max Concurrency heuristic:
  - fast to compute;
  - speeds up the search.
- Eager Effects heuristic:
  - high quality;
  - can be expensive in some domains.
- Hybrid algorithm:
  - very fast;
  - produces good quality solutions.
- Aligned epoch model:
  - superfast;
  - outputs poor quality solutions at times.

Related Work
- Prottle (Little, Aberdeen, Thiébaux '05)
- Generate, test and debug paradigm (Younes & Simmons '04)
- Concurrent options (Rohanimanesh & Mahadevan '04)

Future Work
- Other applications of hybridisation:
  - CoMDP
  - oversubscription planning
- Relaxing the assumptions:
  - handling mixed costs
  - extending to PDDL 2.1
  - stochastic action durations
- Extensions to metric resources
- State space compression/aggregation
