Cache Replacement Policy Using Map-based Adaptive Insertion

Yasuo Ishii (1, 2), Mary Inaba (1), and Kei Hiraki (1)
(1) The University of Tokyo, (2) NEC Corporation

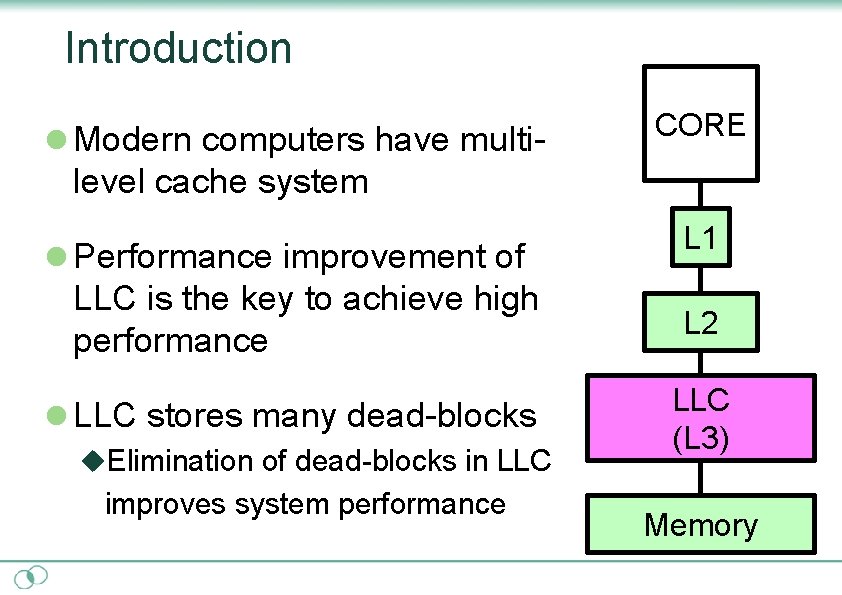

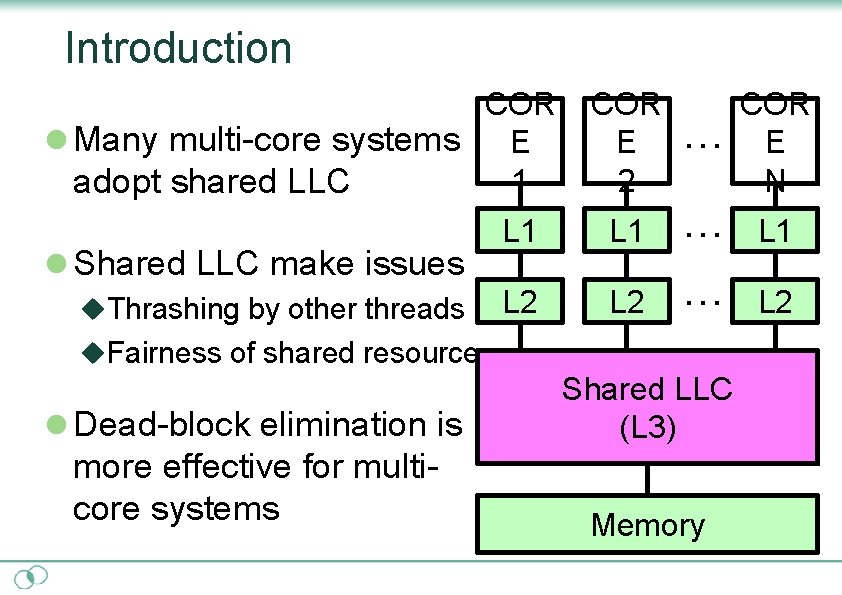

Introduction
- Modern computers have a multi-level cache system.
- Improving the performance of the last-level cache (LLC) is key to achieving high performance.
- The LLC stores many dead blocks.
  - Eliminating dead blocks from the LLC improves system performance.

(Figure: cache hierarchy: Core → L1 → L2 → LLC (L3) → Memory.)

Introduction
- Many multi-core systems adopt a shared LLC.
- A shared LLC raises issues:
  - thrashing caused by other threads;
  - fairness in sharing the resource.
- Dead-block elimination is more effective for multi-core systems.

(Figure: Cores 1 to N, each with private L1 and L2 caches, share the LLC (L3) and memory.)

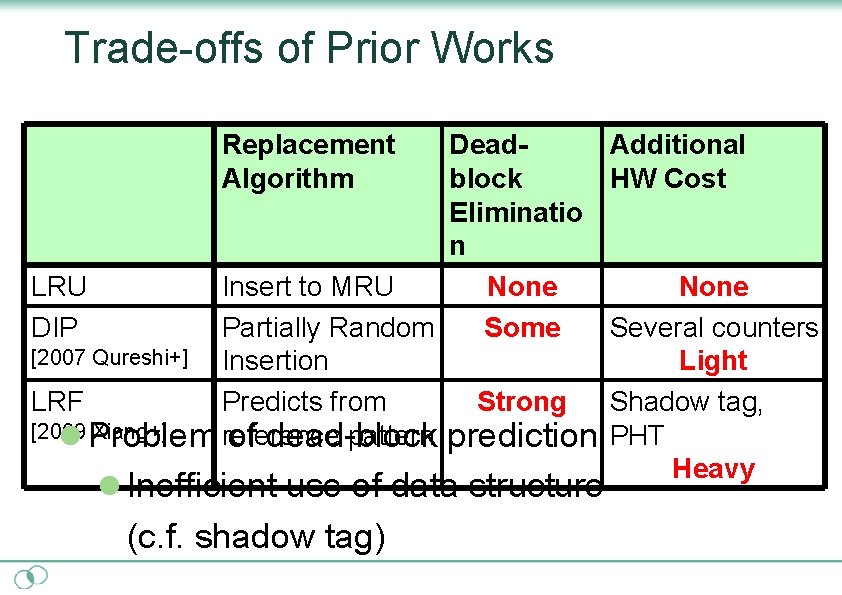

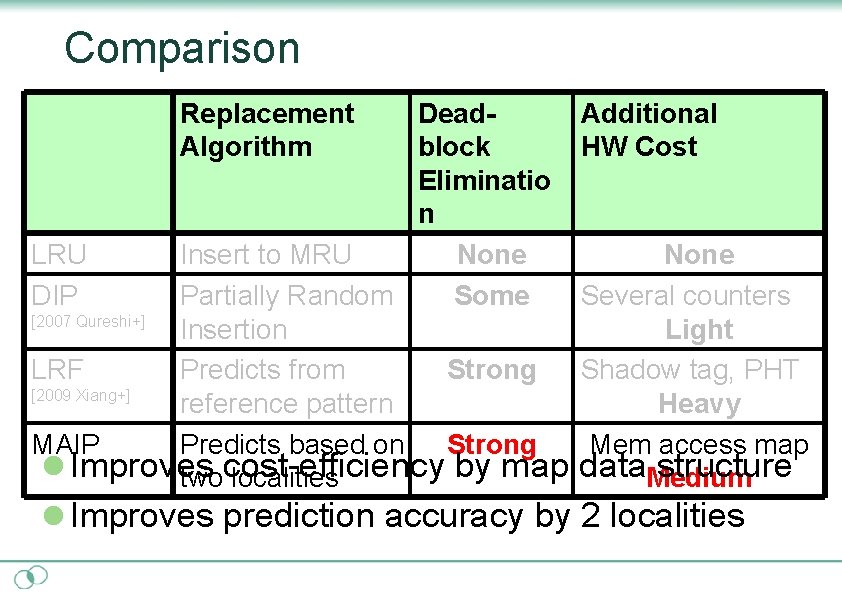

Trade-offs of Prior Works

| Replacement algorithm | Approach | Dead-block elimination | Additional HW cost |
|---|---|---|---|
| LRU | Insert to MRU | None | None |
| DIP [2007 Qureshi+] | Partially random insertion | Some | Several counters (light) |
| LRF [2009 Xiang+] | Predicts from reference pattern | Strong | Shadow tag, PHT (heavy) |

- Problem of dead-block prediction
  - Inefficient use of the data structure (cf. shadow tag)

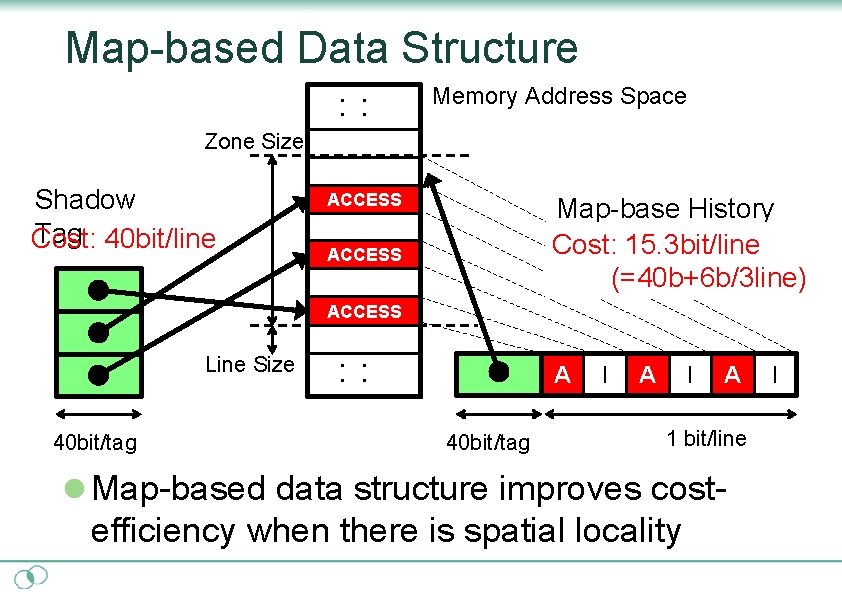

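Map-based Data Structure
- Shadow tag: one 40-bit tag for every tracked line, i.e. 40 bit/line.
- Map-based history: one 40-bit tag per zone plus 1 bit per line. In the example, a 6-line zone with 3 accessed lines costs (40 b + 6 b) / 3 lines ≈ 15.3 bit/line.
- The map-based data structure improves cost-efficiency when there is spatial locality.

(Figure: the memory address space is divided into zones of several cache lines; each line in a zone is in state I (Init) or A (Access).)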
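The per-line cost comparison above is simple arithmetic, sketched below. The function names are hypothetical, and the sketch assumes, as in the slide's example, a 40-bit tag and 1 state bit per line, with the map entry's cost amortized over the lines actually touched.

```python
def shadow_tag_bits_per_line(tag_bits: int = 40) -> float:
    """A shadow tag keeps one full tag for every tracked line."""
    return float(tag_bits)


def map_bits_per_line(tag_bits: int, lines_per_zone: int, touched_lines: int) -> float:
    """A map entry keeps one tag per zone plus one state bit per line.

    The entry's cost is amortized over the lines actually touched, so it
    falls as spatial locality (more touched lines per zone) rises.
    """
    if touched_lines <= 0:
        raise ValueError("at least one line must be touched")
    total_bits = tag_bits + lines_per_zone  # 1 state bit per line
    return total_bits / touched_lines


print(shadow_tag_bits_per_line())              # 40.0
print(round(map_bits_per_line(40, 6, 3), 1))   # 15.3
```

If all 6 lines of the zone were touched, the same entry would cost under 8 bit/line, which is why the map pays off only when there is spatial locality.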

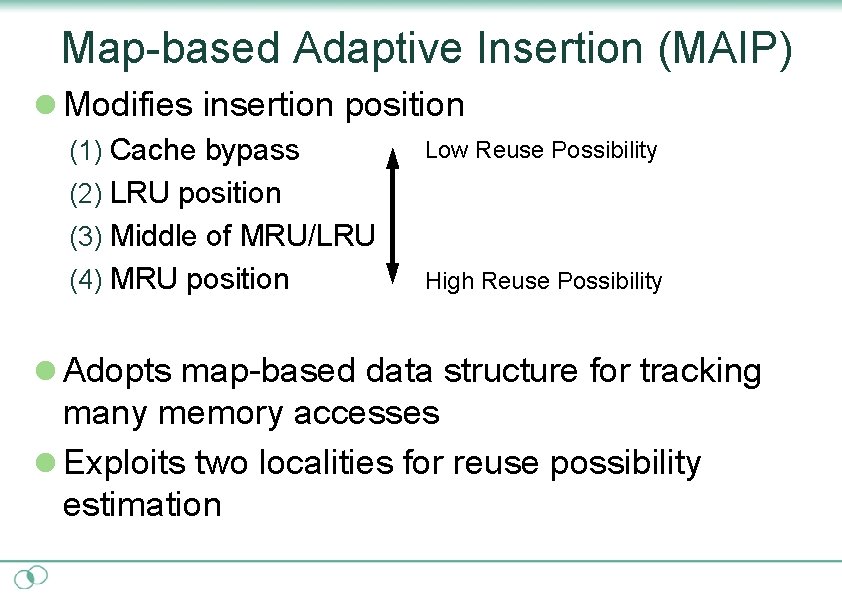

Map-based Adaptive Insertion (MAIP)
- Modifies the insertion position, ordered from low to high reuse possibility:
  1. cache bypass (low reuse possibility);
  2. LRU position;
  3. middle of the MRU/LRU positions;
  4. MRU position (high reuse possibility).
- Adopts a map-based data structure for tracking many memory accesses.
- Exploits two localities to estimate reuse possibility.
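To make the four insertion choices concrete, here is a minimal software sketch of one cache set modeled as a recency stack, where position 0 is MRU and position `ways - 1` is LRU. The class name and this list-based representation are illustrative assumptions, not the hardware structure; passing `None` models a bypass.

```python
from typing import List, Optional


class RecencyStackSet:
    """One cache set as a recency stack: index 0 is MRU, the last index is LRU."""

    def __init__(self, ways: int) -> None:
        self.ways = ways
        self.stack: List[int] = []  # block tags, MRU first

    def access(self, tag: int, insert_pos: Optional[int]) -> bool:
        """Look up `tag`; on a miss, insert it at `insert_pos` (None = bypass).

        Returns True on a hit. A hit promotes the block to MRU, as in LRU.
        """
        if tag in self.stack:
            self.stack.remove(tag)
            self.stack.insert(0, tag)
            return True
        if insert_pos is None:          # (1) bypass: the block is not cached
            return False
        if len(self.stack) == self.ways:
            self.stack.pop()            # evict the block at the LRU position
        pos = min(insert_pos, len(self.stack))
        self.stack.insert(pos, tag)
        return False
```

For a 4-way set, the four choices map to `None`, `3` (LRU), `2` (middle), and `0` (MRU). A block inserted at the LRU position is the next victim unless it is reused first, so low-reuse blocks leave the cache quickly without displacing the rest of the set.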

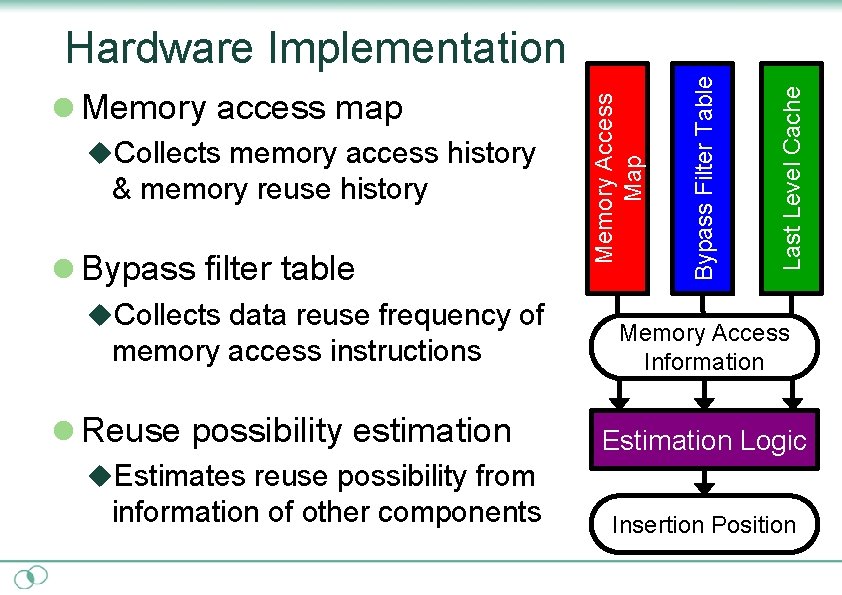

Hardware Implementation
- Memory access map
  - Collects memory access history and memory reuse history.
- Bypass filter table
  - Collects the data reuse frequency of memory access instructions.
- Reuse possibility estimation logic
  - Estimates reuse possibility from the information of the other components.

(Figure: memory access information is fed to the last-level cache, the memory access map, and the bypass filter table; the estimation logic combines their outputs into an insertion position.)

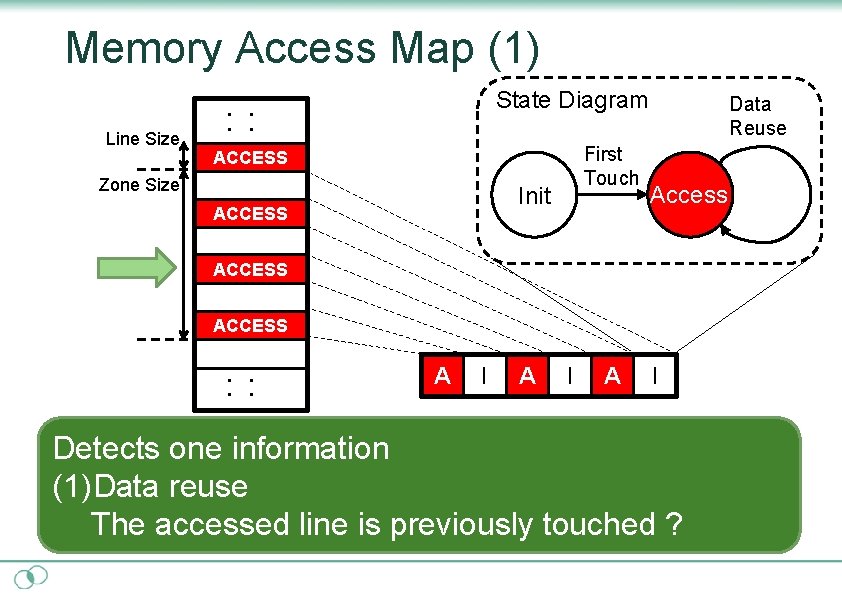

Memory Access Map (1)
- Detects one piece of information:
  - (1) Data reuse: was the accessed line touched before?

(Figure: state diagram of one line: Init → Access on the first touch; Access → Access on a data reuse. One map covers a zone of lines, each in state I (Init) or A (Access).)
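The two-state diagram above can be modeled in software with one bit per line. This is a hypothetical sketch: `ZoneAccessMap` is an illustrative name, the 64 B line and 256-line (16 KB) zone sizes are taken from the later "Memory Access Map (3)" slide, and an unbounded dictionary stands in for the finite map cache.

```python
from typing import Dict


class ZoneAccessMap:
    """Tracks an Init/Access state for every line, grouped by zone.

    Hypothetical software model: 64 B lines, 256-line (16 KB) zones, and an
    unbounded dictionary instead of the finite map cache.
    """

    LINE_BYTES = 64
    LINES_PER_ZONE = 256

    def __init__(self) -> None:
        # zone number -> bitmask; bit i set means line i is in the Access state
        self.zones: Dict[int, int] = {}

    def touch(self, addr: int) -> bool:
        """Record an access and return True if it is a data reuse.

        Init --first touch--> Access; Access --data reuse--> Access.
        """
        line = addr // self.LINE_BYTES
        zone, offset = divmod(line, self.LINES_PER_ZONE)
        bits = self.zones.get(zone, 0)
        reused = bool((bits >> offset) & 1)
        self.zones[zone] = bits | (1 << offset)
        return reused
```

For example, `touch(0x1000)` returns `False` (first touch), and a following `touch(0x1008)` returns `True` because it falls in the same 64 B line.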

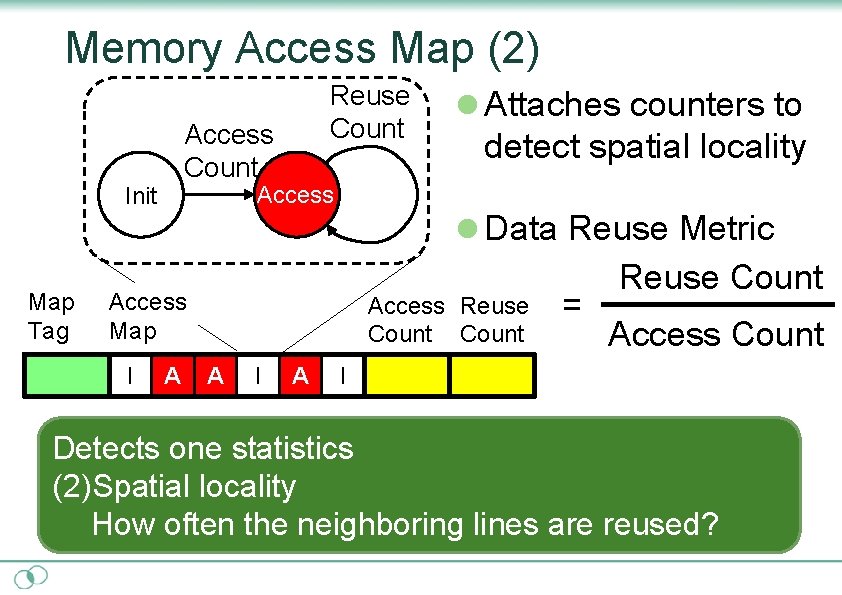

Memory Access Map (2)
- Detects one statistic:
  - (2) Spatial locality: how often are the neighboring lines reused?
- Attaches counters to the map tag to detect spatial locality:
  - Access Count and Reuse Count
  - Data reuse metric = Reuse Count / Access Count
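A sketch of the per-entry counters follows. It assumes, as an inference rather than something the slide states, that Access Count counts first touches and Reuse Count counts data reuses; the later insertion-position pseudocode compares `Cr > Ca`, which could never hold if Access Count included every access. Counter widths and saturation are not modeled, and `MapEntryCounters` is a hypothetical name.

```python
from dataclasses import dataclass


@dataclass
class MapEntryCounters:
    """Access and reuse counters attached to one memory access map tag."""

    access_count: int = 0   # assumed: first touches (Init -> Access)
    reuse_count: int = 0    # data reuses (Access -> Access)

    def record(self, is_reuse: bool) -> None:
        """Update the counter that matches the kind of access."""
        if is_reuse:
            self.reuse_count += 1
        else:
            self.access_count += 1

    def reuse_ratio(self) -> float:
        """Data reuse metric = Reuse Count / Access Count (0.0 before any first touch)."""
        if self.access_count == 0:
            return 0.0
        return self.reuse_count / self.access_count
```

Under this reading the metric can exceed 1.0 when the lines of a zone are reused more often than new lines are touched.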

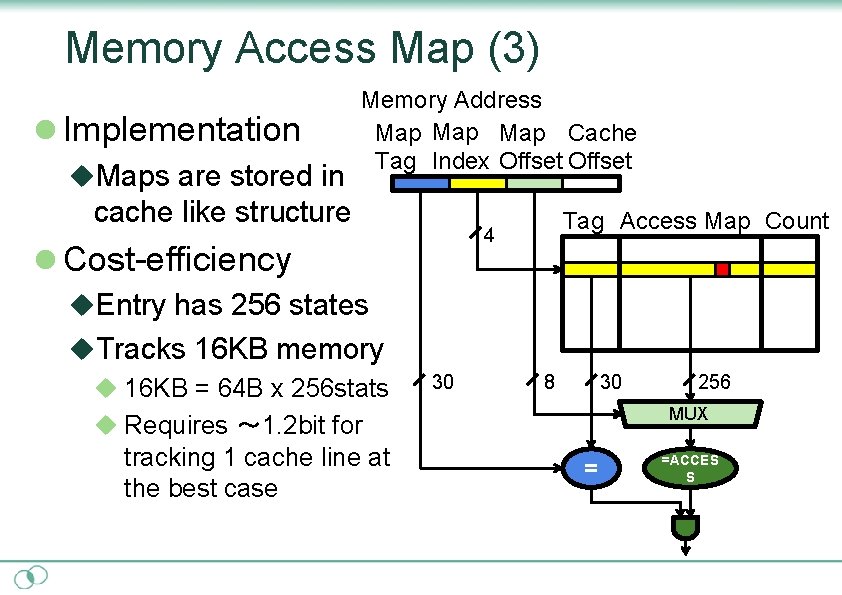

Memory Access Map (3)
- Implementation
  - Maps are stored in a cache-like structure (the map cache), looked up by splitting the memory address into tag, index, and offset.
- Cost-efficiency
  - Each entry has 256 states.
  - Each entry tracks 16 KB of memory (16 KB = 64 B × 256 states).
  - Requires ~1.2 bit to track one cache line in the best case.

(Figure: the stored map tag is compared with the address tag, and the offset selects one of the 256 states through a MUX.)
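The lookup can be sketched as an address split. This assumes 64 B lines, 256 states per entry, and the 192-entry, 12-way map from the evaluation configuration (giving 16 sets); the modulo indexing and the function name `split_address` are illustrative assumptions.

```python
from typing import Tuple

LINE_BYTES = 64                     # cache line size
LINES_PER_ZONE = 256                # states per map entry -> 64 B x 256 = 16 KB zone
MAP_ENTRIES = 192                   # memory access map entries (evaluation config)
MAP_WAYS = 12
MAP_SETS = MAP_ENTRIES // MAP_WAYS  # 16 sets


def split_address(addr: int) -> Tuple[int, int, int]:
    """Split a byte address into (map tag, map index, line offset within the zone)."""
    line = addr // LINE_BYTES
    offset = line % LINES_PER_ZONE    # selects one of the 256 states (the MUX)
    zone = line // LINES_PER_ZONE
    index = zone % MAP_SETS           # which set of the map cache to search
    tag = zone // MAP_SETS            # compared against the stored map tags
    return tag, index, offset
```

Consecutive 16 KB zones fall into consecutive sets, so a program streaming through memory spreads its zones over all 16 sets of the map cache.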

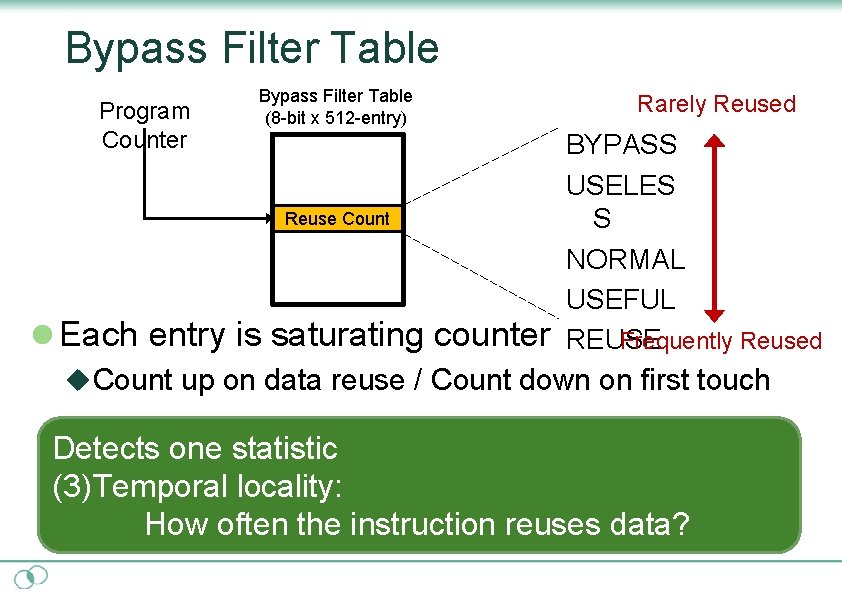

Bypass Filter Table
- Indexed by the program counter; 512 entries of 8 bits each.
- Each entry is a saturating counter (reuse count):
  - counts up on data reuse and down on first touch;
  - states, from rarely to frequently reused: BYPASS, USELESS, NORMAL, USEFUL, REUSE.
- Detects one statistic:
  - (3) Temporal locality: how often does the instruction reuse data?
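A minimal sketch of the table follows. The 512 entries, 8-bit saturating counters, and up/down rule come from the slide; the modulo PC hash, the initial value, and the four thresholds that split the counter range into the five states are hypothetical choices for illustration.

```python
from enum import Enum


class FilterState(Enum):
    BYPASS = 0
    USELESS = 1
    NORMAL = 2
    USEFUL = 3
    REUSE = 4


class BypassFilterTable:
    """PC-indexed table of 8-bit saturating counters (512 entries)."""

    ENTRIES = 512
    MAX = 255                          # 8-bit saturating counter
    THRESHOLDS = (16, 64, 192, 240)    # hypothetical state boundaries

    def __init__(self) -> None:
        # Assumption: start every counter in the NORMAL range.
        self.counters = [self.MAX // 2] * self.ENTRIES

    def _index(self, pc: int) -> int:
        return pc % self.ENTRIES       # assumption: simple modulo hash

    def update(self, pc: int, is_reuse: bool) -> None:
        """Count up on data reuse, down on first touch; saturate at 0 and MAX."""
        i = self._index(pc)
        if is_reuse:
            self.counters[i] = min(self.MAX, self.counters[i] + 1)
        else:
            self.counters[i] = max(0, self.counters[i] - 1)

    def state(self, pc: int) -> FilterState:
        """Classify the instruction's counter into one of the five states."""
        c = self.counters[self._index(pc)]
        for level, limit in enumerate(self.THRESHOLDS):
            if c < limit:
                return FilterState(level)
        return FilterState.REUSE
```

An instruction that keeps touching new lines (for example, a streaming load) drifts toward BYPASS, while one that keeps hitting previously touched lines drifts toward REUSE.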

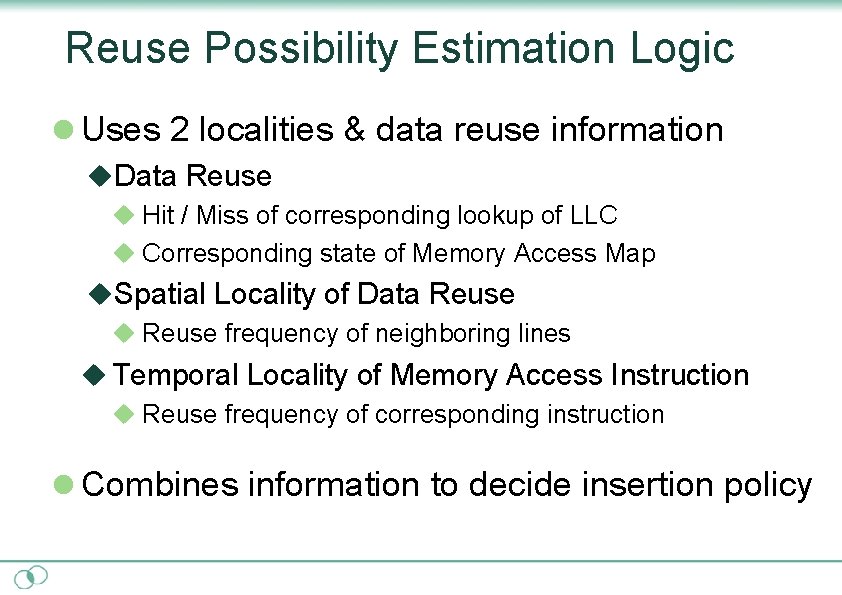

Reuse Possibility Estimation Logic
- Uses two localities and data reuse information:
  - Data reuse
    - hit/miss of the corresponding LLC lookup
    - corresponding state of the memory access map
  - Spatial locality of data reuse
    - reuse frequency of the neighboring lines
  - Temporal locality of the memory access instruction
    - reuse frequency of the corresponding instruction
- Combines this information to decide the insertion policy.


Additional Optimization
- Adaptive dedicated set reduction (ADSR)
  - An enhancement of set dueling [2007 Qureshi+].
  - Reduces the number of dedicated sets when PSEL is strongly biased.

(Figure: Sets 0 to 7 divided into LRU dedicated sets, MAIP dedicated sets, and additional follower sets.)
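The slide does not spell out how ADSR chooses which dedicated sets to retire, so the following is only a sketch under stated assumptions: a 10-bit PSEL (from the evaluation configuration); one LRU and one MAIP dedicated set per 32 sets; a DIP-style update in which misses in LRU dedicated sets increment PSEL and misses in MAIP dedicated sets decrement it; and a hypothetical rule that turns every other dedicated group into followers once PSEL is within 128 of either end. `SetDueling`, `LEADER_STRIDE`, and `BIAS_MARGIN` are illustrative names.

```python
PSEL_BITS = 10
PSEL_MAX = (1 << PSEL_BITS) - 1   # 1023
PSEL_MID = 1 << (PSEL_BITS - 1)   # 512

LEADER_STRIDE = 32                # hypothetical: one dedicated pair per 32 sets
BIAS_MARGIN = 128                 # hypothetical "strongly biased" margin


class SetDueling:
    def __init__(self) -> None:
        self.psel = PSEL_MID

    def strongly_biased(self) -> bool:
        return self.psel < BIAS_MARGIN or self.psel > PSEL_MAX - BIAS_MARGIN

    def role(self, set_index: int) -> str:
        """Return 'LRU', 'MAIP', or 'FOLLOWER' for a set."""
        slot = set_index % LEADER_STRIDE
        if slot not in (0, 1):
            return "FOLLOWER"
        # ADSR sketch: while PSEL is strongly biased, every other
        # dedicated group acts as an additional follower.
        if self.strongly_biased() and (set_index // LEADER_STRIDE) % 2 == 1:
            return "FOLLOWER"
        return "LRU" if slot == 0 else "MAIP"

    def on_miss(self, set_index: int) -> None:
        """Misses in dedicated sets steer the saturating PSEL counter."""
        r = self.role(set_index)
        if r == "LRU":
            self.psel = min(PSEL_MAX, self.psel + 1)
        elif r == "MAIP":
            self.psel = max(0, self.psel - 1)

    def policy(self, set_index: int) -> str:
        """Dedicated sets keep their policy; followers use the current winner."""
        r = self.role(set_index)
        if r != "FOLLOWER":
            return r
        return "MAIP" if self.psel >= PSEL_MID else "LRU"
```

The motivation, as the slide states it, is that once one policy clearly wins, fewer sets need to keep running the losing policy; the retained dedicated sets still let PSEL move back if the workload changes.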

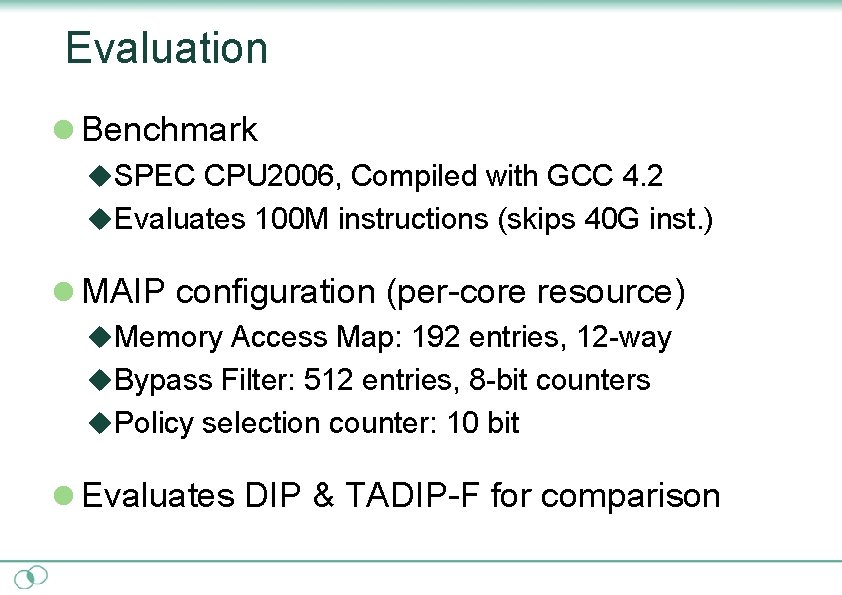

Evaluation
- Benchmark
  - SPEC CPU2006, compiled with GCC 4.2.
  - Evaluates 100M instructions (after skipping 40G instructions).
- MAIP configuration (per-core resources)
  - Memory access map: 192 entries, 12-way
  - Bypass filter: 512 entries, 8-bit counters
  - Policy selection counter: 10 bits
- DIP and TADIP-F are evaluated for comparison.
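The per-core configuration implies a few storage and coverage figures that follow directly from the numbers above. The sketch assumes 1 state bit per line (as in the map-based data structure slide) and excludes map tags and counters, whose widths the slides do not fully specify.

```python
KB = 1024
MB = 1024 * KB

LINE_BYTES = 64
LINES_PER_MAP_ENTRY = 256
MAP_ENTRIES = 192
BYPASS_ENTRIES = 512
BYPASS_COUNTER_BITS = 8

# Memory covered by one map entry: 64 B x 256 lines.
zone_bytes = LINE_BYTES * LINES_PER_MAP_ENTRY
# Upper bound on memory the map can cover at once (every entry valid).
max_coverage_mb = MAP_ENTRIES * zone_bytes / MB
# Line-state bits of the whole map (1 bit per line; tags and counters excluded).
map_state_bytes = MAP_ENTRIES * LINES_PER_MAP_ENTRY // 8
# Bypass filter storage: 512 counters x 8 bits.
bypass_filter_bytes = BYPASS_ENTRIES * BYPASS_COUNTER_BITS // 8

print(zone_bytes // KB, max_coverage_mb, map_state_bytes // KB, bypass_filter_bytes)
# 16 3.0 6 512
```

So each core's map can cover at most 3 MB of memory at once, with about 6 KB of line-state bits, and the bypass filter adds 512 B.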

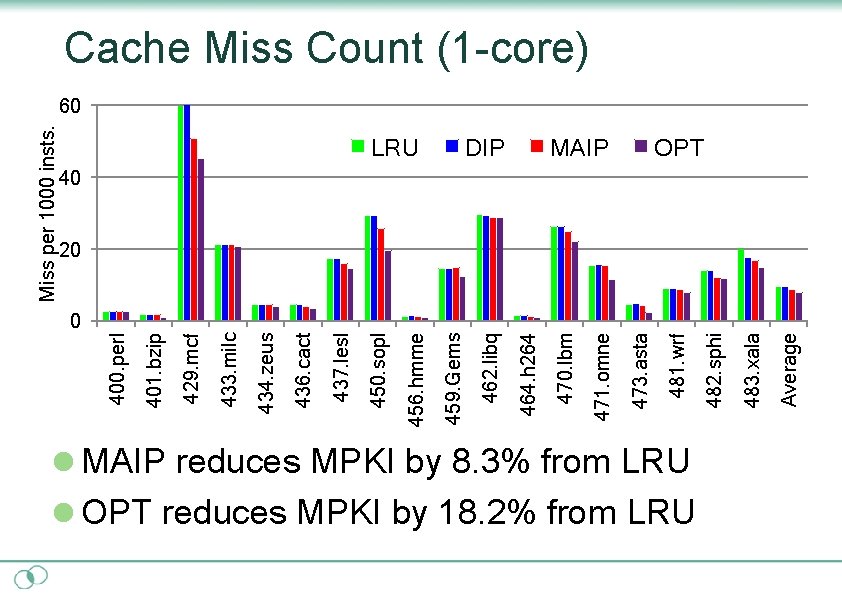

Cache Miss Count (1-core)
- MAIP reduces MPKI by 8.3% relative to LRU.
- OPT reduces MPKI by 18.2% relative to LRU.

(Figure: misses per 1000 instructions (MPKI) of LRU, DIP, MAIP, and OPT for 18 SPEC CPU2006 benchmarks, 400.perlbench to 483.xalancbmk, and their average.)
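The metric and the relative reduction quoted above are computed as follows. The function names are illustrative, and the numbers in the usage line are made up for demonstration, not results from the slides.

```python
def mpki(misses: int, instructions: int) -> float:
    """Cache misses per 1000 instructions."""
    return misses * 1000 / instructions


def reduction_pct(baseline: float, new: float) -> float:
    """How much lower `new` is than `baseline`, in percent of the baseline."""
    return (baseline - new) / baseline * 100


# Illustrative numbers only (not results from the slides):
print(mpki(500_000, 100_000_000))  # 5.0
```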

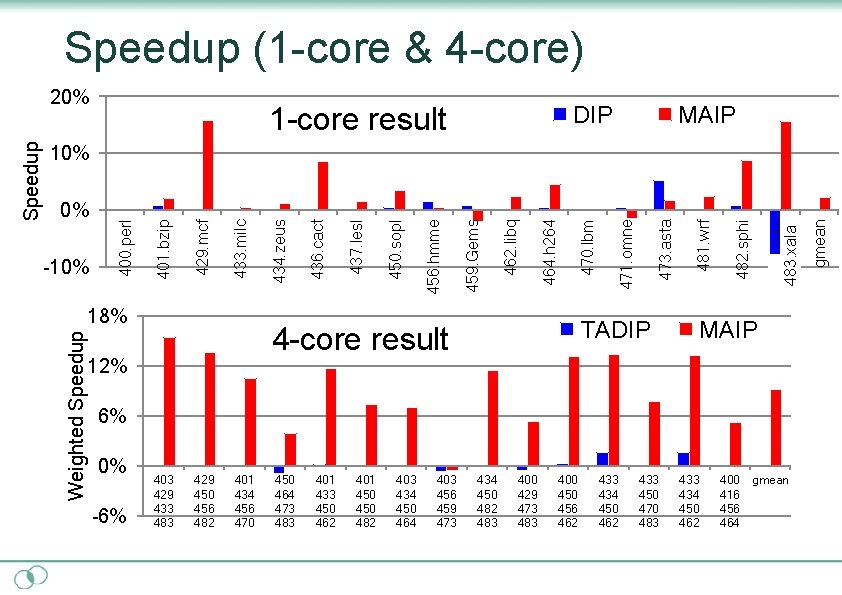

Speedup (1-core & 4-core)

(Figure: 1-core result: speedup of DIP and MAIP for each SPEC CPU2006 benchmark. 4-core result: weighted speedup of TADIP and MAIP for multi-programmed workload mixes, with the geometric mean (gmean).)
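The slides do not define weighted speedup, so the sketch below uses one common definition: the sum over cores of each program's IPC when sharing the LLC divided by its IPC when running alone. Normalizing to a baseline policy and summarizing the mixes with a geometric mean is likewise an assumption about how the plotted percentages were derived. All function names are illustrative.

```python
import statistics
from typing import Sequence


def weighted_speedup(ipc_shared: Sequence[float], ipc_alone: Sequence[float]) -> float:
    """Sum over cores of IPC when sharing the LLC / IPC when running alone."""
    if len(ipc_shared) != len(ipc_alone):
        raise ValueError("need one stand-alone IPC per core")
    return sum(s / a for s, a in zip(ipc_shared, ipc_alone))


def normalized(ws_policy: float, ws_baseline: float) -> float:
    """Weighted speedup of a policy relative to a baseline policy."""
    return ws_policy / ws_baseline


def gmean_improvement_pct(ratios: Sequence[float]) -> float:
    """Geometric mean of per-mix ratios, expressed as a percent improvement."""
    return (statistics.geometric_mean(ratios) - 1.0) * 100
```

The geometric mean is the usual choice for averaging ratios, because it gives the same answer regardless of which policy is used as the baseline.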

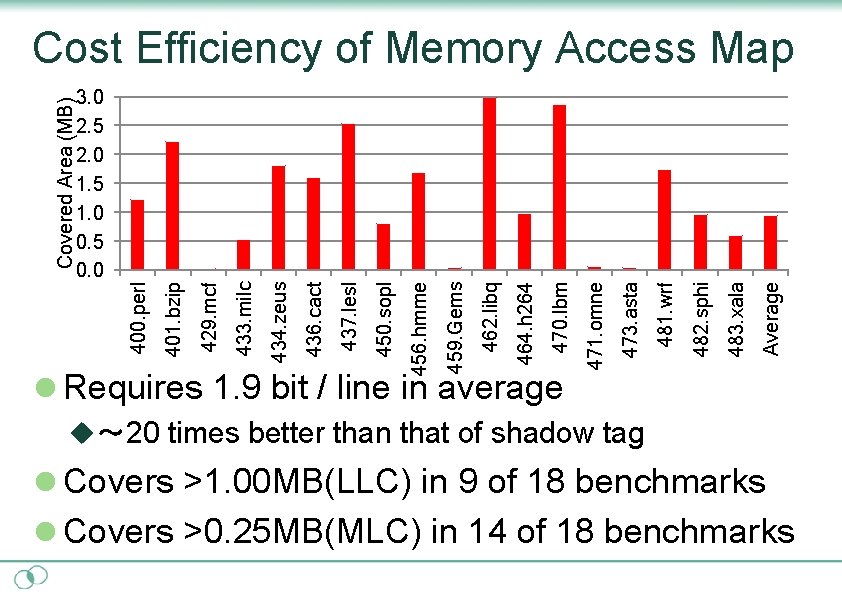

Cost Efficiency of Memory Access Map
- Requires 1.9 bit/line on average.
  - About 20 times better than the shadow tag.
- Covers more than 1.00 MB (the LLC capacity) in 9 of 18 benchmarks.
- Covers more than 0.25 MB (the MLC capacity) in 14 of 18 benchmarks.

(Figure: memory area covered by the memory access map (MB) for each of the 18 SPEC CPU2006 benchmarks and their average.)


Related Work
- Uses spatial/temporal locality
  - Using spatial locality [1997, Johnson+]
  - Using different types of locality [1995, González+]
- Prediction-based dead-block elimination
  - Dead-block prediction [2001, Lai+]
  - Less Reused Filter [2009, Xiang+]
- Modified insertion policy
  - Dynamic Insertion Policy [2007, Qureshi+]
  - Thread-Aware DIP [2008, Jaleel+]

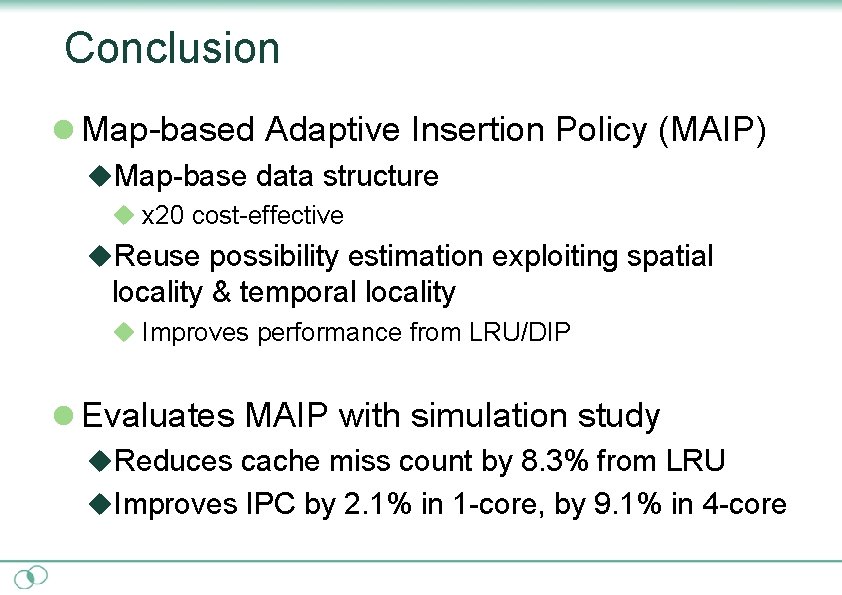

Conclusion
- Map-based Adaptive Insertion Policy (MAIP)
  - Map-based data structure: about 20 times more cost-effective than a shadow tag
  - Reuse possibility estimation that exploits spatial and temporal locality
  - Improves performance over LRU and DIP
- Evaluated MAIP in a simulation study
  - Reduces the cache miss count by 8.3% relative to LRU
  - Improves IPC by 2.1% in the 1-core and by 9.1% in the 4-core configuration

Comparison

| Replacement algorithm | Approach | Dead-block elimination | Additional HW cost |
|---|---|---|---|
| LRU | Insert to MRU | None | None |
| DIP [2007 Qureshi+] | Partially random insertion | Some | Several counters (light) |
| LRF [2009 Xiang+] | Predicts from reference pattern | Strong | Shadow tag, PHT (heavy) |
| MAIP | Predicts based on two localities | Strong | Memory access map data structure (medium) |

- Improves cost-efficiency with the map data structure.
- Improves prediction accuracy with two localities.

Q&A

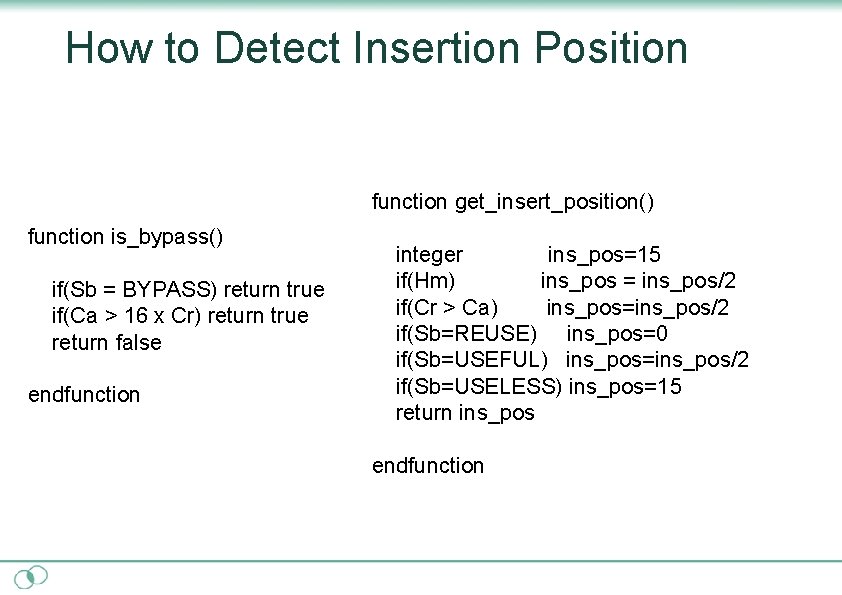

How to Determine the Insertion Position

    function get_insert_position()
        function is_bypass()
            if(Sb = BYPASS) return true
            if(Ca > 16 x Cr) return true
            return false
        endfunction
        integer ins_pos=15
        if(Hm) ins_pos = ins_pos/2
        if(Cr > Ca) ins_pos=ins_pos/2
        if(Sb=REUSE) ins_pos=0
        if(Sb=USEFUL) ins_pos=ins_pos/2
        if(Sb=USELESS) ins_pos=15
        return ins_pos
    endfunction
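The pseudocode above can be translated into runnable Python as a sketch. Several readings here are assumptions, not statements from the slides. `Sb` is taken as the bypass filter state of the instruction. `Ca` and `Cr` are the access and reuse counts of the map entry. `Hm` is read as "the memory access map reports a data reuse". Position 15 is the LRU end of a 16-way set (0 is MRU). `is_bypass` is checked first, since the slide defines it but does not show where it is called.

```python
from enum import Enum
from typing import Optional


class FilterState(Enum):
    BYPASS = 0
    USELESS = 1
    NORMAL = 2
    USEFUL = 3
    REUSE = 4


LRU_POS = 15  # assumed 16-way set: 0 = MRU, 15 = LRU


def is_bypass(sb: FilterState, ca: int, cr: int) -> bool:
    """Bypass if the instruction rarely reuses data or the zone is rarely reused."""
    if sb is FilterState.BYPASS:
        return True
    if ca > 16 * cr:
        return True
    return False


def get_insert_position(hm: bool, sb: FilterState, ca: int, cr: int) -> Optional[int]:
    """Return a recency-stack position (0 = MRU), or None to bypass the LLC."""
    if is_bypass(sb, ca, cr):        # assumption: bypass check comes first
        return None
    ins_pos = LRU_POS
    if hm:                           # data reuse seen by the access map
        ins_pos //= 2
    if cr > ca:                      # neighboring lines are frequently reused
        ins_pos //= 2
    if sb is FilterState.REUSE:      # instruction frequently reuses data
        ins_pos = 0
    if sb is FilterState.USEFUL:
        ins_pos //= 2
    if sb is FilterState.USELESS:    # instruction rarely reuses data
        ins_pos = LRU_POS
    return ins_pos
```

Each positive signal halves the distance from the MRU end (15 → 7 → 3 → 1), so evidence from the map and from the instruction accumulates, while the two strongest instruction states (REUSE, USELESS) override everything else.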
